CBLSTM-AE: A Hybrid Deep Learning Framework for Predicting Energy Consumption

Abstract

1. Introduction

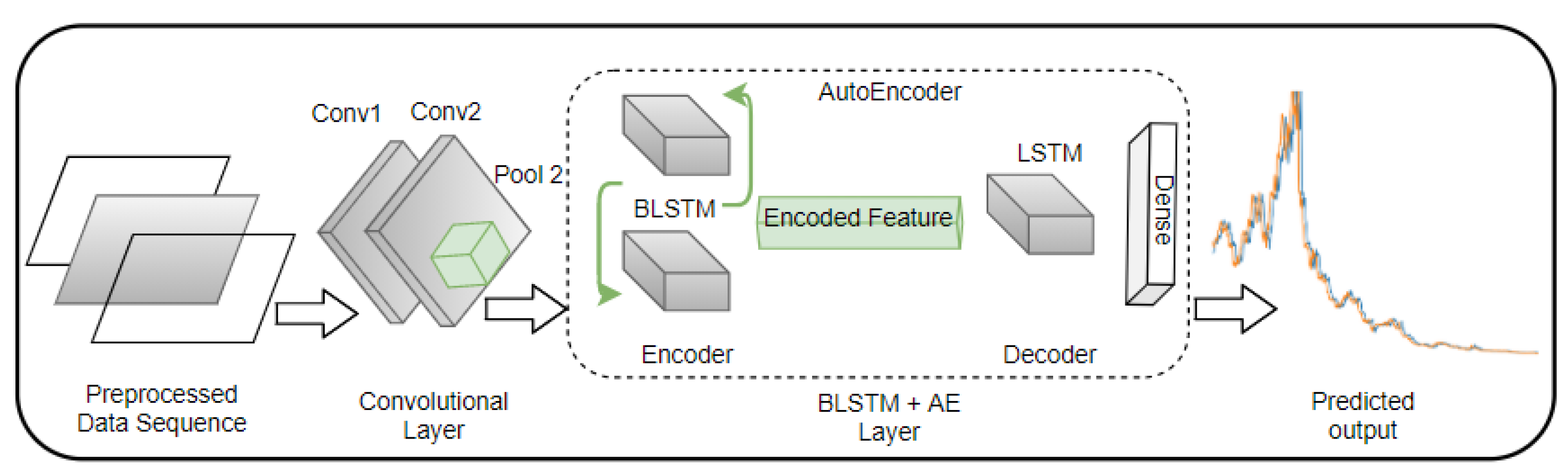

- We proposed a hybrid deep learning architecture comprising two CNN layers and an AE with BLSTM as the encoding layer and LSTM as the decoding layer for load forecasting of real energy consumers.

- Peak load varies among buildings and countries, which leads to poor generalisation of DNN models. The generalisation ability of the framework is therefore tested on datasets of varying length, covering households and SMEs in two countries, the UK and Canada.

- Energy consumption prediction (ECP) can be strongly affected by irregular occupant behaviour, weather uncertainty, and the nonlinearity of building dynamics [20,21,22,23], yet the impact of these dynamics was not addressed in [7,18,19]. This work therefore explores the effect of weather and a weekly index within the proposed framework.

2. Literature Review

3. Proposed Framework (CBLSTM-AE)

3.1. Data Cleaning and Rolling Window
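As an illustration of the rolling-window input named in this section's title, the following is a minimal sketch rather than the authors' procedure. It assumes half-hourly load readings, a window of 48 steps (24 h), a one-step forecast horizon, and a weekly index encoded as the day of the week (Monday = 0). The function name `rolling_windows`, its parameters, and the placeholder readings are all hypothetical; weather features could be appended to each row in the same way.

```python
from datetime import datetime, timedelta


def rolling_windows(readings, timestamps, window, horizon=1):
    """Slide a fixed-length window over the series.

    Each sample is (x, y): x holds `window` rows of (load, weekly_index),
    y holds the next `horizon` load values to be predicted.
    """
    samples = []
    n_samples = len(readings) - window - horizon + 1
    for start in range(max(n_samples, 0)):
        end = start + window
        # Assumption: the weekly index is the day of the week (Monday = 0).
        x = [(readings[i], timestamps[i].weekday()) for i in range(start, end)]
        y = readings[end:end + horizon]
        samples.append((x, y))
    return samples


# Placeholder data (not from the paper): two days of half-hourly readings.
first_step = datetime(2021, 1, 4, 0, 0)  # a Monday
timestamps = [first_step + timedelta(minutes=30 * i) for i in range(96)]
readings = [float(i) for i in range(96)]

samples = rolling_windows(readings, timestamps, window=48, horizon=1)
print(len(samples), samples[0][1])
```

Each window advances by one time step, so consecutive samples overlap in all but one row; a longer horizon only changes the length of `y`.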

3.2. Proposed CBLSTM-AE

3.2.1. CNN

3.2.2. BLSTM-AE

3.2.3. LSTM-AE

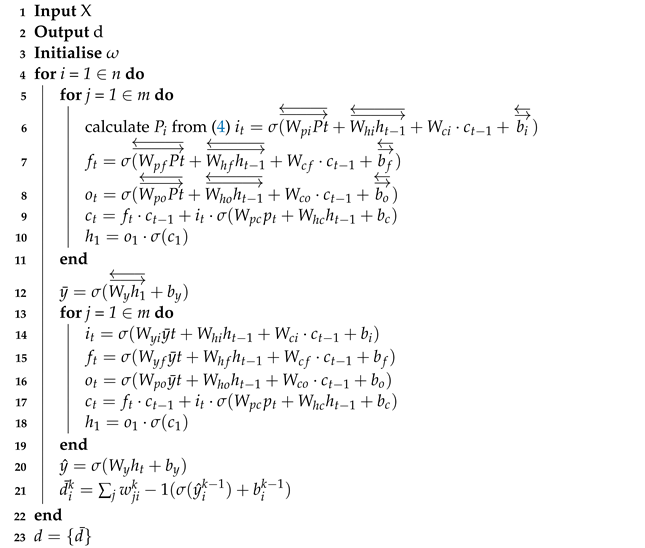

Algorithm 1: CBLSTM-AE Algorithm.

4. Framework Evaluation

4.1. Dataset Description

4.2. Experimental Setup and Evaluation Metrics

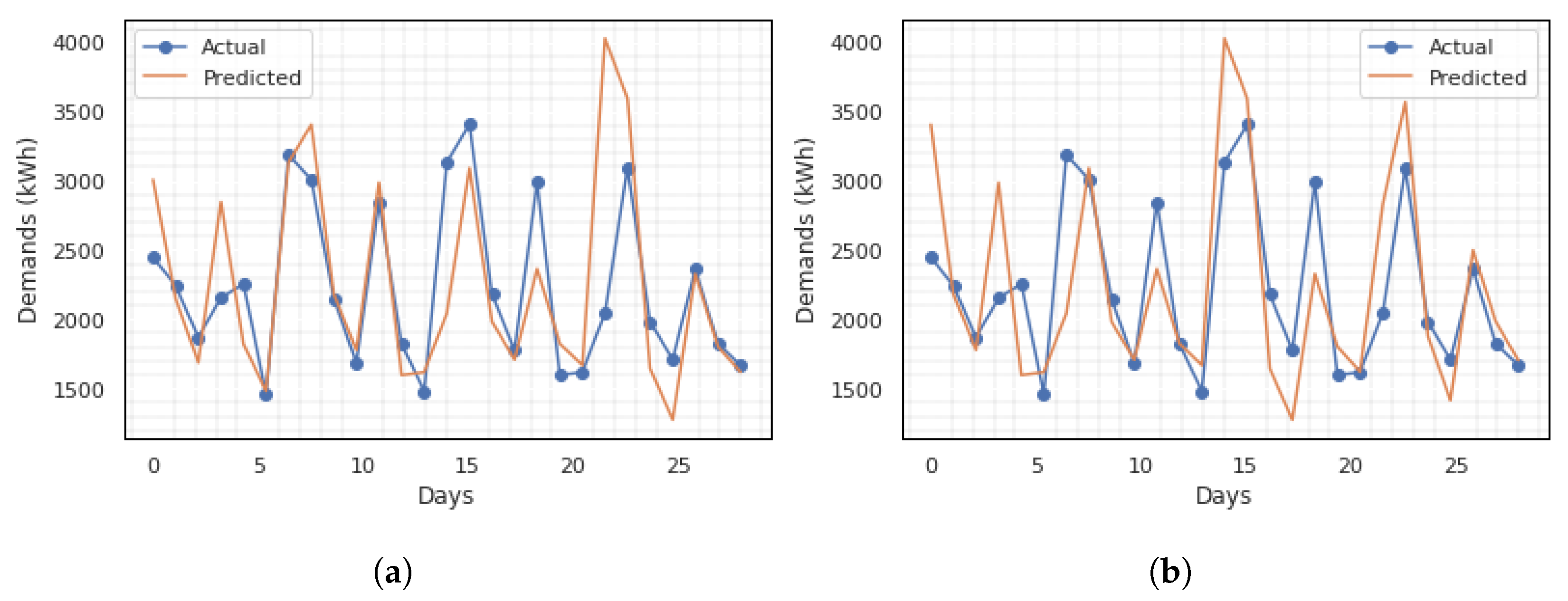

5. Results and Discussion

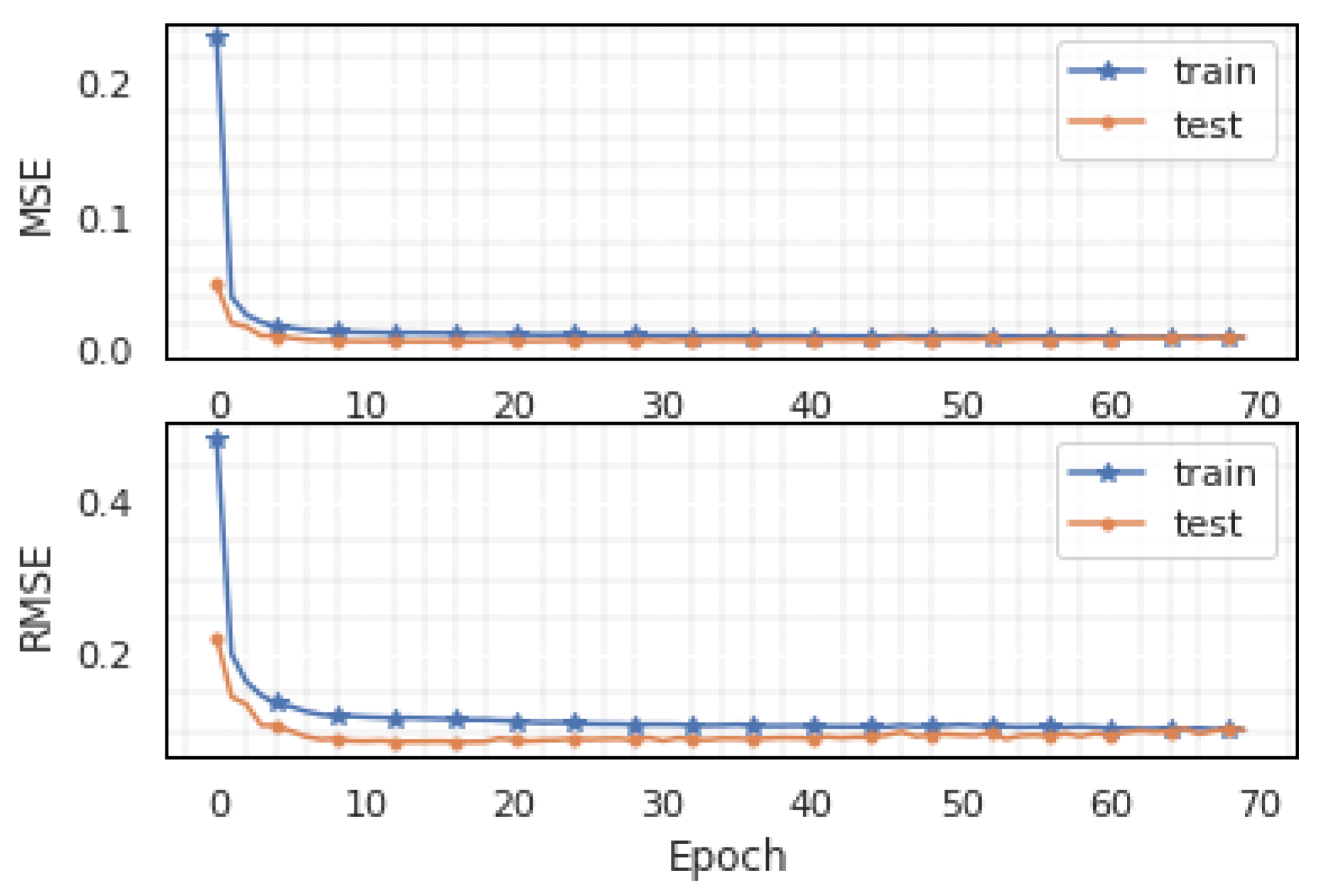

5.1. Experiment on Rolling Window Input

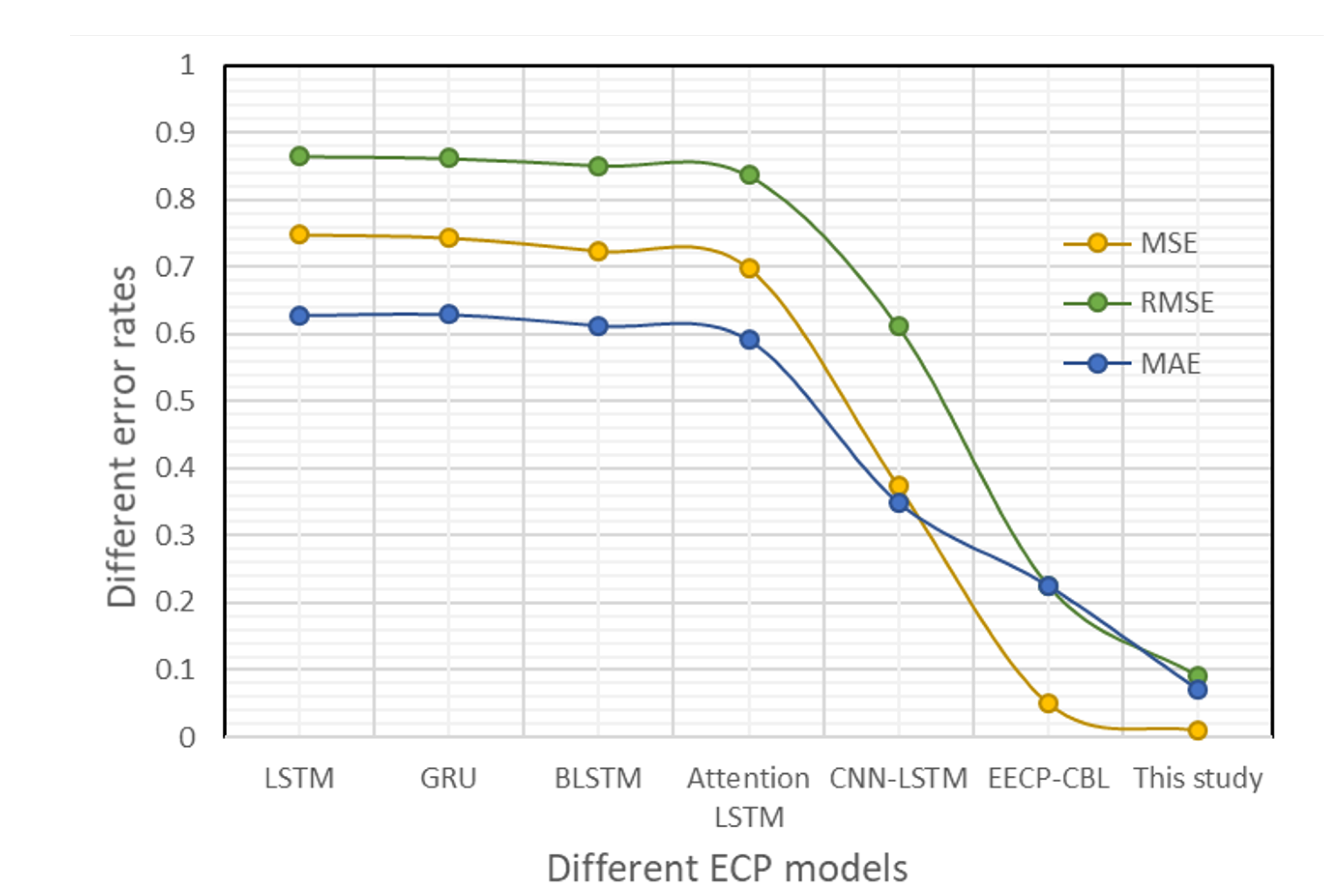

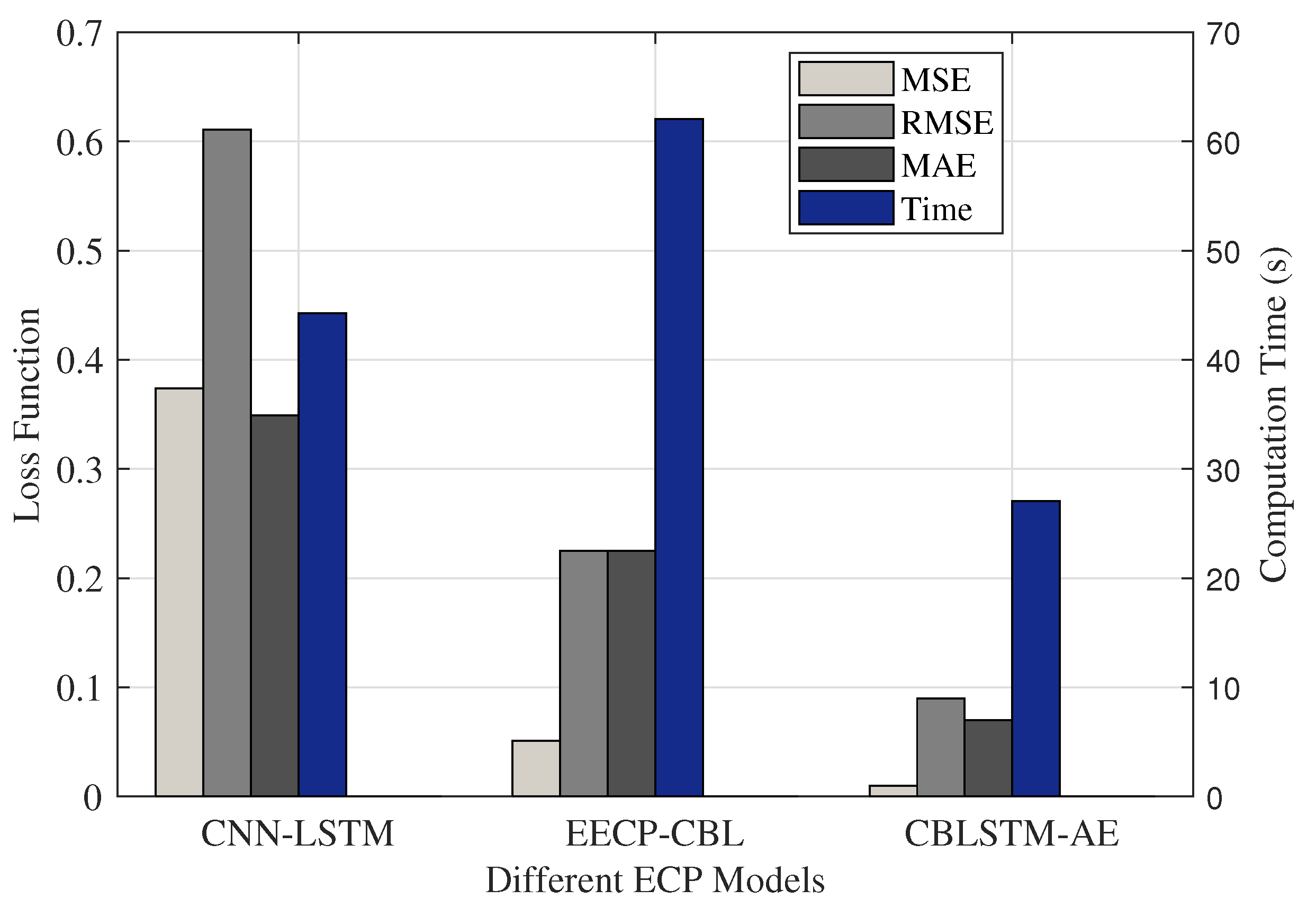

5.2. Comparison with State-of-the-Art

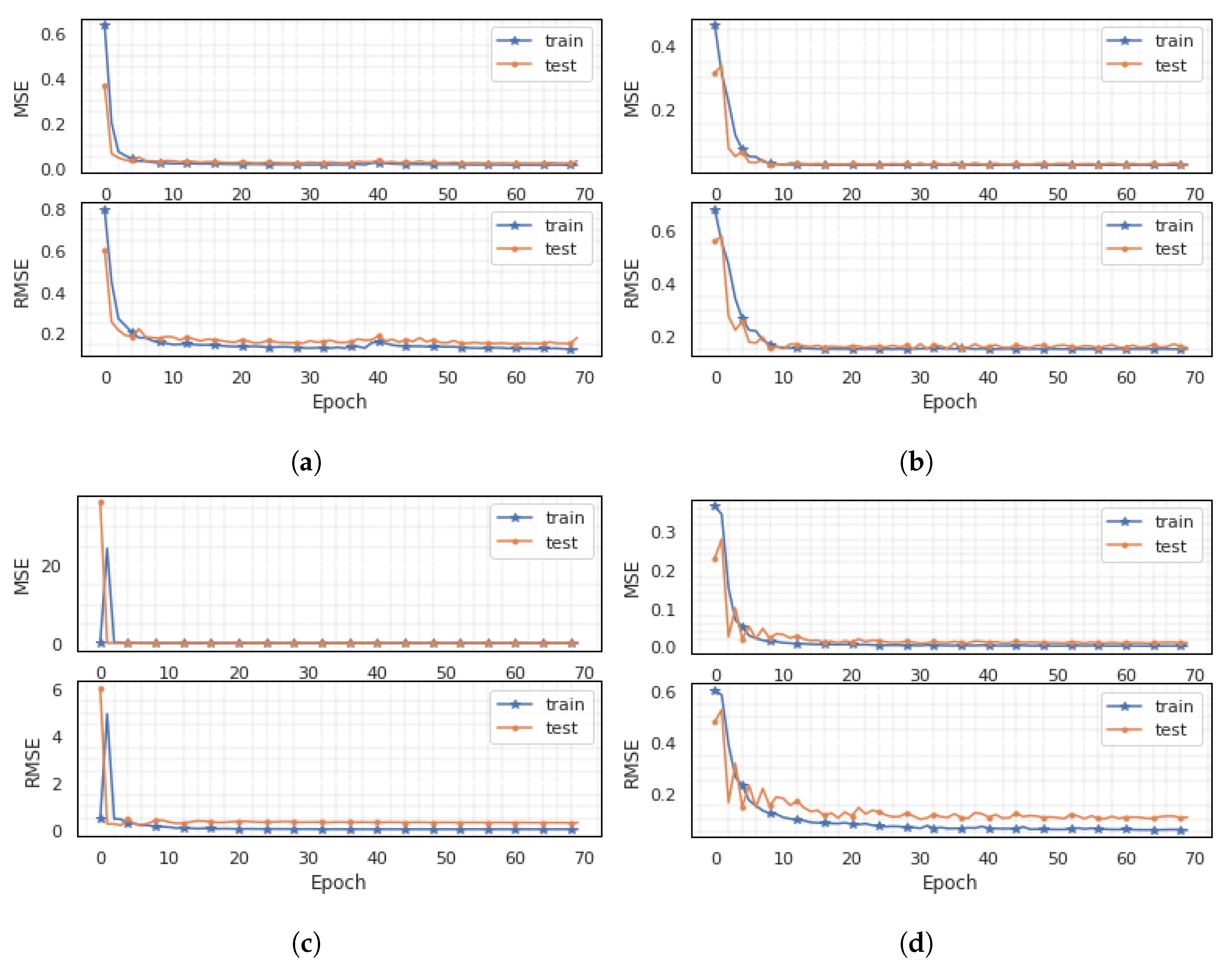

5.3. Generalisation Ability of CBLSTM-AE

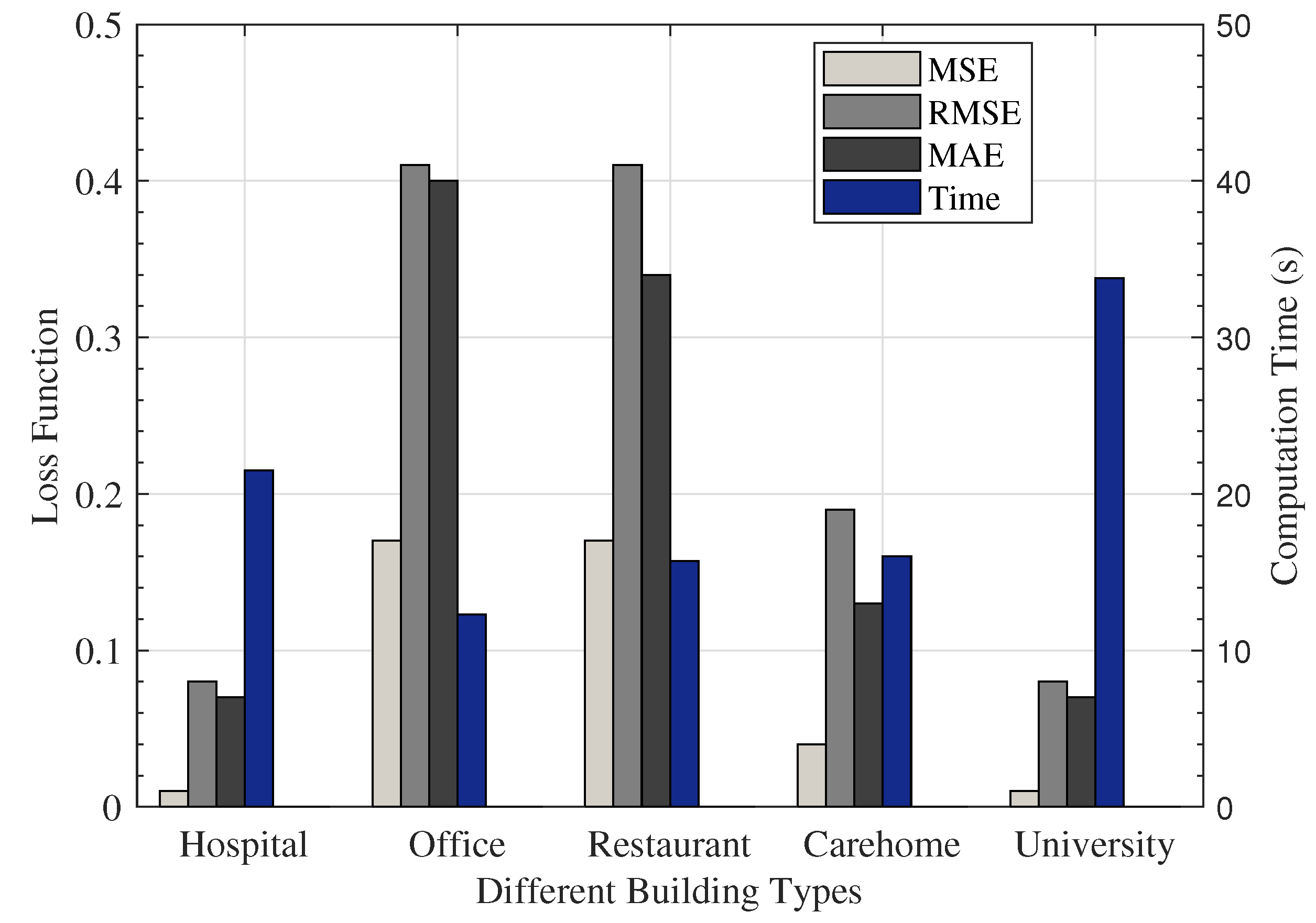

5.4. Performance Analysis for Different Datasets

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Velickov, S. Energy 4.0: Digital Twin for Electric Utilities, Grid Edge and Internet of Electricity. 2020. Available online: https://www.linkedin.com/pulse/energy-40-digital-twin-electric-utilities-grid-edge-slavco-velickov/ (accessed on 16 November 2020).

2. Bunn, D.; Farmer, E.D. Comparative Models for Electrical Load Forecasting; John Wiley and Sons Inc.: New York, NY, USA, 1985.

3. Chitalia, G.; Pipattanasomporn, M.; Garg, V.; Rahman, S. Robust short-term electrical load forecasting framework for commercial buildings using deep recurrent neural networks. Appl. Energy 2020, 278, 115410.

4. Jogunola, O.; Adebisi, B.; Ikpehai, A.; Popoola, S.I.; Gui, G.; Gacanin, H.; Ci, S. Consensus Algorithms and Deep Reinforcement Learning in Energy Market: A Review. IEEE Internet Things J. 2020, 8, 4211–4227.

5. Jogunola, O.; Wang, W.; Adebisi, B. Prosumers Matching and Least-Cost Energy Path Optimisation for Peer-to-Peer Energy Trading. IEEE Access 2020, 8, 95266–95277.

6. Jogunola, O.; Tsado, Y.; Adebisi, B.; Hammoudeh, M. VirtElect: A Peer-to-Peer Trading Platform for Local Energy Transactions. IEEE Internet Things J. 2021.

7. Kim, T.Y.; Cho, S.B. Predicting residential energy consumption using CNN-LSTM neural networks. Energy 2019, 182, 72–81.

8. Khan, Z.A.; Hussain, T.; Ullah, A.; Rho, S.; Lee, M.; Baik, S.W. Towards Efficient Electricity Forecasting in Residential and Commercial Buildings: A Novel Hybrid CNN with a LSTM-AE based Framework. Sensors 2020, 20, 1399.

9. Somu, N.; MR, G.R.; Ramamritham, K. A hybrid model for building energy consumption forecasting using long short term memory networks. Appl. Energy 2020, 261, 114131.

10. Zaidi, B.H.; Ullah, I.; Alam, M.; Adebisi, B.; Azad, A.; Ansari, A.R.; Nawaz, R. Incentive Based Load Shedding Management in a Microgrid Using Combinatorial Auction with IoT Infrastructure. Sensors 2021, 21, 1935.

11. Muzaffar, S.; Afshari, A. Short-term load forecasts using LSTM networks. Energy Procedia 2019, 158, 2922–2927.

12. Dagdougui, H.; Bagheri, F.; Le, H.; Dessaint, L. Neural network model for short-term and very-short-term load forecasting in district buildings. Energy Build. 2019, 203, 109408.

13. Fan, C.; Xiao, F.; Zhao, Y. A short-term building cooling load prediction method using deep learning algorithms. Appl. Energy 2017, 195, 222–233.

14. Kim, J.; Moon, J.; Hwang, E.; Kang, P. Recurrent inception convolution neural network for multi short-term load forecasting. Energy Build. 2019, 194, 328–341.

15. Cai, M.; Pipattanasomporn, M.; Rahman, S. Day-ahead building-level load forecasts using deep learning vs. traditional time-series techniques. Appl. Energy 2019, 236, 1078–1088.

16. Wang, Z.; Wang, Y.; Zeng, R.; Srinivasan, R.S.; Ahrentzen, S. Random Forest based hourly building energy prediction. Energy Build. 2018, 171, 11–25.

17. Candanedo, L.M.; Feldheim, V.; Deramaix, D. Data driven prediction models of energy use of appliances in a low-energy house. Energy Build. 2017, 140, 81–97.

18. Le, T.; Vo, M.T.; Vo, B.; Hwang, E.; Rho, S.; Baik, S.W. Improving electric energy consumption prediction using CNN and Bi-LSTM. Appl. Sci. 2019, 9, 4237.

19. Ullah, F.U.M.; Ullah, A.; Haq, I.U.; Rho, S.; Baik, S.W. Short-term prediction of residential power energy consumption via CNN and multi-layer bi-directional LSTM networks. IEEE Access 2019, 8, 123369–123380.

20. Massana, J.; Pous, C.; Burgas, L.; Melendez, J.; Colomer, J. Short-term load forecasting in a non-residential building contrasting models and attributes. Energy Build. 2015, 92, 322–330.

21. Yildiz, B.; Bilbao, J.I.; Sproul, A.B. A review and analysis of regression and machine learning models on commercial building electricity load forecasting. Renew. Sustain. Energy Rev. 2017, 73, 1104–1122.

22. Walter, T.; Price, P.N.; Sohn, M.D. Uncertainty estimation improves energy measurement and verification procedures. Appl. Energy 2014, 130, 230–236.

23. Gianniou, P.; Liu, X.; Heller, A.; Nielsen, P.S.; Rode, C. Clustering-based analysis for residential district heating data. Energy Convers. Manag. 2018, 165, 840–850.

24. Khan, A.; Chiroma, H.; Imran, M.; Bangash, J.I.; Asim, M.; Hamza, M.F.; Aljuaid, H. Forecasting electricity consumption based on machine learning to improve performance: A case study for the organization of petroleum exporting countries (OPEC). Comput. Electr. Eng. 2020, 86, 106737.

25. Zhong, H.; Wang, J.; Jia, H.; Mu, Y.; Lv, S. Vector field-based support vector regression for building energy consumption prediction. Appl. Energy 2019, 242, 403–414.

26. Ahmad, M. Seasonal decomposition of electricity consumption data. Rev. Integr. Bus. Econ. Res. 2017, 6, 271.

27. Fayaz, M.; Shah, H.; Aseere, A.M.; Mashwani, W.K.; Shah, A.S. A framework for prediction of household energy consumption using feed forward back propagation neural network. Technologies 2019, 7, 30.

28. Fayaz, M.; Kim, D. A prediction methodology of energy consumption based on deep extreme learning machine and comparative analysis in residential buildings. Electronics 2018, 7, 222.

29. Li, C.; Ding, Z.; Zhao, D.; Yi, J.; Zhang, G. Building energy consumption prediction: An extreme deep learning approach. Energies 2017, 10, 1525.

30. Almalaq, A.; Zhang, J.J. Evolutionary deep learning-based energy consumption prediction for buildings. IEEE Access 2018, 7, 1520–1531.

31. Wen, L.; Zhou, K.; Yang, S.; Lu, X. Optimal load dispatch of community microgrid with deep learning based solar power and load forecasting. Energy 2019, 171, 1053–1065.

32. Hebrail, G.; Berard, A. Individual Household Electric Power Consumption Data Set. Available online: https://archive.ics.uci.edu/ml/datasets/individual+household+electric+power+consumption (accessed on 16 November 2020).

33. Shi, H.; Xu, M.; Li, R. Deep learning for household load forecasting—A novel pooling deep RNN. IEEE Trans. Smart Grid 2017, 9, 5271–5280.

34. Ullah, A.; Haydarov, K.; Ul Haq, I.; Muhammad, K.; Rho, S.; Lee, M.; Baik, S.W. Deep learning assisted buildings energy consumption profiling using smart meter data. Sensors 2020, 20, 873.

35. Ullah, I.; Ahmad, R.; Kim, D. A prediction mechanism of energy consumption in residential buildings using hidden markov model. Energies 2018, 11, 358.

36. Google. Welcome to Colaboratory. 2020. Available online: https://colab.research.google.com/ (accessed on 16 November 2020).

37. Brownlee, J. Better Deep Learning: Train Faster, Reduce Overfitting, and Make Better Predictions; Machine Learning Mastery: Melbourne, Australia, 2018.

| Prediction Models | Ref. | Year | Method | Period | Description |
|---|---|---|---|---|---|
| Statistical Models | [16] | 2018 | Random forest | Hourly, monthly, yearly | Hourly building energy prediction using trained RF with different parameter tuning. They also investigated the impact of behaviour changes on prediction accuracy. |
| | [17] | 2017 | Multiple LR, RF, gradient boosting | - | Discussed feature filtering and ranking using different statistical modelling. |
| Machine Learning Models | [27] | 2019 | Neural network | Short-term: hourly, day, weekly | Proposed a feedforward backpropagation neural network on energy consumption data with statistical moments. |
| | [25] | 2019 | SVR | Hourly | A vector field-based SVR for ECP is proposed by approximating the high nonlinearity between input and output to linearity. |
| Deep Learning Models | [28] | 2018 | Deep extreme learning machine | Weekly, monthly | The authors explored deep extreme learning machine (DELM), adaptive neuro-fuzzy inference system (ANFIS), and ANN. They proposed DELM for ECP due to its performance over ANN and ANFIS. |
| | [33] | 2017 | Pooling-based DRNN | - | Addresses overfitting forecasting performance using a pooling-based DRNN, testing their solution on real smart meters in Ireland. |
| Hybrid Models | [7] | 2019 | CNN-LSTM | Short and medium-term | CNNs for spatial features extraction and LSTM for temporal features modelling. |
| | [18] | 2019 | CNN-BLSTM (EECP-CBL) | Short, medium and long-term | CNNs for spatial features extraction and BLSTM for features modelling for final prediction. |
| | [14] | 2019 | RICNN | 48 time steps, 30 min interval | Integrate RNN and 1-D convolution inception module to calibrate hidden state vector values and prediction time. |
| | [34] | 2020 | AE and SOM | - | Deep AE for representational learning with result fed into an adaptive self-organizing map (SOM) clustering algorithm, before performing a statistical analysis on the obtained clustered data for prediction. |
| This study | | 2021 | CBLSTM-AE | 30 min interval and 24 h | Proposed a hybrid architecture of CNN with an AE-BLSTM as the encoder and LSTM as the decoder to correctly predict electricity consumption, while testing the generalisation ability on various datasets in a real environment. |

| Building Name | Average Demand (kWh) | Data Length (Weeks) | Building Type | Occupancy | Location |
|---|---|---|---|---|---|
| Hospital | 13,306.99 | 144 | Hospital | ∼450 | UK |
| Office | | 92 | SME | ∼30 | Canada |
| Restaurant | | 71 | SME | ∼60 | UK |
| Carehome | | 69 | Residential | ∼30 | UK |
| MMU | | 260 | University | ∼400 | UK |

| No. | Layer Type | Neurons | Param |
|---|---|---|---|
| 1 | Input | 8 | 8 |
| 2 | Convolution1D | 64 | 1600 |
| 3 | Convolution1D | 64 | 12,352 |
| 4 | MaxPooling1D | 64 | 0 |
| 5 | Bidirectional | 128 | 66,048 |
| 6 | Flatten | 128 | 0 |
| 7 | Repeat vector | 128 | 0 |
| 8 | LSTM | 64 | 49,408 |
| 9 | TimeDistributed (Dense) | 32 | 2080 |
| 10 | TimeDistributed (Dense) | 1 | 33 |
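The Param column of the layer table can be cross-checked with the standard parameter-count formulas for 1-D convolution, LSTM, and dense layers. The sketch below is an illustration under stated assumptions, not the authors' code. It assumes a kernel size of 3 for both convolutions and 64 units per direction in the bidirectional layer. Neither value is stated in the table; both are inferred because they reproduce the listed counts with 8 input features. The helper names are hypothetical.

```python
def conv1d_params(kernel_size, in_channels, filters):
    # Each filter has kernel_size * in_channels weights plus one bias.
    return (kernel_size * in_channels + 1) * filters


def lstm_params(input_dim, units):
    # Four gates, each with input weights, recurrent weights and a bias vector.
    return 4 * (units * (input_dim + units) + units)


def dense_params(input_dim, units):
    # One weight per input-output pair plus one bias per output unit.
    return input_dim * units + units


N_FEATURES = 8    # width of the Input row in the table
KERNEL_SIZE = 3   # assumption: inferred from the counts, not stated in the table

counts = {
    "conv1d_1": conv1d_params(KERNEL_SIZE, N_FEATURES, 64),
    "conv1d_2": conv1d_params(KERNEL_SIZE, 64, 64),
    # Assumption: 128 bidirectional outputs = 2 directions x 64 units each.
    "bidirectional_lstm": 2 * lstm_params(64, 64),
    # The decoder LSTM reads the 128-wide repeated encoding.
    "lstm_decoder": lstm_params(128, 64),
    "dense_32": dense_params(64, 32),
    "dense_1": dense_params(32, 1),
}

for name, value in counts.items():
    print(f"{name}: {value}")
```

Under these assumptions the six computed values match the table entries for the trainable layers (1600; 12,352; 66,048; 49,408; 2080; 33). The pooling, flatten, and repeat-vector layers carry no trainable parameters.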

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Jogunola, O.; Adebisi, B.; Hoang, K.V.; Tsado, Y.; Popoola, S.I.; Hammoudeh, M.; Nawaz, R. CBLSTM-AE: A Hybrid Deep Learning Framework for Predicting Energy Consumption. Energies 2022, 15, 810. https://doi.org/10.3390/en15030810
