Author Contributions

Conceptualisation, Y.X., F.-S.L., W.M. and E.Y.; methodology, Y.X., F.-S.L., W.M. and E.Y.; software, Y.X.; validation, Y.X., F.-S.L., W.M. and E.Y.; formal analysis, Y.X.; investigation, Y.X., F.-S.L., W.M. and E.Y.; resources, F.-S.L., W.M., E.Y. and Y.X.; data curation, Y.X.; writing—original draft preparation, Y.X.; writing—review and editing, F.-S.L., W.M., E.Y. and Y.X.; visualisation, Y.X.; supervision, F.-S.L. and W.M.; project administration, F.-S.L. and W.M.; funding acquisition, F.-S.L. and W.M. All authors have read and agreed to the published version of the manuscript.

Figure 1.

Weather Research and Forecasting Pre-Processing System domain configuration used for the numerical weather forecasts over the wind farm. The four nested domains, centred on the wind farm, are labelled d01 (the coarsest, covering the entire domain), d02, d03, and d04 (the finest).

Figure 2.

Direct interactions between the various Weather Research and Forecasting (WRF) physics options [31].

Figure 3.

Architecture diagram of the adaptive neuro-fuzzy inference system (ANFIS) model.
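
A minimal Python sketch of the forward pass through the five layers of a first-order Sugeno-type ANFIS may help when reading Figure 3. It assumes Gaussian membership functions, a grid partition of the inputs (one rule per combination of membership functions) and product firing strengths; the membership-function type, number of rules and input variables of the model in Figure 3 are not specified in this section, and parameter training (the usual hybrid least-squares and gradient-descent learning) is omitted. Tables 13–18 pair ANFIS with persistence, ARIMA and WRF-TOPSIS forecasts, which suggests it converts wind speed forecasts into wind power. The names `gaussian_mf` and `anfis_forward` are hypothetical.

```python
import math
from itertools import product


def gaussian_mf(x, centre, sigma):
    """Layer 1: degree of membership of x in a Gaussian fuzzy set."""
    return math.exp(-0.5 * ((x - centre) / sigma) ** 2)


def anfis_forward(inputs, mf_params, consequents):
    """Forward pass of a first-order Sugeno ANFIS (illustrative sketch).

    inputs:      crisp input values, e.g. [wind_speed] (assumed input choice)
    mf_params:   for each input, a list of (centre, sigma) Gaussian parameters
    consequents: for each rule, coefficients [p_1, ..., p_n, r] of the
                 linear rule output f = p_1*x_1 + ... + p_n*x_n + r
    Rules use every combination of one membership function per input.
    """
    # Layer 1: fuzzify each input against each of its membership functions.
    degrees = [[gaussian_mf(x, c, s) for c, s in params]
               for x, params in zip(inputs, mf_params)]
    # Layer 2: firing strength of each rule (product of its memberships).
    firing = [math.prod(combo) for combo in product(*degrees)]
    if len(consequents) != len(firing):
        raise ValueError(f"expected {len(firing)} rule consequents")
    # Layer 3: normalise firing strengths so they sum to one.
    total = sum(firing)
    if total == 0:
        raise ValueError("no rule fires for these inputs")
    weights = [w / total for w in firing]
    # Layer 4: weight each rule's first-order (linear) consequent.
    outputs = [w * (sum(p * x for p, x in zip(coef[:-1], inputs)) + coef[-1])
               for w, coef in zip(weights, consequents)]
    # Layer 5: sum the weighted rule outputs into the crisp result.
    return sum(outputs)


# One input, two membership functions -> two rules.
print(anfis_forward([5.0], [[(0.0, 2.0), (10.0, 2.0)]], [[0.0, 100.0], [0.0, 300.0]]))  # 200.0
```

In the usage line the input lies midway between two symmetric membership functions, so both rules fire equally and the output is the average of the two rule consequents.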

Figure 4.

Architecture diagram of the multi-hour ahead wind power forecasting system.

Figure 5.

Evaluation of the power curve model using the 4-day test dataset of the wind speed and wind power measurements.

Figure 6.

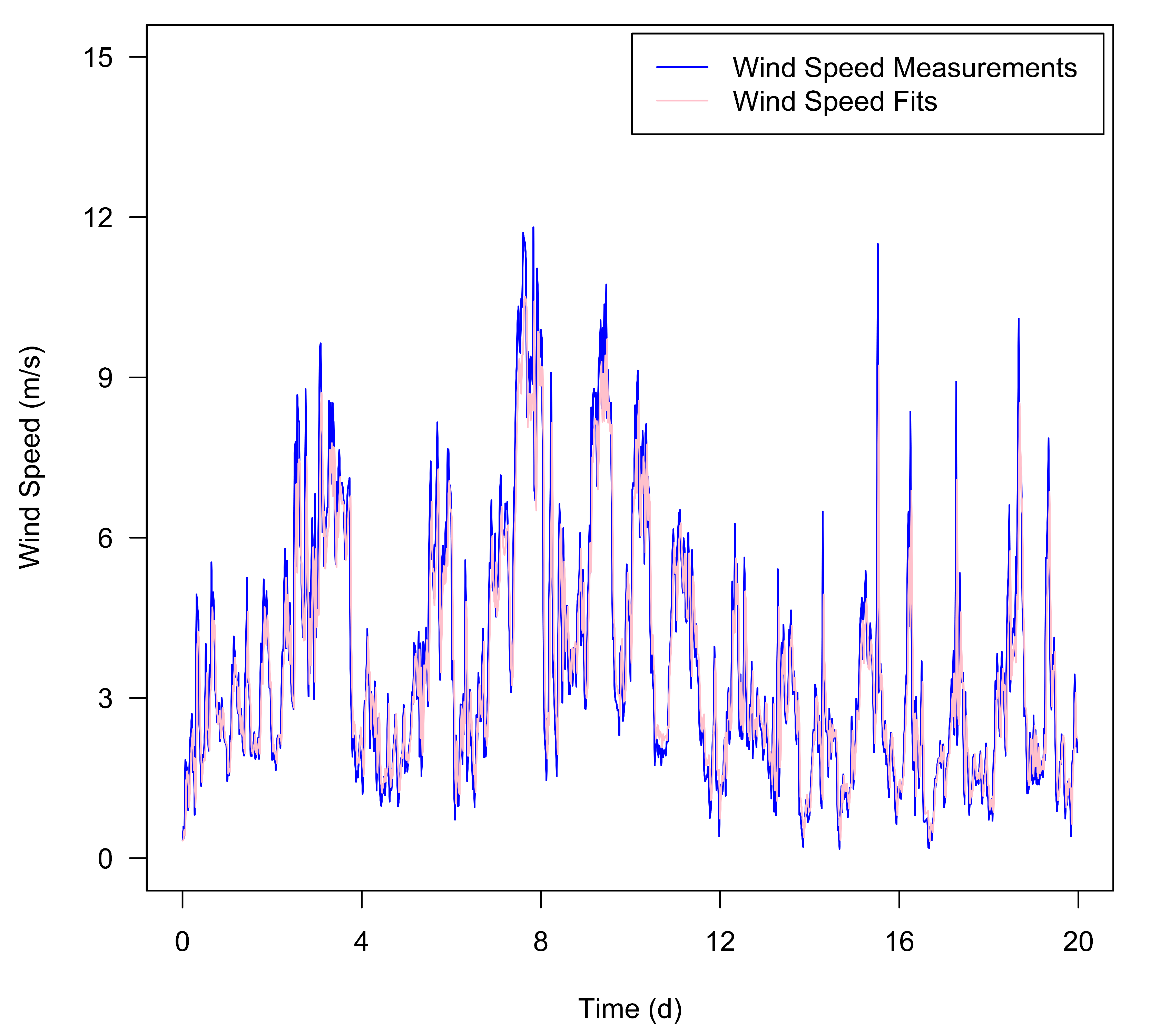

Comparison between the wind speed fits provided by the ARIMA model and the historical wind speed measurements from the 20-day training dataset.

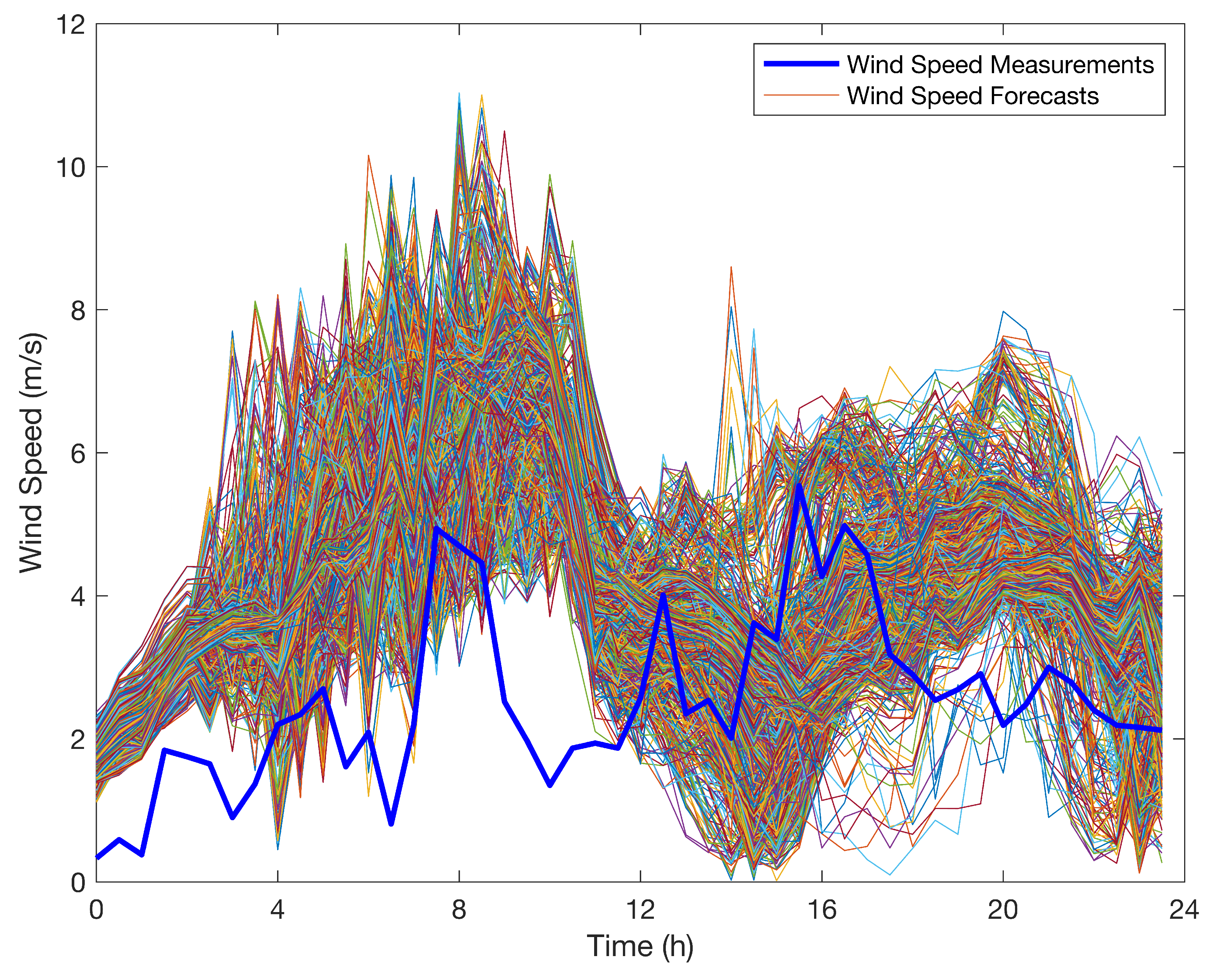

Figure 7.

Comparison of the wind speed forecasts provided by the 1334 different WRF models and the historical wind speed measurements obtained on 11 August 2015 (the first day of the training dataset).

Figure 8.

Comparison of the multi-hour ahead wind power forecasts provided by the proposed forecasting system and the historical wind power measurements from the 4-day test dataset.

Table 1.

The various parameterisation schemes (used in this study) for each of the five physics options in the WRF model: the microphysics parameterisation (mp_physics), cumulus parameterisation (cu_physics), planetary boundary-layer parameterisation (bl_pbl_physics), surface-layer parameterisation (sf_sfclay_physics), and land-surface parameterisation (sf_surface_physics) [32].

| Number | mp_physics | cu_physics | bl_pbl_physics | sf_sfclay_physics | sf_surface_physics |
|---|---|---|---|---|---|
| 1 | Purdue Lin | Kain–Fritsch | Yonsei University | Revised MM5 | Rapid Update Cycle |
| 2 | Ferrier Eta | Betts–Miller–Janjic | Mellor–Yamada–Janjic | Eta Similarity | Noah-Multi-Physics |
| 3 | WRF Single-Moment 6-Class | Grell–Freitas | Quasi-Normal Scale Elimination | Quasi-Normal Scale Elimination | Community Land Model Version 4 |
| 4 | Thompson et al. | Grell 3D | Mellor–Yamada Nakanishi and Niino Level 3 | Mellor–Yamada Nakanishi and Niino | |
| 5 | Milbrandt–Yau Double-Moment 7-Class | Zhang–McFarlane | BouLac | Total Energy–Mass Flux | |
| 6 | Stony Brook University (Y. Lin) | Kain–Fritsch–Cumulus Potential | University of Washington | | |
| 7 | WRF Double-Moment 6-Class | Multi-Scale Kain–Fritsch | Total Energy–Mass Flux | | |
| 8 | NSSL Single-Moment 6-Class | New Tiedtke | Shin–Hong | | |
| 9 | NSSL-LFO Single-Moment 6-Class | | Grenier–Bretherton–McCaa | | |
| 10 | Thompson Aerosol-Aware | | | | |
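
Each candidate WRF configuration combines one scheme per physics option in Table 1. The Python sketch below enumerates these combinations with `itertools.product`. The full Cartesian product has 10 × 8 × 9 × 5 × 3 = 10,800 entries, more than the 1334 WRF models compared in Figure 7, so the models actually run are a subset whose selection rule is not given in this section. Not every pairing is valid in WRF; for example, the Mellor–Yamada–Janjic PBL scheme is run with the Eta Similarity surface layer. The `is_compatible` filter encodes only that single rule and is a hypothetical placeholder, not a complete compatibility check.

```python
from itertools import product

MYJ_PBL = "Mellor–Yamada–Janjic"
ETA_SFCLAY = "Eta Similarity"

# Scheme names per physics option, transcribed from Table 1.
SCHEMES = {
    "mp_physics": [
        "Purdue Lin", "Ferrier Eta", "WRF Single-Moment 6-Class", "Thompson et al.",
        "Milbrandt–Yau Double-Moment 7-Class", "Stony Brook University (Y. Lin)",
        "WRF Double-Moment 6-Class", "NSSL Single-Moment 6-Class",
        "NSSL-LFO Single-Moment 6-Class", "Thompson Aerosol-Aware",
    ],
    "cu_physics": [
        "Kain–Fritsch", "Betts–Miller–Janjic", "Grell–Freitas", "Grell 3D",
        "Zhang–McFarlane", "Kain–Fritsch–Cumulus Potential",
        "Multi-Scale Kain–Fritsch", "New Tiedtke",
    ],
    "bl_pbl_physics": [
        "Yonsei University", MYJ_PBL, "Quasi-Normal Scale Elimination",
        "Mellor–Yamada Nakanishi and Niino Level 3", "BouLac",
        "University of Washington", "Total Energy–Mass Flux", "Shin–Hong",
        "Grenier–Bretherton–McCaa",
    ],
    "sf_sfclay_physics": [
        "Revised MM5", ETA_SFCLAY, "Quasi-Normal Scale Elimination",
        "Mellor–Yamada Nakanishi and Niino", "Total Energy–Mass Flux",
    ],
    "sf_surface_physics": [
        "Rapid Update Cycle", "Noah-Multi-Physics", "Community Land Model Version 4",
    ],
}


def is_compatible(config):
    """Hypothetical filter: only the MYJ PBL -> Eta Similarity pairing rule."""
    if config["bl_pbl_physics"] == MYJ_PBL:
        return config["sf_sfclay_physics"] == ETA_SFCLAY
    return True


def enumerate_configurations():
    """Yield one dict per combination of the Table 1 schemes."""
    keys = list(SCHEMES)
    for values in product(*(SCHEMES[k] for k in keys)):
        yield dict(zip(keys, values))


all_configs = list(enumerate_configurations())
candidates = [c for c in all_configs if is_compatible(c)]
print(len(all_configs), len(candidates))  # 10800 9840
```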

Table 2.

Four common types of criteria used in the technique for order of preference by similarity to ideal solution (TOPSIS) scheme and their characteristics.

| Type | Characteristic |
|---|---|
| Benefit criterion | The bigger, the better. |
| Cost criterion | The smaller, the better. |
| Intermediate criterion | The closer to a specific value, the better. |
| Interval criterion | The closer to a specific interval, the better. |
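
A common way to handle the four criterion types of Table 2 in TOPSIS is to first rescale every criterion so that larger is always better. The Python sketch below does this with linear min–max scaling for benefit and cost criteria and with a score that falls linearly with distance from the target value or interval for intermediate and interval criteria. This is an illustrative choice, since the normalisation used in the paper is not given in this section, and `to_benefit` is a hypothetical helper name.

```python
def to_benefit(values, kind, target=None, interval=None):
    """Rescale one criterion column to [0, 1] so that larger is always better.

    kind is one of the four types in Table 2:
      "benefit"      - the bigger, the better
      "cost"         - the smaller, the better
      "intermediate" - the closer to `target`, the better
      "interval"     - the closer to `interval` = (low, high), the better
    """
    if kind in ("benefit", "cost"):
        lo, hi = min(values), max(values)
        span = hi - lo
        if span == 0:
            return [1.0] * len(values)  # every alternative ties
        if kind == "benefit":
            return [(v - lo) / span for v in values]
        return [(hi - v) / span for v in values]
    if kind == "intermediate":
        if target is None:
            raise ValueError("intermediate criteria need a target")
        dist = [abs(v - target) for v in values]
    elif kind == "interval":
        if interval is None:
            raise ValueError("interval criteria need (low, high)")
        low, high = interval
        # Zero distance anywhere inside the interval.
        dist = [max(low - v, 0.0, v - high) for v in values]
    else:
        raise ValueError(f"unknown criterion type: {kind!r}")
    worst = max(dist)
    if worst == 0:
        return [1.0] * len(values)  # every alternative hits the target
    return [1.0 - d / worst for d in dist]
```

For example, treating mean bias as an intermediate criterion with target 0, three models with MB values of 0.21, 0.70 and −0.14 m·s⁻¹ receive scores of about 0.7, 0.0 and 0.8 respectively.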

Table 3.

Segmental and overall rankings of the top six candidate WRF models as determined using the 20-day wind speed training dataset.

| Model Index | 1st 4-Day | 2nd 4-Day | 3rd 4-Day | 4th 4-Day | 5th 4-Day | 20-Day |
|---|---|---|---|---|---|---|
| 1 | 2 | 1 | 2 | 5 | 2 | 1 |
| 2 | 1 | 2 | 3 | 2 | 6 | 5 |
| 3 | 4 | 7 | 1 | 7 | 3 | 4 |
| 4 | 5 | 5 | 6 | 1 | 1 | 3 |
| 5 | 3 | 4 | 4 | 4 | 4 | 2 |
| 6 | 6 | 3 | 5 | 47 | 5 | 6 |

Table 4.

Segmental and overall rankings of the 5-in-1 (ensemble) and top five WRF models as determined using the 20-day wind speed training dataset.

| Model Index | 1st 4-Day | 2nd 4-Day | 3rd 4-Day | 4th 4-Day | 5th 4-Day | 20-Day |
|---|---|---|---|---|---|---|
| 1 | 2 | 2 | 3 | 4 | 3 | 2 |
| 2 | 3 | 3 | 4 | 5 | 7 | 6 |
| 3 | 5 | 8 | 1 | 7 | 4 | 4 |
| 4 | 6 | 6 | 7 | 2 | 2 | 5 |
| 5 | 4 | 5 | 5 | 3 | 5 | 3 |
| 5-1 | 1 | 1 | 2 | 1 | 1 | 1 |
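
The 5-in-1 (ensemble) model combines the top five WRF models, and the segmental rankings split the 20-day training period into five consecutive 4-day blocks. The Python sketch below assumes an equal-weight mean of the five member forecasts at each time step; that combination rule is an assumption, as it is not stated in this section. It also assumes 30-min data, so each 4-day segment holds 4 × 48 = 192 values. Both helper names are hypothetical.

```python
def ensemble_mean(member_forecasts):
    """Equal-weight ensemble (assumed): average the member forecasts at each time step."""
    if not member_forecasts:
        raise ValueError("need at least one member forecast")
    n_steps = len(member_forecasts[0])
    if any(len(m) != n_steps for m in member_forecasts):
        raise ValueError("all members must cover the same time steps")
    return [sum(step) / len(member_forecasts) for step in zip(*member_forecasts)]


def split_into_segments(series, n_segments=5):
    """Split a series into n equal consecutive segments (20 days -> five 4-day blocks)."""
    size, remainder = divmod(len(series), n_segments)
    if remainder:
        raise ValueError("series length must be divisible by n_segments")
    return [series[i * size:(i + 1) * size] for i in range(n_segments)]


# Assuming 30-min data: 20 days x 48 steps = 960 values -> five segments of 192.
segments = split_into_segments(list(range(960)))
print([len(s) for s in segments])  # [192, 192, 192, 192, 192]
```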

Table 5.

Rankings of the 5-in-1 (ensemble) and top five WRF models by similarity score, as determined using the 4-day wind speed test dataset.

| Rank | Model Index | Similarity Score |
|---|---|---|
| 1 | 3 | 0.9856 |
| 2 | 5-1 | 0.9641 |
| 3 | 4 | 0.9489 |
| 4 | 1 | 0.9447 |
| 5 | 5 | 0.7364 |
| 6 | 2 | 0.6197 |

Table 6.

Evaluation results for the wind speed forecasting using the corrected and original 5-in-1 (ensemble) WRF models applied to the 4-day test dataset.

| Model | MB (m·s⁻¹) | MAE (m·s⁻¹) | RMSE (m·s⁻¹) | IA | MAPE | SMAPE |
|---|---|---|---|---|---|---|
| Corrected 5-in-1 (ensemble) WRF model | 0.21 | 1.07 | 1.35 | 0.89 | 53.71% | 36.73% |
| Original 5-in-1 (ensemble) WRF model | 0.70 | 1.29 | 1.61 | 0.83 | 64.59% | 42.15% |
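
For reference, the six wind speed metrics in Table 6 can be computed as in the Python sketch below. It assumes MB = mean(forecast − observation), so positive MB values would indicate over-forecasting, Willmott's index of agreement for IA, and the common SMAPE variant 2|F − O|/(|F| + |O|). The exact formulations used in the paper are not restated in this section, and `evaluate` is a hypothetical name.

```python
import math


def evaluate(forecast, observed):
    """Return MB, MAE, RMSE, IA, MAPE (%) and SMAPE (%) for paired series.

    Assumed formulations:
      MB    = mean(F - O)                          positive = over-forecasting
      IA    = 1 - sum((F - O)^2) / sum((|F - mean(O)| + |O - mean(O)|)^2)
      MAPE  = mean(|F - O| / |O|), skipping O == 0
      SMAPE = mean(2|F - O| / (|F| + |O|)), skipping F == O == 0
    """
    if len(forecast) != len(observed) or not observed:
        raise ValueError("need two non-empty series of equal length")
    n = len(observed)
    errors = [f - o for f, o in zip(forecast, observed)]
    sq_sum = sum(e * e for e in errors)
    mean_obs = sum(observed) / n
    ia_denom = sum((abs(f - mean_obs) + abs(o - mean_obs)) ** 2
                   for f, o in zip(forecast, observed))
    ape = [abs(f - o) / abs(o) for f, o in zip(forecast, observed) if o != 0]
    sape = [2 * abs(f - o) / (abs(f) + abs(o))
            for f, o in zip(forecast, observed) if abs(f) + abs(o) > 0]
    return {
        "MB": sum(errors) / n,
        "MAE": sum(abs(e) for e in errors) / n,
        "RMSE": math.sqrt(sq_sum / n),
        # A zero denominator means forecast and observations are all equal to mean(O).
        "IA": 1.0 - sq_sum / ia_denom if ia_denom > 0 else 1.0,
        "MAPE": 100.0 * sum(ape) / len(ape) if ape else float("nan"),
        "SMAPE": 100.0 * sum(sape) / len(sape) if sape else 0.0,
    }
```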

Table 7.

Four-day average mean bias for the wind speed forecasting using the persistence, autoregressive integrated moving average (ARIMA), and WRF-TOPSIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Persistence Model (m·s⁻¹) | ARIMA Model (m·s⁻¹) | WRF-TOPSIS Model (m·s⁻¹) |
|---|---|---|---|
| 30 min | −0.01 | −0.14 | 0.21 |
| 1 h | −0.03 | −0.20 | 0.21 |
| 1.5 h | −0.05 | −0.29 | 0.21 |
| 2 h | −0.05 | −0.31 | 0.21 |
| 3 h | −0.11 | −0.28 | 0.21 |
| 4 h | −0.18 | −0.45 | 0.21 |
| 6 h | −0.32 | −0.32 | 0.21 |
| 8 h | −0.42 | −0.67 | 0.21 |
| 12 h | −0.57 | −0.59 | 0.21 |
| 24 h | −0.42 | −0.78 | 0.21 |
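
The persistence baseline in Tables 7–12 presumably takes its standard form, using the most recent measurement as the forecast at every horizon. The minimal Python sketch below assumes 30-min data, so a horizon of k half-hours is a shift of k steps (1 h = 2 steps, 24 h = 48 steps), and computes the persistence mean bias per horizon. All names are hypothetical.

```python
# Forecast time horizons from Tables 7-18 as numbers of 30-min steps (assumes 30-min data).
HORIZON_STEPS = {"30 min": 1, "1 h": 2, "1.5 h": 3, "2 h": 4, "3 h": 6,
                 "4 h": 8, "6 h": 12, "8 h": 16, "12 h": 24, "24 h": 48}


def persistence_forecast(series, steps_ahead):
    """Pair each persistence forecast with the observation it targets.

    The forecast for time t + k is the observation at t, so
    forecast[i] = series[i] and observed[i] = series[i + k].
    """
    if not 1 <= steps_ahead < len(series):
        raise ValueError("steps_ahead must be between 1 and len(series) - 1")
    return series[:-steps_ahead], series[steps_ahead:]


def mean_bias(forecast, observed):
    """Mean of (forecast - observed); negative values mean under-forecasting."""
    return sum(f - o for f, o in zip(forecast, observed)) / len(observed)


def persistence_mb_by_horizon(series):
    """MB of the persistence model at every horizon the series is long enough for."""
    return {name: mean_bias(*persistence_forecast(series, k))
            for name, k in HORIZON_STEPS.items() if k < len(series)}
```

On a steadily rising series, persistence under-forecasts and the magnitude of its mean bias grows with the horizon.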

Table 8.

Four-day average mean absolute error for the wind speed forecasting using the persistence, ARIMA, and WRF-TOPSIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Persistence Model (m·s⁻¹) | ARIMA Model (m·s⁻¹) | WRF-TOPSIS Model (m·s⁻¹) |
|---|---|---|---|
| 30 min | 0.61 | 0.61 | 1.07 |
| 1 h | 0.83 | 0.72 | 1.07 |
| 1.5 h | 0.99 | 0.83 | 1.07 |
| 2 h | 1.10 | 0.95 | 1.07 |
| 3 h | 1.22 | 0.95 | 1.07 |
| 4 h | 1.41 | 1.06 | 1.07 |
| 6 h | 1.62 | 1.32 | 1.07 |
| 8 h | 1.78 | 1.37 | 1.07 |
| 12 h | 2.22 | 1.49 | 1.07 |
| 24 h | 2.16 | 1.44 | 1.07 |

Table 9.

Four-day average root mean squared error for the wind speed forecasting using the persistence, ARIMA, and WRF-TOPSIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Persistence Model (m·s⁻¹) | ARIMA Model (m·s⁻¹) | WRF-TOPSIS Model (m·s⁻¹) |
|---|---|---|---|
| 30 min | 0.91 | 0.91 | 1.35 |
| 1 h | 1.24 | 1.05 | 1.35 |
| 1.5 h | 1.45 | 1.24 | 1.35 |
| 2 h | 1.54 | 1.36 | 1.35 |
| 3 h | 1.68 | 1.37 | 1.35 |
| 4 h | 1.86 | 1.50 | 1.35 |
| 6 h | 2.07 | 1.67 | 1.35 |
| 8 h | 2.32 | 1.77 | 1.35 |
| 12 h | 2.77 | 1.92 | 1.35 |
| 24 h | 2.67 | 1.91 | 1.35 |

Table 10.

Four-day average index of agreement for the wind speed forecasting using the persistence, ARIMA, and WRF-TOPSIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Persistence Model | ARIMA Model | WRF-TOPSIS Model |
|---|---|---|---|
| 30 min | 0.94 | 0.95 | 0.89 |
| 1 h | 0.89 | 0.93 | 0.89 |
| 1.5 h | 0.86 | 0.90 | 0.89 |
| 2 h | 0.85 | 0.89 | 0.89 |
| 3 h | 0.83 | 0.89 | 0.89 |
| 4 h | 0.80 | 0.88 | 0.89 |
| 6 h | 0.77 | 0.85 | 0.89 |
| 8 h | 0.72 | 0.85 | 0.89 |
| 12 h | 0.64 | 0.83 | 0.89 |
| 24 h | 0.67 | 0.84 | 0.89 |

Table 11.

Four-day average mean absolute percentage error for the wind speed forecasting using the persistence, ARIMA, and WRF-TOPSIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Persistence Model | ARIMA Model | WRF-TOPSIS Model |
|---|---|---|---|
| 30 min | 22.86% | 23.31% | 53.71% |
| 1 h | 31.46% | 27.76% | 53.71% |
| 1.5 h | 38.37% | 30.53% | 53.71% |
| 2 h | 42.70% | 36.57% | 53.71% |
| 3 h | 53.43% | 36.85% | 53.71% |
| 4 h | 61.19% | 42.36% | 53.71% |
| 6 h | 69.59% | 55.50% | 53.71% |
| 8 h | 80.40% | 54.16% | 53.71% |
| 12 h | 98.38% | 60.58% | 53.71% |
| 24 h | 89.47% | 52.67% | 53.71% |

Table 12.

Four-day average symmetric mean absolute percentage error for the wind speed forecasting using the persistence, ARIMA, and WRF-TOPSIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Persistence Model | ARIMA Model | WRF-TOPSIS Model |
|---|---|---|---|
| 30 min | 21.68% | 21.47% | 36.73% |
| 1 h | 28.47% | 24.92% | 36.73% |
| 1.5 h | 33.58% | 27.49% | 36.73% |
| 2 h | 37.59% | 32.22% | 36.73% |
| 3 h | 42.53% | 30.76% | 36.73% |
| 4 h | 49.11% | 36.35% | 36.73% |
| 6 h | 55.76% | 42.97% | 36.73% |
| 8 h | 59.11% | 45.63% | 36.73% |
| 12 h | 71.62% | 47.73% | 36.73% |
| 24 h | 67.38% | 46.28% | 36.73% |

Table 13.

Four-day average mean bias for the wind power forecasting using the direct persistence, indirect persistence-ANFIS, ARIMA-ANFIS, and WRF-TOPSIS-ANFIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Direct Persistence Model (kW) | Indirect Persistence-ANFIS Model (kW) | ARIMA-ANFIS Model (kW) | WRF-TOPSIS-ANFIS Model (kW) |
|---|---|---|---|---|
| 30 min | −1.4 | −0.5 | −24.0 | −16.6 |
| 1 h | −2.8 | −2.0 | −33.3 | −16.6 |
| 1.5 h | −3.7 | −3.0 | −45.7 | −16.6 |
| 2 h | −4.7 | −4.0 | −49.5 | −16.6 |
| 3 h | −9.6 | −9.1 | −49.7 | −16.6 |
| 4 h | −17.3 | −17.1 | −63.6 | −16.6 |
| 6 h | −31.2 | −31.5 | −64.7 | −16.6 |
| 8 h | −38.7 | −39.7 | −89.6 | −16.6 |
| 12 h | −48.5 | −49.7 | −87.1 | −16.6 |
| 24 h | −38.7 | −40.0 | −104.6 | −16.6 |

Table 14.

Four-day average mean absolute error for the wind power forecasting using the direct persistence, indirect persistence-ANFIS, ARIMA-ANFIS, and WRF-TOPSIS-ANFIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Direct Persistence Model (kW) | Indirect Persistence-ANFIS Model (kW) | ARIMA-ANFIS Model (kW) | WRF-TOPSIS-ANFIS Model (kW) |
|---|---|---|---|---|
| 30 min | 48.6 | 50.8 | 49.0 | 77.8 |
| 1 h | 69.1 | 68.4 | 57.2 | 77.8 |
| 1.5 h | 80.3 | 79.8 | 68.9 | 77.8 |
| 2 h | 87.5 | 87.6 | 74.0 | 77.8 |
| 3 h | 95.5 | 95.1 | 78.3 | 77.8 |
| 4 h | 107.4 | 105.7 | 77.1 | 77.8 |
| 6 h | 116.4 | 115.4 | 100.5 | 77.8 |
| 8 h | 129.1 | 126.7 | 98.6 | 77.8 |
| 12 h | 157.3 | 155.2 | 111.8 | 77.8 |
| 24 h | 161.4 | 159.5 | 110.2 | 77.8 |

Table 15.

Four-day average root mean squared error for the wind power forecasting using the direct persistence, indirect persistence-ANFIS, ARIMA-ANFIS, and WRF-TOPSIS-ANFIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Direct Persistence Model (kW) | Indirect Persistence-ANFIS Model (kW) | ARIMA-ANFIS Model (kW) | WRF-TOPSIS-ANFIS Model (kW) |
|---|---|---|---|---|
| 30 min | 104.7 | 104.7 | 104.4 | 129.8 |
| 1 h | 139.8 | 138.9 | 115.0 | 129.8 |
| 1.5 h | 158.2 | 157.6 | 137.0 | 129.8 |
| 2 h | 167.7 | 166.7 | 142.8 | 129.8 |
| 3 h | 178.7 | 177.5 | 150.5 | 129.8 |
| 4 h | 191.7 | 189.5 | 154.4 | 129.8 |
| 6 h | 197.9 | 194.9 | 169.6 | 129.8 |
| 8 h | 214.6 | 210.5 | 179.0 | 129.8 |
| 12 h | 242.2 | 237.7 | 193.4 | 129.8 |
| 24 h | 259.1 | 254.0 | 195.6 | 129.8 |

Table 16.

Four-day average index of agreement for the wind power forecasting using the direct persistence, indirect persistence-ANFIS, ARIMA-ANFIS, and WRF-TOPSIS-ANFIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Direct Persistence Model | Indirect Persistence-ANFIS Model | ARIMA-ANFIS Model | WRF-TOPSIS-ANFIS Model |
|---|---|---|---|---|
| 30 min | 0.82 | 0.82 | 0.78 | 0.66 |
| 1 h | 0.66 | 0.65 | 0.73 | 0.66 |
| 1.5 h | 0.56 | 0.55 | 0.54 | 0.66 |
| 2 h | 0.53 | 0.53 | 0.53 | 0.66 |
| 3 h | 0.54 | 0.54 | 0.52 | 0.66 |
| 4 h | 0.41 | 0.41 | 0.50 | 0.66 |
| 6 h | 0.42 | 0.42 | 0.40 | 0.66 |
| 8 h | 0.33 | 0.34 | 0.40 | 0.66 |
| 12 h | 0.18 | 0.18 | 0.34 | 0.66 |
| 24 h | 0.21 | 0.21 | 0.38 | 0.66 |

Table 17.

Four-day average accuracy rate for the wind power forecasting using the direct persistence, indirect persistence-ANFIS, ARIMA-ANFIS, and WRF-TOPSIS-ANFIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Direct Persistence Model | Indirect Persistence-ANFIS Model | ARIMA-ANFIS Model | WRF-TOPSIS-ANFIS Model |
|---|---|---|---|---|
| 30 min | 93.02% | 93.02% | 93.04% | 91.35% |
| 1 h | 90.68% | 90.74% | 92.33% | 91.35% |
| 1.5 h | 89.45% | 89.49% | 90.86% | 91.35% |
| 2 h | 88.82% | 88.89% | 90.48% | 91.35% |
| 3 h | 88.09% | 88.17% | 89.96% | 91.35% |
| 4 h | 87.22% | 87.37% | 89.71% | 91.35% |
| 6 h | 86.81% | 87.01% | 88.69% | 91.35% |
| 8 h | 85.69% | 85.97% | 88.06% | 91.35% |
| 12 h | 83.85% | 84.15% | 87.11% | 91.35% |
| 24 h | 82.73% | 83.07% | 86.96% | 91.35% |

Table 18.

Four-day average qualification rate for the wind power forecasting using the direct persistence, indirect persistence-ANFIS, ARIMA-ANFIS, and WRF-TOPSIS-ANFIS models applied to the 4-day test dataset.

| Forecast Time Horizon | Direct Persistence Model | Indirect Persistence-ANFIS Model | ARIMA-ANFIS Model | WRF-TOPSIS-ANFIS Model |
|---|---|---|---|---|
| 30 min | 98.96% | 98.96% | 99.48% | 97.92% |
| 1 h | 97.40% | 97.40% | 98.96% | 97.92% |
| 1.5 h | 96.35% | 96.35% | 97.92% | 97.92% |
| 2 h | 96.35% | 96.35% | 96.88% | 97.92% |
| 3 h | 93.75% | 93.23% | 96.35% | 97.92% |
| 4 h | 92.19% | 93.75% | 96.35% | 97.92% |
| 6 h | 94.79% | 94.79% | 93.75% | 97.92% |
| 8 h | 90.62% | 91.15% | 94.27% | 97.92% |
| 12 h | 90.10% | 90.62% | 92.19% | 97.92% |
| 24 h | 88.54% | 89.06% | 92.19% | 97.92% |
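
Tables 17 and 18 report two capacity-normalised scores whose definitions are not restated in this section. The Python sketch below assumes the definitions commonly used in Chinese grid-operator assessments of wind power forecasts: accuracy rate = (1 − RMSE/C) × 100% and qualification rate = share of time steps with |P_M − P_P|/C ≤ 25%, where C is the installed capacity. The tabulated values are consistent with the first definition for C ≈ 1500 kW; for example, 1 − 129.8/1500 ≈ 91.35% for the WRF-TOPSIS-ANFIS model (Tables 15 and 17). The function names and the 25% threshold are assumptions.

```python
import math


def accuracy_rate(forecast_kw, measured_kw, capacity_kw):
    """Assumed definition: (1 - RMSE / capacity) in percent."""
    n = len(measured_kw)
    mse = sum(((m - f) / capacity_kw) ** 2 for f, m in zip(forecast_kw, measured_kw)) / n
    return 100.0 * (1.0 - math.sqrt(mse))


def qualification_rate(forecast_kw, measured_kw, capacity_kw, threshold=0.25):
    """Assumed definition: percent of steps with |error| / capacity <= threshold."""
    ok = sum(1 for f, m in zip(forecast_kw, measured_kw)
             if abs(m - f) / capacity_kw <= threshold)
    return 100.0 * ok / len(measured_kw)


# A constant 129.8 kW error on an assumed 1500 kW capacity gives about 91.35%.
print(round(accuracy_rate([129.8], [0.0], 1500.0), 2))  # 91.35
```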

Table 19.

Model rankings for each forecast time horizon according to the similarity score.

| Forecast Time Horizon | Rank 1 | Rank 2 | Rank 3 | Rank 4 |
|---|---|---|---|---|
| 30 min | Direct persistence model (0.9727) | Indirect persistence-ANFIS model (0.9617) | ARIMA-ANFIS model (0.5034) | WRF-TOPSIS-ANFIS model (0.2039) |
| 1 h | ARIMA-ANFIS model (0.7086) | Indirect persistence-ANFIS model (0.3620) | Direct persistence model (0.3433) | WRF-TOPSIS-ANFIS model (0.3414) |
| 1.5 h | ARIMA-ANFIS model (0.7177) | WRF-TOPSIS-ANFIS model (0.5111) | Indirect persistence-ANFIS model (0.2546) | Direct persistence model (0.2435) |
| 2 h | WRF-TOPSIS-ANFIS model (0.8042) | ARIMA-ANFIS model (0.6587) | Indirect persistence-ANFIS model (0.2701) | Direct persistence model (0.2649) |
| 3 h | WRF-TOPSIS-ANFIS model (0.9391) | ARIMA-ANFIS model (0.6067) | Indirect persistence-ANFIS model (0.2732) | Direct persistence model (0.2656) |
| 4 h | WRF-TOPSIS-ANFIS model (0.9865) | ARIMA-ANFIS model (0.6230) | Indirect persistence-ANFIS model (0.2713) | Direct persistence model (0.2602) |
| 6 h | WRF-TOPSIS-ANFIS model (1.0000) | ARIMA-ANFIS model (0.3744) | Indirect persistence-ANFIS model (0.2071) | Direct persistence model (0.2013) |
| 8 h | WRF-TOPSIS-ANFIS model (1.0000) | ARIMA-ANFIS model (0.4394) | Indirect persistence-ANFIS model (0.2206) | Direct persistence model (0.2133) |
| 12 h | WRF-TOPSIS-ANFIS model (1.0000) | ARIMA-ANFIS model (0.4237) | Indirect persistence-ANFIS model (0.1911) | Direct persistence model (0.1898) |
| 24 h | WRF-TOPSIS-ANFIS model (1.0000) | ARIMA-ANFIS model (0.4805) | Indirect persistence-ANFIS model (0.2191) | Direct persistence model (0.2163) |
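
The similarity score is the TOPSIS relative closeness to the ideal solution, D⁻/(D⁺ + D⁻), where D⁺ and D⁻ are the distances to the best and worst values on every criterion. The Python sketch below computes it for the four wind power models at the 6 h horizon from the values in Tables 13–18. The criterion set, criterion types and weights used in the paper are not given in this section. The sketch assumes equal weights and vector normalisation, treats |MB|, MAE and RMSE as cost criteria, and treats IA, accuracy rate and qualification rate as benefit criteria. With these choices (and with any positive weights), the WRF-TOPSIS-ANFIS model, which is best on every criterion at 6 h, lies exactly at the ideal solution and scores 1, as in Table 19. The other scores need not reproduce the tabulated values.

```python
import math


def topsis_scores(matrix, is_benefit, weights=None):
    """TOPSIS relative closeness D- / (D+ + D-) for each alternative (row).

    matrix:     rows = alternatives, columns = raw criterion values
    is_benefit: per column, True if larger is better, False if smaller is better
    weights:    per-column weights; equal weights if omitted (an assumption)
    """
    n_crit = len(is_benefit)
    weights = weights or [1.0 / n_crit] * n_crit
    # Vector-normalise each column (guarding all-zero columns), then weight.
    norms = [math.sqrt(sum(row[j] ** 2 for row in matrix)) or 1.0 for j in range(n_crit)]
    weighted = [[weights[j] * row[j] / norms[j] for j in range(n_crit)] for row in matrix]
    columns = list(zip(*weighted))
    # Positive ideal (best value per criterion) and negative ideal (worst).
    ideal = [max(c) if b else min(c) for c, b in zip(columns, is_benefit)]
    anti_ideal = [min(c) if b else max(c) for c, b in zip(columns, is_benefit)]
    scores = []
    for row in weighted:
        d_plus = math.dist(row, ideal)
        d_minus = math.dist(row, anti_ideal)
        total = d_plus + d_minus
        # total == 0 only if all alternatives tie on every criterion.
        scores.append(d_minus / total if total > 0 else 1.0)
    return scores


models = ["Direct persistence", "Indirect persistence-ANFIS", "ARIMA-ANFIS", "WRF-TOPSIS-ANFIS"]
# 6 h rows of Tables 13-18: |MB| (kW), MAE (kW), RMSE (kW), IA, accuracy (%), qualification (%)
six_hour = [
    [31.2, 116.4, 197.9, 0.42, 86.81, 94.79],
    [31.5, 115.4, 194.9, 0.42, 87.01, 94.79],
    [64.7, 100.5, 169.6, 0.40, 88.69, 93.75],
    [16.6, 77.8, 129.8, 0.66, 91.35, 97.92],
]
is_benefit = [False, False, False, True, True, True]
scores = topsis_scores(six_hour, is_benefit)
for name, score in sorted(zip(models, scores), key=lambda p: p[1], reverse=True):
    print(f"{name}: {score:.4f}")
```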

Table 20.

Evaluation results of the multi-hour ahead wind power forecasting system applied to the 4-day test dataset.

| Metric | 30 min to 24 h (Every 30 min) |
|---|---|
| MB (kW) | −16.6 |
| MAE (kW) | 77.8 |
| RMSE (kW) | 129.8 |
| IA | 0.66 |
| Accuracy rate | 91.35% |
| Qualification rate | 97.92% |