An Adaptive Compression Method for Lightweight AI Models of Edge Nodes in Customized Production

Abstract

1. Introduction

2. Related Work

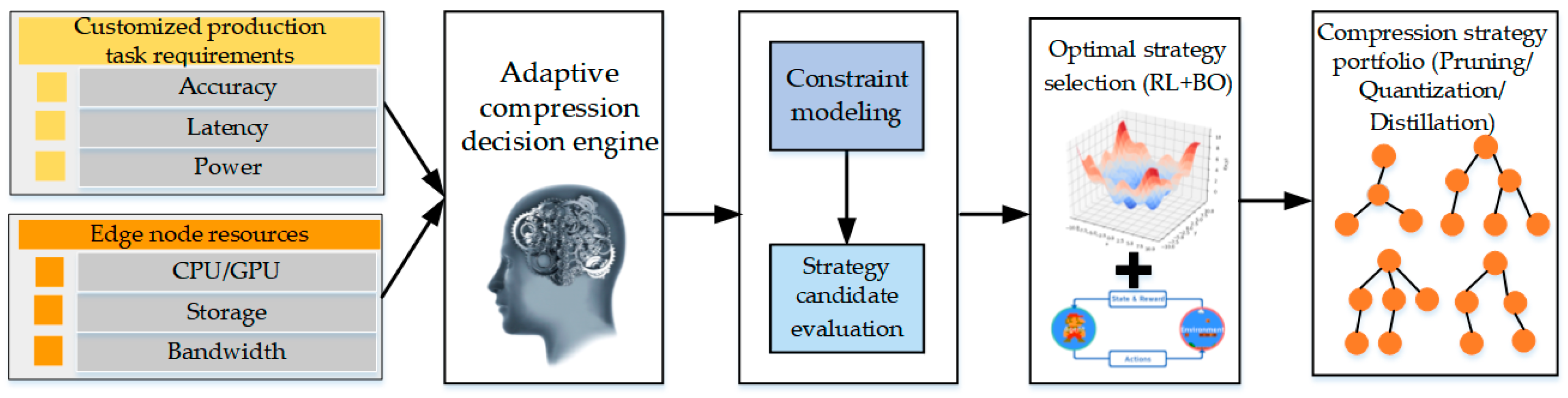

3. System Framework

4. System Model and Method

4.1. Task Requirement Analysis Model

4.2. Edge Resource Description Model

Listing 1. Edge device ontology.

- Edge Device
  - ComputeUnit
    - CPU: core, frequency, utilization, SIMD
    - GPU: SM, freq, VRAM, tensor_support
  - MemoryUnit: size, bandwidth
  - NetworkUnit: bandwidth, latency, loss
  - StorageUnit: rnd_read, rnd_write
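One way to make the ontology in Listing 1 machine-readable is a set of nested dataclasses. The sketch below mirrors the field names of the listing; the types, the units in the comments, the optional GPU, the `flatten` helper, and the example values are assumptions made for illustration and are not taken from the paper.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class CPU:
    core: int            # number of cores
    frequency: float     # assumed unit: GHz
    utilization: float   # assumed range: 0.0 to 1.0
    SIMD: bool           # whether SIMD instructions are available


@dataclass
class GPU:
    SM: int              # streaming multiprocessors
    freq: float          # assumed unit: GHz
    VRAM: float          # assumed unit: GB
    tensor_support: bool


@dataclass
class ComputeUnit:
    cpu: CPU
    gpu: Optional[GPU] = None   # assumption: a device may have no GPU


@dataclass
class MemoryUnit:
    size: float          # assumed unit: GB
    bandwidth: float     # assumed unit: GB/s


@dataclass
class NetworkUnit:
    bandwidth: float     # assumed unit: Mbit/s
    latency: float       # assumed unit: ms
    loss: float          # assumed: packet loss fraction


@dataclass
class StorageUnit:
    rnd_read: float      # assumed unit: MB/s
    rnd_write: float     # assumed unit: MB/s


@dataclass
class EdgeDevice:
    compute: ComputeUnit
    memory: MemoryUnit
    network: NetworkUnit
    storage: StorageUnit


def flatten(device: EdgeDevice) -> dict:
    """Flatten the nested description into dotted keys such as 'compute.cpu.core'."""
    out = {}

    def walk(prefix, node):
        for key, value in node.items():
            name = f"{prefix}.{key}" if prefix else key
            if isinstance(value, dict):
                walk(name, value)
            else:
                out[name] = value

    walk("", asdict(device))
    return out


# Hypothetical device description; the numbers are placeholders.
device = EdgeDevice(
    compute=ComputeUnit(cpu=CPU(core=4, frequency=1.4, utilization=0.35, SIMD=True),
                        gpu=GPU(SM=1, freq=0.9, VRAM=4.0, tensor_support=False)),
    memory=MemoryUnit(size=4.0, bandwidth=25.6),
    network=NetworkUnit(bandwidth=100.0, latency=5.0, loss=0.01),
    storage=StorageUnit(rnd_read=40.0, rnd_write=20.0),
)
flat = flatten(device)
```

A flat dictionary of this kind is one plausible input for building a hardware resource vector, although the paper's actual encoding is not shown in this section.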

4.3. Multi-Strategy Compression Candidate Pool

- (1) Pruning-based strategies. This layer covers various pruning approaches from structural to parameter levels, including structured pruning, unstructured weight sparsification, importance-score-based pruning, and dynamic pruning strategies that adjust pruning rates at runtime. Each method is encoded into the candidate pool using a triplet (method, granularity, ratio).

- (2) Quantization-based strategies. Quantization schemes are selected based on device computational characteristics and may include symmetric or asymmetric quantization, weight/activation/layer-wise quantization, quantization-aware training or post-training quantization, and per-layer mixed-precision search. Strategies are represented as tuples (bitwidth, calibration_method, per_layer_flag).

- (3) Low-rank decomposition strategies. Suitable for matrix-intensive models such as Transformers, this layer includes SVD decomposition for fully connected layers, CP/Tucker decomposition for convolutional kernels, and block-wise low-rank decomposition. Each strategy is parameterized as (rank, block_size).

- (4) Knowledge distillation strategies. Designed to preserve accuracy under high compression ratios, this layer supports logits distillation, feature distillation, attention-map distillation, and response-based versus hint-based distillation approaches. Strategies are encoded using a tuple (loss_type, temperature, loss_weight).
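The four tuple encodings above can be sketched as typed records collected into a single candidate pool. The tuple field names follow the text; the class names, the `build_pool` helper, and every concrete value (method names, ratios, bit widths, ranks, temperatures) are hypothetical placeholders rather than the settings used in the paper.

```python
from itertools import product
from typing import NamedTuple


class PruningStrategy(NamedTuple):
    method: str
    granularity: str
    ratio: float


class QuantizationStrategy(NamedTuple):
    bitwidth: int
    calibration_method: str
    per_layer_flag: bool


class LowRankStrategy(NamedTuple):
    rank: int
    block_size: int


class DistillationStrategy(NamedTuple):
    loss_type: str
    temperature: float
    loss_weight: float


def build_pool() -> list:
    """Enumerate a small candidate pool from hypothetical value grids."""
    pool = []
    pool += [PruningStrategy(*c) for c in product(
        ["magnitude", "importance_score"], ["channel", "filter"], [0.2, 0.4])]
    pool += [QuantizationStrategy(*c) for c in product(
        [4, 8], ["minmax", "percentile"], [False, True])]
    pool += [LowRankStrategy(*c) for c in product([8, 16], [32, 64])]
    pool += [DistillationStrategy(*c) for c in product(
        ["logits", "feature"], [2.0, 4.0], [0.5])]
    return pool


pool = build_pool()
print(len(pool), pool[0])
```

Keeping each family as its own record type lets a search procedure filter the pool by strategy family before selecting an action.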

4.4. Hybrid RL and BO Decision Engine

Algorithm 1: Adaptive compression strategy search

Input: Task constraint vector T, hardware resource vector R*, candidate strategy pool S = {s1, s2, …, sn}, initial model M0

Output: Optimal compression strategy combination π*

1: Initialize strategy network πθ and value network Vφ
2: Construct state representation x0 = Embed(T, R*)
3: MT ← M0
4: for episode = 1 to MaxEpisode do
5:   for step = 1 to MaxStep do
6:     Select compression action a_t ~ πθ(a | x_t)
7:     Apply action a_t to model → M_t = Compress(MT, a_t)
8:     Evaluate M_t to obtain:
9:       accuracy Acc_t, latency Lat_t, energy E_t
10:     Compute reward:
11:       r_t = w1·Acc_t − w2·Lat_t − w3·E_t
12:     Update state representation x_{t + 1} = f(M_t, T, R*)
13:     Store transition (x_t, a_t, r_t, x_{t + 1})
14:     if termination condition satisfied then
15:       break
16:     end if
17:   end for
18:   Update πθ and Vφ using collected transitions
19: end for
20: Extract best-performing strategy sequence π* from policy πθ
21: return π*
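The control flow of Algorithm 1 can be illustrated with a deliberately simplified toy. The sketch below swaps the policy network for a state-independent softmax over action preferences and the value network for a running-mean baseline, and it uses the final reward of each episode as the return. The action names, their multiplicative effects, the weights, the `stop` action, and the starting values are all hypothetical; only the reward formula follows line 11 of the algorithm. Accuracy is expressed as a fraction, and latency and energy are normalized to the initial model.

```python
import math
import random

# Hypothetical actions: multiplicative effect on (accuracy, latency, energy).
ACTIONS = {
    "prune_20": (0.995, 0.85, 0.90),
    "quant_int8": (0.990, 0.70, 0.80),
    "low_rank": (0.985, 0.80, 0.88),
    "stop": (1.0, 1.0, 1.0),
}
BASE = (0.957, 1.0, 1.0)  # assumed metrics of the initial model M0


def reward(acc, lat, energy, w=(1.0, 0.5, 0.3)):
    """Line 11 of Algorithm 1: r_t = w1*Acc_t - w2*Lat_t - w3*E_t."""
    w1, w2, w3 = w
    return w1 * acc - w2 * lat - w3 * energy


def softmax(prefs):
    m = max(prefs.values())
    exps = {a: math.exp(p - m) for a, p in prefs.items()}
    total = sum(exps.values())
    return {a: e / total for a, e in exps.items()}


def search(max_episode=200, max_step=4, lr=0.1, seed=0):
    rng = random.Random(seed)
    names = list(ACTIONS)
    prefs = {a: 0.0 for a in names}   # stand-in for the policy parameters
    baseline = 0.0                    # running mean return, stand-in for the value network
    best_seq, best_return = [], -math.inf
    for episode in range(1, max_episode + 1):
        acc, lat, energy = BASE       # every episode restarts from M0
        probs = softmax(prefs)
        taken, r = [], 0.0
        for _ in range(max_step):
            a = rng.choices(names, weights=[probs[n] for n in names])[0]
            fa, fl, fe = ACTIONS[a]
            acc, lat, energy = acc * fa, lat * fl, energy * fe
            r = reward(acc, lat, energy)
            taken.append(a)
            if a == "stop":           # assumed termination condition
                break
        # Policy-gradient update using the final reward as the episode return.
        advantage = r - baseline
        for a in taken:
            for n in names:
                grad = (1.0 if n == a else 0.0) - probs[n]
                prefs[n] += lr * advantage * grad
        baseline += (r - baseline) / episode
        if r > best_return:
            best_seq, best_return = taken, r
    return best_seq, best_return


best_seq, best_return = search()
print(best_seq, round(best_return, 3))
```

Because the toy policy ignores the state x_t and the evaluation is simulated, this only shows how action selection, reward computation, and the per-episode update fit together; it says nothing about the behavior of the full method.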

4.5. Closed-Loop Runtime Optimization

Algorithm 2: Closed-loop deployment-time compression adjustment

Input: Deployed model MT, constraint thresholds (Acc_min, Lat_max), adjustment operator Adjust()

Output: Adaptively optimized deployed model M*

1: Initialize performance buffer B ← ∅
2: while system is running do
3:   Monitor real-time performance:
4:     Acc_cur = MeasureAccuracy(MT)
5:     Lat_cur = MeasureLatency(MT)
6:   Append (Acc_cur, Lat_cur) to buffer B
7:   if Mean(B.acc) < Acc_min then
8:     // Accuracy is degraded
9:     MT ← Adjust(MT, direction = "decompress")
10:   else if Mean(B.lat) > Lat_max then
11:     // Latency violation
12:     MT ← Adjust(MT, direction = "compress")
13:   end if
14:   if Convergence(B) == true then
15:     break
16:   end if
17: end while
18: return M* = MT
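A minimal sketch of the feedback loop in Algorithm 2 follows, under several stated assumptions: the deployed model is represented by a single integer compression level, `Adjust()` moves that level by one step, the buffer is a fixed-size sliding window that is cleared after each adjustment so that stale measurements do not mix with new ones, and convergence means a full window with no violation. None of these choices is specified in the algorithm itself, and `toy_measure` is a made-up linear model of accuracy and latency.

```python
from collections import deque
from statistics import mean


def closed_loop(level, measure, acc_min, lat_max,
                window=5, max_iters=50, min_level=0, max_level=10):
    """Adjust an integer compression level until both thresholds hold.

    A higher level means a more heavily compressed model (hypothetical stand-in for MT).
    """
    buf = deque(maxlen=window)                     # performance buffer B
    for _ in range(max_iters):
        buf.append(measure(level))                 # (Acc_cur, Lat_cur)
        mean_acc = mean(a for a, _ in buf)
        mean_lat = mean(l for _, l in buf)
        if mean_acc < acc_min:                     # accuracy is degraded
            level = max(min_level, level - 1)      # Adjust(..., "decompress")
            buf.clear()                            # assumption: drop stale samples
        elif mean_lat > lat_max:                   # latency violation
            level = min(max_level, level + 1)      # Adjust(..., "compress")
            buf.clear()
        if len(buf) == window:                     # assumed Convergence(B)
            break
    return level


def toy_measure(level):
    """Hypothetical device model: each level costs accuracy and saves latency."""
    return 0.96 - 0.005 * level, 70 - 5 * level


final_level = closed_loop(level=2, measure=toy_measure, acc_min=0.93, lat_max=45.0)
print(final_level)
```

Checking accuracy before latency mirrors the priority order of lines 7 to 12, so an accuracy violation always triggers decompression even when latency is also out of bounds.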

5. Experimental Results

5.1. Experimental Setup

5.2. Results and Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Edge Device | CUDA Cores | Peak Compute Performance | Memory (GB) | Power Consumption (W) |
|---|---|---|---|---|
| Jetson Nano | 128 | 472 GFLOPS | 4 | 10 |
| Jetson TX2 | 256 | 1.3 TFLOPS | 8 | 15 |
| Jetson Xavier NX | 384 | 21 TOPS | 8 | 15 |
| Jetson Orin Nano | 1024 | 40 TOPS | 16 | 15 |

| Method | mAP (%) | Latency (ms) | Memory Usage (MB) | Power Consumption (W) | p-Value (vs. SP) |
|---|---|---|---|---|---|
| Source model | 95.7 ± 0.2 | 68.4 ± 1.5 | 612 ± 8 | 10.2 ± 0.3 | - |
| SP (40%) | 92.1 ± 0.4 | 45.2 ± 1.3 | 381 ± 6 | 8.6 ± 0.2 | - |
| SQ (8 bit) | 93.3 ± 0.3 | 41.9 ± 1.1 | 355 ± 5 | 7.9 ± 0.2 | - |
| KD | 94.4 ± 0.3 | 52.7 ± 1.6 | 410 ± 7 | 8.8 ± 0.3 | - |
| RL-P | 94.8 ± 0.3 | 39.5 ± 1.2 | 332 ± 6 | 7.5 ± 0.2 | - |
| AMS-RLBO | 95.2 ± 0.2 | 28.3 ± 0.9 | 301 ± 5 | 7.1 ± 0.2 | 0.002 |
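To put the rows of this comparison on a common scale, the relative change of AMS-RLBO against the source model can be computed directly from the reported mean values. The helper name `relative_change` is hypothetical, and the standard deviations in the table are ignored in this sketch.

```python
def relative_change(baseline: float, value: float) -> float:
    """Percentage change of value relative to baseline (negative means a reduction)."""
    return (value - baseline) / baseline * 100.0


# Mean values copied from the "Source model" and "AMS-RLBO" rows.
source = {"latency_ms": 68.4, "memory_mb": 612, "power_w": 10.2}
ams_rlbo = {"latency_ms": 28.3, "memory_mb": 301, "power_w": 7.1}

for key in source:
    print(f"{key}: {relative_change(source[key], ams_rlbo[key]):+.1f}%")
# latency_ms: -58.6%
# memory_mb: -50.8%
# power_w: -30.4%
```

On the same rows, mAP drops from 95.7% to 95.2%, that is, by 0.5 percentage points.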

| Model | Method | mAP (%) | Latency (ms) | Memory Usage (MB) | Compression Robustness | p-Value |
|---|---|---|---|---|---|---|
| YOLOv5s | Original | 95.7 ± 0.2 | 68.4 ± 1.5 | 612 ± 8 | - | - |
| YOLOv5s | SP | 92.1 ± 0.4 | 45.2 ± 1.3 | 381 ± 6 | Low | - |
| YOLOv5s | AMS-RLBO | 95.2 ± 0.2 | 28.3 ± 0.9 | 301 ± 5 | High | 0.003 |
| YOLOv8n | Original | 97.1 ± 0.2 | 61.2 ± 1.4 | 654 ± 9 | - | - |
| YOLOv8n | SP | 93.4 ± 0.5 | 47.8 ± 1.5 | 420 ± 7 | Medium | - |
| YOLOv8n | AMS-RLBO | 96.4 ± 0.3 | 33.7 ± 1.1 | 338 ± 6 | Medium-High | 0.009 |

| Method | mAP@[0.5:0.95] (%) | Latency (ms) | Memory Usage (MB) | p-Value |
|---|---|---|---|---|
| Original YOLOv5s | 36.8 ± 0.3 | 64.2 ± 1.6 | 612 ± 8 | - |
| SP | 33.4 ± 0.4 | 44.7 ± 1.2 | 389 ± 6 | - |
| SQ | 34.1 ± 0.3 | 40.8 ± 1.1 | 361 ± 5 | - |
| AMS-RLBO | 35.6 ± 0.3 | 29.8 ± 0.9 | 312 ± 5 | 0.012 |

| Method | Top-1 (%) | Speedup Ratio | Parameter Reduction (%) | p-Value |
|---|---|---|---|---|
| Source model | 78.4 ± 0.3 | 1.0× | 0 | - |
| SP | 74.1 ± 0.5 | 1.9× | 40 | - |
| SQ | 75.6 ± 0.4 | 2.2× | 0 | - |
| RL-P | 76.2 ± 0.4 | 2.5× | 43 | - |
| AMS-RLBO | 77.1 ± 0.3 | 3.3× | 52 | 0.008 |

| Method | Jetson Nano (ms) | Jetson TX2 (ms) | Xavier NX (ms) | Orin Nano (ms) |
|---|---|---|---|---|
| SQ | 41.9 | 28.7 | 14.5 | 9.1 |
| RL-P | 39.5 | 24.9 | 13.1 | 8.2 |
| AMS-RLBO | 28.3 | 19.4 | 11.7 | 7.4 |

| Method Variants | mAP (%) | Latency (ms) |
|---|---|---|
| No BO | 94.3 | 34.9 |
| No RL | 93.7 | 41.3 |
| No Feedback | 94.8 | 32.7 |
| AMS-RLBO | 95.2 | 28.3 |

| Method | Average Power Consumption (W) | 1 h Accumulated Energy (kJ) |
|---|---|---|
| Source model | 10.2 | 36.7 |
| SP | 8.6 | 31.0 |
| RL-P | 7.5 | 27.0 |
| AMS-RLBO | 7.1 | 25.6 |
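The two columns of this table are linked by E = P × t: one hour is 3600 s, so an average power in watts multiplied by 3600 gives joules, and dividing by 1000 gives kilojoules. The short check below recomputes the energy column from the power column; the function name `energy_kj` is a hypothetical helper, and the comparison allows for rounding to one decimal place.

```python
def energy_kj(avg_power_w: float, duration_s: float = 3600.0) -> float:
    """Energy in kJ consumed at a constant average power over duration_s seconds."""
    return avg_power_w * duration_s / 1000.0


# (average power in W, reported 1 h energy in kJ), copied from the table rows.
reported = {
    "Source model": (10.2, 36.7),
    "SP": (8.6, 31.0),
    "RL-P": (7.5, 27.0),
    "AMS-RLBO": (7.1, 25.6),
}

for name, (power_w, reported_kj) in reported.items():
    print(f"{name}: computed {energy_kj(power_w):.2f} kJ, reported {reported_kj} kJ")
```

For example, the source model gives 10.2 W × 3600 s = 36,720 J = 36.72 kJ, which matches the reported 36.7 kJ after rounding.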

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Jiang, C.; Hou, M.; Wang, H. An Adaptive Compression Method for Lightweight AI Models of Edge Nodes in Customized Production. Sensors 2026, 26, 383. https://doi.org/10.3390/s26020383
