Application of Variational AutoEncoder (VAE) Model and Image Processing Approaches in Game Design

Abstract

1. Introduction

| Game Platform | Company | Device |

|---|---|---|

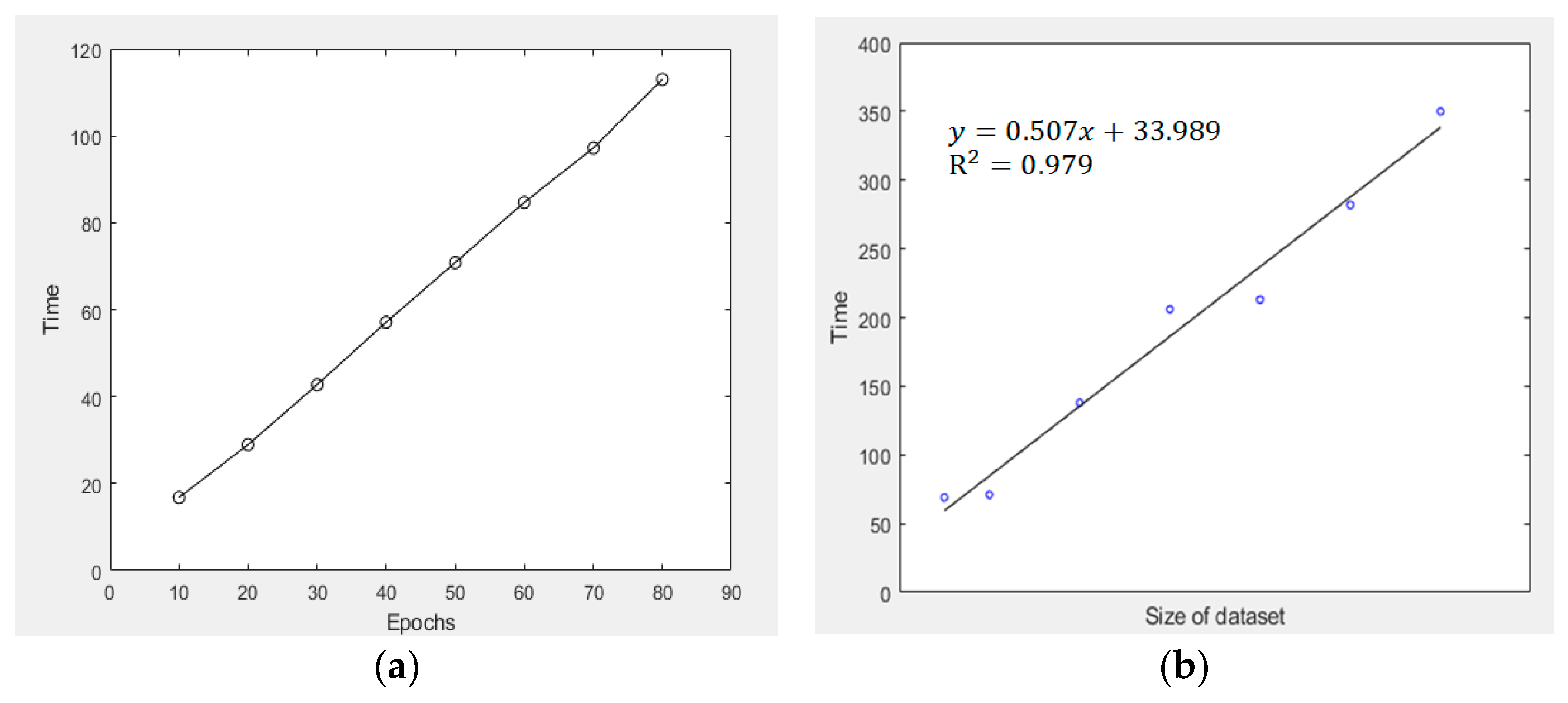

| Personal Computer (PC) | Microsoft | Desktop/laptop computers |

| Mobile Phone | Apple, Google, Samsung etc. | Smartphones |

| Xbox [18] | Microsoft | Xbox game console |

| PlayStation (PS) [19] | Sony | PlayStation 1–5 |

| Switch [20] | Nintendo | Nintendo 3DS/ Nintendo Switch |

2. Flowchart and Data Sources

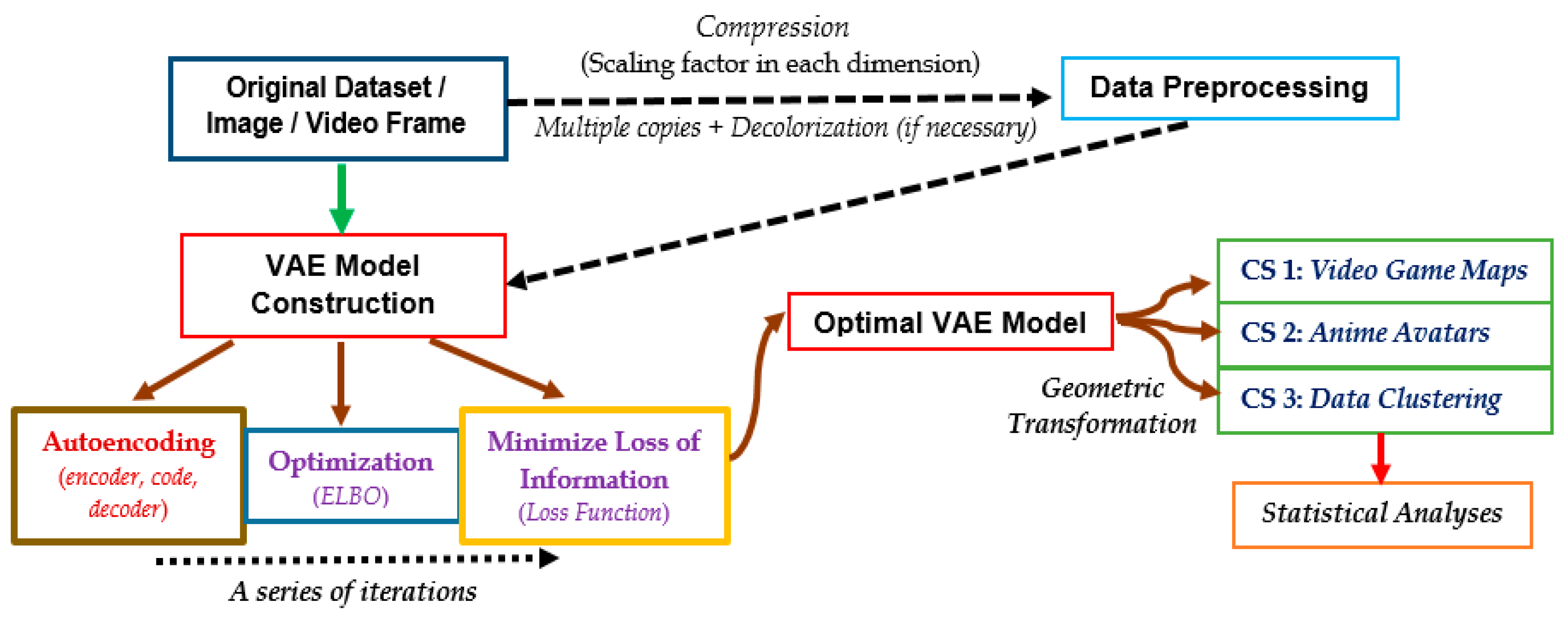

2.1. Overview of This Study

2.2. Data Sources and Description

2.2.1. Game Map from Arknights

2.2.2. Characters from Konachan

2.2.3. Modified National Institute of Standards and Technology (MNIST) Database

3. Methodologies: Steps of the VAE Model

3.1. Data Preprocessing

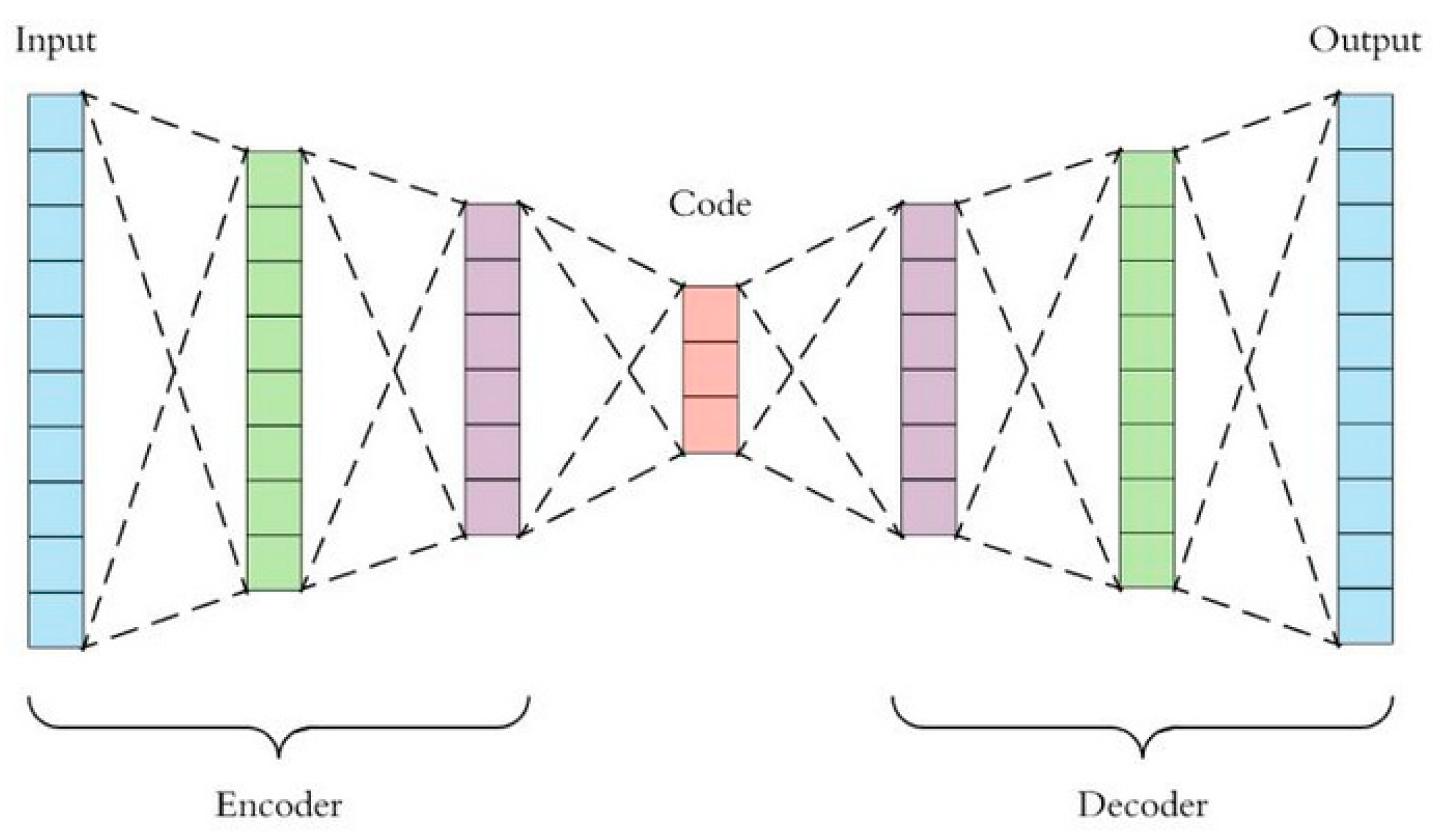

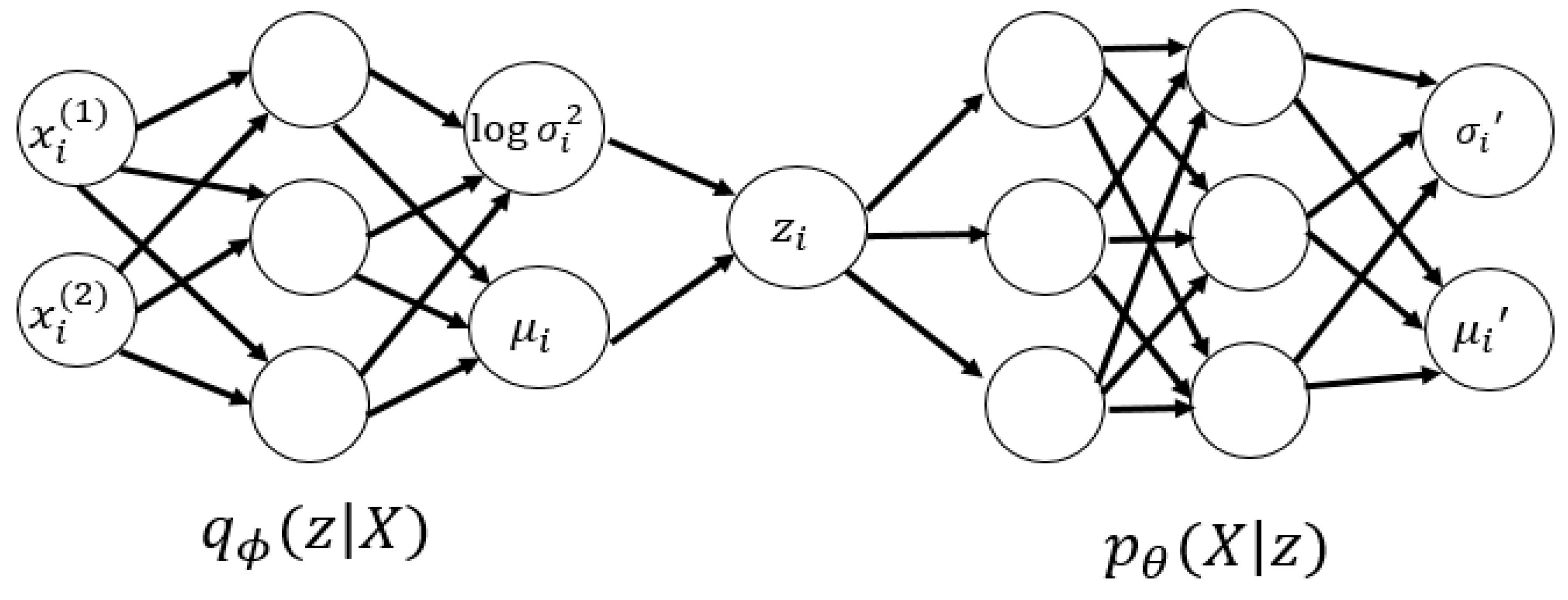

3.2. Autoencoding, Variational AutoEncoder (VAE) and Decoding Processes

3.3. Steps of the VAE Model

3.4. Evidence Lower Bound (ELBO) of the VAE Model

3.5. General Loss Function of the VAE Model

3.6. Loss Function of the VAE Model in Clustering

3.7. Statistical Metrics and Spatial Assessment

4. Numerical Experiments and Results

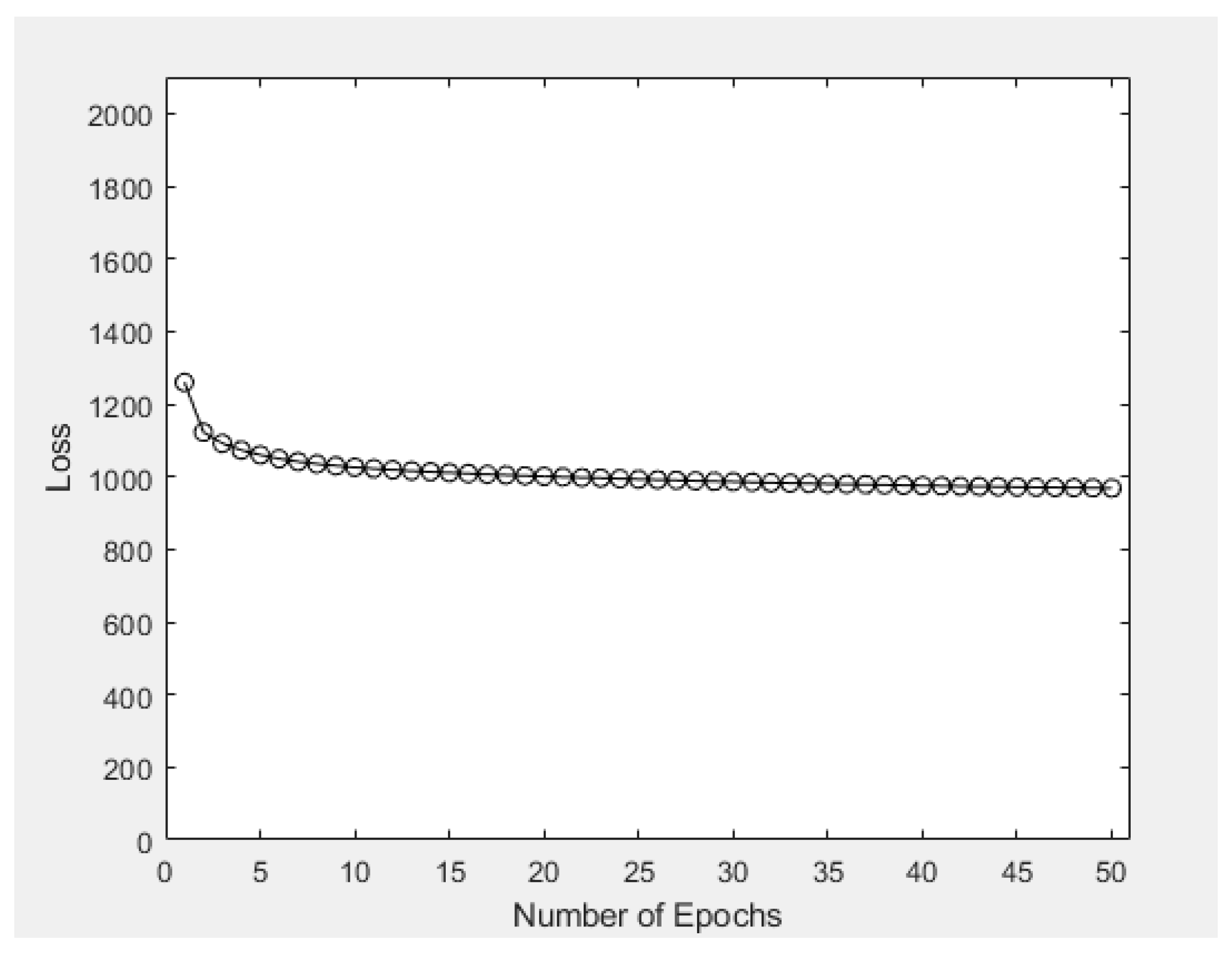

4.1. Case Study 1: Generation of Video Game Maps

4.2. Case Study 2: Generating Anime Avatars via the VAE Model

4.3. Case Study 3: Application of VAE Model to Data Clustering

4.4. Insights from Results of Case Studies & Practical Implementation

5. Discussions and Limitations

5.1. Deficiencies of a Low-Dimensional Manifold & Tokenization

5.2. Image Compression, Clarity of Outputs & Model Training

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Moyer-Packenham, P.S.; Lommatsch, C.W.; Litster, K.; Ashby, J.; Bullock, E.K.; Roxburgh, A.L.; Shumway, J.F.; Speed, E.; Covington, B.; Hartmann, C.; et al. How design features in digital math games support learning and mathematics connections. Comput. Hum. Behav. 2019, 91, 316–332.

- Berglund, A.; Berglund, E.; Siliberto, F.; Prytz, E. Effects of reactive and strategic game mechanics in motion-based games. In Proceedings of the 2017 IEEE 5th International Conference on Serious Games and Applications for Health (SeGAH), Perth, Australia, 2–4 April 2017; pp. 1–8.

- Petrovas, A.; Bausys, R. Procedural Video Game Scene Generation by Genetic and Neutrosophic WASPAS Algorithms. Appl. Sci. 2022, 12, 772.

- Amani, N.; Yuly, A.R. 3D modeling and animating of characters in educational game. In Journal of Physics: Conference Series; IOP Publishing: Bristol, UK, 2019; p. 012025.

- Patoli, M.Z.; Gkion, M.; Newbury, P.; White, M. Real time online motion capture for entertainment applications. In Proceedings of the 2010 Third IEEE International Conference on Digital Game and Intelligent Toy Enhanced Learning, Kaohsiung, Taiwan, 12–16 April 2010; pp. 139–145.

- Lukosch, H.K.; Bekebrede, G.; Kurapati, S.; Lukosch, S.G. A scientific foundation of simulation games for the analysis and design of complex systems. Simul. Gaming 2018, 49, 279–314.

- OpenDotLab. Invisible Cities. Available online: https://opendot.github.io/ml4ainvisible-cities/ (accessed on 24 February 2023).

- Li, W.; Zhang, P.; Zhang, L.; Huang, Q.; He, X.; Lyu, S.; Gao, J. Object-driven text-to-image synthesis via adversarial training. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 12166–12174.

- Sarkar, A.; Cooper, S. Towards Game Design via Creative Machine Learning (GDCML). In Proceedings of the IEEE Conference on Games (CoG), Osaka, Japan, 24–27 August 2020; pp. 1–6.

- GameLook. Netease Game Artificial Intelligence Laboratory Sharing: AI Technology Applied in Games. Available online: http://www.gamelook.com.cn/2019/03/353413/ (accessed on 24 February 2023).

- History of Video Games. Available online: https://en.wikipedia.org/wiki/History_of_video_games (accessed on 24 February 2023).

- Wang, Q. Video game classification inventory. Cult. Mon. 2018, 4, 30–31.

- Need for Speed™ on Steam. Available online: https://store.steampowered.com/app/1262540/Need_for_Speed/ (accessed on 24 February 2023).

- Genshin Impact. Available online: https://genshin.hoyoverse.com/en/ (accessed on 24 February 2023).

- Game Design Basics: How to Start Creating Video Games. Available online: https://www.cgspectrum.com/blog/game-design-basics-how-to-start-building-video-games (accessed on 24 February 2023).

- Zhang, B. Design of mobile augmented reality game based on image recognition. J. Image Video Proc. 2017, 90.

- Tilson, A.R. An Image Generation Methodology for Game Engines in Real-Time Using Generative Deep Learning Inference Frameworks. Master’s Thesis, University of Regina, Regina, Canada, 2021.

- Xbox Official Site. Available online: https://www.xbox.com/en-HK/ (accessed on 24 February 2023).

- PlayStation® Official Site. Available online: https://www.playstation.com/en-hk/ (accessed on 24 February 2023).

- Nintendo Switch Lite. Available online: https://www.nintendo.co.jp/hardware/detail/switch-lite/ (accessed on 24 February 2023).

- Edwards, G.; Subianto, N.; Englund, D.; Goh, J.W.; Coughran, N.; Milton, Z.; Mirnateghi, N.; Ali Shah, S.A. The role of machine learning in game development domain—A review of current trends and future directions. In Proceedings of the 2021 Digital Image Computing: Techniques and Applications (DICTA), Gold Coast, Australia, 16–30 July 2021; pp. 1–7.

- Elasri, M.; Elharrouss, O.; Al-Maadeed, S.; Tairi, H. Image generation: A review. Neural Process Lett. 2022, 54, 4609–4646.

- Yin, H.H.F.; Ng, K.H.; Ma, S.K.; Wong, H.W.H.; Mak, H.W.L. Two-state alien tiles: A coding-theoretical perspective. Mathematics 2022, 10, 2994.

- Justesen, N.; Bontrager, P.; Togelius, J.; Risi, S. Deep learning for video game playing. IEEE Trans. Games 2020, 12, 1–20.

- Gow, J.; Corneli, J. Towards generating novel games using conceptual blending. In Proceedings of the Eleventh Artificial Intelligence and Interactive Digital Entertainment Conference, Santa Cruz, CA, USA, 14–18 November 2015.

- Sarkar, A.; Cooper, S. Blending levels from different games using LSTMs. In Proceedings of the AIIDE Workshop on Experimental AI in Games, Edmonton, AB, Canada, 13–17 November 2018.

- Sarkar, A.; Yang, Z.; Cooper, S. Controllable level blending between games using variational autoencoders. In Proceedings of the AIIDE Workshop on Experimental AI in Games, Atlanta, GA, USA, 8–9 October 2019.

- Moghaddam, M.M.; Boroomand, M.; Jalali, M.; Zareian, A.; Daeijavad, A.; Manshaei, M.H.; Krunz, M. Games of GANs: Game-theoretical models for generative adversarial networks. Artif Intell Rev. 2023.

- Awiszus, M.; Schubert, F.; Rosenhahn, B. TOAD-GAN: Coherent style level generation from a single example. In Proceedings of the Sixteenth AAAI Conference on Artificial Intelligence and Interactive Digital Entertainment (AIIDE-20), Virtual, 19–23 October 2020.

- Schrum, J.; Gutierrez, J.; Volz, V.; Liu, J.; Lucas, S.; Risi, S. Interactive evolution and exploration within latent level-design space of generative adversarial networks. In Proceedings of the 2020 Genetic and Evolutionary Computation Conference, Cancún, Mexico, 8–12 July 2020.

- Torrado, R.E.; Khalifa, A.; Green, M.C.; Justesen, N.; Risi, S.; Togelius, J. Bootstrapping conditional GANs for video game level generation. In Proceedings of the 2020 IEEE Conference on Games (CoG), Osaka, Japan, 24 February 2020.

- Emekligil, F.G.A.; Öksüz, İ. Game character generation with generative adversarial networks. In Proceedings of the 2022 30th Signal Processing and Communications Applications Conference (SIU), Safranbolu, Turkey, 1 March 2022; pp. 1–4.

- Kim, J.; Jin, H.; Jang, S.; Kang, S.; Kim, Y. Game effect sprite generation with minimal data via conditional GAN. Expert Syst. Appl. 2023, 211, 118491.

- Cinelli, L.P.; Marins, M.A.; da Silva, E.A.B.; Netto, S.L. Variational Autoencoder. In Variational Methods for Machine Learning with Applications to Deep Networks; Springer: Cham, Switzerland, 2021; pp. 111–149.

- Kingma, D.P.; Welling, M. Auto-encoding variational Bayes. In Proceedings of the International Conference on Learning Representations (ICLR), Banff, AB, Canada, 14–16 April 2014.

- Cai, L.; Gao, H.; Ji, S. Multi-stage variational auto-encoders for coarse-to-fine image generation. In Proceedings of the 2019 SIAM International Conference on Data Mining, Edmonton, AB, Canada, 2–4 May 2019; pp. 630–638.

- Cai, F.; Ozdagli, A.I.; Koutsoukos, X. Variational autoencoder for classification and regression for out-of-distribution detection in learning-enabled cyber-physical systems. Appl. Artif. Intell. 2022, 36, 2131056.

- Kaur, D.; Islam, S.N.; Mahmud, M.A. A variational autoencoder-based dimensionality reduction technique for generation forecasting in cyber-physical smart grids. In Proceedings of the 2021 IEEE International Conference on Communications Workshops (ICC Workshops), Montreal, QC, Canada, 18 June 2021; pp. 1–6.

- Vuyyuru, V.A.; Rao, G.A.; Murthy, Y.V.S. A novel weather prediction model using a hybrid mechanism based on MLP and VAE with fire-fly optimization algorithm. Evol. Intel. 2021, 14, 1173–1185.

- Lin, S.; Clark, R.; Birke, R.; Schonborn, S.; Trigoni, N.; Roberts, S. Anomaly detection for time series using VAE-LSTM hybrid model. In Proceedings of the 2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Barcelona, Spain, 4–8 May 2020; pp. 4322–4326.

- Bao, J.; Chen, D.; Wen, F.; Li, H.; Hua, G. CVAE-GAN: Fine-grained image generation through asymmetric training. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2764–2773.

- Arknights. Available online: https://www.arknights.global/ (accessed on 25 February 2023).

- Installing the Unity Hub. Available online: https://docs.unity3d.com/2020.1/Documentation/Manual/GettingStartedInstallingHub.html (accessed on 25 February 2023).

- Anime Wallpapers. Available online: https://konachan.com/ (accessed on 25 February 2023).

- The MNIST Database of Handwritten Digits. Available online: http://yann.lecun.com/exdb/mnist/ (accessed on 13 March 2023).

- Kaplun, V.; Shevlyakov, A. Contour Pattern Recognition with MNIST Dataset. In Proceedings of the Dynamics of Systems, Mechanisms and Machines (Dynamics), Omsk, Russia, 15–17 November 2022; pp. 1–3.

- Nocentini, O.; Kim, J.; Bashir, M.Z.; Cavallo, F. Image classification using multiple convolutional neural networks on the Fashion-MNIST dataset. Sensors 2022, 22, 9544.

- How to Develop a CNN for MNIST Handwritten Digit Classification. Available online: https://machinelearningmastery.com/how-to-develop-a-convolutional-neural-network-from-scratch-for-mnist-handwritten-digit-classification/ (accessed on 26 February 2023).

- Lu, C.; Xu, L.; Jia, J. Contrast preserving decolorization. In Proceedings of the IEEE International Conference on Computational Photography (ICCP), Seattle, WA, USA, 28–29 April 2012.

- Jolliffe, I.T.; Cadima, J. Principal component analysis: A review and recent developments. Phil. Trans. R. Soc. A 2016, 374, 20150202.

- Baldi, P. Autoencoders, unsupervised learning, and deep architectures. J. Mach. Learn. Res. 2012, 27, 37–50.

- Balodi, T. 3 Difference Between PCA and Autoencoder with Python Code. Available online: https://www.analyticssteps.com/blogs/3-difference-between-pca-and-autoencoder-python-code (accessed on 26 February 2023).

- Ding, M. The road from MLE to EM to VAE: A brief tutorial. AI Open 2022, 3, 29–34.

- Difference Between a Batch and an Epoch in a Neural Network. Available online: https://machinelearningmastery.com/difference-between-a-batch-and-an-epoch/ (accessed on 26 February 2023).

- Roy, K.; Ishmam, A.; Taher, K.A. Demand forecasting in smart grid using long short-term memory. In Proceedings of the 2021 International Conference on Automation, Control and Mechatronics for Industry 4.0 (ACMI), Rajshahi, Bangladesh, 8–9 July 2021; pp. 1–5.

- Jawahar, M.; Anbarasi, L.J.; Ravi, V.; Prassanna, J.; Graceline Jasmine, S.; Manikandan, R.; Sekaran, R.; Kannan, S. CovMnet–Deep Learning Model for classifying Coronavirus (COVID-19). Health Technol. 2022, 12, 1009–1024.

- Gupta, S.; Porwal, R. Combining Laplacian and Sobel gradient for greater sharpening. IJIVP 2016, 6, 1239–1243.

- Ul Din, S.; Mak, H.W.L. Retrieval of Land-Use/Land Cover Change (LUCC) maps and urban expansion dynamics of Hyderabad, Pakistan via Landsat datasets and support vector machine framework. Remote Sens. 2021, 13, 3337.

- Drouyer, S. VehSat: A large-scale dataset for vehicle detection in satellite images. In Proceedings of the IGARSS 2020—2020 IEEE International Geoscience and Remote Sensing Symposium, Waikoloa, HI, USA, 26 September–2 October 2020; pp. 268–271.

- Wang, W.; Han, C.; Zhou, T.; Liu, D. Visual recognition with deep nearest centroids. In Proceedings of the Eleventh International Conference on Learning Representations (ICLR 2023), Kigali, Rwanda, 1–5 May 2023.

- Kalatzis, D.; Eklund, D.; Arvanitidis, G.; Hauberg, S. Variational autoencoders with Riemannian Brownian motion priors. In Proceedings of the 37th International Conference on Machine Learning, Online, 13–18 July 2020; Volume 119.

- Armi, L.; Fekri-Ershad, S. Texture image analysis and texture classification methods. Int. J. Image Process. Pattern Recognit. 2019, 2, 1–29.

- Scheunders, P.; Livens, S.; van-de-Wouwer, G.; Vautrot, P.; Van-Dyck, D. Wavelet-based texture analysis. Int. J. Comput. Sci. Inf. Manag. 1998, 1, 22–34.

- Arivazhagan, S.; Ganesan, L.; Kumar, T.S. Texture classification using ridgelet transform. Pattern Recognit. Lett. 2006, 27, 1875–1883.

- Idrissa, M.; Acheroy, M. Texture classification using Gabor filters. Pattern Recognit. Lett. 2002, 23, 1095–1102.

- González, A. Measurement of areas on a sphere using Fibonacci and latitude–longitude lattices. Math. Geosci. 2010, 42, 49–64.

- Cao, Z.; Liu, D.; Wang, Q.; Chen, Y. Towards unbiased label distribution learning for facial pose estimation using anisotropic spherical Gaussian. In Proceedings of the European Conference on Computer Vision (ECCV 2022), Tel Aviv, Israel, 23–27 October 2022.

- Xenopoulos, P.; Rulff, J.; Silva, C. ggViz: Accelerating large-scale esports game analysis. Proc. ACM Hum. Comput. Interact. 2022, 6, 238.

- ImageNet. Available online: https://www.image-net.org/ (accessed on 28 February 2023).

- Xie, D.; Cheng, J.; Tao, D. A new remote sensing image dataset for large-scale remote sensing detection. In Proceedings of the 2019 IEEE International Conference on Real-Time Computing and Robotics (RCAR), Irkutsk, Russia, 4–9 August 2019; pp. 153–157.

- Mak, H.W.L.; Laughner, J.L.; Fung, J.C.H.; Zhu, Q.; Cohen, R.C. Improved satellite retrieval of tropospheric NO2 column density via updating of Air Mass Factor (AMF): Case study of Southern China. Remote Sens. 2018, 10, 1789.

- Lin, Y.; Lv, F.; Zhu, S.; Yang, M.; Cour, T.; Yu, K.; Cao, L.; Huang, T. Large-scale image classification: Fast feature extraction and SVM training. In CVPR 2011; IEEE: New York, NY, USA, 2011; pp. 1689–1696.

- Biswal, A. What are Generative Adversarial Networks (GANs). Available online: https://www.simplilearn.com/tutorials/deep-learning-tutorial/generative-adversarial-networks-gans (accessed on 28 February 2023).

| Number of Epochs | Average Loss | Decrease in Average Loss with 1 More Epoch |
|---|---|---|
| 1 | 1259.3 | Not applicable |
| 2 | 1122.4 | 136.9 |
| 3 | 1091.5 | 30.9 |
| 4 | 1072.9 | 18.6 |
| 5 | 1059.7 | 13.2 |
| 6 | 1049.2 | 10.5 |
| 7 | 1041.1 | 8.1 |
| 8 | 1034.8 | 6.3 |
| 9 | 1029.8 | 5.0 |
| 10 | 1025.6 | 4.2 |
| 11 | 1021.9 | 3.7 |
| 12 | 1018.7 | 3.2 |
| 13 | 1015.5 | 3.2 |
| 14 | 1013.1 | 2.4 |
| 15 | 1010.7 | 2.4 |
| 16 | 1008.4 | 2.3 |
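The third column of the table above is the difference between the average loss at one epoch and at the next. As a minimal sketch, the column can be recomputed from the second one as follows; the helper name `loss_decreases` is hypothetical and not from the paper, and the loss values are copied from the table.

```python
# Average loss for epochs 1..16, copied from the table above.
average_loss = [
    1259.3, 1122.4, 1091.5, 1072.9, 1059.7, 1049.2, 1041.1, 1034.8,
    1029.8, 1025.6, 1021.9, 1018.7, 1015.5, 1013.1, 1010.7, 1008.4,
]


def loss_decreases(losses):
    """Hypothetical helper: drop in loss from each epoch to the next.

    Results are rounded to one decimal place to match the table's precision.
    """
    return [round(prev - curr, 1) for prev, curr in zip(losses, losses[1:])]


decreases = loss_decreases(average_loss)

# The first decrease belongs to epoch 2, since epoch 1 has no predecessor.
for epoch, drop in enumerate(decreases, start=2):
    print(f"epoch {epoch}: average loss decreased by {drop}")
```

The shrinking differences give a quick way to judge where additional epochs stop paying off, without needing to plot the loss curve.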

| Number of Epochs | Average Accuracy (%) |
|---|---|
| 3 | 29.7 |
| 5 | 57.2 |
| 8 | 69.7 |
| 10 | 69.9 |
| 20 | 74.3 |
| 30 | 80.7 |
| 40 | 83.7 |
| 50 | 85.4 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Mak, H.W.L.; Han, R.; Yin, H.H.F. Application of Variational AutoEncoder (VAE) Model and Image Processing Approaches in Game Design. Sensors 2023, 23, 3457. https://doi.org/10.3390/s23073457
