Tactile-Sensing Technologies: Trends, Challenges and Outlook in Agri-Food Manipulation

Abstract

1. Introduction

- Context of tactile sensation research: We provide a comprehensive overview of the tactile sensation technologies (Section 2) and core algorithms (Section 3) used in robotics and automation research. This discussion gives the reader a solid foundation for understanding the current state of the art in the field.

- Tactile sensation in agri-food: Our review focuses on the application of tactile sensation research specifically in the agri-food domain, which has not been covered in other tactile sensation review papers. In Section 4, we present a concise yet thorough examination of the current research on tactile sensation applied to various aspects of agri-food, highlighting its significance and potential impact.

- Systematic review of shortcomings and challenges: We contribute a systematic review of the shortcomings and challenges of existing tactile sensor technologies in agri-food use cases. This critical assessment, presented in Section 5, addresses an aspect that has been largely disregarded in other review papers on tactile sensors [43,44,45,46,47,48,49].

2. Tactile-Sensing Technologies

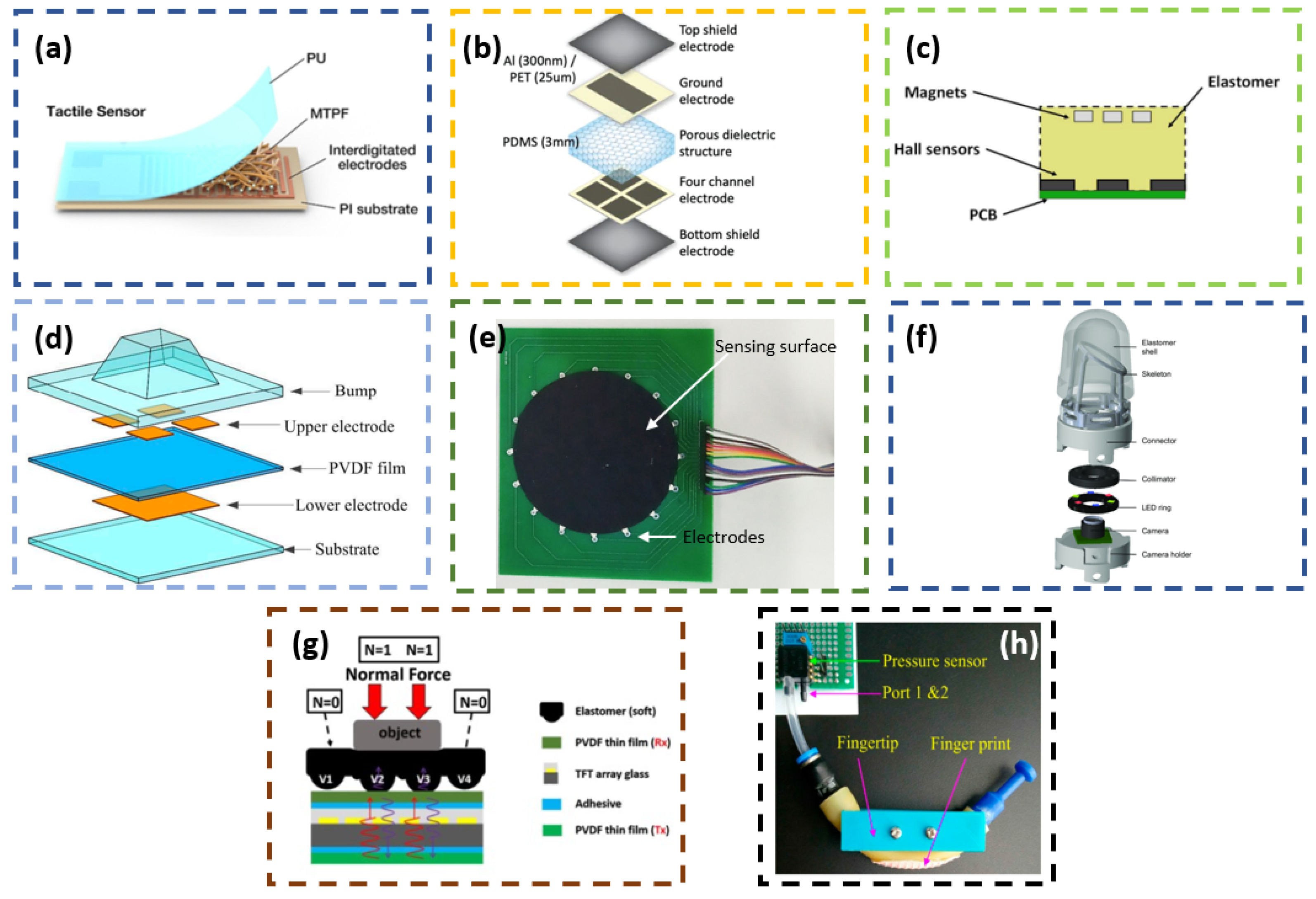

2.1. Transduction Methods

2.1.1. Resistive and Piezoresistive

2.1.2. Capacitive

2.1.3. Magnetic and Hall Effect

2.1.4. Piezoelectric

2.1.5. Electrical Impedance Tomography

2.1.6. Camera/Vision Based

2.1.7. Optic Fiber Based

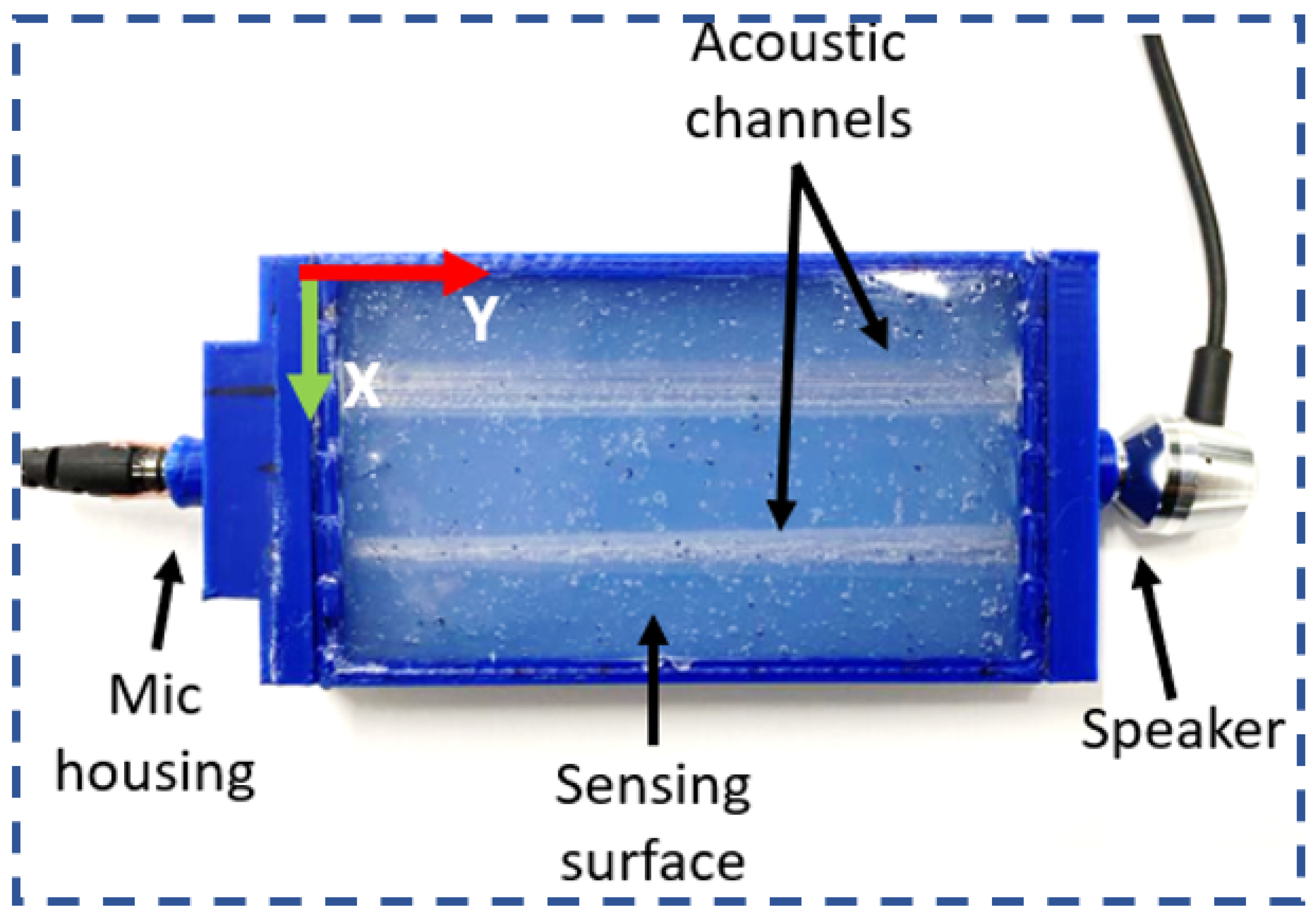

2.1.8. Acoustics

2.1.9. Fluid Based

2.1.10. Triboelectric

2.1.11. Combination of Various Methods

2.2. Tactile Features

2.2.1. Contact Force

2.2.2. Contact Location

2.2.3. Contact Deformation

2.2.4. Other Features

2.3. Advancements in Tactile Sensing

2.3.1. Low-Cost Tactile-Sensing Techniques

2.3.2. Self-Powered Tactile Sensors

2.3.3. Anti-Microbial Feature of Tactile Sensors

| Tactile Feature | Pr/Re | C | Cr | O | Ma | Pe | EIT | Ac | Tr | F | Com |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Normal Force | [101] | [82,89,102,103,104] | [32,56,57,84] | [70,105,106] | [53,90] | - | - | [71,91] | [77] | [76] | [78] |
| Shear Force | - | [82,87,104] | [84] | - | [53,90] | - | - | - | - | - | [78] |
| Tangential Force | - | [89,103] | - | - | - | - | - | - | - | - | - |
| Torque | - | [82,87] | [107] | - | [53] | - | - | - | - | - | [78] |
| Pressure | [81,88,108,109] | [87] | - | - | - | [110] | [55] | - | [111,112] | - | [79,80] |
| Vibration | - | - | - | - | - | - | - | - | - | [76] | [80] |
| Contact Location | [101] | - | [58,63,64,84] | [70,105] | [90] | - | [55] | [75,91,93] | - | - | [78,80] |
| Deformation/Object Shape/Geometry | [101] | - | [59,62,65,68,107,113] | [17,114] | - | - | - | [71,72,74] | - | [115] | [80] |
| Surface Texture | - | - | [60,63,116] | [117] | - | - | - | - | [77] | [76] | - |
| Pose/Orientation | - | - | [66,67] | - | - | - | - | - | - | - | - |
| Temperature | - | - | - | - | - | - | - | [93] | - | - | [79] |

3. Tactile Sensors in Robotics and Automation

3.1. Food Item Feature Extraction

3.2. Food Item Grasping

3.3. Food Item Identification

3.4. Selective Harvesting Motion Planning and Control

3.5. Food Preparation and Kitchen Robotics

3.6. Summary

4. Applications of Tactile Sensors in Agri-Food

4.1. Force Control

4.2. Robotic Grasping

4.3. Slip Detection

4.4. Texture Recognition

4.5. Compliance Control

4.6. Object Recognition

4.7. Three-Dimensional Shape Reconstruction

4.8. Haptic Feedback

4.9. Object Pushing

4.10. Summary

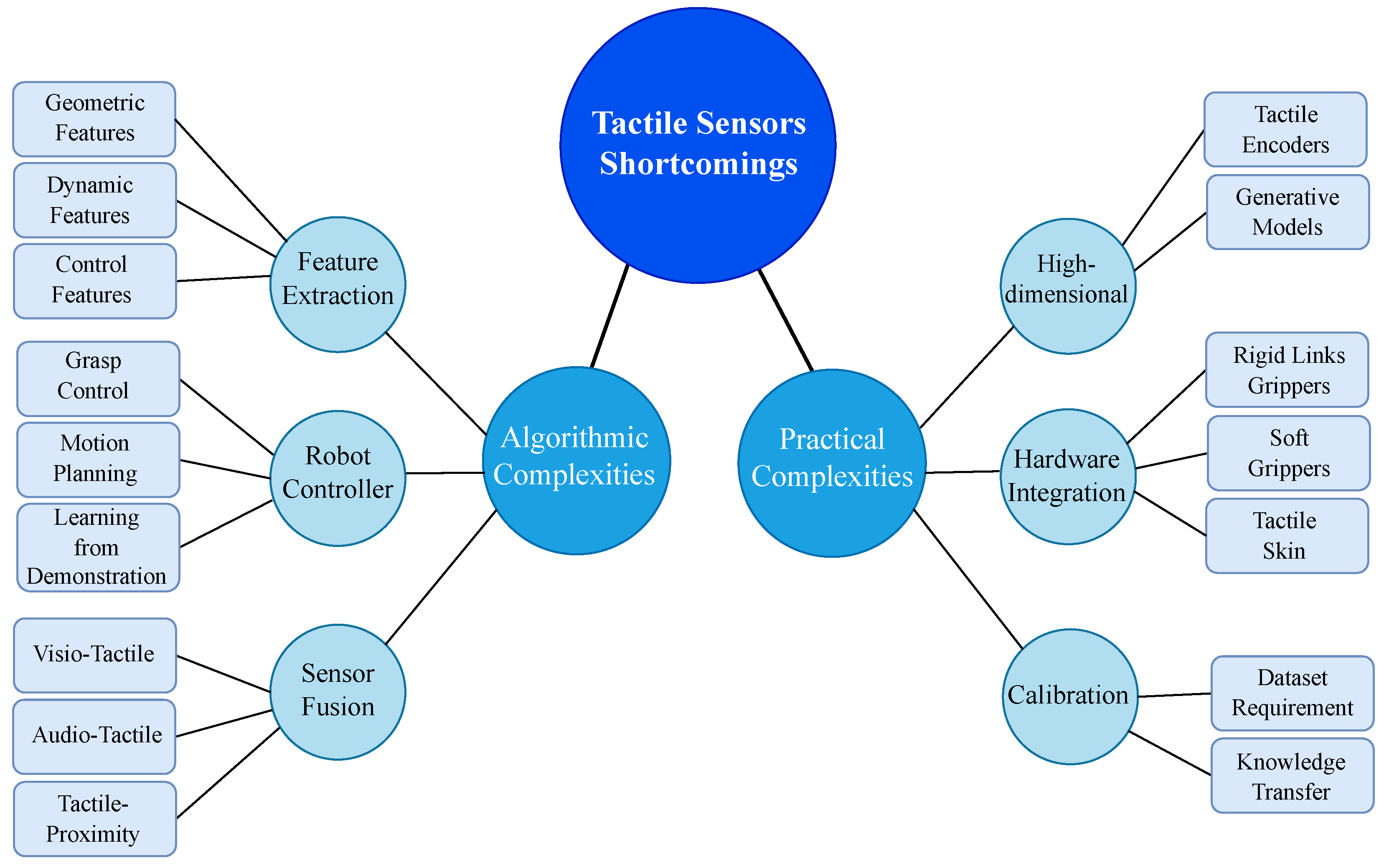

5. Complexities Associated with Tactile Sensors

5.1. Tactile Feature Extraction

5.1.1. Geometric Features

5.1.2. Dynamic Features

5.1.3. Controller Features

5.2. Robot Controller

5.2.1. Grasp Control

5.2.2. Motion Planning

5.2.3. Learning from Demonstration

5.3. Sensor Fusion

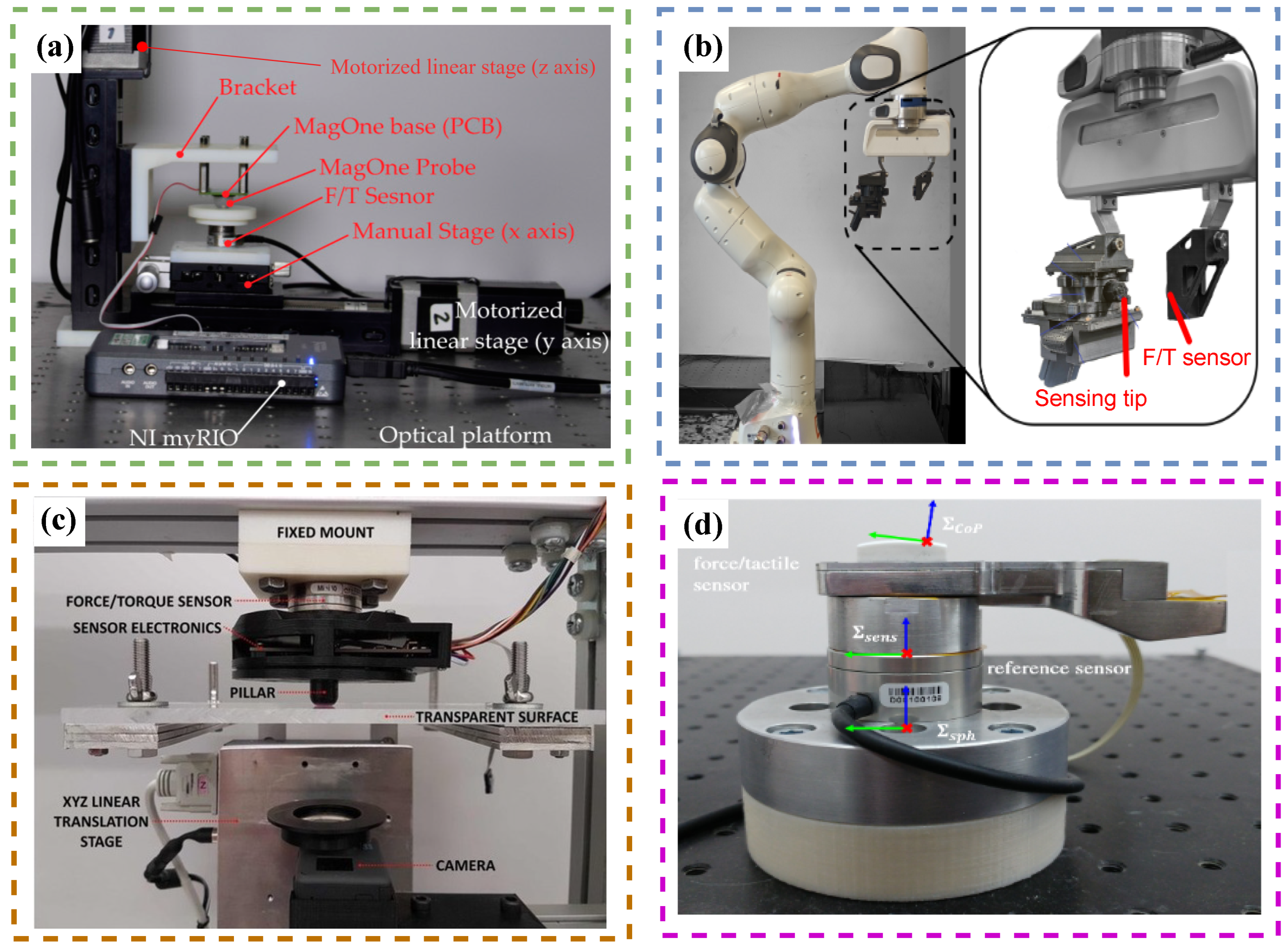

5.4. Sensor Calibration

5.5. The Curse of Dimensionality

5.6. Hardware Integration and Scalability

5.6.1. Rigid Links Grippers

5.6.2. Soft Grippers

5.6.3. Tactile Skin

6. Future Trends and Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Duckett, T.; Pearson, S.; Blackmore, S.; Grieve, B.; Chen, W.H.; Cielniak, G.; Cleaversmith, J.; Dai, J.; Davis, S.; Fox, C.; et al. Agricultural robotics: The future of robotic agriculture. arXiv 2018, arXiv:1806.06762.

- Zou, L.; Ge, C.; Wang, Z.J.; Cretu, E.; Li, X. Novel tactile sensor technology and smart tactile sensing systems: A review. Sensors 2017, 17, 2653.

- Dong, J.; Cong, Y.; Sun, G.; Zhang, T. Lifelong robotic visual-tactile perception learning. Pattern Recognit. 2022, 121, 108176.

- Zhang, Z.; Zhou, J.; Yan, Z.; Wang, K.; Mao, J.; Jiang, Z. Hardness recognition of fruits and vegetables based on tactile array information of manipulator. Comput. Electron. Agric. 2021, 181, 105959.

- Blanes, C.; Ortiz, C.; Mellado, M.; Beltrán, P. Assessment of eggplant firmness with accelerometers on a pneumatic robot gripper. Comput. Electron. Agric. 2015, 113, 44–50.

- Ramirez-Amaro, K.; Dean-Leon, E.; Bergner, F.; Cheng, G. A semantic-based method for teaching industrial robots new tasks. KI-Künstliche Intelligenz 2019, 33, 117–122.

- Dean-Leon, E.; Pierce, B.; Bergner, F.; Mittendorfer, P.; Ramirez-Amaro, K.; Burger, W.; Cheng, G. TOMM: Tactile omnidirectional mobile manipulator. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 2441–2447.

- Scimeca, L.; Maiolino, P.; Cardin-Catalan, D.; del Pobil, A.P.; Morales, A.; Iida, F. Non-destructive robotic assessment of mango ripeness via multi-point soft haptics. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 1821–1826.

- Ribeiro, P.; Cardoso, S.; Bernardino, A.; Jamone, L. Fruit quality control by surface analysis using a bio-inspired soft tactile sensor. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Las Vegas, NV, USA, 24 October 2020–24 January 2021; pp. 8875–8881.

- Maharshi, V.; Sharma, S.; Prajesh, R.; Das, S.; Agarwal, A.; Mitra, B. A Novel Sensor for Fruit Ripeness Estimation Using Lithography Free Approach. IEEE Sens. J. 2022, 22, 22192–22199.

- Cortés, V.; Blanes, C.; Blasco, J.; Ortiz, C.; Aleixos, N.; Mellado, M.; Cubero, S.; Talens, P. Integration of simultaneous tactile sensing and visible and near-infrared reflectance spectroscopy in a robot gripper for mango quality assessment. Biosyst. Eng. 2017, 162, 112–123.

- Blanes, C.; Mellado, M.; Beltrán, P. Tactile sensing with accelerometers in prehensile grippers for robots. Mechatronics 2016, 33, 1–12.

- Zhang, J.; Lai, S.; Yu, H.; Wang, E.; Wang, X.; Zhu, Z. Fruit Classification Utilizing a Robotic Gripper with Integrated Sensors and Adaptive Grasping. Math. Probl. Eng. 2021, 2021, 7157763.

- Li, N.; Yin, Z.; Zhang, W.; Xing, C.; Peng, T.; Meng, B.; Yang, J.; Peng, Z. A triboelectric-inductive hybrid tactile sensor for highly accurate object recognition. Nano Energy 2022, 96, 107063.

- Riffo, V.; Pieringer, C.; Flores, S.; Carrasco, C. Object recognition using tactile sensing in a robotic gripper. Insight-Non-Destr. Test. Cond. Monit. 2022, 64, 383–392.

- Li, G.; Zhu, R. A multisensory tactile system for robotic hands to recognize objects. Adv. Mater. Technol. 2019, 4, 1900602.

- Lyu, C.; Xiao, Y.; Deng, Y.; Chang, X.; Yang, B.; Tian, J.; Jin, J. Tactile recognition technology based on Multi-channel fiber optical sensing system. Measurement 2023, 216, 112906.

- Cook, J.N.; Sabarwal, A.; Clewer, H.; Navaraj, W. Tactile sensor array laden 3D-printed soft robotic gripper. In Proceedings of the 2020 IEEE SENSORS, Las Vegas, NV, USA, 25–29 October 2020; pp. 1–4.

- Liu, S.Q.; Adelson, E.H. Gelsight fin ray: Incorporating tactile sensing into a soft compliant robotic gripper. In Proceedings of the 2022 IEEE 5th International Conference on Soft Robotics (RoboSoft), Edinburgh, UK, 4–8 April 2022; pp. 925–931.

- Hohimer, C.J.; Petrossian, G.; Ameli, A.; Mo, C.; Pötschke, P. 3D printed conductive thermoplastic polyurethane/carbon nanotube composites for capacitive and piezoresistive sensing in soft pneumatic actuators. Addit. Manuf. 2020, 34, 101281.

- Zhou, H.; Wang, X.; Kang, H.; Chen, C. A Tactile-enabled Grasping Method for Robotic Fruit Harvesting. arXiv 2021, arXiv:2110.09051.

- Zhou, H.; Kang, H.; Wang, X.; Au, W.; Wang, M.Y.; Chen, C. Branch interference sensing and handling by tactile enabled robotic apple harvesting. Agronomy 2023, 13, 503.

- Yamaguchi, A.; Atkeson, C.G. Tactile behaviors with the vision-based tactile sensor FingerVision. Int. J. Hum. Robot. 2019, 16, 1940002.

- Dischinger, L.M.; Cravetz, M.; Dawes, J.; Votzke, C.; VanAtter, C.; Johnston, M.L.; Grimm, C.M.; Davidson, J.R. Towards intelligent fruit picking with in-hand sensing. In Proceedings of the 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, 27 September–1 October 2021; pp. 3285–3291.

- Zhou, H.; Xiao, J.; Kang, H.; Wang, X.; Au, W.; Chen, C. Learning-based slip detection for robotic fruit grasping and manipulation under leaf interference. Sensors 2022, 22, 5483.

- Tian, G.; Zhou, J.; Gu, B. Slipping detection and control in gripping fruits and vegetables for agricultural robot. Int. J. Agric. Biol. Eng. 2018, 11, 45–51.

- Misimi, E.; Olofsson, A.; Eilertsen, A.; Øye, E.R.; Mathiassen, J.R. Robotic handling of compliant food objects by robust learning from demonstration. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 6972–6979.

- Schuetz, C.; Pfaff, J.; Sygulla, F.; Rixen, D.; Ulbrich, H. Motion planning for redundant manipulators in uncertain environments based on tactile feedback. In Proceedings of the 2015 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Hamburg, Germany, 28 September–3 October 2015; pp. 6387–6394.

- Nazari, K.; Gandolfi, G.; Talebpour, Z.; Rajendran, V.; Rocco, P.; Ghalamzan E, A. Deep Functional Predictive Control for Strawberry Cluster Manipulation using Tactile Prediction. arXiv 2023, arXiv:2303.05393.

- Tsuchiya, Y.; Kiyokawa, T.; Ricardez, G.A.G.; Takamatsu, J.; Ogasawara, T. Pouring from deformable containers using dual-arm manipulation and tactile sensing. In Proceedings of the 2019 Third IEEE International Conference on Robotic Computing (IRC), Naples, Italy, 25–27 February 2019; pp. 357–362.

- Wang, X.d.; Sun, Y.h.; Wang, Y.; Hu, T.j.; Chen, M.h.; He, B. Artificial tactile sense technique for predicting beef tenderness based on FS pressure sensor. J. Bionic Eng. 2009, 6, 196–201.

- Yamaguchi, A.; Atkeson, C.G. Combining finger vision and optical tactile sensing: Reducing and handling errors while cutting vegetables. In Proceedings of the 2016 IEEE-RAS 16th International Conference on Humanoid Robots (Humanoids), Cancun, Mexico, 15–17 November 2016; pp. 1045–1051.

- Zhang, K.; Sharma, M.; Veloso, M.; Kroemer, O. Leveraging multimodal haptic sensory data for robust cutting. In Proceedings of the 2019 IEEE-RAS 19th International Conference on Humanoid Robots (Humanoids), Toronto, ON, Canada, 15–17 October 2019; pp. 409–416.

- Shimonomura, K.; Chang, T.; Murata, T. Detection of Foreign Bodies in Soft Foods Employing Tactile Image Sensor. Front. Robot. AI 2021, 8, 774080.

- DelPreto, J.; Liu, C.; Luo, Y.; Foshey, M.; Li, Y.; Torralba, A.; Matusik, W.; Rus, D. ActionSense: A multimodal dataset and recording framework for human activities using wearable sensors in a kitchen environment. Adv. Neural Inf. Process. Syst. 2022, 35, 13800–13813.

- Baldini, G.; Albini, A.; Maiolino, P.; Cannata, G. An Atlas for the Inkjet Printing of Large-Area Tactile Sensors. Sensors 2022, 22, 2332.

- Lin, W.; Wang, B.; Peng, G.; Shan, Y.; Hu, H.; Yang, Z. Skin-inspired piezoelectric tactile sensor array with crosstalk-free row+column electrodes for spatiotemporally distinguishing diverse stimuli. Adv. Sci. 2021, 8, 2002817.

- Mattens, F. The sense of touch: From tactility to tactual probing. Australas. J. Philos. 2017, 95, 688–701.

- Imami, D.; Valentinov, V.; Skreli, E. Food safety and value chain coordination in the context of a transition economy: The role of agricultural cooperatives. Int. J. Commons 2021, 15, 21–34.

- Mostafidi, M.; Sanjabi, M.R.; Shirkhan, F.; Zahedi, M.T. A review of recent trends in the development of the microbial safety of fruits and vegetables. Trends Food Sci. Technol. 2020, 103, 321–332.

- Žuntar, I.; Petric, Z.; Bursać Kovačević, D.; Putnik, P. Safety of probiotics: Functional fruit beverages and nutraceuticals. Foods 2020, 9, 947.

- Fleetwood, J.; Rahman, S.; Holland, D.; Millson, D.; Thomson, L.; Poppy, G. As clean as they look? Food hygiene inspection scores, microbiological contamination, and foodborne illness. Food Control 2019, 96, 76–86.

- Tabrik, S.; Behroozi, M.; Schlaffke, L.; Heba, S.; Lenz, M.; Lissek, S.; Güntürkün, O.; Dinse, H.R.; Tegenthoff, M. Visual and tactile sensory systems share common features in object recognition. eNeuro 2021, 8.

- Smith, E.; Calandra, R.; Romero, A.; Gkioxari, G.; Meger, D.; Malik, J.; Drozdzal, M. 3d shape reconstruction from vision and touch. Adv. Neural Inf. Process. Syst. 2020, 33, 14193–14206.

- Kappassov, Z.; Corrales, J.A.; Perdereau, V. Tactile sensing in dexterous robot hands. Robot. Auton. Syst. 2015, 74, 195–220.

- Hu, Z.; Lin, L.; Lin, W.; Xu, Y.; Xia, X.; Peng, Z.; Sun, Z.; Wang, Z. Machine Learning for Tactile Perception: Advancements, Challenges, and Opportunities. Adv. Intell. Syst. 2023, 2200371.

- Chi, C.; Sun, X.; Xue, N.; Li, T.; Liu, C. Recent progress in technologies for tactile sensors. Sensors 2018, 18, 948.

- Zhu, Y.; Liu, Y.; Sun, Y.; Zhang, Y.; Ding, G. Recent advances in resistive sensor technology for tactile perception: A review. IEEE Sens. J. 2022, 22, 15635–15649.

- Peng, Y.; Yang, N.; Xu, Q.; Dai, Y.; Wang, Z. Recent advances in flexible tactile sensors for intelligent systems. Sensors 2021, 21, 5392.

- Wei, Y.; Xu, Q. An overview of micro-force sensing techniques. Sens. Actuators A Phys. 2015, 234, 359–374.

- Tiwana, M.I.; Redmond, S.J.; Lovell, N.H. A review of tactile sensing technologies with applications in biomedical engineering. Sens. Actuators A Phys. 2012, 179, 17–31.

- Wang, C.; Dong, L.; Peng, D.; Pan, C. Tactile sensors for advanced intelligent systems. Adv. Intell. Syst. 2019, 1, 1900090.

- Rehan, M.; Saleem, M.M.; Tiwana, M.I.; Shakoor, R.I.; Cheung, R. A Soft Multi-Axis High Force Range Magnetic Tactile Sensor for Force Feedback in Robotic Surgical Systems. Sensors 2022, 22, 3500.

- Soleimani, M.; Friedrich, M. E-skin using fringing field electrical impedance tomography with an ionic liquid domain. Sensors 2022, 22, 5040.

- Wu, H.; Zheng, B.; Wang, H.; Ye, J. New Flexible Tactile Sensor Based on Electrical Impedance Tomography. Micromachines 2022, 13, 185.

- Fang, B.; Sun, F.; Yang, C.; Xue, H.; Chen, W.; Zhang, C.; Guo, D.; Liu, H. A dual-modal vision-based tactile sensor for robotic hand grasping. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; pp. 4740–4745.

- Zhang, T.; Cong, Y.; Li, X.; Peng, Y. Robot tactile sensing: Vision based tactile sensor for force perception. In Proceedings of the 2018 IEEE 8th Annual International Conference on CYBER Technology in Automation, Control, and Intelligent Systems (CYBER), Tianjin, China, 19–23 July 2018; pp. 1360–1365.

- Ward-Cherrier, B.; Pestell, N.; Cramphorn, L.; Winstone, B.; Giannaccini, M.E.; Rossiter, J.; Lepora, N.F. The tactip family: Soft optical tactile sensors with 3d-printed biomimetic morphologies. Soft Robot. 2018, 5, 216–227.

- Lin, X.; Wiertlewski, M. Sensing the frictional state of a robotic skin via subtractive color mixing. IEEE Robot. Autom. Lett. 2019, 4, 2386–2392.

- Ward-Cherrier, B.; Pestell, N.; Lepora, N.F. Neurotac: A neuromorphic optical tactile sensor applied to texture recognition. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 2654–2660.

- Sferrazza, C.; D’Andrea, R. Design, motivation and evaluation of a full-resolution optical tactile sensor. Sensors 2019, 19, 928.

- Do, W.K.; Kennedy, M. DenseTact: Optical tactile sensor for dense shape reconstruction. In Proceedings of the 2022 International Conference on Robotics and Automation (ICRA), Philadelphia, PA, USA, 23–27 May 2022; pp. 6188–6194.

- Li, R.; Platt, R.; Yuan, W.; ten Pas, A.; Roscup, N.; Srinivasan, M.A.; Adelson, E. Localization and manipulation of small parts using gelsight tactile sensing. In Proceedings of the 2014 IEEE/RSJ International Conference on Intelligent Robots and Systems, Chicago, IL, USA, 14–18 September 2014; pp. 3988–3993.

- Gomes, D.F.; Luo, S. GelTip tactile sensor for dexterous manipulation in clutter. In Tactile Sensing, Skill Learning, and Robotic Dexterous Manipulation; Elsevier: Amsterdam, The Netherlands, 2022; pp. 3–21.

- Romero, B.; Veiga, F.; Adelson, E. Soft, round, high resolution tactile fingertip sensors for dexterous robotic manipulation. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 4796–4802.

- Padmanabha, A.; Ebert, F.; Tian, S.; Calandra, R.; Finn, C.; Levine, S. Omnitact: A multi-directional high-resolution touch sensor. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 618–624.

- Alspach, A.; Hashimoto, K.; Kuppuswamy, N.; Tedrake, R. Soft-bubble: A highly compliant dense geometry tactile sensor for robot manipulation. In Proceedings of the 2019 2nd IEEE International Conference on Soft Robotics (RoboSoft), Seoul, Republic of Korea, 14–18 April 2019; pp. 597–604.

- Lambeta, M.; Chou, P.W.; Tian, S.; Yang, B.; Maloon, B.; Most, V.R.; Stroud, D.; Santos, R.; Byagowi, A.; Kammerer, G.; et al. Digit: A novel design for a low-cost compact high-resolution tactile sensor with application to in-hand manipulation. IEEE Robot. Autom. Lett. 2020, 5, 3838–3845.

- Trueeb, C.; Sferrazza, C.; D’Andrea, R. Towards vision-based robotic skins: A data-driven, multi-camera tactile sensor. In Proceedings of the 2020 3rd IEEE International Conference on Soft Robotics (RoboSoft), New Haven, CT, USA, 15 May–15 July 2020; pp. 333–338.

- Kappassov, Z.; Baimukashev, D.; Kuanyshuly, Z.; Massalin, Y.; Urazbayev, A.; Varol, H.A. Color-Coded Fiber-Optic Tactile Sensor for an Elastomeric Robot Skin. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 2146–2152.

- Chuang, C.H.; Weng, H.K.; Chen, J.W.; Shaikh, M.O. Ultrasonic tactile sensor integrated with TFT array for force feedback and shape recognition. Sens. Actuators A Phys. 2018, 271, 348–355.

- Shinoda, H.; Ando, S. A tactile sensor with 5-D deformation sensing element. In Proceedings of the IEEE International Conference on Robotics and Automation, Minneapolis, MN, USA, 22–28 April 1996; Volume 1, pp. 7–12.

- Ando, S.; Shinoda, H. Ultrasonic emission tactile sensing. IEEE Control Syst. Mag. 1995, 15, 61–69.

- Ando, S.; Shinoda, H.; Yonenaga, A.; Terao, J. Ultrasonic six-axis deformation sensing. IEEE Trans. Ultrason. Ferroelectr. Freq. Control 2001, 48, 1031–1045.

- Shinoda, H.; Ando, S. Ultrasonic emission tactile sensor for contact localization and characterization. In Proceedings of the 1994 IEEE International Conference on Robotics and Automation, San Diego, CA, USA, 8–13 May 1994; pp. 2536–2543.

- Gong, D.; He, R.; Yu, J.; Zuo, G. A pneumatic tactile sensor for co-operative robots. Sensors 2017, 17, 2592.

- Yao, G.; Xu, L.; Cheng, X.; Li, Y.; Huang, X.; Guo, W.; Liu, S.; Wang, Z.L.; Wu, H. Bioinspired triboelectric nanogenerators as self-powered electronic skin for robotic tactile sensing. Adv. Funct. Mater. 2020, 30, 1907312.

- Lu, Z.; Gao, X.; Yu, H. GTac: A biomimetic tactile sensor with skin-like heterogeneous force feedback for robots. IEEE Sens. J. 2022, 22, 14491–14500.

- Ma, M.; Zhang, Z.; Zhao, Z.; Liao, Q.; Kang, Z.; Gao, F.; Zhao, X.; Zhang, Y. Self-powered flexible antibacterial tactile sensor based on triboelectric-piezoelectric-pyroelectric multi-effect coupling mechanism. Nano Energy 2019, 66, 104105.

- Park, K.; Yuk, H.; Yang, M.; Cho, J.; Lee, H.; Kim, J. A biomimetic elastomeric robot skin using electrical impedance and acoustic tomography for tactile sensing. Sci. Robot. 2022, 7, eabm7187.

- Chang, K.; Guo, M.; Pu, L.; Dong, J.; Li, L.; Ma, P.; Huang, Y.; Liu, T. Wearable nanofibrous tactile sensors with fast response and wireless communication. Chem. Eng. J. 2023, 451, 138578.

- Ham, J.; Huh, T.M.; Kim, J.; Kim, J.O.; Park, S.; Cutkosky, M.R.; Bao, Z. Porous Dielectric Elastomer Based Flexible Multiaxial Tactile Sensor for Dexterous Robotic or Prosthetic Hands. Adv. Mater. Technol. 2023, 8, 2200903.

- Yu, P.; Liu, W.; Gu, C.; Cheng, X.; Fu, X. Flexible piezoelectric tactile sensor array for dynamic three-axis force measurement. Sensors 2016, 16, 819.

- Andrussow, I.; Sun, H.; Kuchenbecker, K.J.; Martius, G. Minsight: A Fingertip-Sized Vision-Based Tactile Sensor for Robotic Manipulation. Adv. Intell. Syst. 2023, 2300042.

- Yousef, H.; Boukallel, M.; Althoefer, K. Tactile sensing for dexterous in-hand manipulation in robotics—A review. Sens. Actuators A Phys. 2011, 167, 171–187.

- Dahiya, R.S.; Metta, G.; Valle, M.; Sandini, G. Tactile sensing—From humans to humanoids. IEEE Trans. Robot. 2009, 26, 1–20.

- Zheng, H.; Jin, Y.; Wang, H.; Zhao, P. DotView: A Low-Cost Compact Tactile Sensor for Pressure, Shear, and Torsion Estimation. IEEE Robot. Autom. Lett. 2023, 8, 880–887.

- Sygulla, F.; Ellensohn, F.; Hildebrandt, A.C.; Wahrmann, D.; Rixen, D. A flexible and low-cost tactile sensor for robotic applications. In Proceedings of the 2017 IEEE International Conference on Advanced Intelligent Mechatronics (AIM), Singapore, 29 May–3 June 2017; pp. 58–63.

- Yao, T.; Guo, X.; Li, C.; Qi, H.; Lin, H.; Liu, L.; Dai, Y.; Qu, L.; Huang, Z.; Liu, P.; et al. Highly sensitive capacitive flexible 3D-force tactile sensors for robotic grasping and manipulation. J. Phys. D Appl. Phys. 2020, 53, 445109.

- Yan, Y.; Hu, Z.; Yang, Z.; Yuan, W.; Song, C.; Pan, J.; Shen, Y. Soft magnetic skin for super-resolution tactile sensing with force self-decoupling. Sci. Robot. 2021, 6, eabc8801.

- Vishnu, R.S.; Mandil, W.; Parsons, S.; Ghalamzan E, A. Acoustic Soft Tactile Skin (AST Skin). arXiv 2023, arXiv:2303.17355.

- Stachowsky, M.; Hummel, T.; Moussa, M.; Abdullah, H.A. A slip detection and correction strategy for precision robot grasping. IEEE/ASME Trans. Mechatron. 2016, 21, 2214–2226.

- Wall, V.; Zöller, G.; Brock, O. Passive and Active Acoustic Sensing for Soft Pneumatic Actuators. arXiv 2022, arXiv:2208.10299.

- Zöller, G.; Wall, V.; Brock, O. Acoustic sensing for soft pneumatic actuators. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 6986–6991.

- Zöller, G.; Wall, V.; Brock, O. Active acoustic contact sensing for soft pneumatic actuators. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 7966–7972.

- Zhu, M.; Wang, Y.; Lou, M.; Yu, J.; Li, Z.; Ding, B. Bioinspired transparent and antibacterial electronic skin for sensitive tactile sensing. Nano Energy 2021, 81, 105669.

- Wang, S.; Xiang, J.; Sun, Y.; Wang, H.; Du, X.; Cheng, X.; Du, Z.; Wang, H. Skin-inspired nanofibrillated cellulose-reinforced hydrogels with high mechanical strength, long-term antibacterial, and self-recovery ability for wearable strain/pressure sensors. Carbohydr. Polym. 2021, 261, 117894.

- Cui, X.; Chen, J.; Wu, W.; Liu, Y.; Li, H.; Xu, Z.; Zhu, Y. Flexible and breathable all-nanofiber iontronic pressure sensors with ultraviolet shielding and antibacterial performances for wearable electronics. Nano Energy 2022, 95, 107022.

- Ippili, S.; Jella, V.; Lee, J.M.; Jung, J.S.; Lee, D.H.; Yang, T.Y.; Yoon, S.G. ZnO–PTFE-based antimicrobial, anti-reflective display coatings and high-sensitivity touch sensors. J. Mater. Chem. A 2022, 10, 22067–22079.

- Tian, X.; Hua, T. Antibacterial, scalable manufacturing, skin-attachable, and eco-friendly fabric triboelectric nanogenerators for self-powered sensing. ACS Sustain. Chem. Eng. 2021, 9, 13356–13366.

- Si, Z.; Yu, T.C.; Morozov, K.; McCann, J.; Yuan, W. RobotSweater: Scalable, Generalizable, and Customizable Machine-Knitted Tactile Skins for Robots. arXiv 2023, arXiv:2303.02858.

- Maslyczyk, A.; Roberge, J.P.; Duchaine, V.; Loan Le, T.H. A highly sensitive multimodal capacitive tactile sensor. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 407–412.

- Xu, D.; Hu, B.; Zheng, G.; Wang, J.; Li, C.; Zhao, Y.; Yan, Z.; Jiao, Z.; Wu, Y.; Wang, M.; et al. Sandwich-like flexible tactile sensor based on bioinspired honeycomb dielectric layer for three-axis force detection and robotic application. J. Mater. Sci. Mater. Electron. 2023, 34, 942.

- Arshad, A.; Saleem, M.M.; Tiwana, M.I.; ur Rahman, H.; Iqbal, S.; Cheung, R. A high sensitivity and multi-axis fringing electric field based capacitive tactile force sensor for robot assisted surgery. Sens. Actuators A Phys. 2023, 354, 114272.

- Fujiwara, E.; de Oliveira Rosa, L. Agar-based soft tactile transducer with embedded optical fiber specklegram sensor. Results Opt. 2023, 10, 100345.

- Xie, H.; Jiang, A.; Seneviratne, L.; Althoefer, K. Pixel-based optical fiber tactile force sensor for robot manipulation. In Proceedings of the SENSORS, 2012 IEEE, Daegu, Republic of Korea, 22–25 October 2012; pp. 1–4.

- Althoefer, K.; Ling, Y.; Li, W.; Qian, X.; Lee, W.W.; Qi, P. A Miniaturised Camera-based Multi-Modal Tactile Sensor. arXiv 2023, arXiv:2303.03093.

- Kang, Z.; Li, X.; Zhao, X.; Wang, X.; Shen, J.; Wei, H.; Zhu, X. Piezo-Resistive Flexible Pressure Sensor by Blade-Coating Graphene–Silver Nanosheet–Polymer Nanocomposite. Nanomaterials 2023, 13, 4.

- Ohashi, H.; Yasuda, T.; Kawasetsu, T.; Hosoda, K. Soft Tactile Sensors Having Two Channels With Different Slopes for Contact Position and Pressure Estimation. IEEE Sens. Lett. 2023, 7, 2000704.

- Sappati, K.K.; Bhadra, S. Flexible piezoelectric 0–3 PZT-PDMS thin film for tactile sensing. IEEE Sens. J. 2020, 20, 4610–4617.

- Lu, D.; Liu, T.; Meng, X.; Luo, B.; Yuan, J.; Liu, Y.; Zhang, S.; Cai, C.; Gao, C.; Wang, J.; et al. Wearable triboelectric visual sensors for tactile perception. Adv. Mater. 2023, 35, 2209117.

- Chang, K.B.; Parashar, P.; Shen, L.C.; Chen, A.R.; Huang, Y.T.; Pal, A.; Lim, K.C.; Wei, P.H.; Kao, F.C.; Hu, J.J.; et al. A triboelectric nanogenerator-based tactile sensor array system for monitoring pressure distribution inside prosthetic limb. Nano Energy 2023, 111, 108397.

- Hu, J.; Cui, S.; Wang, S.; Zhang, C.; Wang, R.; Chen, L.; Li, Y. GelStereo Palm: A Novel Curved Visuotactile Sensor for 3D Geometry Sensing. IEEE Trans. Ind. Inf. 2023; early access.

- Sepehri, A.; Helisaz, H.; Chiao, M. A fiber Bragg grating tactile sensor for soft material characterization based on quasi linear viscoelastic analysis. Sens. Actuators A Phys. 2023, 349, 114079.

- Jenkinson, G.P.; Conn, A.T.; Tzemanaki, A. ESPRESS.0: Eustachian Tube-Inspired Tactile Sensor Exploiting Pneumatics for Range Extension and SenSitivity Tuning. Sensors 2023, 23, 567.

- Cao, G.; Jiang, J.; Lu, C.; Gomes, D.F.; Luo, S. TouchRoller: A Rolling Optical Tactile Sensor for Rapid Assessment of Textures for Large Surface Areas. Sensors 2023, 23, 2661.

- Peyre, K.; Tourlonias, M.; Bueno, M.A.; Spano, F.; Rossi, R.M. Tactile perception of textile surfaces from an artificial finger instrumented by a polymeric optical fibre. Tribol. Int. 2019, 130, 155–169.

- Kootstra, G.; Wang, X.; Blok, P.M.; Hemming, J.; Van Henten, E. Selective harvesting robotics: Current research, trends, and future directions. Curr. Robot. Rep. 2021, 2, 95–104.

- Ishikawa, R.; Hamaya, M.; Von Drigalski, F.; Tanaka, K.; Hashimoto, A. Learning by Breaking: Food Fracture Anticipation for Robotic Food Manipulation. IEEE Access 2022, 10, 99321–99329.

- Drimus, A.; Kootstra, G.; Bilberg, A.; Kragic, D. Design of a flexible tactile sensor for classification of rigid and deformable objects. Robot. Auton. Syst. 2014, 62, 3–15.

- Mandil, W.; Ghalamzan-E, A. Combining Vision and Tactile Sensation for Video Prediction. arXiv 2023, arXiv:2304.11193. [Google Scholar]

- Johansson, R.S.; Flanagan, J.R. Coding and use of tactile signals from the fingertips in object manipulation tasks. Nat. Rev. Neurosci. 2009, 10, 345–359. [Google Scholar] [CrossRef] [PubMed]

- Deng, Z.; Jonetzko, Y.; Zhang, L.; Zhang, J. Grasping force control of multi-fingered robotic hands through tactile sensing for object stabilization. Sensors 2020, 20, 1050. [Google Scholar] [CrossRef] [PubMed]

- Jara, C.A.; Pomares, J.; Candelas, F.A.; Torres, F. Control framework for dexterous manipulation using dynamic visual servoing and tactile sensors’ feedback. Sensors 2014, 14, 1787–1804. [Google Scholar] [CrossRef]

- Bicchi, A.; Kumar, V. Robotic grasping and contact: A review. In Proceedings of the 2000 ICRA, Millennium Conference, IEEE International Conference on Robotics and Automation, Symposia Proceedings (Cat. No. 00CH37065), San Francisco, CA, USA, 24–28 April 2000; Volume 1, pp. 348–353. [Google Scholar]

- Bekiroglu, Y.; Laaksonen, J.; Jorgensen, J.A.; Kyrki, V.; Kragic, D. Assessing grasp stability based on learning and haptic data. IEEE Trans. Robot. 2011, 27, 616–629. [Google Scholar] [CrossRef]

- Lynch, P.; Cullinan, M.F.; McGinn, C. Adaptive grasping of moving objects through tactile sensing. Sensors 2021, 21, 8339. [Google Scholar] [CrossRef]

- Kroemer, O.; Daniel, C.; Neumann, G.; Van Hoof, H.; Peters, J. Towards learning hierarchical skills for multi-phase manipulation tasks. In Proceedings of the 2015 IEEE International Conference on Robotics and Automation (ICRA), Seattle, WA, USA, 26–30 May 2015; pp. 1503–1510. [Google Scholar]

- Kolamuri, R.; Si, Z.; Zhang, Y.; Agarwal, A.; Yuan, W. Improving grasp stability with rotation measurement from tactile sensing. In Proceedings of the 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, 27 September–1 October 2021; pp. 6809–6816. [Google Scholar]

- Hogan, F.R.; Bauza, M.; Canal, O.; Donlon, E.; Rodriguez, A. Tactile regrasp: Grasp adjustments via simulated tactile transformations. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 2963–2970. [Google Scholar]

- Mahler, J.; Liang, J.; Niyaz, S.; Laskey, M.; Doan, R.; Liu, X.; Ojea, J.A.; Goldberg, K. Dex-net 2.0: Deep learning to plan robust grasps with synthetic point clouds and analytic grasp metrics. arXiv 2017, arXiv:1703.09312. [Google Scholar]

- Kalashnikov, D.; Irpan, A.; Pastor, P.; Ibarz, J.; Herzog, A.; Jang, E.; Quillen, D.; Holly, E.; Kalakrishnan, M.; Vanhoucke, V.; et al. Qt-opt: Scalable deep reinforcement learning for vision-based robotic manipulation. arXiv 2018, arXiv:1806.10293. [Google Scholar]

- Matak, M.; Hermans, T. Planning Visual-Tactile Precision Grasps via Complementary Use of Vision and Touch. IEEE Robot. Autom. Lett. 2022, 8, 768–775. [Google Scholar] [CrossRef]

- Romeo, R.A.; Zollo, L. Methods and sensors for slip detection in robotics: A survey. IEEE Access 2020, 8, 73027–73050. [Google Scholar] [CrossRef]

- Chen, W.; Khamis, H.; Birznieks, I.; Lepora, N.F.; Redmond, S.J. Tactile sensors for friction estimation and incipient slip detection—Toward dexterous robotic manipulation: A review. IEEE Sens. J. 2018, 18, 9049–9064. [Google Scholar] [CrossRef]

- Yang, H.; Hu, X.; Cao, L.; Sun, F. A new slip-detection method based on pairwise high frequency components of capacitive sensor signals. In Proceedings of the 2015 5th International Conference on Information Science and Technology (ICIST), Kopaonik, Serbia, 8–11 March 2015; pp. 56–61. [Google Scholar]

- Romeo, R.A.; Oddo, C.M.; Carrozza, M.C.; Guglielmelli, E.; Zollo, L. Slippage detection with piezoresistive tactile sensors. Sensors 2017, 17, 1844. [Google Scholar] [CrossRef] [PubMed]

- Su, Z.; Hausman, K.; Chebotar, Y.; Molchanov, A.; Loeb, G.E.; Sukhatme, G.S.; Schaal, S. Force estimation and slip detection/classification for grip control using a biomimetic tactile sensor. In Proceedings of the 2015 IEEE-RAS 15th International Conference on Humanoid Robots (Humanoids), Seoul, Republic of Korea, 3–5 November 2015; pp. 297–303. [Google Scholar]

- Veiga, F.; Van Hoof, H.; Peters, J.; Hermans, T. Stabilizing novel objects by learning to predict tactile slip. In Proceedings of the 2015 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Hamburg, Germany, 28 September–3 October 2015; pp. 5065–5072. [Google Scholar]

- James, J.W.; Pestell, N.; Lepora, N.F. Slip detection with a biomimetic tactile sensor. IEEE Robot. Autom. Lett. 2018, 3, 3340–3346. [Google Scholar] [CrossRef]

- Kaboli, M.; Yao, K.; Cheng, G. Tactile-based manipulation of deformable objects with dynamic center of mass. In Proceedings of the 2016 IEEE-RAS 16th International Conference on Humanoid Robots (Humanoids), Cancun, Mexico, 15–17 November 2016; pp. 752–757. [Google Scholar]

- Van Wyk, K.; Falco, J. Calibration and analysis of tactile sensors as slip detectors. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; pp. 2744–2751. [Google Scholar]

- Romano, J.M.; Hsiao, K.; Niemeyer, G.; Chitta, S.; Kuchenbecker, K.J. Human-inspired robotic grasp control with tactile sensing. IEEE Trans. Robot. 2011, 27, 1067–1079. [Google Scholar] [CrossRef]

- Hasegawa, H.; Mizoguchi, Y.; Tadakuma, K.; Ming, A.; Ishikawa, M.; Shimojo, M. Development of intelligent robot hand using proximity, contact and slip sensing. In Proceedings of the 2010 IEEE International Conference on Robotics and Automation, Anchorage, AK, USA, 3–7 May 2010; pp. 777–784. [Google Scholar]

- Li, J.; Dong, S.; Adelson, E. Slip detection with combined tactile and visual information. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; pp. 7772–7777. [Google Scholar]

- Zhang, Y.; Kan, Z.; Tse, Y.A.; Yang, Y.; Wang, M.Y. Fingervision tactile sensor design and slip detection using convolutional lstm network. arXiv 2018, arXiv:1810.02653. [Google Scholar]

- Garcia-Garcia, A.; Zapata-Impata, B.S.; Orts-Escolano, S.; Gil, P.; Garcia-Rodriguez, J. Tactilegcn: A graph convolutional network for predicting grasp stability with tactile sensors. In Proceedings of the 2019 International Joint Conference on Neural Networks (IJCNN), Budapest, Hungary, 14–19 July 2019; pp. 1–8. [Google Scholar]

- Mandil, W.; Nazari, K.; Ghalamzan E, A. Action conditioned tactile prediction: A case study on slip prediction. arXiv 2022, arXiv:2205.09430. [Google Scholar]

- Nazari, K.; Mandil, W.; Hanheide, M.; Esfahani, A.G. Tactile dynamic behaviour prediction based on robot action. In Proceedings of the Towards Autonomous Robotic Systems: 22nd Annual Conference, TAROS 2021, Lincoln, UK, 8–10 September 2021; Springer: Berlin/Heidelberg, Germany, 2021; pp. 284–293. [Google Scholar]

- Nazari, K.; Mandil, W.; Esfahani, A.M.G. Proactive slip control by learned slip model and trajectory adaptation. In Proceedings of the Conference on Robot Learning, PMLR, Auckland, New Zealand, 8 March 2023; pp. 751–761. [Google Scholar]

- Mayol-Cuevas, W.W.; Juarez-Guerrero, J.; Munoz-Gutierrez, S. A first approach to tactile texture recognition. In Proceedings of the SMC’98 Conference Proceedings, 1998 IEEE International Conference on Systems, Man, and Cybernetics (Cat. No. 98CH36218), San Diego, CA, USA, 14 October 1998; Volume 5, pp. 4246–4250. [Google Scholar]

- Muhammad, H.; Recchiuto, C.; Oddo, C.M.; Beccai, L.; Anthony, C.; Adams, M.; Carrozza, M.C.; Ward, M. A capacitive tactile sensor array for surface texture discrimination. Microelectron. Eng. 2011, 88, 1811–1813. [Google Scholar] [CrossRef]

- Drimus, A.; Petersen, M.B.; Bilberg, A. Object texture recognition by dynamic tactile sensing using active exploration. In Proceedings of the 2012 IEEE RO-MAN: The 21st IEEE International Symposium on Robot and Human Interactive Communication, Paris, France, 9–13 September 2012; pp. 277–283. [Google Scholar]

- Chun, S.; Kim, J.S.; Yoo, Y.; Choi, Y.; Jung, S.J.; Jang, D.; Lee, G.; Song, K.I.; Nam, K.S.; Youn, I.; et al. An artificial neural tactile sensing system. Nat. Electron. 2021, 4, 429–438. [Google Scholar] [CrossRef]

- Jamali, N.; Sammut, C. Material classification by tactile sensing using surface textures. In Proceedings of the 2010 IEEE International Conference on Robotics and Automation, Anchorage, AK, USA, 3–7 May 2010; pp. 2336–2341. [Google Scholar]

- Li, R.; Adelson, E.H. Sensing and recognizing surface textures using a gelsight sensor. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Portland, OR, USA, 23–28 June 2013; pp. 1241–1247. [Google Scholar]

- Song, Z.; Yin, J.; Wang, Z.; Lu, C.; Yang, Z.; Zhao, Z.; Lin, Z.; Wang, J.; Wu, C.; Cheng, J.; et al. A flexible triboelectric tactile sensor for simultaneous material and texture recognition. Nano Energy 2022, 93, 106798. [Google Scholar] [CrossRef]

- Luo, S.; Bimbo, J.; Dahiya, R.; Liu, H. Robotic tactile perception of object properties: A review. Mechatronics 2017, 48, 54–67. [Google Scholar] [CrossRef]

- Tsuji, S.; Kohama, T. Using a convolutional neural network to construct a pen-type tactile sensor system for roughness recognition. Sens. Actuators A Phys. 2019, 291, 7–12. [Google Scholar] [CrossRef]

- Gao, Y.; Hendricks, L.A.; Kuchenbecker, K.J.; Darrell, T. Deep learning for tactile understanding from visual and haptic data. In Proceedings of the 2016 IEEE International Conference on Robotics and Automation (ICRA), Stockholm, Sweden, 16–21 May 2016; pp. 536–543. [Google Scholar]

- Taunyazov, T.; Chua, Y.; Gao, R.; Soh, H.; Wu, Y. Fast texture classification using tactile neural coding and spiking neural network. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Las Vegas, NV, USA, 24 October 2020–24 January 2021; pp. 9890–9895. [Google Scholar]

- Luo, S.; Yuan, W.; Adelson, E.; Cohn, A.G.; Fuentes, R. Vitac: Feature sharing between vision and tactile sensing for cloth texture recognition. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; pp. 2722–2727. [Google Scholar]

- Howe, R.D. Tactile sensing and control of robotic manipulation. Adv. Robot. 1993, 8, 245–261. [Google Scholar] [CrossRef]

- Su, Z.; Fishel, J.A.; Yamamoto, T.; Loeb, G.E. Use of tactile feedback to control exploratory movements to characterize object compliance. Front. Neurorobot. 2012, 6, 7. [Google Scholar] [CrossRef] [PubMed]

- Dean-Leon, E.; Bergner, F.; Ramirez-Amaro, K.; Cheng, G. From multi-modal tactile signals to a compliant control. In Proceedings of the 2016 IEEE-RAS 16th International Conference on Humanoid Robots (Humanoids), Cancun, Mexico, 15–17 November 2016; pp. 892–898. [Google Scholar]

- Dean-Leon, E.; Guadarrama-Olvera, J.R.; Bergner, F.; Cheng, G. Whole-body active compliance control for humanoid robots with robot skin. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 5404–5410. [Google Scholar]

- Calandra, R.; Ivaldi, S.; Deisenroth, M.P.; Peters, J. Learning torque control in presence of contacts using tactile sensing from robot skin. In Proceedings of the 2015 IEEE-RAS 15th International Conference on Humanoid Robots (Humanoids), Seoul, Republic of Korea, 3–5 November 2015; pp. 690–695. [Google Scholar]

- Xu, D.; Loeb, G.E.; Fishel, J.A. Tactile identification of objects using Bayesian exploration. In Proceedings of the 2013 IEEE International Conference on Robotics and Automation, Karlsruhe, Germany, 6–10 May 2013; pp. 3056–3061. [Google Scholar]

- Goger, D.; Gorges, N.; Worn, H. Tactile sensing for an anthropomorphic robotic hand: Hardware and signal processing. In Proceedings of the 2009 IEEE International Conference on Robotics and Automation, Kobe, Japan, 12–17 May 2009; pp. 895–901. [Google Scholar]

- Pezzementi, Z.; Plaku, E.; Reyda, C.; Hager, G.D. Tactile-object recognition from appearance information. IEEE Trans. Robot. 2011, 27, 473–487. [Google Scholar] [CrossRef]

- Li, G.; Liu, S.; Wang, L.; Zhu, R. Skin-inspired quadruple tactile sensors integrated on a robot hand enable object recognition. Sci. Robot. 2020, 5, eabc8134. [Google Scholar] [CrossRef]

- Pastor, F.; García-González, J.; Gandarias, J.M.; Medina, D.; Closas, P.; García-Cerezo, A.J.; Gómez-de Gabriel, J.M. Bayesian and neural inference on lstm-based object recognition from tactile and kinesthetic information. IEEE Robot. Autom. Lett. 2020, 6, 231–238. [Google Scholar] [CrossRef]

- Yuan, W.; Dong, S.; Adelson, E.H. Gelsight: High-resolution robot tactile sensors for estimating geometry and force. Sensors 2017, 17, 2762. [Google Scholar] [CrossRef]

- Okamura, A.M.; Richard, C.; Cutkosky, M.R. Feeling is believing: Using a force-feedback joystick to teach dynamic systems. J. Eng. Educ. 2002, 91, 345–349. [Google Scholar] [CrossRef]

- Pacchierotti, C.; Sinclair, S.; Solazzi, M.; Frisoli, A.; Hayward, V.; Prattichizzo, D. Wearable haptic systems for the fingertip and the hand: Taxonomy, review, and perspectives. IEEE Trans. Haptics 2017, 10, 580–600. [Google Scholar] [CrossRef]

- Lee, M.H.; Nicholls, H.R. Tactile sensing for mechatronics: A state of the art survey. Mechatronics 1999, 9, 1–31. [Google Scholar] [CrossRef]

- Okamura, A.M. Haptic feedback in robot-assisted minimally invasive surgery. Curr. Opin. Urol. 2009, 19, 102. [Google Scholar] [CrossRef]

- Sun, Z.; Zhu, M.; Shan, X.; Lee, C. Augmented tactile-perception and haptic-feedback rings as human-machine interfaces aiming for immersive interactions. Nat. Commun. 2022, 13, 5224. [Google Scholar] [CrossRef] [PubMed]

- Tian, S.; Ebert, F.; Jayaraman, D.; Mudigonda, M.; Finn, C.; Calandra, R.; Levine, S. Manipulation by feel: Touch-based control with deep predictive models. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 818–824. [Google Scholar]

- Vouloutsi, V.; Cominelli, L.; Dogar, M.; Lepora, N.; Zito, C.; Martinez-Hernandez, U. Towards Living Machines: Current and future trends of tactile sensing, grasping, and social robotics. Bioinspir. Biomim. 2023, 18, 025002. [Google Scholar] [CrossRef]

- Siegrist, M.; Hartmann, C. Consumer acceptance of novel food technologies. Nat. Food 2020, 1, 343–350. [Google Scholar] [CrossRef] [PubMed]

- Lezoche, M.; Hernandez, J.E.; Díaz, M.d.M.E.A.; Panetto, H.; Kacprzyk, J. Agri-food 4.0: A survey of the supply chains and technologies for the future agriculture. Comput. Ind. 2020, 117, 103187. [Google Scholar] [CrossRef]

- Carmela Annosi, M.; Brunetta, F.; Capo, F.; Heideveld, L. Digitalization in the agri-food industry: The relationship between technology and sustainable development. Manag. Decis. 2020, 58, 1737–1757. [Google Scholar] [CrossRef]

- Miranda, J.; Ponce, P.; Molina, A.; Wright, P. Sensing, smart and sustainable technologies for Agri-Food 4.0. Comput. Ind. 2019, 108, 21–36. [Google Scholar] [CrossRef]

- Gandarias, J.M.; Garcia-Cerezo, A.J.; Gomez-de Gabriel, J.M. CNN-based methods for object recognition with high-resolution tactile sensors. IEEE Sens. J. 2019, 19, 6872–6882. [Google Scholar] [CrossRef]

- Platkiewicz, J.; Lipson, H.; Hayward, V. Haptic edge detection through shear. Sci. Rep. 2016, 6, 23551. [Google Scholar] [CrossRef]

- Parvizi-Fard, A.; Amiri, M.; Kumar, D.; Iskarous, M.M.; Thakor, N.V. A functional spiking neuronal network for tactile sensing pathway to process edge orientation. Sci. Rep. 2021, 11, 1320. [Google Scholar] [CrossRef]

- Yuan, X.; Zou, J.; Sun, L.; Liu, H.; Jin, G. Soft tactile sensor and curvature sensor for caterpillar-like soft robot’s adaptive motion. In Proceedings of the 2019 International Conference on Robotics, Intelligent Control and Artificial Intelligence, Long Beach, CA, USA, 9–15 June 2019; pp. 690–695. [Google Scholar]

- Luo, S.; Mou, W.; Althoefer, K.; Liu, H. Novel tactile-sift descriptor for object shape recognition. IEEE Sens. J. 2015, 15, 5001–5009. [Google Scholar] [CrossRef]

- Amirkhani, G.; Goodridge, A.; Esfandiari, M.; Phalen, H.; Ma, J.H.; Iordachita, I.; Armand, M. Design and Fabrication of a Fiber Bragg Grating Shape Sensor for Shape Reconstruction of a Continuum Manipulator. IEEE Sens. J. 2023, 23, 12915–12929. [Google Scholar] [CrossRef]

- Sotgiu, E.; Aguiam, D.E.; Calaza, C.; Rodrigues, J.; Fernandes, J.; Pires, B.; Moreira, E.E.; Alves, F.; Fonseca, H.; Dias, R.; et al. Surface texture detection with a new sub-mm resolution flexible tactile capacitive sensor array for multimodal artificial finger. J. Microelectromech. Syst. 2020, 29, 629–636. [Google Scholar] [CrossRef]

- Pang, Y.; Xu, X.; Chen, S.; Fang, Y.; Shi, X.; Deng, Y.; Wang, Z.L.; Cao, C. Skin-inspired textile-based tactile sensors enable multifunctional sensing of wearables and soft robots. Nano Energy 2022, 96, 107137. [Google Scholar] [CrossRef]

- Abd, M.A.; Paul, R.; Aravelli, A.; Bai, O.; Lagos, L.; Lin, M.; Engeberg, E.D. Hierarchical tactile sensation integration from prosthetic fingertips enables multi-texture surface recognition. Sensors 2021, 21, 4324. [Google Scholar] [CrossRef]

- Liu, W.; Zhang, G.; Zhan, B.; Hu, L.; Liu, T. Fine Texture Detection Based on a Solid–Liquid Composite Flexible Tactile Sensor Array. Micromachines 2022, 13, 440. [Google Scholar] [CrossRef]

- Choi, D.; Jang, S.; Kim, J.S.; Kim, H.J.; Kim, D.H.; Kwon, J.Y. A highly sensitive tactile sensor using a pyramid-plug structure for detecting pressure, shear force, and torsion. Adv. Mater. Technol. 2019, 4, 1800284. [Google Scholar] [CrossRef]

- Weng, L.; Xie, G.; Zhang, B.; Huang, W.; Wang, B.; Deng, Z. Magnetostrictive tactile sensor array for force and stiffness detection. J. Magn. Magn. Mater. 2020, 513, 167068. [Google Scholar] [CrossRef]

- Zhang, Y.; Ju, F.; Wei, X.; Wang, D.; Wang, Y. A piezoelectric tactile sensor for tissue stiffness detection with arbitrary contact angle. Sensors 2020, 20, 6607. [Google Scholar] [CrossRef]

- Christopher, C.T.; Fath Elbab, A.M.; Osueke, C.O.; Ikua, B.W.; Sila, D.N.; Fouly, A. A piezoresistive dual-tip stiffness tactile sensor for mango ripeness assessment. Cogent Eng. 2022, 9, 2030098. [Google Scholar] [CrossRef]

- Li, Y.; Cao, Z.; Li, T.; Sun, F.; Bai, Y.; Lu, Q.; Wang, S.; Yang, X.; Hao, M.; Lan, N.; et al. Highly selective biomimetic flexible tactile sensor for neuroprosthetics. Research 2020, 2020. [Google Scholar] [CrossRef]

- Li, Y.; Zhao, M.; Yan, Y.; He, L.; Wang, Y.; Xiong, Z.; Wang, S.; Bai, Y.; Sun, F.; Lu, Q.; et al. Multifunctional biomimetic tactile system via a stick-slip sensing strategy for human–machine interactions. npj Flex. Electron. 2022, 6, 46. [Google Scholar] [CrossRef]

- Wi, Y.; Florence, P.; Zeng, A.; Fazeli, N. Virdo: Visio-tactile implicit representations of deformable objects. In Proceedings of the 2022 International Conference on Robotics and Automation (ICRA), Philadelphia, PA, USA, 23–27 May 2022; pp. 3583–3590. [Google Scholar]

- Huang, H.J.; Guo, X.; Yuan, W. Understanding dynamic tactile sensing for liquid property estimation. arXiv 2022, arXiv:2205.08771. [Google Scholar]

- Zhao, D.; Sun, F.; Wang, Z.; Zhou, Q. A novel accurate positioning method for object pose estimation in robotic manipulation based on vision and tactile sensors. Int. J. Adv. Manuf. Technol. 2021, 116, 2999–3010. [Google Scholar] [CrossRef]

- Sui, R.; Zhang, L.; Li, T.; Jiang, Y. Incipient slip detection method with vision-based tactile sensor based on distribution force and deformation. IEEE Sens. J. 2021, 21, 25973–25985. [Google Scholar] [CrossRef]

- Gomes, D.F.; Lin, Z.; Luo, S. GelTip: A finger-shaped optical tactile sensor for robotic manipulation. In Proceedings of the 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Las Vegas, NV, USA, 24 October 2020–24 January 2021; pp. 9903–9909. [Google Scholar]

- von Drigalski, F.; Hayashi, K.; Huang, Y.; Yonetani, R.; Hamaya, M.; Tanaka, K.; Ijiri, Y. Precise multi-modal in-hand pose estimation using low-precision sensors for robotic assembly. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 968–974. [Google Scholar]

- Patel, R.; Ouyang, R.; Romero, B.; Adelson, E. Digger finger: Gelsight tactile sensor for object identification inside granular media. In Proceedings of the Experimental Robotics: The 17th International Symposium; Springer: Berlin/Heidelberg, Germany, 2021; pp. 105–115. [Google Scholar]

- Abderrahmane, Z.; Ganesh, G.; Crosnier, A.; Cherubini, A. A deep learning framework for tactile recognition of known as well as novel objects. IEEE Trans. Ind. Inf. 2019, 16, 423–432. [Google Scholar] [CrossRef]

- Schmitz, A.; Maiolino, P.; Maggiali, M.; Natale, L.; Cannata, G.; Metta, G. Methods and technologies for the implementation of large-scale robot tactile sensors. IEEE Trans. Robot. 2011, 27, 389–400. [Google Scholar] [CrossRef]

- Spiers, A.J.; Liarokapis, M.V.; Calli, B.; Dollar, A.M. Single-grasp object classification and feature extraction with simple robot hands and tactile sensors. IEEE Trans. Haptics 2016, 9, 207–220. [Google Scholar]

- Tenzer, Y.; Jentoft, L.P.; Howe, R.D. The feel of MEMS barometers: Inexpensive and easily customized tactile array sensors. IEEE Robot. Autom. Mag. 2014, 21, 89–95. [Google Scholar] [CrossRef]

- Chen, Y.; Lin, J.; Du, X.; Fang, B.; Sun, F.; Li, S. Non-destructive Fruit Firmness Evaluation Using Vision-Based Tactile Information. In Proceedings of the 2022 International Conference on Robotics and Automation (ICRA), Philadelphia, PA, USA, 23–27 May 2022; pp. 2303–2309. [Google Scholar]

- Wan, C.; Cai, P.; Guo, X.; Wang, M.; Matsuhisa, N.; Yang, L.; Lv, Z.; Luo, Y.; Loh, X.J.; Chen, X. An artificial sensory neuron with visual-haptic fusion. Nat. Commun. 2020, 11, 4602. [Google Scholar]

- Dang, H.; Allen, P.K. Stable grasping under pose uncertainty using tactile feedback. Auton. Robot. 2014, 36, 309–330. [Google Scholar]

- Papadimitriou, C.H. Computational complexity. In Encyclopedia of Computer Science; Wiley: Hoboken, NJ, USA, 2003; pp. 260–265. [Google Scholar]

- Kim, M.; Yang, J.; Kim, D.; Yun, D. Soft tactile sensor to detect the slip of a Robotic hand. Measurement 2022, 200, 111615. [Google Scholar] [CrossRef]

- Fu, X.; Zhang, J.; Xiao, J.; Kang, Y.; Yu, L.; Jiang, C.; Pan, Y.; Dong, H.; Gao, S.; Wang, Y. A high-resolution, ultrabroad-range and sensitive capacitive tactile sensor based on a CNT/PDMS composite for robotic hands. Nanoscale 2021, 13, 18780–18788. [Google Scholar] [PubMed]

- Cho, M.Y.; Lee, J.W.; Park, C.; Lee, B.D.; Kyeong, J.S.; Park, E.J.; Lee, K.Y.; Sohn, K.S. Large-Area Piezoresistive Tactile Sensor Developed by Training a Super-Simple Single-Layer Carbon Nanotube-Dispersed Polydimethylsiloxane Pad. Adv. Intell. Syst. 2022, 4, 2100123. [Google Scholar] [CrossRef]

- Lee, D.H.; Chuang, C.H.; Shaikh, M.O.; Dai, Y.S.; Wang, S.Y.; Wen, Z.H.; Yen, C.K.; Liao, C.F.; Pan, C.T. Flexible piezoresistive tactile sensor based on polymeric nanocomposites with grid-type microstructure. Micromachines 2021, 12, 452. [Google Scholar]

- Wang, S.; Lambeta, M.; Chou, P.W.; Calandra, R. Tacto: A fast, flexible, and open-source simulator for high-resolution vision-based tactile sensors. IEEE Robot. Autom. Lett. 2022, 7, 3930–3937. [Google Scholar] [CrossRef]

- Zhang, Y.; Kan, Z.; Yang, Y.; Tse, Y.A.; Wang, M.Y. Effective estimation of contact force and torque for vision-based tactile sensors with helmholtz–hodge decomposition. IEEE Robot. Autom. Lett. 2019, 4, 4094–4101. [Google Scholar] [CrossRef]

- Wang, A.; Kurutach, T.; Liu, K.; Abbeel, P.; Tamar, A. Learning robotic manipulation through visual planning and acting. arXiv 2019, arXiv:1905.04411. [Google Scholar]

- Nguyen, V.D. Constructing force-closure grasps. Int. J. Robot. Res. 1988, 7, 3–16. [Google Scholar]

- Han, L.; Li, Z.; Trinkle, J.C.; Qin, Z.; Jiang, S. The planning and control of robot dextrous manipulation. In Proceedings of the 2000 ICRA, Millennium Conference, IEEE International Conference on Robotics and Automation, Symposia Proceedings (Cat. No. 00CH37065), San Francisco, CA, USA, 24–28 April 2000; Volume 1, pp. 263–269. [Google Scholar]

- Liu, Y.; Jiang, D.; Tao, B.; Qi, J.; Jiang, G.; Yun, J.; Huang, L.; Tong, X.; Chen, B.; Li, G. Grasping posture of humanoid manipulator based on target shape analysis and force closure. Alex. Eng. J. 2022, 61, 3959–3969. [Google Scholar]

- He, L.; Lu, Q.; Abad, S.A.; Rojas, N.; Nanayakkara, T. Soft fingertips with tactile sensing and active deformation for robust grasping of delicate objects. IEEE Robot. Autom. Lett. 2020, 5, 2714–2721. [Google Scholar] [CrossRef]

- Wen, R.; Yuan, K.; Wang, Q.; Heng, S.; Li, Z. Force-guided high-precision grasping control of fragile and deformable objects using semg-based force prediction. IEEE Robot. Autom. Lett. 2020, 5, 2762–2769. [Google Scholar] [CrossRef]

- Yin, Z.H.; Huang, B.; Qin, Y.; Chen, Q.; Wang, X. Rotating without Seeing: Towards In-hand Dexterity through Touch. arXiv 2023, arXiv:2303.10880. [Google Scholar]

- Khamis, H.; Xia, B.; Redmond, S.J. Real-time friction estimation for grip force control. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; pp. 1608–1614. [Google Scholar]

- Zhang, Y.; Yuan, W.; Kan, Z.; Wang, M.Y. Towards learning to detect and predict contact events on vision-based tactile sensors. In Proceedings of the Conference on Robot Learning, PMLR, Cambridge, MA, USA, 16–18 November 2020; pp. 1395–1404. [Google Scholar]

- Prescott, T.J.; Diamond, M.E.; Wing, A.M. Active touch sensing. Philos. Trans. R. Soc. B Biol. Sci. 2011, 2989–2995. [Google Scholar] [CrossRef] [PubMed]

- Proske, U.; Gandevia, S.C. The kinaesthetic senses. J. Physiol. 2009, 587, 4139–4146. [Google Scholar] [CrossRef]

- Görner, M.; Haschke, R.; Ritter, H.; Zhang, J. Moveit! task constructor for task-level motion planning. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 190–196. [Google Scholar]

- Liu, S.; Liu, P. Benchmarking and optimization of robot motion planning with motion planning pipeline. Int. J. Adv. Manuf. Technol. 2022, 118, 949–961. [Google Scholar] [CrossRef]

- Ravichandar, H.; Polydoros, A.S.; Chernova, S.; Billard, A. Recent advances in robot learning from demonstration. Annu. Rev. Control Robot. Auton. Syst. 2020, 3, 297–330. [Google Scholar] [CrossRef]

- Sanni, O.; Bonvicini, G.; Khan, M.A.; López-Custodio, P.C.; Nazari, K.; Ghalamzan E., A.M. Deep movement primitives: Toward breast cancer examination robot. In Proceedings of the AAAI Conference on Artificial Intelligence, Online, 22 February–1 March 2022; Volume 36, pp. 12126–12134. [Google Scholar]

- Dabrowski, J.J.; Rahman, A. Fruit Picker Activity Recognition with Wearable Sensors and Machine Learning. arXiv 2023, arXiv:2304.10068. [Google Scholar]

- Ngiam, J.; Khosla, A.; Kim, M.; Nam, J.; Lee, H.; Ng, A.Y. Multimodal deep learning. In Proceedings of the 28th International Conference on Machine Learning (ICML-11), Bellevue, WA, USA, 28 June–2 July 2011; pp. 689–696. [Google Scholar]

- Joshi, G.; Walambe, R.; Kotecha, K. A review on explainability in multimodal deep neural nets. IEEE Access 2021, 9, 59800–59821. [Google Scholar] [CrossRef]

- Calandra, R.; Owens, A.; Jayaraman, D.; Lin, J.; Yuan, W.; Malik, J.; Adelson, E.H.; Levine, S. More than a feeling: Learning to grasp and regrasp using vision and touch. IEEE Robot. Autom. Lett. 2018, 3, 3300–3307. [Google Scholar] [CrossRef]

- Palermo, F.; Konstantinova, J.; Althoefer, K.; Poslad, S.; Farkhatdinov, I. Implementing tactile and proximity sensing for crack detection. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 632–637. [Google Scholar]

- Liang, J.; Wu, J.; Huang, H.; Xu, W.; Li, B.; Xi, F. Soft sensitive skin for safety control of a nursing robot using proximity and tactile sensors. IEEE Sens. J. 2019, 20, 3822–3830. [Google Scholar] [CrossRef]

- Wang, H.; De Boer, G.; Kow, J.; Alazmani, A.; Ghajari, M.; Hewson, R.; Culmer, P. Design methodology for magnetic field-based soft tri-axis tactile sensors. Sensors 2016, 16, 1356. [Google Scholar] [CrossRef] [PubMed]

- Khamis, H.; Xia, B.; Redmond, S.J. A novel optical 3D force and displacement sensor–Towards instrumenting the PapillArray tactile sensor. Sens. Actuators A Phys. 2019, 291, 174–187. [Google Scholar] [CrossRef]

- Mukashev, D.; Zhuzbay, N.; Koshkinbayeva, A.; Orazbayev, B.; Kappassov, Z. PhotoElasticFinger: Robot Tactile Fingertip Based on Photoelastic Effect. Sensors 2022, 22, 6807. [Google Scholar] [CrossRef]

- Costanzo, M.; De Maria, G.; Natale, C.; Pirozzi, S. Design and calibration of a force/tactile sensor for dexterous manipulation. Sensors 2019, 19, 966. [Google Scholar] [CrossRef] [PubMed]

- Zapata-Impata, B.S.; Gil, P.; Torres, F. Learning spatio temporal tactile features with a ConvLSTM for the direction of slip detection. Sensors 2019, 19, 523. [Google Scholar] [CrossRef]

- Bimbo, J.; Luo, S.; Althoefer, K.; Liu, H. In-hand object pose estimation using covariance-based tactile to geometry matching. IEEE Robot. Autom. Lett. 2016, 1, 570–577. [Google Scholar] [CrossRef]

- Lancaster, P.; Yang, B.; Smith, J.R. Improved object pose estimation via deep pre-touch sensing. In Proceedings of the 2017 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Vancouver, BC, Canada, 24–28 September 2017; pp. 2448–2455. [Google Scholar]

- Villalonga, M.B.; Rodriguez, A.; Lim, B.; Valls, E.; Sechopoulos, T. Tactile object pose estimation from the first touch with geometric contact rendering. In Proceedings of the Conference on Robot Learning, PMLR, London, UK, 8–11 November 2021; pp. 1015–1029. [Google Scholar]

- Li, T.; Sun, X.; Shu, X.; Wang, C.; Wang, Y.; Chen, G.; Xue, N. Robot grasping system and grasp stability prediction based on flexible tactile sensor array. Machines 2021, 9, 119. [Google Scholar] [CrossRef]

- Funabashi, S.; Morikuni, S.; Geier, A.; Schmitz, A.; Ogasa, S.; Torno, T.P.; Somlor, S.; Sugano, S. Object recognition through active sensing using a multi-fingered robot hand with 3d tactile sensors. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 2589–2595. [Google Scholar]

- Wang, L.; Ma, L.; Yang, J.; Wu, J. Human somatosensory processing and artificial somatosensation. Cyborg Bionic Syst. 2021, 2021, 9843259. [Google Scholar] [CrossRef]

- Langdon, A.J.; Boonstra, T.W.; Breakspear, M. Multi-frequency phase locking in human somatosensory cortex. Prog. Biophys. Mol. Biol. 2011, 105, 58–66. [Google Scholar] [CrossRef]

- Härtner, J.; Strauss, S.; Pfannmöller, J.; Lotze, M. Tactile acuity of fingertips and hand representation size in human Area 3b and Area 1 of the primary somatosensory cortex. NeuroImage 2021, 232, 117912. [Google Scholar] [CrossRef] [PubMed]

- Abdeetedal, M.; Kermani, M.R. Grasp and stress analysis of an underactuated finger for proprioceptive tactile sensing. IEEE/ASME Trans. Mechatron. 2018, 23, 1619–1629. [Google Scholar] [CrossRef]

- Ntagios, M.; Nassar, H.; Pullanchiyodan, A.; Navaraj, W.T.; Dahiya, R. Robotic hands with intrinsic tactile sensing via 3D printed soft pressure sensors. Adv. Intell. Syst. 2020, 2, 1900080. [Google Scholar] [CrossRef]

- Luo, S.; Zhou, X.; Tang, X.; Li, J.; Wei, D.; Tai, G.; Chen, Z.; Liao, T.; Fu, J.; Wei, D.; et al. Microconformal electrode-dielectric integration for flexible ultrasensitive robotic tactile sensing. Nano Energy 2021, 80, 105580. [Google Scholar] [CrossRef]

- Shintake, J.; Cacucciolo, V.; Floreano, D.; Shea, H. Soft robotic grippers. Adv. Mater. 2018, 30, 1707035.

- Dahiya, R.; Akinwande, D.; Chang, J.S. Flexible electronic skin: From humanoids to humans [scanning the issue]. Proc. IEEE 2019, 107, 2011–2015.

- Zhang, Y.; Lin, Z.; Huang, X.; You, X.; Ye, J.; Wu, H. A Large-Area, Stretchable, Textile-Based Tactile Sensor. Adv. Mater. Technol. 2020, 5, 1901060.

| Robot Task Type | Tactile Sensor Type | Cited Research |
|---|---|---|
| Robot Control Tasks | Force Control | [45,86,122,123,124] |
| Robot Control Tasks | Robotic Grasping | [123,125,126,127,128,129,130,131,132,133] |
| Robot Control Tasks | Slip Detection | [123,134,135,136,137,138,139,140,141,142,143,144,145,146,147,148,149,150] |
| Robot Control Tasks | Object Pushing | [29,121,179] |
| Robot Control Tasks | Compliance Control | [163,164,165,166,167] |
| Robot Control Tasks | Haptic Feedback | [174,175,176,177,178] |
| Feature Extraction Tasks | Texture Recognition | [47,60,151,152,153,155,156,157,158,159,160,161,162] |
| Feature Extraction Tasks | Object Recognition | [43,45,86,120,156,160,168,169,170,171,172] |
| Feature Extraction Tasks | 3D Shape Reconstruction | [44,62,173] |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Mandil, W.; Rajendran, V.; Nazari, K.; Ghalamzan-Esfahani, A. Tactile-Sensing Technologies: Trends, Challenges and Outlook in Agri-Food Manipulation. Sensors 2023, 23, 7362. https://doi.org/10.3390/s23177362
