Review on Human Action Recognition in Smart Living: Sensing Technology, Multimodality, Real-Time Processing, Interoperability, and Resource-Constrained Processing

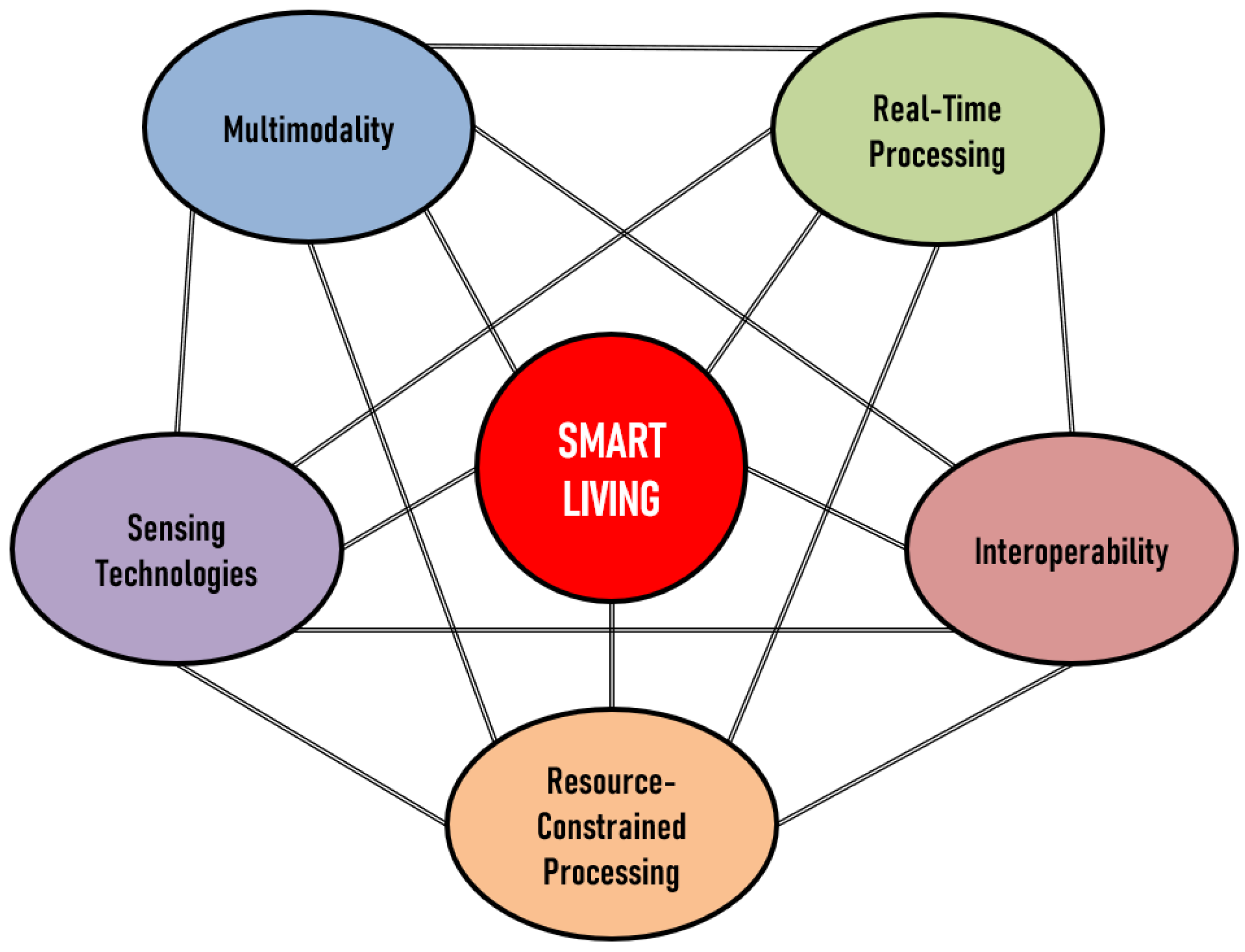

Abstract

1. Introduction

1.1. General Background on HAR

1.2. HAR in Smart Living Focusing on Sensing Technology, Multimodality, Real-Time Processing, Interoperability, and Resource-Constrained Processing

2. Review of Related Works and Rationale for This Comprehensive Study

3. Common Publicly Available Datasets

4. Performance Metrics

- TP: True Positives—the number of positive cases correctly identified by a classifier;

- TN: True Negatives—the number of negative cases correctly identified as negative by a classifier;

- FP: False Positives—the number of negative cases incorrectly identified as positive by a classifier;

- FN: False Negatives—the number of positive cases incorrectly identified as negative by a classifier.
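The four counts above are the building blocks of the usual classification metrics. The sketch below uses the standard textbook definitions of accuracy, precision, recall, and F1-score; the names `ConfusionCounts` and `_safe_div`, the zero-denominator convention, and the example counts are illustrative assumptions and are not taken from the reviewed works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Binary confusion-matrix counts (hypothetical container for illustration)."""
    tp: int  # positive cases correctly identified
    tn: int  # negative cases correctly identified
    fp: int  # negative cases incorrectly identified as positive
    fn: int  # positive cases incorrectly identified as negative


def _safe_div(num: float, den: float) -> float:
    # Assumption: return 0.0 when the denominator is zero.
    return num / den if den else 0.0


def accuracy(c: ConfusionCounts) -> float:
    # Fraction of all cases that were classified correctly.
    return _safe_div(c.tp + c.tn, c.tp + c.tn + c.fp + c.fn)


def precision(c: ConfusionCounts) -> float:
    # Fraction of predicted positives that are truly positive.
    return _safe_div(c.tp, c.tp + c.fp)


def recall(c: ConfusionCounts) -> float:
    # Fraction of actual positives that were detected.
    return _safe_div(c.tp, c.tp + c.fn)


def f1_score(c: ConfusionCounts) -> float:
    # Harmonic mean of precision and recall.
    p, r = precision(c), recall(c)
    return _safe_div(2 * p * r, p + r)


# Made-up counts for a single activity class, for illustration only.
counts = ConfusionCounts(tp=40, tn=45, fp=5, fn=10)
print(accuracy(counts), precision(counts), recall(counts), f1_score(counts))
```

For multi-class HAR problems, these quantities are typically computed per activity class (one class against the rest) and then averaged; the averaging scheme is a design choice that should be reported alongside the scores.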

5. Recent State of the Art on HAR in Smart Living

5.1. Sensing Technologies

- Inertial sensors (accelerometers and gyroscopes) that measure acceleration and angular velocity to detect motion and orientation.

- Pressure sensors that measure force per unit area, enabling the detection of physical interactions, such as touch or contact between objects.

- Acoustic sensors that capture sound waves to identify events such as footsteps, speech, or glass breaking.

- Vibration sensors that detect oscillations in structures or objects, which can indicate various activities or events.

- Ultrasound sensors that use high-frequency sound waves to measure distance or detect movement, often employed in obstacle detection and proximity sensing.

- Contact switch sensors that detect whether a physical connection, such as a door or window, is open or closed.

- Magnetic sensors that detect changes in magnetic fields, often used for tracking the movement or orientation of objects.

- Visible spectrum sensors, such as cameras, that capture images and videos to recognize activities, gestures, and facial expressions.

- Infrared or near-infrared sensors, including cameras, Passive Infrared (PIR) sensors, and IR arrays, that detect heat signatures, enabling motion detection and human presence recognition.

- RF systems, such as WiFi and radar, that use wireless signals to sense movement, location, and even breathing patterns.

- Visible spectrum sensors (e.g., cameras) that capture images in the range of wavelengths perceivable by the human eye.

- Infrared sensors that detect thermal radiation emitted by objects, helping to identify human presence and motion.

- Radio-frequency sensors that employ wireless signals to track movement, proximity, and location.

- Mechanical wave/vibration sensors, including audio, sonar, and inertial sensors, which capture sound waves, underwater echoes, or physical oscillations, respectively.

- Body-worn sensors, predominantly inertial sensors, that are attached to the human body to monitor activities [73], gestures [74], and postures [75], but also physiological sensors measuring neurovegetative parameters, such as heart rate, respiration rate, and blood pressure [76]. Wearable sensors can also be employed for comprehensive health monitoring, enabling continuous tracking of vital signs [77].

5.2. Multimodality

5.3. Real-Time Processing

5.4. Interoperability

5.4.1. Device and Protocol Interoperability

5.4.2. Data Format Interoperability

5.4.3. Semantic Interoperability

5.4.4. Interoperability in Machine Learning Algorithms

5.5. Resource-Constrained Processing

6. Critical Discussion

6.1. Sensing Technologies

6.2. Multimodality

6.3. Real-Time Processing

6.4. Interoperability

6.5. Resource-Constrained Processing

6.6. A Short Note on Sensing Modalities

6.7. A Short Note on Wearable Solutions

7. The Authors’ Perspective

8. Conclusions

- Holistic Approach to Sensing Technologies: Smart living solutions need a comprehensive view considering various sensing technologies, their strengths, limitations, and potential synergies in a HAR system.

- Trade-offs between Multiple Factors: Sensing technologies require balancing accuracy, computational resources, power use, and user acceptability. Tailored solutions often outperform a one-size-fits-all approach in HAR systems.

- Perceived Intrusiveness and Privacy Concerns: HAR’s success depends on user acceptance, which requires minimizing invasiveness and addressing privacy issues. RF sensing is less intrusive but raises concerns of its own, such as EMI.

- Sensor Fusion: Techniques to combine data from multiple sensors can improve HAR system accuracy and robustness, leveraging the strengths of different sensing technologies to overcome individual limitations.

- Societal Implications: Beyond technical aspects, sensing technology adoption has broader societal implications. Interdisciplinary collaborations and open dialogues are needed to address ethical, legal, and social consequences, emphasizing the need for ethical guidelines and regulations.

- Resource Intensity: Handling multiple sensing modalities in HAR systems is resource-intensive, and while there are proposed solutions, their resource demands may limit applicability.

- Privacy Concerns: Collecting large amounts of user data for multimodal sensing raises privacy issues that require careful handling.

- Data Heterogeneity: The diverse data from various sensors can lead to missing or unreliable measurements. Proposed solutions, such as data augmentation and sensor-independent fusion techniques, need further refinement.

- Individualized Environments and User Behaviors: Developing HAR systems often requires extensive knowledge of the environment and behaviors. Though feasible in laboratory conditions, this is impractical in real settings. State-of-the-art advancements generally prioritize recognition performance, overlooking the initial setup. Thus, approaches identifying activity sequences from motion patterns and incorporating higher-level functionality via bootstrapping could be beneficial.

- Choice of Sensing Modality: The selection of sensing technologies greatly impacts the system’s real-time processing ability. Each modality, such as depth cameras or wearable sensors, has its pros and cons, and their effectiveness varies based on the environment, type of activities, and data quality requirements.

- Optimization of Computational Models: Lightweight computational models and optimization techniques, such as model quantization and pruning, are essential for real-time processing. However, the trade-off between performance and the ability to learn complex representations needs careful consideration.

- Data Preprocessing and Feature Extraction: Techniques such as sliding window-based feature representation and skeleton extraction can improve real-time processing efficiency and accuracy, but their limitations and suitability should be carefully evaluated.

- Privacy and Security: User privacy and security should not be compromised in the quest for real-time processing. The use of non-intrusive sensors and privacy-preserving techniques can help maintain user trust.

- Adaptability and Scalability: HAR systems should be adaptable and scalable to incorporate new sensing modalities, computational models, and optimization techniques as technology evolves.

- Standardization: Standardization is critical for seamless integration and communication among diverse devices and systems. Stakeholders must collaborate to develop open standards that promote interoperability.

- Ontologies and Semantic Technologies: Ontologies and semantic technologies enhance interoperability by providing a formal representation of knowledge and facilitating meaningful data sharing across devices and systems.

- Continuous Updates and Adaptation: As technologies evolve, systems need ongoing updates and adaptations to maintain accuracy and effectiveness and to ensure interoperability. This requires investment in resources and effort.

- Integration of Data Sources: Interoperability enables seamless data exchange between different sensors, improving system accuracy and efficiency. This is particularly beneficial in healthcare and other domains where monitoring and recognition of activities are crucial.

- Innovation and Collaboration: Interoperability fosters innovative solutions that address complex challenges and can be integrated into diverse environments to cater to varying user needs.

- Computational Efficiency: The balance between computational efficiency and accuracy is crucial, requiring lightweight algorithms and architectures. Yet, maintaining accuracy while reducing load remains a challenge.

- Data Management: Handling large amounts of data requires effective techniques such as data pruning and compression. It is crucial to balance data reduction against data integrity while also implementing efficient communication protocols for dependable data transmission.

- Energy Efficiency: Energy-efficient algorithms and dynamic power management are key, especially for portable devices. Exploring energy-harvesting technologies and alternative energy sources is an important future direction.

- Holistic Approach: Addressing resource constraints requires interdisciplinary collaboration and a culture of research prioritizing resource-constrained processing. Ongoing innovation is necessary for developing and refining effective and sustainable technology solutions.
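The sliding window-based feature representation mentioned under Data Preprocessing and Feature Extraction can be illustrated with a minimal sketch. It segments a one-dimensional sensor stream into overlapping windows and computes a few simple statistics per window. The function names, the window size and step, the chosen statistics, and the sample signal are all illustrative assumptions rather than the configuration of any reviewed work.

```python
from statistics import fmean, pstdev
from typing import Iterator, List, Sequence


def sliding_windows(samples: Sequence[float], size: int, step: int) -> Iterator[Sequence[float]]:
    """Yield fixed-length windows; a trailing partial window is dropped."""
    if size <= 0 or step <= 0:
        raise ValueError("size and step must be positive")
    for start in range(0, len(samples) - size + 1, step):
        yield samples[start:start + size]


def window_features(window: Sequence[float]) -> List[float]:
    """Compute mean, population standard deviation, minimum, and maximum."""
    return [fmean(window), pstdev(window), min(window), max(window)]


def extract_features(samples: Sequence[float], size: int, step: int) -> List[List[float]]:
    """Return one feature vector per window."""
    return [window_features(w) for w in sliding_windows(samples, size, step)]


# Made-up single-axis accelerometer readings, for illustration only.
signal = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0]

# Windows of 4 samples with 50% overlap (step of 2 samples).
features = extract_features(signal, size=4, step=2)
for vector in features:
    print(vector)
```

A smaller step gives more frequent predictions at a higher computational cost, while a larger window captures longer activities but adds latency, which is the kind of trade-off that matters for real-time and resource-constrained processing.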

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AAL | Ambient Assisted Living |
| ADL | Activity of Daily Living |
| BiGRU | Bi-directional Gated Recurrent Unit |
| BSO | Bee Swarm Optimization |
| CNN | Convolutional Neural Network |
| CPD | Change Point Detection |
| CPI | Change Point Index |
| CSI | Channel State Information |
| DE | Differential Evolution |
| DL | Deep Learning |
| DT | Decision Tree |
| EAR | Exercise Activity Recognition |
| EDL | Enhanced Deep Learning |
| EMI | Electromagnetic Interference |
| ERT | Extremely Randomized Trees |
| FFNN | Feed-Forward Neural Network |
| GRU | Gated Recurrent Unit |
| GSA | Gravitational Search Algorithm |
| GTB | Gradient Tree Boosting |
| HAN | Home Area Network |
| HAR | Human Action Recognition |
| HMM | Hidden Markov Model |
| IBCN | Improved Bayesian Convolution Network |
| ILM | Intrusive Load Monitoring |
| IMU | Inertial Measurement Unit |
| ICT | Information and Communication Technology |
| IoT | Internet of Things |
| KNN | K-Nearest Neighbors |
| LR | Logistic Regression |
| LSTM | Long Short-Term Memory |
| LSVM | Linear Support Vector Machine |
| MDS | Time-Variant Mean Doppler Shift |
| ML | Machine Learning |
| MLP | Multilayer Perceptron |
| MPA | Marine Predator Algorithm |
| NIC | Network Interface Card |
| PIR | Passive Infrared |
| PSO | Particle Swarm Optimization |
| RCN | Residual Convolutional Network |
| RF | Radio Frequency |
| RFC | Random Forest Classifier |
| RGB | Red-Green-Blue |
| RiSH | Robot-integrated Smart Home |
| RNN | Recurrent Neural Network |
| SDE | Sensor Distance Error |
| SDR | Software Defined Radio |
| sEMG | Surface Electromyography |
| SFFS | Sequential Floating Forward Search |
| SKT | Skin Temperature |
| SSA | Salp Swarm Algorithm |
| STFT | Short-Time Fourier Transform |
| SVM | Support Vector Machine |
| TCN | Temporal Convolutional Network |
| TENG | Triboelectric Nanogenerator |
| TTN | Two-stream Transformer Network |
| UWB | Ultra-Wideband |
| W-IoT | Wearable Internet of Things |

References

- Shami, M.R.; Rad, V.B.; Moinifar, M. The structural model of indicators for evaluating the quality of urban smart living. Technol. Forecast. Soc. Chang. 2022, 176, 121427.

- Liu, X.; Lam, K.; Zhu, K.; Zheng, C.; Li, X.; Du, Y.; Liu, C.; Pong, P.W. Overview of spintronic sensors with internet of things for smart living. IEEE Trans. Magn. 2019, 55, 1–22.

- Yasirandi, R.; Lander, A.; Sakinah, H.R.; Insan, I.M. IoT products adoption for smart living in Indonesia: Technology challenges and prospects. In Proceedings of the 2020 8th International Conference on Information and Communication Technology (ICoICT), Yogyakarta, Indonesia, 24–26 June 2020; pp. 1–6.

- Caragliu, A.; Del Bo, C. Smartness and European urban performance: Assessing the local impacts of smart urban attributes. Innov. Eur. J. Soc. Sci. Res. 2012, 25, 97–113.

- Dameri, R.P.; Ricciardi, F. Leveraging smart city projects for benefitting citizens: The role of ICTs. In Smart City Networks: Through the Internet of Things; Springer: Cham, Switzerland, 2017; pp. 111–128.

- Giffinger, R.; Haindlmaier, G.; Kramar, H. The role of rankings in growing city competition. Urban Res. Pract. 2010, 3, 299–312.

- Khan, M.S.A.; Miah, M.A.R.; Rahman, S.R.; Iqbal, M.M.; Iqbal, A.; Aravind, C.; Huat, C.K. Technical Analysis of Security Management in Terms of Crowd Energy and Smart Living. J. Electron. Sci. Technol. 2018, 16, 367–378.

- Han, M.J.N.; Kim, M.J. A critical review of the smart city in relation to citizen adoption towards sustainable smart living. Habitat Int. 2021, 108, 102312.

- Chourabi, H.; Nam, T.; Walker, S.; Gil-Garcia, J.R.; Mellouli, S.; Nahon, K.; Pardo, T.A.; Scholl, H.J. Understanding smart cities: An integrative framework. In Proceedings of the 2012 45th Hawaii International Conference on System Sciences, Maui, HI, USA, 4–7 January 2012; pp. 2289–2297.

- Zin, T.T.; Htet, Y.; Akagi, Y.; Tamura, H.; Kondo, K.; Araki, S.; Chosa, E. Real-time action recognition system for elderly people using stereo depth camera. Sensors 2021, 21, 5895.

- Rathod, V.; Katragadda, R.; Ghanekar, S.; Raj, S.; Kollipara, P.; Anitha Rani, I.; Vadivel, A. Smart surveillance and real-time human action recognition using OpenPose. In Proceedings of the ICDSMLA 2019: Proceedings of the 1st International Conference on Data Science, Machine Learning and Applications, Hyderabad, India, 29–30 March 2019; pp. 504–509.

- Lu, M.; Hu, Y.; Lu, X. Driver action recognition using deformable and dilated faster R-CNN with optimized region proposals. Appl. Intell. 2020, 50, 1100–1111.

- Sowmyayani, S.; Rani, P.A.J. STHARNet: Spatio-temporal human action recognition network in content based video retrieval. Multimed. Tools Appl. 2022, 1–16.

- Rodomagoulakis, I.; Kardaris, N.; Pitsikalis, V.; Mavroudi, E.; Katsamanis, A.; Tsiami, A.; Maragos, P. Multimodal human action recognition in assistive human-robot interaction. In Proceedings of the 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Shanghai, China, 20–25 March 2016; pp. 2702–2706.

- Jain, V.; Gupta, G.; Gupta, M.; Sharma, D.K.; Ghosh, U. Ambient intelligence-based multimodal human action recognition for autonomous systems. ISA Trans. 2023, 132, 94–108.

- Shotton, J.; Fitzgibbon, A.; Cook, M.; Sharp, T.; Finocchio, M.; Moore, R.; Kipman, A.; Blake, A. Real-time human pose recognition in parts from single depth images. In Proceedings of the CVPR 2011, Colorado Springs, CO, USA, 20–25 June 2011; pp. 1297–1304.

- Keestra, M. Understanding human action. Integrating meanings, mechanisms, causes, and contexts. In Transdisciplinarity in Philosophy and Science: Approaches, Problems, Prospects = Transdistsiplinarnost v Filosofii i Nauke: Podkhody, Problemy, Perspektivy; Navigator: Moscow, Russia, 2015; pp. 201–235.

- Ricoeur, P. Oneself as Another; University of Chicago Press: Chicago, IL, USA, 1992.

- Blake, R.; Shiffrar, M. Perception of human motion. Annu. Rev. Psychol. 2007, 58, 47–73.

- Li, C.; Hua, T. Human action recognition based on template matching. Procedia Eng. 2011, 15, 2824–2830.

- Thi, T.H.; Zhang, J.; Cheng, L.; Wang, L.; Satoh, S. Human action recognition and localization in video using structured learning of local space-time features. In Proceedings of the 2010 7th IEEE International Conference on Advanced Video and Signal Based Surveillance, Boston, MA, USA, 29 August–1 September 2010; pp. 204–211.

- Weinland, D.; Ronfard, R.; Boyer, E. A survey of vision-based methods for action representation, segmentation and recognition. Comput. Vis. Image Underst. 2011, 115, 224–241.

- Gu, F.; Chung, M.H.; Chignell, M.; Valaee, S.; Zhou, B.; Liu, X. A survey on deep learning for human activity recognition. ACM Comput. Surv. (CSUR) 2021, 54, 1–34.

- Bocus, M.J.; Li, W.; Vishwakarma, S.; Kou, R.; Tang, C.; Woodbridge, K.; Craddock, I.; McConville, R.; Santos-Rodriguez, R.; Chetty, K.; et al. OPERAnet, a multimodal activity recognition dataset acquired from radio frequency and vision-based sensors. Sci. Data 2022, 9, 474.

- Vidya, B.; Sasikumar, P. Wearable multi-sensor data fusion approach for human activity recognition using machine learning algorithms. Sens. Actuators A Phys. 2022, 341, 113557.

- Ma, S.; Sigal, L.; Sclaroff, S. Learning activity progression in lstms for activity detection and early detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 1942–1950.

- Kong, Y.; Kit, D.; Fu, Y. A discriminative model with multiple temporal scales for action prediction. In Proceedings of the Computer Vision–ECCV 2014: 13th European Conference, Zurich, Switzerland, 6–12 September 2014; pp. 596–611.

- Chen, S.; Yao, H.; Qiao, F.; Ma, Y.; Wu, Y.; Lu, J. Vehicles driving behavior recognition based on transfer learning. Expert Syst. Appl. 2023, 213, 119254.

- Dai, Y.; Yan, Z.; Cheng, J.; Duan, X.; Wang, G. Analysis of multimodal data fusion from an information theory perspective. Inf. Sci. 2023, 623, 164–183.

- Xian, Y.; Hu, H. Enhanced multi-dataset transfer learning method for unsupervised person re-identification using co-training strategy. IET Comput. Vis. 2018, 12, 1219–1227.

- Saleem, G.; Bajwa, U.I.; Raza, R.H. Toward human activity recognition: A survey. Neural Comput. Appl. 2023, 35, 4145–4182.

- Arshad, M.H.; Bilal, M.; Gani, A. Human Activity Recognition: Review, Taxonomy and Open Challenges. Sensors 2022, 22, 6463.

- Kulsoom, F.; Narejo, S.; Mehmood, Z.; Chaudhry, H.N.; Butt, A.; Bashir, A.K. A review of machine learning-based human activity recognition for diverse applications. Neural Comput. Appl. 2022, 34, 18289–18324.

- Sun, Z.; Ke, Q.; Rahmani, H.; Bennamoun, M.; Wang, G.; Liu, J. Human action recognition from various data modalities: A review. IEEE Trans. Pattern Anal. Mach. Intell. 2022, 45, 3200–3225.

- Najeh, H.; Lohr, C.; Leduc, B. Towards supervised real-time human activity recognition on embedded equipment. In Proceedings of the 2022 IEEE International Workshop on Metrology for Living Environment (MetroLivEn), Cosenza, Italy, 25–27 May 2022; pp. 54–59.

- Bian, S.; Liu, M.; Zhou, B.; Lukowicz, P. The state-of-the-art sensing techniques in human activity recognition: A survey. Sensors 2022, 22, 4596.

- Gupta, N.; Gupta, S.K.; Pathak, R.K.; Jain, V.; Rashidi, P.; Suri, J.S. Human activity recognition in artificial intelligence framework: A narrative review. Artif. Intell. Rev. 2022, 55, 4755–4808.

- Ige, A.O.; Noor, M.H.M. A survey on unsupervised learning for wearable sensor-based activity recognition. Appl. Soft Comput. 2022, 127, 109363.

- Ma, N.; Wu, Z.; Cheung, Y.; Guo, Y.; Gao, Y.; Li, J.; Jiang, B. A Survey of Human Action Recognition and Posture Prediction. Tsinghua Sci. Technol. 2022, 27, 973–1001.

- Singh, P.K.; Kundu, S.; Adhikary, T.; Sarkar, R.; Bhattacharjee, D. Progress of human action recognition research in the last ten years: A comprehensive survey. Arch. Comput. Methods Eng. 2022, 29, 2309–2349.

- Kong, Y.; Fu, Y. Human action recognition and prediction: A survey. Int. J. Comput. Vis. 2022, 130, 1366–1401.

- Roggen, D.; Calatroni, A.; Rossi, M.; Holleczek, T.; Förster, K.; Tröster, G.; Lukowicz, P.; Bannach, D.; Pirkl, G.; Ferscha, A.; et al. Collecting complex activity datasets in highly rich networked sensor environments. In Proceedings of the 2010 Seventh International Conference on Networked Sensing Systems (INSS), Kassel, Germany, 15–18 June 2010; pp. 233–240.

- Reiss, A.; Stricker, D. Introducing a new benchmarked dataset for activity monitoring. In Proceedings of the 2012 16th International Symposium on Wearable Computers, Newcastle, UK, 18–22 June 2012; pp. 108–109.

- Micucci, D.; Mobilio, M.; Napoletano, P. Unimib shar: A dataset for human activity recognition using acceleration data from smartphones. Appl. Sci. 2017, 7, 1101.

- Cook, D.J. Learning setting-generalized activity models for smart spaces. IEEE Intell. Syst. 2012, 27, 32.

- Cook, D.J.; Crandall, A.S.; Thomas, B.L.; Krishnan, N.C. CASAS: A smart home in a box. Computer 2012, 46, 62–69.

- Cook, D.J.; Schmitter-Edgecombe, M. Assessing the quality of activities in a smart environment. Methods Inf. Med. 2009, 48, 480–485.

- Singla, G.; Cook, D.J.; Schmitter-Edgecombe, M. Recognizing independent and joint activities among multiple residents in smart environments. J. Ambient. Intell. Humaniz. Comput. 2010, 1, 57–63.

- Cook, D.J.; Crandall, A.; Singla, G.; Thomas, B. Detection of social interaction in smart spaces. Cybern. Syst. Int. J. 2010, 41, 90–104.

- Weiss, G.M.; Yoneda, K.; Hayajneh, T. Smartphone and smartwatch-based biometrics using activities of daily living. IEEE Access 2019, 7, 133190–133202.

- Vaizman, Y.; Ellis, K.; Lanckriet, G. Recognizing detailed human context in the wild from smartphones and smartwatches. IEEE Pervasive Comput. 2017, 16, 62–74.

- Zhang, M.; Sawchuk, A.A. USC-HAD: A daily activity dataset for ubiquitous activity recognition using wearable sensors. In Proceedings of the 2012 ACM Conference on Ubiquitous Computing, Pittsburgh, PA, USA, 5–8 September 2012; pp. 1036–1043.

- Stiefmeier, T.; Roggen, D.; Ogris, G.; Lukowicz, P.; Troster, G. Wearable activity tracking in car manufacturing. IEEE Pervasive Comput. 2008, 7, 42–50.

- Martínez-Villaseñor, L.; Ponce, H.; Brieva, J.; Moya-Albor, E.; Núñez-Martínez, J.; Peñafort-Asturiano, C. UP-fall detection dataset: A multimodal approach. Sensors 2019, 19, 1988.

- Kelly, J. UK Domestic Appliance-Level Electricity (UK-DALE) Dataset. 2017. Available online: https://jack-kelly.com/data/ (accessed on 6 February 2023).

- Arrotta, L.; Bettini, C.; Civitarese, G. The marble dataset: Multi-inhabitant activities of daily living combining wearable and environmental sensors data. In Proceedings of the Mobile and Ubiquitous Systems: Computing, Networking and Services: 18th EAI International Conference, MobiQuitous 2021, Virtual, 8–11 November 2021; pp. 451–468.

- Schuldt, C.; Laptev, I.; Caputo, B. Recognizing human actions: A local SVM approach. In Proceedings of the 17th International Conference on Pattern Recognition, Cambridge, UK, 26 August 2004; Volume 3, pp. 32–36.

- Blank, M.; Gorelick, L.; Shechtman, E.; Irani, M.; Basri, R. Actions as space-time shapes. In Proceedings of the Tenth IEEE International Conference on Computer Vision (ICCV’05), Beijing, China, 17–21 October 2005; Volume 1, pp. 1395–1402.

- Rodriguez, M.D.; Ahmed, J.; Shah, M. Action mach a spatio-temporal maximum average correlation height filter for action recognition. In Proceedings of the 2008 IEEE Conference on Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8.

- Sucerquia, A.; López, J.D.; Vargas-Bonilla, J.F. SisFall: A fall and movement dataset. Sensors 2017, 17, 198.

- Niemann, F.; Reining, C.; Moya Rueda, F.; Nair, N.R.; Steffens, J.A.; Fink, G.A.; Ten Hompel, M. Lara: Creating a dataset for human activity recognition in logistics using semantic attributes. Sensors 2020, 20, 4083.

- Anguita, D.; Ghio, A.; Oneto, L.; Parra, X.; Reyes-Ortiz, J.L. A public domain dataset for human activity recognition using smartphones. In Proceedings of the European Symposium on Artificial Neural Networks, Bruges, Belgium, 24–26 April 2013; pp. 437–442.

- Shoaib, M.; Bosch, S.; Incel, O.D.; Scholten, H.; Havinga, P.J. Complex human activity recognition using smartphone and wrist-worn motion sensors. Sensors 2016, 16, 426.

- Chen, C.; Jafari, R.; Kehtarnavaz, N. UTD-MHAD: A multimodal dataset for human action recognition utilizing a depth camera and a wearable inertial sensor. In Proceedings of the 2015 IEEE International Conference on Image Processing (ICIP), Quebec City, QC, Canada, 27–30 September 2015; pp. 168–172.

- Reyes-Ortiz, J.L.; Oneto, L.; Samà, A.; Parra, X.; Anguita, D. Transition-aware human activity recognition using smartphones. Neurocomputing 2016, 171, 754–767.

- Ramos, R.G.; Domingo, J.D.; Zalama, E.; Gómez-García-Bermejo, J.; López, J. SDHAR-HOME: A sensor dataset for human activity recognition at home. Sensors 2022, 22, 8109.

- Arrotta, L.; Bettini, C.; Civitarese, G. MICAR: Multi-inhabitant context-aware activity recognition in home environments. Distrib. Parallel Databases 2022, 1–32.

- Khater, S.; Hadhoud, M.; Fayek, M.B. A novel human activity recognition architecture: Using residual inception ConvLSTM layer. J. Eng. Appl. Sci. 2022, 69, 45.

- Mohtadifar, M.; Cheffena, M.; Pourafzal, A. Acoustic- and Radio-Frequency-Based Human Activity Recognition. Sensors 2022, 22, 3125.

- Delaine, F.; Faraut, G. Mathematical Criteria for a Priori Performance Estimation of Activities of Daily Living Recognition. Sensors 2022, 22, 2439.

- Arrotta, L.; Civitarese, G.; Bettini, C. Dexar: Deep explainable sensor-based activity recognition in smart-home environments. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2022, 6, 1–30.

- Stavropoulos, T.G.; Meditskos, G.; Andreadis, S.; Avgerinakis, K.; Adam, K.; Kompatsiaris, I. Semantic event fusion of computer vision and ambient sensor data for activity recognition to support dementia care. J. Ambient. Intell. Humaniz. Comput. 2020, 11, 3057–3072.

- Hanif, M.A.; Akram, T.; Shahzad, A.; Khan, M.A.; Tariq, U.; Choi, J.I.; Nam, Y.; Zulfiqar, Z. Smart devices based multisensory approach for complex human activity recognition. Comput. Mater. Contin. 2022, 70, 3221–3234.

- Roberge, A.; Bouchard, B.; Maître, J.; Gaboury, S. Hand Gestures Identification for Fine-Grained Human Activity Recognition in Smart Homes. Procedia Comput. Sci. 2022, 201, 32–39.

- Syed, A.S.; Sierra-Sosa, D.; Kumar, A.; Elmaghraby, A. A hierarchical approach to activity recognition and fall detection using wavelets and adaptive pooling. Sensors 2021, 21, 6653.

- Achirei, S.D.; Heghea, M.C.; Lupu, R.G.; Manta, V.I. Human Activity Recognition for Assisted Living Based on Scene Understanding. Appl. Sci. 2022, 12, 10743.

- Zhong, C.L.; Li, Y. Internet of things sensors assisted physical activity recognition and health monitoring of college students. Measurement 2020, 159, 107774.

- Wang, A.; Zhao, S.; Keh, H.C.; Chen, G.; Roy, D.S. Towards a Clustering Guided Hierarchical Framework for Sensor-Based Activity Recognition. Sensors 2021, 21, 6962.

- Fan, C.; Gao, F. Enhanced human activity recognition using wearable sensors via a hybrid feature selection method. Sensors 2021, 21, 6434.

- Şengül, G.; Ozcelik, E.; Misra, S.; Damaševičius, R.; Maskeliūnas, R. Fusion of smartphone sensor data for classification of daily user activities. Multimed. Tools Appl. 2021, 80, 33527–33546.

- Muaaz, M.; Chelli, A.; Abdelgawwad, A.A.; Mallofré, A.C.; Pätzold, M. WiWeHAR: Multimodal human activity recognition using Wi-Fi and wearable sensing modalities. IEEE Access 2020, 8, 164453–164470.

- Syed, A.S.; Syed, Z.S.; Shah, M.S.; Saddar, S. Using wearable sensors for human activity recognition in logistics: A comparison of different feature sets and machine learning algorithms. Int. J. Adv. Comput. Sci. Appl. 2020, 11, 644–649.

- Chen, J.; Huang, X.; Jiang, H.; Miao, X. Low-cost and device-free human activity recognition based on hierarchical learning model. Sensors 2021, 21, 2359.

- Gu, Z.; He, T.; Wang, Z.; Xu, Y. Device-Free Human Activity Recognition Based on Dual-Channel Transformer Using WiFi Signals. Wirel. Commun. Mob. Comput. 2022, 2022, 4598460.

- Wu, Y.H.; Chen, Y.; Shirmohammadi, S.; Hsu, C.H. AI-Assisted Food Intake Activity Recognition Using 3D mmWave Radars. In Proceedings of the 7th International Workshop on Multimedia Assisted Dietary Management, Lisboa, Portugal, 10 October 2022; pp. 81–89.

- Qiao, X.; Feng, Y.; Liu, S.; Shan, T.; Tao, R. Radar Point Clouds Processing for Human Activity Classification using Convolutional Multilinear Subspace Learning. IEEE Trans. Geosci. Remote Sens. 2022, 60, 1–17.

- Zhang, Z.; Meng, W.; Song, M.; Liu, Y.; Zhao, Y.; Feng, X.; Li, F. Application of multi-angle millimeter-wave radar detection in human motion behavior and micro-action recognition. Meas. Sci. Technol. 2022, 33, 105107.

- Li, J.; Wang, Z.; Zhao, Z.; Jin, Y.; Yin, J.; Huang, S.L.; Wang, J. TriboGait: A deep learning enabled triboelectric gait sensor system for human activity recognition and individual identification. In Proceedings of the Adjunct Proceedings of the 2021 ACM International Joint Conference on Pervasive and Ubiquitous Computing and Proceedings of the 2021 ACM International Symposium on Wearable Computers, Virtual, 21–26 September 2021; pp. 643–648.

- Abdel-Basset, M.; Hawash, H.; Chakrabortty, R.K.; Ryan, M.; Elhoseny, M.; Song, H. ST-DeepHAR: Deep learning model for human activity recognition in IoHT applications. IEEE Internet Things J. 2020, 8, 4969–4979.

- Majidzadeh Gorjani, O.; Proto, A.; Vanus, J.; Bilik, P. Indirect recognition of predefined human activities. Sensors 2020, 20, 4829.

- Xiao, S.; Wang, S.; Huang, Z.; Wang, Y.; Jiang, H. Two-stream transformer network for sensor-based human activity recognition. Neurocomputing 2022, 512, 253–268.

- Islam, M.M.; Nooruddin, S.; Karray, F. Multimodal Human Activity Recognition for Smart Healthcare Applications. In Proceedings of the 2022 IEEE International Conference on Systems, Man, and Cybernetics (SMC), Prague, Czech Republic, 9–12 October 2022; pp. 196–203. [Google Scholar]

- Alexiadis, A.; Nizamis, A.; Giakoumis, D.; Votis, K.; Tzovaras, D. A Sensor-Independent Multimodal Fusion Scheme for Human Activity Recognition. In Proceedings of the Pattern Recognition and Artificial Intelligence: Third International Conference, ICPRAI 2022, Paris, France, 1–3 June 2022; pp. 28–39.

- Dhekane, S.G.; Tiwari, S.; Sharma, M.; Banerjee, D.S. Enhanced annotation framework for activity recognition through change point detection. In Proceedings of the 2022 14th International Conference on COMmunication Systems & NETworkS (COMSNETS), Bangalore, India, 4–8 January 2022; pp. 397–405.

- Hiremath, S.K.; Nishimura, Y.; Chernova, S.; Plotz, T. Bootstrapping Human Activity Recognition Systems for Smart Homes from Scratch. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2022, 6, 1–27.

- Minarno, A.E.; Kusuma, W.A.; Wibowo, H.; Akbi, D.R.; Jawas, N. Single triaxial accelerometer-gyroscope classification for human activity recognition. In Proceedings of the 2020 8th International Conference on Information and Communication Technology (ICoICT), Yogyakarta, Indonesia, 24–26 June 2020; pp. 1–5.

- Maswadi, K.; Ghani, N.A.; Hamid, S.; Rasheed, M.B. Human activity classification using Decision Tree and Naive Bayes classifiers. Multimed. Tools Appl. 2021, 80, 21709–21726.

- Thakur, D.; Biswas, S. Online Change Point Detection in Application with Transition-Aware Activity Recognition. IEEE Trans. Hum.-Mach. Syst. 2022, 52, 1176–1185.

- Ji, X.; Zhao, Q.; Cheng, J.; Ma, C. Exploiting spatio-temporal representation for 3D human action recognition from depth map sequences. Knowl.-Based Syst. 2021, 227, 107040.

- Albeshri, A. SVSL: A human activity recognition method using soft-voting and self-learning. Algorithms 2021, 14, 245.

- Aubry, S.; Laraba, S.; Tilmanne, J.; Dutoit, T. Action recognition based on 2D skeletons extracted from RGB videos. In Proceedings of the MATEC Web of Conferences, Sibiu, Romania, 5–7 June 2019; Volume 277, p. 02034.

- Vu, D.Q.; Le, N.T.; Wang, J.C. (2+1) D Distilled ShuffleNet: A Lightweight Unsupervised Distillation Network for Human Action Recognition. In Proceedings of the 2022 26th International Conference on Pattern Recognition (ICPR), Montreal, QC, Canada, 21–25 August 2022; pp. 3197–3203.

- Hu, Y.; Wang, B.; Sun, Y.; An, J.; Wang, Z. Genetic algorithm–optimized support vector machine for real-time activity recognition in health smart home. Int. J. Distrib. Sens. Netw. 2020, 16, 1550147720971513.

- Chen, Y.; Ke, W.; Chan, K.H.; Xiong, Z. A Human Activity Recognition Approach Based on Skeleton Extraction and Image Reconstruction. In Proceedings of the 5th International Conference on Graphics and Signal Processing, Nagoya, Japan, 25–27 June 2021; pp. 1–8.

- Yan, S.; Lin, K.J.; Zheng, X.; Zhang, W. Using latent knowledge to improve real-time activity recognition for smart IoT. IEEE Trans. Knowl. Data Eng. 2019, 32, 574–587.

- Ramos, R.G.; Domingo, J.D.; Zalama, E.; Gómez-García-Bermejo, J. Daily human activity recognition using non-intrusive sensors. Sensors 2021, 21, 5270.

- Javed, A.R.; Sarwar, M.U.; Khan, S.; Iwendi, C.; Mittal, M.; Kumar, N. Analyzing the effectiveness and contribution of each axis of tri-axial accelerometer sensor for accurate activity recognition. Sensors 2020, 20, 2216.

- Zhang, Y.; Tian, G.; Zhang, S.; Li, C. A knowledge-based approach for multiagent collaboration in smart home: From activity recognition to guidance service. IEEE Trans. Instrum. Meas. 2019, 69, 317–329.

- Franco, P.; Martinez, J.M.; Kim, Y.C.; Ahmed, M.A. IoT based approach for load monitoring and activity recognition in smart homes. IEEE Access 2021, 9, 45325–45339.

- Noor, M.H.M.; Salcic, Z.; Wang, K.I.K. Ontology-based sensor fusion activity recognition. J. Ambient. Intell. Humaniz. Comput. 2020, 11, 3073–3087.

- Mekruksavanich, S.; Jitpattanakul, A. Exercise activity recognition with surface electromyography sensor using machine learning approach. In Proceedings of the 2020 Joint International Conference on Digital Arts, Media and Technology with ECTI Northern Section Conference on Electrical, Electronics, Computer and Telecommunications Engineering (ECTI DAMT & NCON), Pattaya, Thailand, 11–14 March 2020; pp. 75–78.

- Minarno, A.E.; Kusuma, W.A.; Wibowo, H. Performance comparisson activity recognition using logistic regression and support vector machine. In Proceedings of the 2020 3rd International Conference on Intelligent Autonomous Systems (ICoIAS), Singapore, 26–29 February 2020; pp. 19–24.

- Muaaz, M.; Chelli, A.; Gerdes, M.W.; Pätzold, M. Wi-Sense: A passive human activity recognition system using Wi-Fi and convolutional neural network and its integration in health information systems. Ann. Telecommun. 2022, 77, 163–175.

- Imran, H.A.; Latif, U. Hharnet: Taking inspiration from inception and dense networks for human activity recognition using inertial sensors. In Proceedings of the 2020 IEEE 17th International Conference on Smart Communities: Improving Quality of Life Using ICT, IoT and AI (HONET), Charlotte, NC, USA, 14–16 December 2020; pp. 24–27.

- Betancourt, C.; Chen, W.H.; Kuan, C.W. Self-attention networks for human activity recognition using wearable devices. In Proceedings of the 2020 IEEE International Conference on Systems, Man, and Cybernetics (SMC), Toronto, ON, Canada, 11–14 October 2020; pp. 1194–1199.

- Zhou, Z.; Yu, H.; Shi, H. Human activity recognition based on improved Bayesian convolution network to analyze health care data using wearable IoT device. IEEE Access 2020, 8, 86411–86418.

- Chang, J.; Kang, M.; Park, D. Low-power on-chip implementation of enhanced svm algorithm for sensors fusion-based activity classification in lightweighted edge devices. Electronics 2022, 11, 139.

- LeCun, Y.; Cortes, C.; Burges, C. MNIST Handwritten Digit Database. 2010. Available online: http://yann.lecun.com/exdb/mnist/ (accessed on 6 February 2023).

- Zhu, J.; Lou, X.; Ye, W. Lightweight deep learning model in mobile-edge computing for radar-based human activity recognition. IEEE Internet Things J. 2021, 8, 12350–12359.

- Helmi, A.M.; Al-qaness, M.A.; Dahou, A.; Abd Elaziz, M. Human activity recognition using marine predators algorithm with deep learning. Future Gener. Comput. Syst. 2023, 142, 340–350.

- Angerbauer, S.; Palmanshofer, A.; Selinger, S.; Kurz, M. Comparing human activity recognition models based on complexity and resource usage. Appl. Sci. 2021, 11, 8473.

- Ahmed, N.; Rafiq, J.I.; Islam, M.R. Enhanced human activity recognition based on smartphone sensor data using hybrid feature selection model. Sensors 2020, 20, 317.

| Reference | Name | Subjects No./Type of Environment | Sensors | Actions/Contexts |

|---|---|---|---|---|

| Roggen et al. [42] | Opportunity | 12/Lab | Body-worn, object-attached, ambient sensors (microphones, cameras, pressure sensors). | Getting up, grooming, relaxing, preparing/consuming coffee/sandwich, and cleaning up; Opening/closing doors, drawers, fridge, dishwasher, turning lights on/off, drinking. |

| Reiss and Stricker [43] | PAMAP2 | 9/Lab | IMUs, ECG. | Lie, sit, stand, walk, run, cycle, iron, vacuum clean, rope jump, ascend/descend stairs, watch TV, computer work, drive car, fold laundry, clean house, play soccer. |

| Micucci et al. [44] | UniMiB SHAR | 30/Lab | Acceleration sensor in a Samsung Galaxy Nexus I9250 smartphone. | ADLs (walking, going upstairs/downstairs, sitting down, running, standing up from sitting/laying, lying down from standing, and jumping) and eight types of falls. |

| Cook and Diane [45] | CASAS: Aruba | 1 adult, 2 occasional visitors/Real world | Environment sensors: motion, light, door, and temperature. | Movement from bed to bathroom, eating, getting/leaving home, housework, preparing food, relaxing, sleeping, washing dishes, working. |

| Cook et al. [46] | CASAS: Cairo | 2 adults, 1 dog/Real world | Environment sensors: motion and light sensors. | Bed (four different types), bed to toilet, breakfast, dinner, laundry, leave home, lunch, night wandering, work, medicine. |

| Cook and Schmitter-Edgecombe [47] | CASAS: Kyoto Daily life | 20/Real world | Environment sensors: motion, associated with objects, from the medicine box, a flowerpot, a diary, a closet, water, kitchen, and telephone use sensors. | Making a call, washing hands, cooking, eating, washing the dishes. |

| Singla et al. [48] | CASAS: Kyoto Multiresident | 2 (pairs taken from 40 participants)/Real world | Environment sensors: motion, item, cabinet, water, burner, phone and temperature. | Medication dispensing, clothes hanging, furniture moving, lounging, plant watering, kitchen sweeping, checkers playing, dinner prep, table setting, magazine reading, bill paying simulation, picnic prep, dishes retrieving, picnic packing. |

| Cook and Schmitter-Edgecombe [47] | CASAS: Milan | 1 woman, 1 dog, 1 occasional visitor/Real world | Environment sensors: motion, temperature, door closure. | Bathing, bed to toilet, cook, eat, leave home, read, watch TV, sleep, take medicine, work (desk, chores), meditation. |

| Cook et al. [49] | CASAS: Tokyo | 2/Real world | Environment sensors: motion, door closure, light. | Working, preparing meals, and sleeping. |

| Cook and Schmitter-Edgecombe [47] | CASAS: Tulum | 2/Real world | Environment sensors: motion, temperature. | Breakfast, lunch, enter home, group meeting, leave home, snack, wash dishes, watch TV. |

| Weiss et al. [50] | WISDM | 51 (undergraduate and graduate university students between the ages of 18 and 25)/Lab | Accelerometer and gyroscope sensors, which are available in both smartphones and smartwatches. | Walking, jogging, stair-climbing, sitting, standing, soccer kicking, basketball dribbling, tennis catching, typing, writing, clapping, teeth brushing, clothes folding, eating (pasta, soup, sandwich, chips), cup drinking. |

| Vaizman et al. [51] | ExtraSensory | 60/Real world | Accelerometer, gyroscope, and magnetometer sensors, which are available in both smartphones and smartwatches. | Sitting, walking, lying, standing, bicycling, running outdoors, talking, exercise at the gym, drinking, watching TV, traveling on a bus while standing. |

| Zhang et al. [52] | USC-HAD | 14/Lab | Three-axis accelerometer, three-axis gyroscope, and three-axis magnetometer. | Walking, walking upstairs/downstairs, running, jumping, sitting, standing, sleeping, elevator up/down. |

| Stiefmeier et al. [53] | Skoda | 8/Lab | RFID tags and readers, force-sensitive resistors, IMUs (Xsens), ultrawideband position (Ubisense). | Opening the trunk, closing the engine hood, opening the back right door, checking the fuel, opening both right doors, checking gaps at the front left door. |

| Martinez-Villasenor et al. [54] | UP-Fall | 17/Lab | Accelerometer, gyroscope, ambient light sensor, electroencephalograph (EEG), infrared sensors, cameras. | Walking, standing, picking up an object, sitting, jumping, laying down, falling forward using hands, falling forward using knees, falling backward, falling sitting in an empty chair, falling sideward. |

| UK-DALE dataset [55] | UK-DALE | N.A./Real world | Smart plugs. | Energy consumption of appliances: dishwasher, electric oven, fridge, heat pump, tumble dryer, washing machine. |

| Arrotta et al. [56] | MARBLE | 12/Lab | Inertial (smartwatch), magnetic, pressure, plug. | Answering phone, clearing table, cooking, eating, entering/leaving home, making phone call, preparing cold meal, setting up table, taking medicines, using PC, washing dishes, watching TV. |

| Schuldt et al. [57] | KTH | 25/Lab | Cameras. | Walking, jogging, running, boxing, hand waving, hand clapping. |

| Blank et al. [58] | Weizmann | 9/Lab | Cameras. | Running, walking, jumping-jack, jumping-forward-on-two-legs, jumping-in-place-on-two-legs, galloping-sideways, waving-two-hands, waving-one-hand, bending. |

| Rodriguez et al. [59] | UCF Sports Action | N.A./Lab | Cameras. | Sports-related actions. |

| Sucerquia et al. [60] | SisFall | 38/Lab | Accelerometer and gyroscope fixed to the waist of the participants. | Walking/jogging falls (slips, trips, fainting), rising/sitting falls, sitting falls (fainting, sleep), walking (slow, quick), jogging (slow, quick), stair-climbing (slow, quick), chair sitting/standing (half, low height), chair collapsing, lying-sitting transitions, ground position changes, bending-standing, car entering/exiting, walking stumbling, gentle jumping. |

| Niemann et al. [61] | LARa | 14/Lab | Optical marker-based Motion Capture (OMoCap), inertial measurement units (IMUs), and an RGB camera. | Standing, walking, using a cart, handling objects in different directions, synchronization. |

| Anguita et al. [62] | UCI-HAR | 30/Lab | Accelerometers and gyroscopes embedded in a Samsung Galaxy S II smartphone. | Standing, sitting, laying down, walking, walking downstairs/upstairs. |

| Shoaib et al. [63] | UT_complex | 10/Lab | Accelerometer, gyroscope, and linear acceleration sensor in a smartphone. | Walking, jogging, biking, walking upstairs/downstairs, sitting, standing, eating, typing, writing, drinking coffee, giving a talk, smoking one cigarette. |

| Chen et al. [64] | UTD-MHAD | 8/Lab | Kinect camera and a wearable inertial sensor. | Sports actions (bowling, tennis serve, baseball swing), hand gestures (drawing ‘x’, triangle, circle), daily activities (door knocking, sit-stand, stand-sit transitions), training exercises (arm curl, lunge, squat). |

| Reyes-Ortiz et al. [65] | UCI-SBHAR | 30/Lab | IMU of a smartphone carried on the waist. | Standing, sitting, laying down, walking, walking downstairs/upstairs. |

| Reference | Methods | Dataset/s | Performance | Sensor/s | Actions |

|---|---|---|---|---|---|

| Ramos et al. [66] | DL techniques based on recurrent neural networks are used for activity recognition. | Self collected (SDHAR-HOME dataset), made public. Two users living in the same household. | A = 0.8829 (min.), A = 0.9091 (max.). | Motion, Door, Window, Temperature, Humidity, Vibration, Smart Plug, Light Intensity. | Taking medication, preparing meals, personal hygiene. |

| Arrotta et al. [67] | Combined semi-supervised learning and knowledge-based reasoning, cache-based active learning. | MARBLE | F1S = 0.89 (avg.). | Smartwatches equipped with inertial sensors, positioning system data, magnetic sensors, pressure mat sensors, and plug sensors. | Answering the phone, cooking, eating, washing dishes. |

| Khater et al. [68] | ConvLSTM, ResIncConvLSTM layer (residual and inception combined with ConvLSTM layer). | KTH, Weizmann, UCF Sports Action. | A = 0.695 (min.), A = 0.999 (max.). | Cameras. | Walking, jogging, running, boxing, dual hand waving, clapping, side galloping, bending, single hand waving, spot jumping, jumping jack, skipping, diving, golf swinging, kicking, lifting, horse riding, skateboarding, bench swinging, side swinging. |

| Mohtadifar et al. [69] | Doppler shift, Mel-spectrogram feature, feature-level fusion, six classifiers: MLP, SVM, RFC, ERT, KNN, and GTB. | Self collected involving four subjects. | A = 0.98 (avg.). | Radio frequency (Vector Network Analyzer), microphone array. | Falling, walking, sitting on a chair, standing up from a chair. |

| Delaine et al. [70] | New metrics for a priori estimation of the performance of model-based activity recognition: participation counter, sharing rate, weight, elementary contribution, and distinguishability. | Self collected involving one subject performing each activity 20 times with variations in execution. | N.A. | Door sensors, motion detectors, smart outlets, water-flow sensors. | Cooking, preparing a hot beverage, taking care of personal hygiene. |

| Arrotta et al. [71] | CNNs and three different XAI approaches: Grad-CAM, LIME, and Model Prototypes. | Self collected, CASAS. | A = 0.8 (min.), A = 0.9 (max.). | Magnetic sensors on doors and drawers, pressure mat on chairs, smart-plug sensors for home appliances, smartwatches collecting inertial sensor data. | Phone answering, table clearing, hot meal cooking, eating, home entering, home leaving, phone calling, cold meal cooking, table setting, medicine taking, working, dish washing, TV watching. |

| Hanif et al. [73] | Neural network and KNN classifiers. | Self collected. | A = 0.99340162 (avg.). | Wrist wearable device and pocket positioned smart phone (accelerometer, gyroscope, magnetometer). | 25 basic and complex human activities (e.g., eating, smoking, drinking, talking, etc.). |

| Syed et al. [75] | L4 Haar wavelet for feature extraction, 4-2-1 1D-SPP for summarization, and hierarchical KNN for classification. | SisFall. | F1S = 0.9467 (avg.). | 2 accelerometers and 1 gyroscope placed on waist. | Slow/quick walking, slow/quick jogging, slow/quick stair-climbing, slow/quick chair sitting, chair collapsing, slow/quick lying-sitting, position changing (back-lateral-back), slow knee bending/straight standing, seated car entering/exiting, walking stumbling, high-object jumping. |

| Roberge et al. [74] | Supervised learning and MinMaxScaler, to recognize hand gestures from inertial data. | Self collected involving 21 subjects, made publicly available. | A = 0.83 (avg.). | Wristband equipped with a triaxial accelerometer and gyroscope. | Simple gestures for cooking activities, such as stirring, cutting, seasoning. |

| Syed et al. [82] | XGBoost algorithm. | LARa. | A = 0.7861 (avg.). | Accelerometer and gyroscope sensor readings on both the legs, arms and the chest/mid-body. | Standing, walking, cart walking, upwards handling (hand raised to shoulder), centered handling (no bending/lifting/kneeling), downwards handling (hands below knees, kneeling). |

| Muaaz et al. [81] | MDS, magnitude data, time- and frequency-domain features, feature-level fusion, SVM. | Self collected. | A = 0.915 (min.), A = 1.000 (max.). | IMU sensor placed on the lower back, Wi-fi receiver. | Walking, falling, sitting, picking up an object from the floor. |

| Wang et al. [78] | Clustering-based activity confusion index, data-driven approach, hierarchical activity recognition, confusion relationships. | UCI-HAR. | A = 0.8629 (min.), A = 0.9651 (max.). | Smartphone equipped with accelerometers and gyroscopes. | Standing, sitting, laying down, walking, downstairs/upstairs. |

| Fan et al. [79] | BSO, deep Q-network, hybrid feature selection methodology. | UCI-HAR, WISDM, UT_complex. | A = 0.9841 (avg.). | Inertial sensors integrated into mobile phones: one in the right pocket and the other on the right wrist to emulate one smartwatch. | Walking, jogging, sitting, standing, biking, using stairs, typing, drinking coffee, eating, giving a talk, smoking, with each carrying. |

| Sengul et al. [80] | Matrix time series, feature fusion, modified Better-than-the-Best Fusion (BB-Fus), stochastic gradient descent, optimal Decision Tree (DT) classifier, statistical pattern recognition, k-Nearest Neighbor (kNN), Support Vector Machine (SVM). | Self collected involving 20 subjects. | A = 0.9742 (min.), A = 0.9832 (max.). | Accelerometer and gyroscope integrated into smartwatches (Sony SWR50). | Being in a meeting, walking, driving with a motorized vehicle. |

| Chen et al. [83] | Received Signal Strength Indicator (RSSI), coarse-to-fine hierarchical classification, Butterworth low-pass filter, SVM, GRU, RNN. | Self collected involving 6 subjects. | A = 0.9645 (avg.). | WiFi-RSSI sensor nodes. | Standing, sitting, sleeping, lying, walking, running. |

| Gu et al. [84] | CSI features, DL, dual-channel convolution-enhanced transformer. | Self collected involving 21 subjects. | A = 0.933 (min.), A = 0.969 (max.). | WiFi-CSI sensor nodes. | Walking, standing up, sitting down, fall to the left/right/front. |

| Wu et al. [85] | 3D point cloud data, 3D radar, voxelization with a bounding box, CNN-Bi-LSTM classifier. | Self collected. | A = 0.9673 (avg.). | 3D mmWave radars. | Food intake activities. |

| Qiao et al. [86] | Time-range-Doppler radar point clouds, convolutional multilinear principal component analysis. | Self collected involving 10 volunteers. | A = 0.9644 (avg.), P = 0.94 (avg.), R = 0.947 (avg.), SP = 0.988 (avg.), F1S = 0.944 (avg.). | Millimeter-wave frequency-modulated continuous waveform (FMCW) radar. | Normal walking, walking with plastic poles in both hands, jumping, falling down, sitting down, standing up. |

| Zhang et al. [87] | Energy domain ratio method, local tangent space alignment, adaptive extreme learning machine, multiangle entropy, improved extreme learning machine. | Self collected with one person tested at a time. | A = 0.86 (min.), A = 0.98 (max.). | Millimeter-wave radar operating at 77–79 GHz. | Chest expanding, standing walk (swing arm), standing long jump, left-right turn run, one-arm swing, alternating arms swing, alternating legs swing. |

| Li et al. [88] | Bidirectional LSTM (BiLSTM), Residual Dense-BiLSTM, Analog-to-digital converter, Adam optimizer, GPU processing. | Self collected involving 8 subjects. | A = 0.98 (avg.). | TENG-based gait sensor. | Standing, jogging, walking, running, jumping. |

| Zhong et al. [77] | Health parameters, Physical Activity Recognition and Monitoring, Support Vector Machine (SVM), Internet of Things. | Self collected involving 10 healthy students. | A = 0.98 (avg.). | Wearable ECG. | Sitting, standing, walking, jogging, jumping, biking, climbing, lying. |

| Reference | Methods | Dataset/s | Performance | Sensor/s | Actions |

|---|---|---|---|---|---|

| Xiao et al. [91] | Self-attention mechanism and the two-stream structure to model the temporal-spatial dependencies in multimodal environments. | PAMAP2, Opportunity, USC–HAD, and Skoda | F1S = 0.98 (PAMAP2), F1S = 0.69 (Opportunity), F1S = 0.57 (USC–HAD), F1S = 0.95 (Skoda). | IMU, accelerometer, gyroscope, magnetometer, and orientation sensor. | Walking, running, jumping, cycling, standing, sitting. |

| Bocus et al. [24] | Data collection from multiple sensors, in two furnished rooms with up to six subjects performing day-to-day activities. Validated using CNN. | Self collected, new publicly available dataset. | A = 0.935 (WiFi CSI), A = 0.865 (PWR), A = 0.858 (Kinect), A = 0.967 (data fusion). | WiFi CSI, Passive WiFi Radar, Kinect camera. | Sitting down on a chair, standing from the chair, laying down on the floor, standing from the floor, upper body rotation, walking. |

| Islam et al. [92] | CNNs, Convolutional LSTM. | UP-Fall | A = 0.9761 | Accelerometers, gyroscopes, RGB cameras, ambient luminosity sensors, electroencephalograph headsets, and context-aware infrared sensors. | Walking, standing, sitting, picking up an object, jumping, laying, falling forward using hands, falling forward using knees, falling backwards, falling sideward, falling sitting in an empty chair. |

| Alexiadis et al. [93] | Feed-forward artificial neural network as the fusion model. | ExtraSensory | F1S = 0.86 | Accelerometers, gyroscopes, magnetometers, watch compasses, and audio. | Sitting (toilet, eating, TV watching, cooking, cleaning), standing (cooking, eating, cleaning, TV watching, toilet), lying (TV watching, eating), walking (eating, TV watching, cooking, cleaning). |

| Dhekane et al. [94] | Similarity-based CPD, Sensor Distance Error (SDE), Feature Extraction, Classification, Noise Handling, Annotations. | CASAS (Aruba, Kyoto, Tulum, and Milan). | A = 0.9534 (min.), A = 0.9846 (max.). | Motion, light, door and temperature, associated with objects. | All included in Aruba, Kyoto, Tulum, and Milan. |

| Hiremath et al. [95] | Passive observation of raw sensor data, Representation Learning, Motif Learning and Discovery, Active Learning. | CASAS (Aruba, Milan) | A = 0.286 (min.), A = 0.944 (max.) | Door, motion, temperature. | Bathroom, bed to toilet, meal preparation, wash dishes, kitchen activity, eating, dining room activity, enter/leave home, relax, read, watch TV, sleep, meditate, work, desk activity, chores, house keeping, bedroom activity. |

| Abdel-Basset et al. [89] | Supervised dual-channel model, LSTM, temporal-spatial fusion, convolutional residual network. | UCI-HAR, WISDM. | A = 0.977 (avg.), F1S = 0.975 (avg.). | Accelerometer and gyroscope in wearable devices. | Upstairs, downstairs, walking, standing, sitting, laying, and jogging. |

| Gorjani et al. [90] | Multilayer perceptron, context awareness, single-user, long-term context, multimodal, data fusion. | Self collected involving 1 person for 7 days. | A = 0.912 (min.), A = 1.000 (max.). | 2 IMUs (gyroscope, accelerometer, magnetometer, barometer) on the right hand and the right leg, KNX sensors for room temperature, humidity level (%), CO2 concentration level (ppm). | Relaxing with minimal movements, using the computer, preparing tea/sandwich, eating breakfast, wiping the tables/vacuum cleaning, exercising using stationary bicycle. |

| Reference | Methods | Dataset/s | Performance | Sensor/s | Actions |

|---|---|---|---|---|---|

| Zin et al. [10] | UV-disparity maps, spatial-temporal features, distance-based features, automatic rounding data fusion. | Self collected | A = 0.865 (min.), A = 1.000 (max.). | Depth cameras | Outside the room, transition, seated in the wheelchair, standing, sitting on the bed, lying on the bed, receiving assistance, falling. |

| Hu et al. [103] | Genetic algorithm-optimized SVM for real-time recognition. | CASAS | mF1S = 0.9 | Motion and door sensors. | Bathe, bed–toilet transition, cook, eat, leaving, personal hygiene, sleep, toilet, wash dishes, work at table, and other activity. |

| Chen et al. [104] | OpenPose library for skeleton extraction, CNN classifier, real-time processing. | Self collected from real public places. | A = 0.973 | RGB camera | Squat, stand, walk, and work. |

| Yan et al. [105] | Unsupervised feature learning, enhanced topic-aware Bayesian, HMM. | CASAS (Aruba, Cairo, Tulum, Hhl02, Hhl04). | A = 0.8357 (min.), F1S = 0.5667 (min.), A = 0.9900 (max.), F1S = 0.9807 (max.). | Environmental sensors (see CASAS). | Meal prepare, relax, eat, work, sleep, wash dishes, bed to toilet, enter home, leave home, housekeeping, resperate. |

| Ramos et al. [106] | Bidirectional LSTM networks, sliding window. | CASAS (Milan). | P = 0.90 (min.), P = 0.98 (max.), R = 0.88 (min.), R = 0.99 (max.), F1S = 0.89 (min.), F1S = 0.98 (max.). | Environmental sensors (see CASAS). | Bed to toilet, chores, desk activity, dining rm activity, eve meds, guest bathroom, kitchen activity, leave home, master bathroom, meditate, watch tv, sleep, read, morning meds, master bedroom activity, others. |

| Minarno et al. [96] | Logistic regression, multiuser, long-term context, lightweight model, and real-time processing. | UCI-HAR. | A = 0.98 (max.). | Triaxial accelerometer and gyroscope embedded in wearable devices. | Laying, standing, sitting, walking, walking upstairs, walking downstairs. |

| Maswadi et al. [97] | Naive Bayes (NB), DT, multiuser, long-term context, real-time processing. | UTD-MHAD. | A = 0.886 (min.), A = 0.999 (max.). | Accelerometer placed at four different locations: right arm, right biceps, waist, and belly. | Sitting, standing, walking, sitting down and standing up. |

| Thakur et al. [98] | Online CPD strategy, correlation-based feature selection, ensemble classifier. | UCI-SBHARPT. | A = 0.9983 (avg.). | Accelerometer and gyroscope sensors of a smartphone. | Walking, walking upstairs, walking downstairs; three static activities (sitting, standing, lying); and six transitional activities (stand-to-sit, sit-to-stand, sit-to-lie, lie-to-sit, stand-to-lie, lie-to-stand). |

| Reference | Methods | Dataset/s | Performance | Sensor/s | Actions |

|---|---|---|---|---|---|

| Zhang et al. [108] | Four-layer architecture (physical layer, middleware layer, knowledge management layer, service layer), Unordered Actions and Temporal Property of Activity (UTGIA), Agent Knowledge Learning. | Self collected in a lab setting simulating a smart home, involving 8 participants. | A = 0.9922 | Pressure, proximity, motion (ambient). | Take medicine, set kitchen table, make tea, make instant coffee, make hot drink, heat food, make sweet coffee, make cold drink. |

| Noor et al. [110] | Ontology, Protégé, reasoner. | Self collected in a lab setting, involving 20 participants; OPPORTUNITY. | A = 0.915 | Burner sensor, water tap sensor, item sensor, chair sensor, drawer sensor, flush sensor, PIR sensor, wearable sensors (accelerometers, gyroscopes, magnetometers). | Getting up, grooming, relaxing, coffee/sandwich prep and consumption, cleaning; door/drawer/fridge/dishwasher operation, light control, drinking in various positions. |

| Franco et al. [109] | ILM, IoT, HAN, FFNN, LSTM, SVM. | UK-DALE | A = 0.95385 (FFNN), A = 0.93846 (LSTM), A = 0.83077 (SVM). | Smart plugs. | Washing the dishes, baking food, ironing, drying hair, doing laundry, sleeping, unoccupied. |

| Stavropoulos et al. [72] | OWL, SPARQL, Gaussian Mixture Model Clustering, Fisher Encoding, Support Vector Machine, Sequential Statistical Boundary Detection. | Self collected in a clinical setting, 98 participant trials. | R = 0.825, P = 0.808. | IP camera, microphone, worn accelerometer, smart plugs, object-tag with accelerometer. | Answering phone, establishing account balance, preparing drink, preparing drug box. |

| Mekruksavanich et al. [111] | sEMG, MLP, DT. | Self collected involving 10 subjects. | P = 0.9852 (avg.), R = 0.9650 (avg.), A = 0.9900 (avg.). | Wearable sEMG. | Exercise activities. |

| Minarno et al. [112] | LG, SVM, KNN. | UCI-HAR. | A = 0.9846 (min.), A = 0.9896 (max.). | Accelerometer and a gyroscope embedded in a smartphone. | Sitting, standing, lying, walking, walking up/down stairs. |

| Javed et al. [107] | MLP, Integration with other smart-home systems, high-performance computing. | WISDM, Self collected. | A = 0.93 (avg.). | 2-axis accelerometer worn in the front pants leg pocket. | Standing, sitting, downstairs, walking, upstairs, jogging. |

| Muaaz et al. [113] | CNN, Wi-Fi CSI, spectrograms, health information systems (HIS). | Self collected involving 9 subjects. | A = 0.9778 (avg.). | Wi-Fi NICs in the 5 GHz band. | Walking, falling, sitting on a chair, picking up an object from the floor. |

| Reference | Methods | Dataset/s | Performance | Sensor/s | Actions |

|---|---|---|---|---|---|

| Zhou et al. [116] | Back-Dispersion Communication, Wearable Internet of Things, DL, Bayesian Networks, EDL, Variable Autoencoder, Convolution Layers. | Self collected. | A = 0.80 (min.), A = 0.97 (max.). | Wearable Sensor Network. | Walking. |

| Chang et al. [117] | IoT, ESVM, SVM, CNN, Raspberry Pi, STM32 Discovery Board, Tensorflow Lite, Valgrind Massif profiler. | MNIST [118]. | A = 0.822, P = 0.8275, R = 0.8189, F1S = 0.8232. | N.A. | N.A. |

| Zhu et al. [119] | STFT, CNN, ARM platform | Self collected. | A = 0.9784. | Infineon Sense2GoL Doppler radar. | Running, walking, walking while holding a stick, crawling, boxing while moving forward, boxing while standing in place, sitting still. |

| Helmi et al. [120] | SVM, RFC, RCN, RNN, BiGRU, MPA, PSO, DE, GSA, SSA. | Opportunity, PAMAP2, UniMiB-SHAR. | A = 0.8299 (min.), A = 0.9406 (max.). | Tri-axial accelerometer, gyroscope, magnetometer. | Open/close door/fridge/drawer, clear table, toggle switch, sip from cup, ADLs, fall actions. |

| Angerbauer et al. [121] | SVM, CNN, RNN. | UCI-HAR. | F1S = 0.8834 (min.), F1S = 0.9562 (max.). | Accelerometer and gyroscope from a pocket-worn smartphone. | Standing, sitting, laying, walking, walking upstairs, walking downstairs. |

| Ahmed et al. [122] | SFFS, multiclass SVM. | UCI-HAR. | A = 0.9681 (avg.). | Accelerometer and gyroscope from a pocket-worn smartphone. | Standing, sitting, lying, walking, walking downstairs, walking upstairs, stand-to-sit, sit-to-stand, sit-to-lie, lie-to-sit, stand-to-lie, lie-to-stand. |

| Imran et al. [114] | Convolutional Neural Network, multiuser, long-term context, multimodal, lightweight processing. | UCI-HAR, WISDM. | A = 0.925 (avg.). | Accelerometer and gyroscope in wearable devices. | Standing, sitting, lying, walking, walking upstairs, walking downstairs, jogging. |

| Betancourt et al. [115] | Self-attention network, multiuser, long-term context, multimodal, lightweight processing. | UCI-HAR, Self collected (publicly released with this study). | A = 0.97 (avg.). | Accelerometer, gyroscope, and magnetometer embedded in wearable devices. | Standing, sitting, lying, walking, walking upstairs, walking downstairs. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Diraco, G.; Rescio, G.; Siciliano, P.; Leone, A. Review on Human Action Recognition in Smart Living: Sensing Technology, Multimodality, Real-Time Processing, Interoperability, and Resource-Constrained Processing. Sensors 2023, 23, 5281. https://doi.org/10.3390/s23115281
