Recognition of Attentional States in VR Environment: An fNIRS Study

Abstract

1. Introduction

2. Materials and Methods

2.1. Participants

2.2. Apparatus

2.3. Subjective Satisfaction Assessment

2.4. Procedure

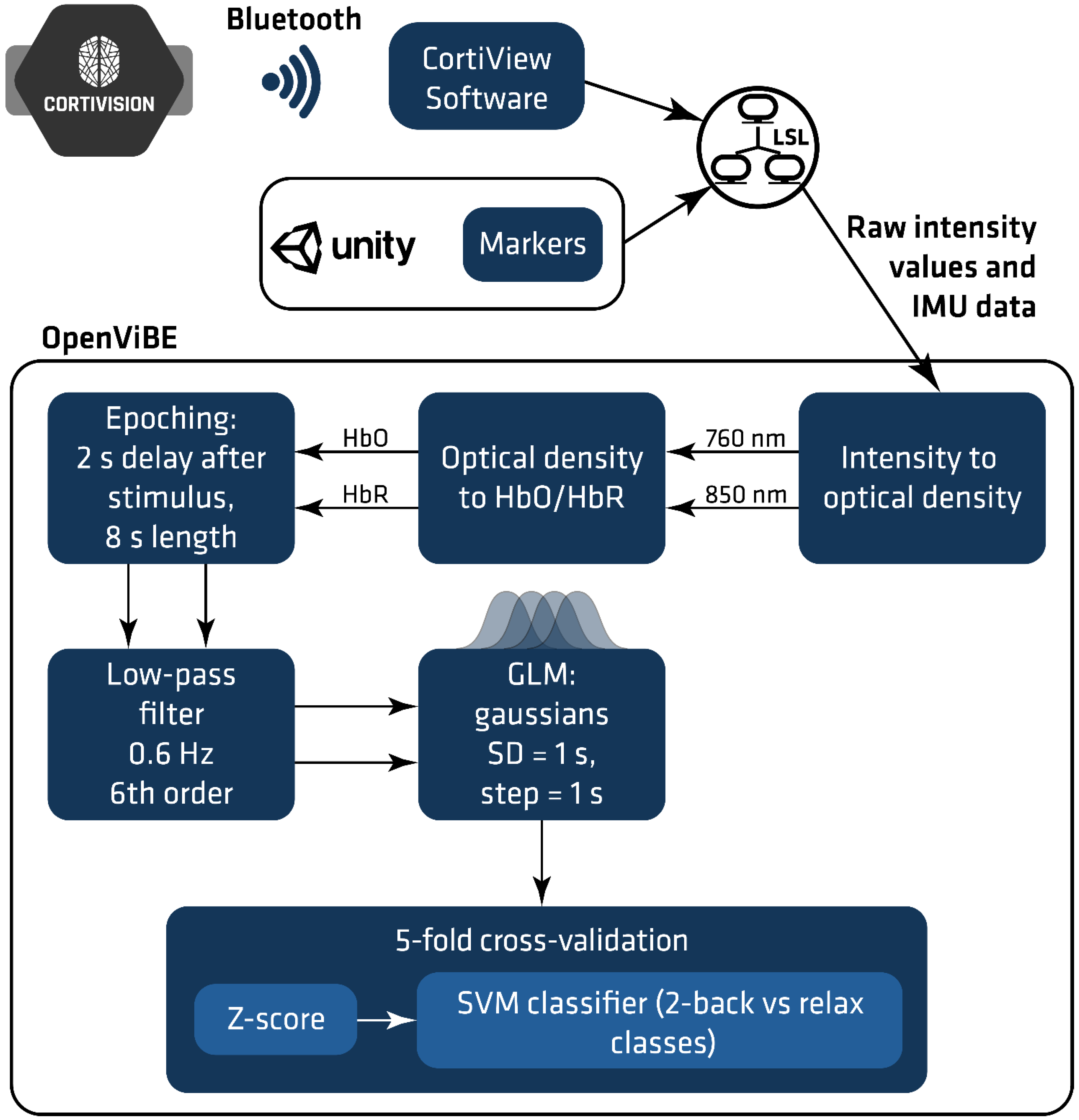

2.5. fNIRS Data Acquisition and Analysis

2.5.1. Probe Design

2.5.2. Signal Processing

- Z-score normalization: the mean and standard deviation of each feature were computed on the training set only; these values were then used to z-score normalize both the training and test datasets.

- SVM classification: a support vector machine (SVM) classifier [32] was used to distinguish between two classes, “relax” and “2-back task”. A linear kernel was applied because it is generally less prone to overfitting than nonlinear kernels.
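
The two steps above can be sketched in a few lines of Python. This is an illustrative sketch, not the authors' pipeline: the two-feature samples are invented, the helper names (`fit_zscore`, `apply_zscore`, `train_linear_svm`, `predict`) are hypothetical, and a small hand-written subgradient-descent trainer for the linear SVM objective stands in for whichever SVM implementation was actually used. It mainly shows that the normalization statistics are fitted on the training set and then reused unchanged on the test set.

```python
import statistics


def fit_zscore(train_rows):
    """Per-feature mean and standard deviation, estimated on the training set only."""
    columns = list(zip(*train_rows))
    means = [statistics.fmean(col) for col in columns]
    stds = [statistics.pstdev(col) or 1.0 for col in columns]  # avoid dividing by zero
    return means, stds


def apply_zscore(rows, means, stds):
    """Normalize any dataset with the statistics fitted on the training set."""
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]


def decision(w, b, row):
    """Linear decision score w . x + b."""
    return sum(wj * xj for wj, xj in zip(w, row)) + b


def train_linear_svm(rows, labels, lam=0.01, lr=0.1, epochs=500):
    """Minimize lam/2 * ||w||^2 + mean hinge loss by full-batch subgradient descent.

    Labels must be -1 or +1. The hyperparameters are arbitrary illustrative values.
    """
    n, d = len(rows), len(rows[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w = [lam * wj for wj in w]
        grad_b = 0.0
        for row, y in zip(rows, labels):
            if y * decision(w, b, row) < 1:  # sample lies inside or beyond the margin
                for j in range(d):
                    grad_w[j] -= y * row[j] / n
                grad_b -= y / n
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict(w, b, rows):
    """Return +1 or -1 for each row according to the sign of the decision score."""
    return [1 if decision(w, b, row) >= 0 else -1 for row in rows]


# Hypothetical two-feature samples; label -1 = "relax", +1 = "2-back task".
relax = [[0.10 + 0.01 * k, 0.20 + 0.02 * k] for k in range(10)]
task = [[0.50 + 0.01 * k, 0.80 + 0.02 * k] for k in range(10)]
train_x, train_y = relax[:8] + task[:8], [-1] * 8 + [1] * 8
test_x, test_y = relax[8:] + task[8:], [-1] * 2 + [1] * 2

means, stds = fit_zscore(train_x)            # statistics come from the training set
train_z = apply_zscore(train_x, means, stds)
test_z = apply_zscore(test_x, means, stds)   # the test set reuses them unchanged

w, b = train_linear_svm(train_z, train_y)
predictions = predict(w, b, test_z)
accuracy = sum(p == y for p, y in zip(predictions, test_y)) / len(test_y)
print(f"test accuracy: {accuracy:.2f}")
```

In practice a library would usually replace the hand-written trainer, for example scikit-learn's `StandardScaler` combined with `SVC(kernel="linear")`.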

3. Results

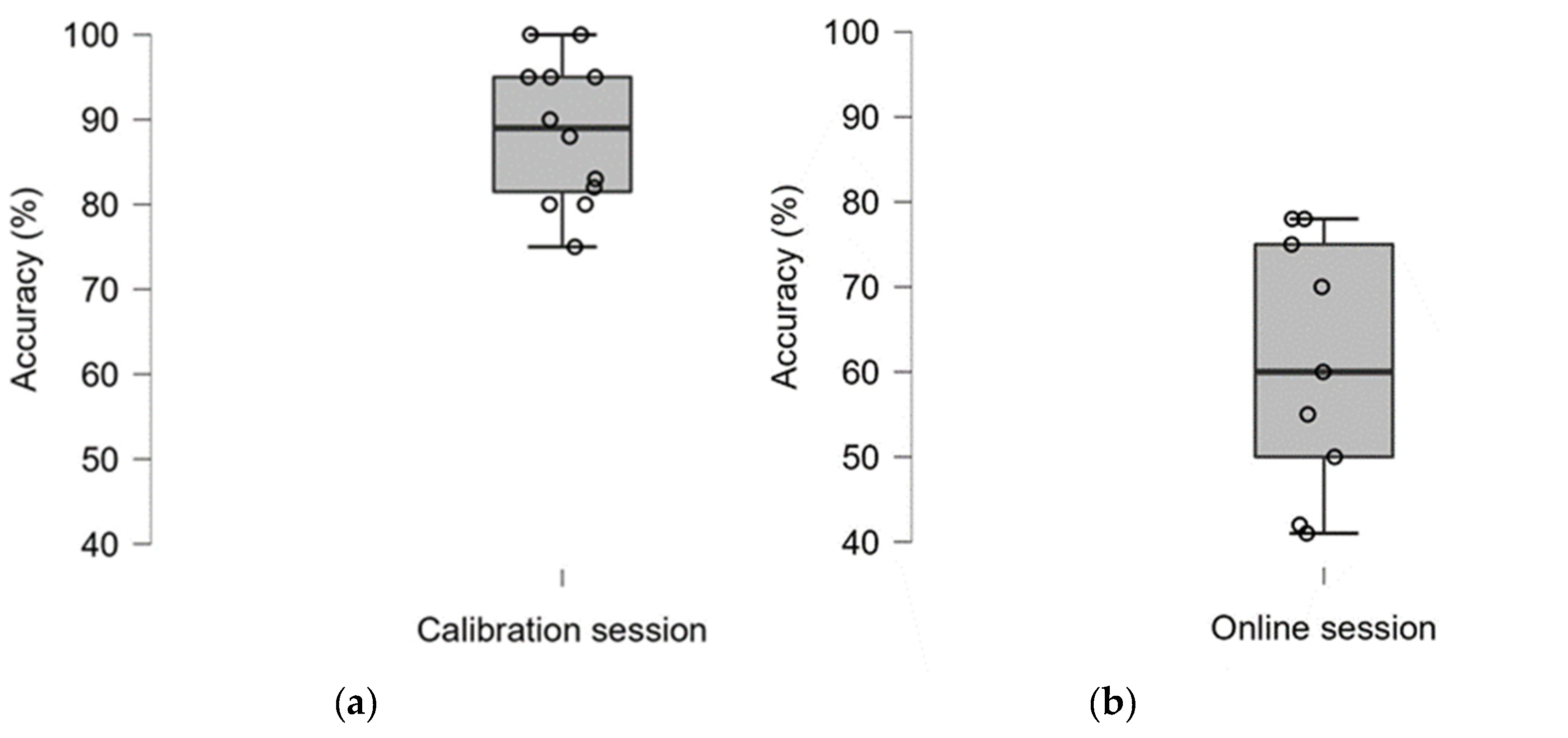

3.1. Classification Accuracy

3.2. User Satisfaction

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Pinti, P.; Tachtsidis, I.; Hamilton, A.; Hirsch, J.; Aichelburg, C.; Gilbert, S.; Burgess, P.W. The present and future use of functional near-infrared spectroscopy (fNIRS) for cognitive neuroscience. Ann. N. Y. Acad. Sci. 2020, 1464, 5–29.

2. Scholkmann, F.; Kleiser, S.; Metz, A.; Zimmermann, R.; Mata Pavia, J.; Wolf, U.; Wolf, M. A review on continuous wave functional near-infrared spectroscopy and imaging instrumentation and methodology. NeuroImage 2014, 85, 6–27.

3. Kocsis, L.; Herman, P.; Eke, A. The modified Beer–Lambert law revisited. Phys. Med. Biol. 2006, 51, N91–N98.

4. Quaresima, V.; Ferrari, M. Functional near-infrared spectroscopy (fNIRS) for assessing cerebral cortex function during human behaviour in natural/social situations: A concise review. Org. Res. Methods 2016, 22, 46–68.

5. Vesoulis, Z.; Mintzer, J.; Chock, V. Neonatal NIRS monitoring: Recommendations for data capture and review of analytics. J. Perinatol. 2021, 41, 675–688.

6. Kohl, S.; Mehler, D.; Lührs, M.; Thibault, R.; Konrad, K.; Sorger, B. The potential of functional near-infrared spectroscopy-based neurofeedback—A systematic review and recommendations for best practice. Front. Neurosci. 2020, 14, 594.

7. Mihara, M.; Miyai, I. Review of functional near-infrared spectroscopy in neurorehabilitation. Neurophotonics 2016, 3, 031414.

8. Kozlova, S. The use of near-infrared spectroscopy in the sport-scientific context. J. Neurol. Neurol. Dis. 2018, 4, 203.

9. Naseer, N.; Hong, K. fNIRS-based brain-computer interfaces: A review. Front. Hum. Neurosci. 2015, 9, 3.

10. Balconi, M.; Molteni, E. Past and future of near-infrared spectroscopy in studies of emotion and social neuroscience. J. Cogn. Psychol. 2015, 28, 129–146.

11. Cui, X.; Bray, S.; Bryant, D.; Glover, G.; Reiss, A. A quantitative comparison of NIRS and fMRI across multiple cognitive tasks. NeuroImage 2011, 54, 2808–2821.

12. Pinti, P.; Aichelburg, C.; Lind, F.; Power, S.; Swingler, E.; Merla, A.; Hamilton, A.; Gilbert, S.; Burgess, P.; Tachtsidis, I. Using fibreless, wearable fNIRS to monitor brain activity in real-world cognitive tasks. J. Vis. Exper. 2015, 106, e53336.

13. Midha, S.; Maior, H.; Wilson, M.; Sharples, S. Measuring mental workload variations in office work tasks using fNIRS. Int. J. Hum. Comput. Stud. 2021, 147, 102580.

14. Pollmann, K.; Vukelić, M.; Birbaumer, N.; Peissner, M.; Bauer, W.; Kim, S. fNIRS as a Method to Capture the Emotional User Experience: A Feasibility Study. In Human-Computer Interaction. Novel User Experiences. HCI 2016. Lecture Notes in Computer Science; Kurosu, M., Ed.; Springer: Cham, Switzerland, 2016; Volume 9733.

15. Pinti, P.; Aichelburg, C.; Gilbert, S.; Hamilton, A.; Hirsch, J.; Burgess, P.; Tachtsidis, I. A review on the use of wearable functional near-infrared spectroscopy in naturalistic environments. Jpn. Psychol. Res. 2018, 60, 347–373.

16. Landowska, A.; Royle, S.; Eachus, P.; Roberts, D. Testing the Potential of Combining Functional Near-Infrared Spectroscopy with Different Virtual Reality Displays—Oculus Rift and oCtAVE. In Augmented Reality and Virtual Reality; Jung, T., Dieck, M.C., Eds.; Springer: Cham, Switzerland, 2018; pp. 309–321.

17. Validation of a Consumer-Grade Functional Near-Infrared Spectroscopy Device for Measurement of Frontal Pole Brain Oxygenation—An Interim Report. Department of Psychology, Stockholm University: Stockholm, Sweden. Available online: https://mendi-webpage.s3.eu-north-1.amazonaws.com/Mendi_signal_validation_interim_report_final.pdf (accessed on 21 February 2022).

18. Cho, B.H.; Lee, J.M.; Ku, J.H.; Jang, D.P.; Kim, J.S.; Kim, I.Y.; Lee, J.H.; Kim, S.I. Attention enhancement system using virtual reality and EEG biofeedback. In Proceedings of the IEEE Virtual Reality 2002, Orlando, FL, USA, 24–28 March 2002; pp. 156–163.

19. Putze, F.; Herff, C.; Tremmel, C.; Schultz, T.; Krusienski, D.J. Decoding mental workload in virtual environments: A fNIRs study using an immersive n-back task. In Proceedings of the 2019 41st Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), Berlin, Germany, 23–27 July 2019; pp. 3103–3106.

20. Luong, T.; Argelaguet, F.; Martin, N.; Lécuyer, A. Introducing mental workload assessment for the design of virtual reality training scenarios. In Proceedings of the 2020 IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Atlanta, GA, USA, 22–26 March 2020; pp. 662–671.

21. Hudak, J.; Blume, F.; Dresler, T.; Haeussinger, F.; Renner, T.; Fallgatter, A.; Gawrilow, C.; Ehlis, A. Near-infrared spectroscopy-based frontal lobe neurofeedback integrated in virtual reality modulates brain and behaviour in highly impulsive adults. Front. Hum. Neurosci. 2017, 11, 425.

22. Skalski, S.; Konaszewski, K.; Pochwatko, G.; Balas, R.; Surzykiewicz, J. Effects of hemoencephalographic biofeedback with virtual reality on selected aspects of attention in children with ADHD. Int. J. Psychophysiol. 2021, 170, 59–66.

23. Harrivel, A.; Weissman, D.; Noll, D.; Peltier, S. Monitoring attentional state with fNIRS. Front. Hum. Neurosci. 2013, 7, 861.

24. Kübler, A.; Holz, E.; Riccio, A.; Zickler, C.; Kaufmann, T.; Kleih, S.; Staiger-Sälzer, P.; Desideri, L.; Hoogerwerf, E.; Mattia, D. The user-centred design as novel perspective for evaluating the usability of BCI-controlled applications. PLoS ONE 2014, 9, e112392.

25. Thompson, D.; Quitadamo, L.; Mainardi, L.; Laghari, K.; Gao, S.; Kindermans, P.; Simeral, J.; Fazel-Rezai, R.; Matteucci, M.; Falk, T.; et al. Performance measurement for brain–computer or brain–machine interfaces: A tutorial. J. Neural Eng. 2014, 11, 035001.

26. Müller-Putz, G.; Scherer, R.; Brunner, C.; Leeb, R.; Pfurtscheller, G. Better than random: A closer look on BCI results. Int. J. Bioelectromagn. 2008, 10, 52–55.

27. Bijur, P.; Silver, W.; Gallagher, E. Reliability of the visual analogue scale for measurement of acute pain. Acad. Emerg. Med. 2001, 8, 1153–1157.

28. Demers, L.; Weiss-Lambrou, R.; Ska, B. The Quebec User Evaluation of Satisfaction with Assistive Technology (QUEST 2.0): An overview and recent progress. Technol. Disabil. 2002, 14, 101–105.

29. Zimeo Morais, G.; Balardin, J.; Sato, J. fNIRS Optodes’ Location Decider (fOLD): A toolbox for probe arrangement guided by brain regions-of-interest. Sci. Rep. 2018, 8, 3341.

30. Gagnon, L.; Perdue, K.; Greve, D.; Goldenholz, D.; Kaskhedikar, G.; Boas, D. Improved recovery of the haemodynamic response in diffuse optical imaging using short optode separations and state-space modelling. NeuroImage 2011, 56, 1362–1371.

31. Refaeilzadeh, P.; Tang, L.; Liu, H. Cross-Validation. Encycl. Database Syst. 2009, 5, 532–538.

32. Noble, W. What is a support vector machine? Nat. Biotechnol. 2006, 24, 1565–1567.

33. Herff, C.; Heger, D.; Fortmann, O.; Hennrich, J.; Putze, F.; Schultz, T. Mental workload during n-back task—Quantified in the prefrontal cortex using fNIRS. Front. Hum. Neurosci. 2014, 7, 935.

34. Guger, C.; Edlinger, G.; Harkam, W.; Niedermayer, I.; Pfurtscheller, G. How many people are able to operate an EEG-based brain-computer interface (BCI)? IEEE Trans. Neural Syst. Rehab. Eng. 2003, 11, 145–147.

35. Da Silva-Sauer, L.; Valero-Aguayo, L.; de la Torre-Luque, A.; Ron-Angevin, R.; Varona-Moya, S. Concentration on performance with P300-based BCI systems: A matter of interface features. Appl. Ergon. 2016, 52, 325–332.

36. Sousa Santos, B.; Dias, P.; Pimentel, A.; Baggerman, J.; Ferreira, C.; Silva, S.; Madeira, J. Head-mounted display versus desktop for 3D navigation in virtual reality: A user study. Multimed. Tools Appl. 2008, 41, 161.

37. Choi, B.C.; Pak, A.W. Peer reviewed: A catalogue of biases in questionnaires. Prev. Chronic Dis. 2005, 2, A13.

38. Moradi, R.; Berangi, R.; Minaei, B. A survey of regularization strategies for deep models. Artif. Intell. Rev. 2019, 53, 3947–3986.

39. Kimmig, A.; Dresler, T.; Hudak, J.; Haeussinger, F.; Wildgruber, D.; Fallgatter, A.; Ehlis, A.; Kreifelts, B. Feasibility of NIRS-based neurofeedback training in social anxiety disorder: Behavioral and neural correlates. J. Neural Transm. 2018, 126, 1175–1185.

40. Trambaiolli, L.; Tossato, J.; Cravo, A.; Biazoli, C.; Sato, J. Subject-independent decoding of affective states using functional near-infrared spectroscopy. PLoS ONE 2021, 16, e0244840.

41. Varela-Aldás, J.; Palacios-Navarro, G.; Amariglio, R.; García-Magariño, I. Head-mounted display-based application for cognitive training. Sensors 2020, 20, 6552.

| Subject | Calibration Session | Online Session |
|---|---|---|
| A | 80 | 50 * |
| B | 88 | - |
| C | 83 | 42 * |
| D | 82 * | 70 |
| E | 95 | 75 |
| F | 95 | 41 * |
| G | 100 | 78 |
| H | 75 | 60 * |
| I | 95 | - |
| J | 90 | 55 * |
| K | 80 | - |
| L | 100 | 78 |
| Group | M = 88.58; SD = 8.49 | M = 61; SD = 14.89 |
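
The group row can be reproduced from the per-subject values in the table. The short check below is a sketch under two assumptions: the asterisks are treated as annotations and dropped (their meaning is not given in this extract), and subjects without an online value are excluded from the online column. The reported SDs match the sample standard deviation (n − 1 denominator), not the population one.

```python
import statistics

# Per-subject values copied from the table above (subjects A to L).
calibration = [80, 88, 83, 82, 95, 95, 100, 75, 95, 90, 80, 100]
# Subjects B, I and K have no online value ("-") and are left out.
online = [50, 42, 70, 75, 41, 78, 60, 55, 78]

summary = {}
for name, values in (("calibration", calibration), ("online", online)):
    mean = statistics.mean(values)
    sd = statistics.stdev(values)  # sample SD, n - 1 denominator
    summary[name] = (round(mean, 2), round(sd, 2))
    print(f"{name}: M = {mean:.2f}; SD = {sd:.2f}")
```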

| Method | Dimension | Min | Max | Md Sten | IQR Sten |
|---|---|---|---|---|---|
| VAS | Overall satisfaction | 8 | 10 | 6 | 2.25 |
| eQUEST 2.0 | Dimensions | 2 | 5 | 6 | 4 |
| | Weight | 2 | 5 | 6 | 2 |
| | Adjustment | 2 | 5 | 7 | 3.5 |
| | Safety | 4 | 5 | 10 | 0 |
| | Reliability | 4 | 5 | 6 | 4 |
| | Ease of use | 3 | 5 | 10 | 5 |
| | Comfort | 1 | 5 | 6 | 1 |

VAS (0 = not satisfied at all to 10 = very satisfied); eQUEST 2.0 (1 = not satisfied at all, 2 = not very satisfied, 3 = more or less satisfied, 4 = quite satisfied, 5 = very satisfied). Md = median; IQR = interquartile range.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zapała, D.; Augustynowicz, P.; Tokovarov, M. Recognition of Attentional States in VR Environment: An fNIRS Study. Sensors 2022, 22, 3133. https://doi.org/10.3390/s22093133
