Towards the Development of a Sensor Educational Toolkit to Support Community and Citizen Science

Abstract

1. Introduction

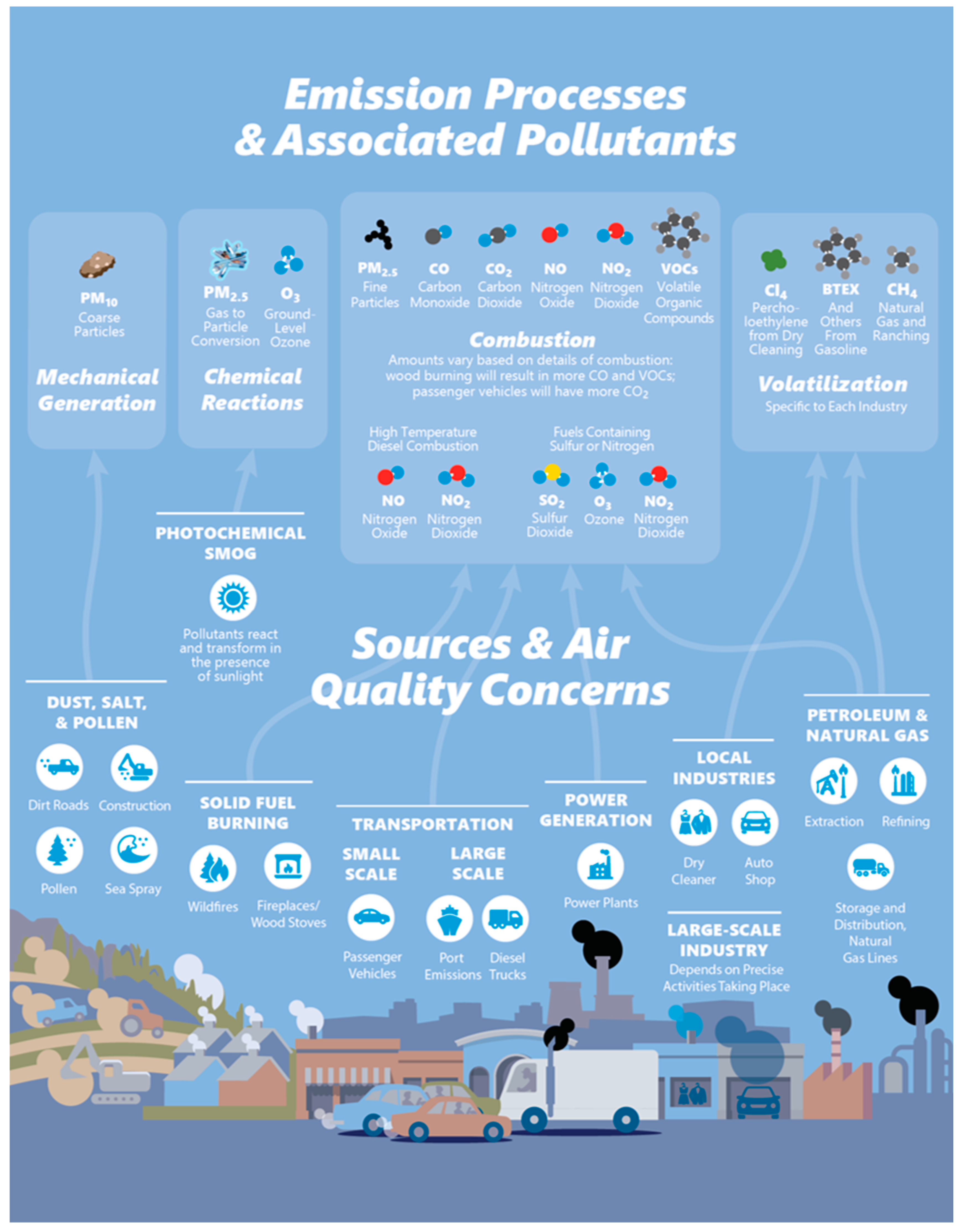

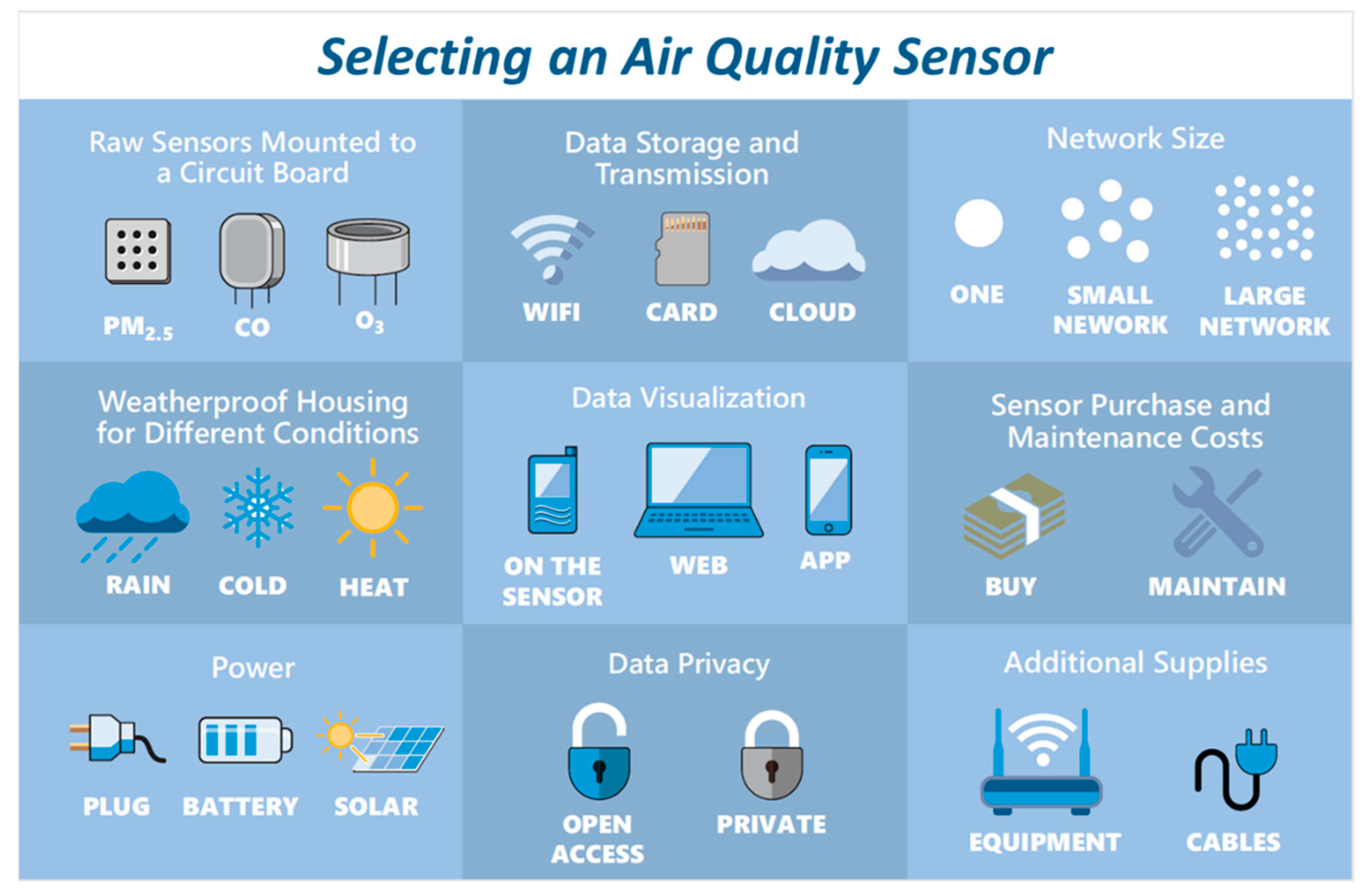

1.1. Current Understanding of Air Quality Sensing Technology

1.2. Use of Air Quality Sensors by Members of the Public

1.3. Previous Community or Citizen Science Air Monitoring Projects

1.4. Science to Achieve Results

2. Materials and Methods

2.1. Project Overview

2.2. Participating Communities

2.3. Engagement with Communities and Information Collected

3. Results and Discussion

3.1. Planning and Preparing for a Project

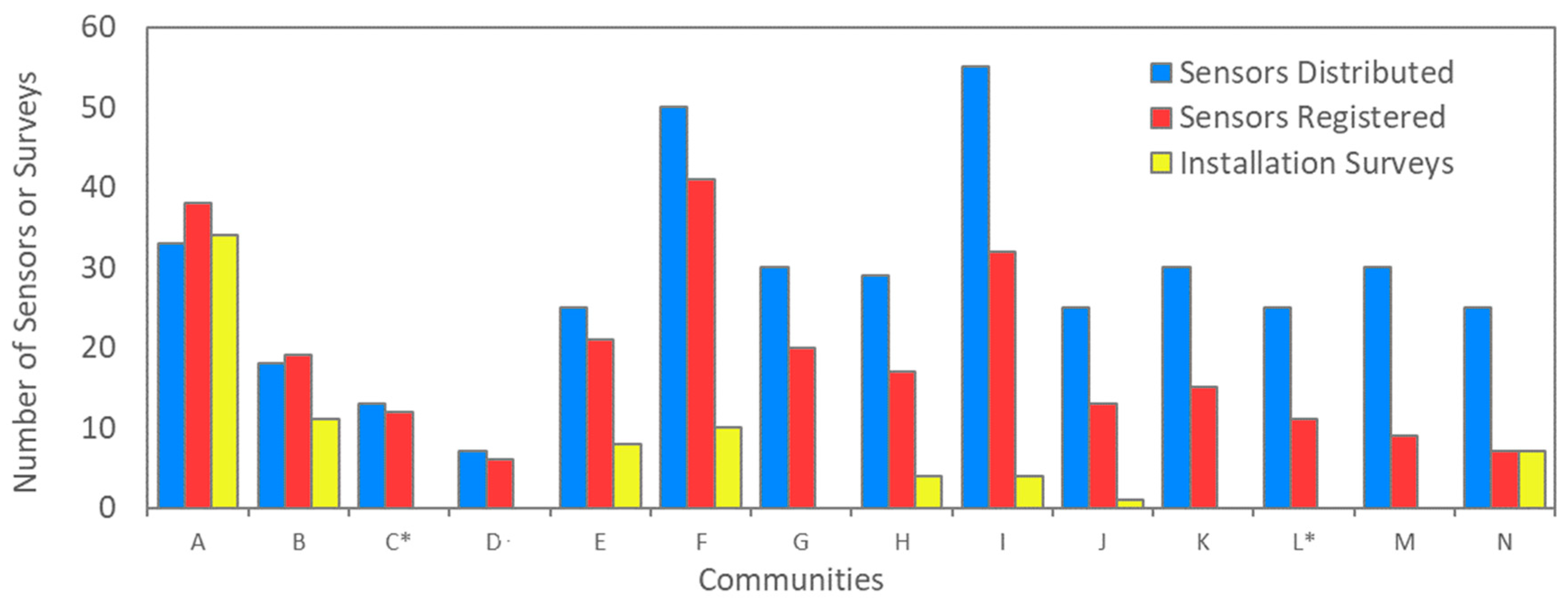

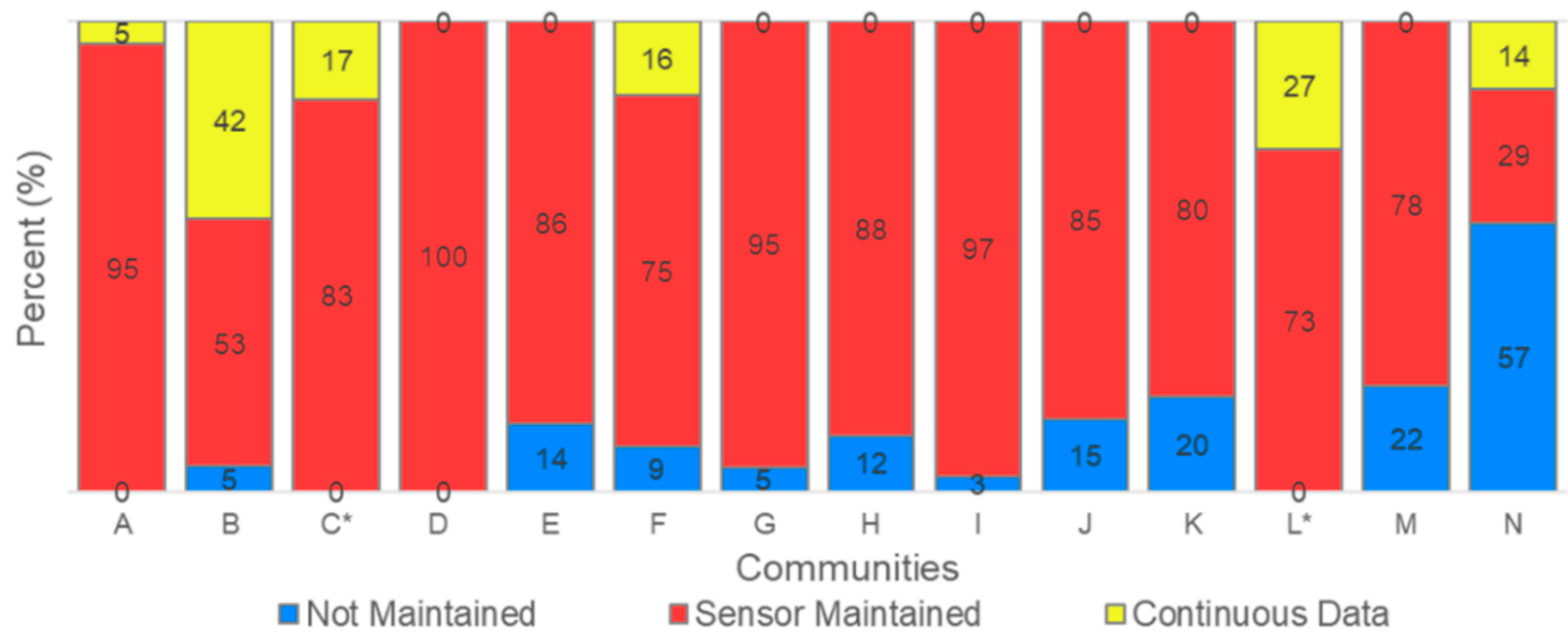

3.2. Deploying and Maintaining Sensors

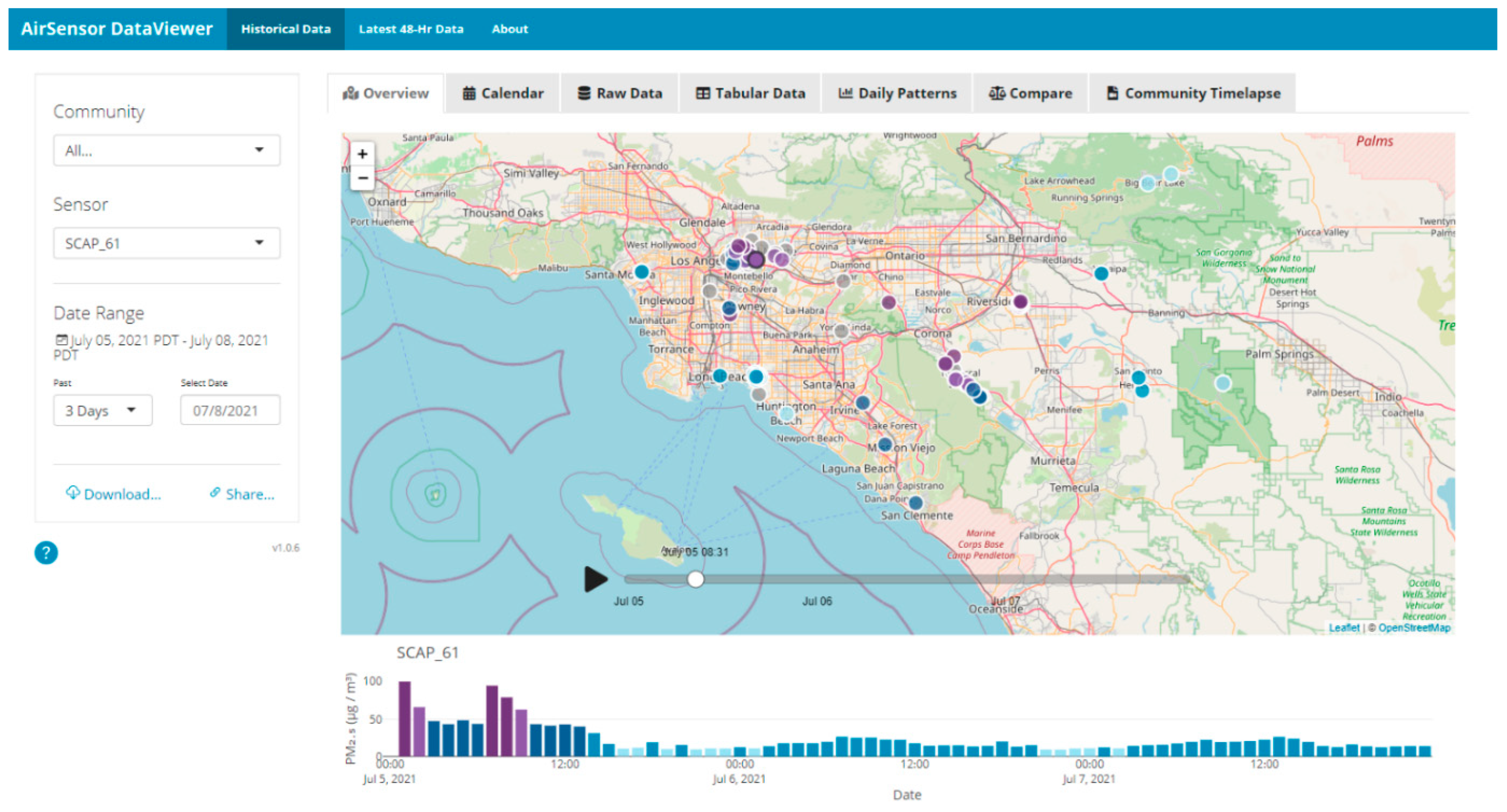

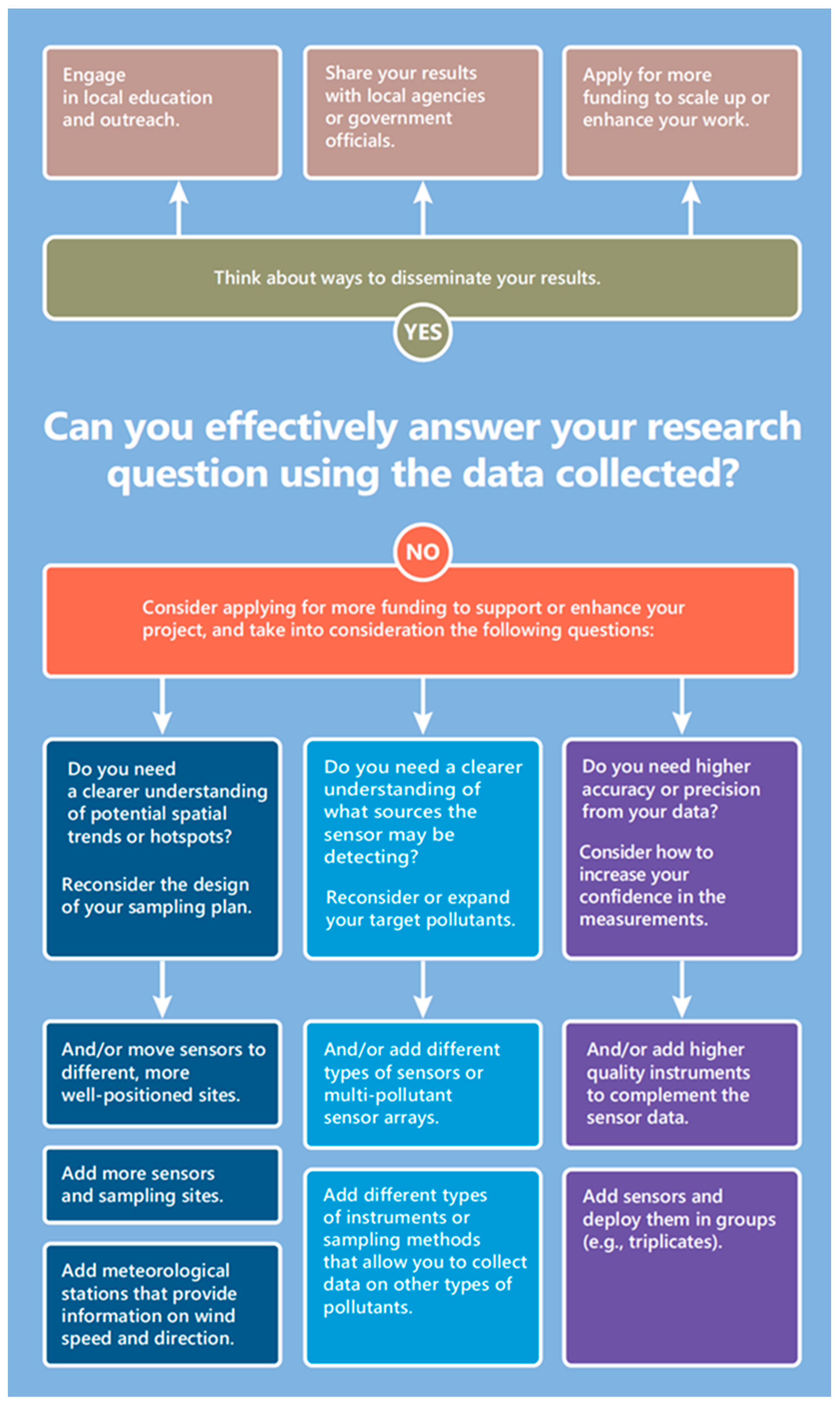

3.3. Data Access, Analysis, and Communication

3.4. On the Usefulness and Value of Sensors

3.5. Additional Strategies for Successful Projects

4. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Newman, G.; Wiggins, A.; Crall, A.; Graham, E.; Newman, S.; Crowston, K. The future of citizen science: Emerging technologies and shifting paradigms. Front. Ecol. Environ. 2012, 10, 298–304.

- Karagulian, F.; Barbiere, M.; Kotsev, A.; Spinelle, L.; Gerboles, M.; Lagler, F.; Redon, N.; Crunaire, S.; Borowiak, A. Review of the performance of low-cost sensors for air quality monitoring. Atmosphere 2019, 10, 506.

- Clements, A.L.; Griswold, W.G.; RS, A.; Johnston, J.E.; Herting, M.M.; Thorson, J.; Collier-Oxandale, A.; Hannigan, M. Low-Cost air quality monitoring tools: From research to practice (a workshop summary). Sensors 2017, 17, 2478.

- Castell, N.; Dauge, F.R.; Schneider, P.; Vogt, M.; Lerner, U.; Fishbain, B.; Broday, D.; Bartonova, A. Can commercial low-cost sensor platforms contribute to air quality monitoring and exposure estimates? Environ. Int. 2017, 99, 293–302.

- Piedrahita, R.; Xiang, Y.; Masson, M.; Ortega, J.; Collier, A.; Jiang, Y.; Li, K.; Dick, R.; Lv, Q.; Hannigan, M.; et al. The next generation of low-cost personal air quality sensors for quantitative exposure monitoring. Atmos. Meas. Tech. 2014, 7, 2425–2457.

- Feenstra, B.; Papapostolou, V.; Hasheminassab, S.; Zhang, H.; Der Boghossian, B.; Cocker, D.; Polidori, A. Performance evaluation of twelve low-cost PM2.5 sensors at an ambient air monitoring site. Atmos. Environ. 2019, 216, 116946.

- Collier-Oxandale, A.; Feenstra, B.; Papapostolou, V.; Zhang, H.; Kuang, M.; Der Boghossian, B.; Polidori, A. Field and laboratory performance evaluations of 28 gas-phase air quality sensors by the AQ-SPEC program. Atmos. Environ. 2019, 220, 117092.

- De Vito, S.; Esposito, E.; Castell, N.; Schneider, P.; Bartonova, A. On the robustness of field calibration for smart air quality monitors. Sens. Actuators B Chem. 2020, 310, 127869.

- Languille, B.; Gros, V.; Bonnaire, N.; Pommier, C.; Honore, C.; Debert, C.; Gauvin, L.; Annesi-Maesano, I.; Chaix, B.; Zeitouni, K. A methodology for the characterization of portable sensors for air quality measure with the goal of deployment in citizen science. Sci. Total Environ. 2020, 708, 134698.

- Malings, C.; Tanzer, R.; Hauryliuk, A.; Kumar, S.P.N.; Zimmerman, N.; Kara, L.B.; Presto, A.A.; Subramanian, R. Development of a general calibration model and long-term performance evaluation of low-cost sensors for air pollutant gas monitoring. Atmos. Meas. Tech. 2018, 12, 903–920.

- Masson, N.; Piedrahita, R.; Hannigan, M. Quantification method for electrolytic sensors in long-term monitoring of ambient air quality. Sensors 2015, 15, 27283–27302.

- Vikram, S.; Collier-Oxandale, A.; Ostertag, M.; Menarini, M.; Chermak, C.; Dasgupta, S.; Rosing, T.; Hannigan, M.; Griswold, W.G. Evaluating and improving the reliability of gas-phase sensor system calibrations across new locations for ambient measurements and personal exposure monitoring. Atmos. Meas. Tech. 2019, 12, 4211–4239.

- Jerrett, M.; Donaire-Gonzalez, D.; Popoola, O.; Jones, R.; Cohen, R.C.; Almanza, E.; Nazelle, A.D.; Mead, I.; Carrasco-turigas, G.; Cole-hunter, T.; et al. Validating novel air pollution sensors to improve exposure estimates for epidemiological analyses and citizen science. Environ. Res. 2017, 158, 286–294.

- Thoma, E.D.; Brantley, H.L.; Oliver, K.D.; Whitaker, D.A.; Mukerjee, S.; Mitchell, B.; Wu, T.; Squier, B.; Escobar, E.; Tamira, A.; et al. South Philadelphia passive sampler and sensor study. J. Air Waste Manag. Assoc. 2016, 66, 959–970.

- Sadighi, K.; Coffey, E.; Polidori, A.; Feenstra, B.; Lv, Q.; Henze, D.K.; Hannigan, M. Intra-urban spatial variability of surface ozone in Riverside, CA: Viability and validation of low-cost sensors. Atmos. Meas. Tech. 2018, 11, 1777–1792.

- Morawska, L.; Thai, P.K.; Liu, X.; Asumadu-Sakyi, A.; Ayoko, G.; Bartonova, A.; Bedini, A.; Chai, F.; Christensen, B.; Dunbabin, M.; et al. Applications of low-cost sensing technologies for air quality monitoring and exposure assessment: How far have they gone? Environ. Int. 2018, 116, 286–299.

- Williams, R.; Long, R.; Beaver, M.; Kaufman, A.; Zeiger, F.; Heimbinder, M.; Heng, I.; College, M.; Yap, R.; Acharya, B.R.; et al. Sensor Evaluation Report; EPA/600/R-14/143 (NTIS PB2015-100611); U.S. Environmental Protection Agency: Washington, DC, USA, 2014.

- Spinelle, L.; Aleixandre, M.; Gerboles, M. Protocol of Evaluation and Calibration of Low-Cost Gas Sensors for the Monitoring of Air Pollution. Available online: https://publications.jrc.ec.europa.eu/repository/handle/JRC83791 (accessed on 15 February 2022).

- Papapostolou, V.; Zhang, H.; Feenstra, B.J.; Polidori, A. Development of an environmental chamber for evaluating the performance of low-cost air quality sensors under controlled conditions. Atmos. Environ. 2017, 171, 82–90.

- English, P.B.; Richardson, M.J.; Garzon-Galvis, C. From crowdsourcing to extreme citizen science: Participatory research for environmental health. Annu. Rev. Public Health 2018, 39, 335–350.

- Hubbell, B.J.; Kaufman, A.; Rivers, L.; Schulte, K.; Hagler, G.; Clougherty, J.; Cascio, W.; Costa, D. Understanding social and behavioral drivers and impacts of air quality sensor use. Sci. Total Environ. 2018, 621, 886–894.

- Miskell, G.; Salmond, J.; Williams, D.E. Low-cost sensors and crowd-sourced data: Observations of siting impacts on a network of air-quality instruments. Sci. Total Environ. 2017, 575, 1119–1129.

- Collier-Oxandale, A.; Coffey, E.; Thorson, J.; Johnston, J.; Hannigan, M. Comparing building and neighborhood-scale variability of CO2 and O3 to inform deployment considerations for low-cost sensor system use. Sensors 2018, 18, 1349.

- Coulson, S.; Woods, M.; Scott, M.; Hemment, D. Making sense: Empowering participatory sensing with transformation design. Des. J. 2018, 21, 813–833.

- Robinson, J.A.; Kocman, D.; Horvat, M.; Bartonova, A. End-user feedback on a low-cost portable air quality sensor system—Are we there yet? Sensors 2018, 18, 3768.

- Moore, J.; Goffin, P.; Meyer, M.; Lundrigan, P.; Patwari, N.; Sward, K.; Wiese, J. Managing in-home environments through sensing, annotating, and visualizing air quality data. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2018, 2, 1–28.

- Golumbic, Y.N.; Fishbain, B.; Baram-Tsabari, A. User centered design of a citizen science air-quality monitoring project. Int. J. Sci. Educ. Part B 2019, 9, 195–213.

- Wesseling, J.; Ruiter, H.D.; Blokhuis, D.; Weijers, E.; Volten, H.; Vonk, J.; Gast, L.; Voogt, M.; Zandaveld, P.; Ratingen, S.V.; et al. Development and implementation of a platform for public information on air quality, sensor measurements, and citizen science. Atmosphere 2019, 10, 445.

- Schaefer, T.; Kieslinger, B.; Fabian, C.M. Citizen-based air quality monitoring: The impact on individual citizen scientists and how to leverage the benefits to affect whole regions. Citiz. Sci. Theory Pract. 2020, 5, 1.

- Israel, B.A.; Schulz, A.J.; Parker, E.A.; Becker, A.B. Review of community-based research: Assessing partnership approaches to improve public health. Annu. Rev. Public Health 1998, 19, 173–202.

- Davis, L.F.; Ramirez-Andreotta, M.D.; Buxner, S. Engaging diverse citizen scientists for environmental health: Recommendations from participants and promotoras. Citiz. Sci. Theory Pract. 2020, 5, 1–27.

- Feenstra, B.; Papapostolou, V.; Collier-Oxandale, A.; Cocker, D.; Polidori, A. The AirSensor open-source R-package and DataViewer web application for interpreting community data collected by low-cost sensor networks. Environ. Model. Softw. 2020, 134, 104832.

- Kadiyala, A.; Kumar, A. Applications of python to evaluate environmental data science problems. Environ. Prog. Sustain. Energy 2017, 36, 1580–1586.

- Kadiyala, A.; Kumar, A. Applications of R to evaluate environmental data science problems. Environ. Prog. Sustain. Energy 2017, 36, 1358–1364.

- Harrison, J.L. Parsing “participation” in action research: Navigating the challenges of lay involvement in technically complex participatory science projects. Soc. Nat. Resour. 2011, 24, 702–716.

- Kinney, P.L.; Aggarwal, M.; Northridge, M.E.; Janssen, N.A.; Shepard, P. Airborne concentrations of PM2.5 and diesel exhaust particles on Harlem sidewalks: A community-based pilot study. Environ. Health Perspect. 2000, 108, 213–218.

- Wing, S.; Horton, R.A.; Marshall, S.W.; Thu, K.; Tajik, M.; Schinasi, L.; Schiffman, S.S. Air pollution and odor in communities near industrial swine operations. Environ. Health Perspect. 2008, 116, 1362–1368.

- Ottinger, G. Buckets of resistance: Standards and the effectiveness of citizen science. Sci. Technol. Hum. Values 2010, 35, 244–270.

- Commodore, A.; Wilson, S.; Muhammad, O.; Svendsen, E.; Pearce, J. Community-based participatory research for the study of air pollution: A review of motivations, approaches, and outcomes. Environ. Monit. Assess. 2017, 189, 378.

- Johnston, J.E.; Juarez, Z.; Navarro, S.; Hernandez, A.; Gutschow, W. Youth engaged participatory air monitoring: A ‘day in the life’ in urban environmental justice communities. Int. J. Environ. Res. Public Health 2020, 17, 93.

- Wong-Parodi, G.; Dias, M.B.; Taylor, M. Effect of using an indoor air quality sensor on perceptions of and behaviors toward air pollution (Pittsburgh Empowerment Library Study): Online survey and interviews. JMIR mHealth uHealth 2018, 6, e8273.

- English, P.; Amato, H.; Bejarano, E.; Carvlin, G.; Lugo, H.; Jerrett, M.; King, G.; Madrigal, D.; Metzer, D.; Northcross, A.; et al. Performance of a low-cost sensor community air monitoring network in Imperial County, CA. Sensors 2020, 20, 3031.

- Matz, J.; Wylie, S.; Kriesky, J. Participatory air monitoring in the midst of uncertainty: Residents’ experiences with the Speck sensor. Engag. Sci. Technol. Soc. 2017, 3, 464–498.

- U.S. Environmental Protection Agency. Air Sensor Toolbox. Available online: https://www.epa.gov/air-sensor-toolbox (accessed on 1 February 2022).

- Williams, R.; Kilaru, V.; Snyder, E.; Kaufman, A.; Rutter, A.; Dye, T.; Russel, A.; Hafner, H. Air Sensor Guidebook; EPA/600/R-14/159 (NTIS PB2015-100610); U.S. Environmental Protection Agency: Washington, DC, USA, 2014.

- Tracking California; Comite Civico del Valle; University of Washington. Guidebook for Developing a Community Air Monitoring Network: Steps, Lessons, and Recommendations from the Imperial County Community Air Monitoring Project; Tracking California: Richmond, CA, USA, 2018; Available online: https://trackingcalifornia.org/cms/file/imperial-air-project/guidebook (accessed on 17 March 2022).

- Ilie, A.M.C.; Eisl, H.M. Air Quality Citizen Science Research Project in NYC—Toolkit and Case Studies; Barry Commoner Center for Health and the Environment, Queens College: New York, NY, USA, 2020.

- Kaufman, A.; Williams, R.; Barzyk, T.; Greenberg, M.; O’Shea, M.; Sheridan, P.; Hoang, A.; Ash, C.; Teitz, A.; Mustafa, M.; et al. A Citizen Science and Government Collaboration: Developing Tools to Facilitate Community Air Monitoring. Environ. Justice 2017, 10, 51–61.

- U.S. Environmental Protection Agency. Engage, Educate, and Empower California Communities on the Use and Applications of Low-Cost Air Monitoring Sensors. Available online: https://cfpub.epa.gov/ncer_abstracts/index.cfm/fuseaction/display.abstractDetail/abstract_id/10742/report/0 (accessed on 17 March 2022).

- O’Fallon, L.R.; Dearry, A. Community-based participatory research as a tool to advance environmental health sciences. Environ. Health Perspect. 2002, 110, 155–159.

- Collier-Oxandale, A.; Feenstra, B.; Papapostolou, V.; Polidori, A. AirSensor v1.0: Enhancements to the open-source R package to enable deep understanding of the long-term performance and reliability of PurpleAir sensors. Environ. Model. Softw. 2022, 148, 105256.

- Schulte, N.; Li, X.; Ghosh, J.K.; Fine, P.M.; Epstein, S.A. Responsive high-resolution air quality index mapping using model, regulatory, and sensor data in real-time. Environ. Res. Lett. 2020, 15, 1040a7.

- Barkjohn, K.K.; Gantt, B.; Clements, A.L. Development and application of a United States-wide correction for PM2.5 data collected with the PurpleAir sensor. Atmos. Meas. Tech. 2021, 14, 4617–4637.

- U.S. Environmental Protection Agency. Handbook for Citizen Science Quality Assurance and Documentation—Version 1. 2019. Available online: https://www.epa.gov/sites/default/files/2019-03/documents/508_csqapphandbook_3_5_19_mmedits.pdf (accessed on 16 March 2022).

- Popoola, O.A.; Carruthers, D.; Lad, C.; Bright, V.B.; Mead, M.I.; Stettler, M.E.; Saffel, J.; Jones, R.L. Use of networks of low cost air quality sensors to quantify air quality in urban settings. Atmos. Environ. 2018, 194, 58–70.

- Connolly, R.E.; Yu, Q.; Wang, Z.; Chen, Y.H.; Liu, J.; Collier-Oxandale, A.; Papapostolou, V.; Polidori, A.; Zhu, Y. Long-term evaluation of a low-cost air sensor network for monitoring indoor and outdoor air quality at the community scale. Sci. Total Environ. 2022, 807, 150797.

- Collier-Oxandale, A.; Wong, N.; Navarro, S.; Johnston, J.; Hannigan, M. Using gas-phase air quality sensors to disentangle potential sources in a Los Angeles neighborhood. Atmos. Environ. 2020, 233, 117519.

- Field Evaluation—Purple Air (PA-II) PM Sensor, South Coast AQMD AQ-SPEC. Available online: http://www.aqmd.gov/docs/default-source/aq-spec/field-evaluations/purple-air-pa-ii---field-evaluation.pdf?sfvrsn=11 (accessed on 17 March 2022).

- California Office of Environmental Health Hazard Assessment. Maps and Data. Available online: https://oehha.ca.gov/calenviroscreen/maps-data (accessed on 8 April 2021).

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Collier-Oxandale, A.; Papapostolou, V.; Feenstra, B.; Der Boghossian, B.; Polidori, A. Towards the Development of a Sensor Educational Toolkit to Support Community and Citizen Science. Sensors 2022, 22, 2543. https://doi.org/10.3390/s22072543
