A RUL Estimation System from Clustered Run-to-Failure Degradation Signals

Abstract

1. Introduction

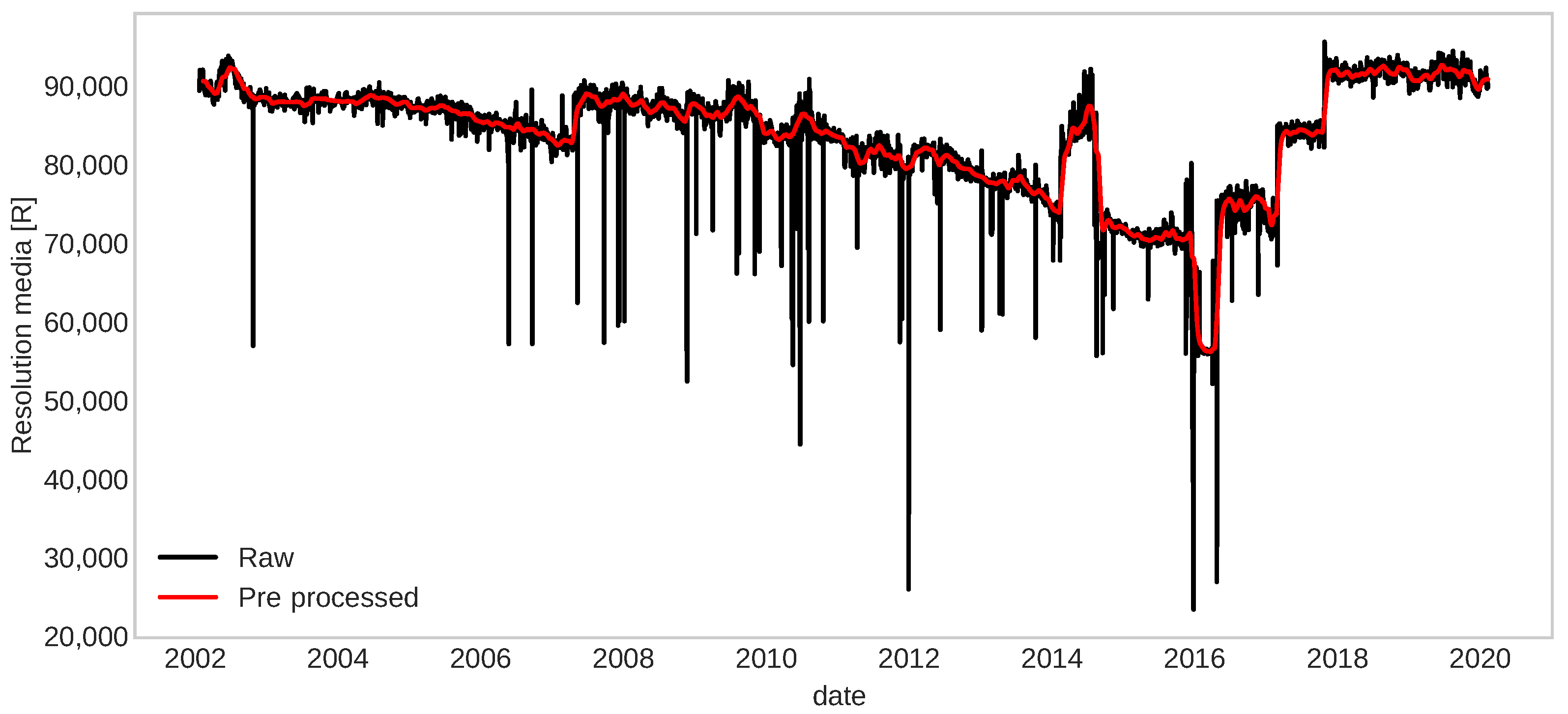

- We improved the spike (outlier) cleaning and time-series smoothing in the data pre-processing step of the fault detection framework developed in [15], reducing the remaining noise level while maintaining relevant characteristics of the signals such as trends and stationarity.

- We show that the fault detection framework in [15], together with our pre-processing method, becomes more robust and can be transferred to another problem with similar degradation, even when its statistical characteristics differ.

- We built a strategy based on clustering run-to-failure critical segments to define an appropriate failure threshold, which improves the RUL estimation. Moreover, using this strategy, we predict the RUL in another problem with similar degradation.

2. Background

2.1. Fault Detection

2.2. Prognostic

2.3. Recurrent Neural Networks (RNNs)

2.4. Prophet Model

3. Methodology

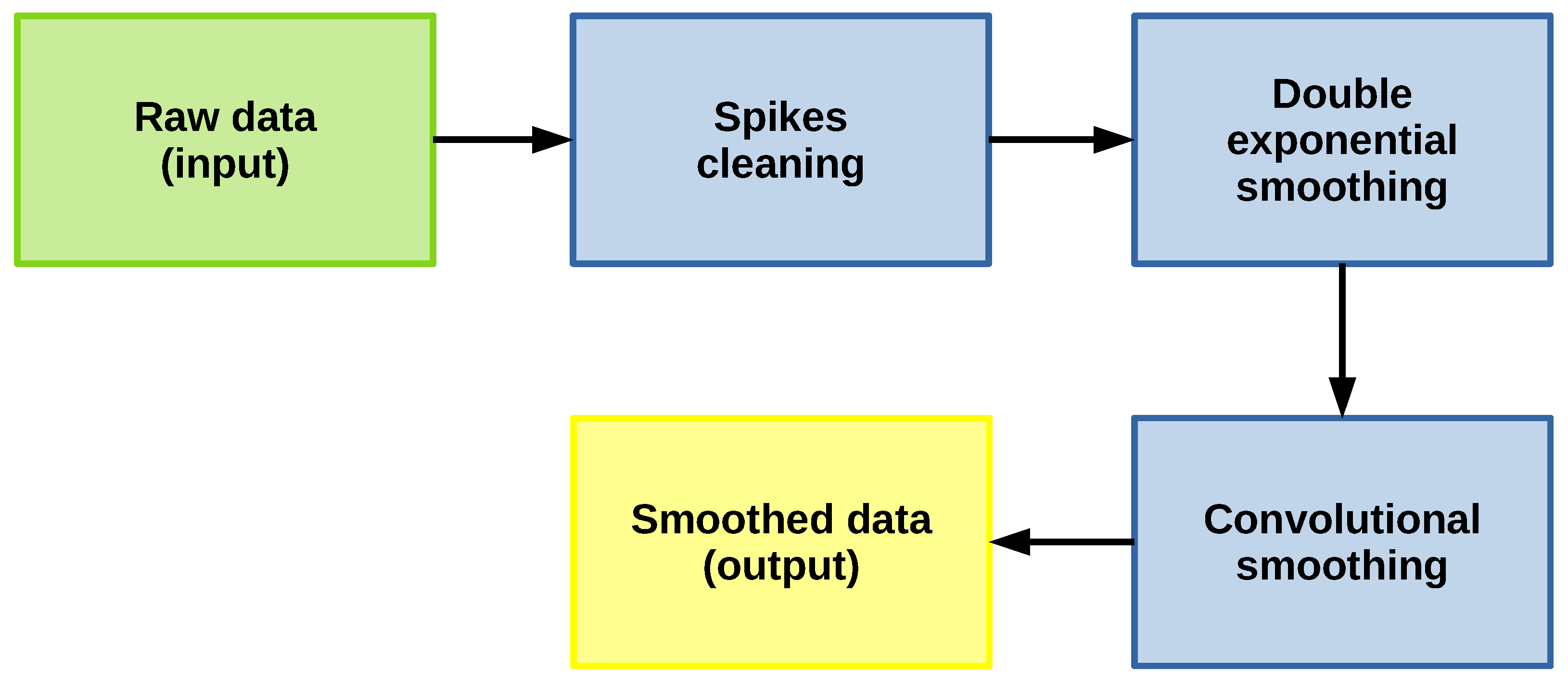

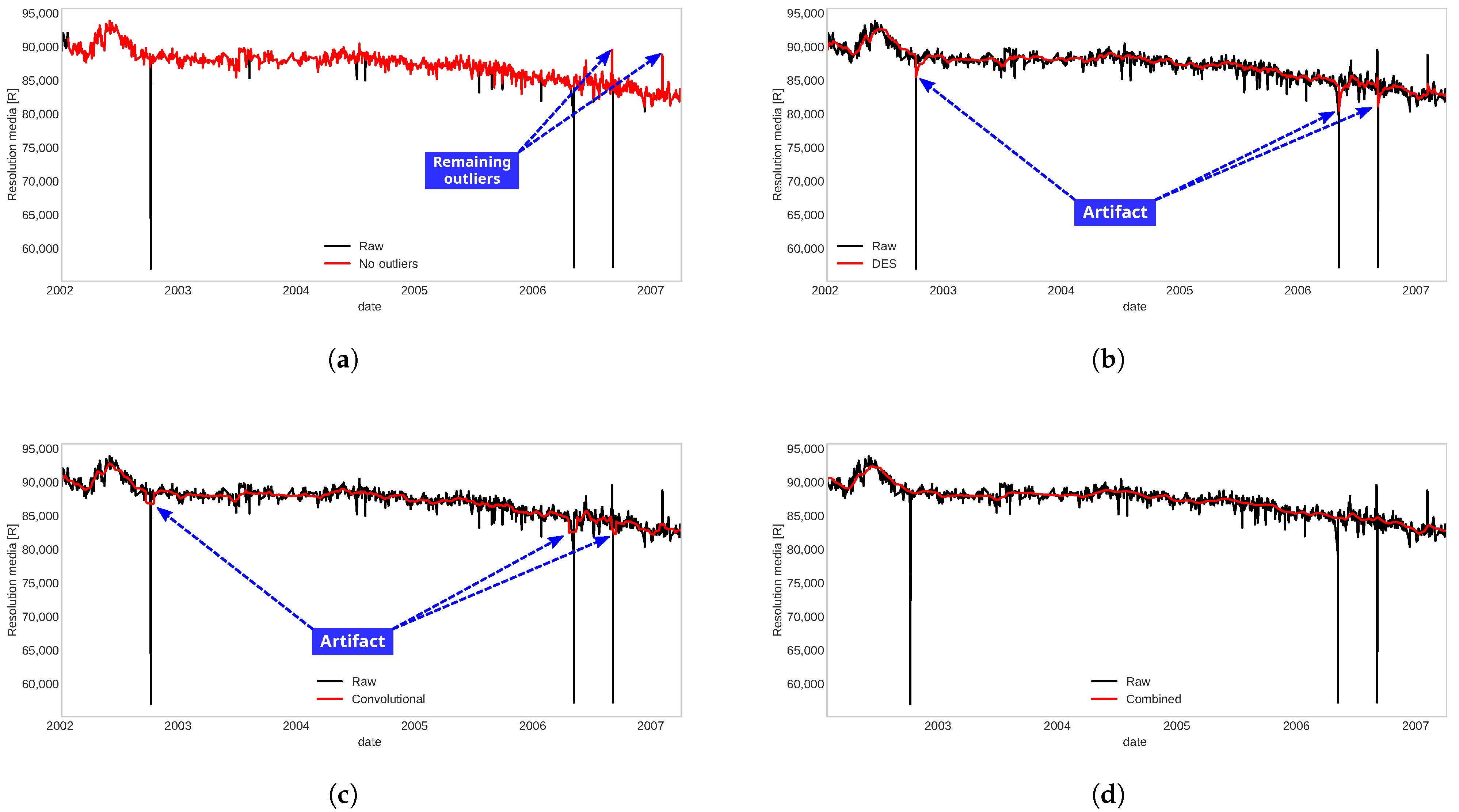

3.1. Pre-Processing Data

- Spikes cleaning: possible outliers and spike points are removed by comparing each value of the time series with the values in its preceding time window. A time point is flagged as anomalous if its change from the preceding average or median is anomalously large. An advantage of this outlier-reduction strategy is that it accounts for the local dynamics of the signal through time windows. It therefore flags as outliers the samples that fall outside the local range. It also reduces the number of normal samples wrongly flagged as outliers, which can happen with traditional methods that rely on the global mean and standard deviation. This method is implemented in the ADTK library [59].

- Double exponential smoothing: this filter [26,60,61,62,63,64] is commonly used for time-series forecasting, but it can also be used for noise reduction. It is particularly useful for smoothing a time series while preserving its trend and losing almost no information about its dynamics. The model is also simple to implement, since it depends on two main parameters: the smoothing factors for the level and for the trend. For more details, see [15].

- Convolutional smoothing: this consists of convolving the signal with a fixed-size window, a computation that can also be carried out through the Fourier transform, to smooth the signal while maintaining its trend. In other words, this method applies a centered weighted moving average to the signal, reducing short-term fluctuations and highlighting long-term trends. It is implemented in the TSmoothie library [65].
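The three pre-processing steps above can be sketched with NumPy alone. This is a minimal illustration under stated assumptions, not the ADTK or TSmoothie implementations used in the paper:

- The spike detector compares each point with the median of its preceding window and flags IQR outliers of that change. The `window` and `c` defaults are hypothetical.
- The double exponential smoothing is Holt's linear method, with level and trend factors `alpha` and `beta`.
- The convolutional smoother is a centered box-window moving average with mirrored edges.

```python
import numpy as np

def clean_spikes(x, window=10, c=3.0):
    """Replace points whose jump from the median of the preceding window is an IQR outlier.

    Sketch in the spirit of a persist-style detector (not the ADTK code).
    Assumes len(x) > window.
    """
    x = np.asarray(x, dtype=float).copy()
    n = len(x)
    diffs = np.full(n, np.nan)
    medians = np.full(n, np.nan)
    for t in range(window, n):
        # change of x[t] relative to the median of its preceding window (original values)
        medians[t] = np.median(x[t - window:t])
        diffs[t] = x[t] - medians[t]
    valid = ~np.isnan(diffs)
    q1, q3 = np.percentile(diffs[valid], [25, 75])
    iqr = q3 - q1
    spikes = valid & ((diffs < q1 - c * iqr) | (diffs > q3 + c * iqr))
    # replace each spike by the local median it was compared against
    x[spikes] = medians[spikes]
    return x, spikes

def double_exponential_smoothing(x, alpha=0.3, beta=0.1):
    """Holt's linear (double) exponential smoothing; returns the smoothed level."""
    x = np.asarray(x, dtype=float)
    level, trend = x[0], x[1] - x[0]
    out = np.empty_like(x)
    out[0] = level
    for t in range(1, len(x)):
        prev_level = level
        level = alpha * x[t] + (1 - alpha) * (level + trend)
        trend = beta * (level - prev_level) + (1 - beta) * trend
        out[t] = level
    return out

def convolution_smoothing(x, window_len=11):
    """Centered moving average: convolve with a normalized box window."""
    x = np.asarray(x, dtype=float)
    if window_len % 2 == 0:
        raise ValueError("window_len must be odd for a centered window")
    kernel = np.ones(window_len) / window_len
    half = window_len // 2
    padded = np.pad(x, half, mode="reflect")  # mirror edges to limit boundary bias
    return np.convolve(padded, kernel, mode="valid")  # same length as x
```

Suppose the steps are chained in the order listed. A raw signal `raw` would then be processed as `convolution_smoothing(double_exponential_smoothing(clean_spikes(raw)[0]))`. The window lengths and smoothing factors would need tuning for each signal.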

3.2. Run-to-Failures Critical Segments Clustering

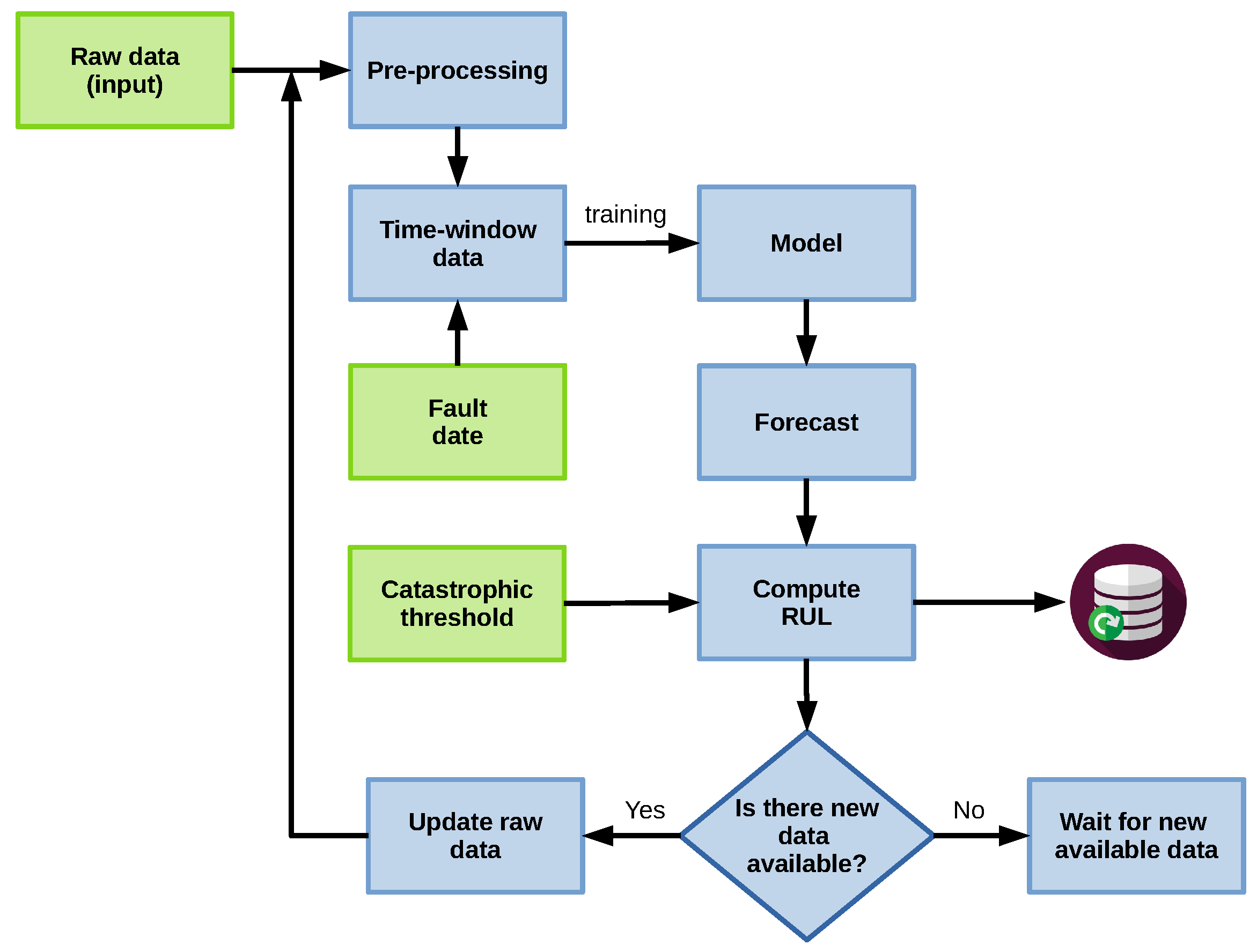

3.3. Prognostic Method

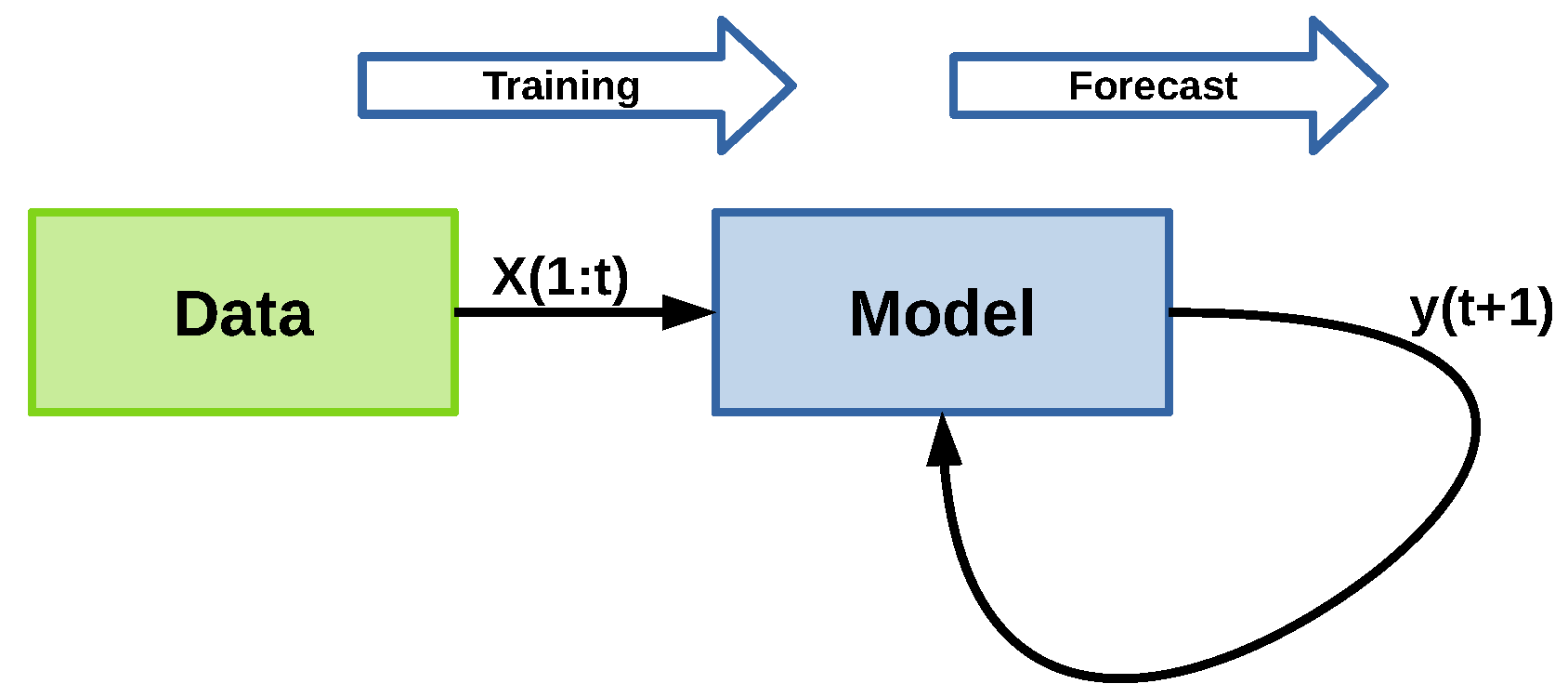

3.3.1. Strategy A

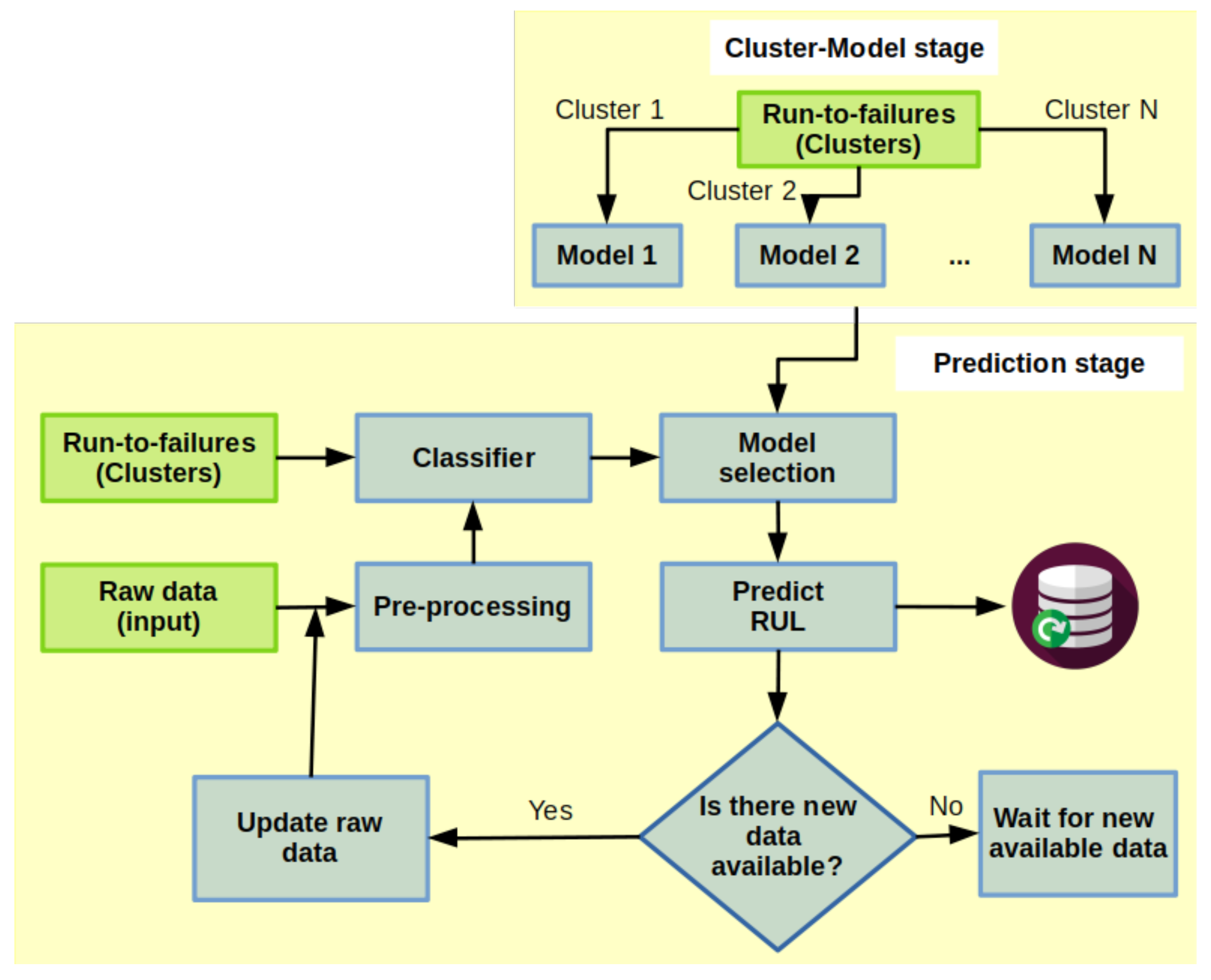

3.3.2. Strategy B

- Cluster-Model stage: it uses the clustering described in Section 3.2 to fit one regression model per cluster. The training data of a cluster consist of its critical signals, each limited by the cluster's failure threshold, together with their residual RUL. That is, for each critical signal $S$ of length $L_S$ in cluster $C$, each sample $s_t$ of $S$ is assigned the residual RUL $\mathrm{RUL}(s_t) = L_S - (t - t_0)$. Here $L_S$ is the length of $S$, and $t_0$ and $t$ are the time indices of the first sample of $S$ and of $s_t$, respectively.

- Prediction stage: it consists mainly of predicting the RUL of a component whose signal has been diagnosed as faulty, meaning that degradation has started. In this step, a segment of the signal after the fault detection is taken, pre-processed, and submitted to a classifier that identifies the cluster it belongs to. The corresponding regression model, already fitted in the Cluster-Model stage, is then selected to predict the RUL. This procedure is executed whenever new samples become available. The classifier matches the segment against all run-to-failure critical segments using Minimum Variance Matching (MVM) [68,69,70]. MVM is a popular method for the elastic matching of two sequences of different lengths: it maps the search for the best-matching subsequence to a shortest-path problem in a directed acyclic graph, whose solution gives the minimum distance. The cluster is then assigned by a voting criterion: the segment goes to the cluster with the largest number of signals close to it. A flow chart of this prognostic process is shown in Figure 7.
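A sketch of the Prediction-stage classifier follows, with two assumptions:

- MVM is taken as the sum of squared differences between the segment and the best order-preserving subsequence of a critical segment. This is computed by dynamic programming, which is equivalent to a shortest path in the matching DAG.
- The voting criterion is read as a majority vote among the `k` nearest critical segments.

The names `mvm_distance`, `classify_segment`, and the parameter `k` are hypothetical; this is not the authors' code.

```python
from collections import Counter

import numpy as np

def mvm_distance(query, target):
    """MVM cost of `query` against the best subsequence of `target`.

    Each query element is matched, in order, to a distinct target element;
    target elements may be skipped. Cost = sum of squared differences.
    """
    q = np.asarray(query, dtype=float)
    t = np.asarray(target, dtype=float)
    m, n = len(q), len(t)
    if m == 0 or m > n:
        return np.inf  # the query must not be longer than the target
    # cost[j]: best cost of matching q[0..i] with q[i] mapped to t[j]
    cost = (q[0] - t) ** 2
    cost[n - m + 1:] = np.inf  # leave room for the remaining query elements
    for i in range(1, m):
        new = np.full(n, np.inf)
        best_prev = np.inf
        for j in range(i, n - m + i + 1):
            best_prev = min(best_prev, cost[j - 1])  # best predecessor k < j
            new[j] = best_prev + (q[i] - t[j]) ** 2
        cost = new
    return float(cost.min())

def classify_segment(segment, library, k=3):
    """Majority vote among the k critical segments closest to `segment` under MVM.

    `library` is a list of (cluster_id, critical_segment) pairs.
    """
    scored = sorted(((mvm_distance(segment, sig), cid) for cid, sig in library),
                    key=lambda pair: pair[0])
    votes = Counter(cid for _, cid in scored[:k])
    return votes.most_common(1)[0][0]
```

Ties in the vote go to the cluster encountered first, that is, the one holding the nearest segment. This is because `Counter.most_common` keeps first-encountered order for equal counts.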

4. Application Setting

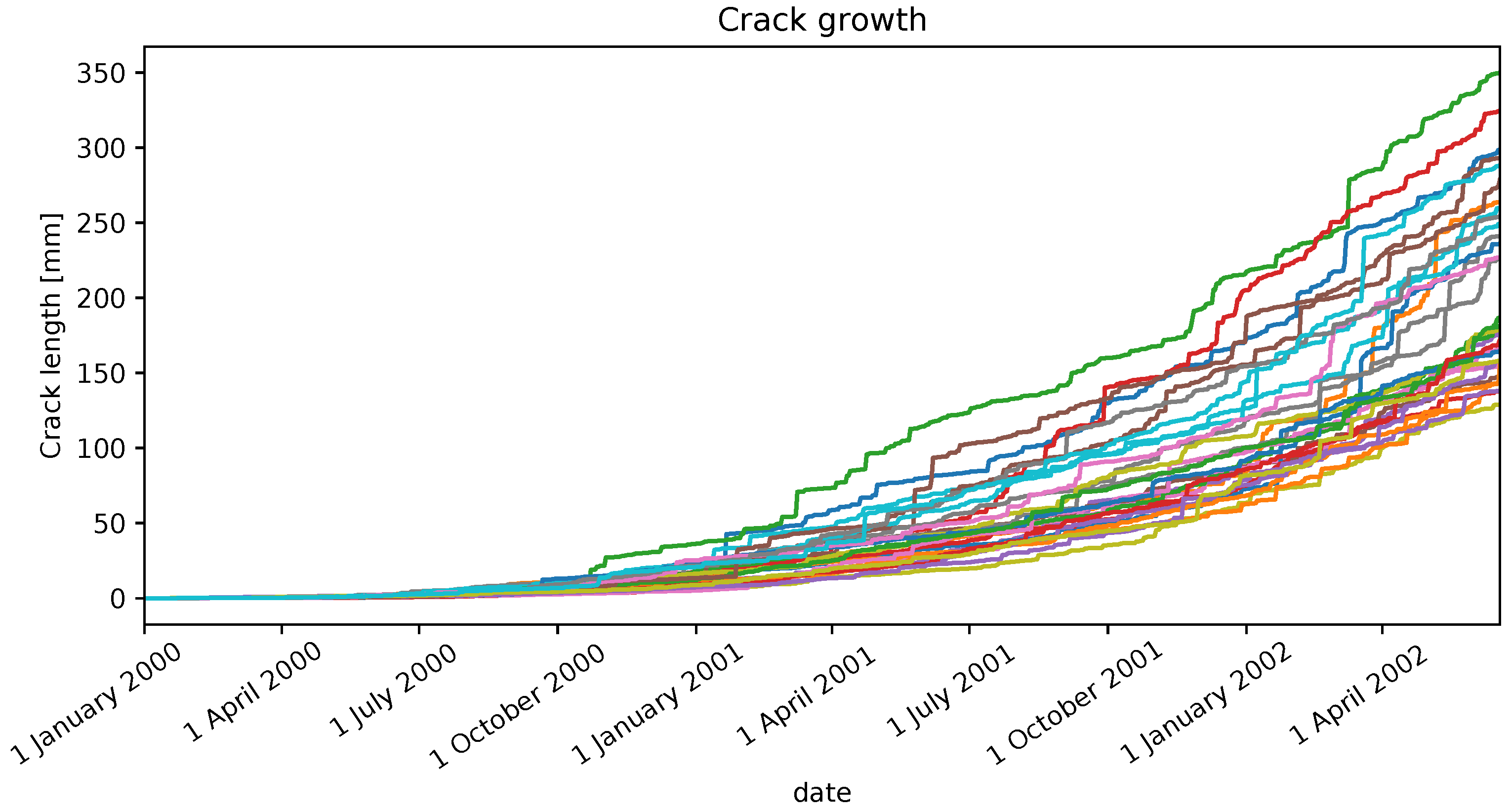

4.1. Crack Growth

4.1.1. Problem Description

4.1.2. Prognostic

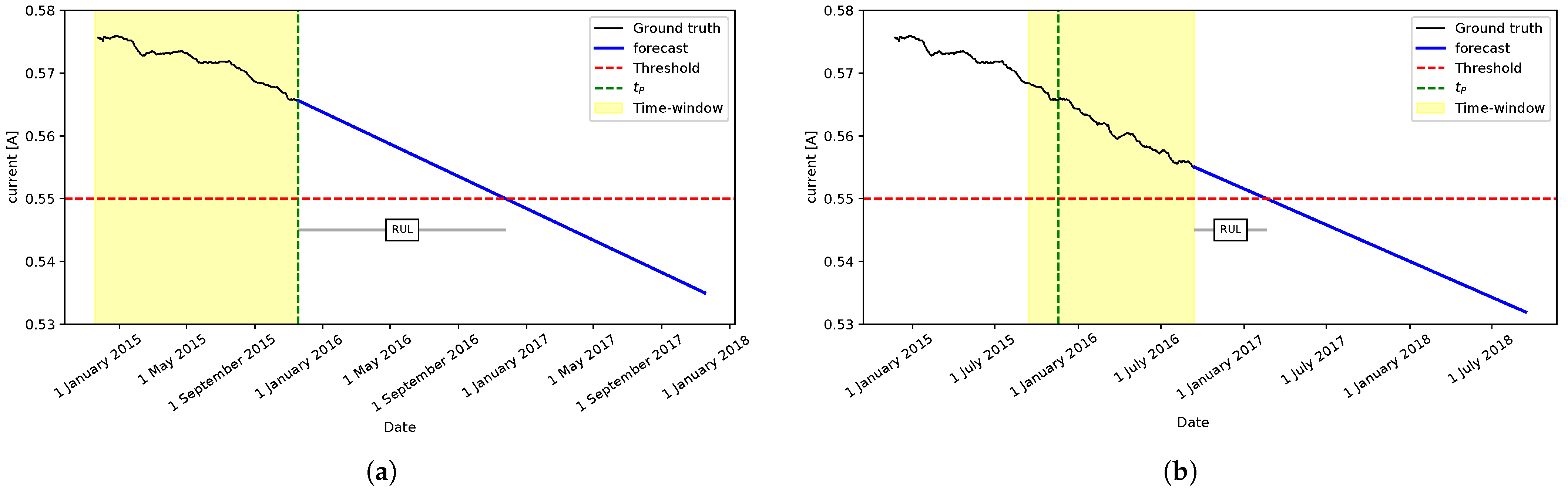

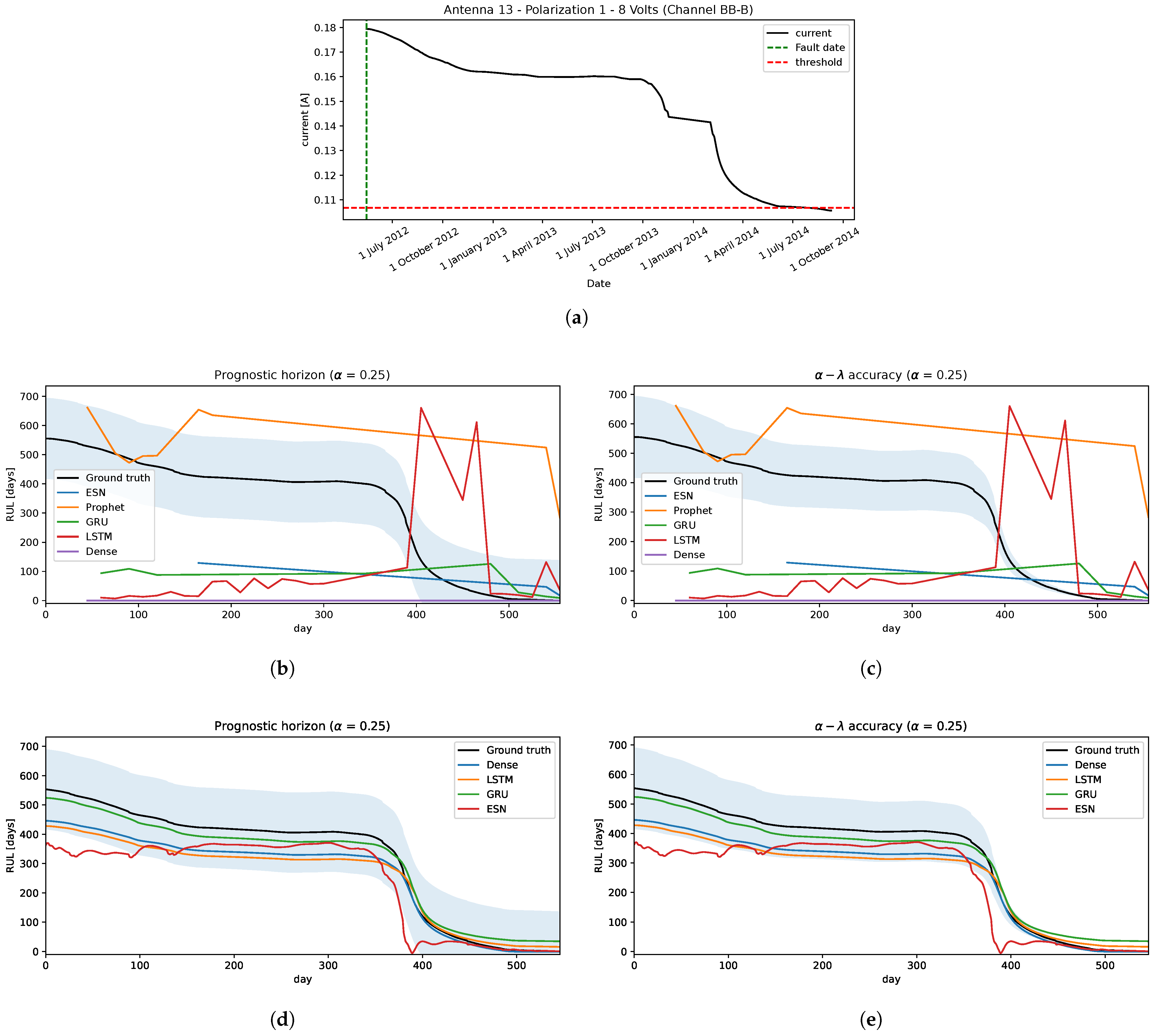

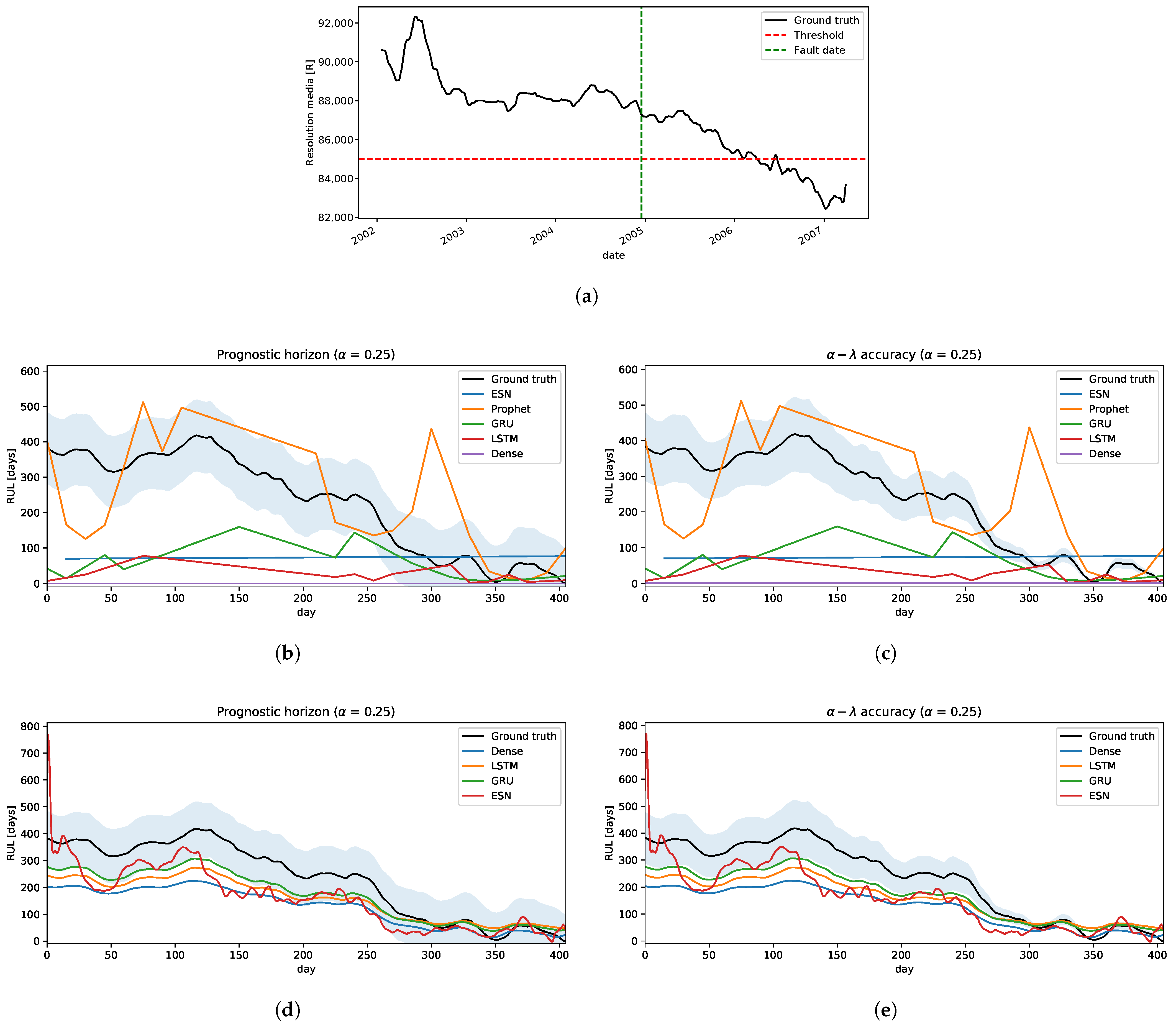

- Strategy A: following the methodology in Section 3.3.1, we estimate the RUL with a 1-year time window, shifted by 15 days at each iteration, and a 2-year forecast. The results are shown in Figure 9. The prognostic horizon (Figure 9b) shows that all models underestimate the RUL, with a few exceptions such as the Dense neural network model. The neural network models estimate the RUL poorly, and most of their estimates fall outside the confidence interval. Only the Prophet model is relatively close to the ground-truth RUL. Regarding accuracy, only Prophet has a segment close to the ground truth, but it then falls outside the confidence interval, underestimating the RUL.

- Strategy B: when applying the technique of Section 3.3.2 to this problem, some steps can be simplified. Since all the degradation trajectories are similar, we can assume a single cluster, to which the classifier always assigns the segment. Hence, the Cluster-Model stage has only one model, which is used to predict the RUL. The scheme thus reduces to a single regression model, fitted on all historical critical-segment trajectories, each limited by the failure threshold and labeled with its residual RUL. We use 100 run-to-failure trajectories generated from Equation (2) to fit the model. The results are shown in Figure 9d,e. In the prognostic horizon (Figure 9d), all models fall inside the confidence interval and get closer to the ground truth as they approach the EoL. Accuracy shows similar behavior (Figure 9e). Only occasionally do some methods, e.g., LSTM and GRU, leave the confidence interval and then return to it; this behavior is acceptable.
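The Strategy A settings above (a 1-year window shifted by 15 days, with a 2-year forecast) can be illustrated with the following loop. It is a hedged sketch with three assumptions:

- A least-squares linear trend (`np.polyfit`) stands in for Prophet and the neural networks.
- Time is measured in days.
- The degradation indicator grows toward the failure threshold, and the RUL is read off the first forecast sample that reaches it.

The function names are hypothetical.

```python
import numpy as np

def crossing_rul(t_now, future_times, forecast, threshold):
    """Time from t_now until the forecast first reaches the threshold (None if never)."""
    hits = np.flatnonzero(np.asarray(forecast) >= threshold)
    return None if hits.size == 0 else float(future_times[hits[0]] - t_now)

def sliding_window_rul(times, values, threshold, window=365, step=15, horizon=730):
    """Fit on the last `window` days, forecast `horizon` days, read the RUL at the
    first threshold crossing, then shift the window by `step` days and repeat.

    The linear-trend forecaster is a stand-in (hypothetical choice).
    """
    times = np.asarray(times, dtype=float)
    values = np.asarray(values, dtype=float)
    estimates = []
    t_end = times[0] + window
    while t_end <= times[-1]:
        in_window = (times > t_end - window) & (times <= t_end)
        slope, intercept = np.polyfit(times[in_window], values[in_window], 1)
        future = t_end + np.arange(1, horizon + 1, dtype=float)
        rul = crossing_rul(t_end, future, slope * future + intercept, threshold)
        estimates.append((t_end, rul))
        t_end += step
    return estimates
```

For an indicator that decreases toward its threshold, negating both the signal and the threshold reuses the same code.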

4.2. Intermediate Frequency Processor Degradation Problem

4.2.1. Problem Description

4.2.2. Prognostic

- Strategy A: the performance of this method is illustrated in Figure 10b,c. None of the models gives good RUL predictions, not even as the EoL approaches.

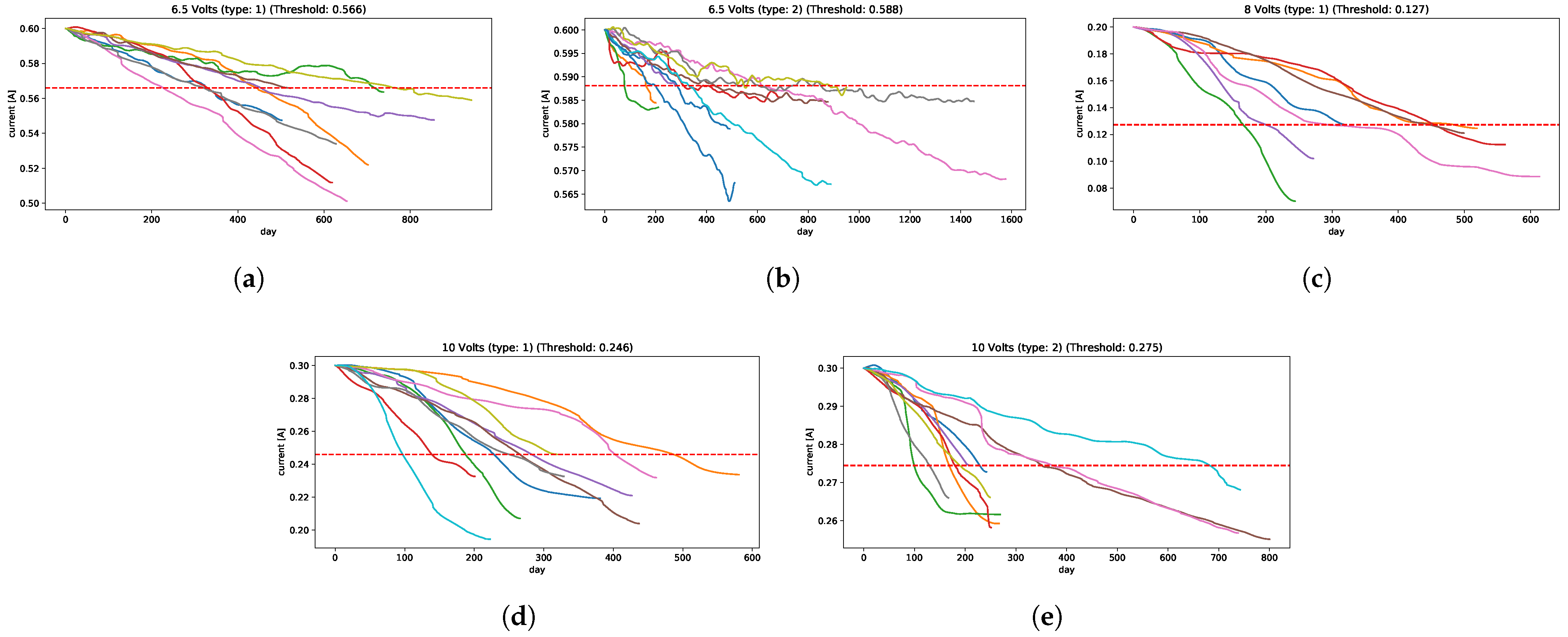

- Strategy B: the historical run-to-failure signals show different degradation levels in the current of each IFP voltage. In this application, the signals of each voltage are grouped into a few clusters so that the signals in each cluster have similar degradation levels. This makes it easier to define an appropriate failure threshold per cluster, as described in Section 3.2. The result is a total of 5 clusters for this problem: 2 clusters for 6.5 volts, 1 cluster for 8 volts, and 2 clusters for 10 volts. They are shown in Figure 11, where each cluster has its corresponding failure threshold: 0.566 for cluster 1, 0.2 for cluster 2, 0.127 for cluster 3, 0.246 for cluster 4, and 0.275 for cluster 5. These correspond to degradation levels of 5.7%, 2%, 36%, 18%, and 8.3%, respectively. The clusters are used to classify each new pre-processed signal, select the appropriate failure threshold, and predict the RUL.
The cluster generation criterion relies mainly on the Minimum Variance Matching (MVM) similarity metric, obtained by solving a shortest path (SP) problem that measures the distance between pairs of signals. The principle is to fix one signal as a centroid and compute its distances to the other signals. These distances are sorted and, following the same principle as the elbow method, one group of signals is selected to form a cluster while the rest form another group. The process is then repeated to verify whether the signals in a group are similar or whether another cluster must be generated, and so on. In repeated runs, 5 clusters were in most cases enough to separate these signals.
The results under both metrics (Figure 10d,e) show that almost all models predict the RUL relatively well, falling inside the confidence interval. Only ESN shows some irregularities, but these underestimations are acceptable. The Dense neural network model slightly outperforms the others close to the EoL.
Analyzing the results, the models using strategy A show a problem similar to that of the Crack Growth application in Section 4.1.2. They remain sensitive to small variations, which produces a large variability in the estimated EoL and therefore affects the RUL prediction.
Strategy B can also suffer from variability. The historical run-to-failure signals here have very different degradation behaviors, unlike Section 4.1.2, where the signals are quite similar. Applied to such a set without grouping, strategy B could give poor RUL predictions because of these differences in degradation level.
To avoid this, the signals were grouped by similar degradation level and each group was handled separately. As a consequence, the different models predict the RUL close to its real value.
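The centroid-and-elbow clustering procedure described above can be sketched as a greedy peeling loop. Several details are assumptions, not taken from the paper:

- The first unassigned signal serves as the centroid.
- The elbow of the sorted distance curve is approximated by its largest jump.
- A split happens only when that jump is at least the hypothetical parameter `min_gap`.

The distance is passed in as a function, so an MVM-based distance could be plugged in.

```python
import numpy as np

def peel_clusters(signals, dist, min_gap):
    """Greedy clustering: around a centroid, keep the signals before the largest
    jump of the sorted distance curve; the rest are clustered in later rounds."""
    remaining = list(range(len(signals)))
    clusters = []
    while remaining:
        centroid, others = remaining[0], remaining[1:]  # hypothetical centroid choice
        d = np.array([dist(signals[centroid], signals[j]) for j in others], dtype=float)
        order = np.argsort(d)
        # sorted distance curve, starting at the centroid itself (distance 0)
        curve = np.concatenate(([0.0], d[order]))
        cut = len(others)  # by default, everything joins the centroid's cluster
        if len(others) > 0:
            gaps = np.diff(curve)
            k = int(np.argmax(gaps))
            if gaps[k] >= min_gap:
                cut = k  # keep only the signals before the jump
        members = [centroid] + [others[i] for i in order[:cut]]
        clusters.append(sorted(members))
        remaining = [others[i] for i in order[cut:]]
    return clusters
```

Because MVM is asymmetric (the query must not be longer than the target), a distance built on it would need to decide which signal plays the query. One option is to match the shorter signal against the longer one.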

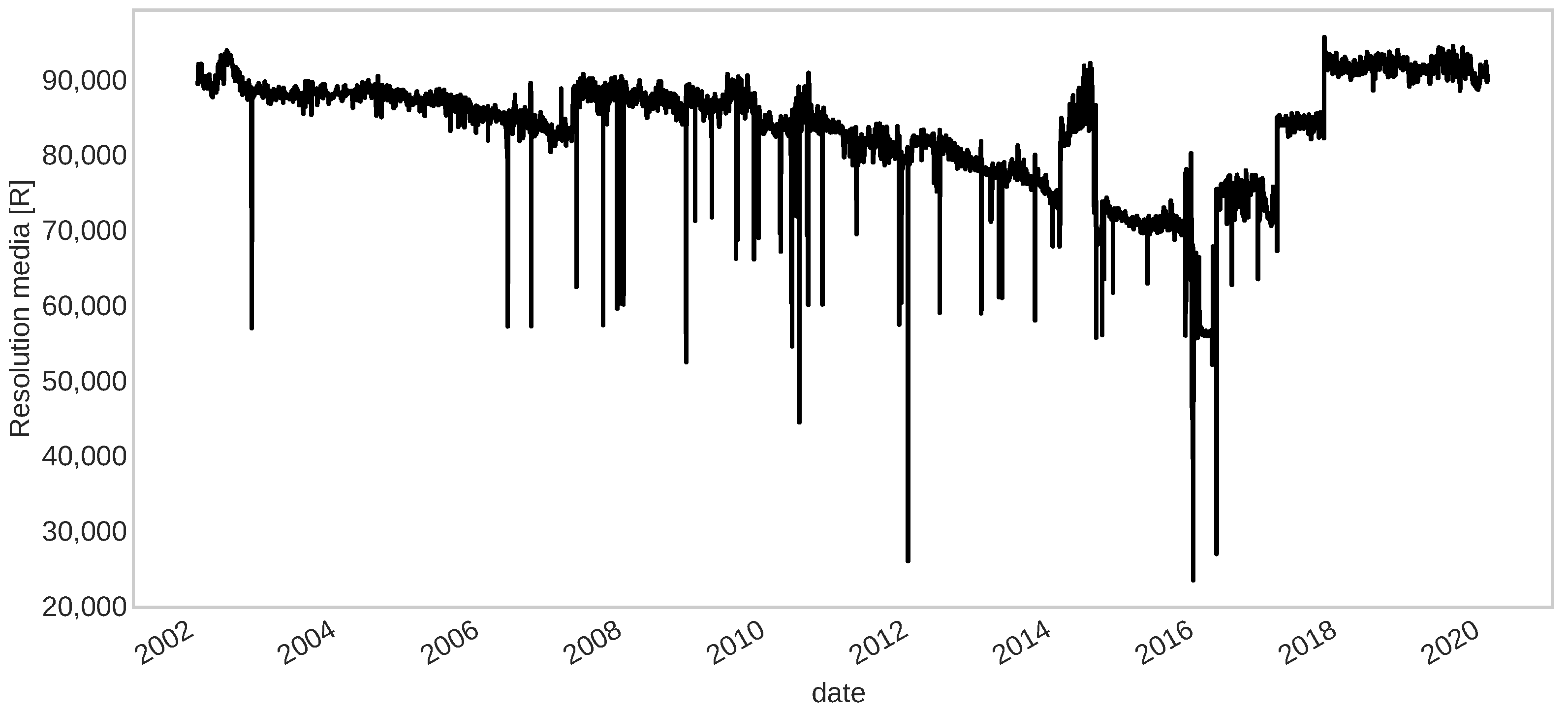

4.3. Validation in a Different Setting

4.3.1. Problem Description

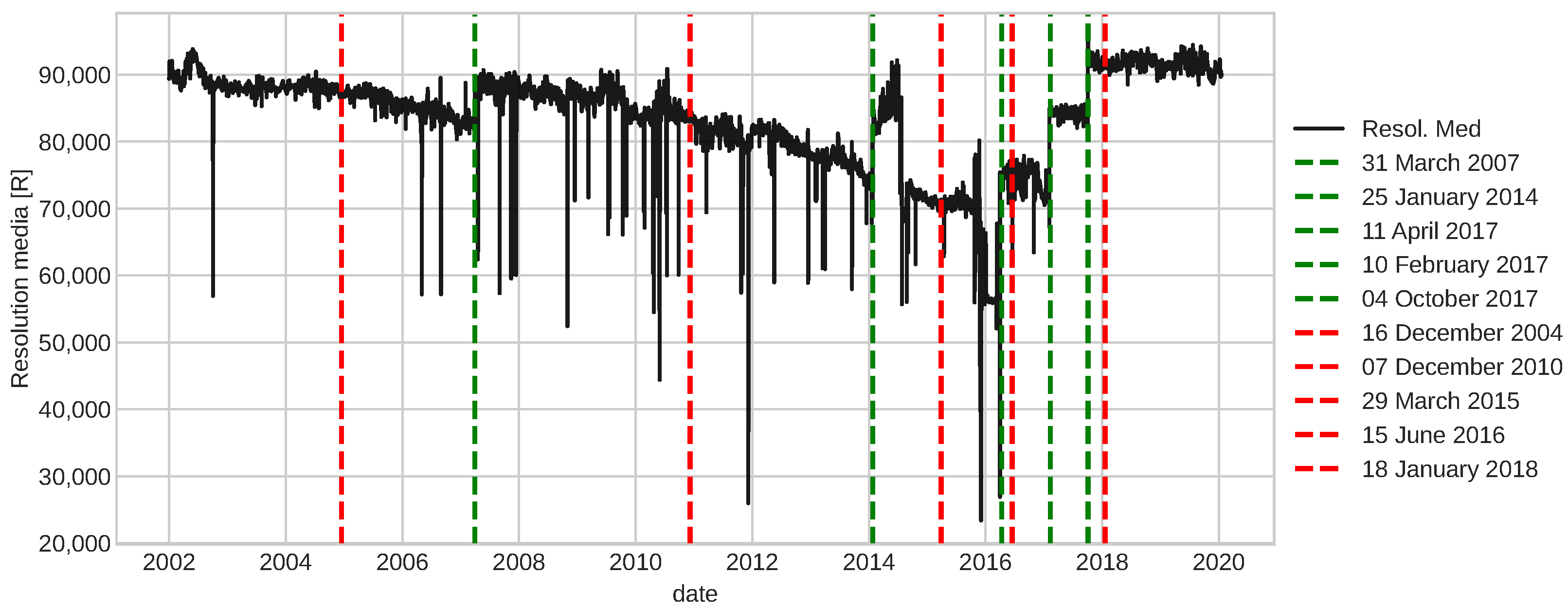

4.3.2. Fault Detection

4.3.3. Prognostic

- Strategy A: Figure 15b,c shows that the neural networks produce poor predictions. The Prophet model has some segments inside the confidence interval, but its behaviour is too irregular to be reliable.

- Strategy B: in this problem there are no historical run-to-failure signals, so clustering over this component is not possible. However, since the degradation behavior of this component is similar to that of the ALMA IFP, we can try to transfer the IFP clusters to this problem. To achieve this, the new pre-processed signal $Q$ must be transformed and scaled to every cluster described in Section 3.2. For each cluster $i$, we define the transformed signal $Q_i(t) = n_i + k_i\,(Q(t) - q_0)$, with $k_i = (\theta_i - n_i)/(\theta_Q - q_0)$. Here $k_i$ is the scaling constant, $n_i$ and $\theta_i$ are the standard normal condition and the failure threshold of cluster $i$, $q_0$ is the first sample of the signal in this problem, and $\theta_Q$ is its associated failure threshold. The classifier output determines which cluster's model is used to predict the RUL. In the prognostic horizon metric (Figure 15d), the GRU model outperforms the other models. The other models fall inside the confidence interval after 200 days, so all of them are acceptable under this metric. Regarding accuracy, the models are mostly outside the confidence interval during the first 300 days, underestimating the RUL. After that, they stay around the ground truth up to the EoL. The GRU model stays close to the boundary of the confidence interval. This is a reasonable result, considering that the RUL model was built from a similar degradation signal of a different system, the IFP.
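The per-cluster transformation of the new signal can be sketched as below, assuming it is affine. The first sample $q_0$ is mapped to the cluster's normal condition $n_i$, and the new signal's own failure threshold $\theta_Q$ to the cluster's threshold $\theta_i$. This fixes the scaling constant as $k_i = (\theta_i - n_i)/(\theta_Q - q_0)$. The function names and the dictionary layout of the clusters are hypothetical.

```python
import numpy as np

def transfer_to_cluster(q, normal_i, threshold_i, threshold_q):
    """Affine map sending q[0] -> normal_i and threshold_q -> threshold_i."""
    q = np.asarray(q, dtype=float)
    q0 = q[0]
    k_i = (threshold_i - normal_i) / (threshold_q - q0)  # scaling constant
    return normal_i + k_i * (q - q0)

def transfer_to_all_clusters(q, clusters, threshold_q):
    """`clusters` maps cluster_id -> (normal_condition, failure_threshold); hypothetical layout."""
    return {cid: transfer_to_cluster(q, n_i, th_i, threshold_q)
            for cid, (n_i, th_i) in clusters.items()}
```

A negative $k_i$ arises naturally when the new signal and the cluster degrade in opposite directions, so the same map covers increasing and decreasing indicators.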

5. Discussion

- Strategy A: a time-window size of 365 days and a 2-year forecast. The input is a lookback of 19 samples, i.e., samples from time $t-19$ to time $t$, 20 samples in total. The neural networks are trained for 20 epochs. For simplicity, we assume for this method that new data become available every 15 days to update the RUL estimation. The model hyperparameters used for prognostics are summarized in Table 1.

- Strategy B: the input is a lookback of 9 samples, i.e., samples from time $t-9$ to time $t$, 10 samples in total. The neural networks are trained for 15 epochs. The model hyperparameters used for prognostics are summarized in Table 2.

- Crack Growth (Section 4.1.2): a classical problem in the literature in which degradation follows a monotonically non-decreasing trajectory. Strategy A gives the worst performance, with only the Prophet model relatively close to the ground-truth RUL. With strategy B, by contrast, all prediction models perform well on both metrics.

- IFP Degradation (Section 4.2.2): the historical degradation signals are not strictly monotonic and show different degradation levels and speeds. This results in different failure threshold values for different sets of signals. Consequently, defining a single failure threshold for all signals and forecasting the signal until it reaches that threshold, as in strategy A, does not work well. Clustering the signals by degradation level instead makes it possible to define an appropriate failure threshold for a new signal, based on its similarity to the historical run-to-failure signals of a cluster. Therefore, strategy B improves the RUL predictions, with ESN being the least accurate of the models tested.

- Camera Resolution Degradation (Section 4.3.3): the degradation trajectory shows irregularities similar to those of the IFP signals, with segments that increase and then decrease, and vice versa, so it is not strictly monotonic either. Strategy A had difficulties with this problem. In particular, it struggled to forecast the trend of the signal when a segment's trend moved opposite to the degradation, which leads to an overestimation of the RUL. With this strategy, only the Prophet model approximates the ground truth, and even it is not accurate enough to be acceptable. Strategy B, using the RUL predictive models transferred from the IFP setting, provided better results than strategy A. Its predictions converge to the ground truth as the EoL approaches, with a few minor exceptions.
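The lookback format described above (lookback + 1 samples per input) and the Strategy B training data can be sketched as follows. Two parts are assumptions for illustration:

- The label convention: a window ending at index t of a critical segment of length L gets residual RUL L - t.
- A least-squares linear model stands in for the ESN, LSTM, GRU, and Dense networks.

All function names are hypothetical.

```python
import numpy as np

def lookback_windows(signal, lookback):
    """Stack the windows x[t-lookback], ..., x[t] (lookback + 1 samples), one per time t."""
    x = np.asarray(signal, dtype=float)
    if len(x) <= lookback:
        return np.empty((0, lookback + 1))
    return np.stack([x[t - lookback:t + 1] for t in range(lookback, len(x))])

def training_set(critical_segments, lookback):
    """Windows and residual-RUL targets pooled over run-to-failure critical segments.

    Hypothetical label convention: the window ending at index t of a segment of
    length L (indices counted from the segment's first sample) gets target L - t.
    """
    xs, ys = [], []
    for seg in critical_segments:
        xs.append(lookback_windows(seg, lookback))
        ys.append(len(seg) - np.arange(lookback, len(seg), dtype=float))
    return np.vstack(xs), np.concatenate(ys)

def fit_linear_rul_model(X, y):
    """Least-squares linear regressor with intercept (stand-in for the paper's models)."""
    A = np.hstack([X, np.ones((len(X), 1))])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict_rul(coef, window):
    """Predicted residual RUL for one window of lookback + 1 samples."""
    return float(np.dot(np.append(window, 1.0), coef))
```

With `lookback=19` each input holds 20 samples, as in Strategy A; with `lookback=9` it holds 10, as in Strategy B.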

6. Conclusions

7. Future Work

- Improving the computation of uncertainty measurements of RUL predictions. This computation will help develop new prescriptive maintenance approaches that help in the decision-making process of maintenance procedures.

- Testing this approach on other problems with similar degradation faults, to continue evaluating the robustness of this run-to-failure critical segment clustering approach for predicting a component's RUL.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| RUL | Remaining Useful Life |
| RNN | Recurrent Neural Network |
| ALMA | Atacama Large Millimeter Array |
| CBM | Condition-Based Maintenance |
| PHM | Prognostic and Health Management |
| PH | Prognostic Horizon |
| EoL | End-of-Life |
| ESN | Echo State Network |
| LSTM | Long Short-Term Memory |
| GRU | Gated Recurrent Unit |
| ADTK | Anomaly Detection Toolkit |
| MVM | Minimum Variance Matching |
| IFP | Intermediate Frequency Processor |
| LSB | Lower Sideband |
| USB | Upper Sideband |
| SW | Switch matrix current |
| UT | Unit Telescope |
| CCD | Charge-Coupled Device |
| EoP | End-of-Prediction |
| ANN | Artificial Neural Network |
| SP | Shortest Path |

Appendix A. Evaluation Metrics

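- Prognostic Horizon (PH): it identifies whether a method predicts within specified limits around the ground-truth End-of-Life (EoL), so that its predictions can be considered trustworthy. If it does, PH measures how much time is left for any maintenance action to be taken. The longer the PH, the better the model, and the more time there is to act on the prediction with the desired credibility. For point predictions, this metric is defined as $PH = t_{EoL} - t_{i_\alpha}$, where $i_\alpha = \min\{ j \in p \mid r_*(j) - \alpha\, t_{EoL} \le r^l(j) \le r_*(j) + \alpha\, t_{EoL} \}$. Here $p$ is the set of time indices at which a prediction is made, $r_*(j)$ and $r^l(j)$ are the ground-truth RUL and the RUL predicted by algorithm $l$ at time $t_j$, and $\alpha$ sets the width of the accuracy bounds.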

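- Accuracy: this metric quantifies prediction quality by checking whether the prediction falls within specified limits at a particular time. This is more stringent than PH, since it requires predictions to stay within a cone of accuracy that narrows as the EoL approaches. Its output is binary, since it evaluates whether the condition $(1-\alpha)\, r_*(t_\lambda) \le r^l(t_\lambda) \le (1+\alpha)\, r_*(t_\lambda)$ is met. Here $t_\lambda = t_P + \lambda\,(t_{EoL} - t_P)$ with $\lambda \in [0, 1]$, and $t_P$ is the time of the first prediction.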

- Relative Accuracy: a notion similar to accuracy. Instead of only checking whether the predictions fall within given accuracy levels at a given time $t_\lambda$, it measures accuracy quantitatively as $RA_\lambda = 1 - \frac{|r_*(t_\lambda) - r^l(t_\lambda)|}{r_*(t_\lambda)}$, where $t_\lambda$ is defined as for accuracy. To measure the general behavior of the algorithm over time, the Cumulative Relative Accuracy (CRA) can be used, defined as $CRA_\lambda = \frac{1}{|p_\lambda|} \sum_{i \in p_\lambda} w(r(i))\, RA(i)$. Here $w(r(i))$ is a weight factor as a function of the RUL at all time indices, $p_\lambda$ is the set of all time indices before $t_\lambda$ at which a prediction is made, and $|\cdot|$ is the cardinality of a set. The idea behind these metrics is that prognostic performance should improve as more information becomes available, converging to the ground-truth RUL.

- Convergence: a useful metric, since a prognostics algorithm is expected to converge to the true value as more information accumulates over time. The distance between the origin $(t_P, 0)$ and the centroid of the area under the curve of a metric quantifies convergence. A lower distance means faster convergence, which is desirable to reach high confidence while keeping the prediction horizon as large as possible. Let $(x_c, y_c)$ be the center of mass of the area under the curve $M(i)$. The convergence is then the Euclidean distance $C_M = \sqrt{(x_c - t_P)^2 + y_c^2}$, where $x_c = \frac{\frac{1}{2}\sum_{i=P}^{EoP} (t_{i+1}^2 - t_i^2)\, M(i)}{\sum_{i=P}^{EoP} (t_{i+1} - t_i)\, M(i)}$ and $y_c = \frac{\frac{1}{2}\sum_{i=P}^{EoP} (t_{i+1} - t_i)\, M(i)^2}{\sum_{i=P}^{EoP} (t_{i+1} - t_i)\, M(i)}$. Here $M(i)$ is a non-negative prediction-error accuracy or precision metric. In other words, this metric measures how fast a method converges.
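The metrics above can be computed for point predictions as in the following sketch, which follows the definitions commonly attributed to Saxena et al. Three choices belong to this sketch only:

- CRA uses uniform default weights.
- A PH of 0 is returned when no prediction ever enters the bounds.
- The convergence curve is treated as piecewise constant on the intervals between consecutive prediction times, with $t_P$ taken as the first time stamp.

```python
import numpy as np

def prognostic_horizon(t, r_true, r_pred, t_eol, alpha):
    """PH = t_EoL - t_{i_alpha}; i_alpha is the first prediction within r_* +/- alpha * t_EoL."""
    t, r_true, r_pred = (np.asarray(a, dtype=float) for a in (t, r_true, r_pred))
    inside = np.abs(r_pred - r_true) <= alpha * t_eol
    if not inside.any():
        return 0.0  # convention of this sketch: no trustworthy prediction at all
    return float(t_eol - t[np.argmax(inside)])

def alpha_lambda_accurate(r_true_tl, r_pred_tl, alpha):
    """Binary check (1 - alpha) r_* <= r^l <= (1 + alpha) r_* at time t_lambda."""
    return (1 - alpha) * r_true_tl <= r_pred_tl <= (1 + alpha) * r_true_tl

def relative_accuracy(r_true_tl, r_pred_tl):
    """RA = 1 - |r_* - r^l| / r_* at time t_lambda."""
    return 1.0 - abs(r_true_tl - r_pred_tl) / r_true_tl

def cumulative_relative_accuracy(r_true, r_pred, weights=None):
    """Weighted mean of RA over the prediction times before t_lambda (uniform weights by default)."""
    r_true = np.asarray(r_true, dtype=float)
    r_pred = np.asarray(r_pred, dtype=float)
    ra = 1.0 - np.abs(r_true - r_pred) / r_true
    w = np.ones_like(ra) if weights is None else np.asarray(weights, dtype=float)
    return float(np.mean(w * ra))

def convergence(t, metric):
    """Distance from (t_P, 0) to the centroid of the area under a piecewise-constant metric curve."""
    t = np.asarray(t, dtype=float)
    m = np.asarray(metric, dtype=float)[:-1]  # M(i) on each interval [t_i, t_{i+1})
    dt = np.diff(t)
    area = np.sum(dt * m)
    x_c = 0.5 * np.sum((t[1:] ** 2 - t[:-1] ** 2) * m) / area
    y_c = 0.5 * np.sum(dt * m ** 2) / area
    return float(np.hypot(x_c - t[0], y_c))
```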

Appendix B. Recurrent Neural Networks

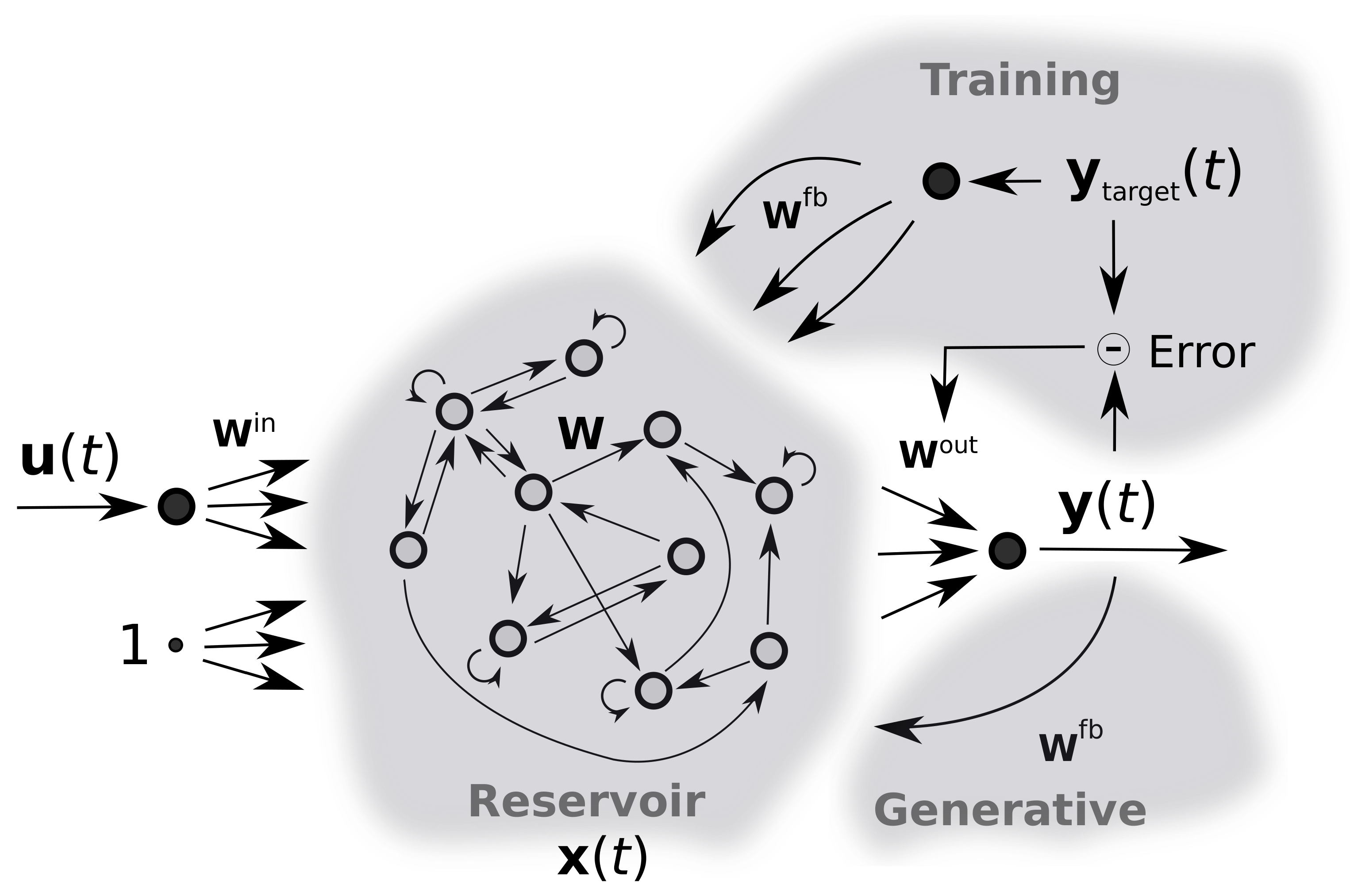

Appendix B.1. Echo State Networks (ESNs)

Appendix B.2. Long Short-Term Memory (LSTM)

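- Block input: it combines the current input $x_t$ and the previous output $y_{t-1}$ of the LSTM units at each time step $t$, and it is defined as $z_t = g(W_z x_t + R_z y_{t-1} + b_z)$.

- Input gate: this gate decides which values of the cell state need to be updated with new information. It is computed as a combination of the input $x_t$, the previous output of the LSTM units $y_{t-1}$, and the previous cell state $c_{t-1}$ at each time step $t$: $i_t = \sigma(W_i x_t + R_i y_{t-1} + p_i \odot c_{t-1} + b_i)$.

- Forget gate: it decides what information must be removed from the LSTM memory, and it is calculated similarly to the input gate: $f_t = \sigma(W_f x_t + R_f y_{t-1} + p_f \odot c_{t-1} + b_f)$.

- Cell state: this step updates the LSTM memory. Its current value combines the block input $z_t$, the input gate $i_t$, the forget gate $f_t$, and the previous cell state $c_{t-1}$: $c_t = z_t \odot i_t + c_{t-1} \odot f_t$.

- Output gate: this gate decides which part of the LSTM memory contributes to the output. It depends on the current input vector $x_t$, the previous output $y_{t-1}$, and the current cell state $c_t$: $o_t = \sigma(W_o x_t + R_o y_{t-1} + p_o \odot c_t + b_o)$.

- Block output: finally, this step computes the output $y_t = h(c_t) \odot o_t$, which combines the current cell state $c_t$ and the current output gate $o_t$.

Here $W_*$ and $R_*$ are the input and recurrent weight matrices, $p_*$ are peephole weight vectors, and $b_*$ are biases. $\sigma$ is the logistic sigmoid, $g$ and $h$ are typically $\tanh$, and $\odot$ denotes element-wise multiplication.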
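A single LSTM step can be written in NumPy following the gate descriptions above. The input and forget gates see the previous cell state, and the output gate sees the current one, which makes this a peephole LSTM. This is a sketch for illustration, not the implementation used in the experiments. `g` and `h` are taken as tanh, and the parameter-dictionary layout and the `init_params` helper are hypothetical.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def init_params(n_in, n_hidden, scale=0.1, seed=0):
    """Random Gaussian weights and zero biases for every gate (hypothetical initialization)."""
    rng = np.random.default_rng(seed)
    p = {}
    for g in "zifo":  # block input, input, forget, and output gates
        p[f"W_{g}"] = rng.normal(0.0, scale, (n_hidden, n_in))
        p[f"R_{g}"] = rng.normal(0.0, scale, (n_hidden, n_hidden))
        p[f"b_{g}"] = np.zeros(n_hidden)
    for g in "ifo":  # peephole weights exist only for the three gates
        p[f"p_{g}"] = rng.normal(0.0, scale, n_hidden)
    return p

def lstm_step(x_t, y_prev, c_prev, p):
    """One peephole-LSTM step; returns (y_t, c_t)."""
    z = np.tanh(p["W_z"] @ x_t + p["R_z"] @ y_prev + p["b_z"])                       # block input
    i = sigmoid(p["W_i"] @ x_t + p["R_i"] @ y_prev + p["p_i"] * c_prev + p["b_i"])   # input gate
    f = sigmoid(p["W_f"] @ x_t + p["R_f"] @ y_prev + p["p_f"] * c_prev + p["b_f"])   # forget gate
    c = z * i + c_prev * f                                                           # cell state
    o = sigmoid(p["W_o"] @ x_t + p["R_o"] @ y_prev + p["p_o"] * c + p["b_o"])        # output gate
    y = np.tanh(c) * o                                                               # block output
    return y, c
```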

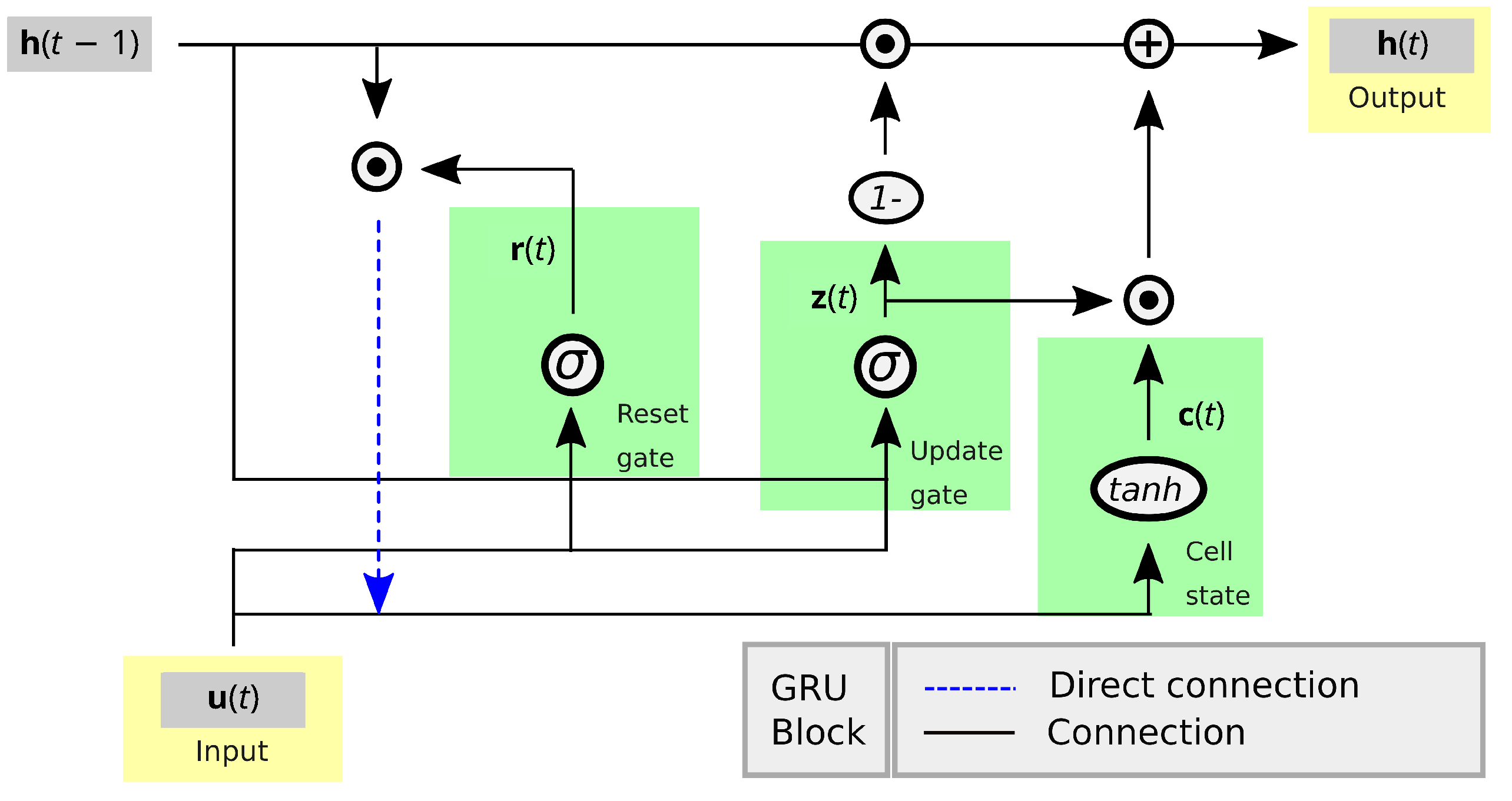

Appendix B.3. Gated Recurrent Unit (GRU)

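- Update gate: this gate determines how much previously learned information should be passed on to the future, $z_t = \sigma(W_z x_t + U_z h_{t-1} + b_z)$.

- Reset gate: this gate decides how much previously learned information to forget, $r_t = \sigma(W_r x_t + U_r h_{t-1} + b_r)$.

- Cell state: it stores the relevant information from the past, using the reset gate to affect the memory content, $\tilde{h}_t = \tanh(W_h x_t + U_h (r_t \odot h_{t-1}) + b_h)$.

- Block output: finally, the output is computed as $h_t = z_t \odot h_{t-1} + (1 - z_t) \odot \tilde{h}_t$.

Here $x_t$ is the input, $h_{t-1}$ is the previous output, $W_*$ and $U_*$ are weight matrices, $b_*$ are biases, and $\odot$ denotes element-wise multiplication.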
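Similarly, a GRU step can be sketched as follows. It assumes the convention in which the update gate $z_t$ weights the previous output, $h_t = z_t \odot h_{t-1} + (1 - z_t) \odot \tilde{h}_t$; some formulations swap the roles of $z_t$ and $1 - z_t$. The names and the initialization helper are hypothetical.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def init_gru_params(n_in, n_hidden, scale=0.1, seed=0):
    """Random Gaussian weights and zero biases (hypothetical initialization)."""
    rng = np.random.default_rng(seed)
    p = {}
    for g in "zrh":  # update gate, reset gate, candidate state
        p[f"W_{g}"] = rng.normal(0.0, scale, (n_hidden, n_in))
        p[f"U_{g}"] = rng.normal(0.0, scale, (n_hidden, n_hidden))
        p[f"b_{g}"] = np.zeros(n_hidden)
    return p

def gru_step(x_t, h_prev, p):
    """One GRU step; returns h_t."""
    z = sigmoid(p["W_z"] @ x_t + p["U_z"] @ h_prev + p["b_z"])               # update gate
    r = sigmoid(p["W_r"] @ x_t + p["U_r"] @ h_prev + p["b_r"])               # reset gate
    h_cand = np.tanh(p["W_h"] @ x_t + p["U_h"] @ (r * h_prev) + p["b_h"])    # candidate state
    return z * h_prev + (1.0 - z) * h_cand                                   # block output
```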

References

- Bougacha, O.; Varnier, C.; Zerhouni, N. A Review of Post-Prognostics Decision-Making in Prognostics and Health Management. Int. J. Progn. Health Manag. 2020, 11, 31. [Google Scholar] [CrossRef]

- Patan, K. Artificial Neural Networks for the Modelling and Fault Diagnosis of Technical Processes; Springer: Berlin/Heidelberg, Germany, 2008. [Google Scholar] [CrossRef]

- Li, Y.; Wang, X.; Lu, N.; Jiang, B. Conditional Joint Distribution-Based Test Selection for Fault Detection and Isolation. IEEE Trans. Cybern. 2021, 1–13. [Google Scholar] [CrossRef]

- Isermann, R. Fault-Diagnosis Systems; Springer: Berlin/Heidelberg, Germany, 2006. [Google Scholar] [CrossRef]

- Shi, J.; He, Q.; Wang, Z. Integrated Stateflow-based simulation modelling and testability evaluation for electronic built-in-test (BIT) systems. Reliab. Eng. Syst. Saf. 2020, 202, 107066. [Google Scholar] [CrossRef]

- Shi, J.; Deng, Y.; Wang, Z. Novel testability modelling and diagnosis method considering the supporting relation between faults and tests. Microelectron. Reliab. 2022, 129, 114463. [Google Scholar] [CrossRef]

- Bindi, M.; Corti, F.; Aizenberg, I.; Grasso, F.; Lozito, G.M.; Luchetta, A.; Piccirilli, M.C.; Reatti, A. Machine Learning-Based Monitoring of DC-DC Converters in Photovoltaic Applications. Algorithms 2022, 15, 74. [Google Scholar] [CrossRef]

- Bindi, M.; Piccirilli, M.C.; Luchetta, A.; Grasso, F.; Manetti, S. Testability Evaluation in Time-Variant Circuits: A New Graphical Method. Electronics 2022, 11, 1589. [Google Scholar] [CrossRef]

- Li, Y.; Chen, H.; Lu, N.; Jiang, B.; Zio, E. Data-Driven Optimal Test Selection Design for Fault Detection and Isolation Based on CCVKL Method and PSO. IEEE Trans. Instrum. Meas. 2022, 71, 1–10. [Google Scholar] [CrossRef]

- Tinga, T.; Loendersloot, R. Aligning PHM, SHM and CBM by understanding the physical system failure behaviour. In Proceedings of the 2nd European Conference of the Prognostics and Health Management Society, PHME 2014, Nantes, France, 8–10 July 2014; pp. 162–171. [Google Scholar]

- Montero Jimenez, J.J.; Schwartz, S.; Vingerhoeds, R.; Grabot, B.; Salaün, M. Towards multi-model approaches to predictive maintenance: A systematic literature survey on diagnostics and prognostics. J. Manuf. Syst. 2020, 56, 539–557. [Google Scholar] [CrossRef]

- Vachtsevanos, G.; Wang, P. Fault prognosis using dynamic wavelet neural networks. In Proceedings of the 2001 IEEE Autotestcon Proceedings, IEEE Systems Readiness Technology Conference, Valley Forge, PA, USA, 20–23 August 2001; pp. 857–870. [Google Scholar] [CrossRef]

- Byington, C.S.; Roemer, M.J.; Galie, T. Prognostic enhancements to diagnostic systems for improved condition-based maintenance [military aircraft]. In Proceedings of the IEEE Aerospace Conference, Big Sky, MT, USA, 9–16 March 2002; Volume 6, p. 6. [Google Scholar] [CrossRef]

- Cho, A.D.; Carrasco, R.A.; Ruz, G.A. Improving prescriptive maintenance by incorporating post-prognostic information through chance constraints. IEEE Access 2022, 10, 55924–55932. [Google Scholar] [CrossRef]

- Cho, A.D.; Carrasco, R.A.; Ruz, G.A.; Ortiz, J.L. Slow Degradation Fault Detection in a Harsh Environment. IEEE Access 2020, 8, 175904–175920. [Google Scholar] [CrossRef]

- Carrasco, R.A.; Núñez, F.; Cipriano, A. Fault detection and isolation in cooperative mobile robots using multilayer architecture and dynamic observers. Robotica 2011, 29, 555–562. [Google Scholar] [CrossRef]

- Isermann, R. Process fault detection based on modeling and estimation methods—A survey. Automatica 1984, 20, 387–404. [Google Scholar] [CrossRef]

- Park, Y.J.; Fan, S.K.S.; Hsu, C.Y. A Review on Fault Detection and Process Diagnostics in Industrial Processes. Processes 2020, 8, 1123. [Google Scholar] [CrossRef]

- Tuan Do, V.; Chong, U.P. Signal Model-Based Fault Detection and Diagnosis for Induction Motors Using Features of Vibration Signal in Two-Dimension Domain. Stroj. Vestn. 2011, 57, 655–666. [Google Scholar] [CrossRef]

- Meinguet, F.; Sandulescu, P.; Aslan, B.; Lu, L.; Nguyen, N.K.; Kestelyn, X.; Semail, E. A signal-based technique for fault detection and isolation of inverter faults in multi-phase drives. In Proceedings of the 2012 IEEE International Conference on Power Electronics, Drives and Energy Systems (PEDES), Bengaluru, India, 16–19 December 2012; pp. 1–6. [Google Scholar]

- Germán-Salló, Z.; Strnad, G. Signal processing methods in fault detection in manufacturing systems. In Proceedings of the 11th International Conference Interdisciplinarity in Engineering, INTER-ENG 2017, Tirgu Mures, Romania, 5–6 October 2017; Volume 22, pp. 613–620. [Google Scholar]

- Duan, J.; Shi, T.; Zhou, H.; Xuan, J.; Zhang, Y. Multiband Envelope Spectra Extraction for Fault Diagnosis of Rolling Element Bearings. Sensors 2018, 18, 1466. [Google Scholar] [CrossRef]

- Abid, A.; Khan, M.; Iqbal, J. A review on fault detection and diagnosis techniques: Basics and beyond. Artif. Intell. Rev. 2021, 54, 3639–3664. [Google Scholar] [CrossRef]

- Khorasgani, H.; Jung, D.E.; Biswas, G.; Frisk, E.; Krysander, M. Robust residual selection for fault detection. In Proceedings of the 53rd IEEE Conference on Decision and Control, Los Angeles, CA, USA, 15–17 December 2014; pp. 5764–5769. [Google Scholar]

- Ortiz, J.L.; Carrasco, R.A. Model-based fault detection and diagnosis in ALMA subsystems. In Observatory Operations: Strategies, Processes, and Systems VI; Peck, A.B., Benn, C.R., Seaman, R.L., Eds.; SPIE: Bellingham, WA, USA, 2016; pp. 919–929. [Google Scholar] [CrossRef]

- Ortiz, J.L.; Carrasco, R.A. ALMA engineering fault detection framework. In Observatory Operations: Strategies, Processes, and Systems VII; Peck, A.B., Benn, C.R., Seaman, R.L., Eds.; SPIE: Bellingham, WA, USA, 2018; p. 94. [Google Scholar] [CrossRef]

- Gómez, M.; Ezquerra, J.; Aranguren, G. Expert System Hardware for Fault Detection. Appl. Intell. 1998, 9, 245–262. [Google Scholar] [CrossRef]

- Fuessel, D.; Isermann, R. Hierarchical motor diagnosis utilizing structural knowledge and a self-learning neuro-fuzzy scheme. IEEE Trans. Ind. Electron. 2000, 47, 1070–1077. [Google Scholar] [CrossRef]

- He, Q.; Zhao, X.; Du, D. A novel expert system of fault diagnosis based on vibration for rotating machinery. J. Meas. Eng. 2013, 1, 219–227. [Google Scholar]

- Napolitano, M.R.; An, Y.; Seanor, B.A. A fault tolerant flight control system for sensor and actuator failure using neural networks. Aircr. Des. 2000, 3, 103–128. [Google Scholar] [CrossRef]

- Cork, L.; Walker, R.; Dunn, S. Fault detection, identification and accommodation techniques for unmanned airborne vehicles. In Proceedings of the Australian International Aerospace Congress, Fuduoka, Japan, 13–17 March 2005; AIAC, Ed.; AIAC: Australia, Melbourne, 2005; pp. 1–18. [Google Scholar]

- Masrur, M.A.; Chen, Z.; Zhang, B.; Murphey, Y.L. Model-Based Fault Diagnosis in Electric Drive Inverters Using Artificial Neural Network. In Proceedings of the 2007 IEEE Power Engineering Society General Meeting, Tampa, FL, USA, 24–28 June 2007; pp. 1–7. [Google Scholar]

- Wootton, A.; Day, C.; Haycock, P. Echo State Network applications in structural health monitoring. In Proceedings of the 2015 International Joint Conference on Neural Networks (IJCNN), Killarney, Ireland, 12–17 July 2015; pp. 1–7. [Google Scholar] [CrossRef]

- Morando, S.; Marion-Péra, M.C.; Yousfi Steiner, N.; Jemei, S.; Hissel, D.; Larger, L. Fuel Cells Fault Diagnosis under Dynamic Load Profile Using Reservoir Computing. In Proceedings of the 2016 IEEE Vehicle Power and Propulsion Conference (VPPC), Hangzhou, China, 17–20 October 2016; pp. 1–6. [Google Scholar] [CrossRef]

- Fan, Y.; Nowaczyk, S.; Rögnvaldsson, T.; Antonelo, E.A. Predicting Air Compressor Failures with Echo State Networks. In Proceedings of the Third European Conference of the Prognostics and Health Management Society 2016, PHME 2016, Bilbao, Spain, 5–8 July 2016; PHM Society: Nashville, TN, USA, 2016; pp. 568–578. [Google Scholar]

- Westholm, J. Event Detection and Predictive Maintenance Using Component Echo State Networks. Master’s Thesis, Lund University, Lund, Sweden, 2018. [Google Scholar]

- Li, Y. A Fault Prediction and Cause Identification Approach in Complex Industrial Processes Based on Deep Learning. Comput. Intell. Neurosci. 2021, 2021, 6612342. [Google Scholar] [CrossRef]

- Liu, J.; Pan, C.; Lei, F.; Hu, D.; Zuo, H. Fault prediction of bearings based on LSTM and statistical process analysis. Reliab. Eng. Syst. Saf. 2021, 214, 107646. [Google Scholar] [CrossRef]

- Zhu, Y.; Li, G.; Tang, S.; Wang, R.; Su, H.; Wang, C. Acoustic signal-based fault detection of hydraulic piston pump using a particle swarm optimization enhancement CNN. Appl. Acoust. 2022, 192, 108718. [Google Scholar] [CrossRef]

- Jana, D.; Patil, J.; Herkal, S.; Nagarajaiah, S.; Duenas-Osorio, L. CNN and Convolutional Autoencoder (CAE) based real-time sensor fault detection, localization, and correction. Mech. Syst. Signal Process. 2022, 169, 108723. [Google Scholar] [CrossRef]

- Long, J.; Zhang, R.; Yang, Z.; Huang, Y.; Liu, Y.; Li, C. Self-Adaptation Graph Attention Network via Meta-Learning for Machinery Fault Diagnosis With Few Labeled Data. IEEE Trans. Instrum. Meas. 2022, 71, 1–11. [Google Scholar] [CrossRef]

- Czajkowski, A.; Patan, K. Robust Fault Detection by Means of Echo State Neural Network. In Advanced and Intelligent Computations in Diagnosis and Control; Kowalczuk, Z., Ed.; Springer International Publishing: Cham, Switzerland, 2016; pp. 341–352. [Google Scholar]

- Liu, C.; Yao, R.; Zhang, L.; Liao, Y. Attention Based Echo State Network: A Novel Approach for Fault Prognosis. In Proceedings of the 2019 11th International Conference on Machine Learning and Computing, ICMLC ’19, Zhuhai, China, 22–24 February 2019; Association for Computing Machinery: New York, NY, USA, 2019; pp. 489–493. [Google Scholar] [CrossRef]

- Ben Salah, S.; Fliss, I.; Tagina, M. Echo State Network and Particle Swarm Optimization for Prognostics of a Complex System. In Proceedings of the 2017 IEEE/ACS 14th International Conference on Computer Systems and Applications (AICCSA), Hammamet, Tunisia, 30 October–3 November 2017; pp. 1027–1034. [Google Scholar] [CrossRef]

- Luo, J.; Namburu, M.; Pattipati, K.; Qiao, L.; Kawamoto, M.; Chigusa, S. Model-based prognostic techniques [maintenance applications]. In Proceedings of the AUTOTESTCON 2003, IEEE Systems Readiness Technology Conference, Anaheim, CA, USA, 22–25 September 2003; pp. 330–340. [Google Scholar] [CrossRef]

- Montoya, F.R.J.; Valderrama, M.; Quintero, V.L.; Pérez, A.; Orchard, M. Time-of-Failure Probability Mass Function Computation Using the First-Passage-Time Method Applied to Particle Filter-based Prognostics. In Proceedings of the Annual Conference of the PHM Society, Virtual, 9–13 November 2020. [Google Scholar] [CrossRef]

- Rozas, H.; Jaramillo, F.; Perez, A.; Jimenez, D.; Orchard, M.E.; Medjaher, K. A method for the reduction of the computational cost associated with the implementation of particle-filter-based failure prognostic algorithms. Mech. Syst. Signal Process. 2020, 135, 106421. [Google Scholar] [CrossRef]

- Hua, Z.; Zheng, Z.; Péra, M.C.; Gao, F. Data-driven Prognostics for PEMFC Systems by Different Echo State Network Prediction Structures. In Proceedings of the 2020 IEEE Transportation Electrification Conference Expo (ITEC), Chicago, IL, USA, 23–26 June 2020; pp. 495–500. [Google Scholar] [CrossRef]

- Xu, M.; Baraldi, P.; Al-Dahidi, S.; Zio, E. Fault prognostics by an ensemble of Echo State Networks in presence of event based measurements. Eng. Appl. Artif. Intell. 2020, 87, 103346.

- El-Koujok, M.; Gouriveau, R.; Zerhouni, N. Reducing arbitrary choices in model building for prognostics: An approach by applying parsimony principle on an evolving neuro-fuzzy system. Microelectron. Reliab. 2011, 51, 310–320.

- Khelif, R.; Chebel-Morello, B.; Malinowski, S.; Laajili, E.; Fnaiech, F.; Zerhouni, N. Direct Remaining Useful Life Estimation Based on Support Vector Regression. IEEE Trans. Ind. Electron. 2017, 64, 2276–2285.

- Chen, C.; Lu, N.; Jiang, B.; Wang, C. A Risk-Averse Remaining Useful Life Estimation for Predictive Maintenance. IEEE/CAA J. Autom. Sin. 2021, 8, 412–422.

- Kang, Z.; Catal, C.; Tekinerdogan, B. Remaining Useful Life (RUL) Prediction of Equipment in Production Lines Using Artificial Neural Networks. Sensors 2021, 21, 932.

- Ding, Y.; Jia, M. Convolutional Transformer: An Enhanced Attention Mechanism Architecture for Remaining Useful Life Estimation of Bearings. IEEE Trans. Instrum. Meas. 2022, 71, 1–10.

- Zhang, Y.; Xin, Y.; Liu, Z.W.; Chi, M.; Ma, G. Health status assessment and remaining useful life prediction of aero-engine based on BiGRU and MMoE. Reliab. Eng. Syst. Saf. 2022, 220, 108263.

- He, R.; Tian, Z.; Zuo, M.J. A semi-supervised GAN method for RUL prediction using failure and suspension histories. Mech. Syst. Signal Process. 2022, 168, 108657.

- Saxena, A.; Celaya, J.R.; Saha, B.; Saha, S.; Goebel, K. On Applying the Prognostic Performance Metrics. In Proceedings of the International Conference on Prognostics and Health Management (PHM), San Diego, CA, USA, 27 September–1 October 2009.

- Taylor, S.J.; Letham, B. Forecasting at Scale. Am. Stat. 2018, 72, 37–45.

- Anomaly Detection Toolkit (ADTK). Available online: https://arundo-adtk.readthedocs-hosted.com/en/stable/ (accessed on 20 January 2021).

- Dielman, T. Choosing Smoothing Parameters For Exponential Smoothing: Minimizing Sums Of Squared Versus Sums Of Absolute Errors. J. Mod. Appl. Stat. Methods 2006, 5, 117–128.

- Ismail, Z.; Foo, F.Y. Genetic Algorithm for Parameter Estimation in Double Exponential Smoothing. Aust. J. Basic Appl. Sci. 2011, 5, 1174–1180.

- Chusyairi, A.; Pelsri, R.N.S.; Handayani, E. Optimization of Exponential Smoothing Method Using Genetic Algorithm to Predict E-Report Service. In Proceedings of the 2018 3rd International Conference on Information Technology, Information System and Electrical Engineering (ICITISEE), Yogyakarta, Indonesia, 13–14 November 2018; pp. 292–297.

- Simoni, A.; Dhamo Gjika, E.; Puka, L. Evolutionary Algorithm PSO and Holt Winters method applied in Hydro Power Plants optimization. In Proceedings of the SPNA—Statistics Probability and Numerical Analysis 2015, Tirana, Albania, 5–6 December 2015.

- Wang, Y.; Tang, H.; Wen, T.; Ma, J. A hybrid intelligent approach for constructing landslide displacement prediction intervals. Appl. Soft Comput. 2019, 81, 105506.

- Cerliani, M. Tsmoothie. Available online: https://pypi.org/project/tsmoothie/ (accessed on 29 January 2021).

- Esma, E.Ö. Chapter 6, Clustering of Time-Series Data. In Data Mining; Birant, D., Ed.; IntechOpen: Rijeka, Croatia, 2021.

- Goebel, K.; Saxena, A.; Saha, S.; Saha, B.; Celaya, J. Prognostic Performance Metrics. Mach. Learn. Knowl. Discov. Eng. Syst. Health Manag. 2011, 22, 147.

- Latecki, L.J.; Megalooikonomou, V.; Wang, Q.; Yu, D. An elastic partial shape matching technique. Pattern Recognit. 2007, 40, 3069–3080.

- Giorgino, T. Computing and Visualizing Dynamic Time Warping Alignments in R: The dtw Package. J. Stat. Softw. 2009, 31, 1–24.

- Tormene, P.; Giorgino, T.; Quaglini, S.; Stefanelli, M. Matching Incomplete Time Series with Dynamic Time Warping: An Algorithm and an Application to Post-Stroke Rehabilitation. Artif. Intell. Med. 2008, 45, 11–34.

- Klysz, S.; Leski, A. Chapter 7, Good Practice for Fatigue Crack Growth Curves Description. In Applied Fracture Mechanics; IntechOpen: Rijeka, Croatia, 2012; pp. 197–228.

- Cadini, F.; Zio, E.; Avram, D. Monte Carlo-based filtering for fatigue crack growth estimation. Probabilistic Eng. Mech. 2009, 24, 367–373.

- Rege, K.; Lemu, H.G. A review of fatigue crack propagation modelling techniques using FEM and XFEM. IOP Conf. Ser. Mater. Sci. Eng. 2017, 276, 012027.

- Acuña-Ureta, D.E.; Orchard, M.E.; Wheeler, P. Computation of time probability distributions for the occurrence of uncertain future events. Mech. Syst. Signal Process. 2021, 150, 107332.

- Ortiz, J.; Castillo, J. Automating engineering verification in ALMA subsystems. In Observatory Operations: Strategies, Processes, and Systems V; Peck, A.B., Benn, C.R., Seaman, R.L., Eds.; International Society for Optics and Photonics, SPIE: Bellingham, WA, USA, 2014; Volume 9149, pp. 809–819.

- Jaeger, H. The “Echo State” Approach to Analysing and Training Recurrent Neural Networks—With an Erratum Note; GMD Technical Report; German National Research Center for Information Technology: Bonn, Germany, 2001; Volume 148, p. 13.

- Jaeger, H.; Lukoševičius, M.; Popovici, D.; Siewert, U. Optimization and applications of echo state networks with leaky-integrator neurons. Neural Netw. 2007, 20, 335–352.

- Lukoševičius, M.; Jaeger, H. Reservoir computing approaches to recurrent neural network training. Comput. Sci. Rev. 2009, 3, 127–149.

- Lukoševičius, M. A Practical Guide to Applying Echo State Networks. In Neural Networks: Tricks of the Trade: Second Edition; Montavon, G., Orr, G.B., Müller, K.R., Eds.; Springer: Berlin/Heidelberg, Germany, 2012; pp. 659–686.

- Hochreiter, S.; Schmidhuber, J. Long Short-term Memory. Neural Comput. 1997, 9, 1735–1780.

- Houdt, G.V.; Mosquera, C.; Nápoles, G. A review on the long short-term memory model. Artif. Intell. Rev. 2020, 53, 5929–5955.

- Greff, K.; Srivastava, R.K.; Koutník, J.; Steunebrink, B.R.; Schmidhuber, J. LSTM: A search space odyssey. IEEE Trans. Neural Netw. Learn. Syst. 2017, 28, 2222–2232.

- Cho, K.; van Merrienboer, B.; Gulcehre, C.; Bahdanau, D.; Bougares, F.; Schwenk, H.; Bengio, Y. Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation. arXiv 2014, arXiv:1406.1078.

| ESN hyperparameter | Value | GRU hyperparameter | Value | LSTM hyperparameter | Value |
|---|---|---|---|---|---|
| input_size | 20 | input_shape | (20, 1) | input_shape | (20, 1) |
| output_size | 1 | units (GRU) | 20 | units (LSTM) | 20 |
| reservoir_size | 100 | activation (GRU) | ReLU | activation (LSTM) | ReLU |
| spectralRadius | 0.75 | units (Dense) | 20 | units (Dense) | 20 |
| noise_scale | 0.001 | activation (Dense) | ReLU | activation (Dense) | ReLU |
| leaking_rate | 0.5 | units (Dense) | 1 | units (Dense) | 1 |
| sparsity | 0.3 | activation (Dense) | linear | activation (Dense) | linear |
| activation | tanh | optimizer | adam | optimizer | adam |
| feedback | True | | | | |
| regularizationType | Ridge | | | | |
| regularizationParam | auto | | | | |

| Prophet hyperparameter | Value |
|---|---|
| changepoint_prior_scale | 0.05 |
| seasonality_prior_scale | 0.01 |
| daily_seasonality | False |
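The `input_shape: (20, 1)` entries suggest that the recurrent models receive windows of 20 past values with one feature per time step and predict a single next value (`units (Dense): 1`). Under that reading, which is an assumption since the table does not describe the data pipeline, supervised samples could be built from a degradation signal with a sliding window such as the one below. The function name `make_windows` and its `horizon` parameter are hypothetical and used only for illustration.

```python
import numpy as np


def make_windows(series, window=20, horizon=1):
    """Turn a 1-D series into (samples, window, 1) inputs and scalar targets.

    Each input holds `window` consecutive values; its target is the value
    `horizon` steps after the last element of that window.
    """
    series = np.asarray(series, dtype=float)
    n = len(series) - window - horizon + 1
    if n <= 0:
        raise ValueError("series is shorter than window + horizon")
    X = np.stack([series[i:i + window] for i in range(n)])
    # Targets start right after the first window (shifted by horizon - 1).
    y = series[window + horizon - 1:]
    # Add a trailing feature axis so the shape matches input_shape (window, 1).
    return X[:, :, np.newaxis], y


X, y = make_windows(np.arange(30.0), window=20)
print(X.shape, y.shape)  # (10, 20, 1) (10,)
```

With `window=10`, the same helper would match the `(10, 1)` input shape listed for the GRU in the second table; for a purely fully connected model the trailing axis can be dropped with `X[:, :, 0]`.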

| ESN hyperparameter | Value | GRU hyperparameter | Value | Dense hyperparameter | Value |
|---|---|---|---|---|---|
| input_size | 10 | input_shape | (10, 1) | input_shape | 10 |
| output_size | 1 | units (GRU) | 15 | units (Dense) | 50 |
| reservoir_size | 250 | activation (GRU) | ReLU | activation (Dense) | ReLU |
| spectralRadius | 1.0 | recurrent_dropout (GRU) | 0.5 | dropout | 0.5 |
| noise_scale | 0.001 | units (GRU) | 15 | units (Dense) | 25 |
| leaking_rate | 0.7 | activation (GRU) | ReLU | activation (Dense) | ReLU |
| sparsity | 0.2 | recurrent_dropout (GRU) | 0.5 | dropout | 0.5 |
| activation | tanh | units (Dense) | 1 | units (Dense) | 1 |
| feedback | False | activation (Dense) | linear | activation (Dense) | linear |
| regularizationType | Ridge | optimizer | adam | optimizer | adam |
| regularizationParam | 0.01 | | | | |
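The ESN column above lists the settings of a leaky-integrator echo state network with a ridge-regression readout. To show what each parameter controls, the following minimal NumPy sketch uses those values as defaults. It is not the authors' implementation. The uniform weight initialization, reading `sparsity` as the fraction of nonzero recurrent weights, feeding each input window to the reservoir as one vector per step, the constant bias input, the 10-step washout, and injecting state noise only during training are all assumptions. No output feedback is modeled, consistent with `feedback: False`.

```python
import numpy as np


class LeakyESN:
    """Leaky-integrator echo state network with a ridge readout (sketch)."""

    def __init__(self, input_size=10, reservoir_size=250, spectral_radius=1.0,
                 noise_scale=0.001, leaking_rate=0.7, sparsity=0.2,
                 ridge=0.01, washout=10, seed=0):
        self.rng = np.random.default_rng(seed)
        self.leak = leaking_rate
        self.noise_scale = noise_scale
        self.ridge = ridge
        self.washout = washout
        # Input weights, with one extra column for a constant bias input.
        self.W_in = self.rng.uniform(-0.5, 0.5, (reservoir_size, input_size + 1))
        # Sparse recurrent weights: keep roughly a `sparsity` fraction of entries
        # (assumed meaning of the table's "sparsity" parameter).
        W = self.rng.uniform(-0.5, 0.5, (reservoir_size, reservoir_size))
        W[self.rng.random(W.shape) > sparsity] = 0.0
        # Rescale so the largest absolute eigenvalue equals spectral_radius.
        W *= spectral_radius / np.max(np.abs(np.linalg.eigvals(W)))
        self.W = W
        self.W_out = None

    def _features(self, X, noise):
        """Run the reservoir over the rows of X and return [1, u, x] per step."""
        x = np.zeros(self.W.shape[0])
        feats = []
        for u in X:
            u1 = np.concatenate(([1.0], u))
            pre = self.W_in @ u1 + self.W @ x
            pre = pre + noise * self.rng.standard_normal(x.shape)
            # Leaky integration: blend the previous state with the new activation.
            x = (1.0 - self.leak) * x + self.leak * np.tanh(pre)
            feats.append(np.concatenate((u1, x)))
        return np.array(feats)

    def fit(self, X, y):
        X, y = np.asarray(X, float), np.asarray(y, float)
        if len(X) <= self.washout:
            raise ValueError("need more samples than the washout length")
        # State noise is injected only during training, as a regularizer.
        S = self._features(X, self.noise_scale)[self.washout:]
        t = y[self.washout:]
        # Ridge (Tikhonov) solution: W_out = (S^T S + ridge * I)^-1 S^T t
        A = S.T @ S + self.ridge * np.eye(S.shape[1])
        self.W_out = np.linalg.solve(A, S.T @ t)
        return self

    def predict(self, X):
        # Starts from a zero state, so the first outputs are transient.
        return self._features(np.asarray(X, float), 0.0) @ self.W_out
```

A one-step-ahead usage on a toy signal, with windows of 10 values as in `input_size: 10`:

```python
s = np.sin(0.1 * np.arange(300))
X = np.stack([s[i:i + 10] for i in range(len(s) - 10)])
y = s[10:]
pred = LeakyESN().fit(X, y).predict(X)
```

Only the linear readout `W_out` is trained, which is why ESN training reduces to a single regularized least-squares solve rather than gradient descent through time.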

| Problem | Prophet | ESN | LSTM | GRU |
|---|---|---|---|---|
| Crack growth | 252.40 | 109.49 | 2170.89 | 2197.84 |
| Resolution Degradation | 193.41 | 31.60 | 1995.64 | 1997.99 |
| IFP Degradation | 82.28 | 38.20 | 892.36 | 890.27 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Cho, A.D.; Carrasco, R.A.; Ruz, G.A. A RUL Estimation System from Clustered Run-to-Failure Degradation Signals. Sensors 2022, 22, 5323. https://doi.org/10.3390/s22145323
