Affective State during Physiotherapy and Its Analysis Using Machine Learning Methods

Abstract

1. Introduction

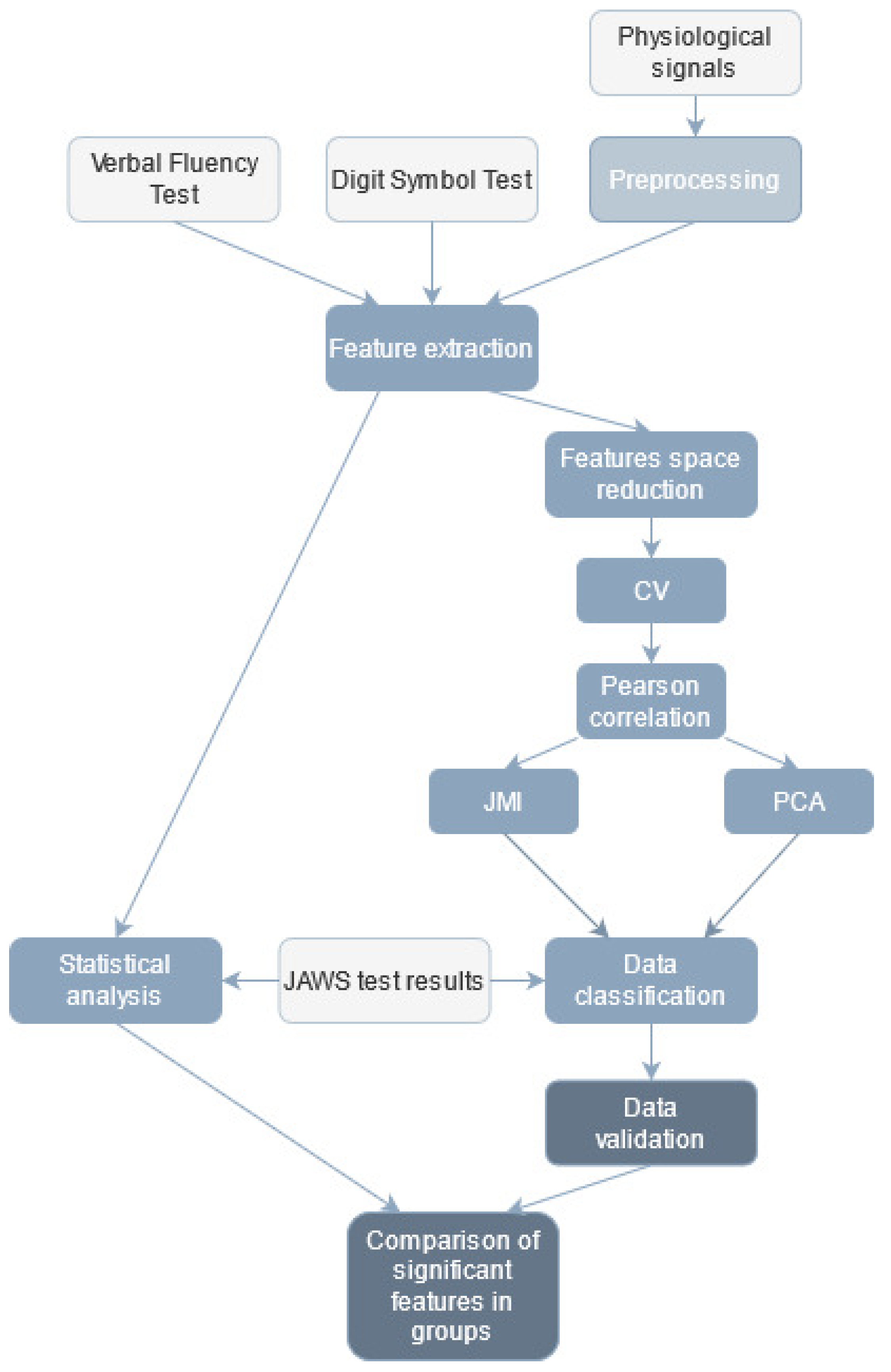

2. Materials and Methods

2.1. Research Group

2.2. Study Design

2.3. Data Acquisition

3. Data Analysis

3.1. Executive and Cognitive Abilities

3.2. Psychological Measurement

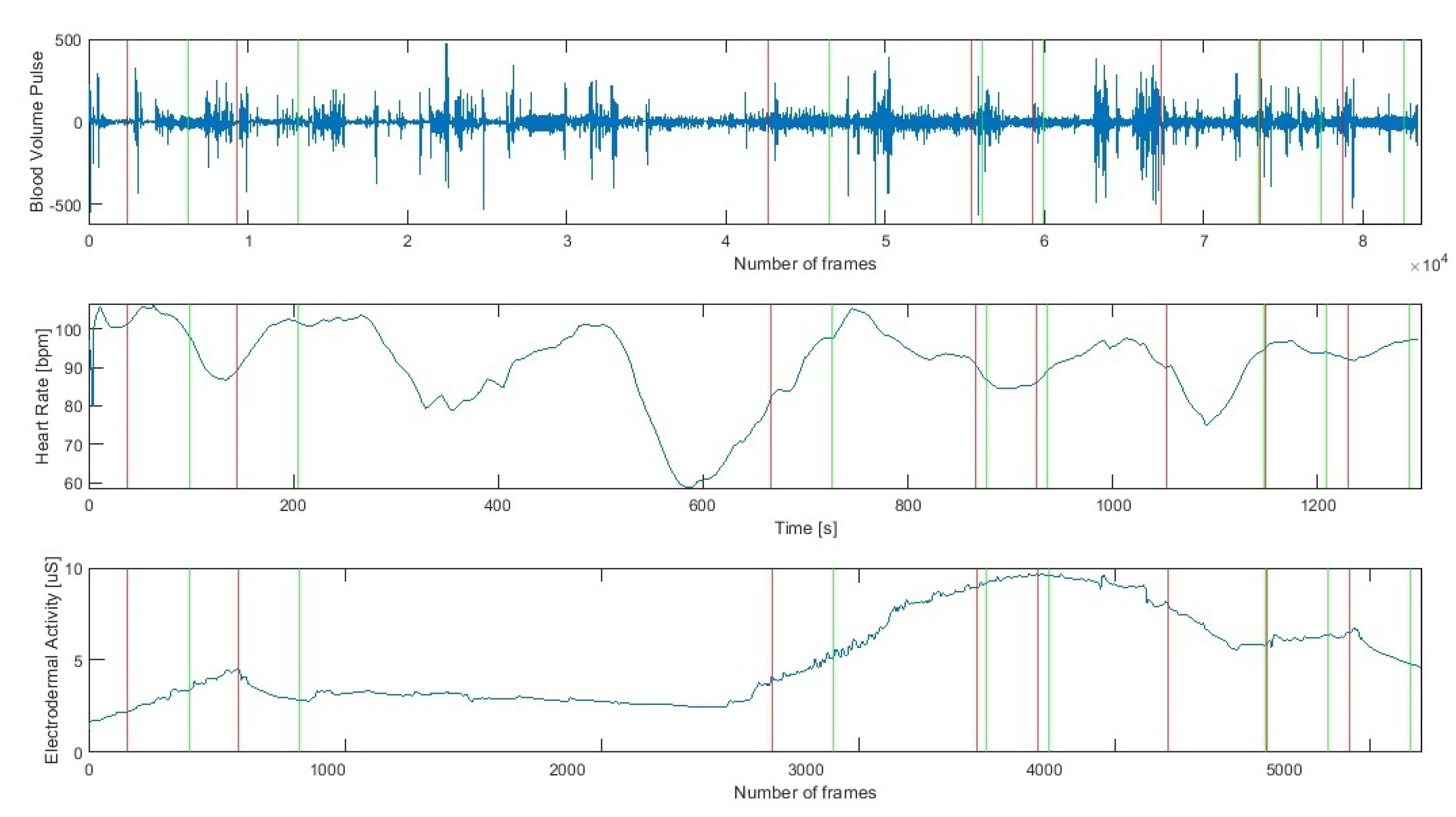

3.3. Signal Preprocessing

- VFT before exercise (vft1),

- DST before exercise (dst1),

- exercise no 1 (ex1),

- exercise no 2 (ex2),

- exercise no 3 (ex3),

- psychological test (ptest),

- VFT after exercise (vft2),

- DST after exercise (dst2).
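The stage labels above are the ones attached to feature names in the tables of this article, for example "kurtosis (dst1)" or "RMS (vft2)". As a minimal sketch of how such per-stage statistical features could be computed, the following assumes each stage is already available as a plain list of samples. The function names, the dictionary layout, and the choice of population (not sample) moments are assumptions made for illustration, not the authors' implementation.

```python
import math
import statistics

# Stage labels exactly as listed in the text.
STAGES = ["vft1", "dst1", "ex1", "ex2", "ex3", "ptest", "vft2", "dst2"]


def stage_features(samples):
    """Return basic statistical features for one stage of one signal.

    Moments are population moments (divided by n); this is an assumption.
    """
    n = len(samples)
    if n == 0:
        raise ValueError("stage contains no samples")
    mean = statistics.fmean(samples)
    centered = [x - mean for x in samples]
    m2 = sum(c ** 2 for c in centered) / n  # variance
    m3 = sum(c ** 3 for c in centered) / n  # 3rd central moment
    m4 = sum(c ** 4 for c in centered) / n  # 4th central moment
    return {
        "mean": mean,
        "median": statistics.median(samples),
        "variance": m2,
        "minimum": min(samples),
        "maximum": max(samples),
        "range": max(samples) - min(samples),
        "RMS": math.sqrt(sum(x * x for x in samples) / n),
        # Standardised moments; set to 0.0 for a constant signal.
        "skewness": m3 / m2 ** 1.5 if m2 > 0 else 0.0,
        "kurtosis": m4 / m2 ** 2 if m2 > 0 else 0.0,  # non-excess kurtosis
    }


def features_per_stage(recording):
    """Flatten {stage: samples} into {"feature (stage)": value}."""
    flat = {}
    for stage in STAGES:
        if stage in recording:
            for name, value in stage_features(recording[stage]).items():
                flat[f"{name} ({stage})"] = value
    return flat
```

Calling `features_per_stage({"ex1": [1.0, 2.0, 3.0, 4.0]})` yields keys such as `"mean (ex1)"`, mirroring the naming used in the feature tables.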

3.3.1. Heart Signals

3.3.2. EDA

3.3.3. ACC

3.4. Statistical Analysis

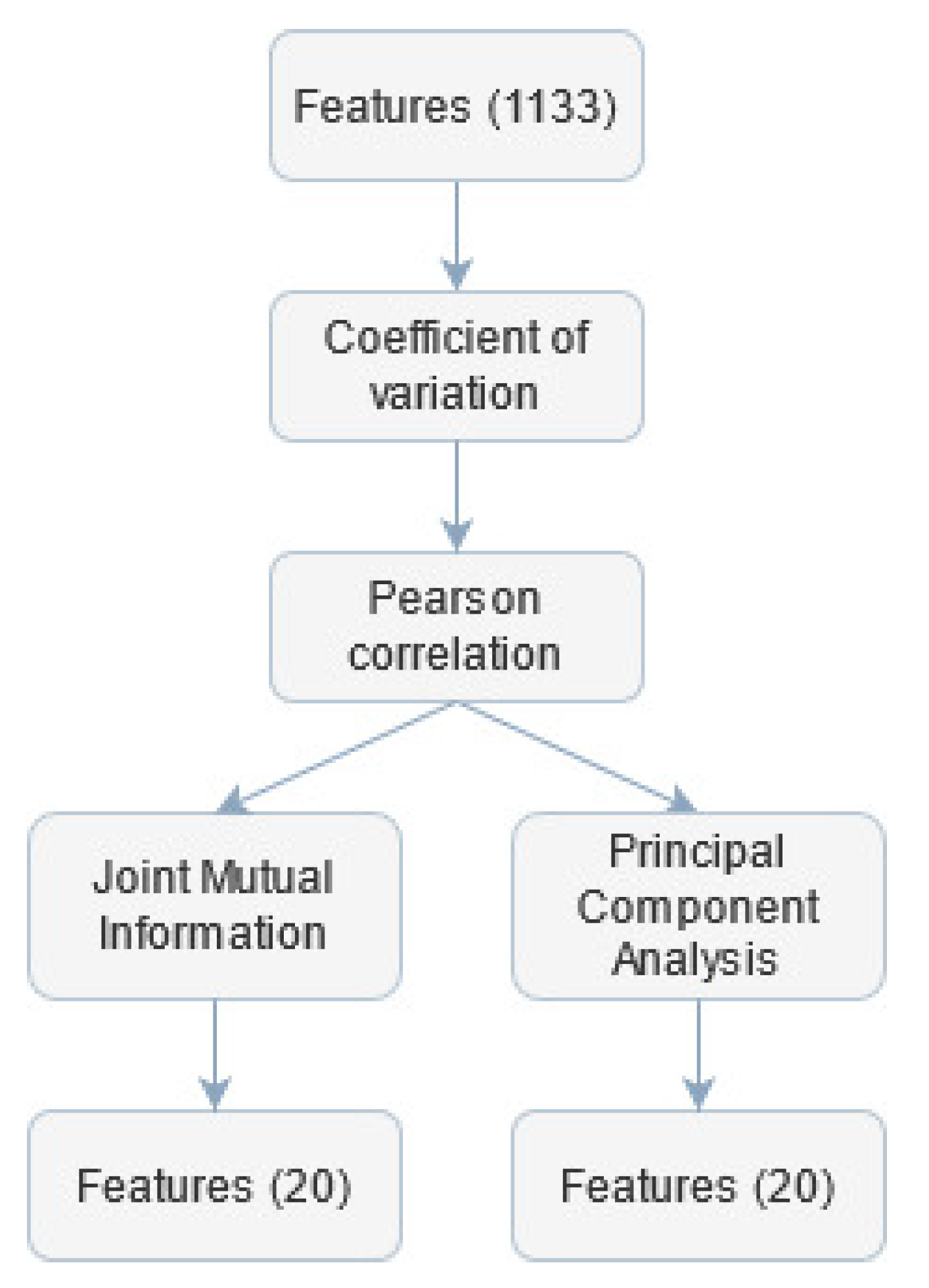

3.5. Features Selection

3.6. Classification

3.7. Method Validation

4. Results

4.1. Statistical Analysis of the Signals

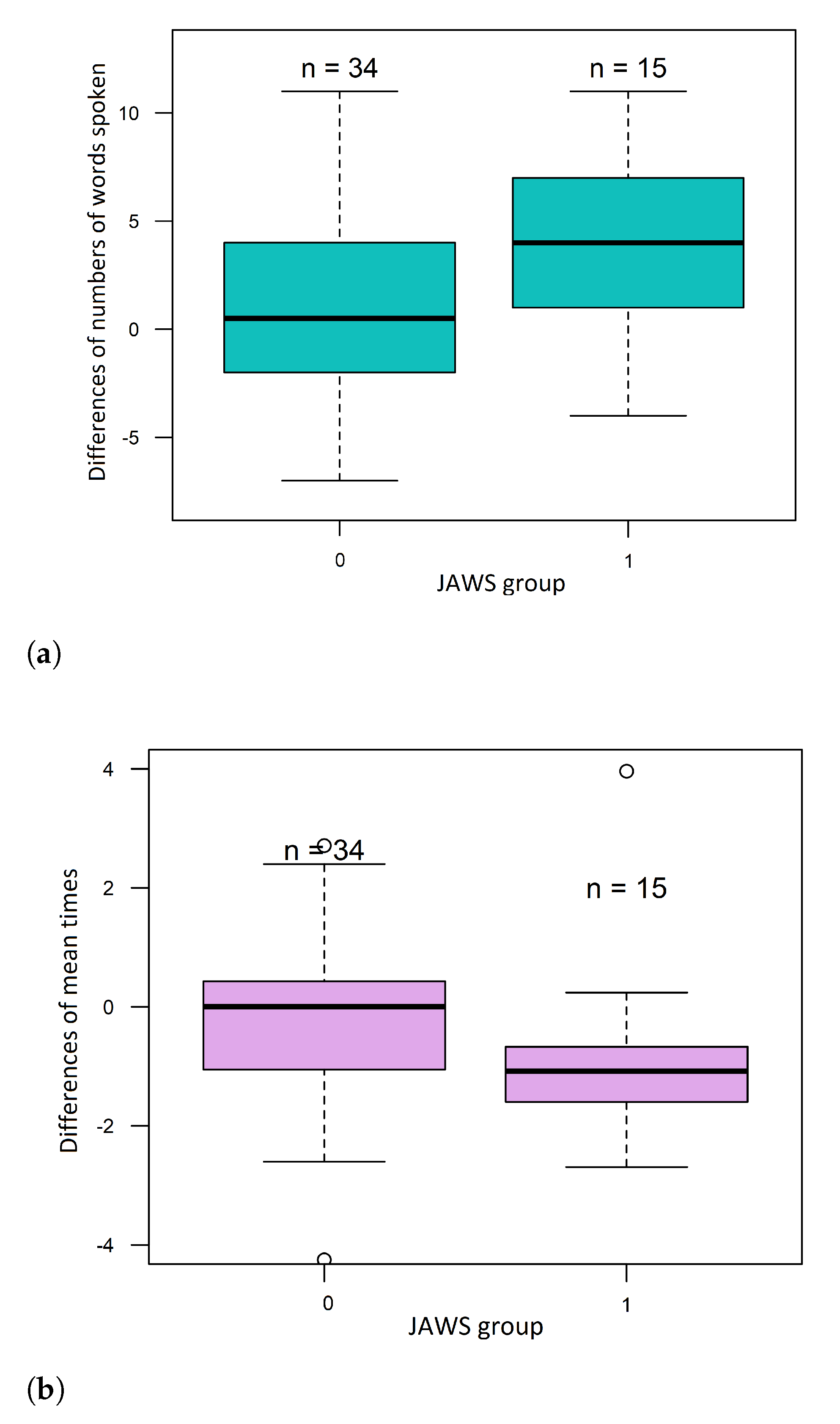

4.2. Psychological Measurement

4.3. Executive and Cognitive Abilities

4.4. Data Classification

4.4.1. Features Selection

4.4.2. Machine Learning

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| ACC X/Y/Z | Accelerometer X/Y/Z |
| ACC | Accuracy |
| BVP | Blood Volume Pulse |
| | Coefficient of variation |
| D4S | Disc4Spine Project |
| DL | Deep Learning |
| EDA | Electrodermal Activity |
| FN | False Negative |
| FP | False Positive |
| GSR | Galvanic Skin Response |
| HR | Heart Rate |
| JAWS | Job-Related Affective Well-Being Scale |
| JMI | Joint Mutual Information |
| kNN | k-Nearest Neighbours |
| ML | Machine Learning |
| obj | Observed data of EDA |
| PCA | Principal Component Analysis |
| PPV | Precision |
| TN | True Negative |
| TNR | True Negative Rate |
| TP | True Positive |
| TPR | True Positive Rate |
| VFT | Verbal Fluency Test |

| Signal | Significant Features |
|---|---|
| BVP | total sum (vft1), kurtosis (dst1), minimum (ex3), maximum (ex3), 4th moment (vft2), 5th moment (vft2), skewness (vft2), RMS (vft2) |
| HR | sum power (vft1), mean (ex1), 25th percentile (ex3), skewness (ex3), median (ptest), variance (ptest), minimum (dst2) |
| EDA | 5th moment (vft2) |
| ACC X | mean (vft1, ex2, ex3, dst2), median (vft1, ex2, ex3, ptest), variance (dst1), 25th percentile (vft1, ptest, vft2), 75th percentile (vft1, dst1, ptest, vft2, dst2), minimum (vft1, dst1, ex2, ex3, ptest, vft2, dst2), maximum (vft1, dst1, ex2, ex3, ptest, vft2), range (dst1), 4th moment (dst1), 5th moment (ptest), total sum (ex2) |
| ACC Y | mean (vft1, ptest), median (vft1), 25th percentile (vft1, dst1, ptest), 75th percentile (vft1, dst1, ex2, ptest), minimum (vft1, dst1, ex1, ex2, ex3, ptest), maximum (vft1, dst1, ex1, ex2, ex3, ptest), total sum (vft1, ex1, ptest), range (dst1), kurtosis (vft2) |
| ACC Z | median power (dst1), 4th moment (dst1), skewness (ptest), kurtosis (dst2) |

| Variable | JAWS below median | JAWS above median |
|---|---|---|
| Mean | 35.25 | 46.88 |
| Standard deviation | 2.98 | 3.06 |
| Median | 37.60 | 46 |
| Min | 33 | 45 |
| Max | 41 | 57 |
| Range | 12–60 | |
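The table above splits participants into "below median" and "above median" JAWS groups. A minimal sketch of such a median split follows. How the authors treated scores exactly equal to the median is not stated here, so assigning ties to the "below" group is an assumption, as are the function name and the made-up scores in the usage note.

```python
import statistics


def median_split(scores):
    """Label each score as 'below' or 'above' the group median.

    Assumption: a score equal to the median is labelled 'below'.
    """
    cutoff = statistics.median(scores)
    labels = ["above" if score > cutoff else "below" for score in scores]
    return cutoff, labels
```

For the illustrative scores `[30, 38, 44, 50]` the cutoff is the mean of the two middle values, and the first two scores fall in the "below" group.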

| Item | Group | Mean | SD | Statistics | p | Effect Size |
|---|---|---|---|---|---|---|
| JAWS1 angry | below | 4.533 | 0.915 | 256.500 | 0.978 | |
| | above | 4.676 | 0.535 | | | |
| JAWS2 anxious | below | 4.200 | 1.082 | 270.500 | 0.717 | |
| | above | 4.441 | 0.660 | | | |
| JAWS3 at ease | below | 2.400 | 1.056 | 416.000 | 0.001 | 0.631 |
| | above | 3.618 | 0.922 | | | |
| JAWS4 gloomy | below | 4.133 | 1.060 | 339.000 | 0.022 | 0.329 |
| | above | 4.794 | 0.410 | | | |
| JAWS5 discouraged | below | 4.000 | 1.309 | 276.500 | 0.619 | |
| | above | 4.382 | 0.652 | | | |
| JAWS6 disgusted | below | 4.467 | 0.915 | 271.500 | 0.662 | |
| | above | 4.588 | 0.743 | | | |
| JAWS7 energetic | below | 1.867 | 0.990 | 462.00 | <0.001 | 0.812 |
| | above | 3.882 | 1.008 | | | |
| JAWS8 excited | below | 2.267 | 1.100 | 339.000 | 0.063 | |
| | above | 3.029 | 1.337 | | | |
| JAWS9 fatigue | below | 3.733 | 1.223 | 217.000 | 0.402 | |
| | above | 3.382 | 1.349 | | | |
| JAWS10 inspired | below | 1.733 | 0.884 | 422.500 | <0.001 | 0.657 |
| | above | 3.324 | 1.273 | | | |
| JAWS11 relaxed | below | 2.200 | 1.082 | 421.000 | <0.001 | 0.651 |
| | above | 3.500 | 0.896 | | | |
| JAWS12 satisfied | below | 2.067 | 1.033 | 397.000 | <0.001 | 0.557 |
| | above | 3.265 | 1.082 | | | |
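The table does not name the test behind the "Statistics" column. The reported values are numerically consistent with a Mann-Whitney U statistic paired with a rank-biserial correlation as effect size, provided the groups contain 15 (below) and 34 (above) participants. Both the test and the group sizes are inferences from the numbers, not statements from the article. Under those assumptions, the effect size can be recomputed as follows.

```python
def rank_biserial(u_statistic, n_first, n_second):
    """Rank-biserial correlation from a Mann-Whitney U statistic.

    r = 2U / (n1 * n2) - 1, ranging from -1 to 1.
    """
    return 2.0 * u_statistic / (n_first * n_second) - 1.0


# Assumed group sizes (inferred, not given in the table).
N_BELOW, N_ABOVE = 15, 34

# Example: the "JAWS7 energetic" row reports a statistic of 462.00.
effect_energetic = round(rank_biserial(462.0, N_BELOW, N_ABOVE), 3)
```

With these assumed group sizes, the same formula also returns the tabulated effect sizes for the other rows where one is reported, which is what motivates the inference.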

| Test | Variable | Before | After | Differences after/before |
|---|---|---|---|---|
| Verbal Fluency Test | Number of spoken words | 14.98 | 17.02 | 4.29 |
| | Mean time | 4.14 | 3.64 | 1.19 |
| | Popularity of letter | 4.08 | 3.77 | 2.23 |
| | Fluency coefficient | 5.64 | 5.80 | 3.98 |
| Digit Symbol Test | Number of total matches | 34.47 | 39.41 | 6.33 |
| | Number of correct matches | 32.41 | 36.76 | 5.86 |
| | Digit coefficient | 0.92 | 0.93 | 0.06 |

| Signal | Features |
|---|---|
| BVP | mean (ptest), median (ex3), mode (ex1, ex2), quartile std (ex2), 4th moment (ex1), 5th moment (ex1, vft2 *), total sum (ex1), sum power (ex1, ptest), mean power (ex2, ex3), RMS (ex3), entropy (ex3) |
| EDA | tonicity (vft1), obj (dst1), mean distance regression (dst1), number of GSR (dst1), shift (dst1) |

| Metric | PCA | JMI |
|---|---|---|
| Accuracy (ACC) | 79.60% | 81.63% |
| Sensitivity (TPR) | 88.24% | 85.71% |
| Specificity (TNR) | 60.00% | 71.43% |
| Precision (PPV) | 0.83 | 0.88 |
| | 0.86 | 0.90 |
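The named metrics in the table follow the standard definitions based on the confusion-matrix counts TP, FP, TN and FN listed in the Abbreviations. The sketch below shows those definitions. The counts in the usage example are hypothetical, chosen only so that the results land close to the PCA column; they are not taken from the article.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute accuracy, sensitivity, specificity and precision
    from confusion-matrix counts (all returned as fractions)."""
    total = tp + fp + tn + fn
    return {
        "ACC": (tp + tn) / total,  # accuracy
        "TPR": tp / (tp + fn),     # sensitivity, true positive rate
        "TNR": tn / (tn + fp),     # specificity, true negative rate
        "PPV": tp / (tp + fp),     # precision
    }


# Hypothetical counts for illustration only.
example = classification_metrics(tp=30, fp=6, tn=9, fn=4)
```

Here `example["TPR"]` is 30/34 and `example["TNR"]` is 9/15, which illustrates how a classifier can reach high sensitivity while specificity stays noticeably lower when the negative class is small.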

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Romaniszyn-Kania, P.; Pollak, A.; Bugdol, M.D.; Bugdol, M.N.; Kania, D.; Mańka, A.; Danch-Wierzchowska, M.; Mitas, A.W. Affective State during Physiotherapy and Its Analysis Using Machine Learning Methods. Sensors 2021, 21, 4853. https://doi.org/10.3390/s21144853
