A Guide to Parent-Child fNIRS Hyperscanning Data Processing and Analysis

Abstract

1. Introduction

2. Materials and Methods

2.1. Sample Description

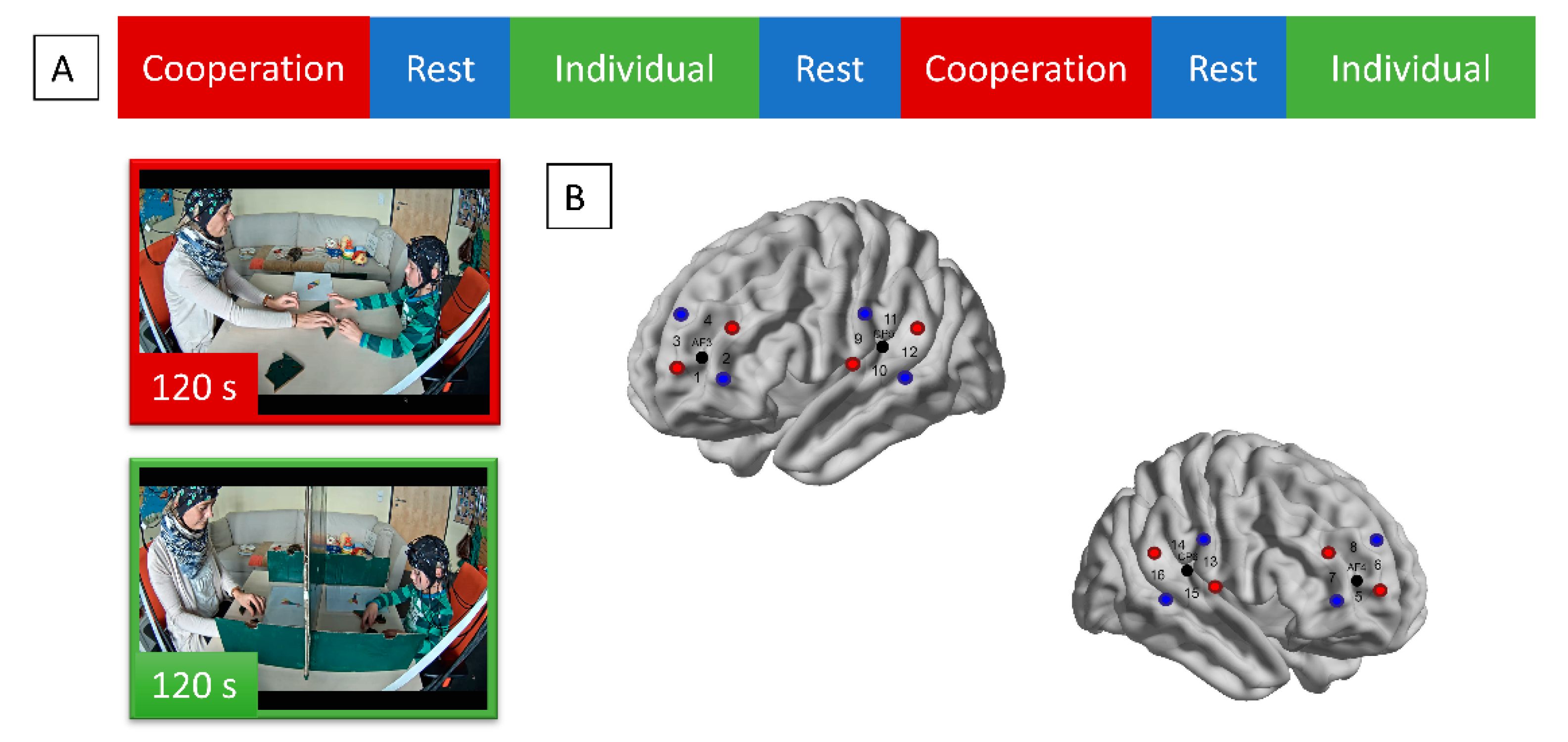

2.2. Experimental Procedure

2.3. General Information on fNIRS Data Acquisition and Analysis

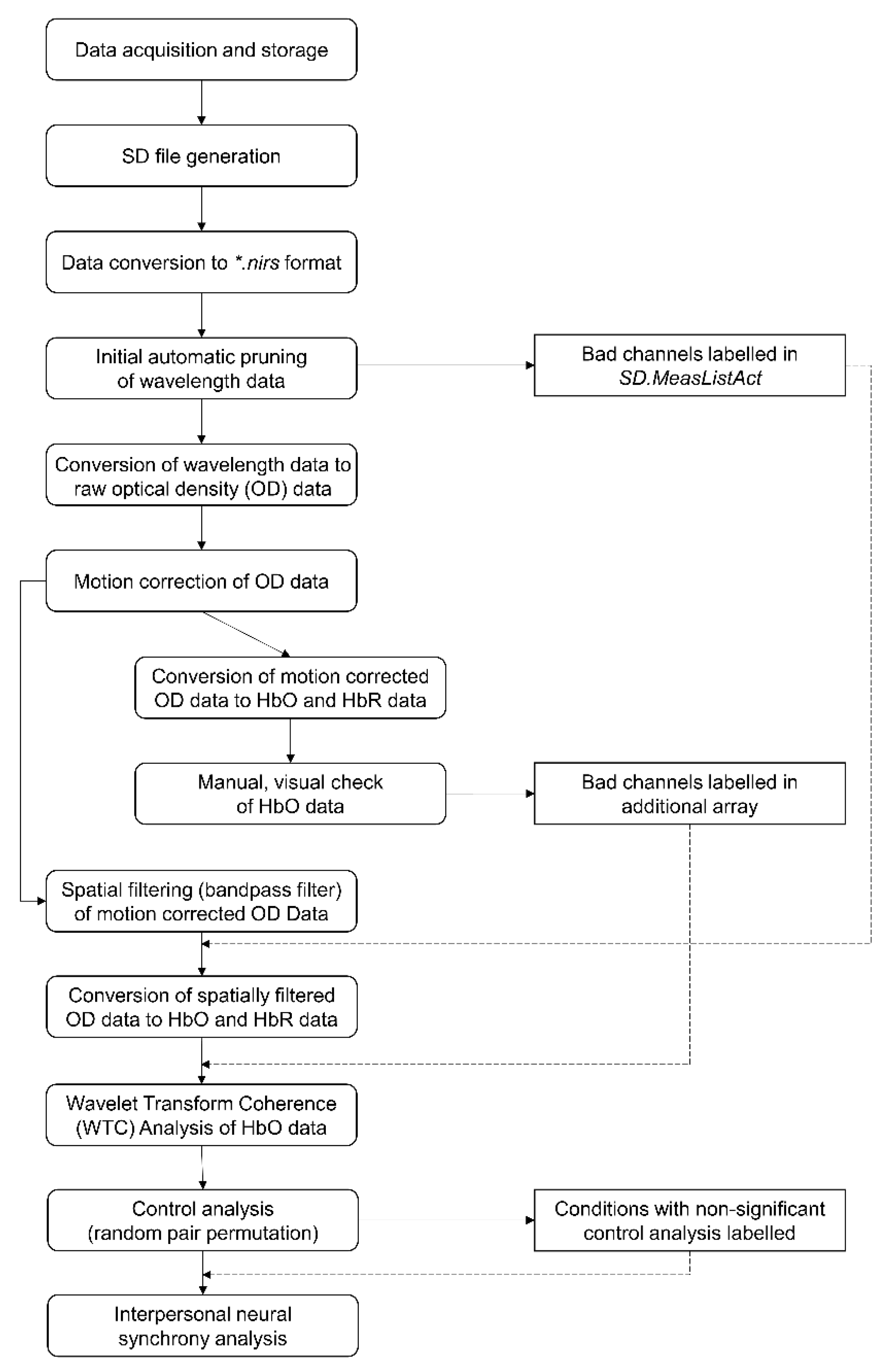

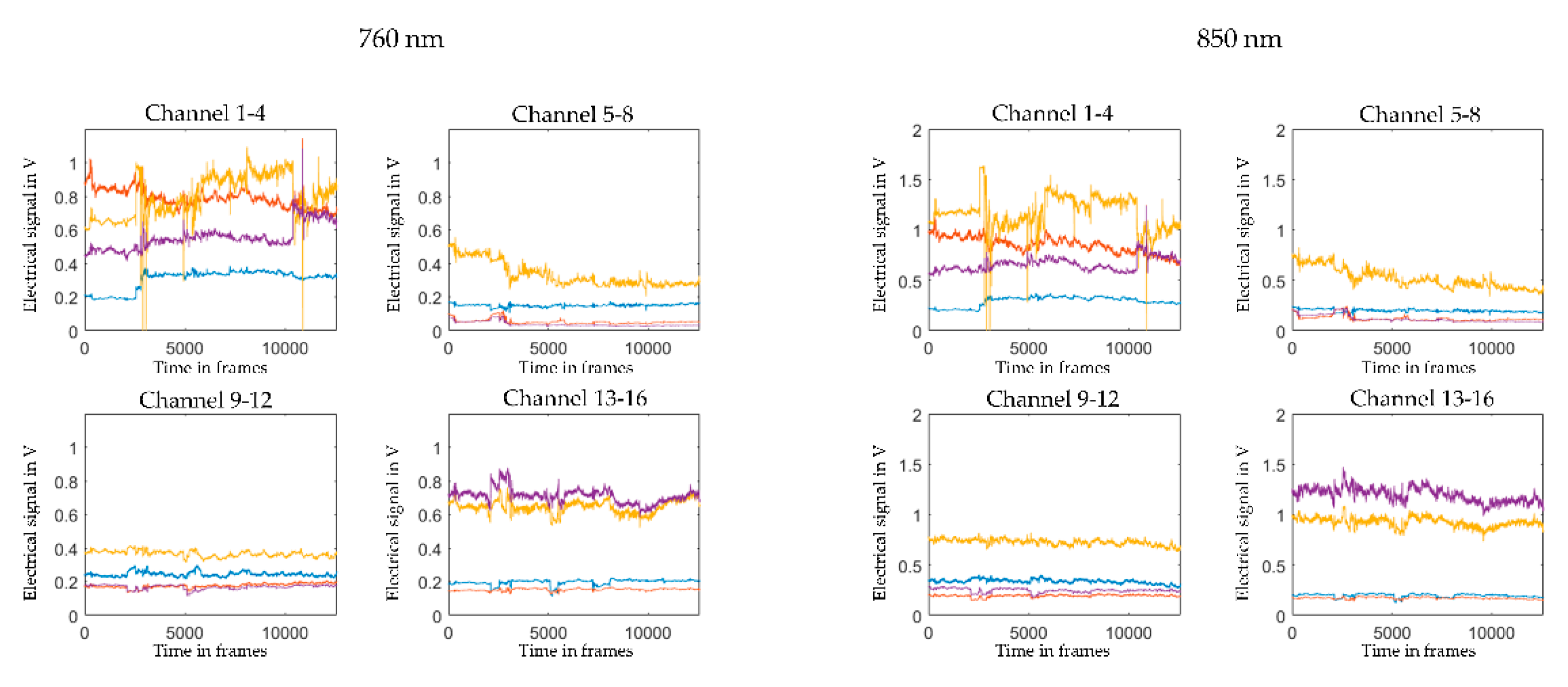

2.4. Optode Configuration and Raw Data Conversion

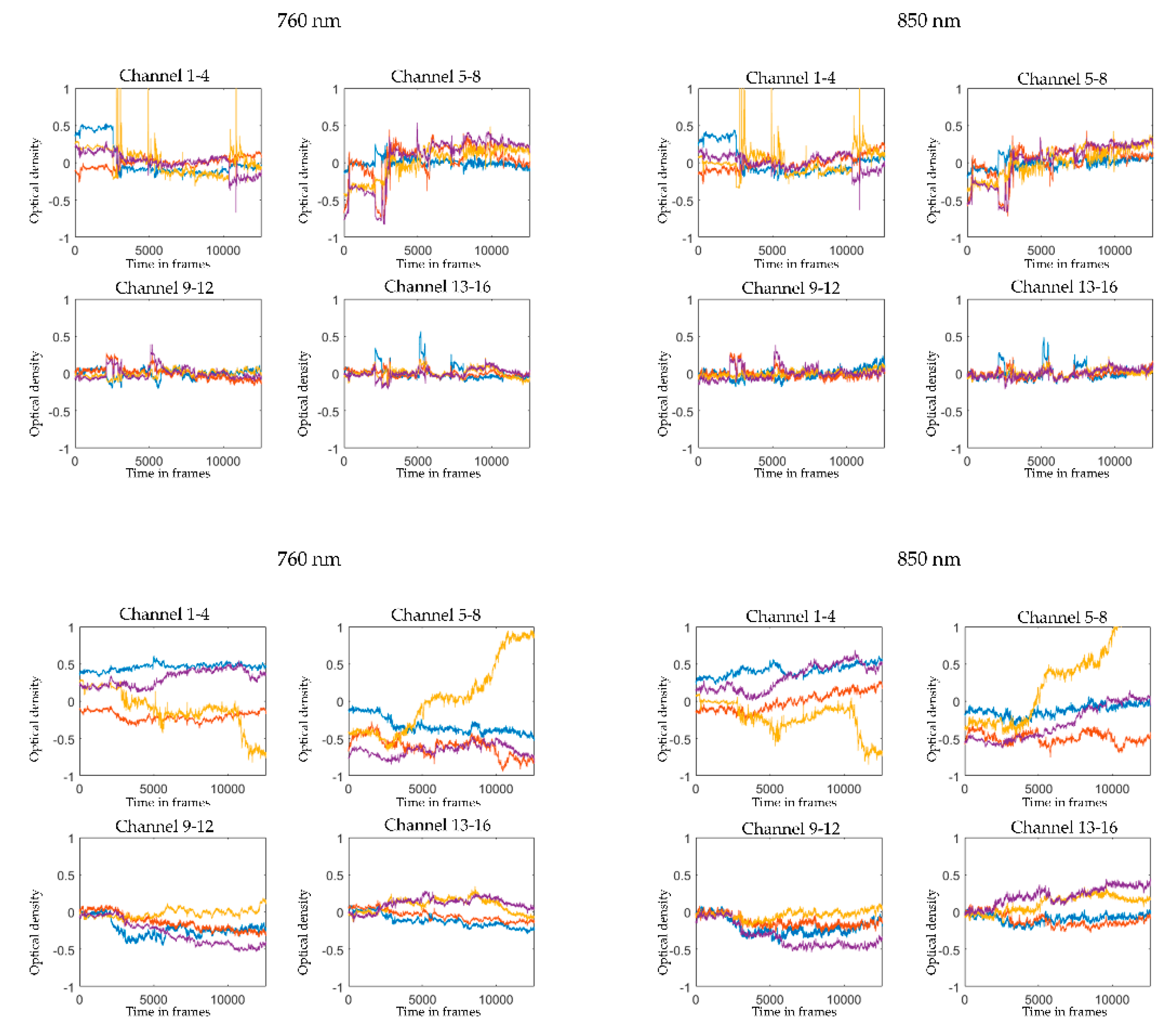

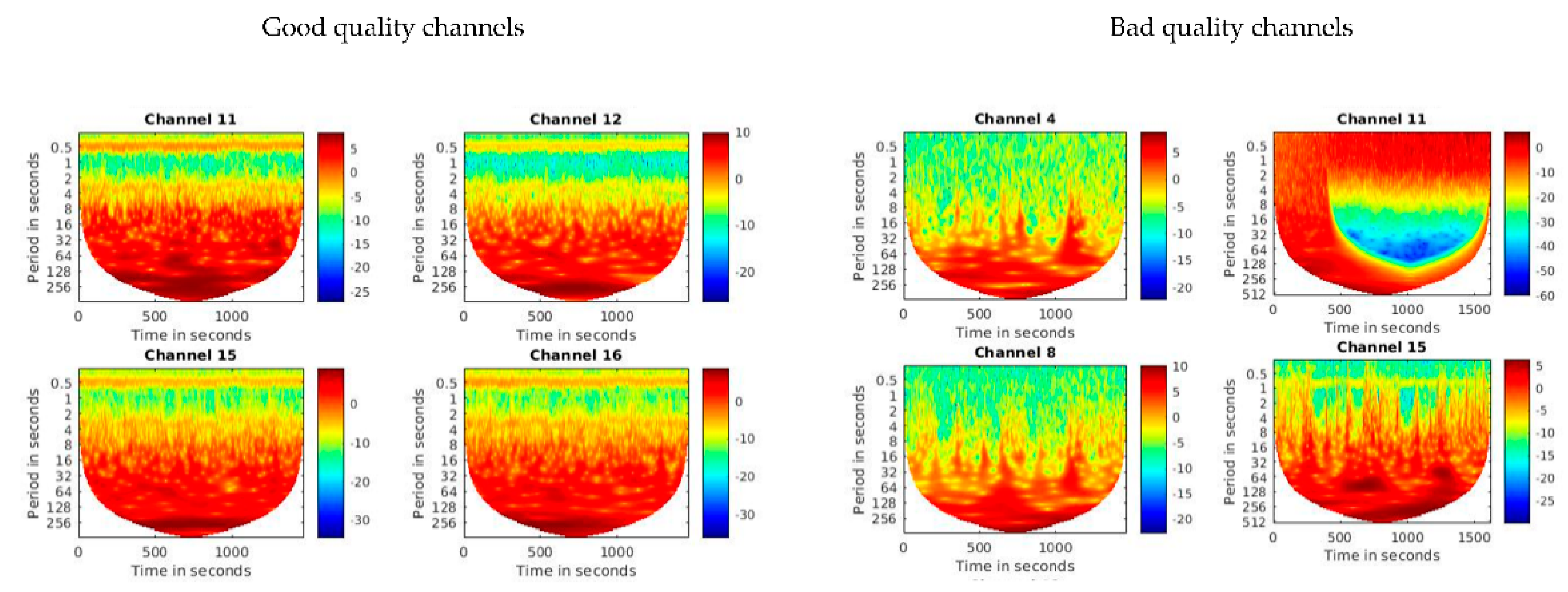

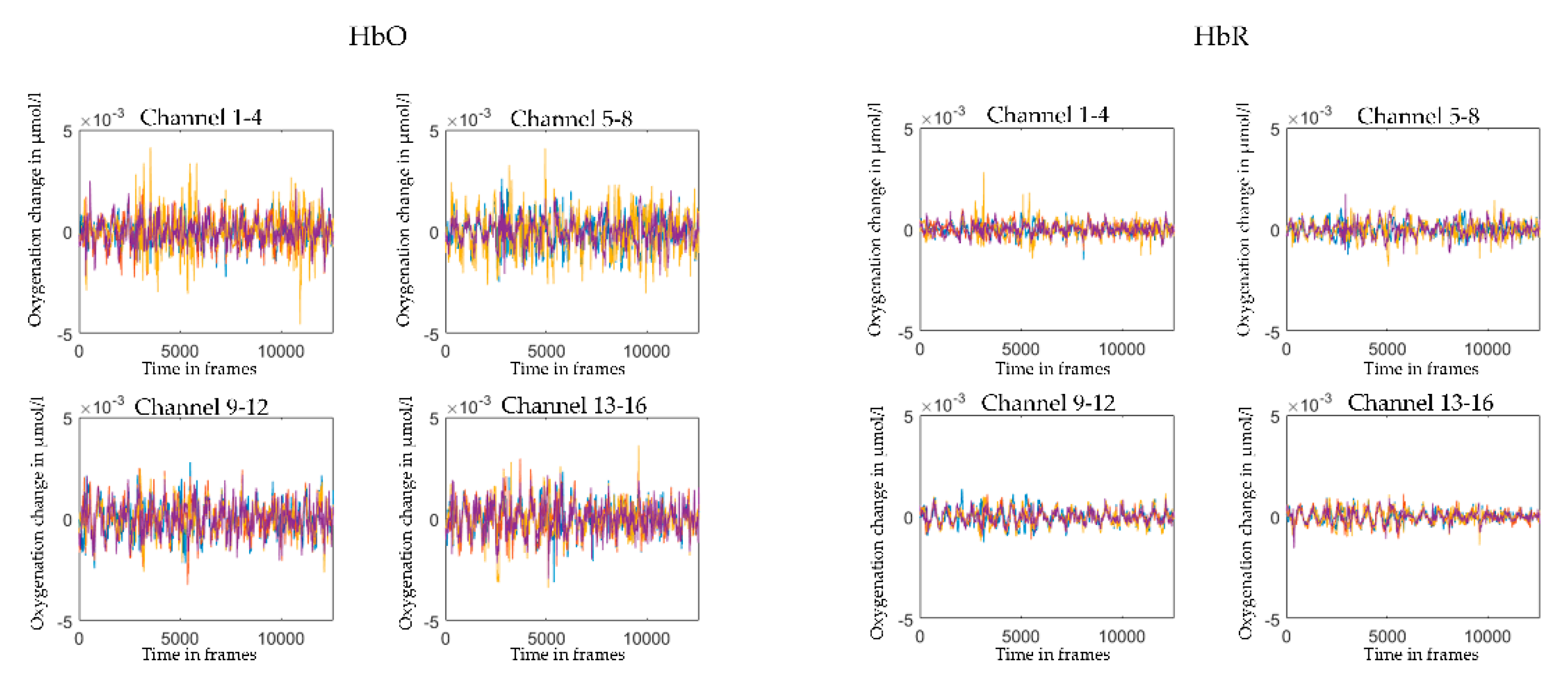

2.5. Pre-Processing and Visual Quality Check

2.6. Wavelet Transform Coherence (WTC)

2.7. Control Analysis

2.8. Statistical Analysis

3. Results

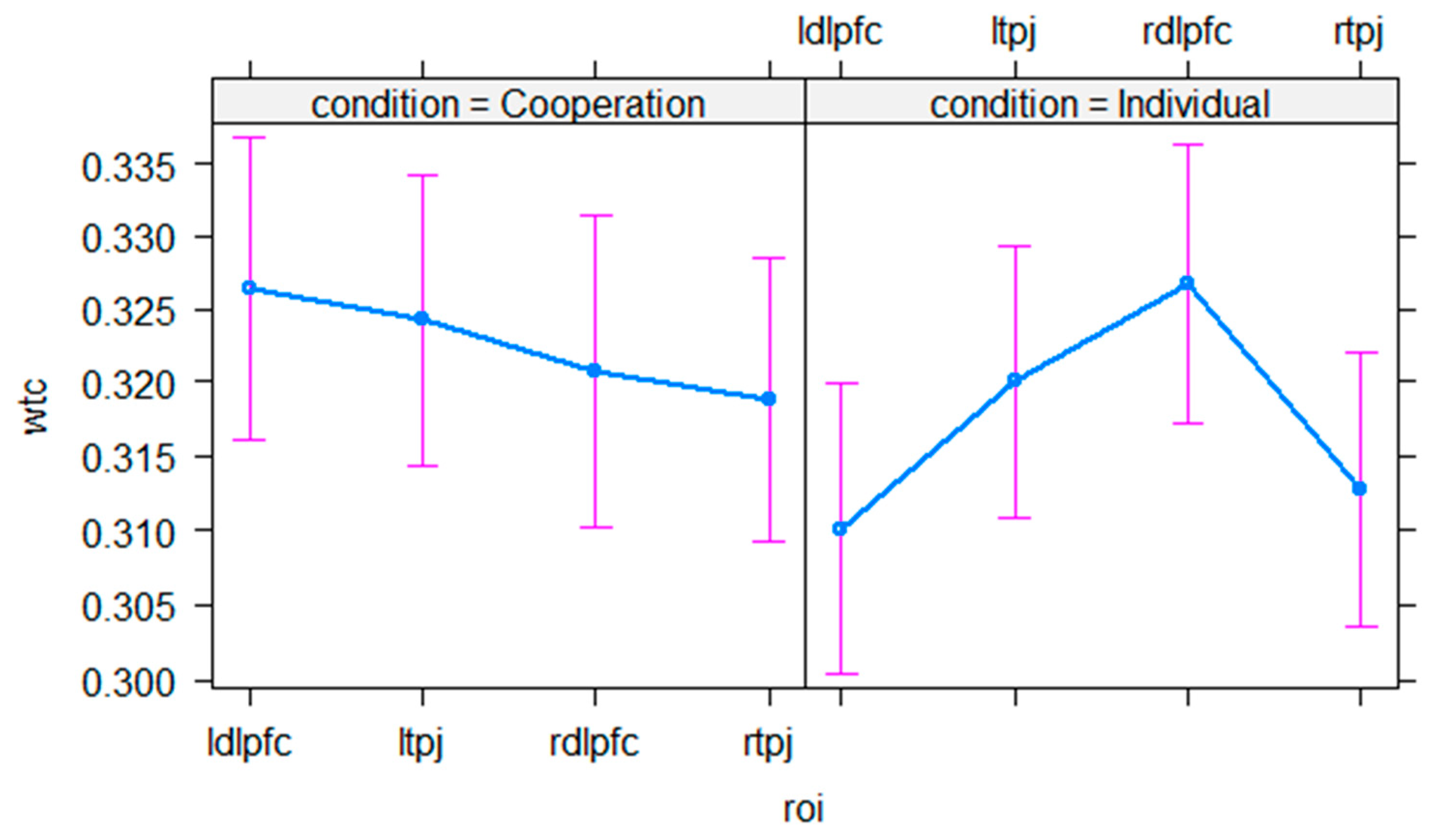

3.1. Control Analysis

3.2. Interpersonal Neural Synchrony

4. Discussion

4.1. Exemplary Dataset

4.2. General Considerations

4.3. Limitations

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Term | Estimates | SE | CI Lower | CI Upper | RE SD | χ² | df | p |
|---|---|---|---|---|---|---|---|---|
| (Intercept) | −0.726 | 0.018 | −0.760 | −0.692 | 0.027 | | | |
| condition | | | | | | 0.411 | 1 | 0.521 |
| condition individual | −0.076 | 0.024 | −0.124 | −0.030 | 0.029 | | | |
| pairing | | | | | | 0.345 | 1 | 0.556 |
| pairing random | −0.021 | 0.023 | −0.066 | 0.023 | | | | |
| roi | | | | | | 3.489 | 3 | 0.322 |
| roi ltpj | −0.011 | 0.022 | −0.055 | 0.034 | | | | |
| roi rdlpfc | −0.025 | 0.024 | −0.071 | 0.022 | | | | |
| roi rtpj | −0.036 | 0.022 | −0.080 | 0.008 | | | | |
| condition : pairing | | | | | | 5.396 | 1 | 0.020 |
| condition individual : pairing random | 0.066 | 0.032 | 0.004 | 0.128 | | | | |
| condition : roi | | | | | | 7.559 | 3 | 0.056 |
| condition individual : roi ltpj | 0.057 | 0.032 | −0.005 | 0.119 | | | | |
| condition individual : roi rdlpfc | 0.102 | 0.033 | 0.038 | 0.165 | | | | |
| condition individual : roi rtpj | 0.048 | 0.032 | −0.013 | 0.110 | | | | |
| pairing : roi | | | | | | 4.474 | 3 | 0.215 |
| pairing random : roi ltpj | −0.002 | 0.031 | −0.064 | 0.059 | | | | |
| pairing random : roi rdlpfc | 0.014 | 0.032 | −0.050 | 0.077 | | | | |
| pairing random : roi rtpj | 0.021 | 0.031 | −0.041 | 0.082 | | | | |
| condition : pairing : roi | | | | | | 3.959 | 3 | 0.266 |
| condition individual : pairing random : roi ltpj | −0.027 | 0.044 | −0.114 | 0.059 | | | | |
| condition individual : pairing random : roi rdlpfc | −0.082 | 0.045 | −0.171 | 0.006 | | | | |
| condition individual : pairing random : roi rtpj | −0.012 | 0.044 | −0.098 | 0.075 | | | | |

| Term | Estimates | SE | CI Lower | CI Upper | RE SD | χ² | df | p |
|---|---|---|---|---|---|---|---|---|
| (Intercept) | −0.724 | 0.024 | −0.772 | −0.677 | 0.044 | | | |
| condition | | | | | | 1.540 | 1 | 0.214 |
| condition individual | −0.075 | 0.033 | −0.140 | −0.010 | 0.053 | | | |
| roi | | | | | | 4.312 | 3 | 0.230 |
| roi ltpj | −0.010 | 0.030 | −0.069 | 0.049 | | | | |
| roi rdlpfc | −0.025 | 0.031 | −0.088 | 0.036 | | | | |
| roi rtpj | −0.034 | 0.030 | −0.093 | 0.024 | | | | |
| condition : roi | | | | | | 5.647 | 3 | 0.130 |
| condition individual : roi ltpj | 0.056 | 0.042 | −0.027 | 0.139 | | | | |
| condition individual : roi rdlpfc | 0.103 | 0.043 | 0.018 | 0.188 | | | | |
| condition individual : roi rtpj | 0.047 | 0.042 | −0.036 | 0.130 | | | | |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Nguyen, T.; Hoehl, S.; Vrtička, P. A Guide to Parent-Child fNIRS Hyperscanning Data Processing and Analysis. Sensors 2021, 21, 4075. https://doi.org/10.3390/s21124075
