STDD: Short-Term Depression Detection with Passive Sensing

Abstract

1. Introduction

2. Related Work

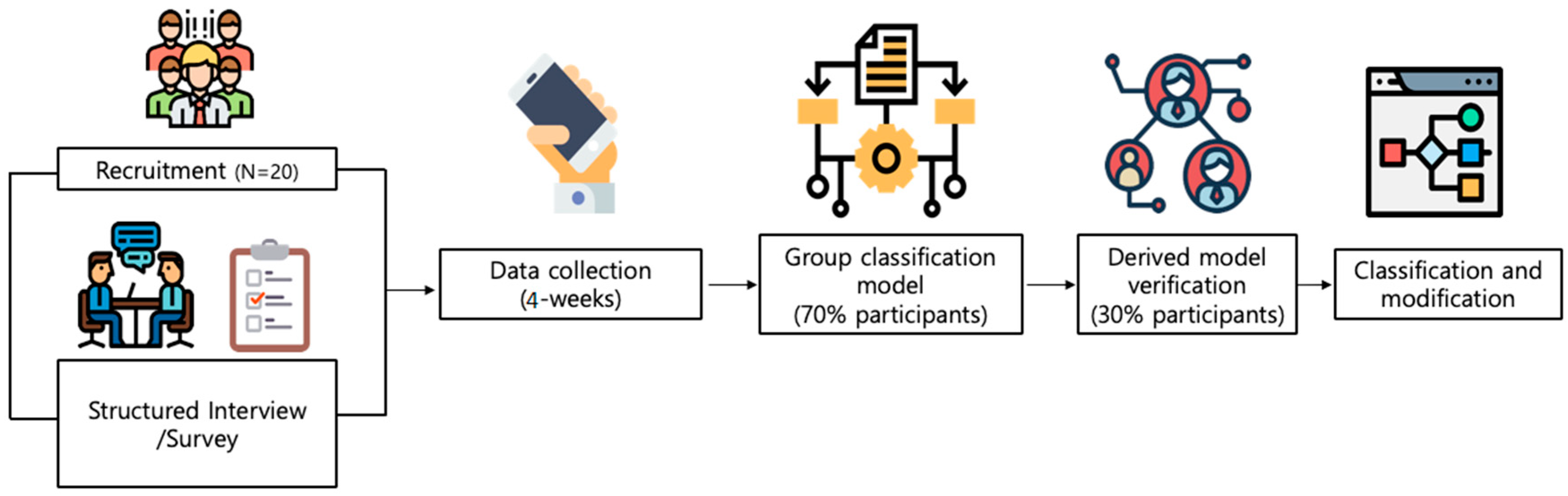

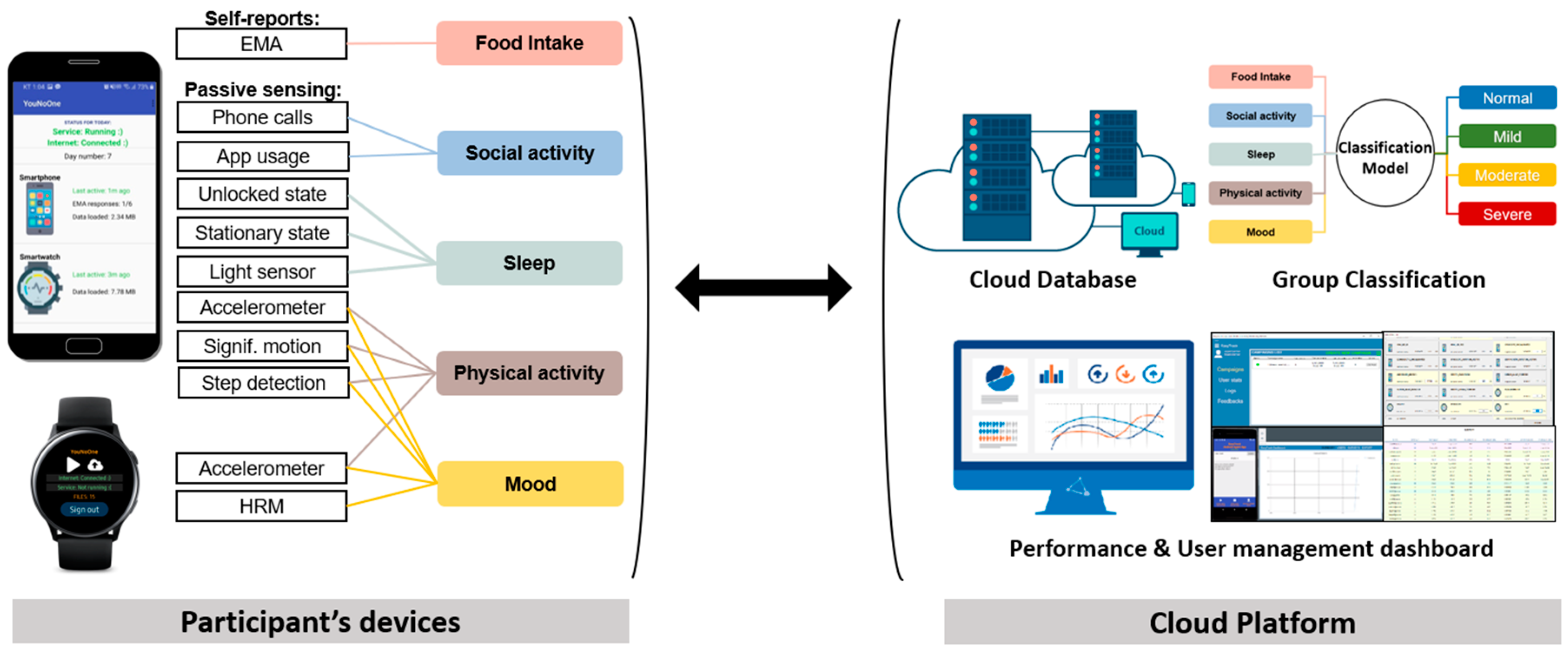

3. Study Design

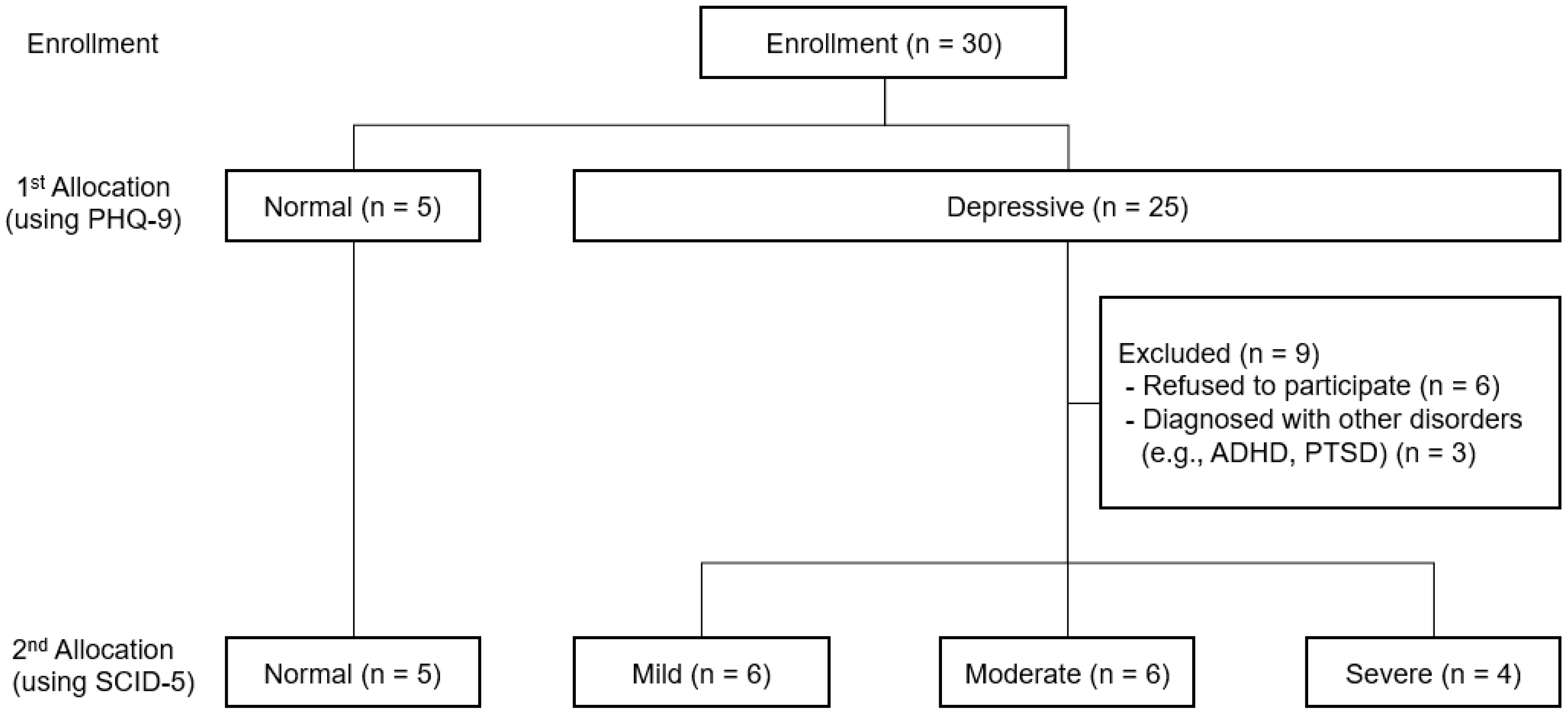

3.1. Entry and Exit

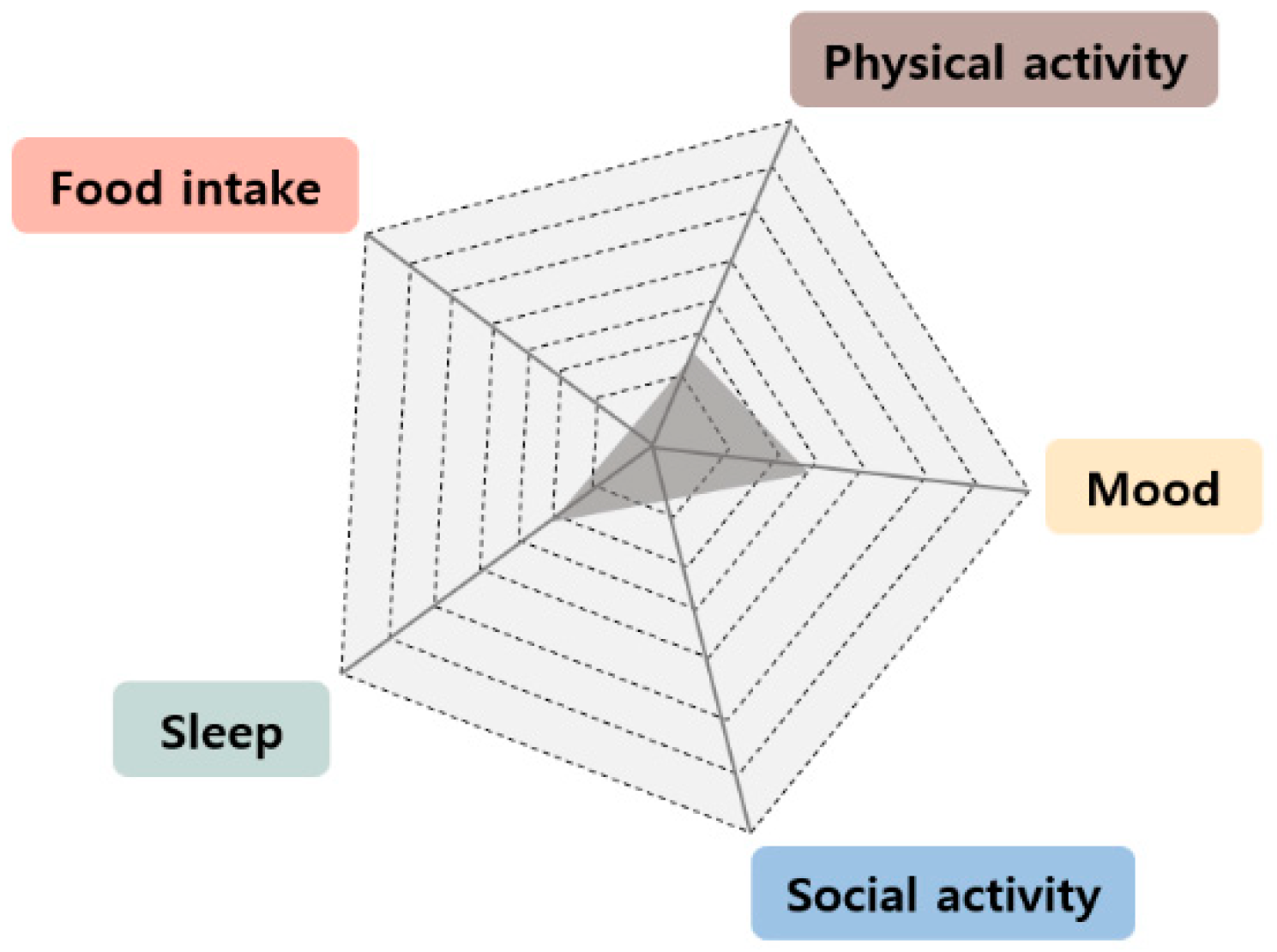

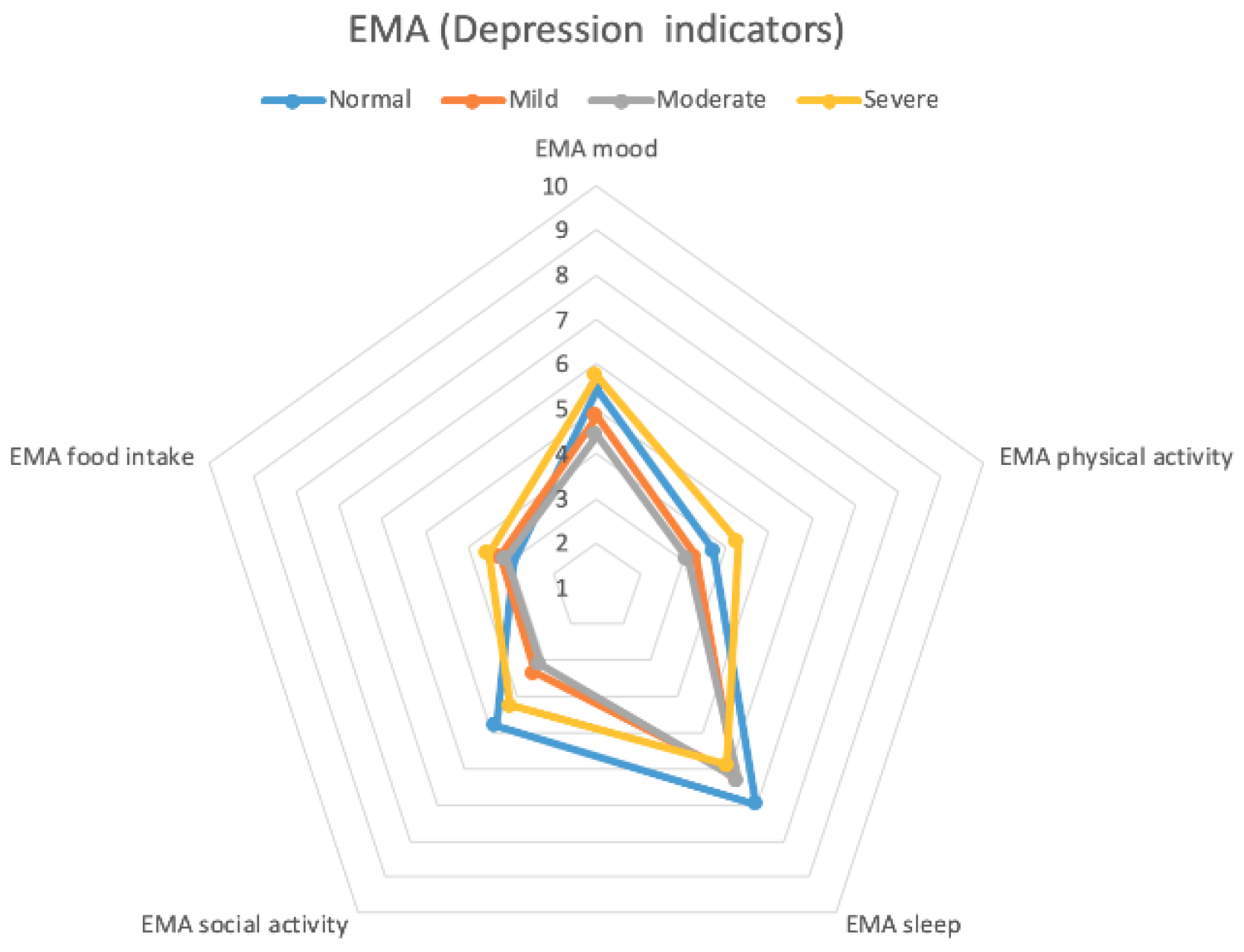

3.2. Depression Gauge: 5 Symptom Clusters

3.3. Group Classification

3.4. Data Collection and Privacy

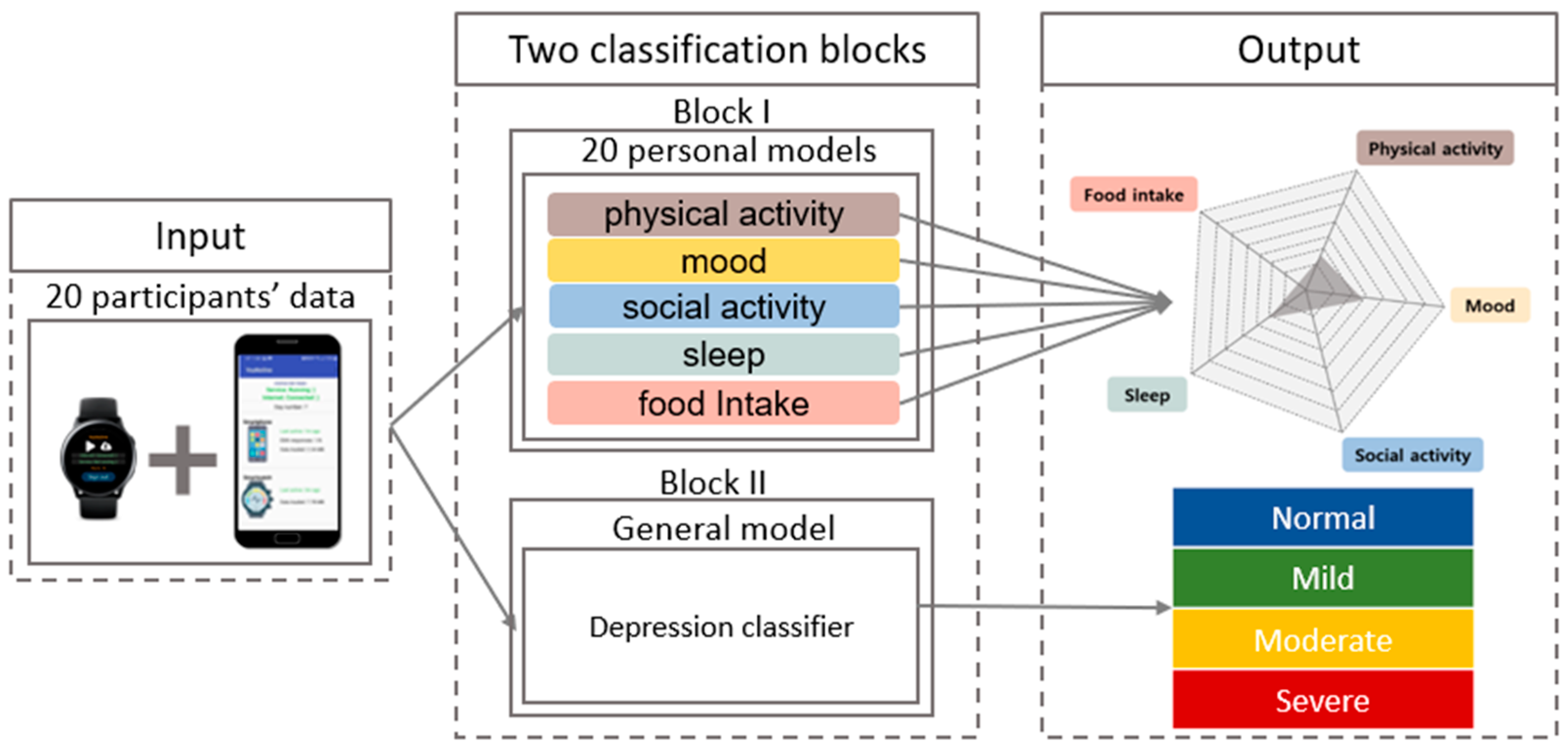

4. System Architecture and Passive Sensing

4.1. Observational Study and Depression Group Classification

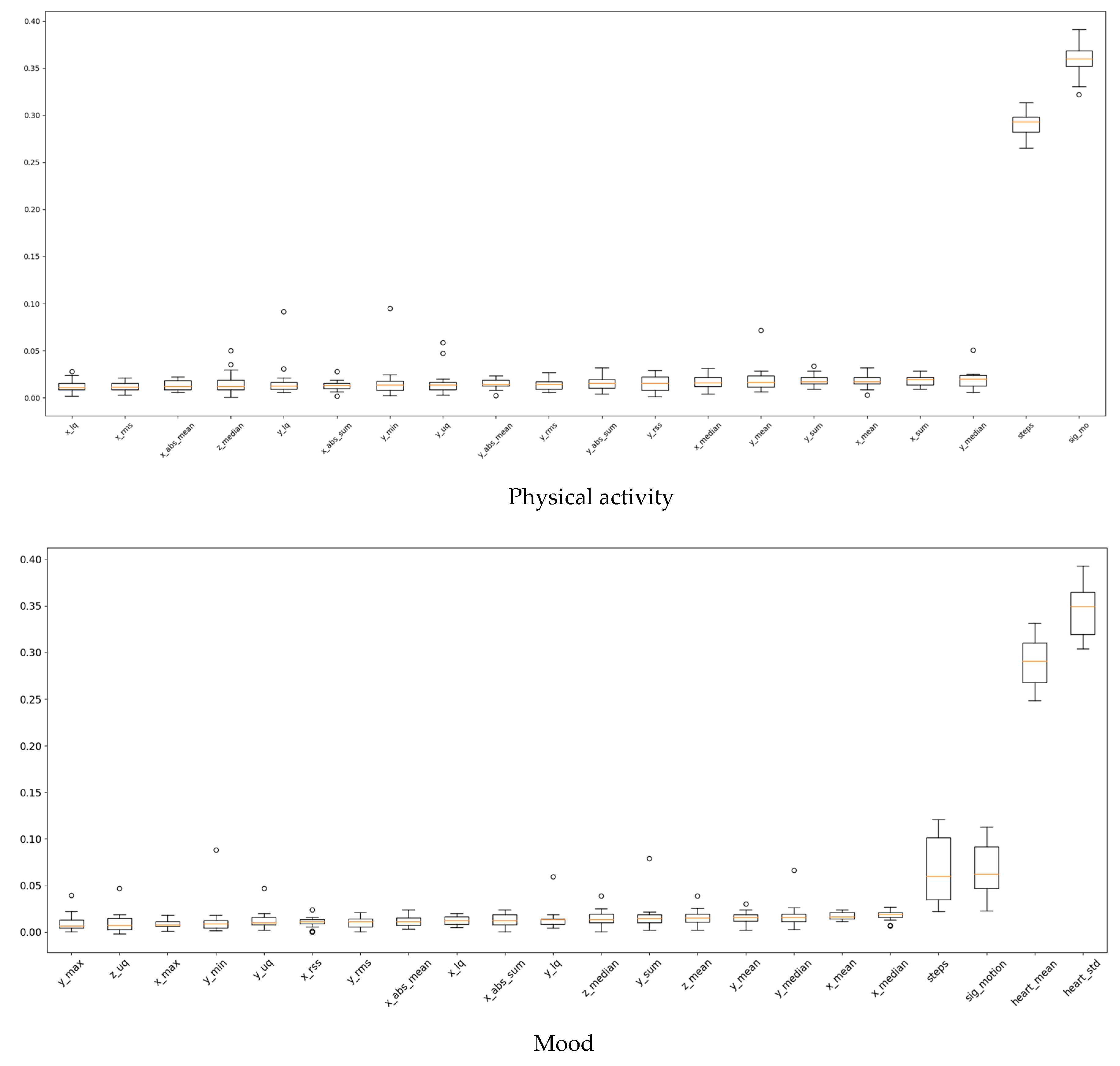

4.1.1. Block for Observational Study

4.1.2. Block for Depression Category Classification

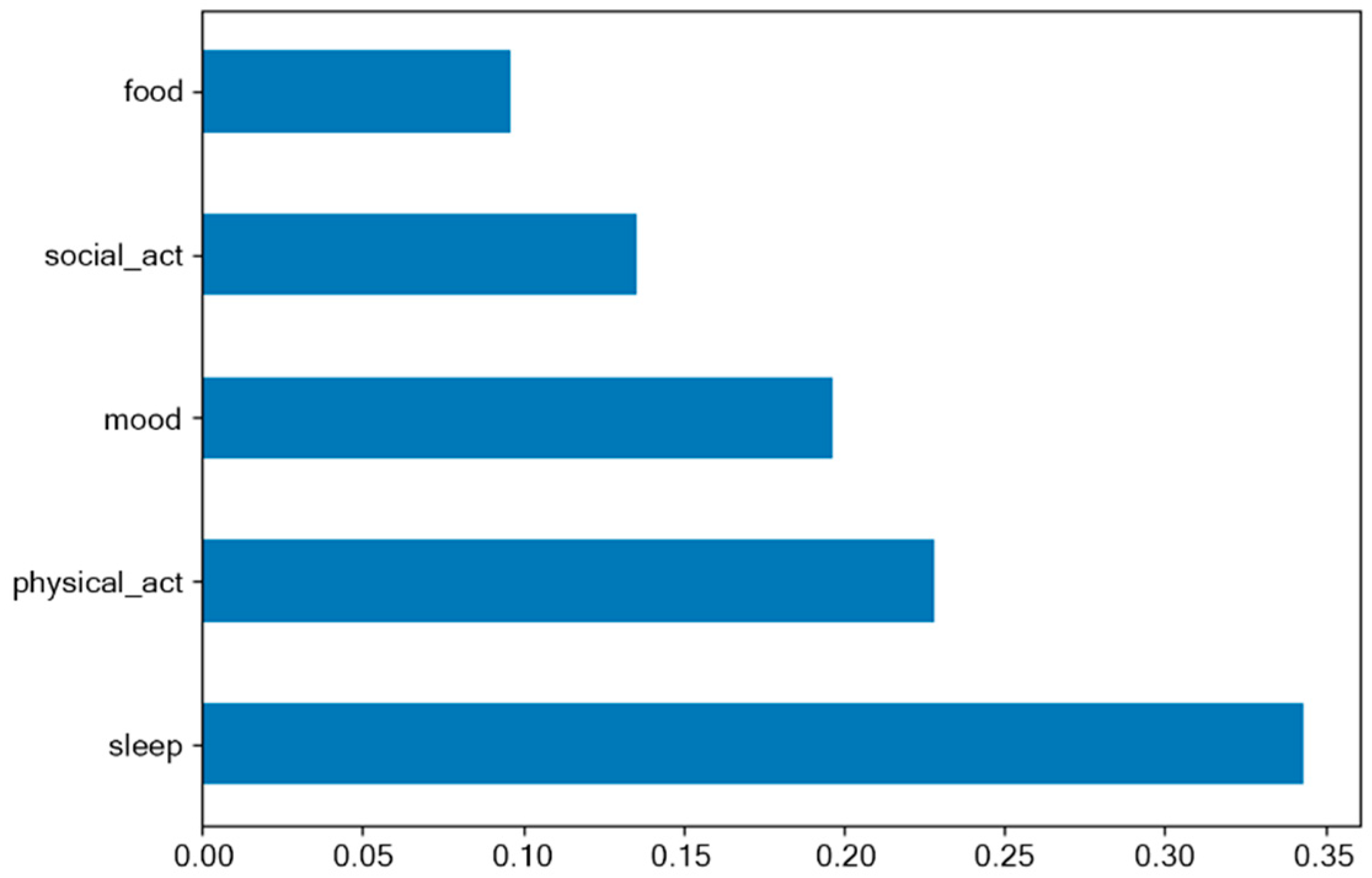

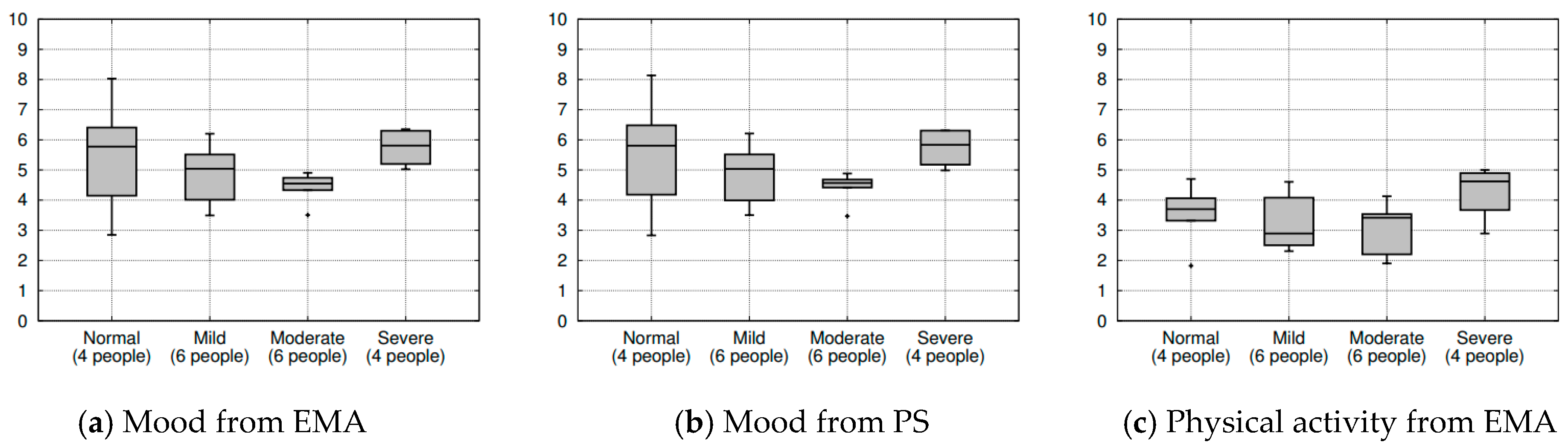

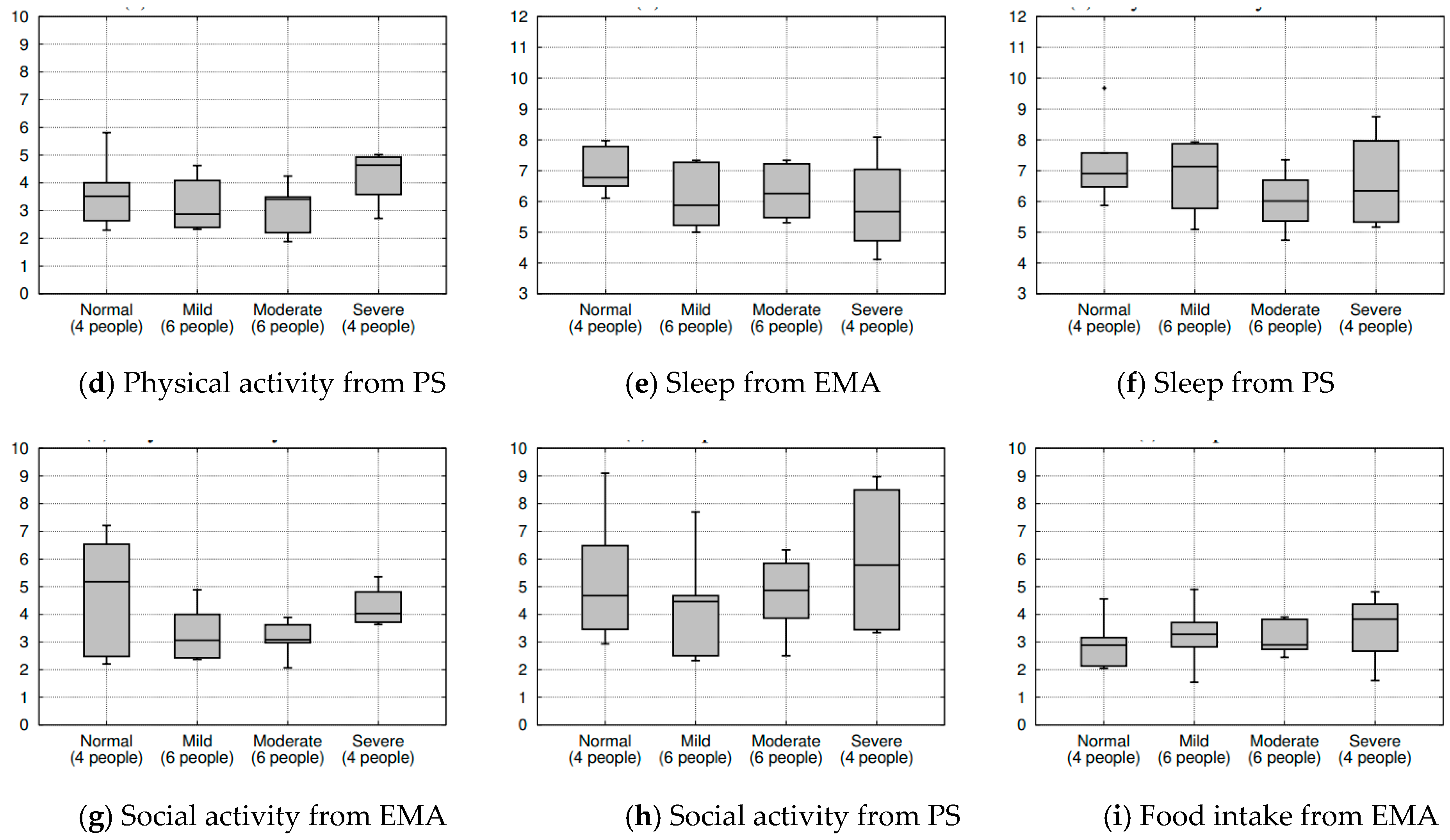

5. Results

6. Discussion and Limitations

7. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Kessler, R.C.; Chiu, W.T.; Demler, O.; Walters, E.E. Prevalence, Severity, and Comorbidity of 12-Month DSM-IV Disorders in the National Comorbidity Survey Replication. JAMA Psychiatry 2005, 62, 617–627.

- Simon, G.E. Social and economic burden of mood disorders. Biol. Psychiatry 2003, 54, 208–215.

- Katon, W.; Ciechanowski, P. Impact of major depression on chronic medical illness. J. Psychosom. Res. 2002, 53, 859–863.

- Kasckow, J.; Zickmund, S.; Rotondi, A.; Mrkva, A.; Gurklis, J.; Chinman, M.; Fox, L.; Loganathan, M.; Hanusa, B.; Haas, G. Development of Telehealth Dialogues for Monitoring Suicidal Patients with Schizophrenia: Consumer Feedback. Community Ment. Health J. 2013, 50, 339–342.

- Burns, M.; Begale, M.; Duffecy, J.; Gergle, D.; Karr, C.J.; Giangrande, E.; Mohr, D.C.; Proudfoot, J.; Dear, B. Harnessing Context Sensing to Develop a Mobile Intervention for Depression. J. Med. Internet Res. 2011, 13, e55.

- American Psychiatric Association. Diagnostic and Statistical Manual of Mental Disorders: DSM-5, 5th ed. Washington, DC: Author. 2013. Available online: http://dissertation.argosy.edu/chicago/fall07/pp7320_f07schreier.doc (accessed on 1 August 2019).

- Wang, R.; Chen, F.; Chen, Z.; Li, T.; Harari, G.; Tignor, S.; Zhou, X.; Ben-Zeev, D.; Campbell, A.T. StudentLife: Assessing mental health, academic performance and behavioural trends of college students using smartphones. In Proceedings of the 2014 ACM International Joint Conference on Pervasive and Ubiquitous Computing, Ser. UbiComp ’14, Seattle, WA, USA, 13–17 September 2014; pp. 3–14.

- Van Breda, W.; Pastor, J.; Hoogendoorn, M.; Ruwaard, J.; Asselbergs, J.; Riper, H. Exploring and Comparing Machine Learning Approaches for Predicting Mood Over Time. In Proceedings of the International Conference on Innovation in Medicine and Healthcare, Puerto de la Cruz, Spain, 15–17 June 2016; Volume 60, pp. 37–47.

- Becker, D.; Bremer, V.; Funk, B.; Asselbergs, J.; Riper, H.; Ruwaard, J. How to predict mood? Delving into features of smartphone-based data. In Proceedings of the 22nd Americas Conference on Information Systems, San Diego, CA, USA, 11–14 August 2016; pp. 1–10.

- Deady, M.; Johnston, D.; Milne, D.; Glozier, N.; Peters, D.; Calvo, R.A.; Harvey, S.B.; Bibault, J.-E.; Wolf, M.; Picard, R.; et al. Preliminary Effectiveness of a Smartphone App to Reduce Depressive Symptoms in the Workplace: Feasibility and Acceptability Study. JMIR mHealth uHealth 2018, 6, e11661.

- Boonstra, T.W.; Nicholas, J.; Wong, Q.J.J.; Shaw, F.; Townsend, S.; Christensen, H.; Chow, P.; Pulantara, I.W.; Mohino-Herranz, I. Using Mobile Phone Sensor Technology for Mental Health Research: Integrated Analysis to Identify Hidden Challenges and Potential Solutions. J. Med. Internet Res. 2018, 20, e10131.

- Choi, I.; Milne, D.; Deady, M.; Calvo, R.A.; Harvey, S.B.; Glozier, N.; Calear, A.; Yeager, C.; Musiat, P.; Torous, J. Impact of Mental Health Screening on Promoting Immediate Online Help-Seeking: Randomized Trial Comparing Normative Versus Humor-Driven Feedback. JMIR Ment. Health 2018, 5, e26.

- Crosby, R.D.; Lavender, J.M.; Engel, S.G.; Wonderlich, S.A. Ecological Momentary Assessment; Springer: Singapore, 2016; pp. 1–3.

- Kroenke, K.; Spitzer, R.L.; Williams, J.B. The PHQ-9: Validity of a brief depression severity measure. J. Gen. Intern. Med. 2001, 16, 606–613.

- Saeb, S.; Zhang, M.; Karr, C.J.; Schueller, S.M.; Corden, M.E.; Kording, K.P.; Mohr, D.C. Mobile phone sensor correlates of depressive symptom severity in daily-life behaviour: An exploratory study. J. Med. Internet Res. 2015, 17, e175.

- Schueller, S.M.; Begale, M.; Penedo, F.J.; Mohr, D.C. Purple: A modular system for developing and deploying behavioural intervention technologies. J. Med. Internet Res. 2014, 16, e181.

- Dang, M.; Mielke, C.; Diehl, A.; Haux, R. Accompanying Depression with FINE - A Smartphone-Based Approach. Stud. Health Technol. Inform. 2016, 228, 195–199.

- Hung, S.; Li, M.-S.; Chen, Y.-L.; Chiang, J.-H.; Chen, Y.-Y.; Hung, G.C.-L. Smartphone-based ecological momentary assessment for Chinese patients with depression: An exploratory study in Taiwan. Asian J. Psychiatry 2016, 23, 131–136.

- Bardram, J.E.; Frost, M.; Szántó, K.; Faurholt-Jepsen, M.; Vinberg, M.; Kessing, L.V. Designing mobile health technology for bipolar disorder: A field trial of the Monarca system. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Ser. CHI ’13, Paris, France, 27 April–2 May 2013; pp. 2627–2636.

- Gideon, J.; Provost, E.; McInnis, M. Mood state prediction from speech of varying acoustic quality for individuals with bipolar disorder. In Proceedings of the 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Shanghai, China, 20–25 March 2016; pp. 2359–2363.

- Frost, M.; Doryab, A.; Faurholt-Jepsen, M.; Kessing, L.V.; Bardram, J.E. Supporting disease insight through data analysis. In Proceedings of the 2013 ACM International Joint Conference on Pervasive and Ubiquitous Computing (UbiComp 2013), Zurich, Switzerland, 8–12 September 2013; pp. 133–142.

- Voida, S.; Matthews, M.; Abdullah, S.; Xi, M.C.; Green, M.; Jang, W.J.; Hu, D.; Weinrich, J.; Patil, P.; Rabbi, M.; et al. Moodrhythm: Tracking and supporting daily rhythms. In Proceedings of the 2013 ACM Conference on Pervasive and Ubiquitous Computing Adjunct Publication, Ser. UbiComp ’13 Adjunct, Zurich, Switzerland, 8–12 September 2013; pp. 67–70.

- Hidalgo-Mazzei, D.; Mateu, A.; Reinares, M.; Undurraga, J.; del Mar Bonnín, C.; Sánchez-Moreno, J.; Vieta, E.; Colom, F. Self-monitoring and psychoeducation in bipolar patients with a smart-phone application (sim-ple) project: Design, development and studies protocols. BMC Psychiatry 2015, 15, 52.

- Tighe, J.; Shand, F.; Ridani, R.; MacKinnon, A.; De La Mata, N.L.; Christensen, H. Ibobbly mobile health intervention for suicide prevention in Australian Indigenous youth: A pilot randomised controlled trial. BMJ Open 2017, 7, e013518.

- Qualtrics. Available online: https://www.qualtrics.com/ (accessed on 10 September 2019).

- Upton, J. Beck Depression Inventory (BDI). In Encyclopedia of Behavioral Medicine; Gellman, M.D., Turner, J.R., Eds.; Springer: New York, NY, USA, 2013; pp. 178–179.

- State-Trait Anxiety Inventory (STAI). Available online: https://www.apa.org/pi/about/publications/caregivers/practice-settings/assessment/tools/trait-state (accessed on 15 August 2019).

- Pollack, M. Comorbid anxiety and depression. J. Clin. Psychiatry 2005, 66, 22–29.

- Mikolajczyk, R.; El Ansari, W.; Maxwell, A. Food consumption frequency and perceived stress and depressive symptoms among students in three European countries. Nutr. J. 2009, 8, 31.

- Wallin, M.S.; Rissanen, A.M. Food and mood: Relationship between food, serotonin and affective disorders. Acta Psychiatr. Scand. 1994, 89, 36–40.

- Manea, L.; Gilbody, S.; McMillan, D. Optimal cut-off score for diagnosing depression with the Patient Health Questionnaire (PHQ-9): A meta-analysis. Can. Med. Assoc. J. 2011, 184, E191–E196.

- Kocalevent, R.D.; Hinz, A.; Brahler, E. Standardization of the depression screener Patient Health Questionnaire (PHQ-9) in the general population. Gen. Hosp. Psychiatry 2013, 35, 551–555.

- Manea, L.; Gilbody, S.; McMillan, D. A diagnostic meta-analysis of the Patient Health Questionnaire-9 (PHQ-9) algorithm scoring method as a screen for depression. Gen. Hosp. Psychiatry 2015, 37, 67–75.

- Django. Available online: https://www.djangoproject.com/ (accessed on 1 July 2019).

- Hall, M.; Frank, E.; Holmes, G.; Pfahringer, B.; Reutemann, P.; Witten, I.H. The WEKA data mining software. ACM SIGKDD Explor. Newsl. 2009, 11, 10.

- Quiroz, J.C.; Yong, M.H.; Geangu, E. Emotion recognition using smart watch accelerometer data: Preliminary findings. In Proceedings of the 2017 ACM International Joint Conference on Pervasive and Ubiquitous Computing and Proceedings of the 2017 ACM International Symposium on Wearable Computers, Ser. UbiComp ’17, Maui, HI, USA, 11–15 September 2017; pp. 805–812.

- Quiroz, J.C.; Geangu, E.; Yong, M.H.; Lee, U.; Snider, D. Emotion Recognition Using Smart Watch Sensor Data: Mixed-Design Study. JMIR Ment. Health 2018, 5, e10153.

- KakaoTalk. Available online: https://www.kakaocorp.com/service/KakaoTalk?lang=en (accessed on 20 July 2019).

- Canzian, L.; Musolesi, M. Trajectories of depression: Unobtrusive monitoring of depressive states by means of smartphone mobility traces analysis. In Proceedings of the 2015 ACM International Joint Conference on Pervasive and Ubiquitous Computing, Ser. UbiComp ’15, Osaka, Japan, 7–11 September 2015; pp. 1293–1304.

| Application | User | Purpose | Methods & Data | Passive Sensing | EMA | Interventions |
|---|---|---|---|---|---|---|
| StudentLife [7] | Students | Mental Health & Academic Performance Prediction | Auto-report (activity, mobility, body, sleep, social); Self-report (PHQ-9, stress, self-perceived success, loneliness) | Yes | Yes | No |
| eMate EMA [8] | Students | Emotion prediction ability measurement | Self-report (emotion / five times a day) | No | Yes | No |
| iYouUV [9] | Students | Research | Auto-report (social activity, calls, SMS, app use pattern, screen-release, pictures) | Yes | No | No |
| Headgear [10] | Employee | Depression & Anxiety Detection | Self-report (emotion) | No | Yes | Yes |
| Socialise [11] | Ordinary | Depression & Anxiety Detection | Auto-report (Bluetooth, GPS, battery status) | Yes | No | No |
| Mindgauge [12] | Ordinary | Monitoring | Self-report (psychological problems, well-being, resilience) | No | Yes | Yes |
| Purple robot [16] | Depressive | Depression Detection | Auto-report (physical activity, social activity); Self-report (PHQ-9) | Yes | Yes | No |
| FINE [17] | Depressive | Depression Detection | Auto-report (smartphone use, social activity, movement); Self-report (emotion, PHQ-9) | Yes | Yes | No |
| Mobilyze! [5] | Depressive | Prediction & Intervention of Depression | Auto-report (physical activity, social activity); Self-report (emotion) | Yes | Yes | Yes |
| iHOPE [18] | Depressive | Research for EMA (feasibility & validity) | Auto-report (smartphone usage patterns); Self-report (emotion) | Yes | Yes | Yes |
| PRIORI [20] | Bipolar | Selection of Risk Groups | Auto-report (voice pattern analysis) | Yes | No | No |
| MONARCA [19,21] | Bipolar | Symptom Management & Intervention | Auto-report (accelerometer, call logs, screen on/off time, app usage, browsing history); Self-report (mood, sleep) | Yes | Yes | Yes |
| Moodrhythm [22] | Bipolar | Monitoring & Intervention | Auto-report (sleep, physical, social activity) | Yes | No | Yes |
| SIMPle 1.0 [23] | Bipolar | Symptom Management & Psychoeducational Intervention | Auto-report (smartphone or SNS time, calls, and physical activity); Self-report (mood, suicidal thoughts) | Yes | Yes | Yes |
| iBobbly [24] | Depressive | Suicide Prevention | Self-report (emotion, function) | No | Yes | Yes |

| Symptom Cluster | Sensors | Features |
|---|---|---|
| Physical activity | Accelerometer (for each x, y, z axis) | Mean, standard deviation, maximum, minimum, energy, kurtosis, skewness, root mean square, root sum square, sum, sum of absolute values, mean of absolute values, range, median, upper quartile, lower quartile, and median absolute deviation |
| Physical activity | Step detector | Number of steps taken |
| Physical activity | Significant motion | Number of significant motion sensor triggers |
| Mood | Sensors used for physical activity | Features used for physical activity |
| Mood | HRM | Mean, standard deviation |
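
The accelerometer features listed in the table above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names `axis_features` and `window_features` are hypothetical, and several definitions are assumptions the table does not settle (population standard deviation, energy as the sum of squared samples, kurtosis as the non-excess fourth standardized moment, and inclusive quartiles).

```python
import math
import statistics


def axis_features(samples):
    """Compute the 17 listed statistics for one accelerometer axis window.

    Assumes at least two samples. Definitions marked 'assumed' are
    illustrative choices, not taken from the paper.
    """
    n = len(samples)
    mean = statistics.fmean(samples)
    std = statistics.pstdev(samples)  # assumed: population SD
    abs_vals = [abs(v) for v in samples]
    sum_sq = sum(v * v for v in samples)
    median = statistics.median(samples)
    q1, _, q3 = statistics.quantiles(samples, n=4, method="inclusive")

    def standardized_moment(k):
        # k-th standardized moment; 0.0 for a constant window
        if std == 0:
            return 0.0
        return sum(((v - mean) / std) ** k for v in samples) / n

    return {
        "mean": mean,
        "std": std,
        "max": max(samples),
        "min": min(samples),
        "energy": sum_sq,  # assumed: sum of squared samples
        "kurtosis": standardized_moment(4),  # assumed: non-excess
        "skewness": standardized_moment(3),
        "rms": math.sqrt(sum_sq / n),
        "rss": math.sqrt(sum_sq),
        "sum": sum(samples),
        "sum_abs": sum(abs_vals),
        "mean_abs": sum(abs_vals) / n,
        "range": max(samples) - min(samples),
        "median": median,
        "upper_quartile": q3,
        "lower_quartile": q1,
        "mad": statistics.median([abs(v - median) for v in samples]),
    }


def window_features(x, y, z):
    """Concatenate per-axis features into one flat feature vector (dict)."""
    feats = {}
    for name, axis in (("x", x), ("y", y), ("z", z)):
        for key, value in axis_features(axis).items():
            feats[f"{name}_{key}"] = value
    return feats
```

Calling `window_features(x, y, z)` on three equal-length lists of samples yields 51 named values (17 per axis). The step-detector and significant-motion counts, which are simple event tallies, would be appended separately.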

| EMA Check-in Points | Response Rate |
|---|---|
| 7 a.m. | 0.38 |
| 10 a.m. | 0.60 |
| 1 p.m. | 0.64 |
| 4 p.m. | 0.61 |
| 7 p.m. | 0.60 |
| 10 p.m. | 0.58 |

| Symptom Cluster | Precision (Mean ± SD) | Recall (Mean ± SD) | F-Measure (Mean ± SD) | TP Rate (Mean ± SD) |
|---|---|---|---|---|
| Physical activity | 91.20 ± 4.51% | 91.10 ± 4.59% | 91.05 ± 4.62% | 91.10 ± 4.59% |
| Mood | 91.26 ± 4.43% | 90.95 ± 4.57% | 91.42 ± 4.89% | 91.04 ± 4.55% |

| Group Name | Total Instances | Correctly Classified | Total TP Rate |
|---|---|---|---|
| Normal | 150 | 146 | 97.33% |
| Mild | 150 | 147 | 98.00% |
| Moderate | 150 | 138 | 92.00% |
| Severe | 150 | 145 | 96.67% |
| Total number | 600 | 576 | 96.00% |
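
The TP rates in the table above follow directly from the instance counts. As a quick check, this short sketch recomputes them; the variable and function names are illustrative only, and the counts are copied from the table.

```python
# (total instances, correctly classified) per group, copied from the table
counts = {
    "Normal": (150, 146),
    "Mild": (150, 147),
    "Moderate": (150, 138),
    "Severe": (150, 145),
}


def tp_rate(total, correct):
    """True-positive rate as a percentage of the group's instances."""
    return 100.0 * correct / total


# Per-group TP rate, rounded to two decimals as in the table
rates = {group: round(tp_rate(t, c), 2) for group, (t, c) in counts.items()}

# Overall rate pools all instances rather than averaging the group rates
total_instances = sum(t for t, _ in counts.values())
total_correct = sum(c for _, c in counts.values())
overall = round(tp_rate(total_instances, total_correct), 2)

print(rates)
print(total_instances, total_correct, overall)
```

Because every group has the same number of instances (150), the pooled overall rate coincides with the unweighted average of the four group rates. With unbalanced groups the two would differ.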

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Narziev, N.; Goh, H.; Toshnazarov, K.; Lee, S.A.; Chung, K.-M.; Noh, Y. STDD: Short-Term Depression Detection with Passive Sensing. Sensors 2020, 20, 1396. https://doi.org/10.3390/s20051396
