1. Introduction

Recent advances in computational technologies have led to a big leap in the usability of range imaging devices. Current depth sensors are smaller, have a higher sampling rate and resolution, and are also much more precise. They are mainly used for computer vision in robotics, and can work as motion controllers for computers and other devices or as 3D scanners. In addition, they can be used as non-contact and non-invasive systems for monitoring breathing and its features. The first widely available depth sensors were MS Kinect for Xbox 360 and MS Kinect for Xbox One, both of which were successfully tested for complex monitoring of selected biomedical features [1,2,3,4,5,6,7]. They formed an inexpensive replacement for conventional devices. These sensors can be used to monitor breathing and possibly heart rate changes during physical activities or sleep to analyze disorders by mapping chest movements during breathing.

In 2013, Intel announced at the Consumer Electronics Show that it would start focusing on adding motion and voice controls to its computers under the label perceptual computing. The technology was re-revealed in 2014 as RealSense (RS) [8]. After research and implementation of these perceptual computing abilities in devices, Intel launched in January 2018 the new Intel RealSense D400 Product Family with the Intel RealSense Depth Module D400 Series and two ready-to-use depth cameras (Intel RealSense Depth Cameras D435 and D415).

In general, the use of image and depth sensors [1,7,9,10,11,12] allows sleep monitoring and 3D modeling of the chest and abdomen volume changes during breathing as an alternative to conventional spirometry, with the use of red-green-blue (RGB) cameras [13,14], infrared cameras [15,16], or Doppler multi-radar systems [17] in addition to ultrasonic and biomotion sensors [18,19,20,21]. The paper provides motivation for further studies of methods related to big data processing problems, dimensionality reduction, principal component analysis, and parallel signal processing to increase the speed, accuracy, and reliability of the results. Methods related to motion recognition can be further used in multichannel biomedical data processing for the detection of neurological disorders, for facial and movement analysis during rehabilitation, and for motion monitoring in assisted living homes [22,23,24].

2. Materials and Methods

This work pursues two goals. The first is to show that various depth sensors can record breathing rate with the same accuracy as contact sensors used in polysomnography (PSG). The second is to show that breathing signals from depth sensors can be used for classification of sleep apnea events with the same success rate as PSG data.

Section 2.3 is dedicated to spectral analysis of signals recorded by depth sensors and PSG (Table 1), which is used to show the ability of these sensors to capture breathing rate.

Section 2.4 is devoted to the classification of sleep apnea events. A simple competitive neural network is trained and tested on a set of PSG data records and then tested on breathing signals recorded by MS Kinect v2 and RealSense R200.

The necessary background is explained in the next subsections.

2.1. Data Acquisition

The presented data were recorded either in a sleep laboratory or in a home environment. The overall set consists of 57 patients (demographic overview in Table 2) recorded in a sleep laboratory with polysomnography, of whom 32 were healthy and 25 had sleep apnea. To compare the depth sensors properly, an 8-h long set of data was recorded with MS Kinect v2 together with PSG. The PSG has a stable sampling frequency of 10 Hz, and MS Kinect v2 has a mean sampling frequency of 20 Hz. The sampling frequency of MS Kinect v2 is unsteady because it is not primarily a depth sensor, but a device for skeletal data tracking. The sensor constantly searches the scene for people to segment, and the increased processing demands slow down the depth data output. Five other short standalone experimental records of a sleeping person (length 14–43 min) were recorded with all sensors mentioned in a home environment, with the mean sampling frequency being between 30 and 35 Hz. A detailed overview is in Table 1. A stable sampling frequency is necessary to properly capture the motion of the chest during breathing. Each sensor has different recording capabilities and needs different software for recording data: MS Kinect (C#, Java, Matlab); Intel RS SR300 and R200 (Intel RealSense SDK 1.x, C#); Intel RS D415 and D435 (Intel RealSense SDK: Librealsense, Viewer (.bag), and Matlab wrapper). An overview of the specifications of the depth sensors is in Table 3. The Microsoft Kinect v2 was developed by Microsoft, One Microsoft Way, Redmond, Washington, United States. The Intel RealSense cameras are developed by Intel Corporation, Santa Clara, California, United States.

The sensors included in this study represent a variety of depth sensing devices, from the well-known and widely used MS Kinect v2, which is unique with its time-of-flight depth sensing technology and its primary function of skeletal tracking, to Intel RealSense R200 with its low energy consumption, global shutter, and suitability for small portable devices. They use three depth sensing technologies. Time of flight uses an IR emitter and a camera; it measures the time an emitted IR pulse takes to travel from the device to the scene and back to the camera, and converts this time to distance. The advantage of this technology is that each pixel of the output depth map has an independent value. Coded light, or structured light, technology uses an IR projector and a camera. By illuminating the scene with a designed static light pattern, at once or by parts, it can determine depth from a single image of the reflected light. The disadvantage lies in the design of the pattern: instead of a unique depth value for each pixel, the depth might be the same for a few neighboring pixels. Active IR stereo combines the coded light technology with stereo triangulation. It uses one IR projector and two cameras. The projected light pattern is captured by both cameras, and the depth is calculated by estimating disparities between matching points in the images from both cameras, resulting in enhanced depth precision.
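As a rough illustration of the two distance relations described above (the symbols are assumptions introduced here, not notation from the sensor documentation): a time-of-flight sensor converts the round-trip time $\Delta t$ of the IR pulse to a distance $d$ using the speed of light $c$, and a stereo pair converts the disparity $\delta$ between matching points to a depth $Z$ using the focal length $f$ (in pixels) and the baseline $B$ between the two cameras:

$$d = \frac{c\,\Delta t}{2}, \qquad Z = \frac{f\,B}{\delta}$$

For example, a surface 1 m from a time-of-flight sensor corresponds to a round-trip time of $\Delta t = 2d/c \approx 6.7$ ns.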

The ideal candidate for use in this study would be Intel RS D435 with high frames per second (FPS), more precise active IR stereo, and global shutter. However, all mentioned depth sensors are taken into account in testing their ability to capture breathing rate, as there is a reason to use each of them in a different situation, and the choice of which sensor to use or acquire is not always free.

The MS Kinect v2 was placed 1 m away from the bed, looking down at the abdomen of the patient at an angle of 45 degrees from the plane of the bed. The recorded patient had a blanket, and it was possible for him to sleep either on his side or on his back despite the restriction of PSG cables and sensors. In the home environment, the depth sensors were placed at the head of the bed, looking down at the abdomen of the patient at an angle of 45 degrees from the plane of the bed.

2.2. Data Processing

The sequences of depth maps that were acquired by the depth sensors were analyzed over the selected rectangular area with $R$ rows and $S$ columns that covered the chest area of the individual. For each matrix $D_n(i,j)$ including values inside the region of interest at discrete time $n$, the mean value of the difference between two consecutive images was evaluated by the following equation:

$$d(n) = \frac{1}{R\,S} \sum_{i=1}^{R} \sum_{j=1}^{S} \left( D_n(i,j) - D_{n-1}(i,j) \right) \qquad (1)$$

In this way, the mean change per pixel in the selected area between each two consecutive depth frames forms a separate time series $\{d(n)\}_{n=0}^{N-1}$ of length $N$.
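The frame-differencing step can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name, the ROI arguments, and the synthetic frames are hypothetical, and the sketch returns one value per pair of consecutive frames (so one fewer than the number of frames) without any padding.

```python
import numpy as np

def mean_roi_difference(frames, row0, col0, n_rows, n_cols):
    """Mean change between consecutive depth frames inside a rectangular ROI."""
    frames = np.asarray(frames, dtype=float)
    # Cut the R x S region of interest out of every frame.
    roi = frames[:, row0:row0 + n_rows, col0:col0 + n_cols]
    # Subtract each frame from its successor, then average over the ROI pixels.
    return np.diff(roi, axis=0).mean(axis=(1, 2))

# Synthetic example: a flat surface that moves 1 mm closer with every frame.
frames = np.stack([np.full((6, 8), 1000.0 - k) for k in range(5)])
d = mean_roi_difference(frames, row0=1, col0=2, n_rows=3, n_cols=4)
print(d)  # [-1. -1. -1. -1.]
```

Averaging over the whole region makes the series robust to noise in individual pixels, at the cost of mixing chest motion with any other motion inside the rectangle.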

Then, FIR filtering of the selected order $M$ was applied to the obtained padded signal $\{d(n)\}$ to evaluate the new sequence $\{y(n)\}$ using the following equation:

$$y(n) = \sum_{k=0}^{M-1} b(k)\, d(n-k) \qquad (2)$$

with coefficients $\{b(k)\}_{k=0}^{M-1}$ defined to form a band-pass filter with cut-off frequencies $f_{c1}$ Hz and $f_{c2}$ Hz to cover the estimated frequency of breathing rate and to reject all other frequency components, including the mean signal value and additional high-frequency noise. A Savitzky–Golay smoothing filter of the second order might be applied for additional smoothing.
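A band-pass FIR filter of this kind can be sketched as follows. The windowed-sinc design with a Hamming window, the number of taps (201), and the lower cut-off (0.1 Hz) are assumptions chosen for illustration; the text does not state how the coefficients were designed. The upper cut-off of 1 Hz and the 10 Hz sampling rate follow values mentioned elsewhere in the paper.

```python
import numpy as np

def bandpass_fir(num_taps, f_low, f_high, fs):
    """Band-pass FIR coefficients b(k) by the windowed-sinc method (Hamming window)."""
    n = np.arange(num_taps) - (num_taps - 1) / 2.0

    def ideal_lowpass(fc):
        # Impulse response of an ideal low-pass filter with cut-off fc (Hz).
        return 2.0 * fc / fs * np.sinc(2.0 * fc / fs * n)

    # A band-pass response is the difference of two low-pass responses.
    return (ideal_lowpass(f_high) - ideal_lowpass(f_low)) * np.hamming(num_taps)

def fir_filter(b, x):
    """Causal FIR filtering: y(n) = sum_k b(k) x(n - k), zeros before the record."""
    return np.convolve(x, b)[:len(x)]

def gain(b, f, fs):
    """Magnitude of the filter's frequency response at frequency f (Hz)."""
    k = np.arange(len(b))
    return abs(np.sum(b * np.exp(-2j * np.pi * f * k / fs)))

fs = 10.0  # Hz
b = bandpass_fir(num_taps=201, f_low=0.1, f_high=1.0, fs=fs)  # illustrative values

# Synthetic test signal: offset + 0.3 Hz "breathing" + 3 Hz disturbance.
t = np.arange(1200) / fs
x = 5.0 + np.sin(2 * np.pi * 0.3 * t) + 0.5 * np.sin(2 * np.pi * 3.0 * t)
y = fir_filter(b, x)

for f in (0.0, 0.3, 3.0):
    print(f"gain at {f:.1f} Hz: {gain(b, f, fs):.3f}")
```

The printed gains should be close to zero at 0 Hz and 3 Hz and close to one at 0.3 Hz, which is the behavior the paragraph describes: the mean value and high-frequency noise are rejected while the breathing band passes.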

Timestamps recorded with each image frame allowed for resampling of the resulting data with a spline interpolation to a constant sampling rate of 10 Hz, matching the sampling rate of PSG.
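The resampling step can be sketched as below. Note the substitution: this sketch uses linear interpolation (`numpy.interp`) as a simple stand-in for the spline interpolation named in the text, and the function name and sample timestamps are hypothetical.

```python
import numpy as np

def resample_uniform(timestamps, values, fs=10.0):
    """Resample irregularly timed samples onto a uniform grid at fs Hz."""
    t = np.asarray(timestamps, dtype=float)
    v = np.asarray(values, dtype=float)
    # Number of uniform samples that fit between the first and last timestamp.
    n = int(np.floor((t[-1] - t[0]) * fs)) + 1
    t_uniform = t[0] + np.arange(n) / fs
    # Linear interpolation here; a spline would give a smoother result.
    return t_uniform, np.interp(t_uniform, t, v)

# Unevenly spaced frame timestamps (seconds) and a simple linear test signal.
timestamps = np.array([0.00, 0.04, 0.11, 0.13, 0.20, 0.31])
values = 2.0 * timestamps
t_u, v_u = resample_uniform(timestamps, values, fs=10.0)
print(t_u)
print(v_u)
```

Interpolating against the recorded timestamps, rather than assuming a nominal frame rate, is what compensates for the unsteady frame rate of sensors such as MS Kinect v2.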

The resampled data are used for feature extraction and classification using an unsupervised competitive neural network. It uses competitive learning in the form of a hidden layer (commonly called a competitive layer), where every competitive neuron $i$ is described by its vector of weights $\mathbf{w}_i$ and calculates a measure of similarity between the input data $\mathbf{x}$ and the vector $\mathbf{w}_i$. For every input vector, the neurons compete with each other, and the neuron whose weight vector is most similar to the input wins. The winning neuron $l$ sets its output $o_l = 1$, and all other neurons set their outputs $o_i = 0$, $i \neq l$. The inverse of the Euclidean distance is usually used as a similarity function together with learning vector quantization (LVQ), where $L$ is the number of clusters and the weight vector $\mathbf{w}_i$ corresponds to the centroid of cluster $i$ [25,26].
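A winner-take-all competitive layer can be sketched as follows. This is a minimal illustration under stated assumptions: the update rule (move the winner a fraction `lr` toward the input), the learning rate, the number of epochs, the initialization from data points, and the synthetic two-cluster data are all choices made here, not details given in the text. Choosing the smallest Euclidean distance is equivalent to choosing the largest inverse distance.

```python
import numpy as np

def train_competitive(x, n_neurons=2, epochs=50, lr=0.1, seed=0):
    """Train weight vectors with a simple winner-take-all rule."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    # Initialise each neuron's weights from a randomly chosen input vector.
    w = x[rng.choice(len(x), size=n_neurons, replace=False)].copy()
    for _ in range(epochs):
        for sample in x[rng.permutation(len(x))]:
            # The neuron closest to the input wins the competition ...
            winner = np.argmin(np.linalg.norm(w - sample, axis=1))
            # ... and only the winner moves toward the input.
            w[winner] += lr * (sample - w[winner])
    return w

def winner_index(w, x):
    """Index of the winning neuron for every row of x."""
    x = np.asarray(x, dtype=float)
    distances = np.linalg.norm(x[:, None, :] - w[None, :, :], axis=2)
    return distances.argmin(axis=1)

# Hypothetical two-feature data forming two well-separated groups.
rng = np.random.default_rng(1)
group_a = rng.normal([0.3, 1.0], 0.05, size=(20, 2))
group_b = rng.normal([0.0, 0.1], 0.05, size=(20, 2))
data = np.vstack([group_a, group_b])

w = train_County = None  # placeholder removed below
```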

Receiver operating characteristics (ROCs) are used for evaluation of the classifier. They are created by comparing classification results to target data and building a confusion matrix with: true positive (TP) values, equivalent to a hit; true negative (TN) values, equivalent to a correct rejection; false positive (FP) values, equivalent to a Type I error; and false negative (FN) values, equivalent to a Type II error. The measures used in classifier evaluation are Sensitivity (TPR), Specificity (TNR), and Precision (PPV) from Equation (3) and Accuracy (ACC) with the $F_1$ score from Equation (4). The goal is to have as high an accuracy as possible; however, in some cases, the $F_1$ score is more useful as it considers both the precision and the sensitivity:

$$\mathrm{TPR} = \frac{TP}{TP + FN}, \qquad \mathrm{TNR} = \frac{TN}{TN + FP}, \qquad \mathrm{PPV} = \frac{TP}{TP + FP} \qquad (3)$$

$$\mathrm{ACC} = \frac{TP + TN}{TP + TN + FP + FN}, \qquad F_1 = \frac{2\,\mathrm{PPV}\cdot\mathrm{TPR}}{\mathrm{PPV} + \mathrm{TPR}} \qquad (4)$$
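These measures follow directly from the four confusion-matrix counts. The sketch below uses the standard definitions; the function name and the example counts are hypothetical and are not results from this study.

```python
def classifier_metrics(tp, tn, fp, fn):
    """Sensitivity, specificity, precision, accuracy, and F1 from confusion counts."""
    tpr = tp / (tp + fn)                   # sensitivity
    tnr = tn / (tn + fp)                   # specificity
    ppv = tp / (tp + fp)                   # precision
    acc = (tp + tn) / (tp + tn + fp + fn)  # accuracy
    f1 = 2 * ppv * tpr / (ppv + tpr)       # harmonic mean of precision and sensitivity
    return {"TPR": tpr, "TNR": tnr, "PPV": ppv, "ACC": acc, "F1": f1}

# Hypothetical counts: 8 hits, 9 correct rejections, 1 false alarm, 2 misses.
metrics = classifier_metrics(tp=8, tn=9, fp=1, fn=2)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Because true negatives do not enter the $F_1$ score, it is less flattering than accuracy when normal breathing dominates the record and apnea segments are rare.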

2.3. Breathing Data Processing

To show that the depth sensor records are qualitatively comparable to PSG, various records of breathing during sleep were made with MS Kinect v2 and RealSense SR300, R200, D415, and D435.

The overall set consists of 57 whole-night PSG records, 1 whole-night record made by MS Kinect v2 simultaneously with PSG, and 5 short standalone experimental records made with the rest of the sensors mentioned.

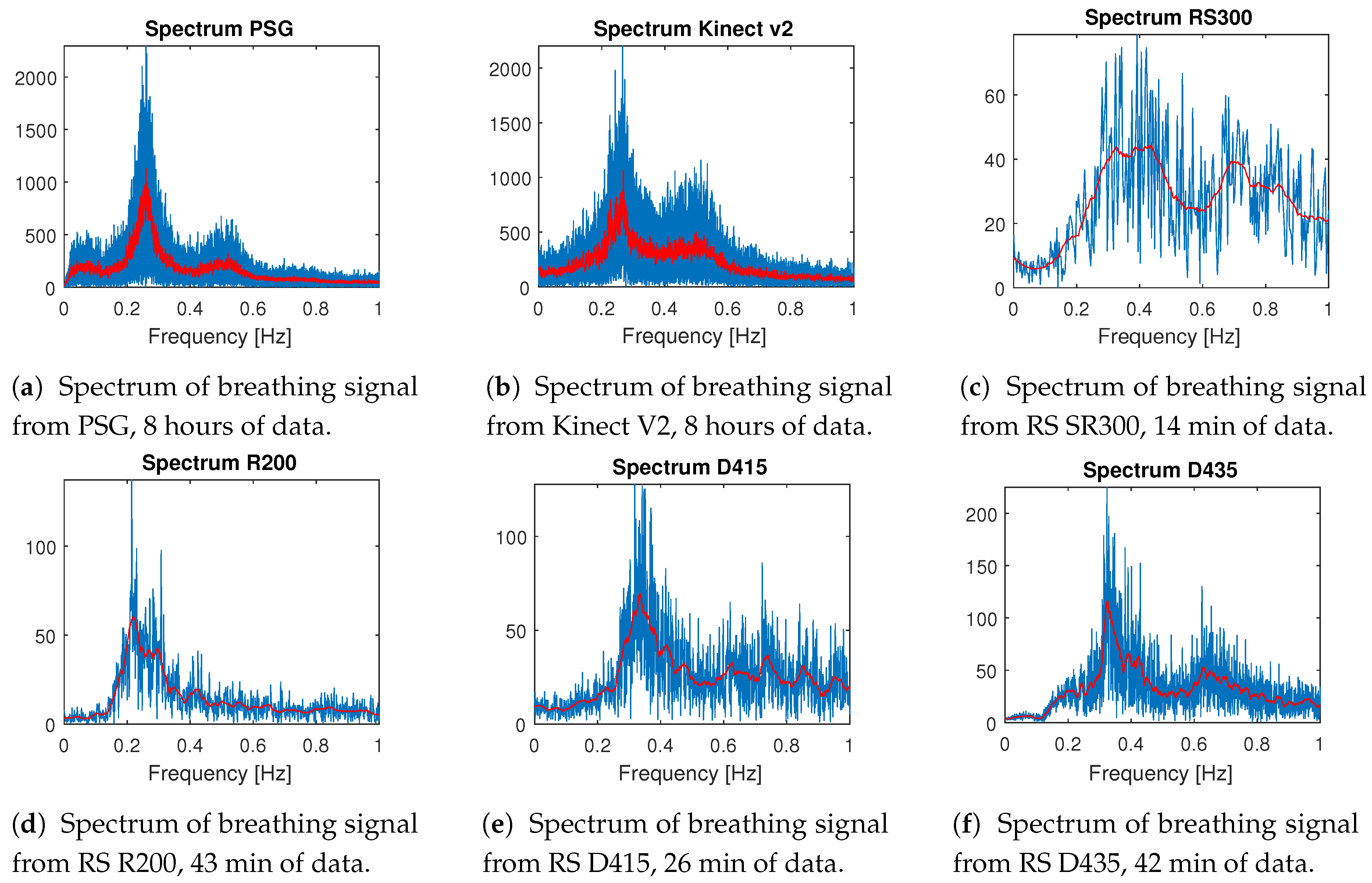

The most interesting comparison is from whole-night sleep data recorded with PSG and MS Kinect v2 simultaneously. The spectrum from one selected PSG record processed with FFT [27] is in Figure 1a and the spectrum from the corresponding MS Kinect v2 record is in Figure 1b. The resting breathing rate of an adult person is between 0.2 and 0.5 Hz, and both figures clearly show that the dominant frequency components of both records lie between 0.2 and 0.3 Hz, with a very similar shape of the breathing data spectrum.
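Reading the breathing rate off a spectrum can be sketched as below. This is an illustration on a synthetic signal, not the processing applied to the recorded data: the function name is hypothetical, and the 0.25 Hz test tone and 60 s duration are arbitrary choices.

```python
import numpy as np

def dominant_frequency(signal, fs):
    """Frequency (Hz) of the largest spectral component, ignoring the mean."""
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()                        # remove the constant component
    spectrum = np.abs(np.fft.rfft(x))       # one-sided amplitude spectrum
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    return freqs[np.argmax(spectrum)]

fs = 10.0                                   # Hz
t = np.arange(600) / fs                     # 60 s of samples
breathing = np.sin(2 * np.pi * 0.25 * t)    # synthetic 15 breaths per minute
peak = dominant_frequency(breathing, fs)
print(f"dominant frequency: {peak:.3f} Hz ({peak * 60:.1f} breaths/min)")
```

The frequency resolution is the reciprocal of the record length, so a 60 s segment resolves the breathing rate to 1/60 Hz, or one breath per minute.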

The rest of the records were made in a home environment, one sensor at a time, under the same conditions (angle of view, placement of the sensor, distance from bed), and their details are summarized in Table 1. Except for the MS Kinect v2, all devices had a very stable frame rate around 30 FPS during the whole record. The spectral analysis (Figure 1) for RealSense SR300 is in Figure 1c, for RealSense R200 in Figure 1d, for RealSense D415 in Figure 1e, and for RealSense D435 in Figure 1f. From all these figures, it is clear that all of the sensors can capture the breathing rate of a sleeping person using the described methods, and that they show similar trends to the whole-night records.

For some diagnoses of sleep disorders, it is sufficient to have a breathing rate, but, for diagnosis of sleep apnea, it is very useful to have a breathing signal. The sleep apnea syndrome [28,29,30,31,32] is characterized by a cessation or decrease of ventilatory effort during sleep and is usually associated with oxygen desaturation [33]. It is divided into three categories: Central Apnea, Obstructive Apnea, and Mixed Apnea. The severity of the syndrome is measured by the number of episodes during the night, which are associated with sleepiness or insomnia. Sleep specialists usually mark only some parts of the signal where the events occur, because manual marking of all events in 8-h long records would be unnecessarily time-consuming. However, these data are enough to obtain the ground truth for classifier training.

2.4. Classification of Breathing Data

A classifier from the pilot study [34] was trained on 57 whole-night PSG records (32 healthy and 25 patients with sleep apnea) without a teacher. It is based on a competitive neural network and works with good accuracy on the incomplete classification from a sleep specialist (94.2% accuracy vs. the sleep specialist). The classifier from the pilot study was trained on features derived from the signal energy of the 6th level of the Haar wavelet decomposition. The breathing signal from a depth sensor is different from the one from PSG, mainly because it is computed from differences between consecutive frames. The same approach using unsupervised learning can be used to train a similar classifier on data used in this study.

The strategy to distinguish a normal breathing signal from an apnea event is based on analyzing parts of the signal of a given length. The whole signal is therefore divided into smaller overlapping pieces. The first feature for the classifier is the most significant frequency. A disadvantage of this feature is that it does not take the amplitude of the signal into account, so the standard deviation of the signal was selected as a second feature.
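The two features can be computed over overlapping windows as sketched below. The window length (10 s), the step (5 s), and the synthetic signal are assumptions made for illustration; the text does not state the segment length or overlap that was used.

```python
import numpy as np

def window_features(signal, fs, window_s=10.0, step_s=5.0):
    """Per-window features: (most significant frequency in Hz, standard deviation)."""
    x = np.asarray(signal, dtype=float)
    win = int(round(window_s * fs))
    step = int(round(step_s * fs))
    freqs = np.fft.rfftfreq(win, d=1.0 / fs)
    features = []
    # Slide a window over the signal; consecutive windows overlap by win - step samples.
    for start in range(0, len(x) - win + 1, step):
        segment = x[start:start + win]
        spectrum = np.abs(np.fft.rfft(segment - segment.mean()))
        features.append((freqs[np.argmax(spectrum)], segment.std()))
    return np.array(features)

# Synthetic record: 40 s of 0.3 Hz breathing followed by 20 s without movement.
fs = 10.0
t = np.arange(600) / fs
signal = np.where(t < 40.0, np.sin(2 * np.pi * 0.3 * t), 0.0)
features = window_features(signal, fs)
print(features[0])   # window with regular breathing
print(features[-1])  # window with no breathing movement
```

In this synthetic case, the standard deviation separates the two situations clearly, which illustrates why an amplitude-based feature complements the dominant frequency.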

Since there are parts of the training PSG data that are only partially classified, and longer parts of the signal with just one apnea event are rare, it is challenging to create a good training set for supervised learning, which is why unsupervised learning was used. The training set was created from selected marked apnea events from the set in Table 4. Each segment contains a marked event with 25 s before and after the event, so parts of signals with normal breathing were added to the training set. If marked events were too close to each other (2 central apnea events, 14 obstructive apnea events), they were not used for training, but they are still useful for evaluation. The surrounding signal usually contained apnea events missing from the original classification. These data were used to train two classifiers: a simple competitive neural network (2 neurons, 1000 epochs) and a K-means classifier with 2 classes. The competitive neural network was selected for further use, as it showed better accuracy (a comparison of classifier statistics is in Table 5).

The new classifier was successfully tested on data selected from whole-night PSG records (Figure 2a), with a selected part containing central apnea events in Figure 3a.

3. Results

This paper has two goals. The first is to show that all mentioned depth sensors can be used to record breathing rate with the same accuracy as contact sensors used in PSG. The second is to show that breathing signals from depth sensors can have the same sensitivity to breathing changes as PSG data and that they can be used for sleep apnea classification.

The breathing rate can be easily represented as the frequency of a recorded signal. An adult patient should have a breathing rate between 16 and 20 breaths per minute, which corresponds to approximately 0.27–0.33 Hz. For elderly patients, the breathing rate should not be lower than 10 breaths per minute or higher than 30 breaths per minute, which corresponds to approximately 0.17–0.5 Hz [35,36,37,38,39]. All tested depth sensors are capable of recording depth data with enough depth precision and a high enough sampling frequency (20–35 FPS) to capture breathing rate.
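The conversion between breaths per minute and hertz, and the check that a given frame rate is sufficient, can be written out as a short sketch. The function names are hypothetical; the rates and the 20 FPS lower bound are the values quoted in the text.

```python
def breaths_per_minute_to_hz(bpm):
    """Convert a breathing rate in breaths per minute to a frequency in Hz."""
    return bpm / 60.0

def can_capture(breathing_hz, sampling_hz):
    """Nyquist criterion: the sampling rate must exceed twice the signal frequency."""
    return sampling_hz > 2.0 * breathing_hz

for bpm in (10, 16, 20, 30):
    print(f"{bpm} breaths/min = {breaths_per_minute_to_hz(bpm):.3f} Hz")

# Fastest breathing rate considered, against the slowest mean frame rate in the study.
highest = breaths_per_minute_to_hz(30)
print(can_capture(highest, sampling_hz=20.0))
```

Even the slowest mean frame rate quoted here leaves a wide margin above the Nyquist limit for the breathing rates considered.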

To test the quality of breathing data from depth sensors processed by the proposed workflow, a neural network classifier (simple competitive NN) was trained on a set of whole night polysomnographic records with a classification of sleep apneas by a sleep specialist. The training data consist of 57 records containing 32 healthy patients and 25 patients with sleep apnea. Since the original PSG classification by a sleep specialist is done manually, not every apnea event is marked in the classification.

There are two goals for the classification of sleep apnea. The first is to mark correctly that an event occurred, as the number of events per hour or per night is a useful criterion for severity ranking of the sleep apnea syndrome. The second goal is to match the classification of a sleep specialist as closely as possible. The length of sleep apnea events is also counted in the severity of the sleep apnea syndrome [33]. The usual sampling period of classification used in hospital records is 0.5 s, and we decided to use a more precise classification with a sampling period as short as 0.1 s (10 Hz). The resulting classifier was validated by 5-fold cross-validation with a randomized training set using 70% of segments for training. This classifier can mark all apnea events with 100% accuracy when compared to the PSG classification of a sleep specialist, and with an $F_1$ score of 92.2% (Table 5, sensitivity 89.1% and specificity 98.8%) when compared to the classification of breathing signal segments by a sleep specialist (sampling frequency of 10 Hz). False positive and false negative classifications are caused mainly by the slower change from regular breathing to an apnea event. An example of classifier use on one of the segments is in Figure 2b, which also includes a graphical comparison to the classification by a sleep specialist. Since we can rely on classification from a sleep specialist as ground truth, we can expect that the parts we are unable to compare are classified with the same or similar accuracy.

The classifier was then applied to experimental data with simulated sleep apnea recorded with RealSense R200 and MS Kinect v2. These two sensors were selected from all tested devices because they have the highest error in estimating distance (MS Kinect v2, 6 mm [40]; RS R200, 3 mm [41]). Furthermore, the RS R200 has undefined areas in depth images and works on low power (1.6 W [42]), so it is the best candidate for any further use. An example of successful classification results for RS R200 is in Figure 3b, and the whole record contained four sleep apnea events that the classifier correctly marked. An example of successful results for MS Kinect v2 is in Figure 3c, and the whole input signal contained five similar sleep apnea events.

4. Discussion and Conclusions

The limitations of this study lie in the position of the depth sensor and the training of the classifier. The processing of depth data can handle a blanket or clothes, but a large distance or an unfavorable angle would mean more noise and less recorded movement. The training set could be more balanced with more samples, but, as is usual with biological or biomedical data, it takes time and effort to gather data for a balanced training set. Some of the samples of apnea events are from the same person, but none of the events are repeated in the training set.

Hypothetically, the method can also be used for monitoring newborns with a modification of the FIR filter. The breathing rate of newborns is 40–60 breaths per minute, which corresponds to approximately 0.67–1 Hz. The proposed method has an upper cut-off frequency at 1 Hz, which is at the upper edge of this range. In theory, a sampling rate above 2 Hz would satisfy the Nyquist–Shannon sampling theorem, and most of the sensors have a 30 Hz sampling rate. The resolution of depth data should therefore not be a problem, but the movement of the newborn might be problematic.

This paper presents the use of various depth sensors for non-contact monitoring of breathing rate and breathing signal using recorded depth data frames, together with verification of the obtained results. We showed that the depth sensors MS Kinect v2, Intel RealSense R200, SR300, D415, and D435 are capable of capturing the breathing rate of a sleeping person. The classifier can mark both sleep apnea events and signal segments of sleep apnea events with acceptable accuracy. Both can be used for severity ranking: the first as the number of events per hour and the second for finding the length of each event. In addition, both types of classification were successfully tested on simulated sleep apnea recorded with MS Kinect v2 and Intel RealSense R200. This method is only a small step away from fully automatic use. Future research will focus on automatic segmentation of a patient’s chest, which is now done manually. Automatic segmentation has so far been tested only on data from MS Kinect v2. Quantification of automatic segmentation of the chest area from other depth sensors is planned for the near future.

Author Contributions

Data curation, J.K.; Formal analysis, M.S.; Software, M.S.; Supervision, A.P.; Validation, O.V.; Writing—review and editing, M.S. and A.P. All authors have read and agreed to the published version of the manuscript.

Funding

This research was supported by grant projects of the Ministry of Health of the Czech Republic (FN HK 00179906) and of the Charles University in Prague, Czech Republic (PROGRES Q40), as well as by the project PERSONMED: Centre for the Development of Personalized Medicine in Age-Related Diseases, Reg. No. CZ.02.1.01/0.0/0.0/17_048/0007441, co-financed by the European Regional Development Fund (ERDF) and the governmental budget of the Czech Republic.

Acknowledgments

The research was approved by the Ethics Committee of University Hospital of Hradec Králové as stipulated by the Helsinki Declaration. All participants did consent to the use of recorded data for further studies by agreeing to be accepted to be cared for in a faculty hospital.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Schätz, M.; Centonze, F.; Kuchynka, J.; Tupa, O.; Vysata, O.; Geman, O.; Prochazka, A. Statistical recognition of breathing by MS Kinect depth sensor. In Proceedings of the 2015 International Workshop on Computational Intelligence for Multimedia Understanding (IWCIM), Prague, Czech Republic, 29–30 October 2015; pp. 1–4.

2. Ťupa, O.; Procházka, A.; Vyšata, O.; Schatz, M.; Mareš, J.; Vališ, M.; Mařík, V. Motion tracking and gait feature estimation for recognising Parkinson’s disease using MS Kinect. BioMed. Eng. OnLine 2015, 14, 1–20.

3. Procházka, A.; Vyšata, O.; Vališ, M.; Ťupa, O.; Schatz, M.; Mařík, V. Bayesian Classification and Analysis of Gait Disorders Using Image and Depth Sensors of Microsoft Kinect. Elsevier Digit. Signal Process. 2015, 47, 169–177.

4. Procházka, A.; Vyšata, O.; Vališ, M.; Ťupa, O.; Schatz, M.; Mařík, V. Use of Image and Depth Sensors of the Microsoft Kinect for the Detection of Gait Disorders. Springer Neural Comput. Appl. 2015, 26, 1621–1629.

5. Lachat, E.; Macher, H.; Landes, T.; Grussenmeyer, P. Assessment and Calibration of a RGB-D Camera (Kinect v2 Sensor) Towards a Potential Use for Close-Range 3D Modeling. Remote Sens. 2015, 7, 13070–13097.

6. Gao, Z.; Yu, Y.; Du, S. Leveraging Two Kinect Sensors for Accurate Full-Body Motion Capture. Sensors 2015, 15, 24297–24317.

7. Addison, P.S.; Smit, P.; Jacquel, D.; Borg, U.R. Continuous respiratory rate monitoring during an acute hypoxic challenge using a depth sensing camera. J. Clin. Monit. Comput. 2019, 1–9.

8. Carey, G. How Intel’s RealSense Has Come of Age | Digital Trends. 2016. Available online: https://www.digitaltrends.com/computing/intel-realsense-coming-of-age/ (accessed on 2 March 2020).

9. Martinez, M.; Stiefelhagen, R. Breathing rate monitoring during sleep from a depth camera under real-life conditions. In Proceedings of the 2017 IEEE Winter Conference on Applications of Computer Vision, WACV 2017, Santa Rosa, CA, USA, 24–31 March 2017; pp. 1168–1176.

10. Yang, C.; Cheung, G.; Stankovic, V.; Chan, K.; Ono, N. Sleep Apnea Detection via Depth Video and Audio Feature Learning. IEEE Trans. Multimed. 2017, 19, 822–835.

11. Procházka, A.; Charvátová, H.; Vyšata, O.; Kopal, J.; Chambers, J. Breathing Analysis Using Thermal and Depth Imaging Camera Video Records. Sensors 2017, 17, 1408.

12. Wang, Y.K.; Chen, H.Y.; Chen, J.R. Unobtrusive Sleep Monitoring Using Movement Activity by Video Analysis. Electronics 2019, 8, 812.

13. Alimohamed, S.; Prosser, K.; Weerasuriya, C.; Iles, R.; Cameron, J.; Lasenby, J.; Fogarty, C. P134 Validating structured light plethysmography (SLP) as a non-invasive method of measuring lung function when compared to Spirometry. Thorax 2011, 66, A121.

14. Brand, D.; Lau, E.; Cameron, J.; Wareham, R.; Usher-Smith, J.; Bridge, P.; Lasenby, J.; Iles, R. Tidal Breathing Parameters Measurement by Structured Light Plethysmography (SLP) and Spirometry. Am. J. Respir. Crit. Care Med. 2010, B18, A2528.

15. Wang, C.W.; Hunter, A.; Gravill, N.; Matusiewicz, S. Unconstrained video monitoring of breathing behavior and application to diagnosis of sleep apnea. IEEE Trans. Biomed. Eng. 2014, 61, 396–404.

16. Murthy, R.; Pavlidis, I. Noncontact measurement of breathing function. IEEE Eng. Med. Biol. Mag. 2014, 25, 57–67.

17. Gu, C.; Li, C. Assessment of Human Respiration Patterns via Noncontact Sensing Using Doppler Multi-Radar System. Sensors 2015, 15, 6383–6398.

18. Arlotto, P.; Grimaldi, M.; Naeck, R.; Ginoux, J. An Ultrasonic Contactless Sensor for Breathing Monitoring. Sensors 2014, 14, 15371–15386.

19. Hashizaki, M.; Nakajima, H.; Kume, K. Monitoring of Weekly Sleep Pattern Variations at Home with a Contactless Biomotion Sensor. Sensors 2014, 15, 18950–18964.

20. Pandiyan, E.; Selvan, M.; Hussian, M.; Velmathi, D. Force Sensitive Resistance Based Heart Beat Monitoring For Health Care System. Int. J. Inf. Sci. Technol. 2014, 4, 11–16.

21. Nam, Y.; Kim, Y.; Lee, J. Sleep Monitoring Based on a Tri-Axial Accelerometer and a Pressure Sensor. Sensors 2016, 16, 750.

22. Cippitelli, E.; Gasparrini, S.; Gambi, E.; Spinsante, S. A Human Activity Recognition System Using Skeleton Data from RGBD Sensors. Comput. Intell. Neurosci. 2016, 2016, 1–14, ID 4351435.

23. Erden, F.; Velipasalar, S.; Alkar, A.; Cetin, A. Sensors in Assisted Living. IEEE Signal Process. Mag. 2016, 33, 36–44.

24. Ye, M.; Yang, C.; Stankovic, V.; Stankovic, L.; Kerr, A. A depth camera motion analysis framework for tele-rehabilitation: Motion capture and person-centric kinematics analysis. IEEE J. Sel. Top. Signal Process. 2016, 2016, 1–11.

25. Rolls, E.; Deco, G. Computational Neuroscience of Vision; Oxford University Press: Oxford, UK, 2001.

26. Salatas, J. Implementation of Competitive Learning Networks for WEKA - ICT Research Blog. 2011. Available online: https://jsalatas.ictpro.gr/implementation-of-competitive-learning-networks-for-weka/ (accessed on 1 March 2020).

27. Winograd, S. On computing the discrete Fourier transform. Math. Comput. 1978, 32, 175.

28. Bradley, T.D.; McNicholas, W.T.; Rutherford, R.; Popkin, J.; Zamel, N.; Phillipson, E.A. Clinical and physiologic heterogeneity of the central sleep apnea syndrome. Am. Rev. Respir. Dis. 1981, 305, 325–330.

29. Guilleminault, C.; Eldridge, F.L.; Dement, W.C. Insomnia with sleep apnea: A new syndrome. Science 1973, 181, 856–858.

30. Guilleminault, C.; Quera-Salva, M.A.; Nino-Murcia, G.; Partinen, M. Central sleep apnea and partial obstruction of the upper airway. Ann. Neurol. 1987, 21, 465–469.

31. Issa, F.G.; Sullivan, C.E. Reversal of central sleep apnea using nasal CPAP. Chest 1986, 90, 165–171.

32. Cherniack, N.S. Respiratory dysrhythmias during sleep. N. Engl. J. Med. 1981, 305, 325–330.

33. Thorpy, M.J.; Rochester, M. International Classification of Sleep Disorders: Diagnostic and Coding Manual, Revised; American Academy of Sleep Medicine: Rochester, MN, USA, 1997; pp. 182–185.

34. Schätz, M.; Kuchyňka, J.; Vyšata, O.; Procházka, A. Pilot Study of Sleep Apnea Detection with Wavelet Transform; Technical Computing Prague; 2017. Available online: https://pdfs.semanticscholar.org/3a1e/893519193746565bc2559d5181c29c95e422.pdf (accessed on 1 March 2020).

35. Deboer, S.L. Emergency Newborn Care; Trafford: Bloomington, IN, USA, 2006; p. 170.

36. Lindh, W.; Pooler, M.; Tamparo, C.; Dahl, B. Delmar’s Comprehensive Medical Assisting: Administrative and Clinical Competencies; Cengage Learning: Belmont, CA, USA, 2009; p. 1552.

37. Lee, Y.S.; Pathirana, P.N.; Member, S.; Steinfort, C.L. Respiration Rate and Breathing Patterns from Doppler Radar Measurements. In Proceedings of the 2014 IEEE Conference on Biomedical Engineering and Sciences (IECBES), Kuala Lumpur, Malaysia, 8–10 December 2014; pp. 8–10.

38. Barrett, K.E.; Barman, S.M.; Boitano, S.; Brooks, H. Ganong’s Review of Medical Physiology, 24th ed.; McGraw-Hill Education: Berkshire, UK, 2012.

39. Rodríguez-Molinero, A.; Narvaiza, L.; Ruiz, J.; Gálvez-Barrón, C. Normal respiratory rate and peripheral blood oxygen saturation in the elderly population. J. Am. Geriatr. Soc. 2013, 61, 2238–2240.

40. Wasenmüller, O.; Stricker, D. Comparison of Kinect V1 and V2 Depth Images in Terms of Accuracy and Precision; Springer: Berlin, Germany, 2017; pp. 34–45.

41. Keselman, L.; Woodfill, J.I.; Grunnet-Jepsen, A.; Bhowmik, A. Intel RealSense Stereoscopic Depth Cameras. arXiv 2017, arXiv:1705.05548.

42. Intel. Intel ® RealSense™ Camera R200 Embedded Infrared Assisted Stereovision 3D Imaging System with Color Camera Product Datasheet R200; Technical Report; 2016; Available online: https://www.mouser.com/pdfdocs/intel_realsense_camera_r200.pdf (accessed on 1 March 2020).

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).