1. Introduction

In recent years, human position tracking technology has developed rapidly and has changed everyday life by offering location information, even in complex scenarios. Location Based Services (LBS) provide accurate tracking of human position and have been widely adopted in a range of fields. Local spatial data are often leveraged to provide specific services to users: LBS can offer continuous monitoring, deliver location-specific information when the user is near a point of interest, or provide specialist services upon request. Users benefit from this technology by being able to find specific destinations, and it has been suggested that LBS are becoming one of the most important sources of revenue for the wireless communications industry [1].

The Global Navigation Satellite System (GNSS) is a widely used position tracking method, but it is not suitable inside confined spaces because the signal strength often deteriorates rapidly once these spaces are entered. Nonetheless, position tracking for indoor environments plays an ever-increasing role in human navigation, further propelled by the rise of smart devices. One example is indoor tracking within the healthcare sector, where the technology can give hospitals an understanding of how best to allocate clinical staff or can assist with patient monitoring. It is therefore immensely valuable to find techniques that can accurately localize humans indoors. Researchers have developed a wide variety of methods, which frequently leverage the Received Signal Strength (RSS) of Wireless Local Area Network (WLAN) [2], Bluetooth low energy (BLE) beacons [3], or ultra wideband (UWB) [4] signals, as well as radio frequency identification (RFID) [5], ultrasound [6], and other modalities. Unfortunately, many of these methods rely on external aiding signals, information, or infrastructure and, thus, they are not applicable where the signals are severely affected or where no specific infrastructure is available. Constructing new infrastructure to facilitate indoor tracking also comes at an added cost, as these frameworks can be expensive to set up and maintain.

In contrast, self-contained position tracking does not rely on any infrastructure and is often based on Pedestrian Dead Reckoning (PDR) methods. These approaches can utilize low-cost on-body sensors, including Inertial Measurement Units (IMUs), accelerometers, gyroscopes, compasses, barometers, magnetometers, anemometers, or on-body cameras, to name just a few (for examples, see [7,8,9,10]). The information from these sensors is subsequently used to calculate an estimate of the current position, thus providing infrastructure-free applicability to users. For example, PDR is suitable during environmental emergencies, when the surroundings might change frequently. It also works well under smoky and dimly lit conditions, which makes it ideal for firefighting or rescue operations that could encounter such environments. It can also be adopted in sports. The trajectories of athletes could be recorded indoors or outdoors and analysed by coaches for performance improvement. The distance that athletes cover during a basketball or football game also reflects the amount of exercise, so fatigue levels could be estimated and used by the coach as a training tool to create a competitive advantage [11] and avoid injuries.

A recent systematic literature review [12] showed that the majority of PDR papers for wearable sensors use sensors positioned on the foot. This is closely followed by publications that use smartphone-based sensors. The waist is the third most researched option, followed by the leg and upper torso. Only two published papers were identified that looked at PDR for head-mounted sensing systems. Most studies adopted foot-mounted sensors because this location makes it easier to detect specific gait features that recur during walking. This gait information can subsequently be exploited by applying the Zero Velocity Update (ZUPT) or Zero Angular Rate Update (ZARU) techniques, which reduce the long-term accumulation of errors. However, when potential users within a healthcare setting were asked where they would like to wear sensor technologies, placement on the foot was rarely mentioned (this location came up only 2% of the time) [13]. It was also shown that small, discreet, and unobtrusive systems were preferred, with many people referring back to everyday objects. This would indicate that objects such as glasses might be more acceptable for monitoring in an everyday environment, whilst smart mouthguards or helmets might be more appropriate within the (contact) sports community.

Glasses are already a necessity for people who need to correct certain visual impairments; it is estimated that there are 1406 million people with near-sightedness globally (22.9% of the world population). This number is predicted to rise to 4758 million (49.8% of the world population) by 2050 [14]. Besides vision correction, people also wear glasses for protection from ultraviolet or blue light, or simply as an accessory. There are already several smart glasses products on the market, such as Google Glass, Vuzix Blade, Epson Moverio BT-300, Solos, and Everysight Raptor. The field of smart glasses also links in well with the growing interest in providing tracking in virtual reality (VR) environments without the need for any technology other than what is integrated into the VR headset.

Mouthguards are essential in contact sports to protect athletes. It was estimated that 40 million mouthguards are sold in the United States each year [15]. With increasing participation in contact sports, the consumption of mouthguards will continue to climb. Studies on smart mouthguards with acoustic sensors for breathing frequency detection [16] have shown the feasibility of sensor-embedded mouthguards. Smart mouthguards may be the future of the mouthguard industry, as they combine physical protection with relevant information for the sports community [17].

Moreover, in humans the stability of the visual field is essential for efficient motor control. The ability to keep the head steady in space allows for control of movement during locomotor tasks [18]. The head therefore provides a very suitable location for sensor placement, as the whole body works to stabilize that particular segment of the system. The head is also where the vestibular system is located, which acts as an inertial guidance system in vertebrates. Placing the artificial positional tracking system near the biological one seems to be a fitting approach.

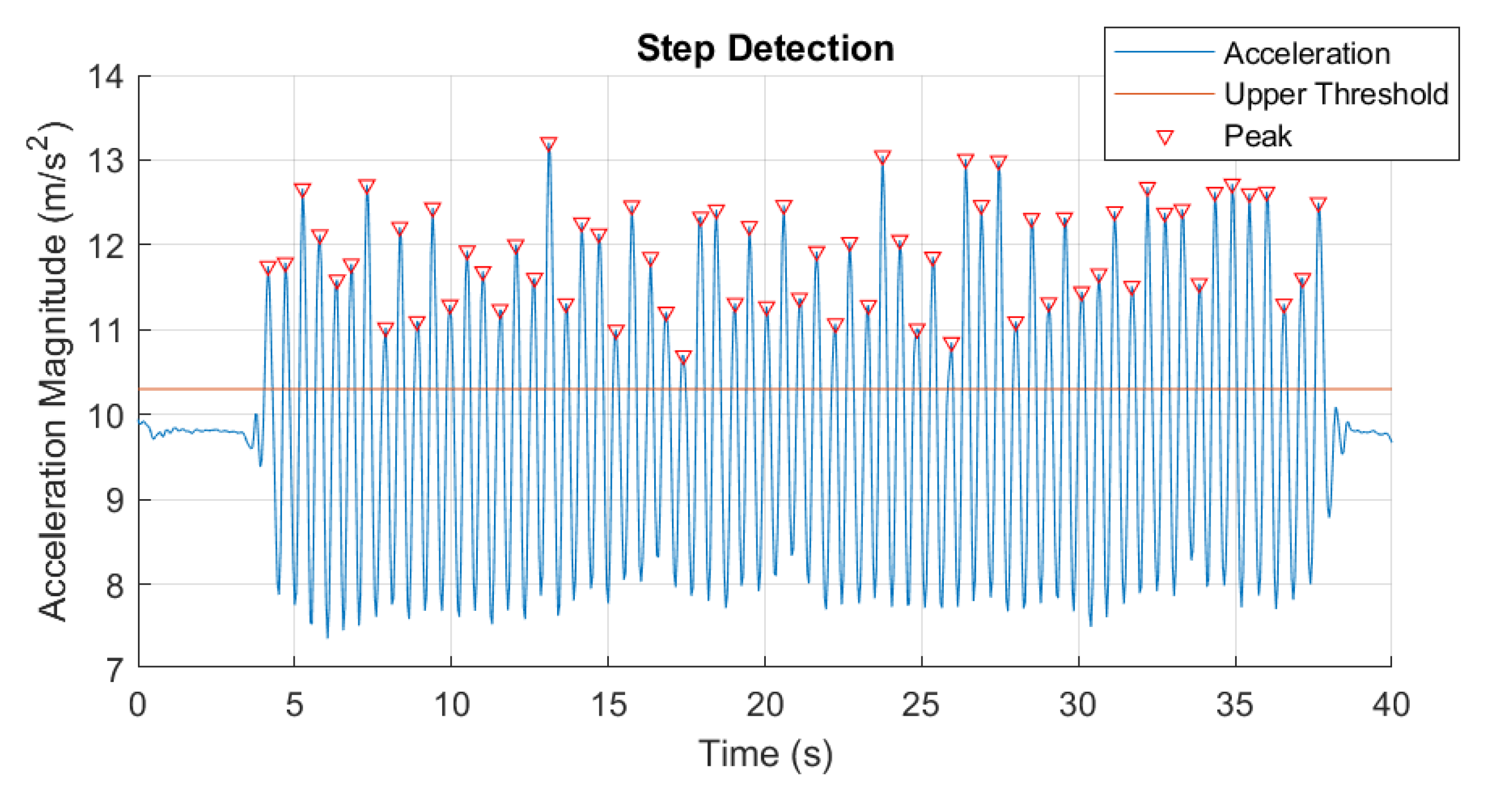

For head-mounted solutions, Hasan and Mishuk proposed an IMU sensor fusion algorithm that utilizes data collected by a three-axis accelerometer and a three-axis gyroscope embedded into smart glasses [19]. The glasses were worn by a subject and steps were detected by applying peak detection to the accelerometer norm. The linear model in (7) is used to obtain a step length estimation. An Extended Kalman Filter (EKF) is then used to fuse the accelerometer and gyroscope data in order to determine the heading direction. A Kalman Filter (KF) is a recursive Bayesian filter, which is known to be an optimal filter for linear Gaussian systems. The KF uses a series of measurements observed over time (containing statistical noise and other inaccuracies) to produce estimates of unknown variables. These estimates tend to be more accurate than those based on a single measurement alone. An output from the filter is obtained by estimating the joint probability distribution over the variables for each given time frame. The EKF is the non-linear version of the KF, in which the state transition and observation matrices are replaced by the Jacobian matrices of the non-linear process and measurement model equations. The state vector that was used in the aforementioned study was:

and the measurement vector was:

where the entries of these vectors are the Euler angles (°), the angular rates (°/s), and the accelerations $a$ (m s$^{-2}$) on each axis. The results reported in [19] showed that the sensor fusion positioning technique achieved an average error between the estimated and real position of 2 m in the most complex case, when the monitoring time frame was less than 2 min.
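The step detection stage described above (peak detection on the accelerometer norm) can be illustrated with a minimal sketch. This is not the implementation from [19]; the function name `detect_steps`, the amplitude threshold `min_peak`, and the minimum spacing `min_interval` are hypothetical choices made only for illustration.

```python
import math


def detect_steps(accel, fs, min_peak=10.5, min_interval=0.3):
    """Return the sample indices at which a step is detected.

    accel: list of (ax, ay, az) tuples in m/s^2; fs: sampling rate in Hz.
    min_peak (m/s^2) and min_interval (s) are illustrative thresholds,
    not values taken from [19].
    """
    # Orientation-independent magnitude of the acceleration.
    norm = [math.sqrt(ax * ax + ay * ay + az * az) for ax, ay, az in accel]
    min_gap = int(min_interval * fs)
    steps = []
    for i in range(1, len(norm) - 1):
        # A local maximum that exceeds the amplitude threshold...
        is_peak = norm[i] > norm[i - 1] and norm[i] >= norm[i + 1]
        if is_peak and norm[i] > min_peak:
            # ...and is far enough from the previously accepted step.
            if not steps or i - steps[-1] >= min_gap:
                steps.append(i)
    return steps


# Synthetic signal: gravity plus a 2 Hz vertical oscillation, 3 s at 50 Hz.
fs = 50
signal = [(0.0, 0.0, 9.81 + 2.0 * math.sin(2 * math.pi * 2.0 * n / fs))
          for n in range(3 * fs)]
print(len(detect_steps(signal, fs)))  # 6 steps for this synthetic signal
```

In practice the thresholds would have to be tuned per sensor and placement, since head-mounted signals are more attenuated than foot-mounted ones.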

Zhu et al. [20] utilized a hybrid step length model and a new azimuth estimation method for PDR. Steps were detected with either peak detection or a positive zero-crossing detection algorithm. The hybrid step length model is given in (1),

where $a$ and $b$ are coefficients, $f$ is the walking frequency (Hz), and $v$ is the variance of the acceleration. The remaining terms are the maximum and minimum acceleration in one step (m s$^{-2}$), the Pearson correlation coefficient between the step length and the walking frequency, and the Pearson correlation coefficient between the step length and the variance of the acceleration.

The heading information was generated from the hybrid value of the azimuth that was estimated by gyroscopes and magnetometers:

where the two heading angles (°) are those estimated by the gyroscope and by the magnetometer, each is weighted by its own coefficient, and the result is the hybrid heading estimation (°). In their study, they found a maximum error of 1.44 m and a mean error of 0.62 m.
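Read together with the later description of this method as a proportional sum of the gyroscope and magnetometer headings, the hybrid heading can be summarised as a weighted sum. The symbols below ($\theta_g$, $\theta_m$, $k_g$, $k_m$, $\theta_h$) are placeholders chosen for this sketch and may differ from the notation used in [20]:

```latex
\theta_h = k_g\,\theta_g + k_m\,\theta_m
```

Here $\theta_g$ and $\theta_m$ stand for the gyroscope-derived and magnetometer-derived headings, $k_g$ and $k_m$ for the manually chosen coefficients, and $\theta_h$ for the hybrid heading.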

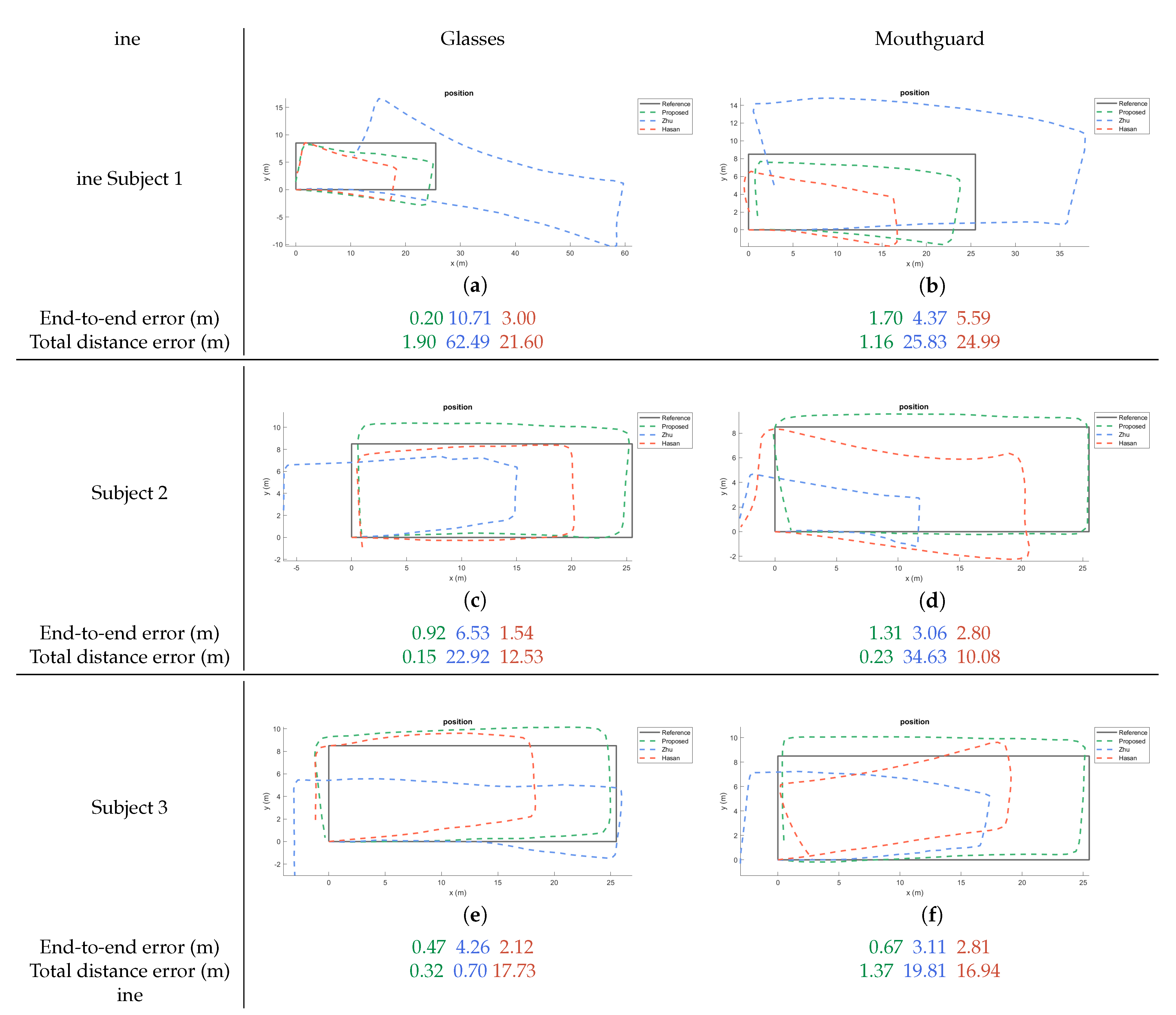

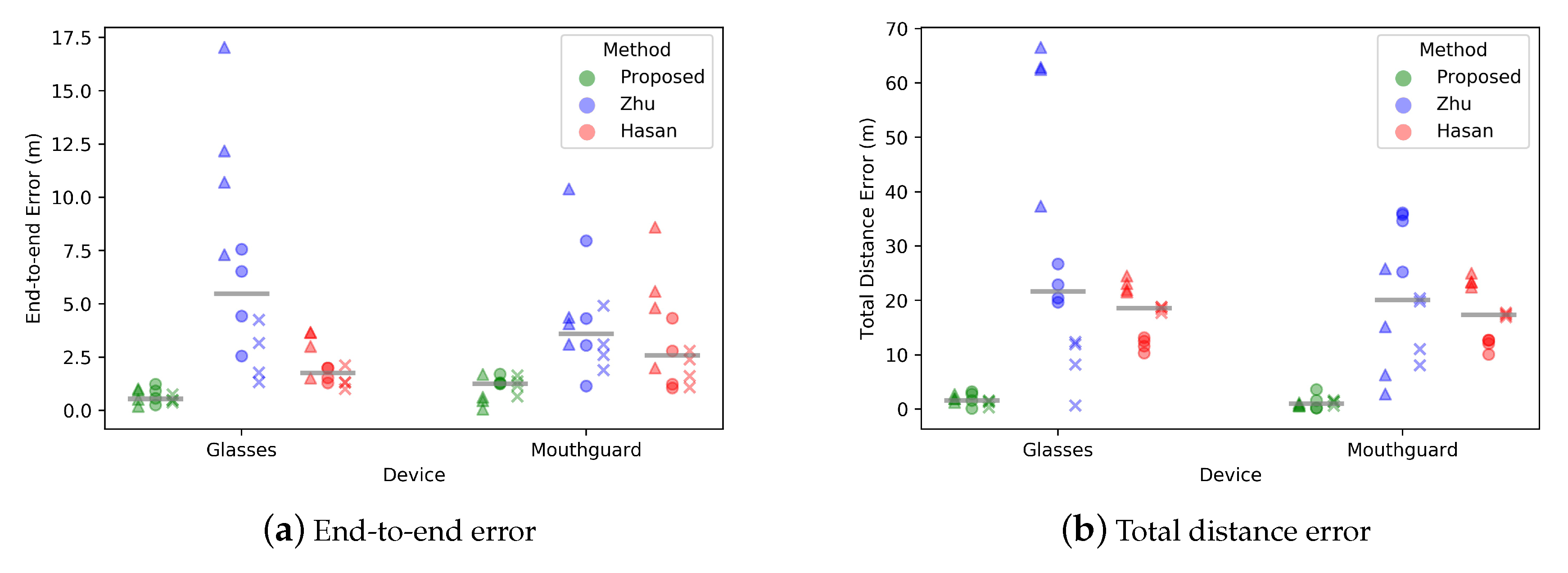

We propose exploring the performance of these two PDR methods, which were developed specifically for head-mounted sensors [19,20], alongside a novel algorithm based on peak detection, the Mahony algorithm, and the Weinberg step length model. The three methods will be compared, and the average end-to-end error as well as the total distance error will be recorded. The proposed PDR method has a higher accuracy than the two currently available methods. Its performance is consistent across different sensors and placements, which verifies its robustness. This method provides a suitable approach across various hardware platforms and, thus, supports the potential creation of truly innovative smart head-mounted systems in the long term.
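To make the step length and evaluation terms concrete, the following is a minimal sketch of the Weinberg model combined with a dead-reckoning position update. It is an illustration under stated assumptions, not the authors' implementation: the calibration constant `k`, the 0.7 m step length, and the 7 m by 3.5 m closed path are hypothetical values, and the heading is assumed to be measured clockwise from north.

```python
import math


def weinberg_step_length(a_max, a_min, k=0.5):
    """Weinberg model: step length = k * (a_max - a_min) ** (1/4).

    a_max and a_min are the maximum and minimum acceleration within one
    step (m/s^2). k is a per-user calibration constant; 0.5 is only a
    placeholder.
    """
    return k * (a_max - a_min) ** 0.25


def pdr_update(position, step_length, heading_rad):
    """Advance an (east, north) position by one step along the heading."""
    east, north = position
    return (east + step_length * math.sin(heading_rad),
            north + step_length * math.cos(heading_rad))


def walk(steps, start=(0.0, 0.0)):
    """Accumulate (step_length, heading) pairs into a final position
    and a total travelled distance."""
    position = start
    total = 0.0
    for length, heading in steps:
        position = pdr_update(position, length, heading)
        total += length
    return position, total


# Hypothetical closed rectangular path walked with 0.7 m steps.
steps = ([(0.7, 0.0)] * 10 + [(0.7, math.pi / 2)] * 5 +
         [(0.7, math.pi)] * 10 + [(0.7, 1.5 * math.pi)] * 5)
end, total = walk(steps)

# For a closed path, the end-to-end error is the distance between the
# estimated end point and the start; the total distance error compares
# the accumulated step lengths with the true path length (21 m here).
end_to_end_error = math.hypot(end[0], end[1])
total_distance_error = abs(total - 21.0)
```

With ideal step lengths and headings both errors vanish; with real sensor data they capture heading drift and step length bias, respectively.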

4. Discussion

The results show that both the end-to-end error and the total distance error were lowest, in terms of central tendency, for the newly proposed method. Extra tests were carried out with a different sensor platform (a smartphone) to provide a further preliminary cross-platform comparison. The data were collected using a Huawei P30 smartphone, which contains a three-axis accelerometer and gyroscope (ICM-20690) produced by TDK InvenSense and a three-axis magnetometer (AK09918) from Asahi Kasei Microdevices. The smartphone was placed on the cranial part of the head and was kept firmly in place using adaptable straps. Only one subject was tested under this head-mounted smartphone condition. All three methods performed comparably well for the heading estimation under the smartphone condition (see Figure 6), which is likely due to the higher accuracy of the IMU in the smartphone as compared to the MCU. However, when a cheaper IMU is used (such as the one attached to the glasses and mouthguard), the errors of Hasan’s method and Zhu’s method quickly become larger (Figure 4). The newly proposed method in this article seems rather robust (in terms of errors), even when the sensor platform changes, which indicates that a broader range of hardware can be accommodated and that the system is likely to perform relatively stably even under less than optimal operating conditions.

For the heading estimation, the error of Zhu’s method was heavily influenced by sensor type and layout. The main reason for the lack of robustness in this algorithm is likely that the noise from the sensors is not well compensated for. The hybrid heading estimation is simply the proportional sum of the raw gyroscope integration and the magnetic direction, with the proportional gain being set manually. Although the method starts integrating the gyroscope only when the Euclidean norm of the gyroscope data is above a threshold, the bias in the gyroscope data still remains and, thus, so does the integration error. The heading estimation from the magnetometer is also still a problem, because the geomagnetic field is not always uniform [31] and it can easily be disturbed by hard or soft magnetic interference. In addition, the oscillation during walking creates non-negligible high-frequency noise in the heading estimation.
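The effect of a residual gyroscope bias on such a proportional-sum heading can be sketched as follows. This is a simplified illustration, not Zhu's implementation: the weights `k_gyro` and `k_mag`, the threshold value, and the assumption that the sensor z-axis is vertical (so that only the z rate is integrated) are hypothetical, and angle wrap-around is ignored.

```python
import math


def hybrid_heading(gyro, mag_heading, dt, k_gyro=0.9, k_mag=0.1,
                   threshold=0.05):
    """Blend an integrated gyroscope heading with a magnetometer heading.

    gyro: list of (gx, gy, gz) rates in rad/s; mag_heading: list of
    magnetometer headings in rad; dt: sample period in s.
    All default parameter values are illustrative assumptions.
    """
    gyro_heading = mag_heading[0]
    fused = []
    for (gx, gy, gz), mag in zip(gyro, mag_heading):
        # Integrate only when the Euclidean norm exceeds the threshold.
        if math.sqrt(gx * gx + gy * gy + gz * gz) > threshold:
            gyro_heading += gz * dt  # raw integration, bias included
        fused.append(k_gyro * gyro_heading + k_mag * mag)
    return fused


# A stationary sensor with a 0.1 rad/s bias (above the threshold) drifts,
# whereas a 0.02 rad/s bias (below the threshold) is suppressed entirely.
n, dt = 1000, 0.01
drifting = hybrid_heading([(0.0, 0.0, 0.1)] * n, [0.0] * n, dt)
suppressed = hybrid_heading([(0.0, 0.0, 0.02)] * n, [0.0] * n, dt)
```

The threshold therefore only hides bias while the head is nearly still; whenever genuine motion pushes the norm above the threshold, the bias is integrated together with the true rotation.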

Regarding the step length estimation, Hasan’s and Zhu’s methods yield larger errors for a range of reasons. Hasan’s algorithm used a linear model with coefficient values adopted from another study, which obtained its parameters from 4000 steps of 23 different people [24]. A linear regression based on these data provides a general model, which does not necessarily scale well across individuals, especially if the conditions, subjects, or instructions differ from those of the original database. Generating specific parameters for each individual is therefore a key factor in obtaining more accurate step length estimations. In Zhu’s method, the step length estimation model combines information on step frequency, acceleration variance, and the maximum and minimum accelerations in one step. This is a more scalable solution, which should yield more accurate estimates. Nonetheless, this method only performs well when the conditions and sensor placement are exactly the same between the step length estimation session and the subsequent tracking sessions. If changes occur between these sessions, then the step length estimate might no longer be accurate enough under the new conditions. Even when only the temperature or the sensor changes, the acceleration variance and the maximum and minimum accelerations can start to vary because of the changing noise or the different sensor properties [32]. This can lead to contrasting estimations, even when all other conditions remain constant.

The attitude representation in Hasan’s method and in the proposed method of this study is notably different. The former uses Euler angles in the EKF, which consist of three rotation angles around three axes. The latter adopts quaternions within the Mahony algorithm. Euler angles are easier for humans to interpret, but they have several disadvantages. These include the ambiguity in the rotation sequence of the axes and the possible occurrence of gimbal lock, which leads to the loss of one degree of freedom. In contrast, expressing rotations as unit quaternions has several advantages: concatenating rotations is computationally faster and numerically more stable; extracting the angle and axis of rotation is simpler; interpolation is more straightforward; and quaternions do not suffer from gimbal lock as Euler angles do [33]. Although the EKF with Euler angles in Hasan’s method performs well, it would be more stable if quaternions were used.
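Two of the quaternion properties mentioned above, concatenating rotations and extracting the axis and angle, can be illustrated with a short sketch. The helper names are hypothetical, and the (w, x, y, z) ordering and the ZYX Euler convention for yaw are assumptions of this example rather than conventions stated in the paper.

```python
import math


def quat_multiply(p, q):
    """Hamilton product of two quaternions given as (w, x, y, z)."""
    pw, px, py, pz = p
    qw, qx, qy, qz = q
    return (pw * qw - px * qx - py * qy - pz * qz,
            pw * qx + px * qw + py * qz - pz * qy,
            pw * qy - px * qz + py * qw + pz * qx,
            pw * qz + px * qy - py * qx + pz * qw)


def quat_from_axis_angle(axis, angle):
    """Unit quaternion for a rotation of `angle` rad about `axis`."""
    ax, ay, az = axis
    n = math.sqrt(ax * ax + ay * ay + az * az)
    s = math.sin(angle / 2.0) / n
    return (math.cos(angle / 2.0), ax * s, ay * s, az * s)


def quat_to_axis_angle(q):
    """Recover (axis, angle) from a unit quaternion."""
    w, x, y, z = q
    w = max(-1.0, min(1.0, w))  # guard acos against rounding
    angle = 2.0 * math.acos(w)
    s = math.sqrt(max(0.0, 1.0 - w * w))
    if s < 1e-12:
        return (1.0, 0.0, 0.0), 0.0  # no rotation: axis is arbitrary
    return (x / s, y / s, z / s), angle


def yaw_from_quat(q):
    """Heading (rotation about z, ZYX convention) in radians."""
    w, x, y, z = q
    return math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))


# Concatenating two 45 degree turns about the vertical axis gives 90 degrees.
q45 = quat_from_axis_angle((0.0, 0.0, 1.0), math.pi / 4)
q90 = quat_multiply(q45, q45)
axis, angle = quat_to_axis_angle(q90)
heading = yaw_from_quat(q90)
```

Concatenation is a single product of four-element vectors, and the axis and angle fall out of the components directly, without choosing a rotation sequence.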

Hasan’s and the newly proposed method showed high accuracy in the heading estimations, demonstrating the effectiveness of the EKF and Mahony’s algorithm in attitude estimation. This is reflected by the fact that they are already among the most popular algorithms in this area. In the EKF, there are usually two parameters that need to be set and tuned: the process noise covariance matrix $Q$ and the measurement noise covariance matrix $R$ [34]. In Mahony’s algorithm, there are also two parameters that need tuning: the proportional gain $K_p$ and the integral gain $K_i$. In both of these algorithms, the parameters are found by tuning until the best results are achieved. This is, of course, time consuming and there is no guarantee of optimality. More importantly, when the sensor position changes, for example, from the glasses to the mouthguard, the parameters need retuning. More research on the automatic tuning of parameters will be useful, as it can help with the creation of the next generation of algorithms.
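The role of the two Mahony gains can be seen in a minimal sketch of a single filter update that uses only gyroscope and accelerometer data. This follows the commonly published form of the Mahony complementary filter and is not the code used in this study; the gain values `kp` and `ki`, the function names, and the (w, x, y, z) quaternion ordering are assumptions.

```python
import math


def mahony_update(q, integral, gyro, accel, dt, kp=1.0, ki=0.1):
    """One Mahony-style update; returns the new (quaternion, integral).

    q: unit quaternion (w, x, y, z); integral: accumulated integral
    feedback (x, y, z); gyro in rad/s; accel in any consistent unit.
    """
    w, x, y, z = q
    gx, gy, gz = gyro
    ax, ay, az = accel
    ix, iy, iz = integral
    norm = math.sqrt(ax * ax + ay * ay + az * az)
    if norm > 0.0:
        ax, ay, az = ax / norm, ay / norm, az / norm
        # Gravity direction predicted from the current orientation.
        vx = 2.0 * (x * z - w * y)
        vy = 2.0 * (w * x + y * z)
        vz = w * w - x * x - y * y + z * z
        # Error: cross product of measured and predicted gravity.
        ex = ay * vz - az * vy
        ey = az * vx - ax * vz
        ez = ax * vy - ay * vx
        # The integral gain accumulates the error (compensates gyro bias).
        ix += ki * ex * dt
        iy += ki * ey * dt
        iz += ki * ez * dt
        # The proportional gain corrects the rate directly.
        gx += kp * ex + ix
        gy += kp * ey + iy
        gz += kp * ez + iz
    # Integrate q_dot = 0.5 * q * (0, gx, gy, gz), then renormalise.
    nw = w + 0.5 * dt * (-x * gx - y * gy - z * gz)
    nx = x + 0.5 * dt * (w * gx + y * gz - z * gy)
    ny = y + 0.5 * dt * (w * gy - x * gz + z * gx)
    nz = z + 0.5 * dt * (w * gz + x * gy - y * gx)
    n = math.sqrt(nw * nw + nx * nx + ny * ny + nz * nz)
    return (nw / n, nx / n, ny / n, nz / n), (ix, iy, iz)


def roll_from_quat(q):
    w, x, y, z = q
    return math.atan2(2.0 * (w * x + y * z), 1.0 - 2.0 * (x * x + y * y))


def yaw_from_quat(q):
    w, x, y, z = q
    return math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))


def run(q, gyro, accel, dt, n_steps, kp=1.0, ki=0.1):
    """Apply the same gyro/accel sample repeatedly (for illustration)."""
    integral = (0.0, 0.0, 0.0)
    for _ in range(n_steps):
        q, integral = mahony_update(q, integral, gyro, accel, dt, kp, ki)
    return q
```

As a usage example, starting from a 20° roll error with a level, stationary accelerometer reading, `run((math.cos(math.radians(10)), math.sin(math.radians(10)), 0.0, 0.0), (0.0, 0.0, 0.0), (0.0, 0.0, 9.81), 0.01, 3000)` pulls the roll back towards zero. How quickly it does so depends on the chosen gains, which is why retuning is needed when the sensor placement changes.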

The processing of the recorded data in this study was done off-line. Nonetheless, many applications will need online PDR to provide real-time navigation or localisation. The PDR algorithms should therefore be assessed for the feasibility of online implementation, and preliminary estimates of running time provide some insight into this. The running time of each method is shown in Table 2. Hasan’s method takes much more time than the other two methods, because the Jacobian calculation in the EKF is complex and time-consuming. The mean running time per sample is 0.0661 s, which indicates that the achievable sampling frequency would be limited to roughly 15 Hz. These empirical run-time estimates are of limited value because they depend on the platform on which they were measured, whereas the algorithms themselves are platform-independent. A more theoretical assessment is therefore needed to compare the complexity of these algorithms, which is beyond the scope of this paper.
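The sampling frequency limit follows directly from the per-sample running time, assuming that samples are processed strictly one after another and that no other overhead is present (the symbol names are chosen for this illustration):

```latex
f_{\max} = \frac{1}{t_{\text{sample}}} = \frac{1}{0.0661\ \text{s}} \approx 15.1\ \text{Hz}
```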

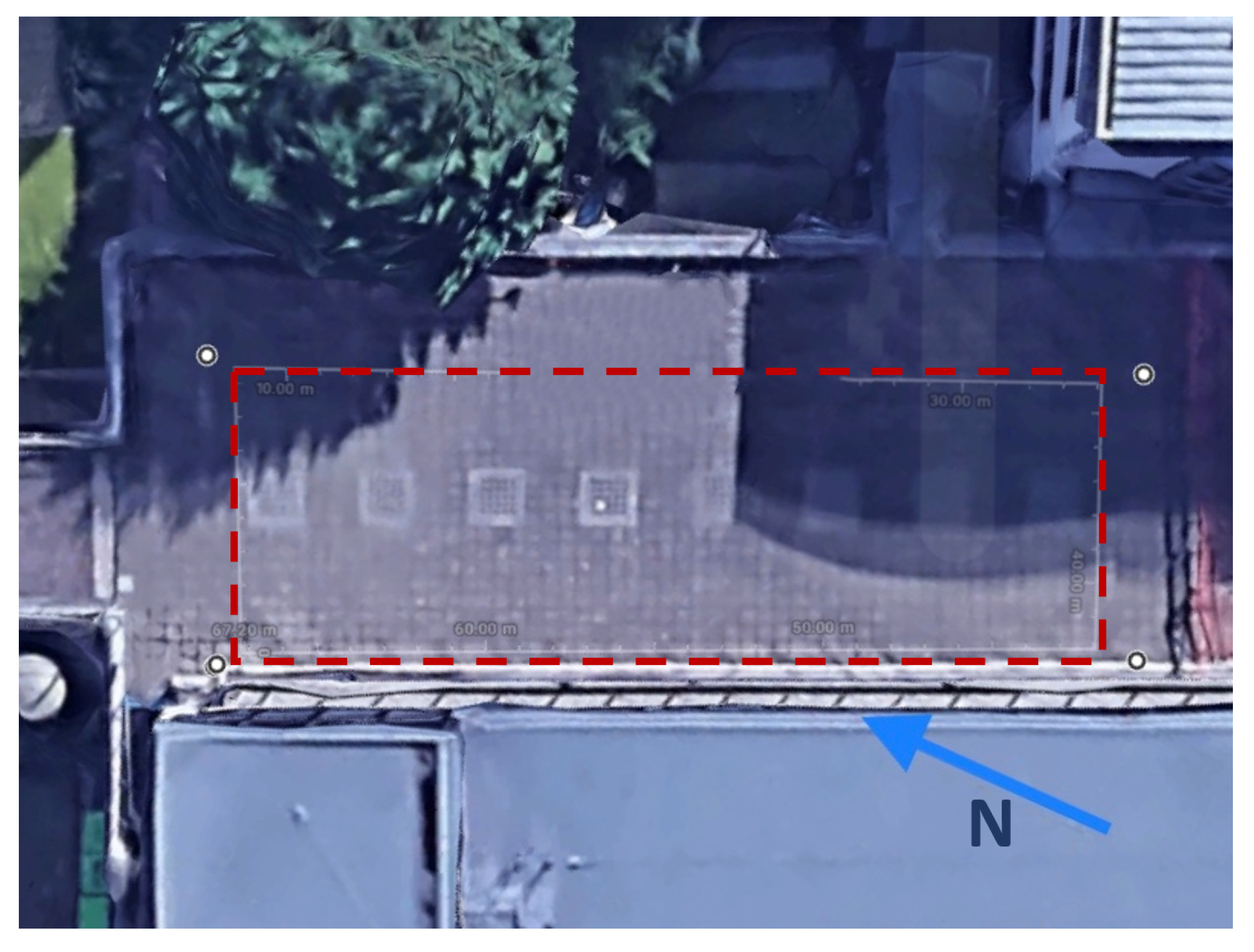

The subjects were instructed to follow the reference path shown in Figure 4 and Figure 6. However, it is likely that there was some deviation from the ideal path, especially during turning, as the subjects were asked to walk in their own preferred manner. Nonetheless, all of the subjects always started and stopped in the same location. Caution needs to be taken regarding the generalizability of the results. Different environmental conditions, such as the pathways taken or the surrounding objects, can yield different outcomes. A simple walking pattern (a rectangle) was selected in this study in order to minimise trial variability between subjects and sensor placements. It should be noted that most studies use only a single person in their testing [12], whereas this study included multiple subjects. It will be useful for the field to further expand the number of subjects, as well as the environmental conditions (e.g., walking surface), during experimental testing in order to increase external validity.

The method presented in this paper was the best performing algorithm across the different sensor locations. This new technique offers an approach that can be generalized across sensors and smart head-mounted equipment, such as helmets, hats, glasses, mouthguards, dentures, or earphones. A high-performing general algorithm for head-based systems can provide new opportunities for a range of industries, from healthcare to entertainment.

The current experiment did not collect synchronised data from the different locations. Thus, any assessment of the relationship between these locations needs to take into account the subject variability between trials. Nonetheless, the task at hand was relatively simple to complete and variability is likely to be limited within a subject. Information about how strongly these placements are correlated allows for further insight in terms of the potential reciprocal nature of these systems. The Spearman’s rank correlation coefficient (rs) was calculated to assess this, as a Pearson correlation is susceptible to outliers in small sample sizes. The coefficients were determined between the glasses and mouthguard condition for the proposed (rs = 0.4261, p = 0.0390), Zhu’s (rs = 0.6435, p = 0.0009) and Hasan’s (rs = 0.9920, p = 3.0338 ) method. This showed a positive correlation between the locations for all methods, ranging from moderate for the proposed method to very strong for Hasan’s method. This indicates that these systems can potentially act interchangeably. More work is needed in order to further confirm these preliminary findings regarding the association between different head-mounted sensor positions.
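The reason for preferring a rank-based coefficient with small samples can be illustrated with a short sketch that computes Spearman’s coefficient as the Pearson correlation of the ranks. The data below are hypothetical and are not results from this study; the function names are likewise chosen only for illustration.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; assumes neither input is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def spearman(x, y):
    """Spearman's rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical errors (m) at two sensor locations, with one outlier.
errors_a = [1.0, 2.0, 3.0, 4.0, 100.0]
errors_b = [2.0, 4.0, 6.0, 8.0, 9.0]
r_pearson = pearson(errors_a, errors_b)
r_spearman = spearman(errors_a, errors_b)
```

In this made-up example the ordering of the two series is identical, so the rank-based coefficient is unaffected by the single extreme value, whereas the Pearson coefficient is pulled well below 1.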