A Walking-in-Place Method for Virtual Reality Using Position and Orientation Tracking

Abstract

1. Introduction

2. Related Work

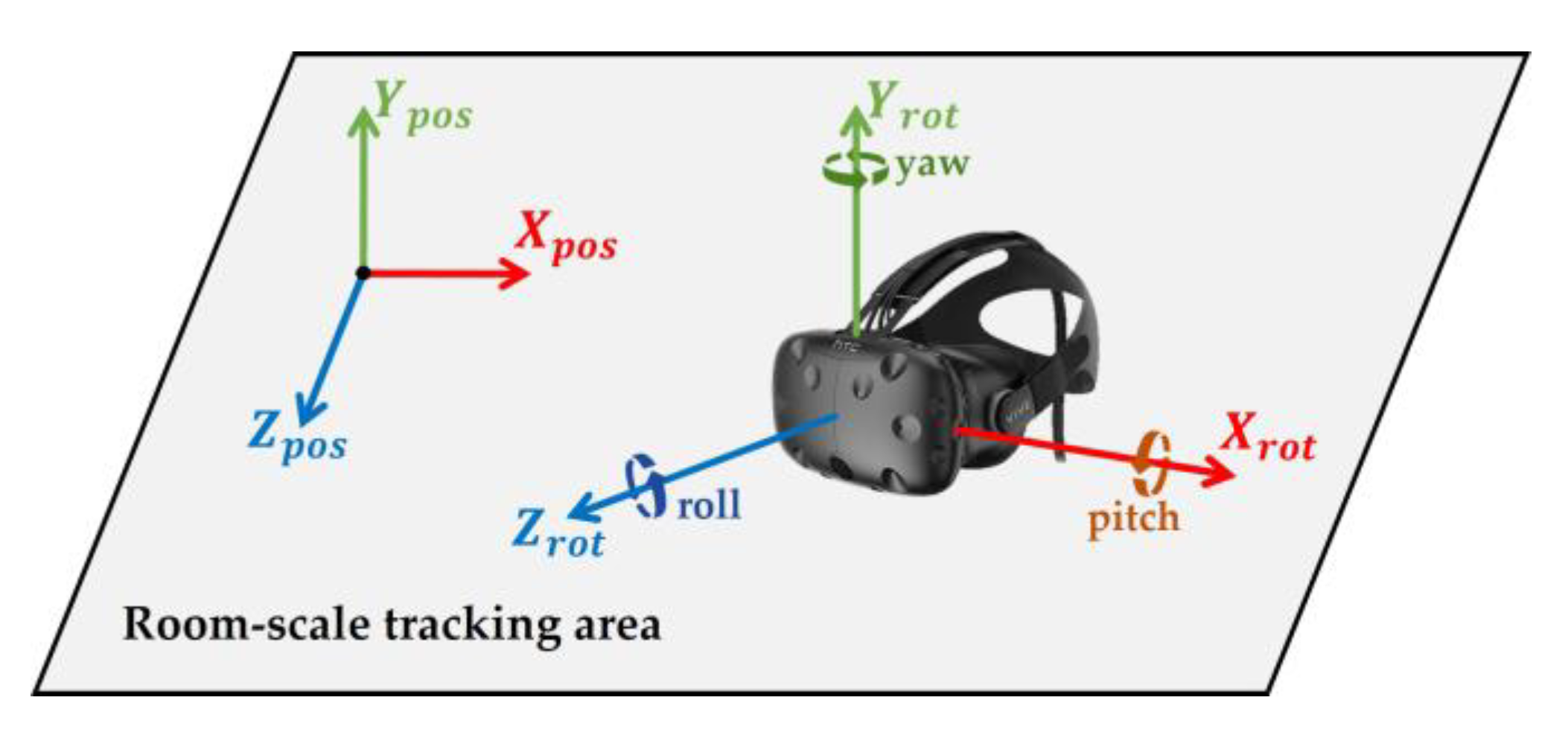

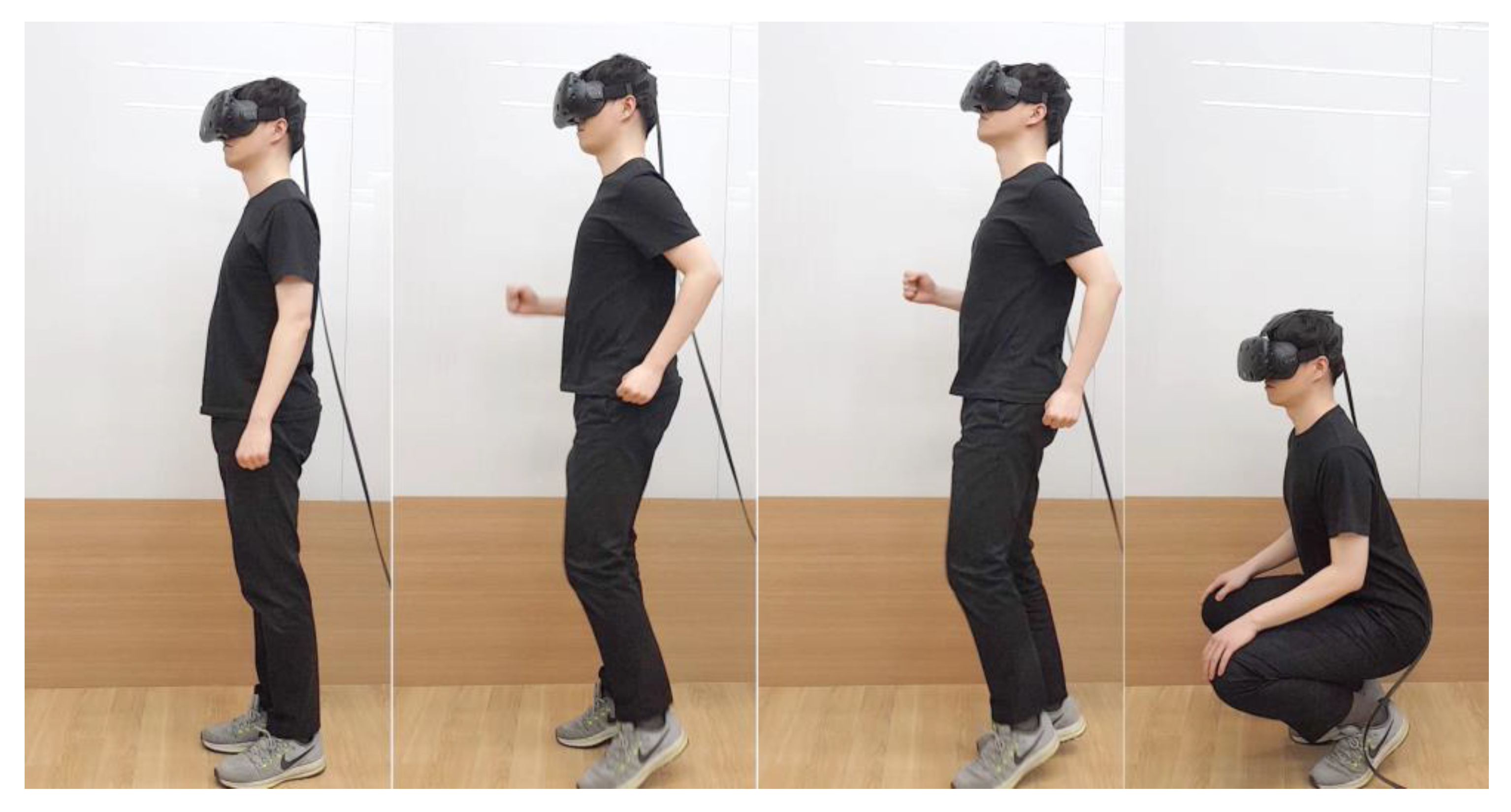

3. Methods

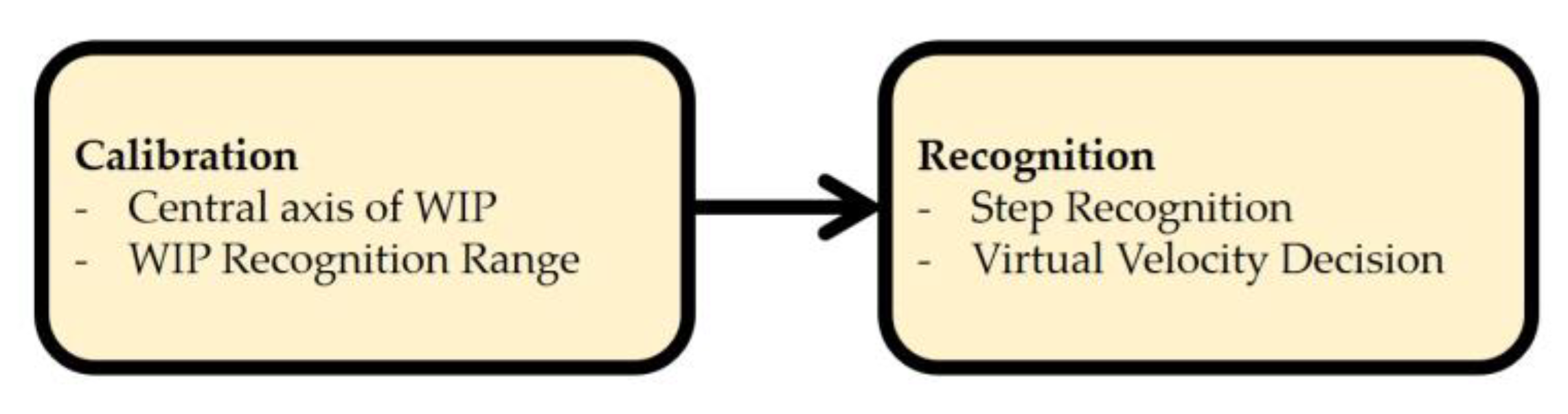

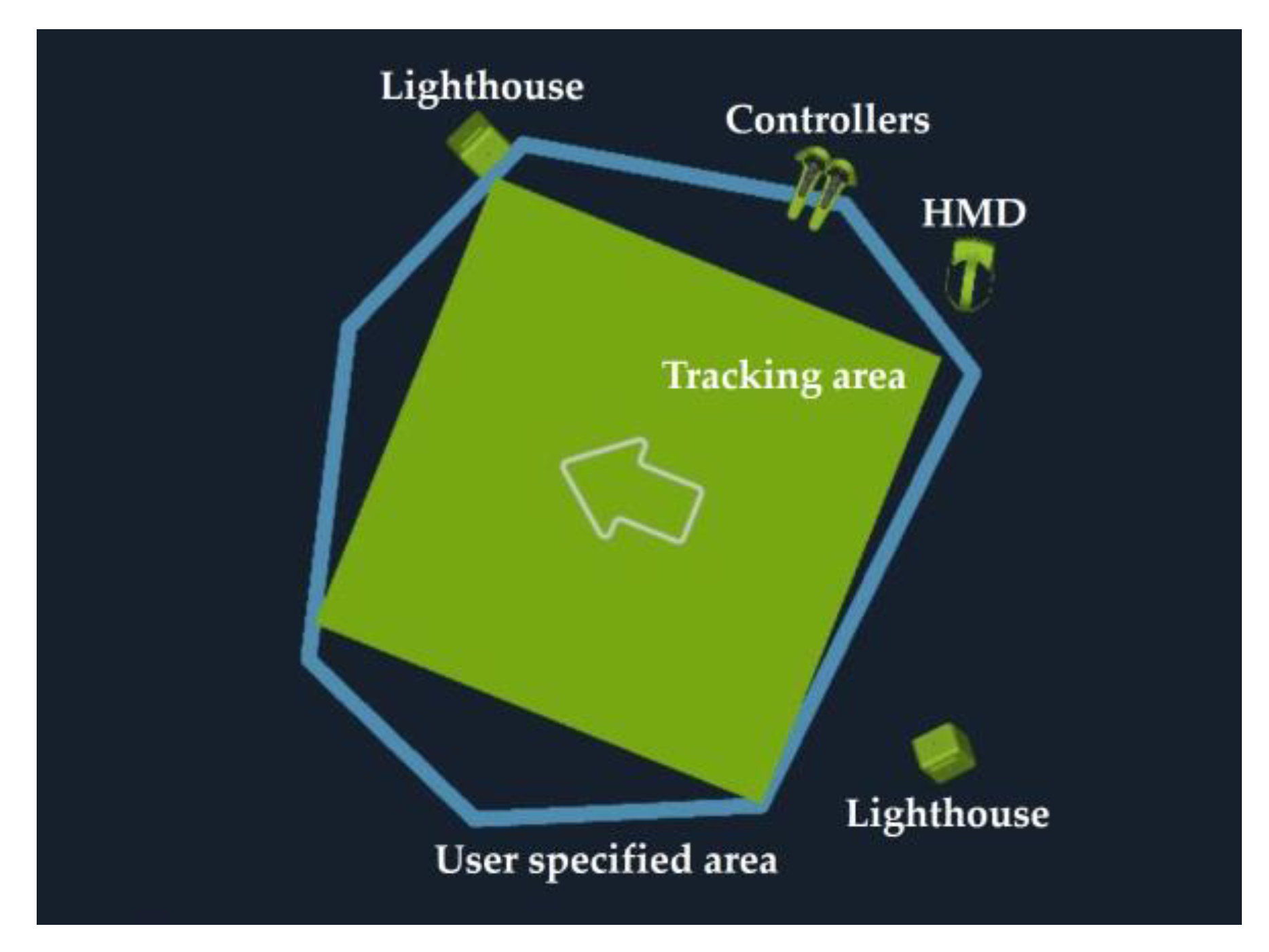

3.1. Calibration

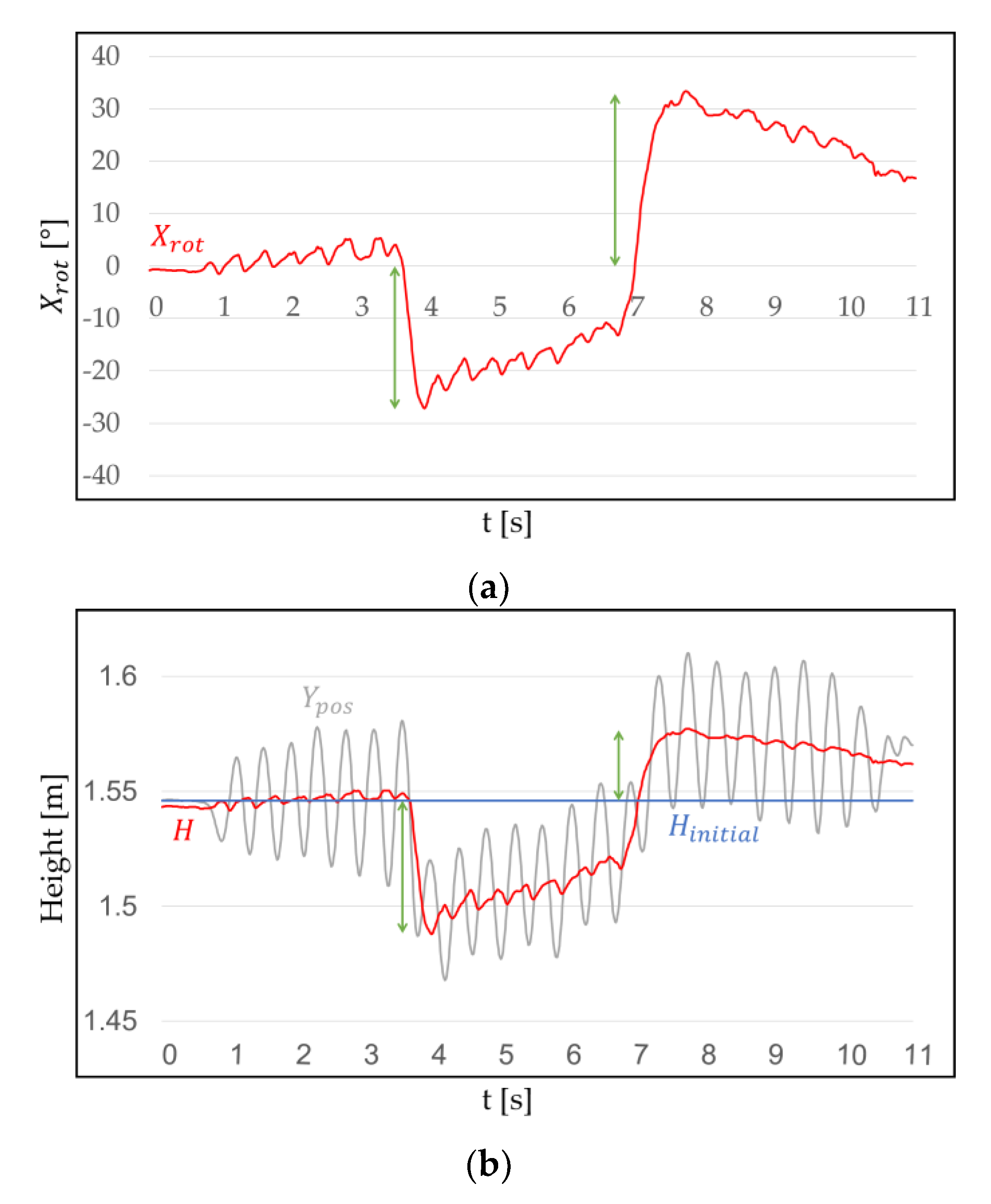

3.1.1. Central Axis of WIP

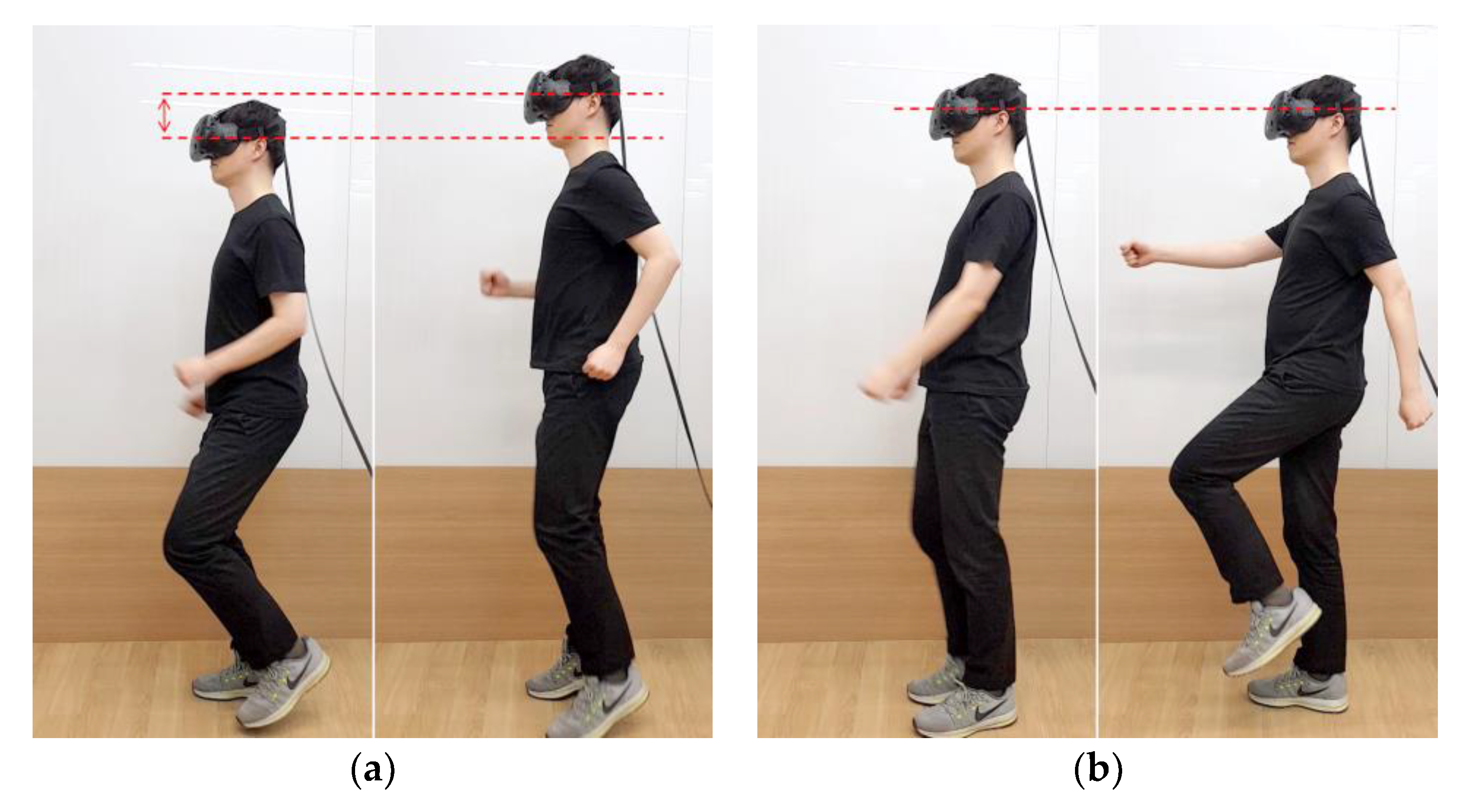

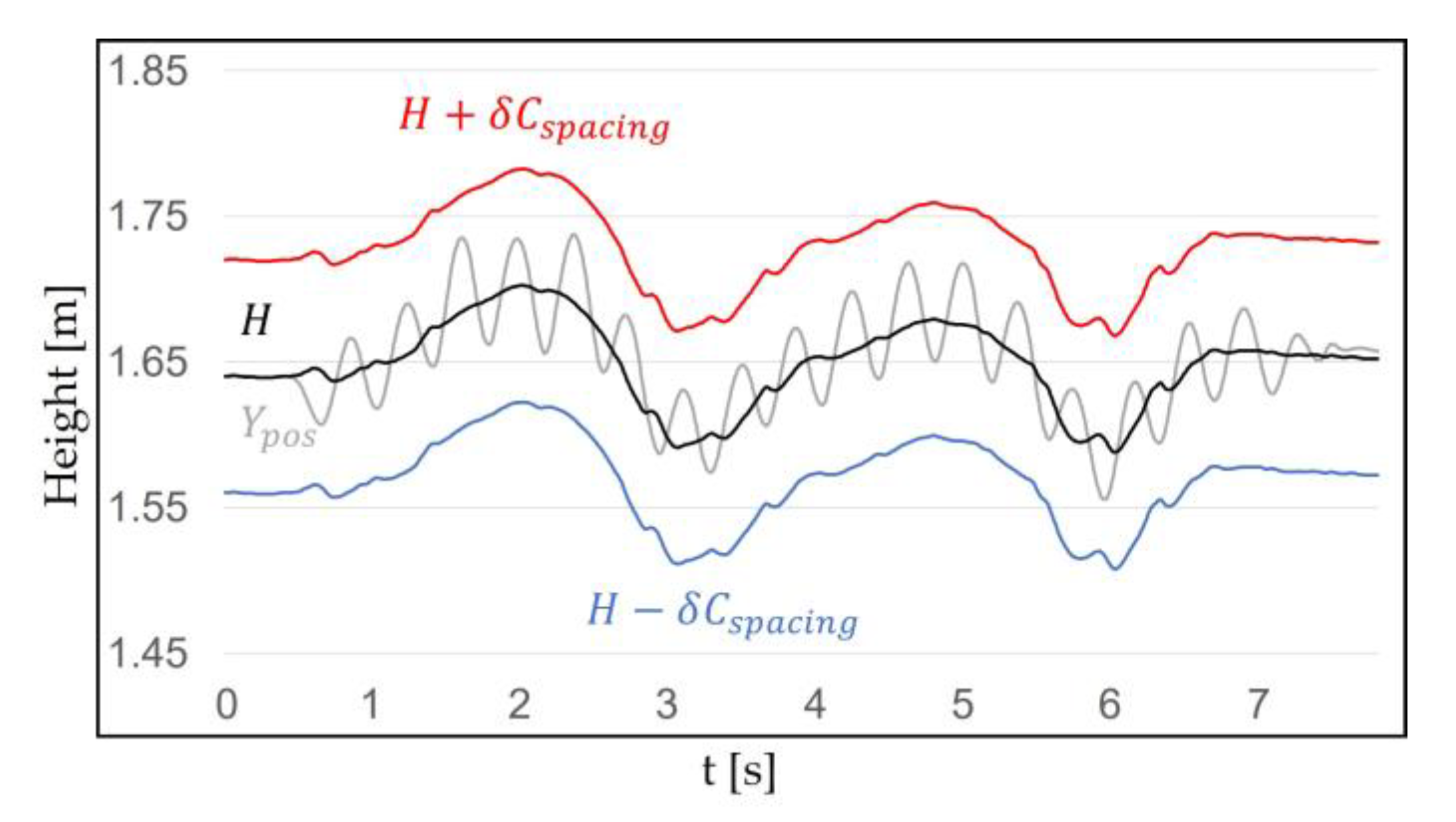

3.1.2. Walking in Place Recognition Range

3.2. Recognition

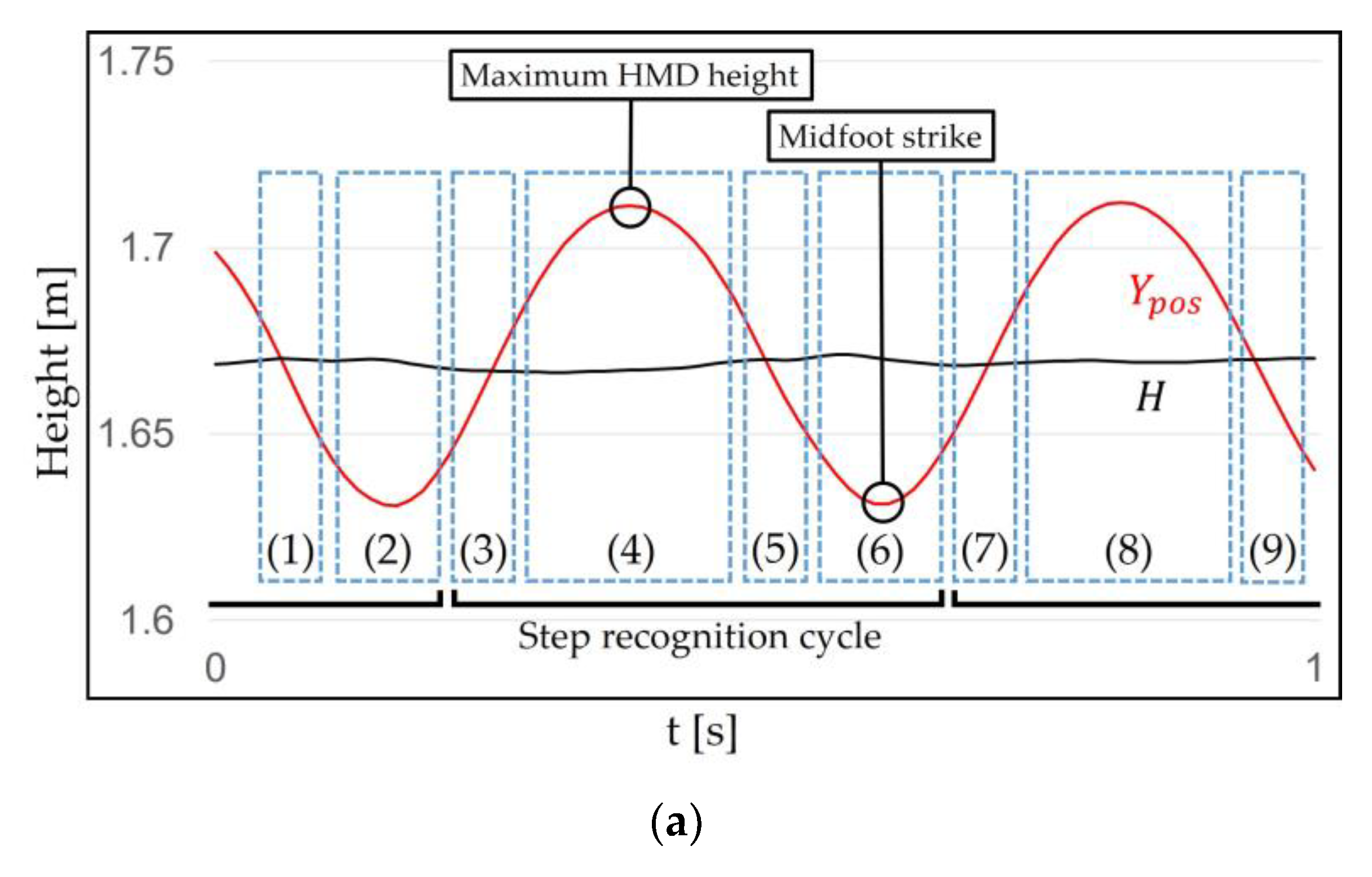

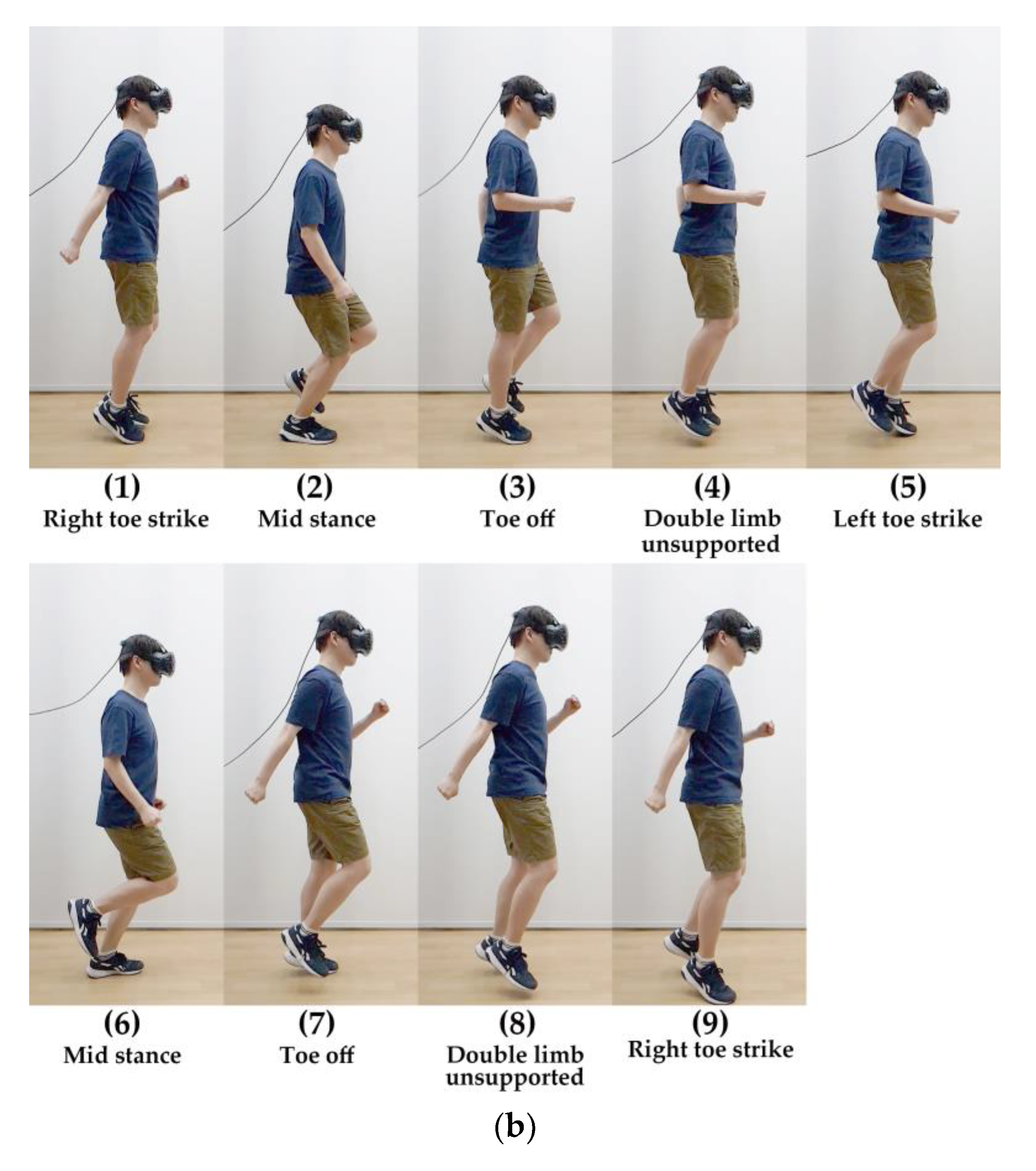

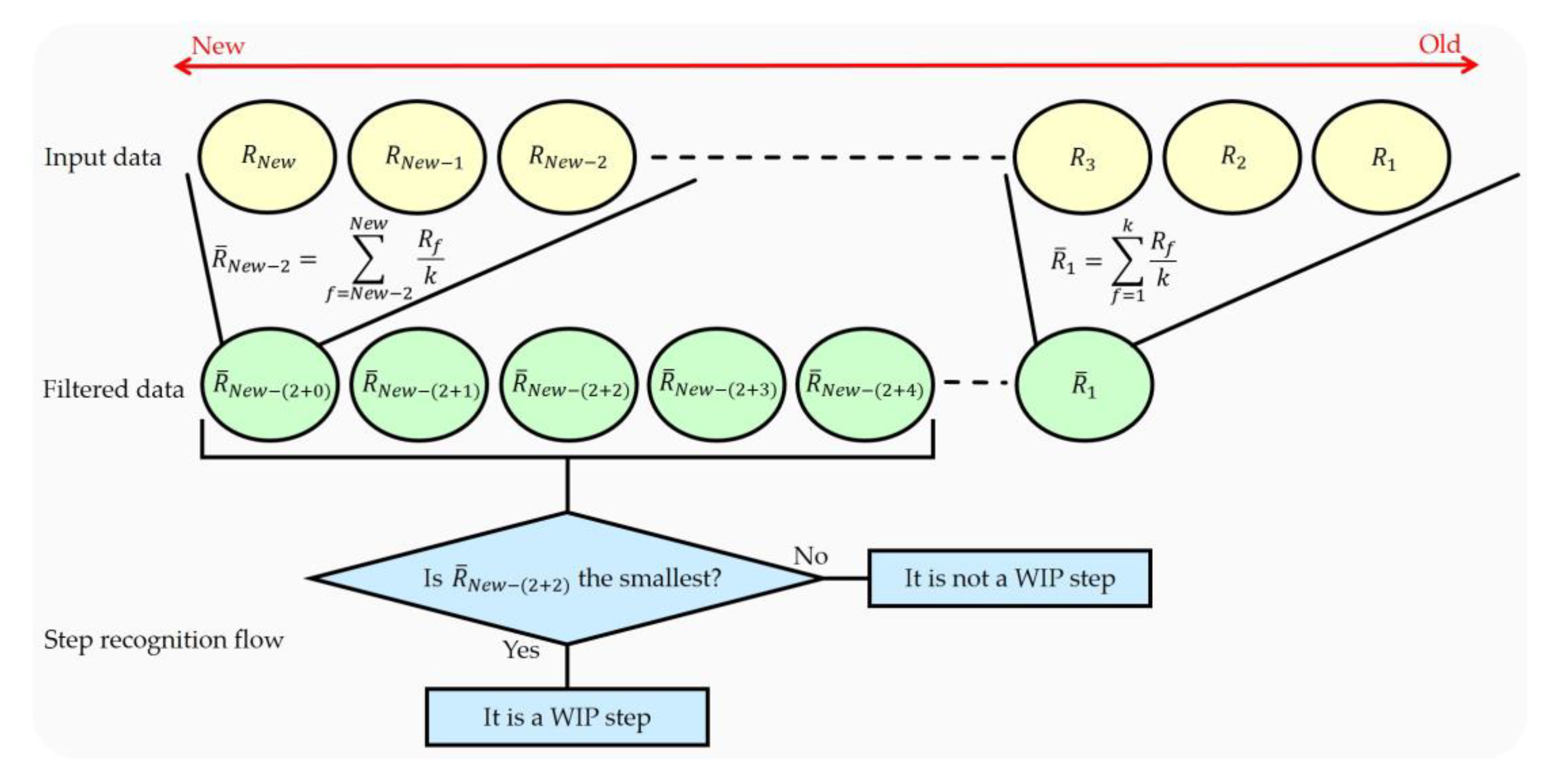

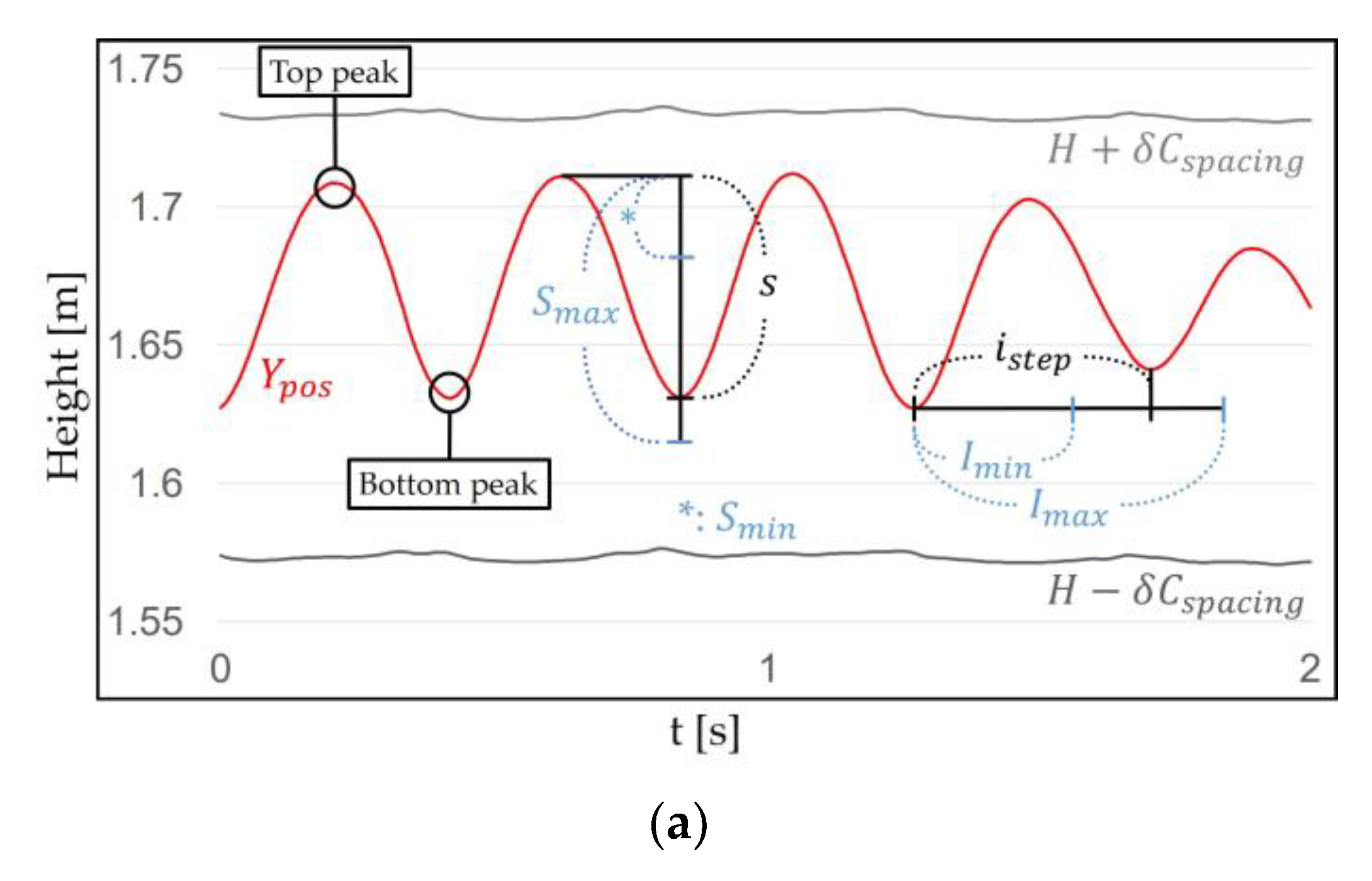

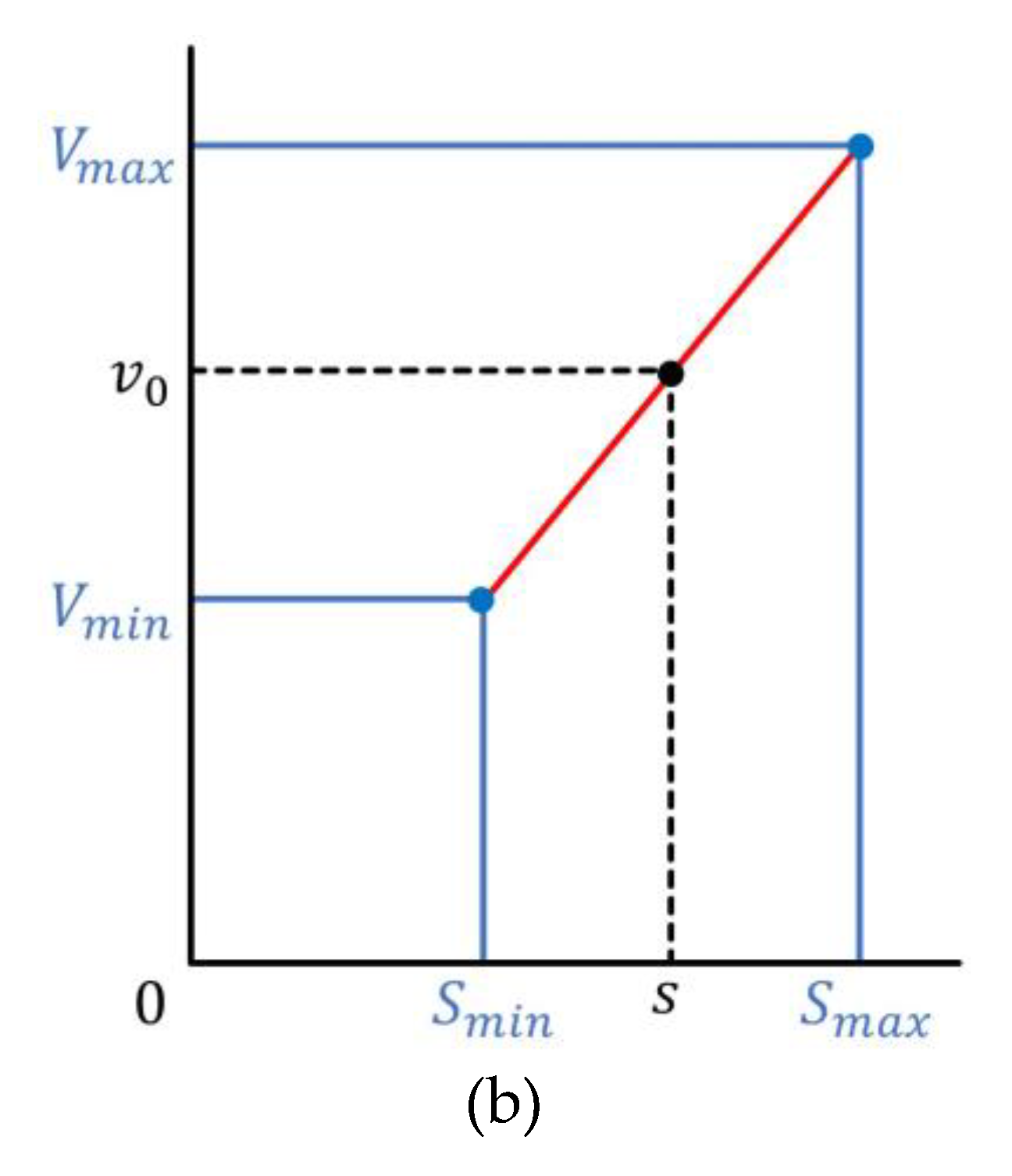

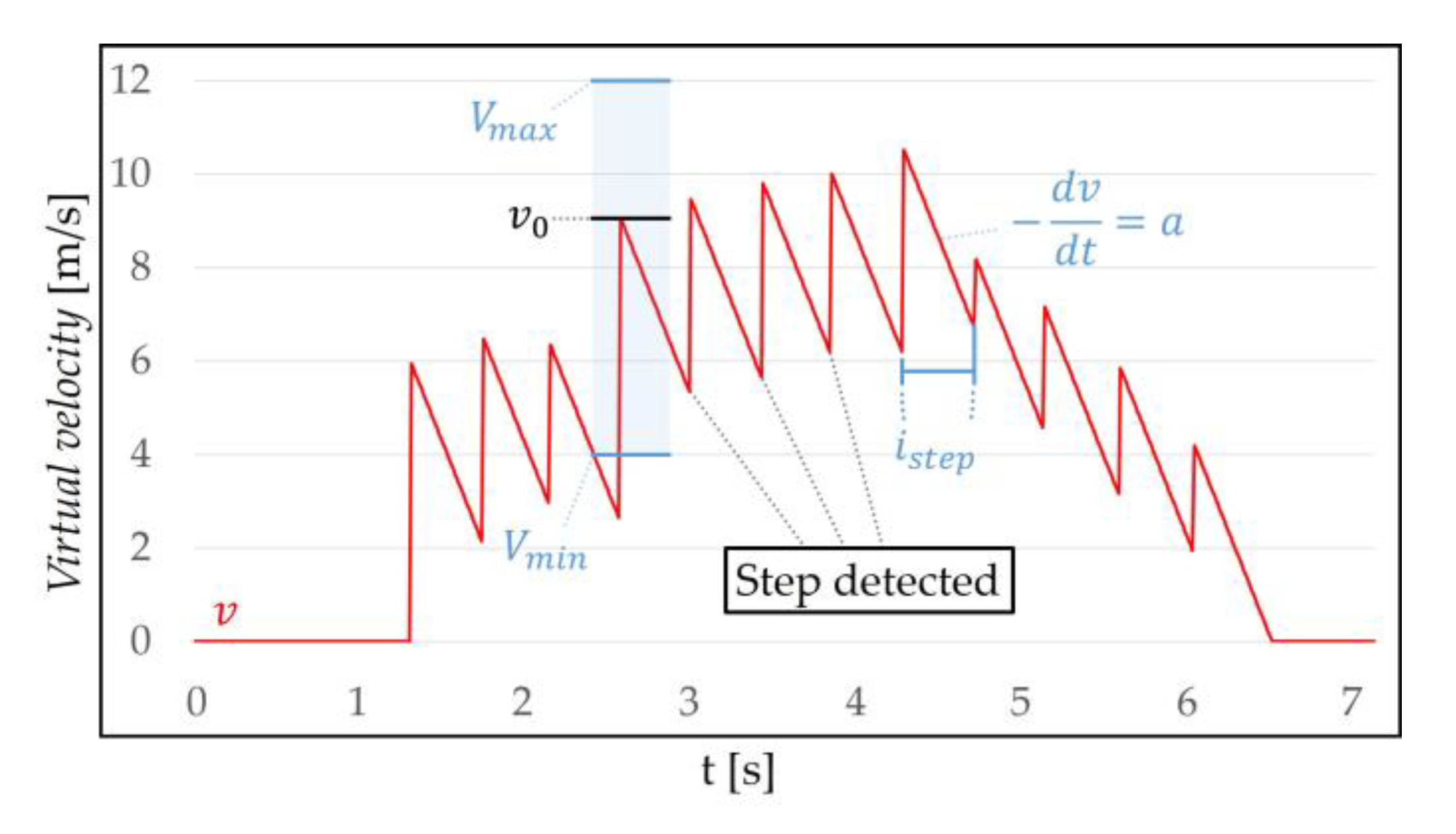

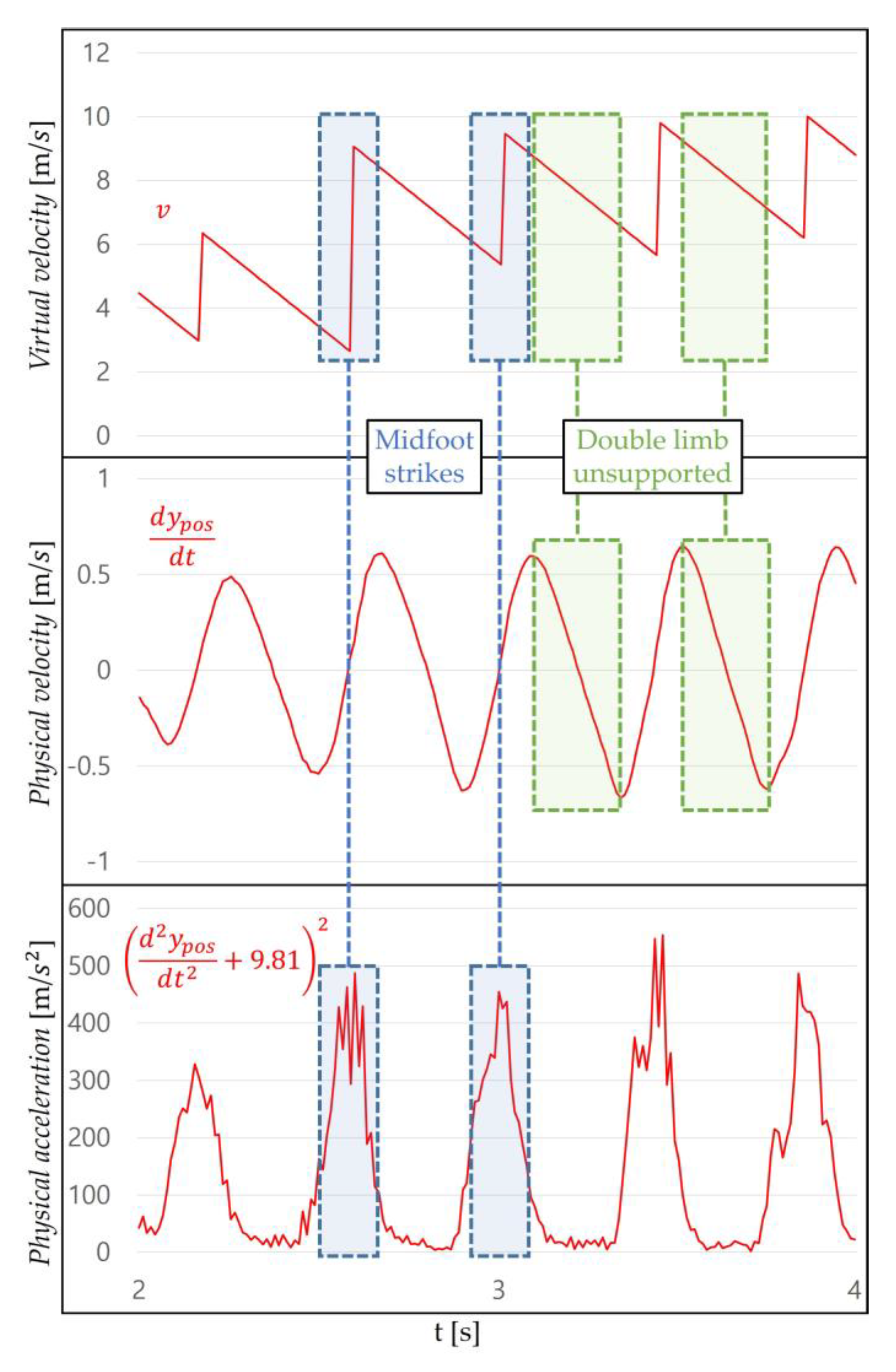

3.2.1. Step Recognition

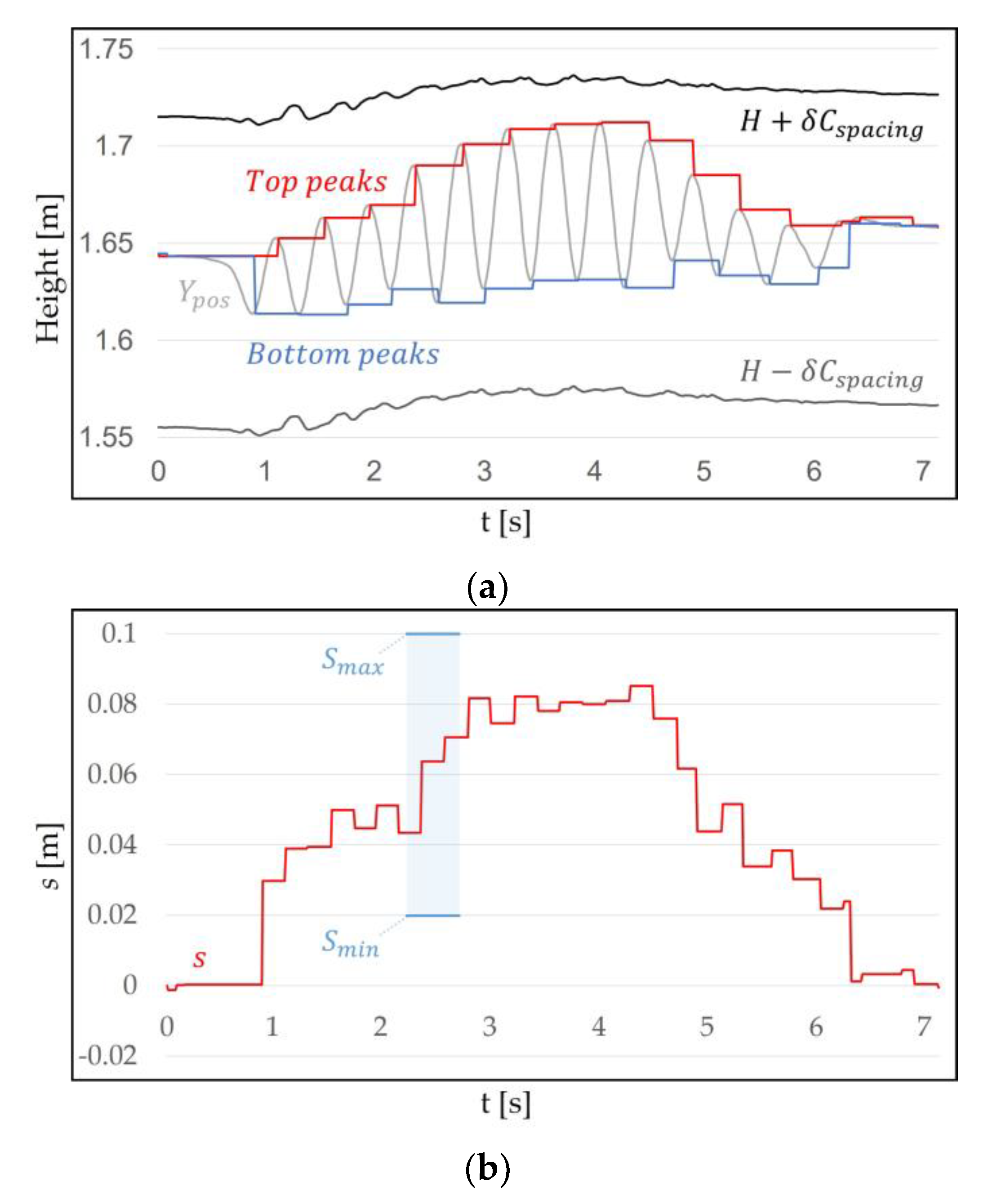

3.2.2. Virtual Velocity Decision

4. Evaluation

4.1. Instrumentation

4.2. Virtual Environment

4.3. Subjects

4.4. Interview

4.5. Procedure

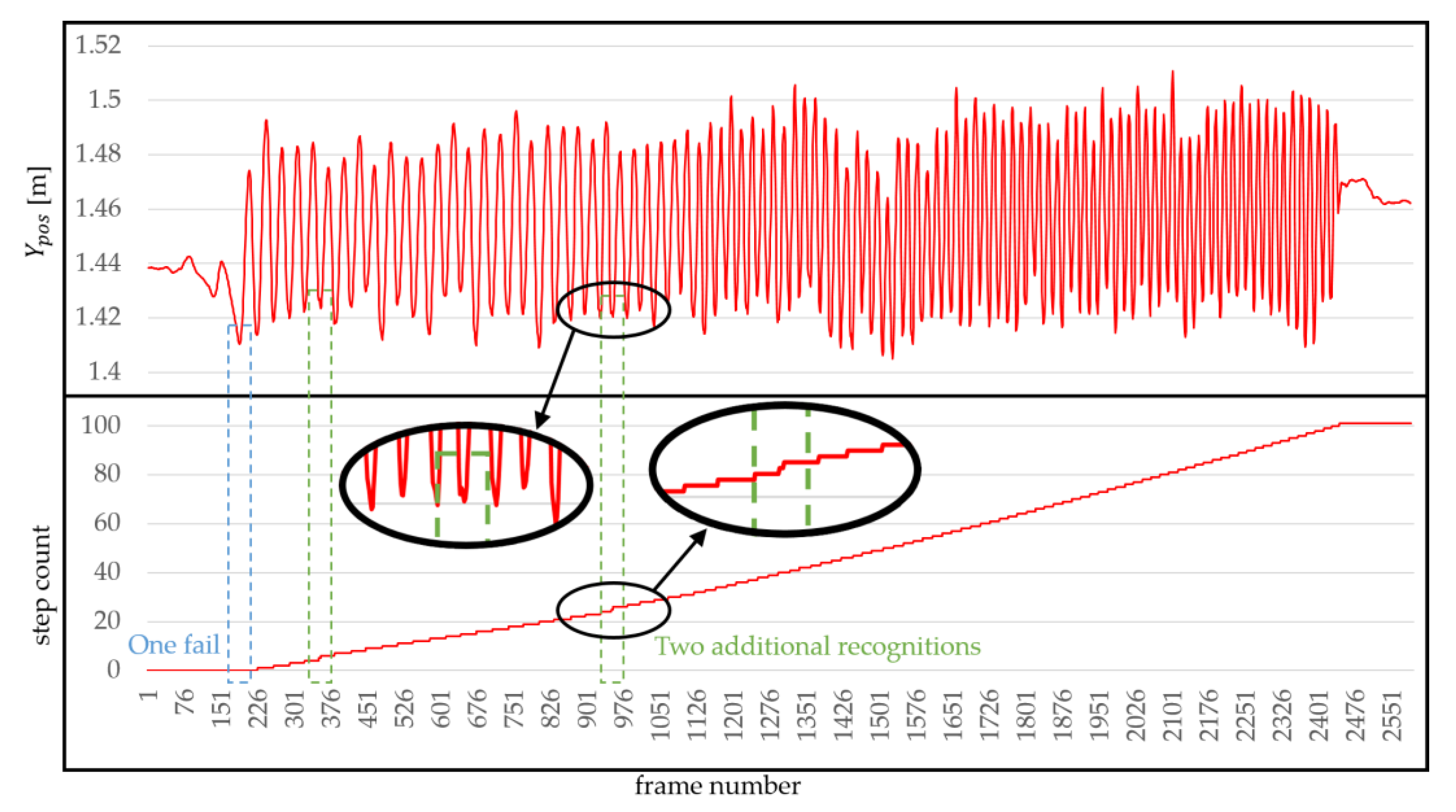

4.6. Results

5. Discussion

6. Conclusions

Supplementary Materials

Author Contributions

Funding

Conflicts of Interest

| Test Subject | | Task 1 (Forward Navigation): Average Error Rate (%) | Task 1 (Forward Navigation): SD | Task 2 (Backward Navigation): Average Error Rate (%) | Task 2 (Backward Navigation): SD | Task 3 (Squat): Average Error Rate (%) |
|---|---|---|---|---|---|---|
| 1 | 1.56 | 0.4 | 0.55 | 0.2 | 0.45 | 0 |
| 2 | 1.36 | 0.2 | 0.45 | 0 | 0 | 0 |
| 3 | 1.44 | 0.6 | 0.55 | 1.6 | 1.34 | 0 |
| 4 | 1.49 | 0.2 | 0.45 | 0.8 | 0.45 | 0 |
| 5 | 1.38 | 1 | 0 | 0.8 | 1.10 | 0 |
| 6 | 1.53 | 0 | 0 | 1 | 1.73 | 0 |
| 7 | 1.5 | 0.6 | 0.55 | 0.8 | 0.45 | 0 |
| 8 | 1.37 | 0.8 | 0.84 | 0.6 | 0.55 | 0 |
| 9 | 1.54 | 1.6 | 0.89 | 1 | 0.70 | 0 |
| Average | 1.46 | 0.6 | 0.48 | 0.76 | 0.75 | 0 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Lee, J.; Ahn, S.C.; Hwang, J.-I. A Walking-in-Place Method for Virtual Reality Using Position and Orientation Tracking. Sensors 2018, 18, 2832. https://doi.org/10.3390/s18092832
