1. Introduction

Simultaneous localization and mapping (SLAM) is a method of building a map of an unknown environment while estimating the vehicle pose from sensor information. When exploring an unpredictable environment, an unmanned vehicle is generally employed for exploration as well as localization. The vehicle can be equipped with a single sensor for detecting and identifying its surroundings, or with two or more sensors to enhance its estimation capability.

Among the different kinds of sensors [1], laser range finders (LRFs), vision, and Wi-Fi networks are popular sensing techniques for indoor localization tasks. Recently, with advances in computer vision and image processing, many researchers have started investigating vision-based SLAM [2,3]. Provided that the captured images are matched sufficiently, features can be extracted using the Scale-Invariant Feature Transform (SIFT) [4] or Speeded Up Robust Features (SURF) [5]. Other indoor localization methods consider the amplitude of the received signal from Wi-Fi networks [6,7,8,9,10]. These localization strategies depend on wireless hardware pre-installed on site and thus may not be applicable in Wi-Fi-denied environments.

For an ultra-low-cost SLAM module, previous works have considered a set of on-board ultrasonic sensors [11], which provide sparse measurements of the environment. However, the robot might lose track of its pose in complicated environments without the aid of robot kinematics information. To achieve robust image recognition [12], robot navigation, and map construction, the depth sensor Kinect v2 was considered [13]. Kinect v2 is based on the time-of-flight measurement principle and can be used in outdoor environments. Since multiple depth sensors provide highly dense 3D data [14], the real-time computation effort is relatively high. Furthermore, for a well-constructed indoor environment, such a hardware configuration is not necessary for 2D robot positioning.

Owing to its light weight and portability, light detection and ranging (LiDAR) has attracted more and more attention [15,16]. LiDAR offers a high sampling rate, high angular resolution, good range detection, and high robustness against environment variability. As a result, in this research, a single LiDAR is used for indoor localization.

By analyzing the position of features in each frame at every movement of the vehicle, one can determine the vehicle’s traveling distance and heading. Given different scans, an iterative closest point (ICP) [17] algorithm is employed to find the rotation and translation that best align them, and hence the robot pose. However, the ICP may not always lead to good pattern matching if the point cloud registration issue is not well addressed; a better point registration leads to a better robot pose estimate. To address this, point cloud outliers must be identified and rejected. Another issue when applying the ICP is computation efficiency. Since the ICP algorithm uses the closest-point rule to establish correspondences between points in the current scan and a given layout, the search effort can increase dramatically when the scan or the layout contains a large amount of data.
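To make the cost of the closest-point rule concrete, the following is a minimal brute-force sketch in Python with NumPy. The function name and the array layout (one 2D point per row) are assumptions for illustration, not part of the cited algorithm's original description.

```python
import numpy as np

def closest_point_correspondences(scan, layout):
    """For every scan point, find the nearest layout point by exhaustive search.

    scan:   (N, 2) array of points from the current scan (assumed layout).
    layout: (M, 2) array of points from the reference layout (assumed layout).
    Returns the index of the nearest layout point and the distance to it.
    """
    scan = np.asarray(scan, dtype=float)
    layout = np.asarray(layout, dtype=float)
    # All pairwise differences: N x M distance evaluations per ICP iteration.
    diff = scan[:, None, :] - layout[None, :, :]
    dist = np.linalg.norm(diff, axis=2)
    idx = np.argmin(dist, axis=1)
    return idx, dist[np.arange(len(scan)), idx]
```

Every ICP iteration repeats this search, so the work grows with the product of the two point counts. A spatial index such as a k-d tree lowers the per-query cost, and reducing the number of points lowers it further.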

Previous studies [18,19,20,21,22,23,24] have addressed some of the problems that arise when applying ICP, including (1) wrong point matching for large initial errors, (2) expensive correspondence searching, (3) slow convergence speed, and (4) outlier removal. For robot poses subject to a large initial angular displacement, iterative dual correspondence (IDC) was proposed in [16]. However, it demands more computation due to its dual-correspondence process. Metric-based ICP (MbICP) [21] uses a geometric distance that takes translation and rotation into account simultaneously. The correspondence between scans is established with this measure, and the error is minimized in terms of this distance. The MbICP shows superior robustness in the presence of large angular displacements. Among the various planar scan matching strategies, the Normal Distributions Transform (NDT) [22] and Point-to-Line ICP (PLICP) [23] illustrate state-of-the-art performance in terms of scan matching accuracy. NDT maps the reference scan onto a grid, models the points in each cell with a normal distribution, and finds the best fit by maximizing the likelihood of the new scan under these distributions. The NDT does not require point-to-point registration, which enhances matching speed. However, the selection of the grid size dominates estimation stability. The PLICP uses normal information from the geometric surface of the environment and minimizes the distance projected onto the normal vector of the surface. In addition, a closed-form solution is given, so that the convergence speed can be drastically improved.
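The difference between the point-to-point and point-to-line criteria can be written compactly. The notation below is assumed for illustration and is not taken from the cited works: $p_i$ is a scan point, $q_i$ its matched reference point, $n_i$ the unit normal of the reference surface at $q_i$, and $(R, t)$ the rigid transform being estimated.

```latex
E_{\text{point}}(R, t) = \sum_i \left\| R p_i + t - q_i \right\|^2,
\qquad
E_{\text{line}}(R, t) = \sum_i \left( n_i^{T} \left( R p_i + t - q_i \right) \right)^2
```

In this form, a displacement along a wall leaves the point-to-line error unchanged, so only the offset perpendicular to the surface is penalized.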

To obtain precise and high-bandwidth robot pose estimation, the vehicle’s dynamics and traveling status can be further integrated into a Kalman filter [25]. A Kalman filter attenuates measurement noise and provides a better robot pose prediction. Other researchers have considered wheel-odometry-fusion-based SLAM [26,27], which integrates robot kinematics and encoder data for pose estimation. However, this approach might not be suitable for realizing a portable localization system.

Based on the aforementioned issues, in this work, the LiDAR is considered as the only sensor for mapping and localization. To avoid mismatched point registration and to enhance matching speed, we propose a feature-based weighted parallel iterative closed point (WP-ICP) architecture inspired by [

18,

23,

28,

29,

30,

31]. The main advantages of the proposed method are as follows: (a) Point sizes of the model set and data set are significantly reduced so that the ICP speed can be enhanced. (b) A split and merge algorithm [

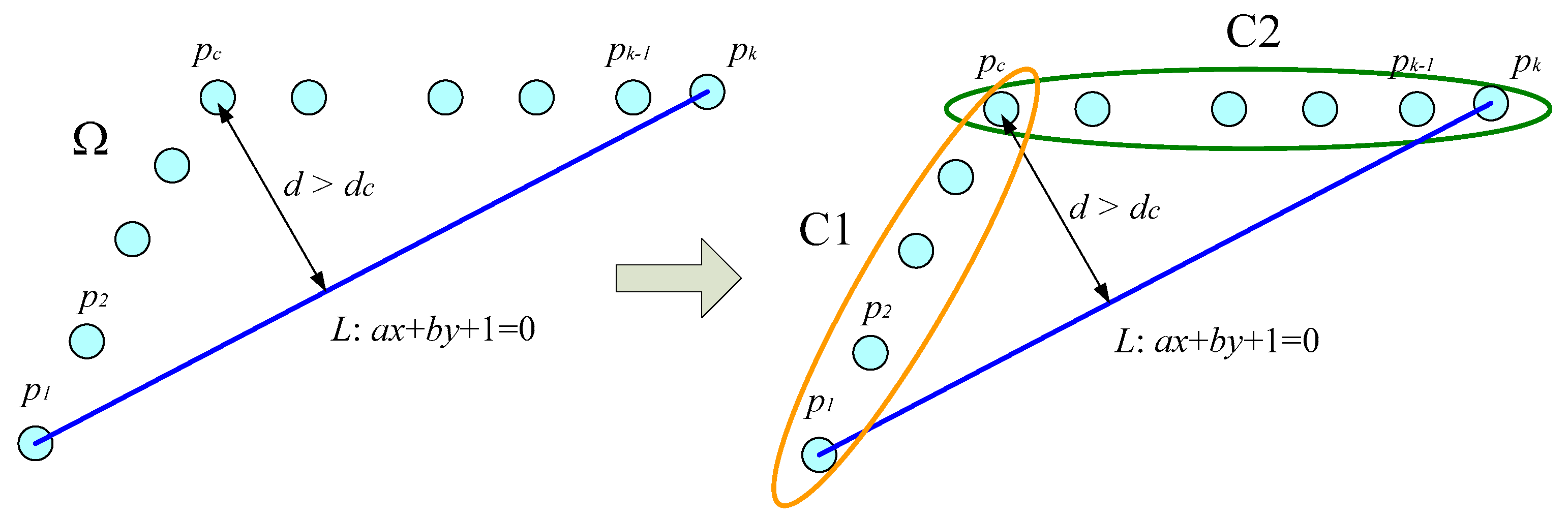

28,

29] is considered to divide the point cloud into two feature groups, namely, corner and line segments. The algorithm works by matching points labeled as corners to the corner candidates; similarly, for those points labeled as lines can only be matched to the lines candidates. As a result, it attenuates any possibilities of point cloud mismatch.

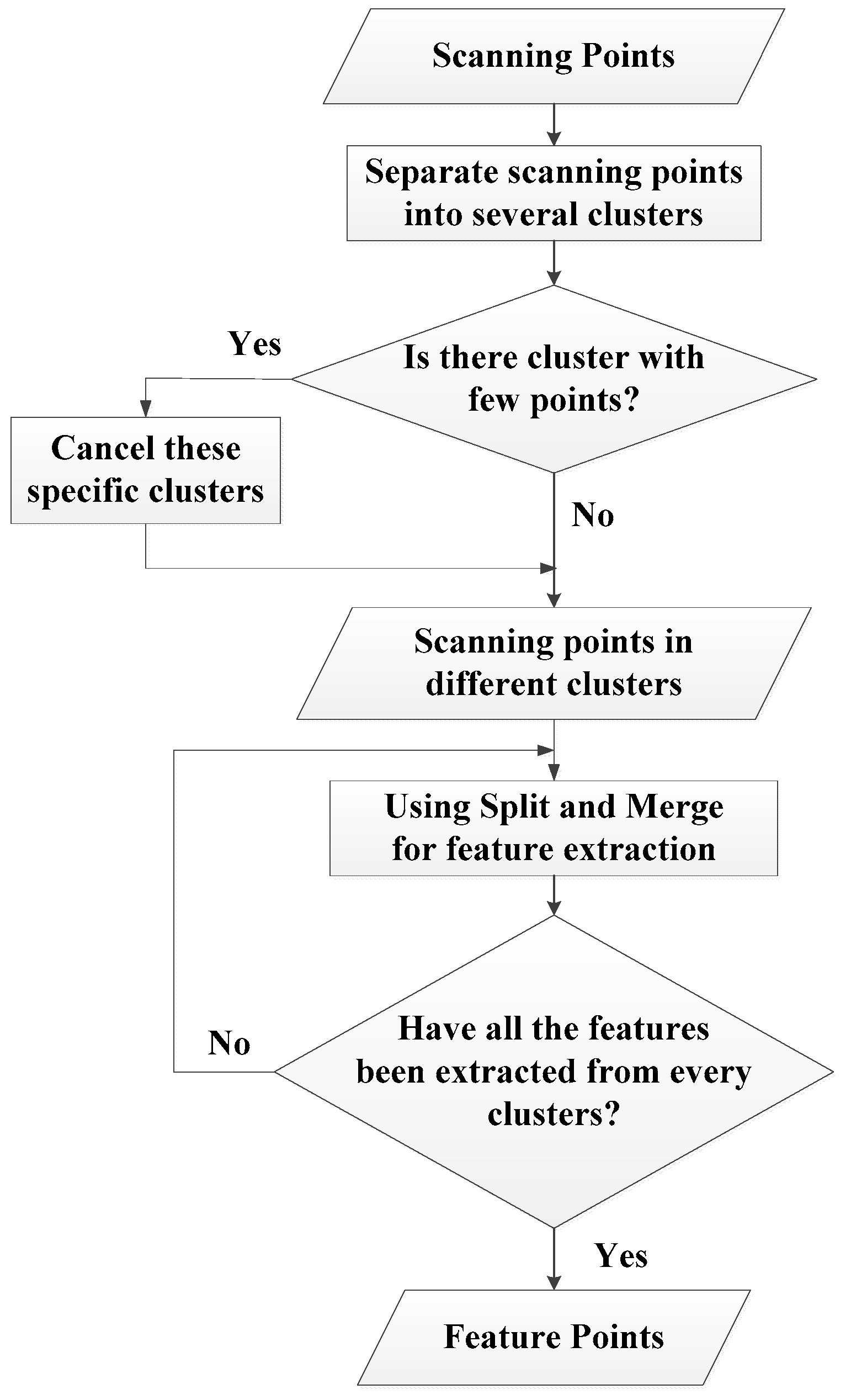

In this paper, it is supposed that the well-constructed indoor layout is given in advance. The main design object is to reduce the computation effort and to maintain the indoor positioning precision. The rest of this paper is organized as follows. In

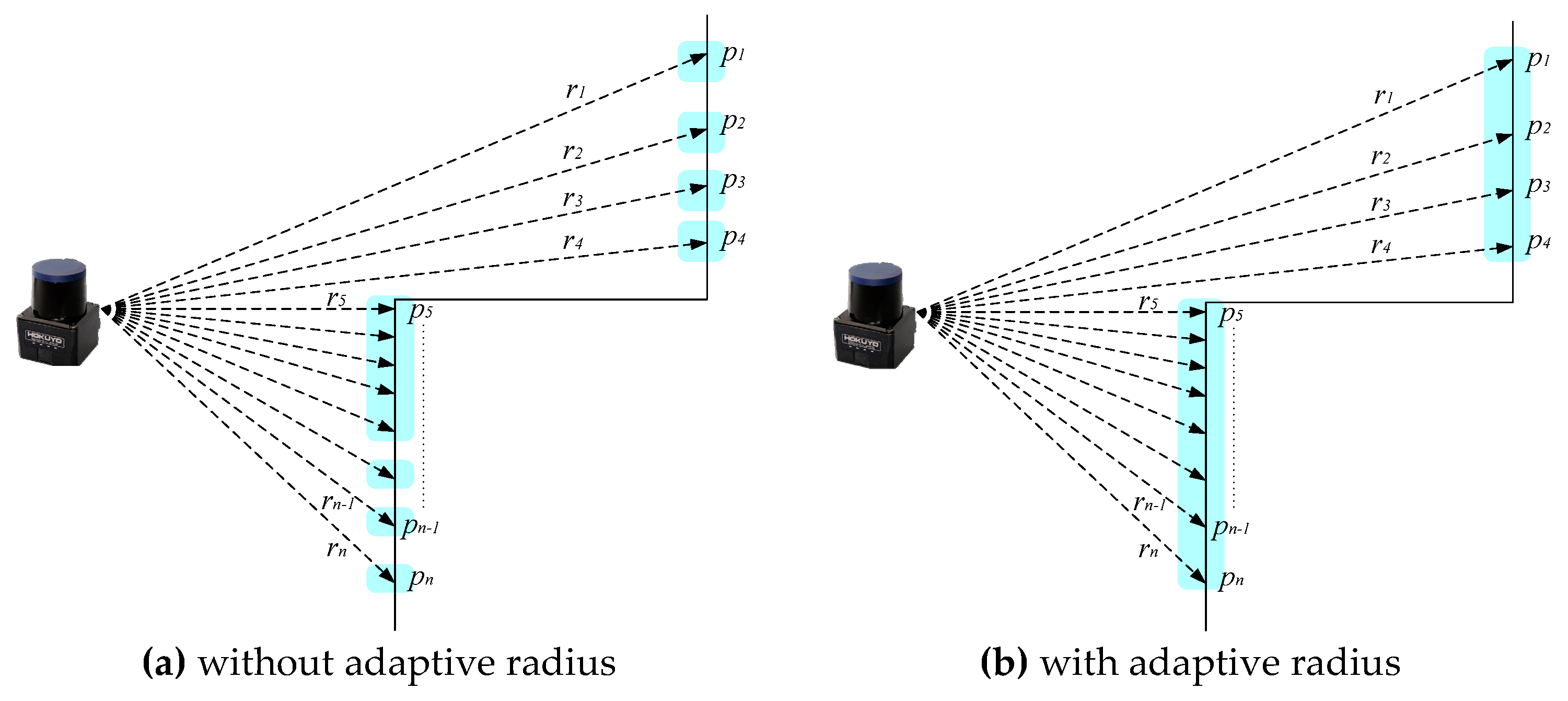

Section 2, an adaptive breakpoint detector is firstly introduced for scan point segmentation. A clustering algorithm and a split–merge approach is further considered for point clustering and feature extraction, respectively. In

Section 3, a WP-ICP algorithm is proposed.

Section 4 presents real experiments to evaluate the effectiveness of the proposed method. Finally,

Section 5 outlines conclusions and future work.

3. The Proposed Method: WP-ICP

3.1. Pose Estimation Algorithm

SLAM is considered a chicken-and-egg problem, since precise localization needs a reference map, and a good mapping result requires a correct estimate of the robot pose [33]. To achieve SLAM, these two issues must be solved simultaneously [34]. In this work, however, only localization is considered. Therefore, assuming that a complete layout of the environment is given in advance, a novel scan matching algorithm is presented in the next subsection.

If the correspondences are already known, pose estimation can be treated as the estimation of a rigid body transform, which can be solved efficiently via the singular value decomposition (SVD) technique [35].

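Let $P = \{p_1, \dots, p_n\}$ be the data set from the current scan and $Q = \{q_1, \dots, q_n\}$ be the model set obtained from the given layout. The goal is to find a rigid body transformation pair $(R, t)$ such that the best alignment is achieved in the least-squares sense. It can be stated as

$$(R, t) = \arg\min_{R,\, t} \sum_{i=1}^{n} w_i \left\| (R p_i + t) - q_i \right\|^2,$$

where $w_i$ are the weights for each point pair.

The optimal translation vector can be calculated by

$$t = \bar{q} - R\,\bar{p}, \tag{6}$$

where

$$\bar{p} = \frac{\sum_{i=1}^{n} w_i p_i}{\sum_{i=1}^{n} w_i}, \qquad \bar{q} = \frac{\sum_{i=1}^{n} w_i q_i}{\sum_{i=1}^{n} w_i}$$

can be taken as the weighted centroids of the data set and the model set, respectively.

Let $x_i = p_i - \bar{p}$ and $y_i = q_i - \bar{q}$. Consider also the matrices $X$, $Y$, and $W$, which are defined by $X = [x_1, \dots, x_n]$, $Y = [y_1, \dots, y_n]$, and $W = \mathrm{diag}(w_1, \dots, w_n)$, respectively.

Defining $S = X W Y^{T}$ and then applying the singular value decomposition (SVD) to $S$ yields

$$S = U \Sigma V^{T},$$

where $U$ and $V$ are unitary matrices and $\Sigma$ is a diagonal matrix. It has been proved that the optimal rotation matrix is given by

$$R = V \, \mathrm{diag}\!\left(1, \dots, 1, \det\!\left(V U^{T}\right)\right) U^{T}. \tag{9}$$

Based on Equation (9), the translation vector given in Equation (6) can also be solved.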
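The closed-form solution described above can be sketched in Python with NumPy. This is a minimal illustration of the standard weighted SVD alignment, not the authors' implementation; the function name, argument names, and array layout (one point per row) are assumptions.

```python
import numpy as np

def weighted_rigid_transform(P, Q, w):
    """Weighted least-squares rigid alignment of paired points.

    P, Q: (n, d) arrays; row i of P is matched to row i of Q (assumed layout).
    w:    (n,) non-negative weights, not all zero.
    Returns R (d x d rotation) and t (d,) minimizing
    sum_i w_i * ||R p_i + t - q_i||^2.
    """
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    w = np.asarray(w, dtype=float)

    # Weighted centroids of the data set and the model set.
    p_bar = (w[:, None] * P).sum(axis=0) / w.sum()
    q_bar = (w[:, None] * Q).sum(axis=0) / w.sum()

    # Centered coordinates stored column-wise, then S = X W Y^T.
    X = (P - p_bar).T
    Y = (Q - q_bar).T
    S = (X * w) @ Y.T

    U, _, Vt = np.linalg.svd(S)
    V = Vt.T

    # The last diagonal entry guards against returning a reflection.
    D = np.eye(S.shape[0])
    D[-1, -1] = np.sign(np.linalg.det(V @ U.T))
    R = V @ D @ U.T

    # Translation follows from the rotation and the two centroids.
    t = q_bar - R @ p_bar
    return R, t
```

With exact correspondences and noise-free data, the function recovers the transform that maps `P` onto `Q`. Inside an ICP loop it would be called once per iteration, after the correspondences and weights have been updated.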

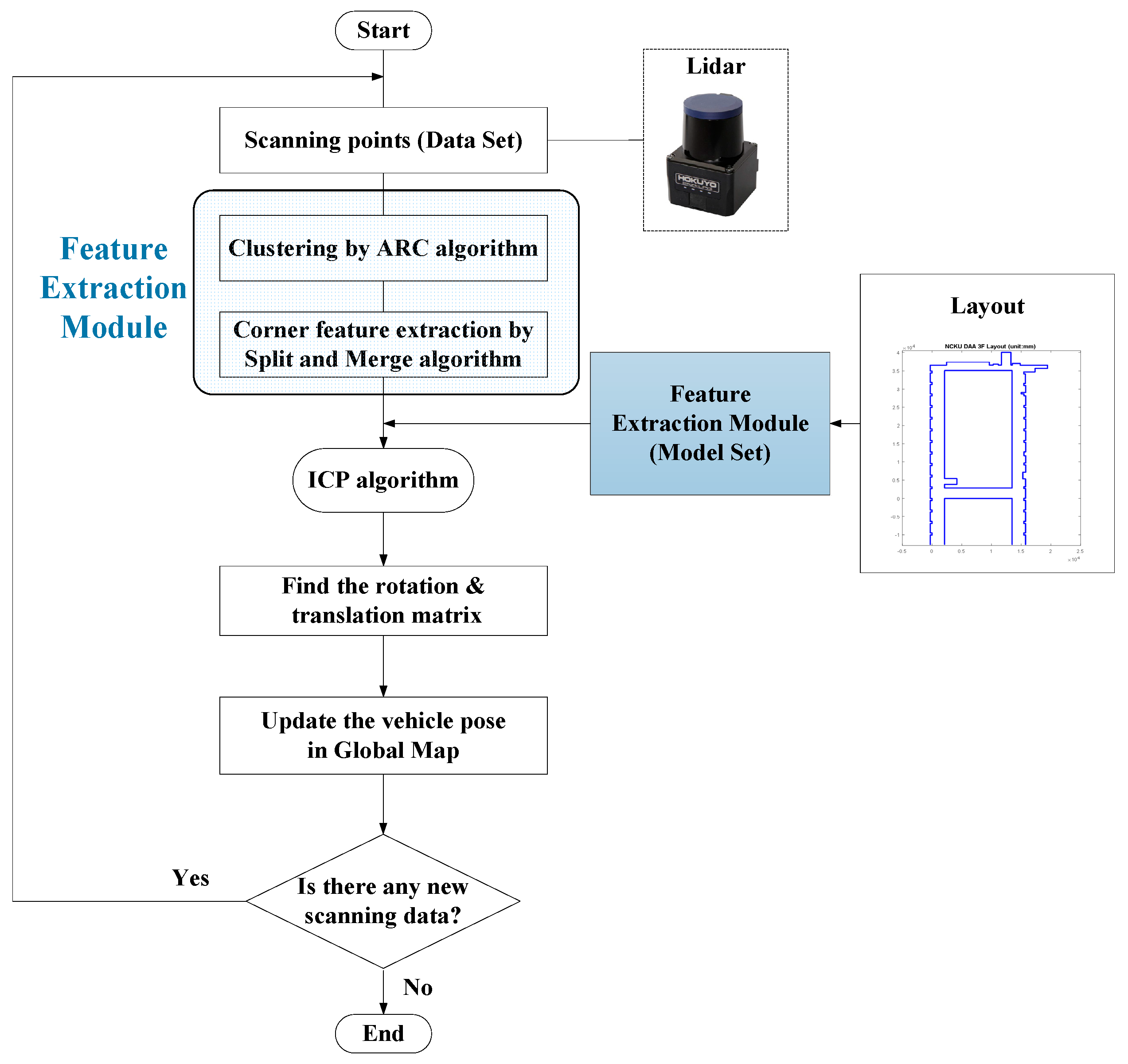

According to the ARC, the split–merge algorithm, and the ICP, the procedure of the corner-feature-based ICP pose estimation is summarized in Figure 4. To reject outliers during feature point registration, the weightings of the point pairs can be designed according to the Euclidean distance between matched points, and the values for unreasonable feature pairs can be set to zero.
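One possible weighting scheme of this kind is sketched below. The text does not specify the weighting function, so the linear taper is an assumption chosen for illustration; the default cut-off of 0.5 m mirrors the 50 cm threshold reported in the experiments, and the function and parameter names are hypothetical.

```python
import numpy as np

def distance_weights(data_pts, model_pts, d_max=0.5):
    """Weights for matched point pairs based on their Euclidean distance.

    data_pts, model_pts: (n, 2) arrays of matched points, in meters.
    d_max: pairs farther apart than this receive zero weight.
    The linear taper from 1 (distance 0) to 0 (distance d_max) is an
    assumed form, not the weighting used in the paper.
    """
    data_pts = np.asarray(data_pts, dtype=float)
    model_pts = np.asarray(model_pts, dtype=float)
    d = np.linalg.norm(data_pts - model_pts, axis=1)
    return np.clip(1.0 - d / d_max, 0.0, 1.0)
```

Pairs with zero weight drop out of the least-squares objective, so a single distant mismatch cannot pull the estimated pose.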

3.2. A Weighted Parallel ICP Pose Estimation Algorithm

An environment that closely matches the given layout generally results in good estimates. In practice, however, this is not always the case. To be feasible, the proposed algorithm needs to remain robust even in the presence of environment uncertainties such as moving people.

Based on the results presented in Section 2 and Section 3, a WP-ICP is proposed. The WP-ICP considers two features of the pre-processed point clouds: the corner feature and the line feature. Corners are important feature points in the environment because they are distinct, whereas walls are stable features and good candidates for feature extraction in structured environments [15,31,36]. Since the LiDAR detects the surroundings, walls can be represented as line segments, each of which is bounded by two corners. Furthermore, the center point of a line segment is considered as another matching reference point.

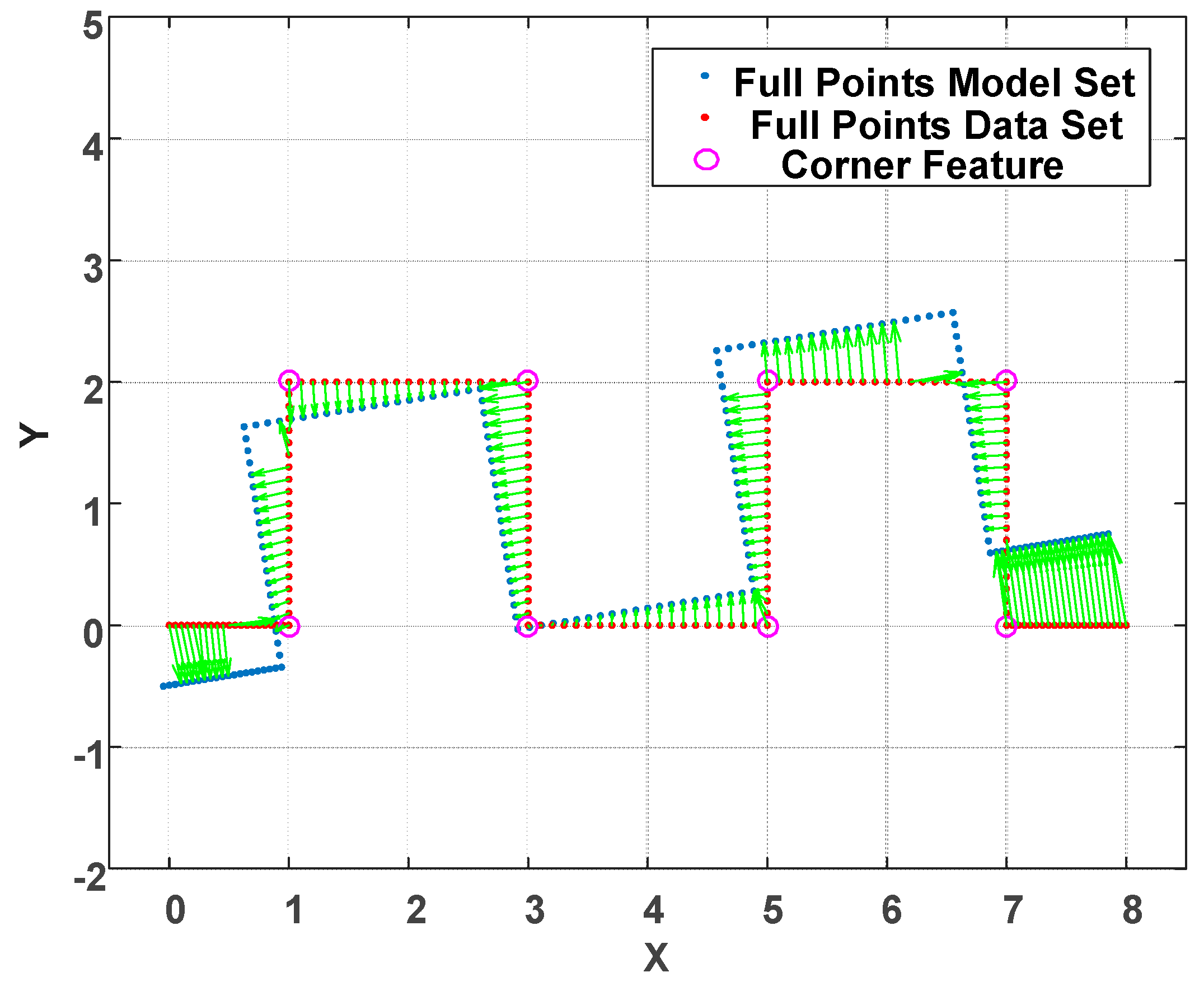

The motivation for such features is that indoor environments, e.g., offices and buildings, are generally well structured. Therefore, feature-based localization is suitable for such environments. In the traditional full-points ICP algorithm, point cloud registration is achieved by means of the nearest neighbor search (NNS) concept, and no further information is attached to the points. Therefore, it is easy to obtain incorrect correspondences, as shown in Figure 5. Under this circumstance, several iterations are usually needed for the point registration to converge. In addition, the ICP incurs an obvious time cost for registration, especially when the point cloud is large. To solve the incorrect correspondence and iteration time cost issues, a feature-based point cloud reduction method is developed.

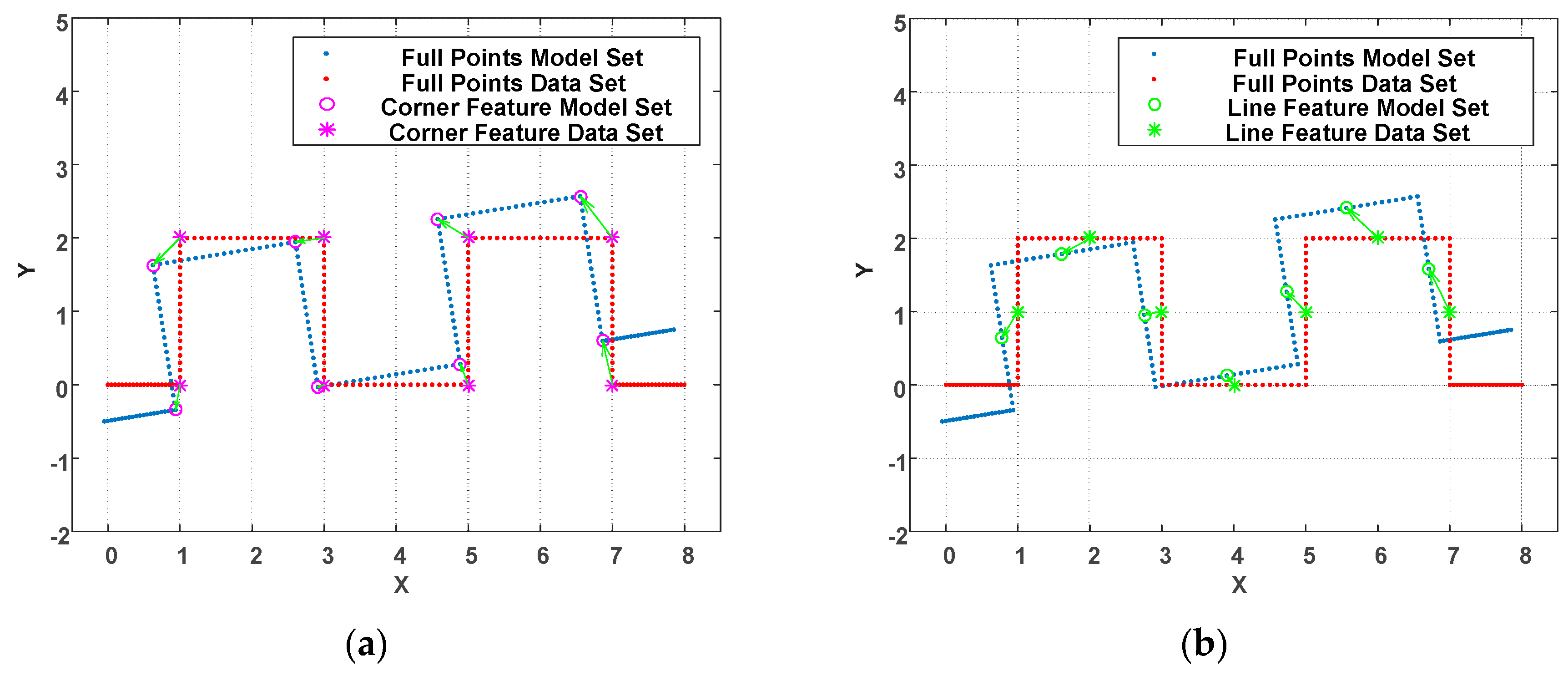

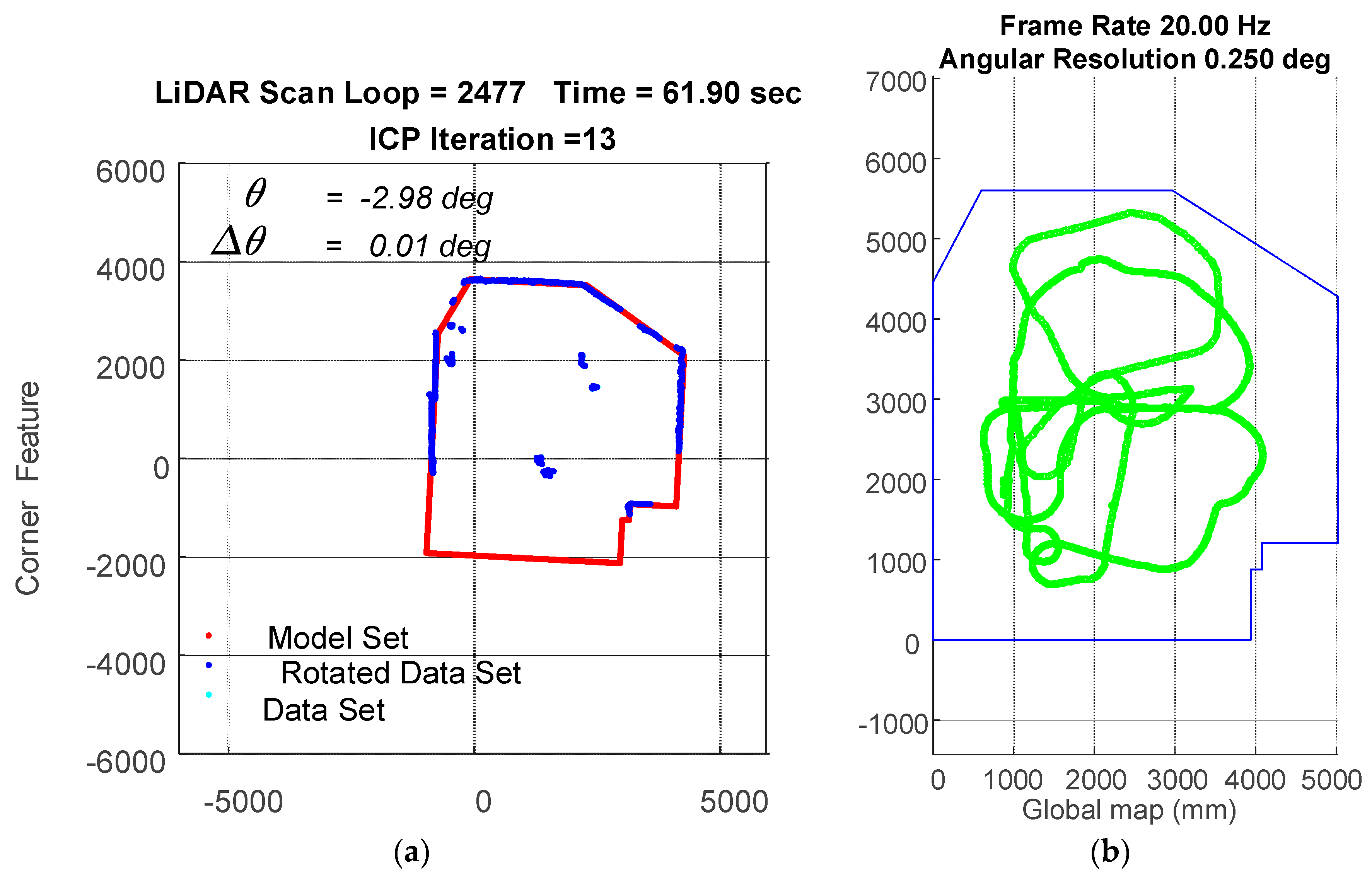

For the proposed WP-ICP, the point cloud is first reduced to a smaller set of corners and lines. This has two main advantages: (a) The size of the point cloud is reduced significantly, which enhances the ICP speed. (b) The remaining points are labeled as corner or line features. Only points labeled as corners can be matched to corner candidates; similarly, only points labeled as lines can be matched to line candidates. The result is shown in Figure 6. Figure 6a illustrates the matching condition for corners, while Figure 6b represents the matching condition for lines. The parallel ICP results in correct point registration and thereby reduces the number of iterations.

The main advantage of the WP-ICP is that the number of data points in the data set as well as in the model set can be significantly reduced. In contrast, the full-points ICP algorithm includes all data points in the correspondence search and thus has low computation efficiency.

Moreover, in the proposed WP-ICP, the scan points are clustered into either the corner group or the line group. Based on the parallel mechanism, points from a corner set can never be matched with points from a line set, which avoids mismatching during the point registration. For the full-points ICP, however, many mismatches can occur whenever points from the two sets lie close to each other.
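The label-restricted matching described above can be sketched as follows. This is a minimal illustration with a brute-force search; the integer labels, function name, and array layout are assumptions, not the authors' data structures.

```python
import numpy as np

CORNER, LINE = 0, 1  # hypothetical integer labels for the two feature groups

def match_within_label(data_pts, data_lbl, model_pts, model_lbl, label):
    """Nearest-neighbor matching restricted to points sharing one label.

    data_pts:  (N, 2) scan feature points; data_lbl:  (N,) labels.
    model_pts: (M, 2) layout feature points; model_lbl: (M,) labels.
    Returns two index arrays (into data_pts and model_pts) of matched pairs.
    """
    data_pts = np.asarray(data_pts, dtype=float)
    model_pts = np.asarray(model_pts, dtype=float)
    d_idx = np.flatnonzero(np.asarray(data_lbl) == label)
    m_idx = np.flatnonzero(np.asarray(model_lbl) == label)
    if d_idx.size == 0 or m_idx.size == 0:
        return np.empty(0, dtype=int), np.empty(0, dtype=int)
    # Distances only between points of the requested label.
    diff = data_pts[d_idx][:, None, :] - model_pts[m_idx][None, :, :]
    nearest = np.argmin(np.linalg.norm(diff, axis=2), axis=1)
    return d_idx, m_idx[nearest]
```

Calling the function once with `CORNER` and once with `LINE` yields the two independent correspondence sets that feed the two ICP processes. A corner in the scan is never paired with a line point in the layout, even when that line point happens to be the closest point overall.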

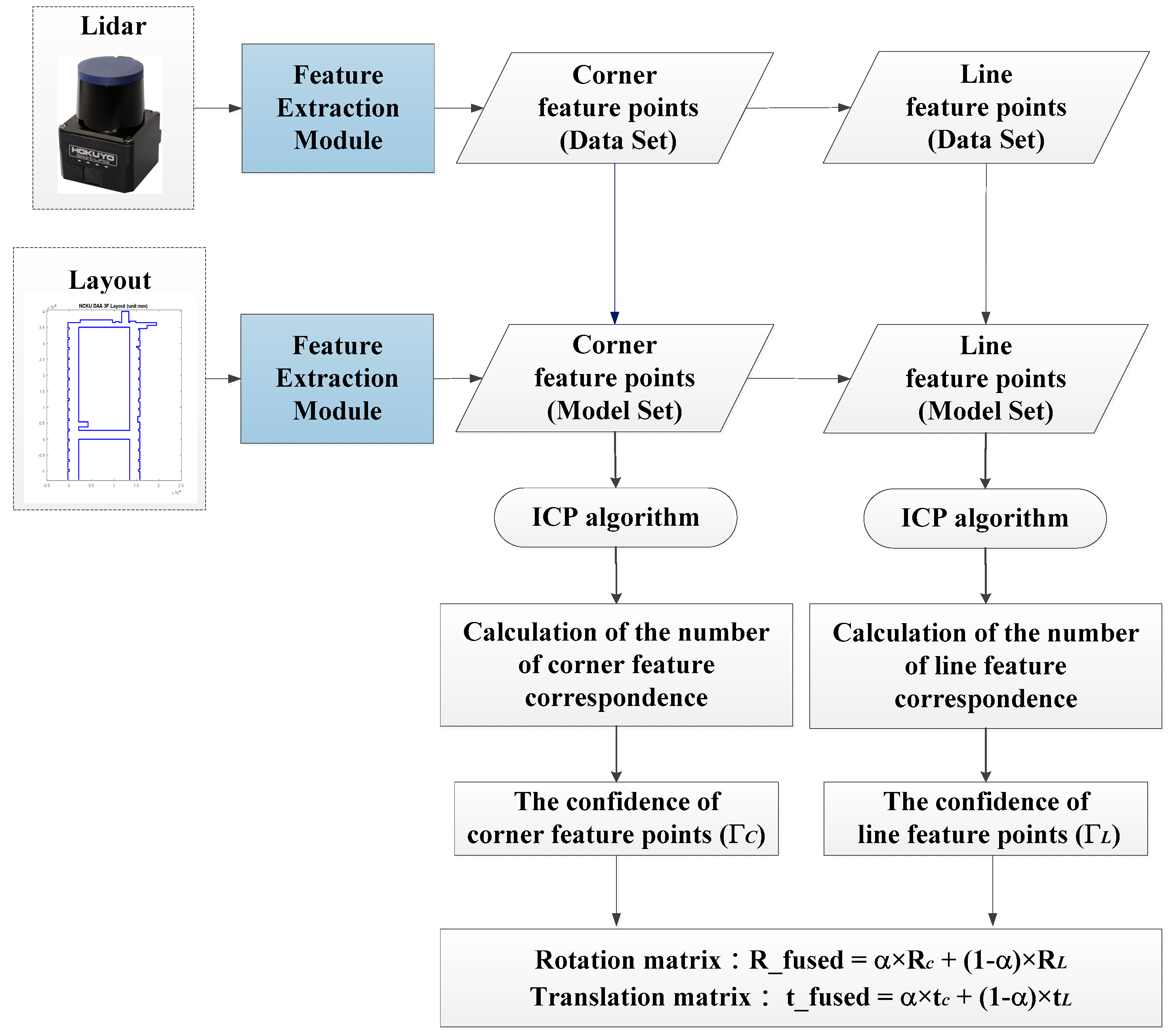

Since the WP-ICP runs two ICP processes at the same time, it generates two robot pose estimates: one from the corner-feature ICP and one from the line-feature ICP. Therefore, it is desirable to fuse these two poses to obtain a more confident pose estimate.

The criterion for the confidence evaluation is designed as follows:

where

represent the confidences and can be treated as fused weights for corner feature ICP and line feature ICP, respectively.

The final step is to calculate a fused pose estimate

as follows:

where the heading rotation angle is used to obtain the

.
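A minimal sketch of the fusion step is given below. It assumes that the two confidences are already available as non-negative numbers (the confidence criterion itself is not reproduced here) and that each pose is a planar triple (x, y, heading in radians). Averaging the heading through its sine and cosine is an implementation choice made here to avoid wrap-around problems; all names are hypothetical.

```python
import math

def fuse_poses(pose_corner, pose_line, conf_corner, conf_line):
    """Confidence-weighted fusion of two planar poses (x, y, heading).

    conf_corner, conf_line: non-negative confidences (assumed given).
    The weights are normalized so that they sum to one.
    """
    total = conf_corner + conf_line
    if total <= 0.0:
        raise ValueError("at least one confidence must be positive")
    a = conf_corner / total
    b = conf_line / total

    # Position: weighted average of the two translation estimates.
    x = a * pose_corner[0] + b * pose_line[0]
    y = a * pose_corner[1] + b * pose_line[1]

    # Heading: weighted average on the unit circle, then back to an angle.
    s = a * math.sin(pose_corner[2]) + b * math.sin(pose_line[2])
    c = a * math.cos(pose_corner[2]) + b * math.cos(pose_line[2])
    theta = math.atan2(s, c)
    return x, y, theta
```

The fused heading can then be converted into a rotation matrix if the pose is needed in matrix form.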

Since the WP-ICP uses two sources of feature points, the real-time LiDAR scan points are separated into two groups: corners and lines. Each group is then matched with its corresponding features. Based on this parallel matching mechanism, serious mismatching can be avoided, resulting in improved localization stability and precision. The flow chart of the WP-ICP is illustrated in Figure 7.

The practical benefits of the proposed WP-ICP include the following: (a) computation effort is reduced when k-d trees are built and nearest point registration is applied; (b) correct point cloud registration significantly reduces ICP iterations, enabling fast robot pose estimation; (c) robot pose is determined by feature-based ICP fusion of two features (corner and line), which makes the estimate less sensitive to uncertain environments.

4. Experiments and Discussions

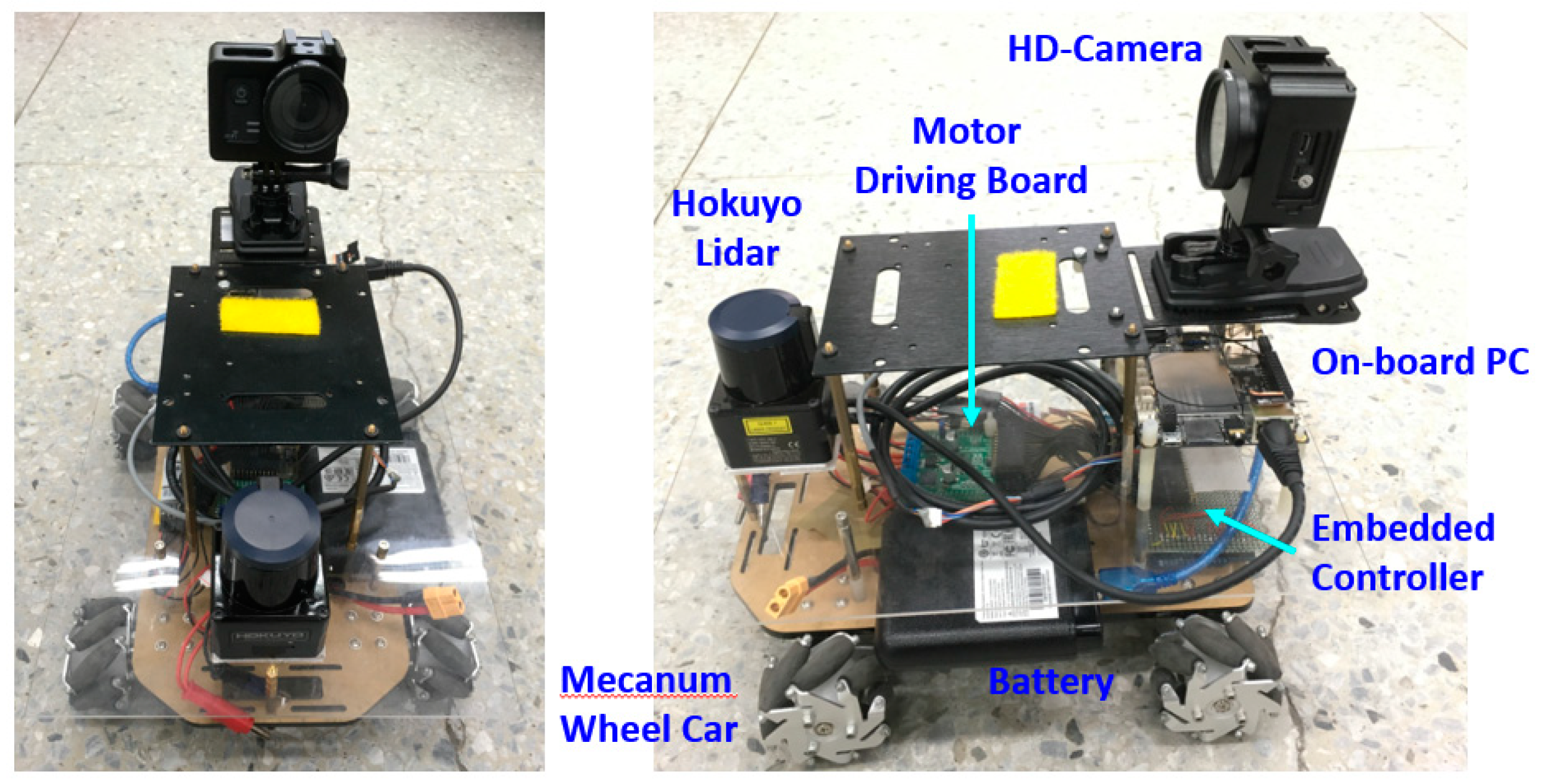

For the experiments, a Hokuyo UST-20LX Scanning Laser Rangefinder is used with a 20 Hz scan rate and a 15 m scan distance. The scanning angle resolution is 0.25°. Based on these settings, each scan contains at most 1081 points; that is, 20 × 1081 = 21,620 points per second. The experiment system is shown in Figure 8, which includes (1) a portable LiDAR module (the upper half) and (2) a mecanum-wheeled robot (the lower half). In this work, since the LiDAR is the single sensor, the robot vehicle serves only as a moving platform. There is no communication between the LiDAR module and the vehicle. The maximum moving speed of the vehicle in the following experiments is restricted to 50 cm/s.
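The figures quoted above can be checked with a short calculation. The 270° field of view is taken from the sensor's published specification and is not stated in the text; the per-scan displacement bound is derived here from the stated maximum speed and scan rate.

```latex
\begin{aligned}
\text{points per scan} &= \frac{270^\circ}{0.25^\circ} + 1 = 1081, \\
\text{points per second} &= 20 \times 1081 = 21{,}620, \\
\text{maximum displacement per scan} &= \frac{50\ \text{cm/s}}{20\ \text{Hz}} = 2.5\ \text{cm}.
\end{aligned}
```

Under these assumptions the vehicle moves at most 2.5 cm between consecutive scans, so consecutive scans overlap almost entirely.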

Based on the resolution and testing results, N in Equation (2) is set to 15. With regard to the ARC, clusters containing fewer than 5 points are considered outliers and are removed before the WP-ICP is applied. The distance dc = 10 cm is used for the split and merge process. In each iteration, the ICP weight of a point pair is set to zero if the distance between the corresponding points is greater than 50 cm. This threshold is determined in accordance with the maximum moving speed of the robot.

To confirm that the WP-ICP algorithm is feasible, an experiment was first carried out in a clear environment with no obstacles, which is shown in Figure 9. The area of the testing environment was about 5 m × 6 m. Two experiments were carried out in this environment: in one, the vehicle was guided along a rectangular path; in the other, the vehicle was moved randomly.

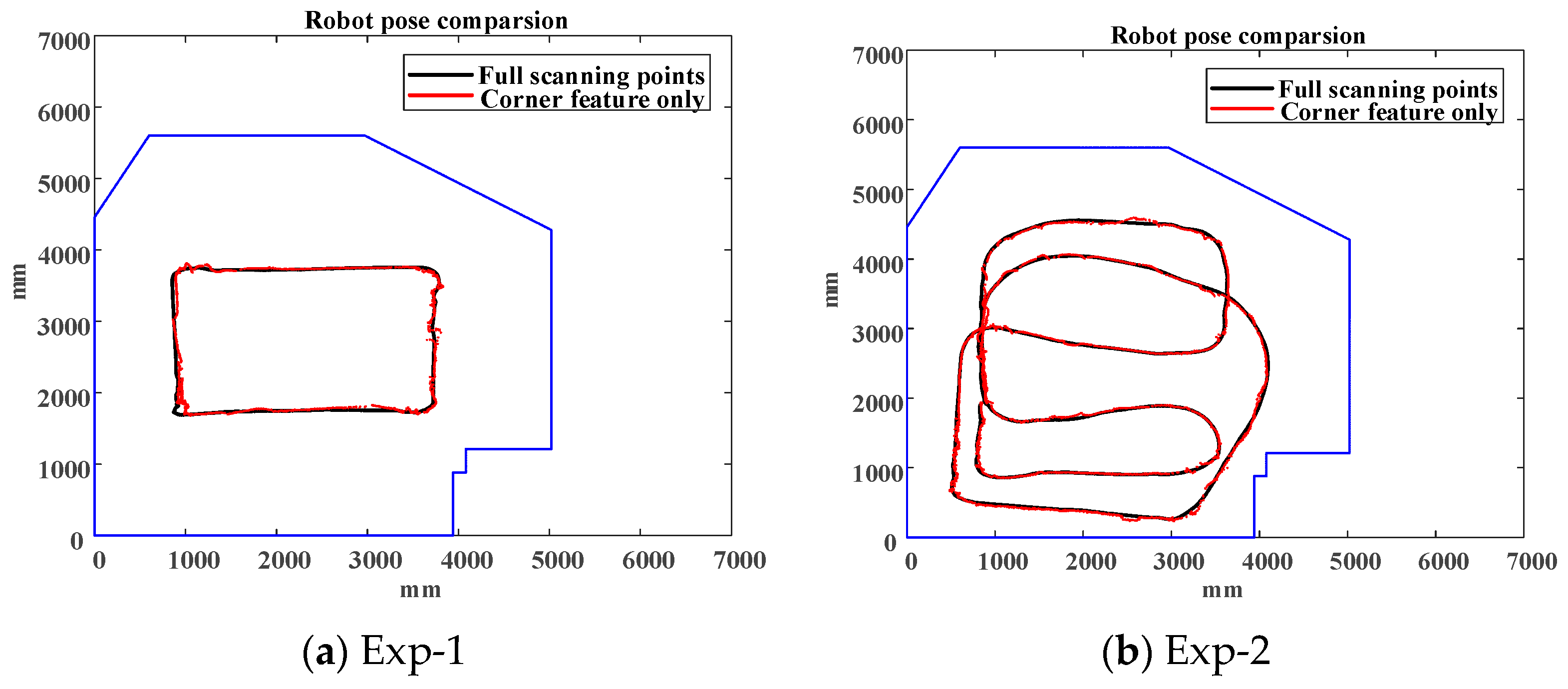

Taking the result of the full-points ICP (black line) as the ground truth, using the corner feature only (red line) yields an accurate pose estimate in a clear environment, as illustrated in Figure 10.

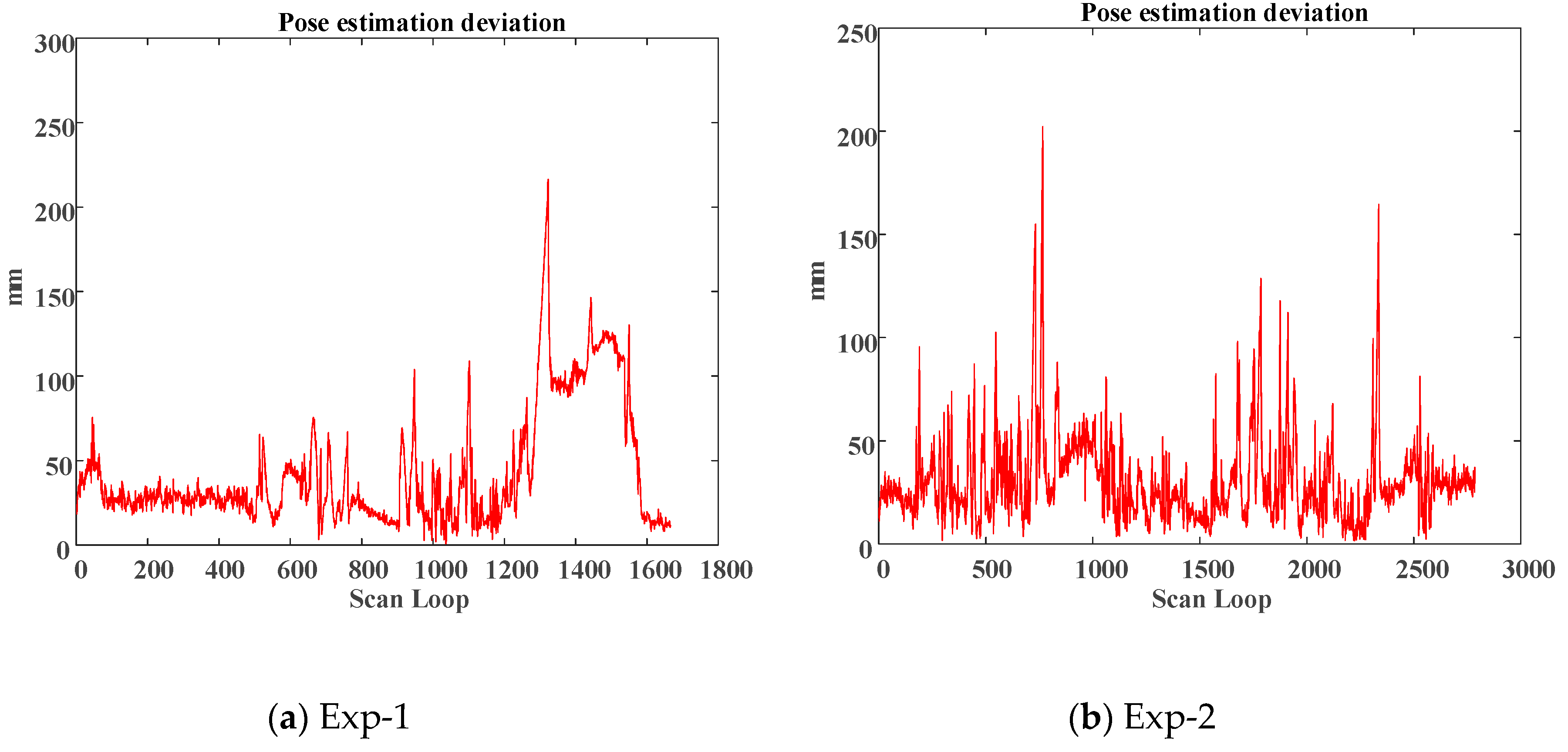

Figure 11 compares the deviations of the pose estimates; the average deviations are 3.06786 cm and 4.29678 cm, respectively.

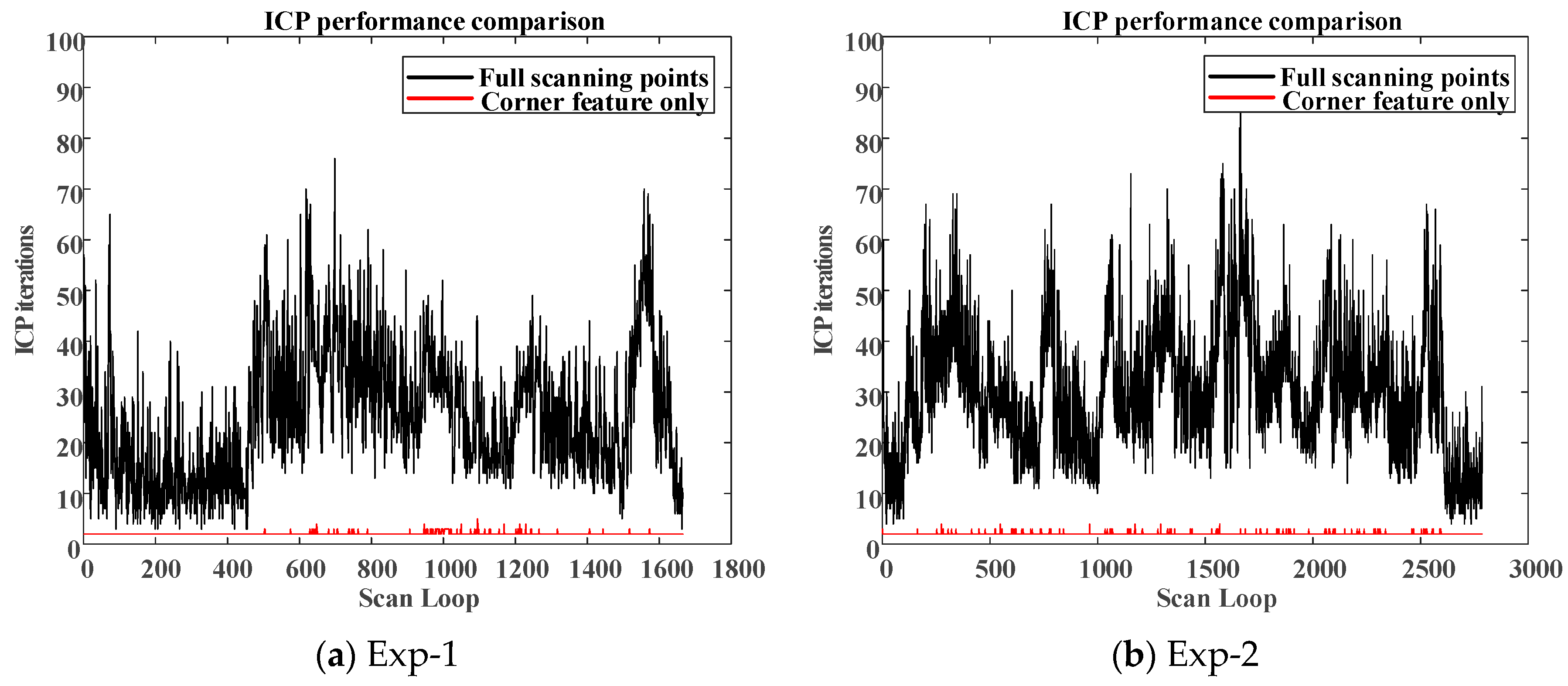

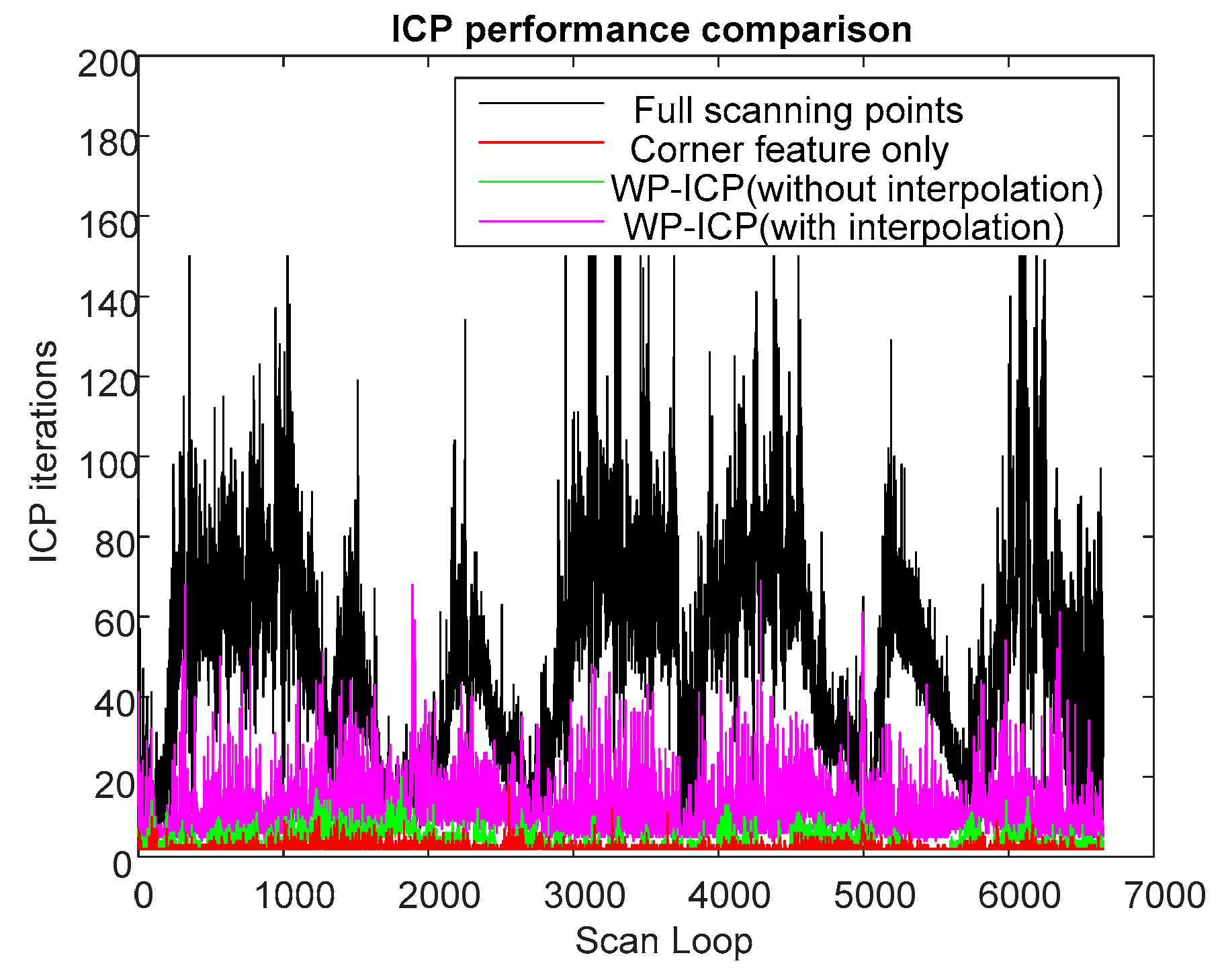

Moreover, to compare the real-time estimation capabilities of the full-points ICP and the corner-feature-based ICP, the total number of ICP iterations in each LiDAR scan loop is examined. Fewer ICP iterations are helpful for real-time realization. Figure 12 shows the number of ICP iterations for the different algorithms and experiments. It should be noted that, when using corners as the only feature, the number of ICP iterations never exceeded 4 (two iterations on average), whereas the full-points ICP requires more than 25 iterations on average. The WP-ICP was also applied in this environment. Its localization performance is very close to that obtained with the corner-based ICP. Therefore, the main advantage of the WP-ICP is not obvious in this clear environment.

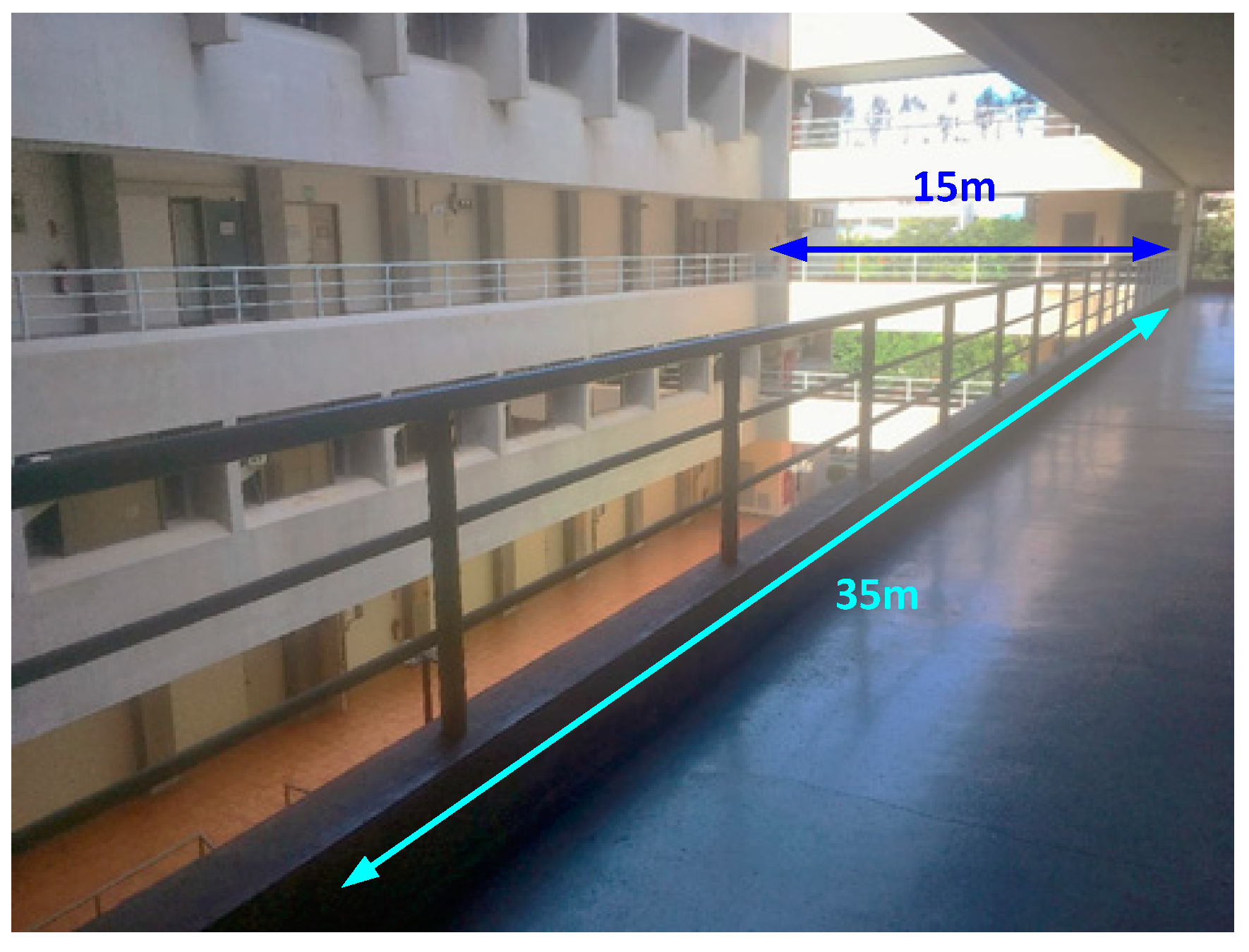

To illustrate the superior pose estimation robustness against the corner-based ICP, another experiment has been carried out on the 3rd floor of Department of Aeronautics and Astronautics, NCKU, shown in

Figure 13. The area is about 15 × 40 m

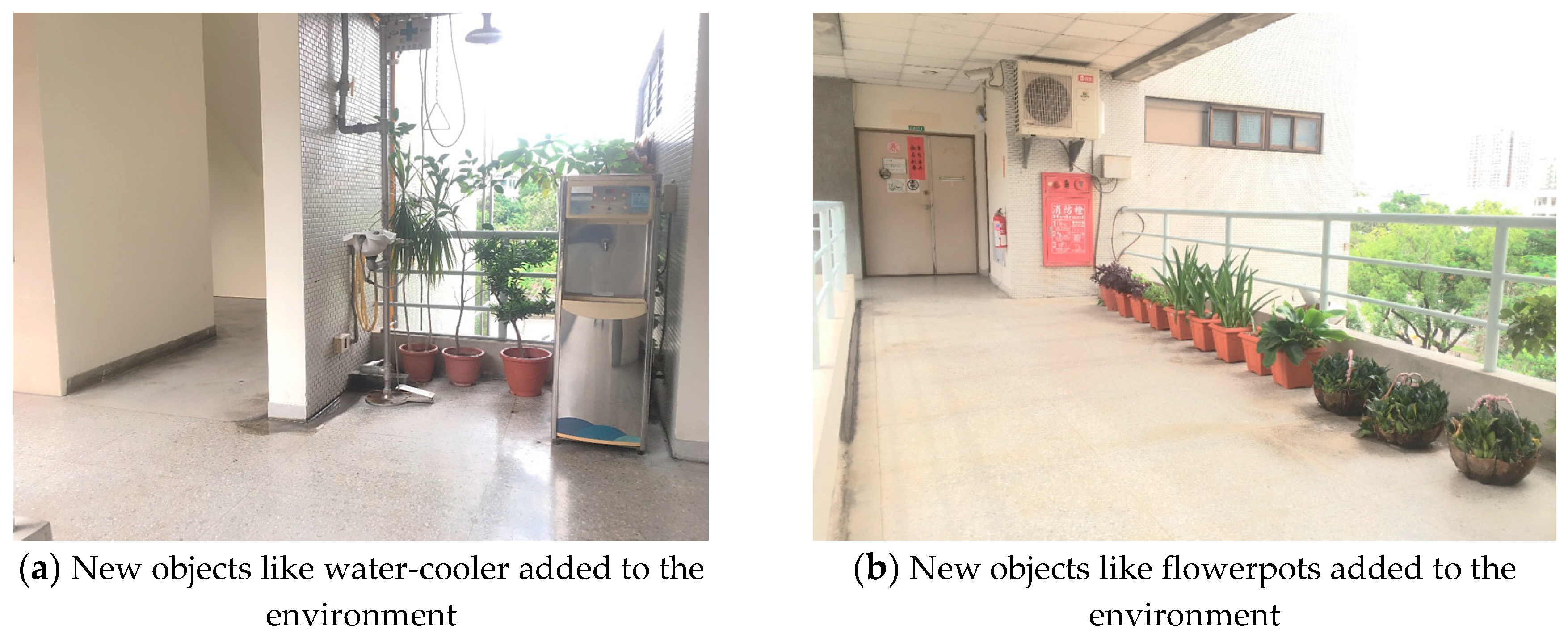

2 and contained different sized objects like flowerpots and water-cooler as shown in

Figure 14, which can be taken as unknown disturbances for post estimation. These objects were added later in the environment, and hence can be used to test the feasibility and robustness of the proposed WP-ICP algorithm in dynamic environments.

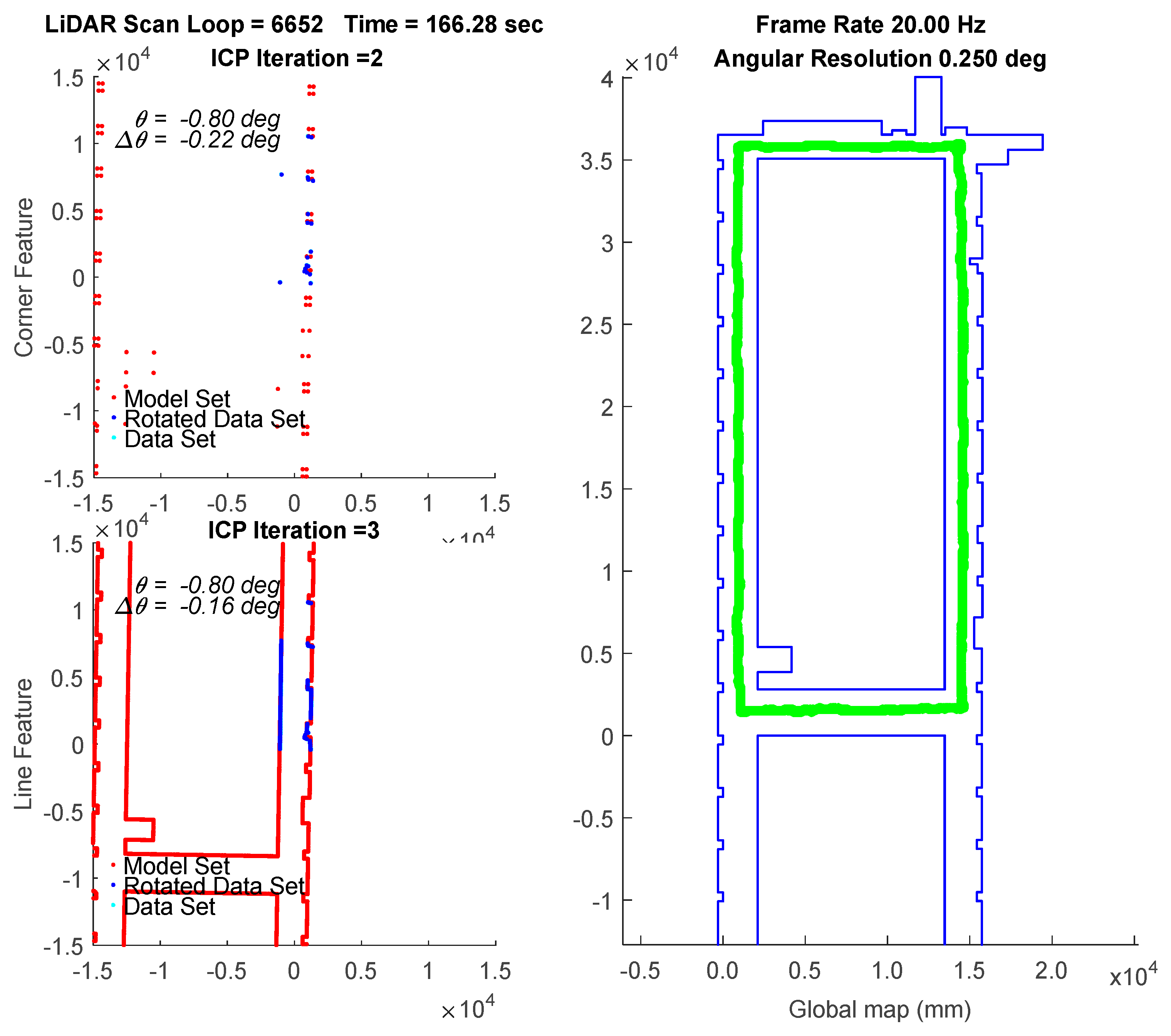

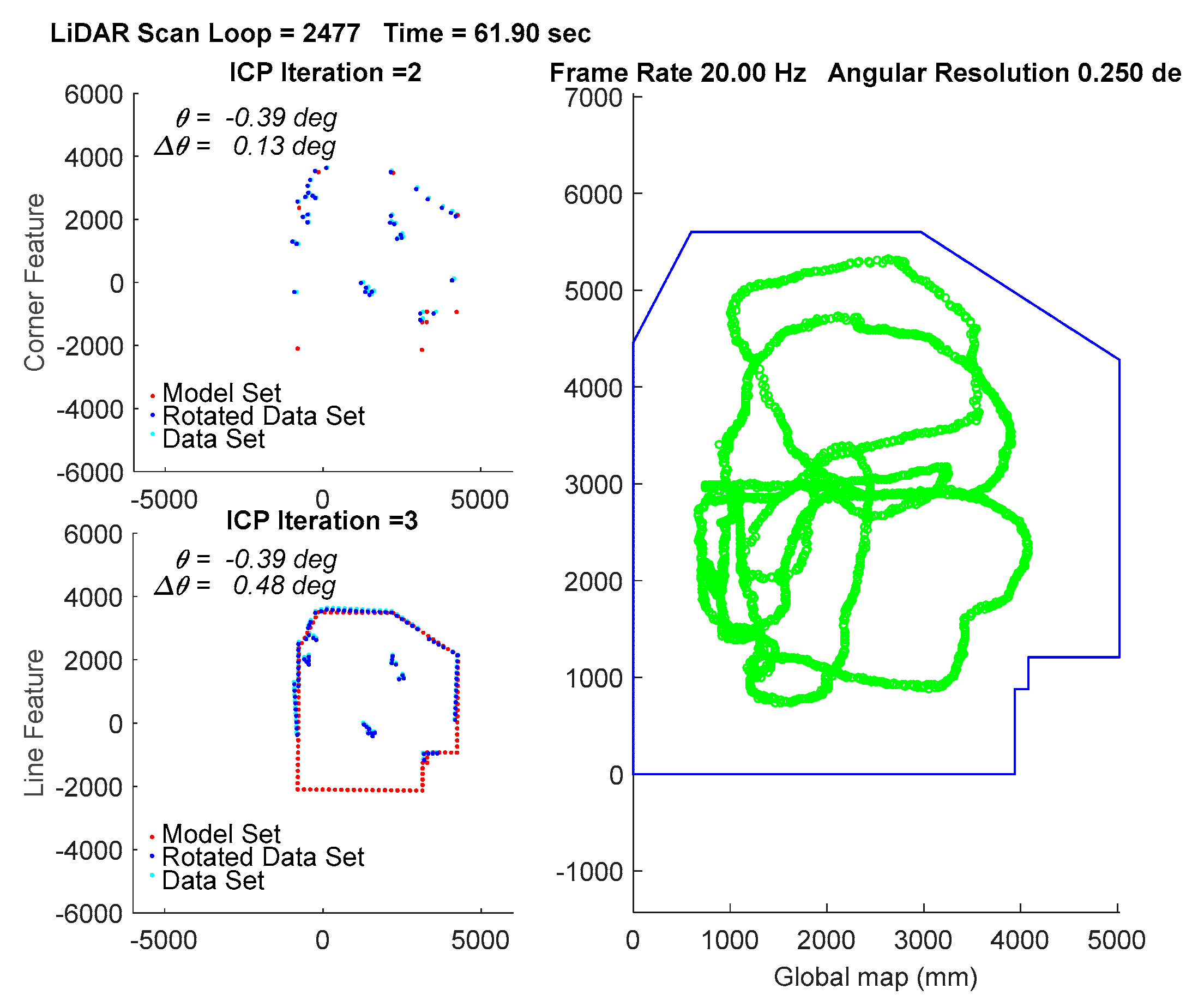

In the experiment, the vehicle travels counterclockwise around the 3rd floor. The transient localization behavior is shown in Figure 15, where the upper left and lower left subplots show all the points in the data set (from a LiDAR scan) and the model set (from the layout) labeled as corner features and line features, respectively. These two features are fed into the parallel ICP process and finally fused into a single robot pose. When the vehicle faces an area that differs from the layout or passes through a straight corridor, the corner-feature-based ICP algorithm exhibits apparent localization deviations.

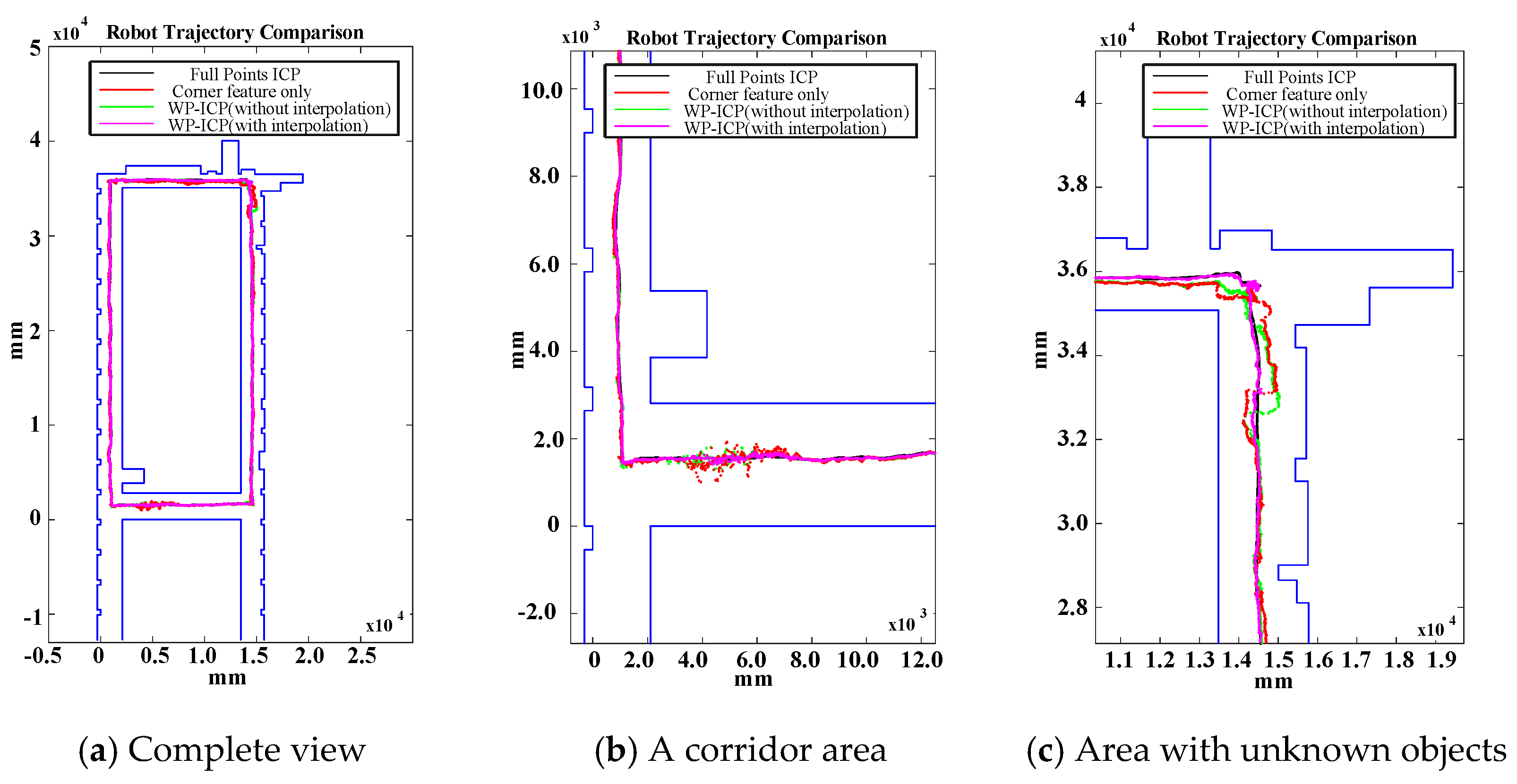

Figure 16 shows the long-range localization results at DAA-3F obtained with the different algorithms.

Examining Figure 16 again, since the WP-ICP uses both corner and line features as scan matching correspondences, its results should in theory be better than those obtained with the corner-based ICP. However, Figure 16b indicates that there are still estimation errors when passing through the corridor, because there are fewer line features in that area. To further improve the estimation robustness, an interpolation of the line features is integrated into the WP-ICP. Note that the interpolation adds a line feature point every 10 cm. The result (i.e., the purple line) shows a good estimate when utilizing the WP-ICP algorithm with interpolation. Finally, the improvement can also be found in locations subject to static unknown objects, as shown in Figure 16c, where the corresponding snapshots are shown in Figure 14a,b, respectively.

The results of the WP-ICP with interpolation demonstrate its robustness against external environment uncertainty. The computation efficiency of the different algorithms is detailed in Figure 17. Compared to the full-points ICP, using corner features only improves speed by about 50 times on average, while the WP-ICP (with interpolation) improves speed by 5 times on average. However, applying corner features only can sometimes lead to unstable estimates. Therefore, the WP-ICP strikes a compromise between computation speed and localization accuracy.

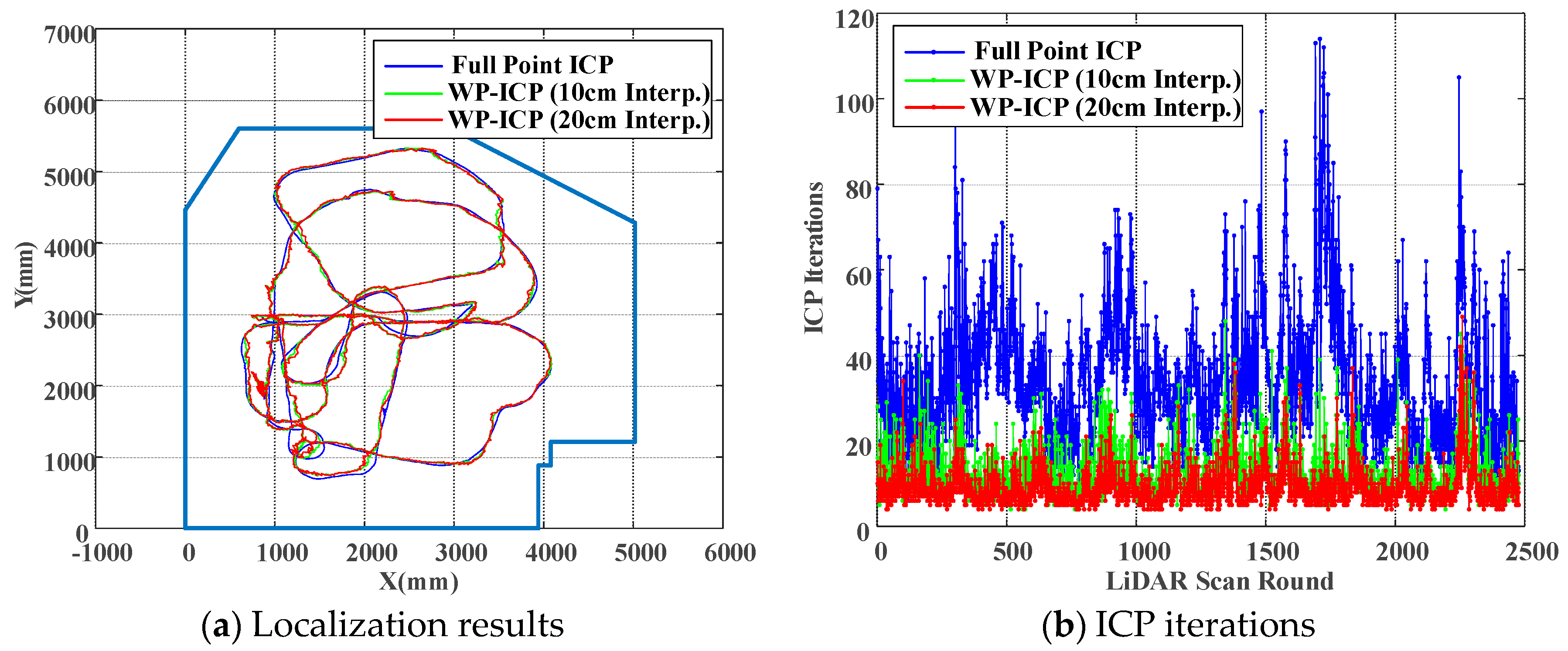

Finally, it is worth discussing the localization performance under different interpolation sizes when applying the WP-ICP. In this study, the layouts of the localization environment are given in terms of a few discrete data points. Therefore, the resolution of the layouts can be further enhanced by interpolation. For each LiDAR scan, the interpolation can be achieved by manipulating the raw data directly. The simplest way is to divide each segment into equal parts.
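A minimal sketch of such a segment divider is shown below. The function name and parameters are hypothetical; the default spacing of 0.10 m mirrors the 10 cm interpolation used in the experiments, and rounding the number of intervals up is an assumption made so that the actual spacing never exceeds the requested one.

```python
import numpy as np

def interpolate_segment(p_start, p_end, spacing=0.10):
    """Return evenly spaced points along a line segment, endpoints included.

    p_start, p_end: segment endpoints in meters.
    spacing: requested maximum distance between neighboring points.
    """
    p_start = np.asarray(p_start, dtype=float)
    p_end = np.asarray(p_end, dtype=float)
    length = np.linalg.norm(p_end - p_start)
    # Round up so the resulting spacing is at most the requested one.
    n_intervals = max(1, int(np.ceil(length / spacing)))
    s = np.linspace(0.0, 1.0, n_intervals + 1)
    return p_start + s[:, None] * (p_end - p_start)
```

A smaller `spacing` produces more line feature points per wall, which gives the line-feature ICP more correspondences at the cost of a larger search.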

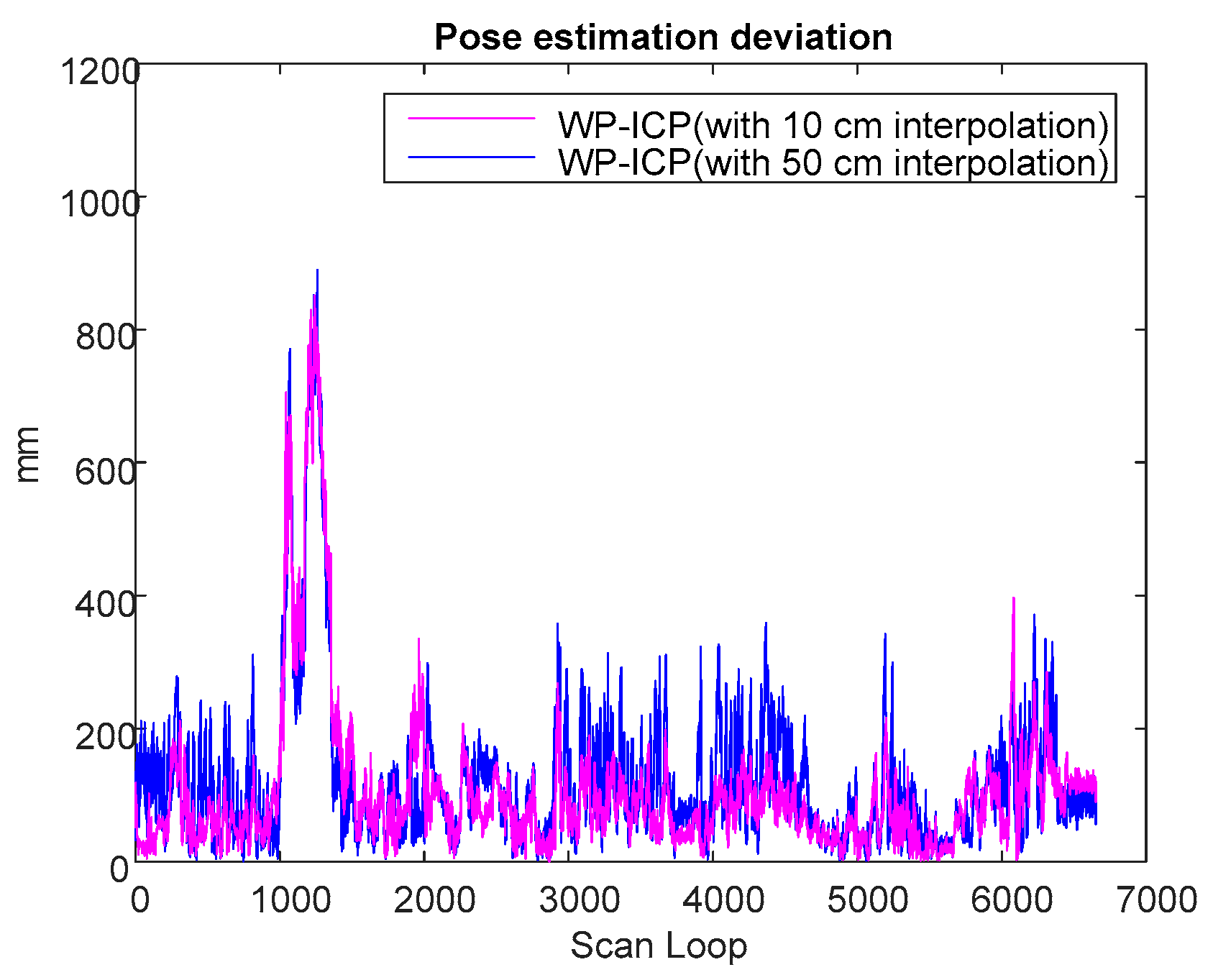

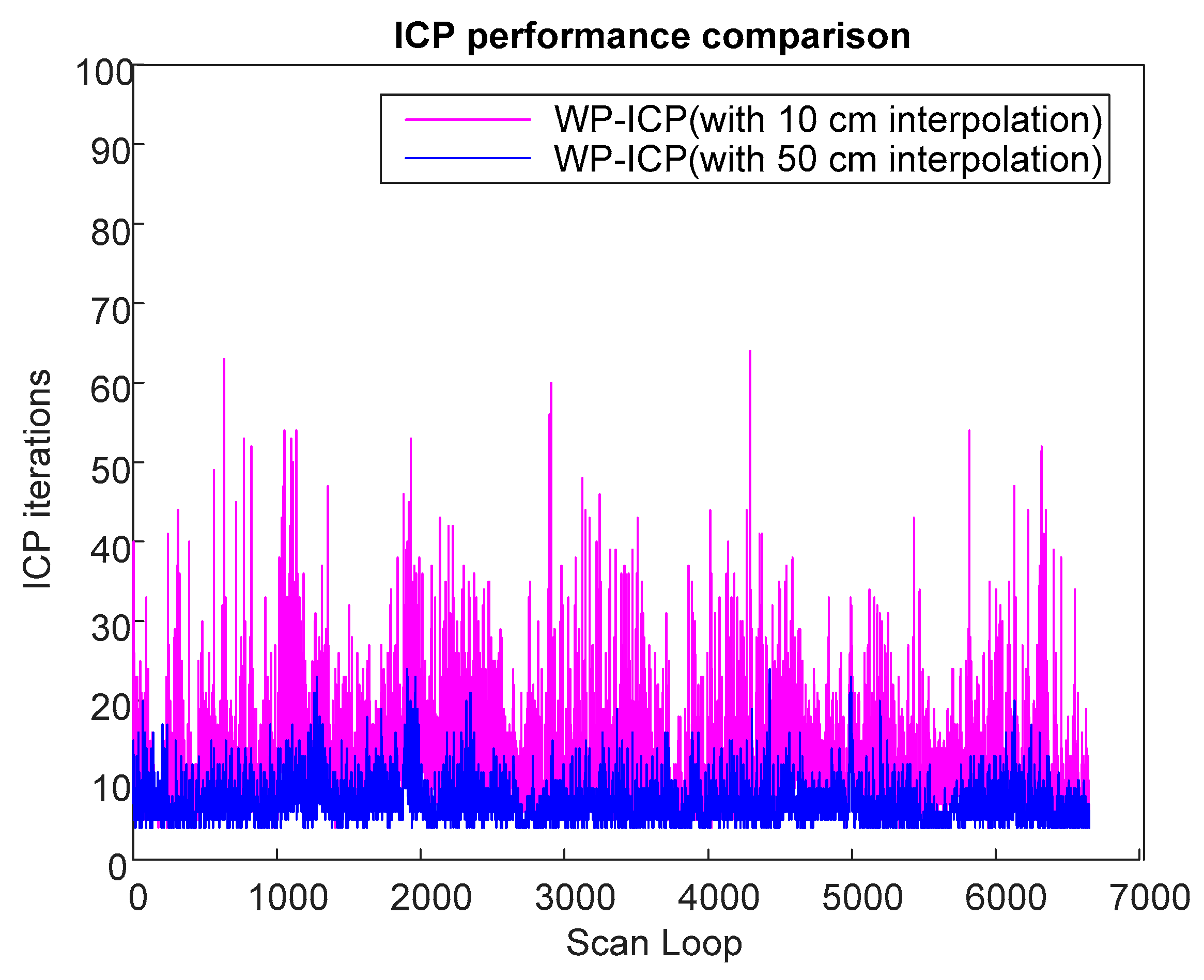

Based on the previous experiments, the WP-ICP with interpolation yields the smallest error compared with the corner-feature-based ICP and the pure WP-ICP. In the following, 10 cm and 50 cm interpolation resolutions are further considered for the same DAA-3F experiment. Figure 18 verifies that a coarser interpolation (larger spacing) increases the localization error. However, the number of ICP iterations is significantly reduced: the average numbers of iterations of the WP-ICP with 50 cm and 10 cm interpolation are 7.063 and 11.7577, respectively. As a result, the interpolation resolution can be taken as a design factor that trades off computation efficiency against localization precision.

A comparison in terms of ICP iterations is summarized in Table 1. The iteration counts of the corner-based ICP and the WP-ICP are noticeably lower than those of the full-points ICP. To overcome the challenge of unknown objects during point cloud matching, the WP-ICP with interpolation was further introduced, and it was shown that the localization robustness can be significantly enhanced. Although the number of ICP iterations increases, the increase is still acceptable for real-time operation.

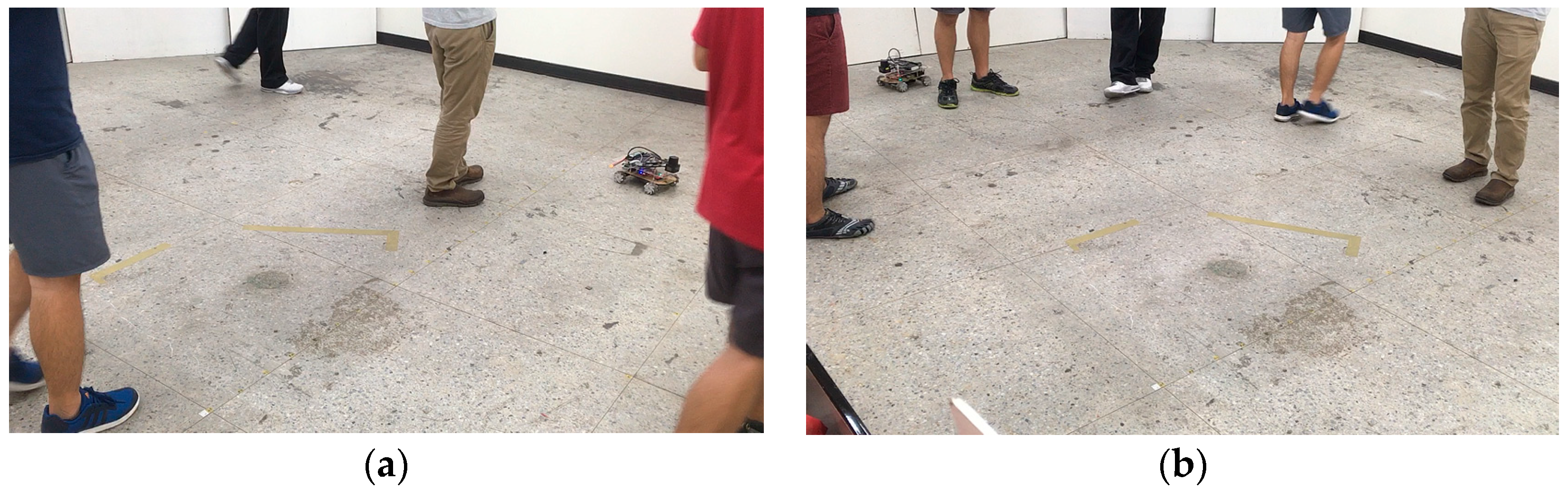

Finally, to further demonstrate the robustness of the proposed WP-ICP, another experiment was conducted in the same environment (as illustrated in Figure 19) but with five people walking around the vehicle. The practical scenes are shown in Figure 20. First, to generate a ground truth for comparison, the full-points ICP is considered, which leads to good localization results, as illustrated in Figure 21. The moving objects do not exist in the given layout and therefore produce many outliers. Under this condition, using corner features only causes the localization to diverge, as demonstrated in Figure 22. In contrast, as shown in Figure 23, applying the WP-ICP together with interpolation gives satisfactory localization results without divergent behavior, even in the presence of unknown moving objects. For the WP-ICP under different interpolation resolutions, the results are depicted in Figure 24a,b, respectively. The performance details are given in Table 2. The experiments verify that the WP-ICP can withstand dynamic uncertainties and produce satisfactory localization results with fewer iterations.