Reliability of Sleep Measures from Four Personal Health Monitoring Devices Compared to Research-Based Actigraphy and Polysomnography

Abstract

1. Introduction

2. Materials

2.1. Actigraphy

2.2. Polysomnography

3. Methods

3.1. Participants

3.2. Procedure

3.3. Analysis

4. Results

4.1. Device Success and Failure

4.2. Sleep Characteristics

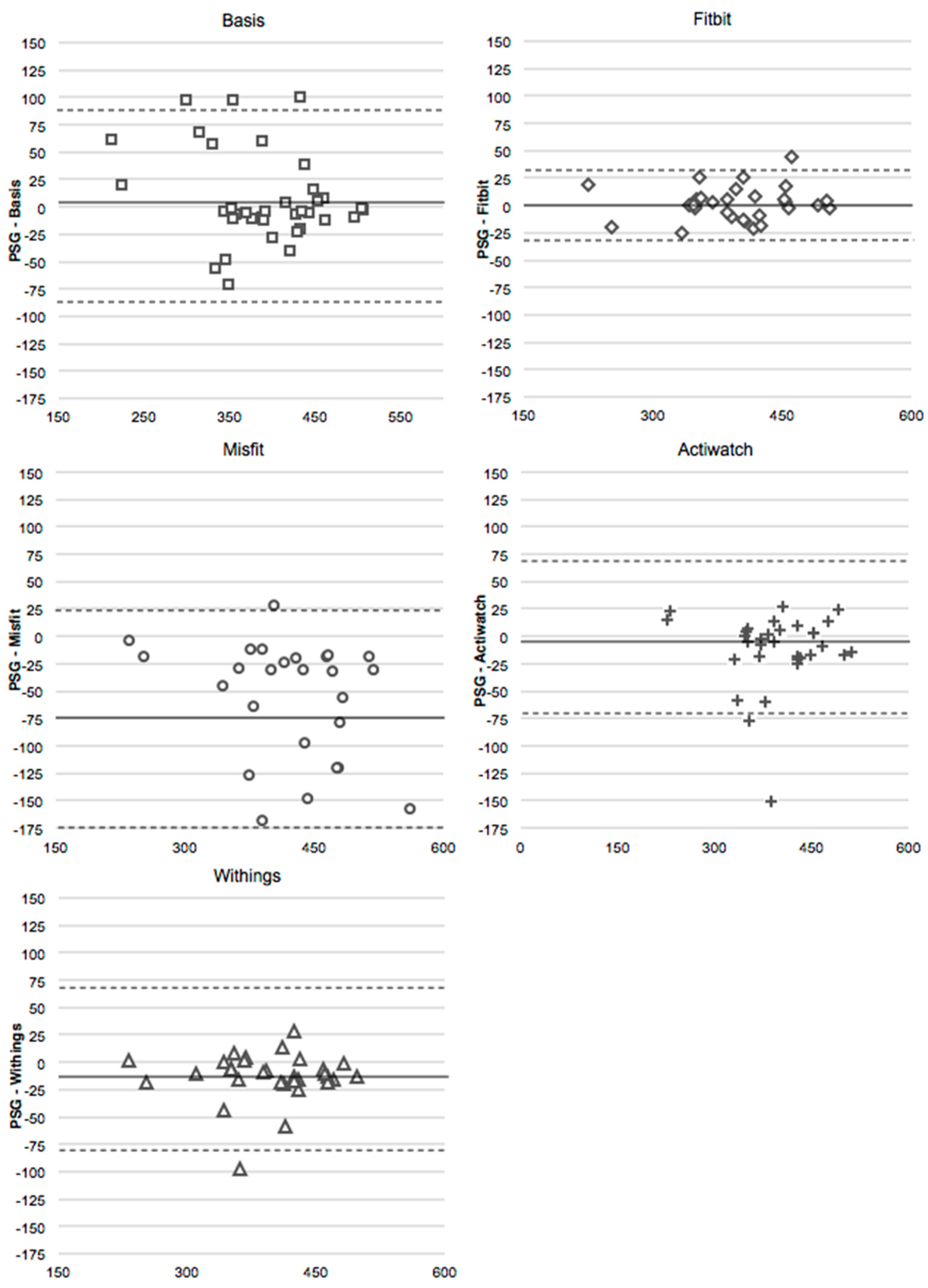

4.3. Total Sleep Time

4.4. Sleep Efficiency

4.5. Deep Sleep

4.6. Light Sleep

5. Discussion

Acknowledgments

Author contributions

Conflicts of Interest

| Device | TST | SE | Light | Deep |
|---|---|---|---|---|
| Polysomnography | Time in bed − time awake | TST / time in bed | nREM1 + nREM2 | SWS + REM |
| Actiwatch | Time in bed − time awake | TST / time in bed | - | - |
| Basis | Asleep | Sleep Score | Light | Deep + REM |
| Fitbit | Actual Sleep Time | Actual Sleep Time / You Were in Bed for | - | - |
| Misfit | Light + Restful | (Light + Restful) / (Light + Restful + Awake) | Light | Restful |
| Withings | Sleep Duration | Sleep Duration / In Bed Duration | Light | Deep |
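
The definitions in the table above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' analysis code: the function names and the example durations are hypothetical, and sleep efficiency is scaled to a percentage on the assumption that it is reported that way, as in the SE (%) row of the sleep-parameter table.

```python
def total_sleep_time(time_in_bed_min: float, time_awake_min: float) -> float:
    """TST = time in bed - time awake, both in minutes (PSG/Actiwatch row)."""
    if time_in_bed_min <= 0:
        raise ValueError("time in bed must be positive")
    if not 0 <= time_awake_min <= time_in_bed_min:
        raise ValueError("time awake must lie between 0 and time in bed")
    return time_in_bed_min - time_awake_min


def sleep_efficiency_pct(tst_min: float, time_in_bed_min: float) -> float:
    """SE = TST / time in bed, expressed as a percentage."""
    if time_in_bed_min <= 0:
        raise ValueError("time in bed must be positive")
    return 100.0 * tst_min / time_in_bed_min


def misfit_tst_and_se(light_min: float, restful_min: float, awake_min: float):
    """Misfit row: TST = Light + Restful; SE = TST / (Light + Restful + Awake)."""
    tst = light_min + restful_min
    in_bed = tst + awake_min
    return tst, sleep_efficiency_pct(tst, in_bed)


if __name__ == "__main__":
    # Hypothetical night: 480 min in bed, 60 min awake.
    tst = total_sleep_time(480, 60)
    print(tst, sleep_efficiency_pct(tst, 480))
    # Hypothetical Misfit output for the same night.
    print(misfit_tst_and_se(light_min=240, restful_min=180, awake_min=60))
```

Because each device exposes differently named fields, mapping every row onto the same two quantities (minutes asleep and minutes in bed) is what makes the device values comparable with polysomnography.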

| Device | Gross Mis-Estimation | Miscellaneous | User Error |
|---|---|---|---|
| Actiwatch | 1 | 4 | - |
| Basis | 1 | 3 | - |
| Fitbit | 1 | 0 | 9 |
| Misfit | 8 | 7 | - |
| Withings | 1 | 0 | 3 |

| Sleep Parameter | Mean ± SD |
|---|---|
| Time in bed (min) | 465.98 ± 96.87 |
| Total sleep time (min) | 397.44 ± 63.63 |
| SE (%) | 84.61 ± 18.15 |
| WASO (min) | 32.22 ± 43.60 |
| Sleep onset latency (min) | 35.09 ± 67.78 |
| nREM1 (%) | 9.58 ± 3.64 |
| nREM2 (%) | 47.79 ± 8.20 |
| SWS (%) | 23.20 ± 7.51 |
| REM (%) | 19.43 ± 5.03 |
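
As a worked example, the polysomnography definitions of light sleep (nREM1 + nREM2) and deep sleep (SWS + REM) can be applied to the mean stage percentages in the table above. The sketch below is illustrative only; the function name is hypothetical. The mean of a sum equals the sum of the means, so the mean light and deep percentages follow directly, but the standard deviations of the combined categories cannot be recovered from the table.

```python
def light_deep_split(stage_pct: dict) -> tuple:
    """Combine stage percentages into light (nREM1 + nREM2) and deep (SWS + REM)."""
    light = stage_pct["nREM1"] + stage_pct["nREM2"]
    deep = stage_pct["SWS"] + stage_pct["REM"]
    return light, deep


if __name__ == "__main__":
    # Mean stage percentages as reported in the sleep-parameter table.
    mean_stage_pct = {"nREM1": 9.58, "nREM2": 47.79, "SWS": 23.20, "REM": 19.43}
    light, deep = light_deep_split(mean_stage_pct)
    print(f"light = {light:.2f}%, deep = {deep:.2f}%")
```

With the reported means this gives 57.37% light and 42.63% deep sleep, which together account for 100% of the staged sleep.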

| Device | TST (Unadj.) | TST (Adj.) | SE (Unadj.) | SE (Adj.) | Light (Unadj.) | Light (Adj.) | Deep (Unadj.) | Deep (Adj.) |
|---|---|---|---|---|---|---|---|---|
| Actiwatch | −1.62 | −1.40 | −1.62 | −1.42 | - | - | - | - |
| Basis | −1.41 | −1.41 | −2.56 | −2.37 | −2.22 | −2.22 | −1.21 | −1.51 |
| Fitbit | −0.37 | −0.37 | −0.68 | −0.68 | - | - | - | - |
| Misfit | −4.21 | −3.62 | −4.01 | −3.90 | −4.04 | −3.58 | −4.68 | −4.35 |
| Withings | −3.84 | −3.76 | −3.49 | −3.84 | −3.21 | −2.89 | −4.23 | −4.25 |

| Device | TST (Unadj.) | TST (Adj.) | SE (Unadj.) | SE (Adj.) | Light (Unadj.) | Light (Adj.) | Deep (Unadj.) | Deep (Adj.) |
|---|---|---|---|---|---|---|---|---|
| Actiwatch | 0.87 | 0.94 | 0.30 # | 0.35 | - | - | - | - |
| Basis | 0.84 | 0.84 | 0.26 | 0.28 | 0.30 # | 0.30 # | 0.27 | 0.28 # |
| Fitbit | 0.97 | 0.97 | 0.21 | 0.21 | - | - | - | - |
| Misfit | 0.76 | 0.87 | −0.20 | −0.07 | 0.31 # | 0.40 | 0.20 | 0.19 |
| Withings | 0.84 | 0.94 | 0.17 | 0.21 | 0.34 | 0.39 | 0.36 | 0.36 |

| Device | TST (Avg.) | TST (Max.) | SE (Avg.) | SE (Max.) | Light (Avg.) | Light (Max.) | Deep (Avg.) | Deep (Max.) |
|---|---|---|---|---|---|---|---|---|
| Actiwatch | 5.82 | 48.31 | 10.37 | 128.21 | - | - | - | - |
| Basis | 7.82 | 28.33 | 22.22 | 69.89 | 23.90 | 68.97 | 35.02 | 133.11 |
| Fitbit | 2.97 | 8.23 | 11.57 | 146.15 | - | - | - | - |
| Misfit | 15.26 | 54.90 | 13.83 | 89.90 | 33.82 | 72.66 | 69.58 | 155.94 |
| Withings | 6.00 | 57.30 | 11.43 | 143.59 | 24.06 | 93.50 | 37.202 | 107.75 |

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).

Mantua, J.; Gravel, N.; Spencer, R.M.C. Reliability of Sleep Measures from Four Personal Health Monitoring Devices Compared to Research-Based Actigraphy and Polysomnography. Sensors 2016, 16, 646. https://doi.org/10.3390/s16050646