Abstract

Variational inference is a powerful framework, used to approximate intractable posteriors through variational distributions. The de facto standard is to rely on Gaussian variational families, which come with numerous advantages: they are easy to sample from, simple to parametrize, and many expectations are known in closed-form or readily computed by quadrature. In this paper, we view the Gaussian variational approximation problem through the lens of gradient flows. We introduce a flexible and efficient algorithm based on a linear flow leading to a particle-based approximation. We prove that, with a sufficient number of particles, our algorithm converges linearly to the exact solution for Gaussian targets, and a low-rank approximation otherwise. In addition to the theoretical analysis, we show, on a set of synthetic and real-world high-dimensional problems, that our algorithm outperforms existing methods with Gaussian targets while performing on a par with non-Gaussian targets.

1. Introduction

Representing uncertainty is a ubiquitous problem in machine learning. Reliable uncertainties are key for decision making, especially in contexts where the trade-off between exploitation and exploration plays a central role, such as Bayesian optimization [1], active learning [2], and reinforcement learning [3]. While Bayesian inference is a principled tool to provide uncertainty estimation, computing posterior distributions is intractable for many problems of interest. Most sampling methods struggle to scale up to large datasets [4], while the diagnosis of convergence is not always straightforward [5]. On the other hand, Variational Inference (VI) methods can rely on well-understood optimization techniques and scale well to large datasets, at the cost of an approximation quality depending heavily on the assumptions made. The Gaussian family is by far the most popular variational approximation used in VI [6,7]. This is for several reasons. First, Gaussian variational families are easy to sample from, reparametrize, and marginalize. Second, they are easily amenable to diagonal covariance approximations, making them scalable to high dimensions. Third, most expectations are either easily computable by quadrature or Monte Carlo integration, or known in closed-form.

A large body of work covers different approaches to optimize the Variational Gaussian Approximation (VGA), with the speed of convergence and the scalability in dimensions as the main concerns. From the perspective of convergence speed, the major bottleneck when computing gradients with stochastic estimators is the estimator variance [8]. Particle-based methods with deterministic paths do not have this issue, and have been proven to be highly successful in many applications [9,10,11]. However, can we use a particle-based algorithm to compute a VGA? If so, what are its properties and is it competitive with other VGA methods?

In this paper, we attempt to answer these questions by introducing the Gaussian Particle Flow (GPF), a framework to approximate a Gaussian variational distribution with particles. GPF is derived from a continuous-time flow, where the necessary expectations over the evolving densities are approximated by particles. The complexity of the method grows quadratically with the number of particles but linearly with the dimension, remaining compatible with other approximations such as structured mean-field approximations. Using the same dynamics, we also derive a stochastic version of the algorithm, Gaussian Flow (GF). To show convergence, we prove the decrease in an empirical version of the free energy that is valid for a finite number of particles. For the special case of D–dimensional Gaussian target densities, we show that particles are enough to obtain convergence to the true distribution. We also find, for this case, that convergence is exponentially fast. Finally, we compare our approach with other VGA algorithms, both in fully controlled synthetic settings and on a set of real-world problems.

2. Related Work

The goal of Bayesian inference is to carry out computations with the posterior distribution of a latent variable given some observations y. By Bayes theorem, the posterior distribution is , where and are, respectively, the likelihood and the prior distribution. Even if the likelihood and the prior are known analytically, marginalizing out high-dimensional variables in the product in order to compute quantities such as is typically intractable. Variational Inference (VI) aims to simplify this problem by turning it into an optimization one. The intractable posterior is approximated by the closest distribution within a tractable family, with closeness being measured by the Kullback-Leibler (KL) divergence, defined by

where denotes the expectation of f over q. Denoting by a family of distributions, we look for

Since is not computable in an efficient way, we equivalently minimize the upper bound :

where is the entropy of q (). Here, is known as the variational free energy and is known as the Evidence Lower BOund (ELBO). A diverse set of approaches to perform VI with Gaussian families have been developed in the literature, which we review in the following.

2.1. The Variational Gaussian Approximation

The VGA is the restriction of to be the family of multivariate Gaussian distributions , where is the mean and is the covariance matrix, for which the free energy is found to be

where . A standard descent algorithm based on gradients of Equation (2) with respect to variational parameters give rise to some issues. First, naively computing the gradient of the expectation with respect to the covariance matrix C involves unwanted second derivatives of [12], which may not be available or may be computationally too expensive in a black-box setting. Second, the gradient of the entropy term entails inverting a non-sparse matrix, which we would like to avoid for higher-dimensional cases. Finally, the positive-definiteness of the covariance matrix leads to non-trivial constraints on parameter updates, which can lead to a slowdown of convergence or, if ignored, to instabilities in the algorithm.

To solve these issues, a variety of approaches have been proposed in the literature. If we focus on factorizable models, we can make a simplification: for problems with likelihoods that can be rewritten as , the number of independent variational parameters is reduced to [12,13]. In this special case, the Gaussian expectations in the free energy (2) split into a sum of 1-dimensional integrals, which can be efficiently computed by using numerical quadrature methods. To extend to the general case, gradients of the free energy are estimated by a stochastic sampling approach, which also forms the starting point of our method. This relies on the so-called reparametrization trick, where the expectation over the parameter-dependent variational density is replaced by an expectation over a fixed density instead. This facilitates the gradient computation because unwanted derivatives of the type are avoided. For the Gaussian case, the reparametrization trick is a linear transformation of an arbitrary D dimensional Gaussian random variable in terms of a D-dimensional Gaussian random variable :

where and are the variational parameters. We assume that the covariance is not degenerate and, for simplicity, we set it as the identity. For instance, the gradient of the expectation given q over a function f given the mean m becomes . This can be simply proved by using the reparametrization (3) inside the integral and passing the gradient inside; for more details, see [14].

Given this representation, the free energy is easily obtained as a function of the variational parameters:

Other representations are possible. Challis and Barber [13] and Ong et al. [15] use a different reparametrization with a factorized structure of the covariance , where and , with is the rank of . Other representations assume special structures of the precision matrix , which allow you to enforce special properties, such as sparsity in [16,17].

In general, these methods tend to scale poorly with the number of dimensions, as one needs to optimize parameters. The (structured) Mean-Field (MF) [18,19] approach imposes independence between variables in the variational distribution. The number of variational parameters is then , but covariance information between dimensions is lost.

2.2. Natural Gradients

Besides the issue of expectations, more efficient optimizations directions, beyond ordinary gradient descent, have been considered. These can help to deal with constraints such as those given for the covariance matrix. Natural gradients [20] are a special case of Riemannian gradients and utilize the specific Riemannian manifold structure of variational parameters. They can often deal with constraints of parameters (such as the positive definiteness of the covariance), accelerate inference, and improve the convergence of algorithms. The application of such advanced gradient methods typically requires an estimate of the inverse Fisher information matrix as a preconditioner of ordinary gradients. Khan and Nielsen [21] and Lin et al. [22] propose a solution that requires extra second derivatives of the log–posteriors. Salimbeni et al. [23] developed an automatic process to compute these without the second derivatives but with instability issues. Lin et al. [17] solved these issues by using geodesics on the manifold of parameters, at the price of having to compute inverse matrices as well as Hessians.

2.3. Particle-Based VI

Stochastic gradient descent methods compute expectations (and gradients) at each time step with new independent Monte Carlo samples drawn from the current approximation of the variational density. Particle-based methods for variational inference draw samples only once at the beginning of the algorithm instead. They iteratively construct transformations of an initial random variable (having a simple tractable density) where the transformed density leads to the decrease and finally to the minimum of the variational free energy. The iterative approach induces a deterministic temporal flow of random variables which depends on the current density of the variable itself. Using an approximation by the empirical density (which is represented by the positions of a set of ’particles’) one obtains a flow of interacting particles which converges asymptotically to an empirical approximation of the desired optimal variational density.

The most popular approach is Stein Variational Gradient Descent (SVGD) [24], which computes a nonparametric transformation based on the kernelized Stein discrepancy [9]. SVGD has the advantage of not being restricted to a parametric form of the variational distribution. However, using standard distance-based kernels like the squared exponential kernel () can lead to underestimated covariances and poor performance in high dimensions [11,25]. Hence, it is interesting to develop particle approaches that approximate the VGA. We provide a more thorough comparison between our method and SVGD in Section 3.6.

2.4. GVA in Bayesian Neural Networks

There has been increased interest in making Bayesian Neural Networks (BNN) by adding priors to Neural Networks parameters. The true form of the posterior is unknown but VGA has been used due to its ease of use and scalability with the number of dimensions (typically ). Most of the aforementioned methods apply to BNN, but techniques have been specifically tailored with BNN in mind. [26] use the low-rank structure of [13] but exploit the Local Reparametrization Trick, where each datapoint gets a different sample from q in order to reduce the stochastic gradient estimator variance. Stochastic Weight Averaging-Gaussian (SWAG) [27], in which a set of particles obtained via stochastic gradient descent represent a low-rank Gaussian distribution, approximating the true posterior with a prior posterior produced by the network’s regularization. While easy to implement, SWAG does not allow you to incorporate an explicit prior, and the resulting distribution does not derive from a principled Bayesian approach.

2.5. Related Approaches

The closest approach to our proposed method is the Ensemble Kalman Filter (EKF) [28]. It assumes that the posterior is computed in a sequential way, where, at each time step, only single (or smaller batches) of data observations, represented by their likelihoods, become available. An ensemble of particles, representing a Gaussian distribution is iteratively updated with every new batch of observations. EKF allows us to work on high-dimensional problems with a limited amount of particles but is restricted to factorizable likelihoods for which a sequential representation is possible. While EKF maintains a representation of a Gaussian posterior, it is not clear how this relates to the goal of minimizing the free energy or the KL divergence.

3. Gaussian (Particle) Flow

We introduce Gaussian Particle Flow (GPF) and Gaussian Flow (GF), two computationally tractable approaches, to obtain a Variational Gaussian Approximation (VGA). In the following, we derive deterministic linear dynamics, which decreases the variational free energy. We additionally give some variants with a Mean-Field (MF) approach and prove theoretical convergence guarantees.

In the following, indicates the total derivative given time, partial derivatives given time, gradients given a vector x.

3.1. Gaussian Variable Flows

We next discuss an alternative approach to generate the desired transformation of random variables, leading from a simple (prior) Gaussian density to a more complex Gaussian, which minimizes the variational free energy. It is based on the idea of variable flows, i.e., recursive deterministic transformations of the random variables defined by a mapping where . Well-known examples of flows are Normalizing Flows [29], where are bijections, or Neural ODEs [30] where is defined by a neural network and is the input. For simplicity, we will consider small changes and work with flows in the continuous-time limit (), which follow a system of Ordinary Differential Equation (ODE). For the Gaussian case, in the spirit of the reparametrization trick (3), we choose a linear corresponding map f and write

where is a matrix and (which is no longer interpreted as an independent variational parameter). When the initial random variable is Gaussian distributed, the vectors are also Gaussian for any t. To construct a flow that decreases the free energy over time, we can either compute the time derivative of the specific free energy (2) induced by the ODE (5), or simply derive the general result valid for smooth maps f (see, e.g., [24]). To be self contained, we briefly repeat the main steps: We first compute the change of the free energy in terms of the time derivative of :

where we have used the fact that and . We next use the continuity equation for the density

related to the deterministic flow to obtain

where we have applied Green’s identity twice and used the fact that . Specializing to the linear flow (5), we obtain

where

Equation (6) represents the change in the free energy for an infinitesimal change in the variables x given by the flow (5). Obviously, the simplest choices

lead to a decrease in the free energy . More detailed derivations are given in Appendix A. Additionally, equality only happens, when

Using Stein’s lemma [31], we can show that these fixed-point solutions are equal to the conditions for the optimal variational Gaussian distribution solution given in [12]. In Appendix C, we show that our parameter updates can be interpreted as a Riemannian gradient descent method for the free energy (4). This is based on the metric introduced by ([20], Theorem 7.6) as an efficient technique for learning the mixing matrix in models of blind source separation. This gradient should not be confused with the so-called natural gradient obtained by pre-multiplying with the inverse Fischer-information matrix.

Of course, there are other choices for and , which lead to a decrease in the free energy and the same fixed-point equations. In Section 3.6, we discuss how SVGD, with a linear kernel, can lead to the same fixed points but with different dynamics.

3.2. From Variable Flows to Parameter Flows

Before we introduce the particle algorithm, we show that the results for the variable flow can also be converted into a temporal change of the parameters , , as defined for Equation (3). From this, a corresponding Gaussian Flow (GF) algorithm can be easily derived. By differentiating the parametrisation (with now considered as free variational parameter) with respect to time t and using (5), we obtain

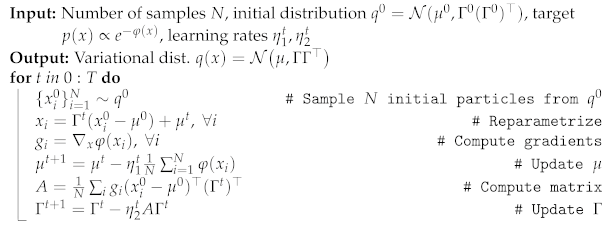

By inserting into the right hand side of (10), and using the optimal parameters from (7), we obtain

Note that the expectations are over the probability distribution of the initial random variable . Discretizing Equations (11) in time, and estimating the expectations by drawing independent samples from the fixed Gaussian at each time step, we obtain our GF algorithm to minimize the variational free energy in the space of Gaussian densities. We summarize the steps of GF in Algorithm 1. Remarkably, this scheme differs from previous VGA algorithms with Riemannian gradients based on the Fisher information metric (see, e.g., [17,32]) because no matrix inversions or second order derivatives of the function are required.

GF also allows for the computation of a low-rank VGA by enforcing and . This algorithm scales linearly in the number of dimensions and quadratically in the rank K of the covariance.

It is interesting to note that the reverse construction of a variable flow from a parameter flow is, in general, not possible. This would require the ability to eliminate all variational parameters and the initial variables in the resulting differential equation for , and replace them with functions of alone. For instance, if we eliminate the initial variables in terms of and the algorithm of [14], the resulting expression still depends on .

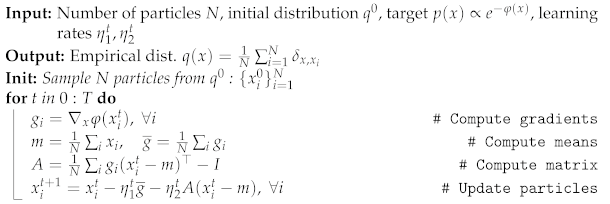

3.3. Particle Dynamics

The main idea of the particle approach is to approximate the Gaussian density in (7) by the empirical distribution

computed from N samples , . These are initially sampled from the density at time and are then propagated using the discretized dynamics of the ODE (5):

where

where and are learning rates (We further comment on the use of different optimization schemes in Section 4.4). Note that although is a matrix, changing the matrix multiplication order leads to a computational complexity of with a storage complexity of , since neither the empirical covariance matrix or need to be explicitly computed.

Relaxation of Empirical Free Energy and Convergence

We have shown that the continuous-time dynamics (10) of the random variables leads to a decay of the free energy with time t. Assuming that the free energy is bounded from below, one might conjecture that this property would imply the convergence of the particle algorithm to a fixed point when learning rates are sufficiently small such that the discrete-time dynamics are approximated well by the continuous limit. Unfortunately, the finite number N of particles poses an extra problem. The definition of the free energy by the KL–divergence (1) for continuous random variables such as assumes that both and are densities with respect to the Lebesgue measure. Hence, is not defined if we take , (12) as the empirical distribution of the finite particle approximation. Nevertheless, we define a finite N approximation to the Gaussian free energy, which is also then found to decay under the finite N dynamics. Let us first assume that and define

with the empirical covariance matrix

The definition (14) is chosen in such way that in the large N limit, when the empirical distribution converges to a Gaussian distribution , we will also obtain the convergence of the approximation (14) to . It can be shown (see Appendix B) that , with equality only at the fixed points of the dynamics.

In applications of our particle method to high-dimensional problems, the limitations of computational power may force us to restrict particle numbers to be smaller than the dimensionality D. For , the empirical covariance will be singular, and typically contain only non-zero eigenvalues, which leads to the and makes Equation (14) meaningless. We resolve this issue through a regularisation of the log–determinant term in (14), replacing all zero eigenvalues of by the values 1, i.e., . We show in Appendix B that the free energy still decays, provided that the dynamics of the particles stay the same. This regularisation step can be formally stated as a replacement of the empirical covariance (15) in (14) by

where ith eigenvector of .

3.4. Algorithm and Properties

The algorithm we propose is to sample N particles where from (which can be centered around the MAP for example), and iteratively optimize their positions using Equation (13). Once convergence is reached, i.e., , we can easily make predictions using the converged empirical distribution , where is the Dirac delta function, or, alternatively, the Gaussian density it represents, i.e., , where and . To draw samples from , no inversions of the empirical covariance C are needed, as we can obtain new samples by computing:

where are i.i.d. normal variables: . This can be shown by defining D, the deviation matrix, a matrix which columns equal to . We naturally have which makes D the Cholesky decomposition of C.

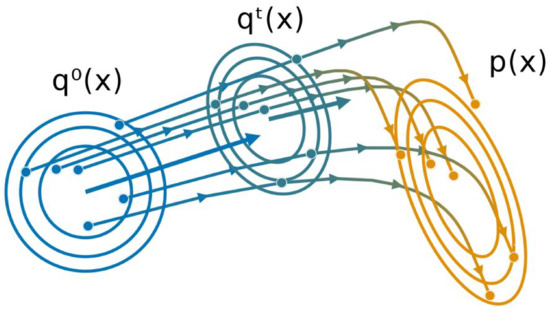

All the inference steps are summarized in Algorithm 2 and an illustration in two dimensions is provided in Figure 1.

Figure 1.

Illustration of the Gaussian Particle Flow algorithm, with and representing the initial and target distribution respectively. Particles are iteratively moved according to the gradient flow starting from , approximating a new Gaussian distribution at each iteration t.

We summarize the principal points of our approach:

- Gradients of expectations have zero variance, at the cost of a bias decreasing with the number of particles and equal to zero for Gaussian target (see Theorem 1);

- It works with noisy gradients (when using subsampling data, for example);

- The rank of the approximated covariance C is . When , the algorithm can be used to obtain a low-rank approximation.

- The complexity of our algorithm is and storing complexity is . By adjusting the number of particles used, we can control the performance trade-off;

- GPF (and GF) are also compatible with any kind of structured MF (see Section 3.5);

- Despite working with an empirical distribution, we can compute a surrogate of the free energy to optimize hyper-parameters, compute the lower bound of the log-evidence, or simply monitor convergence.

| Algorithm 1: Gaussian Flow (GF) |

|

| Algorithm 2: Gaussian Particle Flow (GPF) |

|

3.4.1. Relaxation of Empirical Free Energy

The definition of the free energy from the KL–divergence (1) for a continuous random variables assumes that both and are densities with respect to the Lebesgue measure. Hence, it is not a priori clear that a specific approximation , based on an empirical distribution with a finite number of particles N, will decrease under the particle flow. Thus we may not be able to guarantee convergence to a fixed point for finite N. Luckily, as we show in Appendix D, we find that:

For , the empirical covariance will typically contain non-zero eigenvalues and lead to , making Equation (17) meaningless. We resolve this issue by introducing a regularized free energy where is replaced by where are the eigenvalues of . We show in Appendix D that, given the dynamics from Equation (5), is also guaranteed to not increase over time. It can, therefore, be used as a regularized proxy for the true and used to optimize over hyper-parameters or to monitor convergence. Note that similar proofs exist for SVGD [33] and were proven to be highly non-trivial.

3.4.2. Dynamics and Fixed Points for Gaussian Targets

We illustrate our method by some exact theoretical results for the dynamics and the fixed points of our algorithm when the target is a multivariate Gaussian density. While such targets may seem like a trivial application, our analysis could still provide some insight into the performance for more complicated densities.

Theorem 1.

If the target density is a D-dimensional multivariate Gaussian, only particles are needed for Algorithm 2 to converge to the exact target parameters.

Proof.

The proof is given in Appendix E. □

Theorem 2.

For a target , i.e., with precision matrixΛ, where , and particles, the continuous time limit of Algorithm 2 will converge exponentially fast for both the mean and the trace of the precision matrix:

where and are the empirical mean and covariance matrix at time t and is the matrix exponential.

Proof.

The proof is given in Appendix F. □

Our result shows that convergence of the mean directly depends on . However, we can also precondition the gradient on m by , i.e., using the natural gradient approximation in the Fisher sense, and eventually get rid of the dependency on when .

The exponential relaxation of fluctuations also manifests itself in the decay of the free energy towards its minimum. For the Gaussian target, the free energy exactly separates into two terms corresponding to the mean and fluctuations. We can write , where the nontrivial fluctuation part (subtracted by its minimum) is given by

We can show that

indicating an asymptotic decrease in faster than , independent of the target. We can also prove the finite time bound

The degenerate case

Additionally, we can show the following result for the fixed points:

Theorem 3.

Given a D-dimensional multivariate Gaussian target density , using Algorithm 2 with particles, the empirical mean converges to the exact mean μ. The non-zero eigenvalues of converge to a subset of the target covariance Σ spectrum. Furthermore, the global minimumof the regularised version of the free energy (17) corresponds to the largest eigenvalues of Σ.

Proof.

The proof is given in Appendix G. □

This result suggests that might typically converge to an optimal low-rank approximation of . We show an empirical confirmation in Section 4.2 for this conjecture. This suggests that it makes sense to apply our algorithm to high-dimensional problems even when the number of particles is not large. If the target density has significant support close to a low-dimensional submanifold, we might still obtain a reasonable approximation.

3.5. Structured Mean-Field

For high-dimensional problems, it may be useful to restrict the variational Gaussian approximation to the posterior to a specific structure via a structured mean-field approximation. In this way, spurious dependencies between variables that are caused by finite-sample effects could be explicitly removed from the algorithms. This is most easily incorporated in our approach by splitting a given collection of latent variables x into M disjoint subsets . We reorder the vector indices in such a way that the first components correspond to , and so on. Hence, we obtain . A structured mean-field approach is enforced by imposing a block matrix structure for the update matrix , where ⊕ is the direct sum operator. It is easy to see that this construction corresponds to a related block structure of the matrix in Equation (3). This means that the subsets of the random vectors are modeled as independent. Hence, when the number of particles grows to infinity, one recovers the fixed-point equations for the optimal MF structured Gaussian variational approximation from our approach. As previously, as the number of particles grows to infinity, we recover the optimal MF Gaussian variational approximation. Note that using a structured MF does not change the complexity of the algorithm but requires fewer particles to obtain a full-rank solution.

3.6. Comparison with SVGD

Given the similarities with the SVGD methods [24], one could question the differences of our approach. The model proposed by [10] using a linear kernel has similar properties to our approach. The variable update becomes:

The fixed points are

where the last equality holds since . This is the same as our algorithm fixed points (9). Similarly to Theorem 1, particles will converge to the exact D-dimensional multivariate Gaussian target. However, the generated flows are different. The main difference is that we normalize our flow via the norm, whereas [10] rely on the reproducing kernel Hilbert space (RKHS) norm, i.e., where and . For a full introduction on RKHS, we recommend [34]. Remarkably, centering the particles on the mean, namely, using the modified linear kernel , leads to the same dynamics. Additionally, when using SVGD, there is no direct possibility of computing the current KL divergence between the variational distribution and the target, unless some values are accumulated [35]. There is also no clear theory explaining what happens when the number of particles is smaller than the number of dimensions, for both distance-based kernels and the linear kernel.

4. Experiments

We now evaluate the efficiency of GPF and GF. First, given a Gaussian target, we compare the convergence of our approach with popular VGA methods, which are all described in Section 2. Second, we evaluate the effect of varying the number of particles for both Gaussian targets and non-Gaussian targets, especially with a low-rank covariance. Then, we evaluate the efficiency of our algorithm on a range of real-world binary classification problems through a Bayesian logistic regression model and a series of BNN on the MNIST dataset.

All the Julia [36] code and data used to reproduce the experiments are available at the Github repository: https://github.com/theogf/ParticleFlow_Exp (accessed on 27 July 2021).

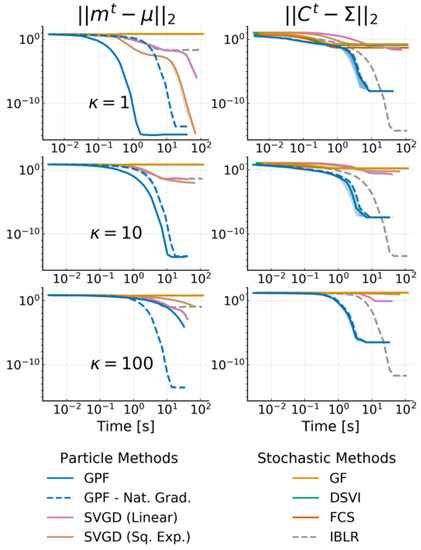

4.1. Multivariate Gaussian Targets

We consider a 20-dimensional multivariate Gaussian target distribution. The mean is sampled from a normal Gaussian and the covariance is a dense matrix defined as , where U is a unitary matrix and is a diagonal matrix. is constructed as where is the condition number, i.e., . This means that, for , we obtain a , and for , we obtain eigenvalues ranging uniformly from to 10 in log-space.

We compare GPF and GF to the state-of-the art methods for VGA described in Section 2, namely Doubly Stochastic VI (DSVI) [14], Factor Covariance Structure (FCS) [15] with rank , iBayes Learning Rule (IBLR) [17] with a full-rank covariance and their Hessian approach, and Stein Variational Gradient Descent with both a linear kernel (Linear SVGD) [10] and a squared-exponential kernel (Sq. Exp. SVGD) [24]. For all methods, we set the number of particles or, alternatively, the number of samples used by the estimator, as , and use standard gradient descent () with a learning rate of for all particle methods. We use RMSProp [37] with a learning rate of for all stochastic methods. We run each experiment 10 times with 30,000 iterations, and plot the average error on the mean and the covariance with one standard deviation. For GPF, we additionally evaluate the method with and without using natural gradients for the mean (i.e., pre-multiplying the averaged gradient with ), indicated, respectively, with a dashed and solid line. Figure 2 reports the norm of the difference between the mean and covariance with the true posterior over time for the target condition number .

Figure 2.

norm of the difference between the target mean (left side) and target covariance (right side) with the inferred variational parameters and against time for 20-dimensional Gaussian targets with condition number . We use particles/samples and show the mean over 10 runs as well as the 68% credible interval. Methods with dashed curves use natural gradients on the mean. Note that DSVI, GF and FCS are overlapping and are, at this scale, indistinguishable from one another.

As Theorem 1 predicts, GPF converges exactly to the true distribution, regardless of the target. GF and other methods based on stochastic estimators cannot obtain the same precision as their accuracy is penalized by the gradient noise. IBLR approximate the covariance perfectly, despite the stochasticity of its estimator; however IBLR needs to compute the true Hessian at each step. When using a Hessian approximation instead, IBLR performed just like DSVI; the true benefit of IBLR appears when second-order functions are computed, which is naturally intractable in high-dimensions. SVGD with a linear kernel, achieves a good performance but is highly unstable: most of the runs (ignored here) diverge. This is due to the dot computation which can become extremely high, especially for non-centered data. For this reason, we do not consider this method for the later experiments. SVGD with a sq. exp. kernel obtains a good estimate for the mean but fails to approximate the covariance.

Perhaps surprisingly, GF does not perform much better than DSVI or FCS. This is potentially due to the benefit of Riemannian gradients being canceled by the gradient noise [38] providing a strong argument for particle-based methods over stochastic estimators.

Remarkably, we also confirm Theorem 2, that the convergence speed of is independent of the target , while the convergence speed of has this dependency unless the natural gradient is used (see the dashed curves). The case highlights that natural gradient do not necessarily improve convergence speed.

4.2. Low-Rank Approximation for Full Gaussian Targets

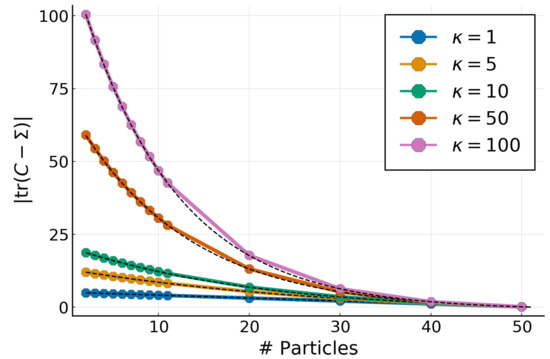

We explore the effect of the number of particles for both Gaussian and non-Gaussian targets. We use the same Gaussian target from the previous experiment in 50 dimensions with a full-rank covariance determined by their condition number . The covariance eigenvalues in log-space range uniformly from to . For a given target multivariate Gaussian, we vary the number of particles from 2 to and look at the absolute difference of . The results in , as well as the corresponding predictions (in dashed-black), from Theorem 3, are shown on Figure 3.

Figure 3.

Trace error for a Gaussian target with and condition numbers for a varying number of particles with GPF. Predictions from Theorem 3 are shown in dashed-black.

The empirical results perfectly match the theoretical predictions, confirming that, for Gaussian targets, the particles determine a low-rank approximation whose spectrum is equal to the largest eigenvalues from the target.

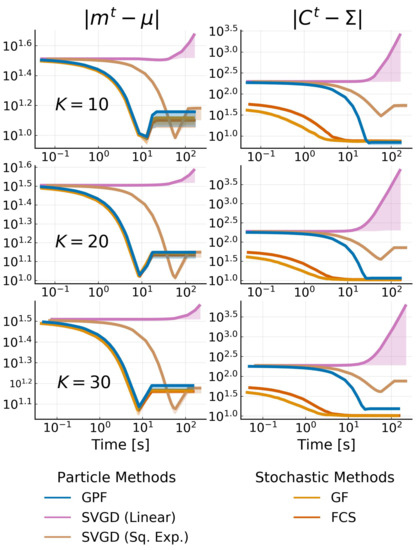

4.3. High-Dimensional Low-Rank Gaussian Targets

We consider a typical low-rank target case where the dimensionality is high but the effective rank of the covariance is unknown. The target is given by where , the covariance is defined by , where U is a unitary matrix and is a diagonal matrix defined by

where K is the effective rank of the target. We pick and vary to simulate a true problem where the correct K is not known. We test all methods allowing for low-rank structure, namely, GPF, GF, FCS and SVGD (Linear and Sq. Exp.). We fix the rank (or the number of particles) to be 20; therefore, we obtain three cases where the rank is exact, under-estimated, and over-estimated. For all methods, we use RMSProp [37] for the stochastic methods, or a diagonal version of it (see Section 4.4) for the particle ones. The error of the mean and the covariance is shown in Figure 4. Note that the difference in the initial error on the covariance is due to the difficulty of starting with the same covariance between particle and stochastic methods.

Figure 4.

Convergence plot of low-rank methods for a 500-dimensional multivariate Gaussian target with effective rank . The rank of each method is fixed as 20. The difference in the starting point for the covariance is due to the initialization difference between each method. We show the mean over 10 runs for each method with shadowed areas representing the 68% credible interval.

We observe once again that the SVGD with a linear kernel fails to converge due to the large gradients. All methods perform equally in the estimation of the mean while being non-influenced by the rank of the target. As expected, the approximation quality for the covariance degrades when the rank gets bigger, but all algorithms still converge to good approximations. SVGD with a sq. exp. kernel performs much worse than the rest of the methods. This is a known phenomenon where, for high dimensions, the covariance SVGD is either over- or underestimated.

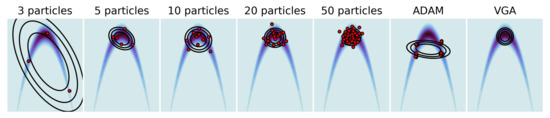

4.4. Non-Gaussian Target

We now investigate the behavior of our algorithm with non-Gaussian target distributions. We built a two-dimensional banana distribution: , varied the number of particles used for GPF in and compared it with a standard full-rank VGA approach. We also showed the impact of replacing a fixed with the Adam [39] optimizer for 50 particles. The results are shown in Figure 5. As expected, increasing the number of particles madesthe distribution obtained via GPF increasingly closer to the optimal standard VGA, even in a non-Gaussian setting. However, using a momentum-based optimizer such as Adam breaks the linearity assumption of the original flow (5) and leads to a twisted representation of the particles. (We observed the same behavior with other momentum-based optimizers). A simple modification of the most known optimizers allows the linearity to be maintained while correctly adapting the learning rate to the shape of the problem. Most optimisers accumulate momentum or gradients element-wise, and end up modifying the updates as , where is the preconditioner obtained via the optimiser and ⊙ is the Hadamard product. By instead taking the average over each dimensions, we obtained the updates , where is a diagonal matrix. The details of the dimension-wise conditioners for ADAM, AdaGrad and AdaDelta are given in Appendix H.

Figure 5.

Two-dimensional Banana distribution. Comparison of GPF using an increasing number of particles and a different optimizer (ADAM) with the standard VGA (rightmost plot).

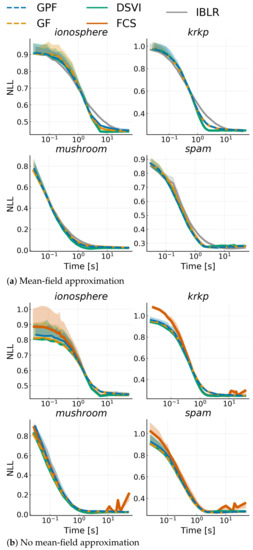

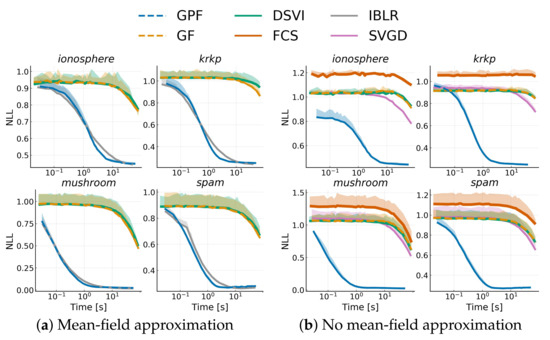

4.5. Bayesian Logistic Regression

Finally, we considered a range of real-world binary classification problems modeled with a Bayesian logistic regression. Given some data where and , we defined the model with weight , and with being the logistic function. We set a prior on w: . We benchmarked the competing approaches over four datasets from the UCI repository [40]: spam (), krkp (), ionosphere () and mushroom (). We ran all algorithms discussed in Section 4.1, both with and without a mean-field approximation; SVGD was omitted since it is too unstable. All algorithms were run with a fixed learning rate , and we used mini-batches of size 100. We show alternative training settings in Appendix I. Note that FCS, for mean-field, simplifies to DSVI Additionally, we did not consider full-rank IBLR, as it is too expensive, and we used their reparametrized gradient version for the Hessian. Figure 6 shows the average negative log-likelihood on 10-fold cross-validation with one standard deviation for each dataset. While, as expected, the advantages shown for Gaussian targets do not transfer to non-Gaussian targets, GPF and GF are consistently on par with competitors. On the other hand, IBLR tends to be outperformed. It is also interesting to note that mean-field does not seem to have a negative impact on these problems, and performance remains the same even with a full-rank matrix.

Figure 6.

Average negative log-likelihood vs. time on a test-set over 10 runs against training time for a Bayesian logistic regression model applied to different datasets. Top plots use a mean-field approximation, while bottom plots use a low-rank structure for the covariance with rank .

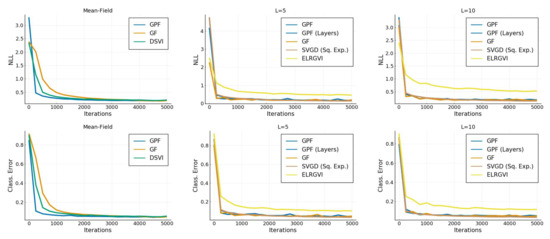

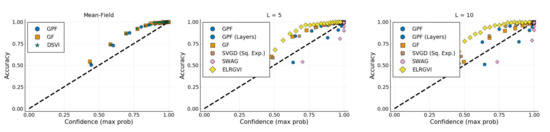

4.6. Bayesian Neural Network

We ran our algorithm on a standard network with two hidden layers each, with neurons and tanh activation functions (we additionally tried ReLU [41], but some baselines failed to converge). We trained on the MNIST dataset [42] ( 60,000, ) and used an isotropic prior on the weights with . We additionally compared these with Stochastic Weight Averaging-Gaussian (SWAG) [27] with an SGD learning rate of (selected empirically) and Efficient Low-Rank Gaussian Variational Inference (ELRGVI) [26]. We varied the assumptions on the covariance matrix to be diagonal (Mean-Field), or to have rank . Additionally, we showed, for GPF, the effect of using a structured mean-field assumption by imposing the independence of the weights between each layer (GPF (Layers)).

We trained each algorithm for 5000 iterations with a batchsize of 128(∼10 epochs) and reported the final average negative log-likelihood, accuracy and expected calibration error [43] on the test set ( 10,000) on Table 1. The predictive distribution is given by

where is the training data, and is a test sample. We computed the accuracy and the average negative test log-likelihood as:

where is the indicator function (equal to 1 for , 0 otherwise). For the definition of expected calibrated error, we refer the reader to [43]. Additional convergence and uncertainty calibration plots can be found in Appendix I.

Table 1.

Negative Log-Likelihood (NLL), Accuracy (Acc), and Expected Calibration Error (ECE) for a Bayesian Neural Networks (BNN) on the MNIST dataset. We varied the rank of the variational covariance from mean-field (all variables are independent) to a low-rank structure with . Bold numbers indicated the best performance, and italic bold numbers indicate the best performance when restricted to VGA methods. Convergence and calibration plots can be found in Appendix I.

Overall, the SVGD method performed best in terms of both accuracy and negative log-likelihood. However, SVGD is not in the same category as others, since it is not a VGA. For VGAs, we observed that a low-rank approximation improves upon mean-field methods. In particular, assuming independence between layers provides a large advantage to GPF. GPF and GF generally perform equally or better than all the other VGA methods. Note that, although not reported here, all methods needed approximately the same time for the 5000 iterations, except for SWAG, which only needed the MAP and a few thousand iterations of SGD afterward, making it generally faster but also less controlled (a grid search was needed to find the appropriate learning for SGD).

5. Discussion

We introduced GPF, a general-purpose and theoretically grounded, particle-based approach, to perform inference with variational Gaussians as well as GF its parameter version. We were able to show the convergence of the particle algorithm based on an empirical approximation of the free energy. We also showed that we can approximate high-dimensional targets by allowing for low-rank approximations with a small number of particles. The results for Gaussian targets suggest that the convergence of posterior covariance approximation may relax asymptotically fast, with small dependence on the target. This work is the first step in analyzing convergence speed and guarantees in inference with variational Gaussians, and future work could extend guarantees to non-Gaussian problems. One could also take advantage of existing particle-based VI methods to accelerate inference further or reach a better optima [44,45].

Author Contributions

Conceptualization, T.G.-F. and M.O.; methodology, T.G.-F., V.P. and M.O.; software, T.G.-F.; validation, T.G.-F.; formal analysis, T.G.-F.; investigation, T.G.-F.; resources, T.G.-F. and V.P.; data curation, T.G.-F.; writing—original draft preparation, T.G.-F., V.P. and M.O.; writing—review and editing, T.G.-F., V.P. and M.O.; visualization, T.G.-F.; supervision, M.O.; project administration, T.G.-F.; funding acquisition, M.O. All authors have read and agreed to the published version of the manuscript.

Funding

We acknowledge the support of the German Research Foundation and the Open Access Publication Fund of TU Berlin.

Data Availability Statement

Datasets can be found on the UCI dataset website [40] and the MNIST dataset can be found on Yann Lecun website [42].

Acknowledgments

We thank Fela Winkelmolen for his initial help on computations, Jannik Thümmel for his work on the linear SVGD and the reviewers for their insightful comments.

Conflicts of Interest

The authors declare no conflict of interest.

Appendix A. Derivation of the Optimal Parameters

In Section 3, we considered the optimization problem:

where we have introduced , the Froebius norm and , the norm and

To solve this problem, we used the Lagrange multiplier method. We write the Lagrangian as:

where and . For simplicity we can divide the problem as:

For , we have the constraints:

Computing the gradients is straightforward:

which gives us the result . Similarly for :

Replacing the gradients gives:

which gives us the result .

Appendix B. Relaxation of the Empirical Free Energy

We prove the decrease in the empirical free energy (17) under the particle flow when the covariance C is nonsingular. We define the empirical distribution with a finite number N of particles. The empirical free energy is defined as

We are interested in the temporal change of the free energy, when particles move under a general linear dynamics

The induced dynamics for are:

For notational simplicity, we introduce and (similarly ).

where we used the permutation properties of the trace.

Plugging the dynamics into Equation (A2), we obtain:

where we used the fact that .

We next look for conditions on b and A, under which , i.e., the dynamics will lead to a decrease in the free energy. We pick , where , and we obtain, for the first term in (A3):

For A, let us first define and rewrite the second and last term of the Equation (A3) as:

Combining both, we get . Similarly to the previous step, we pick , where , which leads to another negative term:

where we use the fact that is a positive semi-definite matrix for any real valued X.

Note that different forms of A (e.g., are replaced by a positive definite matrix) could be used, as long as the trace of the product stays positive. Inserting b and A, the free energy dynamics become

The variable dynamics are given by

which is equivalent to Equation (5), for . Our result shows that the empirical approximation of the free energy decreases under the particle flow.

Appendix C. Riemannian Gradient for Matrix Parameter Γ

The parameter flow for the matrix in (11) is given by

This is easily rewritten in terms of the parameter gradient as

Similar to natural gradients, which are defined by the metric, which is induced by the Fisher–matrix, we can rewrite the parameter change in terms of a different Riemannian gradient. This gradient is the direction of change , which yields the steepest descent of the free energy over a small time interval . As an extra condition, one keeps the length of (measured by a ’natural’ metric, which has specific invariance properties) fixed. This is defined by an inner product (the squared length) in the tangent space of small deviations from the matrix . Hence, is found by minimising (for small ) under the condition that is fixed. Following [20] (Theorem 6), a natural metric in the space of symmetric nonsingular matrices can be defined as

This metric is invariant against multiplications of and by matrices Y, i.e., and reduces to the Euclidian metric at the unit matrix .

The direction of the natural gradient is obtained by expanding the free energy for small and introducing a Lagrange–multiplier for the constraint. One ends up with the quadratic form

to be minimised by . By taking the derivative with respect to , one finds that the direction of agrees with the right equation of the flow (11).

Appendix D. Regularised Free Energy for N ≤ D

The problem of defining an empirical approximation for particles is that the empirical covariance becomes singular and typically has nonzero eigenvalues, and thus . Note that the extra 0 eigenvalue is derived from the fact that the empirical sum of fluctuations must be zero, which provides an additional linear constraint.

We can regularise the log determinant term by replacing the zero eigenvalues of C: . The new covariance becomes

since . The dynamics of the particles stays the same. To rewrite this formally in terms of matrices, we define

where

and ith eigenvector of C. This replaces all 0 eigenvalues by 1. is a projector: and . We also have . In the following, it is useful to introduce the matrix of fluctuations Z, such that . The column vectors of Z span the subspace of eigenvectors with . Hence, it follows that .

We want to show that the regularised free energy decreases under the particle dynamics for . Since the part of the time derivative of that depends on is not changed, we will only discuss the fluctuation part in the following.

It is useful to introduce the matrix:

with is the matrix of the gradient.

To obtain this result, we need

We need to work out

where we have used the fact that the eigenvalues of have a zero time derivative and can be omitted. We use the linear dynamics to obtain:

where we have used and . Hence

Finally, the temporal change in the free energy due to the fluctuations is given by

Note that this proof is not only valid for , but also for , as the overall computations are simplified with . A more detailed proof for is, furthermore, given in Appendix B.

Efficient Computation of

A practical way to compute without performing an eigenvector expansion is to define the matrix

where is the all-ones matrix. shares the nonzero eigenvalues with C and has an additional eigenvalue 0 corresponding to the constant eigenvector . Adding an all-ones matrix preserves all existing eigenvalues while replacing the 0 one with a constant. This leads to the following result:

Appendix E. Proof of Theorem 1: Fixed Points for a Gaussian Model (N > d)

Theorem A1

(1). If the target density is a D-dimensional multivariate Gaussian, only particles are needed for Algorithm 2 to converge to the exact target parameters.

The general fixed-point condition for the dynamics (13) of the position for particle i is given by:

for . By taking the expectation over all particles, we obtain:

where is the empirical distributions of particles at the the fixed point. Note that this result is independent of N, i.e., it is also valid for .

For a D-dimensional Gaussian target , we will show that empirical mean and covariance given by the particle algorithm converge to the true mean and covariance matrix of the Gaussian when we use particles. In this setting, we have . For simplification, we use the precision matrix and get

The gradient becomes:

At the fixed points, we have that and are equal to 0. For the mean m:

For the matrix , we have

where we use the result for the mean and right multiplied by as . Now, we can only simplify, as if C is not singular. This is true only if its rank is equal to D, needing particles.

Appendix F. Proof of Theorem 2: Rates of Convergence for Gaussian Targets

Theorem A2

(2). For a target , where , and particles, the continuous time limit of Algorithm 2 will converge exponentially fast for both the mean and the trace of the precision matrix:

where and are the empirical mean and covariance matrix at time t and is the matrix exponential.

In the following, we assume the target We use the notation and .

Appendix F.1. Convergence of the Mean

Given our target , similarly to Appendix E we have , where and . This transform the first of Equations (11) into

If now consider the error on m: we obtain:

Therefore, the mean converges exponentially fast to the true mean. The asymptotic rate is governed by the largest eigenvalue of , i.e., the inverse of the smallest eigenvalue of , .

Appendix F.2. Convergence of the Covariance Matrix

Let , we have from Equation (5), that

where . This expectation can further be simplified as

where . Hence, we have the exact result

We know that the optimal target is . Therefore, we define the error . Linearizing Equation (A6) gives us

We were not yet able to find a general solution of this equation, but we can obtain a simple result for the trace at time t:

We, therefore, have a asymptotic linear convergence: which is independent of the parameters of the Gaussian model.

We can also equivalently obtain a non-asymptotic estimate of a specific error measure for the precision matrix. Using equation (A6), we have the following dynamics for the precision :

Taking the trace

Hence we get the following exact result:

which is again independent of the parameters of the Gaussian model.

Additionally, this tells us that if the covariance C is non-singular at time , it will remain non-singular for all t ( would be infinite). Hence, if we start with particles with a proper empirical covariance, they cannot collapse to make C singular.

Appendix F.3. Convergence of the Trace of the Covariance

The asymptotic result on traces obtained previously can be turned into an exact inequality. We have

Taking the trace, we get

Since is positive definite, we have and thus

leading to:

by using by Grönwall’s lemma [46]:

Lemma A1

(Grönwall). For an interval and a given function f differentiable everywhere in and satisfying:

then f is bounded by the corresponding differential equation :

The bound is nontrivial only if . This would be natural assumption for a Bayesian model, if is the prior covariance and the eigenvalues of at (corresponding to the posterior) are reduced by the data.

Appendix F.4. Decay of Fluctuation Part of the Free Energy

Still focusing on the Gaussian model, we can further derive a bound on the free energy. It is easy to see that for the Gaussian case, the free energy in Equation (4) separates into a sum of two terms. The first one depends on the mean only and the second one on only the fluctuations (i.e., ).

We will consider the second, nontrivial part only. We assume that the covariance matrix is nonsingular (corresponding to ). The fluctuation part of the free energy (minus its minimum) is given by

where we have introduced the matrix . One can show that its eigenvalues are real and are upper bounded by 1. First, we can show from the equations of motion that

Second, using the elementary bound valid for and applied to the eigenvalues of B yields

The last two equalities used the definition . Since and are both positive definite, we can bound the last term by (see ([47], Theorem 6.5))

where, in the last line, we have bounded the trace of a product of p.d. matrices a second time.

Combining with Equation (A7) we show that

We can plug in our result from Theorem 2:

We can plug this in and use Grönwall’s Lemma A1 to get an exponential bound

Appendix F.5. Asymptotic Decay of the Free Energy:

For large times t, we can do better. Let us analyse the asymptotic decay constant defined by

In the last inequality, we used . Everything is expressed by traces of functions of B, and thus by its eigenvalues. Since as (this applies also to its eigenvalues u), we can use Taylor’s expansion to show that

which is independent of .

Appendix G. Proof of Theorem 3: Fixed-Points for Gaussian Model (N ≤ D)

Theorem A3

(3). Given a D-dimensional multivariate Gaussian target density , using Algorithm 2 with particles, the empirical mean converges to the exact mean μ. The non-zero eigenvalues of converge to a subset of the target covariance Σ spectrum. Furthermore, theglobal minimumof the regularised version of the free energy (17) corresponds to thelargesteigenvalues of Σ.

Applying Equation (A4) to our fixed point equation, we obtain

Hence, the set of centered positions of the particles , are all eigenvectors of the matrix with eigenvalue 1. S spans a dimensional space (we have ).

If we specialise to a Gaussian target , (and we have and can reuse the result from Equation (A5):

Using the equality above, we get:

which shows that the obtained low-rank covariance C and the target covariance have eigenvectors and eigenvalues in common.

However, are these the largest ones? We look at the modified free energy (17) (ignoring the contribution of the mean):

where are the eigenvalues of the empirical covariance C. We first note that , independent of which eigenvalues are obtained at the fixed point. This is easily seen by the following argument: If we use the index–set for the common eigenvectors and eigenvalues , , we can write

From this we obtain

From this result we obtain

The term is a constant, but the first term makes a difference: The absolute minimum of is achieved, when the are largest eigenvalues of . Our simulations empirically show that the algorithm usually converges to the absolute minimum.

Appendix H. Dimension-Wise Optimizers

Here, we list some of the most populars optimizers used and their dimension-wise versions. In all algorithms, we consider the matrix created by the concatenation of the flow of each particle: , where We additionally use the notation for the i-th dimension of the flow of the n-th particle. The main differences between the original algorithms and their modified version were put in red.

Appendix H.1. ADAM

The ADAM algorithm is given by:

| Algorithm A1: ADAM |

| Input: Output: |

| Algorithm A2: Dimension-wise ADAM |

| Input: Output: ; ; |

Appendix H.2. AdaGrad

The AdaGrad algorithm is given by:

| Algorithm A3: AdaGrad |

| Input: Output: |

| Algorithm A4: Dimension-wise AdaGrad |

| Input: Output: ; |

Appendix H.3. RMSProp

The RMSProp algorithm is given by:

| Algorithm A5: RMSProp |

| Input: Output: |

| Algorithm A6: Dimension-wise RMSProp |

| Input: Output: |

Appendix I. Additional Figures

Appendix I.1. Bayesian Logistic Regression

Similarly to the previous section, we also show results with the RMSProp optimizer with learning rate .

Figure A1.

Similarly to Figure 6, we show the average negative log-likelihood on a test-set over 10 runs against training time on different datasets for a Bayesian logistic regression problem. The dashed curve represents the low-rank approximation with RMSProp for methods based on stochastic estimators.

Appendix I.2. Bayesian Neural Network

Figure A2.

Convergence of the classification error and average negative log-likelihood as a function of time.

Figure A3.

Accuracy vs confidence. Every test sample is clustered in function of its highest predictive probability. The accuracy of this cluster is then computed. A perfectly calibrated estimator would return the identity.

References

- Shahriari, B.; Swersky, K.; Wang, Z.; Adams, R.P.; de Freitas, N. Taking the human out of the loop: A review of Bayesian optimization. Proc. IEEE 2016, 104, 148–175. [Google Scholar] [CrossRef] [Green Version]

- Settles, B. Active Learning Literature Survey; Computer Sciences Technical Report 1648; University of Wisconsin–Madison: Madison, WI, USA, 2009. [Google Scholar]

- Sutton, R.S.; Barto, A.G. Reinforcement Learning: An Introduction; The MIT Press: Cambridge, MA, USA, 2018. [Google Scholar]

- Bardenet, R.; Doucet, A.; Holmes, C. On Markov chain Monte Carlo methods for tall data. J. Mach. Learn. Res. 2017, 18, 1515–1557. [Google Scholar]

- Cowles, M.K.; Carlin, B.P. Markov chain Monte Carlo convergence diagnostics: A comparative review. J. Am. Stat. Assoc. 1996, 91, 883–904. [Google Scholar] [CrossRef]

- Barber, D.; Bishop, C.M. Ensemble learning for multi-layer networks. In Advances in Neural Information Processing Systems; MIT Press: Cambridge, MA, USA, 1998; pp. 395–401. [Google Scholar]

- Graves, A. Practical Variational Inference for Neural Networks. In Proceedings of the 24th International Conference on Neural Information Processing Systems, Granada, Spain, 12–15 December 2011; Volume 24, pp. 2348–2356. [Google Scholar]

- Ranganath, R.; Gerrish, S.; Blei, D. Black box variational inference. In Proceedings of the Seventeenth International Conference on Artificial Intelligence and Statistics, Reykjavik, Iceland, 22–25 April 2014; pp. 814–822. [Google Scholar]

- Liu, Q.; Lee, J.; Jordan, M. A kernelized Stein discrepancy for goodness-of-fit tests. In Proceedings of the 33rd International Conference on Machine Learning, New York, NY, USA, 20–22 June 2016; pp. 276–284. [Google Scholar]

- Liu, Q.; Wang, D. Stein variational gradient descent as moment matching. In Proceedings of the 32nd International Conference on Neural Information Processing Systems, Montreal, QC, Canada, 3–8 December 2018; Volume 32, pp. 8868–8877. [Google Scholar]

- Zhuo, J.; Liu, C.; Shi, J.; Zhu, J.; Chen, N.; Zhang, B. Message Passing Stein Variational Gradient Descent. In Proceedings of the 35th International Conference on Machine Learning, Stockholm, Sweden, 10–15 July 2018; Volume 80, pp. 6018–6027. [Google Scholar]

- Opper, M.; Archambeau, C. The variational Gaussian approximation revisited. Neural Comput. 2009, 21, 786–792. [Google Scholar] [CrossRef] [PubMed]

- Challis, E.; Barber, D. Gaussian kullback-leibler approximate inference. J. Mach. Learn. Res. 2013, 14, 2239–2286. [Google Scholar]

- Titsias, M.; Lázaro-Gredilla, M. Doubly stochastic variational Bayes for non-conjugate inference. In Proceedings of the 31st International Conference on Machine Learning, Beijing, China, 21–26 June 2014; pp. 1971–1979. [Google Scholar]

- Ong, V.M.H.; Nott, D.J.; Smith, M.S. Gaussian variational approximation with a factor covariance structure. J. Comput. Graph. Stat. 2018, 27, 465–478. [Google Scholar] [CrossRef] [Green Version]

- Tan, L.S.; Nott, D.J. Gaussian variational approximation with sparse precision matrices. Stat. Comput. 2018, 28, 259–275. [Google Scholar] [CrossRef] [Green Version]

- Lin, W.; Schmidt, M.; Khan, M.E. Handling the Positive-Definite Constraint in the Bayesian Learning Rule. In Proceedings of the 37th International Conference on Machine Learning, Virtual. 13–18 July 2020; Volume 119, pp. 6116–6126. [Google Scholar]

- Hinton, G.E.; van Camp, D. Keeping the Neural Networks Simple by Minimizing the Description Length of the Weights. In Proceedings of the Sixth Annual Conference on Computational Learning Theory, Santa Cruz, CA, USA, 26–28 July 1993; COLT ’93;. Association for Computing Machinery: New York, NY, USA, 1993; pp. 5–13. [Google Scholar]

- Blei, D.M.; Kucukelbir, A.; McAuliffe, J.D. Variational inference: A review for statisticians. J. Am. Stat. Assoc. 2017, 112, 859–877. [Google Scholar] [CrossRef] [Green Version]

- Amari, S.I. Natural Gradient Works Efficiently in Learning. Neural Comput. 1998, 10, 251–276. [Google Scholar] [CrossRef]

- Khan, M.E.; Nielsen, D. Fast yet simple natural-gradient descent for variational inference in complex models. In Proceedings of the International Symposium on Information Theory and Its Applications (ISITA), Singapore, 28–31 October 2018; pp. 31–35. [Google Scholar]

- Lin, W.; Khan, M.E.; Schmidt, M. Fast and simple natural-gradient variational inference with mixture of exponential-family approximations. In Proceedings of the 36th International Conference on Machine Learning, Long Beach, CA, USA, 9–15 June 2019; pp. 3992–4002. [Google Scholar]

- Salimbeni, H.; Eleftheriadis, S.; Hensman, J. Natural Gradients in Practice: Non-Conjugate Variational Inference in Gaussian Process Models. In Proceedings of the Twenty-First International Conference on Artificial Intelligence and Statistics, Lanzarote, Canary Islands, 9–11 April 2018; pp. 689–697. [Google Scholar]

- Liu, Q.; Wang, D. Stein variational gradient descent: A general purpose bayesian inference algorithm. arXiv 2016, arXiv:1608.04471. [Google Scholar]

- Ba, J.; Erdogdu, M.A.; Ghassemi, M.; Suzuki, T.; Sun, S.; Wu, D.; Zhang, T. Towards Characterizing the High-dimensional Bias of Kernel-based Particle Inference Algorithms. In Proceedings of the 2nd Symposium on Advances in Approximate Bayesian Inference, Vancouver, BC, Canada, 8 December 2019. [Google Scholar]

- Tomczak, M.; Swaroop, S.; Turner, R. Efficient Low Rank Gaussian Variational Inference for Neural Networks. In Proceedings of the Advances in Neural Information Processing Systems, Virtual. 6–12 December 2020; Volume 33. [Google Scholar]

- Maddox, W.J.; Izmailov, P.; Garipov, T.; Vetrov, D.P.; Wilson, A.G. A simple baseline for bayesian uncertainty in deep learning. In Proceedings of the Advances in Neural Information Processing Systems, Vancouver, BC, Canada, 8–14 December 2019; pp. 13153–13164. [Google Scholar]

- Evensen, G. Sequential data assimilation with a nonlinear quasi-geostrophic model using Monte Carlo methods to forecast error statistics. J. Geophys. Res. Oceans 1994, 99, 10143–10162. [Google Scholar] [CrossRef]

- Rezende, D.; Mohamed, S. Variational inference with normalizing flows. In Proceedings of the 32nd International Conference on Machine Learning, Lille, France, 7–9 July 2015; pp. 1530–1538. [Google Scholar]

- Chen, R.T.; Rubanova, Y.; Bettencourt, J.; Duvenaud, D. Neural ordinary differential equations. In Proceedings of the 32nd International Conference on Neural Information Processing, Montréal, QC, Canada, 3–8 December 2018; pp. 6572–6583. [Google Scholar]

- Ingersoll, J.E. Theory of Financial Decision Making; Rowman & Littlefield: Lanham, MD, USA, 1987; Volume 3. [Google Scholar]

- Barfoot, T.D.; Forbes, J.R.; Yoon, D.J. Exactly sparse gaussian variational inference with application to derivative-free batch nonlinear state estimation. Int. J. Robot. Res. 2020, 39, 1473–1502. [Google Scholar] [CrossRef]

- Korba, A.; Salim, A.; Arbel, M.; Luise, G.; Gretton, A. A Non-Asymptotic Analysis for Stein Variational Gradient Descent. In Proceedings of the 32nd International Conference on Neural Information Processing, Virtual, 6–12 December 2020; Volume 33. pp. 4672–4682.

- Berlinet, A.; Thomas-Agnan, C. Reproducing Kernel Hilbert Spaces in Probability and Statistics; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2011. [Google Scholar]

- Zaki, N.; Galy-Fajou, T.; Opper, M. Evidence Estimation by Kullback-Leibler Integration for Flow-Based Methods. In Proceedings of the Third Symposium on Advances in Approximate Bayesian Inference, Virtual Event. January–February 2021. [Google Scholar]

- Bezanson, J.; Edelman, A.; Karpinski, S.; Shah, V.B. Julia: A fresh approach to numerical computing. SIAM Rev. 2017, 59, 65–98. [Google Scholar] [CrossRef] [Green Version]

- Tieleman, T.; Hinton, G. Lecture 6.5-rmsprop, Coursera: Neural Networks for Machine Learning; Technical Report; University of Toronto: Toronto, ON, USA, 2012. [Google Scholar]

- Zhang, G.; Li, L.; Nado, Z.; Martens, J.; Sachdeva, S.; Dahl, G.; Shallue, C.; Grosse, R.B. Which Algorithmic Choices Matter at Which Batch Sizes? Insights From a Noisy Quadratic Model. In Advances in Neural Information Processing Systems; Wallach, H., Larochelle, H., Beygelzimer, A., d’Alché-Buc, F., Fox, E., Garnett, R., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2019; Volume 32, pp. 8196–8207. [Google Scholar]

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980. [Google Scholar]

- Dua, D.; Graff, C. UCI Machine Learning Repository. 2017. Available online: https://archive.ics.uci.edu/ml/datasets.php (accessed on 28 July 2021).

- Agarap, A.F. Deep learning using rectified linear units (relu). arXiv 2018, arXiv:1803.08375. [Google Scholar]

- LeCun, Y. The MNIST Database of Handwritten Digits. Available online: http://yann.lecun.com/exdb/mnist/ (accessed on 20 July 2021).

- Guo, C.; Pleiss, G.; Sun, Y.; Weinberger, K.Q. On calibration of modern neural networks. In Proceedings of the 34th International Conference on Machine Learning, Sydney, Australia, 6–11 August 2017; pp. 1321–1330. [Google Scholar]

- Liu, C.; Zhuo, J.; Cheng, P.; Zhang, R.; Zhu, J. Understanding and accelerating particle-based variational inference. In Proceedings of the 36th International Conference on Machine Learning, Long Beach, CA, USA, 9–15 June 2019; pp. 4082–4092. [Google Scholar]

- Zhu, M.H.; Liu, C.; Zhu, J. Variance Reduction and Quasi-Newton for Particle-Based Variational Inference. In Proceedings of the 37th International Conference on Machine Learning, Virtual. 13–18 July 2020. [Google Scholar]

- Gronwall, T.H. Note on the derivatives with respect to a parameter of the solutions of a system of differential equations. Ann. Math. 1919, 20, 292–296. [Google Scholar] [CrossRef]

- Zhang, F. Matrix Theory: Basic Results and Techniques; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2011. [Google Scholar]

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).