Deep Learning for Walking Behaviour Detection in Elderly People Using Smart Footwear

Abstract

1. Introduction

1.1. Context

1.2. Related Work

1.3. Limitations of Existing Practices

1.4. Proposed Solution

2. Materials and Methods

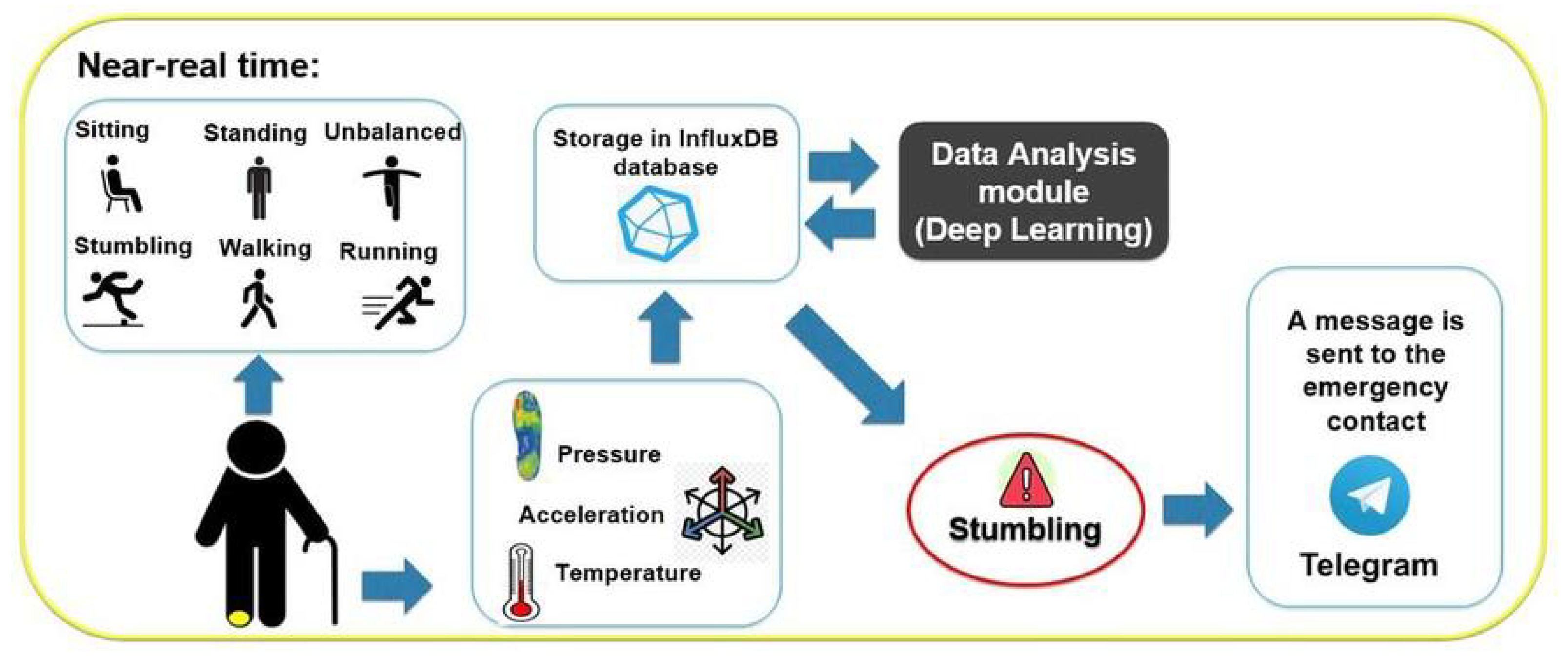

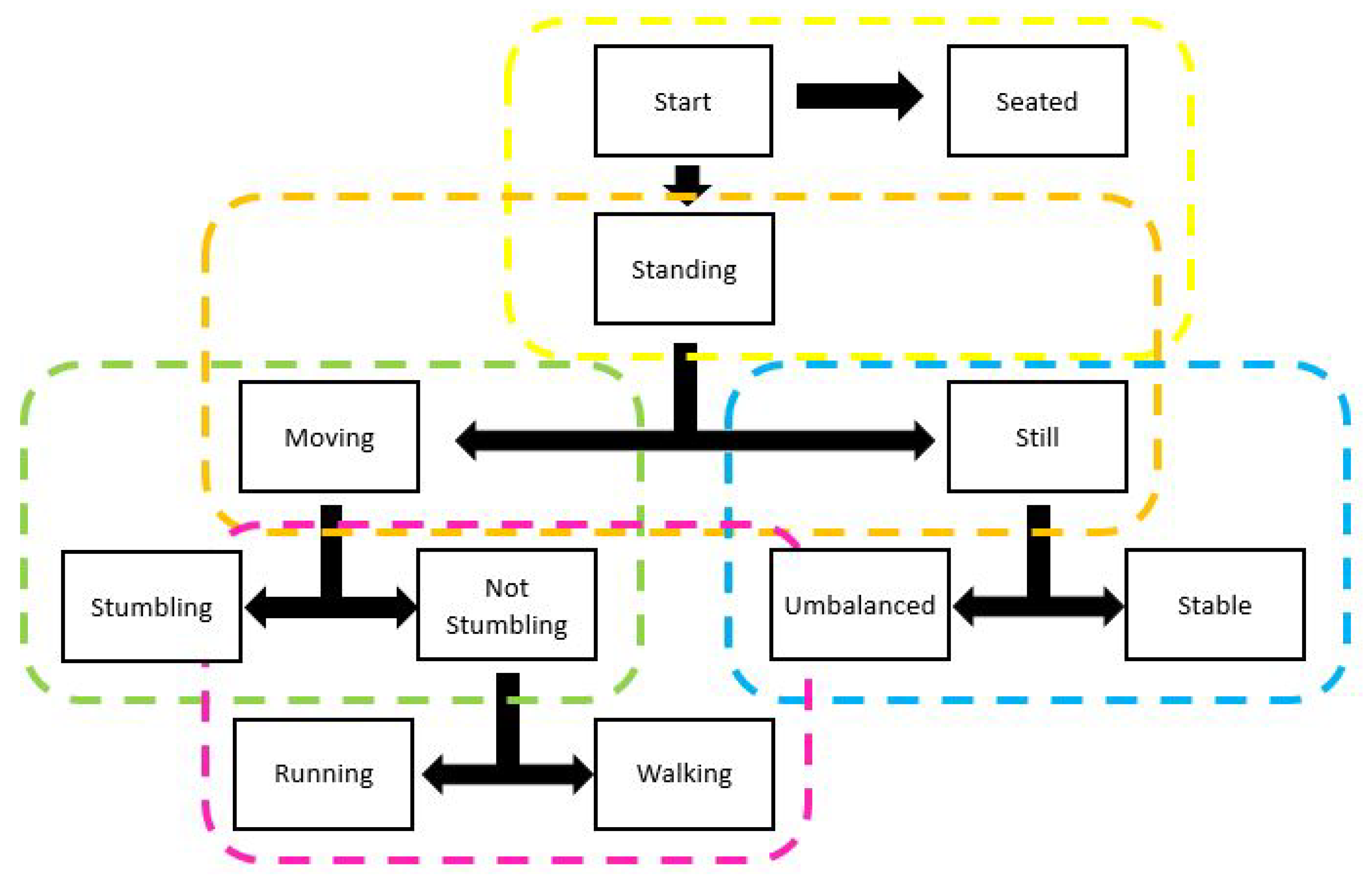

2.1. System Architecture

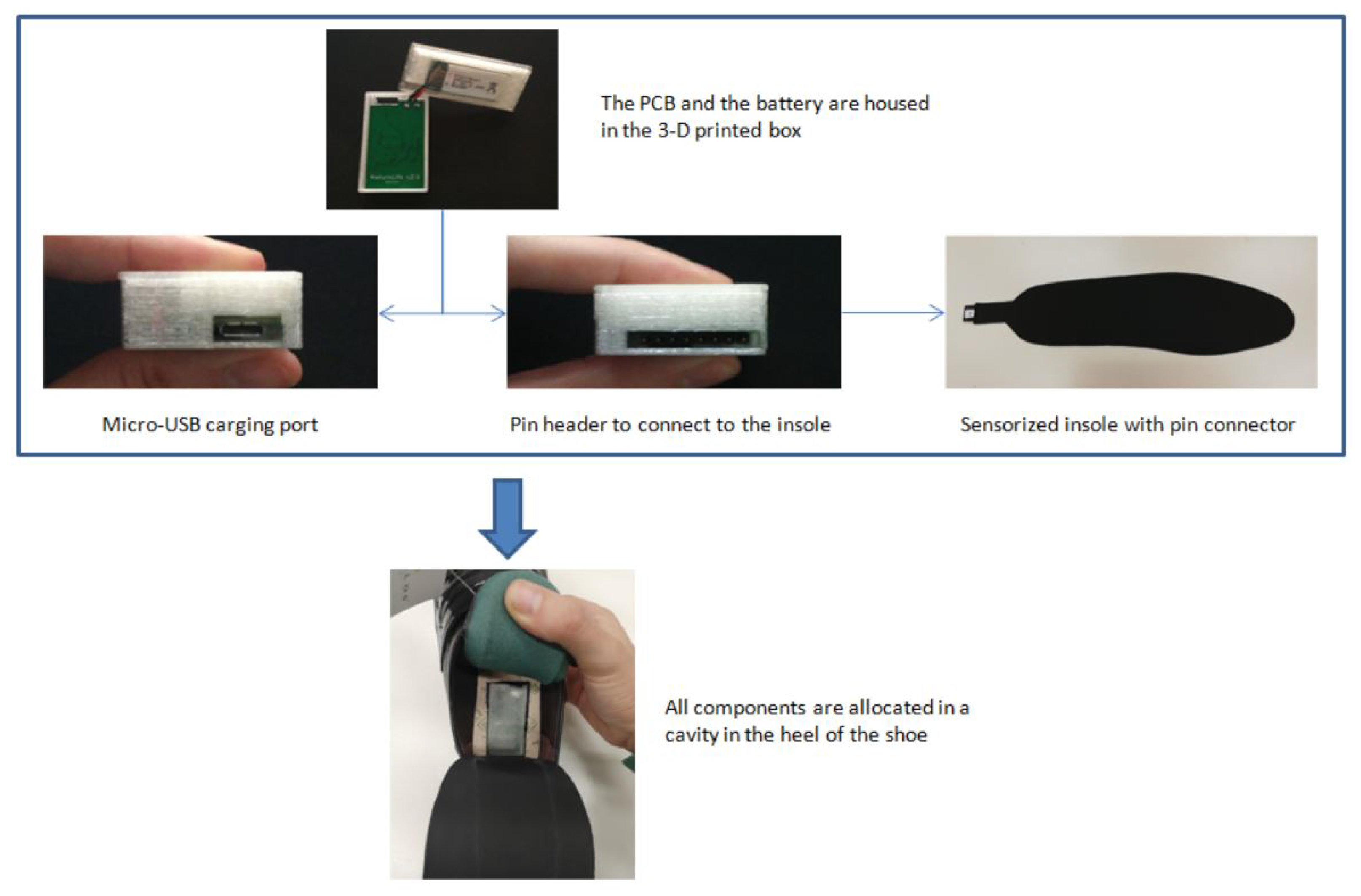

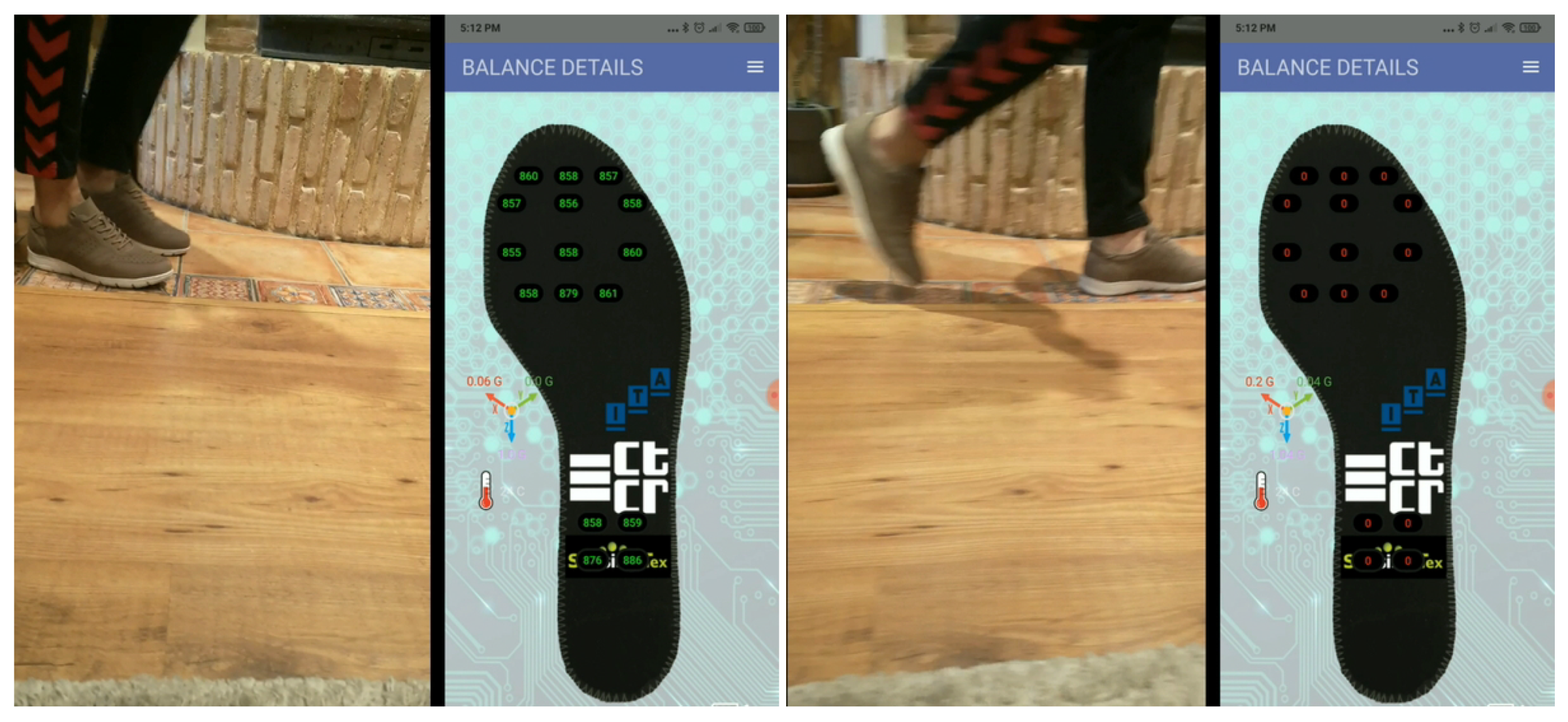

2.2. Smart Footwear Design

2.3. Experimental Setup. Data Collection

2.3.1. Events

- Sitting: sitting on a chair. May also include movements of the feet or the crossing of the legs.

- Standing still without imbalance: standing without moving forwards, backwards or sideways. May also include small foot taps.

- Standing with imbalance: includes lateral, frontal and random imbalances.

- Walking: includes a range of walking speeds, from slow to normal.

- Running: running at a faster gait than walking.

- Stumbling: stumbling with the right foot. Includes both more violent and softer stumbles.

2.3.2. Data Collection

2.4. Data Analysis Module

Data Preprocessing

2.5. Model Architecture

Artificial Neural Networks

3. Results

4. Discussion and Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Acknowledgments

- Coventry University (https://www.coventry.ac.uk/, accessed on 10 May 2021) for leading the Maturolife project and for applying state-of-the-art technologies for Selective Catalysation and Metallisation of Fabrics and Textiles.

- CTCR (https://www.ctcr.es/en, accessed on 10 May 2021) for their work integrating the hardware and software components needed to seamlessly transfer information from the sensorized devices, and especially for their support during the experiments.

- Sensing Tex (http://sensingtex.com, accessed on 10 May 2021) for the integration of their innovative Pressure Sensing Mat solutions in the field of smart textiles based on printed electronics.

- Printed Electronics (https://www.printedelectronics.com/, accessed on 10 May 2021) for their work in the analysis and implementation of printing methods and the selection of compatible material to interconnect with the Sensing Tex sensing mat solutions.

- Pitillos® (https://www.calzadospitillos.com/en/, accessed on 10 May 2021) for manufacturing the shoes and for their knowledge and experience in designing usable models to house the above-described technologies.

Conflicts of Interest

| Events | No. of Observations |
|---|---|
| Sitting | 3020 |
| Standing still without imbalance | 6920 |
| Standing with imbalance | 5230 |
| Walking | 9620 |
| Running | 3480 |
| Stumbling | 820 |
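The counts in the table above are clearly unbalanced across events. As a rough illustration, the following sketch computes each event's share of the dataset and a set of inverse-frequency class weights. The weighting formula is a common heuristic chosen here for illustration only; it is an assumption and not something the source states was used.

```python
# Observation counts per event, copied from the table above.
counts = {
    "Sitting": 3020,
    "Standing still without imbalance": 6920,
    "Standing with imbalance": 5230,
    "Walking": 9620,
    "Running": 3480,
    "Stumbling": 820,
}

total = sum(counts.values())

# Share of each event in the whole dataset.
shares = {event: n / total for event, n in counts.items()}

# Inverse-frequency weights (hypothetical choice, not taken from the source):
# weight = total / (number_of_classes * class_count).
weights = {event: total / (len(counts) * n) for event, n in counts.items()}

for event in counts:
    print(f"{event:35s} share={shares[event]:.3f} weight={weights[event]:.2f}")
```

With these counts, stumbling is the rarest event (under 3% of all observations) and walking the most frequent (about a third).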

| Parameters | Search Space |
|---|---|
| No. of hidden Dense/Time Distr. Dense layers | [1, 11] |
| No. of units of each layer | [1, 64] |
| Activation function of each layer | {tanh, selu} |
| Use of Conv. layer | {True, False} |
| No. of filters in Conv. | [1, 50] |
| Size window in Conv. | [2, 32] |
| Activation function of Conv. layer | {selu, sigmoid} |
| Use of pooling layers | {True, False} |
| Use of LSTM layer | {True, False} |
| No. of units of LSTM | [1, 50] |
| Learning rate | {0.1, 0.01, 0.001} |
| Optimizer | {sgd, adam, rmsprop} |
| Batch size | [1, 50] |
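To make the search space in the table above concrete, here is a minimal sketch that draws one candidate configuration from it using plain random sampling from the Python standard library. This is a stand-in for illustration, not the optimization procedure of the source. The function name and dictionary keys are hypothetical, the ranges are assumed to be inclusive integer intervals, and the convolution and LSTM settings are assumed to be sampled only when the corresponding layer is enabled.

```python
import random


def sample_configuration(rng):
    """Draw one candidate configuration from the tabulated search space."""
    n_layers = rng.randint(1, 11)  # hidden Dense / Time Distributed Dense layers
    config = {
        "n_dense_layers": n_layers,
        "units": [rng.randint(1, 64) for _ in range(n_layers)],
        "activations": [rng.choice(["tanh", "selu"]) for _ in range(n_layers)],
        "use_conv": rng.choice([True, False]),
        "use_pooling": rng.choice([True, False]),
        "use_lstm": rng.choice([True, False]),
        "learning_rate": rng.choice([0.1, 0.01, 0.001]),
        "optimizer": rng.choice(["sgd", "adam", "rmsprop"]),
        "batch_size": rng.randint(1, 50),
    }
    # Assumption: convolution settings only matter when the layer is used.
    if config["use_conv"]:
        config["conv_filters"] = rng.randint(1, 50)
        config["conv_window"] = rng.randint(2, 32)
        config["conv_activation"] = rng.choice(["selu", "sigmoid"])
    # Assumption: the LSTM size only matters when the layer is used.
    if config["use_lstm"]:
        config["lstm_units"] = rng.randint(1, 50)
    return config


example = sample_configuration(random.Random(0))
print(example)
```

A hyperparameter optimization framework would replace the uniform draws with a guided sampler, but the shape of a single trial's configuration would be similar.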

| Parameters | Model Standing vs. Seated | Model Moving vs. Still | Model Stumbling vs. Not Stumbling | Model Unbalanced vs. Stable | Model Running vs. Walking |
|---|---|---|---|---|---|
| No. of hidden Dense/Time Distr. Dense layers | 1 | 1 | 11 | 11 | 3 |
| No. of units of each layer | 32 | 42 | [26, 54, 16, 38, 46, 54, 18, 38, 36, 54, 1] | [32, 4, 38, 50, 34, 38, 22, 6, 24, 58, 58] | [46, 6, 9] |
| Activation function of each layer | selu | tanh | [tanh, selu, selu, selu, selu, tanh, selu, selu, selu, tanh, selu] | [tanh, tanh, selu, tanh, tanh, tanh, selu, tanh, selu, tanh, selu] | [selu, tanh, tanh] |
| Use of Conv. layer | False | False | True | True | False |
| No. of filters in Conv. | - | - | 21 | 6 | - |
| Size window in Conv. | - | - | 10 | 4 | - |
| Activation function of Conv. layer | - | - | selu | sigmoid | - |
| Use of pooling layers | False | False | False | True | False |
| Use of LSTM layer | False | False | False | False | False |
| No. of units of LSTM | - | - | - | - | - |
| Learning rate | 0.01 | 0.01 | 0.01 | 0.001 | 0.01 |
| Optimizer | adam | sgd | sgd | adam | adam |
| Batch size | 33 | 48 | 21 | 19 | 47 |
| Previous timestamps | 16 | 32 | 4 | 16 | 32 |
| Selected sensors | { } | { } | { } | { } | { } |
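The "Previous timestamps" row above suggests that each model sees a short history of samples rather than a single reading. The sketch below shows one way such input windows could be built from a time-ordered sequence. It is an illustration only: the function name is hypothetical, and it assumes that a window consists of the stated number of previous samples plus the current one, which the table itself does not confirm.

```python
def make_windows(samples, n_previous):
    """Return one window per time step that has enough history.

    Each window holds the `n_previous` earlier samples followed by the
    current sample, so its length is n_previous + 1 (an assumption).
    """
    windows = []
    for t in range(n_previous, len(samples)):
        windows.append(samples[t - n_previous : t + 1])
    return windows


# Toy sequence of 10 readings; real samples would be vectors of sensor values.
readings = list(range(10))
windows = make_windows(readings, n_previous=4)
print(len(windows), windows[0], windows[-1])
```

Under this reading, a model with 4 previous timestamps would receive windows of 5 samples, and the first 4 samples of a recording would produce no window of their own.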

| Metric | Model Standing vs. Seated | Model Moving vs. Still | Model Stumbling vs. Not Stumbling | Model Unbalanced vs. Stable | Model Running vs. Walking |
|---|---|---|---|---|---|
| Accuracy | 0.99 | 0.98 | 0.78 | 0.91 | 0.96 |
| AUC | 0.98 | 0.98 | 0.77 | 0.91 | 0.95 |
| Precision | 1 | 0.98 | 0.64 | 0.91 | 0.95 |
| Recall | 1 | 0.99 | 0.75 | 0.89 | 0.91 |
| F1-score | 1 | 0.98 | 0.69 | 0.9 | 0.93 |
| Specificity | 0.97 | 0.98 | 0.79 | 0.93 | 0.98 |
| NPV | 0.97 | 0.99 | 0.86 | 0.91 | 0.97 |
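As a reminder of how the metrics in the table above relate to each other, the following sketch uses the standard definitions for a binary classifier. It first computes the metrics from a confusion matrix whose counts are hypothetical (they are not data from the study), and then checks that the reported F1-scores agree, up to rounding, with the harmonic mean of the reported precision and recall.

```python
def binary_metrics(tp, fp, tn, fn):
    """Standard binary classification metrics from confusion-matrix counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall),
        "specificity": tn / (tn + fp),
        "npv": tn / (tn + fn),
    }


def f1_from(precision, recall):
    """F1-score as the harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts, for illustration only.
print(binary_metrics(tp=8, fp=2, tn=85, fn=5))

# (precision, recall, F1) as reported in the table above.
reported = {
    "Standing vs. Seated": (1.0, 1.0, 1.0),
    "Moving vs. Still": (0.98, 0.99, 0.98),
    "Stumbling vs. Not Stumbling": (0.64, 0.75, 0.69),
    "Unbalanced vs. Stable": (0.91, 0.89, 0.9),
    "Running vs. Walking": (0.95, 0.91, 0.93),
}
for name, (p, r, f1) in reported.items():
    print(f"{name:30s} recomputed F1={f1_from(p, r):.3f} reported F1={f1}")
```

The sketch does not guard against empty denominators, so it would need extra checks for a model that predicts a single class.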

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Aznar-Gimeno, R.; Labata-Lezaun, G.; Adell-Lamora, A.; Abadía-Gallego, D.; del-Hoyo-Alonso, R.; González-Muñoz, C. Deep Learning for Walking Behaviour Detection in Elderly People Using Smart Footwear. Entropy 2021, 23, 777. https://doi.org/10.3390/e23060777
