1. Introduction

Instruments and measuring systems must be calibrated to link the directly measured signal to the measured quantity. Calibration is a measurement, subject to errors [1]. The need for periodic quality control (QC) is evidence of the time variability of the measuring system. This study focuses on QC decisions, and only measurement errors can be quantified in the QC. Therefore, only these will be discussed.

Metrology—the science of measurement—was developed in conjunction with the concept of the normal (Gaussian) distribution. These mathematical tools were originally created to describe measurements taken in stable and unchanging environments—usually in systems that are not alive. The probability density function of the Gaussian distribution describes how the values of a continuous random variable are distributed, and it is strictly valid only when the conditions remain constant. However, clinical laboratory measurements involve biological materials and systems that are inherently variable. This makes the application of traditional metrological models more complex and requires careful consideration of the specific conditions under which measurements are made.

A significant contribution of the theory was that it enabled the distinction between systematic and random measurement error components. The total measurement error (TE) is the sum of the systematic error component (SE) and the random error component (RE). The criterion that distinguishes the two components in definitions 2.17 and 2.19 of the International Vocabulary of Metrology (3rd Ed., VIM3) [2] is predictability. The systematic measurement error component (SE) is either constant or varies predictably, while the random error component (RE) varies unpredictably across replicate measurements. The stress in the definition is on the expression “in replicate measurements”, which restricts the meaning of “varies unpredictably”. Neglecting this restriction is the source of several misinterpretations of the definition, as will be detailed in the study.

Unfortunately, σ and μ, the parameters of the probability density function of the Gaussian distribution, are ideal values; therefore, estimators are used instead. $\bar{x}$, the mean of the measurements, is the estimator of μ, the ideal mean. If and only if the conditions of the probability density function of the Gaussian distribution are strictly respected, the standard deviation (SD) is the estimator of σ, the ideal standard deviation, which is the measure of the dispersion of data around the mean caused by the random error (RE). The SD can be determined by making several measurements of the same quantity. Its value depends on the conditions in which the measurements are made. VIM3 [2] distinguishes three types of measurement conditions:

Repeatability conditions (VIM3 2.20 [2]): constant conditions, i.e., same measuring procedure, same operators, same measuring system, same location, and same operating conditions over a short period of time. The measured SD is abbreviated as $s_r$.

Intermediate conditions, also called reproducibility within laboratory conditions (VIM3 2.22 [2]): variable conditions in the laboratory, over an extended period of time, maintaining the same measurement procedure and the same location. The measured SD is abbreviated as $s_{RW}$.

(Full) reproducibility conditions (VIM3 2.24 [2]): all possible variations that may occur when moving and measuring the sample in a different laboratory, including different measuring procedures and different locations. The measured SD is abbreviated as $s_R$.

The full reproducibility conditions include variations that a specialist (an operator) cannot influence in their own laboratory; therefore, this study will not discuss them. However, with some adaptations, the conclusions of this study can also be applied to full reproducibility conditions.

This study starts by assuming four quintessential principles valid across all fields of metrology:

QP1: A parameter must be determined under the same conditions under which it is used in calculations and predictions. If the conditions differ, the results may not be valid [3, 4].

QP2: When applying a law or using an equation, we assume that all conditions of applicability are fulfilled. (This may be an unconscious, hidden assumption if compliance with the conditions is not verified.) [2].

QP3: A corrective action, such as recalibration, cannot efficiently correct an error if the average error introduced by the corrective action is larger than the original error, or if the uncertainty of the measured error value is greater than the error it is intended to fix [2, 5].

QP4: Adding a constant to a function (as a correction, GUM B.2.23 [2, 5]) or multiplying a function by a constant (as a correction factor, GUM B.2.24 [2, 5]) does not eliminate the variability of that function. This means that such corrections cannot eliminate the natural variation present in the measurements.
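QP4 can be checked numerically with a minimal sketch. The control values, the correction (2.5), and the correction factor (1.03) below are hypothetical, chosen only for illustration. Adding a constant leaves the SD unchanged; multiplying by a constant rescales the SD by the same factor, so the relative variability (the coefficient of variation) is unchanged.

```python
import statistics

# Hypothetical control results (arbitrary units); illustrative values only.
results = [98.0, 101.5, 99.2, 102.3, 100.4, 97.6, 100.9, 99.8]

def sd(values):
    """Sample standard deviation (n - 1 in the denominator)."""
    return statistics.stdev(values)

def cv(values):
    """Coefficient of variation: SD relative to the mean."""
    return statistics.stdev(values) / statistics.mean(values)

shifted = [x + 2.5 for x in results]   # correction: add a constant
scaled = [x * 1.03 for x in results]   # correction factor: multiply by a constant

print(f"SD original: {sd(results):.4f}  SD after adding a constant: {sd(shifted):.4f}")
print(f"CV original: {cv(results):.6f}  CV after multiplying:       {cv(scaled):.6f}")
```

In both cases the spread of the data relative to its level survives the correction; only the location (or scale) of the results changes.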

Quality control (QC) in clinical laboratories has unique characteristics compared to other fields that use metrology. The samples measured in clinical labs are biological materials, which are highly complex and contain proteins. These samples, along with reference materials and reagents, are often unstable. This instability leads to significant variation in measurement errors. Additionally, the technology used in the production of the control materials can alter the structure of the proteins. This change causes what is known as matrix errors. Because of this, the bias measured during internal QC in clinical laboratories is only accepted as a relative value—meaning it is compared to the target value of the control material. The true or “absolute bias value” is determined through the external quality assessment (EQA).

Reliable quality control is not possible without a solid theoretical foundation. This foundation must be built on clear definitions and well-established conditions. In the context of clinical laboratory QC, we assume the following facts:

Despite the effort to correct them, most of the monthly mean deviations of the control results from the target values are not insignificant, suggesting their incorrigibility. (In the clinical laboratory, half of these relative biases are around 1 × $s_{RW}$, i.e., one standard deviation (SD) measured under intermediate conditions, also called reproducibility within laboratory conditions. VIM suggests the first name, but older literature uses the second, from which the abbreviation derives [1].)

The laws of the normal distribution would predict hundreds of warnings and alarms if the bias is 1 × SD, which are not observed. Instead, several ‘impossible’ QC graphs (e.g., no value beyond the 2 × SD limit) are experienced in practice [1], suggesting an overestimation of the decision limits based on $s_{RW}$ [6].

The time variability of the systematic error (bias) has been known since the beginning of metrology [7, 8]. Despite the known variability, it is not usual practice to distinguish between biases measured in repeatability conditions and those measured in intermediate (reproducibility within laboratory) conditions, as is done in the case of standard deviations (SD).

The variability of the aforementioned relative biases contradicts the predictions based on Student’s t distribution tables [9]. The variability of the biases measured in external quality assessment (EQA) is even larger [10].

Calibration is a measurement, subject to errors [1].

Under normal laboratory conditions (which must be guaranteed), i.e., an air-conditioned clinical laboratory (adequate temperature), an automated analyzer, well-trained personnel, adequate deionized water quality, correctly performed maintenance, and absence of failures (which are usually not detected in the QC), only sources of random error (RE) but no sources of significant σ variability can be identified. While $s_r$ is not time-variable [11, 12, 13], the variability of $s_{RW}$ (a value measured in one month may even double or halve in the next month [10]) contradicts the predictions based on chi-square distribution tables [9], questioning whether $s_{RW}$ is the correct estimator of the σ parameter (the mean random error).
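The second fact listed above (many warnings expected when the bias is 1 × SD) can be quantified with a short sketch. It assumes normally distributed control results and a 2 × SD warning limit; the function names are illustrative, not taken from any QC software.

```python
import math

def normal_cdf(z):
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def fraction_outside(limit_sd, bias_sd):
    """Expected fraction of results beyond +/- limit_sd around the target
    when the mean is shifted by bias_sd (both in SD units), assuming normality."""
    upper = 1.0 - normal_cdf(limit_sd - bias_sd)
    lower = normal_cdf(-limit_sd - bias_sd)
    return upper + lower

no_bias = fraction_outside(2.0, 0.0)
one_sd_bias = fraction_outside(2.0, 1.0)
print(f"Beyond 2 SD with no bias:     {no_bias:.4f}")
print(f"Beyond 2 SD with 1 SD bias:   {one_sd_bias:.4f}")
```

Under these assumptions, about 4.6% of results exceed the 2 × SD limits without bias and about 16% with a bias of 1 × SD, so QC charts with no value beyond 2 × SD over long periods are indeed unexpected.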

Research aimed at understanding the causes of the previously mentioned phenomena uncovered several hidden factors (referred to as QP2), as well as some widely accepted but incorrect assumptions and contradictions. Below are a few examples:

The analysis of the causes suggested that the source of these false assumptions can be traced to the unverified conditions of the normal distribution, the misinterpretation of the ‘random measurement error’ definition in VIM3 2.19 [2], and the neglected separation of the components of the systematic error, even though VIM3 2.17 [2], through the word ‘or’, suggests the existence of two components of the systematic error (SE).

Modern metrology was born alongside the work of Gauss and Laplace, as well as the development of the normal distribution. However, neither of the authors used the term ‘normal distribution’. It originates from K. Pearson [15]: “Many years ago, I called the Laplace-Gaussian curve the ‘normal’ curve, which name, while avoids an international question of priority, has the disadvantage of leading people to believe that other distributions of frequency are in one sense or other ‘abnormal.’” The two definitions of the quantitative expression of the measurement uncertainty are equivalent only if the data are normally distributed. According to GUM F.1.1.3 [2, 5], “Second, it must be asked whether all of the influences that are assumed to be random really are random”.

The study starts with the description of the proposed error model in metrology, followed by a mathematical deduction, which proves that the separation of the SE into a constant and a variable component is justified, and that $s_{RW}$ has two components, only one of which is random. The other component, although it is an SD, is systematic and exhibits a different mathematical behavior. In the next section, the study uses computer simulations to support the mathematical findings. These simulations confirmed that the equations are correct and that the separation of components is justified. The Discussion section explores the consequences of these results and formulates suggestions for future research. The findings also call for a shift in how we think about quality control (QC) and metrology. A new perspective is needed: one that clearly distinguishes between random and systematic components of uncertainty and treats them according to their true nature.

2. Proposed Error Model

The concept of bias is a subject of ongoing debate in metrology. According to the International Vocabulary of Metrology (VIM3, [2]), two definitions are relevant. First, VIM3 4.20 defines instrumental bias (IB) as the average of replicate indications ($\bar{x}$) minus a reference quantity value ($x_{ref}$):

$$IB = \bar{x} - x_{ref} \tag{1}$$

Second, according to VIM3 2.18 [2], the definition of measurement bias, or bias (B), is “the estimate of a systematic error”. The reference value itself (VIM3 5.18 [2]) is described as a quantity value used as a basis for comparison with quantities of the same kind. This reference can either be a true value (which is usually unknown) or a conventional value (which is known and agreed upon).

In the total error (TE) theory-based metrology, bias is calculated relative to a reference value. However, the Guide to the Expression of Uncertainty in Measurement (GUM [5]) introduced a more modern approach. According to GUM 3.2.3 [5], if a bias is identified and is ‘significant in size relative to the required accuracy of the measurement’, it must be corrected. Additionally, the uncertainty associated with this correction must be included in the overall uncertainty budget.

Despite correction efforts, some bias may remain. However, GUM 3.2.3 [5] states the following: “It is assumed that, after correction, the expectation or expected value of the error arising from a systematic effect is zero”. This statement does not hold. According to QP3, a correction cannot eliminate a bias if the uncertainty of the bias is greater than the bias intended to be fixed. Consequently, after correction, the residual bias is not zero; it lies below the limit of corrigibility and can be considered insignificant. Its value is not precisely known, but the bias exists. The situation becomes more complex when the bias is not constant. If the bias changes over time or under different conditions, any correction becomes temporary. In such cases, the bias tends to reappear, making calibration or correction only a short-term solution. Only a constant bias component can be efficiently corrected (QP4).
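The limit of corrigibility stated in QP3 can be illustrated with a small Monte Carlo sketch. It assumes, purely for illustration, that the bias estimate used for the correction is normally distributed around the true bias with a standard uncertainty `bias_uncertainty`; the function name and all numeric values are hypothetical.

```python
import math
import random

def rms_error_after_correction(true_bias, bias_uncertainty, trials=20000, seed=1):
    """Root-mean-square residual bias after subtracting an uncertain bias estimate.

    Assumption: the estimate is drawn from a normal distribution centred on the
    true bias with standard deviation bias_uncertainty.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        estimated_bias = rng.gauss(true_bias, bias_uncertainty)
        residual = true_bias - estimated_bias  # what remains after the correction
        total += residual ** 2
    return math.sqrt(total / trials)

true_bias = 1.0
rms_small_u = rms_error_after_correction(true_bias, 0.3)
rms_large_u = rms_error_after_correction(true_bias, 2.0)
print(f"Uncorrected bias: {true_bias:.2f}")
print(f"RMS residual, uncertainty 0.3: {rms_small_u:.2f}")
print(f"RMS residual, uncertainty 2.0: {rms_large_u:.2f}")
```

In this sketch the typical residual after correction is of the size of the uncertainty of the bias estimate, so the correction helps only when that uncertainty is smaller than the bias itself.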

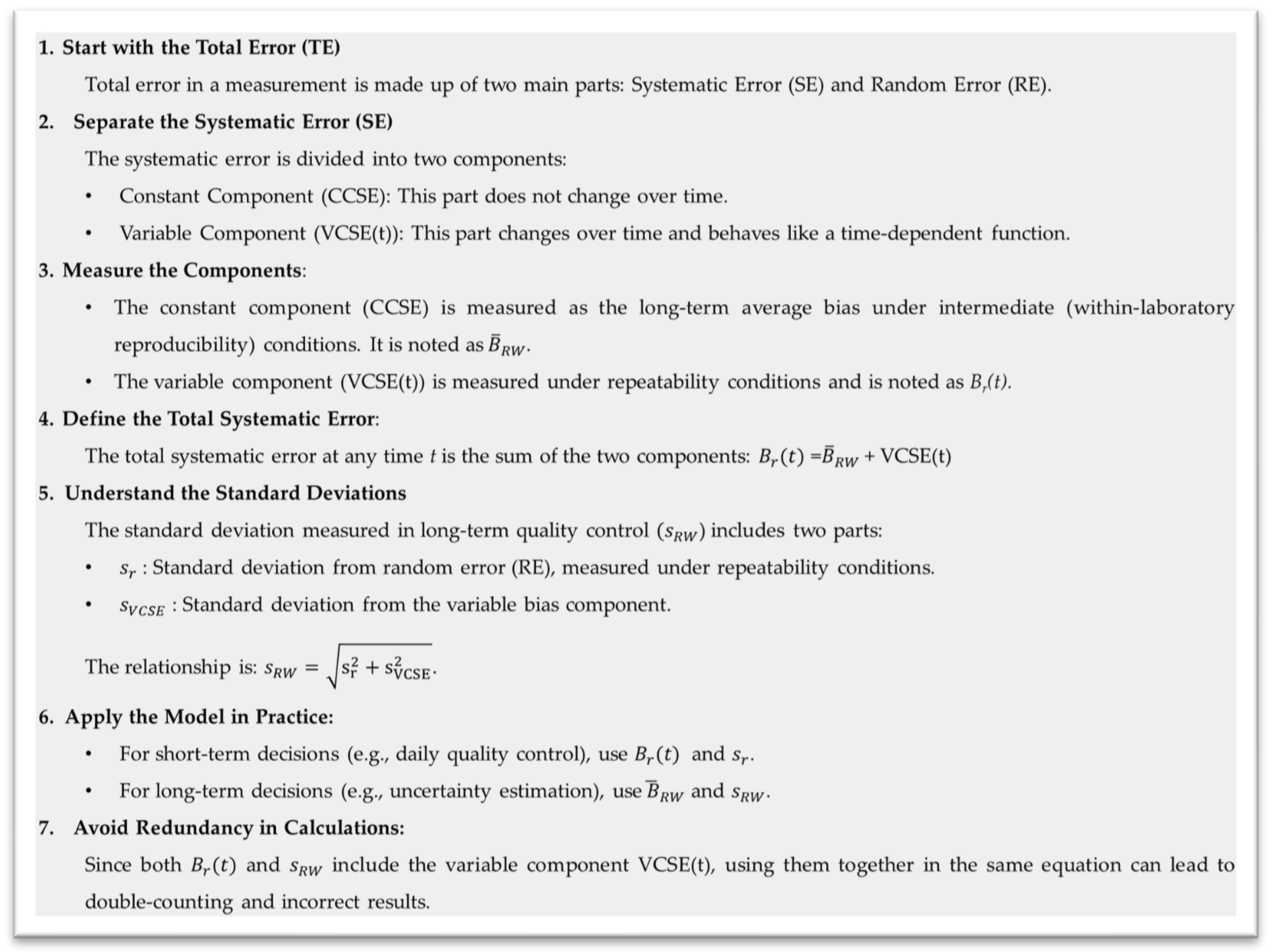

The contradictions above could not be explained consistently without separating the systematic error (SE) (and its estimator, the bias) into two subcomponents: the constant component of the SE (CCSE) and the variable component of the SE (VCSE(t)), which changes over time. This separation is essential because the variable component behaves as a time-dependent function, making its impact on measurements unpredictable and harder to correct. Even the definition provided in VIM3 2.17 [2] suggests this distinction. It describes systematic error as “The component of measurement error that in replicate measurements remains constant or varies in a predictable manner”. The use of the word “or” implies that systematic error may either be constant or change in a way that can be anticipated. Recognizing this dual nature enables a more accurate understanding and handling of bias in measurement systems, particularly in complex environments such as clinical laboratories.

The existence of the CCSE is supported by the aforementioned incorrigible monthly biases linked to calibration errors. In contrast, the need for periodic controls supports the existence of the VCSE(t). The bias can be measured by repeatedly measuring the same certified reference material (under repeatability conditions). On different days, different values are obtained. Let $B_r(t)$ denote the bias measured under repeatability conditions at time t. Over long time frames, under intermediate (reproducibility within laboratory) conditions, only a mean value can be obtained. (Let it be denoted $\bar{B}_{RW}$, the bar highlighting that it is a mean value.) $\bar{B}_{RW}$ can be identified with the CCSE. The difference between $B_r(t)$ and $\bar{B}_{RW}$ is the VCSE(t) function. The relationship between them is as follows [16, 17]:

$$B_r(t) = \bar{B}_{RW} + VCSE(t) = CCSE + VCSE(t) \tag{2}$$

In the absence of definitions in VIM3, several authors, using different names, notations, and definitions, have published equations consistent with Equation (2) [18, 19, 20]. Using empirical methods and computer simulations, AB. Vandra [16, 17] obtained the following relationship between the standard deviations (SD) measured under repeatability and under reproducibility within laboratory conditions ($s_r$ and $s_{RW}$):

$$s_{RW} = \sqrt{s_r^2 + s_{VCSE(t)}^2} \tag{3}$$

where $s_{VCSE(t)}$ is the SD calculable from the variable $B_r(t)$ values, or the values of the VCSE(t). An SD can be calculated from any set of variable data, not only from normally distributed ones [16, 17]. Several authors using different names, definitions, and notations have published equations equivalent to Equation (3) [13, 18, 19, 20]. None of them published a mathematical proof. The differences in notations, content, definitions, and names call for standardization and definitions in the VIM3.

Equation (3) suggests that $s_{RW}$ is not a purely random component, but a mix of two terms. $s_r$ is the random component ($s_r$ ≈ σ), while $s_{VCSE(t)}$, which is calculated from the bias values, is a systematic component. Consequently, $s_{RW}$ is not the measure of the RE, but of the variability of data under two influences: the RE and the bias variations. Because this observation contradicts the current recommendations, which consider $s_{RW}$ the estimator of the RE [2, 3, 4, 5, 6, 14], this study aims to provide mathematical proof for this statement.
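The additive structure of Equation (3) can be explored with a minimal simulation sketch. The model below is an assumption made for illustration only: a bounded, sawtooth-shaped daily bias (reset every `cycle` days, as after a recalibration) plus normally distributed random error with standard deviation `sigma`. None of the parameter values come from the study.

```python
import math
import random
import statistics

def simulate_long_term(days=3000, replicates=2, sigma=1.0,
                       amplitude=1.5, cycle=30, seed=7):
    """Return (s_r, s_vcse, s_rw) for a hypothetical drifting measuring system."""
    rng = random.Random(seed)
    all_results, vcse_values = [], []
    within_day_sq = 0.0
    for day in range(days):
        # Hypothetical bounded drift: linear ramp from -amplitude to +amplitude
        vcse = -amplitude + 2.0 * amplitude * (day % cycle) / (cycle - 1)
        vcse_values.append(vcse)
        daily = [vcse + rng.gauss(0.0, sigma) for _ in range(replicates)]
        daily_mean = sum(daily) / replicates
        within_day_sq += sum((x - daily_mean) ** 2 for x in daily)
        all_results.extend(daily)
    s_r = math.sqrt(within_day_sq / (days * (replicates - 1)))  # pooled within-day SD
    s_vcse = statistics.stdev(vcse_values)   # SD of the (known) variable bias
    s_rw = statistics.stdev(all_results)     # SD of all long-term results
    return s_r, s_vcse, s_rw

s_r, s_vcse, s_rw = simulate_long_term()
predicted = math.sqrt(s_r ** 2 + s_vcse ** 2)
print(f"s_r = {s_r:.3f}, s_VCSE = {s_vcse:.3f}")
print(f"s_RW observed = {s_rw:.3f}, sqrt(s_r^2 + s_VCSE^2) = {predicted:.3f}")
```

In this sketch the long-term SD is clearly larger than the repeatability SD and agrees closely with the quadratic sum of the two components, although the bias term is a deterministic, bounded function rather than a random variable.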

Equations (2) and (3) together describe an error model that addresses the time variability of the SE (bias). In contrast to similar alternative models, this model takes an additional step, linking the bias components to the values measured under repeatability and intermediate (reproducibility within laboratory) conditions, respectively. Equations (2) and (3) have the advantage of highlighting that the VCSE(t) function is hidden in $B_r(t)$ under repeatability conditions and in $s_{RW}$ under reproducibility within laboratory conditions. The VCSE(t) appears unimportant since, being included in other error components, no decisions are made based on its value; however, this is only apparently true. If we are consistent with Equations (1) and (2), we realize that $\bar{B}_{RW}$ is a mean, not an individual value. $B_r(t)$ is a time-dependent function, and its value has only 24 h validity. $s_{RW}$ includes a systematic error component, and considering it the measure of σ is questionable. The use of $s_{RW}$ and $B_r(t)$ in the same equation counts the VCSE(t) twice. Because there are two SD types and two bias types, “the SD” and “the bias” (with the definite article) are ambiguous terms (definitional uncertainties) unless it is specified (at least by the context) which one is meant. (To $\bar{B}_{RW}$ or to $B_r(t)$? To $s_r$ or to $s_{RW}$?) For example, “according to GUM, the discovered bias must be corrected”. The ambiguity is revealed when we recognize that bias measurements (in EQA) are conducted under repeatability conditions, that this bias is variable, and that its validity is limited to only 24 h. According to QP4, a variable bias cannot be eliminated by adding a constant (a correction) to the result. Meanwhile, $\bar{B}_{RW}$ is a constant and can be corrected.

The inclusion of the VCSE(t) in the bias ($B_r(t)$) in the short term, and in $s_{RW}$ over more extended time frames, has prompted questionable theories, such as the following: “Variable bias components become random errors over time” [21]. As will be shown, the ‘transformation’ is only subjective, based on a misinterpretation of VIM3 2.19 [2].

However, this theory was born in the clinical laboratory, suggesting modifications in the VIM3, with implications across all domains where metrology is applied. Therefore, this study aims to provide a theoretical foundation for the previously empirically deduced theory and error model based on the separation of the SE into a constant and a variable subcomponent, elucidating its implications and the significance of .

Furthermore, as a consequence of the proposed error model (Figure 1) applied consistently with the four quintessential principles of metrology mentioned above, it can be concluded that the long-term QC data are not normally distributed, and $s_{RW}$ is not the correct estimator of σ. This conclusion contradicts the current paradigm. Therefore, this study aims to provide mathematical proofs, supported by computer simulations, that the long-term QC data are not normally distributed, and that the correct estimator of the σ parameter is the SD measured under repeatability conditions ($s_r$). The advantages of separating the SE into a constant and variable component, as well as the risks of not performing the separation, will be analyzed.

This study has two main goals. The first is to answer the following questions: (1) Can long-term control data follow a normal distribution? (2) What is the cause of the significant variability of $s_{RW}$ and the mean? If the answer to the first question is yes, the study also aims to define the conditions under which this is true. The second goal is to help clarify what the term “random” means in metrology. As M. Krystek observed [22], “We speak of ‘random’ variations, although we cannot explain what the attribute ‘random’ actually means”. He also mentioned that “It has long been known that there are two essentially different contributions to the measurement uncertainty, but the discussion of how to deal with them in a correct way is still going on”.

3. Mathematical Deduction

This study is a theoretical investigation based on mathematical deductions derived from the Gauss equation. It focuses on the conditions under which this equation can be applied, and on the precise definitions of its parameters, μ and σ, and their estimators, the mean and the SD, respectively. The misinterpretations highlight the weakness of the VIM3 2.19 [2] definition, and the inequivalence of the terms ‘random’ and ‘unpredictable’ will be supported by examples and counterexamples, mainly from clinical laboratory practice but also from other domains.

The primary objective of this study is to determine whether the long-term data can be assumed to be normally distributed. The demonstration starts with the probability density function of the Gaussian (normal) distribution:

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{(x - \mu)^2}{2\sigma^2}} \tag{4}$$

where f(x) is the probability density function, σ is the scale parameter characterizing the dispersion of data, and μ is the location parameter, the ideal mean of the data [9].

The σ and μ parameters of the equation are assumed to be constants. This is a condition of applicability. If they are not, Equation (4) is not valid, and the distribution is not normal. Gauss conceived the equation to describe the distribution of data in lifeless domains under constant conditions [23]. This means the same measured quantity at a specific moment, that is, repeatability conditions of measurement (VIM3 2.20 [2]). In variable conditions, σ and μ are time-variable functions, and Equation (4) becomes

$$f(x, t) = \frac{1}{\sigma(t)\sqrt{2\pi}}\, e^{-\frac{(x - \mu(t))^2}{2\sigma(t)^2}} \tag{5}$$

where $\sigma(t)$ and $\mu(t)$ are the functions that describe the time dependence of the parameters. For each moment t, a different equation is obtained.

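Quality control measurements are made at discrete moments. At each moment t = i (i = 1, …, m), n measurements are made (j = 1, …, n). Let $x_{i,j}$ denote the jth result on day i. Under repeatability conditions at time point i, the mean of the results (the mean of day i, obtained from a finite number of results, $\bar{x}_i$) is

$$\bar{x}_i = \frac{1}{n}\sum_{j=1}^{n} x_{i,j} \tag{6}$$

The theoretical mean $\mu_i$ at time point t = i is

$$\mu_i = \lim_{n \to \infty} \bar{x}_i \tag{7}$$

$\bar{x}_i$ is the estimator of $\mu_i$. The deviation of the jth measurement $x_{i,j}$ from the measured mean $\bar{x}_i$, denoted $d_{i,j}(\bar{x}_i)$, is

$$d_{i,j}(\bar{x}_i) = x_{i,j} - \bar{x}_i \tag{8}$$

Because of the properties of the means,

$$\sum_{j=1}^{n} d_{i,j}(\bar{x}_i) = 0 \tag{9}$$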

The SD measured at time point t = i ($s_{r,i}$, repeatability conditions) is the root mean square of the deviations $d_{i,j}(\bar{x}_i)$ (with Bessel’s correction [24], substituting the number of measurements n with the degrees of freedom n − 1). The σ parameter at time point t = i ($\sigma_i$) is the limit of the measured standard deviation, $s_{r,i}$, as n → ∞:

$$\sigma_i = \lim_{n \to \infty} s_{r,i} = \lim_{n \to \infty} \sqrt{\frac{\sum_{j=1}^{n} d_{i,j}(\bar{x}_i)^2}{n - 1}} \tag{10}$$

Because of Equation (10), the measured SD, $s_{r,i}$, can be used as the estimator of $\sigma_i$, but only in the domain of validity of Equation (4), which means constant repeatability conditions. From the set of m individual values of the repeatability standard deviation, $s_{r,i}$, it is possible to calculate their average ($\bar{s}_r$):

$$\bar{s}_r = \sqrt{\frac{1}{m}\sum_{i=1}^{m} s_{r,i}^2} \tag{11}$$

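The long-term mean $\bar{x}$ of the m daily means $\bar{x}_i$ is

$$\bar{x} = \frac{1}{m}\sum_{i=1}^{m} \bar{x}_i \tag{12}$$

$\bar{x}$ is the estimator of the theoretical long-term mean μ. The deviation of each measured value from the long-term mean, $d_{i,j}(\bar{x})$, is

$$d_{i,j}(\bar{x}) = x_{i,j} - \bar{x} = (x_{i,j} - \bar{x}_i) + (\bar{x}_i - \bar{x}) = d_{i,j}(\bar{x}_i) + (\bar{x}_i - \bar{x}) \tag{13}$$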

The first term in Equation (13), denoted as $d_{i,j}(\bar{x}_i)$, represents the deviation from the daily average under repeatability conditions at time point i. This term captures the random variation in the measurements. The second term is the difference between the daily average $\bar{x}_i$ and the long-term mean $\bar{x}$, a systematic component (Equation (1)). These two components have different physical meanings and statistical behavior. The random error term is variable, while the systematic error term is constant within a given day. Because, within a given day, one term is constant and the other is variable, they are statistically independent. This separation helps clarify the nature of measurement variability and supports the structure of the proposed error model.

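Because of the properties of the means,

$$\sum_{i=1}^{m} (\bar{x}_i - \bar{x}) = 0 \tag{14}$$

Calculating the long-term SD ($s_{RW}$) by substituting the $d_{i,j}(\bar{x})$ values in Equation (11), the following is obtained:

$$s_{RW} = \sqrt{\frac{\sum_{i=1}^{m}\sum_{j=1}^{n} d_{i,j}(\bar{x}_i)^2}{mn - 1} + \frac{2\sum_{i=1}^{m}\sum_{j=1}^{n} d_{i,j}(\bar{x}_i)\,(\bar{x}_i - \bar{x})}{mn - 1} + \frac{\sum_{i=1}^{m}\sum_{j=1}^{n} (\bar{x}_i - \bar{x})^2}{mn - 1}} \tag{15}$$

Because of Equations (9) and (14), the cross term $2\sum_{i}\sum_{j} d_{i,j}(\bar{x}_i)\,(\bar{x}_i - \bar{x})$ is 0 (for each day, the inner sum over j vanishes) and can be dropped. In the first term, under the square root, $\bar{s}_r$ can be identified, while the second remaining term represents the square of an SD calculated from the variable daily mean values ($\bar{x}_i$). Because $\bar{x}_i - \bar{x}$ is the difference between two means, it is not a random component. It characterizes the variability of the systematic error component. Let it be named the SD calculated from the variable component of the SE and denoted $s_{VCSE(t)}$, as proposed in Section 2.

$$\frac{\sum_{i=1}^{m}\sum_{j=1}^{n} (\bar{x}_i - \bar{x})^2}{mn - 1} = \frac{n\sum_{i=1}^{m} (\bar{x}_i - \bar{x})^2}{mn - 1} = \frac{\sum_{i=1}^{m} (\bar{x}_i - \bar{x})^2}{m - \frac{1}{n}} \approx \frac{\sum_{i=1}^{m} (\bar{x}_i - \bar{x})^2}{m - 1} = s_{VCSE(t)}^2 \tag{16}$$

Because neither of the terms under the sum depends on j, all terms in the $\sum_{j}$ sums are equal. As m increases, the difference between the last two terms becomes insignificant. Using the $s_{VCSE(t)}$ notation, Equation (15) becomes

$$s_{RW} = \sqrt{\bar{s}_r^2 + s_{VCSE(t)}^2} \tag{17}$$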

Equation (17) is Equation (3) (which was deduced using empirical methods by several authors, as mentioned in Section 2), with a single difference: Equation (17) highlights that $\bar{s}_r$ is a mean value. The former deduction confirms the validity of Equation (3). Equation (3) assumes a homoscedastic measurement system (constant $s_r$, QP2), while Equation (17) is also valid in heteroscedastic measuring systems.
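The algebra behind this decomposition can be checked on a small worked example. The data set below (3 days, 3 replicates per day) is hypothetical. The sketch verifies two exact identities that hold for equal numbers of replicates per day: the cross term between the within-day deviations and the daily-mean deviations is zero, and the total sum of squares splits into a within-day part and a between-day part.

```python
# Hypothetical control results: 3 days, 3 replicates per day (arbitrary units).
days = [
    [10.1, 9.8, 10.3],
    [10.9, 11.2, 10.6],
    [9.5, 9.9, 9.4],
]

daily_means = [sum(day) / len(day) for day in days]
long_term_mean = sum(daily_means) / len(daily_means)

# Within-day sum of squares: deviations from each daily mean
ss_within = sum((x - mean_i) ** 2
                for day, mean_i in zip(days, daily_means) for x in day)

# Between-day sum of squares: each daily-mean deviation counted once per replicate
ss_between = sum(len(day) * (mean_i - long_term_mean) ** 2
                 for day, mean_i in zip(days, daily_means))

# Cross term: within-day deviation times daily-mean deviation
cross = sum((x - mean_i) * (mean_i - long_term_mean)
            for day, mean_i in zip(days, daily_means) for x in day)

# Total sum of squares: deviations from the long-term mean
ss_total = sum((x - long_term_mean) ** 2 for day in days for x in day)

print(f"cross term           = {cross:.2e}")
print(f"ss_total             = {ss_total:.6f}")
print(f"ss_within+ss_between = {ss_within + ss_between:.6f}")
```

Dividing these sums of squares by the appropriate degrees of freedom gives the squared SD terms discussed above; the sketch only checks the sums themselves, which do not depend on any distributional assumption.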

Let n = 2 (two measurements under repeatability conditions on each day). According to Equation (6), $\bar{x}_i = (x_{i,1} + x_{i,2})/2$. Consequently, according to Equation (8), $d_{i,1}(\bar{x}_i) = -d_{i,2}(\bar{x}_i)$; according to Equations (13) and (14), $(x_{i,1} - x_{i,2})/2 = d_{i,1}(\bar{x}_i)$, and the SD calculated from the differences in duplicate measurements measured under repeatability conditions is $\bar{s}_r$ (Equation (11)), validating the Dahlberg method [11] of calculating $s_r$ using duplicate measurements obtained over long time frames. The possibility of calculating $s_r$ from long-term data demonstrates that $s_r$ is not linked to the time frame, but rather to the constant conditions. The short time frame is necessary only to guarantee the constant conditions of the measurements. Consequently, Equations (3) and (17) suggest that the second term in the equation ($s_{VCSE(t)}$) is not linked to the random phenomenon but is a systematic component.
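The duplicate-based calculation can be sketched as follows. The Dahlberg formula takes the differences d between the two results of each of N duplicate pairs and computes sqrt(Σd² / (2N)). The simulated data are an assumption for illustration: a bounded, cyclic daily bias plus normal random error with standard deviation `sigma`; none of the values come from the study.

```python
import math
import random
import statistics

def dahlberg_sd(pairs):
    """Dahlberg formula: sqrt(sum(d^2) / (2 * N)) over N duplicate pairs."""
    return math.sqrt(sum((a - b) ** 2 for a, b in pairs) / (2 * len(pairs)))

def simulate_duplicates(days=4000, sigma=1.0, amplitude=2.0, cycle=25, seed=11):
    """Hypothetical model: bounded cyclic daily bias plus normal random error."""
    rng = random.Random(seed)
    pairs = []
    for day in range(days):
        bias = amplitude * math.sin(2.0 * math.pi * day / cycle)
        # Both duplicates of a day share the same bias; only the random error differs
        pairs.append((bias + rng.gauss(0.0, sigma), bias + rng.gauss(0.0, sigma)))
    return pairs

pairs = simulate_duplicates()
s_r_estimate = dahlberg_sd(pairs)
s_long_term = statistics.stdev([x for pair in pairs for x in pair])
print(f"Dahlberg estimate from duplicates: {s_r_estimate:.3f} (simulated sigma = 1.0)")
print(f"SD of all long-term results:       {s_long_term:.3f}")
```

Because the daily bias cancels in each within-day difference, the duplicate-based estimate stays close to the simulated random error even though the data span a long, drifting period, while the SD of the pooled results is inflated by the bias variation.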

It is unusual in practice to make several replicate measurements under repeatability conditions each day and to calculate their averages. Let us imagine such an experiment, calculating the average of k measurements performed each day under repeatability conditions. When calculating the average deviation of each day, the second term of Equation (13) will not be influenced (it is the same in each $d_{i,j}(\bar{x})$ term). Therefore

$$s_{RW}(k) = \sqrt{\frac{\bar{s}_r^2}{k} + s_{VCSE(t)}^2} \tag{18}$$

If the method of measurement is homoscedastic or quasi-homoscedastic (the SD is constant or quasi-constant), $s_r$ is obtained; if it is heteroscedastic (variable SD), a mean value of the SD measured under repeatability conditions ($\bar{s}_r$) is obtained. Equation (18) suggests that the second term in the equation ($s_{VCSE(t)}$) is not linked to the random phenomenon.

In constant conditions ($s_{VCSE(t)}$ = 0), Equation (18) reduces to

$$s_{RW}(k) = \frac{\bar{s}_r}{\sqrt{k}} \tag{19}$$

Equation (18) contradicts what we should expect from an average of normally distributed values.
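The effect of averaging k replicates per day can be illustrated with a short simulation sketch. The model is again an assumption made for illustration: a bounded sawtooth daily bias plus normal random error with standard deviation `sigma`. For purely random, normally distributed data, the SD of the daily averages would shrink as sigma / sqrt(k); with a variable bias it levels off instead.

```python
import math
import random
import statistics

def sd_of_daily_means(k, days=2000, sigma=1.0, amplitude=1.5, cycle=30, seed=3):
    """SD of the daily averages of k replicates under a hypothetical drifting bias."""
    rng = random.Random(seed)
    means = []
    for day in range(days):
        # Hypothetical bounded drift: linear ramp from -amplitude to +amplitude
        bias = -amplitude + 2.0 * amplitude * (day % cycle) / (cycle - 1)
        means.append(sum(bias + rng.gauss(0.0, sigma) for _ in range(k)) / k)
    return statistics.stdev(means)

replicate_counts = (1, 4, 16, 64)
observed = {k: sd_of_daily_means(k) for k in replicate_counts}
for k in replicate_counts:
    random_only = 1.0 / math.sqrt(k)  # expectation if only random error were present
    print(f"k = {k:2d}: SD of daily means = {observed[k]:.3f}, "
          f"sigma/sqrt(k) = {random_only:.3f}")
```

In this sketch the random part of the variability decreases with k as expected, but the SD of the daily means approaches the SD of the bias function rather than zero, which is the behavior the text attributes to the systematic term.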

5. Discussion

An inaccurate definition is not necessarily incorrect. A definition is inaccurate when conditions or details are missing or unspecified, or when it leaves room for misinterpretation. One such example is the name of the ‘normal distribution.’ K. Pearson, who proposed the unlucky name, acknowledged later: “It has led many writers to try and force all frequency (…) into a ‘normal’ curve”. [15].

This is the motivation behind the GUM F.1.1.3 [2, 5] recommendation to verify all distributions before considering them normal. This step is essential to avoid errors in statistical analysis. In fields where periodic quality control (QC) is required, the bias is not constant but changes over time. This bias must be predictable, meaning it behaves as a time-dependent function. Without this property, reliable predictions cannot be made. Therefore, in such domains, treating bias as a time-variable function is not just practical; it is necessary.

This study focuses on the issue of measurement error, which includes both systematic and random components. The systematic error component can be divided into constant and variable parts. Our main goal is to draw attention to the fact that the variable part of systematic error is often overlooked in current computational methods. In our model, we assume that all input parameters are independent. This assumption simplifies the theory and helps us develop the model more easily. However, we do not explore the uncertainties that come from variable measurement errors caused by correlations between input parameters. Modeling the variances and covariances of these correlated systematic errors is important, but it is beyond the scope of this article.

Since the true value cannot be known, the exact value of the bias can only be determined with uncertainty. However, even though the bias cannot be measured precisely, it does exist. In the internal QC in the clinical laboratory, a single reference standard for each level is used as control material in long time frames. These control materials, because of the technology used in their production, are not commutable, which means that they do not behave exactly as patient samples, and the exact value of the bias cannot be determined because of the matrix error. Even in this condition, the variability of the measured value can be used to determine the VCSE(t) within the limits of uncertainties of the statistical methods. Only the constant component of the bias remains uncertain.

Bias does not increase or decrease indefinitely because corrective actions are regularly applied in quality control processes. As a result, bias is always a bounded function and typically exhibits a cyclical nature. Unfortunately, these cycles are unequal in length, amplitude, and mean. Bias can be strictly increasing or decreasing only over short time periods. In contrast, the probability density function of a normal distribution has unbounded support, meaning it extends infinitely in both directions. This contradiction rules out identifying the two. Therefore, neither the daily means ($\bar{x}_i$), nor the variations in bias, nor the long-term control data can be normally distributed. Moreover, the bias function is not always continuous; in fact, it is usually discontinuous in practice.

A human intervention (e.g., calibration) causes an unpredictable change in the mean and bias, making the function discontinuous, because the results after calibration are calculated using a different calibration graph. (Although calibrations are performed by humans, calibration errors are not human errors but inherent errors, because calibration is itself a measurement.) However, bias remains predictable between such interventions. It is essential to distinguish between two types of variability: random error, and systematic error that varies randomly. Random error refers to unpredictable changes that occur between two consecutive measurements (in replicated measurements according to VIM3 2.19 [2]). In contrast, the randomly variable systematic error is not consistent with the VIM3 2.19 definition, as it remains predictable in the short term (constant bias in the case of a stable reagent, or gradually increasing/decreasing bias, e.g., caused by reagent degradation). This latter type of error reflects changes in the bias that are not purely random but follow a pattern or function over time. Due to the unpredictable changes in the mean (caused by human interventions, e.g., calibration errors, though unexpected phenomena may also contribute, e.g., reagent contamination), the bias becomes unpredictable over time, but not “unpredictable in replicated measurements”. Therefore, such bias variations are not consistent with the VIM3 2.19 definition of the RE.

Equation (4), which describes the probability density function, is valid only in constant conditions, a condition that is frequently neglected or forgotten. In variable conditions, Equation (5) is valid, which describes a different normal distribution at each moment t, but the mixture of these distributions is not necessarily a normal distribution. For example, an equal-weight mixture of two normal distributions with the same σ but different means (μ1 and μ2) is a bimodal distribution, with peaks near μ1 and μ2, when the means differ by more than 2σ. Due to SE (bias) variability, the classical error model (TE = SE + RE, and maxTE = SE + z · SD), which assumes a normal distribution of the long-term QC data, must be reevaluated.
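The two-component example can be checked numerically. The sketch below evaluates the density of an equal-weight mixture of two normal distributions with a common σ on a grid and counts its local maxima; the function names, the grid step, and the chosen means are illustrative assumptions.

```python
import math

def normal_pdf(x, mu, sigma):
    """Probability density function of the normal distribution."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))

def mixture_pdf(x, mu1, mu2, sigma):
    """Equal-weight mixture of two normal densities with a common sigma."""
    return 0.5 * normal_pdf(x, mu1, sigma) + 0.5 * normal_pdf(x, mu2, sigma)

def count_modes(mu1, mu2, sigma, step=0.01):
    """Count local maxima of the mixture density on a regular grid."""
    start = min(mu1, mu2) - 5.0 * sigma
    n_points = int(round((abs(mu2 - mu1) + 10.0 * sigma) / step)) + 1
    density = [mixture_pdf(start + i * step, mu1, mu2, sigma) for i in range(n_points)]
    return sum(1 for i in range(1, n_points - 1)
               if density[i] > density[i - 1] and density[i] >= density[i + 1])

modes_far = count_modes(0.0, 3.0, 1.0)    # means 3 sigma apart
modes_near = count_modes(0.0, 1.0, 1.0)   # means 1 sigma apart
print(f"Means 3 sigma apart: {modes_far} mode(s)")
print(f"Means 1 sigma apart: {modes_near} mode(s)")
```

Even when the means are too close to produce two visible peaks, the mixture is still not a normal distribution: its variance exceeds σ² and its shape is flatter than that of a single Gaussian with the same variance.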

Several authors (using different names, abbreviations, and definitions for the terms) have proposed alternative, empirically deduced error models to address the SE variability. This study aims to support these models mathematically. It starts from a model proposed by AB Vandra [15] (Equations (2) and (3)), which takes one more step, linking the bias components to the values measured in repeatability conditions ($B_r(t)$) and in intermediate reproducibility (reproducibility within laboratory) conditions.

By the mathematical deduction of Equation (17), Equation (3) is validated, with one minor difference. While Equation (3) assumes a homoscedastic measurement system, Equation (17) is more general, being valid in both homoscedastic and heteroscedastic measurement systems. Simultaneously, the Dahlberg method of calculating $s_r$ (the SD measured in repeatability conditions) from differences between duplicate measurements was also mathematically validated. The method uses data obtained over long time frames (intermediate conditions), assuming a homoscedastic measurement system. If the measurement system is heteroscedastic, the result is not a single $s_r$ but a mean value of it. This possibility supports the idea that, under repeatability conditions, it is the constant conditions that are essential, not the short time frame.
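The behavior of the Dahlberg estimate on long-term data can be illustrated with a small simulation. This is a sketch under stated assumptions, not the authors' simulation: the sawtooth bias, its amplitude, the cycle length, and the number of runs are hypothetical, and the estimator is written in its commonly cited form $s = \sqrt{\sum d_i^2 / (2N)}$ for N duplicate pairs.

```python
import math
import random
import statistics

def dahlberg_sd(pairs):
    """Dahlberg estimate: sqrt(sum(d^2) / (2N)) over N duplicate pairs."""
    return math.sqrt(sum((a - b) ** 2 for a, b in pairs) / (2 * len(pairs)))

rng = random.Random(1)
sigma_r = 1.0              # assumed repeatability SD
n_runs, cycle = 2000, 50   # assumed number of runs and sawtooth cycle length

pairs = []
for t in range(n_runs):
    # Hypothetical sawtooth bias in [-1.5, 1.5), constant within a run.
    bias = 3.0 * (t % cycle) / cycle - 1.5
    # Both duplicates share the same bias, so it cancels in their difference.
    pairs.append((bias + rng.gauss(0.0, sigma_r), bias + rng.gauss(0.0, sigma_r)))

s_r_est = dahlberg_sd(pairs)
s_total = statistics.stdev([x for pair in pairs for x in pair])
print(f"Dahlberg estimate of s_r: {s_r_est:.3f}")
print(f"SD of all long-term data: {s_total:.3f}")
```

Because the bias is common to both members of a pair, the duplicate differences carry only the random component, so the estimate stays close to the assumed repeatability SD even though the data span many bias cycles, while the SD of the pooled data is inflated by the bias variation.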

According to Equations (3) and (17) (and to similar equations in the literature), the SD determined from long-term data has two terms: , and . The second term, , is not a random but a systematic component, because it is calculated from means (, which in repeatability conditions are constants and do not vary unpredictably in replicated measurements (VIM3 2.19). In longer time frames is a constant. varies, but not randomly. Its possible values form a bounded set of values; therefore, the distribution is not normal.

This aspect suggests that the long-term QC data are not normally distributed, but rather a mixture of two distinct distributions: a normal distribution caused by the random errors, and a bounded distribution of the systematic component.

An interesting aspect of the mathematical deduction is Equation (18). The two components of Equations (3) and (17) not only have different (systematic and random) origins but also behave differently when averages of multiple measurements obtained under repeatability conditions are calculated. The SD of normally distributed values is reduced $\sqrt{n}$ times (Equation (19)) if it is calculated from averages of n values. In Equation (18), only the random component, $s_r$, is reduced $\sqrt{n}$ times; the systematic component is not, which proves its different origin. This fact not only challenges the normal distribution of the long-term data, but also questions whether $s_{RW}$ is the correct estimator of σ.
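The different behavior of the two components under averaging can be sketched numerically. The code below is an illustration only: it assumes that the long-term variance is the sum of the random variance and the variance of the bias values (the additive form the text attributes to Equations (17) and (18)), and it uses a hypothetical sawtooth bias with assumed amplitude, cycle length, and run count.

```python
import math
import random
import statistics

rng = random.Random(2)
SIGMA_R = 1.0              # assumed repeatability SD
N_RUNS, CYCLE = 4000, 40   # assumed number of runs and cycle length

# Hypothetical sawtooth bias, constant within a run.
bias = [3.0 * (t % CYCLE) / CYCLE - 1.5 for t in range(N_RUNS)]
s_bias = statistics.pstdev(bias)

def sd_of_run_means(n):
    """SD across runs of the mean of n replicates measured within each run."""
    means = []
    for b in bias:
        replicates = [b + rng.gauss(0.0, SIGMA_R) for _ in range(n)]
        means.append(statistics.fmean(replicates))
    return statistics.stdev(means)

results = {}
for n in (1, 4, 16):
    observed = sd_of_run_means(n)
    # Only the random variance is divided by n; the bias variance is untouched.
    predicted = math.sqrt(s_bias ** 2 + SIGMA_R ** 2 / n)
    # What a purely normal model would predict: the whole SD divided by sqrt(n).
    naive = math.sqrt(s_bias ** 2 + SIGMA_R ** 2) / math.sqrt(n)
    results[n] = (observed, predicted, naive)
    print(f"n={n:2d}  observed={observed:.3f}  predicted={predicted:.3f}  naive={naive:.3f}")
```

As n grows, the observed SD approaches the SD of the bias values instead of shrinking toward zero, which is the behavior described for the histograms of averaged results.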

The computer simulations and statistical analysis supported the mathematical deduction. For all bias types, and independently of the number n of values used in the average calculations, the differences between the obtained $s_{RW}$ and the theoretically predicted values (Equation (17)) were insignificant (Table 1).

The consecutive histograms (Figure 3) visually illustrate the effect of averaging several results obtained under repeatability conditions. The first line (bias1: constant bias, normally distributed values) shows what can be expected from a normal distribution. As n, the number of measurements from which the average is calculated, increases, the histograms become narrower and sharper.

In the other three lines (cases of gradually increasing bias (bias4b), randomly variable bias (bias5a), and sinusoidal bias (bias6a)), the phenomenon is hardly observable because the distribution is not normal, but a mix of two distributions. As the SD of the random component decreases (being calculated from averages of n values), the histograms become more similar to the distribution of the systematic component.

The phenomenon is most pronounced in the last case (sinusoidal bias), where the histogram exhibits a bimodal shape, as in the case of data obtained from a sinusoidal function (Figure 4). The histograms are consistent with Equation (18); the systematic term remains unaffected by averaging several results. The long-term means also exhibit greater variability than could be expected from a normal distribution (Table 3).

When a human intervention—such as calibration—is performed between each pair of quality control (QC) measurements, it introduces unpredictable changes in both the mean and the bias. Calibration is subject to measurement errors; therefore, systematic errors that vary randomly over time (like in bias5a and bias5b) begin to behave like random bias (as in the bias2 case). Consequently, the long-term QC data starts to look like they would follow a normal distribution, with a standard deviation close to σ ≈ .

However, this effect applies only to the QC results. The QC results change unpredictably between two consecutive measurements due to the intervention. The same does not apply to the actual measured samples. All measurements between two calibrations are affected systematically by the last bias value.

A typical example of bias variation in clinical laboratory practice was reported by Vandra AB [16], where repeated measurements of ALP using an unstable reagent and a high-level control showed that the bias changed gradually between calibrations and shifted suddenly after each calibration, a pattern that is common in laboratory work but often hidden by random error. Similarly, it has been noted that “Within a given day the small deviations of the calibration graph from an ‘ideal calibration graph’ affect all the samples in a systematic way” [21]. These examples highlight the importance of understanding bias variation in real-world settings and support the need for experimental validation using actual measurement data.

As supported by examples in the Basics subsection, all studied bias variations are theoretically possible cases, but not all are significant in practice. In the clinical laboratory, the most important sources of bias variation are changes in reagent properties, which cause a gradual quasilinear variation (bias4 cases). In contrast, the calibrations (including measurement, stability, nominal value, and reconstitution errors) and the control material changes (reconstitution, stability, and nominal value errors) cause randomly variable biases (alternation between constant periods and unpredictable changes: bias5 cases) (Figure 2). The result is a sawtooth-shaped graph (masked by the random errors), a cyclical variation with unequal cycles (with different lengths, amplitudes, and means). Some of these cycles may last as long as a month.

One consequence of the non-Gaussian distribution caused by the cyclical variation in the bias values ($B_r(t)$) is the significant variability of the means and of the SD of the bias values, which causes a similar variability in the long-term SD. The phenomenon can be predicted visually if the graphs of the bias variations are studied. The longer the cycles in the case of bias4 (linearly increasing bias), the larger the SD of the bias values and, consequently, the long-term SD. For a uniform distribution, the SD can be obtained by dividing the range of values by $2\sqrt{3}$. Choosing different time frames, with different ranges of bias values, yields different means and different SDs. If the time frame becomes longer than the cycle, all possible bias values are obtained, and the SD values become stable. In the case of bias5, the possible range of bias values is reached only after several human interventions (calibrations, newly reconstituted calibrators, and control materials). A few values, even if they originate from a normal distribution, do not behave as a normal distribution but rather as discrete values. For this reason, it can be predicted that the variability of the mean and of the SD will be more significant (Figure 7).
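The dependence of the mean and SD on the chosen time frame can be made concrete for a linearly increasing bias. The sketch below is illustrative: the bias range of one cycle (from -1.5 to 1.5) and the number of points are assumptions, and it relies on the standard result that a uniform distribution over a given range has an SD equal to the range divided by $2\sqrt{3}$.

```python
import math
import statistics

def uniform_sd(low, high):
    """SD of a uniform distribution on [low, high]: range / (2 * sqrt(3))."""
    return (high - low) / (2.0 * math.sqrt(3.0))

def ramp(low, high, n):
    """n equally spaced bias values (interval midpoints) on a linear ramp."""
    return [low + (high - low) * (i + 0.5) / n for i in range(n)]

full_cycle = ramp(-1.5, 1.5, 1000)   # hypothetical bias values over one full cycle
half_cycle = full_cycle[:500]        # time frame covering only the first half

sd_full = statistics.pstdev(full_cycle)
sd_half = statistics.pstdev(half_cycle)
mean_full = statistics.fmean(full_cycle)
mean_half = statistics.fmean(half_cycle)

print(f"full cycle: mean={mean_full:+.3f}  SD={sd_full:.3f}  range/(2*sqrt(3))={uniform_sd(-1.5, 1.5):.3f}")
print(f"half cycle: mean={mean_half:+.3f}  SD={sd_half:.3f}  range/(2*sqrt(3))={uniform_sd(-1.5, 0.0):.3f}")
```

A time frame that covers only part of a cycle sees a narrower range of bias values, so both the mean and the SD differ from those of the complete cycle.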

The computer simulation data confirmed these predictions. Data in Table 2 show that the significant variability of the SD values calculated from 40 data points is linked to the incomplete cycles in the cases of bias4 (gradually increasing bias), bias5 (randomly variable bias), and bias6 (sinusoidal bias). When the cycles were shorter, the variability was less significant. In the case of the bias5 series, due to the inequality between the cycles (random changes), the variability was more significant, as shown by Cochran’s F-test.

The link between the lengths of the cycles and the variability of the SD is supported by the comparison between the corresponding values obtained in the bias4a and bias4b series and, respectively, in the bias5a and bias5b series (Figure 4). (The bias4b and bias5b series have longer cycles and more significant variability.)

The more significant variability of the SD can also be observed visually on the individual value plots (Figure 5). (The less dispersed first five examples are from the bias1 series (constant bias, normal distribution).)

The influence of bias variation on the medium-term means (and relative biases) was studied by calculating the ranges (the differences between the minimum and maximum values in 10,000 simulated data) of the moving averages of 17, 31, and 97 values. The range of a single computer-simulated value was used as a comparison. These values were chosen to be prime numbers, avoiding being multiples of 10 and 25.
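The range calculation on moving averages can be sketched as follows. This is not the authors' simulation: the noise SD, the sawtooth bias, and its cycle length are hypothetical, and only the window lengths (17, 31, and 97) and the series length (10,000) are taken from the text.

```python
import random

def moving_average_range(values, window):
    """Range (max - min) of the simple moving average with the given window."""
    running = sum(values[:window])
    lo = hi = running / window
    for i in range(window, len(values)):
        running += values[i] - values[i - window]   # slide the window by one value
        avg = running / window
        lo, hi = min(lo, avg), max(hi, avg)
    return hi - lo

rng = random.Random(3)
n_values, cycle = 10000, 200   # series length from the text; cycle length assumed
noise = [rng.gauss(0.0, 1.0) for _ in range(n_values)]   # assumed random error, SD = 1

constant_bias = noise   # constant bias of zero: normally distributed values
sawtooth_bias = [3.0 * (t % cycle) / cycle - 1.5 + e for t, e in enumerate(noise)]

ranges = {}
for window in (17, 31, 97):
    ranges[window] = (
        moving_average_range(constant_bias, window),
        moving_average_range(sawtooth_bias, window),
    )
    print(f"window={window:2d}  constant bias: {ranges[window][0]:.2f}  sawtooth bias: {ranges[window][1]:.2f}")
```

For the normally distributed series, the range shrinks quickly as the window grows; for the series with a variable bias, it stays wide because averaging does not remove the bias variation.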

The ranges of the normally distributed values of the constant bias (bias1_1) are borderline relative to the estimated limits. Because the probability of having no values over the limits among 10,000 simulated values is only about 37% ($0.9999^{10000} \approx 0.368$), it is predictable that the estimated limits will be slightly exceeded. Meanwhile, in all the other bias series, the variability of the biases is significantly larger than could be expected from a normal distribution (Table 3). As in the case of the SD, the most significant increases in the variability of the mean were obtained in the cases bias4c, bias5a, and bias5b (Table 3). The phenomenon can also be observed on the boxplots of the moving averages (Figure 8).
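The probability quoted for the constant-bias series follows from elementary arithmetic, shown here as a short check. It assumes, as the text implies, that each of the 10,000 values independently stays within the limits with probability 0.9999.

```python
import math

p_within = 0.9999    # per-value probability of staying within the limits
n_values = 10000     # number of simulated values

p_none_outside = p_within ** n_values          # no value exceeds the limits
p_at_least_one = 1.0 - p_none_outside          # at least one value exceeds them
expected_outside = n_values * (1.0 - p_within) # expected number of exceedances

print(f"P(no value outside)       = {p_none_outside:.4f}")
print(f"P(at least one outside)   = {p_at_least_one:.4f}")
print(f"expected values outside   = {expected_outside:.2f}")
print(f"exp(-1) for comparison    = {math.exp(-1):.4f}")
```

The result is close to exp(-1), so on average one value out of 10,000 is expected to fall outside the limits, and a borderline exceedance is the more likely outcome.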

The mathematical deductions and computer simulations confirm the experimental data from the literature and from the day-to-day experience of the authors about the significant variability of the means and . Monthly values of , as well as monthly means and bias variations, do not follow the laws of a normal distribution. Long-term QC data are not normally distributed. This observation supports assumptions 1, 4, and 6. The reason for this non-Gaussian behavior lies in the unequal cycles in bias variation.

Although the repeatability standard deviation $s_r$ is defined under repeatability conditions, it can still be separated and determined from long-term data. This is possible using methods such as EP15-A3 [25] or variants of the Dahlberg method [11]. This separation works because the time-dependent component of bias is not a random error component. If it were truly random, separating $s_r$ from long-term data would not be possible. The phenomenon highlights once more that, in repeatability conditions, it is not the short time frame but the constant conditions that are important.

It is important to distinguish between the two types of unpredictable variation:

True random error changes unpredictably in replicated measurements, consistent with the VIM3 2.19 definition.

Random variation in bias, an alternation between predictable periods and unpredictable changes in the mean, is not consistent with the VIM3 2.19 definition because in replicated measurements, it may be predictable. It behaves differently and follows distinct patterns.

Understanding this difference is crucial for accurately interpreting measurement data and designing reliable quality control procedures.

Another conclusion is that the correct estimator of the σ parameter is $s_r$, not $s_{RW}$. According to Equations (3), (17), and (18), the latter also contains a systematic component, which causes the non-Gaussian distribution of the long-term control data.

Equation (4) can only be used correctly when the conditions are stable, meaning that both the standard deviation (σ) and the mean (μ) stay constant. If the conditions change, then the measured standard deviation (SD) does not represent the random error alone. Instead, it shows the total variation in the data, which comes from two simultaneous sources: the random error and the variations in bias. These two effects must be separated and understood individually. This challenges the current paradigm, which often treats $s_{RW}$ as the estimator for σ. However, this approach is based on a series of flawed assumptions:

The conditions required for applying the normal distribution are not verified.

It is wrongly assumed that long-term control data follows a normal distribution.

There is confusion between parameters representing random and variable error components.

The distinction between constant and variable bias components is often ignored.

The definition of “the bias” is uncertain: constant and variable bias components are not distinguished, nor are the biases measured in repeatability and intermediate (reproducibility within laboratory) conditions, which permits confusion between bias types.

Correcting the wrong use of $s_{RW}$ as the estimator of the σ parameter is especially important in clinical laboratory quality control, which is based on the Westgard rules [14]. The long-term success of the Westgard-rules-based QC can be explained by two compensating errors: by using $s_{RW}$ as the estimator of σ (instead of the correct $s_r$), larger decision limits are used, increased in proportion to the $s_{RW}$/$s_r$ ratio.

As JO Westgard and T. Groth acknowledged, “The calculations based on computer simulations behind the power function graphs are made assuming ‘within-run (repeatability) SD’, while the graphs are designed with ‘total SD’” [14]. (The statement contradicts QP1.) In this way, hundreds of predictable false alarms are avoided in the case of incorrigible biases. (As mentioned in the introduction, as the first among the assumed facts, half of the monthly mean biases measured in the internal QC are ≈1

. Based on the laws of the normal distribution, it can be predicted that in the case of a bias of only 1SD, assuming three control runs/day on two levels for each measurand, daily R

1–2S false warnings will occur [1].) This compensation is not accurate, as proven by several false alarms in practice (after calibration, no improvement is obtained). A new quality control system is necessary, which simultaneously corrects both errors. The necessity of reevaluating all equations used in the QC that contain SD in their formula will be discussed in Section 5.3.
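The prediction of daily false warnings for a bias of 1 SD can be reproduced with the normal distribution alone. The sketch below assumes a ±2 SD warning limit, normally distributed random error, and independent control results; the function name is illustrative, and the six results per day correspond to the three control runs on two levels mentioned in the text.

```python
from statistics import NormalDist

def warning_probability(bias_in_sd, limit_in_sd=2.0):
    """Probability that one control result falls outside +/- limit, given a bias (both in SD units)."""
    z = NormalDist()
    above = 1.0 - z.cdf(limit_in_sd - bias_in_sd)   # result above the upper limit
    below = z.cdf(-limit_in_sd - bias_in_sd)        # result below the lower limit
    return above + below

results_per_day = 3 * 2   # three control runs per day on two levels, per measurand

p_in_control = warning_probability(0.0)
p_biased = warning_probability(1.0)
expected_per_day = results_per_day * p_biased
p_at_least_one = 1.0 - (1.0 - p_biased) ** results_per_day

print(f"P(warning | no bias)        = {p_in_control:.4f}")
print(f"P(warning | bias = 1 SD)    = {p_biased:.4f}")
print(f"expected warnings per day   = {expected_per_day:.2f}")
print(f"P(at least one per day)     = {p_at_least_one:.2f}")
```

Under these assumptions, a bias of 1 SD raises the per-result warning probability from under 5% to about 16%, which amounts to roughly one warning per day for each measurand.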

5.1. Misinterpretation of Time-Dependent Bias as Random Error

The clinical laboratory has its particularities in comparison with other domains in which metrology is used. The samples are more complex, the errors are often larger, and the variability in reagents is more significant. Because of these differences, it is not surprising that the questionable theory of transforming variable bias components into random phenomena originated in the clinical laboratory literature. Behind this theory lies a binary way of thinking: the belief that there is one “correct” standard deviation (SD) that should be used in all situations, specifically $s_{RW}$. This idea contradicts QP1, which recognizes that different conditions may require different estimators. The theory is based on a misinterpretation of the VIM3 2.19 [2] definition, more precisely of the ‘unpredictable in replicate measurements’ expression, considering all unpredictable phenomena as random.

The transformation of systematic error (SE) into random error is purely subjective. It is based on the assumption that once an unpredictable change occurs, the systematic error becomes unpredictable. Randomness in the metrological sense is an objective phenomenon, whereas unpredictable is subjective, depending on our ability to predict.

Two criteria serve to make a distinction between objectively transformed and subjectively perceived as transformed phenomena: (1) what is transformed becomes unmeasurable (e.g., we cannot measure the amount of ice in the water after melting); (2) what is transformed loses its properties (e.g., melted ice loses its form).

The random error (RE) (more precisely, the estimator of σ, $s_r$) can be determined from long-term data. Meanwhile, the non-Gaussian distribution of the long-term control data proves that the VCSE(t) maintains its influence on the distribution.

5.2. The VIM3 2.19 Definition of the Random Measurement Error

The VIM3 2.19 definition of the random measurement error is the “…component of the measurement error that in replicate measurements varies in an unpredictable manner”. Focusing on the ‘in replicate measurements’ expression, the definition does not leave room for incorrect interpretations. A correct interpretation is an unpredictable variation between all consecutive measurements, not simply ‘unpredictable.’

The misinterpretations in the literature [6,21] suggest that there is room for increasing the accuracy of the definition. There is a common tendency to treat any unpredictable phenomenon as random. In metrology, the term ‘random’ has a narrower and more specific meaning than ‘unpredictable’. The confusion between the terms ‘random’ and ‘variable’ is traceable back to WA Shewhart, who, referring to SE variations, stated the following [8]: “The causes of this variability are, in general, unknown”. The consequence is our limited subjective capacity to predict. To avoid such confusion, an additional note to the VIM3 2.19 [2] definition is necessary, as follows:

(Proposed note) “Unpredictable has a wider meaning than random (in the metrological sense). Unpredictable in replicate measurements must be understood as an unpredictable variation between each consecutive pair of measurements.”

5.3. The Subcomponents of the Systematic Measurement Error

is calculated from the

differences, which can be identified with the VCSE(t) function. According to QP3, due to the uncertainties of the determinations and the inherent errors of the corrective actions, the bias cannot be efficiently eliminated, and the constant component of systematic error (CCSE) exists, confirming the experience, the literature data [10], and the validity of Equation (2).

The CCSE can be identified with the long-term mean bias. According to QP4, only this component can be efficiently corrected. The $B_r(t)$ correction is theoretically possible, but it is an ephemeral solution, because the bias reappears. Due to its variability, the $B_r(t)$ value has a short validity term, and a correction applied after this term expires, as the GUM [2,5] B.2.23 and B.2.24 recommend, is risky (it may increase the bias). By being consistent with the error model described in Equations (2) and (3), and by distinguishing between the bias measured in constant (repeatability) conditions and the bias measured in variable (intermediate, reproducibility within laboratory) conditions, two sets of error parameters are obtained: $B_r(t)$ and $s_r$ for repeatability conditions, and the long-term mean bias and $s_{RW}$ for reproducibility within laboratory conditions. These define two different TE equations. (z is a confidence factor, usually z = 2, corresponding to approximately 95% confidence.)

In repeatability conditions:

In reproducibility within laboratory conditions:

The mathematical demonstration in the Results section has uncovered that the VCSE(t) is hidden in

and

too. Therefore, all equations that simultaneously contain these two parameters include redundant VCSE(t) and are erroneous. For example:

Usually, this error is committed when the SE is expressed as ‘The bias’ with a definite article, containing a definitional uncertainty. (Which bias?) Such equations usually take the following form:

(Equation (23)-type formulas are frequently used in the clinical laboratory literature, substituting the bias value determined in the last EQA round, which is a ; therefore, practically the redundant Equation (22) is used.)

To avoid such errors, there is an imperative need to distinguish between the bias types. Bias evaluations (whatever the methodology) do not determine ‘the bias’, but either the bias measured in repeatability conditions, $B_r(t)$, or the long-term mean bias. It is necessary to standardize (names and abbreviations) and define the bias subcomponents in VIM3. To be consistent with VIM3 2.17, AB Vandra [16,17] proposed the following definitions:

The constant component of the systematic error is “The component of measurement error that in replicate measurements remains constant”.

The variable component of the systematic error is “The component of measurement error that in replicate measurements varies in a predictable manner”.

According to QP1, the conditions for determining the error parameters must be consistent with the conditions of their use. In a laboratory that performs periodic QC (in any domain), there are two types of decisions:

QC decisions: These are short-term decisions, such as whether measurements can proceed or whether corrective actions are needed. These decisions are made under repeatability conditions. In this context, Equation (20) is valid, and correct decisions can be made based on parameters measured in repeatability conditions. This principle is often violated by calculating the decision limits based on $s_{RW}$. Unfortunately, such recommendations exist [14]. The QC must be redesigned in all domains in which the decision limits are calculated with $s_{RW}$, to ensure that decisions are made under appropriate conditions (with decision limits calculated with $s_r$).

The evaluation of the uncertainty of the results is a long-term decision. In this case, Equation (21) is valid. To apply the GUM [2,5] recommendations, the first step is to correct for the bias. As mentioned earlier, a key finding of this study is that only the constant bias component can be corrected efficiently (QP4).

This study demonstrates that a distinction must be made between the constant and variable bias components, and that $s_r$ is the correct estimator of the mean RE (the σ parameter of the Gaussian equation). In this way, it becomes the basis of a fundamental reevaluation of the QC, mainly in the clinical laboratory, but also in other domains. Three main suggestions arise from the study, each calling for further research.

All calculations based on equations that include SD in their formula must be reevaluated, because the correct estimator of σ is not $s_{RW}$ but $s_r$.

A new, more accurate quality control system is necessary, based on $s_r$ as the correct estimator of σ, which also efficiently avoids the incorrigible biases.

The model suggests that efficient bias corrections, such as GUM B.2.23 and B.2.24 recommendations, are possible only for the constant bias component (QP4).

Although these conclusions were drawn from the authors' experience in the clinical laboratory, they may also be applicable in other domains of metrology, which motivates further research.