Abstract

To support safe human–robot interaction in collaborative agricultural environments, a field experiment was performed in which data were acquired from wearable sensors placed at five body locations on 20 participants. The human–robot collaborative task involved six well-defined, continuous sub-activities, which were executed under several variants to capture, as far as possible, the different ways in which synergistic actions can be carried out in the field. The resulting dataset has been made publicly accessible, enabling future meta-studies on machine learning models for human activity recognition and on ergonomics aimed at identifying the main risk factors for injury.

1. Introduction

An emerging field in agriculture is collaborative robotics, which takes advantage of the distinctive cognitive abilities of humans and the repeatable accuracy and strength of robots. Because humans and autonomous vehicles share the same workplaces, worker safety is a key factor and sits at the top of the priority list of Industry 5.0 [1]. This human-centric approach is particularly challenging in agriculture, which involves unpredictable and complex environments, in contrast to the structured settings of other industries. A crucial element in achieving safe human–robot interaction is human awareness. Human activity recognition based on wearable sensor data has received considerable attention compared with vision-based techniques, since the latter are prone to visual disturbances such as occlusions and changing lighting. To that end, accelerometers, magnetometers and gyroscopes are often used, either alone or in combination. In general, multi-sensor data fusion is considered more reliable than relying on a single sensor, because information lost by one sensor can be compensated for by the others [2].

In the present study, a collaborative human–robot task was designed, and data were collected during field sessions involving two types of Unmanned Ground Vehicles (UGVs) and twenty healthy participants wearing five Inertial Measurement Units (IMUs). The resulting activity “signatures” of the workers were analyzed, with the potential to increase human awareness in human–robot interaction and to provide useful input for future ergonomic analyses.

2. Materials and Methods

Experimental Setup and Signal Processing

A total of 20 participants (13 male, 7 female) with neither recent musculoskeletal injury nor a history of surgery took part in these outdoor experiments. Their average age, weight and height were 30.95 years (standard deviation ≈ 4.85), 75.4 kg (standard deviation ≈ 17.2) and 1.75 m (standard deviation ≈ 0.08), respectively. Prior to participation, each participant completed an informed consent form approved by the Institutional Ethical Committee, and a five-minute guided warm-up was performed to reduce the risk of injury. The collaborative task, which each participant performed three times, comprised: (a) walking an unobstructed distance of 3.5 m; (b) lifting a crate (either empty or with a total mass equal to 20% of the participant's body mass); (c) carrying the crate back to the starting point; and (d) placing the crate on a stationary UGV (Husky; Clearpath Robotics Inc. or Thorvald; SAGA Robotics, Oslo, Norway). A common plastic crate (height = 31 cm, width = 53 cm, depth = 35 cm) was used, with a tare mass of 1.5 kg and two handles located 28 cm above its base. The loading heights of Husky and Thorvald were approximately 40 and 80 cm, respectively.
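Because the loaded condition is defined relative to body mass, the payload differs from participant to participant. The short worked example below computes it for a participant of the cohort's mean mass (75.4 kg). It is a sketch that assumes the 1.5 kg tare counts toward the 20% total, which the text does not state explicitly; the helper name `crate_payload_kg` is hypothetical.

```python
CRATE_TARE_KG = 1.5   # tare mass of the empty crate (from the setup description)
LOAD_FRACTION = 0.20  # loaded condition: total crate mass = 20% of body mass


def crate_payload_kg(body_mass_kg: float) -> float:
    """Hypothetical helper: mass to add to the empty crate so that
    crate + payload equals LOAD_FRACTION of the participant's body mass.
    Assumes the tare is included in the 20% total."""
    total_kg = LOAD_FRACTION * body_mass_kg
    return total_kg - CRATE_TARE_KG


if __name__ == "__main__":
    mean_mass_kg = 75.4  # cohort mean body mass
    print(f"total {LOAD_FRACTION * mean_mass_kg:.2f} kg, "
          f"payload {crate_payload_kg(mean_mass_kg):.2f} kg")
```

Under the alternative reading (20% of body mass added on top of the tare), the tare subtraction would simply be dropped.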

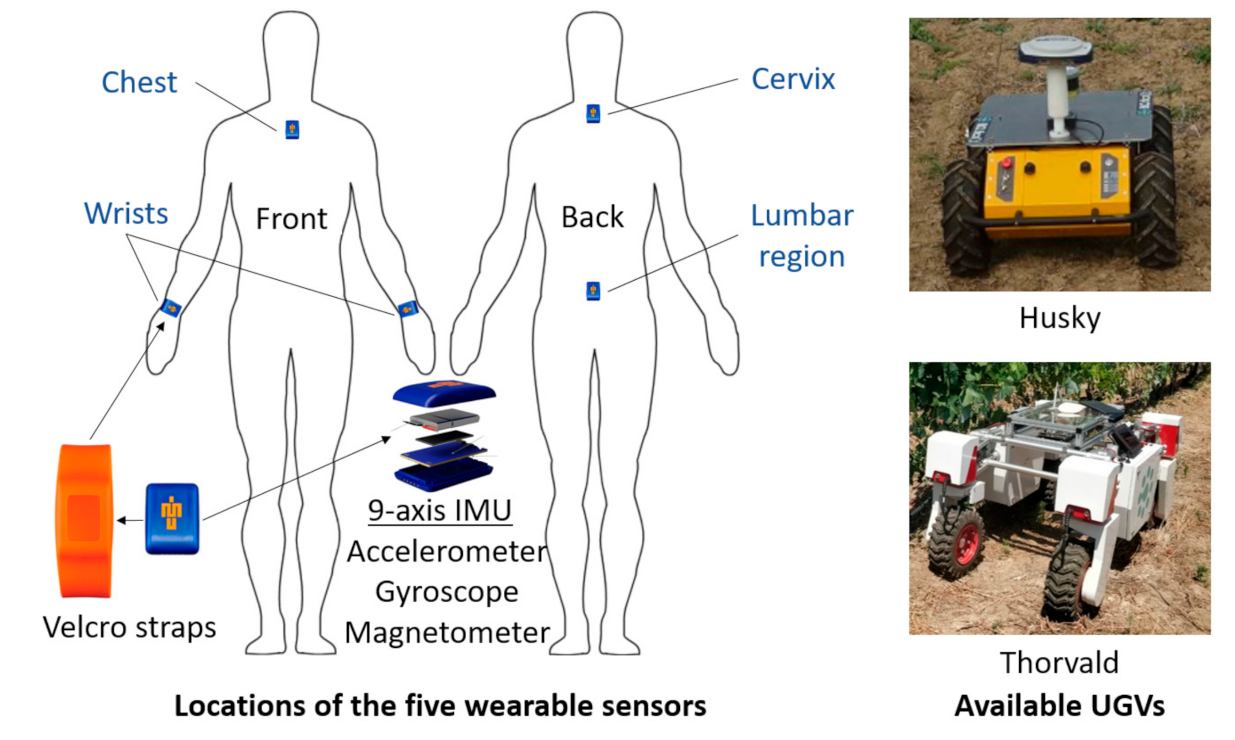

Five IMU sensors (Blue Trident, Vicon, Nexus, Oxford, UK), a type widely used in such studies, were employed in the present experiments. Each wearable sensor contains a tri-axial accelerometer, a tri-axial magnetometer and a tri-axial gyroscope. Three sensors were attached with double-sided tape to the chest (sternum), the first thoracic vertebra (T1, at the base of the neck) and the fourth lumbar vertebra (L4, lumbar region), while Velcro straps were used to attach the remaining two to the left and right wrists (Figure 1). Data were sampled at 50 Hz, and the IMUs were synchronized and the data collected using the Capture.U software provided by Vicon.

Figure 1.

Body locations of the five IMU sensors and the available UGVs used in the experiments.
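The sensor configuration described above yields 5 locations × 3 sensors × 3 axes = 45 signal channels, sampled every 1/50 s = 0.02 s. The sketch below enumerates such channels and builds a matching time axis. The short location names and the `location_sensor_axis` column pattern are hypothetical; the actual file layout and naming are documented with the dataset [4].

```python
from itertools import product

SAMPLING_HZ = 50  # IMU sampling frequency used in the experiments
# Hypothetical short names for the five body locations
LOCATIONS = ["chest", "t1", "l4", "left_wrist", "right_wrist"]
SENSORS = ["acc", "gyr", "mag"]  # accelerometer, gyroscope, magnetometer
AXES = ["x", "y", "z"]           # each sensor is tri-axial


def channel_names() -> list[str]:
    """Hypothetical column names, one per (location, sensor, axis) channel."""
    return [f"{loc}_{sen}_{ax}" for loc, sen, ax in product(LOCATIONS, SENSORS, AXES)]


def time_axis(n_samples: int, fs: float = SAMPLING_HZ) -> list[float]:
    """Timestamps in seconds for n_samples recorded at fs Hz, starting at t = 0."""
    return [i / fs for i in range(n_samples)]


if __name__ == "__main__":
    names = channel_names()
    print(len(names), names[:3])
    print(time_axis(4))
```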

Distinguishing the sub-activities by carefully analyzing the video recordings was particularly challenging, since each participant performed the predefined sub-activities at their own pace and in their own manner, in order to increase the variability of the dataset. The most noticeable difference among participants was the technique used to lift the crate from the ground. These techniques can be grouped into the following main lifting postures [3]: (a) Stooping: bending the trunk forward from an erect position without bending the knees; (b) Squat: bending the knees while keeping the back straight and then standing back up; (c) Semi-squat: an intermediate posture between stooping and squat.

The sub-activities were performed continuously to obtain realistic measurements, which increased the difficulty of labeling. To determine the critical instant at which each transition occurred, the following well-defined criteria were applied: (i) “Standing still”: until the signal to begin is given; (ii) “Walking without the crate”: begins when one of the feet leaves the ground, i.e., at the onset of the swing phase of that leg in the gait cycle; (iii) “Bending”: begins with the onset of forward trunk bending (stooping), knee bending (squat), or both simultaneously (semi-squat); (iv) “Lifting crate”: begins when the crate starts being lifted; (v) “Walking with the crate”: begins, as in (ii), when one foot leaves the ground, this time while carrying the crate; (vi) “Placing crate”: begins with the onset of stooping, squatting or semi-squatting and ends when the entire crate has been placed on either Husky or Thorvald.
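Once the onset instant of each sub-activity has been read from the video according to these criteria, per-sample labels for the 50 Hz IMU stream follow directly. The sketch below assumes that the onsets are given in seconds on the same clock as the IMU recording and that the integer codes follow the order (i) to (vi); the text only fixes 0 for standing still and 5 for placing the crate. The function `labels_from_onsets` is hypothetical and is not part of the published dataset tooling.

```python
FS = 50  # Hz, IMU sampling frequency used in the experiments

# Assumed integer codes: 0 = standing still and 5 = placing crate are fixed by
# the text; the intermediate codes are assumed to follow criteria (i)-(vi).
SUB_ACTIVITIES = [
    "standing still",         # 0, criterion (i)
    "walking without crate",  # 1, criterion (ii)
    "bending",                # 2, criterion (iii)
    "lifting crate",          # 3, criterion (iv)
    "walking with crate",     # 4, criterion (v)
    "placing crate",          # 5, criterion (vi)
]


def labels_from_onsets(onsets_s, end_s, fs=FS):
    """Return one integer label per IMU sample (hypothetical helper).

    onsets_s: onset time in seconds of each sub-activity, in order (i)-(vi),
              as read from the video; onsets_s[0] is the first sample.
    end_s:    time at which the crate rests on the UGV (end of the trial).
    """
    if len(onsets_s) != len(SUB_ACTIVITIES):
        raise ValueError("expected exactly one onset per sub-activity")
    times = list(onsets_s) + [end_s]
    if any(b < a for a, b in zip(times, times[1:])):
        raise ValueError("onsets must be non-decreasing and precede end_s")
    start = times[0]
    # Convert every boundary to the nearest sample index, which avoids
    # floating-point comparisons between timestamps.
    bounds = [round((t - start) * fs) for t in times]
    labels = []
    for code, (first, stop) in enumerate(zip(bounds, bounds[1:])):
        labels.extend([code] * (stop - first))
    return labels


if __name__ == "__main__":
    # Made-up onset times (s), used only to exercise the function.
    labels = labels_from_onsets([0.0, 1.0, 3.5, 4.3, 5.1, 7.4], 8.6)
    print(len(labels), [labels.count(c) for c in range(len(SUB_ACTIVITIES))])
```

Rounding boundaries to sample indices means each transition is placed on the nearest 20 ms sample, which is finer than the precision achievable from video annotation.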

3. Results and Discussion

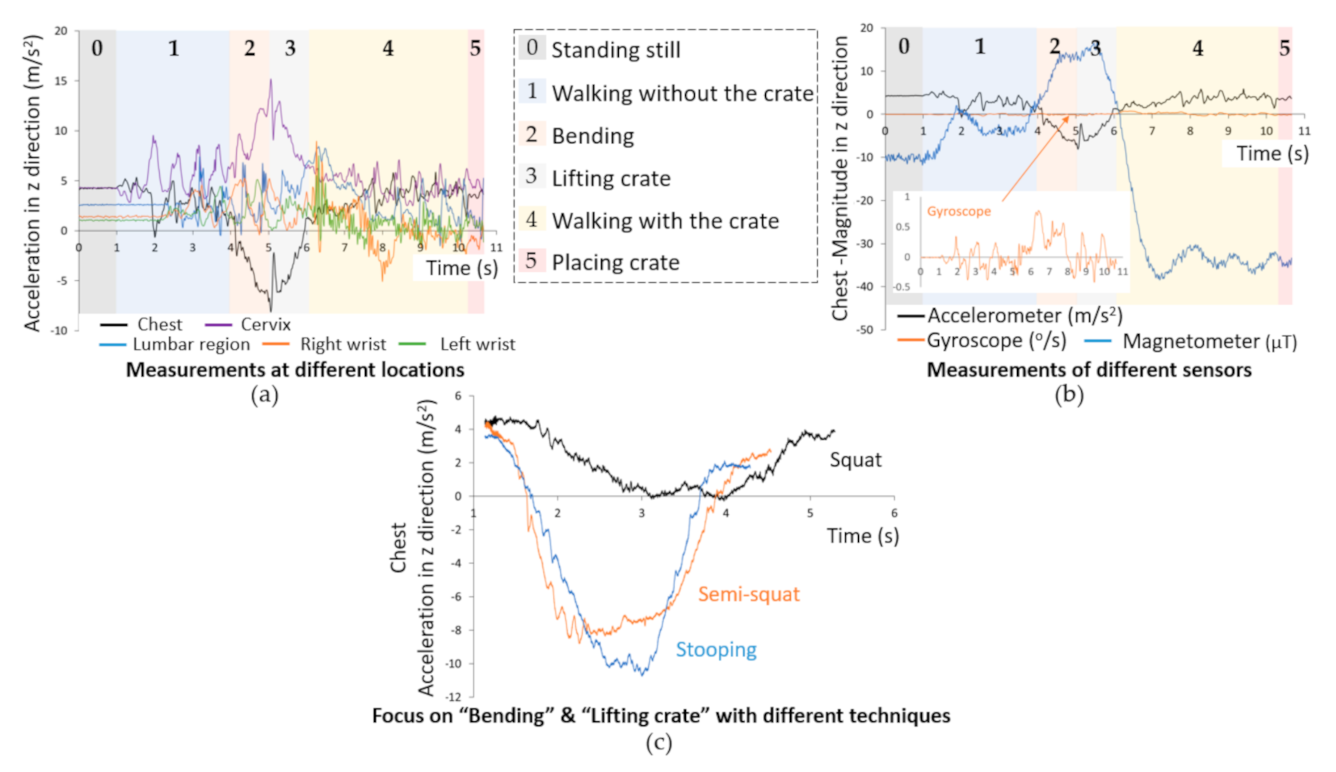

For brevity, only representative raw signals along the z axis are presented here (Figure 2); the full dataset is publicly available [4]. In addition, each sub-activity was assigned an integer label, from 0 (standing still) to 5 (placing crate), so that the data can be used directly in future machine learning studies.

Figure 2.

Representative raw signals along the z axis for the case of loading Thorvald with a crate whose total mass equals 20% of the participant’s mass: (a) acceleration at different body locations; (b) measurements of the different sensors at the chest; (c) acceleration at the chest for the different lifting techniques.

As expected, the most time-consuming sub-activities were walking with and without the crate. In contrast, the transitional sub-activities, namely bending to approach the crate, lifting it and placing it onto the robot, took considerably less time. Consequently, more effort was needed to capture the critical transitional instants by carefully analyzing the video recordings in accordance with the aforementioned criteria. Figure 2a,b clearly shows the distinction between sub-activities. More specifically, Figure 2a depicts the raw signals acquired by the accelerometers at the five body locations. The signals from the wrists and lower back were rather complex, whereas those from the chest and T1 exhibited local maxima or minima whenever a transition took place. Considering, for instance, the acceleration measurements at the chest (Figure 2b), the nearly flat signal corresponding to standing still begins to fluctuate after t = 1 s in an almost periodic manner, reflecting the repetitive phases of the gait cycle. This pattern is abruptly interrupted by an “indentation” in acceleration (accompanied by a corresponding “bulge” in the magnetometer signal). Bending and lifting the crate both occur within this indentation and take approximately equal time. The gait pattern then resumes, whereas the placing sub-activity cannot easily be distinguished, as it appears to be a continuation of the preceding one.
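Statements about which sub-activities take longest can be checked directly on the labelled data by run-length encoding the per-sample labels. The sketch below assumes the 0 to 5 codes follow the order of criteria (i) to (vi) (only 0 and 5 are fixed by the text) and uses a synthetic label sequence, not measured data; `segment_durations` is a hypothetical helper.

```python
from itertools import groupby

FS = 50  # Hz, IMU sampling frequency
# Assumed code-to-name mapping (intermediate codes follow criteria (i)-(vi))
NAMES = {0: "standing still", 1: "walking without crate", 2: "bending",
         3: "lifting crate", 4: "walking with crate", 5: "placing crate"}


def segment_durations(labels, fs=FS):
    """Run-length encode a per-sample label sequence.

    Returns a list of (label, duration_s) tuples, one per contiguous segment.
    """
    return [(code, sum(1 for _ in run) / fs) for code, run in groupby(labels)]


if __name__ == "__main__":
    # Synthetic label sequence with made-up segment lengths (not real data).
    labels = [0] * 50 + [1] * 125 + [2] * 40 + [3] * 40 + [4] * 115 + [5] * 60
    for code, dur in segment_durations(labels):
        print(f"{NAMES[code]:<22} {dur:.2f} s")
```

Working per contiguous segment, rather than summing samples per label, keeps repeated occurrences of the same sub-activity separate if they appear in a recording.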

A notable feature that emerged from the signal analysis was the differing shape of the signals, especially during bending and lifting the crate. This could be attributed to the various experimental variants (e.g., full or empty crate, different loading heights, tasks performed at each participant’s own pace). However, closer examination of the video recordings revealed that the differences in these time series were mainly due to the lifting technique. As can be seen in Figure 2c, the squat technique produced a relatively shallow indentation that lasted longer than with the other two techniques. The squat was used by a minority of participants, whereas stooping and semi-squat, which produced deeper indentations, were used in the majority of cases. In general, a squat lift places less stress on the spine, whereas stooping, although more natural, is considered the primary risk factor for low back disorders. Stooping, especially when performed repetitively, appears to be the most common lifting technique in agricultural activities, which helps explain the epidemic proportions of low back injuries in this sector [3,5]. The semi-squat is an intermediate posture between squat and stooping that avoids both the deep knee flexion of the squat and the full lumbar flexion of stooping. The optimal lifting posture remains controversial, since each has drawbacks in terms of oxygen consumption and fatigue of the spine and knees [3].
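The indentation is described qualitatively above. One possible way to quantify its depth and duration, so that squat and stooping/semi-squat lifts could be compared across trials, is sketched below. The baseline definition (mean acceleration while standing still), the use of codes 2 and 3 (assumed to be bending and lifting) as the window, and the `threshold` parameter are all assumptions of this sketch, not the authors' procedure; `indentation_metrics` is hypothetical. If, for a given sensor orientation, the indentation appears as a peak rather than a dip, the trace would need to be negated first.

```python
from statistics import mean

FS = 50  # Hz, IMU sampling frequency


def indentation_metrics(acc, labels, fs=FS, window_codes=(2, 3), threshold=0.0):
    """Depth and time-below-baseline of the dip in a chest acceleration trace.

    Hypothetical helper. Assumptions (not the authors' procedure):
    - baseline = mean acceleration while standing still (code 0);
    - the indentation spans bending and lifting (assumed codes 2 and 3);
    - a sample counts as "in" the dip if it is more than `threshold` below
      the baseline (samples need not be contiguous).
    Returns (depth, duration_s): depth in the units of `acc`, duration in s.
    """
    if len(acc) != len(labels):
        raise ValueError("acc and labels must have the same length")
    standing = [a for a, c in zip(acc, labels) if c == 0]
    window = [a for a, c in zip(acc, labels) if c in window_codes]
    if not standing or not window:
        raise ValueError("need samples labelled 0 and samples in window_codes")
    baseline = mean(standing)
    depth = baseline - min(window)
    n_below = sum(1 for a in window if a < baseline - threshold)
    return depth, n_below / fs
```

With this definition, a squat lift would be expected to show a smaller depth and a longer duration than a stoop or semi-squat, in line with Figure 2c; whether that holds across the whole dataset would need to be checked.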

In summary, a field experiment involving well-defined continuous sub-activities was designed, and data were collected via wearable sensors. The dataset exhibits large variability, owing to the inclusion of numerous experimental parameters. It has been made publicly accessible [4] and is expected to be particularly useful for future research on machine learning and ergonomics.

Author Contributions

Conceptualization, A.C.T., L.B., D.B.; methodology, L.B., E.A., A.A.; writing—original draft preparation, A.C.T., L.B., D.K.; writing—review and editing, D.K., D.B.; visualization, L.B., A.A., E.A.; supervision, D.B. All authors have read and agreed to the published version of the manuscript.

Institutional Review Board Statement

The study was conducted according to the guidelines of the Declaration of Helsinki, and approved by the Ethical Committee under the identification code 1660 on 3 June 2020.

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

The dataset used in this work is publicly available at [4], where additional information about the experimental procedure, file naming and sub-activity mapping is also provided.

Acknowledgments

This research has been partly supported by the Project: “Human-Robot Synergetic Logistics for High Value Crops” (project acronym: SYNERGIE), funded by the General Secretariat for Research and Technology (GSRT) under reference no. 2386.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Benos, L.; Bechar, A.; Bochtis, D. Safety and ergonomics in human-robot interactive agricultural operations. Biosyst. Eng. 2020, 200, 55–72.

2. Anagnostis, A.; Benos, L.; Tsaopoulos, D.; Tagarakis, A.; Tsolakis, N.; Bochtis, D. Human activity recognition through recurrent neural networks for human-robot interaction in agriculture. Appl. Sci. 2021, 11, 2188.

3. Benos, L.; Tsaopoulos, D.; Bochtis, D. A review on ergonomics in agriculture. Part I: Manual operations. Appl. Sci. 2020, 10, 1905.

4. Open Datasets—iBO. Available online: https://ibo.certh.gr/open-datasets/ (accessed on 1 March 2021).

5. Benos, L.; Tsaopoulos, D.; Bochtis, D. A review on ergonomics in agriculture. Part II: Mechanized operations. Appl. Sci. 2020, 10, 3484.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).