An Iterative Hybrid Algorithm for Roots of Non-Linear Equations

Abstract

1. Introduction

2. Background, Definitions

2.1. Bisection Method

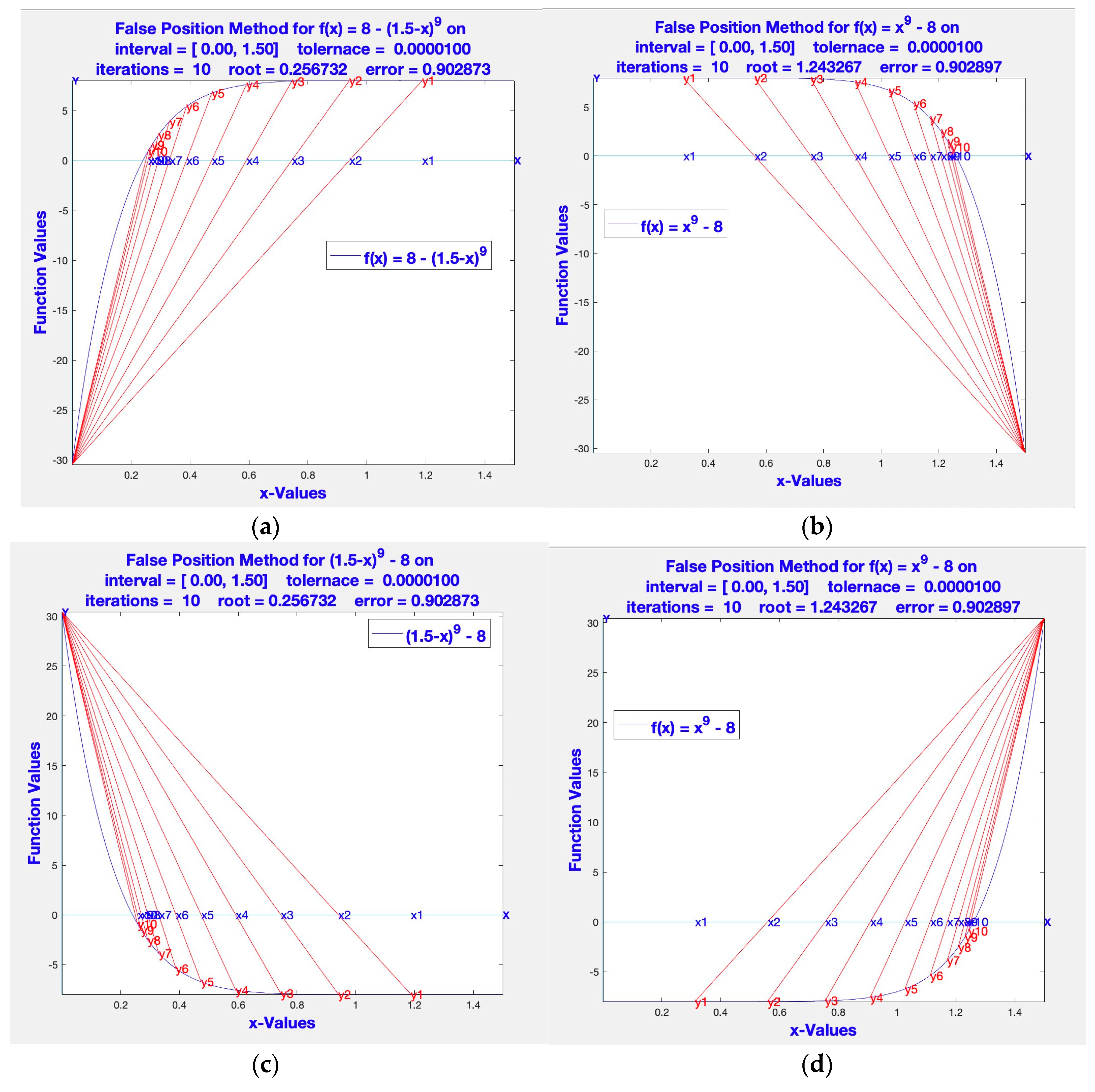

2.2. False Position (Regula Falsi) Method
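As an illustration of the false position idea, the following is a minimal sketch, not the paper's implementation. The function name `false_position`, the parameters `tol` and `max_iter`, and the stopping rule on successive iterates are assumptions made for this example.

```python
def false_position(f, a, b, tol=1e-7, max_iter=100):
    """Regula falsi on a bracket [a, b] with f(a) * f(b) < 0 (illustrative sketch)."""
    fa, fb = f(a), f(b)
    if fa * fb > 0:
        raise ValueError("f(a) and f(b) must have opposite signs")
    x_old = None
    x = a
    for it in range(1, max_iter + 1):
        # x-intercept of the secant line through (a, fa) and (b, fb)
        x = b - fb * (b - a) / (fb - fa)
        fx = f(x)
        # Assumed stopping rule: exact zero or a small change between iterates
        if fx == 0 or (x_old is not None and abs(x - x_old) < tol):
            return x, it
        # Keep the sub-interval on which the sign change persists
        if fa * fx < 0:
            b, fb = x, fx
        else:
            a, fa = x, fx
        x_old = x
    return x, max_iter


# Example: one of the test functions from the empirical section, on the bracket [1, 2]
fp_root, fp_iters = false_position(lambda x: x**3 + 4 * x**2 - 10, 1.0, 2.0)
print(fp_root, fp_iters)
```

On a convex function such as this one, one end of the bracket stays fixed, so convergence is only linear; this is the usual motivation for blending false position with other steps.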

2.3. Dekker’s Method

2.4. Brent’s Algorithm

Detour to Reverse Quadratic Interpolation

2.5. Newton-Raphson (1760)

2.6. Oghovese-John Method (2014)

2.7. Grau-Diaz-Barero (2006)

2.8. Sharma-Guha (2007)

2.9. Khattri-Abbasbandy (2011)

2.10. Fang-Chen-Tian-Sun-Chen (2011)

2.11. Jayakumar (2013)

2.12. Nora-Imran-Syamsudhuha (2018)

2.13. Weerakoon et al. (2000)

2.14. Edmond Halley (1995)

| Method | Year | Name in Tables 2–5 | EFF |
|---|---|---|---|
| Newton–Raphson | 1760 | MN_R | 1.4142 |
| Edmond Halley | 1995 | NHAL | 1.4422 |
| Weerakoon–Fernando | 2000 | MWFhm3 | 1.4310 |
| Grau–Diaz–Barero | 2006 | MGDB | 1.5651 |
| Sharma–Guha | 2007 | MSG | 1.5651 |
| Fang–Chen–Tian–Sun–Chen | 2011 | FCTSC | 1.3480 |
| Khattri–Abbasbandy | 2011 | MKA | 1.4860 |
| Jayakumar | 2013 | MJKs | 1.3161 |
| Jayakumar | 2013 | MJKhs | 1.3161 |
| Oghovese–John | 2014 | MOJmis | 1.2599 |
| Nora–Imran–Syamsudhuha | 2018 | MNIS | 1.5651 |
| Hybrid Method | 2021 | Hybridn | 1.5874 |
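The EFF values are consistent with the classical efficiency index p^(1/n), where p is the convergence order and n is the number of function evaluations per iteration (the Order and nofe columns of the per-function summary tables). The short sketch below recomputes a few of them; the helper name `efficiency_index` and the dictionary layout are assumptions made for this example.

```python
def efficiency_index(order, evals_per_iter):
    """Efficiency index p**(1/n): order p, n function evaluations per iteration."""
    return order ** (1.0 / evals_per_iter)


# (Order, nofe) pairs as listed in the per-function summary tables
methods = {
    "MN_R": (2, 2),
    "NHAL": (3, 3),
    "MGDB": (6, 4),
    "FCTSC": (6, 6),
    "MOJmis": (2, 3),
    "Hybridn": (4, 3),
}

eff = {name: round(efficiency_index(p, n), 4) for name, (p, n) in methods.items()}
for name, value in eff.items():
    print(f"{name:8s} {value:.4f}")
```

The same tables also satisfy NOFE = nofe × NIters, so a method with a high efficiency index can still be expensive if it needs many iterations.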

3. Three Way Hybrid Algorithm

Hybrid Algorithm

4. Convergence of Hybrid Algorithm

5. Empirical Results of Simulations

| Method / Function | x^3 − x^2 − x − 1 | exp(x) + x − 6 | x^3 + log(x) | sin(x) − x/2 | (x − 1)^3 − 1 | x^3 + 4x^2 − 10 | (x − 2)^23 − 1 | sin(x)^2 − x^2 + 1 | 8 − x + log2(x) | 1.0/(x − 3) − 6 | x^9 − 8 | x^0.5 − 1 | 0.7x^5 − 8x^4 + 44x^3 − 90x^2 + 82x − 25 | 5x^3 − 5x^2 + 6x − 2 | 0.5x^3 − 4x^2 + 5.5x − 1 | 5x^3 − 5x^2 + 6x − 2 | −0.6x^2 + 2.4x + 5.5 | x^8 − 1 | sin(x) − x^3 | 8exp(−x)sin(x) − 1 | sin(x) + x | (0.8 − 0.3*x)/x |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| MN_R | 34 | 14 | 10 | 10 | 6 | 8 | 22 | 16 | 14 | 18 | 42 | 14 | 12 | 8 | 12 | 8 | 6 | 52 | 14 | 6 | 6 | 10 |
| MNIS | 40 | 44 | 60 | 76 | 28 | 28 | 84 | 76 | 64 | 60 | 180 | 220 | 44 | 60 | 76 | 76 | 68 | 84 | 44 | 56 | 28 | 60 |
| MKA | 27 | 27 | 39 | 51 | 15 | 18 | 57 | 51 | 42 | 42 | 153 | 114 | 42 | 39 | 63 | 63 | 45 | 51 | 21 | 39 | 21 | 33 |
| MSG | 28 | 12 | 39 | 8 | 8 | 8 | 64 | 28 | 12 | 12 | 36 | 12 | 12 | 8 | 32 | 8 | 8 | 248 | 16 | 8 | 12 | 12 |
| FCTSC | 600 | 12 | 12 | 12 | 6 | 6 | 18 | 12 | 36 | 18 | 60 | 18 | 12 | 12 | 18 | 12 | 12 | 18 | 12 | 6 | 18 | 18 |
| MGDB | 20 | 8 | 32 | 8 | 8 | 8 | 16 | 12 | 12 | 12 | 36 | 16 | 12 | 8 | 32 | 8 | 8 | 36 | 12 | 4 | 12 | 12 |
| MOJmis | 81 | 15 | 9 | 9 | 6 | 6 | 39 | 21 | 21 | 18 | 42 | 12 | 12 | 6 | 9 | 6 | 6 | 3 | 15 | 6 | 12 | 12 |
| MWFhm3 | 50 | 10 | 10 | 10 | 5 | 10 | 15 | 15 | 20 | 15 | 40 | 15 | 10 | 10 | 10 | 10 | 5 | 40 | 10 | 5 | 10 | 10 |
| MJKs | 36 | 20 | 12 | 12 | 8 | 8 | 72 | 28 | 28 | 24 | 56 | 20 | 16 | 12 | 20 | 12 | 8 | 4 | 24 | 8 | 8 | 16 |
| MJKhs | 36 | 16 | 12 | 12 | 8 | 8 | 28 | 24 | 28 | 20 | 52 | 16 | 16 | 8 | 12 | 8 | 8 | 4 | 12 | 8 | 12 | 12 |
| NHAL | 27 | 12 | 27 | 12 | 9 | 9 | 15 | 18 | 27 | 9 | 198 | 45 | 12 | 12 | 18 | 12 | 15 | 18 | 18 | 9 | 30 | 24 |
| Hybridn | 15 | 6 | 6 | 6 | 3 | 6 | 12 | 9 | 9 | 9 | 24 | 9 | 6 | 6 | 6 | 6 | 3 | 3 | 9 | 3 | 3 | 9 |

Summary for Comparison of Methods for Function sin(x) − x^3, Initial value 0.500

Max Iterations = 100, Error tolerance = 0.0000001000

| Method | Order | nofe | NIters | NOFE | COC | EFF | Root | \|x_n − x_(n−1)\| | Function Value |
|---|---|---|---|---|---|---|---|---|---|
| MN_R | 2 | 2 | 7 | 14 | 2.01 | 1.4142136 | −0.928626 | 6.547 × 10^−5 | 0.000000014 |
| MNIS | 6 | 4 | 11 | 44 | 1 | 1.5650846 | −0.928626 | 1.04 × 10^−7 | −0.000000085 |
| MKA | 4 | 3 | 7 | 21 | 1 | 1.5874011 | 0.9286263 | 9.7 × 10^−8 | 0.000000077 |
| MSG | 6 | 4 | 4 | 16 | 4.29 | 1.5650846 | −0.928626 | 1.13 × 10^−6 | 0 |
| FCTSC | 6 | 6 | 2 | 12 | 4.29 | 1.3480062 | 0 | 0 | 0 |
| MGDB | 6 | 4 | 3 | 12 | 4.29 | 1.5650846 | −0.928626 | 2.56 × 10^−7 | 0 |
| MOJmis | 2 | 3 | 5 | 15 | 3.93 | 1.2599211 | 0.9286263 | 0.000183 | 0 |
| MWFhm3 | 6 | 5 | 2 | 10 | 3.93 | 1.4309691 | −0.928626 | 0 | 0 |
| MJKs | 3 | 4 | 6 | 24 | 3.42 | 1.316074 | 0.9286263 | 1.302 × 10^−5 | 0 |
| MJKhs | 3 | 4 | 3 | 12 | 4.79 | 1.316074 | 0.9286263 | 0.0022525 | 0.000000039 |
| NHAL | 3 | 3 | 6 | 18 | 3 | 1.4422496 | 0 | 0 | 0 |
| Hybridn | 4 | 3 | 3 | 9 | 4.83 | 1.5874011 | −0.928626 | 6.547 × 10^−5 | 0 |

Summary for Comparison of Methods for Function 0.7*x^5 − 8*x^4 + 44*x^3 − 90*x^2 + 82*x − 25, Initial Value 00

Max Iterations = 100, Error tolerance = 0.0000001000

| Method | Order | nofe | NIters | NOFE | COC | EFF | Root | \|x_n − x_(n−1)\| | Function Value |
|---|---|---|---|---|---|---|---|---|---|
| MN_R | 2 | 2 | 6 | 12 | 2.01 | 1.414214 | 0.579409 | 1 × 10^−7 | 0 |
| MNIS | 6 | 4 | 11 | 44 | 1 | 1.565085 | 0.579409 | 1.5 × 10^−8 | −9.9 × 10^−8 |
| MKA | 4 | 3 | 13 | 39 | 1 | 1.587401 | 0.579409 | 1.2 × 10^−8 | −7.9 × 10^−8 |
| MSG | 6 | 4 | 3 | 12 | 5.79 | 1.565085 | 0.579409 | 9.5 × 10^−8 | 0 |
| FCTSC | 6 | 6 | 2 | 12 | 5.79 | 1.348006 | 0.579409 | 7.9 × 10^−8 | −7.9 × 10^−8 |
| MGDB | 6 | 4 | 3 | 12 | 5.79 | 1.565085 | 0.579409 | 1.1 × 10^−8 | 0 |
| MOJmis | 2 | 3 | 4 | 12 | 2.94 | 1.259921 | 0.579409 | 7.83 × 10^−7 | 0 |
| MWFhm3 | 6 | 5 | 2 | 10 | 2.94 | 1.430969 | 0.579409 | 0 | 0 |
| MJKs | 3 | 4 | 4 | 16 | 2.93 | 1.316074 | 0.579409 | 1.16 × 10^−6 | 0 |
| MJKhs | 3 | 4 | 4 | 16 | 2.96 | 1.316074 | 0.579409 | 1.02 × 10^−7 | 0 |
| NHAL | 3 | 3 | 4 | 12 | 3.01 | 1.44225 | 0.579409 | 1.6 × 10^−8 | 0 |
| Hybridn | 4 | 3 | 2 | 6 | 6.76 | 1.587401 | 0.579409 | 0 | 0 |

Summary for Comparison of Methods for Function x^3 + log(x), Initial value 0.100

Max Iterations = 100, Error tolerance = 0.0000001000

| Method | Order | nofe | NIters | NOFE | COC | EFF | Root | \|x_n − x_(n−1)\| | Function Value |
|---|---|---|---|---|---|---|---|---|---|
| MN_R | 2 | 2 | 5 | 10 | 1.94 | 1.4142 | 0.704709 | 3.57 × 10^−7 | 0 |
| MNIS | 6 | 4 | 15 | 60 | 1 | 1.5651 | 0.036264 | 4.7 × 10^−8 | 2.9 × 10^−8 |
| MKA | 4 | 3 | 13 | 39 | 1 | 1.5874 | 0.704709 | 6 × 10^−8 | −0.00000007 |
| MSG | 6 | 4 | 7 | 28 | 6.62 | 1.5651 | 0.036264 | 3.53 × 10^−7 | 0 |
| FCTSC | 6 | 6 | 2 | 12 | 6.62 | 1.3480 | 0.704709 | 0 | 0 |
| MGDB | 6 | 4 | 8 | 32 | 3.22 | 1.5651 | 0.704709 | 0.059996 | 5 × 10^−9 |
| MOJmis | 2 | 3 | 3 | 9 | 4.59 | 1.2599 | 0.704709 | 0.00025 | 0 |
| MWFhm3 | 6 | 5 | 2 | 10 | 5.79 | 1.4310 | 0.704709 | 0 | 0 |
| MJKs | 3 | 4 | 3 | 12 | 6.34 | 1.3161 | 0.704709 | 0.000155 | 0 |
| MJKhs | 3 | 4 | 3 | 12 | 5.71 | 1.3161 | 0.704709 | 8.56 × 10^−5 | 0 |
| NHAL | 3 | 3 | 9 | 27 | 3.04 | 1.4422 | 0.704709 | 0 | 0 |
| Hybridn | 4 | 3 | 2 | 6 | 6.87 | 1.5874 | 0.704709 | 0 | 0 |
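The COC column reports a computational order of convergence. One widely used estimate works from four successive iterates, as sketched below for plain Newton–Raphson on x^3 + log(x) with initial value 0.1. Whether the tables above use exactly this formula is an assumption; the helper names `coc` and `newton_iterates` are hypothetical and introduced only for this example.

```python
import math


def coc(x0, x1, x2, x3):
    """Estimate the order from four successive iterates:
    ln(|x3 - x2| / |x2 - x1|) / ln(|x2 - x1| / |x1 - x0|)."""
    e0 = abs(x1 - x0)
    e1 = abs(x2 - x1)
    e2 = abs(x3 - x2)
    return math.log(e2 / e1) / math.log(e1 / e0)


def newton_iterates(f, df, x0, n):
    """Return [x0, x1, ..., xn] produced by n Newton-Raphson steps."""
    xs = [x0]
    for _ in range(n):
        x = xs[-1]
        xs.append(x - f(x) / df(x))
    return xs


f = lambda x: x**3 + math.log(x)
df = lambda x: 3 * x**2 + 1 / x

xs = newton_iterates(f, df, 0.1, 5)
coc_estimate = coc(*xs[-4:])
print(xs[-1], coc_estimate)
```

For this function the estimate comes out close to 2, the theoretical order of Newton–Raphson. The estimate is only meaningful while successive differences are well above rounding error, which is one reason reported COC values can look erratic for methods that converge in two or three iterations.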

6. Conclusions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

Appendix B

| Symbol | Description |
|---|---|
| f | the function |
| a, xl | lower value of the bracket |
| b, xu | upper value of the bracket |
| es | error stopping criterion |
| imax | upper bound on the number of iterations |
| iter | the number of iterations |
| root | approximate final root |
| roots | approximate iterated roots |
| ea | error at each iteration |
| bl | lower bracket value at each iteration |
| br | upper bracket value at each iteration |
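To show how these symbols fit together, here is a minimal bisection sketch that uses the same names. It is an illustration under stated assumptions, not the appendix code: `n_iter` stands in for `iter` (which would shadow a Python built-in), and `ea` is taken to be the half-width of the current bracket.

```python
def bisection(f, a, b, es=1e-7, imax=100):
    """Bisection on [a, b]; returns (root, n_iter, roots, ea, bl, br)."""
    xl, xu = a, b
    fl = f(xl)
    if fl * f(xu) > 0:
        raise ValueError("f(a) and f(b) must have opposite signs")
    roots, ea, bl, br = [], [], [], []
    root, n_iter = xl, 0
    for n_iter in range(1, imax + 1):
        root = 0.5 * (xl + xu)      # midpoint of the current bracket
        bl.append(xl)               # bracket history, one entry per iteration
        br.append(xu)
        roots.append(root)
        ea.append(0.5 * (xu - xl))  # assumed error measure: half the bracket width
        fr = f(root)
        if fr == 0 or ea[-1] < es:
            break
        if fl * fr < 0:
            xu = root               # sign change lies in [xl, root]
        else:
            xl, fl = root, fr       # sign change lies in [root, xu]
    return root, n_iter, roots, ea, bl, br


root, n_iter, roots, ea, bl, br = bisection(lambda x: x**3 + 4 * x**2 - 10, 1.0, 2.0)
print(root, n_iter)
```

Because the bracket halves at every step, the iteration count depends only on the initial width and `es`, not on the function.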

References

- Dekker, T.J.; Hoffmann, W. Algol 60 Procedures in Numerical Algebra, Parts 1 and 2; Tracts 22 and 23; Mathematisch Centrum: Amsterdam, The Netherlands, 1968.

- Dekker, T.J. Finding a zero by means of successive linear interpolation. In Constructive Aspects of the Fundamental Theorem of Algebra; Dejon, B., Henrici, P., Eds.; Wiley-Interscience: London, UK, 1969; ISBN 978-0-471-20300-1. Available online: https://en.wikipedia.org/wiki/Brent%27s_method#Dekker’s_method (accessed on 3 March 2021).

- Brent’s Method. Available online: https://en.wikipedia.org/wiki/Brent%27s_method#Dekker’s_method (accessed on 12 December 2019).

- Press, W.H.; Teukolsky, S.A.; Vetterling, W.T.; Flannery, B.P. Van Wijngaarden–Dekker–Brent Method. In Numerical Recipes: The Art of Scientific Computing, 3rd ed.; Cambridge University Press: New York, NY, USA, 2007; ISBN 978-0-521-88068-8.

- Sivanandam, S.; Deepa, S. Genetic algorithm implementation using matlab. In Introduction to Genetic Algorithms; Springer: Berlin/Heidelberg, Germany, 2008; pp. 211–262.

- Moazzam, G.; Chakraborty, A.; Bhuiyan, A. A robust method for solving transcendental equations. Int. J. Comput. Sci. Issues 2012, 9, 413–419.

- Oghovese, O.; John, E.O. New Three-Steps Iterative Method for Solving Nonlinear Equations. IOSR J. Math. (IOSR-JM) 2014, 10, 44–47.

- Fitriyani, N.; Imran, M.; Syamsudhuha, A. Three-Step Iterative Method to Solve a Nonlinear Equation via an Undetermined Coefficient Method. Glob. J. Pure Appl. Math. 2018, 14, 1425–1435.

- Darvishi, M.T.; Barati, A. A third-order Newton-type method to solve system of nonlinear equations. Appl. Math. Comput. 2007, 187, 630–635.

- Weerakoon, S.; Fernando, T.G.I. A variant of Newton’s method with accelerated third-order convergence. Appl. Math. Lett. 2000, 13, 87–93.

- Khattri, S.K.; Abbasbandy, S. Optimal Fourth Order Family of Iterative Methods. Mat. Vesn. 2011, 63, 67–72.

- Ostrowski, A.M. Solutions of Equations and System of Equations; Academic Press: New York, NY, USA; London, UK, 1966.

- Cordero, A.; Torregrosa, J.R. Variants of Newton’s Method using fifth-order quadrature formulas. Appl. Math. Comput. 2007, 190, 686–698.

- Jayakumar, J. Generalized Simpson-Newton’s Method for Solving Nonlinear Equations with Cubic Convergence. IOSR J. Math. (IOSR-JM) 2013, 7, 58–61.

- Sabharwal, C.L. Blended Root Finding Algorithm Outperforms Bisection and Regula Falsi Algorithms. Mathematics 2019, 7, 1118.

- Chun, C. Iterative method improving Newton’s method by the decomposition method. Comput. Math. Appl. 2005, 50, 1559–1568.

- Hasanov, V.I.; Ivanov, I.G.; Nedjibov, G. A new modification of Newton’s method. Appl. Math. Eng. 2002, 27, 278–286.

- Singh, M.K.; Singh, A.K. A Six-Order Method for Non-Linear Equations to Find Roots. Int. J. Adv. Eng. Res. Dev. 2017, 4, 587–592.

- Osinuga, I.A.; Yusuff, S.O. Quadrature based Broyden-like method for systems of nonlinear equations. Stat. Optim. Inf. Comput. 2018, 6, 130–138.

- Babajee, D.K.R.; Dauhoo, M.Z. An analysis of the properties of the variants of Newton’s method with third order convergence. Appl. Math. Comput. 2006, 183, 659–684.

- Grau, M.; Diaz-Barrero, J.L. An Improvement to Ostrowski Root-Finding Method. Appl. Math. Comput. 2006, 173, 450–456.

- Sharma, J.R.; Guha, R.K. A Family of Modified Ostrowski Methods with Accelerated Sixth Order Convergence. Appl. Math. Comput. 2007, 190, 111–115.

- Fang, L.; Chen, T.; Tian, L.; Sun, L.; Chen, B. A Modified Newton-Type Method with Sixth-Order Convergence for Solving Nonlinear Equations. Procedia Eng. 2011, 15, 3124–3128.

- Halley at Wikipedia. Available online: https://en.wikipedia.org/wiki/Halley%27s_method#Cubic_convergence (accessed on 22 December 2019).

- Newton. Philosophiae Naturalis Principia Mathematica; Sumptibus Cl. et Ant. Philibert: Geneva, Switzerland, 1760.

- Ortega, J.M.; Rheinboldt, W.C. Iterative Solutions of Nonlinear Equations in Several Variables; Academic Press: New York, NY, USA, 1970.

- Santra, S.S.; Ghosh, T.; Bazighifan, O. Explicit criteria for the oscillation of second-order differential equations with several sub-linear neutral coefficients. Adv. Diff. Equ. 2020, 2020, 643.

© 2021 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Sabharwal, C.L. An Iterative Hybrid Algorithm for Roots of Non-Linear Equations. Eng 2021, 2, 80-98. https://doi.org/10.3390/eng2010007
