XGB-RF: A Hybrid Machine Learning Approach for IoT Intrusion Detection

Abstract

1. Introduction

- A hybrid machine learning algorithm named XGB-RF has been proposed for the first time, in which the prominent attributes are selected using the RF algorithm and the traffic is then classified using the XGB algorithm.

- The proposed algorithm has also been compared with other state-of-the-art machine learning algorithms.

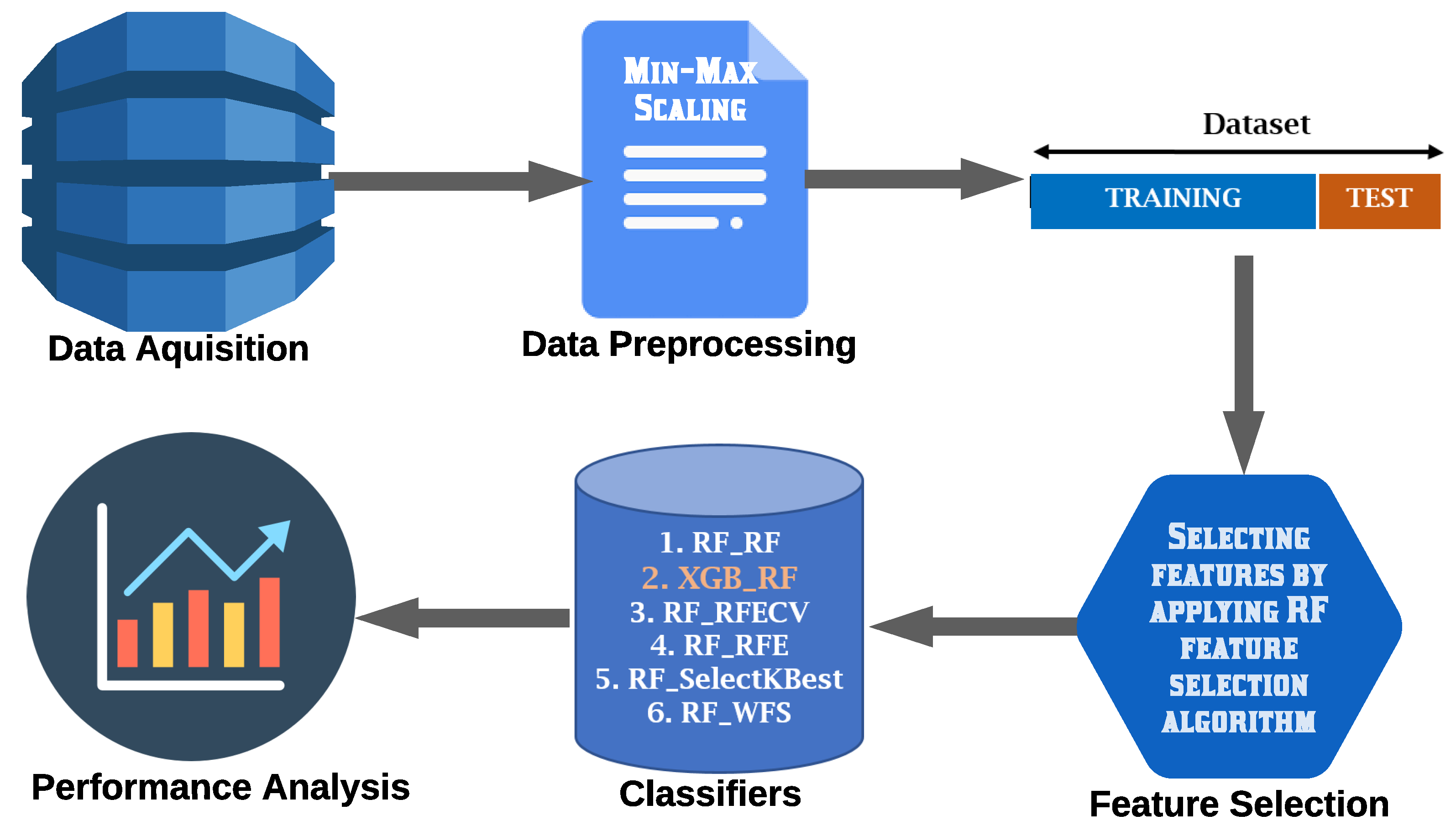

2. Materials and Methods

2.1. Data Acquisition

- Benign (Class_1): The benign class is defined as the traffic which does not carry any attack or malicious activities.

- Mirai ACK & Mirai SYN (Class_2 & Class_4): Mirai uses SYN flooding to prevent legitimate clients from connecting to a server. In a normal TCP three-way handshake, the client sends a SYN, the server replies with a SYN-ACK, and the client answers with an ACK, after which the information exchange may begin. Mirai (the threat actor) uses an illegitimate (spoofed) IP address to send SYN requests to all open ports. The server responds to each request with a SYN-ACK, but the attacker never returns the expected ACK. In essence, this is a digital standoff: the server keeps the half-open connection and waits until it receives a response. Before the connection expires, the attacker transmits another SYN, and the process begins again. The attack’s goal is to completely fill the connection table so that a genuine user cannot connect. Mirai uses a variety of methods when attacking its target; Mirai ACK works in a similar fashion to Mirai SYN but uses ACK flooding.

- Mirai Scan (Class_3): The malware and the command and control center (CnC) are the two major parts of Mirai. In addition to the malware’s 10 attack vectors, Mirai includes a scanner that actively searches for more devices to infect. The CnC acts as the main initiator and can activate one or multiple attack vectors on the compromised devices (BOTs). While the scanner is running, it attempts to connect to random IP addresses using the telnet protocol (on TCP port 23 or 2323). After a successful login attempt, the identity of the new BOT and its credentials are reported to the CnC and stored in its database. Attackers can use the CnC’s command-line interface to choose a target IP address and the duration of the attack. New device addresses and credentials received by the CnC are also used to copy over the infection code and establish further BOTs.

- Mirai UDP (Class_5): The Mirai UDP attack differs from other UDP floods. While it is still a UDP flood, Mirai’s default behavior is to randomize both the source port and the destination port. When combined with multiple source IPs (coming from multiple bots), the result is a flood of UDP traffic that can be difficult to fingerprint on an upstream router or firewall because there is no common source IP, source port, or destination port.

- Mirai UDP plain (Class_6): In contrast to UDP flooding, a UDP plain attack is far more "surgical" and effective. Its effectiveness stems from how the attacking bot "picks" ports: rather than flooding all of them, it targets the one that is most frequently used. Concentrating the attack on a single target in this way boosts its chances of success.

- Gafgyt [Combo, Junk, Scan, TCP & UDP] (Class_7, Class_8, Class_9, Class_10 & Class_11): Gafgyt is a long-standing IoT botnet family with many variants, and over time it has grown into a massive family with the same notoriety as Mirai. Its variants have matured to the point that they can perform DDoS attacks, scan for vulnerabilities, execute commands, and download and execute malware in real time. In its service mode, the network of Gafgyt nodes is used for communication between administrators and users as well as for passing command and control (C&C) instructions. Botnet administrators can use the Gafgyt network to keep track of the attack instructions supplied by users, answer inquiries, and discuss ideas. Gafgyt uses a variety of smart routers as both bot nodes and targets. Generally, an IoT device infected with Gafgyt begins scanning the Internet for responding nodes soon after infection and then attempts penetration via weak-password cracking or vulnerability exploitation, converting other devices to bot nodes and spreading the botnet. The Gafgyt botnet prefers smart routers among IoT devices because of their vast numbers, plethora of vulnerabilities, and weak management.
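The SYN flooding behavior described for the Mirai SYN class can be illustrated with a toy simulation of a server's half-open connection table. This is only a sketch under stated assumptions: the `ConnectionTable` class, the table capacity, the timeout, and all addresses are hypothetical values chosen for illustration and are not taken from the dataset or from Mirai itself.

```python
class ConnectionTable:
    """Toy model of a server's table of half-open TCP connections."""

    def __init__(self, capacity, timeout):
        self.capacity = capacity   # max half-open entries (hypothetical value)
        self.timeout = timeout     # ticks before an unanswered SYN-ACK expires
        self.half_open = {}        # (src_ip, src_port) -> tick the SYN arrived

    def expire(self, now):
        """Drop entries whose SYN-ACK was never answered in time."""
        stale = [k for k, t in self.half_open.items() if now - t >= self.timeout]
        for key in stale:
            del self.half_open[key]

    def on_syn(self, src, now):
        """Handle a SYN: reserve a slot and (conceptually) reply with SYN-ACK."""
        self.expire(now)
        if src not in self.half_open and len(self.half_open) >= self.capacity:
            return False           # table full: the SYN is dropped
        self.half_open[src] = now
        return True

    def on_ack(self, src):
        """Handle the final ACK: the handshake completes and the slot is freed."""
        return self.half_open.pop(src, None) is not None


def simulate(capacity=8, timeout=5, ticks=20, syns_per_tick=4):
    """Return (refused legitimate handshakes, half-open slots still occupied)."""
    table = ConnectionTable(capacity, timeout)
    refused = 0
    for now in range(ticks):
        # Attacker: spoofed sources that never send the final ACK.
        for i in range(syns_per_tick):
            table.on_syn((f"10.0.{now}.{i}", 40000 + i), now)
        # Legitimate client: attempts one complete handshake per tick.
        client = ("192.168.4.2", 50000 + now)
        if table.on_syn(client, now):
            table.on_ack(client)
        else:
            refused += 1
    return refused, len(table.half_open)


refused, occupied = simulate()
print(f"legitimate handshakes refused: {refused}, half-open slots in use: {occupied}")
```

Because the attacker re-sends SYNs as soon as old entries expire, the table stays full and the legitimate client is refused on almost every attempt; with `syns_per_tick=0` every handshake completes and the table stays empty.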

2.2. Data Pre-Processing

- Header description

- Over five separate time windows (100 ms, 500 ms, 1.5 s, 10 s, and 1 min)

- Seven statistical variables (mean, variance, count, magnitude, radius, covariance, and correlation coefficient) were calculated.
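As a rough illustration of per-window statistics, the sketch below computes three of the seven variables (count, mean, and variance) of packet sizes over the five time windows listed above. It assumes plain sliding windows and a hypothetical list of `(timestamp, size)` pairs; the dataset's own extraction procedure may differ, and the remaining variables (magnitude, radius, covariance, and correlation coefficient) relate two traffic streams and are omitted here.

```python
from statistics import fmean, pvariance

# The five time windows from the text, in seconds.
WINDOWS_S = (0.1, 0.5, 1.5, 10.0, 60.0)


def window_stats(packets, now, window):
    """Count, mean and variance of packet sizes with timestamp in (now - window, now]."""
    sizes = [size for ts, size in packets if now - window < ts <= now]
    if not sizes:
        return {"count": 0, "mean": 0.0, "variance": 0.0}
    return {
        "count": len(sizes),
        "mean": fmean(sizes),
        "variance": pvariance(sizes),  # population variance of the window
    }


def extract_features(packets, now):
    """Statistics for every window, keyed by window length in seconds."""
    return {w: window_stats(packets, now, w) for w in WINDOWS_S}


# Hypothetical capture: (timestamp in seconds, packet size in bytes).
packets = [(0.00, 60), (0.95, 60), (1.42, 1500), (1.48, 60), (1.50, 590)]
features = extract_features(packets, now=1.50)
for window, stats in features.items():
    print(window, stats)
```

Short windows react to bursts (here the last three packets), while the longer windows summarize the whole capture, which is why the same statistic is computed at several time scales.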

2.3. Feature Selection

2.3.1. RF-Based Feature Selection

2.3.2. Recursive Feature Elimination (RFE)

Algorithm 1: Steps followed for Recursive Feature Elimination (RFE)
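Recursive feature elimination repeatedly fits a model on the remaining features, ranks them, and discards the weakest one until the desired number is left. The following is a minimal sketch of that loop. It assumes a hypothetical importance measure (the accuracy lost when a feature is left out of a nearest-centroid classifier) in place of the estimator used in this study, and the toy data are invented for illustration.

```python
def centroid_accuracy(X, y, features):
    """Training accuracy of a nearest-centroid classifier on the given columns."""
    centroids = {}
    for label in sorted(set(y)):
        rows = [x for x, t in zip(X, y) if t == label]
        centroids[label] = [sum(r[f] for r in rows) / len(rows) for f in features]

    def distance(x, label):
        return sum((x[f] - m) ** 2 for f, m in zip(features, centroids[label]))

    correct = sum(min(centroids, key=lambda c: distance(x, c)) == t
                  for x, t in zip(X, y))
    return correct / len(y)


def rfe(X, y, n_keep):
    """Return (kept feature indices, eliminated indices in order of removal)."""
    remaining = list(range(len(X[0])))
    eliminated = []
    while len(remaining) > n_keep:
        base = centroid_accuracy(X, y, remaining)
        # Importance of a feature = accuracy lost when it is left out.
        importance = {
            f: base - centroid_accuracy(X, y, [g for g in remaining if g != f])
            for f in remaining
        }
        weakest = min(remaining, key=lambda f: importance[f])
        remaining.remove(weakest)
        eliminated.append(weakest)
    return remaining, eliminated


# Toy data: column 0 separates the classes, column 1 is large-scale noise,
# column 2 is only weakly related to the label.
X = [[0.0, 5.0, 1.0], [0.2, 1.0, 1.2], [0.1, 3.0, 0.9],
     [1.0, 4.0, 1.1], [0.9, 2.0, 1.0], [1.1, 0.0, 1.3]]
y = [0, 0, 0, 1, 1, 1]

kept, removed = rfe(X, y, n_keep=1)
print("kept:", kept, "removed in order:", removed)
```

The importance scores are recomputed after every removal, which is what distinguishes the recursive procedure from a single one-shot ranking.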

2.3.3. Recursive Feature Elimination and Cross-Validation (RFECV)

2.3.4. Select-K-Best

2.4. Classifiers

2.4.1. Random Forest (RF)

2.4.2. eXtreme Gradient Boosting (XGBoost)

2.5. Model Performance Analysis

Algorithm 2: Steps followed for the XGBoost classifier

3. Experimental Results

3.1. Performance Measures

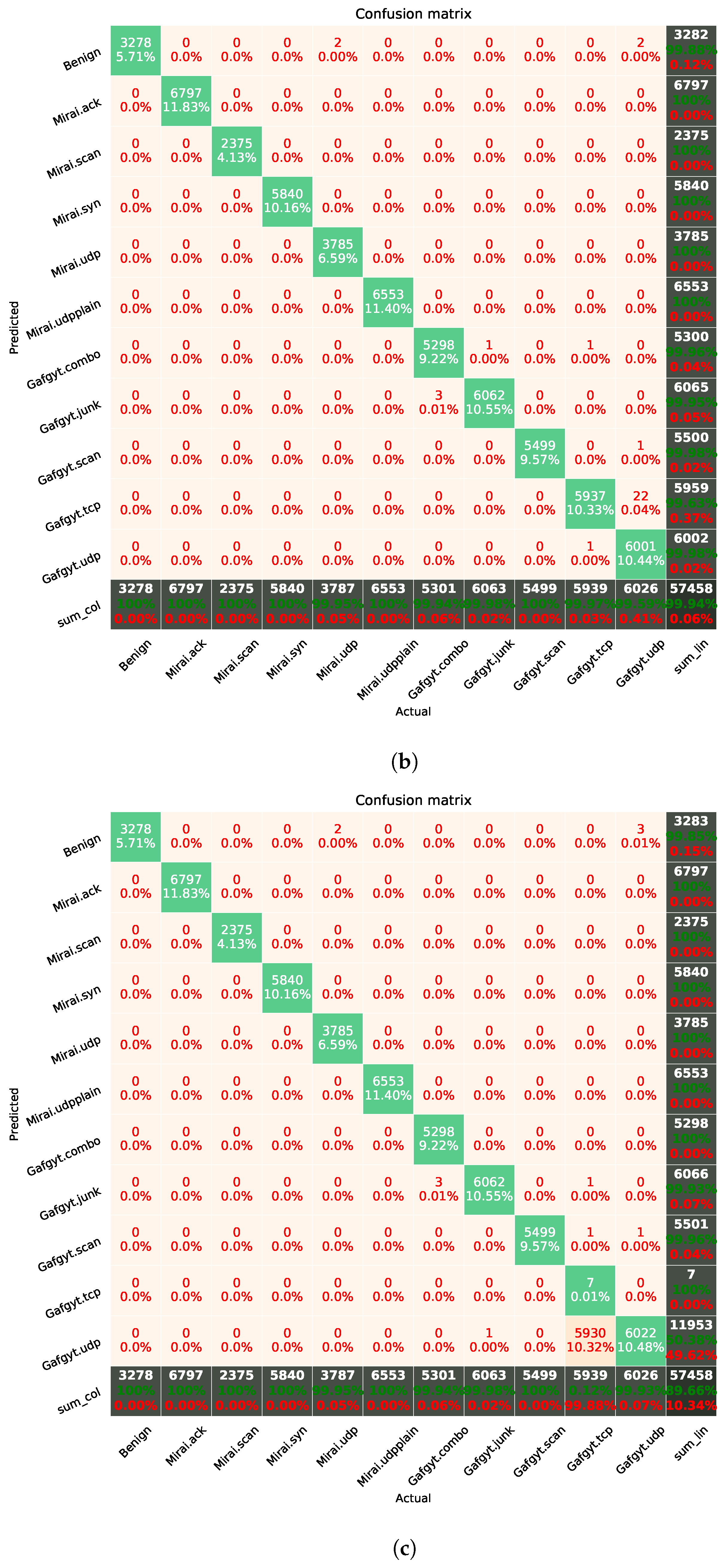

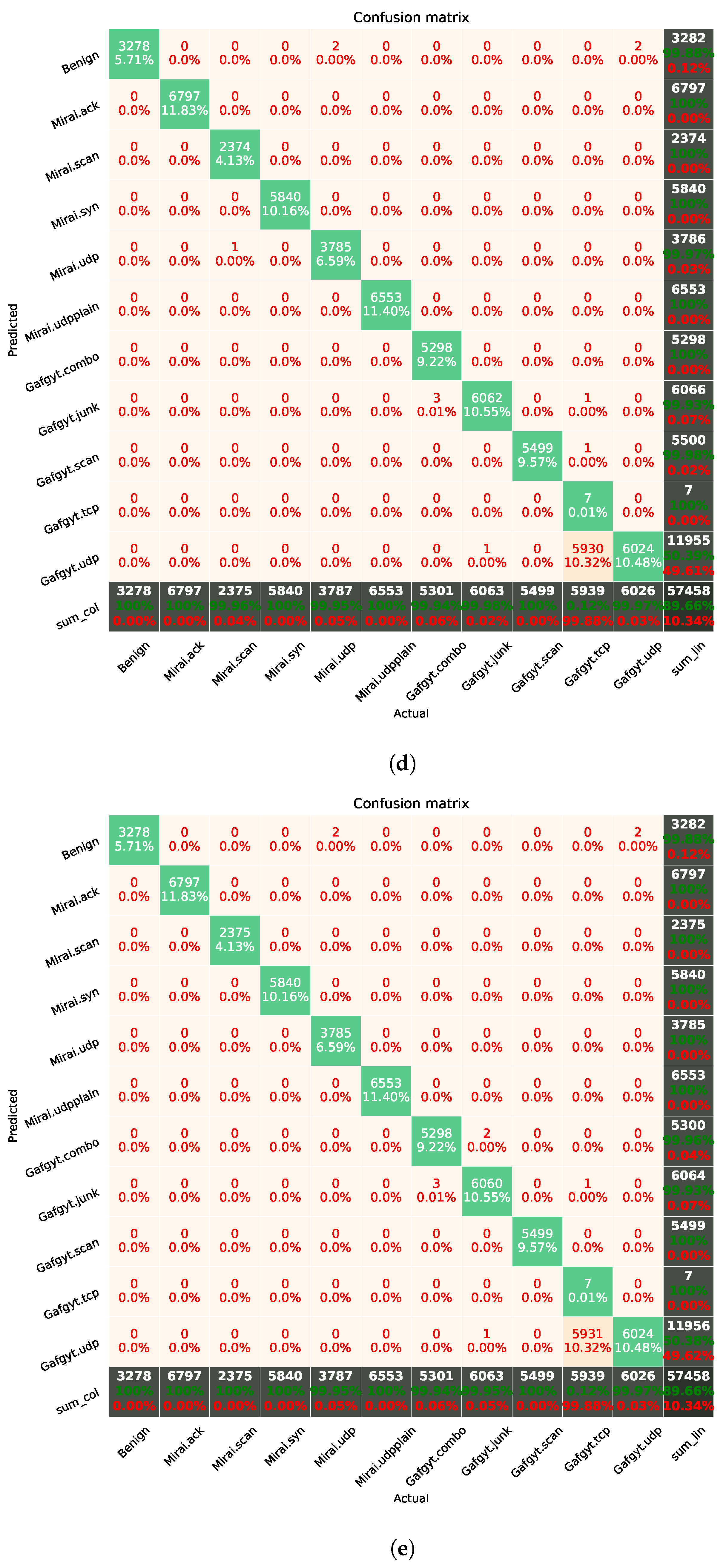

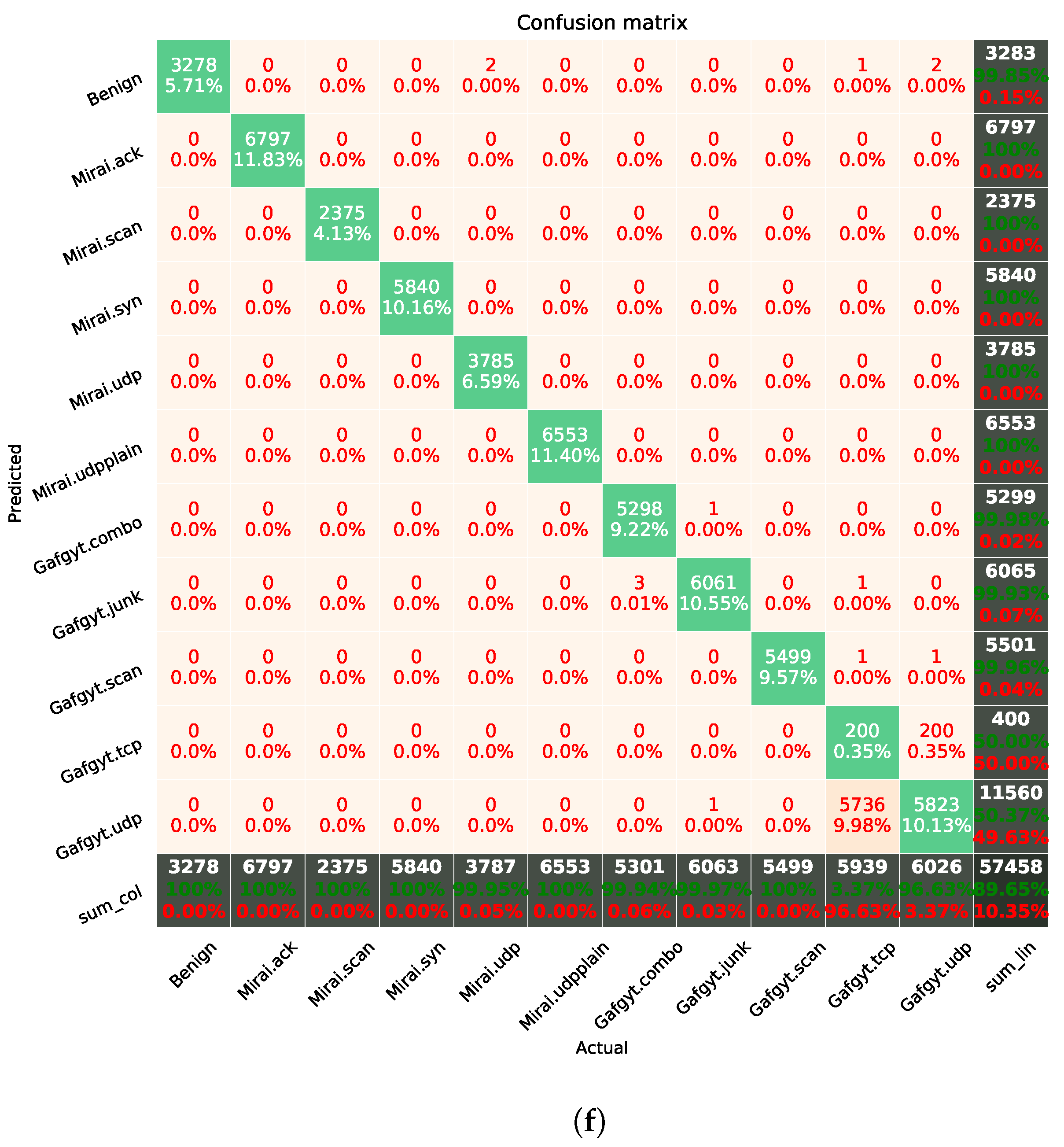

3.2. Confusion Matrix

3.3. Evaluation on Different Train-Test Schemes

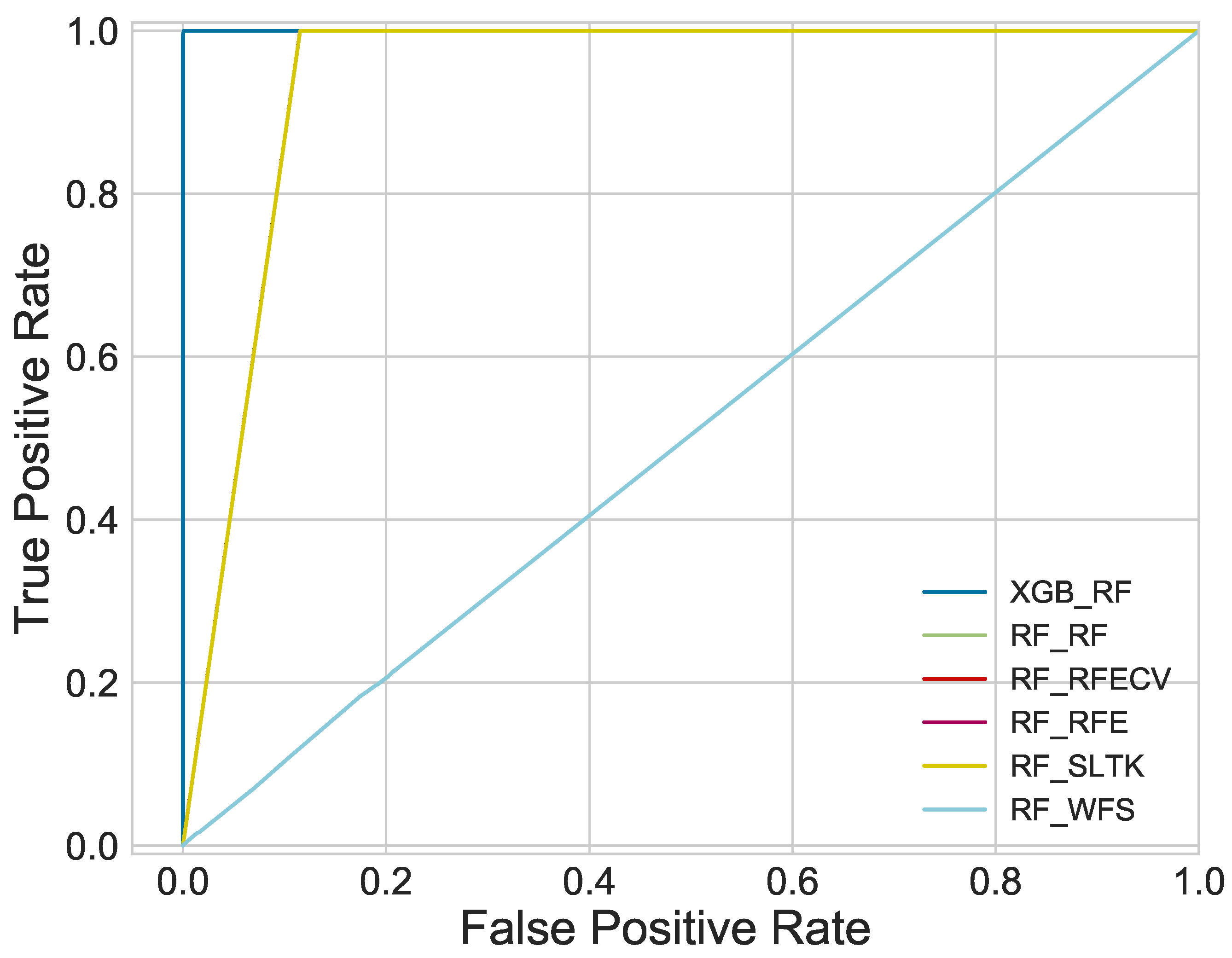

3.4. ROC Curve

3.5. Performance Comparison with Other Studies

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| IoT | Internet of Things |

| IDS | Intrusion Detection System |

| RF | Random Forest |

| XGB | eXtreme Gradient Boosting |

| KNN | K-Nearest Neighbor |

| SVM | Support Vector Machine |

| RFE | Recursive Feature Elimination |

| RFECV | Recursive Feature Elimination and Cross-Validation |

| WFS | Without Feature Selection |

| TCP | Transmission Control Protocol |

| UDP | User Datagram Protocol |

| ACK | Acknowledgment |

| MCC | Matthews Correlation Coefficient |

| ROC | Receiver Operating Characteristic |

| ANN | Artificial Neural Network |

| NB | Naïve Bayes |

| DT | Decision Tree |

| DMLP | Deep Multilayer Perceptron |

| CART | Classification And Regression Trees |

| RNN | Recurrent Neural Network |

| LGBA-NN | Local-Global Best Bat Algorithm for Neural Networks |

References

1. Fallahpour, A.; Wong, K.Y.; Rajoo, S.; Fathollahi-Fard, A.M.; Antucheviciene, J.; Nayeri, S. An integrated approach for a sustainable supplier selection based on Industry 4.0 concept. Environ. Sci. Pollut. Res. 2021, 1–19.

2. Attaran, M. The internet of things: Limitless opportunities for business and society. J. Strateg. Innov. Sustain. 2017, 12, 11–29.

3. Symantec Internet Security Threat Report. 2019. Available online: https://docs.broadcom.com/doc/istr-24-2019-en (accessed on 30 June 2021).

4. Fruhlinger, J. Top Cybersecurity Facts, Figures and Statistics. 2020. Available online: https://www.csoonline.com/article/3153707/top-cybersecurity-facts-figures-and-statistics.html (accessed on 30 June 2021).

5. A Perfect Storm: The Security Challenges of Coronavirus Threats and Mass Remote Working. 2020. Available online: https://blog.checkpoint.com/2020/04/07/a-perfect-storm-the-security-challenges-of-coronavirus-threats-and-mass-remote-working/ (accessed on 30 June 2021).

6. Manimurugan, S.; Al-Mutairi, S.; Aborokbah, M.M.; Chilamkurti, N.; Ganesan, S.; Patan, R. Effective attack detection in internet of medical things smart environment using a deep belief neural network. IEEE Access 2020, 8, 77396–77404.

7. Fathollahi-Fard, A.M.; Ahmadi, A.; Karimi, B. Multi-Objective Optimization of Home Healthcare with Working-Time Balancing and Care Continuity. Sustainability 2021, 13, 12431.

8. Muthanna, M.S.A.; Muthanna, A.; Rafiq, A.; Hammoudeh, M.; Alkanhel, R.; Lynch, S.; Abd El-Latif, A.A. Deep reinforcement learning based transmission policy enforcement and multi-hop routing in QoS aware LoRa IoT networks. Comput. Commun. 2021, 183, 33–50.

9. Fathollahi-Fard, A.M.; Dulebenets, M.A.; Hajiaghaei-Keshteli, M.; Tavakkoli-Moghaddam, R.; Safaeian, M.; Mirzahosseinian, H. Two hybrid meta-heuristic algorithms for a dual-channel closed-loop supply chain network design problem in the tire industry under uncertainty. Adv. Eng. Inform. 2021, 50, 101418.

10. Moosavi, J.; Naeni, L.M.; Fathollahi-Fard, A.M.; Fiore, U. Blockchain in supply chain management: A review, bibliometric, and network analysis. Environ. Sci. Pollut. Res. 2021, 5, 1–15.

11. Rafiq, A.; Ping, W.; Min, W.; Muthanna, M.S.A. Fog Assisted 6TiSCH Tri-Layer Network Architecture for Adaptive Scheduling and Energy-Efficient Offloading Using Rank-Based Q-Learning in Smart Industries. IEEE Sens. J. 2021, 21, 25489–25507.

12. Marzano, A.; Alexander, D.; Fonseca, O.; Fazzion, E.; Hoepers, C.; Steding-Jessen, K.; Chaves, M.H.; Cunha, Í.; Guedes, D.; Meira, W. The evolution of Bashlite and Mirai IoT botnets. In Proceedings of the 2018 IEEE Symposium on Computers and Communications (ISCC), Natal, Brazil, 25–28 June 2018; pp. 00813–00818.

13. Cisco Annual Internet Report (2018–2023) White Paper. 2020. Available online: https://www.cisco.com/c/en/us/solutions/collateral/executive-perspectives/annual-internet-report/white-paper-c11-741490.html (accessed on 30 June 2021).

14. Vasilomanolakis, E.; Karuppayah, S.; Mühlhäuser, M.; Fischer, M. Taxonomy and survey of collaborative intrusion detection. ACM Comput. Surv. 2015, 47, 1–33.

15. Summerville, D.H.; Zach, K.M.; Chen, Y. Ultra-lightweight deep packet anomaly detection for Internet of Things devices. In Proceedings of the 2015 IEEE 34th International Performance Computing and Communications Conference (IPCCC), Nanjing, China, 14–16 December 2015; pp. 1–8.

16. Midi, D.; Rullo, A.; Mudgerikar, A.; Bertino, E. Kalis—A system for knowledge-driven adaptable intrusion detection for the Internet of Things. In Proceedings of the 2017 IEEE 37th International Conference on Distributed Computing Systems (ICDCS), Atlanta, GA, USA, 5–8 June 2017; pp. 656–666.

17. Alothman, Z.; Alkasassbeh, M.; Al-Haj Baddar, S. An efficient approach to detect IoT botnet attacks using machine learning. J. High Speed Netw. 2020, 26, 241–254.

18. Aburomman, A.A.; Reaz, M.B.I. Review of IDS development methods in machine learning. Int. J. Electr. Comput. Eng. 2016, 6, 2432–2436.

19. Bijalwan, A. Botnet forensic analysis using machine learning. Secur. Commun. Netw. 2020, 2020, 9302318.

20. Shafiq, M.; Tian, Z.; Sun, Y.; Du, X.; Guizani, M. Selection of effective machine learning algorithm and Bot-IoT attacks traffic identification for internet of things in smart city. Future Gener. Comput. Syst. 2020, 107, 433–442.

21. Soe, Y.N.; Feng, Y.; Santosa, P.I.; Hartanto, R.; Sakurai, K. Machine learning-based IoT-botnet attack detection with sequential architecture. Sensors 2020, 20, 4372.

22. Diro, A.A.; Chilamkurti, N. Distributed attack detection scheme using deep learning approach for Internet of Things. Future Gener. Comput. Syst. 2018, 82, 761–768.

23. Ahmad, I.; Basheri, M.; Iqbal, M.J.; Rahim, A. Performance comparison of support vector machine, random forest, and extreme learning machine for intrusion detection. IEEE Access 2018, 6, 33789–33795.

24. Deng, L.; Li, D.; Yao, X.; Cox, D.; Wang, H. Mobile network intrusion detection for IoT system based on transfer learning algorithm. Clust. Comput. 2019, 22, 9889–9904.

25. Mirsky, Y.; Doitshman, T.; Elovici, Y.; Shabtai, A. Kitsune: An ensemble of autoencoders for online network intrusion detection. arXiv 2018, arXiv:1802.09089.

26. Ustebay, S.; Turgut, Z.; Aydin, M.A. Intrusion detection system with recursive feature elimination by using random forest and deep learning classifier. In Proceedings of the 2018 International Congress on Big Data, Deep Learning and Fighting Cyber Terrorism (IBIGDELFT), Ankara, Turkey, 3–4 December 2018; pp. 71–76.

27. Da Costa, K.A.; Papa, J.P.; Lisboa, C.O.; Munoz, R.; de Albuquerque, V.H.C. Internet of Things: A survey on machine learning-based intrusion detection approaches. Comput. Netw. 2019, 151, 147–157.

28. Meidan, Y.; Bohadana, M.; Mathov, Y.; Mirsky, Y.; Shabtai, A.; Breitenbacher, D.; Elovici, Y. N-BaIoT—Network-based detection of IoT botnet attacks using deep autoencoders. IEEE Pervasive Comput. 2018, 17, 12–22.

29. Xie, H.; Wei, S.; Zhang, L.; Ng, B.; Pan, S. Using feature selection techniques to determine best feature subset in prediction of window behaviour. In Proceedings of the 10th Windsor Conference: Rethinking Comfort, Windsor, UK, 12–13 April 2018; pp. 315–328.

30. Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32.

31. Genuer, R.; Poggi, J.M.; Tuleau-Malot, C. Variable selection using random forests. Pattern Recognit. Lett. 2010, 31, 2225–2236.

32. Parsa, A.B.; Movahedi, A.; Taghipour, H.; Derrible, S.; Mohammadian, A.K. Toward safer highways, application of XGBoost and SHAP for real-time accident detection and feature analysis. Accid. Anal. Prev. 2020, 136, 105405.

33. Friedman, J.H. Greedy function approximation: A gradient boosting machine. Ann. Stat. 2001, 29, 1189–1232.

34. Awal, M.A.; Masud, M.; Hossain, M.S.; Bulbul, A.A.M.; Mahmud, S.H.; Bairagi, A.K. A novel Bayesian optimization-based machine learning framework for COVID-19 detection from inpatient facility data. IEEE Access 2021, 9, 10263–10281.

35. Htwe, C.S.; Thant, Y.M.; Thwin, M.M.S. Botnets Attack Detection Using Machine Learning Approach for IoT Environment. J. Phys. Conf. Ser. 2020, 1646, 012101.

36. Alharbi, A.; Alosaimi, W.; Alyami, H.; Rauf, H.T.; Damaševičius, R. Botnet Attack Detection Using Local Global Best Bat Algorithm for Industrial Internet of Things. Electronics 2021, 10, 1341.

37. Mason, S.J.; Graham, N.E. Areas beneath the relative operating characteristics (ROC) and relative operating levels (ROL) curves: Statistical significance and interpretation. Q. J. R. Meteorol. Soc. A J. Atmos. Sci. Appl. Meteorol. Phys. Oceanogr. 2002, 128, 2145–2166.

38. Abbas, A.; Khan, M.A.; Latif, S.; Ajaz, M.; Shah, A.A.; Ahmad, J. A New Ensemble-Based Intrusion Detection System for Internet of Things. Arab. J. Sci. Eng. 2021, 1–15.

39. Goeschel, K. Reducing false positives in intrusion detection systems using data-mining techniques utilizing support vector machines, decision trees, and naive Bayes for off-line analysis. In Proceedings of the SoutheastCon 2016, Norfolk, VA, USA, 30 March–3 April 2016; pp. 1–6.

40. Hezam, A.A.; Mostafa, S.A.; Ramli, A.A.; Mahdin, H.; Khalaf, B.A. Deep Learning Approach for Detecting Botnet Attacks in IoT Environment of Multiple and Heterogeneous Sensors. In Proceedings of the International Conference on Advances in Cyber Security, Penang, Malaysia, 24–25 August 2021; Springer: Singapore, 2021; pp. 317–328.

41. Khoa, T.V.; Saputra, Y.M.; Hoang, D.T.; Trung, N.L.; Nguyen, D.; Ha, N.V.; Dutkiewicz, E. Collaborative Learning Model for Cyberattack Detection Systems in IoT Industry 4.0. In Proceedings of the 2020 IEEE Wireless Communications and Networking Conference (WCNC), Seoul, Korea, 25–28 May 2020; pp. 1–6.

| Total Features | Feature Name |

|---|---|

| 115 | MI_dir_L5_weight, MI_dir_L5_mean, MI_dir_L5_variance, MI_dir_L3_weight, MI_dir_L3_mean, MI_dir_L3_variance, MI_dir_L1_weight, MI_dir_L1_mean, MI_dir_L1_variance, MI_dir_L0.1_weight, MI_dir_L0.1_mean, MI_dir_L0.1_variance, MI_dir_L0.01_weight, MI_dir_L0.01_mean, MI_dir_L0.01_variance, H_L5_weight, H_L5_mean, H_L5_variance, H_L3_weight, H_L3_mean, H_L3_variance, H_L1_weight, H_L1_mean, H_L1_variance, H_L0.1_weight, H_L0.1_mean, H_L0.1_variance, H_L0.01_weight, H_L0.01_mean, H_L0.01_variance, HH_L5_weight, HH_L5_mean, HH_L5_std, HH_L5_magnitude, HH_L5_radius, HH_L5_covariance, HH_L5_pcc, HH_L3_weight, HH_L3_mean, HH_L3_std, HH_L3_magnitude, HH_L3_radius, HH_L3_covariance, HH_L3_pcc, HH_L1_weight, HH_L1_mean, HH_L1_std, HH_L1_magnitude, HH_L1_radius, HH_L1_covariance, HH_L1_pcc, HH_L0.1_weight, HH_L0.1_mean, HH_L0.1_std, HH_L0.1_magnitude, HH_L0.1_radius, HH_L0.1_covariance, HH_L0.1_pcc, HH_L0.01_weight, HH_L0.01_mean, HH_L0.01_std, HH_L0.01_magnitude, HH_L0.01_radius, HH_L0.01_covariance, HH_L0.01_pcc, HH_jit_L5_weight, HH_jit_L5_mean, HH_jit_L5_variance, HH_jit_L3_weight, HH_jit_L3_mean, HH_jit_L3_variance, HH_jit_L1_weight, HH_jit_L1_mean, HH_jit_L1_variance, HH_jit_L0.1_weight, HH_jit_L0.1_mean, HH_jit_L0.1_variance, HH_jit_L0.01_weight, HH_jit_L0.01_mean, HH_jit_L0.01_variance, HpHp_L5_weight, HpHp_L5_mean, HpHp_L5_std, HpHp_L5_magnitude, HpHp_L5_radius, HpHp_L5_covariance, HpHp_L5_pcc, HpHp_L3_weight, HpHp_L3_mean, HpHp_L3_std, HpHp_L3_magnitude, HpHp_L3_radius, HpHp_L3_covariance, HpHp_L3_pcc, HpHp_L1_weight, HpHp_L1_mean, HpHp_L1_std, HpHp_L1_magnitude, HpHp_L1_radius, HpHp_L1_covariance, HpHp_L1_pcc, HpHp_L0.1_weight, HpHp_L0.1_mean, HpHp_L0.1_std, HpHp_L0.1_magnitude, HpHp_L0.1_radius, HpHp_L0.1_covariance, HpHp_L0.1_pcc, HpHp_L0.01_weight, HpHp_L0.01_mean, HpHp_L0.01_std, HpHp_L0.01_magnitude, HpHp_L0.01_radius, HpHp_L0.01_covariance, HpHp_L0.01_pcc |

| Short Name | Brief Description |

|---|---|

| MI | Stats summarizing the recent traffic from this packet’s Source MAC-IP |

| H | Stats summarizing the recent traffic from this packet’s host (IP) |

| HH | Stats summarizing the recent traffic going from this packet’s host (IP) to the packet’s destination host. |

| HpHp | Stats summarizing the recent traffic going from this packet’s host+port (IP) to the packet’s destination host+port. Example: 192.168.4.2:1242 -> 192.168.4.12:80 |

| HH_jit | Stats summarizing the jitter of the traffic going from this packet’s host (IP) to the packet’s destination host. |
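The 115 feature names follow a regular `<stream>_<window>_<statistic>` pattern, so they can be generated and parsed programmatically. The sketch below rebuilds the list from the names in the feature table. The mapping of the `L5` … `L0.01` labels to the five time windows is an assumption based on the order in which the windows are listed in Section 2.2, and the helper names are hypothetical.

```python
# Window labels as they appear in the feature names; the mapping to the
# five time windows is an assumption (see lead-in).
WINDOW_BY_LABEL = {"L5": "100 ms", "L3": "500 ms", "L1": "1.5 s",
                   "L0.1": "10 s", "L0.01": "1 min"}

ONE_STREAM_STATS = ("weight", "mean", "variance")
TWO_STREAM_STATS = ("weight", "mean", "std", "magnitude",
                    "radius", "covariance", "pcc")

# Stream prefix -> statistics computed for it, in table order.
STREAMS = {
    "MI_dir": ONE_STREAM_STATS,
    "H": ONE_STREAM_STATS,
    "HH": TWO_STREAM_STATS,
    "HH_jit": ONE_STREAM_STATS,
    "HpHp": TWO_STREAM_STATS,
}


def feature_names():
    """All feature names, in the same order as the feature table."""
    return [f"{stream}_{label}_{stat}"
            for stream, stats in STREAMS.items()
            for label in WINDOW_BY_LABEL
            for stat in stats]


def parse_feature(name):
    """Split a feature name into (stream, window label, statistic)."""
    stream, label, stat = name.rsplit("_", 2)
    return stream, label, stat


names = feature_names()
print(len(names), names[0], names[-1])
print(parse_feature("HH_jit_L0.1_mean"))
```

The count works out as 3 streams × 5 windows × 3 statistics plus 2 streams × 5 windows × 7 statistics, i.e. 45 + 70 = 115.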

| ML Schemes | ACC | F1_Score | Kappa | MCC | Sensitivity | Specificity | Threat_Score | Balanced Accuracy |

|---|---|---|---|---|---|---|---|---|

| RF-RF | 89.6638% | 86.2163% | 88.5499% | 89.6139% | 89.6638% | 98.9503% | 86.3819% | 94.3070% |

| XGB-RF | 99.9426% | 99.9426% | 99.9364% | 99.9364% | 99.9426% | 99.9942% | 99.8921% | 99.9683% |

| RF-RFE | 89.6603% | 86.2128% | 88.5460% | 89.6100% | 89.6603% | 98.9499% | 86.3725% | 94.3051% |

| RF-RFECV | 89.6585% | 86.2112% | 88.5441% | 89.6077% | 89.6585% | 98.9498% | 86.3728% | 94.3041% |

| RF-SelectK | 89.6551% | 86.2076% | 88.5402% | 89.6042% | 89.6551% | 98.9494% | 86.3635% | 94.3022% |

| RF-WFS | 89.6324% | 86.8542% | 88.5153% | 89.4106% | 89.6324% | 98.9472% | 86.6114% | 94.2898% |
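The measures in this table can all be derived from a multiclass confusion matrix. The sketch below assumes support-weighted averaging over classes, which is consistent with two patterns visible in the table (Sensitivity equals ACC in every row, and Balanced Accuracy is the mean of Sensitivity and Specificity) but is not confirmed by the source. The function name and the example matrix are hypothetical.

```python
from math import sqrt


def multiclass_metrics(cm):
    """Support-weighted metrics from a square confusion matrix.

    cm[i][j] = number of samples of true class i predicted as class j.
    Assumes at least two classes actually occur in the data.
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    true_k = [sum(row) for row in cm]                             # support per class
    pred_k = [sum(cm[i][k] for i in range(n)) for k in range(n)]  # predictions per class
    correct = sum(cm[k][k] for k in range(n))

    sens = spec = threat = f1 = 0.0
    for k in range(n):
        if true_k[k] == 0:
            continue                     # class absent from the test set
        tp = cm[k][k]
        fn = true_k[k] - tp
        fp = pred_k[k] - tp
        tn = total - tp - fn - fp
        w = true_k[k] / total            # weight by class support
        sens += w * tp / (tp + fn)
        spec += w * tn / (tn + fp)
        threat += w * tp / (tp + fn + fp)
        f1 += w * 2 * tp / (2 * tp + fp + fn)

    acc = correct / total
    agreement = sum(t * p for t, p in zip(true_k, pred_k))
    chance = agreement / total ** 2      # expected accuracy by chance
    kappa = (acc - chance) / (1 - chance)
    mcc_den = sqrt((total ** 2 - sum(p * p for p in pred_k)) *
                   (total ** 2 - sum(t * t for t in true_k)))
    mcc = (correct * total - agreement) / mcc_den if mcc_den else 0.0

    return {"accuracy": acc, "f1": f1, "kappa": kappa, "mcc": mcc,
            "sensitivity": sens, "specificity": spec,
            "threat_score": threat, "balanced_accuracy": (sens + spec) / 2}


# Hypothetical 3-class confusion matrix (rows: true class, columns: predicted).
cm = [[50, 2, 0],
      [3, 40, 5],
      [0, 1, 49]]
metrics = multiclass_metrics(cm)
for name, value in metrics.items():
    print(f"{name}: {value:.4%}")
```

With support weighting, the weighted sensitivity reduces algebraically to the overall accuracy, which is why the two columns coincide under this assumption.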

| Performance | Train-Test (70–30%) | Train-Test (67–33%) |

|---|---|---|

| Accuracy | 99.9564% | 99.9539% |

| F1_Score | 99.9565% | 99.9539% |

| Kappa | 99.9518% | 99.9489% |

| MCC | 99.9518% | 99.9489% |

| Sensitivity | 99.9565% | 99.9539% |

| Specificity | 99.9956% | 99.9953% |

| Threat_Score | 99.9220% | 99.9160% |

| Balanced Accuracy | 99.9760% | 99.9746% |

| Studies | Dataset | Classifiers | Accuracy |

|---|---|---|---|

| Abbas et al. [38] | CICIDS2017 | RF | 99.67% |

| Goeschel [39] | KDDCup99 | SVM-DT-NB | 99.62% |

| Soe et al. [21] | N-BaIoT | NB-J48-ANN | 99.10% |

| Ustebay et al. [26] | CICIDS2017 | DMLP | 91% |

| Htwe et al. [35] | N-BaIoT | CART | 99% |

| Hezam et al. [40] | N-BaIoT | RNN | 89.75% |

| Khoa et al. [41] | N-BaIoT | Collective Deep Learning | 99.84% |

| Alharbi et al. [36] | N-BaIoT | LGBA-NN | 90% |

| Proposed Method | N-BaIoT | XGB-RF | 99.94% |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Faysal, J.A.; Mostafa, S.T.; Tamanna, J.S.; Mumenin, K.M.; Arifin, M.M.; Awal, M.A.; Shome, A.; Mostafa, S.S. XGB-RF: A Hybrid Machine Learning Approach for IoT Intrusion Detection. Telecom 2022, 3, 52-69. https://doi.org/10.3390/telecom3010003
