1. Introduction

Cloud computing has emerged as a key enabler for enhancing operational efficiency and minimizing infrastructure expenses. Nevertheless, latency remains a significant challenge in centralized cloud architectures. To address this limitation, fog and edge computing paradigms have been developed, positioning computational resources closer to end-users. The proliferation of connected devices has further increased the demand for cloud-based services, particularly in terms of compliance verification and handling high-volume traffic. Consequently, robust testing methodologies are essential to evaluate how these evolving requirements impact system performance and to optimize distributed architectures for achieving user-centric goals.

The Internet of Things (IoT) represents a paradigm shift in modern computing by enabling everyday physical devices to possess processing and networking functionalities, thereby becoming intelligent and remotely manageable. This technological evolution facilitates the creation of innovative applications across various domains such as intelligent urban environments, industrial automation systems, and modern healthcare infrastructures [1]. Data generated by IoT devices is predominantly handled through cloud platforms, recognized as the standard solution for delivering flexible and scalable computational services [2]. Despite considerable progress in network communication technologies and increased bandwidth availability, centralized cloud architectures continue to experience response delays, particularly under heavy user loads or when applications require immediate data processing [3]. Real-time data analysis and automated decision-making are essential requirements for IoT-driven applications. Existing approaches to IoT data management typically rely on transmitting substantial volumes of information to remote cloud servers for batch processing, rather than leveraging distributed architectures capable of real-time computation [4].

The increasing demand for low-latency responses in IoT ecosystems, coupled with the enhanced computational power of peripheral devices such as gateways [5], has driven the adoption of fog computing, a distributed architecture that relocates data processing closer to its origin. This architectural transition introduces significant challenges for validating distributed applications deployed across heterogeneous cloud-fog infrastructures. When conducting distributed testing, multiple test agents operate concurrently and autonomously, which frequently leads to coordination difficulties. In the absence of effective synchronization mechanisms among test components, issues related to event ordering and state observation may emerge, potentially compromising defect identification during conformance verification. Conventional testing strategies mitigate these issues by incorporating explicit synchronization messages into test sequences, resulting in considerable communication overhead.

Ensuring reliability in fog and cloud-based environments requires that distributed systems be thoroughly tested for conformance with their specifications. In fog-computing environments, where computation and data processing occur across heterogeneous and geographically dispersed nodes, the testing process must address issues of fault tolerance, observability, and synchronization among testers. In a typical distributed testing architecture, multiple testers operate in parallel, each interacting with the Implementation Under Test (IUT) through Points of Control and Observation (PCOs) and communicating via a multicast channel to maintain coordination. However, if testers are not properly synchronized, determining the chronological sequence of events captured at distinct observation points becomes challenging or even infeasible.

Traditional approaches resolve synchronization and observability issues by exchanging explicit coordination messages between testers. While effective in maintaining global test consistency, this strategy introduces substantial communication overhead, latency, and energy consumption, especially at scale or across geographically distant nodes. Moreover, high volumes of coordination traffic can interfere with normal system operation and degrade testing efficiency. Reducing coordination overhead is thus not merely an optimization objective: it directly targets the feasibility of distributed conformance testing in fog settings by mitigating synchronization and observability pressures induced by parallel, geographically dispersed testers. In practical fog/IoT deployments, minimizing coordination traffic reduces communication overhead and associated latency/energy costs, and prevents coordination messages from becoming a source of inefficiency or interference—an issue amplified under dynamic network conditions and large-scale distributed execution.

This paper addresses the following research problem: How can distributed testers dynamically coordinate in heterogeneous fog computing environments while minimizing communication overhead and preserving data privacy? Static coordination mechanisms cannot adapt to variable network conditions, leading to either over-coordination (excessive messages causing congestion) or under-coordination (missed synchronization causing test failures). We therefore ask: Can deep reinforcement learning, combined with federated learning, enable distributed testers to learn adaptive coordination policies that outperform traditional approaches in both efficiency and reliability?

To address these challenges, the primary objective of this work is to propose a distributed testing framework inspired by fog computing principles and leveraging Deep Q-Networks (DQN) and federated learning to optimize the testing process. Our approach replaces explicit coordination messages with learned decision functions that dynamically determine when and how testers should coordinate, resulting in more efficient test execution without compromising fault detection capability. More specifically, this work presents the following key contributions: (i) a novel framework integrating Deep Q-Networks (DQN) with Federated Learning for distributed test coordination in fog computing environments; (ii) an adaptive decision function that dynamically learns when coordination is necessary based on real-time system state; (iii) a privacy-preserving approach where testers learn locally without sharing raw test data, using federated aggregation to build a robust global model; and (iv) empirical validation through a case study demonstrating reduced coordination messages while improving test reliability.

The rest of this article is structured as follows:

Section 2 provides the theoretical background and situates our research within the existing literature.

Section 3 introduces the adaptive test coordination methodology and the system model.

Section 4 details the federated training process.

Section 5 reports the case study and experimental evaluation.

Section 6 concludes this work and discusses potential avenues for future research.

2. Background and Related Work

2.1. Internet of Things vs. Cloud Computing

The IoT concept endows physical objects with intelligence, turning them into interconnected entities capable of transferring data over a network without direct human involvement. The immense volume of data generated by these devices requires a distributed architecture to ensure effective data storage and processing. Consequently, combining Cloud Computing (CC) with IoT has proven highly beneficial, since the substantial data produced by IoT devices demand the flexible storage capabilities and virtual resource provisioning that cloud platforms provide [1]. In turn, cloud platforms provide substantial computational assets to IoT infrastructures, including processing capabilities and data storage facilities. However, this paradigm has certain drawbacks, such as communication delays between IoT endpoints and remote cloud servers.

Both cloud and IoT technologies have undergone significant advancements, and their features frequently complement each other. In fact, cloud platforms can provide effective mechanisms for managing IoT services by enabling applications that leverage connected devices and their generated data. Conversely, cloud infrastructures gain advantages from IoT integration by broadening their reach to interact with physical objects in a more decentralized and adaptive way.

2.2. Distributed Cloud Architecture: Fog Computing vs. Edge Computing

Cloud Computing refers to a computing model in which distant servers handle processing and data storage resources. A key feature is flexibility: providers can automatically adjust resources according to customer needs. To reduce resource waste associated with the cloud, a new technology known as “fog” or “edge” computing has been introduced through collaborative efforts between industrial practitioners and academic researchers [3].

Fog Computing (FC) and Edge Computing (EC) bring cloud computing capabilities closer to the network periphery. Both approaches aim to perform immediate computation on data produced by connected devices near the network boundary, thereby substantially minimizing response delays. The key distinction between fog and edge computing lies in the type of IT equipment used. Edge computing typically executes at endpoint devices themselves (compute resources reside within the connected object), whereas fog computing relies on separate network intermediate nodes in the IT infrastructure, including IoT gateway devices [5]. This distributed continuum is often conceptualized as a three-layer architecture that forms the basis of the testing model:

Core Cloud: The central component of this model, possessing full traditional cloud functionalities such as administration and delivery of cloud-based services and data, encompassing the complete range of cloud offerings.

Regional Cloud: Facilitates inter-layer communication through data exchange across architectural tiers.

Edge Cloud: Positioned in proximity to end-users, enabling direct interaction with customers. Fog computing represents a typical implementation of this edge layer.

In general, the core cloud operates at the highest scale within the upper tier, while the regional cloud functions at a reduced scale offering intermediary services such as mobility support, automated service deployment, and caching mechanisms. The edge cloud represents the smallest tier positioned nearest to end-users, capable of integrating device characteristics, service requirements, geographic parameters, and user preferences to provision and deliver time-sensitive cloud services.

This layered view is essential for the testing foundations developed next: once computation and service delivery become stratified across core/regional/edge, testing and observation are also naturally distributed, and the cost of coordination becomes intertwined with the underlying communication fabric that connects these layers.

2.3. Distributed Conformance Testing

Conformance testing is a fundamental technique used to verify whether a system implementation conforms to its formal specification. It is typically applied in a black-box setting, where the internal functioning of the Implementation Under Test (IUT) remains hidden, and verification relies exclusively on monitoring input stimuli and output responses. In the context of distributed systems, conformance testing introduces unique challenges due to parallelism and geographic dispersion. Two essential properties determine the feasibility of testing such systems:

Observability: the ability to determine system outputs and their execution order at observation points.

Controllability: the ability to apply input sequences that generate specific, expected outputs at corresponding interfaces.

Lack of synchronization among distributed testers can severely impact both properties. Without coordination, testers may be unable to determine the causal order of events or to synchronize the timing of input and output operations. As a result, observability problems arise when a tester cannot determine when to start or stop waiting for a response, while synchronization problems occur when inputs at different ports cannot be applied in the correct order [6].

Traditional solutions rely on explicit coordination messages among testers to maintain global synchronization and event order. However, these messages significantly increase network traffic and energy consumption, making traditional testing methods inefficient for large-scale or dynamic distributed systems, particularly in fog and edge environments where resources are constrained and network conditions can vary.

2.4. Related Work

Numerous studies have investigated testing distributed applications in distributed cloud and fog environments. A decentralized cloud model integrating edge computing, mobile edge computing, and cloudlets has been proposed to bring cloud services closer to mobile devices, with discussion of the benefits, applications, and challenges associated with distributed cloud environments [7]. Testing perspectives for smart applications under fog computing have also been reviewed by presenting the fundamental concepts underlying fog computing architecture and evaluating various testing methodologies and their results within intelligent residential, healthcare, and transportation application areas [8].

Federated learning has been explored as a privacy-preserving paradigm for IoT environments. A lightweight federated learning perspective summarizes enabling technologies, protocols, platforms, real-life use cases, and key research challenges [9]. Federated deep reinforcement learning has been studied through service-based approaches that adapt Deep Q-learning and introduce a federated DQN (FDQN) for tasks such as anomaly detection and attack classification [10]. Trust assessment has also been integrated into a Dynamic-DQN reinforcement-learning scheduling policy for federated IoT edge computing, accounting for trust levels and energy limitations in task allocation decisions [11].

Several works address coordination issues in distributed testing. In this context, automated test-based verification for microservices systems is presented in [12]. Furthermore, performance testing and fault-tolerance mechanisms as a service in cloud environments are comprehensively surveyed in [13,14].

Contemporary studies have significantly advanced the integration of machine learning and optimization methods for testing and coordination purposes within distributed architectures. A multi-agent deep reinforcement learning framework is proposed for microservices testing, demonstrating improved fault detection in containerized environments [1]. An adaptive test coordination mechanism using Q-learning is introduced for IoT systems [15]. In federated learning, a proximal variant is proposed to address heterogeneity in distributed optimization [16], while communication-efficient federated aggregation provides a foundational baseline [17]. In addition, partial-order reduction methods [18] and constraint-based approaches [19] have been explored for test optimization, but they typically assume static environments with predetermined coordination points.

Despite these advances, few studies leverage modern AI to address coordination decisions in distributed conformance testing where coordination is required to mitigate observability and synchronization issues, yet coordination messages introduce non-trivial communication overhead. Existing solutions typically depend on static or predefined synchronization protocols that lack adaptability to dynamic network conditions. Moreover, although federated learning can enable decentralized optimization without centralized data sharing, federated learning-based coordination for distributed testers remains underexplored in this setting. To fill this gap, our work introduces a Federated Deep Q-Network approach for distributed cloud testing that combines the decision-making capabilities of Deep Q-Networks with the privacy-preserving collaboration of Federated Learning. This integration enables distributed testers to learn adaptive coordination strategies, reducing communication overhead while preserving accuracy and conformance in large-scale, fog-enabled environments.

3. Adaptive Test Coordination Methodology

To address the problem stated in Section 1, we propose a novel distributed cloud testing architecture that integrates our Federated Deep Q-Network approach.

3.1. Proposed System Architecture

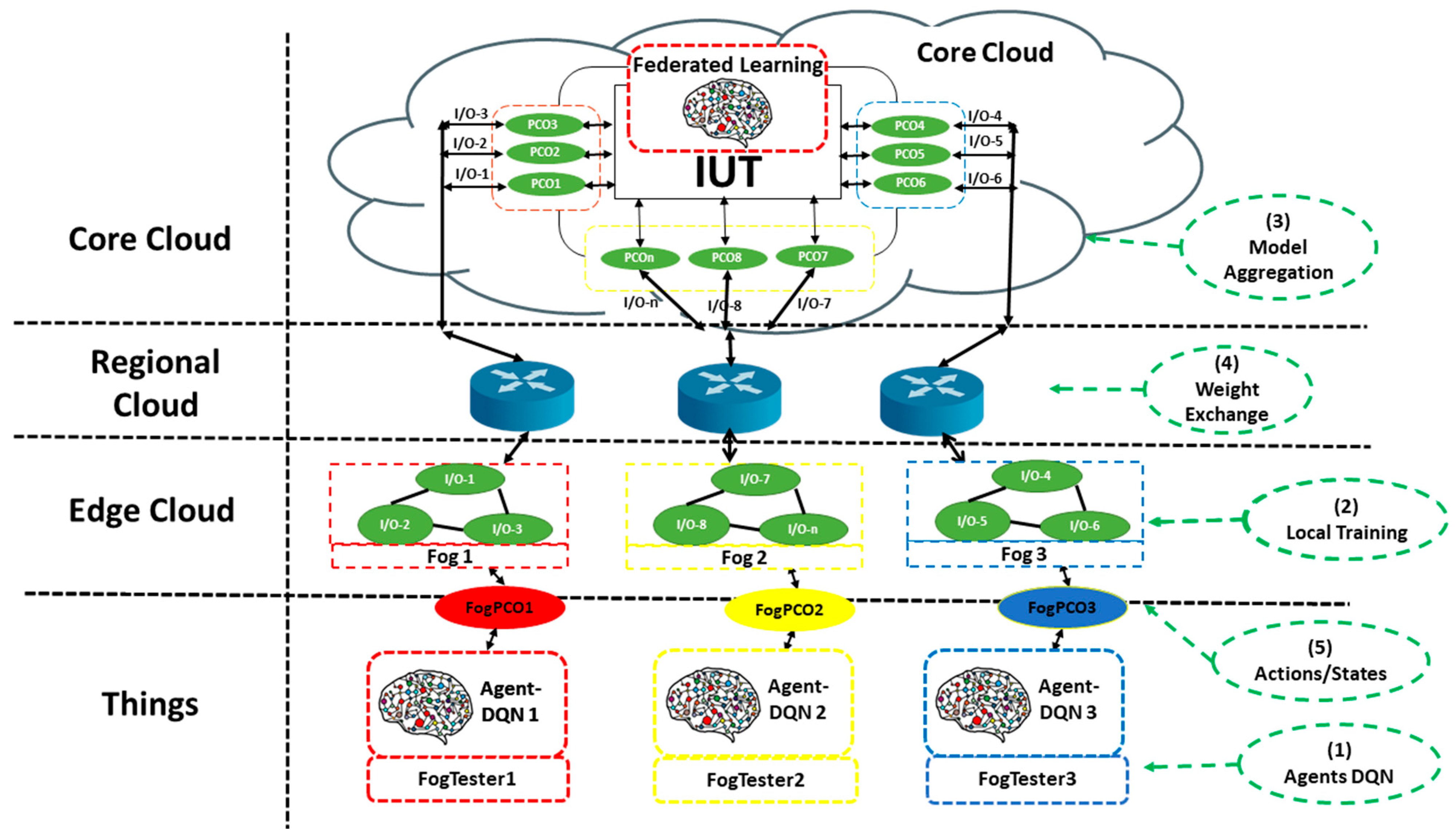

To evaluate the Implementation Under Test (IUT) within a heterogeneous and distributed environment, we propose the 4-layer hierarchical architecture illustrated in Figure 1. This architecture is designed to map our federated DQN solution onto a realistic Fog/Cloud infrastructure.

Things Layer: This foundational layer comprises the distributed testers. Each tester is an autonomous entity that hosts a DQN Agent. These agents are responsible for making real-time, local coordination decisions and executing their portion of the test sequence.

Edge Cloud Layer: This layer consists of the fog infrastructure. These fog nodes are geographically distributed and co-located with the testers. Each fog node contains multiple I/O components and is governed by a Fog-PCO. These Fog-PCOs are responsible for monitoring and ensuring coordination with higher-level components (linking Fog-Testers to the Edge Cloud layer).

Regional Cloud Layer: This intermediary layer consists of network routers and regional aggregators. Its primary function is to bridge the edge deployments with the central cloud. It aggregates data and model updates from the Edge Cloud’s PCOs, reducing the communication load on the Core Cloud.

Core Cloud Layer: This layer hosts the central elements of the architecture. These include the IUT, regarded as an opaque entity that can be accessed through a collection of geographically distributed PCOs. The Core Cloud layer also hosts several additional PCOs, which function as communication bridges connecting the edge layer to the centralized learning component. These PCOs receive updates from the regional layer, perform preprocessing, and support the federated training pipeline.

In this architecture, each DQN agent (tester) interacts with the IUT. The I/O from the IUT is routed through the regional and edge layers to the corresponding Fog-Tester.

During training, each DQN agent collects local experiences (states, actions, rewards) at the Things layer. These experiences are used for local training at the Edge Cloud layer within each Fog node. Periodically, model weights are sent to the Core Cloud for federated aggregation, then redistributed to all agents.

3.2. Model Formalism

Definition 1. System Components.

The distributed testing environment is represented by the following sets:

$F = \{f_1, f_2, \dots, f_n\}$: set of fog nodes

$T = \{t_1, t_2, \dots, t_n\}$: set of testers, where $t_i$ is attached to fog node $f_i$

$P = \{p_1, p_2, \dots, p_m\}$: set of PCOs

$R = \{r_1, r_2, \dots, r_k\}$: set of regions in the distributed cloud

Two mapping functions are defined: one maps PCOs to regions ($P \to R$), and the other maps regions to fog nodes ($R \to F$).

Definition 2. Traditional vs. DQN-Enhanced Test Sequences.

A Local Test Sequence (LTS) for a tester $t_i$ is defined as a finite ordered sequence of test actions:

$$LTS_i = \langle a_1, a_2, \dots, a_\ell \rangle$$

where each $a_k$ is one of:

$!x$: sending input $x$ to the IUT

$?y$: receiving output $y$ from the IUT

$!C_j$ / $?C_j$: sending/receiving a synchronization message to/from tester $t_j$

$!O_j$ / $?O_j$: sending/receiving an observation message to/from tester $t_j$

This conventional model relies on explicit coordination messages, which increase network traffic and synchronization delay. Our DQN-Enhanced LTS replaces these static coordination messages with a dynamic decision function $D_i$. The sequence becomes:

$$LTS_i^{DQN} = \langle a'_1, a'_2, \dots, a'_\ell \rangle$$

where each $a'_k$ belongs to:

$$\{\,!x,\ ?y,\ D_i(s)\,\}$$

and $D_i(s)$ is a decision function evaluating state $s$. At points requiring coordination, the agent decides the optimal action based on the current state $s$.

We model the sequential decision-making process of each tester $t_i$ as a tuple $\langle S_i, A_i, P_i, R_i, \lambda \rangle$, where $S_i$ is the state space, $A_i$ is the action space, $P_i$ is the state transition probabilities, $R_i$ is the reward function measuring testing performance, and $\lambda$ is the discount factor.

Definition 3. State Space $S_i$.

The state space $S_i$ for tester $t_i$ consists of states of the form:

$$s_i = (\mathit{pos}_i,\ \mathit{hist}_i,\ \mathit{wait}_i,\ \mathit{net}_i,\ \mathit{other}_i) \tag{3}$$

where:

$\mathit{pos}_i$: Current position in the test sequence

$\mathit{hist}_i$: History of messages sent/received so far

$\mathit{wait}_i$: Wait time for expected messages

$\mathit{net}_i$: Network condition metrics for relevant fog connections

$\mathit{other}_i$: Historical information about other testers from past coordination events

It is important to note that $\mathit{other}_i$ does not represent a real-time view obtained through continuous communication with other testers. Rather, it represents historical information about other testers from past coordination events, maintained locally by tester $t_i$. The construction and update of $\mathit{other}_i$ occur without continuous data exchange:

Initialization: $\mathit{other}_i$ is initialized as empty or with known initial system states.

Event-driven update: $\mathit{other}_i$ is updated only when a coordination message is sent or received. The tester updates its historical knowledge about the relevant tester based on the message content.

This mechanism ensures that maintaining $\mathit{other}_i$ incurs no additional communication overhead, as updates occur exclusively through coordination messages that are already selectively exchanged based on the learned policy.
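The event-driven update described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the class `TesterState`, its field names, and the message payload format are all hypothetical. The only property it is meant to show is that knowledge about other testers changes exclusively when a coordination message is sent or received.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class TesterState:
    """Local state of one tester; all field names are illustrative assumptions."""
    position: int = 0                                    # current index in the test sequence
    history: List[str] = field(default_factory=list)     # messages sent/received so far
    wait_time: float = 0.0                               # time spent waiting for expected messages
    network: Dict[str, float] = field(default_factory=dict)  # network metrics for fog links
    others: Dict[str, dict] = field(default_factory=dict)    # last known info about other testers

    def on_local_step(self) -> None:
        """Advance in the test sequence; knowledge about other testers is untouched."""
        self.position += 1

    def on_coordination_message(self, peer_id: str, direction: str, payload: dict) -> None:
        """Event-driven update: runs only when a coordination message is exchanged."""
        self.history.append(f"{direction}:{peer_id}")
        self.others[peer_id] = dict(payload)  # overwrite with the most recent information
```

Because `others` is only written inside `on_coordination_message`, keeping it up to date adds no messages beyond those the policy already chose to exchange.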

Definition 4. Action Space.

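The action space $A_i$ for tester $t_i$ includes:

$$A_i = \{\mathit{send}_j,\ \mathit{wait}_j,\ \mathit{skip},\ \mathit{input}_x,\ \mathit{check}_y\} \tag{4}$$

where:

$\mathit{send}_j$: Send coordination message to tester $t_j$

$\mathit{wait}_j$: Wait for coordination message from tester $t_j$

$\mathit{skip}$: Skip coordination (proceed without explicit coordination)

$\mathit{input}_x$: Send input $x$ to the IUT

$\mathit{check}_y$: Check for output $y$ from the IUT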

Definition 5. Reward Function $R_i$.

The reward function $R_i: S_i \times A_i \times S_i \to \mathbb{R}$ for tester $t_i$ guides the agent toward optimal behavior. It is a weighted sum designed to balance conflicting objectives:

$$R_i(s, a, s') = \alpha R_{seq} + \beta R_{coord} + \gamma R_{comp} + \delta R_{fault} \tag{5}$$

where:

$R_{seq}$: Reward for maintaining correct test sequence (+1 if correct, −1 if incorrect)

$R_{coord}$: Reward based on reduction in coordination messages compared to traditional approach (−0.1 per coordination message)

$R_{comp}$: Reward for test completion (+10 if test completes successfully)

$R_{fault}$: Reward for correctly detecting faults (+5 for each correctly detected fault)

α, β, γ, δ: Weighting coefficients
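As an illustration of how the four components combine, the following sketch computes the weighted sum for a single step. The per-component values (+1/−1, −0.1 per message, +10, +5) are taken from the definition above; the function name, argument names, and the default unit weights are assumptions made only for this example, since the text does not state the coefficient values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardWeights:
    """Weighting coefficients; the unit defaults are an assumption, not from the paper."""
    alpha: float = 1.0   # weight of the sequence-correctness term
    beta: float = 1.0    # weight of the coordination-cost term
    gamma: float = 1.0   # weight of the completion term
    delta: float = 1.0   # weight of the fault-detection term


def step_reward(sequence_correct: bool,
                coordination_messages: int,
                test_completed: bool,
                faults_detected: int,
                w: RewardWeights = RewardWeights()) -> float:
    """Weighted sum of the four reward components described in Definition 5."""
    r_seq = 1.0 if sequence_correct else -1.0     # +1 if correct, -1 if incorrect
    r_coord = -0.1 * coordination_messages        # -0.1 per coordination message
    r_comp = 10.0 if test_completed else 0.0      # +10 on successful completion
    r_fault = 5.0 * faults_detected               # +5 per correctly detected fault
    return w.alpha * r_seq + w.beta * r_coord + w.gamma * r_comp + w.delta * r_fault
```

With unit weights, a correct step that used two coordination messages, completed the test, and detected one fault is worth 1 − 0.2 + 10 + 5 = 15.8. Raising `beta` relative to the other weights makes the agent more reluctant to coordinate.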

Definition 6. Q-Value Function and Decision Function.

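The Q-value function $Q_i: S_i \times A_i \to \mathbb{R}$ represents the expected cumulative future reward for tester $t_i$ taking action $a$ in state $s$:

$$Q_i(s, a) = \mathbb{E}\left[\sum_{k=0}^{\infty} \lambda^{k}\, r_{t+k+1} \;\middle|\; s_t = s,\ a_t = a\right] \tag{6}$$

where λ ∈ [0, 1] is the discount factor for future rewards. The optimal decision function $D_i(s)$ simply selects the action that maximizes this Q-value; it determines the optimal action for tester $t_i$ in state $s$:

$$D_i(s) = a^{*} = \arg\max_{a \in A_i} Q_i(s, a) \tag{7}$$

The output of the decision function can be mapped to coordination actions:

$$D_i(s) \mapsto \begin{cases} \varepsilon & \text{if } a^{*} = \mathit{skip} \\ !C_j \text{ or } !O_j & \text{if } a^{*} = \mathit{send}_j \\ ?C_j \text{ or } ?O_j & \text{if } a^{*} = \mathit{wait}_j \end{cases}$$

where ε represents skipping coordination.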

3.3. DQN-Based Decision Making

Our methodology is built upon the Deep Q-Network (DQN) algorithm. A DQN approximates the optimal action-value function $Q^{*}(s, a)$, which represents the maximum expected cumulative reward achievable from state $s$ by taking action $a$. We first present the foundational DQN algorithm and then detail its integration into our federated architecture in the following sections. At its core, each tester learns its policy using a process based on the standard DQN algorithm. This algorithm iteratively gathers experience by interacting with an environment and optimizes an action-value function $Q$ to find the best policy.

Algorithm 1 details this fundamental process, where M denotes the number of training episodes (150), T the maximum steps per episode (15), N the replay memory capacity (2000), and C the target network update frequency (100 steps). In a centralized model, a single agent would interact with the environment, store all transitions in a unified replay memory D, and perform gradient descent to update a single Q-network. We describe below the key steps of this algorithm:

Step 1 (line 1 to line 3): Initialization of the replay memory D, the Q-network weights θ, and the target network.

Step 2 (line 7 to line 15): This step ensures the balance between exploration and exploitation. With probability ε, tester $t_i$ selects a random action from the action space (Equation (4)), enabling the discovery of new coordination strategies. With probability (1 − ε), it selects the optimal action according to Equation (7), based on the current state (Equation (3)). The parameter ε decreases progressively, allowing a transition from exploration to exploitation as learning progresses.

Step 3 (line 16): To ensure training stability, DQN uses a separate target network parameterized by $\theta^{-}$. The target values (line 13) are computed from this target network, which remains fixed for C steps before being synchronized with the main network ($\theta^{-} \leftarrow \theta$).

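Algorithm 1. DQN Training for Distributed Test Coordination

1. Initialize replay memory $D$ to capacity $N$
2. Initialize action-value function $Q$ with random weights $\theta$
3. Initialize target action-value function $\hat{Q}$ with weights $\theta^{-} = \theta$
4. for episode = 1 to $M$ do
5. Initialize state $s_i$ for each tester $t_i$
6. for $t$ = 1 to $T$ do
7. for each tester $t_i$ do
8. With probability $\epsilon$ select a random action $a_i$
9. Otherwise select $a_i = \arg\max_{a} Q(s_i, a; \theta)$
10. Execute action $a_i$ and observe reward $r_i$ and next state $s'_i$
11. Store transition $(s_i, a_i, r_i, s'_i)$ in $D$
12. Sample random minibatch of transitions $(s_j, a_j, r_j, s'_j)$ from $D$
13. Set $y_j = r_j$ if $s'_j$ is terminal, otherwise $y_j = r_j + \lambda \max_{a'} \hat{Q}(s'_j, a'; \theta^{-})$
14. Perform gradient descent step on $(y_j - Q(s_j, a_j; \theta))^2$ with respect to $\theta$
15. end for
16. Every $C$ steps reset $\hat{Q} = Q$
17. end for
18. end for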
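Two pieces of Algorithm 1 are easy to get subtly wrong: the ε-greedy action choice and the computation of the target values from the frozen target network. The sketch below isolates both in plain Python, with no neural network involved. The function names, the transition tuple layout (including an explicit terminal flag), and the multiplicative ε decay schedule are assumptions made for illustration; the source only states that ε decreases progressively.

```python
import random
from typing import Callable, List, Sequence, Tuple

# (state, action, reward, next_state, done); the layout is an illustrative assumption
Transition = Tuple[Sequence[float], int, float, Sequence[float], bool]


def epsilon_greedy(q_values: Sequence[float], epsilon: float, rng: random.Random) -> int:
    """Pick a random action with probability epsilon, else the highest-valued one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])


def td_targets(batch: Sequence[Transition],
               target_q: Callable[[Sequence[float]], Sequence[float]],
               discount: float) -> List[float]:
    """Target values y_j computed from the frozen target network `target_q`."""
    targets = []
    for _state, _action, reward, next_state, done in batch:
        if done:
            targets.append(reward)  # no bootstrapping past a terminal state
        else:
            targets.append(reward + discount * max(target_q(next_state)))
    return targets


def decay_epsilon(epsilon: float, rate: float = 0.995, floor: float = 0.05) -> float:
    """Multiplicative decay with a lower bound (schedule and constants are assumed)."""
    return max(floor, epsilon * rate)
```

In a full training loop, `target_q` would be the forward pass of the target network, and the returned targets would feed the squared-error loss against the main network's predictions for the sampled actions.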

4. Federated Training of the Coordination Model

Training the distributed DQN agents presents a significant challenge. A centralized approach, where all testers send their raw experience data (states, actions, rewards) to a central server, would violate data privacy and reintroduce the very network bottlenecks we aim to solve. Therefore, we adopt a Federated Learning (FL) approach. In our federated model, each Fog-Tester acts as an FL client. It trains its local DQN model using only its own private data $D_k$. Periodically, instead of sending data, it sends its updated model parameters (weights) to the central FL server in the Core Cloud. The server aggregates these parameters to create an improved global model and distributes it back to the testers. This preserves privacy and enhances efficiency. This process iterates through three steps:

Local Model Update: Each fog node $f_k$ updates its local model parameters $\theta_k$ using its private local dataset $D_k$:

$$\theta_k^{r+1} = \theta_k^{r} - \mu \nabla L(\theta_k^{r}; D_k)$$

where µ is the local learning rate and ∇L is the gradient of the loss function with respect to the local data $D_k$.

Global Model Aggregation: After local training, the central server aggregates the updated local models from all participating testers to compute the new global model $\theta^{r+1}$:

$$\theta^{r+1} = \sum_{k} \frac{n_k}{n}\, \theta_k^{r+1}, \qquad n = \sum_{k} n_k$$

where $n_k$ is the size of the local dataset at node $f_k$.

Model Distribution: Finally, the server distributes the new, aggregated global model back to all participating testers. Each tester then overwrites its local model parameters with the updated global parameters before beginning the next round of testing and local training:

$$\theta_k^{r+1} \leftarrow \theta^{r+1}$$

While Algorithm 1 describes a centralized model, our architecture (Figure 1) is inherently distributed. To train a global policy with parameters $\theta$ using the local data $D_k$ from each Fog-Tester, we employ a federated learning orchestration as detailed in Algorithm 2. This high-level process is managed by the Aggregator Server. It distributes the current global model $\theta^{r}$ to a set of participating fog nodes $S_r$. Each node then computes a local update $\theta_k$ (a new set of model weights) and sends it back. The server performs a weighted average to produce the next iteration of the global model.

In Algorithm 2, R denotes the number of federated rounds (4), F the set of all fog nodes, $S_r$ the subset of fog nodes selected for round r, and $n_k$ the size of the local dataset at node $f_k$.

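Algorithm 2. Federated Learning for DQN in Distributed Cloud Testing

1. Initialize global model $\theta^{0}$ with random weights
2. for each round r = 1, 2, …, R do
3. Select set $S_r \subseteq F$ of fog nodes to participate in this round
4. for each fog node $f_k \in S_r$ in parallel do
5. $\theta_k \leftarrow \theta^{r-1}$ (Set local model to global model)
6. $\theta_k \leftarrow$ LocalUpdate($\theta_k$, $D_k$)
7. Send $\theta_k$ to server
8. end for
9. $\theta^{r} \leftarrow \sum_{f_k \in S_r} \frac{n_k}{n}\, \theta_k$, with $n = \sum_{f_k \in S_r} n_k$ (Update global model)
10. Distribute $\theta^{r}$ to all fog nodes
11. end for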

Note: $S_i$ denotes the state space of tester $t_i$, while $S_r$ in Algorithm 2 denotes the set of fog nodes selected for round r.
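The aggregation step of Algorithm 2 is a dataset-size-weighted average of the local weight vectors. A minimal sketch, assuming each model is flattened into a list of floats and keyed by a hypothetical node identifier (neither convention comes from the source):

```python
from typing import Dict, List, Sequence


def fed_avg(local_weights: Dict[str, Sequence[float]],
            sample_counts: Dict[str, int]) -> List[float]:
    """Weighted average of local models; node k contributes with share n_k / n."""
    total = sum(sample_counts[node] for node in local_weights)
    if total <= 0:
        raise ValueError("participating nodes must hold at least one sample in total")
    dim = len(next(iter(local_weights.values())))
    global_weights = [0.0] * dim
    for node, weights in local_weights.items():
        if len(weights) != dim:
            raise ValueError(f"weight vector of {node} has the wrong length")
        share = sample_counts[node] / total
        for j, value in enumerate(weights):
            global_weights[j] += share * value
    return global_weights
```

For example, a node holding three times as many transitions as another pulls the global model three times as strongly toward its own weights, so zones that generate more test traffic dominate the merged policy unless the selection in each round compensates for it.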

The LocalUpdate($\theta$, $D_k$) function (called in Algorithm 2) is the core client-side operation. This process consists of two conceptual phases, which are described in Algorithm 1 but are now executed locally in the FL context:

Experience Gathering: Each client uses its local model to interact with its part of the IUT, gathering new transitions and storing them in its private local replay memory $D_k$.

Local Training: After gathering experience, the client trains its local model by performing E local epochs of gradient descent over batches from its local data $D_k$.

In Algorithm 3, E denotes the number of local epochs, B the number of batches, and µ the local learning rate (0.001).

Algorithm 3. LocalUpdate($\theta$, $D_k$)

1. Split local dataset $D_k$ into B batches
2. for each local epoch e = 1, 2, …, E do
3. for each batch b = 1, 2, …, B do
4. Compute gradient $g = \nabla L(\theta; b)$
5. Update local model: $\theta \leftarrow \theta - \mu \cdot g$
6. end for
7. end for
8. Return $\theta$
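The control flow of Algorithm 3 can be sketched without a deep-learning framework. In the example below a linear model with a mean-squared-error loss stands in for the Q-network, purely so that the gradient can be written by hand; this substitution, the function name `local_update`, and the `(features, target)` sample layout are assumptions for illustration only.

```python
from typing import List, Sequence, Tuple

Sample = Tuple[Sequence[float], float]  # (features, regression target); assumed layout


def local_update(weights: Sequence[float],
                 dataset: Sequence[Sample],
                 epochs: int,
                 batch_size: int,
                 lr: float = 0.001) -> List[float]:
    """E epochs of mini-batch gradient descent on a linear model (stand-in for the DQN)."""
    w = list(weights)  # work on a copy so the caller's global model is not mutated
    batches = [dataset[i:i + batch_size] for i in range(0, len(dataset), batch_size)]
    for _ in range(epochs):
        for batch in batches:
            grad = [0.0] * len(w)
            for x, y in batch:
                error = sum(wj * xj for wj, xj in zip(w, x)) - y
                for j, xj in enumerate(x):
                    grad[j] += 2.0 * error * xj / len(batch)  # d/dw of mean squared error
            w = [wj - lr * gj for wj, gj in zip(w, grad)]     # theta <- theta - mu * g
    return w
```

Returning a fresh list rather than updating in place mirrors the federated setting: the client starts each round from the received global weights and sends back only the result.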

Once the federated training converges, the final, optimized global model is deployed across all Fog-Testers. During runtime, each tester uses its Q-network to select optimal coordination actions based on the current system state, as described in Algorithm 4. In this pseudocode, $s$ denotes the current state of tester $t_i$, $A_i$ the action space, and $Q_i(s, a)$ the learned Q-value for a state-action pair. The function returns the coordination command: ε (skip), $!C_j$/$!O_j$ (send a synchronization or observation message to tester $t_j$), or $?C_j$/$?O_j$ (wait for such a message from $t_j$). We describe below the key steps of Algorithm 4:

Line 3: This step computes the optimal action by selecting $a^{*} = \arg\max_{a \in A_i} Q_i(s, a)$. The trained Q-network evaluates all possible actions in the action space and returns the one with the highest expected cumulative reward.

Lines 4–10: These steps translate the selected action into a concrete coordination command:

- If $a^{*} = \mathit{skip}$: return ε to skip coordination (line 5)
- If $a^{*} = \mathit{send}_j$: return $!C_j$ or $!O_j$ to send a message to tester $t_j$ (line 7)
- If $a^{*} = \mathit{wait}_j$: return $?C_j$ or $?O_j$ to wait for a message from $t_j$ (line 9)
- Otherwise: return the appropriate input/output action with the IUT.

Algorithm 4. Decision Function Implementation

1. Input: Current state $s$ of tester $t_i$
2. Evaluate $Q_i(s, a)$ for every $a \in A_i$ (query the Q-network)
3. $a^{*} \leftarrow \arg\max_{a \in A_i} Q_i(s, a)$ (Get best action from Q-network)
4. if $a^{*} = \mathit{skip}$ then
5. return ε (skip coordination)
6. else if $a^{*} = \mathit{send}_j$ then
7. return $!C_j$ or $!O_j$ (send coordination message to $t_j$)
8. else if $a^{*} = \mathit{wait}_j$ then
9. return $?C_j$ or $?O_j$ (wait for coordination message from $t_j$)
10. else return appropriate action for input/output
11. end if
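A runtime version of the decision function might look like the sketch below. It assumes the Q-network output has already been collected into a dictionary keyed by `(kind, target)` pairs, and it returns simple command strings; both conventions are hypothetical and chosen only to keep the example self-contained.

```python
from typing import Dict, Tuple

Action = Tuple[str, str]  # (kind, target), e.g. ("send", "t2"); an assumed encoding


def decide(q_values: Dict[Action, float]) -> str:
    """Select the highest-valued action and translate it into a coordination command."""
    if not q_values:
        raise ValueError("no actions to choose from")
    kind, target = max(q_values, key=q_values.get)   # argmax over the action space
    if kind == "skip":
        return "skip"                # proceed without explicit coordination
    if kind == "send":
        return f"send:{target}"      # send a coordination message to the target tester
    if kind == "wait":
        return f"wait:{target}"      # wait for a coordination message from the target
    return f"io:{kind}:{target}"     # input/output action with the IUT
```

When two actions share the highest Q-value, `max` keeps the first one in dictionary order, so the order in which actions are inserted acts as a tie-breaker.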

5. Case Study: VR-Assist Rental Testing with DQN and Federated Learning

5.1. Overview

Building upon the foundational fog-based testing architecture proposed in [20], this work introduces a significant enhancement: the integration of intelligent, learning-based agents at the Fog-Tester level. The core objective remains the minimization of coordination messages; however, instead of relying on static, pre-defined Local Test Sequences with embedded synchronization commands, our approach employs Deep Q-Networks (DQNs) to learn an optimal coordination policy dynamically based on real-time system and network state.

Each Fog-Tester is now an autonomous DQN agent responsible for a specific geographical area (e.g., Downtown, Midtown, Suburbs). Its primary decision is binary: to coordinate with other fog nodes or to act independently when interacting with the IUT in the core cloud. The state space for each agent is designed to capture the essential context of a testing step, including:

step: The index of the current step in the test sequence.

query_type: The type of user request (SIMPLE, CROSS_AREA, PEAK_LOAD, CONGESTED, BULK).

cross_area: A boolean indicating if the request spans multiple geographical areas.

network_congestion: A continuous value representing current network congestion.

peak_hours: A boolean indicating if the request occurs during peak load hours.

system_load: The current computational load on the local fog node.

complexity: The inherent complexity of the request.

The action space is {0, 1}, representing Do not coordinate and Coordinate, respectively. The reward function is carefully crafted to penalize unnecessary coordination (which consumes bandwidth and increases latency) and reward intelligent independent actions or necessary coordination that prevents test failures.
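The state and action spaces described above can be sketched as a small data structure. This is a minimal illustration, not the authors' encoding: the `FogState` class, the integer encoding of `query_type`, and the action labels are assumptions introduced here.

```python
from dataclasses import dataclass

# Query types as listed in the state description.
QUERY_TYPES = ("SIMPLE", "CROSS_AREA", "PEAK_LOAD", "CONGESTED", "BULK")

# Binary action space {0, 1}; the string labels are illustrative.
ACTIONS = {0: "do_not_coordinate", 1: "coordinate"}


@dataclass
class FogState:
    """Hypothetical container for the seven state features."""
    step: int
    query_type: str
    cross_area: bool
    network_congestion: float
    peak_hours: bool
    system_load: float
    complexity: float

    def to_vector(self) -> list[float]:
        # Assumption: query_type is encoded as its index in QUERY_TYPES
        # and booleans as 0.0 / 1.0.
        return [
            float(self.step),
            float(QUERY_TYPES.index(self.query_type)),
            float(self.cross_area),
            self.network_congestion,
            float(self.peak_hours),
            self.system_load,
            self.complexity,
        ]
```

A state built this way yields a 7-element vector, matching the seven features in the list; other encodings (for instance one-hot query types) would change the input width.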

To address data privacy and leverage the distributed nature of the fog architecture, we implement Federated Learning (FL) at the regional cloud layer. The Fog-Testers (clients) train their DQN models locally on their specialized experiences. Periodically, their model weights are sent to the regional cloud, where a Federated Averaging (FedAvg) algorithm aggregates them to form an improved global model. This global model is then distributed back to all Fog-Testers. This process ensures that each agent benefits from the collective learning of all nodes without ever sharing raw, potentially sensitive local data, enhancing both performance and privacy.

5.2. Methodology

The VR-assist rental service was deployed across three distinct geographical zones, each with unique operational characteristics mimicking real-world scenarios:

Downtown Zone: Characterized by high network congestion (congestion ~ 0.6–0.9), frequent peak hours, and complex, multi-criteria property searches. The dominant query types are CONGESTED and CROSS_AREA.

Midtown Zone: Experiences moderate and variable network conditions (congestion ~ 0.4–0.8). The query load is a balanced mix of SIMPLE and CROSS_AREA types.

Suburbs Zone: Features stable network conditions (congestion ~ 0.1–0.4) and lower system load. Requests are predominantly SIMPLE or BULK queries for larger properties.

Each Fog-Tester agent was initially trained on episodes tailored to its zone’s profile, leading to specialized policy learning. Federated averaging then merged these specialized policies into a robust global model.

Table 1 provides examples of states, actions, and rewards encountered by the DQN agents in different zones, demonstrating the learned policy.

The DQN agents were implemented using Python (3.10.0) with TensorFlow (2.19.0)/Keras (3.10.0). The neural network architecture consists of an input layer with 7 neurons corresponding to the state space (step, query_type, cross_area, network_congestion, peak_hours, system_load, and complexity), three hidden layers with 64, 32, and 16 neurons, respectively, using ReLU activation, and an output layer with 2 neurons using linear activation for Q-values.
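As a sanity check on the stated architecture, the following framework-free sketch counts the trainable parameters of a 7-64-32-16-2 fully connected network and runs one forward pass with ReLU hidden layers and a linear output. The random initialization range and all function names are assumptions for illustration; the study itself used TensorFlow/Keras.

```python
import random

# Input, three hidden layers, output, as described in the text.
LAYER_SIZES = [7, 64, 32, 16, 2]


def count_parameters(sizes: list[int]) -> int:
    # Each dense layer has n_in * n_out weights plus n_out biases.
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes, sizes[1:]))


def init_network(sizes: list[int], seed: int = 0):
    # Untrained weights drawn uniformly from [-0.1, 0.1] (arbitrary choice).
    rng = random.Random(seed)
    return [
        ([[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)],
         [0.0] * n_out)
        for n_in, n_out in zip(sizes, sizes[1:])
    ]


def forward(network, state: list[float]) -> list[float]:
    activations = list(state)
    for index, (weights, biases) in enumerate(network):
        z = [sum(w * a for w, a in zip(row, activations)) + b
             for row, b in zip(weights, biases)]
        is_output = index == len(network) - 1
        # ReLU on hidden layers, linear activation on the Q-value output.
        activations = z if is_output else [max(0.0, v) for v in z]
    return activations


q_values = forward(init_network(LAYER_SIZES), [0.0] * 7)
```

Under these layer sizes the model has 3154 trainable parameters, which is also the size of the weight payload each Fog-Tester would exchange per federated round.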

The reward function defined in Equation (5) uses the following weighting coefficients: α = 0.3 (correctness), β = 0.3 (efficiency), γ = 0.2 (completion), and δ = 0.2 (fault detection). The training configuration uses the following hyperparameters: replay buffer capacity N = 2000, learning rate = 0.001, discount factor λ = 0.95, batch size = 32, initial exploration rate ε0 = 1.0 with decay rate 0.995 to minimum ε_min = 0.01, target network update frequency C = 100 steps, and Huber loss function. Each agent was trained for 150 episodes, with federated averaging performed every 30 local episodes over 4 rounds. Performance was measured over 50 separate test episodes to ensure unbiased evaluation.
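The configuration above can be collected in one place, as in the sketch below. Two points are assumptions rather than statements from the text: the reward is modeled as a plain weighted sum of four component scores (the exact form of Equation (5) is not restated here), and the exploration rate is decayed multiplicatively once per call. The class and function names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingConfig:
    replay_capacity: int = 2000
    learning_rate: float = 0.001
    discount: float = 0.95
    batch_size: int = 32
    epsilon_start: float = 1.0
    epsilon_decay: float = 0.995
    epsilon_min: float = 0.01
    target_update_steps: int = 100
    episodes: int = 150
    fed_interval: int = 30   # local episodes between federated rounds
    fed_rounds: int = 4


# Weighting coefficients alpha, beta, gamma, delta from the text.
REWARD_WEIGHTS = {
    "correctness": 0.3,
    "efficiency": 0.3,
    "completion": 0.2,
    "fault_detection": 0.2,
}


def weighted_reward(components: dict[str, float]) -> float:
    # Assumed form: linear combination of the four component scores.
    return sum(REWARD_WEIGHTS[name] * components[name] for name in REWARD_WEIGHTS)


def decays_until_min(cfg: TrainingConfig) -> int:
    # Number of multiplicative decays before epsilon drops below its floor.
    eps, n = cfg.epsilon_start, 0
    while eps > cfg.epsilon_min:
        eps *= cfg.epsilon_decay
        n += 1
    return n
```

With these values, `decays_until_min(TrainingConfig())` evaluates to 919, so how often the decay is applied (per training step or per episode) determines how much of the 150-episode budget is spent exploring.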

5.3. Results and Comparative Analysis

Figure 2 summarizes the training dynamics and convergence behavior of the centralized DQN and the proposed federated DQN in the fog setting. In Figure 2a, the centralized DQN exhibits the expected exploration-to-exploitation trajectory: cumulative reward starts negative during early exploration and increases steadily, reaching a stable plateau at an average reward of 142.3 after roughly 100 training episodes. Figure 2b reports the corresponding optimization signal, where the Huber loss decreases smoothly with limited oscillation, indicating stable temporal-difference updates. This behavior is consistent with the use of a target network updated periodically (every C = 100 steps), which mitigates moving-target instability during learning.

The federated setting in Figure 2c highlights both local adaptation and global knowledge sharing across the three fog zones (Downtown, Midtown, Suburbs). Each Fog-Tester learns a zone-specific policy, while periodic federated averaging rounds (R1–R4) synchronize model updates to propagate useful structure across clients. The resulting policies converge to a higher final performance, with a final average reward of 158.7, outperforming the centralized DQN baseline (142.3). This improvement suggests that combining localized learning (capturing zone-specific dynamics) with global aggregation yields more effective coordination policies than training a single centralized model.

Finally, Figure 2d quantifies the primary systems-level objective: reducing coordination overhead. As training progresses, agents increasingly skip unnecessary coordination actions, driving a sustained decrease in the average number of coordination messages per episode. The learned policies achieve 5.2 messages per episode on average, compared to 12.5 under the traditional LTS baseline, corresponding to a 58% reduction in coordination traffic. Importantly, the monotonic trend in message reduction mirrors reward stabilization, indicating that lower coordination is achieved through policy refinement rather than random action selection.
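The reported percentages follow directly from the figures quoted in this section, as the short calculation below shows. The helper names are illustrative; the input numbers are those reported in the text.

```python
def percent_reduction(baseline: float, value: float) -> float:
    """Relative decrease of value with respect to baseline, in percent."""
    return (baseline - value) / baseline * 100.0


def percent_gain(baseline: float, value: float) -> float:
    """Relative increase of value with respect to baseline, in percent."""
    return (value - baseline) / baseline * 100.0


# Coordination messages per episode: LTS baseline vs. learned policy.
message_reduction = round(percent_reduction(12.5, 5.2), 1)   # 58.4

# Final average reward: centralized DQN vs. federated DQN.
reward_gain = round(percent_gain(142.3, 158.7), 1)           # 11.5
```

The message reduction of 58.4% is what the text rounds to 58%, and the federated model's final reward is about 11.5% above the centralized baseline.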

Overall, these learning curves show consistent convergence for both centralized and federated training, and they provide evidence that the federated approach achieves superior reward while substantially reducing coordination overhead. We evaluate the Intelligent Federated DQN approach against (i) the traditional LTS-based method [20] and (ii) a centralized DQN baseline over 50 test episodes; Table 2 reports the corresponding quantitative comparison.

The results demonstrate a clear superiority of the learning-based approaches over the static method. The Federated DQN achieved a ~58% reduction in coordination messages compared to the traditional LTS approach, directly advancing the original paper’s goal of minimizing coordination messages.

This simultaneous reduction in coordination messages and test failure rate (from 18% to 4%) is explained by the over-coordination problem of traditional approaches: sending messages during high network congestion or system load causes timeouts and failures rather than preventing them. As illustrated in Table 1, the DQN learns to skip coordination in unfavorable conditions (e.g., Downtown with congestion = 0.9, Suburbs with PEAK_LOAD) while maintaining it when beneficial.

In fact, while the LTS method uses pre-defined, conservative coordination points, the DQN learns a more nuanced policy, coordinating only when necessary to ensure test correctness and performance. Furthermore, the federated approach’s performance is comparable to the centralized DQN in terms of failure rate and reward while providing the crucial added benefits of privacy preservation and specialization to diverse geographical zones. The ability to learn from each zone’s unique characteristics without exposing local data makes it a more scalable and secure solution for real-world distributed systems.

This case study validates that integrating federated learning into fog-based testing architectures is not only feasible but highly advantageous. The Intelligent Federated DQN successfully addresses the core challenge of coordination message optimization posed by [20], but does so with a dynamic, adaptive, and privacy-conscious strategy. The system moves from a rigid, rule-based paradigm to a learning-based one, able to optimize complex trade-offs between test validity, network utilization, and latency in real time. This lays the groundwork for future research into more complex multi-agent reinforcement learning schemes for fully autonomous validation of distributed cloud systems.

6. Conclusions

In summary, this paper has introduced a novel framework for distributed cloud testing that synergizes Deep Q-Networks (DQN) with federated learning. Our approach moves beyond traditional reliance on explicit coordination messages by deploying intelligent agents that learn nuanced decision functions. These functions dynamically determine the optimal moments and methods for coordination, enabling a more adaptive and efficient testing process tailored to the real-time state of the system.

The core innovation of our work lies in its integrated contributions: a unified mathematical model that formalizes distributed testing within fog computing environments, a privacy-conscious federated learning mechanism for training DQN agents across distributed nodes, and adaptive decision functions that minimize unnecessary coordination. The empirical validation through a detailed case study confirms that this integrated approach significantly enhances test execution efficiency while rigorously preserving, and even improving, fault detection capabilities compared to static coordination methods.

Looking ahead, this research opens several promising avenues. Future work will focus on extending the model’s applicability to performance and security testing within distributed clouds, ensuring its robustness across a broader spectrum of quality attributes. We also plan to investigate the potential of other advanced reinforcement learning algorithms to further optimize decision-making. Additionally, future comparisons with other coordination optimization strategies, such as partial-order reduction methods and constraint-based approaches, would provide a more comprehensive evaluation of our framework.

Furthermore, while our results demonstrate a simultaneous reduction in coordination messages (58%) and test failure rates (from 18% to 4%), this specific performance dynamic would benefit from more detailed empirical analysis in future work to further validate and characterize the conditions under which this dual improvement occurs.

Finally, the principles established here are not limited to IoT applications and present a significant opportunity for improving testing and coordination in other complex distributed systems.