1. Introduction

One of the basic requirements of digital preservation is maintaining the fixity of digital content—the property of a digital file or object being fixed or unchanged [

1]—because we need to have confidence that the objects we have in storage are the same as they were when we put them in there. However, the problem with a digital image is that it does not actually exist. What we usually call a digital image is a file with bitstream numeric representation of the image, which is raw data that the digital object consists of, for example, actual colour information for pixels in the digital raster image and headers or technical metadata used to determine the file format for image viewing software. To actually perceive it, we need to have access to that kind of software, be able to use it on some operating system/hardware, and finally have some output system that actually generates the image that we can see, be it a computer monitor, printer, or some kind of device that directly manipulates our optic nerves [

2]. Therefore, even if we maintain the fixity of the original file, we cannot cast aside the problem of maintaining other parts of the infrastructure needed to present it as an actual image [

3]. The purpose of this paper is to improve the relevancy of metrics used to validate digital images in long-term preservation by shifting the focus from files and data to the perceptive analysis of images. While it is a broad outline that needs further research, some existing technologies and tools can serve as the first steps in this direction.

Another important attribute of the digital image is its intrinsic non-uniqueness. The digital data lifecycle is a never-ending Theseus paradox: there are never ‘original’ bits, we only have copies. An important distinction here is that in the digital domain, we can assume that it is possible to have verifiable perfect bit-to-bit copies of objects, which means that in a sense, we know everything there is to know about the object we store. There is a catch though: the same image can be stored with completely different bitstream data, for example, if it is saved in a different image format (

Figure 1), which is very likely to happen. While ingesting data, we usually need to normalize it, for example, by converting initial files to formats suitable for long-term preservation. We can be certain that at some point, our data will need to be migrated to another software/hardware configuration that will require converting the files to another format, resulting in them losing bitstream fixity.

Therefore, it is very important to keep in mind the limitations of traditional tools used to maintain file fixity. One of the most powerful tools to maintain file fixity is the use of hash functions—mathematical functions that can be used to map data of an arbitrary size to data of a fixed size. They are commonly used to assert file fixity because even small changes in a file result in a completely different hash, and hashes are easily stored as small strings. For example, a commonly used hash resulting from the MD5 algorithm can be stored in a hexadecimal format as a 32-symbol string that creates small overhead in storage and can be stored alongside the original file. This approach is used in the widely adopted BagIt convention for storing digital objects. Keeping and checking file hashes is necessary, but not sufficient, for creating long-term policy. We can more or less arbitrary declare an image in one format/bitstream representation a ‘master’ and use file fixity tools before the next data migration, creating new hashes for files changed during migration after the migration process is completed. Every data migration is a risky process with potential data loss, so we need to be able to mitigate those risks. We need to be able to determine if the migration was successful and that the resulting image was not changed, even if bitstream data was, especially keeping in mind that actual migration is most likely an automatic process affecting a large number of objects.

2. Perceptual Hash in Image Fixity

Perceptual hash functions are in a sense diametrically opposite to cryptographic hash functions: while in cryptographic hashing, a small change in input leads to a drastic change in hashes (called avalanche effect), which makes them great tools for maintaining file fixity, perceptual hashes of similar images will also be similar, which makes them useful for maintaining content fixity [

4,

5].

Using cryptographic hashes is more straightforward since we only need to determine whether a file was changed, while the probability of collision (having another file with the same hash string) is quite low. There are two possible scenarios for the collision: data corruption and malicious attack. It is very unlikely that corrupted data would result in a hash collision, while a malicious attack, taking into account constant advances in processing power, is not out of the question, so we need to consider the possible intent of such an attack first [

6]. If the goal is to substitute a stored image with another image with similar characteristics, described in stored object metadata, a collision attack seems to be extremely unlikely. If, on the other hand, the attacker’s goal is to trick the archive into seeing garbage data as a valid stored image, the chance of success mostly depends on the collision resistance of a particular algorithm.

Compared to the cryptographic hashing perception, hashing is less robust and prone to false positives, but in our case, it is used to compare supposedly identical images so that we can mainly focus on the discriminability of the perceptive hashing algorithm [

7]. For example, if we need to migrate our images from PNG to TIFF (lossless), md5, sha, and other cryptographic hashes will be completely different and will need to be recalculated, but a comparison based on a perceptive hash will show zero difference. Based on the availability and relative computational cheapness of perception hashing algorithms today (supported, for example, by the most commonly used open source image manipulation library ImageMagick), it could be argued that including perceptual hashes of digital images in archival information packages has not only long-term benefits, since it records information about the image itself, which is the actual object we want to preserve, not the file, but can also be incorporated in dupes/similar searching algorithms.

3. Case Study

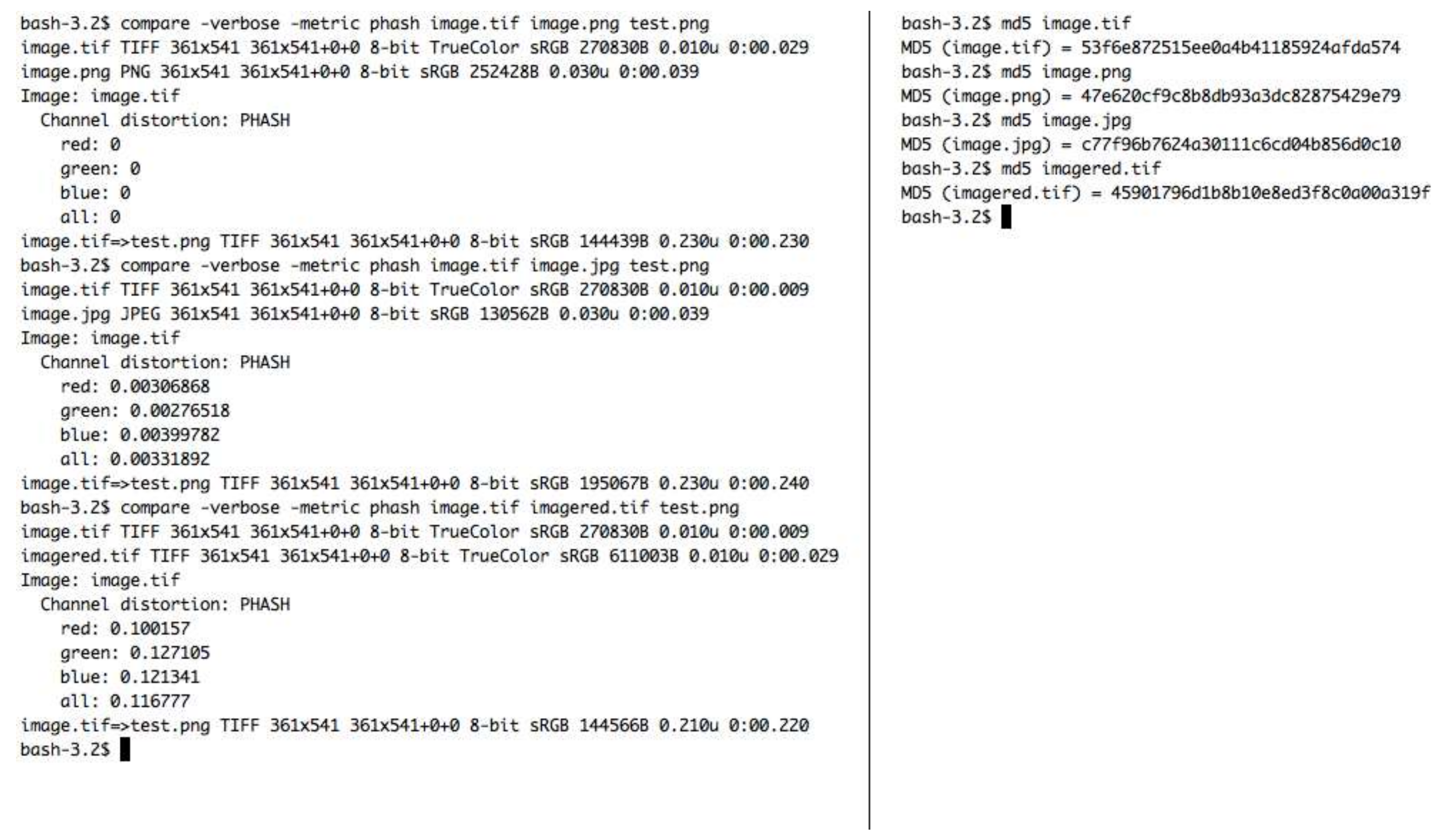

A simple test case was used to demonstrate the difference between the phash and md5 cryptographic hash that can be used in maintaining image fixity through format conversion. The source image was converted to TIFF, PNG (lossless), and lossy JPG formats, and another image was created with a deliberate artifact: red box (

Figure 2). ImageMagick 7.0.7-21 Q16 x86_64 2018-01-08 was used to compare different images using phash metrics.

As seen in

Figure 3, conversion to lossless PNG shows zero difference using phash metrics, while conversion to lossy JPG shows slight distortion, and a comparison to the image with the artifact shows prominent distortion. At the same time, for crypto hash functions, all files were equally different. What this test shows is that while phash cannot serve as the main fixity checking tool, it can be used to measure image preservation in the migration process. One important possible area of application is the creation of derivatives for dissemination, which are often compressed and resampled to a lower resolution than original images in storage. The use of the phash comparison allows for not just error checking in the derivatives creation process, but also for the limited measurement of derivatives’ degradation compared to the original image.

4. Digital Artifacts

As was already mentioned, dealing with objects in the digital domain, we imply that it is possible to have all the information there is about the object, and there is no ‘dark matter’ between pixels in the digital image. However, this does not mean that we know everything there is to know. The digital image is one of the most straightforward digital objects, and yet it can nevertheless contain some surprises. For example, TIFF is a container format, and there is a possibility that our ingestion tools might miss some of the data present in TIFF (for example, an additional image/previous version of an art object or non-standard metadata). An image may contain steganographic information that could be corrupted during normalization and migration processes. Images can be provided to the institution in the format not suitable for long-term preservation, while not being normalizable without a loss of data (e.g., camera raw files). Therefore, to maintain the fixity of a digital object from ingestion through migrations, we need to either use completely reversible normalization and migration procedures or, at the very least, maintain a pre-normalized copy in digital artifact status, meaning that there is no guarantee that it will be readily available due to possible format obsolescence. This is especially important for objects with incomplete provenance when there is no clear chain of ownership and preservation.

5. Digital Provenance as Fixity Assurance

While hash and other control sum-based techniques for fixity checking are intrinsically connected to digital content, maintaining a complete internal and external history of the object is an important and necessary component in assuring object fixity. The lifecycle of the digital object includes ingestion and sometimes migrations, which change the file and therefore create new hashes. Changing metadata is usually more frequent, and if metadata information is stored in files, as, e.g., according to BagIt specification, and has its own hashes, they too are not immutable. Therefore, maintaining the chain of events that affect stored hashes is as important as maintaining digital object fixity; otherwise, these events might become weak spots in digital cultural object preservation practices [

8].

The most common practices include logging all user actions in the database. Many institutions require verified paper trails for changing crucial descriptive metadata. Similarly, the administrative way of maintaining fixity of the digital object involves keeping records of changes and techniques used in, e.g., the migration process.

6. Discussion

Shifting the accent from preserving files to preserving images, we can use perceptual hashes to describe what we actually intend to preserve. The distinction is very important: by using cryptographic hashing/checksums/technical metadata, we maintain material fixity, but we are talking about raw data, that is, in fact, circumstantial to maintaining image fixity since different bitstream objects can represent one image. Using perceptual hashes, on the other hand, is a small step towards being able to verify the fixity of the image itself as we perceive it, adding a new layer to the concept of trustworthy digital objects [

9]. While right now it is mostly applicable for fixity checking during format conversion during data migration, using perceptual hashes is an important paradigm change, because now we are testing not what we have in this digital object, but whether it works as intended. That means we have a more robust model for the digital preservation process because it is less dependent on current formats and technologies. The paradigm could be researched further and extended to other types of digital heritage objects: we describe conditions for objects to fulfill their functions in relation to humans and test everchanging digital objects against these conditions.

When we create a long-term digital archive, we have to ensure that archive packages are accessible. It is not enough to store bitstream data and technical metadata for access. We have to be sure that instruments that can be used to access objects exist and are maintained because while physical objects represent themselves by being tangible, digital objects do not. This means that when we prepare a digital archival object, i.e., a digital image, we have to think about how it should present itself, we should store instructions and materials to create objects of perception, and we need to be able to interact with the object; otherwise, we have not prepared it properly.

Some of the complications arise from the fragmented nature of digital objects. Raw data can be stored in one place, metadata in other, while metadata links to common registries and standards and hardware are a completely different matter. A possible way to develop digital preservation could be the movement toward smart archival packages, which know how to represent themselves and help maintain their fixity. We have to store a code to view our content, or at least preserve specifications to build such a code. A possible logical step would be to include that code in the dissemination information package at the very least. As a next step, by peeking into the realm of what science fiction is, could fully self-representative archival objects that include instructions be employed to create hardware or configure some kind of smart matter?