1. Introduction

The Baltic Dry Index (BDI) serves as a critical barometer for the global shipping industry, reflecting the costs of transporting dry bulk commodities such as coal, iron ore, and grain. As an economic indicator, the BDI provides valuable insights into the health of global trade, supply chain dynamics, and overall economic activity. Given its relevance, accurate forecasting of the BDI is of paramount importance for a diverse range of stakeholders, including shipping companies, commodity traders, investors, policymakers, and economists.

Historically, the BDI has exhibited significant volatility, influenced by a myriad of factors. These factors include global economic cycles, seasonal demand fluctuations, geopolitical events, and changes in shipping capacity. For instance, economic downturns often lead to reduced demand for raw materials, subsequently lowering shipping rates and the BDI. Conversely, periods of economic growth drive up demand for commodities, resulting in higher shipping rates. Additionally, events such as trade disputes, natural disasters, and changes in regulatory environments can cause sudden and unpredictable movements in the BDI. This volatility poses substantial challenges for forecasting but also presents opportunities for leveraging advanced analytical techniques to improve prediction accuracy.

Traditional forecasting methods, such as time series analysis and econometric models, have been employed with varying degrees of success in predicting BDI movements. While these methods provide a foundation for understanding past trends and making short-term forecasts, they often struggle to capture the complex, non-linear relationships inherent in the factors influencing the BDI.

In recent years, advancements in machine learning and artificial intelligence have opened new avenues for improving forecast accuracy. Techniques such as artificial neural networks (ANNs), support vector machines (SVMs), and ensemble learning methods offer the potential to model complex patterns and interactions that traditional methods may overlook. These contemporary approaches can incorporate a wider range of variables and are capable of learning from large datasets, making them well-suited for forecasting highly volatile indices like the BDI.

Forecasting the Baltic Dry Index is crucial for the shipping industry as it offers insights into global trade trends, aiding in fleet management, capacity planning, and route optimization. Accurate BDI forecasts help shipping companies reduce costs, improve efficiency, and make informed investment decisions. Additionally, understanding shipping demand trends aids in predicting commodity prices and managing risks, ultimately enhancing the competitiveness and operational efficiency of shipping companies. Thus, forecasting BDI has attracted considerable attention from both academic researchers and industry practitioners. The primary objective of this study is to compare various univariate forecasting techniques in order to develop a more accurate short-term prediction model for the BDI, offering practical insights for the shipping sector.

The structure of this paper is as follows: Section 2 reviews relevant literature, Section 3 details the research methodology, Section 4 analyzes and compares the forecasting results, and Section 5 concludes the study with key findings and implications.

2. Literature Review

BDI forecasting methods can be broadly categorized into three groups: conventional econometric models, nonlinear models and machine learning techniques, and ensemble machine learning methods. These approaches have become the most widely adopted methodologies for BDI analysis in academic research.

2.1. Econometric Model

Econometric models were among the first developed for BDI forecasting, and they have been widely employed in validation studies. Both univariate and multivariate econometric methodologies are commonly applied in this research. Previous studies frequently utilized models such as the vector error correction model (VECM), vector autoregression (VAR), and autoregressive integrated moving average (ARIMA). For instance, Veenstra and Franses [1] developed a time series-based VAR model to forecast the BDI, identifying non-stationarity in the data, which raised concerns about the stability of various modelling techniques. Cullinane et al. [2] used the ARIMA model for modifications to the Baltic Freight Index (BFI), demonstrating its superior predictive power. Kavussanos and Alizadeh [3] examined the seasonality of dry bulk freight rates, employing seasonal ARIMA and VAR models to assess fluctuations across different vessel sizes. Tsioumas et al. [4] introduced a multivariate vector autoregressive model with exogenous variables (VARX), which outperformed ARIMA in improving BDI forecasting accuracy. Papailias et al. [5] showed that trigonometric regression can lead to improved predictions and then used their forecasting results in an investment exercise to show how the forecasts can improve risk management in the freight sector. Zhang et al. [6] compared ARIMA, ARIMA-GARCH models, and artificial neural networks (ANNs), noting that econometric models performed better in one-step-ahead forecasts, while ANN-based algorithms achieved a higher direction matching rate in weekly and monthly data. More recently, Katris et al. [7] proposed data-driven model selection using ARIMA, fractional ARIMA (FARIMA), and ARIMA/FARIMA models with GARCH and EGARCH errors, marking the first use of FARIMA and MARS models in BDI forecasting.

2.2. Nonlinear Models and Machine Learning

Traditional econometric models often struggle to effectively capture the nonstationary and nonlinear characteristics of BDI time series. As a result, recent years have seen the increasing use of nonlinear regression models and machine learning techniques in BDI prediction research. Common methods include artificial neural networks, support vector machines (SVM), and nonlinear regression. SVMs are praised for their ability to approximate nonlinear functions and generalize well. For instance, Yang et al. [8] showed that SVM models can successfully account for BDI’s nonlinear properties and developed a freight early warning system based on SVM to predict fluctuations in shipping market prices. Bao et al. [9] proposed a new BDI forecasting model using support vector machines with correlation-based feature selection. The study examined macroeconomic indicators and freight index fluctuations to construct the model. Comparative results showed SVM outperforms neural networks in trend accuracy and forecast precision, aiding market risk management.

Similarly, Li and Parsons [10] employed neural networks to forecast short- and long-term monthly tanker freight rates. Their results demonstrated that neural networks outperformed traditional ARIMA models, particularly for long-term forecasts. Building on this, Sahin et al. [11] introduced three ANN techniques for predicting BDI, conducting detailed comparisons to identify the most effective model. In recent years, advanced models like convolutional neural networks (CNNs) and long short-term memory (LSTM) networks have gained recognition as top-performing predictive neural networks, though their use in BDI forecasting remains underexplored.

Additionally, both econometric models and AI methods have their individual limitations. Hybrid forecasting approaches have emerged as a way to combine these models, leveraging their strengths to offset individual weaknesses. Such integrated systems tend to offer higher accuracy. For example, Chou and Lin [12] developed a fuzzy neural network combined with technical indicators to predict the BDI, achieving better accuracy than either technique alone.

Most recently, Bae [13] utilized deep learning models to predict the trends of the Baltic Dry Index (BDI), comparing the forecasting effects of algorithms such as Recurrent Neural Networks (RNN) and determining the optimal parameters. Liu et al. [14] proposed a BiLSTM-based system for BDI forecasting, incorporating the grey relational degree analysis method to select seven highly correlated factors as inputs. This model outperformed common machine learning models like support vector regression (SVR) and regression, as well as the standard LSTM neural network.

2.3. Ensemble Machine Learning Models

Ensemble learning has been extensively employed to enhance model performance, serving as an effective method for boosting the predictive power of individual models. Leonov and Nikolov [15] found that freight pricing in shipping markets is highly volatile. They analyzed fluctuations in the Baltic Panamax 2A and 3A freight rates using a novel approach in shipping economics: a hybrid wavelet-neural network model. In a study on Baltic Dry Index prediction, Zeng et al. [16] applied Empirical Mode Decomposition (EMD) to break down the original BDI series into several intrinsic mode functions (IMFs). Each IMF and combined component was modelled using an Artificial Neural Network, yielding a forecasting method based on EMD and ANN. Their results showed that this approach outperformed other techniques such as ANN and VAR.

Similarly, Kamal et al. [17] developed a deep learning ensemble model by integrating Recurrent Neural Networks, Long Short-Term Memory, and Gated Recurrent Units (GRU) to improve BDI forecasting. The ensemble method outperformed both a single econometric model and a single machine learning model, highlighting its superior predictive capabilities.

Su et al. [18] predicted the Baltic Dry Index using a deep integrated model (CNN-BiLSTM-AM) comprising a convolutional neural network, bi-directional long short-term memory, and the attention mechanism (AM). Their findings indicate that the integrated model CNN-BiLSTM-AM encompasses the nonlinear and nonstationary characteristics of the shipping industry, and it has a greater prediction accuracy than any single model.

Most recently, research has shifted toward deep learning and hybrid intelligent systems, reflecting the growing need to capture the complex, non-linear dynamics of global shipping markets. Kim et al. [19] advanced the field by integrating financial market data into machine learning models, complemented with SHAP explanations to enhance interpretability and transparency of predictions. Similarly, Atsalaki et al. [20] employed a neuro-fuzzy inference system (ANFIS), combining the adaptability of neural networks with the rule-based reasoning of fuzzy logic, thereby improving forecasting accuracy in volatile environments. Zhang [21] introduced a GRU-based deep learning framework that demonstrated strong performance in identifying market trends and cyclical patterns, while Li et al. [22] applied LSTM architectures to capture long-term dependencies in BDI time series.

Existing BDI forecasting studies largely rely on single models, leaving a gap in the use of structured hybrid approaches. Our contribution is a unified framework that systematically integrates EMD, SVR, and GWO. Although each method is well known, their coordinated use—EMD for decomposition, SVR for IMF-level forecasting, and GWO for optimizing the final weights—has not been previously examined for BDI prediction. This approach fills a methodological gap by offering a more robust way to capture the complex dynamics of the series.

Univariate forecasting is simple, efficient, and easy to interpret as it relies solely on past values of the variable being predicted, requiring less data and computational resources. It is robust against noise from external factors and suitable for quick, short-term forecasts. Additionally, it serves as a strong baseline for comparing more complex models. Based on the above literature, this study proposes a comparison of univariate models, including GM(1,1), ARIMA, SVR, LSTM, GRU and EMD-SVR-GWO.

The forecasting models examined in this study span a range of methodological complexity, each suited to different time-series characteristics. GM(1,1) performs well in small-sample settings and weak-information environments but offers limited capacity for modelling nonlinear patterns. ARIMA remains a strong benchmark for linear and stationary data, though it is less effective under structural changes or nonlinear dynamics. SVR improves flexibility through kernel-based nonlinear mapping, making it suitable when relationships are complex, but data are limited. LSTM and GRU further enhance forecasting capability by learning long-range dependencies and nonlinear interactions directly from the data, performing well in series with memory effects, regime shifts, or high variability. Finally, our proposed EMD-SVR-GWO framework uses EMD for decomposition, SVR for IMF-level forecasting, and GWO for optimizing the final weights. Together, these methods provide a comprehensive basis for evaluating forecasting performance across linear, nonlinear, and hybrid approaches.

3. Methods

To ensure a coherent comparison, all forecasting models are evaluated within a unified framework using the same monthly BDI dataset, identical in-sample and out-of-sample partitions, and a common one-step-ahead forecasting horizon. The models are presented in increasing order of methodological complexity—from traditional statistical methods to machine learning and hybrid ensemble approaches—allowing a transparent assessment of predictive performance. Although deep learning models such as LSTM and GRU typically benefit from larger datasets, they are included here as benchmark nonlinear learners rather than as fully optimized deep architectures, allowing a fair comparison of modelling paradigms under identical data constraints.

3.1. Grey Forecasting Model

Grey theory, initially proposed by Deng [23], is designed to analyze systems with incomplete or limited information. A key advantage of grey models is their ability to generate forecasts using relatively small datasets. In particular, the GM(1,1) model does not require the underlying time series to be stationary, nor does it rely on probabilistic or ergodic assumptions as in stochastic time-series models such as ARIMA. Instead, GM(1,1) employs an accumulated generating operation (AGO) to transform the original sequence into a more regular form, enabling effective modelling of trend behaviour under limited data conditions. These characteristics make GM(1,1) especially suitable for short-term forecasting problems with small samples and high uncertainty.

The GM(1,1) model refers to a first-order, single-variable grey model. The procedure for constructing GM(1,1) is outlined as follows:

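Let the original data sequence be

$$x^{(0)} = \left(x^{(0)}(1),\, x^{(0)}(2),\, \ldots,\, x^{(0)}(n)\right), \tag{1}$$

where $x^{(0)}(k)$ stands for the original data in period $k$. The following sequence $x^{(1)} = \left(x^{(1)}(1),\, x^{(1)}(2),\, \ldots,\, x^{(1)}(n)\right)$ is defined as

$$x^{(1)}(k) = \sum_{i=1}^{k} x^{(0)}(i), \quad k = 1, 2, \ldots, n. \tag{2}$$

Equation (2) was generated based on the accumulated generating operation of Equation (1).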

Before building the model, a class ratio test must be performed to ensure that the sequence is suitable for modelling. The class ratio $\sigma(k)$ is computed for each period $k \geq 2$ (Equation (3)); if $\sigma(k) \in (0, 1)$, then the sequence is appropriate for modelling.

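After finishing the class ratio test, the GM(1,1) model is formulated as a first-order differential equation for $x^{(1)}$ as

$$\frac{dx^{(1)}(t)}{dt} + a\,x^{(1)}(t) = b, \tag{4}$$

where $a$ and $b$ are coefficients to be estimated.

Next, we applied the ordinary least squares method to Equation (4) to estimate the coefficients $a$ and $b$. Once $\hat{a}$ and $\hat{b}$ are estimated, we generate predictions by substituting $\hat{a}$ and $\hat{b}$ into the following equation:

$$\hat{x}^{(1)}(k+1) = \left(x^{(0)}(1) - \frac{\hat{b}}{\hat{a}}\right) e^{-\hat{a}k} + \frac{\hat{b}}{\hat{a}}, \quad k = 1, 2, \ldots \tag{5}$$

Finally, the forecasted values of the time series are obtained by applying the inverse accumulated generating operation (IAGO) to convert $\hat{x}^{(1)}$ to $\hat{x}^{(0)}$ as follows:

$$\hat{x}^{(0)}(k+1) = \hat{x}^{(1)}(k+1) - \hat{x}^{(1)}(k). \tag{6}$$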
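The GM(1,1) procedure described above can be sketched in a few lines of Python. This is a minimal illustration rather than the implementation used in the study: the function name `gm11_forecast`, the use of NumPy, and the trapezoidal background value (the mean of consecutive accumulated values, a common textbook discretization of the differential equation) are assumptions introduced here.

```python
import numpy as np

def gm11_forecast(x0, steps=1):
    """Fit GM(1,1) to a short positive sequence and forecast `steps` periods ahead.

    Hypothetical helper for illustration; assumes the estimated `a` is not zero.
    """
    x0 = np.asarray(x0, dtype=float)
    n = len(x0)
    x1 = np.cumsum(x0)                           # accumulated generating operation (AGO)
    z1 = 0.5 * (x1[1:] + x1[:-1])                # background values (assumed discretization)
    B = np.column_stack([-z1, np.ones(n - 1)])   # regressors for x0(k) = -a*z1(k) + b
    a, b = np.linalg.lstsq(B, x0[1:], rcond=None)[0]  # ordinary least squares

    k = np.arange(n + steps)                     # k = 0 corresponds to the first period
    x1_hat = (x0[0] - b / a) * np.exp(-a * k) + b / a  # time-response function
    x0_hat = np.diff(x1_hat)                     # inverse AGO gives periods 2, 3, ...
    return x0_hat[n - 1:], (a, b)                # out-of-sample forecasts only

# Example with a short, roughly exponential sequence (illustrative numbers, not BDI data)
forecast, (a_hat, b_hat) = gm11_forecast([100.0, 110.0, 121.0, 133.1], steps=1)
```

Because the fitted curve is exponential, a negative `a_hat` indicates growth and a positive one indicates decay; with only four observations the estimate reacts strongly to each new data point.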

3.2. SARIMA

The seasonal model employed in this study belongs to the general class of univariate models originally introduced by Box and Jenkins [24]. SARIMA has been widely applied across various disciplines and remains a cornerstone in time series forecasting. A fundamental requirement in constructing this model is that the time series must be stationary, meaning its probabilistic properties remain constant over time.

SARIMA extends the ARIMA framework by incorporating seasonal components, making it suitable for time series data exhibiting seasonality. The model is typically expressed as SARIMA(p, d, q)(P, D, Q)s, where

p represents the order of the non-seasonal autoregressive terms;

d denotes the degree of non-seasonal differencing;

q corresponds to the order of the non-seasonal moving average terms;

P, D, and Q represent the seasonal autoregressive, seasonal differencing, and seasonal moving average orders, respectively;

s indicates the length of the seasonal cycle.

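The general representation of the SARIMA model can be written as

$$\psi_p(B)\,\Phi_P(B^s)\,\nabla^d\,\nabla_s^D Z_t = \theta_q(B)\,\Theta_Q(B^s)\,\varepsilon_t,$$

where

$\psi_p(B) = 1 - \psi_1 B - \psi_2 B^2 - \cdots - \psi_p B^p$ is the non-seasonal autoregressive operator of order $p$;

$\Phi_P(B^s)$ is the seasonal autoregressive operator of order $P$;

$B$ is the backshift operator with $B^m Z_t = Z_{t-m}$;

$s$ is the season length;

$\nabla^d = (1 - B)^d$ is the non-seasonal differencing operator;

$\nabla_s^D = (1 - B^s)^D$ is the seasonal differencing operator;

$Z_t$ is the observation at time $t$;

$\theta_q(B) = 1 - \theta_1 B - \theta_2 B^2 - \cdots - \theta_q B^q$ is the non-seasonal moving average operator of order $q$;

$\Theta_Q(B^s)$ is the seasonal moving average operator of order $Q$, and $\varepsilon_t$ is white noise with zero mean and constant variance.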

The model-building process involves three main stages: identification, parameter estimation, and diagnostic checking. In the identification phase, the tentative structure of the AR and MA terms (p and q) is determined by analyzing the autocorrelation and partial autocorrelation patterns. Once an initial specification is chosen, parameter estimation is carried out, often using methods such as maximum likelihood or least squares. Finally, diagnostic checking assesses the adequacy of the fitted model by examining residuals to ensure they exhibit the properties of white noise. If the diagnostics confirm model suitability, the SARIMA model is then employed for forecasting.
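As a small illustration of the differencing step that precedes identification, the sketch below applies non-seasonal and seasonal differencing to a series. The function name `difference` and the synthetic series are assumptions made for this example; in practice the full identification, estimation, and diagnostic cycle would normally be carried out with a statistical library (for instance the `SARIMAX` class in statsmodels) rather than by hand.

```python
import numpy as np

def difference(z, d=1, D=0, s=12):
    """Apply D seasonal differences of lag s, then d ordinary differences."""
    z = np.asarray(z, dtype=float)
    for _ in range(D):
        z = z[s:] - z[:-s]      # seasonal difference: Z_t - Z_{t-s}
    for _ in range(d):
        z = z[1:] - z[:-1]      # ordinary difference: Z_t - Z_{t-1}
    return z

# Synthetic monthly series: linear trend plus a repeating 12-month pattern
t = np.arange(36)
pattern = np.array([5, 3, 0, -2, -4, -5, -4, -2, 0, 2, 4, 5], dtype=float)
series = 2.0 * t + pattern[t % 12]

seasonal_only = difference(series, d=0, D=1, s=12)  # removes the seasonal pattern
both = difference(series, d=1, D=1, s=12)           # also removes the linear trend
```

For this synthetic series the seasonal difference leaves a constant (the trend increment over 12 months), and the additional ordinary difference reduces it to zero, which is the kind of behaviour one checks for before fitting the AR and MA terms.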

3.3. Long Short-Term Memory Model

Deep learning has become increasingly important in time-series forecasting due to its ability to capture complex nonlinear and long-range temporal dependencies. Among recurrent neural network architectures, the Long Short-Term Memory network proposed by Hochreiter and Schmidhuber [25] addresses the vanishing-gradient problem inherent in traditional RNNs by introducing explicit memory cells and gating mechanisms. Given the nonlinear, volatile, and path-dependent nature of the Baltic Dry Index, LSTM provides an effective nonlinear benchmark model for comparison with the proposed EMD–SVR–GWO framework.

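An LSTM cell contains three multiplicative gates (input, forget, and output) together with a cell state that carries long-term information. For a time sequence $\{x_1, x_2, \ldots, x_T\}$, the LSTM cell updates are defined as

$$\begin{aligned}
i_t &= \sigma(W_i x_t + U_i h_{t-1} + b_i), \\
f_t &= \sigma(W_f x_t + U_f h_{t-1} + b_f), \\
o_t &= \sigma(W_o x_t + U_o h_{t-1} + b_o), \\
\tilde{c}_t &= \tanh(W_c x_t + U_c h_{t-1} + b_c), \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t, \\
h_t &= o_t \odot \tanh(c_t),
\end{aligned}$$

where $i_t$, $f_t$, and $o_t$ are the input, forget, and output gates; $c_t$ is the cell state; $h_t$ is the hidden state; $W$, $U$, and $b$ (with the corresponding subscripts) are trainable weight matrices and biases; $\sigma(\cdot)$ denotes the logistic function; and $\odot$ represents elementwise multiplication.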

The forget gate regulates the retention of past information, enabling the network to model both short-term shocks and long-term cycles.
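To make the gating mechanism concrete, the following sketch performs a single LSTM update step with NumPy. It follows the standard LSTM formulation; the function names, the stacked weight layout (gates ordered input, forget, output, candidate), and the toy dimensions are assumptions for illustration and do not reflect the network configuration used in this study.

```python
import numpy as np

def sigmoid(x):
    """Logistic function used by the gates."""
    return 1.0 / (1.0 + np.exp(-x))

def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One LSTM update.

    W has shape (4H, X), U has shape (4H, H), b has shape (4H,),
    with rows stacked in the (assumed) order: input, forget, output, candidate.
    """
    H = h_prev.shape[0]
    pre = W @ x_t + U @ h_prev + b
    i = sigmoid(pre[0:H])             # input gate
    f = sigmoid(pre[H:2 * H])         # forget gate
    o = sigmoid(pre[2 * H:3 * H])     # output gate
    g = np.tanh(pre[3 * H:4 * H])     # candidate cell content
    c = f * c_prev + i * g            # keep part of the old state, add new content
    h = o * np.tanh(c)                # expose a gated view of the cell state
    return h, c

# Toy dimensions: 3 lagged inputs, 2 hidden units, all parameters zero
X_DIM, H_DIM = 3, 2
W = np.zeros((4 * H_DIM, X_DIM))
U = np.zeros((4 * H_DIM, H_DIM))
b = np.zeros(4 * H_DIM)
h, c = lstm_step(np.ones(X_DIM), np.zeros(H_DIM), np.ones(H_DIM), W, U, b)
```

With all parameters at zero every gate equals 0.5, so the cell simply halves its previous state; training moves the forget-gate bias up or down to lengthen or shorten that memory.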

3.4. Gated Recurrent Unit Model

The Gated Recurrent Unit model, introduced by Chung et al. [26], is a simplified gated RNN architecture designed to capture long-term dependencies with fewer parameters than LSTM. GRU merges the input and forget mechanisms into a single update gate, improving computational efficiency while maintaining competitive forecasting accuracy. This makes GRU particularly suitable for small and moderately sized datasets such as the monthly BDI series.

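A GRU cell contains two gates: the reset gate $r_t$ and the update gate $z_t$. The transition equations are

$$\begin{aligned}
z_t &= \sigma(W_z x_t + U_z h_{t-1} + b_z), \\
r_t &= \sigma(W_r x_t + U_r h_{t-1} + b_r), \\
\tilde{h}_t &= \tanh\left(W_h x_t + U_h (r_t \odot h_{t-1}) + b_h\right), \\
h_t &= (1 - z_t) \odot h_{t-1} + z_t \odot \tilde{h}_t.
\end{aligned}$$

Here, $z_t$ controls the interpolation between past information and newly computed content; $r_t$ controls how much past information to retain; and $\tilde{h}_t$ is the candidate hidden state.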

Compared with LSTM, GRU eliminates the explicit cell state ct, leading to a faster and more compact model.
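A single GRU update can be sketched in the same way. The code below is an illustrative NumPy version of the standard GRU step, using the convention in which the update gate weights the new candidate state; the parameter dictionary `p` and its key names are hypothetical and chosen only for readability.

```python
import numpy as np

def sigmoid(x):
    """Logistic function used by the gates."""
    return 1.0 / (1.0 + np.exp(-x))

def gru_step(x_t, h_prev, p):
    """One GRU update; `p` is a dict of weight matrices and bias vectors."""
    z = sigmoid(p["Wz"] @ x_t + p["Uz"] @ h_prev + p["bz"])             # update gate
    r = sigmoid(p["Wr"] @ x_t + p["Ur"] @ h_prev + p["br"])             # reset gate
    h_cand = np.tanh(p["Wh"] @ x_t + p["Uh"] @ (r * h_prev) + p["bh"])  # candidate state
    return (1.0 - z) * h_prev + z * h_cand   # interpolate between old and new state

def zero_params(x_dim, h_dim):
    """Hypothetical helper: all-zero parameters of the right shapes."""
    p = {}
    for gate in ("z", "r", "h"):
        p["W" + gate] = np.zeros((h_dim, x_dim))
        p["U" + gate] = np.zeros((h_dim, h_dim))
        p["b" + gate] = np.zeros(h_dim)
    return p

params = zero_params(x_dim=3, h_dim=2)
h_new = gru_step(np.ones(3), np.array([1.0, -2.0]), params)
```

There is no separate cell state to carry between steps, which is where the parameter saving relative to LSTM comes from: three weight groups instead of four.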

3.5. The EMD-SVR-GWO Model

3.5.1. EMD Model

Empirical Mode Decomposition is a data-driven technique developed by Norden E. Huang [27] in the late 1990s for analyzing non-linear and non-stationary time series data. Unlike traditional signal processing methods that rely on predefined basis functions (e.g., Fourier or wavelet transforms), EMD adaptively decomposes a signal into a set of intrinsic mode functions based on the inherent characteristics of the data. This makes EMD particularly useful for a wide range of applications, including signal processing, economics, geophysics, and biomedical engineering.

The EMD process consists of the following steps:

- (1) Identify Extrema: Detect all local maxima and minima in the signal. These points indicate where the signal changes direction and are used to construct the upper and lower envelopes.

- (2) Interpolate: Using the identified extrema, create smooth upper and lower envelopes by interpolating between the maxima and minima, respectively. Common interpolation methods include cubic splines or piecewise linear functions.

- (3) Mean Calculation: Compute the mean of the upper and lower envelopes. This mean represents the local trend of the signal.

- (4) Sifting Process: Subtract the mean from the original signal to obtain a candidate IMF. This component is called a “proto-IMF.” The sifting process involves repeating the steps of extrema identification, interpolation, and mean calculation on the proto-IMF until it meets the IMF criteria: (i) The number of zero crossings and extrema must either be equal or differ by at most one. (ii) The mean value of the envelopes should be zero at every point.

- (5) Residual Calculation: Once a valid IMF is obtained, subtract it from the original signal to get a residual. This residual represents the remaining signal after extracting the first IMF.

- (6) Repeat: Apply the EMD process recursively to the residual to extract further IMFs until the residual becomes a monotonic function or has no more than two extrema. The decomposition is complete when the residual is either a constant, a monotonic trend, or contains no further oscillatory modes.
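The first four steps above can be illustrated with a single sifting pass. The sketch below is a simplified version that uses piecewise linear envelopes via `np.interp` (one of the interpolation options mentioned in step 2) instead of cubic splines, and it makes no attempt at boundary treatment or stopping criteria. The function names `local_extrema` and `sift_once` and the synthetic signal are assumptions for this example.

```python
import numpy as np

def local_extrema(x):
    """Return indices of strict interior local maxima and minima."""
    d = np.diff(x)
    maxima = np.where((d[:-1] > 0) & (d[1:] < 0))[0] + 1
    minima = np.where((d[:-1] < 0) & (d[1:] > 0))[0] + 1
    return maxima, minima

def sift_once(x):
    """One sifting pass: subtract the mean of the upper and lower envelopes."""
    x = np.asarray(x, dtype=float)
    t = np.arange(len(x))
    maxima, minima = local_extrema(x)
    if len(maxima) < 2 or len(minima) < 2:
        return None                           # too few extrema to build envelopes
    # np.interp holds the first/last extremum value constant beyond the ends,
    # a crude boundary rule that is adequate only for illustration.
    upper = np.interp(t, maxima, x[maxima])
    lower = np.interp(t, minima, x[minima])
    mean_envelope = 0.5 * (upper + lower)     # local trend estimate
    return x - mean_envelope                  # candidate (proto) IMF

# Synthetic signal: fast oscillation riding on a slow linear trend
t = np.arange(200)
signal = np.sin(2 * np.pi * t / 10) + 0.05 * t
proto_imf = sift_once(signal)
```

For this synthetic signal one pass already separates the oscillation from the trend away from the boundaries; real series such as the BDI generally need repeated sifting and several IMFs before the residual becomes monotonic.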

EMD has been applied across various fields due to its ability to handle non-linear and non-stationary data. In signal processing, it facilitates denoising, feature extraction, and fault detection in mechanical and electrical systems. The technique supports the analysis of financial time series, market trends, and economic cycles in economics. Geophysics benefits from EMD through seismic signal analysis, ocean wave modelling, and climate data interpretation. In biomedical engineering, EMD is instrumental in processing physiological signals such as electroencephalograms, electrocardiograms, and speech signals.

The advantages of EMD include its data-driven nature, which eliminates the need for predefined basis functions and makes it versatile for various types of data. Additionally, its adaptability allows it to handle non-linear and non-stationary signals effectively.

In summary, Empirical Mode Decomposition is a powerful and adaptive method for analyzing complex signals. By decomposing signals into intrinsic mode functions, EMD provides a flexible approach for revealing underlying patterns and trends in non-linear and non-stationary data. Its wide range of applications and ability to handle diverse data types make it an essential tool in various scientific and engineering disciplines.

3.5.2. SVR Model

Although different models in this study employ different notational conventions, they are unified by a common forecasting objective. Time-series models such as GM(1,1) and ARIMA express forecasts explicitly in terms of time indices (e.g., yt, yt−1), whereas machine learning models such as SVR formulate the problem in a supervised-learning form. In this study, these formulations are linked by constructing input–output pairs from the univariate BDI series, where lagged observations [yt−1, yt−2,…, yt−p] serve as inputs and the next-period value yt is the prediction target. As a result, all models generate one-step-ahead forecasts under a unified time-series forecasting framework, ensuring methodological consistency and comparability.
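The construction of input–output pairs from lagged observations can be sketched as follows. The helper name `make_lagged` is hypothetical, and the lag order `p` is left as a free parameter because the value used in the study is not stated in this section.

```python
import numpy as np

def make_lagged(y, p):
    """Build supervised pairs: row t holds [y_{t-1}, ..., y_{t-p}], target is y_t."""
    y = np.asarray(y, dtype=float)
    n = len(y)
    # Column j (j = 1..p) contains the series shifted back by j periods
    X = np.column_stack([y[p - j:n - j] for j in range(1, p + 1)])
    target = y[p:]
    return X, target

# Tiny example with p = 2 lags
X, target = make_lagged([1, 2, 3, 4, 5], p=2)
# X      -> [[2, 1], [3, 2], [4, 3]]
# target -> [3, 4, 5]
```

Each row uses only information available before the target period, so fitting a model on these pairs and predicting from the most recent `p` observations yields a one-step-ahead forecast.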

Support Vector Regression is a robust and versatile machine learning algorithm used for regression tasks. Originating from the principles of Support Vector Machines, SVR aims to predict continuous outcomes based on input features. While SVM is widely recognized for its effectiveness in classification problems, SVR extends these capabilities to the realm of regression, making it a valuable tool in various applications, such as financial forecasting, time series prediction, and biological data analysis.

At its core, SVR is designed to find a function that approximates the relationship between input features and a continuous target variable. The primary objective of SVR is to minimize the error of predictions while maintaining a degree of robustness by considering only a subset of the training data, known as support vectors. These support vectors are the critical elements of the training set that define the model.

SVR operates by introducing a margin of tolerance (epsilon), within which errors are ignored. This margin is referred to as the epsilon-insensitive zone. The essence of SVR is to ensure that the predictions lie within this margin for as many data points as possible, thereby balancing the complexity of the model with its predictive accuracy.

The SVR problem can be formulated as follows:

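Given a training dataset $\{(x_i, y_i)\}_{i=1}^{n}$, where $x_i$ represents the input features and $y_i$ the target variable, the goal is to find a function $f(x)$ that deviates from the actual targets $y_i$ by at most $\epsilon$. The function $f(x)$ is typically expressed as $f(x) = \langle w, x \rangle + b$, where $w$ is the weight vector and $b$ is the bias term. The optimization problem is defined as follows (Vapnik [28]):

$$\min_{w,\,b,\,\xi,\,\xi^*} \; \frac{1}{2}\lVert w \rVert^2 + C \sum_{i=1}^{n} \left(\xi_i + \xi_i^*\right)$$

subject to

$$\begin{aligned}
y_i - \langle w, x_i \rangle - b &\le \epsilon + \xi_i, \\
\langle w, x_i \rangle + b - y_i &\le \epsilon + \xi_i^*, \\
\xi_i,\ \xi_i^* &\ge 0, \quad i = 1, \ldots, n.
\end{aligned}$$

Here, $\xi_i$ and $\xi_i^*$ are slack variables that allow for deviations greater than $\epsilon$, and $C$ is a regularization parameter that determines the trade-off between the flatness of $f(x)$ and the amount by which deviations larger than $\epsilon$ are tolerated.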

One of the strengths of SVR, inherited from SVM, is the ability to handle non-linear relationships through the kernel trick. By mapping the input features into a higher-dimensional space using a kernel function, SVR can fit complex, non-linear functions. Commonly used kernels include the linear, polynomial, and radial basis function (RBF) kernels. In this research, the radial basis function is adopted.
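Two building blocks of SVR, the epsilon-insensitive loss and the RBF kernel, are simple enough to write out directly. The sketch below is illustrative only: the function names and the parameter values (`eps`, `gamma`) are assumptions, and an actual model would typically be fitted with an existing solver such as the `SVR` estimator in scikit-learn rather than assembled from these pieces.

```python
import numpy as np

def eps_insensitive_loss(y, f, eps):
    """Loss is zero inside the epsilon tube and grows linearly outside it."""
    return np.maximum(np.abs(np.asarray(y) - np.asarray(f)) - eps, 0.0)

def rbf_kernel(X1, X2, gamma):
    """K(x, x') = exp(-gamma * ||x - x'||^2) for every pair of rows."""
    X1 = np.atleast_2d(np.asarray(X1, dtype=float))
    X2 = np.atleast_2d(np.asarray(X2, dtype=float))
    sq_dist = (np.sum(X1 ** 2, axis=1)[:, None]
               + np.sum(X2 ** 2, axis=1)[None, :]
               - 2.0 * X1 @ X2.T)
    return np.exp(-gamma * np.maximum(sq_dist, 0.0))  # clip tiny negative round-off

# Errors of 0.05, 0.5 and 2.0 with a tube half-width of 0.1
losses = eps_insensitive_loss([1.0, 2.0, 3.0], [1.05, 2.5, 1.0], eps=0.1)

# Similarity between two lag vectors one unit apart
K = rbf_kernel([[0.0, 0.0]], [[0.0, 0.0], [1.0, 0.0]], gamma=0.5)
```

The first error falls inside the tube and contributes nothing, which is why only observations on or outside the tube boundary end up as support vectors; `gamma` controls how quickly similarity decays with distance between lag vectors.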

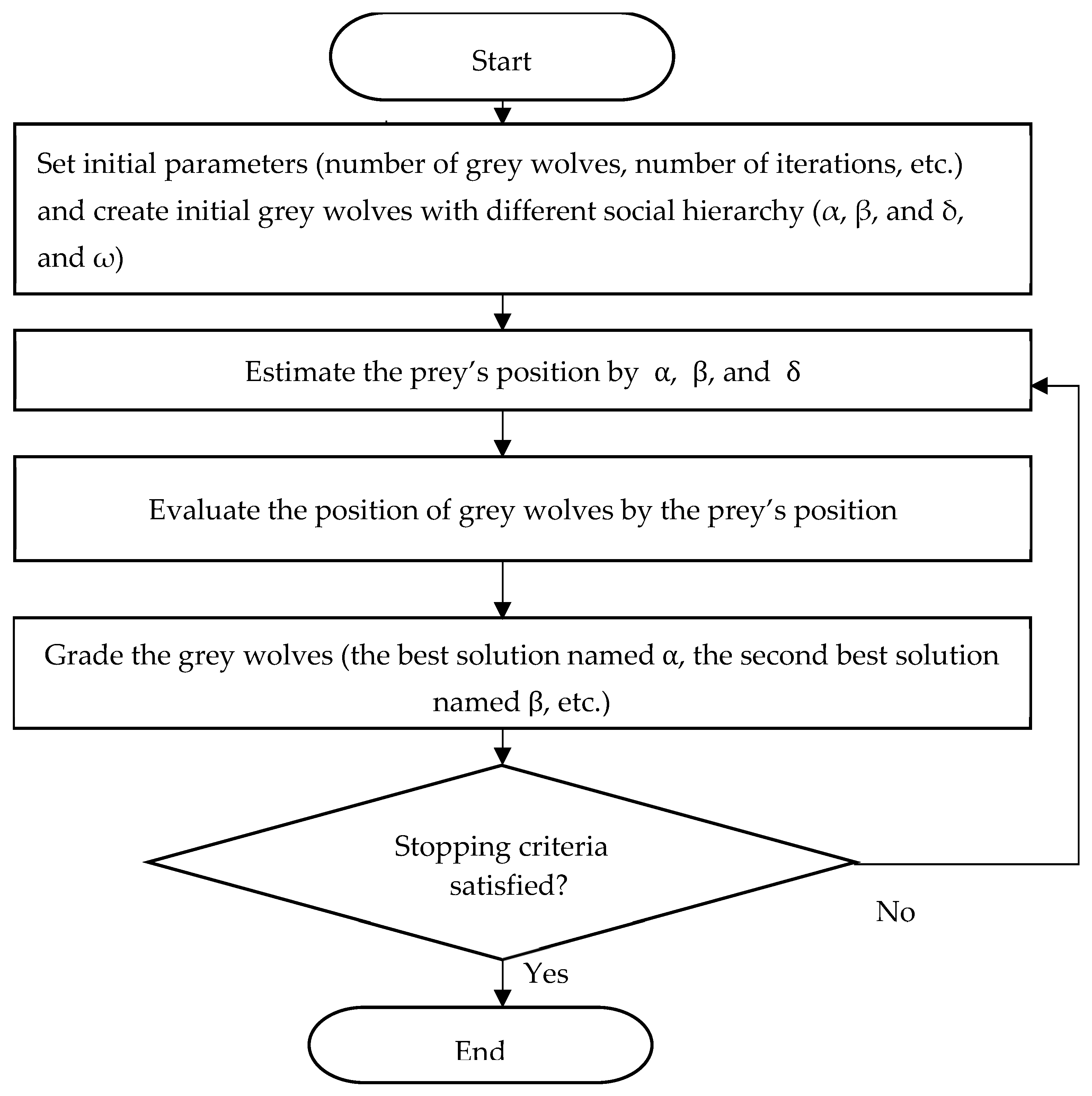

3.5.3. GWO

The Grey Wolf Optimizer (GWO) is a population-based metaheuristic optimization algorithm proposed by Mirjalili et al. [29]. It is inspired by the cooperative hunting behaviour and leadership hierarchy observed in grey wolf packs. GWO has attracted increasing attention in forecasting and optimization studies due to its simple structure, limited number of control parameters, and strong capability to balance global exploration and local exploitation. Only the elements of GWO directly relevant to parameter optimization in this study are summarized below; full algorithmic details are available in Mirjalili et al. [29].

In GWO, each candidate solution represents a grey wolf, and the quality of a solution is evaluated using a predefined fitness function. During each iteration, the best three solutions are designated as the alpha (α), beta (β), and delta (δ) wolves, which are assumed to have the most accurate knowledge of the prey’s location (i.e., the optimal solution). All remaining solutions are classified as omega (ω) wolves and update their positions by following these three leaders. This hierarchical structure allows the algorithm to guide the search process toward promising regions of the solution space while maintaining population diversity.

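The encircling behaviour of grey wolves during hunting is mathematically modelled as:

$$\vec{D} = \left|\vec{C} \cdot \vec{X}_p(t) - \vec{X}(t)\right|, \qquad \vec{X}(t+1) = \vec{X}_p(t) - \vec{A} \cdot \vec{D},$$

where $\vec{X}(t)$ denotes the position vector of the grey wolf at iteration $t$, $\vec{X}_p(t)$ represents the position vector of the prey, $\vec{D}$ is the vector used to specify a new position of the grey wolf, and $\vec{A}$ and $\vec{C}$ are coefficient vectors defined as follows:

$$\vec{A} = 2a\,\vec{r}_1 - a, \qquad \vec{C} = 2\,\vec{r}_2,$$

with $a$ decreasing linearly from 2 to 0 over iterations, and $\vec{r}_1$ and $\vec{r}_2$ random vectors uniformly distributed in $[0, 1]$.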

The parameter a plays a critical role in controlling the search behaviour of GWO. When ∣A∣ > 1, grey wolves move away from the current best solutions, encouraging global exploration of the search space. Conversely, when ∣A∣ < 1, wolves converge toward the estimated prey position, leading to local exploitation. This adaptive mechanism enables GWO to effectively transition from exploration to exploitation as the optimization progresses, reducing the risk of premature convergence.

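To model cooperative hunting, GWO assumes that the true prey position is approximated by the three leading wolves (α, β, and δ). The positions of the remaining wolves are updated according to

$$\vec{D}_\alpha = \left|\vec{C}_1 \cdot \vec{X}_\alpha - \vec{X}\right|, \quad \vec{D}_\beta = \left|\vec{C}_2 \cdot \vec{X}_\beta - \vec{X}\right|, \quad \vec{D}_\delta = \left|\vec{C}_3 \cdot \vec{X}_\delta - \vec{X}\right|,$$

$$\vec{X}_1 = \vec{X}_\alpha - \vec{A}_1 \cdot \vec{D}_\alpha, \quad \vec{X}_2 = \vec{X}_\beta - \vec{A}_2 \cdot \vec{D}_\beta, \quad \vec{X}_3 = \vec{X}_\delta - \vec{A}_3 \cdot \vec{D}_\delta,$$

$$\vec{X}(t+1) = \frac{\vec{X}_1 + \vec{X}_2 + \vec{X}_3}{3}.$$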

This averaging mechanism allows the search process to incorporate multiple elite solutions simultaneously, improving robustness and stability compared with algorithms that rely on a single global best solution.

In this study, GWO is employed to optimize the combination weights used to reconstruct the final BDI forecast from the SVR-predicted intrinsic mode functions (IMFs). Each candidate solution corresponds to a vector of weights assigned to individual IMFs, and the fitness function is defined by the reconstruction error between the weighted forecast and the reference series. Through iterative position updates, GWO identifies the weight configuration that minimizes forecasting error, thereby improving the accuracy and robustness of the final aggregated prediction.
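A compact sketch of GWO applied to a weight-optimization problem of this kind is given below. It is an illustration under several assumptions rather than the study's implementation: the function name `gwo_minimize`, the population size, iteration count, search bounds, the elitist retention of the three best solutions found so far, and the synthetic component forecasts are all introduced here for the example.

```python
import numpy as np

def gwo_minimize(fitness, dim, n_wolves=20, n_iter=200, lower=0.0, upper=2.0, seed=0):
    """Minimize `fitness` over [lower, upper]^dim with a basic Grey Wolf Optimizer."""
    rng = np.random.default_rng(seed)
    wolves = rng.uniform(lower, upper, size=(n_wolves, dim))
    scores = np.array([fitness(w) for w in wolves])
    best = np.argsort(scores)[:3]                       # alpha, beta, delta
    leaders, leader_scores = wolves[best].copy(), scores[best].copy()
    history = [float(leader_scores[0])]

    for t in range(n_iter):
        a = 2.0 * (1.0 - t / n_iter)                    # decreases linearly from 2 toward 0
        candidates = np.zeros_like(wolves)
        for leader in leaders:
            A = 2.0 * a * rng.random(wolves.shape) - a  # |A| > 1 explores, |A| < 1 exploits
            C = 2.0 * rng.random(wolves.shape)
            D = np.abs(C * leader - wolves)             # distance to this leader
            candidates += leader - A * D
        wolves = np.clip(candidates / 3.0, lower, upper)  # average of the three moves
        scores = np.array([fitness(w) for w in wolves])

        # Assumed elitism: keep the three best solutions seen so far as leaders
        pool = np.vstack([leaders, wolves])
        pool_scores = np.concatenate([leader_scores, scores])
        best = np.argsort(pool_scores)[:3]
        leaders, leader_scores = pool[best].copy(), pool_scores[best].copy()
        history.append(float(leader_scores[0]))

    return leaders[0], float(leader_scores[0]), history

# Synthetic stand-in for component forecasts (rows: periods, columns: IMFs)
rng = np.random.default_rng(1)
components = rng.normal(size=(60, 3))
reference = components @ np.array([0.9, 1.0, 1.2])      # hypothetical "true" weights

def mse(w):
    """Reconstruction error between the weighted forecast and the reference series."""
    return float(np.mean((components @ w - reference) ** 2))

weights, error, history = gwo_minimize(mse, dim=3)
```

Because the leaders are retained across iterations in this variant, the best error in `history` never increases; the weights should be fitted on in-sample data only so that the out-of-sample comparison remains fair.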

Figure 1 illustrates the overall flow of the GWO algorithm applied in this study.

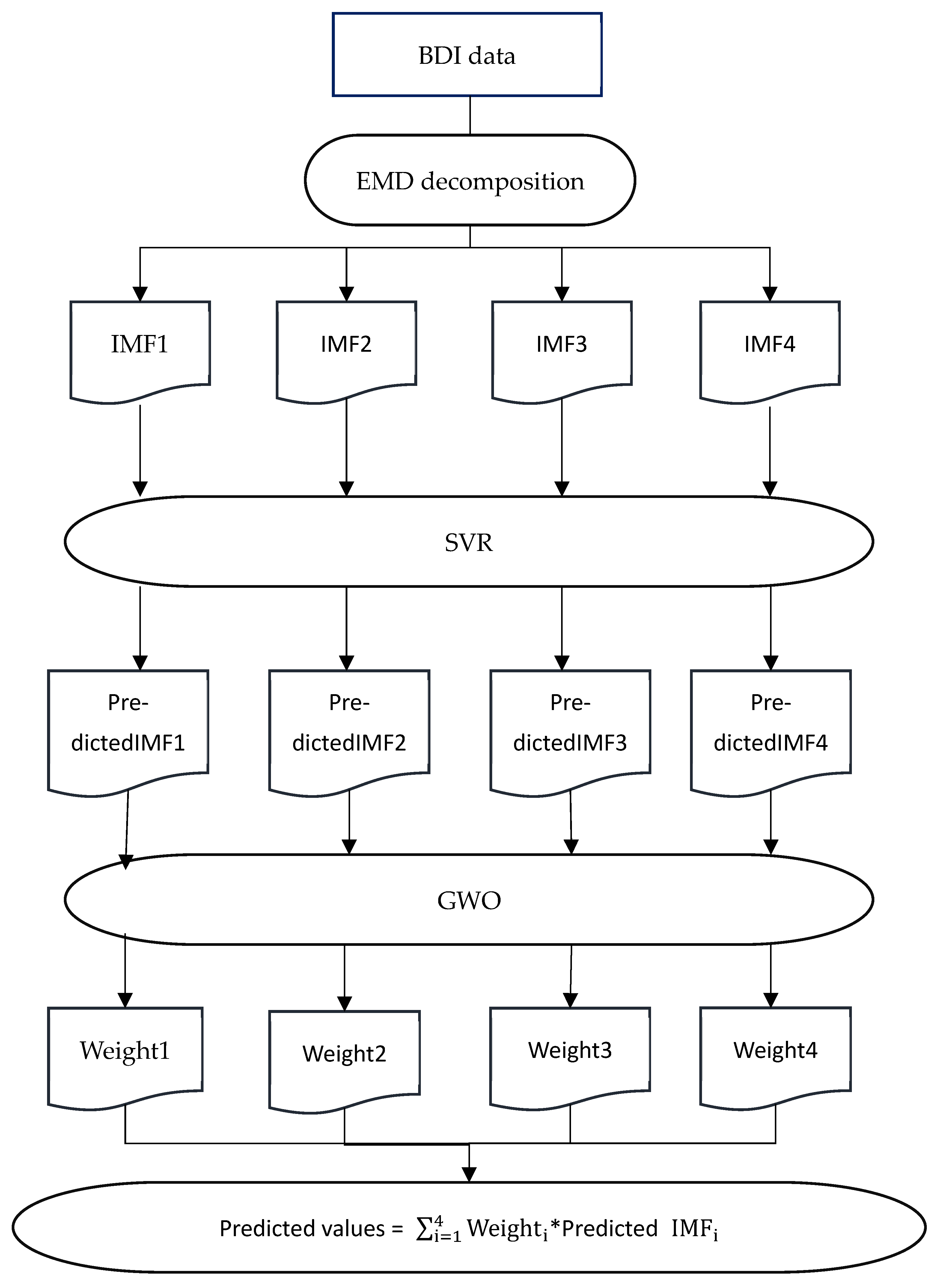

3.5.4. Model Construction of EMD-SVR-GWO

The EMD-SVR-GWO model is a hybrid forecasting framework designed to improve prediction accuracy by leveraging the strengths of three advanced techniques: Empirical Mode Decomposition, Support Vector Regression, and the Grey Wolf Optimizer. This model is particularly effective for complex, nonlinear, and non-stationary time series data, such as financial indices, economic indicators, or environmental data. The construction of the EMD-SVR-GWO model involves three primary stages:

- 1. Empirical Mode Decomposition

EMD is a data-driven decomposition technique that breaks down the original data into a set of components called Intrinsic Mode Functions and a residual trend. Each IMF represents oscillatory modes embedded within the data, capturing different frequency characteristics, while the residual captures the long-term trend.

The decomposition enables the isolation of distinct patterns (high-frequency fluctuations, medium-term cycles, and long-term trends), which are modelled separately in the next stage.

- 2. Support Vector Regression

After decomposition, each IMF and the residual are treated as independent sub-series. Support Vector Regression is applied to predict each component individually. SVR is chosen for its robustness in handling nonlinear relationships and its strong generalization capabilities.

Each IMF captures different dynamics of the original time series, and SVR models are trained separately to forecast these dynamics effectively.

- 3. Grey Wolf Optimizer

The final stage involves the reconstruction of the original time series by combining the predicted IMFs and residual. To achieve the most accurate forecast, the Grey Wolf Optimizer is employed to determine the optimal combination of these forecasts. GWO is a metaheuristic optimization algorithm inspired by the hunting behavior and social hierarchy of grey wolves in nature.

The EMD-SVR-GWO hybrid model offers a powerful and flexible framework for time series forecasting. EMD enhances the model by decomposing complex data into simpler components, SVR captures nonlinear relationships within each component, and GWO optimizes the final forecast by adjusting the weights for the most accurate reconstruction. This approach is highly effective for datasets with mixed trends, seasonality, and noise, providing superior forecasting performance compared to traditional single-model methods.

Figure 2 presents the flowchart of the EMD-SVR-GWO model.

4. Data and Results

This section begins with a description of the dataset used in the analysis, followed by the presentation of results from all forecasting models. Subsequently, the models’ performance is assessed using three evaluation criteria, and their forecasting accuracy is compared.

4.1. BDI Time Series Data

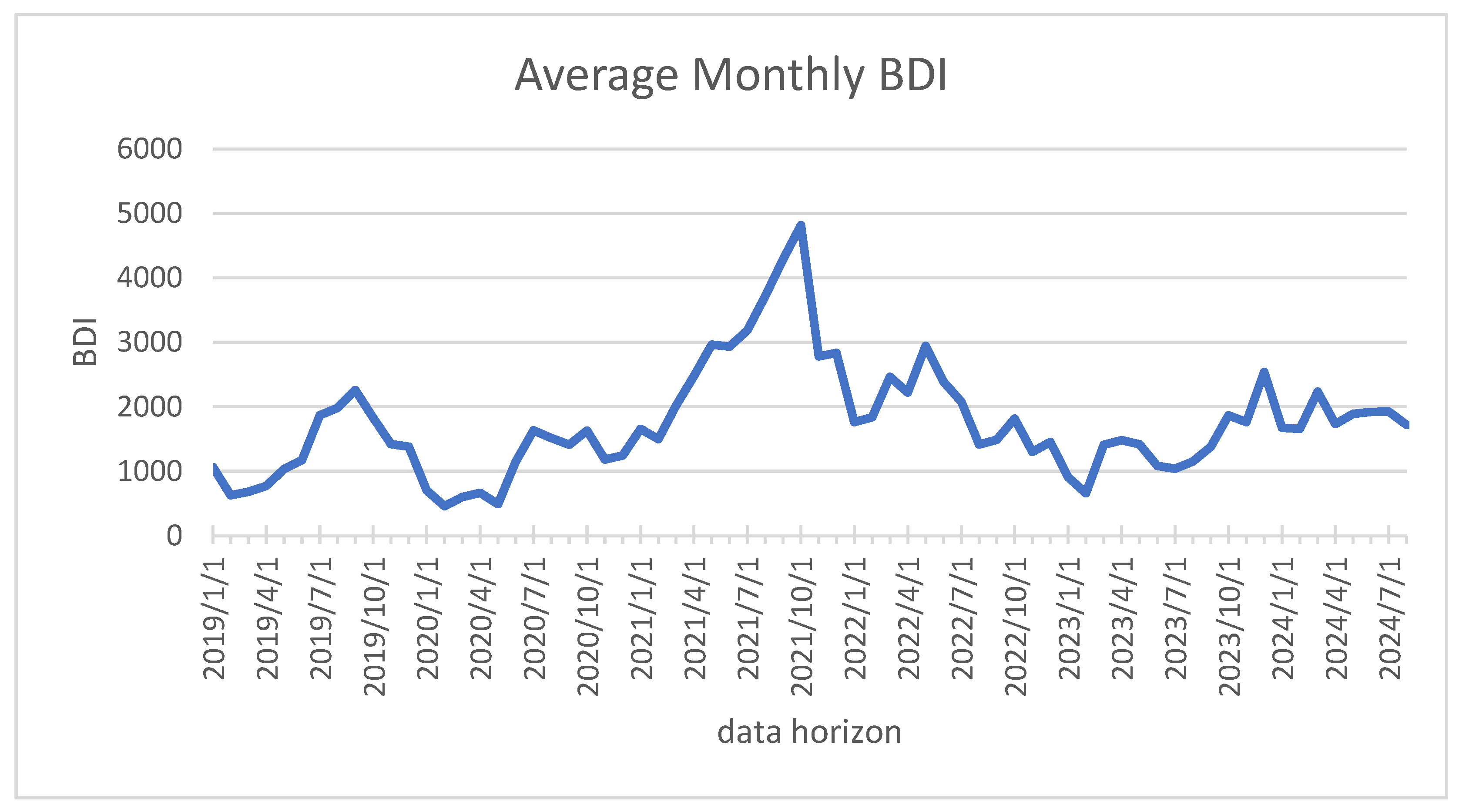

Monthly Baltic Dry Index data from January 2019 to August 2024 are used in this study. Monthly values are obtained by averaging daily BDI observations from the Eastmoney database, yielding 68 observations.

Table 1 reports the original monthly BDI data used in the empirical analysis to ensure transparency and reproducibility, while Figure 3 presents a graphical view of the series, illustrating its overall evolution and nonstationary behavior.

Table 2 summarizes the descriptive statistics, highlighting the variability and right-skewed distribution of the data. The monthly BDI series has a mean of 1743.264 and exhibits substantial variability, with a standard deviation of 851.541 and a wide range from 460.6 to 4819.95. The positive skewness (1.244) and moderate kurtosis (2.380) further indicate a right-skewed distribution with some extreme values. The sample consists of 68 monthly observations.

For model evaluation, the dataset is divided into an in-sample period from January 2019 to December 2023 and an out-of-sample period from January to August 2024.
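The data preparation described above (averaging daily observations into monthly values, then splitting at December 2023) can be sketched as follows. This is a minimal illustration rather than the authors' code: the function names `monthly_means` and `split_by_month`, the ISO date-string format, and the sample numbers are all assumptions introduced here, and the values are not actual BDI observations.

```python
from collections import defaultdict


def monthly_means(daily):
    """Average daily (date, value) pairs by calendar month.

    Dates are assumed to be ISO strings 'YYYY-MM-DD'; the month key is 'YYYY-MM'.
    """
    buckets = defaultdict(list)
    for date, value in daily:
        buckets[date[:7]].append(value)
    return {month: sum(vals) / len(vals) for month, vals in sorted(buckets.items())}


def split_by_month(monthly, last_in_sample="2023-12"):
    """Split a {month: value} mapping into in-sample and out-of-sample lists."""
    train = [(m, v) for m, v in monthly.items() if m <= last_in_sample]
    test = [(m, v) for m, v in monthly.items() if m > last_in_sample]
    return train, test


# Illustrative values only, not actual BDI observations.
daily = [
    ("2023-12-01", 3000.0), ("2023-12-04", 3200.0),
    ("2024-01-02", 2000.0), ("2024-01-03", 2100.0), ("2024-01-04", 1900.0),
]
monthly = monthly_means(daily)
train, test = split_by_month(monthly)
```

Because the month keys are zero-padded strings, plain string comparison orders them chronologically, which keeps the split free of date-parsing dependencies.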

4.2. Model Results

This section summarizes the outcomes produced by the four models, while their forecasting accuracy is compared in the following section.

4.2.1. Grey Forecasting

The accuracy of forecasts generated by the grey model depends on the length of the initial data segment selected from the time series. To address this, we executed multiple grey forecasts using different initial sequence sizes. The best results, indicated by the lowest prediction errors, occurred when the initial sequence length was set to four observations. The procedure for producing forecasts with the grey model involves the following steps:

1. Class ratio test

Before applying the GM(1,1) model, the data must satisfy the class ratio condition. As described in Section 3.1, the ratio values should lie between 0 and 1 for the series to qualify for grey modeling. The class ratio test results, summarized in Table 3, confirm that the grey model is suitable for this dataset.

2. Accumulated Generating Operation (AGO):

Following Equation (2), the AGO was applied to obtain the new sequence. The resulting values are presented in Table 4.

3. Mean value generating sequence

We calculated the mean value generating sequence, as shown in Table 5.

4. Time series prediction model

The coefficients a and b were estimated using the least squares method, yielding the following values:

These estimates are used to get

These parameters are then applied to Equation (8) to compute the predicted values of the time series. The forecasts for the period from January 2024 to August 2024 are presented in Table 6.
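The grey forecasting steps above (AGO, mean value generating sequence, least squares estimation of a and b, and the time response function) can be sketched in a few lines. The code assumes the textbook GM(1,1) formulation, which may differ in notation from Equations (2) to (8) of this paper; the function name `gm11_forecast` and the four-point input series are illustrative assumptions, not the study's data.

```python
import math


def gm11_forecast(x0, horizon):
    """Fit a textbook GM(1,1) model to x0 and forecast `horizon` further values."""
    n = len(x0)
    # Accumulated Generating Operation (AGO): running sums of the raw series.
    x1, total = [], 0.0
    for v in x0:
        total += v
        x1.append(total)
    # Mean value generating sequence: averages of adjacent AGO values.
    z = [0.5 * (x1[k] + x1[k - 1]) for k in range(1, n)]
    y = x0[1:]
    # Least squares for x0(k) = -a * z(k) + b, i.e. a simple regression of y on z.
    m = len(z)
    z_bar, y_bar = sum(z) / m, sum(y) / m
    sxx = sum((zi - z_bar) ** 2 for zi in z)
    sxy = sum((zi - z_bar) * (yi - y_bar) for zi, yi in zip(z, y))
    slope = sxy / sxx
    a = -slope                  # development coefficient (assumed nonzero)
    b = y_bar - slope * z_bar   # grey input

    def x1_hat(k):
        # Time response function; k = 0 corresponds to the first observation.
        return (x0[0] - b / a) * math.exp(-a * k) + b / a

    # Inverse AGO: difference consecutive accumulated predictions.
    forecasts = [x1_hat(k) - x1_hat(k - 1) for k in range(n, n + horizon)]
    return a, b, forecasts


# Illustrative four-point series growing by 10% per step (not BDI data).
a, b, forecasts = gm11_forecast([100.0, 110.0, 121.0, 133.1], horizon=2)
```

On an exactly geometric series such as this one, the fitted model tracks the true continuation closely but not perfectly, because the mean value sequence only approximates the underlying continuous exponential.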

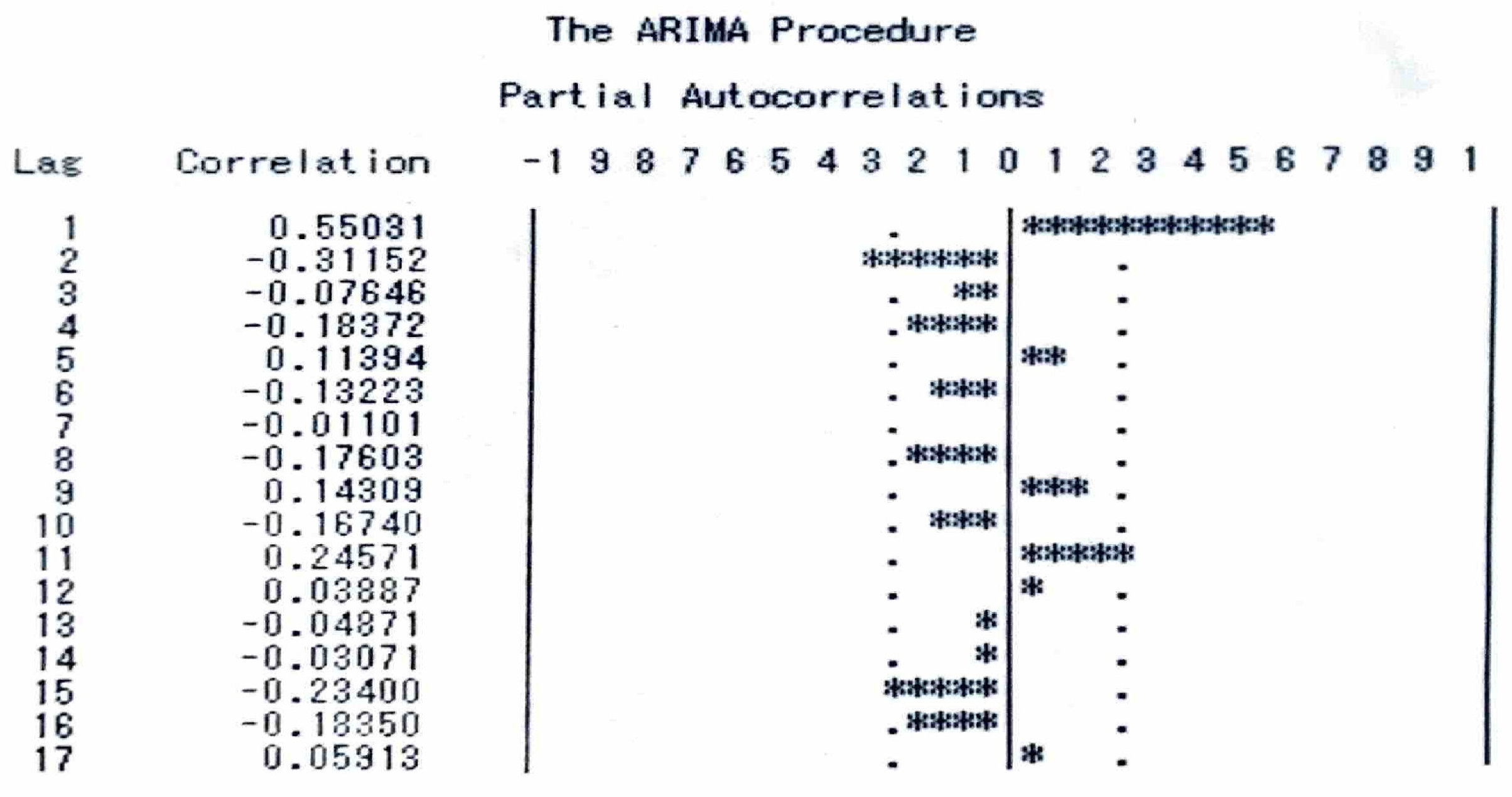

4.2.2. ARIMA Model

Figure 3 shows that the monthly BDI series exhibits a clear trend, suggesting nonstationarity. This observation is confirmed by the Augmented Dickey–Fuller (ADF) test, which fails to reject the null hypothesis of a unit root (ADF statistic = −2.377, p = 0.1483). Accordingly, first differencing is applied to obtain a stationary series suitable for ARIMA modeling.

For methodological transparency, we first report results obtained using the classical Box–Jenkins framework. After differencing, the ACF and PACF plots, as shown in Figure 4 and Figure 5, were utilized to identify potential models, and several ARIMA specifications were examined using standard residual diagnostics. Among the candidate models, ARIMA(0,1,1) satisfies the residual independence requirement based on the Ljung–Box test, whereas alternative specifications fail to meet adequacy conditions. However, despite being statistically admissible, the forecasting performance of this model remains limited.

It is acknowledged that while the Box–Jenkins identification–estimation–diagnostic procedure is historically important, contemporary time-series forecasting increasingly relies on automated, information-criterion–based model selection methods. Accordingly, ACF and PACF plots are used here primarily for illustrative purposes rather than as decisive selection tools.

To obtain the final ARIMA specification, we employ the auto_arima procedure from the pmdarima Python package (version 2.1.1), which systematically evaluates candidate models using a stepwise search strategy based on information criteria (AIC, BIC, and HQIC). This automated procedure identifies an ARIMA(1,0,0) model as optimal. Despite its parsimonious structure, this specification achieves superior out-of-sample forecasting performance compared to more complex alternatives.

The difference between the classical ARIMA(0,1,1) and the automated ARIMA(1,0,0) selection primarily reflects the sensitivity of manual differencing decisions in the Box–Jenkins framework. Over-differencing may induce spurious moving-average behavior, whereas the automated procedure evaluates a broader model space and identifies that the BDI series is more appropriately represented as a stationary autoregressive process. Since AR models preserve long-run persistence while avoiding unnecessary complexity, the ARIMA(1,0,0) specification provides a more robust balance between parsimony and predictive accuracy.

Based on these considerations, the ARIMA(1,0,0) model selected by the automated procedure is adopted for forecasting. The final ARIMA(1,0,0) forecasts are reported in Table 6. Additional diagnostic results and intermediate model comparisons are reported in Appendix A for completeness.
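To illustrate how an ARIMA(1,0,0) specification produces multi-step forecasts, the sketch below fits a first-order autoregression by ordinary least squares and iterates it forward. This is a simplification for exposition: it is not the estimator used by pmdarima, and the function names `fit_ar1` and `forecast_ar1` as well as the sample series are assumptions introduced here.

```python
def fit_ar1(y):
    """Estimate y_t = c + phi * y_{t-1} by ordinary least squares."""
    x, t = y[:-1], y[1:]
    n = len(x)
    x_bar, t_bar = sum(x) / n, sum(t) / n
    sxy = sum((xi - x_bar) * (ti - t_bar) for xi, ti in zip(x, t))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    phi = sxy / sxx
    c = t_bar - phi * x_bar
    return c, phi


def forecast_ar1(y, horizon):
    """h-step forecasts: mu + phi**h * (y_T - mu), valid when |phi| < 1."""
    c, phi = fit_ar1(y)
    mu = c / (1.0 - phi)  # long-run mean implied by the fitted model
    return [mu + phi ** h * (y[-1] - mu) for h in range(1, horizon + 1)]


# Illustrative series generated by y_t = 5 + 0.5 * y_{t-1} (not BDI data).
series = [20.0, 15.0, 12.5, 11.25, 10.625]
c, phi = fit_ar1(series)
preds = forecast_ar1(series, horizon=2)
```

The closed-form expression shows that AR(1) forecasts decay geometrically from the last observation toward the long-run mean, so a multi-step forecast path from such a model is smooth by construction.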

4.2.3. LSTM

To ensure consistency with the other univariate models studied, the BDI sequence is normalized to the interval [0, 1]. A sliding window of k past observations (here k = 5) is used as input to predict the next period; that is, the observations for periods t − k + 1 through t are mapped to the value for period t + 1.

The LSTM architecture employed in this study consists of a single LSTM layer with 32 hidden units using a hyperbolic tangent activation function, followed by a fully connected output layer. The model is trained using the Adam optimizer, with mean squared error (MSE) selected as the loss function to guide optimization.

The model is trained for 200 epochs until convergence. After training, multi-step-ahead forecasts (H = 8) are generated recursively: each one-step prediction is appended to the input window and used to predict the following period.

The final LSTM forecasts (after rescaling) are reported in Table 6 for direct comparison with the GM(1,1), ARIMA, SVR, GRU, and EMD-SVR-GWO models.
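The preprocessing and recursive forecasting scheme described in this subsection can be sketched independently of any deep-learning library. In the sketch, `predict_one` is a hypothetical stand-in for a trained one-step model (LSTM or GRU); here it is replaced by a simple window average purely so the example runs. All function names are assumptions introduced for illustration.

```python
def minmax_scale(series):
    """Scale values to [0, 1]; returns the scaled list and the (lo, hi) used."""
    lo, hi = min(series), max(series)
    return [(v - lo) / (hi - lo) for v in series], lo, hi


def minmax_inverse(scaled, lo, hi):
    """Map scaled values back to the original units."""
    return [v * (hi - lo) + lo for v in scaled]


def make_windows(series, k):
    """Build (inputs, targets): each input holds k consecutive values, the target is the next one."""
    inputs = [series[i:i + k] for i in range(len(series) - k)]
    targets = [series[i + k] for i in range(len(series) - k)]
    return inputs, targets


def recursive_forecast(predict_one, history, k, horizon):
    """Feed each one-step prediction back into the window to reach `horizon` steps."""
    window = list(history[-k:])
    out = []
    for _ in range(horizon):
        nxt = predict_one(window)
        out.append(nxt)
        window = window[1:] + [nxt]  # drop the oldest value, append the prediction
    return out


# Placeholder predictor (window mean) standing in for a trained network.
def window_mean(window):
    return sum(window) / len(window)


scaled, lo, hi = minmax_scale([2.0, 4.0, 6.0])
X, y = make_windows([1, 2, 3, 4, 5, 6, 7], k=5)
path = recursive_forecast(window_mean, [0.0, 1.0], k=2, horizon=2)
```

Because later steps are conditioned on earlier predictions rather than on observed values, any one-step error propagates through the remaining horizon, which is one reason recursive forecasts from small samples should be read with caution.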

4.2.4. GRU

The preprocessing procedures for GRU follow those of the LSTM model. Using the same sliding-window inputs ensures comparability across models. The GRU model used in this study is configured with a single GRU layer containing 32 units, followed by a dense output layer. The training process employs the Adam optimizer with mean squared error as the loss function, and the model is trained over 200 epochs to ensure effective learning.

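Given parameters θ, the GRU forecasting function maps the k most recent observations to a one-step-ahead prediction. As with the LSTM, multi-step forecasting is performed recursively, with each prediction fed back into the input window.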

This unified setup allows a direct comparison of performance between LSTM and GRU and ensures consistency with the other forecasting models evaluated in this study. The final GRU forecasts are reported in Table 6.

4.2.5. SVR

Support Vector Regression is a machine learning technique well suited to predicting the Baltic Dry Index. In this study, a total of 68 monthly BDI data points are used. The first 60 consecutive months (January 2019 to December 2023) serve as input features for the model, while the last 8 months are used as output targets.

To prevent training errors caused by high-dimensional data or large variations in feature values, the dataset must be normalized before training the SVR model. The standardization process follows the formula

$$X' = \frac{X - \mu}{\sigma}$$

where X is the original feature value; μ is the mean of the feature in the training set; σ is the standard deviation of the feature in the training set; and X′ is the standardized value.
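A short sketch of this standardization step follows, assuming the usual z-score form (value minus training mean, divided by training standard deviation). The use of the population standard deviation (`pstdev`) is an assumption; the paper does not state which variant was used. The function names and numbers are illustrative.

```python
from statistics import mean, pstdev


def fit_standardizer(train):
    """Return the mean and standard deviation estimated on the training set only."""
    return mean(train), pstdev(train)


def standardize(values, mu, sigma):
    """Apply the training-set statistics to any set of values."""
    return [(v - mu) / sigma for v in values]


# Illustrative numbers (not BDI data): training mean is 5 and standard deviation is 2.
train = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]
mu, sigma = fit_standardizer(train)
z_new = standardize([9.0, 3.0], mu, sigma)
```

Estimating the statistics on the training set alone and reusing them for later periods avoids leaking information from the out-of-sample period into the model.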

Next, the SVR model is trained using an appropriate kernel function, which determines how the model captures non-linear relationships in the data. The three most common kernels are linear, polynomial, and radial basis function. For BDI prediction, the RBF kernel is selected due to its effectiveness in handling complex, non-linear patterns.

Finally, the trained SVR model is deployed for forecasting future BDI values. It is used to generate one-step predictions for the last 8 months of the dataset. The predicted BDI values for the time series from January 2024 to August 2024 are presented in Table 6.

4.2.6. EMD-SVR-GWO

In the proposed EMD-SVR-GWO hybrid ensemble model, the first stage applies EMD to decompose the BDI data into several IMFs. Too many IMFs may degrade the final result because the forecast errors of the individual IMFs accumulate. To avoid this problem, this paper decomposes the original data into four IMFs.

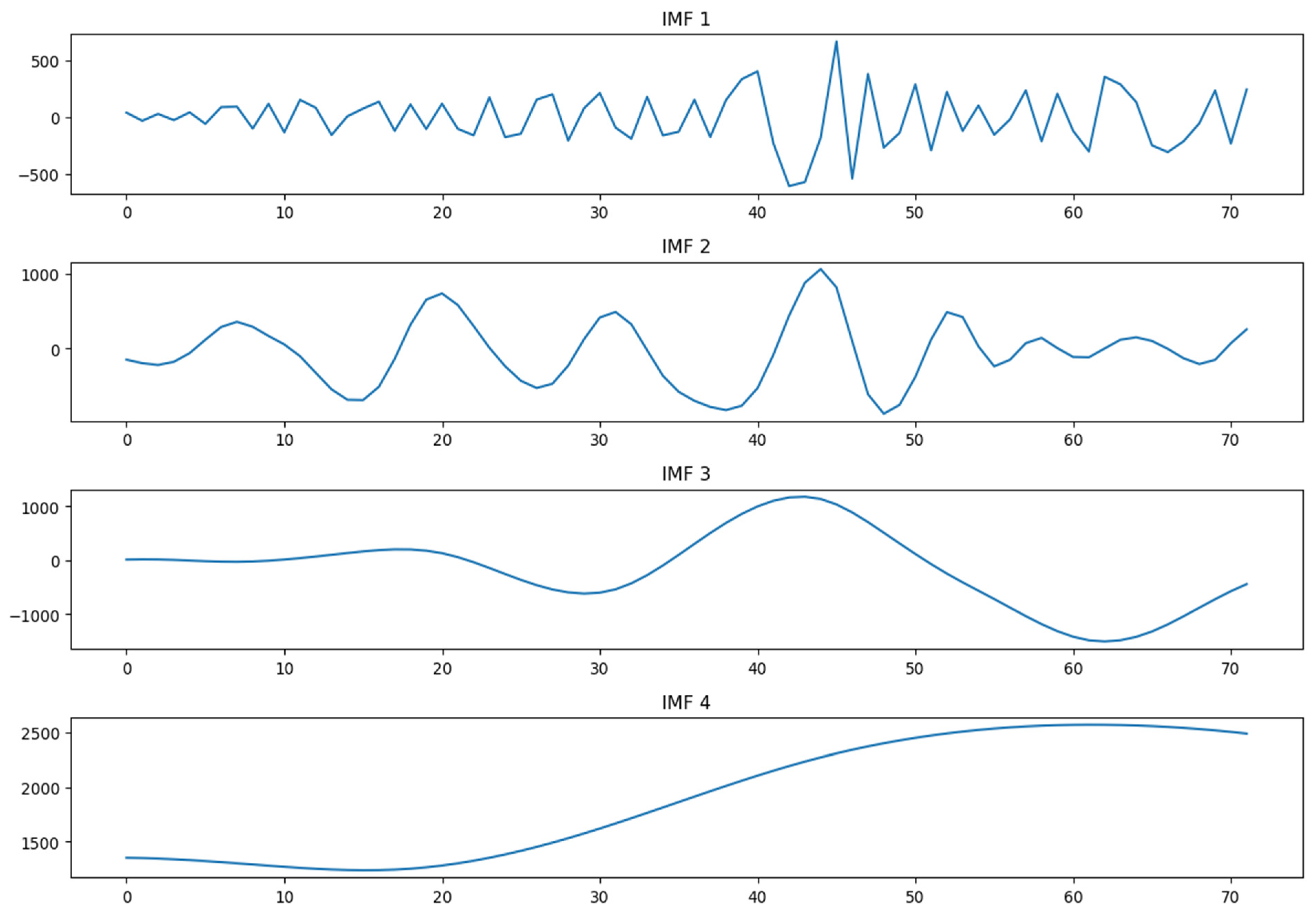

Figure 6 presents the decomposition results of the BDI via EMD, where all IMFs are listed from the highest frequency to the lowest frequency.

In the second stage, SVR with the RBF kernel is applied to each IMF. The trained SVR model is then used to forecast the next 8 periods of each IMF.

The third stage optimizes the weight of each IMF using GWO with a least squares criterion. Note that a least squares criterion requires actual data, which are unknown at forecasting time. In this study, the predicted values obtained from simple regression are therefore used in place of the actual data. The optimal weight for each IMF is shown in Table 7. After the weights are obtained, the predicted value of each IMF is multiplied by its weight and the products are summed to obtain the predicted BDI. The predicted BDI values are shown in Table 6.

The dominance of the lowest-frequency IMF indicates that medium- to long-term components carry the majority of predictive power for monthly BDI movements, while high-frequency components mainly contribute noise. This finding is consistent with the economic interpretation of freight indices, which are driven primarily by persistent demand–supply imbalances rather than short-term fluctuations.
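The weighting stage can be illustrated with a compact Grey Wolf Optimizer that searches for component weights minimizing a sum of squared errors against a reference series. This is a minimal sketch of the generic GWO update rule, not the authors' implementation: the population size, iteration count, search bounds, seeding of one wolf at unit weights, and all numbers are assumptions, and `reference` merely plays the role of the proxy series described above.

```python
import random


def gwo_minimize(loss, dim, n_wolves=20, n_iter=200, lower=-2.0, upper=2.0, seed=0):
    """Minimal Grey Wolf Optimizer; returns (best_position, best_loss)."""
    rng = random.Random(seed)
    wolves = [[rng.uniform(lower, upper) for _ in range(dim)] for _ in range(n_wolves)]
    wolves[0] = [1.0] * dim  # assumption: start one wolf at unit weights (plain summation)
    leaders = []  # best three (loss, position) pairs so far: alpha, beta, delta
    for it in range(n_iter):
        scored = sorted([(loss(w), list(w)) for w in wolves] + leaders, key=lambda p: p[0])
        leaders = scored[:3]
        a = 2.0 * (1.0 - it / n_iter)  # exploration factor decays linearly from 2 to 0
        for w in wolves:
            for d in range(dim):
                pulled = 0.0
                for _, leader in leaders:
                    A = a * (2.0 * rng.random() - 1.0)
                    C = 2.0 * rng.random()
                    pulled += leader[d] - A * abs(C * leader[d] - w[d])
                # New coordinate: average of the three leader-guided moves, clipped to bounds.
                w[d] = min(upper, max(lower, pulled / 3.0))
    final = sorted([(loss(w), list(w)) for w in wolves] + leaders, key=lambda p: p[0])
    best_loss, best_pos = final[0]
    return best_pos, best_loss


def combine(weights, components):
    """Weighted sum of component forecasts; components[j][t] is component j at step t."""
    steps = len(components[0])
    return [sum(w * c[t] for w, c in zip(weights, components)) for t in range(steps)]


def make_loss(components, reference):
    """Sum of squared errors between the weighted combination and the reference."""
    def loss(weights):
        return sum((p - r) ** 2 for p, r in zip(combine(weights, components), reference))
    return loss


# Illustrative numbers only: three component forecasts over four steps.
components = [[1.0, -1.0, 1.0, -1.0], [2.0, 1.0, 0.0, 1.0], [10.0, 11.0, 12.0, 13.0]]
reference = combine([0.2, 0.5, 1.1], components)
loss = make_loss(components, reference)
weights, best = gwo_minimize(loss, dim=3)
```

Keeping the three leaders as best-so-far positions means the returned loss can never be worse than that of the unit-weight starting wolf, so the optimized combination is at least as good as simple summation on the chosen criterion.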

4.3. Comparison of Forecasting Results

The BDI forecasts for the out-of-sample period (January to August 2024) were generated using each forecasting approach. The predicted values, along with the actual BDI figures for comparison, are presented in Table 6 and Figure 7.

Figure 7 compares the actual BDI with forecasts from different models over the eight-step horizon. The actual BDI shows a sharp increase in the third period followed by a decline in the fourth period, after which it remains stable, reflecting short-term market volatility. GM(1,1) shows large oscillations and substantial deviations, while ARIMA(1,0,0) produces overly smooth forecasts that fail to capture variability. SVR captures the general trend but underestimates fluctuations. LSTM and GRU generate steadily decreasing forecasts, suggesting a tendency to overestimate trend dynamics. In contrast, the proposed EMD–SVR–GWO model provides stable predictions that remain close to the observed BDI range, indicating improved robustness and balanced forecasting performance.

Yokum and Armstrong [30] carried out two studies examining experts’ views on the criteria for selecting forecasting methods. Their findings indicated that accuracy is regarded as the most important factor by the majority of researchers. However, because no single accuracy metric is universally applicable to all forecasting scenarios, multiple measures are often employed to provide a comprehensive evaluation of model performance. As noted by Makridakis et al. [31], the ranking of models can vary depending on the metric applied.

Model performance is assessed using root mean squared error (RMSE), mean absolute error (MAE), and mean absolute percentage error (MAPE), which directly measure out-of-sample forecasting accuracy and are widely used in time-series forecasting studies. The coefficient of determination (R²) is not emphasized, as it assumes independent observations and may yield misleading values for nonstationary and highly volatile series such as the BDI. These measures are defined as follows:

$$\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(Y_i - \hat{Y}_i\right)^2}$$

$$\mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left|Y_i - \hat{Y}_i\right|$$

$$\mathrm{MAPE} = \frac{100\%}{n}\sum_{i=1}^{n}\left|\frac{Y_i - \hat{Y}_i}{Y_i}\right|$$

where $Y_i$ and $\hat{Y}_i$ denote the actual and predicted values of the time series for period i, respectively, and n is the number of forecast periods. All three performance metrics yield non-negative values, and a smaller value indicates a more accurate forecasting model.
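The three accuracy measures can be computed as in the sketch below, which assumes their standard definitions with MAPE expressed in percent. The function names and the two-point example are illustrative and do not reproduce any value from Table 8.

```python
import math


def rmse(actual, predicted):
    """Root mean squared error."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def mae(actual, predicted):
    """Mean absolute error."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def mape(actual, predicted):
    """Mean absolute percentage error, in percent; actual values must be nonzero."""
    return 100.0 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)


# Illustrative numbers only.
actual = [100.0, 200.0]
predicted = [110.0, 180.0]
scores = (rmse(actual, predicted), mae(actual, predicted), mape(actual, predicted))
```

RMSE penalizes large errors more heavily than MAE, while MAPE is scale-free, which is why model rankings can differ across the three measures.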

The comparative evaluation of forecasting accuracy across all methods is summarized in Table 8. According to the results, the EMD-SVR-GWO model delivers the highest accuracy, as it consistently records the lowest values across all three metrics. ARIMA ranks as the second most effective model, regardless of which measure is applied. The difference in accuracy between SVR and ARIMA is relatively minor. In contrast, the Grey forecasting approach performs worst in predicting the monthly BDI.

A commonly cited reference for the MAPE-based classification is Lewis [32]. According to Lewis, a MAPE below 10% indicates excellent forecast accuracy, 10–20% is good, 20–50% is acceptable, and values above 50% are considered poor.

According to Lewis’s MAPE-based classification, the proposed EMD-SVR-GWO model achieves a MAPE of 8.3455%, corresponding to excellent forecast accuracy. In comparison, the ARIMA, SVR, and LSTM models yield MAPEs of 14.2829%, 15.3686%, and 16.3638%, respectively, which fall within the good accuracy range, while the GRU and Grey Forecast models exhibit acceptable performance (with MAPEs of 24.0836% and 27.26%, respectively). It should be noted that LSTM and GRU are included primarily as benchmark deep-learning methods, and their results are interpreted with caution due to the limited sample size, particularly in the test set. Deep neural networks typically require substantially larger datasets to fully exploit their representational capacity, and their comparatively weaker performance in this study likely reflects data constraints rather than inherent model deficiencies. Overall, the results consistently demonstrate the robustness and superior predictive accuracy of the proposed EMD-SVR-GWO framework for BDI forecasting under small-sample conditions.
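The classification used above can be written as a small lookup. The thresholds follow the bands quoted in the text; how exact boundary values (10, 20, 50) are assigned is an assumption, since the text does not specify it, and the function name `lewis_category` is introduced here for illustration.

```python
def lewis_category(mape_percent):
    """Map a MAPE (in percent) to the accuracy band quoted in the text."""
    if mape_percent < 10:
        return "excellent"
    if mape_percent < 20:
        return "good"
    if mape_percent <= 50:
        return "acceptable"
    return "poor"


# MAPE values reported in this section, keyed by model.
reported = {
    "EMD-SVR-GWO": 8.3455,
    "ARIMA": 14.2829,
    "SVR": 15.3686,
    "LSTM": 16.3638,
    "GRU": 24.0836,
    "Grey Forecast": 27.26,
}
bands = {model: lewis_category(value) for model, value in reported.items()}
```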

5. Conclusions

From an industry perspective, improved short-term BDI forecasts can support chartering decisions, fleet deployment, and risk management in volatile freight markets, particularly during periods of structural imbalance such as post-pandemic recovery. The Baltic Dry Index is a key indicator of global shipping costs and economic activity, influencing decisions in trade, investment, and policymaking. Its high volatility makes accurate forecasting both challenging and essential. Reliable predictions help shipping companies optimize operations, help investors anticipate market trends, and help policymakers make informed decisions, reducing financial uncertainty and improving strategic planning in the shipping and trade industries.

In forecasting research, synergistic effects arise when multiple complementary methods are combined so that their strengths reinforce one another and improve overall predictive accuracy. In our model, the three-stage EMD-SVR-GWO framework creates such a synergistic effect because each method strengthens a different part of the forecasting process. EMD reduces noise and separates key patterns, SVR captures nonlinear relationships within each component, and GWO optimizes how these component forecasts are combined. Our model fills a methodological gap by offering a more robust way to capture the complex dynamics of the series.

This paper compares various univariate forecasting methods to develop a more precise short-term BDI prediction model, providing valuable insights for decision-makers. Six forecasting techniques are examined: Grey Forecast, ARIMA, Support Vector Regression, LSTM, GRU, and EMD-SVR-GWO. Our results indicate that EMD-SVR-GWO outperforms ARIMA and the other four methods (SVR, LSTM, GRU, and Grey Forecast). The proposed approach goes beyond simply combining EMD with SVR by introducing an additional composition step, which plays a significant role in enhancing the overall forecasting accuracy. The EMD-SVR-GWO model achieves a MAPE of 8.3455%, which falls in the excellent category.

From an industry perspective, the EMD-SVR-GWO model proposed in this study serves as a valuable reference for ship-owners and charterers in making chartering decisions. For instance, if the Baltic Dry Index is projected to rise, ship-owners should consider purchasing new or second-hand vessels or securing time charter contracts. If they already hold long-term transportation contracts, sub-chartering their vessels may be a strategic move. Charterers, on the other hand, should act promptly to secure time charter contracts or long-term transportation agreements with ship-owners. Conversely, if the Baltic Dry Index is expected to decline, the opposite strategies should be adopted.

Despite the strong forecasting performance of the proposed framework, several limitations should be acknowledged from an economic and market perspective. First, the dataset covers a relatively short time span, which may limit the model’s ability to capture long-term structural changes in the global freight market, such as shifts in trade patterns, regulatory interventions, or major economic cycles. As a result, the predictive results identified in this study may be less stable during periods of significant market restructuring or regime change.

Second, although the multi-stage hybrid structure is effective in extracting nonlinear patterns, it may face challenges under extreme market conditions, such as sudden freight rate surges driven by geopolitical events, supply chain disruptions, or abrupt demand shocks. In such environments, purely data-driven models may adjust less rapidly, potentially affecting short-term forecasting reliability.

In addition, the study adopts a univariate framework, which limits the explicit inclusion of external economic drivers of freight market fluctuations, such as commodity prices, exchange rates, and financial market sentiment. It also evaluates only the Grey Wolf Optimizer (GWO) for weight optimization, so alternative metaheuristics remain untested.

Future research may extend the proposed framework to a multivariate setting by incorporating economically meaningful variables. Recent studies show that commodity prices, exchange rates, and volatility indices significantly enhance BDI forecasting accuracy (Kim et al. [19]), while futures prices of aluminum, iron ore, cotton, and thermal coal, together with equity market indicators such as the NASDAQ Composite Index, also exhibit strong explanatory power (Li et al. [22]). These factors can be integrated into an EMD-based hybrid framework using multivariate SVR or deep learning models. Moreover, alternative metaheuristic algorithms, such as Particle Swarm Optimization, may be explored to further improve robustness under highly volatile freight market conditions.