A Hybrid Game Engine–Generative AI Framework for Overcoming Data Scarcity in Open-Pit Crack Detection

Abstract

1. Introduction

- We design and implement a novel hybrid framework for large-scale synthetic dataset generation without requiring real-world training data, combining a parameterized UE5 virtual environment, StyleGAN2-ADA-based image enhancement, and automatic annotation using a vision language model (VLM).

- We conduct a systematic evaluation of three transfer learning strategies for training StyleGAN2-ADA on game engine data, comparing semantically unrelated and domain-aligned source domains to assess their impact on generation quality. The fidelity, diversity, and domain gap of the generated images are evaluated through a combination of distributional, perceptual, statistical, and embedding-based analyses.

- We evaluate downstream effectiveness by training YOLOv11 on synthetic data generated by the proposed framework and testing its object detection performance on a held-out set of 200 real-world open-pit mining images, demonstrating that the best-performing configurations improve AP@0.5 and AP@[0.5:0.95] over the UE5 baseline by up to 16.4% and 34.7%, respectively.

2. Related Works

2.1. Synthetic Dataset Generation Using Game Engines

2.2. Synthetic Dataset Generation Using Generative Models

| Application | Downstream Task | Training Dataset | Training Configuration (kimg, GPU) | FID |
|---|---|---|---|---|
| Petrographic image classification [103] | Classification | 10,070 real petrographic images | 6520 kimg, NVIDIA Quadro RTX 5000 | 12.49 |
| Brain tumor classification [72] | Classification | 3064 real brain scans | NVIDIA Tesla P100 | 58.11–67.53 |
| Abdominal scan synthesis [99] | Not reported | 1300 real abdominal scans | 7800 kimg, NVIDIA GeForce RTX 2080 | 18.14 |
| Algal bloom detection [74] | Semantic segmentation | 3114 real algal bloom images | NVIDIA Tesla P100 | 42.56 |
| Dental radiograph classification [73] | Classification | 1456 real dental radiographs | NVIDIA Tesla A100 | 72.76 |
| Brain scan synthesis [98] | Not reported | 1412 real brain scans | 1800 kimg, NVIDIA Tesla A100 | 20.21 |
| Chest X-ray classification [102] | Classification | 3616 real chest X-rays | NVIDIA Tesla K80 | 20.90 |
| Skin cancer classification [97] | Classification | 33,126 real skin lesion images | NVIDIA GeForce RTX 3090 | 0.79 |
| Landslide detection [104] | Semantic segmentation | 770 real landslide images | Not reported | 67.47 |
| Wildfire detection [76] | Object detection | 1865 real wildfire images | 25,000 kimg, NVIDIA GeForce RTX 3090 Ti | 24.07 |
| Pavement crack detection [105] | Semantic segmentation | 778 real crack images | 32,000 kimg, NVIDIA Tesla T4 | 6.30 |
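
Several rows report training length in kimg, the unit used by the StyleGAN2-ADA reference implementation [125]: one kimg is 1,000 real images shown to the discriminator. Because each iteration consumes one minibatch of real images, the number of optimizer steps is roughly kimg × 1000 / batch size. The table does not list the batch sizes used in the cited studies, so the batch size of 32 below is purely illustrative and the helper name is hypothetical.

```python
import math


def kimg_to_iterations(kimg: float, batch_size: int) -> int:
    """Convert a StyleGAN2-ADA training length in kimg to minibatch iterations.

    One kimg = 1,000 real images shown to the discriminator; each iteration
    consumes `batch_size` real images, so iterations = kimg * 1000 / batch_size,
    rounded up to a whole number of steps.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return math.ceil(kimg * 1000 / batch_size)


# Hypothetical batch size; the cited studies' batch sizes are not reported in the table.
for kimg in (1800, 6520, 32_000):
    print(f"{kimg:>6} kimg -> {kimg_to_iterations(kimg, 32):,} iterations at batch 32")
```

Under this assumption, the 32,000 kimg pavement-crack run corresponds to one million iterations; a larger batch would shorten the step count proportionally without changing the number of images seen.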

3. Materials and Methods

3.1. Synthetic Dataset Generation Using UE5

3.1.1. Virtual Environment Construction

3.1.2. Terrain Material Parameterization

3.1.3. Surface Crack Decal Parameterization

3.1.4. Lighting Positioning and Intensity Parameterization

3.1.5. Camera Viewpoint Parameterization

3.1.6. Synthetic Image Rendering

3.1.7. Bounding Box Computation
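
One plausible way to obtain a 2D box for a decal is to project the corner points of its footprint into the image with a pinhole camera model, the kind of mapping a perspective matrix such as UE's FPerspectiveMatrix [119] encodes, and take the extremes of the projected coordinates, clipped to the frame. The sketch below is an illustration under stated assumptions rather than the implementation used in this work: it uses a camera-space convention with x to the right, y down, and z forward (UE itself is X-forward, Z-up), assumes square pixels, derives the focal length from a horizontal FOV, and all function names are hypothetical.

```python
import math
from typing import Iterable, Optional, Tuple

Point3 = Tuple[float, float, float]
Box = Tuple[float, float, float, float]


def project_point(p: Point3, fov_deg: float, width: int, height: int) -> Optional[Tuple[float, float]]:
    """Pinhole projection of a camera-space point (x right, y down, z forward) to pixels.

    The focal length in pixels follows from the horizontal field of view;
    points on or behind the camera plane return None.
    """
    x, y, z = p
    if z <= 1e-6:
        return None
    f = (width / 2.0) / math.tan(math.radians(fov_deg) / 2.0)
    return width / 2.0 + f * x / z, height / 2.0 + f * y / z


def bbox_from_points(points: Iterable[Point3], fov_deg: float, width: int, height: int) -> Optional[Box]:
    """Axis-aligned pixel box (x_min, y_min, x_max, y_max) around projected points, clipped to the image."""
    uv = [q for q in (project_point(p, fov_deg, width, height) for p in points) if q is not None]
    if not uv:
        return None  # nothing in front of the camera
    us, vs = zip(*uv)
    x_min, x_max = max(0.0, min(us)), min(float(width), max(us))
    y_min, y_max = max(0.0, min(vs)), min(float(height), max(vs))
    if x_min >= x_max or y_min >= y_max:
        return None  # box falls outside the frame or has zero area
    return x_min, y_min, x_max, y_max


# Corners of a hypothetical 2 m x 2 m decal, 10 m in front of a 90-degree camera.
corners = [(-1.0, -1.0, 10.0), (1.0, -1.0, 10.0), (1.0, 1.0, 10.0), (-1.0, 1.0, 10.0)]
print(bbox_from_points(corners, fov_deg=90.0, width=640, height=640))
```

For these corners the focal length is 320 px and the printed box is approximately (288, 288, 352, 352). Points behind the camera are simply dropped, which is only an approximation for objects that straddle the camera plane; a fuller implementation would clip against the near plane first.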

3.1.8. Automated Dataset Generation Pipeline

| Algorithm 1. Automated Synthetic Dataset Generation Pipeline for UE5 |
|---|
| Input: Dataset size N, crack decals C, terrain materials T |
| Output: Images I = {I1, …, IN}, labels L = {L1, …, LN} |
| 1: for i = 1 to N do |
| 2: // Sample crack decal and terrain material |
| 3: crack ← SampleCrack(C), terrain ← SampleMaterial(T) |
| 4: // Sample lighting and camera parameters |
| 5: (θ, ψ, I) ← SampleLighting(), (d, ϕ, α, ρ, FOV) ← SampleCamera() |
| 6: // Configure scene with sampled parameters |
| 7: SpawnDecal(crack), ApplyTerrainMaterial(terrain), SetDirectionalLight(θ, ψ, I) |
| 8: // Compute and assign camera position |
| 9: position ← SphericalToCartesian(d, ϕ, α) |
| 10: SetPosition(position, roll = ρ, perspective = FOV) |
| 11: // Render image and compute annotation |
| 12: Ii = CaptureImage(), Li = ComputeBoundingBox() |
| 13: // Prepare for next iteration |
| 14: DestroyDecal(crack) |
| 15: end for |
| 16: return I, L |
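
To prototype the control flow of Algorithm 1 outside the engine, the loop can be mirrored in Python against a mock scene. Everything engine-facing here is hypothetical: `MockScene` only records calls instead of rendering, the sampling ranges are placeholders rather than the values used in this work, and the spherical convention (ϕ as azimuth in the ground plane, α as elevation above it, decal at the origin, z up, camera aimed at the decal) is an assumption.

```python
import math
import random
from dataclasses import dataclass, field
from typing import List, Sequence, Tuple


def spherical_to_cartesian(d: float, phi_deg: float, alpha_deg: float) -> Tuple[float, float, float]:
    """Camera position at distance d from the decal, assumed to sit at the origin.

    Assumed convention: phi is the azimuth in the ground plane, alpha the
    elevation above it, and z points up.
    """
    phi, alpha = math.radians(phi_deg), math.radians(alpha_deg)
    return (d * math.cos(alpha) * math.cos(phi),
            d * math.cos(alpha) * math.sin(phi),
            d * math.sin(alpha))


@dataclass
class MockScene:
    """Hypothetical stand-in for the UE5 scene; it records calls instead of rendering."""
    log: List[str] = field(default_factory=list)

    def spawn_decal(self, crack: str) -> str:
        self.log.append(f"spawn {crack}")
        return crack

    def apply_terrain_material(self, terrain: str) -> None:
        self.log.append(f"terrain {terrain}")

    def set_directional_light(self, theta: float, psi: float, intensity: float) -> None:
        self.log.append("light")

    def set_camera(self, position: Tuple[float, float, float], roll: float, fov: float) -> None:
        self.log.append("camera")

    def capture_image(self, index: int) -> str:
        return f"image_{index:05d}.png"  # placeholder for a rendered frame

    def compute_bounding_box(self) -> Tuple[float, float, float, float]:
        return (0.0, 0.0, 1.0, 1.0)  # placeholder box

    def destroy_decal(self, decal: str) -> None:
        self.log.append(f"destroy {decal}")


def generate_dataset(n: int, cracks: Sequence[str], terrains: Sequence[str],
                     scene: MockScene, seed: int = 0):
    """Mirror of Algorithm 1: one randomized scene, image, and label per iteration."""
    rng = random.Random(seed)
    images, labels = [], []
    for i in range(n):
        # Sample crack decal and terrain material
        crack, terrain = rng.choice(cracks), rng.choice(terrains)
        # Sample lighting and camera parameters (placeholder ranges)
        theta, psi, intensity = rng.uniform(10, 80), rng.uniform(0, 360), rng.uniform(2, 10)
        d, phi, alpha = rng.uniform(3, 15), rng.uniform(0, 360), rng.uniform(30, 90)
        roll, fov = rng.uniform(-10, 10), rng.uniform(60, 90)
        # Configure the scene with the sampled parameters
        decal = scene.spawn_decal(crack)
        scene.apply_terrain_material(terrain)
        scene.set_directional_light(theta, psi, intensity)
        # Compute and assign the camera position
        scene.set_camera(spherical_to_cartesian(d, phi, alpha), roll=roll, fov=fov)
        # Render the image and compute its annotation
        images.append(scene.capture_image(i))
        labels.append(scene.compute_bounding_box())
        # Remove the decal before the next iteration
        scene.destroy_decal(decal)
    return images, labels


images, labels = generate_dataset(3, ["crack_a", "crack_b"], ["terrain_a"], MockScene())
print(images)  # ['image_00000.png', 'image_00001.png', 'image_00002.png']
```

Seeding a dedicated `random.Random` instance keeps each generated dataset reproducible, which is convenient when comparing downstream runs on the same synthetic images.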

3.2. Synthetic Dataset Fidelity and Diversity Enhancement Using StyleGAN2-ADA

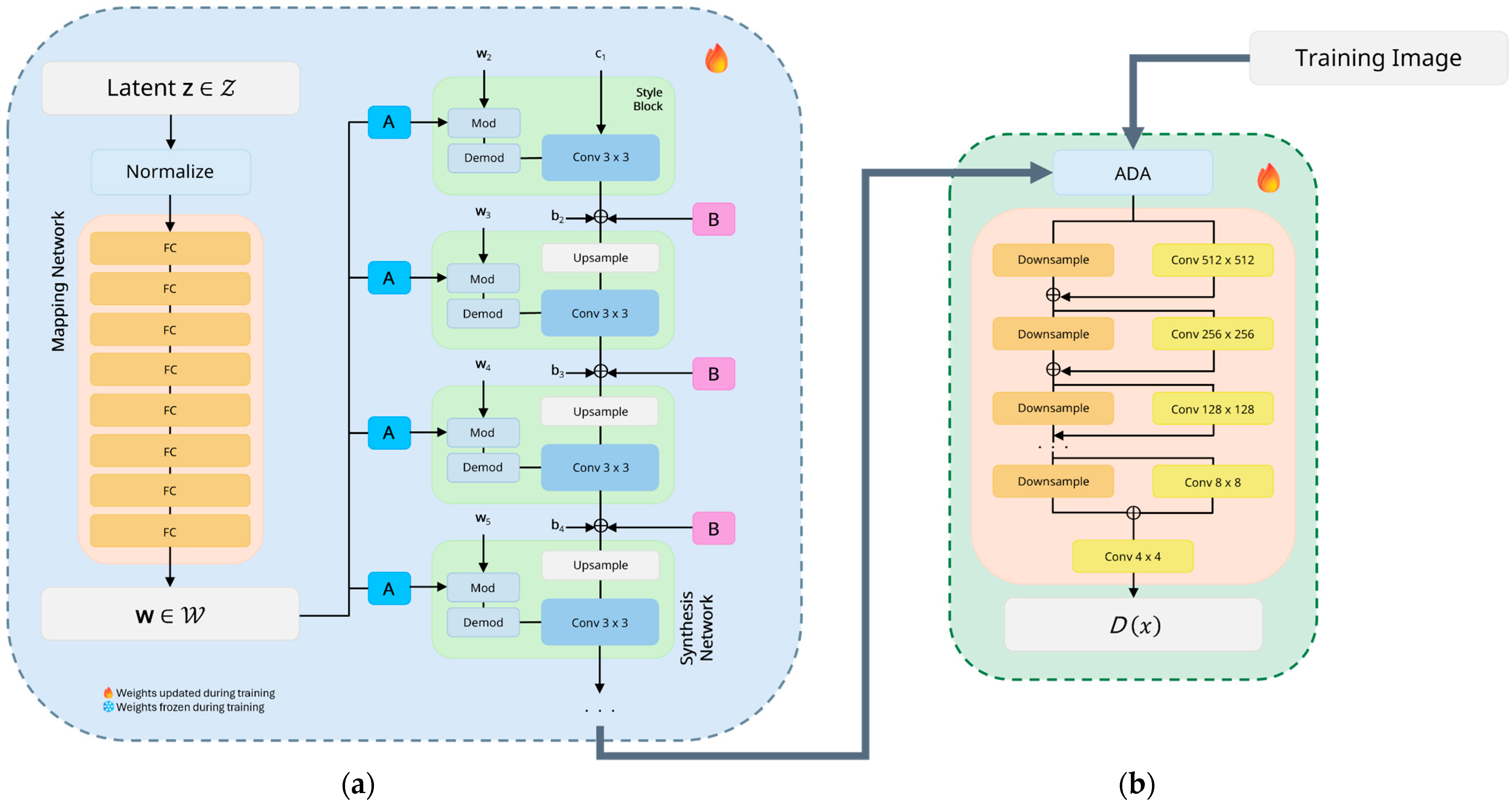

3.2.1. StyleGAN2-ADA Architecture Overview

3.2.2. StyleGAN2-ADA Training Configuration

3.2.3. Generation Fidelity Evaluation
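
FID [126] fits a Gaussian to Inception-v3 [127] features of real and generated images and reports the Fréchet distance between the two, FID = ‖μ_r − μ_g‖² + Tr(Σ_r + Σ_g − 2(Σ_r Σ_g)^(1/2)). The minimal NumPy sketch below computes only that distance and assumes the features have already been extracted into arrays of shape (n_samples, n_features). It is not the reference implementation, and absolute FID values also depend on the feature extractor, image resizing, and sample count [100].

```python
import numpy as np


def _sqrtm_psd(a: np.ndarray) -> np.ndarray:
    """Square root of a symmetric positive semi-definite matrix via eigendecomposition."""
    w, v = np.linalg.eigh(a)
    return (v * np.sqrt(np.clip(w, 0.0, None))) @ v.T


def frechet_distance(feats_real: np.ndarray, feats_gen: np.ndarray) -> float:
    """Fréchet distance between Gaussians fitted to two feature sets.

    feats_real, feats_gen: arrays of shape (n_samples, n_features), for example
    Inception-v3 pooled features. Computes
    ||mu_r - mu_g||^2 + Tr(S_r + S_g - 2 (S_r S_g)^(1/2)).
    """
    mu_r, mu_g = feats_real.mean(axis=0), feats_gen.mean(axis=0)
    s_r = np.atleast_2d(np.cov(feats_real, rowvar=False))
    s_g = np.atleast_2d(np.cov(feats_gen, rowvar=False))
    # Tr((S_r S_g)^(1/2)) equals the sum of square roots of the eigenvalues of the
    # symmetric PSD matrix S_r^(1/2) S_g S_r^(1/2); this sidesteps the complex
    # round-off that a general matrix square root of S_r S_g can produce.
    root_r = _sqrtm_psd(s_r)
    middle = root_r @ s_g @ root_r
    eig = np.linalg.eigvalsh((middle + middle.T) / 2.0)
    tr_covmean = np.sqrt(np.clip(eig, 0.0, None)).sum()
    diff = mu_r - mu_g
    return float(diff @ diff + np.trace(s_r) + np.trace(s_g) - 2.0 * tr_covmean)


rng = np.random.default_rng(0)
real = rng.normal(size=(500, 8))
print(frechet_distance(real, real + 1.0))  # about 8.0: a shift of 1 in each of 8 dimensions
```

Identical feature sets give a distance of essentially zero, and a constant shift of c in every dimension adds c² per dimension, which is a quick sanity check before plugging in real Inception features.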

3.2.4. Generation Diversity Evaluation

3.2.5. Domain Gap Evaluation

3.2.6. Automatic Annotation Using Grounding DINO
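
For a text prompt such as "crack", Grounding DINO [107] returns a set of boxes with confidence scores. Turning these into training labels requires a score cutoff and a conversion to the normalized `class x_center y_center width height` rows that Ultralytics YOLO expects [120]. The sketch below covers only that post-processing step; running the detector is not shown, and the 0.35 threshold, the single class id 0, and the helper name are assumptions rather than the settings used in this work.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


def to_yolo_lines(boxes: Sequence[Box], scores: Sequence[float], img_w: int, img_h: int,
                  score_threshold: float = 0.35, class_id: int = 0) -> List[str]:
    """Filter detections by score and format them as YOLO label rows.

    Each row is "class x_center y_center width height", normalized to [0, 1].
    The threshold and class id are illustrative defaults, not tuned values.
    """
    lines = []
    for (x0, y0, x1, y1), score in zip(boxes, scores):
        if score < score_threshold:
            continue  # discard low-confidence detections
        # Clip to the image before normalizing
        x0, x1 = max(0.0, x0), min(float(img_w), x1)
        y0, y1 = max(0.0, y0), min(float(img_h), y1)
        if x1 <= x0 or y1 <= y0:
            continue  # empty after clipping
        xc, yc = (x0 + x1) / 2.0 / img_w, (y0 + y1) / 2.0 / img_h
        w, h = (x1 - x0) / img_w, (y1 - y0) / img_h
        lines.append(f"{class_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}")
    return lines


# Two hypothetical detections for the prompt "crack" on a 640 x 480 image.
print(to_yolo_lines([(100, 200, 300, 260), (0, 0, 10, 10)], [0.62, 0.20], 640, 480))
# ['0 0.312500 0.479167 0.312500 0.125000']
```

Writing the returned rows, one per line, to a `.txt` file with the same stem as the image yields a label file in the layout described in the Ultralytics dataset documentation.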

3.3. Crack Detection Using YOLOv11

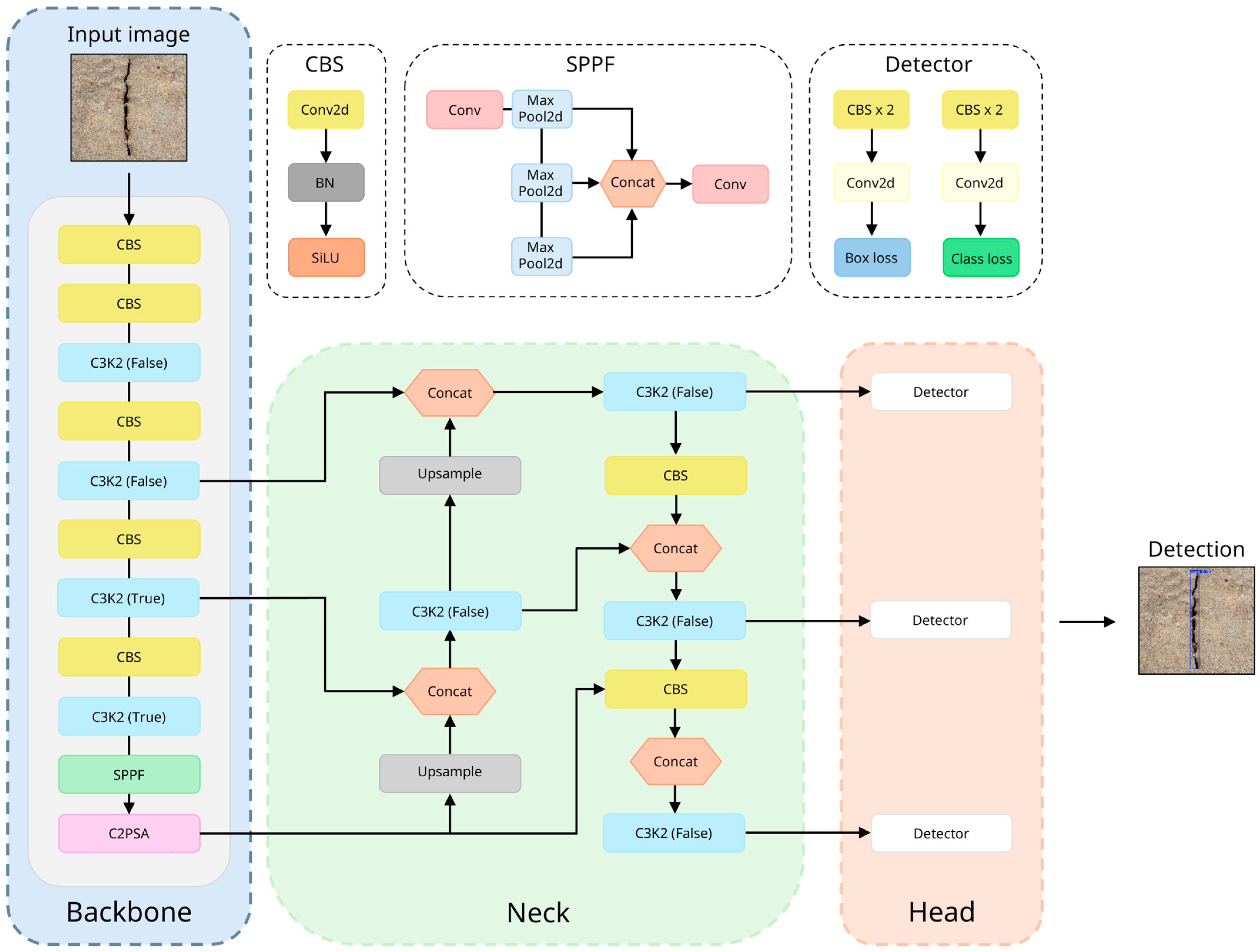

3.3.1. YOLOv11 Architecture Overview

3.3.2. YOLOv11 Training Configuration

3.3.3. Object Detection Performance Evaluation
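
AP@0.5 and AP@[0.5:0.95] are computed from ranked detections: predictions are sorted by confidence, greedily matched to not-yet-matched ground-truth boxes at a given IoU threshold, and precision is integrated over recall. The sketch below assumes a single class (crack), uses all-point interpolation, and averages ten thresholds from 0.50 to 0.95 for AP@[0.5:0.95]. COCO-style evaluators sample the precision-recall curve at 101 recall points, so their numbers can differ slightly, and the sketch is not claimed to reproduce the evaluator used in this work.

```python
from typing import Dict, List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(preds: Sequence[Tuple[str, float, Box]],
                      gts: Dict[str, List[Box]], iou_thr: float = 0.5) -> float:
    """Single-class AP with greedy matching and all-point interpolation.

    preds: (image_id, score, box) triples; gts: image_id -> ground-truth boxes.
    """
    n_gt = sum(len(v) for v in gts.values())
    if n_gt == 0:
        return 0.0
    matched = {img: [False] * len(boxes) for img, boxes in gts.items()}
    tp_flags = []
    for img, _, box in sorted(preds, key=lambda p: p[1], reverse=True):
        # Find the best-overlapping ground truth that is still unmatched
        best_iou, best_j = 0.0, -1
        for j, gt in enumerate(gts.get(img, [])):
            if not matched[img][j]:
                overlap = iou(box, gt)
                if overlap > best_iou:
                    best_iou, best_j = overlap, j
        if best_j >= 0 and best_iou >= iou_thr:
            matched[img][best_j] = True
            tp_flags.append(True)
        else:
            tp_flags.append(False)
    # Precision and recall after each detection, in descending score order
    recalls, precisions, tp = [], [], 0
    for k, is_tp in enumerate(tp_flags, start=1):
        tp += is_tp
        recalls.append(tp / n_gt)
        precisions.append(tp / k)
    # All-point interpolation: use the best precision at any equal or higher recall
    ap, prev_recall = 0.0, 0.0
    for i in range(len(precisions)):
        ap += (recalls[i] - prev_recall) * max(precisions[i:])
        prev_recall = recalls[i]
    return ap


def ap_50_95(preds: Sequence[Tuple[str, float, Box]], gts: Dict[str, List[Box]]) -> float:
    """Mean AP over IoU thresholds 0.50, 0.55, ..., 0.95."""
    thresholds = [round(0.5 + 0.05 * i, 2) for i in range(10)]
    return sum(average_precision(preds, gts, t) for t in thresholds) / len(thresholds)


gts = {"img1": [(0, 0, 10, 10), (20, 20, 30, 30)]}
preds = [("img1", 0.9, (0, 0, 10, 10)), ("img1", 0.8, (50, 50, 60, 60))]
print(average_precision(preds, gts), ap_50_95(preds, gts))  # 0.5 0.5
```

Because AP@[0.5:0.95] rewards tight localization at the stricter thresholds, a box with IoU 0.67 counts as correct at 0.50 through 0.65 but not above, which is why gains in AP@[0.5:0.95] can exceed those in AP@0.5.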

4. Results and Discussion

4.1. StyleGAN2-ADA Training and Image Generation Assessment

4.1.1. Training Dynamics

4.1.2. Generation Fidelity and Diversity Evaluation

4.1.3. Domain Gap Evaluation

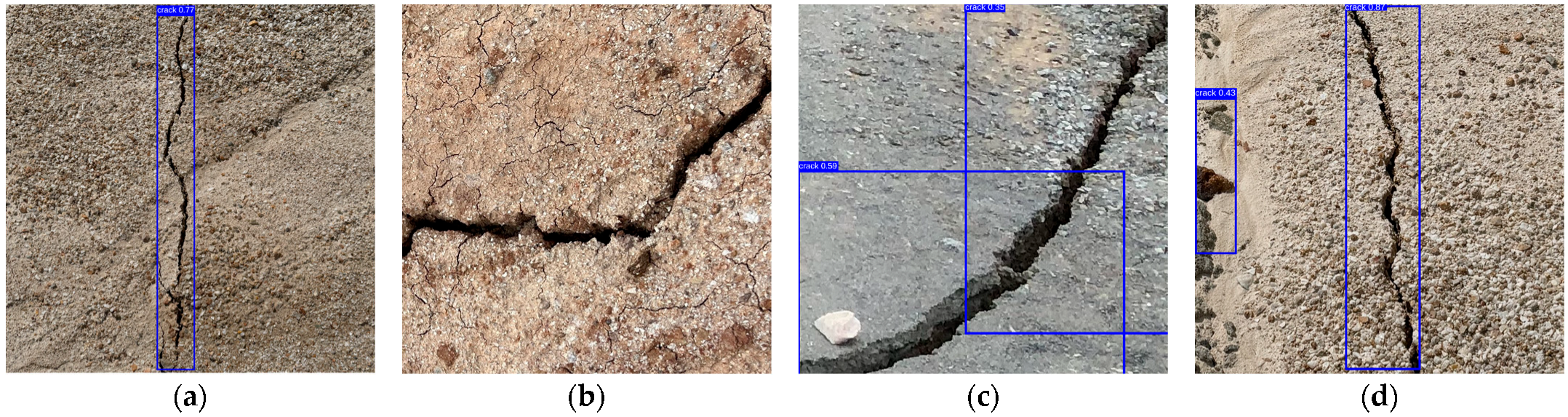

4.1.4. Annotation Reliability Assessment

4.2. YOLOv11 Training and Crack Detection Performance Evaluation

4.2.1. Training Dynamics

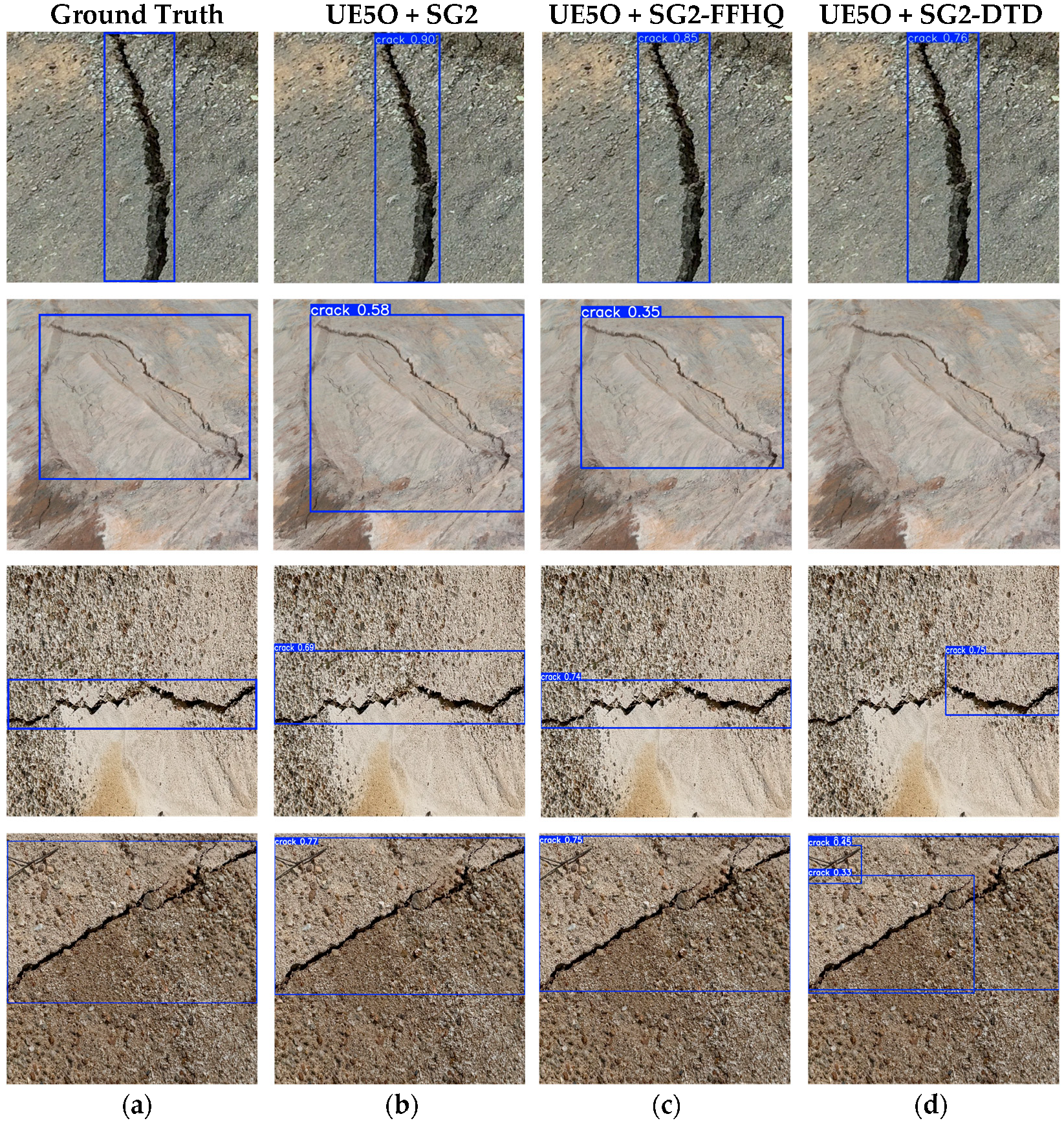

4.2.2. Real-World Performance Evaluation

4.3. Practical Deployment Considerations and Limitations

5. Conclusions and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ADA | Adaptive Discriminator Augmentation |
| AI | Artificial Intelligence |
| AP | Average Precision |
| BERT | Bidirectional Encoder Representations from Transformers |
| CBS | Convolutional Block with Batch normalization and SiLU |
| CIoU | Complete Intersection over Union |
| CNN | Convolutional Neural Network |
| COCO | Common Objects in Context |
| CPU | Central Processing Unit |
| CUDA | Compute Unified Device Architecture |
| CV | Computer Vision |
| DL | Deep Learning |
| DSLR | Digital Single-Lens Reflex |
| DTD | Describable Textures Dataset |
| FC | Fully Connected |
| FID | Fréchet Inception Distance |
| FN | False Negative |
| FOV | Field of View |
| FP | False Positive |
| GAN | Generative Adversarial Network |
| GPU | Graphics Processing Unit |
| GTA V | Grand Theft Auto V |
| HRSST | High Resolution Screenshot Tool |
| IoU | Intersection over Union |
| LPIPS | Learned Perceptual Image Patch Similarity |
| mAP | Mean Average Precision |
| MLP | Multilayer Perceptron |
| NDDS | NVIDIA Deep Learning Dataset Synthesizer |
| PBR | Physically Based Rendering |
| PCK | Percentage of Correct Keypoints |
| RAM | Random Access Memory |
| RT | Ray Tracing |
| RTGI | Real-Time Global Illumination |
| SG2 | StyleGAN2-ADA |
| SOTA | State of the Art |
| SPPF | Spatial Pyramid Pooling-Fast |
| TAA | Temporal Anti-Aliasing |
| TP | True Positive |
| t-SNE | t-Distributed Stochastic Neighbor Embedding |
| UAV | Unmanned Aerial Vehicle |
| UE | Unreal Engine |
| UE4 | Unreal Engine 4 |
| UE5 | Unreal Engine 5 |
| UE5O | Unreal Engine 5 only |
| VAE | Variational Autoencoder |
| VGG-16 | Visual Geometry Group-16 |
| ViT | Vision Transformer |
| VLM | Vision Language Model |

References

- Li, G.; Hu, Z.; Wang, D.; Wang, L.; Wang, Y.; Zhao, L.; Jia, H.; Fang, K. Instability Mechanisms of Slope in Open-Pit Coal Mines: From Physical and Numerical Modeling. Int. J. Min. Sci. Technol. 2024, 34, 1509–1528. [Google Scholar] [CrossRef]

- Kolapo, P.; Oniyide, G.O.; Said, K.O.; Lawal, A.I.; Onifade, M.; Munemo, P. An Overview of Slope Failure in Mining Operations. Mining 2022, 2, 350–384. [Google Scholar] [CrossRef]

- de Graaf, P.J.H.; Desjardins, M.; Tsheko, P.; Fourie, A.B.; Tibbett, M. Geotechnical Risk Management for Open Pit Mine Closure: A Sub-Arctic and Semi-Arid Case Study; Australian Centre for Geomechanics: Crawley, Australia, 2019; pp. 211–234. [Google Scholar]

- Zhang, N.; Wang, Y.; Zhao, F.; Wang, T.; Zhang, K.; Fan, H.; Zhou, D.; Zhang, L.; Yan, S.; Diao, X.; et al. Monitoring and Analysis of the Collapse at Xinjing Open-Pit Mine, Inner Mongolia, China, Using Multi-Source Remote Sensing. Remote Sens. 2024, 16, 993. [Google Scholar] [CrossRef]

- Lin, Y.N.; Park, E.; Wang, Y.; Quek, Y.P.; Lim, J.; Alcantara, E.; Loc, H.H. The 2020 Hpakant Jade Mine Disaster, Myanmar: A Multi-Sensor Investigation for Slope Failure. ISPRS J. Photogramm. Remote Sens. 2021, 177, 291–305. [Google Scholar] [CrossRef]

- Martin, C.D.; Stacey, P.F.; Dight, P.M. Pit Slopes in Weathered and Weak Rocks; Australian Centre for Geomechanics: Crawley, Australia, 2013; pp. 3–28. [Google Scholar]

- Zhong, Z.; Hu, B.; Li, J.; Sheng, J.; Wan, C. Impact of Rainfall Dry-Wet Cycles on Slope Deformation and Landslide Prediction in Open-Pit Mines: A Case Study of Mohuandang Landslide, Emeishan, China. Results Eng. 2025, 26, 105011. [Google Scholar] [CrossRef]

- Wang, W.; Griffiths, D. Case Study of Slope Failure during Construction of an Open Pit Mine in Indonesia. Can. Geotech. J. 2018, 56, 636–648. [Google Scholar] [CrossRef]

- Kong, K.W.K.; Dight, P.M. Blasting Vibration Assessment of Rock Slopes and a Case Study; Australian Centre for Geomechanics: Crawley, Australia, 2013; pp. 1335–1344. [Google Scholar]

- Wang, J.; Zhou, Z.; Chen, C.; Wang, H.; Chen, Z. Failure Mechanism and Stability Analysis of an Open-Pit Slope under Excavation Unloading Conditions. Front. Earth Sci. 2023, 11, 1109316. [Google Scholar] [CrossRef]

- Bridges, M.C.; Dight, P.M. An Extensional Mechanism of Instability and Failure in the Walls of Open Pit Mines; Australian Centre for Geomechanics: Crawley, Australia, 2013; pp. 137–150. [Google Scholar]

- Whittall, J.R.; McDougall, S.; Eberhardt, E. A Risk-Based Methodology for Establishing Landslide Exclusion Zones in Operating Open Pit Mines. Int. J. Rock Mech. Min. Sci. 2017, 100, 100–107. [Google Scholar] [CrossRef]

- McQuillan, A.; Canbulat, I.; Oh, J. Methods Applied in Australian Industry to Evaluate Coal Mine Slope Stability. Int. J. Min. Sci. Technol. 2020, 30, 151–155. [Google Scholar] [CrossRef]

- Vaziri, A.; Moore, L.; Ali, H. Monitoring Systems for Warning Impending Failures in Slopes and Open Pit Mines. Nat. Hazards 2010, 55, 501–512. [Google Scholar] [CrossRef]

- Mohammed, M.M. A Review On Slope Monitoring And Application Methods In Open Pit Mining Activities. Int. J. Min. Sci. Technol. Res. 2021, 10, 181–186. [Google Scholar]

- Ching, J.; Phoon, K.-K. Value of Geotechnical Site Investigation in Reliability-Based Design Advances in Structural Engineering. Adv. Struct. Eng. 2012, 15, 1935–1945. [Google Scholar] [CrossRef]

- Zumrawi, M. Effects of Inadequate Geotechnical Investigations on Civil Engineering projects. Int. J. Sci. Res. IJSR 2014, 3, 927–931. [Google Scholar]

- Crisp, M.P.; Jaksa, M.; Kuo, Y. Optimal Testing Locations in Geotechnical Site Investigations through the Application of a Genetic Algorithm. Geosciences 2020, 10, 265. [Google Scholar] [CrossRef]

- Le Roux, R.; Sepehri, M.; Khaksar, S.; Murray, I. Slope Stability Monitoring Methods and Technologies for Open-Pit Mining: A Systematic Review. Mining 2025, 5, 32. [Google Scholar] [CrossRef]

- Alzubaidi, L.; Zhang, J.; Humaidi, A.J.; Al-Dujaili, A.; Duan, Y.; Al-Shamma, O.; Santamaría, J.; Fadhel, M.A.; Al-Amidie, M.; Farhan, L. Review of Deep Learning: Concepts, CNN Architectures, Challenges, Applications, Future Directions. J. Big Data 2021, 8, 53. [Google Scholar] [CrossRef]

- Matsuzaka, Y.; Yashiro, R. AI-Based Computer Vision Techniques and Expert Systems. AI 2023, 4, 289–302. [Google Scholar] [CrossRef]

- Kalluri, P.R.; Agnew, W.; Cheng, M.; Owens, K.; Soldaini, L.; Birhane, A. Computer-Vision Research Powers Surveillance Technology. Nature 2025, 643, 73–79. [Google Scholar] [CrossRef] [PubMed]

- Chimakurthi, V.N.S.S. Application of Convolution Neural Network for Digital Image Processing. Eng. Int. 2020, 8, 149–158. [Google Scholar] [CrossRef]

- Kameswari, C.; J, K.; Reddy, T.; Chinthaguntla, B.; Jagatheesaperumal, S.; Gaftandzhieva, S.; Doneva, R. An Overview of Vision Transformers for Image Processing: A Survey. Int. J. Adv. Comput. Sci. Appl. 2023, 14, 273–289. [Google Scholar] [CrossRef]

- Fawole, O.A.; Rawat, D.B. Recent Advances in 3D Object Detection for Self-Driving Vehicles: A Survey. AI 2024, 5, 1255–1285. [Google Scholar] [CrossRef]

- Bratulescu, R.-A.; Vatasoiu, R.-I.; Sucic, G.; Mitroi, S.-A.; Vochin, M.-C.; Sachian, M.-A. Object Detection in Autonomous Vehicles. In Proceedings of the 2022 25th International Symposium on Wireless Personal Multimedia Communications (WPMC), Herning, Denmark, 30 October–2 November 2022; pp. 375–380. [Google Scholar]

- Albuquerque, C.; Henriques, R.; Castelli, M. Deep Learning-Based Object Detection Algorithms in Medical Imaging: Systematic Review. Heliyon 2025, 11, e41137. [Google Scholar] [CrossRef]

- Saraei, M.; Lalinia, M.; Lee, E.-J. Deep Learning-Based Medical Object Detection: A Survey. IEEE Access 2025, 13, 53019–53038. [Google Scholar] [CrossRef]

- Malburg, L.; Rieder, M.-P.; Seiger, R.; Klein, P.; Bergmann, R. Object Detection for Smart Factory Processes by Machine Learning. Procedia Comput. Sci. 2021, 184, 581–588. [Google Scholar] [CrossRef]

- Fatima, Z.; Zardari, S.; Tanveer, M.H. Advancing Industrial Object Detection Through Domain Adaptation: A Solution for Industry 5.0. Actuators 2024, 13, 513. [Google Scholar] [CrossRef]

- Di Mucci, V.M.; Cardellicchio, A.; Ruggieri, S.; Nettis, A.; Renò, V.; Uva, G. Artificial Intelligence in Structural Health Management of Existing Bridges. Autom. Constr. 2024, 167, 105719. [Google Scholar] [CrossRef]

- Plevris, V.; Papazafeiropoulos, G. AI in Structural Health Monitoring for Infrastructure Maintenance and Safety. Infrastructures 2024, 9, 225. [Google Scholar] [CrossRef]

- Lee, J.; Lee, S. Construction Site Safety Management: A Computer Vision and Deep Learning Approach. Sensors 2023, 23, 944. [Google Scholar] [CrossRef]

- Rabbi, A.B.K.; Jeelani, I. AI Integration in Construction Safety: Current State, Challenges, and Future Opportunities in Text, Vision, and Audio Based Applications. Autom. Constr. 2024, 164, 105443. [Google Scholar] [CrossRef]

- Yan, Y.; Zhang, Y.; Zhou, L.; Zhang, F.; Wang, H. Acoustic Signals-Based Probabilistic Fault Diagnosis for Expansion Joints of Small and Medium Bridges Using Bayesian Ensemble Learning. Eng. Struct. 2026, 354, 122379. [Google Scholar] [CrossRef]

- Liao, R.; Zhang, Y.; Wang, H.; Zhao, T.; Wang, X. Multi-Objective Optimisation of Surveillance Camera Placement for Bridge–Ship Collision Early-Warning Using an Improved Non-Dominated Sorting Genetic Algorithm. Adv. Eng. Inform. 2026, 69, 103918. [Google Scholar] [CrossRef]

- Khalife, S.; Emadi, S.; Wilner, D.; Hamzeh, F. Developing Project Value Attributes: A Proposed Process for Value Delivery on Construction Projects. In Proceedings of the IGLC 30—International Group for Lean Construction Conference, Edmonton, AB, Canada, 25–29 July 2022; pp. 913–924. [Google Scholar]

- Demirel, Z.; Nasraldeen, S.T.; Pehlivan, Ö.; Shoman, S.; Albdairi, M.; Almusawi, A. Comparative Evaluation of YOLO and Gemini AI Models for Road Damage Detection and Mapping. Future Transp. 2025, 5, 91. [Google Scholar] [CrossRef]

- Zhao, M.; Wang, S.; Guo, B.; Gu, W. Review of Crack Depth Detection Technology for Engineering Structures: From Physical Principles to Artificial Intelligence. Appl. Sci. 2025, 15, 9120. [Google Scholar] [CrossRef]

- Ruan, S.; Hu, Y.; Liu, J.; Wang, J. An Advanced Crack Detection Method for Slope Management in Open-Pit Mines: Applying Enhanced YOLOv8 Network. Int. J. Min. Reclam. Environ. 2026, 40, 70–87. [Google Scholar] [CrossRef]

- An, J.; Dong, S.; Wang, X.; Li, C.; Zhao, W. Research on UAV Aerial Imagery Detection Algorithm for Mining-Induced Surface Cracks Based on Improved YOLOv10. Sci. Rep. 2025, 15, 30101. [Google Scholar] [CrossRef] [PubMed]

- Ruan, S.; Liu, D.; Gu, Q.; Jing, Y. An Intelligent Detection Method for Open-Pit Slope Fracture Based on the Improved Mask R-CNN. J. Min. Sci. 2022, 58, 503–518. [Google Scholar] [CrossRef]

- Letshwiti, T.M.; Shahsavar, M.; Moniri-Morad, A.; Sattarvand, J. Deep Learning-Based Image Segmentation for Highwall Stability Monitoring in Open Pit Mines. J. Eng. Res. 2025, 13, 3595–3608. [Google Scholar] [CrossRef]

- Wang, K.; Wei, B.; Zhao, T.; Wu, G.; Zhang, J.; Zhu, L.; Wang, L. An Automated Approach for Mapping Mining-Induced Fissures Using CNNs and UAS Photogrammetry. Remote Sens. 2024, 16, 2090. [Google Scholar] [CrossRef]

- Winkelmaier, G.; Battulwar, R.; Khoshdeli, M.; Valencia, J.; Sattarvand, J.; Parvin, B. Topographically Guided UAV for Identifying Tension Cracks Using Image-Based Analytics in Open-Pit Mines. IEEE Trans. Ind. Electron. 2021, 68, 5415–5424. [Google Scholar] [CrossRef]

- Bansal, M.A.; Sharma, D.R.; Kathuria, D.M. A Systematic Review on Data Scarcity Problem in Deep Learning: Solution and Applications. ACM Comput. Surv. 2022, 54, 208:1–208:29. [Google Scholar] [CrossRef]

- Wang, J.; Lan, C.; Liu, C.; Ouyang, Y.; Qin, T.; Lu, W.; Chen, Y.; Zeng, W.; Yu, P.S. Generalizing to Unseen Domains: A Survey on Domain Generalization. arXiv 2022, arXiv:2103.03097. [Google Scholar] [CrossRef]

- Alzubaidi, L.; Bai, J.; Al-Sabaawi, A.; Santamaría, J.; Albahri, A.S.; Al-dabbagh, B.S.N.; Fadhel, M.A.; Manoufali, M.; Zhang, J.; Al-Timemy, A.H.; et al. A Survey on Deep Learning Tools Dealing with Data Scarcity: Definitions, Challenges, Solutions, Tips, and Applications. J. Big Data 2023, 10, 46. [Google Scholar] [CrossRef]

- Harle, S.M.; Wankhade, R.L. Machine Learning Techniques for Predictive Modelling in Geotechnical Engineering: A Succinct Review. Discov. Civ. Eng. 2025, 2, 86. [Google Scholar] [CrossRef]

- Ramasamy, D.; Sivamani, S. The Future of Geotechnical Engineering Through Deep Learning: A Concise Literature Review. J. Inf. Syst. Eng. Manag. 2025, 10, 685–694. [Google Scholar] [CrossRef]

- Yamani, A.; AlAmoudi, N.; Albilali, S.; Baslyman, M.; Hassine, J. Data Requirement Goal Modeling for Machine Learning Systems. arXiv 2025, arXiv:2504.07664. [Google Scholar] [CrossRef]

- Taye, M.M. Understanding of Machine Learning with Deep Learning: Architectures, Workflow, Applications and Future Directions. Computers 2023, 12, 91. [Google Scholar] [CrossRef]

- Hutchinson, M.L.; Antono, E.; Gibbons, B.M.; Paradiso, S.; Ling, J.; Meredig, B. Overcoming Data Scarcity with Transfer Learning. arXiv 2017, arXiv:1711.05099. [Google Scholar] [CrossRef]

- Wang, Z.; Wang, P.; Liu, K.; Wang, P.; Fu, Y.; Lu, C.-T.; Aggarwal, C.C.; Pei, J.; Zhou, Y. A Comprehensive Survey on Data Augmentation. IEEE Trans. Knowl. Data Eng. 2026, 38, 47–66. [Google Scholar] [CrossRef]

- Zhao, Z.; Alzubaidi, L.; Zhang, J.; Duan, Y.; Gu, Y. A Comparison Review of Transfer Learning and Self-Supervised Learning: Definitions, Applications, Advantages and Limitations. Expert Syst. Appl. 2024, 242, 122807. [Google Scholar] [CrossRef]

- Brodzicki, A.; Piekarski, M.; Kucharski, D.; Jaworek-Korjakowska, J.; Gorgon, M. Transfer Learning Methods as a New Approach in Computer Vision Tasks with Small Datasets. Found. Comput. Decis. Sci. 2020, 45, 179–193. [Google Scholar] [CrossRef]

- Kumar, T.; Mileo, A.; Brennan, R.; Bendechache, M. Image Data Augmentation Approaches: A Comprehensive Survey and Future Directions. IEEE Access 2024, 12, 187536–187571. [Google Scholar] [CrossRef]

- Mumuni, A.; Mumuni, F. Data Augmentation: A Comprehensive Survey of Modern Approaches. Array 2022, 16, 100258. [Google Scholar] [CrossRef]

- Li, M.; Chen, H.; Wang, Y.; Zhu, T.; Zhang, W.; Zhu, K.; Wong, K.-F.; Wang, J. Understanding and Mitigating the Bias Inheritance in LLM-Based Data Augmentation on Downstream Tasks. arXiv 2025, arXiv:2502.04419. [Google Scholar] [CrossRef]

- Nikolenko, S. Synthetic Data for Deep Learning; Springer: Cham, Switzerland, 2021; ISBN 978-3-030-75177-7. [Google Scholar]

- Unreal Engine 5. Available online: https://www.unrealengine.com/en-US/unreal-engine-5 (accessed on 15 September 2025).

- Unity Real-Time Development Platform|3D, 2D, VR & AR Engine. Available online: https://unity.com (accessed on 15 September 2025).

- Li, Y.; Dong, X.; Chen, C.; Li, J.; Wen, Y.; Spranger, M.; Lyu, L. Is Synthetic Image Useful for Transfer Learning? An Investigation into Data Generation, Volume, and Utilization. arXiv 2024, arXiv:2403.19866. [Google Scholar] [CrossRef]

- Nanite Virtualized Geometry in Unreal Engine|Unreal Engine 5.6 Documentation|Epic Developer Community. Available online: https://dev.epicgames.com/documentation/en-us/unreal-engine/nanite-virtualized-geometry-in-unreal-engine (accessed on 16 September 2025).

- Lumen Global Illumination and Reflections in Unreal Engine|Unreal Engine 5.6 Documentation|Epic Developer Community. Available online: https://dev.epicgames.com/documentation/en-us/unreal-engine/lumen-global-illumination-and-reflections-in-unreal-engine (accessed on 16 September 2025).

- Ulhas, S.S.; Kannapiran, S.; Berman, S. GAN-Based Domain Adaptation for Creating Digital Twins of Small-Scale Driving Testbeds: Opportunities and Challenges. In Proceedings of the 2024 IEEE Intelligent Vehicles Symposium (IV), Jeju Island, Republic of Korea, 2–5 June 2024; pp. 137–143. [Google Scholar]

- Goodfellow, I.J.; Pouget-Abadie, J.; Mirza, M.; Xu, B.; Warde-Farley, D.; Ozair, S.; Courville, A.; Bengio, Y. Generative Adversarial Networks. arXiv 2014, arXiv:1406.2661. [Google Scholar] [CrossRef]

- Karras, T.; Laine, S.; Aila, T. A Style-Based Generator Architecture for Generative Adversarial Networks. arXiv 2019, arXiv:1812.04948. [Google Scholar] [CrossRef]

- Karras, T.; Aittala, M.; Hellsten, J.; Laine, S.; Lehtinen, J.; Aila, T. Training Generative Adversarial Networks with Limited Data. arXiv 2020, arXiv:2006.06676. [Google Scholar] [CrossRef]

- Bandi, A.; Adapa, P.V.S.R.; Kuchi, Y.E.V.P.K. The Power of Generative AI: A Review of Requirements, Models, Input–Output Formats, Evaluation Metrics, and Challenges. Future Internet 2023, 15, 260. [Google Scholar] [CrossRef]

- Werda, M.S.; Taibi, H.; Kouiss, K.; Chebak, A.; Ben Halima, S.; Decottignies, M.; Dilliott, C. Towards Minimizing Domain Gap When Using Synthetic Data in Automotive Vision Control Applications. IFAC-Pap. 2024, 58, 522–527. [Google Scholar] [CrossRef]

- Tariq, U.; Qureshi, R.; Zafar, A.; Aftab, D.; Wu, J.; Alam, T.; Shah, Z.; Ali, H. Brain Tumor Synthetic Data Generation with Adaptive StyleGANs. In Artificial Intelligence and Cognitive Science; Longo, L., O’Reilly, R., Eds.; Springer Nature: Cham, Switzerland, 2023; pp. 147–159. [Google Scholar]

- Yang, S.; Kim, K.-D.; Ariji, E.; Takata, N.; Kise, Y. Evaluating the Performance of Generative Adversarial Network-Synthesized Periapical Images in Classifying C-Shaped Root Canals. Sci. Rep. 2023, 13, 18038. [Google Scholar] [CrossRef] [PubMed]

- Barrientos-Espillco, F.; Gascó, E.; López-González, C.I.; Gómez-Silva, M.J.; Pajares, G. Semantic Segmentation Based on Deep Learning for the Detection of Cyanobacterial Harmful Algal Blooms (CyanoHABs) Using Synthetic Images. Appl. Soft Comput. 2023, 141, 110315. [Google Scholar] [CrossRef]

- Achicanoy, H.; Chaves, D.; Trujillo, M. StyleGANs and Transfer Learning for Generating Synthetic Images in Industrial Applications. Symmetry 2021, 13, 1497. [Google Scholar] [CrossRef]

- Park, G.; Lee, Y. Wildfire Smoke Detection Enhanced by Image Augmentation with StyleGAN2-ADA for YOLOv8 and RT-DETR Models. Fire 2024, 7, 369. [Google Scholar] [CrossRef]

- Man, K.; Chahl, J. A Review of Synthetic Image Data and Its Use in Computer Vision. J. Imaging 2022, 8, 310. [Google Scholar] [CrossRef]

- Half-Life 2 on Steam. Available online: https://store.steampowered.com/app/220/HalfLife_2/ (accessed on 9 October 2025).

- Taylor, G.R.; Chosak, A.J.; Brewer, P.C. OVVV: Using Virtual Worlds to Design and Evaluate Surveillance Systems. In Proceedings of the 2007 IEEE Conference on Computer Vision and Pattern Recognition, Minneapolis, MN, USA, 17–22 June 2007; pp. 1–8. [Google Scholar]

- Richter, S.R.; Vineet, V.; Roth, S.; Koltun, V. Playing for Data: Ground Truth from Computer Games. arXiv 2016, arXiv:1608.02192. [Google Scholar] [CrossRef]

- Lee, H.; Jeon, J.; Lee, D.; Park, C.; Kim, J.; Lee, D. Game Engine-Driven Synthetic Data Generation for Computer Vision-Based Safety Monitoring of Construction Workers. Autom. Constr. 2023, 155, 105060. [Google Scholar] [CrossRef]

- Rasmussen, I.; Kvalsvik, S.; Andersen, P.-A.; Aune, T.N.; Hagen, D. Development of a Novel Object Detection System Based on Synthetic Data Generated from Unreal Game Engine. Appl. Sci. 2022, 12, 8534. [Google Scholar] [CrossRef]

- Turkcan, M.K.; Li, Y.; Zang, C.; Ghaderi, J.; Zussman, G.; Kostic, Z. Boundless: Generating Photorealistic Synthetic Data for Object Detection in Urban Streetscapes. arXiv 2024, arXiv:2409.03022. [Google Scholar] [CrossRef]

- Hwang, H.; Adhikari, K.; Shodhaka, S.; Kim, D. Synthetic Data Augmentation for Robotic Mobility Aids to Support Blind and Low Vision People. In Robot Intelligence Technology and Applications 9; Park, D., Liu, C., Lee, D.-Y., Kim, M.J., Eds.; Springer Nature: Cham, Switzerland, 2025; pp. 92–102. [Google Scholar]

- Cauli, N.; Reforgiato Recupero, D. Synthetic Data Augmentation for Video Action Classification Using Unity. IEEE Access 2024, 12, 156172–156183. [Google Scholar] [CrossRef]

- Borkman, S.; Crespi, A.; Dhakad, S.; Ganguly, S.; Hogins, J.; Jhang, Y.-C.; Kamalzadeh, M.; Li, B.; Leal, S.; Parisi, P.; et al. Unity Perception: Generate Synthetic Data for Computer Vision. arXiv 2021, arXiv:2107.04259. [Google Scholar] [CrossRef]

- Naidoo, J.; Bates, N.; Gee, T.; Nejati, M. Pallet Detection from Synthetic Data Using Game Engines. arXiv 2023, arXiv:2304.03602. [Google Scholar] [CrossRef]

- Angus, M.; ElBalkini, M.; Khan, S.; Harakeh, A.; Andrienko, O.; Reading, C.; Waslander, S.; Czarnecki, K. Unlimited Road-Scene Synthetic Annotation (URSA) Dataset. In Proceedings of the 2018 21st International Conference on Intelligent Transportation Systems (ITSC), Maui, HI, USA, 4–7 November 2018; pp. 985–992. [Google Scholar]

- Shooter, M.; Malleson, C.; Hilton, A. SyDog: A Synthetic Dog Dataset for Improved 2D Pose Estimation. arXiv 2021, arXiv:2108.00249. [Google Scholar] [CrossRef]

- Lee, J.G.; Hwang, J.; Chi, S.; Seo, J. Synthetic Image Dataset Development for Vision-Based Construction Equipment Detection. J. Comput. Civ. Eng. 2022, 36, 04022020. [Google Scholar] [CrossRef]

- Natarajan, S.A.; Madden, M.G. Hybrid Synthetic Data Generation Pipeline That Outperforms Real Data. J. Electron. Imaging 2023, 32, 023011. [Google Scholar] [CrossRef]

- NVIDIA Corporation. NVIDIA Deep Learning Dataset Synthesizer (NDDS). Available online: https://github.com/NVIDIA/Dataset_Synthesizer (accessed on 10 September 2025).

- Perception Package|Perception Package|1.0.0-Preview.1. Available online: https://docs.unity3d.com/Packages/com.unity.perception@1.0/manual/index.html (accessed on 9 October 2025).

- Games, R. Grand Theft Auto V. Available online: https://www.rockstargames.com/gta-v (accessed on 10 October 2025).

- Sengar, S.S.; Hasan, A.B.; Kumar, S.; Carroll, F. Generative Artificial Intelligence: A Systematic Review and Applications. arXiv 2024, arXiv:2405.11029. [Google Scholar] [CrossRef]

- Deijn, R.d.; Batra, A.; Koch, B.; Mansoor, N.; Makkena, H. Reviewing FID and SID Metrics on Generative Adversarial Networks. In Proceedings of the AI, Machine Learning and Applications, Copenhagen, Denmark, 27 January 2024; pp. 111–124. [Google Scholar]

- Wang, R.; Chen, X.; Wang, X.; Wang, H.; Qian, C.; Yao, L.; Zhang, K. A Novel Approach for Melanoma Detection Utilizing GAN Synthesis and Vision Transformer. Comput. Biol. Med. 2024, 176, 108572. [Google Scholar] [CrossRef] [PubMed]

- Lai, M.; Marzi, C.; Mascalchi, M.; Diciotti, S. Brain MRI Synthesis Using Stylegan2-ADA. In Proceedings of the 2024 IEEE International Symposium on Biomedical Imaging (ISBI), Athens, Greece, 27–30 May 2024; pp. 1–5. [Google Scholar]

- Gonçalves, B.; Vieira, P.; Vieira, A. Abdominal MRI Synthesis Using StyleGAN2-ADA. In Proceedings of the 2023 IST-Africa Conference (IST-Africa), Tshwane, South Africa, 2 June–31 May 2023; pp. 1–9. [Google Scholar]

- Chong, M.J.; Forsyth, D. Effectively Unbiased FID and Inception Score and Where to Find Them. In 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: New York, NY, USA, 2020; pp. 6070–6079. [Google Scholar]

- Jayasumana, S.; Ramalingam, S.; Veit, A.; Glasner, D.; Chakrabarti, A.; Kumar, S. Rethinking FID: Towards a Better Evaluation Metric for Image Generation. In 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: New York, NY, USA, 2024; pp. 9307–9315. [Google Scholar]

- Fedoruk, O.; Klimaszewski, K.; Ogonowski, A.; Możdżonek, R. Performance of GAN-Based Augmentation for Deep Learning COVID-19 Image Classification. AIP Conf. Proc. 2024, 3061, 030001. [Google Scholar] [CrossRef]

- Ferreira, I.; Ochoa, L.; Koeshidayatullah, A. On the Generation of Realistic Synthetic Petrographic Datasets Using a Style-Based GAN. Sci. Rep. 2022, 12, 12845. [Google Scholar] [CrossRef] [PubMed]

- Feng, X.; Du, J.; Wu, M.; Chai, B.; Miao, F.; Wang, Y. Potential of Synthetic Images in Landslide Segmentation in Data-Poor Scenario: A Framework Combining GAN and Transformer Models. Landslides 2024, 21, 2211–2226. [Google Scholar] [CrossRef]

- Ghosh, R.; Yamany, M.S.; Smadi, O. Generation of Synthetic Dataset to Improve Deep Learning Models for Pavement Distress Assessment. Innov. Infrastruct. Solut. 2025, 10, 41. [Google Scholar] [CrossRef]

- Karras, T.; Laine, S.; Aila, T. Flickr-Faces-HQ Dataset (FFHQ). Available online: https://github.com/NVlabs/ffhq-dataset (accessed on 10 September 2025).

- Liu, S.; Zeng, Z.; Ren, T.; Li, F.; Zhang, H.; Yang, J.; Jiang, Q.; Li, C.; Yang, J.; Su, H.; et al. Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection. arXiv 2024, arXiv:2303.05499. [Google Scholar]

- Creating Landscapes in Unreal Engine|Unreal Engine 5.7 Documentation|Epic Developer Community. Available online: https://dev.epicgames.com/documentation/en-us/unreal-engine/creating-landscapes-in-unreal-engine (accessed on 7 January 2026).

- Tremblay, J.; Prakash, A.; Acuna, D.; Brophy, M.; Jampani, V.; Anil, C.; To, T.; Cameracci, E.; Boochoon, S.; Birchfield, S. Training Deep Networks with Synthetic Data: Bridging the Reality Gap by Domain Randomization. arXiv 2018, arXiv:1804.06516. [Google Scholar] [CrossRef]

- Tobin, J.; Fong, R.; Ray, A.; Schneider, J.; Zaremba, W.; Abbeel, P. Domain Randomization for Transferring Deep Neural Networks from Simulation to the Real World. arXiv 2017, arXiv:1703.06907. [Google Scholar] [CrossRef]

- Viscarra Rossel, R.A.; Bui, E.N.; de Caritat, P.; McKenzie, N.J. Mapping Iron Oxides and the Color of Australian Soil Using Visible–near-Infrared Reflectance Spectra. J. Geophys. Res. Earth Surf. 2010, 115. [Google Scholar] [CrossRef]

- van Vreeswyk, A.M.E.; Leighton, K.A.; Payne, A.L.; Hennig, P. An Inventory and Condition Survey of the Pilbara Region, Western Australia; Technical Bulletin 92; Department of Agriculture, Western Australia: Perth, Australia, 2004. [Google Scholar]

- Quixel. Available online: https://quixel.com/megascans (accessed on 8 January 2026).

- Xiao, Y.; Deng, H.; Li, J.; Zhou, M.; Assefa, E.; Chen, X. A Quantitative Method for the Determination of Rock Fragmentation Based on Crack Density and Crack Saturation. Sci. Rep. 2023, 13, 11747. [Google Scholar] [CrossRef]

- Research on Deformation Characteristics and Mechanisms of an Open Pit Coal Mine Landslide Event in Extremely Cold Region|Scientific Reports. Available online: https://www.nature.com/articles/s41598-025-27509-5 (accessed on 29 March 2026).

- Wang, X.; Wang, Y.; Wang, Y.; Chan, T.O. A Fast and Reliable Crack Measurement Approach Based on Perspective Projection Simulation Models and UAV Imaging for Dam and Levee Inspections. Surv. Rev. 2025, 58, 134–145. [Google Scholar] [CrossRef]

- Cine Camera Actor. Unreal Engine 4.27 Documentation. Epic Developer Community. Available online: https://dev.epicgames.com/documentation/en-us/unreal-engine/cinematic-cameras-in-unreal-engine (accessed on 7 January 2026).

- Taking Screenshots in Unreal Engine. Unreal Engine 5.7 Documentation. Epic Developer Community. Available online: https://dev.epicgames.com/documentation/en-us/unreal-engine/taking-screenshots-in-unreal-engine (accessed on 9 January 2026).

- Epic Games. FPerspectiveMatrix. Available online: https://dev.epicgames.com/documentation/en-us/unreal-engine/API/Runtime/Core/Math/FPerspectiveMatrix (accessed on 13 January 2026).

- Ultralytics. Object Detection Datasets Overview. Available online: https://docs.ultralytics.com/datasets/detect/ (accessed on 13 January 2026).

- Synthetic Scientific Image Generation with VAE, GAN, and Diffusion Model Architectures. Available online: https://www.mdpi.com/2313-433X/11/8/252 (accessed on 28 November 2025).

- Describable Textures Dataset. Available online: https://www.robots.ox.ac.uk/~vgg/data/dtd/ (accessed on 29 October 2025).

- Pinkney, J. Awesome Pretrained StyleGAN2. Available online: https://github.com/justinpinkney/awesome-pretrained-stylegan2 (accessed on 10 September 2025).

- Karras, T.; Aittala, M.; Laine, S.; Härkönen, E.; Hellsten, J.; Lehtinen, J.; Aila, T. StyleGAN3: Official PyTorch Implementation of Alias-Free Generative Adversarial Networks. Available online: https://github.com/NVlabs/stylegan3 (accessed on 10 September 2025).

- Karras, T.; Aittala, M.; Hellsten, J.; Laine, S.; Lehtinen, J.; Aila, T. StyleGAN2-ADA: Official PyTorch Implementation. Available online: https://github.com/NVlabs/stylegan2-ada-pytorch (accessed on 10 September 2025).

- Heusel, M.; Ramsauer, H.; Unterthiner, T.; Nessler, B.; Hochreiter, S. GANs Trained by a Two Time-Scale Update Rule Converge to a Local Nash Equilibrium. arXiv 2018, arXiv:1706.08500.

- Szegedy, C.; Vanhoucke, V.; Ioffe, S.; Shlens, J.; Wojna, Z. Rethinking the Inception Architecture for Computer Vision. arXiv 2015, arXiv:1512.00567.

- Zhang, R.; Isola, P.; Efros, A.A.; Shechtman, E.; Wang, O. The Unreasonable Effectiveness of Deep Features as a Perceptual Metric. arXiv 2018, arXiv:1801.03924.

- Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition. arXiv 2015, arXiv:1409.1556.

- Chicco, D.; Sichenze, A.; Jurman, G. A Simple Guide to the Use of Student’s t-Test, Mann-Whitney U Test, Chi-Squared Test, and Kruskal-Wallis Test in Biostatistics. BioData Min. 2025, 18, 56.

- Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning Transferable Visual Models From Natural Language Supervision. arXiv 2021, arXiv:2103.00020.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

- Bińkowski, M.; Sutherland, D.J.; Arbel, M.; Gretton, A. Demystifying MMD GANs. arXiv 2021, arXiv:1801.01401.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical Vision Transformer Using Shifted Windows. arXiv 2021, arXiv:2103.14030.

- Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. BERT: Pre-Training of Deep Bidirectional Transformers for Language Understanding. arXiv 2019, arXiv:1810.04805.

- Ultralytics. Ultralytics YOLO11. Available online: https://docs.ultralytics.com/models/yolo11/ (accessed on 20 January 2026).

- Nash, J. Non-Cooperative Games. Ann. Math. 1951, 54, 286–295.

- Liu, H.; Li, X.; Wang, L.; Zhang, Y.; Wang, Z.; Lu, Q. MS-YOLOv11: A Wavelet-Enhanced Multi-Scale Network for Small Object Detection in Remote Sensing Images. Sensors 2025, 25, 6008.

- Mu, D.; Guou, Y.; Wang, W.; Peng, R.; Guo, C.; Marinello, F.; Xie, Y.; Huang, Q. URT-YOLOv11: A Large Receptive Field Algorithm for Detecting Tomato Ripening Under Different Field Conditions. Agriculture 2025, 15, 1060.

| Application | Downstream Task | Platform | Dataset Size | Performance |
|---|---|---|---|---|
| Construction monitoring [81] | Object detection | Unity | 7000 | mAP@[0.5:0.95]: 0.46 |
| Generic object detection [82] | Object detection | UE4 with NDDS | 1500 | Not reported |
| Autonomous driving [83] | Object detection | UE5 | 16,700 | mAP@0.5: 0.67 |
| Navigation assistance [84] | Object detection | UE4 with NDDS | 3000 | Precision: 0.92; Recall: 0.91 |
| Exercise monitoring [85] | Pose estimation | Unity | 5000 | I3D test accuracy: 0.99 |
| Grocery item detection [86] | Object detection | Unity | 400,000 | mAP@[0.5:0.95]: 0.68 |
| Warehouse object detection [87] | Semantic segmentation | Unity | 7140 | mAP@0.5: 0.65 |
| Autonomous driving [88] | Semantic segmentation | GTA V | 1,355,568 | CIoU: 0.45 |
| Animal monitoring [89] | Pose estimation | Unity | 32,000 | PCK: 0.13 |
| Construction monitoring [90] | Object detection | Unity | 6000 | Precision: 0.92 |
| Generic object detection [91] | Classification | UE4 | 31,200 | Top-1 accuracy: 0.72 |

| Crack Type | Count | Description |
|---|---|---|
| Single | 10 | Linear or slightly curved cracks with a single continuous trace |
| Bifurcated | 5 | Cracks exhibiting branching into two subsidiary traces |
| Crossed | 7 | Intersecting crack traces forming networked geometries |

| Setting | Value |
|---|---|
| Sensor Format | 36 mm × 20.25 mm |
| Aspect Ratio | 16:9 |
| Resolution | 1920 × 1080 pixels |
| Aperture | ƒ/5.6 |
| ISO | 100 |
| Shutter Speed | 1/500 s |
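
Two derived quantities follow directly from the camera settings above: the pixel pitch on the virtual sensor and the exposure value. The minimal Python sketch below computes both as an illustrative consistency check. It uses the standard photographic definition EV100 = log2(N²/t) − log2(S/100); whether the UE5 camera's exposure was configured through this quantity is an assumption not stated in the table.

```python
import math

# Camera settings copied from the table above.
SENSOR_W_MM, SENSOR_H_MM = 36.0, 20.25
RES_W_PX, RES_H_PX = 1920, 1080
F_NUMBER, ISO, SHUTTER_S = 5.6, 100, 1 / 500


def pixel_pitch_mm(sensor_mm: float, pixels: int) -> float:
    """Physical size of one pixel along one sensor axis, in millimetres."""
    return sensor_mm / pixels


def ev100(f_number: float, shutter_s: float, iso: float) -> float:
    """Standard photographic exposure value normalised to ISO 100:
    EV100 = log2(N^2 / t) - log2(S / 100). Assumed, not taken from the paper."""
    return math.log2(f_number ** 2 / shutter_s) - math.log2(iso / 100)


if __name__ == "__main__":
    pw = pixel_pitch_mm(SENSOR_W_MM, RES_W_PX)
    ph = pixel_pitch_mm(SENSOR_H_MM, RES_H_PX)
    print(f"pixel pitch: {pw * 1000:.2f} um x {ph * 1000:.2f} um")
    print(f"sensor aspect: {SENSOR_W_MM / SENSOR_H_MM:.4f} (16:9 = {16 / 9:.4f})")
    print(f"EV100: {ev100(F_NUMBER, SHUTTER_S, ISO):.2f}")
```

Running it prints a pixel pitch of 18.75 um on both axes, confirming that the 36 mm × 20.25 mm sensor and the 1920 × 1080 output share the same 16:9 aspect ratio (square pixels), and an EV100 of about 13.94.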

| Hyperparameter | Value |
|---|---|
| Learning Rate | 0.002 |
| Optimizer | Adam (β1 = 0, β2 = 0.99, ε = 1 × 10⁻⁸) |
| R1 Regularization Weight | 10.0 |
| Effective R1 Weight | 160 |
| Path Length Regularization Interval | 4 iterations |
| R1 Regularization Interval | 16 iterations |
| ADA Target | 0.60 (60%) |
| Loss Function | Non-saturating logistic loss |
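
Two entries in the table above are linked by simple rules. With lazy regularization, R1 is evaluated only every 16 minibatches, so its weight is scaled by that interval (10.0 × 16 = 160). The ADA target of 0.60 drives the adaptive augmentation heuristic: the mean sign of the discriminator's outputs on real images, r_t = E[sign(D(x_real))], is compared with the target, and the augmentation probability p is nudged up when r_t exceeds it (the discriminator is overfitting) and down otherwise. The sketch below is modelled on the rule described for StyleGAN2-ADA; the batch size, adjustment interval and `ada_kimg` defaults are illustrative assumptions, not values from this study.

```python
def effective_r1_weight(gamma: float, interval: int) -> float:
    """Lazy regularization: R1 is applied every `interval` minibatches,
    so its weight is multiplied by the interval to keep its overall strength."""
    return gamma * interval


def ada_adjust_p(p: float, r_t: float, target: float = 0.6,
                 batch_size: int = 32, ada_interval: int = 4,
                 ada_kimg: float = 500.0) -> float:
    """One update of the ADA overfitting heuristic (sketch).

    r_t: mean sign of discriminator outputs on real images.
    The step size lets p move from 0 to 1 in roughly `ada_kimg` thousand images.
    batch_size, ada_interval and ada_kimg defaults are assumptions.
    """
    step = batch_size * ada_interval / (ada_kimg * 1000)
    if r_t > target:
        p += step  # discriminator too confident on reals: augment more
    elif r_t < target:
        p -= step  # discriminator not overfitting: augment less
    return max(p, 0.0)  # probability is clamped below at zero


if __name__ == "__main__":
    print("effective R1 weight:", effective_r1_weight(10.0, 16))
    p = 0.0
    for r_t in [0.8, 0.8, 0.7, 0.5, 0.65]:  # made-up r_t readings
        p = ada_adjust_p(p, r_t)
        print(f"r_t={r_t:.2f} -> p={p:.6f}")
```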

| Dataset | Total Images | Training Images | Validation Images |
|---|---|---|---|
| UE5O | 20,000 | 17,000 | 3000 |
| UE5O + SG2 | 40,000 | 34,000 | 6000 |
| UE5O + SG2-FFHQ | 40,000 | 34,000 | 6000 |
| UE5O + SG2-DTD | 40,000 | 34,000 | 6000 |
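
Every row in the table above corresponds to an 85/15 training/validation split (17,000 / 20,000 = 0.85). A minimal sketch of such a split follows. Splitting each source separately with a fixed seed and then merging is an assumption, not necessarily the authors' procedure; its advantage is that both sources keep the same proportions in each subset and the UE5O validation images are identical across all four configurations. The file names are hypothetical.

```python
import random
from typing import List, Sequence, Tuple


def split_dataset(items: Sequence[str], val_fraction: float = 0.15,
                  seed: int = 0) -> Tuple[List[str], List[str]]:
    """Deterministically shuffle `items` and split them into (train, val)."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed -> reproducible split
    n_val = round(len(shuffled) * val_fraction)
    return shuffled[n_val:], shuffled[:n_val]


if __name__ == "__main__":
    # Hypothetical file names standing in for rendered and StyleGAN2-ADA images.
    ue5 = [f"ue5o_{i:05d}.png" for i in range(20_000)]
    sg2 = [f"sg2_{i:05d}.png" for i in range(20_000)]
    ue5_train, ue5_val = split_dataset(ue5)
    sg2_train, sg2_val = split_dataset(sg2)
    print(len(ue5_train + sg2_train), len(ue5_val + sg2_val))  # 34000 6000
```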

| Hyperparameter | Value |
|---|---|
| Model | YOLOv11m |
| Initialization Weights | COCO |
| Input Resolution | 512 × 512 pixels |
| Batch Size | 64 |
| Epochs | 300 |
| Optimizer | Adam (β1 = 0.90, β2 = 0.99) |
| Initial Learning Rate | 0.001 |
| Learning Rate Schedule | Cosine decay |
| Warmup Epochs | 3 |
| Weight Decay | 0.0005 |
| Data Augmentation | On (scaling, translation, flip, mosaic) |
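
Most of the hyperparameters above map onto Ultralytics training arguments. The sketch below assumes the `ultralytics` package, the COCO-pretrained `yolo11m.pt` weights and a hypothetical dataset file `crack_dataset.yaml`. Ultralytics uses `momentum` as Adam's β1; β2 does not appear to be exposed as a training argument, so reproducing β2 = 0.99 exactly may require a custom trainer. Augmentation is left at the library defaults, which are assumed to include scaling, translation, flipping and mosaic.

```python
# Hypothetical dataset description file in the Ultralytics YOLO format.
DATA_YAML = "crack_dataset.yaml"

TRAIN_ARGS = dict(
    data=DATA_YAML,
    imgsz=512,            # 512 x 512 input resolution
    batch=64,
    epochs=300,
    optimizer="Adam",     # explicit choice, so lr0 is honoured
    lr0=0.001,            # initial learning rate
    momentum=0.90,        # used by Ultralytics as Adam beta1
    cos_lr=True,          # cosine learning-rate decay
    warmup_epochs=3,
    weight_decay=0.0005,
)


def train() -> None:
    """Launch training; ultralytics is imported lazily so the config can be
    inspected without the package installed."""
    from ultralytics import YOLO

    model = YOLO("yolo11m.pt")  # COCO-pretrained YOLO11m weights
    model.train(**TRAIN_ARGS)
```

Calling `train()` starts a run with these settings; keeping the arguments in a plain dictionary makes them easy to log alongside the results.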

| Configuration | FID Score | Mean LPIPS | Median LPIPS | LPIPS Range | Mean LPIPS Change vs. UE5O |
|---|---|---|---|---|---|
| SG2 (Baseline) | 15.99 | 0.452 ± 0.094 | 0.456 | [0.011, 0.725] | +2.80% |
| SG2 + FFHQ | 12.75 | 0.472 ± 0.090 | 0.477 | [0.140, 0.709] | +7.49% |
| SG2 + DTD | 15.88 | 0.457 ± 0.092 | 0.462 | [0.115, 0.735] | +3.96% |
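
FID (Heusel et al.) fits a Gaussian to Inception-v3 (Szegedy et al.) activations of each image set and measures the Fréchet distance between the two Gaussians, FID = ‖μ_a − μ_b‖² + Tr(Σ_a + Σ_b − 2(Σ_aΣ_b)^{1/2}); lower values mean closer feature distributions. The NumPy sketch below implements that formula. Common implementations compute the matrix square root with `scipy.linalg.sqrtm`; here the trace term is obtained from the eigenvalues of Σ_aΣ_b instead, which are real and non-negative for covariance matrices. Random arrays stand in for real Inception features.

```python
import numpy as np


def frechet_distance(feats_a: np.ndarray, feats_b: np.ndarray) -> float:
    """Frechet distance between Gaussians fitted to two (n, d) feature sets."""
    mu_a, mu_b = feats_a.mean(axis=0), feats_b.mean(axis=0)
    cov_a = np.cov(feats_a, rowvar=False)
    cov_b = np.cov(feats_b, rowvar=False)
    # Tr((S_a S_b)^{1/2}) = sum of square roots of the eigenvalues of S_a S_b.
    eigvals = np.linalg.eigvals(cov_a @ cov_b)
    tr_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    diff = mu_a - mu_b
    return float(diff @ diff + np.trace(cov_a) + np.trace(cov_b) - 2.0 * tr_sqrt)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    real = rng.normal(size=(2000, 8))              # stand-in features
    shifted = rng.normal(loc=1.0, size=(2000, 8))  # mean shifted by 1 per dim
    print(f"FID(real, shifted) ~ {frechet_distance(real, shifted):.2f}")
```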

| Comparison | Holm-Adjusted p-Value | Rank-Biserial Effect Size r |
|---|---|---|
| SG2 (Baseline) vs. UE5O | 1.74 × 10⁻³⁴ | 0.0995 |
| SG2 + FFHQ vs. UE5O | 6.89 × 10⁻¹⁷¹ | 0.2276 |
| SG2 + DTD vs. UE5O | 8.70 × 10⁻⁷⁰ | 0.1442 |
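
With very large LPIPS samples almost any difference is significant, which is why the table pairs each Holm-adjusted p-value with a rank-biserial effect size. The pure-Python sketch below computes the Mann-Whitney U statistic with average ranks for ties, the rank-biserial correlation r = 2U_x/(n_x n_y) − 1, and Holm-Bonferroni step-down adjusted p-values. The sign convention (positive when the first sample tends to be larger) is an assumption; how the p-values themselves were obtained is described in the main text.

```python
from typing import List, Sequence, Tuple


def _average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values receive the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def rank_biserial(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Return (U_x, r): Mann-Whitney U for x and r = 2*U_x/(n_x*n_y) - 1."""
    n_x, n_y = len(x), len(y)
    ranks = _average_ranks(list(x) + list(y))
    u_x = sum(ranks[:n_x]) - n_x * (n_x + 1) / 2
    return u_x, 2 * u_x / (n_x * n_y) - 1


def holm_adjust(pvals: Sequence[float]) -> List[float]:
    """Holm-Bonferroni step-down adjusted p-values, in input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # Multiply the k-th smallest p-value by (m - k) and enforce monotonicity.
        running_max = max(running_max, (m - rank) * pvals[idx])
        adjusted[idx] = min(1.0, running_max)
    return adjusted
```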

| Dataset | Mean MMD² | 95% CI | Reduction vs. UE5O |
|---|---|---|---|
| UE5O | 0.000472 | 0.000446–0.000503 | - |
| SG2 (Baseline) | 0.000487 | 0.000456–0.000516 | −3.20% |
| SG2 + FFHQ | 0.000404 | 0.000379–0.000425 | +14.40% |
| SG2 + DTD | 0.000480 | 0.000456–0.000501 | −1.70% |
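
The percentages in the MMD² table correspond to the relative reduction (baseline − value)/baseline: for SG2 + FFHQ, (0.000472 − 0.000404)/0.000472 ≈ 14.4%, so a positive value means a lower MMD² than UE5O. The sketch below pairs that arithmetic with an unbiased MMD² estimator. The embedding model and kernel behind the reported values are described in the main text; this sketch assumes the cubic polynomial kernel k(x, y) = (xᵀy/d + 1)³ used for KID by Bińkowski et al., and random arrays stand in for image embeddings.

```python
import numpy as np


def polynomial_kernel(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Cubic polynomial kernel (x.y / d + 1)^3 (assumed, as in KID)."""
    d = a.shape[1]
    return (a @ b.T / d + 1.0) ** 3


def mmd2_unbiased(x: np.ndarray, y: np.ndarray) -> float:
    """Unbiased MMD^2 estimate between samples x (m, d) and y (n, d)."""
    m, n = len(x), len(y)
    k_xx = polynomial_kernel(x, x)
    k_yy = polynomial_kernel(y, y)
    k_xy = polynomial_kernel(x, y)
    # Drop diagonal (self-similarity) terms from the within-sample averages.
    term_xx = (k_xx.sum() - np.trace(k_xx)) / (m * (m - 1))
    term_yy = (k_yy.sum() - np.trace(k_yy)) / (n * (n - 1))
    return float(term_xx + term_yy - 2.0 * k_xy.mean())


def reduction_pct(baseline: float, value: float) -> float:
    """Relative reduction vs. a baseline, in percent (positive = lower MMD^2)."""
    return (baseline - value) / baseline * 100.0


if __name__ == "__main__":
    for name, value in [("SG2", 0.000487), ("SG2 + FFHQ", 0.000404),
                        ("SG2 + DTD", 0.000480)]:
        print(f"{name}: {reduction_pct(0.000472, value):+.2f}%")
```

Recomputing from the rounded table values gives −3.18%, +14.41% and −1.69%, matching the reported figures up to rounding.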

| Metric | Value |
|---|---|
| Mean IoU | 0.931 |
| Median IoU | 0.958 |
| F1@0.5 | 0.990 |
| F1@0.75 | 0.960 |
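
The annotation-quality metrics above rest on box IoU and on F1 at a fixed IoU threshold. The pure-Python sketch below computes IoU for axis-aligned boxes and F1@τ = 2TP/(2TP + FP + FN) after a greedy one-to-one matching of predicted to reference boxes by descending IoU. Greedy matching is an assumption; the matching procedure actually used for the VLM annotations is described in the main text.

```python
from typing import Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def f1_at(preds: Sequence[Box], gts: Sequence[Box], thr: float) -> float:
    """F1 after greedy one-to-one matching at IoU >= thr (assumed matching rule)."""
    pairs = sorted(((iou(p, g), i, j) for i, p in enumerate(preds)
                    for j, g in enumerate(gts)), reverse=True)
    used_p, used_g, tp = set(), set(), 0
    for score, i, j in pairs:
        if score < thr:
            break  # remaining pairs all have lower IoU
        if i not in used_p and j not in used_g:
            used_p.add(i)
            used_g.add(j)
            tp += 1
    fp, fn = len(preds) - tp, len(gts) - tp
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 1.0
```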

| Threshold | Mean IoU | Median IoU | F1@0.5 | F1@0.75 |
|---|---|---|---|---|
| 0.30 | 0.897 | 0.945 | 0.894 | 0.847 |
| 0.35 | 0.909 | 0.945 | 0.946 | 0.889 |
| 0.40 | 0.900 | 0.945 | 0.945 | 0.896 |
| 0.45 | 0.918 | 0.947 | 0.894 | 0.856 |
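
Which threshold in the sweep above counts as "best" depends on the selection criterion. The short sketch below picks the argmax of each column using only the tabulated values: F1@0.5 favours 0.35, F1@0.75 favours 0.40, and both IoU statistics favour 0.45. The meaning of the threshold itself is defined in the main text.

```python
# (threshold, mean IoU, median IoU, F1@0.5, F1@0.75), copied from the table above.
SWEEP = [
    (0.30, 0.897, 0.945, 0.894, 0.847),
    (0.35, 0.909, 0.945, 0.946, 0.889),
    (0.40, 0.900, 0.945, 0.945, 0.896),
    (0.45, 0.918, 0.947, 0.894, 0.856),
]
COLUMNS = {"mean_iou": 1, "median_iou": 2, "f1_50": 3, "f1_75": 4}


def best_threshold(metric: str) -> float:
    """Threshold that maximises the chosen column of the sweep."""
    col = COLUMNS[metric]
    return max(SWEEP, key=lambda row: row[col])[0]


if __name__ == "__main__":
    for name in COLUMNS:
        print(name, best_threshold(name))
```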

| Dataset | Precision | Recall | F1@0.5 | AP@0.5 (95% CI) | AP@[0.5:0.95] (95% CI) |
|---|---|---|---|---|---|
| UE5O | 0.692 | 0.729 | 0.710 | 0.792 (0.748–0.849) | 0.536 (0.475–0.595) |
| UE5O + SG2 | 0.792 | 0.902 | 0.844 | 0.922 (0.876–0.948) | 0.706 (0.649–0.744) |
| UE5O + SG2-FFHQ | 0.808 | 0.850 | 0.829 | 0.911 (0.880–0.939) | 0.722 (0.680–0.763) |
| UE5O + SG2-DTD | 0.730 | 0.828 | 0.776 | 0.858 (0.805–0.895) | 0.638 (0.593–0.689) |
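
The F1@0.5 column is the harmonic mean of precision and recall, and the headline gains over the UE5O baseline are relative changes in AP. The sketch below recomputes both from the table: AP@0.5 rises by (0.922/0.792 − 1) ≈ 16.4% for UE5O + SG2 and AP@[0.5:0.95] by (0.722/0.536 − 1) ≈ 34.7% for UE5O + SG2-FFHQ. Recomputing F1 from the rounded precision and recall can differ from the table in the last digit.

```python
# (precision, recall, AP@0.5, AP@[0.5:0.95]) copied from the table above.
RESULTS = {
    "UE5O": (0.692, 0.729, 0.792, 0.536),
    "UE5O + SG2": (0.792, 0.902, 0.922, 0.706),
    "UE5O + SG2-FFHQ": (0.808, 0.850, 0.911, 0.722),
    "UE5O + SG2-DTD": (0.730, 0.828, 0.858, 0.638),
}


def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def relative_gain_pct(value: float, baseline: float) -> float:
    """Relative improvement over the baseline, in percent."""
    return (value / baseline - 1.0) * 100.0


if __name__ == "__main__":
    base = RESULTS["UE5O"]
    for name, (p, r, ap50, ap5095) in RESULTS.items():
        print(f"{name:16s} F1={f1(p, r):.3f} "
              f"dAP50={relative_gain_pct(ap50, base[2]):+.1f}% "
              f"dAP50:95={relative_gain_pct(ap5095, base[3]):+.1f}%")
```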

| Configuration | FP Count | FN Count |
|---|---|---|
| UE5O | 53 | 44 |
| UE5O + SG2 | 27 | 20 |
| UE5O + SG2-FFHQ | 42 | 8 |
| UE5O + SG2-DTD | 48 | 31 |

| Dataset | FID | Mean LPIPS | MMD² | AP@0.5 | AP@[0.5:0.95] |
|---|---|---|---|---|---|
| UE5O | - | 0.440 | 0.000472 | 0.792 | 0.536 |
| UE5O + SG2 | 15.99 | 0.452 | 0.000487 | 0.922 | 0.706 |
| UE5O + SG2-FFHQ | 12.75 | 0.472 | 0.000404 | 0.911 | 0.722 |
| UE5O + SG2-DTD | 15.88 | 0.457 | 0.000480 | 0.858 | 0.638 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Le Roux, R.; Khaksar, S.; Sepehri, M.; Murray, I. A Hybrid Game Engine–Generative AI Framework for Overcoming Data Scarcity in Open-Pit Crack Detection. Mach. Learn. Knowl. Extr. 2026, 8, 99. https://doi.org/10.3390/make8040099
