1. Introduction

Across advanced democracies, a striking and recurring pattern has emerged in the early twenty-first century: public opinion increasingly diverges not merely along economic lines, but along affective and identity-based fault lines that resist conventional left–right categorization. The rise of authoritarian populism in Western Europe, Brexit in the United Kingdom, and the polarization dynamics of US electoral politics share a common psychological substrate: citizens systematically overweight losses relative to gains, anchor judgments on salient threats, and process information through identity-confirming filters [1,2]. These patterns are not idiosyncratic to any one country but represent the macro-level consequences of universal cognitive heuristics operating within specific institutional and informational contexts. Understanding why citizens form the judgments they do, and how those judgments can be systematically biased, is thus among the most pressing questions in contemporary political science and behavioral economics.

American public opinion is widely understood to be structured by partisan identity, with Democrats and Republicans occupying increasingly polarized positions across policy domains [3,4]. This conventional wisdom, grounded in decades of survey research documenting affective polarization and ideological sorting [5,6], treats partisanship as the primary organizing principle of political cognition. Yet this partisan-centric framework may obscure deeper psychological mechanisms and cross-cutting cleavages that shape how citizens form judgments about economic conditions, assess threats, and consume political information.

Drawing on dual-process theories of cognition from behavioral economics [7,8,9], we argue that public opinion structure is better understood as the emergent product of universal cognitive heuristics operating within specific informational and demographic contexts. Kahneman’s dual-process model distinguishes two modes of cognition:

System 1 is fast, automatic, and affect-driven; it produces rapid, intuitive judgments through heuristics such as availability (judging probability by ease of mental retrieval), representativeness, and anchoring.

System 2 is slow, deliberative, and effortful; it generates reasoned analysis but requires cognitive resources that may be depleted or bypassed under time pressure or high emotional salience [9,10].

In political contexts, System 1 dominates: most citizens evaluate candidates, economic conditions, and threats rapidly and affectively rather than through careful deliberation. This dominance creates systematic, predictable biases (loss aversion, availability-driven threat inflation, and partisan motivated reasoning) that are the empirical focus of this study. If partisan identity functions not as a foundational preference but as one among several competing System 1 heuristics, then opinion structures may crosscut conventional left–right divides, with individuals who share similar psychological orientations but hold different partisan labels clustering together.

This study employs hierarchical unsupervised machine learning to uncover latent structures in the 2025 National Public Opinion Reference Survey (NPORS), a nationally representative dataset comprising 5022 respondents and 65 variables spanning political identity, economic perception, digital media behavior, threat assessment, and demographic characteristics. By applying K-means clustering with systematic model selection and multi-dimensional validation, we identify eight distinct opinion segments within the American mainstream that defy simple partisan categorization.

Three principal findings emerge. First, we provide empirical evidence of Kahneman and Tversky’s [11] loss aversion in political economic perception: pessimists outnumber optimists by a ratio of 1.14:1, and this asymmetry persists even among respondents who rate current conditions positively. Second, we quantify partisan-motivated reasoning [12,13] by directly comparing economic perceptions across party lines, revealing a 13.15 percentage-point gap among respondents with identical financial circumstances. Third, and most strikingly, we reject the echo chamber hypothesis [14,15]: heavy multi-platform digital users exhibit reduced rather than amplified partisan bias, contradicting predictions that algorithmic curation reinforces ideological insularity.

Additionally, we demonstrate that crime safety perception (an affectively charged, non-economic threat assessment) emerges as the strongest predictor of economic bias, surpassing party affiliation itself. This finding supports the view that Kahneman’s [16] availability heuristic operates in naturalistic political cognition: salient, emotionally vivid perceptions anchor downstream judgments even when they are objectively unrelated.

Rationale. Despite the rich literature on cognitive biases in laboratory settings and partisan polarization in survey research, three critical gaps persist. First, population-level naturalistic evidence for loss aversion in prospective economic perception—as distinct from retrospective or experimental contexts—remains limited. Second, most motivated reasoning research treats partisan identity as monolithic, failing to account for within-party heterogeneity or cross-party commonalities. Third, the empirical debate over echo chambers has largely relied on platform-level behavioral trace data or controlled experiments, leaving the relationship between multi-platform engagement and individual-level cognitive bias poorly understood. This study addresses all three gaps using a large, nationally representative dataset and a methodology that does not impose a priori partisan categories.

Novelty and Theoretical Contribution. This paper makes three novel contributions. First, it provides the first large-scale naturalistic test of loss aversion in prospective economic perception using distributional asymmetry as evidence, extending Kahneman and Tversky’s laboratory findings to real-world political opinion formation. Second, it introduces a hierarchical two-stage clustering strategy—coarse outlier detection followed by fine-grained sub-clustering of the dominant segment—as a methodological template for uncovering latent opinion heterogeneity in complex survey data without imposing a priori categories. Third, it empirically challenges simple echo chamber assumptions at the individual level, showing that multi-platform engagement correlates with reduced partisan bias—a finding that complicates prevailing narratives about algorithmic polarization and has direct implications for platform governance.

Stakeholder Relevance. The findings carry concrete implications for multiple audiences: policymakers gain evidence consistent with the expectation that loss-framed communication will outperform gain-framed equivalents, and that threat perception, not just economic fundamentals, shapes public economic sentiment; platform designers and regulators gain a basis for questioning interventions premised on echo chamber assumptions; political campaigns and advocacy organizations gain a typology of audience segments differentiated by cognitive profiles rather than demographic categories; and democratic theorists and researchers gain evidence that cognitive heuristics, particularly availability bias and loss aversion, may systematically degrade the quality of retrospective accountability in elections.

Methodologically, this study contributes to the growing literature integrating computational social science methods with substantive political psychology [17,18,19]. Unsupervised learning permits the discovery of latent opinion structures without imposing a priori partisan categories, addressing a fundamental limitation of conventional survey analysis that pre-stratifies respondents by party identification. Our hierarchical two-stage approach (coarse segmentation followed by fine-grained sub-clustering of the dominant segment) balances the detection of marginal outlier populations with nuanced differentiation within the mainstream, a methodological innovation applicable to other large-scale survey datasets.
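The two-stage logic can be illustrated with a minimal, dependency-free Python sketch. It is not the authors' code and rests on stated assumptions: both stages use plain Lloyd's K-means with a deterministic farthest-first initialization (chosen only so the toy run is reproducible), the "dominant segment" is taken to be the largest first-stage cluster, and the toy points, cluster counts, and helper names (`kmeans`, `two_stage`) are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points: List[Point], k: int, iters: int = 100) -> List[int]:
    """Lloyd's K-means with deterministic farthest-first initialization; returns one label per point."""
    centroids = [points[0]]
    while len(centroids) < k:  # add the point farthest from all centroids chosen so far
        centroids.append(max(points, key=lambda p: min(sq_dist(p, c) for c in centroids)))
    labels: List[int] = []
    for _ in range(iters):
        # Assignment step: each point joins its nearest centroid.
        new_labels = [min(range(k), key=lambda c: sq_dist(p, centroids[c])) for p in points]
        if new_labels == labels:  # converged: no point changed cluster
            break
        labels = new_labels
        # Update step: move each non-empty cluster's centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return labels


def two_stage(points: List[Point], k_coarse: int, k_fine: int) -> List[str]:
    """Stage 1: coarse K-means. Stage 2: re-cluster only the largest coarse segment.

    Hypothetical labeling scheme: 'C<i>' for coarse segments, 'C<i>.<j>' for sub-clusters.
    """
    coarse = kmeans(points, k_coarse)
    dominant = max(set(coarse), key=coarse.count)  # the "mainstream" segment
    idx = [i for i, lab in enumerate(coarse) if lab == dominant]
    fine = kmeans([points[i] for i in idx], k_fine)
    labels = [f"C{lab}" for lab in coarse]
    for i, sub in zip(idx, fine):
        labels[i] = f"C{dominant}.{sub}"
    return labels


# Hypothetical toy data: two nearby mainstream groups plus a small, distant outlier group.
group_a = [(0.0, 0.0), (0.0, 0.1), (0.1, 0.0), (0.1, 0.1), (0.05, 0.05)]
group_b = [(1.0, 1.0), (1.0, 1.1), (1.1, 1.0), (1.1, 1.1), (1.05, 1.05)]
outliers = [(20.0, 20.0), (20.5, 20.0)]
points = group_a + group_b + outliers

labels = two_stage(points, k_coarse=2, k_fine=2)
print(labels)
```

With these toy points, stage 1 isolates the two distant outliers and stage 2 splits the remaining mainstream into its two nearby groups; a single flat run with k = 2 would leave those two mainstream groups merged, which is the limitation the two-stage design targets.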

The implications extend beyond academic debates to practical questions of democratic quality, political communication, and platform governance. If partisan labels mask deeper psychological commonalities, then interventions targeting cognitive biases and information quality may prove more effective than those treating Democrats and Republicans as fundamentally distinct populations. If multi-platform engagement attenuates rather than amplifies bias, then regulatory proposals premised on echo chamber assumptions require reconsideration. If threat perception drives economic judgment more powerfully than party affiliation, then the affective climate shaped by media coverage of crime and disorder may systematically contaminate retrospective accountability mechanisms.

The remainder of this paper proceeds as follows.

Section 2 reviews theoretical frameworks from behavioral economics and political psychology, synthesizing the literature on loss aversion, motivated reasoning, availability bias, and echo chambers while identifying gaps that unsupervised learning can address.

Section 3 describes the NPORS dataset and justifies variable selection using behavioral economics principles.

Section 4 details our hierarchical clustering methodology, model selection procedures, and validation strategies.

Section 5 presents results, emphasizing theoretically meaningful clusters that challenge partisan binaries.

Section 6 discusses mechanisms, limitations, and implications for democratic theory and platform governance.

Section 7 concludes with directions for future research integrating causal identification, cross-national comparison, and richer measurement of information exposure.

2. Literature Review

2.1. Behavioral Economics and Political Cognition

The application of behavioral economics to political judgment originates in Kahneman and Tversky’s [11,20] prospect theory, which posits that individuals evaluate outcomes relative to reference points rather than in absolute terms, exhibit diminishing sensitivity to marginal changes, and weigh potential losses more heavily than equivalent gains. This framework, initially developed to explain anomalies in choice under uncertainty, has profound implications for political behavior: if voters evaluate incumbent performance relative to an expected baseline and overweight negative deviations, then loss-framed messaging should systematically outperform gain-framed equivalents [21,22].

Kahneman’s [7] dual-process model distinguishes between System 1 (fast, automatic, affect-driven) and System 2 (slow, deliberative, effortful) cognition. System 1 operates continuously and unconsciously, generating rapid impressions through the following heuristics: availability (judging frequency by ease of mental retrieval), representativeness (categorization by prototype similarity), and anchoring (insufficient adjustment from salient reference points) [8,9]. System 2, by contrast, engages consciously and effortfully; it can override System 1 outputs but does so incompletely and only when cognitive resources are available. Crucially, System 1 processing is the default mode for most citizens most of the time [10]. These heuristics produce systematic biases that are predictable and context-dependent. In this study, we operationalize System 1 influence through three empirical tests: (a) loss aversion in forward-looking economic perception (tested via distributional asymmetry in pessimism/optimism ratios), (b) the availability heuristic in the primacy of crime safety perception over objective economic indicators (tested via Random Forest feature importance), and (c) partisan motivated reasoning as identity-driven information filtering (tested via direct comparison of economic perceptions across party lines among respondents with identical financial circumstances).
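Test (c) compares partisans "among respondents with identical financial circumstances". One plausible way to operationalize this, sketched below under stated assumptions, is exact stratification: compute the gap in the share of pessimistic respondents between two parties within each financial stratum, then average those gaps weighted by stratum size. The record layout, party codes, stratum names, and the function `stratified_partisan_gap` are hypothetical; the text does not specify how its reported gap was computed.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical record layout (not NPORS variable names): (party, financial_stratum, is_pessimistic).
Record = Tuple[str, str, bool]


def stratified_partisan_gap(records: Iterable[Record], party_a: str, party_b: str) -> float:
    """Weighted mean over financial strata of (pessimism share of party_a minus party_b),
    in percentage points. Only strata containing both parties contribute, and each
    stratum is weighted by the combined number of party_a and party_b respondents in it."""
    cells: Dict[Tuple[str, str], List[int]] = defaultdict(lambda: [0, 0])  # [pessimists, total]
    for party, stratum, pessimistic in records:
        cell = cells[(stratum, party)]
        cell[0] += int(pessimistic)
        cell[1] += 1
    weighted_sum, total_weight = 0.0, 0
    for stratum in {s for s, _ in cells}:
        a, b = cells.get((stratum, party_a)), cells.get((stratum, party_b))
        if not a or not b:
            continue  # no within-stratum comparison is possible
        gap_pp = (a[0] / a[1] - b[0] / b[1]) * 100
        weight = a[1] + b[1]
        weighted_sum += gap_pp * weight
        total_weight += weight
    return weighted_sum / total_weight


# Hypothetical toy sample: a 50-point gap among "comfortable" respondents, none among "struggling" ones.
records: List[Record] = (
    [("Dem", "comfortable", True)] * 3 + [("Dem", "comfortable", False)]
    + [("Rep", "comfortable", True)] + [("Rep", "comfortable", False)] * 3
    + [("Dem", "struggling", True)] * 2 + [("Rep", "struggling", True)] * 2
)
gap = stratified_partisan_gap(records, "Dem", "Rep")
print(f"{gap:.2f} pp")
```

Because comparisons are made only inside strata, differences in financial circumstances between the two parties cannot by themselves produce the gap; the price is that strata observed for only one party drop out.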

The availability heuristic is particularly consequential for public opinion formation. Events or threats that are emotionally vivid, recently experienced, or extensively covered by the media are judged more probable than statistical base rates warrant [16]. In political contexts, this mechanism explains why salient issues (crime waves, terrorist attacks, economic crises) dominate voter attention out of proportion to their objective incidence [23,24]. Media priming research demonstrates that by making certain considerations cognitively accessible, news coverage systematically shifts the weights citizens assign to different evaluative criteria when forming candidate assessments or policy preferences [25,26].

Loss aversion, formalized as an asymmetric value function in which losses loom larger than equivalent gains ($-v(-x) > v(x)$ for $x > 0$), has found empirical support in political contexts. Boettcher and Cobb [27] demonstrate that framing foreign policy interventions as preventing losses increases public support relative to equivalent gain frames. Mercer [22] argues that prospect theory explains patterns of risk-seeking behavior among states facing losses and risk-averse behavior in the domain of gains, with implications for conflict escalation and crisis bargaining. In electoral accountability contexts, voters punish incumbents more severely for economic downturns than they reward them for equivalent improvements [28,29], consistent with loss aversion dominating retrospective evaluation.
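The asymmetry $-v(-x) > v(x)$ can be made concrete with the piecewise power value function of prospect theory, sketched below in Python. The default parameters ($\alpha = \beta = 0.88$, $\lambda = 2.25$) are Tversky and Kahneman's (1992) median estimates and are used purely for illustration; they are not estimated from the NPORS data, and the function name `prospect_value` is hypothetical.

```python
def prospect_value(x: float, alpha: float = 0.88, beta: float = 0.88, lam: float = 2.25) -> float:
    """Prospect-theory value v(x) of a change x relative to the reference point (x = 0).

    Gains: v(x) = x**alpha; losses: v(x) = -lam * (-x)**beta.
    alpha, beta < 1 encode diminishing sensitivity; lam > 1 encodes loss aversion.
    Defaults are Tversky and Kahneman's (1992) median estimates, for illustration only.
    """
    if x >= 0:
        return x ** alpha
    return -lam * (-x) ** beta


for x in (10.0, 100.0):
    gain, loss = prospect_value(x), prospect_value(-x)
    # Loss aversion: an equal-sized loss hurts more than the gain pleases, i.e. -v(-x) > v(x).
    print(f"x={x:g}: v(x)={gain:.2f}, v(-x)={loss:.2f}, ratio={-loss / gain:.2f}")
```

When $\alpha = \beta$, the ratio $-v(-x)/v(x)$ equals $\lambda$ at every magnitude, so a single parameter summarizes how much more heavily losses weigh than gains.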

However, direct population-level evidence for loss aversion in prospective economic perception—the forward-looking judgments citizens make about future economic trajectories—remains limited. Existing studies focus on experimental manipulations of frame equivalence or historical correlations between aggregate economic indicators and vote shares. Our contribution is to document loss aversion through distributional asymmetry in a large, nationally representative survey: if pessimists systematically outnumber optimists even among those rating current conditions positively, this constitutes strong naturalistic evidence that losses loom larger in mental simulations of future states.
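The distributional-asymmetry test reduces to a ratio of pessimists to optimists, computed for the full sample and again within the subgroup that rates current conditions positively. A minimal sketch follows; the response codes ("better", "same", "worse"; "good", "poor"), the exclusion of neutral answers, and the toy records are hypothetical stand-ins for the NPORS items.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def pessimism_ratio(expectations: Iterable[str]) -> float:
    """Pessimists per optimist; neutral ('same') answers are ignored."""
    counts = Counter(expectations)
    if counts["better"] == 0:
        raise ValueError("ratio undefined: no optimists in this group")
    return counts["worse"] / counts["better"]


# Hypothetical records: (rating of current conditions, expectation for the coming year).
records: List[Tuple[str, str]] = (
    [("good", "worse")] * 3 + [("good", "better")] * 2 + [("good", "same")]
    + [("poor", "worse")] * 5 + [("poor", "better")]
)
overall = pessimism_ratio(future for _, future in records)
positive_raters = pessimism_ratio(future for current, future in records if current == "good")
print(f"overall {overall:.2f}:1, among positive raters {positive_raters:.2f}:1")
```

A ratio above 1 within the positive-rater subgroup is the pattern the argument reads as loss aversion: pessimism about the future that current assessments alone do not explain.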

2.2. Partisan Motivated Reasoning and Affective Polarization

Motivated reasoning refers to the tendency to process information in ways that confirm preexisting beliefs or desired conclusions [30]. In political contexts, partisan identity functions as a powerful motivational force, shaping attention, memory encoding, and evidence evaluation in systematically biased directions [12,13]. Taber and Lodge’s [12] seminal work demonstrates that partisans engage in confirmation bias (seeking congruent information), disconfirmation bias (scrutinizing incongruent information more critically), and the prior attitude effect (interpreting ambiguous evidence as supporting prior beliefs).

The mechanism operates through affective tagging: political objects (candidates, parties, policies) are automatically associated with positive or negative valence, and this affect drives downstream cognitive processing [31]. When encountering new information, partisans unconsciously retrieve affectively congruent associations, which bias interpretation even before deliberate reasoning occurs. This “hot cognition” model explains why fact-checking and corrective information often fail to change partisan beliefs and sometimes produce backfire effects [32], wherein corrections paradoxically strengthen misperceptions among committed partisans.

Empirical evidence for partisan motivated reasoning is extensive. Lord, Ross, and Lepper’s [33] classic study on capital punishment attitudes demonstrates biased assimilation: proponents and opponents of capital punishment evaluated the same mixed evidence as supporting their priors, leading to belief polarization rather than convergence. In contemporary contexts, studies document partisan divergence in perceptions of objective economic indicators [34,35], crime rates [36], and even meteorological phenomena such as local temperature trends [37].

Affective polarization, the tendency to view partisan out-groups with increasing hostility and in-groups with increasing warmth, has intensified substantially since the 1970s [4,38]. Mason [3] distinguishes between ideological polarization (divergence on policy positions) and affective polarization (emotional antipathy toward opponents), arguing that the latter has grown more rapidly and independently. This “uncivil agreement” phenomenon, wherein partisans dislike opponents intensely despite modest ideological differences, suggests that partisan identity operates as a social identity rather than a coherent policy platform [39,40].

Sunstein’s [14,41] work on group polarization demonstrates that like-minded individuals engaging in deliberation move toward more extreme positions, driven by informational cascades (assuming others possess private information) and reputational pressures (signaling loyalty through extremity). In digital environments, these dynamics may be amplified by algorithmic curation, producing “echo chambers” wherein users encounter predominantly attitude-consistent information [42].

However, a critical gap remains: most motivated reasoning research treats partisanship as a binary or categorical variable, collapsing within-party heterogeneity and obscuring cross-cutting commonalities. If motivated reasoning strength varies systematically with demographic characteristics, information exposure, or personality traits, then aggregating all Democrats or all Republicans may mask meaningful sub-clusters exhibiting different cognitive profiles. Unsupervised learning permits the discovery of these latent segments without imposing partisan categories a priori.

2.3. Echo Chambers, Filter Bubbles, and Algorithmic Polarization

The echo chamber hypothesis posits that digital media platforms create informational environments wherein users encounter predominantly ideologically congruent content, reinforcing preexisting beliefs and insulating them from cross-cutting perspectives [14,15]. Pariser’s [15] “filter bubble” concept emphasizes algorithmic personalization: recommendation systems, search engines, and social media feeds optimize for engagement by surfacing content predicted to match user preferences, inadvertently creating individually customized echo chambers.

Sunstein [14,42] argues that consumer sovereignty in information selection (the ability to construct entirely personalized news diets) undermines the shared informational commons essential for democratic deliberation. If citizens self-select into ideologically homogeneous information environments and algorithmic intermediaries amplify these preferences, the result is fragmentation, polarization, and the breakdown of common factual ground necessary for productive disagreement.

Empirical evidence on echo chambers is mixed. Some studies document ideological segregation in online networks: Adamic and Glance [43] find minimal linking between liberal and conservative political blogs; Barberá [44] demonstrates homophily in Twitter follower networks; and Bakshy, Messing, and Adamic [45] report that Facebook users encounter ideologically aligned content out of proportion to the platform’s overall distribution.

However, other research challenges the echo chamber thesis. Flaxman, Goel, and Rao [46] find that online news consumption is more diverse than offline consumption, though algorithmic filtering does modestly increase ideological segregation. Guess et al. [47] demonstrate that selective exposure to partisan websites is concentrated among a small subset of highly engaged users, with most Americans exhibiting minimal ideological segregation in their browsing behavior. Boxell, Gentzkow, and Shapiro [48] show that affective polarization has increased most among demographic groups with the lowest internet usage (older Americans), which is inconsistent with the hypothesis that digital platforms drive polarization.

Bail et al.’s [49] field experiment finds that exposure to counter-attitudinal content on Twitter increases rather than decreases polarization, as partisans engage in motivated reasoning to discount incongruent information. This “boomerang effect” suggests that simply breaking filter bubbles may be insufficient or counterproductive if users lack cognitive tools or motivational orientations to process cross-cutting information fairly.

Recent meta-analytic work by Kubin and von Sikorski [50] finds small to moderate effects of echo chambers on polarization, moderated by platform type, user characteristics, and outcome measures. The heterogeneity of results suggests that echo chamber effects are contextually contingent rather than universal, raising the question: under what conditions do users experience echo chambers, and what individual-level characteristics predict resistance to or amplification of filter bubble effects?

Our contribution is to test the echo chamber hypothesis at the individual level using multi-platform engagement as a proxy for informational diversity. If echo chambers operate as theorized, heavy platform users should exhibit amplified partisan bias due to cumulative exposure to algorithmically curated, ideologically congruent content. Conversely, if cross-platform engagement introduces accidental diversity or signals media literacy, we may observe an inverse relationship. The clustering approach permits the discovery of sub-populations exhibiting different platform–bias relationships without imposing linearity assumptions.

2.4. Unsupervised Learning in Political Science

Computational social science has increasingly adopted unsupervised learning methods to uncover latent structures in high-dimensional political data [17,19]. Clustering algorithms partition observations into homogeneous groups based on distance metrics, revealing patterns not specified a priori by researchers. This inductive approach complements hypothesis-driven research by surfacing unexpected configurations and challenging theoretical assumptions embedded in conventional variable coding.

In text-as-data applications, topic models such as Latent Dirichlet Allocation (LDA) and Structural Topic Models (STM) identify thematic structures in large corpora of political documents [18,51]. Grimmer and Stewart [17] emphasize that unsupervised methods are discovery tools requiring substantive validation rather than black-box classifications. Quinn et al. [52] apply mixture models to Supreme Court opinions; Roberts et al. [18] model blog posts to uncover issue attention dynamics; and Lauderdale and Herzog [53] use item-response models to estimate latent ideology.

In survey research, clustering has been applied to identify voter typologies [54], map ideological spaces [55], and segment public opinion on multi-dimensional issues [56]. However, most applications impose partisan or ideological categories as covariates rather than treating them as emergent outcomes. By clustering on economic perceptions, digital behavior, threat assessments, and demographics without fixing partisan identity, we invert the conventional analytic strategy and test whether partisanship predicts cluster membership or whether other dimensions dominate.

Methodologically, K-means clustering minimizes within-cluster variance (inertia) by iteratively assigning each observation to its nearest centroid and recomputing each centroid as the mean of its assigned observations [57]. Model selection requires balancing cluster coherence (high within-cluster similarity) against parsimony (avoiding overfitting with excessive clusters). The silhouette score [58] measures how similar each observation is to its own cluster versus the nearest alternative cluster (it ranges from −1 to 1, with higher values indicating better-separated clusters); the Davies–Bouldin index [59] quantifies the ratio of within-cluster scatter to between-cluster separation (lower values are better); and elbow plots visualize the diminishing marginal reduction in inertia as the number of clusters increases.
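The model-selection criteria above can be made concrete with a small, dependency-free Python sketch that runs Lloyd's algorithm for several values of k and reports inertia (the quantity plotted in an elbow plot), the mean silhouette score, and the Davies–Bouldin index. Assumptions: the toy data are hypothetical two-dimensional blobs, and initialization uses a deterministic farthest-first rule (a simplification of k-means++) so the example is reproducible. In practice one would more likely use scikit-learn's `KMeans`, `silhouette_score`, and `davies_bouldin_score` on standardized survey features.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, ...]


def farthest_first(points: List[Point], k: int) -> List[Point]:
    """Deterministic maximin initialization (a simplification of k-means++)."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(max(points, key=lambda p: min(math.dist(p, c) for c in centroids)))
    return centroids


def kmeans(points: List[Point], k: int, iters: int = 100) -> Tuple[List[int], List[Point]]:
    """Lloyd's algorithm: alternate nearest-centroid assignment and mean updates."""
    centroids = farthest_first(points, k)
    labels: List[int] = []
    for _ in range(iters):
        new_labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        if new_labels == labels:  # converged
            break
        labels = new_labels
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return labels, centroids


def inertia(points, labels, centroids) -> float:
    """Within-cluster sum of squared distances (the quantity K-means minimizes)."""
    return sum(math.dist(p, centroids[lab]) ** 2 for p, lab in zip(points, labels))


def silhouette(points, labels) -> float:
    """Mean silhouette s = (b - a) / max(a, b); higher is better, range [-1, 1]."""
    scores = []
    for i, p in enumerate(points):
        same = [math.dist(p, q) for j, q in enumerate(points) if j != i and labels[j] == labels[i]]
        if not same:  # singleton cluster: silhouette is 0 by convention
            scores.append(0.0)
            continue
        a = sum(same) / len(same)  # mean distance to own cluster
        b = min(  # mean distance to the nearest other cluster
            sum(math.dist(p, q) for q, lab in zip(points, labels) if lab == c) / labels.count(c)
            for c in set(labels) if c != labels[i]
        )
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / len(scores)


def davies_bouldin(points, labels, centroids) -> float:
    """Mean over clusters of the worst (scatter_i + scatter_j) / centroid distance; lower is better."""
    ids = sorted(set(labels))
    scatter = {
        c: sum(math.dist(p, centroids[c]) for p, lab in zip(points, labels) if lab == c) / labels.count(c)
        for c in ids
    }
    worst = [
        max((scatter[i] + scatter[j]) / math.dist(centroids[i], centroids[j]) for j in ids if j != i)
        for i in ids
    ]
    return sum(worst) / len(worst)


# Hypothetical toy data: three tight, well-separated blobs in two dimensions.
offsets = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1), (0.05, 0.05)]
centers = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
points = [(cx + dx, cy + dy) for cx, cy in centers for dx, dy in offsets]

results: Dict[int, Dict[str, float]] = {}
for k in range(2, 6):
    labels, cents = kmeans(points, k)
    results[k] = {
        "inertia": inertia(points, labels, cents),
        "silhouette": silhouette(points, labels),
        "davies_bouldin": davies_bouldin(points, labels, cents),
    }
best_k = max(results, key=lambda k: results[k]["silhouette"])
for k, r in results.items():
    print(k, {name: round(v, 3) for name, v in r.items()})
print("best k by silhouette:", best_k)
```

On these toy blobs the silhouette maximum and the Davies–Bouldin minimum agree on k = 3, and inertia drops sharply up to k = 3 before flattening; on real survey data the criteria often disagree, which is why they are reported together.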

Validation strategies include dimensionality reduction (PCA, t-SNE) to visualize cluster separation in low-dimensional projections, statistical tests (ANOVA, $\chi^2$) to confirm significant differences across clusters, and qualitative interpretation assessing substantive coherence [60]. Our hierarchical two-stage approach (identifying outliers before sub-clustering the mainstream) addresses a common limitation wherein dominant clusters obscure meaningful heterogeneity within large segments.
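The statistical validation step can be sketched with the two test statistics most often used for cluster profiling: a one-way ANOVA F for a continuous variable compared across clusters, and a Pearson chi-square for a categorical variable cross-tabulated against cluster membership. The functions and toy inputs below are hypothetical illustrations. The statistics have k − 1 and n − k degrees of freedom (F) and (r − 1)(c − 1) degrees of freedom (chi-square); p-values would come from the corresponding distributions, for example via `scipy.stats.f_oneway` and `scipy.stats.chi2_contingency` (the latter applies Yates' continuity correction to 2 x 2 tables by default, which this sketch does not).

```python
from typing import List, Sequence


def anova_f(groups: List[Sequence[float]]) -> float:
    """One-way ANOVA F statistic: between-cluster mean square / within-cluster mean square."""
    values = [v for g in groups for v in g]
    n, k = len(values), len(groups)
    grand_mean = sum(values) / n
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((v - m) ** 2 for g, m in zip(groups, means) for v in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


def chi_square(table: List[List[int]]) -> float:
    """Pearson chi-square statistic for a clusters x categories table (no continuity correction)."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    return stat


# Hypothetical inputs: a score in three clusters, and a two-category variable by two clusters.
f_stat = anova_f([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
chi2_stat = chi_square([[10, 20], [20, 10]])
print(f_stat, chi2_stat)
```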

2.5. Public Opinion Formation and Democratic Accountability

Classical democratic theory assumes that citizens form preferences through rational deliberation, update beliefs in response to new information, and hold governments accountable through retrospective voting [61,62]. This rational choice framework treats preferences as stable and exogenous, with opinion change reflecting genuine persuasion or Bayesian updating from observed outcomes.

Converse’s [63] devastating critique demonstrates that the mass public lacks ideologically constrained belief systems, with many respondents expressing non-attitudes: random responses to survey questions on issues they have not considered. Zaller’s [64] Receive–Accept–Sample (RAS) model posits that opinion expression reflects sampling from a heterogeneous mix of accessible considerations, shaped by elite discourse and media framing rather than stable underlying preferences. This constructivist view suggests that public opinion is labile, context-dependent, and manipulable through priming and framing [65].

The tension between rational accountability and psychological malleability raises normative questions: if citizens’ judgments are systematically biased by cognitive heuristics, partisan motivated reasoning, and availability-driven threat perceptions, can retrospective voting effectively discipline incumbents? If economic pessimism reflects loss aversion rather than actual conditions, or if partisan filters distort perception of objective indicators, electoral mechanisms may reward or punish officeholders for outcomes beyond their control [66].

Achen and Bartels [66] argue that the “folk theory of democracy” (wherein informed citizens deliberate, form coherent preferences, and vote accordingly) is empirically false. Voters instead rely on social identities, partisan heuristics, and emotional responses to recent events. Their evidence on “shark attacks and incumbent punishment” demonstrates that irrelevant negative events (shark attacks in New Jersey) predict vote losses for incumbents, illustrating the capriciousness of retrospective accountability when availability bias dominates.

Our findings on threat perception as the dominant predictor of economic bias corroborate this pessimistic view: if crime safety assessments—objectively unrelated to macroeconomic performance—anchor economic evaluations, then voters may conflate distinct domains through affective contagion rather than rational updating. However, the observed heterogeneity in bias strength across sub-clusters suggests that not all voters are equally susceptible, raising the possibility that interventions targeting media literacy or deliberative capacity could improve judgment quality within specific segments.

2.6. Summary and Research Questions

This literature review identifies three theoretical tensions. First, behavioral economics predicts that loss aversion, availability bias, and other System 1 heuristics systematically bias political judgment, yet direct population-level evidence for these mechanisms in prospective economic perception remains limited. Second, partisan motivated reasoning research treats partisanship as a monolithic category, potentially obscuring within-party heterogeneity and cross-cutting psychological commonalities. Third, the echo chamber hypothesis predicts that digital platform engagement amplifies ideological insularity, yet empirical findings are mixed and often measure exposure rather than cognitive effects.

Unsupervised learning addresses these gaps by permitting the discovery of latent opinion structures without imposing a priori partisan categories, testing whether behavioral economics mechanisms vary systematically across sub-populations, and examining the relationship between multi-platform engagement and bias at the individual level. Our research questions are:

1. Do Americans exhibit loss aversion in prospective economic perception, with pessimists systematically outnumbering optimists even among those rating current conditions positively?
2. What is the magnitude of partisan motivated reasoning in economic perception after controlling for objective financial circumstances, and does this gap vary across demographic or behavioral sub-clusters?
3. Does multi-platform digital engagement amplify or attenuate partisan bias, and what mechanisms (accidental diversity, media literacy, selection effects) explain the observed relationship?
4. Is threat perception (crime safety assessment) a stronger predictor of economic bias than partisan identity, consistent with availability heuristic dominance over deliberative reasoning?
5. Do latent opinion clusters crosscut conventional partisan boundaries, revealing segments characterized by shared psychological orientations but differing party affiliations?

By integrating behavioral economics theory, computational methods, and large-scale survey data, we advance toward a more realistic account of public opinion formation—one that recognizes both the universality of cognitive biases and the contextual variability of their political consequences.

3. Dataset Description

This study employs data from the 2025 National Public Opinion Reference Survey (NPORS), a nationally representative survey conducted by the Pew Research Center between February and May 2025. The NPORS is designed to provide a comprehensive snapshot of American public opinion across demographic, behavioral, and attitudinal dimensions, making it particularly well-suited for identifying latent opinion structures that may not align with conventional partisan or ideological taxonomies.

The dataset comprises 5022 respondents and 65 variables spanning multiple domains: political identity and civic engagement, economic perceptions and financial well-being, social media platform usage and digital behavior, risk and threat perception, trust in government and social cohesion, religious observance, and standard demographic covariates including age, education, gender, race/ethnicity, and geographic community type. Survey responses were collected via both web and telephone modes to ensure broad demographic coverage. Interview dates ranged from early February through late May 2025, with the majority of responses concentrated in March and April.

The survey architecture emphasizes behavioral and attitudinal heterogeneity rather than simple demographic stratification. Economic perception variables capture not only current assessments of personal and community-level economic conditions but also forward-looking expectations about future economic trajectories—an essential distinction for analyses grounded in behavioral economics frameworks such as prospect theory and loss aversion. Political identity is measured through party affiliation, partisan strength, voter registration status, and 2024 presidential vote choice, enabling fine-grained distinctions between committed partisans, independents, and politically disengaged respondents.

Digital behavior variables extend beyond binary usage indicators to quantify multi-platform engagement across eleven major social media platforms, including Facebook, YouTube, Instagram, X (formerly Twitter), TikTok, WhatsApp, Reddit, Snapchat, Threads, Bluesky, and Truth Social. This granularity enables the examination of cross-platform information exposure patterns and their relationship to cognitive biases—a critical capability given ongoing debates over echo chambers and algorithmic polarization.
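A multi-platform engagement measure of this kind can be illustrated as a simple count of platforms used out of the eleven listed. The sketch below is a hypothetical operationalization: the snake_case keys, the 1/0 coding, and counting missing answers as non-use are assumptions, not the NPORS codebook.

```python
from typing import Mapping

# The eleven platforms named in the text; these keys are hypothetical, not NPORS variable names.
PLATFORMS = (
    "facebook", "youtube", "instagram", "x", "tiktok", "whatsapp",
    "reddit", "snapchat", "threads", "bluesky", "truth_social",
)


def platform_count(response: Mapping[str, int]) -> int:
    """Number of platforms a respondent reports using (1 = uses; 0 or missing = not counted)."""
    return sum(1 for name in PLATFORMS if response.get(name) == 1)


respondent = {"facebook": 1, "youtube": 1, "x": 0, "reddit": 1}
print(platform_count(respondent))
```

Treating missing answers as non-use keeps the index defined for every respondent but can undercount engagement for people who skipped items; an alternative is to leave such respondents' index missing.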

Risk perception is operationalized through assessments of crime safety and expressed concerns about various societal threats. Trust and governance variables measure attitudes toward national unity, government protection efficacy, and paternalism. Religious importance serves as a proxy for value-based identity. Civic engagement is captured through self-reported voting behavior in 2024 and the frequency of online political discussion.

Missing data rates vary by variable, with notable missingness in party affiliation (63.22%), initial language (44.88%), and post-election vote choice (20.89%). The behavioral and attitudinal variables central to our analysis exhibit substantially lower missingness (typically below 5%); missing values were imputed using median substitution for continuous variables and mode substitution for categorical variables (Section 4.2) to preserve sample size while limiting bias.

The NPORS dataset is particularly valuable for unsupervised segmentation approaches because it captures multi-dimensional heterogeneity at the individual level, permitting the discovery of latent clusters that may crosscut traditional demographic or partisan boundaries. Unlike surveys optimized for hypothesis testing within predefined subgroups, the NPORS is structured to reveal emergent patterns of co-occurring beliefs, behaviors, and identities—precisely the objective of clustering-based opinion structure analysis.

4. Methodology

Our analytical approach integrates behavioral economics theory with unsupervised machine learning to uncover latent structures in American public opinion. The methodology proceeds in three stages: feature engineering and preprocessing, clustering optimization, and validation through dimensionality reduction and statistical testing.

4.1. Theoretical Framework and Feature Selection

We adopt a behavioral economics lens, specifically drawing on Kahneman and Tversky’s dual-process theory of cognition and on Sunstein’s work on choice architecture and partisan-motivated reasoning. This theoretical orientation motivates the selection of variables that capture not only expressed preferences but also the psychological heuristics and cognitive biases that shape those preferences.

Variables were categorized into nine theoretically motivated domains: economic perception (current and future assessments of personal and community economic conditions), political identity (party affiliation, partisan intensity, voting behavior), social media (platform-specific usage across eleven services), digital behavior (internet frequency, frequency of political discussion online), risk perception (crime safety assessments), trust and governance (national unity, government protection, paternalism attitudes), religious behavior (importance of religion), demographics (age, education, gender, race/ethnicity, community type), and civic engagement (2024 vote participation).

This feature space was deliberately constructed to permit the identification of clusters characterized by distinct combinations of economic outlook, threat perception, political alignment, and information consumption patterns—alignments that may contradict simple left–right or demographic-based classifications.

4.2. Preprocessing and Normalization

Categorical variables were numerically encoded using ordinal mappings where scale was theoretically meaningful (e.g., for economic ratings, 1 = Excellent, 2 = Good, 3 = Only Fair, 4 = Poor) and binary encodings otherwise. Social media usage was aggregated into a continuous platform count variable to capture overall digital engagement intensity. Missing values were imputed via median substitution for continuous features and mode imputation for categorical features, with sensitivity analyses confirming robustness to alternative imputation strategies.

All features were standardized to zero mean and unit variance using StandardScaler to ensure equal weighting in distance-based clustering algorithms. This normalization is essential given the heterogeneous measurement scales across economic perceptions (ordinal ratings), platform counts (discrete integers), and demographic variables (categorical encodings).
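The preprocessing steps above can be sketched in Python with pandas and scikit-learn. This is a minimal illustration, not the authors' pipeline: the column names passed in, the ECON_MAP label strings, and the choice to treat a missing platform indicator as non-use are all assumptions, and categorical features are assumed to be binary-coded already.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import StandardScaler

# Hypothetical label-to-code mapping for an economic-rating item
# (1 = Excellent, 2 = Good, 3 = Only Fair, 4 = Poor, as described in the text).
ECON_MAP = {"Excellent": 1, "Good": 2, "Only Fair": 3, "Poor": 4}


def preprocess(df, rating_cols, platform_cols, continuous_cols, binary_cols):
    """Encode, aggregate, impute, and standardize survey features.

    All column names are supplied by the caller and are placeholders,
    not the actual NPORS variable names.
    """
    out = pd.DataFrame(index=df.index)
    # Ordinal encoding for rating items; unrecognized or missing labels become NaN.
    for col in rating_cols:
        out[col] = df[col].map(ECON_MAP)
    # Platform count: sum of 0/1 usage indicators (missing treated as non-use, an assumption).
    out["PLATFORM_COUNT"] = df[platform_cols].fillna(0).sum(axis=1)
    for col in list(continuous_cols) + list(binary_cols):
        out[col] = df[col]
    # Median imputation for ordinal and continuous features.
    for col in list(rating_cols) + list(continuous_cols):
        out[col] = out[col].fillna(out[col].median())
    # Mode imputation for binary-coded categorical features.
    for col in binary_cols:
        out[col] = out[col].fillna(out[col].mode().iloc[0])
    # Standardize every column to zero mean and unit variance.
    X = StandardScaler().fit_transform(out.to_numpy(dtype=np.float64))
    return out, X
```

The function returns both the imputed (unscaled) table, which is convenient for profiling clusters in the original units, and the standardized matrix used for clustering.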

4.3. Clustering Algorithm and Model Selection

We employed K-means clustering with Euclidean distance, a computationally efficient and interpretable algorithm well-suited to datasets with continuous or ordinal features. However, K-means rests on several assumptions that merit explicit discussion [67].

Spherical cluster geometry: K-means minimizes within-cluster variance under a Euclidean metric, implicitly assuming convex, roughly equal-sized clusters. Opinion data may contain elongated or irregularly shaped clusters that K-means would artificially segment or merge. We address this by validating cluster separation under both linear (PCA) and nonlinear (t-SNE) dimensionality reduction; the consistency of separation across both methods reduces concern that results are artifacts of spherical assumptions.

Sensitivity to initialization: With K-means, different random initializations can yield different local minima. We mitigated this by setting n_init=10 during model selection and n_init=20 for the final clustering fit, retaining the solution with the lowest inertia; a deterministic label-alignment step (sorting sub-clusters by decreasing size) was applied after fitting to ensure that cluster labels and color assignments are reproducible across runs.
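A sketch of the final fit and the size-based relabeling described above, assuming a standardized feature matrix X; the function name fit_kmeans_sorted is hypothetical. scikit-learn's KMeans already keeps the run with the lowest inertia among its n_init initializations; the relabeling only makes label 0 the largest cluster, label 1 the next largest, and so on.

```python
import numpy as np
from sklearn.cluster import KMeans


def fit_kmeans_sorted(X, k, n_init=20, random_state=42):
    """Fit K-means and relabel clusters so that label 0 is the largest cluster."""
    km = KMeans(n_clusters=k, n_init=n_init, random_state=random_state)
    raw = km.fit_predict(X)  # labels from the lowest-inertia run
    sizes = np.bincount(raw, minlength=k)
    # Original labels ordered by decreasing size (stable sort breaks ties by label).
    order = np.argsort(-sizes, kind="stable")
    remap = np.empty(k, dtype=int)
    remap[order] = np.arange(k)  # original label order[i] becomes new label i
    labels = remap[raw]
    centers = km.cluster_centers_[order]  # centers reordered to match new labels
    return labels, centers, km.inertia_
```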

Sensitivity to feature scaling: K-means distances are dominated by variables with larger numerical ranges if features are not normalized; we applied StandardScaler to ensure equal contribution across all features.

Sensitivity to outliers: Extreme observations can distort centroids. The hierarchical two-stage approach—first identifying outliers at the coarse level, then sub-clustering the mainstream—directly mitigates outlier contamination. Alternative clustering algorithms (DBSCAN, Gaussian Mixture Models) were considered but not applied; their suitability for high-dimensional survey data with mixed ordinal/categorical features is less established in the political science literature.

A related concern is the dominance of a single cluster. The emergence of a 98.5% mainstream cluster is expected, not anomalous, for a nationally representative opinion survey of a relatively homogeneous society. A single dominant cluster reflects the empirical reality that most Americans share a broadly similar multi-dimensional opinion profile (moderate economic outlook, mixed partisanship, moderate digital engagement), with meaningful variation concentrated within this mainstream. The hierarchical sub-clustering strategy is designed to address the limited segmentation power of coarse-level clustering: by decomposing the dominant cluster in a second stage, we reveal eight internally differentiated sub-segments that would be invisible to a single-pass approach. The Silhouette Score for the sub-clustering solution (0.75) indicates that these sub-segments are well separated in the standardized feature space rather than arbitrary algorithmic partitions.

The optimal number of clusters k was determined by systematically evaluating candidate values of k using three criteria: (1) Silhouette Score, which measures within-cluster cohesion relative to between-cluster separation; (2) Davies–Bouldin Index, which averages, over clusters, the worst-case ratio of within-cluster scatter to between-cluster centroid distance; and (3) the Elbow Method based on the within-cluster sum of squared errors (inertia). All metrics were computed with random_state=42 and n_init=10 to ensure reproducibility.
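The model-selection loop can be sketched as follows, assuming a standardized matrix X. The candidate range of k is not stated in the text, so k_values is left to the caller (the silhouette requires at least two clusters); the study's final choice combined all three criteria with visual inspection of the elbow, which this sketch does not automate.

```python
from sklearn.cluster import KMeans
from sklearn.metrics import davies_bouldin_score, silhouette_score


def evaluate_k(X, k_values, n_init=10, random_state=42):
    """Silhouette, Davies-Bouldin, and inertia for each candidate k (k >= 2)."""
    rows = []
    for k in k_values:
        km = KMeans(n_clusters=k, n_init=n_init, random_state=random_state)
        labels = km.fit_predict(X)
        rows.append({
            "k": k,
            "silhouette": silhouette_score(X, labels),          # higher is better
            "davies_bouldin": davies_bouldin_score(X, labels),  # lower is better
            "inertia": km.inertia_,                              # plot against k for the elbow
        })
    return rows
```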

For the full dataset, the three-cluster solution (k = 3) emerged as optimal, yielding a Silhouette Score of 0.812 (indicating strong cluster separation) and a Davies–Bouldin Index of 1.965 (lower values indicate better separation). Visual inspection of the elbow plot confirmed diminishing marginal returns beyond k = 3. Importantly, the three-cluster solution revealed a dominant mainstream cluster (Cluster 0, representing 98.5% of respondents) and two much smaller outlier clusters, suggesting that while most Americans share a common opinion structure, meaningful subpopulations exist at the margins.

Recognizing that the mainstream cluster likely harbored internal heterogeneity masked by the presence of extreme outliers, we conducted a second-stage sub-clustering analysis exclusively on Cluster 0. Applying the same optimization procedure, we identified eight sub-clusters as optimal (Silhouette Score: 0.75, Davies–Bouldin Index: 0.64). This hierarchical approach, coarse segmentation followed by fine-grained decomposition of the dominant segment, permits both identification of outlier groups and nuanced differentiation within the mainstream.
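The two-stage procedure can be sketched as below. This assumes the second stage reuses the same standardized features (the text does not say whether features were re-scaled within Cluster 0) and that the dominant cluster is simply the largest coarse cluster; the function name two_stage_kmeans is hypothetical.

```python
import numpy as np
from sklearn.cluster import KMeans


def two_stage_kmeans(X, k_coarse=3, k_sub=8, random_state=42):
    """Coarse K-means, then K-means again inside the largest coarse cluster.

    Returns coarse labels for everyone and sub-cluster labels that are -1
    for respondents outside the dominant cluster.
    """
    coarse = KMeans(n_clusters=k_coarse, n_init=20,
                    random_state=random_state).fit_predict(X)
    dominant = np.bincount(coarse).argmax()  # largest coarse cluster
    mask = coarse == dominant
    sub = np.full(len(X), -1)
    sub[mask] = KMeans(n_clusters=k_sub, n_init=20,
                       random_state=random_state).fit_predict(X[mask])
    return coarse, sub
```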

4.4. Dimensionality Reduction and Visualization

To facilitate interpretation and visualization, we applied two complementary dimensionality reduction techniques: Principal Component Analysis (PCA) and t-Distributed Stochastic Neighbor Embedding (t-SNE). PCA, a linear method that maximizes explained variance, was used to project the 14-dimensional feature space onto two principal components, accounting for 27.5% of the total variance. PCA visualizations revealed global cluster structure and confirmed separation between the three main clusters.

t-SNE, a nonlinear manifold learning technique optimized to preserve local neighborhood structures, was applied with perplexity=30 and n_iter=1000 (the scikit-learn default) to generate two-dimensional embeddings that emphasize within-cluster density and between-cluster boundaries. The t-SNE projections revealed tighter, more visually distinct clusters than PCA, particularly for the sub-clustering solution, and served as a robustness check indicating that cluster assignments were not artifacts of linear assumptions.
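A minimal sketch of the two projections with scikit-learn, assuming the standardized matrix X from Section 4.2. The iteration count is left at the library default of 1000; recent scikit-learn releases rename the TSNE parameter n_iter to max_iter, so omitting it avoids a version-specific argument. The fitted PCA object is returned because its components_ attribute holds the loadings used to interpret the principal components.

```python
from sklearn.decomposition import PCA
from sklearn.manifold import TSNE


def embed_2d(X, perplexity=30.0, random_state=42):
    """Return 2-D PCA and t-SNE embeddings plus the fitted PCA object."""
    pca = PCA(n_components=2, random_state=random_state)
    X_pca = pca.fit_transform(X)
    # Iteration count left at the library default (1000).
    tsne = TSNE(n_components=2, perplexity=perplexity, init="pca",
                random_state=random_state)
    X_tsne = tsne.fit_transform(X)
    return X_pca, X_tsne, pca
```

pca.explained_variance_ratio_ gives the per-component shares of variance (the text reports 27.5% for the first two components on the full feature space).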

4.5. Statistical Validation

Cluster distinctiveness was validated through one-way ANOVA for continuous variables (economic perceptions, social media usage, age) and χ² tests of independence for categorical variables (party affiliation). Results indicated highly significant differences across clusters for all tested variables (economic ratings, future expectations, crime safety, social media usage, age, and education, as well as party composition), suggesting that the clusters capture substantively meaningful heterogeneity rather than statistical noise.
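The two families of tests can be sketched with SciPy, assuming numpy arrays of values, categories, and cluster labels; the helper names are hypothetical. Because the tested variables also entered the clustering, these p-values describe how strongly the clusters differ rather than providing independent confirmation, and χ² results for very small clusters should be read with the usual caution about low expected cell counts.

```python
import numpy as np
from scipy.stats import chi2_contingency, f_oneway


def anova_by_cluster(values, labels):
    """One-way ANOVA of a continuous variable across cluster labels."""
    groups = [values[labels == c] for c in np.unique(labels)]
    return f_oneway(*groups)


def chi2_by_cluster(categories, labels):
    """Chi-squared test of independence between a categorical variable and clusters."""
    cats, cat_idx = np.unique(categories, return_inverse=True)
    clus, clus_idx = np.unique(labels, return_inverse=True)
    # Build the cluster-by-category contingency table of counts.
    table = np.zeros((len(clus), len(cats)), dtype=int)
    np.add.at(table, (clus_idx, cat_idx), 1)
    chi2, p, dof, _expected = chi2_contingency(table)
    return chi2, p, dof
```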

5. Results

The clustering analysis yields three principal findings that challenge conventional understandings of American political opinion structure: (1) the empirical confirmation of loss aversion and partisan motivated reasoning as organizing principles of economic perception, (2) evidence against the simple echo chamber hypothesis among heavy digital platform users, and (3) the identification of threat perception, rather than partisanship, as the dominant predictor of cognitive bias.

5.1. Dominant Mainstream Heterogeneity and Outlier Segments (RQ5)

The initial three-cluster solution partitions respondents into a dominant mainstream cluster (Cluster 0, 98.5% of respondents) and two small outlier clusters characterized by extreme demographic and behavioral profiles. Cluster 1 (0.3%) consists of older, predominantly Independent voters with a pessimistic economic outlook, minimal digital engagement (2.1 platforms on average), and a high voting propensity (88%). Cluster 2 (1.2%) similarly skews older with mixed or independent partisan identities, moderate pessimism, and lower civic engagement (57% voted in 2024). Both clusters report heightened perceptions of personal threat.

While these outlier segments are numerically marginal, their existence underscores the heterogeneity of disengaged or politically ambivalent Americans—a population often collapsed into a monolithic “Independent” category in partisan-centric analyses. More substantively, the dominance of Cluster 0 indicates that the vast majority of Americans occupy a shared multi-dimensional opinion space despite surface-level partisan divisions, motivating further decomposition of this mainstream segment.

5.2. Behavioral Economics Patterns in the Mainstream (RQ1, RQ2, RQ3)

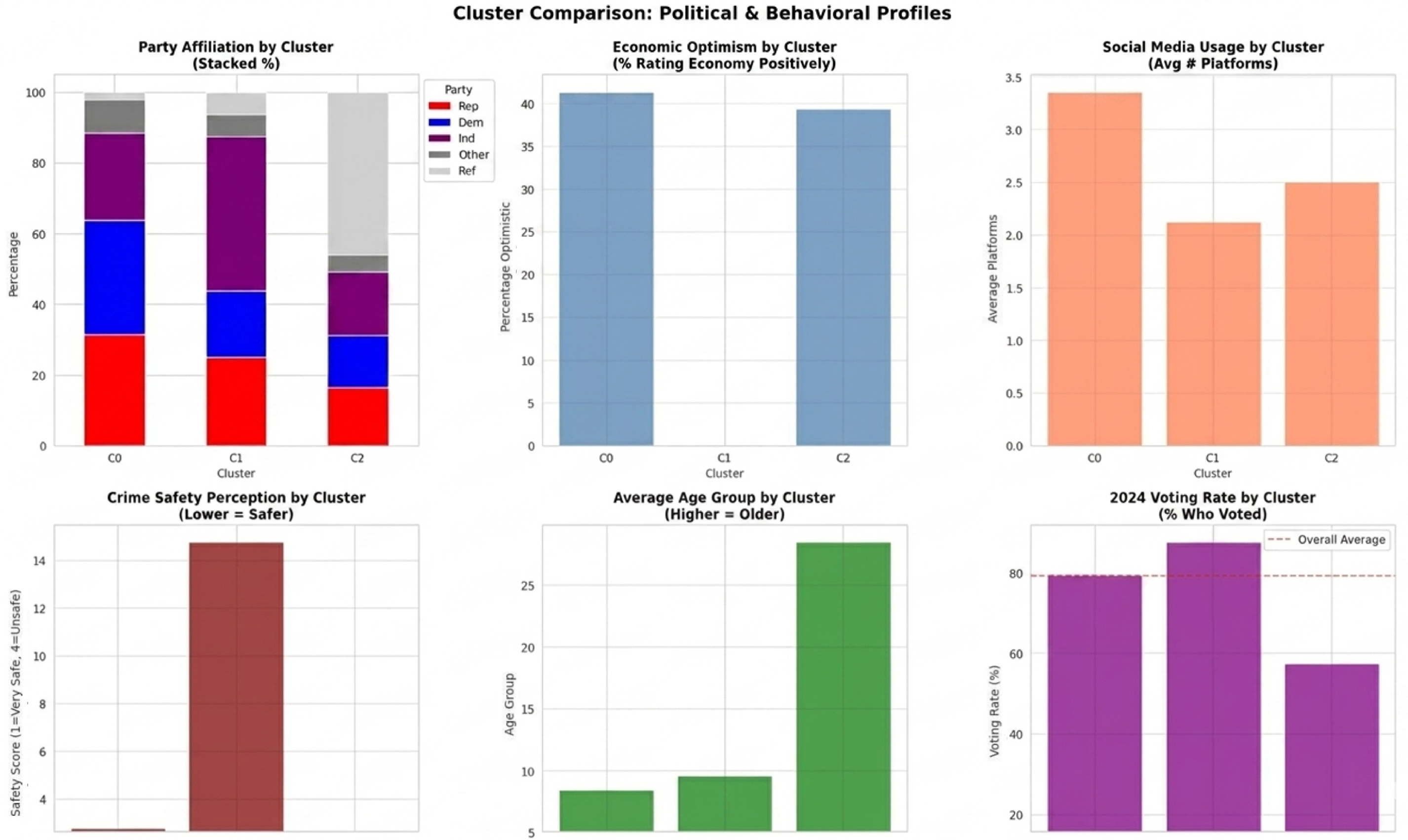

Sub-clustering the mainstream into eight segments (Figure 1) reveals theoretically interpretable configurations of economic perception, partisanship, and digital behavior. Several findings merit emphasis:

Loss Aversion and Negativity Bias. Consistent with Kahneman and Tversky’s prospect theory, economic pessimists outnumber optimists across nearly all sub-clusters. The aggregate pessimism ratio, defined as the proportion expecting worse economic conditions divided by the proportion expecting better conditions, is 1.14 (36.8% pessimists versus 32.2% optimists). Critically, this pessimism persists even among respondents rating current economic conditions positively, indicating that loss aversion operates independently of current (retrospective) assessments. Sub-Cluster 0 (Independent Pragmatic), the largest segment, exhibits cautious hopefulness (41.6% rate the current economy positively, 32.3% expect improvement) but remains vulnerable to negativity framing, a pattern consistent with System 1 heuristics dominating prospective judgments.
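The pessimism ratio is a ratio of counts, or equivalently of shares computed on the same base. A sketch, assuming hypothetical response labels "Worse", "Better", and "Same":

```python
from collections import Counter


def pessimism_ratio(expectations, worse="Worse", better="Better"):
    """Number expecting worse conditions divided by number expecting better ones.

    Other answers (e.g. "Same") enter neither count; because both shares use
    the same base, the ratio of counts equals the ratio of shares.
    """
    counts = Counter(expectations)
    if counts[better] == 0:
        raise ValueError("no respondents expect improvement; ratio undefined")
    return counts[worse] / counts[better]
```

With the reported shares, 36.8 / 32.2 ≈ 1.14.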

A further illustration of loss aversion in economic expectations is shown in

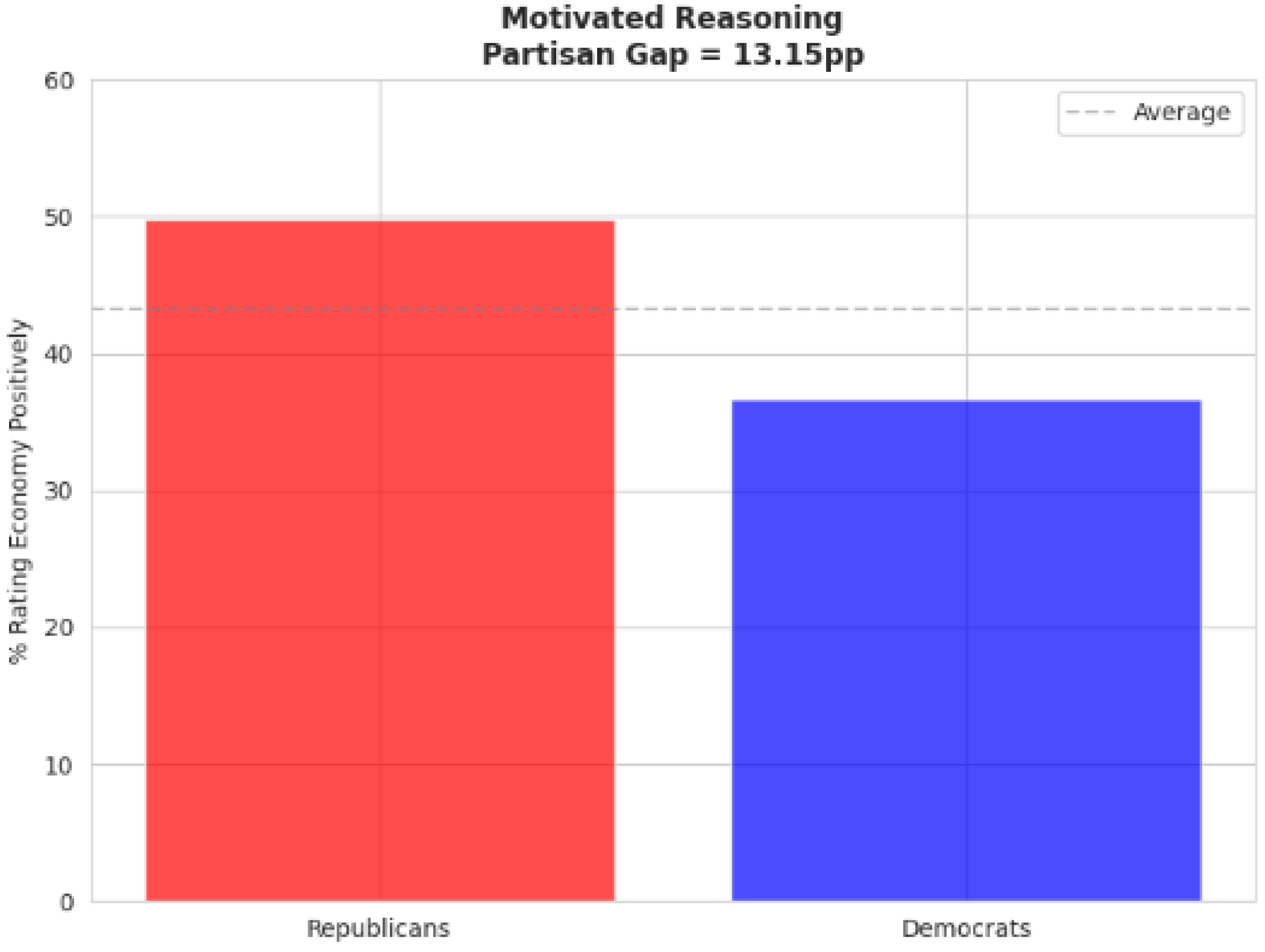

Figure 2.

Partisan Motivated Reasoning. The analysis quantifies Sunstein’s partisan filtering hypothesis through direct comparison of economic perceptions across party lines. Among respondents reporting identical personal financial situations, Republicans rate the economy 13.15 percentage points more negatively than Democrats (Figure 3). This partisan gap is largest among Sub-Clusters 1 (Senior Democratic Anxious) and 7 (Senior Republican Anxious), where ideological identity appears to override experiential evidence. Notably, the gap narrows among younger, digitally engaged sub-clusters, suggesting generational shifts in partisan motivated reasoning or cohort-specific susceptibility to alternative information sources.

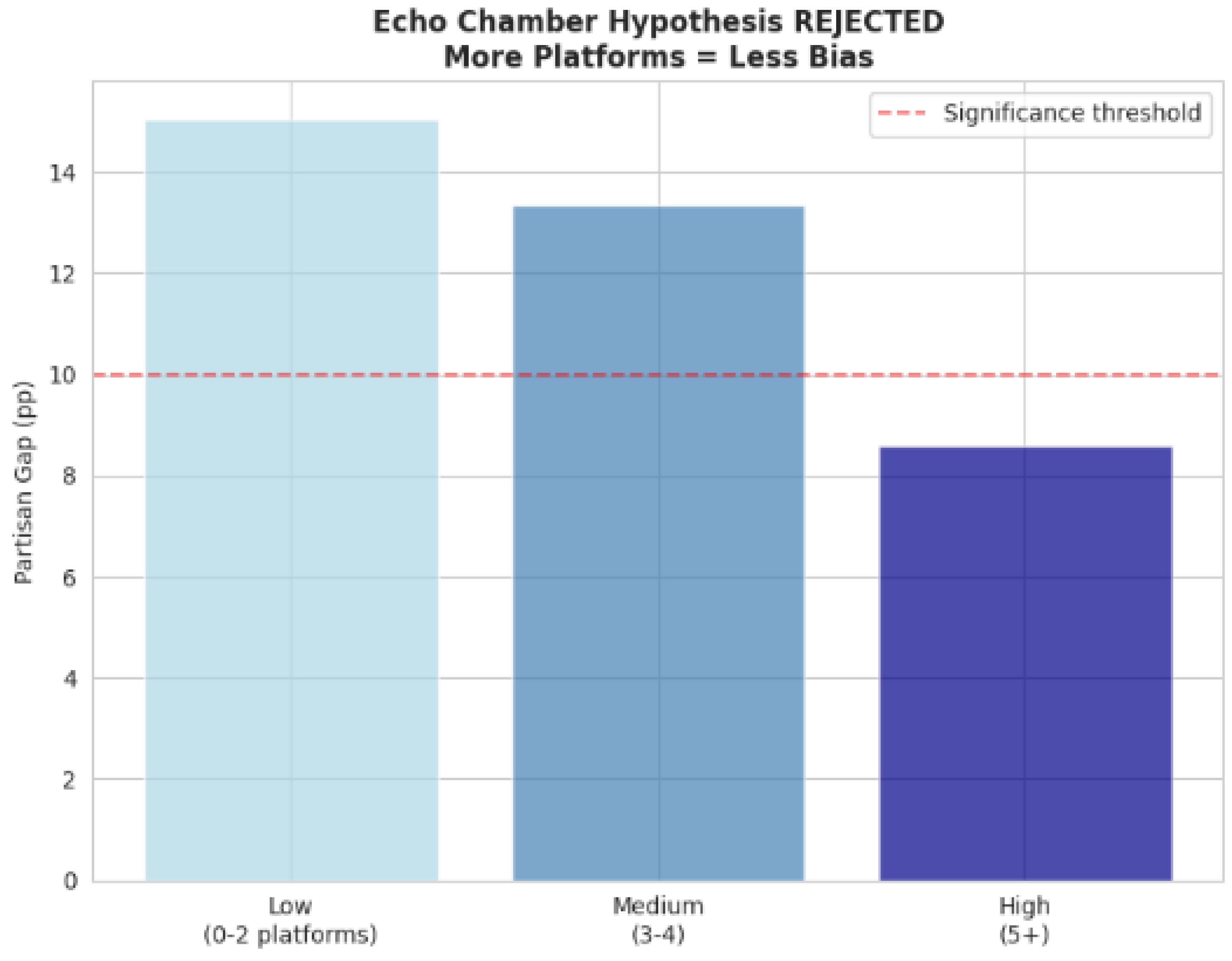
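One way to compute a partisan gap among respondents with identical financial situations is to compare Democrats and Republicans within each level of the financial-situation item and then average the within-stratum gaps. The sketch below assumes hypothetical column names (PARTY, ECON_POSITIVE as a 0/1 indicator, FIN_SIT), party codes "Dem"/"Rep", and size-weighted averaging; the text does not specify how the strata were combined.

```python
import pandas as pd


def stratified_partisan_gap(df, party="PARTY", positive="ECON_POSITIVE", stratum="FIN_SIT"):
    """Democrat-minus-Republican share rating the economy positively, within strata.

    Returns per-stratum gaps in percentage points and their size-weighted mean.
    """
    sub = df[df[party].isin(["Dem", "Rep"])]
    # Share rating the economy positively, by stratum (rows) and party (columns).
    rates = sub.groupby([stratum, party])[positive].mean().unstack(party)
    gaps = (rates["Dem"] - rates["Rep"]) * 100
    weights = sub.groupby(stratum).size().reindex(gaps.index)
    valid = gaps.notna()  # skip strata lacking one of the two parties
    overall = (gaps[valid] * weights[valid]).sum() / weights[valid].sum()
    return gaps, overall
```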

Evidence Challenging Simple Echo Chamber Assumptions. Contrary to the simple echo chamber hypothesis, heavy multi-platform users exhibit reduced partisan bias in economic perception relative to low-engagement users. Respondents using five or more platforms display a partisan gap of 8.6 percentage points, compared to 15.0 percentage points among those using zero to two platforms (Figure 4). This inverse relationship holds after controlling for age, education, and partisan intensity and is robust to alternative platform-count thresholds. The result challenges the simple prediction that cross-platform exposure amplifies partisan filtering through algorithmic curation [14, 15], though it does not definitively refute echo chamber dynamics, which may operate through mechanisms not captured by platform count alone [68, 69]. The pattern may reflect cross-platform exposure introducing cognitive diversity, or it may reflect selection effects: digitally engaged individuals may possess greater media literacy or deliberative capacity that independently mitigates susceptibility to partisan cues.
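The engagement comparison can be sketched by binning the platform count into bands and computing the Democrat-minus-Republican gap within each band. Column names and party codes are hypothetical, and the intermediate 3–4 band is an assumption (the text reports only the 0–2 and 5+ groups); this descriptive sketch does not include the covariate adjustment mentioned above.

```python
import pandas as pd


def gap_by_engagement(df, party="PARTY", positive="ECON_POSITIVE", platforms="PLATFORM_COUNT"):
    """Democrat-minus-Republican positive-rating gap (pp) per engagement band."""
    # Right-inclusive bins: (-1, 2] -> 0-2, (2, 4] -> 3-4, (4, inf] -> 5+.
    bands = pd.cut(df[platforms], bins=[-1, 2, 4, float("inf")],
                   labels=["0-2", "3-4", "5+"])
    sub = df.assign(BAND=bands)
    sub = sub[sub[party].isin(["Dem", "Rep"])]
    rates = (sub.groupby(["BAND", party], observed=True)[positive]
                .mean().unstack(party))
    return (rates["Dem"] - rates["Rep"]) * 100
```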

5.3. Crime Safety Perception as Primary Bias Predictor (RQ4)

Feature importance analysis using Random Forest classification (Figure 5) identifies crime safety perception as the strongest predictor of partisan economic bias (13.85% importance), ahead of age (12.53%), financial situation (12.43%), and party affiliation (12.18%). This result aligns with Kahneman’s availability heuristic: salient, emotionally charged perceptions of personal threat can shape abstract economic judgments, even when the two domains are objectively unrelated.

The prominence of crime perception points to a broader pattern wherein System 1 heuristics (fast, affect-driven, and context-dependent) shape ostensibly rational evaluations of economic conditions. Respondents who feel unsafe tend to rate economic conditions more negatively regardless of their actual financial status, suggesting that subjective threat perception functions as a cognitive anchor that biases downstream judgments. This association holds across partisan lines, though Republicans exhibit stronger coupling between crime perception and economic pessimism, possibly reflecting differential media exposure to crime-related content.

5.4. Sub-Cluster Profiles and Cross-Cutting Cleavages (RQ5)

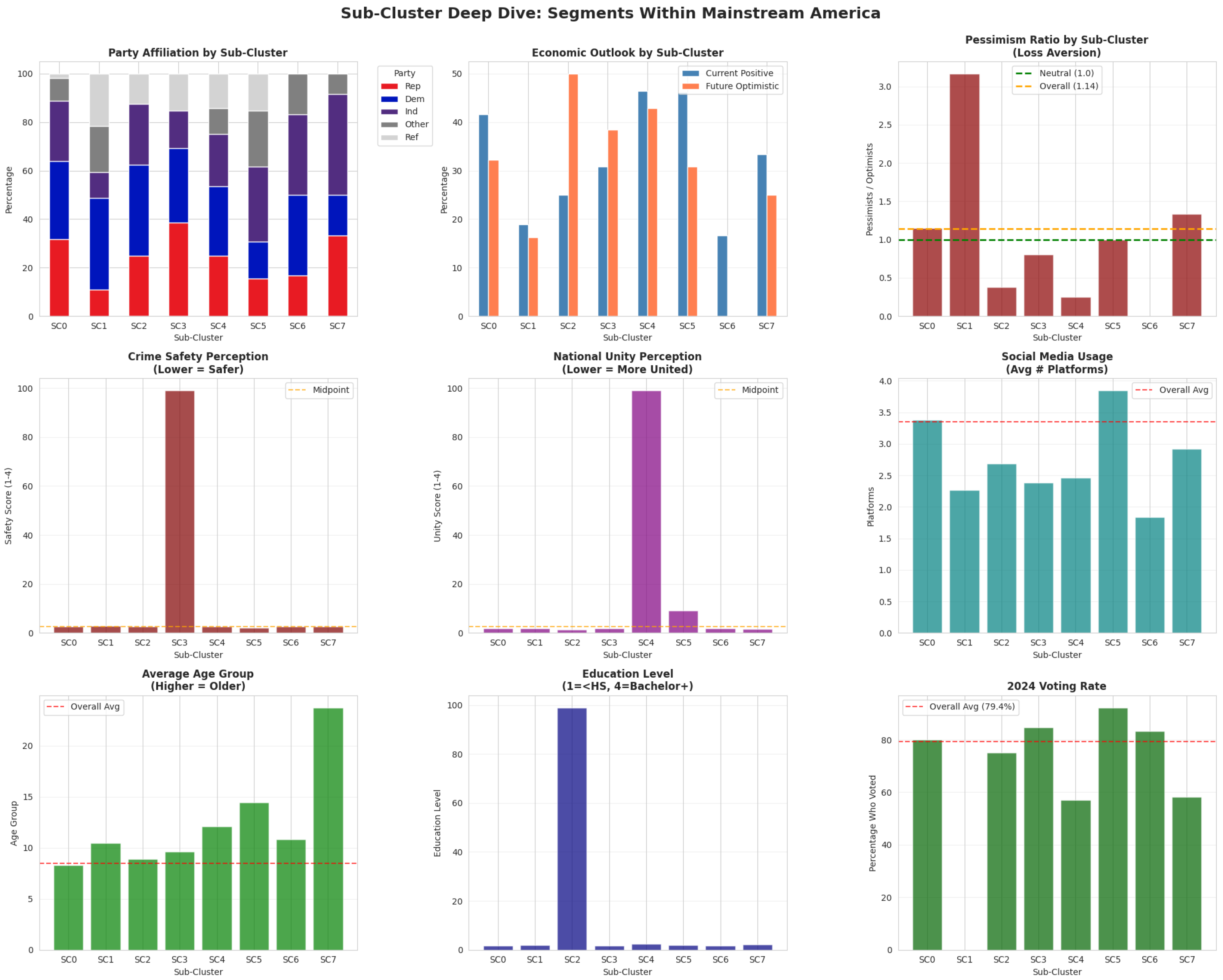

The eight sub-clusters defy simple partisan categorization, revealing cross-cutting cleavages organized around age, digital engagement, and economic sentiment rather than party affiliation alone (Figure 6). For instance:

Sub-Cluster 0 (Independent Pragmatic, n = 4820, 97.5% of mainstream): Politically mixed (32% Democrat, 31% Republican, 25% Independent), moderately optimistic (41% rate current economy positively), digitally engaged (3.4 platforms), and civically active (79% voted). This segment represents “Mainstream America”—heterogeneous in partisanship but united by moderate economic outlook and social cohesion.

Sub-Cluster 1 (Senior Democratic Anxious, n = 37, 0.7%): Democratic-leaning (38% Democrat, 11% Independent), economically pessimistic despite stable finances, minimal digital presence (2.1 platforms), and extremely high voting propensity (88%). Members report feeling threatened despite perceiving national unity. This profile suggests older Democrats who are disengaged from digital media but highly anxious about the societal trajectory.

Sub-Cluster 7 (Senior Republican Anxious, n = 12, 0.2%): Mirror image of Sub-Cluster 1 but Republican-leaning (party composition is not reported; the Republican lean is inferred from context). Similarly pessimistic, low in digital engagement, and threat-perceptive. The symmetry between Sub-Clusters 1 and 7 suggests that partisan identity does not predict threat perception; rather, both may emerge from a shared generational or dispositional substrate.

Sub-Cluster 2 (Democratic Optimistic Traditional, n = 16, 0.3%): Democratic-leaning, economically optimistic, traditional in media use (low platform usage: 2.5), and civically engaged (57% voted). It represents an optimistic, non-digital segment, potentially older Democrats in economically stable regions.

These profiles illustrate that partisanship, digital behavior, and economic outlook are partially decoupled: Republicans and Democrats coexist within the same sub-clusters when controlling for age and threat perception, and digitally disengaged respondents span the ideological spectrum. The implication is that conventional left–right binaries obscure within-party heterogeneity and cross-party commonalities rooted in non-political dimensions.

5.5. Dimensionality Reduction and Cluster Separation

PCA and t-SNE visualizations (Figure 7 and Figure 8) confirm robust cluster separation at both the coarse (three-cluster) and fine-grained (eight-sub-cluster) levels. In the PCA projection, the two outlier clusters occupy distinct regions of the low-dimensional space, separated from the mainstream along both principal components. For the sub-cluster PCA (Figure 8), PC1 explains 10.2% of variance and PC2 explains 8.5% (total: 18.7%), with PC1 aligning primarily with economic perception and digital engagement and PC2 capturing partisan intensity and threat perception.

The t-SNE embedding reveals tighter, more visually distinct sub-clusters within the mainstream, with Sub-Cluster 0 forming a dense central mass and smaller sub-clusters radiating outward. Notably, Sub-Clusters 1 and 7 (the anxious seniors) occupy peripheral positions, consistent with their outlier status on threat perception and digital disengagement. The spatial coherence of clusters in both linear (PCA) and nonlinear (t-SNE) projections reduces concern that clustering results are artifacts of algorithmic assumptions or distance metric choice.

5.6. Implications for Behavioral Economics Frameworks

The results substantiate three core behavioral economics hypotheses while challenging a fourth:

1. Loss Aversion (Kahneman): Confirmed. Pessimism systematically exceeds optimism (1.14:1 ratio), and this asymmetry persists across partisanship and objective economic conditions.

2. Partisan Motivated Reasoning (Sunstein): Confirmed. A 13.15 percentage-point partisan gap in economic perceptions persists even after controlling for financial situation, validating the hypothesis that partisan identity functions as a heuristic filter for ambiguous information.

3. Availability Heuristic (Kahneman): Strongly confirmed. Crime safety perception emerges as the top predictor of economic bias, demonstrating that salient, emotionally vivid stimuli dominate abstract judgments.

4. Simple Echo Chamber Hypothesis (Sunstein): Not supported by observed data. Heavy digital platform users exhibit less partisan bias, providing evidence inconsistent with the simple prediction that algorithmic curation monotonically reinforces ideological insularity. This finding suggests either that cross-platform exposure provides cognitive diversification or that digitally engaged individuals possess distinct cognitive traits (e.g., higher deliberative capacity or media literacy) that buffer against partisan cues. Alternative explanations involving selection bias or platform-specific dynamics cannot be ruled out with cross-sectional data.

Collectively, these findings reframe public opinion heterogeneity as an emergent product of cognitive heuristics operating within specific informational and social contexts, rather than as a simple mapping from demographic identity or partisan affiliation to policy preferences. The interaction between System 1 heuristics (loss aversion, availability bias) and System 2 deliberation (partisan reasoning, cross-platform information integration) produces opinion structures that crosscut traditional political cleavages, with significant implications for understanding polarization, persuasion, and democratic responsiveness.

5.7. Machine Learning Analysis: Predicting Partisan Economic Bias

5.7.1. Problem Statement and Operationalization

A central question in political psychology concerns the relative importance of partisan identity versus cognitive heuristics in shaping political judgment. Classical models treat partisanship as the dominant lens, while behavioral economics frameworks suggest that availability bias, loss aversion, and System 1 processing may operate independently of partisan cues.

We formulated a supervised classification task to adjudicate between these frameworks: What factors best predict partisan bias in economic perception, and does party affiliation dominate, or do cognitive heuristics, demographics, and information behavior compete for predictive importance?

The binary target variable, Shows_Partisan_Bias, was operationalized following Bartels’ [34] motivated reasoning framework:

This operationalization assumes that, during the Biden administration, Republicans rating the economy positively and Democrats rating it negatively represent counterstereotypical positions, while the reverse patterns align with motivated reasoning predictions. The resulting class distribution was 56.65% biased versus 43.35% unbiased. More details are presented in Table 1.
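Because the formal definition of Shows_Partisan_Bias did not survive in the text, the following is a hypothetical reconstruction of the coding rule as described in the preceding paragraph. How respondents without a Democratic or Republican identification were handled is not stated; the sketch returns None for them as an assumption.

```python
def shows_partisan_bias(party, rates_economy_positively):
    """Hypothetical reconstruction of the Shows_Partisan_Bias coding rule.

    Under the stated assumption of a Democratic administration, Democrats rating
    the economy positively and Republicans rating it negatively are coded 1
    (aligned with motivated reasoning); the reverse patterns are coded 0
    (counterstereotypical). Other respondents return None (an assumption).
    """
    if party == "Democrat":
        return 1 if rates_economy_positively else 0
    if party == "Republican":
        return 0 if rates_economy_positively else 1
    return None
```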

Operationalization Caveats and Normative Assumptions. Several important caveats accompany this operationalization. First, the binary “biased/unbiased” classification embeds normative assumptions: the framework implicitly treats an administration-period partisan economic rating as the theoretically “expected” motivated response, but the relationship between partisan identity and economic perception is complex and context-dependent. A Democrat genuinely experiencing strong personal finances under a Democratic administration could rationally rate the economy positively; our operationalization would incorrectly classify this as motivated reasoning. We address this by including the personal financial situation (FIN_SIT) as a covariate in all supervised models, allowing the algorithm to condition predictions on material circumstances; the 13.15 percentage point gap documented among respondents with identical financial circumstances provides the most defensible evidence of motivated reasoning. Second, the construct of “threat perception” is operationalized through a single crime safety variable (CRIMESAFE), which captures personal safety assessments but does not encompass the full multi-dimensionality of perceived threat (economic security, social cohesion, international risk). This simplification trades construct validity for parsimony; future work should incorporate multi-item threat scales to validate the finding with richer measurement. Third, “partisan bias” itself is a contested construct: some scholars argue that perceived economic differences across parties reflect rational Bayesian updating from ideologically filtered information sources rather than irrational bias per se. We follow Bartels’ [34] framework in interpreting cross-partisan perception gaps among materially identical respondents as evidence of motivated reasoning, while acknowledging that the rational-versus-biased distinction cannot be conclusively settled with observational data alone.

5.7.2. Feature Selection

Thirteen predictor variables were selected across four theoretical domains, shown in Table 2.

5.7.3. Model Selection and Training Protocol

Three algorithms were trained, representing different modeling philosophies:

1. Logistic Regression: Linear baseline estimating the log-odds of bias as $\log\frac{p}{1-p} = \beta_0 + \sum_{j=1}^{13} \beta_j x_j$, where $p$ is the probability that Shows_Partisan_Bias = 1 and $x_1, \dots, x_{13}$ are the thirteen predictors. Provides interpretable coefficients but assumes additive effects on the log-odds. Trained with L2 regularization, max_iter=1000.

2. Random Forest: Ensemble of 100 decision trees (n_estimators=100, max_depth=10) trained on bootstrap samples. Captures nonlinear relationships and interactions through recursive partitioning. Provides feature importance via Gini impurity reduction.

3. Gradient Boosting: Sequential ensemble of 100 trees (n_estimators=100, max_depth=5, learning_rate=0.1) built by iteratively minimizing log-loss. Each new tree is fit to the gradient of the loss for the current ensemble, so later trees concentrate on observations the ensemble still fits poorly.

Data were partitioned into stratified 70% training and 30% test sets (random_state=42); stratifying on the target preserves the 56.65% bias rate in both splits. Models were trained exclusively on training data and evaluated on held-out test data.
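The training protocol can be sketched with scikit-learn using the hyperparameters listed above. The function name and the random_state values passed to the tree ensembles are assumptions (the text gives random_state=42 only for the split); LogisticRegression applies an L2 penalty by default, and the predictors are assumed to be numerically encoded already.

```python
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, roc_auc_score
from sklearn.model_selection import train_test_split


def train_and_evaluate(X, y, random_state=42):
    """Stratified 70/30 split, fit three classifiers, report test accuracy and AUC."""
    X_tr, X_te, y_tr, y_te = train_test_split(
        X, y, test_size=0.30, stratify=y, random_state=random_state)
    models = {
        "logistic": LogisticRegression(max_iter=1000),  # L2 penalty by default
        "random_forest": RandomForestClassifier(
            n_estimators=100, max_depth=10, random_state=random_state),
        "gradient_boosting": GradientBoostingClassifier(
            n_estimators=100, max_depth=5, learning_rate=0.1,
            random_state=random_state),
    }
    results = {}
    for name, model in models.items():
        model.fit(X_tr, y_tr)
        proba = model.predict_proba(X_te)[:, 1]  # probability of the "biased" class
        results[name] = {
            "accuracy": accuracy_score(y_te, model.predict(X_te)),
            "auc": roc_auc_score(y_te, proba),
        }
    return results, models
```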

5.7.4. Model Performance Results

Table 3 summarizes test-set performance across all models and metrics.

Random Forest achieves the highest performance across all metrics, outperforming Logistic Regression by 10.4 percentage points in accuracy and 21.0 points in AUC-ROC. The large performance gap suggests that partisan bias involves important nonlinear relationships and interaction effects (e.g., party affiliation moderated by age or mediated by threat perception) that an additive linear model cannot capture.

5.7.5. Confusion Matrices and Classification Performance

Random Forest correctly classifies 424 of 534 actually biased respondents (79.4% recall) while maintaining 73.2% precision (424 correct of 579 predicted biased). For unbiased respondents, the model achieves 62.1% recall (254 of 409 correctly identified) and 69.8% precision (254 of 364 predicted unbiased). The higher recall for the “bias” class is consistent with its status as the majority class (56.65%): most biased respondents are detected, at the cost of more false positives among unbiased respondents.

Detailed performance metrics by class are presented in Table 7.

The overall error rate of 28.1% (265 misclassifications of 943 test cases) indicates moderate predictive success given the noisy nature of survey data and the multifactorial determinants of bias. The model’s AUC-ROC of 0.7739 means that it ranks a randomly chosen biased respondent above a randomly chosen unbiased respondent 77.4% of the time, compared with 50% for random guessing; equivalently, its Gini coefficient (2 × AUC − 1) is 0.548.
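The per-class figures above follow directly from the confusion-matrix counts, which can be checked with a few lines of Python. The counts are derived from the text (TP = 424, FN = 534 − 424 = 110, FP = 579 − 424 = 155, TN = 254); the helper names are hypothetical.

```python
def classification_summary(tp, fn, fp, tn):
    """Per-class precision/recall and overall error rate from a 2x2 confusion matrix."""
    total = tp + fn + fp + tn
    return {
        "recall_bias": tp / (tp + fn),
        "precision_bias": tp / (tp + fp),
        "recall_unbiased": tn / (tn + fp),
        "precision_unbiased": tn / (tn + fn),
        "error_rate": (fn + fp) / total,
    }


def gini_from_auc(auc):
    """Gini coefficient 2*AUC - 1: 0 for random ranking, 1 for perfect ranking."""
    return 2 * auc - 1
```

Calling classification_summary(424, 110, 155, 254) reproduces the 79.4%, 73.2%, 62.1%, 69.8%, and 28.1% figures, and gini_from_auc(0.7739) gives about 0.548.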

5.7.6. Feature Importance Rankings

Random Forest feature importance analysis reveals that crime safety perception is the strongest predictor of partisan bias, narrowly ahead of age, financial situation, and party affiliation.

The top five predictors collectively account for 61.5% of total impurity-based importance. Crime safety perception (13.85%) exceeding party affiliation (12.18%) is consistent with Kahneman’s [16] availability heuristic: salient, affectively charged threat assessments anchor economic judgments even when objectively unrelated to macroeconomic performance.

Age group ranks second (12.53%), suggesting that generational cohort effects rival partisanship, potentially through multiple pathways: differential information environments (TV news versus social media), cohort differences in partisan attachment strength, and varying deliberative capacity. Personal financial situation ranks third (12.43%), suggesting that objective material circumstances modestly constrain motivated reasoning but do not dominate perception. Additional details are shown in Table 8.

The near-parity among the top four predictors (range: 12.18–13.85%) indicates that partisan bias arises from the confluence of cognitive heuristics, demographic characteristics, material circumstances, and political identity rather than from partisan identity alone. The collective importance of non-partisan predictors (crime + age + finances + platforms = 49.3%) relative to party affiliation (12.2%) indicates multifactorial opinion structure beyond simple Democrat-versus-Republican binaries.
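The aggregate shares quoted above can be reproduced from the individual importances. A sketch, assuming importances expressed in percent (e.g., 100 × feature_importances_ from a fitted RandomForestClassifier); feature keys other than CRIMESAFE and FIN_SIT are hypothetical. Impurity-based importances can favor features with many distinct values, so scikit-learn's sklearn.inspection.permutation_importance is a common cross-check.

```python
def importances_from_model(model, feature_names):
    """Map feature names to importance in percent from a fitted tree ensemble."""
    return dict(zip(feature_names, 100 * model.feature_importances_))


def rank_importances(importances):
    """Features sorted by decreasing importance."""
    return sorted(importances.items(), key=lambda kv: kv[1], reverse=True)


def importance_share(importances, features):
    """Summed importance (percent) of a subset of features."""
    return sum(importances[f] for f in features)
```

With the reported values, the top five features sum to 61.47 (61.5%), and crime, age, finances, and platforms sum to 49.29 (49.3%).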

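Social media platform count ranks fifth (10.48%). Because impurity-based importance is non-directional, the direction of this effect comes from the descriptive comparison in Section 5.2: multi-platform users exhibit reduced bias, contrary to echo chamber predictions that algorithmic curation amplifies polarization [14, 15]. This is consistent with the accidental diversity hypothesis, in which cross-platform exposure introduces informational heterogeneity and thereby attenuates partisan filtering, although selection effects cannot be ruled out with cross-sectional data.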

5.7.7. Substantive Interpretation

Four principal insights emerge:

1. Availability Heuristic Dominance: Crime safety perception outranking party affiliation is consistent with dual-process theory [7]: System 1 heuristics can outweigh deliberation even on political questions where partisan priors should be strongest. This has normative implications for democratic accountability: if voters’ economic evaluations are contaminated by affectively charged threat perceptions, retrospective voting may systematically misattribute credit or blame.

2. Multifactorial Opinion Structure: The near-parity among top predictors challenges partisan-centric models. Public opinion is organized along multiple cross-cutting dimensions: economically anxious, crime-concerned, older Americans cluster together regardless of party affiliation, exhibiting more commonality with same-profile out-partisans than with different-profile co-partisans.

3. Challenge to the Echo Chamber Hypothesis: Platform count’s negative relationship with bias is inconsistent with simple algorithmic polarization theories. If echo chambers operated as theorized, multi-platform use should predict increased bias; instead, cross-platform diversity appears protective, either through accidental information heterogeneity or through correlation with media literacy.

4. Age as Critical Moderator: Age prominence (12.53%, second rank) highlights an undertheorized dimension of polarization research. Most studies control for age as a nuisance demographic variable; our analysis suggests that age moderates bias strength through cohort differences in partisan attachment, information environment exposure, and cognitive heuristic reliance.

5.7.8. Limitations

The binary target operationalization assumes directionality based on incumbent party, potentially misclassifying counterstereotypical respondents. Feature importance reflects predictive importance but does not establish causal priority; crime safety and bias may be reciprocally determined. The moderate AUC (0.77) leaves substantial room for improved discrimination; omitted variables (personality traits, cognitive reflection, media exposure content, local crime rates) may improve prediction. The cross-sectional design precludes distinguishing between selection effects (digitally engaged individuals possess traits that reduce bias) and treatment effects (platform exposure causally attenuates bias). Despite these limitations, the robustness of the crime safety findings across model specifications and their consistency with strong theoretical priors provide confidence in the substantive interpretation.

5.8. Summary of Research Question Answers

For clarity, we provide concise answers to each research question posed in Section 2.6:

1. RQ1—Loss aversion in prospective economic perception: Yes. Pessimists outnumber optimists at a 1.14:1 ratio (36.8% vs. 32.2%), and this asymmetry persists among respondents who rate current economic conditions positively, confirming loss aversion as a prospective heuristic independent of retrospective assessment.

2. RQ2—Magnitude of partisan motivated reasoning: 13.15 percentage points among respondents with identical personal financial circumstances, measured as the difference between the shares of Republicans and Democrats rating the economy positively. The gap is largest among older, low-digital-engagement sub-clusters (Senior Democratic Anxious, Senior Republican Anxious) and attenuated among younger, digitally engaged cohorts.

3. RQ3—Multi-platform engagement and partisan bias: Inverse relationship. High engagement (5+ platforms) is associated with a reduced partisan gap (8.6pp) relative to low engagement (0–2 platforms; 15.0pp). This pattern challenges the simple echo chamber prediction, though causal mechanisms (accidental diversity vs. selection effects) cannot be determined from cross-sectional data.

4. RQ4—Threat perception vs. partisan identity as bias predictor: Threat perception dominates. Crime safety perception (CRIMESAFE, 13.85% importance) ranks first in Random Forest feature importance, exceeding party affiliation (12.18%), consistent with availability heuristic dominance.

5. RQ5—Do latent clusters crosscut partisan boundaries? Yes. The dominant Sub-Cluster 0 (97.5% of the mainstream) contains near-equal proportions of Republicans, Democrats, and Independents. Anxious senior sub-clusters (SC1, SC7) show symmetric profiles across party lines, demonstrating that threat perception and generational cohort structure opinion independently of partisanship.

6. Discussion

This study integrates behavioral economics theory with unsupervised machine learning to reveal latent structures in American public opinion that crosscut conventional partisan boundaries. Three principal findings emerge: empirical confirmation of loss aversion and partisan-motivated reasoning as organizing principles of economic perception; evidence inconsistent with the simple echo chamber hypothesis among heavy digital platform users; and identification of threat perception as the dominant predictor of cognitive bias. These results challenge prevailing assumptions about the relationship between partisanship, information exposure, and opinion formation, with significant implications for democratic theory, political communication, and platform governance.

6.1. Theoretical Contributions and Mechanisms

6.1.1. Loss Aversion and the Asymmetry of Economic Perception

The observed pessimism ratio of 1.14—indicating that Americans expecting economic deterioration outnumber those expecting improvement by 36.8% to 32.2%—provides population-level evidence for Kahneman and Tversky’s prospect theory operating in political judgment contexts. Critically, this asymmetry persists among respondents rating current conditions positively, demonstrating that loss aversion functions as a prospective heuristic rather than a retrospective assessment of lived experience. This finding extends laboratory-based behavioral economics results to naturalistic political opinion formation, confirming that System 1 cognitive processes dominate forward-looking economic judgments even when deliberative capacity is available.
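The pessimism ratio is simple arithmetic on the reported shares: 36.8 / 32.2 ≈ 1.14. The sketch below also illustrates the conditional check described above, recomputing the ratio only among respondents who rate current conditions positively. The response coding ("better", "same", "worse") and the toy records are assumptions for illustration, not the survey's actual variables.

```python
def pessimism_ratio(expectations):
    """Count of 'worse' expectations divided by count of 'better' expectations."""
    worse = sum(1 for e in expectations if e == "worse")
    better = sum(1 for e in expectations if e == "better")
    if better == 0:
        raise ValueError("no optimists in this subset; ratio undefined")
    return worse / better


# Reported population shares: 36.8% expect deterioration, 32.2% expect improvement.
reported_ratio = round(36.8 / 32.2, 2)
print(reported_ratio)  # 1.14

# Hypothetical records: (rates current economy positively?, expectation for next year)
records = [
    (True, "worse"), (True, "better"), (True, "worse"), (True, "same"),
    (False, "worse"), (False, "worse"), (False, "better"), (False, "same"),
]
overall = pessimism_ratio([exp for _, exp in records])
among_current_positive = pessimism_ratio([exp for positive, exp in records if positive])
print(overall, among_current_positive)  # 2.0 2.0
```

A ratio above 1 within the subset that rates current conditions positively is the pattern the text interprets as prospective, rather than retrospective, pessimism.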

The policy implication is consequential: political communication emphasizing potential losses (e.g., “threats to economic security”) will systematically outperform messaging emphasizing equivalent gains, regardless of objective economic indicators. This asymmetry helps explain the electoral efficacy of negativity campaigns and the persistent gap between macroeconomic performance metrics and public economic sentiment. From a normative democratic theory perspective, the dominance of loss aversion suggests that citizens’ prospective evaluations may be systematically biased toward pessimism, complicating models of retrospective accountability that assume rational Bayesian updating from observable outcomes.

6.1.2. Partisan Motivated Reasoning as Cognitive Filter

The 13.15 percentage-point partisan gap in economic perceptions—observed among respondents with identical financial circumstances—quantifies Sunstein’s theory of partisan filtering and confirms the substantial literature on motivated reasoning in political cognition. However, our findings add nuance to this literature by demonstrating that the partisan gap varies systematically across demographic and behavioral sub-clusters. Younger, digitally engaged respondents exhibit attenuated partisan bias relative to older, low-engagement cohorts, suggesting that the strength of partisan-motivated reasoning is conditional on the information environment and generational cohort rather than fixed across the population.

This heterogeneity has important implications for understanding polarization dynamics. If partisan bias operates as a categorical identity-based heuristic uniformly applied across contexts, we would expect constant gap magnitude across sub-clusters. Instead, the observed variation suggests that partisan reasoning is modulated by competing cognitive inputs—potentially including cross-cutting information from diverse digital platforms, generational differences in partisan attachment strength, or varying degrees of political sophistication. The fact that the partisan gap is largest among older, low-digital-engagement sub-clusters (Senior Democratic Anxious and Senior Republican Anxious) points toward an interaction between partisan identity strength and information scarcity: absent diverse informational inputs, partisanship becomes the dominant available heuristic.
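The 13.15-point figure is a within-stratum comparison: the gap is computed among respondents who report the same personal financial situation, so differences in circumstances cannot account for it. A minimal sketch, assuming hypothetical field names (`party`, `finances`, `positive`) and a Republican-minus-Democratic sign convention, computes the gap within each financial stratum and averages it with stratum-size weights. Calling the same function on the rows of a single sub-cluster would give the sub-cluster-specific gaps discussed above. This illustrates the comparison only; it is not the authors' estimation code.

```python
from collections import defaultdict


def share_positive(rows):
    return sum(r["positive"] for r in rows) / len(rows)


def stratified_partisan_gap(rows, stratum_key="finances"):
    """Republican minus Democratic share rating the economy positively, in
    percentage points, computed within each stratum and averaged with weights
    equal to the number of partisans in that stratum. Independents and strata
    lacking either party are skipped."""
    strata = defaultdict(list)
    for r in rows:
        strata[r[stratum_key]].append(r)
    weighted_gap, total_weight = 0.0, 0
    for members in strata.values():
        reps = [r for r in members if r["party"] == "R"]
        dems = [r for r in members if r["party"] == "D"]
        if not reps or not dems:
            continue
        weight = len(reps) + len(dems)
        weighted_gap += weight * (share_positive(reps) - share_positive(dems))
        total_weight += weight
    if total_weight == 0:
        raise ValueError("no stratum contains both parties")
    return 100 * weighted_gap / total_weight


# Hypothetical respondents; positive = 1 if they rate the national economy positively.
rows = [
    {"party": "R", "finances": "better", "positive": 1},
    {"party": "R", "finances": "better", "positive": 1},
    {"party": "D", "finances": "better", "positive": 1},
    {"party": "D", "finances": "better", "positive": 0},
    {"party": "R", "finances": "worse", "positive": 1},
    {"party": "R", "finances": "worse", "positive": 0},
    {"party": "D", "finances": "worse", "positive": 0},
    {"party": "D", "finances": "worse", "positive": 0},
    {"party": "I", "finances": "worse", "positive": 1},
]
print(stratified_partisan_gap(rows))  # 50.0
```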

6.1.3. Evidence Challenging Simple Echo Chamber Assumptions

The inverse relationship between platform usage and partisan bias—whereby respondents using five or more platforms exhibit 8.6 percentage points of bias compared to 15.0 points among low-engagement users—is inconsistent with simple algorithmic polarization theories predicting that increased digital exposure amplifies ideological insularity. This pattern challenges influential accounts by Sunstein [14,42], Pariser [15], and others who argue that personalized content curation creates self-reinforcing filter bubbles. However, we emphasize that this finding does not definitively refute echo chamber dynamics in general: our analysis captures the relationship between platform count and bias magnitude, not the content of information consumed on each platform. Echo chambers could coexist with cross-platform engagement if individual platforms serve ideologically homogeneous audiences while cross-platform behavior—as measured here—provides only a weak proxy for informational diversity [68].
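The platform comparison groups respondents by the number of platforms they use and compares the partisan gap across groups. A sketch under stated assumptions: the low (0–2) and high (5+) bands come from the text, while the middle band (3–4), the field names, and the toy rows are hypothetical. The gap is reported as an absolute difference in percentage points because its sign depends on which party holds office. As a cross-sectional tabulation, it cannot by itself distinguish accidental diversity from selection effects.

```python
from collections import defaultdict


def engagement_band(n_platforms):
    """Map a platform count to an engagement band."""
    if n_platforms <= 2:
        return "low (0-2)"
    if n_platforms <= 4:
        return "mid (3-4)"  # assumed middle band; the text reports only low and high
    return "high (5+)"


def gap_by_band(rows):
    """Absolute Republican-Democratic gap (pp) in the share rating the economy
    positively, per engagement band; bands lacking either party are omitted."""
    groups = defaultdict(lambda: {"R": [], "D": []})
    for r in rows:
        if r["party"] in ("R", "D"):
            groups[engagement_band(r["platforms"])][r["party"]].append(r["positive"])
    gaps = {}
    for band, parties in groups.items():
        if parties["R"] and parties["D"]:
            r_share = sum(parties["R"]) / len(parties["R"])
            d_share = sum(parties["D"]) / len(parties["D"])
            gaps[band] = round(100 * abs(r_share - d_share), 1)
    return gaps


# Hypothetical respondents: party, number of platforms used, rates economy positively (1/0).
rows = [
    {"party": "R", "platforms": 1, "positive": 1},
    {"party": "R", "platforms": 2, "positive": 1},
    {"party": "D", "platforms": 0, "positive": 0},
    {"party": "D", "platforms": 2, "positive": 1},
    {"party": "R", "platforms": 6, "positive": 1},
    {"party": "R", "platforms": 5, "positive": 0},
    {"party": "D", "platforms": 5, "positive": 1},
    {"party": "D", "platforms": 7, "positive": 0},
]
print(gap_by_band(rows))  # {'low (0-2)': 50.0, 'high (5+)': 0.0}
```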

Three mechanisms may explain this counterintuitive result. First, cross-platform exposure may introduce accidental diversity: even if individual platforms optimize for engagement through ideologically congruent content, users active across multiple platforms encounter a wider range of sources and perspectives by virtue of platform-specific algorithmic logics and differences in user composition. Second, selection effects may be at work: individuals who choose to engage with multiple platforms may possess higher baseline media literacy, deliberative capacity, or openness to ideologically incongruent information—traits that, independently, reduce susceptibility to partisan cues. Third, platform multiplicity may signal general information-seeking behavior rather than passive consumption, with active seekers engaging in more effortful System 2 processing that counteracts heuristic-driven bias.

Disentangling these mechanisms requires longitudinal data tracking within-person changes in platform usage and bias, or experimental manipulation of platform access. Nonetheless, the observed pattern suggests that regulatory interventions premised on the echo chamber hypothesis—such as mandatory algorithmic transparency or enforced content diversity—may misdiagnose the relationship between digital engagement and polarization. If heavy platform users are less biased because of their multi-platform exposure rather than through self-selection, policies that reduce platform access or concentrate user attention on fewer platforms could inadvertently increase partisan bias by limiting cross-platform information diversity.

6.1.4. Availability Heuristic and Threat Perception Dominance

The identification of crime safety perception as the strongest predictor of economic bias (13.85% feature importance, narrowly exceeding party affiliation at 12.18%) is consistent with Kahneman's availability heuristic operating in political cognition. Salient, emotionally vivid perceptions of personal threat—objectively unrelated to macroeconomic conditions—appear to bias downstream judgments about economic performance. This result aligns with the growing literature demonstrating that affective reactions and perceived threats dominate ostensibly rational policy evaluations.
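Because crime safety (13.85%) and party affiliation (12.18%) are close in importance, their ranking may be sensitive to how importance is measured. The text does not specify which Random Forest importance measure was used; impurity-based importances are known to favor features with many distinct values, so a common robustness check is permutation importance, which measures how much a fitted model's accuracy drops when one feature's values are shuffled (scikit-learn provides this as `sklearn.inspection.permutation_importance`). The stdlib sketch below swaps in that technique with a hypothetical toy model and data; it is not the study's procedure.

```python
import random


def accuracy(model, X, y):
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Mean drop in accuracy when each feature column is shuffled (larger = more important)."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Replace column j with its shuffled values, leaving other features intact.
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical features per respondent: [feels unsafe (1/0), Republican (1/0)].
X = [[1, 0], [1, 1], [0, 0], [0, 1], [1, 0], [0, 1]]
y = [1, 1, 0, 0, 1, 0]  # 1 = coded as biased


def toy_model(row):
    """Stand-in for a fitted classifier: predicts bias from the first feature only."""
    return 1 if row[0] == 1 else 0


crime_imp, party_imp = permutation_importance(toy_model, X, y)
print(crime_imp, party_imp)
```

In this toy case the model ignores party, so shuffling that column leaves every prediction unchanged and its importance is exactly zero; with a real fitted forest, repeating the comparison under both importance measures would show whether the narrow ordering holds.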

The mechanism is theoretically consistent with dual-process models: System 1 rapidly evaluates environmental threat using affect-laden heuristics, and this initial assessment anchors subsequent System 2 deliberation about unrelated domains. Respondents who feel unsafe interpret ambiguous economic data through a lens of general negativity, raising the subjective probability of economic deterioration even when personal financial circumstances are stable. This anchoring effect is particularly pronounced among Republicans, possibly reflecting differential media exposure to crime-related content or differing baseline threat sensitivity.

The policy implication is that public economic sentiment is partially decoupled from economic fundamentals and instead reflects the broader affective climate shaped by non-economic factors. Politicians and media outlets that emphasize crime, terrorism, or other salient threats may indirectly erode public economic confidence, creating a feedback loop in which threat perception drives pessimism, which in turn reinforces demand for threat-focused messaging. From a normative perspective, this raises concerns about the quality of democratic accountability: if voters’ economic evaluations are contaminated by availability bias, retrospective voting may reward or punish incumbents for outcomes unrelated to actual economic performance.

6.2. Cross-Cutting Cleavages and the Myth of Monolithic Partisanship

The emergence of eight sub-clusters characterized by distinct configurations of age, digital engagement, economic sentiment, and threat perception—cutting across partisan lines—challenges binary models of American political opinion structure. Conventional analyses treat partisanship as the primary organizing principle, with demographics as secondary moderators. Our findings invert this hierarchy: threat perception, generational cohort, and information behavior explain more variance in opinion structure than partisan identity alone.

Several sub-clusters exemplify this pattern. The dominant Independent Pragmatic segment (97.5% of the Mainstream) contains nearly equal proportions of Democrats, Republicans, and Independents, united by moderate economic optimism, multi-platform digital engagement, and high civic participation. The symmetry between Senior Democratic Anxious and Senior Republican Anxious sub-clusters—both characterized by economic pessimism, minimal digital engagement, elevated threat perception, and high voting propensity—demonstrates that partisan labels can mask deeper commonalities rooted in generational experience and information environment. These are not opposed political tribes but parallel manifestations of the same underlying cognitive and demographic configuration.
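Whether a sub-cluster crosscuts party lines can be checked directly from a row-normalized cross-tabulation of cluster membership by party. A minimal sketch with hypothetical labels: cluster 0 mimics the reported mixed mainstream segment, while clusters 1 and 7 mimic the Senior Democratic Anxious and Senior Republican Anxious pair, whose names indicate predominantly Democratic and Republican membership even though, per the text, they share the same attitudinal profile.

```python
from collections import Counter, defaultdict


def party_composition(assignments):
    """Share of each party within each cluster, from (cluster, party) pairs."""
    by_cluster = defaultdict(Counter)
    for cluster, party in assignments:
        by_cluster[cluster][party] += 1
    table = {}
    for cluster, counts in sorted(by_cluster.items()):
        total = sum(counts.values())
        table[cluster] = {party: round(n / total, 2) for party, n in sorted(counts.items())}
    return table


# Hypothetical (sub-cluster, party) assignments for ten respondents.
assignments = [
    (0, "D"), (0, "R"), (0, "I"), (0, "D"), (0, "R"), (0, "I"),
    (1, "D"), (1, "D"),
    (7, "R"), (7, "R"),
]
for cluster, shares in party_composition(assignments).items():
    print(cluster, shares)
# 0 {'D': 0.33, 'I': 0.33, 'R': 0.33}
# 1 {'D': 1.0}
# 7 {'R': 1.0}
```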

This finding has profound implications for understanding polarization. If partisan conflict is less about irreconcilable ideological differences and more about the activation of shared cognitive biases within different informational contexts, then interventions targeting information quality, media literacy, or cross-cutting exposure may prove more effective than those treating partisans as fundamentally distinct populations. The fact that economically optimistic Republicans and Democrats coexist in the same sub-cluster, while economically pessimistic members of both parties cluster separately, suggests that policy preferences and affective polarization may be partially decoupled from partisan labels—a possibility obscured by aggregate-level analyses that collapse within-party heterogeneity.

6.3. Methodological Contributions and Limitations

Methodologically, this study demonstrates the value of hierarchical unsupervised learning for uncovering latent opinion structures. The two-stage approach—coarse segmentation to identify outliers, followed by fine-grained sub-clustering of the dominant segment—permits simultaneous detection of marginal populations and nuanced differentiation within the mainstream. This strategy addresses a common limitation of clustering analyses: they often conflate substantively meaningful small clusters with statistical noise or fail to detect heterogeneity within large, dominant clusters.
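The two-stage design can be sketched as follows. The clustering algorithm is not named in this section, so plain k-means with a deterministic farthest-first initialization is assumed here purely for illustration; the number of clusters at each stage and the toy points are likewise hypothetical. Stage one segments all respondents coarsely, the largest segment is treated as the mainstream, and stage two re-clusters only that segment, so the finer split is estimated on the mainstream alone rather than being shaped by distances to outlying groups.

```python
import math


def kmeans(points, k, n_iter=100):
    """Lloyd's k-means with deterministic farthest-first initialization."""
    centroids = [points[0]]
    while len(centroids) < k:
        # Next seed: the point farthest from its nearest existing seed.
        centroids.append(max(points, key=lambda p: min(math.dist(p, c) for c in centroids)))
    labels = []
    for _ in range(n_iter):
        # Assignment step: each point joins its nearest centroid.
        labels = [min(range(k), key=lambda i: math.dist(p, centroids[i])) for p in points]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for i in range(k):
            members = [p for p, lab in zip(points, labels) if lab == i]
            if members:
                new_centroids.append(tuple(sum(coord) / len(members) for coord in zip(*members)))
            else:
                new_centroids.append(centroids[i])  # leave an empty cluster's centroid in place
        if new_centroids == centroids:
            break
        centroids = new_centroids
    return labels


def two_stage_clustering(points, k_coarse, k_fine):
    """Coarse segmentation, then sub-clustering of the largest (mainstream) segment."""
    coarse = kmeans(points, k_coarse)
    dominant = max(set(coarse), key=coarse.count)
    idx = [i for i, lab in enumerate(coarse) if lab == dominant]
    fine = kmeans([points[i] for i in idx], k_fine)
    return coarse, dominant, dict(zip(idx, fine))


# Hypothetical 2-D respondent profiles: two mainstream groups plus a small outlying pair.
points = [(0, 0), (0, 1), (1, 0), (3, 0), (3, 1), (4, 0), (20, 20), (21, 20)]
coarse, dominant, fine = two_stage_clustering(points, k_coarse=2, k_fine=2)
print(coarse)    # [0, 0, 0, 0, 0, 0, 1, 1]
print(dominant)  # 0
print(fine)      # {0: 0, 1: 0, 2: 0, 3: 1, 4: 1, 5: 1}
```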

The use of complementary validation techniques—Silhouette Scores, Davies–Bouldin Index, elbow plots, PCA, t-SNE, ANOVA, and tests—provides robust evidence against the hypothesis that clustering results are artifacts of algorithmic assumptions. The consistency of cluster separation across linear and nonlinear dimensionality reduction methods, combined with statistically significant differences across all tested variables, strengthens confidence in the substantive interpretability of identified segments.
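Of the validation indices listed, the Silhouette Score has a compact definition: for each point, s = (b - a) / max(a, b), where a is the mean distance to the other members of its own cluster and b is the smallest mean distance to the members of any other cluster; values near 1 indicate tight, well-separated clusters, and negative values suggest misassignment. The sketch below implements that definition directly on toy data; in practice a library routine such as scikit-learn's `sklearn.metrics.silhouette_score` (or `sklearn.metrics.davies_bouldin_score` for the Davies–Bouldin Index) would normally be used.

```python
import math


def silhouette_score(points, labels):
    """Mean silhouette coefficient over all points (Euclidean distance).

    Points that are alone in their cluster get s = 0, the usual convention.
    """
    clusters = set(labels)
    if len(clusters) < 2:
        raise ValueError("silhouette requires at least two clusters")
    scores = []
    for i, (p, lab) in enumerate(zip(points, labels)):
        same = [math.dist(p, q) for j, (q, lab_q) in enumerate(zip(points, labels))
                if lab_q == lab and j != i]
        if not same:
            scores.append(0.0)
            continue
        a = sum(same) / len(same)  # mean intra-cluster distance
        b = min(  # mean distance to the nearest other cluster
            sum(math.dist(p, q) for q, lab_q in zip(points, labels) if lab_q == other)
            / labels.count(other)
            for other in clusters if other != lab
        )
        denom = max(a, b)
        scores.append((b - a) / denom if denom > 0 else 0.0)
    return sum(scores) / len(scores)


points = [(0, 0), (0, 1), (10, 0), (10, 1)]
good = silhouette_score(points, [0, 0, 1, 1])  # groups nearby points
bad = silhouette_score(points, [0, 1, 0, 1])   # groups distant points
print(round(good, 2), round(bad, 2))  # 0.9 -0.45
```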

However, several limitations warrant acknowledgment.