Query-Adaptive Hybrid Search

Abstract

1. Introduction

2. Related Works

3. Materials and Methods

3.1. Datasets

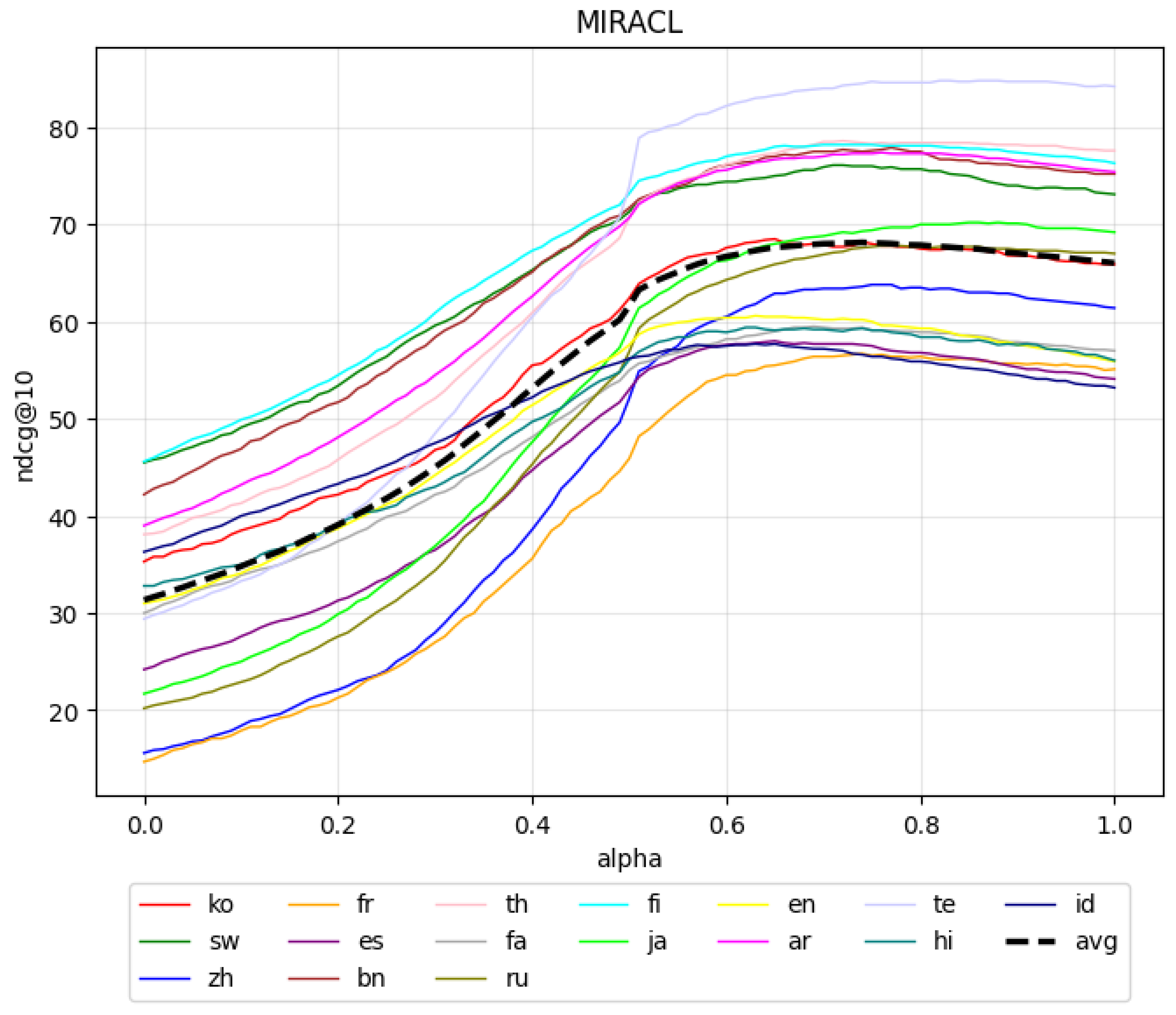

3.2. Hybrid Search

- Language-specific tokenization: Each language is processed using a dedicated tokenizer that accounts for its morphological characteristics;

- Stop-word handling: We use customized stop-word lists tailored for information retrieval tasks;

- Static fixation: Using classical BM25 lets us treat lexical retrieval as a fixed baseline. This supports our hypothesis that, in hybrid retrieval, the complementarity of the components matters more than their individual accuracy, provided that the priority model is selected correctly for each query.

- tf_j denotes the term frequency of token j in candidate c;

- cf_j is the candidate frequency of j, i.e., the number of candidates in the corpus C that contain j;

- cl and avcl denote the length of candidate c and the average candidate length, respectively;

- N is the total number of candidates in C;

- k_1 controls term-frequency saturation: larger values delay saturation, so repeated occurrences of a token keep raising the score for longer;

- b controls length normalization: higher values penalize longer candidates more strongly.
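The definitions above belong to the BM25 scoring function. As a point of reference, the sketch below implements the standard Okapi BM25 score in this notation, score(q, c) = Σ_j IDF(j) · tf_j · (k_1 + 1) / (tf_j + k_1 · (1 − b + b · cl/avcl)). The IDF variant ln(1 + (N − cf_j + 0.5)/(cf_j + 0.5)), the defaults k_1 = 1.2 and b = 0.75, and the function name `bm25_scores` are assumptions for illustration, not settings reported in this paper; candidates are assumed to be already tokenized and stop-word filtered.

```python
import math
from collections import Counter


def bm25_scores(query_tokens, candidates, k1=1.2, b=0.75):
    """Okapi BM25 score of every candidate c in C for one tokenized query.

    score(q, c) = sum_j IDF(j) * tf_j * (k1 + 1) / (tf_j + k1 * (1 - b + b * cl / avcl))
    IDF(j)      = ln(1 + (N - cf_j + 0.5) / (cf_j + 0.5))   # assumed IDF variant
    """
    N = len(candidates)                                   # total number of candidates in C
    avcl = sum(len(c) for c in candidates) / N            # average candidate length
    cf = Counter()                                        # cf_j: candidates containing token j
    for c in candidates:
        cf.update(set(c))

    scores = []
    for c in candidates:
        tf = Counter(c)                                   # tf_j for this candidate
        cl = len(c)                                       # candidate length
        score = 0.0
        for j in query_tokens:
            if tf[j] == 0:
                continue                                  # absent tokens contribute nothing
            idf = math.log(1 + (N - cf[j] + 0.5) / (cf[j] + 0.5))
            denom = tf[j] + k1 * (1 - b + b * cl / avcl)  # saturation + length normalization
            score += idf * tf[j] * (k1 + 1) / denom
        scores.append(score)
    return scores


docs = [["hybrid", "search", "bm25"],
        ["dense", "retrieval"],
        ["bm25", "bm25", "lexical", "search"]]
print(bm25_scores(["bm25"], docs))
```

On this toy corpus the candidate without "bm25" scores zero, and the third candidate outscores the first: it is longer than average, but the length penalty controlled by b only partially offsets its second occurrence of the token.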

3.3. Query-Driven Alpha Prediction

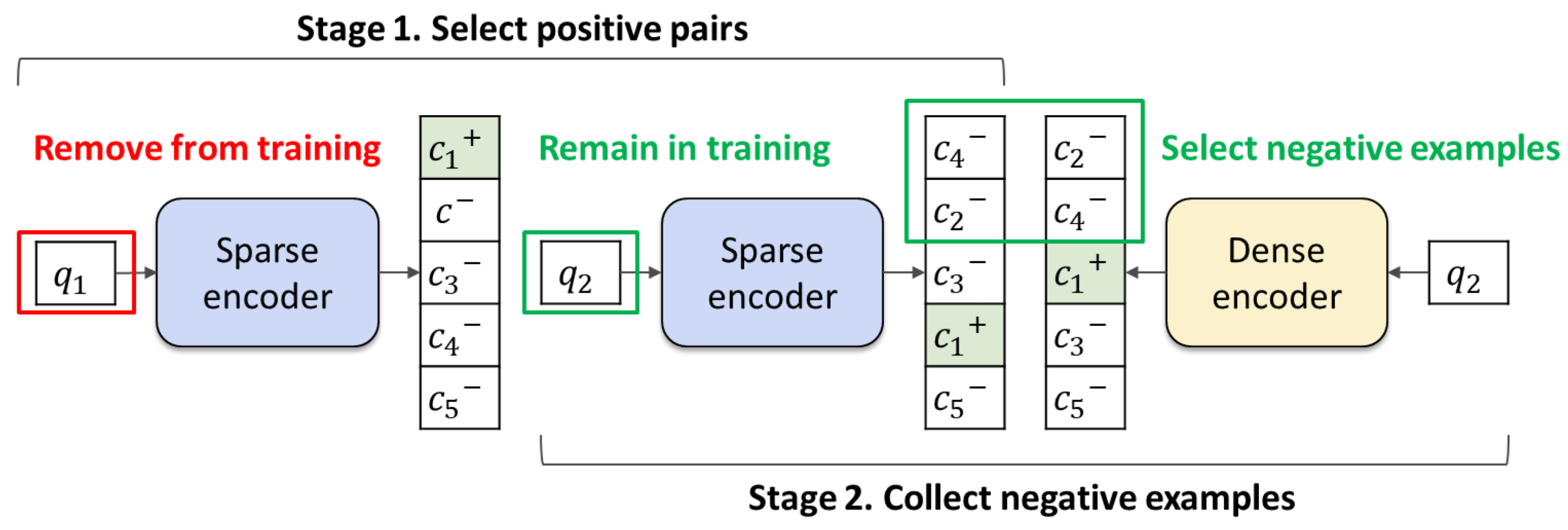

3.4. Antagonist Negative Sampling

4. Results

4.1. Evaluation

- HTR (Optimal). The hybrid model with a single fixed α, chosen to maximize performance over the entire dataset.

- HTR (Oracle). An idealized setting in which the optimal α is selected individually for each query; it represents an upper bound on achievable performance.
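The two reference settings above differ only in how α is chosen: once for the whole dataset, or separately for every query. The sketch below illustrates that distinction under assumptions not specified here: each retriever's scores are min-max normalized and combined as α · dense + (1 − α) · sparse, relevance is binary, α is searched on a grid with step 0.1, and nDCG@10 is the selection metric. All function names are hypothetical.

```python
import math

ALPHAS = [i / 10 for i in range(11)]  # assumed search grid for alpha


def minmax(scores):
    """Rescale one query's candidate scores to [0, 1]."""
    lo, hi = min(scores), max(scores)
    if hi == lo:
        return [0.0] * len(scores)
    return [(s - lo) / (hi - lo) for s in scores]


def fuse(dense, sparse, alpha):
    """Convex combination of normalized scores; alpha weights the dense side (assumption)."""
    return [alpha * d + (1 - alpha) * s for d, s in zip(minmax(dense), minmax(sparse))]


def ndcg_at_k(scores, relevant, k=10):
    """Binary-relevance nDCG@k of the ranking induced by `scores`."""
    ranking = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    dcg = sum(1 / math.log2(r + 2) for r, i in enumerate(ranking) if i in relevant)
    idcg = sum(1 / math.log2(r + 2) for r in range(min(k, len(relevant))))
    return dcg / idcg if idcg > 0 else 0.0


def optimal_alpha(queries):
    """HTR (Optimal): one alpha maximizing mean nDCG over all queries."""
    def mean_ndcg(a):
        return sum(ndcg_at_k(fuse(d, s, a), rel) for d, s, rel in queries) / len(queries)
    return max(ALPHAS, key=mean_ndcg)


def oracle_alphas(queries):
    """HTR (Oracle): the best alpha chosen separately for each query."""
    return [max(ALPHAS, key=lambda a: ndcg_at_k(fuse(d, s, a), rel))
            for d, s, rel in queries]


# Two toy queries over the same three candidates: (dense, sparse, relevant ids)
dense, sparse = [0.9, 0.2, 0.1], [0.3, 0.9, 0.1]
queries = [(dense, sparse, {0}), (dense, sparse, {1})]
print(optimal_alpha(queries), oracle_alphas(queries))
```

In this toy case no single α serves both queries, so every grid value reaches the same mean nDCG for the global choice, whereas the per-query choice (0.5 for the first query, 0.0 for the second) ranks the relevant candidate first in both. That is the kind of gap a query-adaptive α aims to close.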

4.2. Ablation Study

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| QDAP | Query-Driven Alpha Prediction |
| LLM | Large Language Model |
| MLDR | Multilingual Long-Document Retrieval Dataset |
| MIRACL | Multilingual Information Retrieval Across a Continuum of Languages |
| BM25 | Best Matching 25 |
| BGE-M3 | BAAI General Embedding: Multilinguality, Multifunctionality, Multigranularity |
| mGTE | Multilingual General Text Embeddings Model |
| nDCG | Normalized Discounted Cumulative Gain |
| RAG | Retrieval-Augmented Generation |
| DPR | Dense Passage Retrieval |
| BERT | Bidirectional Encoder Representations from Transformers |
| RoBERTa | Robustly Optimized BERT Approach |
| SBERT | Sentence-BERT |
| ColBERT | Contextualized Late Interaction Over BERT |
| ANCE | Approximate Nearest Neighbor Negative Contrastive Estimation |
| SPLADE | Sparse Lexical and Expansion Model |
| uniCOIL | Universal Contextualized Inverted List |
| SimANS | Simple Ambiguous Negatives Sampling |
| TAS-B | Balanced Topic-Aware Sampling |
| RRF | Reciprocal Rank Fusion |
| BioASQ | Biomedical Semantic Indexing and Question Answering |
| MKQA | Multilingual Knowledge Questions and Answers |
| RoPE | Rotary Position Embedding |
| WD | Wasserstein Distance |
| CE | Cross-Entropy |
| InfoNCE | Information Noise-Contrastive Estimation |
| HTR | Hybrid Text Retriever |
| LTR | Lexical Text Retriever |
| STR | Semantic Text Retriever |
| GPU | Graphics Processing Unit |

Appendix A. Training of the Query-Driven Alpha Prediction (QDAP) Module

Algorithm A1. Pseudocode for Query-Driven Alpha Prediction (QDAP) Module Training

Appendix B. Antagonist Negative Sampling Algorithm

Algorithm A2. Pseudocode for Antagonist Negative Sampling Data Preparation


| Dataset | Language | Train | Val | Test | Corpus | Avg. Doc Length |
|---|---|---|---|---|---|---|
| MLDR | Arabic (ar) | 1817 | 200 | 200 | 7607 | 9428 |
| | German (de) | 1847 | 200 | 200 | 10,000 | 9039 |
| | English (en) | 10,000 | 200 | 800 | 200,000 | 3308 |
| | Spanish (es) | 2254 | 200 | 200 | 9551 | 8771 |
| | French (fr) | 1608 | 200 | 200 | 10,000 | 9659 |
| | Hindi (hi) | 1618 | 200 | 200 | 3806 | 5555 |
| | Italian (it) | 2151 | 200 | 200 | 10,000 | 9195 |
| | Japanese (ja) | 2262 | 200 | 200 | 10,000 | 9297 |
| | Korean (ko) | 2198 | 200 | 200 | 6176 | 7832 |
| | Portuguese (pt) | 1845 | 200 | 200 | 6569 | 7922 |
| | Russian (ru) | 1864 | 200 | 200 | 10,000 | 9723 |
| | Thai (th) | 197 | 200 | 200 | 10,000 | 8089 |
| | Chinese (zh) | 10,000 | 200 | 800 | 200,000 | 4249 |
| MIRACL | Arabic (ar) | 3295 | 200 | 2896 | 2,061,414 | 53 |
| | Bengali (bn) | 1431 | 200 | 411 | 297,265 | 55 |
| | English (en) | 2663 | 200 | 799 | 32,893,221 | 64 |
| | Spanish (es) | 1962 | 200 | 648 | 10,373,953 | 65 |
| | Persian (fa) | 1907 | 200 | 632 | 2,207,172 | 48 |
| | Finnish (fi) | 2697 | 200 | 1271 | 1,883,509 | 40 |
| | French (fr) | 943 | 200 | 343 | 14,636,953 | 54 |
| | Hindi (hi) | 969 | 200 | 350 | 506,264 | 68 |
| | Indonesian (id) | 3871 | 200 | 960 | 1,446,315 | 47 |
| | Japanese (ja) | 3277 | 200 | 860 | 6,953,614 | 95 |
| | Korean (ko) | 868 | 200 | 213 | 1,486,752 | 101 |
| | Russian (ru) | 4483 | 200 | 1252 | 9,543,918 | 44 |
| | Swahili (sw) | 1701 | 200 | 482 | 131,924 | 35 |
| | Telugu (te) | 3252 | 200 | 828 | 518,079 | 50 |
| | Thai (th) | 2772 | 200 | 733 | 542,166 | 107 |
| | Chinese (zh) | 1112 | 200 | 393 | 4,934,368 | 88 |

| Model | Avg | ar | de | en | es | fr | hi | it | ja | ko | pt | ru | th | zh |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| BM25 | 53.6 | 45.1 | 52.6 | 57.0 | 78.0 | 75.7 | 43.7 | 70.9 | 36.2 | 25.7 | 82.6 | 61.3 | 33.6 | 34.6 |
| BGE-M3 (Dense) | 52.5 | 47.6 | 46.1 | 48.9 | 74.8 | 73.8 | 40.7 | 62.7 | 50.9 | 42.9 | 74.4 | 59.5 | 33.6 | 26.0 |
| BGE-M3 (Sparse) | 62.2 | 58.7 | 53.0 | 62.1 | 87.4 | 82.7 | 49.6 | 74.7 | 53.9 | 47.9 | 85.2 | 72.9 | 40.3 | 40.5 |
| BGE-M3 (Multi-vec) | 57.6 | 56.6 | 50.4 | 55.8 | 79.5 | 77.2 | 46.6 | 66.8 | 52.8 | 48.8 | 77.5 | 64.2 | 39.4 | 32.7 |
| BGE-M3 (Dense + Sparse) | 64.8 | 63.0 | 56.4 | 64.2 | 88.7 | 84.2 | 52.3 | 75.8 | 58.5 | 53.1 | 86.0 | 75.6 | 42.9 | 42.0 |
| BGE-M3 (Dense + Sparse + Multi-vec) | 65.0 | 64.7 | 57.9 | 63.8 | 86.8 | 83.9 | 52.2 | 75.5 | 60.1 | 55.7 | 85.4 | 73.8 | 44.7 | 40.0 |
| mGTE-TRM (Dense) | 56.5 | 55.0 | 54.9 | 51.0 | 81.2 | 76.2 | 45.2 | 66.7 | 52.1 | 46.7 | 79.1 | 64.2 | 35.3 | 27.4 |
| mGTE-TRM (Sparse) | 71.0 | 74.3 | 66.2 | 66.4 | 93.6 | 88.4 | 61.0 | 82.2 | 66.2 | 64.2 | 89.9 | 82.0 | 47.4 | 41.8 |
| mGTE-TRM (Dense + Sparse) | 71.3 | 74.6 | 66.6 | 66.5 | 93.6 | 88.6 | 61.6 | 83.0 | 66.7 | 64.6 | 89.8 | 82.1 | 47.7 | 41.4 |
| STR (Dense) | 61.8 | 63.5 | 59.7 | 48.9 | 86.3 | 82.1 | 51.0 | 73.2 | 60.4 | 55.1 | 82.3 | 74.2 | 39.6 | 27.2 |
| LTR (Sparse) | 67.3 | 70.5 | 61.0 | 64.4 | 87.5 | 82.9 | 51.0 | 79.8 | 66.7 | 64.7 | 83.7 | 74.1 | 37.5 | 51.3 |
| HTR (Dense + Sparse) | 74.3 | 80.0 | 69.6 | 67.7 | 92.7 | 91.2 | 62.8 | 87.4 | 69.6 | 72.5 | 88.9 | 85.0 | 45.4 | 53.2 |

| Model | Avg | ar | bn | en | es | fa | fi | fr | hi | id | ja | ko | ru | sw | te | th | zh |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| BM25 | 31.7 | 39.5 | 48.2 | 26.7 | 7.7 | 28.7 | 45.8 | 11.5 | 35.0 | 29.7 | 31.2 | 37.1 | 25.6 | 35.1 | 38.3 | 49.1 | 17.5 |
| BGE-M3 (Dense) | 68.9 | 78.4 | 80.0 | 56.9 | 55.5 | 57.7 | 78.6 | 57.8 | 59.3 | 56.0 | 72.8 | 69.9 | 70.1 | 78.6 | 86.2 | 82.6 | 61.7 |
| BGE-M3 (Sparse) | 54.2 | 67.1 | 68.7 | 43.7 | 38.8 | 45.2 | 65.3 | 35.5 | 48.2 | 48.9 | 56.3 | 61.5 | 44.5 | 57.9 | 79.0 | 70.9 | 36.3 |
| BGE-M3 (Multi-vec) | 70.3 | 79.6 | 81.1 | 59.4 | 57.2 | 58.8 | 80.1 | 59.0 | 61.4 | 58.2 | 74.5 | 71.2 | 71.2 | 79.0 | 87.9 | 83.0 | 62.7 |
| BGE-M3 (Dense + Sparse) | 70.0 | 79.6 | 80.7 | 58.8 | 57.5 | 59.2 | 79.7 | 57.6 | 62.8 | 58.3 | 73.9 | 71.3 | 69.8 | 78.5 | 87.2 | 83.1 | 62.5 |
| BGE-M3 (Dense + Sparse + Multi-vec) | 71.2 | 80.2 | 81.5 | 59.8 | 59.2 | 60.3 | 80.4 | 60.7 | 63.2 | 59.1 | 75.2 | 72.2 | 71.7 | 79.6 | 88.2 | 83.8 | 63.9 |
| mGTE-TRM (Dense) | 63.1 | 71.4 | 72.7 | 54.1 | 51.4 | 51.2 | 73.5 | 53.9 | 51.6 | 50.3 | 65.8 | 62.7 | 63.2 | 69.9 | 83.0 | 74.0 | 60.8 |
| mGTE-TRM (Sparse) | 55.8 | 66.5 | 70.4 | 35.6 | 46.2 | 40.0 | 47.6 | 66.5 | 39.8 | 48.9 | 47.9 | 59.3 | 64.3 | 47.1 | 59.4 | 83.0 | 70.5 |
| mGTE-TRM (Dense + Sparse) | 64.7 | 73.4 | 75.1 | 49.9 | 57.6 | 62.7 | 52.0 | 74.7 | 53.5 | 56.4 | 52.8 | 67.1 | 66.7 | 63.5 | 69.5 | 85.2 | 75.8 |
| STR (Dense) | 66.0 | 75.4 | 75.2 | 55.9 | 54.1 | 57.0 | 76.3 | 55.1 | 56.0 | 53.2 | 69.2 | 65.9 | 67.0 | 73.1 | 84.2 | 77.6 | 61.4 |
| LTR (Sparse) | 31.4 | 39.0 | 42.2 | 31.0 | 24.2 | 30.0 | 45.6 | 14.7 | 32.8 | 36.3 | 21.7 | 35.3 | 20.2 | 45.5 | 29.4 | 38.1 | 15.6 |
| HTR (Dense + Sparse) | 67.1 | 76.4 | 76.0 | 58.8 | 55.5 | 58.2 | 77.4 | 55.5 | 57.5 | 56.9 | 69.5 | 66.7 | 66.6 | 75.2 | 84.7 | 78.0 | 60.2 |

| Model | Avg | MLDR | MIRACL |
|---|---|---|---|
| previous works | | | |
| BM25 | 42.6 | 53.6 | 31.7 |
| BGE-M3 (Dense) | 60.7 | 52.5 | 68.9 |
| BGE-M3 (Sparse) | 58.2 | 62.2 | 54.2 |
| BGE-M3 (Multi-vec) | 63.9 | 57.6 | 70.3 |
| BGE-M3 (Dense + Sparse) | 67.4 | 64.8 | 70.0 |
| BGE-M3 (Dense + Sparse + Multi-vec) | 68.1 | 65.0 | 71.2 |
| mGTE-TRM (Dense) | 59.8 | 56.5 | 63.1 |
| mGTE-TRM (Sparse) | 63.4 | 71.0 | 55.8 |
| mGTE-TRM (Dense + Sparse) | 68.0 | 71.3 | 64.7 |
| our work | | | |
| STR (Dense) | 63.9 | 61.8 | 66.0 |
| LTR (Sparse) | 49.3 | 67.3 | 31.4 |
| rank fusion | | | |
| HTR (Optimal) | 60.7 | 62.7 | 58.8 |
| HTR (Dense + Sparse) | 67.5 | 70.6 | 64.4 |
| HTR (Oracle) | 75.4 | 78.1 | 72.6 |
| min-max fusion | | | |
| HTR (Optimal) | 66.7 | 68.1 | 65.3 |
| HTR (Dense + Sparse) | 70.7 | 74.3 | 67.1 |
| HTR (Oracle) | 76.4 | 79.1 | 73.8 |
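The table reports each hybrid configuration under two fusion families: min-max fusion combines normalized scores, while rank fusion uses only each candidate's position in the two ranked lists. One common rank-based scheme is reciprocal rank fusion (Cormack, Clarke and Buettcher, 2009), which sums 1/(k + rank) over the input lists with k = 60. The sketch below adds an α weight to that sum so it can be tuned like the min-max variant; the weighting, the default k, and the function names are assumptions, since the exact rank-fusion formula used for HTR is not specified here.

```python
def ranks(scores):
    """1-based rank of each candidate (rank 1 = highest score)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    r = [0] * len(scores)
    for position, i in enumerate(order, start=1):
        r[i] = position
    return r


def weighted_rrf(dense, sparse, alpha, k=60):
    """Alpha-weighted reciprocal rank fusion of two score lists for one query."""
    rd, rs = ranks(dense), ranks(sparse)
    return [alpha / (k + a) + (1 - alpha) / (k + b) for a, b in zip(rd, rs)]


dense = [0.2, 0.9, 0.5]
sparse = [12.0, 7.4, 3.1]   # BM25-like scores on a different scale
fused = weighted_rrf(dense, sparse, alpha=0.5)
print(max(range(len(fused)), key=fused.__getitem__))
```

Because only ranks enter the sum, rescaling either score list leaves the fused scores unchanged, so rank fusion needs no normalization step; the trade-off is that score margins within each list are discarded.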

| Model Type | Loss Type | Avg | MLDR | MIRACL |
|---|---|---|---|---|
| QDAP-L (Full model) | WD + CE | 70.7 | 74.3 | 67.1 |
| | WD | 67.2 | 70.5 | 63.8 |
| | CE | 69.1 | 72.6 | 65.6 |
| QDAP-S (Adapter) | WD + CE | 68.6 | 72.0 | 65.3 |
| | WD | 63.3 | 66.9 | 59.7 |
| | CE | 66.5 | 70.2 | 62.9 |
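The ablation compares QDAP trained with a Wasserstein distance (WD) loss, a cross-entropy (CE) loss, and their sum. The training procedure appears only as Algorithm A1, so the sketch below is a generic illustration rather than the authors' objective: it assumes QDAP outputs a probability distribution over equally spaced α bins and is trained against a target distribution over the same bins. For distributions on an ordered one-dimensional grid, the 1-Wasserstein distance equals the summed absolute difference of the two cumulative distributions times the bin width. The weight `lam` and all function names are hypothetical.

```python
import math


def cross_entropy(pred, target, eps=1e-12):
    """CE between a target distribution and a predicted one over the same alpha bins."""
    return -sum(t * math.log(p + eps) for p, t in zip(pred, target))


def wasserstein_1d(pred, target, bin_width):
    """W1 distance for distributions on equally spaced, ordered bins."""
    cdf_pred = cdf_target = 0.0
    total = 0.0
    for p, t in zip(pred, target):
        cdf_pred += p
        cdf_target += t
        total += abs(cdf_pred - cdf_target)
    return total * bin_width


def combined_loss(pred, target, bin_width, lam=1.0):
    """Hypothetical WD + CE objective; lam balances the two terms."""
    return wasserstein_1d(pred, target, bin_width) + lam * cross_entropy(pred, target)


# Three alpha bins (0.0, 0.5, 1.0); the target puts all mass on alpha = 1.0.
target = [0.0, 0.0, 1.0]
far, near = [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]
print(wasserstein_1d(far, target, 0.5), wasserstein_1d(near, target, 0.5))
print(cross_entropy(far, target), cross_entropy(near, target))
```

CE gives both wrong predictions the same loss because it ignores the order of the bins, while WD penalizes the prediction two bins away twice as much as the adjacent one. This ordinal sensitivity is one common motivation for pairing the two terms; the ablation above, not this sketch, is the evidence for the WD + CE choice.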

| Positives | Negatives | Avg | MLDR | MIRACL |
|---|---|---|---|---|
| No Filtering | Random | 64.3 | 67.0 | 61.5 |
| | Sparse | 70.3 | 74.9 | 65.7 |
| | Dense | 71.5 | 74.2 | 68.8 |
| | Sparse + Dense | 73.1 | 75.7 | 70.6 |
| Dense + Sparse Filtering | Random | 62.9 | 66.3 | 59.6 |
| | Sparse | 67.2 | 68.8 | 65.7 |
| | Dense | 68.5 | 69.6 | 67.4 |
| | Sparse + Dense | 71.7 | 73.9 | 69.6 |
| Sparse Filtering | Random | 66.9 | 69.8 | 64.1 |
| | Sparse | 72.8 | 76.4 | 69.1 |
| | Dense | 73.4 | 76.5 | 70.2 |
| | Sparse + Dense | 76.4 | 79.1 | 73.8 |
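The table varies where training negatives come from: random documents, or candidates retrieved by the sparse model, the dense model, or both. The following is only a generic hard-negative mining sketch, not Algorithm A2 or the antagonist procedure itself: it pools the top `top_k` candidates of the supplied rankings, removes known positives, and samples `n_neg` of them, falling back to uniform sampling from the corpus when no ranking is supplied (the "Random" setting). The function name, its parameters, and the union-of-top-k strategy are assumptions, and positive filtering is not modeled.

```python
import random


def mine_negatives(positives, corpus_ids, rankings=(), top_k=50, n_neg=8, seed=0):
    """Sample training negatives for one query (hypothetical helper).

    rankings: ranked lists of document ids, e.g. from a sparse and/or a dense
    retriever. With no rankings, negatives are drawn uniformly from the corpus.
    """
    rng = random.Random(seed)
    pos = set(positives)
    if rankings:
        candidates = [doc for ranking in rankings for doc in ranking[:top_k]]
    else:
        candidates = list(corpus_ids)
    # dict.fromkeys removes duplicates while keeping the first occurrence
    pool = [doc for doc in dict.fromkeys(candidates) if doc not in pos]
    return rng.sample(pool, min(n_neg, len(pool)))


corpus = list(range(100))
sparse_top = [0, 5, 6, 7]   # doc 0 is the known positive
dense_top = [6, 0, 9]
print(mine_negatives([0], corpus, rankings=(sparse_top, dense_top), top_k=3))
print(mine_negatives([0], corpus))  # "Random" setting
```

Pooling both rankings mixes lexically and semantically confusable documents into one candidate set; how the authors balance or filter these sources is not specified here.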

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Posokhov, P.; Skrylnikov, S.; Masliukhin, S.; Zavgorodniaia, A.; Koroteeva, O.; Matveev, Y. Query-Adaptive Hybrid Search. Mach. Learn. Knowl. Extr. 2026, 8, 91. https://doi.org/10.3390/make8040091

Posokhov P, Skrylnikov S, Masliukhin S, Zavgorodniaia A, Koroteeva O, Matveev Y. Query-Adaptive Hybrid Search. Machine Learning and Knowledge Extraction. 2026; 8(4):91. https://doi.org/10.3390/make8040091

Chicago/Turabian Style: Posokhov, Pavel, Stepan Skrylnikov, Sergei Masliukhin, Alina Zavgorodniaia, Olesia Koroteeva, and Yuri Matveev. 2026. "Query-Adaptive Hybrid Search." Machine Learning and Knowledge Extraction 8, no. 4: 91. https://doi.org/10.3390/make8040091

APA Style: Posokhov, P., Skrylnikov, S., Masliukhin, S., Zavgorodniaia, A., Koroteeva, O., & Matveev, Y. (2026). Query-Adaptive Hybrid Search. Machine Learning and Knowledge Extraction, 8(4), 91. https://doi.org/10.3390/make8040091