1. Introduction

Offshore wind farms, submarine cables and pipelines, artificial islands, port expansions, and coastal protection projects are increasingly executed in tropical and subtropical marine settings where seabed sediments contain high proportions of carbonate (calcareous) sands. Carbonate sediments are widespread in major offshore basins. They are now encountered not only in oil and gas developments but also in a growing number of offshore wind regions, where foundation, cable, and scour design depend on reliable characterization of dynamic soil properties [1]. Unlike the quartz sands that underpin much of classical soil mechanics, carbonate sands are biogenic deposits formed from skeletal fragments of marine organisms (e.g., coral, algae, foraminifera, and mollusks). Their grains are commonly angular to highly irregular and may contain intragranular voids within a brittle internal framework. Under monotonic and cyclic loading, these traits promote grain crushing, micro-fracture, and progressive fabric change. The resulting stiffness and damping responses can depart substantially from those of silica sands [1,2].

Dynamic geotechnical response is governed primarily by the shear modulus (G) and the damping ratio (D). The shear modulus controls stiffness and wave propagation and directly influences shear-wave velocity, small-strain stiffness, site response, and soil–structure interaction. The damping ratio quantifies hysteretic energy dissipation and affects resonance amplitudes, attenuation of the cyclic response, and the stability of equivalent-linear iterations [3]. For offshore foundations and marine infrastructure, credible G–γ and D–γ relationships (where γ is the shear strain amplitude) are central to performance-based design because they directly inform:
(i) equivalent-linear/nonlinear site-response analyses;
(ii) soil–structure interaction models used for vibration serviceability and fatigue assessment (e.g., offshore wind turbine foundations);
(iii) dynamic response assessments for pipelines/cables and scour protection, where stiffness degradation and damping control amplification, resonance, and cyclic demand.

In current practice, dynamic properties are often estimated using semi-empirical correlations, such as Hardin–Drnevich and normalized modulus-reduction/damping curve families, that were developed largely from silica-based databases [4,5]. However, calcareous sands can deviate from these frameworks for several reasons. Crushing progressively alters the particle size distribution and reorganizes the contact network. Micro-fracture and pore collapse can introduce additional dissipation mechanisms. Saturation effects may also differ because of intragranular porosity [2,6].

Consistent with these mechanistic differences, compiled resonant-column and cyclic databases comparing carbonate and silica sands frequently report higher modulus, higher damping, and faster stiffness degradation for carbonate sands under comparable states. This implies that "one-size-fits-all" dynamic curves may be unreliable for carbonate deposits [6]. These differences are not merely academic. Dynamic response is strongly nonlinear, and modest deviations in stiffness or damping with strain can shift natural periods, alter cyclic demand levels relevant to fatigue, and change predicted response amplitudes in offshore structures and buried infrastructure.

Laboratory testing, particularly resonant-column (RC) and cyclic triaxial (CTX) testing, provides direct measurements of G and D over a range of strain amplitudes and stress states [7,8]. Yet comprehensive RC/CTX programs remain expensive and time-intensive. They rarely explore the full multidimensional space required for performance-based offshore design: confinement, density state, saturation condition, gradation, and strain amplitude. As a result, engineers often interpolate from sparse tests or adopt generic correlations. This increases epistemic uncertainty and raises the risk of either unconservative predictions (underestimating amplification or degradation) or overly conservative designs (overestimating degradation and damping).

Machine learning (ML) offers a practical pathway to bridge sparse testing and design needs by learning nonlinear mappings from measurable soil descriptors to dynamic response quantities. ML models can also be combined with explainable AI (XAI) tools such as SHapley Additive exPlanations (SHAP). These tools allow the models to be audited, to verify whether learned sensitivities remain consistent with soil mechanics expectations and to identify spurious patterns that may arise from heterogeneous datasets [9,10,11,12,13]. However, two concerns must be resolved before ML can be deployed in offshore geotechnical practice:
(i) whether model complexity is truly warranted for the common case of moderate-size tabular datasets;
(ii) whether reported performance reflects transferable learning rather than leakage across closely related test series or implicit memorization through deposit identifiers.

Figure 1 summarizes three elements of this study:
(i) the offshore engineering drivers that require reliable G–γ and D–γ curves (serviceability, fatigue, and site-response/SSI analyses);
(ii) the curated RC/CTX datasets used in this study;
(iii) the leakage-aware benchmarking workflow adopted herein (training-only preprocessing, leakage-controlled splitting, and standardized model comparison).

As summarized in Table 1, recent machine learning applications on calcareous/coral sands fall into two groups:
(i) non-tabular problems that rely on specialized inputs such as images, particle tracking, or point-cloud/morphology descriptors;
(ii) tabular regression problems that use routine geotechnical index/state variables.

Three gaps remain. First, despite the increasing use of deep learning in carbonate research, it remains unclear whether deep architectures materially improve on well-regularized, interpretable ensemble methods when the predictors are the standard tabular descriptors typically available in site investigations [10,11,12]. Second, many reported results do not explicitly test transferability under conditions that better represent deployment to new offshore sites: explicit site/deposit labels excluded, and data splitting that controls leakage across repeated or closely related experimental series. Third, predictive accuracy alone is insufficient for engineering adoption. Models must also exhibit physics-consistent sensitivities, supported by explainability-based diagnostics, including stiffness hardening with confinement, modulus reduction with strain amplitude, and plausible damping evolution [13].

To address these gaps, this study establishes a leakage-aware benchmarking framework to predict D and G from physically meaningful descriptors. It evaluates eleven algorithms spanning linear baselines, ensemble trees, kernel methods, and a neural network. The objective is to identify the model families that best balance accuracy, robustness, and deployability for carbonate-sand dynamic characterization.

The objective of this study is to develop a rigorous benchmarking framework for predicting two key dynamic properties of biogenic calcareous sands, D and G, from standard geotechnical descriptors. A further aim is to identify model classes that combine high predictive accuracy with practical deployability. Specifically, the study evaluates:
(i) which machine learning paradigms perform best for tabular predictors in this application;
(ii) whether competitive accuracy is achievable without explicit deposit/soil-type labels, and how well models generalize across soils under a soil-wise grouped validation protocol;
(iii) whether the leading models exhibit mechanics-consistent sensitivities to stress state, strain amplitude, and density proxies when audited using explainability tools [13].

The intended outcome is actionable guidance for offshore and coastal engineering practice: a transparent workflow, a ranked comparison of algorithms, and a recommended model family for dynamic characterization in carbonate environments.

2. Methodology

The methodology consists of four phases:
(i) compilation of experimental databases and definition of input–output variables;
(ii) leakage-safe preprocessing (training-only clipping/imputation, categorical encoding, and scaling);
(iii) model development and evaluation using a consistent cross-validated benchmarking protocol;
(iv) interpretability and deployment-oriented diagnostics to confirm mechanics-consistent sensitivities and enable practical curve generation.

The workflow is designed to be traceable and reproducible, consistent with best practice in computational geotechnics (Figure 2).

2.1. Experimental Databases and Prediction Targets

Two consolidated experimental databases were compiled from laboratory dynamic tests (RC and CTX measurements) on reconstituted biogenic calcareous sands. The data were collected from a total of 12 peer-reviewed studies [6,19,20,21,22,23,24,25,26,27,28,29]. Table S1 (Supplementary Materials) lists the source studies and indicates which dynamic test types (RC/CTX) each study provides. The first database was developed for predicting the damping ratio, D, and the second for predicting the shear modulus, G. Each record corresponds to a measured response at a defined state point. It is described by the imposed stress level, the shear strain amplitude, and a set of standard geotechnical descriptors (index and state variables) commonly available in routine practice.

Both tasks are formulated as supervised regression problems. For record $i$, an input feature vector $\mathbf{x}_i \in \mathbb{R}^p$ is mapped to a target response $y_i$ (either $D_i$ or $G_i$) through a learned predictor $\hat{f}$ that approximates

$$y_i = f(\mathbf{x}_i) + \varepsilon_i.$$

Unavoidable experimental variability is represented implicitly through the residual term $\varepsilon_i$. This term reflects factors that the retained predictors do not fully capture:
- differences in specimen preparation and reconstitution procedures;
- differences in testing protocols and equipment;
- differences in interpretation approaches, particularly for damping estimation;
- microstructural evolution during loading (e.g., crushing and fabric change).

For damping, each database record corresponds to a damping ratio value measured at the reported shear strain amplitude in RC/CTX testing. Accordingly, the damping target is treated as a strain-dependent response, $D = D(\gamma)$, and $\gamma$ is retained as an input to enable prediction of $D$ at specified strain levels.

A central methodological decision was to emphasize generalizable prediction rather than deposit-specific fitting. Accordingly, explicit deposit identifiers and soil-type/site labels were excluded from the feature set to reduce the risk of memorization and to reflect realistic deployment scenarios in which engineers typically have access to index properties and stress–strain state variables but may not have robust site labels for model transfer. The predictor set was therefore restricted to routinely measurable descriptors that represent established controlling mechanisms in sand dynamics.

Table 2 provides the symbol, unit, model role (input/output), and engineering meaning of each variable. Table 3 summarizes descriptive statistics (minimum, maximum, mean, and standard deviation) for the predictors and targets in each database.

Saturation condition was reported inconsistently across sources (e.g., "Saturated", "SATurated", "Saturated (undrained)", "dry", "Dry"), so entries were standardized into consistent categories during preprocessing. In addition, one damping value in the raw dataset was slightly negative due to experimental scatter. This value was clipped to zero to enforce physical admissibility prior to model training and statistical reporting.

2.2. Model Development and Evaluation Overview

A consistent benchmarking protocol was used across all candidate algorithms. For each target ($D$ and $G$), models were trained using the same predictor set and evaluated under the same split strategy. Hyperparameters were tuned within the training data only, using the same search strategy (randomized search) for every algorithm. Performance was summarized using standard regression metrics ($R^2$, RMSE, and MAE).

Finally, explainability diagnostics (SHAP-based feature attribution) were used to verify whether leading models exhibit mechanics-consistent sensitivities (e.g., stiffness increasing with confining stress; damping increasing with strain amplitude), supporting interpretability and engineering credibility.

2.3. Preprocessing and Leakage-Safe Workflow

A leakage-safe preprocessing workflow was applied uniformly across all algorithms to ensure a fair benchmark and to prevent optimistic bias caused by information leakage. All preprocessing operations were implemented within an end-to-end pipeline so that transformations were fit only on training data and then applied to validation/test data.

Missing numerical predictors were imputed using the median computed from the training partition only. Median imputation is robust for geotechnical datasets in which some variables exhibit heavy tails and extreme values are physically meaningful rather than erroneous. Because the compiled carbonate databases include incomplete reporting across studies, median imputation preserves sample size and reduces the bias introduced by listwise deletion. Imputation was performed after train/test splitting to avoid leakage from test distributions.

Saturation condition was treated as a categorical predictor. Raw entries were standardized (e.g., “SATurated”, “Saturated (undrained)”, “dry”, “Dry”) and grouped into consistent categories (e.g., Saturated, Dry). The variable was then converted to one-hot encoded binary indicators to avoid introducing an artificial ordinal structure.

Numerical predictors were standardized by z-score normalization,

$$z_j = \frac{x_j - \mu_j}{s_j},$$

where $\mu_j$ and $s_j$ are the mean and standard deviation of predictor $j$, estimated from the training data only. Scaling is essential for distance-based and gradient-based models (e.g., kNN, SVR, and MLP), because otherwise the largest-magnitude features dominate distance metrics and optimization dynamics. Tree-based models are scale-invariant, but scaling was still applied consistently so that all algorithms received inputs processed under the same protocol.

The raw dataset contained a single slightly negative damping value attributable to experimental scatter or processing. Because negative damping is non-physical, that value was clipped to 0 prior to training and statistical reporting. No additional filtering was applied beyond basic physical admissibility checks.

All transformations (imputation, encoding, and scaling) were encapsulated in pipelines fitted on each training fold and applied to the corresponding validation/test folds. This prevents test information from influencing imputation statistics or scaling parameters. It also avoids a common failure mode in moderate-size tabular benchmarks.
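The preprocessing steps above can be sketched as a single scikit-learn `Pipeline` wrapping a `ColumnTransformer`. Because every statistic is learned inside `.fit()`, it comes from the training rows only. This is a minimal sketch under stated assumptions, not the study's code. The column names (`sigma_kPa`, `gamma_pct`, `void_ratio`, `D50_mm`, `saturation`, `D_pct`) are hypothetical, as are the `"Other"` fallback category and the synthetic data. The study's actual predictors are listed in Table 2.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.ensemble import ExtraTreesRegressor
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Hypothetical predictor names; the study's actual variables are listed in Table 2.
NUMERIC = ["sigma_kPa", "gamma_pct", "void_ratio", "D50_mm"]
CATEGORICAL = ["saturation"]


def standardize_saturation(label) -> str:
    """Collapse inconsistent labels ('SATurated', 'Saturated (undrained)', 'dry', ...)."""
    text = str(label).strip().lower()
    if text.startswith("sat"):
        return "Saturated"
    if text.startswith("dry"):
        return "Dry"
    return "Other"  # hypothetical fallback for unrecognized entries


def clean_records(df: pd.DataFrame, target: str) -> pd.DataFrame:
    """Row-wise label harmonization and admissibility (negative damping -> 0).
    These operations use no dataset statistics, so they cannot leak across splits."""
    out = df.copy()
    out["saturation"] = out["saturation"].map(standardize_saturation)
    out[target] = out[target].clip(lower=0.0)
    return out


def build_pipeline(random_state: int = 42) -> Pipeline:
    """Medians, means, SDs, and categories are all learned in .fit() (training data only)."""
    numeric = Pipeline([
        ("impute", SimpleImputer(strategy="median")),
        ("scale", StandardScaler()),
    ])
    preprocess = ColumnTransformer([
        ("num", numeric, NUMERIC),
        ("cat", OneHotEncoder(handle_unknown="ignore"), CATEGORICAL),
    ])
    return Pipeline([
        ("prep", preprocess),
        ("model", ExtraTreesRegressor(random_state=random_state)),
    ])


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n = 120
    df = pd.DataFrame({
        "sigma_kPa": rng.uniform(50, 400, n),
        "gamma_pct": 10 ** rng.uniform(-4, 0, n),
        "void_ratio": rng.uniform(0.6, 1.2, n),
        "D50_mm": rng.uniform(0.2, 1.0, n),
        "saturation": rng.choice(["Saturated", "SATurated", "dry", "Dry"], n),
        "D_pct": rng.normal(5, 3, n),  # synthetic target; may contain negatives
    })
    df.loc[::10, "void_ratio"] = np.nan  # simulate incomplete reporting
    df = clean_records(df, "D_pct")
    train, test = df.iloc[:90], df.iloc[90:]
    pipe = build_pipeline().fit(train[NUMERIC + CATEGORICAL], train["D_pct"])
    print(pipe.predict(test[NUMERIC + CATEGORICAL])[:3])
```

In cross-validation, the whole `pipe` object is what gets cloned and refit per fold. The imputation medians and scaling parameters are therefore recomputed on each training fold.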

2.4. Benchmarking Protocol and Validation Strategy

To obtain robust estimates of predictive performance, the benchmark adopted cross-validated model selection with held-out evaluation. For each target ($D$ and $G$), models were trained and evaluated under identical splits and identical preprocessing pipelines.

Five-fold cross-validation (K = 5) was used to reduce sensitivity to a single favorable split. Within each fold, hyperparameters were tuned using a consistent search strategy (randomized search) based on validation performance. This mitigates the “lucky split” effect and improves stability of ranking among algorithms.

Hyperparameter sensitivity varies substantially across model families. Kernel and neural models often require careful tuning, for example:
- SVR: the regularization parameter $C$, the RBF kernel width, and $\varepsilon$;
- MLP: architecture, regularization, and solver settings.

In contrast, ensemble trees (Random Forest, Extra Trees, Gradient Boosting) are frequently competitive under modest tuning. This is advantageous for practical deployment when rapid calibration is needed.

A key concern in experimental geotechnics is that multiple records can originate from the same specimen, test series, or experimental campaign, creating near-duplicates that inflate apparent generalization when randomly split. Where specimen identifiers are unavailable, conservative splitting strategies (e.g., by publication, campaign, or test batch) are recommended to reduce near-duplicate leakage and better represent deployment to unseen deposits. This study emphasizes leakage control and excludes explicit deposit labels to encourage learning from physical state descriptors rather than memorization.

To control dependence among repeated measurements, grouped validation was additionally performed using a group identifier retained only for splitting and excluded from the predictors. The group identifier was defined as the soil/deposit label (“Soil Name”, e.g., Dabaa sand), such that all records from the same soil were held out together in each fold.
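The soil-wise grouped protocol can be sketched with scikit-learn's `GroupKFold`. The group label only drives the split and never enters the predictor matrix. This is an illustrative sketch that makes several assumptions:
- the data sit in a pandas table with a hypothetical `soil_name` column standing in for "Soil Name";
- `RandomForestRegressor` is an arbitrary stand-in learner;
- the helper `grouped_scores` is hypothetical.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import GroupKFold


def grouped_scores(df, features, target, group_col="soil_name", n_splits=5):
    """Soil-wise CV: all records of a soil are held out together; returns per-fold RMSE."""
    X = df[features]                      # the group label is NOT among the predictors
    y = df[target].to_numpy()
    groups = df[group_col].to_numpy()
    rmses, held_out = [], []
    for train_idx, test_idx in GroupKFold(n_splits=n_splits).split(X, y, groups):
        model = RandomForestRegressor(n_estimators=100, random_state=42)  # stand-in learner
        model.fit(X.iloc[train_idx], y[train_idx])
        pred = model.predict(X.iloc[test_idx])
        rmses.append(float(np.sqrt(mean_squared_error(y[test_idx], pred))))
        held_out.append(set(groups[test_idx]))  # soils unseen during this fold's training
    return np.array(rmses), held_out


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n = 200
    df = pd.DataFrame({
        "sigma_kPa": rng.uniform(50, 400, n),
        "gamma_pct": 10 ** rng.uniform(-4, 0, n),
        "soil_name": np.repeat([f"soil_{k}" for k in range(10)], n // 10),
    })
    df["G_MPa"] = 0.3 * df["sigma_kPa"] / (1 + 50 * df["gamma_pct"]) + rng.normal(0, 2, n)
    rmse, _ = grouped_scores(df, ["sigma_kPa", "gamma_pct"], "G_MPa")
    print(f"grouped RMSE = {rmse.mean():.2f} ± {rmse.std(ddof=1):.2f} MPa")
```

Reporting mean ± SD across folds, as in the text, summarizes how strongly the error depends on which soils are held out.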

2.5. Models Benchmarked and Governing Equations

Eleven regression algorithms were benchmarked, spanning interpretable baselines through flexible nonlinear learners. Let $\mathbf{x} \in \mathbb{R}^p$ denote the $p$-dimensional feature vector and $y$ the target response ($D$ or $G$).

2.5.1. Ordinary Least Squares and Regularized Linear Regression

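Ordinary least squares (OLS) fits

$$\hat{y} = \beta_0 + \boldsymbol{\beta}^\top \mathbf{x}$$

by minimizing the sum of squared residuals:

$$\min_{\beta_0,\,\boldsymbol{\beta}} \; \sum_{i=1}^{n} \left(y_i - \beta_0 - \boldsymbol{\beta}^\top \mathbf{x}_i\right)^2.$$

Ridge and Lasso add regularization penalties to improve generalization and reduce sensitivity to multicollinearity:

$$\text{Ridge:} \quad \min_{\beta_0,\,\boldsymbol{\beta}} \; \sum_{i=1}^{n} \left(y_i - \beta_0 - \boldsymbol{\beta}^\top \mathbf{x}_i\right)^2 + \lambda \lVert \boldsymbol{\beta} \rVert_2^2,$$

$$\text{Lasso:} \quad \min_{\beta_0,\,\boldsymbol{\beta}} \; \sum_{i=1}^{n} \left(y_i - \beta_0 - \boldsymbol{\beta}^\top \mathbf{x}_i\right)^2 + \lambda \lVert \boldsymbol{\beta} \rVert_1,$$

where $\lambda \ge 0$ controls the penalty strength.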

These models provide transparent baselines and help quantify the value of nonlinearity and interaction learning.
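As a check on the penalized least-squares objective, the Ridge solution with an unpenalized intercept can be written in closed form on centered data:

$$\hat{\boldsymbol{\beta}} = (\mathbf{X}_c^\top \mathbf{X}_c + \lambda \mathbf{I})^{-1} \mathbf{X}_c^\top \mathbf{y}_c.$$

The sketch below compares this closed form with scikit-learn's `Ridge`, whose `alpha` plays the role of $\lambda$, on synthetic data. It is illustrative only and is not part of the benchmark code; the helper name `ridge_closed_form` is hypothetical.

```python
import numpy as np
from sklearn.linear_model import Ridge


def ridge_closed_form(X: np.ndarray, y: np.ndarray, lam: float):
    """Minimize sum of squared residuals + lam * ||beta||_2^2 with an unpenalized intercept."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean        # centering removes the intercept from the penalty
    p = X.shape[1]
    beta = np.linalg.solve(Xc.T @ Xc + lam * np.eye(p), Xc.T @ yc)
    intercept = y_mean - x_mean @ beta
    return intercept, beta


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.normal(size=(50, 3))
    y = 2.0 + X @ np.array([1.5, -0.5, 0.0]) + rng.normal(0, 0.1, 50)
    b0, b = ridge_closed_form(X, y, lam=1.0)
    ref = Ridge(alpha=1.0).fit(X, y)
    print(np.allclose(b, ref.coef_), np.isclose(b0, ref.intercept_))
```

As $\lambda \to 0$ the solution approaches OLS. Increasing $\lambda$ shrinks $\boldsymbol{\beta}$ toward zero, which is what stabilizes the fit when predictors are correlated.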

2.5.2. k-Nearest Neighbors Regression

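kNN predicts by averaging the responses of the $k$ nearest training points $\mathcal{N}_k(\mathbf{x})$:

$$\hat{y}(\mathbf{x}) = \frac{1}{k} \sum_{i \in \mathcal{N}_k(\mathbf{x})} y_i,$$

optionally using distance-weighted averaging. kNN is flexible but sensitive to feature scaling and local sampling density.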

2.5.3. Regression Trees

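A regression tree partitions the feature space into $M$ regions $R_1, \ldots, R_M$ and assigns each region a constant prediction $c_m$:

$$\hat{y}(\mathbf{x}) = \sum_{m=1}^{M} c_m \, \mathbb{1}(\mathbf{x} \in R_m).$$

Trees naturally capture nonlinearities and interactions but can be unstable unless ensembled.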

2.5.4. Random Forest and Extra Trees

Random Forest averages $B$ decorrelated trees $T_b$ trained on bootstrapped samples:

$$\hat{y}(\mathbf{x}) = \frac{1}{B} \sum_{b=1}^{B} T_b(\mathbf{x}).$$

Extra Trees similarly averages trees but introduces additional randomization in split thresholds. This can improve variance reduction and robustness to noise, which is often beneficial for heterogeneous experimental datasets.

2.5.5. Boosting Ensembles

Boosting constructs an additive model by sequentially fitting weak learners to residual structure. In gradient boosting,

$$F_m(\mathbf{x}) = F_{m-1}(\mathbf{x}) + \nu \, h_m(\mathbf{x}),$$

where $h_m$ is fitted to the negative gradient of the loss function and $\nu \in (0, 1]$ is a learning rate controlling shrinkage. Boosting is effective for structured nonlinearities but can overfit without appropriate regularization.
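To make the additive update concrete, the sketch below implements gradient boosting for squared-error loss. For this loss the negative gradient is simply the current residual, $y_i - F_{m-1}(\mathbf{x}_i)$. This minimal sketch is for illustration only and is not the library implementation used in the benchmark. The helper names are hypothetical, and shallow `DecisionTreeRegressor` base learners are an assumption.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor


def fit_l2_boosting(X, y, n_stages=100, learning_rate=0.1, max_depth=2):
    """F_m = F_{m-1} + nu * h_m, each h_m fitted to the negative gradient of 0.5*(y - F)^2."""
    y = np.asarray(y, dtype=float)
    f0 = float(y.mean())                     # F_0: constant that minimizes squared error
    F = np.full(y.shape, f0)
    trees = []
    for _ in range(n_stages):
        residual = y - F                     # negative gradient of the squared-error loss
        h = DecisionTreeRegressor(max_depth=max_depth, random_state=0).fit(X, residual)
        F = F + learning_rate * h.predict(X)  # shrinkage by nu = learning_rate
        trees.append(h)
    return f0, learning_rate, trees


def predict_l2_boosting(model, X):
    """Sum the shrunken contributions of all stages on top of the constant F_0."""
    f0, learning_rate, trees = model
    return f0 + learning_rate * sum(h.predict(X) for h in trees)
```

A smaller $\nu$ needs more stages to reach the same training error but usually generalizes better. This trade-off is why learning rate and number of stages are tuned jointly.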

2.5.6. Support Vector Regression (SVR)

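SVR estimates a function $f(\mathbf{x}) = \mathbf{w}^\top \phi(\mathbf{x}) + b$ with an $\varepsilon$-insensitive loss by solving:

$$\min_{\mathbf{w},\, b,\, \boldsymbol{\xi},\, \boldsymbol{\xi}^*} \; \frac{1}{2}\lVert \mathbf{w} \rVert^2 + C \sum_{i=1}^{n} \left(\xi_i + \xi_i^*\right)$$

subject to constraints that allow deviations up to $\varepsilon$, with slack variables $\xi_i, \xi_i^* \ge 0$ penalizing larger deviations. With a kernel $K(\mathbf{x}_i, \mathbf{x})$, predictions take the dual form:

$$f(\mathbf{x}) = \sum_{i=1}^{n} \left(\alpha_i - \alpha_i^*\right) K(\mathbf{x}_i, \mathbf{x}) + b.$$

SVR can be strong in moderate-size tabular settings but is sensitive to hyperparameters.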

2.5.7. Multi-Layer Perceptron (MLP)

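An MLP maps $\mathbf{x}$ through successive nonlinear transformations:

$$\mathbf{h}^{(0)} = \mathbf{x}, \qquad \mathbf{h}^{(l)} = g\!\left(\mathbf{W}^{(l)} \mathbf{h}^{(l-1)} + \mathbf{b}^{(l)}\right), \quad l = 1, \ldots, L, \qquad \hat{y} = \mathbf{w}_{\mathrm{out}}^\top \mathbf{h}^{(L)} + b_{\mathrm{out}},$$

where $g(\cdot)$ is an elementwise activation function. MLPs can approximate complex nonlinear relationships but require tuning and are less interpretable without post hoc tools [30].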

2.6. Evaluation Metrics

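Model performance was quantified using $R^2$, RMSE, and MAE:

$$R^2 = 1 - \frac{\sum_{i=1}^{n} (y_i - \hat{y}_i)^2}{\sum_{i=1}^{n} (y_i - \bar{y})^2}, \qquad \mathrm{RMSE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2}, \qquad \mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \lvert y_i - \hat{y}_i \rvert.$$

$R^2$ measures the proportion of variance explained, RMSE penalizes larger errors more heavily, and MAE represents the typical error magnitude. For $D$, errors are reported in percent, which is directly relevant to damping values used in equivalent-linear site-response iterations. For $G$, errors are reported in MPa, reflecting stiffness uncertainty that propagates into impedance estimates and wave-propagation response.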
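The three metrics can be computed directly from their definitions, as sketched below. The helper `regression_metrics` is a hypothetical name, and the demo uses synthetic values. The demo cross-checks the hand-written formulas against scikit-learn's `r2_score`, `mean_squared_error`, and `mean_absolute_error`.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score


def regression_metrics(y_true, y_pred):
    """R^2, RMSE, and MAE as defined above (units follow the target: % for D, MPa for G)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    resid = y_true - y_pred
    ss_res = np.sum(resid ** 2)                      # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)   # total sum of squares about the mean
    return {
        "R2": float(1.0 - ss_res / ss_tot),
        "RMSE": float(np.sqrt(np.mean(resid ** 2))),
        "MAE": float(np.mean(np.abs(resid))),
    }


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    y = rng.uniform(1, 20, 50)
    y_hat = y + rng.normal(0, 1, 50)
    ours = regression_metrics(y, y_hat)
    # Cross-check against scikit-learn's implementations.
    assert np.isclose(ours["R2"], r2_score(y, y_hat))
    assert np.isclose(ours["RMSE"], np.sqrt(mean_squared_error(y, y_hat)))
    assert np.isclose(ours["MAE"], mean_absolute_error(y, y_hat))
    print(ours)
```

Because RMSE squares residuals before averaging, it always satisfies RMSE ≥ MAE. A large gap between the two indicates a few large errors rather than uniformly moderate ones.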

2.7. Reproducibility and Deployment Considerations

Benchmarking is most valuable when it can be reproduced and translated into deployable workflows. Three practices are recommended.

2.7.1. Pipeline-Based Deployment and Reproducibility

Preprocessing must be packaged with the model so that new inputs undergo the same imputation, encoding, and scaling learned from the training data. This prevents mismatches between training-time and deployment-time preprocessing and ensures that field/lab inputs are transformed consistently with the training conditions.

All analyses were conducted in Python 3.11.6 using scikit-learn 1.4.2, SHAP 0.44.1, NumPy 1.26.4, and pandas 2.2.2 on a standard CPU workstation (multi-core). To ensure deterministic replication, we fixed pseudo-random number generation throughout the workflow using random_state = 42 for: (i) the random 70/15/15 train/validation/test split, (ii) cross-validation shuffling where applicable, (iii) RandomizedSearchCV, and (iv) stochastic estimators (e.g., Random Forest, Extra Trees, and MLP).
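A seeded 70/15/15 partition can be sketched with two successive calls to scikit-learn's `train_test_split`, although the text does not state which call was used. The first call holds out 15% as the test set. The second takes 0.15/0.85 of the remaining 85%, which is 15% of the full dataset, as the validation set. The helper name `split_70_15_15` is hypothetical.

```python
import numpy as np
from sklearn.model_selection import train_test_split

SEED = 42


def split_70_15_15(X, y, seed: int = SEED):
    """Return (X_tr, X_va, X_te, y_tr, y_va, y_te) with ~70/15/15 proportions."""
    X_rest, X_te, y_rest, y_te = train_test_split(X, y, test_size=0.15, random_state=seed)
    # 0.15 / 0.85 of the remaining 85% equals 15% of the full dataset.
    X_tr, X_va, y_tr, y_va = train_test_split(
        X_rest, y_rest, test_size=0.15 / 0.85, random_state=seed)
    return X_tr, X_va, X_te, y_tr, y_va, y_te


if __name__ == "__main__":
    X = np.arange(200).reshape(-1, 1)
    y = np.arange(200, dtype=float)
    X_tr, X_va, X_te, *_ = split_70_15_15(X, y)
    print(len(X_tr), len(X_va), len(X_te))
```

Because `random_state` is fixed, repeated calls return identical partitions. This is the property the fixed seed is meant to guarantee.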

Hyperparameter optimization used RandomizedSearchCV with the following settings: n_iter = 200, cv = 5-fold, scoring = “neg_root_mean_squared_error”, refit = True (refitting the best configuration under the scoring metric), random_state = 42, and n_jobs = −1. For the random-split benchmark, the inner cross-validation used KFold (n_splits = 5, shuffle = True, random_state = 42) applied to the training partition only. For the leakage-aware grouped evaluation, we used GroupKFold (n_splits = 5) with Soil Name as the grouping label; Soil Name was used only for splitting and was excluded from predictors.
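The search settings listed above can be sketched with scikit-learn's `RandomizedSearchCV`. Only `n_iter`, `cv`, `scoring`, `refit`, `random_state`, `n_jobs`, and the `KFold`/`GroupKFold` choices come from the text. The Extra Trees search space shown is a hypothetical placeholder; the study's search spaces are described in Section 2.8. The `n_jobs` argument is exposed only so that small runs can stay single-process.

```python
from scipy.stats import randint
from sklearn.ensemble import ExtraTreesRegressor
from sklearn.model_selection import GroupKFold, KFold, RandomizedSearchCV

SEED = 42

# Hypothetical Extra Trees search space, for illustration only (not the study's ranges).
ET_SPACE = {
    "n_estimators": randint(100, 801),
    "max_depth": [None, 5, 10, 20],
    "min_samples_split": randint(2, 11),
    "min_samples_leaf": randint(1, 6),
    "max_features": ["sqrt", "log2", 1.0],
}


def make_search(estimator, space, grouped=False, n_iter=200, n_jobs=-1):
    """RandomizedSearchCV configured with the settings stated in the text."""
    if grouped:
        cv = GroupKFold(n_splits=5)      # soil labels must be passed as groups= in .fit()
    else:
        cv = KFold(n_splits=5, shuffle=True, random_state=SEED)
    return RandomizedSearchCV(
        estimator,
        param_distributions=space,
        n_iter=n_iter,
        scoring="neg_root_mean_squared_error",
        cv=cv,
        refit=True,
        random_state=SEED,
        n_jobs=n_jobs,
    )
```

Typical use would be `make_search(ExtraTreesRegressor(random_state=SEED), ET_SPACE).fit(X_train, y_train)`. For the grouped variant, the call is `.fit(X, y, groups=soil_labels)`. If the estimator is a `Pipeline`, the parameter names need the step prefix (e.g. `model__n_estimators`).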

2.7.2. Domain Limits and Range Checks

Models should be deployed with clear input validity bounds derived from the database (Table 3). In this study, the predictor space spans the stress levels (in kPa) and the ranges of the other descriptors reported in Table 3. The shear strain amplitude $\gamma$ ranges from very small strains up to the upper limit reported in the dataset. Predictions outside these domains should be flagged and either rejected or accompanied by conservative fallback estimates.
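A minimal range check is sketched below. It assumes the validity bounds are the per-feature minimum and maximum of the training database, as in Table 3. The helper names and the flag-rather-than-reject policy are illustrative choices, as are the example column names.

```python
import pandas as pd


def fit_domain(train: pd.DataFrame, features: list) -> pd.DataFrame:
    """Record per-feature validity bounds (min/max) from the training database."""
    return pd.DataFrame({"min": train[features].min(), "max": train[features].max()})


def flag_out_of_domain(new: pd.DataFrame, bounds: pd.DataFrame) -> pd.Series:
    """True for rows where any predictor lies outside the training range (NaNs not flagged)."""
    cols = list(bounds.index)
    below = new[cols].lt(bounds["min"], axis=1)
    above = new[cols].gt(bounds["max"], axis=1)
    return (below | above).any(axis=1)


if __name__ == "__main__":
    train = pd.DataFrame({"sigma_kPa": [50.0, 200.0, 400.0], "gamma_pct": [1e-4, 0.01, 0.5]})
    bounds = fit_domain(train, ["sigma_kPa", "gamma_pct"])
    query = pd.DataFrame({"sigma_kPa": [100.0, 800.0], "gamma_pct": [0.001, 0.01]})
    print(flag_out_of_domain(query, bounds).tolist())
```

Per-feature box bounds are the simplest check. They do not detect combinations that are individually in range but jointly unsampled, so a flagged-free query is not guaranteed to lie in the training domain.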

2.7.3. Curve Generation for Engineering Use

Offshore practice typically requires curves rather than point predictions. Once trained, a model can be evaluated on a logarithmically spaced strain grid to generate G–γ and D–γ relationships at specified state points, for example a given confining stress, density state, gradation, and saturation condition. Post-processing checks can enforce basic admissibility, such as nonnegative damping and the absence of nonphysical oscillations. They can also optionally smooth minor numerical irregularities without distorting the fundamental trend.
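Curve generation can be sketched as evaluating a fitted model on a log-spaced strain grid while the other state variables are held fixed. In this sketch, `model` is any fitted regressor or pipeline that accepts a pandas DataFrame. The helper name, the column names, and the default strain limits are hypothetical. Post-processing is limited to clipping negative predictions; smoothing is omitted.

```python
import numpy as np
import pandas as pd


def generate_curve(model, state: dict, strain_col: str = "gamma_pct",
                   gamma_min: float = 1e-4, gamma_max: float = 1.0,
                   n_points: int = 50, clip_nonnegative: bool = True) -> pd.DataFrame:
    """Evaluate `model` on a log-spaced strain grid at one fixed state point."""
    gammas = np.logspace(np.log10(gamma_min), np.log10(gamma_max), n_points)
    grid = pd.DataFrame({k: [v] * n_points for k, v in state.items()})
    grid[strain_col] = gammas                       # only the strain varies along the curve
    pred = np.asarray(model.predict(grid), dtype=float)
    if clip_nonnegative:
        pred = np.clip(pred, 0.0, None)             # admissibility, e.g. nonnegative damping
    return pd.DataFrame({strain_col: gammas, "prediction": pred})
```

A call such as `generate_curve(pipe, {"sigma_kPa": 100.0, "void_ratio": 0.9, "D50_mm": 0.5, "saturation": "Saturated"})` would return one D–γ curve at that state point, assuming `pipe` was trained on those columns. The strain limits should be set inside the validated domain of Section 2.7.2.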

These steps connect the benchmark to practical outcomes: rapid screening in early design, sensitivity analysis under parameter uncertainty, and targeted planning of laboratory programs to reduce uncertainty in carbonate environments.

2.8. Hyperparameter Tuning Details

To ensure a fair comparison, all models underwent hyperparameter tuning using a randomized search strategy with 5-fold cross-validation applied only to the training partition (the 70% split). Within each cross-validation iteration, preprocessing and model fitting were conducted in a leakage-safe pipeline. Candidate configurations were ranked by squared-error loss; the RMSE scoring used in the search yields the same ranking as the mean squared error (MSE). The final tuned model for each algorithm was then refit on the full training set prior to test-set evaluation. The optimal settings for each target are reported separately in Section 3.

The randomized search spaces were defined to give each model family a comparable opportunity for improvement while controlling capacity and overfitting. Extra Trees and Random Forest were both tuned by varying:
- the number of estimators;
- the maximum tree depth;
- the minimum number of samples required to split an internal node;
- the minimum number of samples per leaf.

For Extra Trees, the random feature subset size (max_features, or equivalently a fractional subset where implemented) was additionally varied to assess the effect of stronger split randomization.

For boosting ensembles, Gradient Boosting was tuned over the learning rate, the number of boosting stages, the maximum depth of the base learners, and the subsampling ratio. AdaBoost was tuned by varying the number of estimators and the learning rate. To keep AdaBoost within the intended weak-learner regime, the base estimator was a shallow decision tree whose maximum depth was constrained, ranging from decision stumps to shallow trees.

For the Multi-Layer Perceptron (MLP) Regressor, the search covered both architecture and optimization stability. It varied the hidden-layer topology among representative single- and two-layer structures, the L2 regularization strength, and the initial learning rate.

For Support Vector Regression (SVR) with an RBF kernel, the search varied the regularization parameter $C$ on a logarithmic scale, the width $\varepsilon$ of the $\varepsilon$-insensitive loss, and the kernel coefficient. This SVR search space was intentionally kept comparable in size to the tuning budgets used for the other models; broader kernel-scale searches and target transformations were not explored.

For the linear baseline (ordinary least squares/linear regression), no hyperparameter search is required under the standard formulation. The model was therefore fitted with default settings within the same pipeline. For Ridge and Lasso regression, the regularization strength was tuned over logarithmic intervals to explore mild to strong shrinkage and, for Lasso, sparsity effects.

For k-nearest neighbors (kNN), tuning varied the neighborhood size $k$ and the weighting scheme (uniform or distance-weighted), with distances computed in the standardized feature space. For the Decision Tree Regressor, structural regularization was tuned by varying tree-size constraints. A further constraint was optionally included to control leaf purity when needed.

Models were evaluated in two complementary settings:
- Random split: a 70%/15%/15% train/validation/test partition with a fixed random seed (seed = 42). Hyperparameters were tuned using RandomizedSearchCV with K = 5 folds applied to the training partition only, within a leakage-safe pipeline.
- Soil-wise grouped evaluation: GroupKFold with K = 5, using "Soil Name" as the grouping label (retained only for splitting and excluded from the predictors). This setting assesses transfer to unseen soils and reduces dependence among repeated measurements. Grouped performance is reported as mean ± SD across folds.

2.9. Empirical and Semi-Empirical Baseline Models

To contextualize the predictive gains of the proposed ML surrogates, we also benchmarked several representative empirical and semi-empirical baseline formulations against the curated datasets. These baselines reflect state-of-practice approaches used in dynamic characterization and site-response workflows. They were fitted using the same leakage-safe philosophy as the ML benchmark: all baseline parameters were estimated on the training partition only, and performance was reported on a held-out test set using the same metrics ($R^2$, RMSE, MAE).

2.9.1. Data Partitions and Handling

For each target dataset ($D$ and $G$, after removing missing target entries), the records were randomly split into 70% training, 15% validation, and 15% test partitions (random seed = 42). For damping baselines involving $\log\gamma$, a small positive strain floor was used to avoid undefined values at $\gamma = 0$: $\gamma_{\mathrm{eff}} = \max(\gamma, \gamma_{\min})$, where $\gamma_{\min}$ is the smallest positive strain in the training set. Predicted damping values were clipped to satisfy physical admissibility, $\hat{D} \ge 0$, consistent with the dataset's admissibility treatment.

In addition to the random split evaluation, grouped cross-validation was conducted using the “Soil Name” label retained only for splitting and excluded from the predictors. The damping dataset contains 14 unique Soil Name groups and the shear-modulus dataset contains 15 groups. Group sizes range from 10 to 158 records (median 51.5 for damping; 63 for shear modulus). Because the number of groups is modest, grouped-CV results are reported as mean ± SD and are interpreted as a strict transfer stress test.

2.9.2. Shear Modulus Baselines (Empirical and Semi-Empirical)

A common empirical representation of stiffness hardening is a power-law dependence on mean effective stress:

$$G = a \, (\sigma'_m)^{b}.$$

This stress-only power law follows an empirical Hardin-style trend. The coefficients $a$ and $b$ were estimated by linear regression in log space, using training data with $G > 0$ and $\sigma'_m > 0$:

$$\ln G = \ln a + b \ln \sigma'_m.$$

The second baseline is a stress–strain hyperbolic degradation model, a semi-empirical Hardin–Drnevich-style form. To reflect both stress hardening and strain-dependent modulus reduction, we fitted

$$G = \frac{a \, (\sigma'_m)^{b}}{1 + \gamma / \gamma_r},$$

where $\gamma_r$ is a characteristic reference strain controlling the onset rate of degradation. It is the strain at which $G$ falls to half of its small-strain value, $a(\sigma'_m)^{b}$. The parameters were estimated on the training data using nonlinear least squares with positivity constraints ($a, b, \gamma_r > 0$).

These two baselines represent (a) an empirical “stress-only” stiffness predictor and (b) a semi-empirical stiffness surface that incorporates both stress state and strain effects, closely aligned with conventional modulus-reduction concepts used in practice.
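The two stiffness baselines described above can be fitted as sketched below. The sketch assumes mean effective stress and strain are supplied as positive arrays in consistent units. The log-space least-squares fit of the power law follows the text. For the hyperbolic surface, `scipy.optimize.curve_fit` with lower bounds is one way to impose the positivity constraints. The study does not name its solver, and the initial guess `p0` is arbitrary. The helper names are hypothetical.

```python
import numpy as np
from scipy.optimize import curve_fit


def fit_power_law(sigma, G):
    """G = a * sigma^b, fitted by least squares on ln G = ln a + b ln sigma."""
    sigma, G = np.asarray(sigma, float), np.asarray(G, float)
    ok = (sigma > 0) & (G > 0)                      # log-space fit needs positive data
    b, ln_a = np.polyfit(np.log(sigma[ok]), np.log(G[ok]), deg=1)
    return float(np.exp(ln_a)), float(b)


def hyperbolic_G(X, a, b, gamma_r):
    """Stress hardening times hyperbolic strain degradation: a*sigma^b / (1 + gamma/gamma_r)."""
    sigma, gamma = X
    return a * sigma ** b / (1.0 + gamma / gamma_r)


def fit_hyperbolic(sigma, gamma, G, p0=(1.0, 0.5, 0.05)):
    """Nonlinear least squares with a, b, gamma_r bounded away from zero."""
    X = np.vstack([np.asarray(sigma, float), np.asarray(gamma, float)])
    lower, upper = [1e-12] * 3, [np.inf] * 3
    params, _ = curve_fit(hyperbolic_G, X, np.asarray(G, float), p0=p0,
                          bounds=(lower, upper))
    return params
```

At $\gamma = \gamma_r$ the fitted hyperbolic surface gives exactly half of the stress-only value $a(\sigma'_m)^b$. This offers a quick sanity check on fitted parameters.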

2.9.3. Damping Ratio Baselines (Empirical Regression Forms)

Widely used normalized damping families (e.g., Darendeli-type formulations) typically require additional soil parameters that are not consistently available in the compiled carbonate database (e.g., PI, OCR, and other metadata). Transparent empirical regressions were therefore adopted that reflect the dominant strain dependence of damping and a secondary stress effect. The strain-only log model is

$$D = c_0 + c_1 \log \gamma,$$

and the strain + confinement log model is

$$D = c_0 + c_1 \log \gamma + c_2 \log \sigma'_m.$$

Parameters were estimated by least squares on the training set, and predictions were clipped to ensure $\hat{D} \ge 0$. These baselines provide a defensible state-of-practice comparator. They are interpretable and consistent with the well-known nonlinear sensitivity of damping to strain amplitude.
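Both damping regressions reduce to ordinary least squares on a small design matrix, as sketched below. The sketch makes several assumptions:
- base-10 logarithms are used (any base gives the same fit up to rescaled coefficients);
- the strain floor is the smallest positive training strain;
- the helper names are hypothetical.

```python
import numpy as np


def _design(gamma, gamma_floor, sigma=None):
    """Columns [1, log10(max(gamma, floor))] plus log10(sigma) for the confinement model."""
    gamma = np.asarray(gamma, float)
    cols = [np.ones_like(gamma), np.log10(np.maximum(gamma, gamma_floor))]
    if sigma is not None:
        cols.append(np.log10(np.asarray(sigma, float)))
    return np.column_stack(cols)


def fit_damping_log(gamma, D, sigma=None, gamma_floor=None):
    """Least-squares fit of D = c0 + c1 log(gamma) [+ c2 log(sigma)] on training data."""
    gamma = np.asarray(gamma, float)
    if gamma_floor is None:
        gamma_floor = float(gamma[gamma > 0].min())  # assumption: smallest positive strain
    coef, *_ = np.linalg.lstsq(_design(gamma, gamma_floor, sigma),
                               np.asarray(D, float), rcond=None)
    return coef, gamma_floor


def predict_damping_log(coef, gamma_floor, gamma, sigma=None):
    """Predict damping and enforce admissibility (D >= 0)."""
    return np.clip(_design(gamma, gamma_floor, sigma) @ coef, 0.0, None)
```

The floor is stored with the coefficients so that prediction reuses the training-set value. This keeps the baseline consistent with the leakage-safe protocol.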

2.10. Explainability Methods (SHAP/PDP/ICE)

To support engineering credibility beyond aggregate error metrics, post hoc explainability analyses were performed using SHAP-based feature attribution together with PDP and ICE trends. The objective was to verify that the leading model exhibits mechanically plausible sensitivities (e.g., stiffness increasing with confinement; modulus reduction and damping increase with strain amplitude) and to identify potential artifacts arising from heterogeneous reporting across laboratory studies.

2.10.1. SHAP Implementation

SHAP values were computed for the best-performing tree-ensemble model (Extra Trees) using the default TreeSHAP implementation provided by the SHAP Python library via "shap.TreeExplainer". TreeSHAP was selected because it computes exact SHAP values efficiently for tree ensembles. It also provides additive attributions that sum to the model output relative to an expected value.

2.10.2. Background (Baseline) Dataset

The SHAP expected value (baseline prediction) was defined using the training partition only. This background choice was aligned with the leakage-safe workflow, ensuring that the reference distribution for expectation values was not informed by held-out samples.

2.10.3. Evaluation Split for SHAP

SHAP attributions were computed on the held-out test set (rather than the training data) to avoid interpreting patterns that could reflect training memorization and to provide a more realistic view of feature contributions under generalization.

2.10.4. Interpretation and Reporting

Global attribution patterns were summarized using SHAP beeswarm (summary) plots, where features are ordered by mean absolute SHAP value and the horizontal spread reflects contribution magnitude. Color indicates feature value, supporting interpretation of whether high/low values tend to increase or decrease predictions. These “SHAP-style summaries” were used as a diagnostic complement to performance metrics and were interpreted alongside mechanistic expectations.

2.10.5. PDP/ICE Generation

PDP and ICE plots were generated from the fitted end-to-end pipeline (including preprocessing) and evaluated over the observed feature ranges. PDP curves summarize average marginal effects, while ICE curves show instance-level heterogeneity around the average trend. These plots were used to cross-check monotonicity and curvature expectations and to identify state-dependent variability. The expected trends include $G$ increasing with confining stress and decreasing with $\gamma$, and damping increasing with $\gamma$.
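PDP and ICE curves can be obtained with `sklearn.inspection.partial_dependence` using `kind="both"`. This returns the averaged (PDP) and per-instance (ICE) curves on a common grid. The sketch below applies it to a synthetic linear stand-in model. The `monotonic_share` diagnostic, which reports the fraction of curves that are monotone in the expected direction, is a hypothetical helper and not the study's procedure.

```python
import numpy as np
from sklearn.inspection import partial_dependence
from sklearn.linear_model import LinearRegression


def pdp_ice(model, X, feature, grid_resolution=20):
    """Return (grid, PDP curve, ICE curves) for one feature via brute-force evaluation."""
    res = partial_dependence(model, X, features=[feature], kind="both",
                             grid_resolution=grid_resolution, method="brute")
    # 'grid_values' in scikit-learn >= 1.3; older releases used 'values'.
    grid = res["grid_values"][0] if "grid_values" in res else res["values"][0]
    return grid, res["average"][0], res["individual"][0]


def monotonic_share(curves, increasing=True, tol=0.0):
    """Fraction of curves that are weakly monotone in the expected direction."""
    diffs = np.diff(np.atleast_2d(curves), axis=1)
    ok = diffs >= -tol if increasing else diffs <= tol
    return float(ok.all(axis=1).mean())


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.uniform(0, 1, size=(100, 2))
    y = 2.0 * X[:, 0] - X[:, 1]          # synthetic stand-in, not carbonate data
    model = LinearRegression().fit(X, y)
    grid, avg, ice = pdp_ice(model, X, feature=0)
    print(monotonic_share(ice, increasing=True))
```

For a tree ensemble, ICE curves are step-like, so a small positive `tol` may be needed to avoid flagging flat segments as violations.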

3. Results

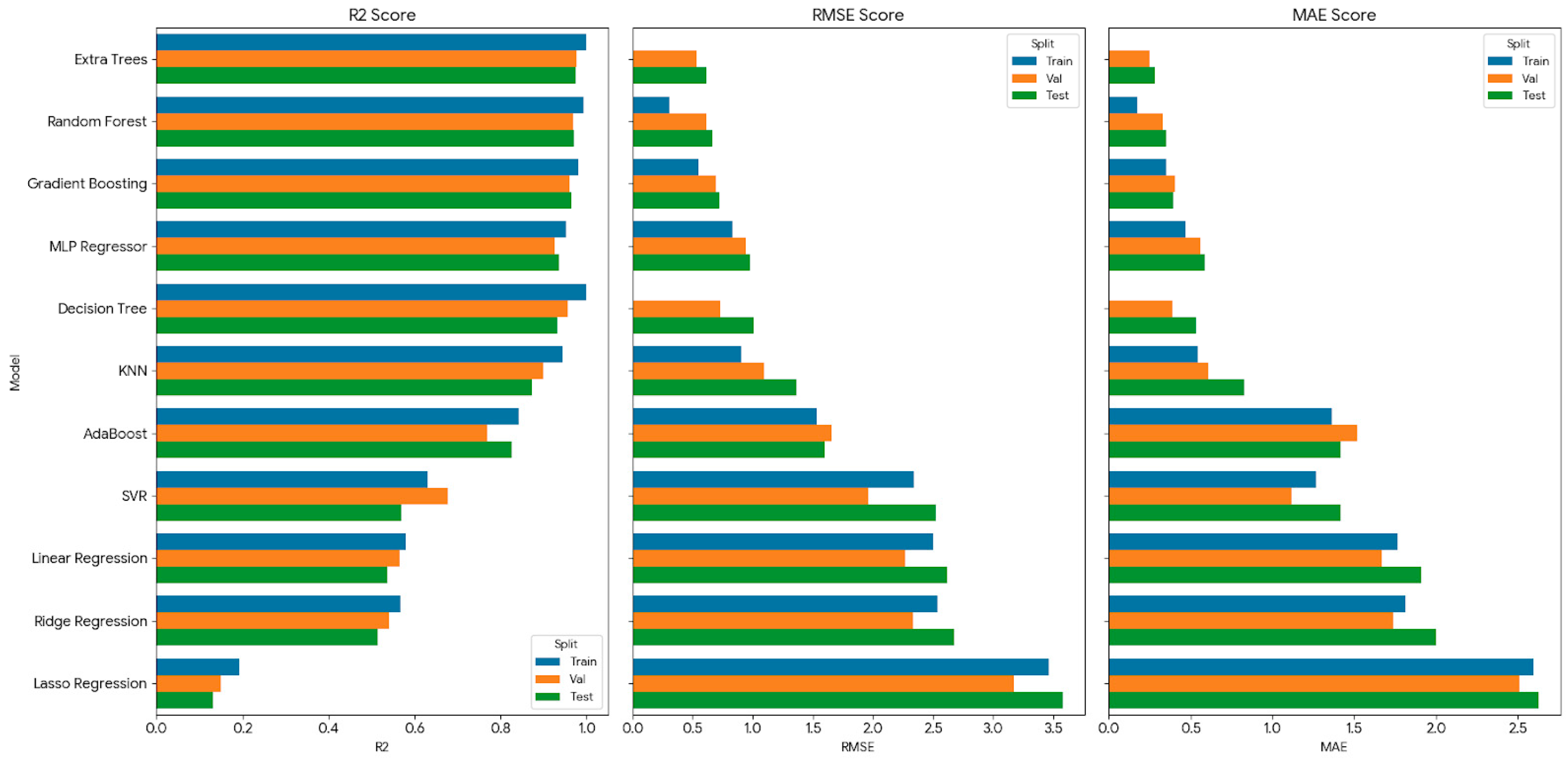

Figure 3 and Figure 4 compare the predictive performance of the benchmarked models for the two targets, $D$ and $G$, respectively. Both figures report $R^2$, RMSE, and MAE for the training, validation, and test splits. For transparency and reproducibility, Table 4 reports the optimal hyperparameter settings identified for each algorithm, separately for $D$ and $G$.

A consistent ranking emerges across both targets: ensemble-tree models (Extra Trees and Random Forest, followed closely by Gradient Boosting) deliver the highest accuracy and the most stable generalization, as evidenced by the close agreement between validation and test metrics. Single Decision Trees achieve very strong training scores but exhibit a clearer drop on validation/test, indicating increased susceptibility to overfitting. kNN and the MLP are competitive but show greater sensitivity to the split and typically slightly higher errors than the leading ensembles. Linear and regularized linear models underperform for D, reflecting the strongly nonlinear and interaction-driven nature of damping, while they capture a larger fraction of the global trend in G but remain consistently less accurate than nonlinear ensembles. SVR is the least reliable approach in this benchmark, most notably for shear modulus, showing poor stability and inflated error relative to alternative nonlinear learners. In the following subsections, model comparison is discussed primarily in terms of random-split test-set performance (unseen records drawn from the pooled dataset). Transfer to unseen soils is assessed separately through soil-wise GroupKFold, with results summarized in Table S2.
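For reference, the three metrics reported per split can be written out explicitly. The sketch assumes plain Python lists and adds a hypothetical `val_test_gap` helper that quantifies the validation-to-test agreement used above as the stability criterion. The numbers are illustrative only.

```python
from math import sqrt
from statistics import fmean


def regression_metrics(y_true, y_pred):
    """R^2 (coefficient of determination), RMSE, and MAE for one split."""
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mean_true = fmean(y_true)
    ss_res = sum(r * r for r in residuals)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "R2": 1.0 - ss_res / ss_tot,
        "RMSE": sqrt(ss_res / len(residuals)),
        "MAE": fmean(abs(r) for r in residuals),
    }


def val_test_gap(val, test):
    """Absolute validation-to-test difference per metric (small gap = stable model)."""
    return {k: abs(val[k] - test[k]) for k in val}


val = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
test = regression_metrics([2.0, 4.0, 6.0], [2.2, 3.9, 5.8])
gap = val_test_gap(val, test)
```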

3.1. Damping Ratio Prediction (D)

The damping-ratio benchmark (Figure 3) shows a clear dominance of tree ensembles: under the random split, Extra Trees and Random Forest achieve the strongest predictive accuracy on the held-out test set (Table S2; Figure 3). Under soil-wise GroupKFold, however, performance decreases substantially (Table S2), indicating limited transferability to unseen soils with the current descriptor set.
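Soil-wise grouping can be sketched as follows, assuming each record carries a soil identifier. In scikit-learn this corresponds to `GroupKFold(n_splits=k).split(X, y, groups=soil_ids)`. The stdlib version below uses a greedy size-balancing heuristic chosen for illustration; it need not reproduce scikit-learn's exact fold assignment. The property that matters is that no soil appears in both the training and test indices of a fold.

```python
from collections import Counter


def group_k_fold(groups, n_splits):
    """Yield (train_idx, test_idx) so that each soil (group) lands in exactly one
    test fold and never appears in training and test at the same time."""
    sizes = Counter(groups)
    if len(sizes) < n_splits:
        raise ValueError("need at least as many distinct soils as folds")
    fold_of, load = {}, [0] * n_splits
    # Largest soils first, each assigned to the currently lightest fold.
    for g, n in sorted(sizes.items(), key=lambda t: (-t[1], str(t[0]))):
        k = min(range(n_splits), key=lambda f: load[f])
        fold_of[g] = k
        load[k] += n
    for k in range(n_splits):
        test = [i for i, g in enumerate(groups) if fold_of[g] == k]
        train = [i for i, g in enumerate(groups) if fold_of[g] != k]
        yield train, test


soils = ["A", "A", "A", "B", "B", "C", "D", "D"]  # hypothetical soil IDs per record
folds = list(group_k_fold(soils, 3))
```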

Gradient Boosting follows closely, offering similarly high performance with slightly higher errors than the two leading ensembles. Importantly, for these top models the validation and test metrics remain close, indicating strong robustness and low sensitivity to the particular split.

Among non-ensemble models, kNN and MLP perform well but remain modestly inferior to the leading ensembles, which is consistent with damping being a high-scatter target influenced by multiple dissipation mechanisms and experimental interpretation variability. Decision Tree achieves extremely high training performance but shows a more noticeable decline on validation/test, consistent with overfitting when a single tree is allowed to memorize local irregularities in heterogeneous laboratory data.

In contrast, linear and regularized linear regressions (Linear/Ridge/Lasso) underperform substantially for damping, with markedly lower R² and much larger RMSE/MAE than nonlinear learners. This confirms that damping response in the compiled carbonate dataset is not well captured by additive linear trends and is strongly influenced by nonlinear interactions among stress level, strain amplitude, and state/gradation descriptors. SVR also remains weaker than the best nonlinear models and does not offer a competitive accuracy–stability trade-off for damping under the tested configuration.

For damping-ratio modeling in carbonate sands, the results support using Extra Trees or Random Forest as default surrogates, because they provide the best combination of accuracy and stability across Train/Val/Test. Single-tree models should be avoided for deployment unless heavily constrained/pruned, and linear models are not recommended for damping prediction in this dataset.

3.2. Shear Modulus Prediction (G)

For G (Figure 4), the benchmark again favors ensemble trees, with Extra Trees and Random Forest achieving the highest test-set accuracy under the random split. However, under soil-wise GroupKFold, performance decreases substantially (Table S2), indicating limited transferability to unseen soils using the current descriptor set.

Gradient Boosting remains highly competitive, followed by Decision Tree, kNN, and MLP, all of which achieve strong predictive performance overall. As with damping, the key signature of reliable models is that the validation and test bars remain close, indicating stable generalization rather than “lucky split” performance.

A notable difference from damping is that linear and regularized linear models (Linear/Ridge/Lasso) perform much better for G than for D. Their R² values are comparatively high, indicating that shear modulus contains a stronger “global trend” structure that linear models can partially capture. However, their errors (RMSE/MAE) remain consistently higher than those of ensemble trees, implying that while modulus has an overall smooth dependence on the predictors, accurate reproduction of hardening/degradation behavior still benefits from nonlinear interaction learning.

The clearest failure mode for this target is SVR, which shows very low explained variance (low R²) and substantially inflated RMSE/MAE compared with all other methods. This indicates that SVR, under the present feature scaling and hyperparameter configuration, does not provide a stable approximation of the modulus response surface. Practically, this reinforces an important deployment lesson: even powerful models can become unreliable on heterogeneous geotechnical datasets without careful tuning and, in some cases, target transformation (e.g., log-scaling of G) and kernel parameter optimization.

For shear modulus prediction, Extra Trees and Random Forest again provide the strongest deployable performance, while linear baselines can serve as quick screening tools but should not be relied upon where high fidelity is required. SVR is not recommended for this dataset in its current configuration. SVR performance is likely influenced by the wide dynamic range and heteroscedasticity of G in the compiled RC/CTX database and by inter-study heterogeneity, which can reduce the suitability of a single global kernel scale. Although standardized feature scaling was applied, RBF-SVR can remain highly sensitive to the kernel bandwidth (the RBF parameter γ, not the shear strain), the regularization parameter (C), and the ε-insensitive margin (ε), and improved performance is often observed when broader C/γ searches, alternative kernels, and/or target transformations (e.g., log G) are explored in a problem-specific manner. The present benchmark adopted a consistent tuning budget and a standardized search space across all models (Section 2.8); under this controlled setting, ensemble-tree methods exhibited superior robustness and more stable validation-to-test behavior on G [31,32,33].
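Target transformation of the kind suggested for SVR can be wrapped around any regressor: fit on log G and back-transform predictions with exp. scikit-learn provides this as `sklearn.compose.TransformedTargetRegressor(regressor=..., func=np.log, inverse_func=np.exp)`. The stdlib sketch below shows only the mechanics, with a hypothetical one-feature least-squares model standing in for RBF-SVR; it does not reproduce the benchmark's tuning.

```python
from math import exp, log
from statistics import fmean


class LinearFit1D:
    """Ordinary least squares y = a + b*x for one feature (placeholder base model)."""

    def fit(self, x, y):
        mx, my = fmean(x), fmean(y)
        sxx = sum((xi - mx) ** 2 for xi in x)
        self.b = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
        self.a = my - self.b * mx
        return self

    def predict(self, x):
        return [self.a + self.b * xi for xi in x]


class LogTargetRegressor:
    """Fit the base model on log(y) and back-transform its predictions with exp()."""

    def __init__(self, base):
        self.base = base

    def fit(self, x, y):
        if min(y) <= 0:
            raise ValueError("log transform requires strictly positive targets")
        self.base.fit(x, [log(v) for v in y])
        return self

    def predict(self, x):
        return [exp(v) for v in self.base.predict(x)]


# Synthetic target spanning several orders of magnitude (hypothetical).
x = [0.0, 1.0, 2.0, 3.0]
y = [exp(1.0 + 2.0 * v) for v in x]
model = LogTargetRegressor(LinearFit1D()).fit(x, y)
```

Back-transforming a prediction made in log space estimates something closer to the conditional median than the conditional mean of G, so a small bias can appear when log-residuals are large.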

3.3. Comparative Discussion and Practical Takeaway

Across both targets, ensemble trees deliver the best accuracy and the most consistent validation-to-test behavior. This outcome is mechanistically plausible: carbonate-sand dynamics depend on nonlinear interactions (e.g., strain-dependent response modulated by confinement and density state), and ensembles naturally represent interaction structure and regime-like behavior while remaining robust to noise.

From a deployment perspective, Extra Trees is the most reliable default model and Random Forest is a very close second, offering similarly strong accuracy and generalization. Gradient Boosting provides a strong alternative with competitive performance and a favorable bias–variance balance. kNN and MLP can also achieve good results, but they are typically less robust in practice because performance is more sensitive to feature scaling, hyperparameter tuning, and the local density of training data. In contrast, Linear/Ridge/Lasso are not recommended for damping prediction because they underfit the strongly nonlinear damping response, while SVR, in its current configuration, is not recommended for shear modulus prediction due to poor stability and inflated errors.

3.4. Physics-Consistency and Sensitivity Analysis

High predictive accuracy (high R² and low error metrics) is a necessary condition for deploying a surrogate model, but it is not sufficient for engineering adoption. A deployable model must also demonstrate mechanically plausible relationships rather than exploiting spurious correlations in heterogeneous experimental datasets. Accordingly, the physics-consistency auditing methodology and results are summarized in Figure 5 for the best-performing model (Extra Trees) using complementary interpretability views: global sensitivity/importance, global marginal effects through PDP (Figure 6), and instance-level behavior through ICE (Figure 7) and SHAP-style summaries (Figure 8). These diagnostics provide a targeted check that the learned relationships reproduce expected soil-dynamics behavior for carbonate sands and remain credible for interpolation within the training domain [13].

3.4.1. Drivers of Stiffness: Shear Modulus

Among the predictors, the SHAP summary (Figure 8a) provides a direct relative comparison (features ranked by mean |SHAP|). Effective confining stress ranks as the most influential feature and exhibits the largest SHAP impact magnitude on G, with higher confinement producing strongly positive contributions to predicted stiffness. This aligns with established soil-dynamics behavior, in which small-strain stiffness follows stress hardening commonly expressed in power-law form [4]. The PDP results show a clear increase of G with effective confining stress (Figure 6a), while the strain-related PDP displays modulus reduction: high stiffness at very small strains followed by degradation as γ increases (Figure 6a). Density-state proxies (notably

, and to a lesser extent

and

) also exhibit meaningful influence, which is plausible for calcareous sands where packing potential, fabric, and crushability can modify the stiffness–stress response. The ICE plots confirm that, although the average trends are mechanically sensible, the instance-level responses vary in slope and curvature across samples (

Figure 7a), indicating state-dependent stiffness behavior rather than a single oversimplified dependence.

3.4.2. Drivers of Energy Dissipation: Damping Ratio

For damping, the audit indicates a different governing structure. In the SHAP summary (Figure 8b), shear strain amplitude γ ranks as the most influential feature (highest mean |SHAP|) and shows the widest SHAP spread, with secondary contributions from confinement and gradation-related descriptors (Figure 6b and Figure 8b). The beeswarm summary shows that higher γ drives consistently positive contributions (higher damping), while low-strain conditions cluster near lower damping predictions (Figure 8b). The PDP exhibits a strongly nonlinear increase in damping with strain amplitude, consistent with the expected growth of hysteretic energy dissipation as cyclic loops enlarge (Figure 6b). The confinement-related PDP generally decreases (Figure 6b), indicating reduced damping at higher effective confining stress, which is mechanically plausible because increased effective stress stabilizes contacts and suppresses micro-slip at a given strain level. ICE plots further show heterogeneity in damping growth rates across instances (Figure 7b), consistent with interaction effects in which strain sensitivity is modulated by state variables such as density and gradation.
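The link between loop enlargement and damping can be made concrete with the standard loop-based definition D = W_D/(4π W_S), where W_D is the area enclosed by one stress–strain loop and W_S = ½ τ_c γ_c is the strain energy at the loop tip. The sketch below assumes a single closed, uniformly sampled loop. The synthetic linear-viscoelastic loop (stress leading strain by a phase δ) is hypothetical and is chosen because its damping is known in closed form, D = ½ tan δ. It does not reproduce the RC or CTX data reduction used in the compiled studies.

```python
from math import pi, sin, tan


def loop_damping(strain, stress):
    """Damping ratio D = W_D / (4*pi*W_S) from one closed stress-strain loop.

    W_D: enclosed loop area via the shoelace formula over the sampled points.
    W_S: 0.5 * tau * gamma at the sample with maximum strain (loop tip).
    """
    n = len(strain)
    area2 = sum(strain[i] * stress[(i + 1) % n] - strain[(i + 1) % n] * stress[i]
                for i in range(n))
    w_d = abs(area2) / 2.0
    k = max(range(n), key=lambda i: strain[i])
    w_s = 0.5 * strain[k] * stress[k]
    return w_d / (4.0 * pi * w_s)


# Synthetic loop (hypothetical values): stress leads strain by phase delta.
n, gamma_a, tau_a, delta = 2000, 1e-4, 5.0, 0.1
t = [2 * pi * i / n for i in range(n)]
gamma = [gamma_a * sin(ti) for ti in t]
tau = [tau_a * sin(ti + delta) for ti in t]
D = loop_damping(gamma, tau)  # close to 0.5 * tan(delta) for this idealized loop
```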

3.4.3. Interpretation and Engineering Implications

Taken together, the audit supports that the Extra Trees model is not only accurate but also mechanics-consistent in how it separates governing controls: stiffness is primarily stress-controlled (effective confining stress dominates G), while damping is primarily strain-controlled (γ dominates D) (Figure 6, Figure 7 and Figure 8). These outcomes support using the model as an interpolation surrogate within the database domain, with standard range checks on effective confining stress and shear strain amplitude. A focused discussion of test-set generalization and of how these behaviors translate under held-out evaluation is presented later (to avoid duplicating the split-wise interpretation already summarized in Figure 3 and Figure 4).

3.5. Baseline Comparison Results

Representative empirical and semi-empirical baselines were calibrated using the training partitions only (seed = 42). The fitted baseline equations and their predictive performance on the held-out test set are summarized in Table 5 for both shear modulus G and damping ratio D. These baselines provide a state-of-practice reference for evaluating the added value of the ML surrogates.
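The stiffness-baseline calibration can be sketched as follows. Because Table 5 is not restated here, the functional forms are assumptions: a stress-only power law G = A (σ′/p_a)^n and a Hardin–Drnevich-type hyperbolic extension G = A (σ′/p_a)^n / (1 + γ/γ_r), where σ′ denotes effective confining stress and p_a = 101.325 kPa. Both are fitted on the training indices only, with the reference strain γ_r profiled over a grid. `seeded_split` uses Python's `random.Random(42)` and will not reproduce a partition made by another library with the same seed. Data are synthetic.

```python
import random
from math import exp, log
from statistics import fmean

P_A = 101.325  # reference pressure in kPa (assumes stresses are given in kPa)


def ols_line(x, y):
    """Least-squares intercept and slope of y = c0 + c1*x."""
    mx, my = fmean(x), fmean(y)
    c1 = sum((u - mx) * (v - my) for u, v in zip(x, y)) / sum((u - mx) ** 2 for u in x)
    return my - c1 * mx, c1


def fit_power_law(stress, g):
    """Stress-only baseline G = A*(stress/P_A)**n, fitted in log-log space."""
    log_a, n = ols_line([log(s / P_A) for s in stress], [log(v) for v in g])
    return exp(log_a), n


def hyperbolic(a, n, gamma_r, stress, strain):
    return a * (stress / P_A) ** n / (1.0 + strain / gamma_r)


def fit_hyperbolic(stress, strain, g, gamma_r_grid):
    """Profile gamma_r over a grid, refit A and n in log space for each candidate,
    and keep the candidate with the lowest log-space sum of squared errors."""
    best = None
    for gr in gamma_r_grid:
        g0 = [v * (1.0 + e / gr) for v, e in zip(g, strain)]  # implied small-strain G
        a, n = fit_power_law(stress, g0)
        sse = sum((log(v) - log(hyperbolic(a, n, gr, s, e))) ** 2
                  for v, s, e in zip(g, stress, strain))
        if best is None or sse < best[0]:
            best = (sse, a, n, gr)
    return best[1:]


def seeded_split(n_rows, test_frac=0.2, seed=42):
    """Reproducible shuffled split into training and held-out index lists."""
    idx = list(range(n_rows))
    random.Random(seed).shuffle(idx)
    cut = round(n_rows * (1.0 - test_frac))
    return idx[:cut], idx[cut:]


# Hypothetical noise-free data: A = 50 MPa (expressed in kPa), n = 0.5, gamma_r = 1e-3.
rows = [(s, e) for s in (50.0, 100.0, 200.0, 400.0) for e in (1e-5, 1e-4, 1e-3)]
g_obs = [hyperbolic(5.0e4, 0.5, 1e-3, s, e) for s, e in rows]
train, test = seeded_split(len(rows))
params_pl = fit_power_law([rows[i][0] for i in train], [g_obs[i] for i in train])
params_hyp = fit_hyperbolic([rows[i][0] for i in train], [rows[i][1] for i in train],
                            [g_obs[i] for i in train], [1e-4, 3e-4, 1e-3, 3e-3])
```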

As summarized in Table 5, the stress-only power-law stiffness baseline shows limited predictive capability on the held-out test set, whereas the semi-empirical stress–strain hyperbolic form substantially improves stiffness prediction (higher R² and lower RMSE, in Pa) by explicitly incorporating strain-dependent degradation. For damping, the test-set R² and RMSE of the log-strain baseline are reported in Table 5, and adding confinement yields a modest improvement in both metrics, confirming that damping is primarily strain-controlled with secondary stress-state effects.
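The damping baselines are linear in their coefficients and can therefore be calibrated by ordinary least squares. Assuming the forms D = a + b·log10 γ and D = a + b·log10 γ + c·log10(σ′/p_a) (the exact Table 5 equations are not restated here), the sketch below solves the normal equations directly. The data are synthetic, with a balanced strain–stress design.

```python
from math import log10

P_A = 101.325  # reference pressure in kPa (assumes stresses are given in kPa)


def least_squares(rows, y):
    """Solve the normal equations (X^T X) beta = X^T y by Gauss-Jordan elimination
    with partial pivoting (adequate for the 2-3 coefficients used here)."""
    p = len(rows[0])
    aug = [[sum(r[i] * r[j] for r in rows) for j in range(p)]
           + [sum(r[i] * t for r, t in zip(rows, y))] for i in range(p)]
    for c in range(p):
        piv = max(range(c, p), key=lambda k: abs(aug[k][c]))
        aug[c], aug[piv] = aug[piv], aug[c]
        for k in range(p):
            if k != c:
                f = aug[k][c] / aug[c][c]
                aug[k] = [u - f * v for u, v in zip(aug[k], aug[c])]
    return [aug[i][p] / aug[i][i] for i in range(p)]


def fit_log_strain(strain, damping):
    """Baseline D = a + b*log10(strain)."""
    return least_squares([[1.0, log10(e)] for e in strain], damping)


def fit_log_strain_stress(strain, stress, damping):
    """Baseline D = a + b*log10(strain) + c*log10(stress / P_A)."""
    rows = [[1.0, log10(e), log10(s / P_A)] for e, s in zip(strain, stress)]
    return least_squares(rows, damping)


# Hypothetical noise-free data (D in %): a = 20, b = 3, c = -1.5.
strain = [e for _ in range(4) for e in (1e-5, 1e-4, 1e-3)]
stress = [s for s in (50.0, 100.0, 200.0, 400.0) for _ in range(3)]
damping = [20.0 + 3.0 * log10(e) - 1.5 * log10(s / P_A) for e, s in zip(strain, stress)]
coef_strain_only = fit_log_strain(strain, damping)
coef_with_stress = fit_log_strain_stress(strain, stress, damping)
```

Because the synthetic design is balanced, the strain-only fit recovers the same strain slope; with real, unbalanced data, omitted confinement effects would partly load onto the intercept and slope.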

When compared with the ML models benchmarked in this study (Figure 3 and Figure 4), the leading ensemble-tree surrogates (Extra Trees and Random Forest) provide a clear improvement in predictive fidelity and robustness. In particular, the ML models learn multi-variable nonlinearities and interaction effects beyond the fixed functional forms of the baselines, yielding higher explained variance and lower prediction error on the held-out test set while maintaining stable validation-to-test behavior. This comparison demonstrates that the proposed ML-driven workflow provides measurable value beyond traditional empirical and semi-empirical formulations, especially for heterogeneous carbonate-sand datasets where stiffness and damping are governed by coupled effects of stress state, strain amplitude, density state, gradation, and saturation condition. Future work is recommended to benchmark the proposed baselines against non-calcareous damping curves through the application of machine learning methods [34] or developing.

3.6. Limitations and Recommended Future Work

Although the benchmarking framework provides clear evidence that ensemble-tree methods are strong candidates for tabular carbonate-sand dynamics, several limitations should be acknowledged to support safe and transparent deployment. First, the models are reliable only within the domain represented by the training data. Predictions outside the covered ranges of effective confining stress, shear strain amplitude, density indices, and gradation parameters may lead to unphysical extrapolation. For engineering use, range checks should be implemented, and out-of-domain inputs should trigger warnings and/or conservative fallback estimates.
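A minimal sketch of such a range check, assuming a per-predictor bounding box derived from the training data. Predictor names, the model, and the fallback are hypothetical.

```python
import warnings


def fit_ranges(X, names):
    """Per-predictor [min, max] over the training data."""
    return {name: (min(row[j] for row in X), max(row[j] for row in X))
            for j, name in enumerate(names)}


def out_of_domain(x, ranges, names):
    """Names of the predictors in x that lie outside the training ranges."""
    return [name for j, name in enumerate(names)
            if not ranges[name][0] <= x[j] <= ranges[name][1]]


def guarded_predict(predict, x, ranges, names, fallback=None):
    """Warn on out-of-domain inputs and optionally switch to a conservative fallback."""
    flagged = out_of_domain(x, ranges, names)
    if flagged:
        warnings.warn("out-of-domain predictors: " + ", ".join(flagged))
        if fallback is not None:
            return fallback(x)
    return predict(x)


names = ["stress_kPa", "strain", "density_proxy"]  # hypothetical predictor names
ranges = fit_ranges([[50.0, 1e-5, 0.4], [400.0, 1e-3, 0.9]], names)
```

A bounding box only flags values outside each marginal range. Combinations that are individually in range but absent from the data (e.g., high stress with a very loose state) would need a joint check, such as a nearest-neighbour distance threshold.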

Second, the compiled datasets integrate results from different laboratories and experimental campaigns, introducing heterogeneity in specimen preparation, saturation procedures, instrumentation, and interpretation methods (particularly for damping). While ensemble models are relatively robust to noise, unresolved inter-laboratory variability can still influence the learned mapping. In addition, the database merges RC and CTX results, which differ in loading frequency range and damping evaluation; therefore, method-related bias may be partially embedded in the compiled targets. Because test-type and frequency metadata were not consistently available at the record level, RC/CTX-stratified performance and explicit frequency-dependent modeling were not performed. Future database upgrades should therefore include richer metadata (e.g., preparation protocol, saturation method, test apparatus, and damping computation approach) to enable stratified modeling and more explicit control of confounding factors.

Third, dynamic soil behavior is inherently curve-based (continuous G–γ and D–γ relationships), whereas the present benchmark evaluates pointwise predictions using pointwise metrics. A dedicated curve-level assessment, such as integrated absolute error over strain, monotonicity checks (e.g., G non-increasing with increasing γ beyond the small-strain regime), and smoothness/admissibility constraints, would provide a stronger measure of design relevance. Curve-level validation against measured G–γ and D–γ series was not performed in this study; it is recommended for future work to support direct use in nonlinear site-response and offshore design workflows.
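The curve-level checks mentioned above can be sketched for one measured series. The sketch assumes positive strain values and integrates the error over log10 γ with the trapezoidal rule (a common choice for modulus-reduction and damping curves, assumed here). The small-strain threshold is a user choice, and the curves are hypothetical.

```python
from math import log10


def integrated_abs_error(strain, measured, predicted):
    """Trapezoidal integral of |measured - predicted| over log10(strain)."""
    pts = sorted(zip(strain, measured, predicted))
    x = [log10(e) for e, _, _ in pts]
    err = [abs(m - p) for _, m, p in pts]
    return sum(0.5 * (err[i] + err[i + 1]) * (x[i + 1] - x[i]) for i in range(len(x) - 1))


def non_increasing_beyond(strain, values, small_strain, tol=0.0):
    """True if values never increase (beyond tol) for strains >= small_strain."""
    pts = [v for e, v in sorted(zip(strain, values)) if e >= small_strain]
    return all(b <= a + tol for a, b in zip(pts, pts[1:]))


e = [1e-5, 1e-4, 1e-3]                   # shear strain amplitudes (hypothetical)
g_ratio_measured = [1.0, 0.9, 0.5]       # measured G/Gmax (hypothetical)
g_ratio_predicted = [1.0, 0.8, 0.5]      # surrogate G/Gmax (hypothetical)
iae = integrated_abs_error(e, g_ratio_measured, g_ratio_predicted)
admissible = non_increasing_beyond(e, g_ratio_predicted, small_strain=1e-5)
```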

Fourth, this study focuses on standard tabular predictors that are commonly available, improving near-term deployability, but it does not explicitly capture microstructure descriptors (e.g., particle angularity, intragranular porosity, or crushability indices) that may govern deposit-to-deposit variability in carbonate sands. Incorporating low-cost morphology proxies or image-derived descriptors is a promising direction to improve transferability across carbonate provinces.

Finally, the benchmark reports deterministic predictions; however, uncertainty quantification is important for performance-based design. Future work should incorporate prediction intervals using bootstrap ensembles, quantile regression forests, or conformal prediction so that uncertainty can be propagated into site-response and soil–structure interaction analyses.
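Of the options listed, split conformal prediction is the simplest to sketch: absolute residuals on a calibration set disjoint from the training data give a constant half-width with approximately (1 − α) marginal coverage, assuming exchangeability between calibration records and new records. The calibration values below are hypothetical.

```python
from math import ceil


def conformal_halfwidth(cal_true, cal_pred, alpha=0.1):
    """Split-conformal half-width: the ceil((n + 1) * (1 - alpha))-th smallest
    absolute calibration residual (infinite if the calibration set is too small)."""
    scores = sorted(abs(t - p) for t, p in zip(cal_true, cal_pred))
    n = len(scores)
    k = ceil((n + 1) * (1 - alpha))
    if k > n:
        return float("inf")
    return scores[k - 1]


def conformal_interval(pred, halfwidth):
    """Symmetric prediction interval around a point prediction."""
    return pred - halfwidth, pred + halfwidth


cal_true = [float(i) for i in range(10)]
cal_pred = [t + 0.1 * (i + 1) for i, t in enumerate(cal_true)]
hw = conformal_halfwidth(cal_true, cal_pred, alpha=0.2)
```

Under soil-wise transfer the exchangeability assumption is questionable, and the strongly heteroscedastic G may favor residuals normalized by a spread estimate or computed on log G; both are design choices rather than results of this study.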

4. Conclusions

This study developed an ML-driven, leakage-aware benchmarking framework for predicting the dynamic response parameters of biogenic calcareous sands using standard geotechnical descriptors. Two consolidated experimental databases (one for G and one for D) were curated from resonant column and cyclic triaxial testing, covering a wide range of effective confining stress, density state, and gradation conditions relevant to offshore and coastal engineering. Eleven regression algorithms were evaluated under a consistent preprocessing and evaluation workflow, using R², RMSE, and MAE across training/validation/test splits.

Across both targets, ensemble-tree models, particularly Extra Trees and Random Forest, consistently delivered the strongest accuracy and the most stable performance under random-split evaluation, making them strong interpolation surrogates within the compiled database domain. Under strict soil-wise grouped validation, performance drops substantially, indicating that cross-soil transfer remains challenging and likely requires richer deposit-specific descriptors and/or more complete test metadata.

Beyond accuracy, explainability-based diagnostics supported engineering credibility. The interpretability audit confirms that the leading model internalizes mechanically plausible sensitivities: shear modulus is governed primarily by effective confinement and degrades with increasing strain amplitude (γ), while damping ratio is driven predominantly by strain amplitude with secondary modulation by stress state and gradation. This separation of governing controls provides confidence that the surrogate captures fundamental dynamic trends rather than purely statistical artifacts.

Overall, the proposed framework supports deployable decision support for carbonate environments: it enables rapid estimation of dynamic parameters, fast generation of site-conditioned G–γ and D–γ curves within the training domain, and more efficient planning of targeted laboratory programs by identifying where additional testing is likely to reduce uncertainty most.