This section outlines the architecture, algorithms, dataset, and experimental setup used to develop and evaluate the proposed online IDS for smart healthcare networks.

3.1. Online Intrusion Detection Architecture for Smart Healthcare Networks

The proposed architecture (Figure 2) for online intrusion detection in smart healthcare networks integrates advanced ML methods, robust network monitoring strategies, and real-time threat response mechanisms to secure IoMT.

At the foundational level, the architecture incorporates a diverse range of medical IoT devices [30]. These include general health monitoring devices, such as glucose meters, blood pressure monitors, ECG devices, and thermometers, which frequently transmit patient health metrics. They also include wearable and personal monitoring devices, such as smartwatches, pulse oximeters, fall detection systems, and other devices that continuously monitor a patient’s physiological state. Clinical diagnostic and therapeutic equipment, such as ultrasound scanners, infusion pumps, and X-ray machines, which handle sensitive and substantial data volumes, forms a further critical component. These devices communicate over diverse protocols, including MQTT [31], Bluetooth [32], and Wi-Fi [33], which calls for a versatile and robust security framework to ensure secure data transmission.

The network communication infrastructure is designed to support secure and efficient data transfer among IoMT devices, patient monitoring systems, healthcare provider servers, and remote data repositories. Core components such as routers and switches manage and direct data flow within the healthcare facility’s local network and between that network and the external internet. A crucial component of this infrastructure is the Network TAP (Test Access Point), which enables passive interception and analysis of network traffic without affecting operational functionality. Wireshark complements the TAP by providing detailed packet-level inspection, which supports real-time monitoring and analysis.

The intrusion detection and cybersecurity response layer operates in real time, using a machine learning model based on Leveraging Bagging to detect and mitigate potential threats rapidly. The model continuously analyzes streaming data to identify anomalies indicative of various cyberattacks, such as unauthorized access attempts, malware infections, data breaches, denial-of-service attacks, and other malicious activities. When an anomaly is detected, the system generates an immediate alert to support rapid intervention, protecting healthcare services and sensitive patient information. AI-driven decision-making components further automate the response, reducing response latency and limiting potential damage.

Built-in threat modeling strategies allow the architecture to identify and anticipate intrusion patterns specific to healthcare networks, such as unauthorized data access, device hijacking, and network service disruptions. The architecture offers several advantages: real-time detection of and response to security incidents, scalability to accommodate expanding IoMT ecosystems, support for multiple communication protocols, and detailed network visibility through tools such as the Network TAP and Wireshark. By implementing this architecture, healthcare networks can strengthen patient data security, maintain continuous service delivery, and respond effectively to evolving cybersecurity threats.

3.2. Proposed Online Intrusion Detection Framework

The core component of our proposed intrusion detection framework is the Leveraging Bagging classifier, an ensemble-based model specifically tailored for online learning in dynamic environments such as smart healthcare networks. Traditional batch learning algorithms struggle in such settings because they cannot adapt to evolving data streams or concept drift without retraining. In contrast, online ensemble methods such as Leveraging Bagging offer robustness, adaptability, and improved generalization by maintaining a diverse collection of continuously updated base learners.

3.2.1. Definition of the Leveraging Bagging Classifier

The Leveraging Bagging algorithm builds upon the standard Online Bagging approach, in which each base classifier is trained on each incoming instance a Poisson(1)-distributed number of times, by increasing the Poisson rate so that each base classifier is typically updated multiple times per instance [12]. Let $\mathcal{E} = \{h_1, h_2, \dots, h_M\}$ denote the ensemble of M base classifiers, each trained incrementally. For every incoming data instance $(x_t, y_t)$ at time t, each base learner $h_m$ receives the instance $k_m \sim \mathrm{Poisson}(\lambda)$ times, where $\lambda$ is a tunable hyperparameter that sets both the mean and the variance of the replication count. The Poisson distribution is defined as follows:

$$P(k_m = k) = \frac{\lambda^{k} e^{-\lambda}}{k!}, \qquad k = 0, 1, 2, \dots \tag{1}$$

This stochastic resampling mechanism increases the variability of the base learners’ exposure to training data, thereby enhancing ensemble diversity and resistance to overfitting. In our implementation, $\lambda$ is set to 6.0, as recommended in prior studies for online learning tasks [12].

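During the learning phase, for each classifier $h_m$, the training update is performed $k_m$ times as follows:

$$h_m \leftarrow \mathrm{Learn}(h_m, x_t, y_t), \quad \text{repeated } k_m \text{ times}. \tag{2}$$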
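A minimal Python sketch of this update rule follows, assuming each base learner exposes a `learn_one(x, y)` method (a naming convention borrowed from common streaming libraries, not necessarily the interface used in this study). `CountingLearner` is a hypothetical stand-in that only records how often it is trained, which makes the Poisson-weighted exposure visible; Knuth's multiplication sampler is used only to keep the example dependency-free.

```python
import math
import random


def sample_poisson(lam: float, rng: random.Random) -> int:
    """Draw k ~ Poisson(lam) with Knuth's multiplication method (adequate for small lam such as 6)."""
    threshold, k, product = math.exp(-lam), 0, 1.0
    while True:
        product *= rng.random()
        if product <= threshold:
            return k
        k += 1


class CountingLearner:
    """Hypothetical stand-in for a base learner h_m; it only counts its updates."""

    def __init__(self) -> None:
        self.n_updates = 0

    def learn_one(self, x: dict, y) -> None:
        self.n_updates += 1


def leveraging_update(learners, x, y, rng: random.Random, lam: float = 6.0) -> None:
    """Present (x, y) to each learner h_m exactly k_m ~ Poisson(lam) times."""
    for learner in learners:
        for _ in range(sample_poisson(lam, rng)):
            learner.learn_one(x, y)


rng = random.Random(42)
ensemble = [CountingLearner() for _ in range(10)]  # M = 10 is an illustrative choice
for t in range(1000):
    leveraging_update(ensemble, {"feature": float(t)}, t % 2, rng)
```

Each learner ends up with roughly 6 updates per instance, but the counts differ across learners, which is the source of diversity. With $\lambda = 6$, a learner skips an instance with probability $e^{-6} \approx 0.0025$, whereas with $\lambda = 1$ (standard Online Bagging) it skips about 37% of instances.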

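Once trained, prediction is performed by aggregating the probability distributions output by each base learner. Let $P_m(y \mid x_t)$ be the predicted class probabilities from classifier $h_m$. The ensemble prediction is then computed as the average probability across all classifiers:

$$\bar{P}(y \mid x_t) = \frac{1}{M} \sum_{m=1}^{M} P_m(y \mid x_t) \tag{3}$$

The final predicted class $\hat{y}_t$ is determined by selecting the label with the highest averaged probability, where $\mathcal{Y}$ denotes the set of class labels:

$$\hat{y}_t = \arg\max_{y \in \mathcal{Y}} \bar{P}(y \mid x_t) \tag{4}$$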
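The soft-voting rule above can be sketched in plain Python. Each base learner's output is assumed to be a dictionary mapping class labels to probabilities (a common convention, assumed here), and a label absent from one learner's output is treated as having probability zero. The toy outputs are chosen so that soft voting and majority voting disagree: two learners lean weakly towards `benign`, while one is confident in `ddos`.

```python
from collections import defaultdict


def ensemble_predict(probas):
    """Average per-learner class probabilities and return (argmax label, averaged distribution)."""
    avg = defaultdict(float)
    for p_m in probas:  # p_m: {label: probability} produced by base learner h_m
        for label, p in p_m.items():
            avg[label] += p / len(probas)
    y_hat = max(avg, key=avg.get)  # argmax over the averaged distribution
    return y_hat, dict(avg)


outputs = [
    {"benign": 0.55, "ddos": 0.45},
    {"benign": 0.55, "ddos": 0.45},
    {"benign": 0.05, "ddos": 0.95},
]
label, dist = ensemble_predict(outputs)
```

A majority vote would return `benign` (two votes to one), whereas the averaged probabilities favour `ddos` (about 0.617 versus 0.383), so confident minority learners can still influence the decision.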

This design allows the model to adapt to streaming healthcare data while maintaining high predictive performance and scalability. By combining online learning principles with ensemble diversity, the Leveraging Bagging classifier is well suited to real-time intrusion detection, where attack patterns may evolve rapidly.

3.2.2. Base Learner Factory Function

A crucial element of the Leveraging Bagging architecture is the choice of the base learner, which significantly influences the overall detection performance of the ensemble. For online classification tasks in non-stationary environments like smart healthcare networks, the base learner must support incremental learning, exhibit low latency, and handle potentially imbalanced or noisy data streams. In this study, we employ the Hoeffding Tree classifier (also known as the Very Fast Decision Tree) [34], which satisfies these requirements and is widely adopted in streaming ML applications.

The Hoeffding Tree is an incremental decision tree algorithm that leverages the Hoeffding bound to make statistically sound decisions about attribute splits. Unlike conventional decision trees that require the entire dataset, the Hoeffding Tree evaluates whether a split is warranted based on a finite sample of the input stream, ensuring both computational efficiency and adaptiveness.

Formally, let $G(X_i)$ denote the heuristic evaluation function (e.g., information gain or Gini index) for attribute $X_i$, and let $\bar{G}$ denote its estimate after observing n instances. Let $X_a$ be the attribute with the highest heuristic value and $X_b$ the second-best attribute. The Hoeffding bound guarantees, with probability $1 - \delta$, that $X_a$ is truly the best choice for a split if

$$\Delta \bar{G} = \bar{G}(X_a) - \bar{G}(X_b) > \epsilon,$$

where $\epsilon$ is defined as follows:

$$\epsilon = \sqrt{\frac{R^{2} \ln(1/\delta)}{2n}}.$$

Here, R is the range of the heuristic function (e.g., $R = \log_2 C$ for information gain in a C-class problem), and $\delta$ is the user-defined confidence parameter. This statistical framework allows the algorithm to make high-confidence decisions with only a small subset of data, making it ideal for real-time intrusion detection.
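The split test can be checked numerically with a short sketch. It assumes information gain as the heuristic, so $R = \log_2 C$, and uses an illustrative confidence $\delta = 0.01$; practical Hoeffding Tree implementations also add a tie-breaking threshold for near-equal attributes, which this sketch omits.

```python
import math


def hoeffding_bound(value_range: float, delta: float, n: int) -> float:
    """epsilon = sqrt(R^2 * ln(1/delta) / (2n))."""
    return math.sqrt(value_range ** 2 * math.log(1.0 / delta) / (2.0 * n))


def should_split(g_best: float, g_second: float, n_classes: int, delta: float, n: int) -> bool:
    """Split when the observed gain gap exceeds epsilon (information gain, so R = log2(C))."""
    r = math.log2(n_classes)
    return (g_best - g_second) > hoeffding_bound(r, delta, n)


eps_200 = hoeffding_bound(1.0, 0.01, 200)    # binary problem, 200 instances at the leaf
eps_2000 = hoeffding_bound(1.0, 0.01, 2000)  # same leaf after 2000 instances
```

For a binary problem, $\epsilon \approx 0.107$ after 200 instances and $\approx 0.034$ after 2000, so a gain gap that is too small to justify a split early on can justify one once more data has been observed.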

In our proposed system, we define a factory function to instantiate Hoeffding Tree classifiers with consistent parameters across all base learners. This design promotes modularity and reproducibility. The parameters used in our implementation include a grace period of 30 and a sigma of 0.01. The grace period defines the minimum number of instances required between split attempts at each node, balancing speed and accuracy, while the sigma value controls the strictness of the Hoeffding bound, affecting the frequency of splits. The model parameters used for training and evaluation are summarized in Table 2.

By encapsulating the instantiation process in a factory function, the ensemble construction becomes highly flexible. The model can be easily extended to use other incremental learners or to fine-tune the hyperparameters of the base learner without modifying the ensemble logic. This design choice aligns with the principle of separation of concerns and contributes to the scalability and maintainability of the proposed intrusion detection architecture.
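The factory pattern can be sketched as follows. `HoeffdingTreeStub` is a hypothetical placeholder that only stores the two reported hyperparameters (grace period 30, sigma 0.01) and counts updates; it does not grow a tree. The point of the sketch is that the ensemble receives a zero-argument callable and never needs to know how its members are configured.

```python
from dataclasses import dataclass
from functools import partial
from typing import Callable, List


@dataclass
class HoeffdingTreeStub:
    """Hypothetical placeholder for an incremental Hoeffding Tree: stores its
    configuration and counts updates, but does not grow a tree."""
    grace_period: int = 30
    sigma: float = 0.01
    n_seen: int = 0

    def learn_one(self, x: dict, y) -> None:
        self.n_seen += 1


def make_base_learner(grace_period: int = 30, sigma: float = 0.01) -> HoeffdingTreeStub:
    """Factory: every call returns a fresh, identically configured base learner."""
    return HoeffdingTreeStub(grace_period=grace_period, sigma=sigma)


def build_ensemble(factory: Callable[[], HoeffdingTreeStub], n_models: int) -> List[HoeffdingTreeStub]:
    """The ensemble only calls the factory; it never hard-codes learner settings."""
    return [factory() for _ in range(n_models)]


ensemble = build_ensemble(make_base_learner, n_models=10)
ensemble[0].learn_one({"feature": 1.0}, "benign")  # updates one member only

# Changing hyperparameters touches only the factory argument, not the ensemble code.
slower_splits = build_ensemble(partial(make_base_learner, grace_period=100), n_models=10)

# The same factory supplies a fresh replacement when a degraded learner is reset.
ensemble[3] = make_base_learner()
```

Because each call produces an independent object, updating or replacing one member never affects the others.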

3.2.3. Model Initialization and Preprocessing Pipeline

In real-time intrusion detection tasks, especially within smart healthcare environments where data originates from diverse IoMT sources, maintaining consistency and reliability in model input is vital. Before feeding data into the ensemble model, a preprocessing step is employed to ensure that all features are appropriately scaled and standardized, preventing dominant features from biasing the learning process.

To construct an end-to-end learning system, we use a pipeline structure that integrates data preprocessing and the ensemble classifier. The pipeline is composed of two main components: a Standard Scaler for feature normalization, and the Leveraging Bagging classifier as the final estimator. This modular design facilitates both interpretability and reproducibility while enabling seamless updates to individual components.

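Formally, given a feature vector $x_t = (x_{t,1}, x_{t,2}, \dots, x_{t,d}) \in \mathbb{R}^{d}$, where d is the number of input features, we apply standard scaling:

$$x'_{t,j} = \frac{x_{t,j} - \mu_j}{\sigma_j}, \qquad j = 1, \dots, d,$$

where $\mu_j$ and $\sigma_j$ are the mean and standard deviation of feature j, estimated incrementally in the streaming context. This transformation gives each feature approximately zero mean and unit variance as the running estimates stabilize, which maintains numerical stability in models sensitive to input scale, such as gradient-based or distance-based learners. Tree-based learners such as the Hoeffding Tree are largely insensitive to monotonic rescaling of individual features, so standardization matters less for the present ensemble, although it keeps the pipeline compatible with scale-sensitive base learners that the factory function could supply.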
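The incremental estimation of $\mu_j$ and $\sigma_j$ can be sketched with Welford's online algorithm. This is a minimal sketch under two assumptions: every instance carries the same d features, and the statistics are updated with an instance before that instance is transformed. It is not presented as the scaler implementation used in this study.

```python
import math


class RunningStandardScaler:
    """Streaming standardization with Welford's running mean and variance per feature.
    Assumes every instance carries the same features (a single instance count n)."""

    def __init__(self) -> None:
        self.n = 0
        self.mean = {}  # mu_j
        self.m2 = {}    # sum of squared deviations from the running mean, per feature

    def learn_one(self, x: dict) -> None:
        self.n += 1
        for j, v in x.items():
            mu_old = self.mean.get(j, 0.0)
            mu_new = mu_old + (v - mu_old) / self.n
            self.m2[j] = self.m2.get(j, 0.0) + (v - mu_old) * (v - mu_new)
            self.mean[j] = mu_new

    def transform_one(self, x: dict) -> dict:
        out = {}
        for j, v in x.items():
            var = self.m2.get(j, 0.0) / self.n if self.n else 0.0  # population variance
            sd = math.sqrt(var)
            out[j] = (v - self.mean.get(j, 0.0)) / sd if sd > 0 else 0.0  # constant feature -> 0
        return out


scaler = RunningStandardScaler()
for x in [{"a": 1.0, "b": 10.0}, {"a": 2.0, "b": 20.0}, {"a": 3.0, "b": 30.0}, {"a": 4.0, "b": 40.0}]:
    scaler.learn_one(x)  # statistics are updated before the instance is transformed
z = scaler.transform_one({"a": 4.0, "b": 40.0})
```

Because feature `b` is exactly ten times feature `a` in this toy stream, both standardize to the same value ($1.5/\sqrt{1.25} \approx 1.342$), which is the scale-equalizing effect the pipeline relies on.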

Following normalization, the transformed feature vector $x'_t$ is passed to the Leveraging Bagging classifier for prediction and learning. The pipeline structure can be denoted as follows:

$$\mathcal{P} = \mathcal{E} \circ S, \qquad \mathcal{P}(x_t) = \mathcal{E}\big(S(x_t)\big) = \mathcal{E}(x'_t),$$

where S denotes the standard scaler and $\mathcal{E}$ represents the ensemble model defined previously. This composition ensures that data flows sequentially through preprocessing and classification stages without requiring manual intervention for each operation. Furthermore, the encapsulation provided by the pipeline abstraction supports efficient deployment, testing, and evaluation under real-time streaming conditions.
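The composition can be sketched as a small wrapper that always routes inputs through the scaler before they reach the classifier. `MeanCenterer` and `MajorityClassifier` are hypothetical stand-ins that keep the example runnable; in the proposed system these roles are played by the Standard Scaler and the Leveraging Bagging ensemble. Updating the scaler before transforming the training instance is an assumption of this sketch.

```python
from collections import Counter


class Pipeline:
    """Composition P = E o S: inputs always pass through the scaler before the classifier."""

    def __init__(self, scaler, classifier) -> None:
        self.scaler = scaler
        self.classifier = classifier

    def predict_one(self, x: dict):
        return self.classifier.predict_one(self.scaler.transform_one(x))

    def learn_one(self, x: dict, y) -> None:
        self.scaler.learn_one(x)  # update running statistics first (a design choice)
        self.classifier.learn_one(self.scaler.transform_one(x), y)


class MeanCenterer:
    """Hypothetical stand-in for the scaler: subtracts each feature's running mean."""

    def __init__(self) -> None:
        self.n, self.sums = 0, {}

    def learn_one(self, x: dict) -> None:
        self.n += 1
        for j, v in x.items():
            self.sums[j] = self.sums.get(j, 0.0) + v

    def transform_one(self, x: dict) -> dict:
        return {j: v - self.sums.get(j, 0.0) / max(self.n, 1) for j, v in x.items()}


class MajorityClassifier:
    """Hypothetical stand-in for the ensemble: predicts the most frequent label so far."""

    def __init__(self) -> None:
        self.counts, self.last_input = Counter(), None

    def learn_one(self, x: dict, y) -> None:
        self.last_input = x  # kept only to show what the classifier actually receives
        self.counts[y] += 1

    def predict_one(self, x: dict):
        return self.counts.most_common(1)[0][0] if self.counts else None


model = Pipeline(MeanCenterer(), MajorityClassifier())
for x, y in [({"f": 1.0}, "benign"), ({"f": 3.0}, "attack"), ({"f": 5.0}, "attack")]:
    model.learn_one(x, y)
```

After the last update, the classifier has received the centered value `{"f": 2.0}` rather than the raw `{"f": 5.0}`, confirming that callers never need to apply the scaler by hand.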

By maintaining this structured and modular pipeline, the system accommodates shifting feature distributions, keeps all features on a comparable scale, and supports scalable learning in evolving healthcare networks. This design also aligns with practical requirements for integrating ML systems into medical monitoring platforms, where automation and reliability are critical.

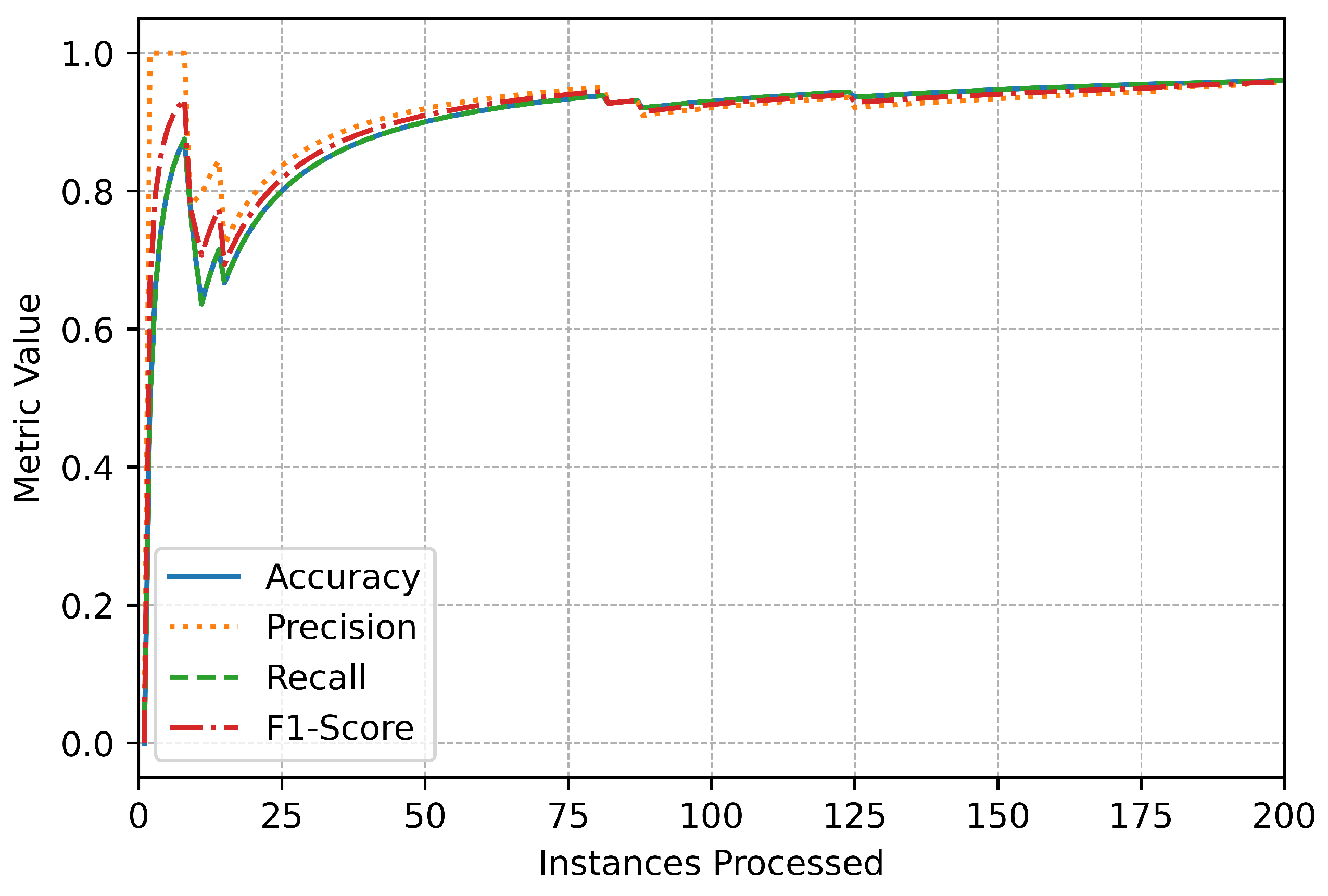

3.2.4. Metrics and Drift Detection Setup

To comprehensively assess the performance of the proposed online IDS, we incorporate a suite of evaluation metrics that are updated incrementally with each incoming instance. These metrics provide real-time insights into classification quality and support longitudinal monitoring of model behavior over time. Additionally, to maintain robustness against evolving threats, a concept drift detection mechanism is integrated to identify shifts in the data distribution that may compromise model reliability.

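The selected metrics include accuracy, weighted precision, weighted recall, and weighted F1-score, each computed using a streaming-friendly formulation. Let $y_t$ denote the true label and $\hat{y}_t$ the predicted label at time t. For a multi-class setting with class labels $c \in \mathcal{Y} = \{1, \dots, C\}$, the incremental versions of the metrics are defined as follows:

$$\mathrm{Acc}_t = \frac{1}{t} \sum_{i=1}^{t} \mathbb{1}[\hat{y}_i = y_i]$$

$$\mathrm{Prec}_{c,t} = \frac{TP_{c,t}}{TP_{c,t} + FP_{c,t}}, \qquad \mathrm{Rec}_{c,t} = \frac{TP_{c,t}}{TP_{c,t} + FN_{c,t}}, \qquad \mathrm{F1}_{c,t} = \frac{2\,\mathrm{Prec}_{c,t}\,\mathrm{Rec}_{c,t}}{\mathrm{Prec}_{c,t} + \mathrm{Rec}_{c,t}}$$

where $TP_{c,t}$, $FP_{c,t}$, and $FN_{c,t}$ are the running counts of true positives, false positives, and false negatives for class c up to time t, and $\mathbb{1}[\cdot]$ is the indicator function.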

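Weighted precision, recall, and F1-score are computed per class and then averaged with weights proportional to class frequency, so that each class contributes in proportion to its prevalence in the imbalanced stream:

$$\phi^{\mathrm{w}}_t = \sum_{c \in \mathcal{Y}} w_{c,t}\, \phi_{c,t}, \qquad w_{c,t} = \frac{n_{c,t}}{t}, \qquad \phi \in \{\mathrm{Prec}, \mathrm{Rec}, \mathrm{F1}\},$$

where $w_{c,t}$ is the relative frequency of class c, $n_{c,t}$ is the number of instances with true label c observed up to time t, and $\phi_{c,t}$ is the per-class metric value up to time t.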
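A minimal sketch of these running metrics follows. It maintains per-class counts so that every quantity can be read off at any time t without storing past predictions; the class names and the toy stream are illustrative.

```python
from collections import Counter


class StreamingMetrics:
    """Prequential accuracy and support-weighted precision/recall/F1 from running counts."""

    def __init__(self) -> None:
        self.n = 0
        self.correct = 0
        self.tp = Counter()       # TP_c
        self.pred = Counter()     # TP_c + FP_c (times class c was predicted)
        self.support = Counter()  # TP_c + FN_c = n_c (times class c was the true label)

    def update(self, y_true, y_pred) -> None:
        self.n += 1
        self.correct += int(y_true == y_pred)
        self.support[y_true] += 1
        self.pred[y_pred] += 1
        if y_true == y_pred:
            self.tp[y_true] += 1

    def accuracy(self) -> float:
        return self.correct / self.n if self.n else 0.0

    def _per_class(self, c):
        prec = self.tp[c] / self.pred[c] if self.pred[c] else 0.0
        rec = self.tp[c] / self.support[c] if self.support[c] else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        return prec, rec, f1

    def weighted(self):
        """(precision, recall, F1), each weighted by w_c = n_c / t."""
        totals = [0.0, 0.0, 0.0]
        for c, n_c in self.support.items():
            w_c = n_c / self.n
            for i, value in enumerate(self._per_class(c)):
                totals[i] += w_c * value
        return tuple(totals)


metrics = StreamingMetrics()
y_true = ["benign", "benign", "benign", "ddos", "ddos", "spoofing"]
y_pred = ["benign", "benign", "ddos", "ddos", "ddos", "spoofing"]
for yt, yp in zip(y_true, y_pred):
    metrics.update(yt, yp)
precision, recall, f1 = metrics.weighted()
```

With support-based weights, weighted recall always equals accuracy, since $\sum_c (n_{c,t}/t)(TP_{c,t}/n_{c,t}) = \sum_c TP_{c,t}/t$; weighted precision (here 8/9 versus an accuracy of 5/6) is where the aggregates carry additional information.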

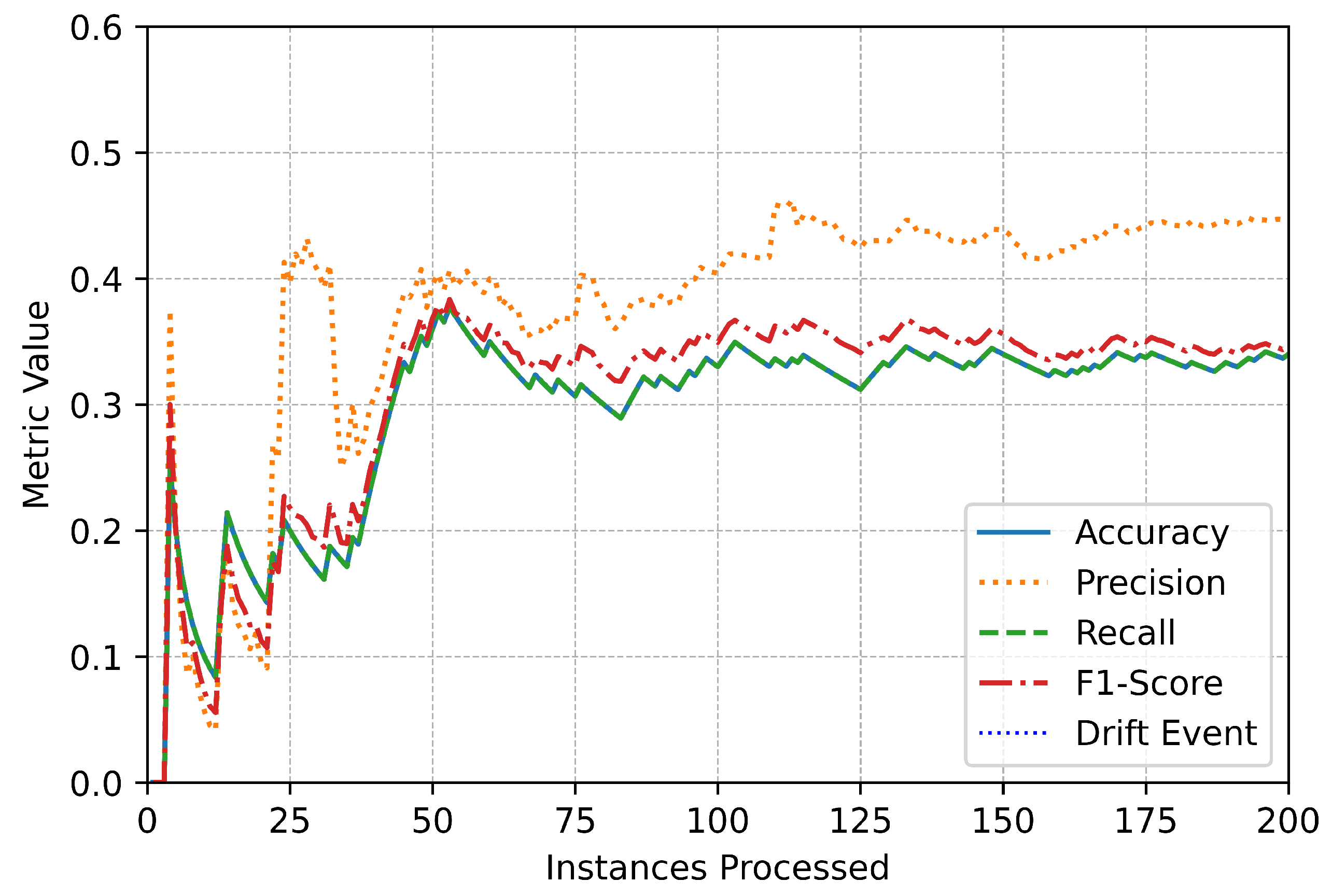

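To detect changes in the underlying data distribution, often referred to as concept drift, we incorporate the Page–Hinkley test [13], a well-established drift detection algorithm. This method monitors the average error rate of the classifier and triggers an alert when a significant deviation from the expected mean is observed. Let $e_t$ be the binary loss at time t, defined as follows:

$$e_t = \begin{cases} 1, & \text{if } \hat{y}_t \neq y_t, \\ 0, & \text{otherwise}. \end{cases}$$

Let $\bar{e}_t = \frac{1}{t} \sum_{i=1}^{t} e_i$ be the cumulative average loss, $m_t$ the cumulative difference, and $M_t$ the minimum of the cumulative differences:

$$m_t = \sum_{i=1}^{t} \left( e_i - \bar{e}_i - \delta_{\mathrm{PH}} \right), \qquad M_t = \min_{1 \le i \le t} m_i.$$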

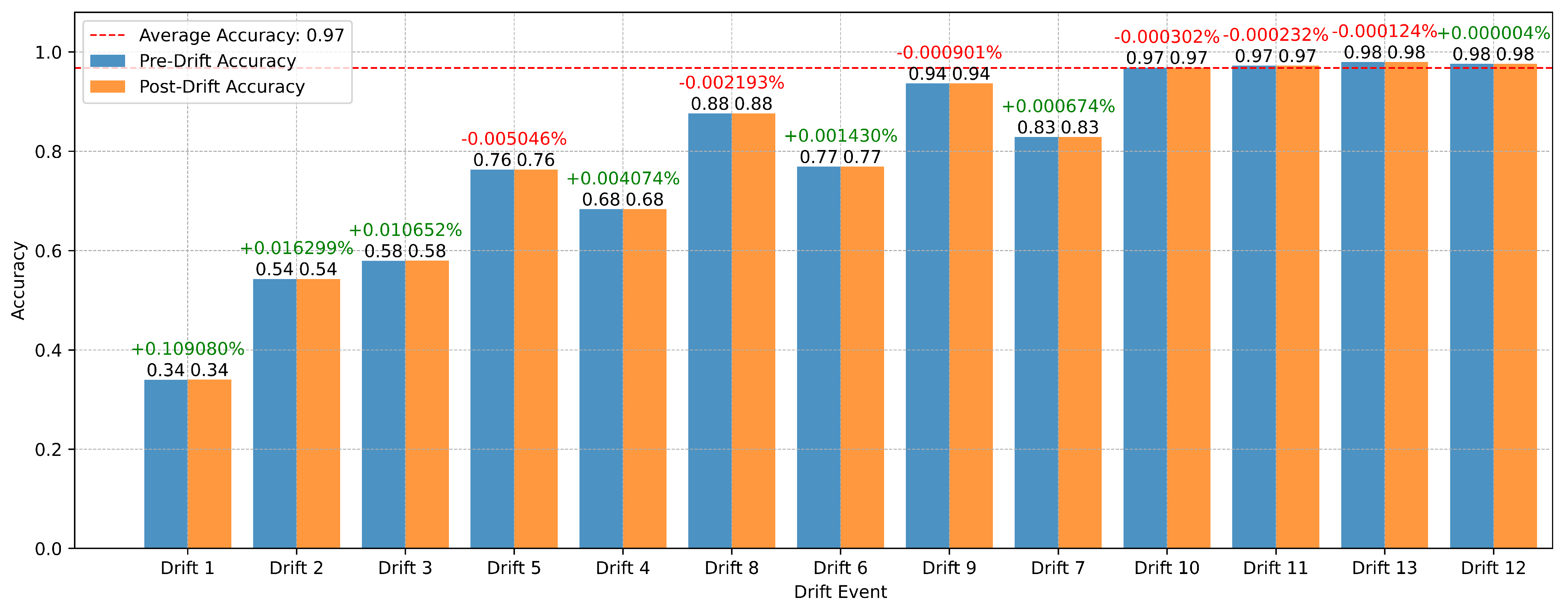

Here, $\delta_{\mathrm{PH}}$ is a slack parameter that tolerates small fluctuations and prevents false positives, and $\lambda_{\mathrm{PH}}$ is a predefined threshold controlling sensitivity. A drift event is registered when $PH_t = m_t - M_t > \lambda_{\mathrm{PH}}$, and the system then applies a selective adaptation strategy to maintain predictive stability. Rather than resetting the entire ensemble, only base learners exhibiting elevated error rates are reinitialized: if the recent error estimate of a learner exceeds a predefined threshold following drift detection, that learner is replaced with a newly instantiated model generated by the base learner factory. This targeted replacement allows the framework to adapt quickly to evolving traffic patterns while preserving the knowledge retained by well-performing learners, ensuring continuous and robust operation.
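The following plain-Python sketch implements the Page–Hinkley statistic on the 0/1 error stream, together with a selective replacement helper. The parameter values (`delta` for $\delta_{\mathrm{PH}}$ = 0.005, `threshold` for $\lambda_{\mathrm{PH}}$ = 20, a 30-instance warm-up) and the per-learner error limit of 0.3 are illustrative assumptions, not the settings used in this study. `replace_degraded` is a hypothetical helper whose per-learner error estimates are supplied by the caller, and resetting the detector after an alarm is a design choice of the sketch.

```python
class PageHinkley:
    """Page-Hinkley test for an increase in the mean of a monitored stream (here, e_t)."""

    def __init__(self, delta=0.005, threshold=20.0, min_instances=30):
        self.delta = delta                  # slack delta_PH
        self.threshold = threshold          # alarm threshold lambda_PH
        self.min_instances = min_instances  # no alarms before this many observations
        self.reset()

    def reset(self):
        self.t = 0
        self.mean = 0.0              # running mean e-bar_t
        self.cum = 0.0               # m_t
        self.cum_min = float("inf")  # M_t

    def update(self, e):
        """Feed one loss value; return True (and restart) when m_t - M_t exceeds the threshold."""
        self.t += 1
        self.mean += (e - self.mean) / self.t
        self.cum += e - self.mean - self.delta
        self.cum_min = min(self.cum_min, self.cum)
        if self.t >= self.min_instances and self.cum - self.cum_min > self.threshold:
            self.reset()
            return True
        return False


def replace_degraded(learners, recent_errors, factory, max_error=0.3):
    """Selective adaptation: re-create only learners whose recent error exceeds max_error."""
    replaced = []
    for m, err in enumerate(recent_errors):
        if err > max_error:
            learners[m] = factory()
            replaced.append(m)
    return replaced


# Synthetic 0/1 error stream: 5% errors for 1000 steps, then 50% errors.
errors = [int(t % 20 == 0) for t in range(1, 1001)] + [t % 2 for t in range(1001, 2001)]
detector = PageHinkley()
alarms = []
for t, e in enumerate(errors, start=1):
    if detector.update(e):
        alarms.append(t)

learners = ["h0", "h1", "h2", "h3"]
replaced = replace_degraded(learners, [0.1, 0.5, 0.2, 0.4], factory=lambda: "fresh")
```

On this synthetic stream, whose error rate jumps from 5% to 50% at instance 1000, the detector raises its first alarm a few dozen instances after the change and none before it; only the two learners above the error limit are then re-created.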

In practice, this mechanism enhances the resilience of the IDS against emerging or morphing attack vectors, which are prevalent in real-world healthcare networks. Furthermore, by logging all performance metrics and drift events over time, our system supports detailed post hoc analysis and continuous model auditing—an essential capability in regulated domains such as healthcare cybersecurity.

3.2.5. Online Training and Evaluation Loop

The core operational logic of the proposed online IDS is embedded in a continuous training and evaluation loop, which processes each incoming instance in real time. This streaming-based learning paradigm is critical for dynamic environments such as smart healthcare networks, where new data arrives sequentially from a variety of IoMT devices. The loop is designed to perform four fundamental operations for every instance—prediction, learning, evaluation, and drift detection—executed sequentially and efficiently to minimize latency.

At each time step t, the system receives a feature vector $x_t$ and its corresponding true label $y_t$. The model first generates a prediction $\hat{y}_t$ using Equation (4). Immediately after prediction, the model is updated with the true instance–label pair using

$$\mathcal{P}_{t+1} = \mathrm{Learn}(\mathcal{P}_t, x_t, y_t),$$

where $\mathcal{P}_t$ denotes the state of the pipeline (scaler and ensemble) before instance t is processed. This one-pass learning approach is memory-efficient and facilitates fast adaptation to recent patterns, which is essential for intrusion scenarios where malicious behaviors may shift over time.
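The ordering is what makes the evaluation honest: each instance is scored by a model that has not yet seen it. A minimal sketch of one prequential (test-then-train) pass follows, with a hypothetical majority-class model standing in for the pipeline so that the block runs on its own.

```python
from collections import Counter


class MajorityClass:
    """Hypothetical stand-in for the pipeline: predicts the most frequent label seen so far."""

    def __init__(self) -> None:
        self.counts = Counter()

    def predict_one(self, x: dict):
        return self.counts.most_common(1)[0][0] if self.counts else None

    def learn_one(self, x: dict, y) -> None:
        self.counts[y] += 1


def prequential(model, stream):
    """Test-then-train over a stream; returns the 0/1 loss e_t for every instance."""
    errors = []
    for x, y in stream:
        y_hat = model.predict_one(x)    # 1) predict with the model as it was before seeing (x, y)
        model.learn_one(x, y)           # 2) only then update it with the true label
        errors.append(int(y_hat != y))  # 3) binary loss, fed to metrics and drift detection
    return errors


stream = [({"f": float(i)}, "attack" if i % 4 == 0 else "benign") for i in range(8)]
errors = prequential(MajorityClass(), stream)
```

The first instance is always counted as an error because the untrained model cannot predict it, which is exactly the behaviour expected from a deployed IDS that has no access to future labels.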

Following learning, the system updates all evaluation metrics incrementally using the observed pair. This real-time logging enables performance monitoring without requiring batch aggregation, thereby supporting on-the-fly diagnostic visualization and adaptive thresholding.

Next, concept drift is assessed using the Page–Hinkley test. If a drift is detected—indicating a statistically significant increase in classification error—the system flags the corresponding instance index and records the detection timestamp. This flag can be used to trigger mitigation procedures, such as alerting administrators, retraining the model, or initiating forensic analysis.

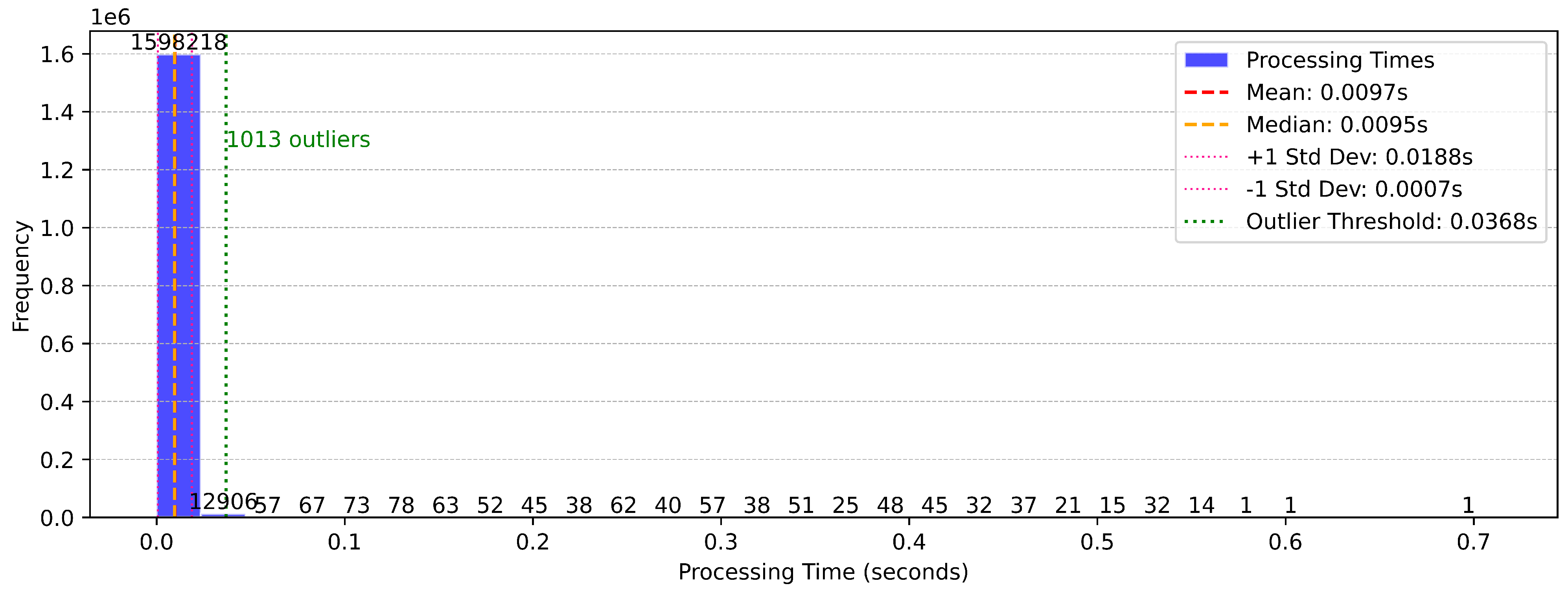

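To quantify computational efficiency, the time required for prediction, learning, metric computation, and drift detection is measured individually for each instance. These times are aggregated to compute the average processing time per instance and to identify performance bottlenecks. Let $T_t$ be the cumulative processing time after t instances:

$$T_t = \sum_{i=1}^{t} \left( \tau_i^{\mathrm{pred}} + \tau_i^{\mathrm{learn}} + \tau_i^{\mathrm{metric}} + \tau_i^{\mathrm{drift}} \right), \qquad \bar{\tau}_t = \frac{T_t}{t},$$

where $\tau_i^{(\cdot)}$ is the time spent in each stage for instance i and $\bar{\tau}_t$ is the average processing time per instance. This fine-grained timing analysis provides insight into the model’s suitability for real-time deployment.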
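Per-stage timing can be collected with a small wrapper around each step. In this sketch the stage names mirror the four terms of $T_t$, while the workloads are trivial placeholders (assumptions) standing in for the real predict, learn, metric, and drift calls.

```python
import time
from collections import defaultdict


class StageTimer:
    """Accumulates wall-clock time per stage so that T_t and its per-instance mean can be reported."""

    def __init__(self) -> None:
        self.totals = defaultdict(float)  # seconds spent in each stage so far
        self.n_instances = 0

    def run(self, stage, fn, *args):
        """Call fn(*args), add its elapsed time to `stage`, and return its result."""
        start = time.perf_counter()
        result = fn(*args)
        self.totals[stage] += time.perf_counter() - start
        return result

    def end_instance(self) -> None:
        self.n_instances += 1

    def cumulative(self) -> float:
        """T_t: total processing time over all stages and instances."""
        return sum(self.totals.values())

    def mean_per_instance(self) -> float:
        return self.cumulative() / self.n_instances if self.n_instances else 0.0


timer = StageTimer()
for i in range(100):
    # Placeholder workloads standing in for the predict / learn / metric / drift steps.
    y_hat = timer.run("predict", lambda v: v % 2, i)
    timer.run("learn", sum, range(1000))
    timer.run("metrics", max, y_hat, 0)
    timer.run("drift", abs, -i)
    timer.end_instance()
```

Reporting `timer.totals` next to $T_t$ shows which stage dominates; `time.perf_counter` is used because it is the standard-library clock intended for measuring short durations.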

The loop continues until all instances in the stream have been processed. At specified intervals, the system logs intermediate results to the console or a visualization dashboard, offering periodic feedback on the number of instances processed, current metric scores, and drift event occurrences. This modular and interpretable design allows the system to operate robustly in real-time settings while supporting maintainability, extensibility, and traceability.

To describe the operational workflow of the proposed online intrusion detection framework, Algorithm 1 presents the detailed procedural steps. The framework operates in a fully online manner, processing each incoming instance sequentially without revisiting previous data. When a new instance arrives, the system first standardizes the input features using running statistics to ensure numerical stability. The standardized instance is then passed to an ensemble of Hoeffding Tree base learners, whose outputs are aggregated to produce the final predicted label. After prediction, each base learner is updated through a stochastic resampling strategy governed by a Poisson distribution, which promotes ensemble diversity. The system then updates the evaluation metrics incrementally to track real-time performance, and the Page–Hinkley test monitors the resulting error stream for significant deviations. When drift is detected, the selective learner-replacement strategy described in Section 3.2.4 is triggered to maintain model relevance. Instance-level processing times are also recorded to assess the computational efficiency of the system. This integrated, sequential process keeps the framework adaptive, accurate, and computationally feasible for deployment in real-time smart healthcare environments.

3.3. Dataset for Real-Time Stream Simulation

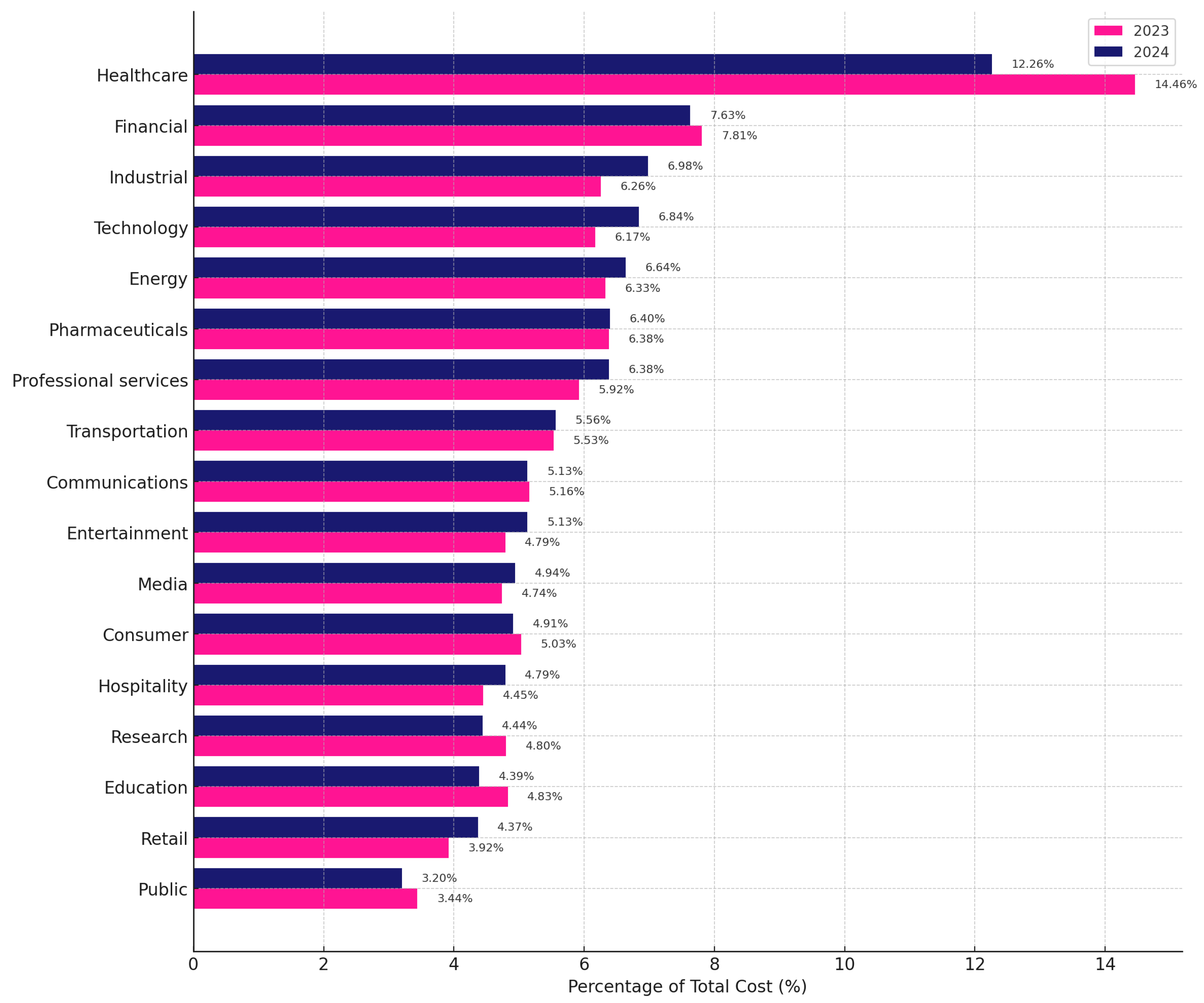

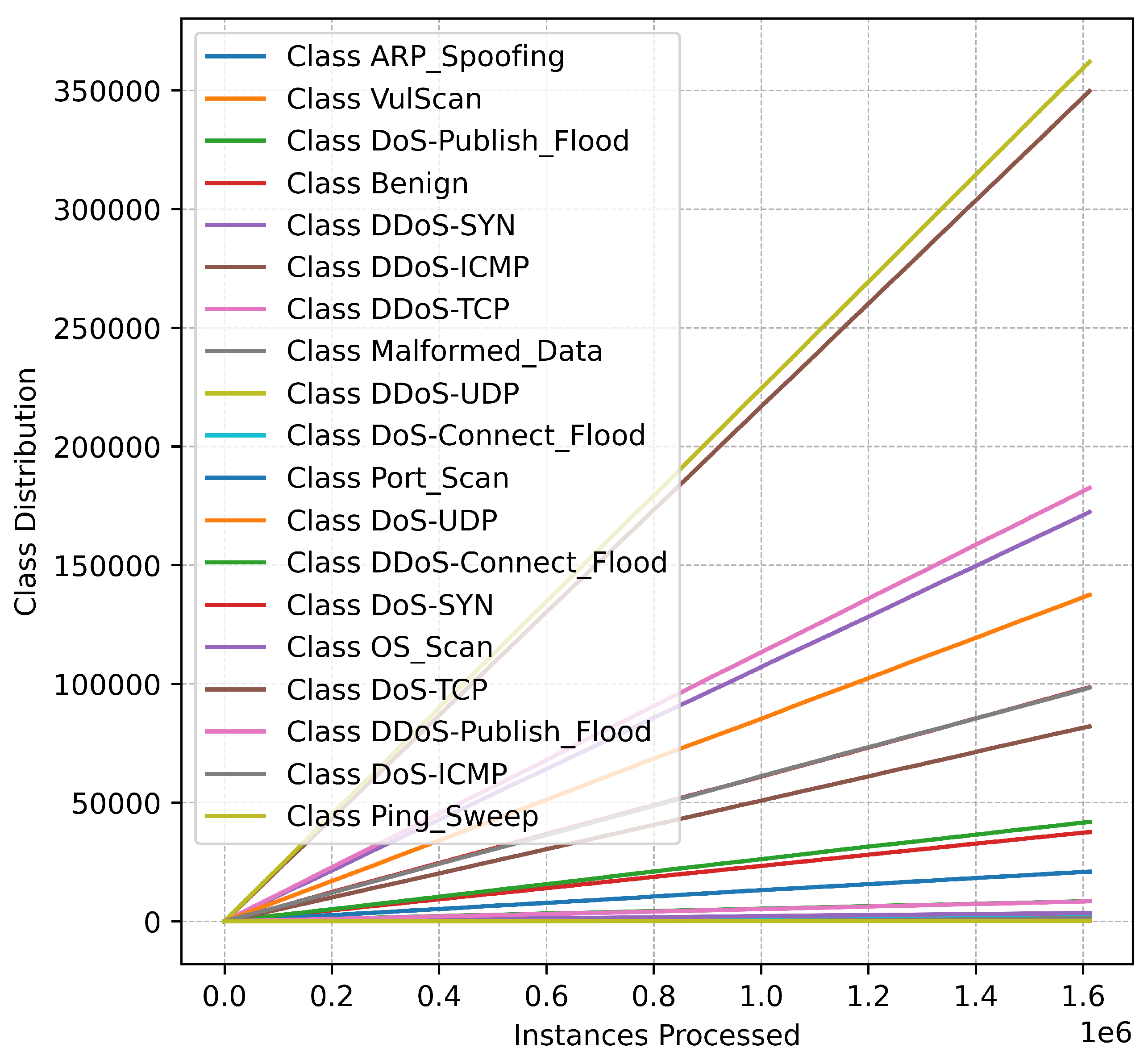

The evaluation of the proposed online intrusion detection framework was conducted using the CICIoMT2024 dataset [35], a comprehensive benchmark designed to reflect the diverse characteristics of network traffic in smart IoT-enabled healthcare environments. This dataset encompasses a wide range of benign and malicious traffic flows generated by various IoT devices, offering a realistic and challenging testbed for developing and validating data-stream learning algorithms.

In contrast to conventional batch learning models, the proposed framework operates under a fully online learning paradigm, eliminating the need for a separate training phase. Instead, a prequential evaluation protocol was adopted, wherein each incoming instance was first subjected to prediction and then immediately used to update the model. This procedure ensures that performance metrics accurately reflect the model’s adaptability and generalization capabilities in real-time conditions. It also aligns with real-world deployment scenarios, where IDSs must learn continuously from sequentially arriving traffic without prior access to future observations.

Accordingly, only the test portion of the CICIoMT2024 dataset was used for both evaluation and incremental learning. To construct a unified dataset for streaming simulation, multiple session-specific CSV files were consolidated, and labels were normalized to remove session identifiers and standardize attack naming conventions. Labels were then mapped into three hierarchical granularities: a binary scheme distinguishing benign and attack traffic; a six-class scheme comprising benign traffic and five attack families (DDoS, DoS, MQTT abuse, reconnaissance, and spoofing); and a fine-grained nineteen-class scheme capturing specific attack types such as DDoS-UDP, port scanning, and ARP spoofing. The distribution of instances across these labeling schemes is summarized in Table 3.
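The three-level label mapping can be sketched as a lookup from normalized fine-grained labels to attack families. The label strings and the family table below are a hypothetical subset, not the dataset's exact naming, and the regular expression assumes that session identifiers appear as a trailing numeric suffix.

```python
import re

# Hypothetical subset of normalized fine-grained labels and their attack families;
# the exact label strings in CICIoMT2024 may differ.
FAMILY = {
    "Benign": "Benign",
    "DDoS-UDP": "DDoS",
    "DDoS-SYN": "DDoS",
    "DoS-TCP": "DoS",
    "MQTT-Connect_Flood": "MQTT",
    "Recon-Port_Scan": "Recon",
    "ARP_Spoofing": "Spoofing",
}


def normalize(raw: str) -> str:
    """Drop surrounding whitespace and an assumed trailing session suffix such as '_3' or '12'."""
    return re.sub(r"_?\d+$", "", raw.strip())


def map_label(raw: str) -> tuple:
    """Return the (binary, six-class, fine-grained) labels for one raw label string."""
    fine = normalize(raw)
    family = FAMILY[fine]  # raises KeyError for labels missing from the table
    binary = "Benign" if family == "Benign" else "Attack"
    return binary, family, fine
```

For example, `map_label("DDoS-UDP_3")` yields `("Attack", "DDoS", "DDoS-UDP")`, so all three granularities are derived from a single normalized label.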

Algorithm 1: Online intrusion detection framework.

Rigorous data quality validation was performed during preprocessing. Null-value inspections confirmed the absence of missing entries, and duplicate records were removed, retaining only the first occurrence of each duplicated instance to preserve the integrity of the data. Following preprocessing, the feature matrix and corresponding labels were extracted and prepared for real-time simulation.
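The two validation steps can be sketched in plain Python over rows represented as tuples of feature values followed by the label (an assumption of this sketch). With pandas, the same steps are commonly written as `df.isna().sum()` and `df.drop_duplicates(keep="first")`.

```python
import math


def validate_and_deduplicate(rows):
    """Count missing values, then drop exact duplicate rows, keeping each first occurrence."""
    n_missing = sum(
        1 for row in rows for v in row
        if v is None or (isinstance(v, float) and math.isnan(v))
    )
    seen, unique = set(), []
    for row in rows:
        if row not in seen:  # rows are tuples (features..., label), hence hashable
            seen.add(row)
            unique.append(row)
    return n_missing, unique


rows = [(0.5, 80.0, "benign"), (1.2, 64.0, "ddos"), (0.5, 80.0, "benign"), (2.0, 64.0, "ddos")]
n_missing, unique = validate_and_deduplicate(rows)
```

The repeated `(0.5, 80.0, "benign")` row is dropped while its first occurrence keeps its original position in the sequence.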

To emulate a streaming environment, the dataset was randomized by shuffling the instances before evaluation [36], thereby mitigating potential ordering biases inherent to session-based traffic captures. A fixed random seed of 42 was applied during shuffling to ensure reproducibility. During the simulation, instances were streamed sequentially to the online learning model, with each instance processed independently and without access to future data. This setup approximates operational conditions in smart healthcare networks, where data must be processed immediately upon arrival under strict real-time constraints.
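A seeded shuffle followed by one-at-a-time delivery can be sketched with a generator. The function name and the toy data are illustrative assumptions; the sketch shows only the reproducibility and sequential-access properties described above.

```python
import random
from typing import Iterator, List, Sequence, Tuple


def stream_instances(X: Sequence, y: Sequence, seed: int = 42) -> Iterator[Tuple[object, object]]:
    """Shuffle indices once with a fixed seed, then yield (x_t, y_t) pairs one at a time."""
    order: List[int] = list(range(len(X)))
    random.Random(seed).shuffle(order)  # reproducible permutation for a given seed
    for i in order:
        yield X[i], y[i]  # instances are handed to the consumer strictly one at a time


X = [[float(i)] for i in range(10)]
y = [i % 2 for i in range(10)]
first_run = list(stream_instances(X, y))
second_run = list(stream_instances(X, y))
```

Two runs with the same seed produce the same order. Note that the permutation depends on the RNG implementation: Python's `random.Random(42)` yields a different order from, for example, a NumPy- or pandas-based shuffle with the same seed, so the seed alone does not fix the order unless the library is also fixed.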