Shared Autoencoder-Based Unified Intrusion Detection Across Heterogeneous Datasets for Binary and Multi-Class Classification Using a Hybrid CNN–DNN Model

Abstract

1. Introduction

- Introducing a novel IDS framework that integrates three heterogeneous benchmark datasets (CSE-CIC-IDS2018, NF-BoT-IoT-v2, and IoT-23) into a harmonized, protocol-agnostic representation using a shared autoencoder. This enables the detection of twenty-five traffic classes within a single model by aligning disparate feature spaces into a universal behavioral manifold.

- Developing a shared autoencoder architecture that implements “Structural Dualism” to combine heterogeneous datasets into a unified 15-dimensional latent space. This design captures generalizable intrusion patterns (global intelligence) while preserving dataset-specific reconstruction (local nuance) through alternating multi-domain training, ensuring robust feature harmonization across different network environments.

- Providing an enhanced hybrid CNN–DNN deep learning model specifically engineered to process the learned latent space. This synergistic design uses the CNN for spatial pattern extraction within the 15-dimensional latent vector and the DNN for robust global classification, demonstrating superior performance over traditional serial models. Class imbalance is mitigated using resampling techniques such as adaptive synthetic sampling (ADASYN) and edited nearest neighbors (ENN).

- Undertaking a rigorous evaluation of the proposed model on the merged datasets and on each dataset individually to establish its generalization capability. The results show improved performance over existing solutions and indicate that the model learns the underlying semantics of attack behaviors rather than superficial dataset-specific features.
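To make the hybrid CNN–DNN idea from the contributions above more concrete, the following minimal sketch runs a single forward pass over one 15-dimensional latent vector: a 1D convolution stage extracts local patterns, and a dense stage produces class probabilities over the twenty-five traffic classes. The kernel count, kernel size, hidden width, and random weights are assumptions chosen purely for illustration; they are not the configuration used in this work, and no training is performed.

```python
import math
import random

LATENT_DIM = 15  # latent size stated in the text
N_CLASSES = 25   # one benign class plus twenty-four attack types


def conv1d_valid(x, kernel, bias=0.0):
    """Slide `kernel` over `x` (no padding, stride 1) and apply ReLU."""
    k = len(kernel)
    out = []
    for i in range(len(x) - k + 1):
        s = sum(x[i + j] * kernel[j] for j in range(k)) + bias
        out.append(max(0.0, s))
    return out


def dense(x, weights, biases):
    """Fully connected layer: one weight row per output unit."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]


def softmax(z):
    """Numerically stable softmax."""
    m = max(z)
    e = [math.exp(v - m) for v in z]
    total = sum(e)
    return [v / total for v in e]


def cnn_dnn_forward(latent, kernels, w_hidden, b_hidden, w_out, b_out):
    # CNN stage: each kernel yields one feature map over the latent vector.
    feature_maps = [conv1d_valid(latent, k) for k in kernels]
    flat = [v for fm in feature_maps for v in fm]
    # DNN stage: hidden ReLU layer followed by a softmax classifier.
    hidden = [max(0.0, v) for v in dense(flat, w_hidden, b_hidden)]
    return softmax(dense(hidden, w_out, b_out))


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


# Hypothetical sizes, for illustration only.
rng = random.Random(0)
n_kernels, kernel_size, hidden_units = 4, 3, 8
flat_dim = n_kernels * (LATENT_DIM - kernel_size + 1)
kernels = random_matrix(n_kernels, kernel_size, rng)
w_hidden = random_matrix(hidden_units, flat_dim, rng)
w_out = random_matrix(N_CLASSES, hidden_units, rng)

latent = [rng.random() for _ in range(LATENT_DIM)]
probs = cnn_dnn_forward(latent, kernels, w_hidden, [0.0] * hidden_units,
                        w_out, [0.0] * N_CLASSES)
print(len(probs), round(sum(probs), 6))
```

For binary classification the same structure would apply with a two-class (or single sigmoid) output layer in place of the 25-way softmax.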

2. Previous Work

2.1. Traditional Machine Learning for IDS

2.2. Deep Learning-Based Intrusion Detection

2.3. Hybrid Models for IDS

2.4. Cross-Dataset and Multi-Domain IDS

| Author | Dataset | Year | Utilized Technique | Binary Accuracy (B) | Multi-Class Accuracy (M) | Contribution | Limitations |
|---|---|---|---|---|---|---|---|
| Esra Altulaihan et al. [6] | IoTID20 | 2024 | SVM | - | 99.38% | This research aims to develop an ML-based IDS for detecting DoS attacks through anomaly detection in the context of IoT networks. | |
| Dainan Zhang et al. [7] | TON_IoT, UNSW-NB15 | 2026 | LightGBM | 97.22% | 96.08% | First work to couple an SCC–SSA two-stage selection pipeline with Bayesian optimization for a fully automated, resource-aware IoT workflow. | |
| Abdallah R. Gad et al. [8] | TON_IoT | 2022 | XGBoost | 98.2% | 97.8% | A machine learning-driven, decentralized system was introduced with the goal of identifying and reducing IoT attacks. | |
| Nada Abdu Alsharif et al. [9] | NSL-KDD | 2023 | RF | 99.9% | - | An ML-driven IDS was developed in this study, with blockchain utilized to secure IoT device communications. | |
| Hanadi Hakami et al. [10] | UNSW-NB15 | 2025 | RF | - | 98.85% | This study introduces a new method that uses machine learning to boost detection accuracy and enhance system reliability. | |
| Mohamed ElKashlan et al. [11] | IoT-23 | 2023 | Filtered classifier | 99.2% | 99.2% | This paper introduces a machine learning-based method to categorize and detect harmful traffic in IoT networks. | |
| Ahmed Abdelkhalek and Maggie Mashaly [12] | NSL-KDD | 2023 | CNN | 93.3% | 81.8% | The class imbalance in the NSL-KDD dataset is addressed by using a CNN in conjunction with resampling approaches, which improves the detection of minority attacks. | |
| M. Ajdani [13] | NSL-KDD, CICIDS2017 | 2025 | GAN + DNN | 98.2% | 97.8% | Integrates GANs with DNNs to generate synthetic data, specifically targeting the reduction of false positives and false negatives. | |
| Tanzila Saba et al. [14] | BoT-IoT | 2022 | CNN | - | 95.55% | To efficiently analyze all network traffic in IoT contexts, this study proposes a CNN-based approach for anomaly identification in IDS. | |
| Rubayyi Alghamdi and Martine Bellaiche [15] | IoT-23 | 2023 | LSTM | 98.20% | 92.8% | To classify traffic into binary and multi-class categories, this work presents a deep ensemble IDS that uses Lambda architecture and LSTM-based models. | |
| Wa’ad H. Aljuaid and Sultan S. Alshamrani [16] | CSE-CIC-IDS2018 | 2024 | CNN | - | 98.67% | To increase the effectiveness of cyberattack detection in cloud computing environments, this study proposes a deep learning model that makes use of an advanced CNN architecture. | |
| Muhammad Wasim Nawaz et al. [17] | CICIDS2017 | 2023 | LSTM | - | 99% | An LSTM-based model with tailored loss functions for multi-class classification and oversampling is proposed to address class imbalance in network intrusion detection. | |
| Chiming Xi et al. [18] | NSL-KDD, CIC-DDoS 2019, UNSW-NB15 | 2024 | Multi-Scale Transformer (IDS-MTran) | 99.25% | 99.74% | Introduced multi-scale branches using convolution kernels to capture both micro-details and macro-patterns. Features the cross feature enrichment (CFE) module for deep fusion. | |
| Hesham Kamal and Maggie Mashaly [22] | CSE-CIC-IDS2018 | 2025 | AE-DTNN | 99.92% | 99.72% | This paper details a hybrid AE-DTNN model engineered to produce a highly dependable and agile IDS for deployment in current, dynamic network ecosystems. | |
| Yanfang Fu et al. [23] | NSL-KDD | 2022 | CNN and Bi-LSTM | 90.73% | - | To improve network intrusion detection accuracy and robustness, this research presents DLNID, a deep learning model that makes use of CNN, attention, and Bi-LSTM. | |
| Emad Ul Haq Qazi et al. [24] | CSE-CIC-IDS2018 | 2023 | CNN-RNN | 99.02% | 98.90% | Developed a dual-stage fusion model. The CNN layer captures local spatial features (relationships between feature columns), while the RNN layer extracts temporal patterns (sequential dependencies in traffic flows) within the CSE-CIC-IDS2018 dataset. | |
| Muhammad Sajid et al. [25] | CICIDS2017 | 2024 | CNN-LSTM | 97.90% | - | To enhance the detection of emerging network attacks, this study proposes a hybrid model that combines XGBoost, CNN, and LSTM. | |
| Hesham Kamal and Maggie Mashaly [26] | IoT-23 | 2025 | CNN-MLP | 99.99% | 99.91% | A hybrid model that merges a CNN and an MLP is introduced to accurately detect and classify IoT traffic in both binary and multi-class settings. | |
| Muhammad Basit Umair et al. [27] | NSL-KDD | 2022 | Multilayer CNN-LSTM | - | 99.5% | An IDS utilizing CNN and LSTM with a softmax classifier is presented in this study. It is assessed on benchmark datasets and contrasted with a multilayer DNN. | |
| Hesham Kamal and Maggie Mashaly [28] | NF-BoT-IoT-v2 | 2025 | Transformer–DNN & AE-CNN | 99.98% | 97.90% & 97.95% | They propose two hybrid deep learning models: the first integrates an autoencoder with a CNN, while the second merges a Transformer with a DNN. | |
| Sami Yaras and Murat Dener [29] | TON_IoT | 2024 | CNN-LSTM | 98.75% | - | To increase intrusion detection accuracy, this study created a CNN-LSTM model using relevant features from the CICIoT2023 and TON_IoT datasets. | |
| Hesham Kamal and Maggie Mashaly [30] | NF-UNSW-NB15-v2 | 2024 | Transformer-CNN | 99.71% | 99.02% | The study proposes a combined Transformer-CNN model designed to solve the problem of class imbalance by applying resampling and class weighting techniques. | |
| Zhi-Xian Zheng [31] | TON_IoT | 2025 | GAN + Transformer + Bi-GRU | 99.9% | 97.66% | Combines GANs to generate adversarial samples for class balancing with Transformer/Bi-GRU for global and sequential feature extraction. | |
| S. Balaji et al. [32] | IoT-specific Attack Data | 2024 | Hybrid GAN + Firefly Optimization | 99% | 98% | Developed a distributed IDS using Firefly optimization and SMOTE to detect malicious behavior without a centralized controller. | |
| Doaa Mohsin Abd Ali Afraji et al. [33] | TON_IoT, CICIDS2017 | 2025 | CNN-LSTM-GRU (Parallel-Sequential Fusion) | 99.99% | 99.49% | Introduced a multi-branch architecture that captures spatial (CNN) and both short-term (GRU) and long-term (LSTM) temporal patterns simultaneously. | |
| Kuburat Oyeranti Adefemi et al. [34] | IoTID20, BoT-IoT | 2025 | Hybrid CNN-GRU | 99.83% | 99.01% | Integrated convolutional layers for spatial feature extraction with GRUs for temporal dependencies, specifically choosing GRU over LSTM for lower computational overhead. | |
| Md Aadil Hasan and Dev Sharma [35] | CICIDS2017, UNSW-NB15, WSN-DS | 2025 | Hybrid CNN-LSTM (3-Layer) | 99.65% | 99.61% | Combines CNN for spatial pattern extraction with LSTM for temporal dependency modeling; uses Stratified K-Fold (K = 8) for validation stability. | |
| Minxiao Wang et al. [38] | CICIDS2017, UNSW-NB15, and ISCX-2012 | 2024 | CNN | 97% | 96.2% | An ensemble IoT IDS was created by researchers, combining ML and DL techniques to boost its ability to detect attacks. | |
| Said Al-Riyami et al. [39] | NSL-KDD, gureKDD | 2021 | Trees, k-NN, CNN, LSTM | 96.51% | 89.80% | Identified that cross-dataset failure is caused by feature naming mismatches; showed that uniform relabeling restores performance. | |
| Jia Yu et al. [40] | TON_IoT, UNSW-NB15, Synthetic (GAN) | 2025 | CAE-NSVM (Multi-view Fusion + Federated Learning) | 96.3% | 82.9% | Integrated AE-SVM joint loss model that extracts features from 5 host views within a privacy-preserving federated framework. | |
| Adel Alabbadi and Fuad Bajaber [41] | TON_IoT, NSL-KDD, CICIoMT 2024 | 2025 | X-FuseRLSTM (Sparse Transformer + RLSTM + XAI) | 99.72% | 99.40% | Dual-path fusion using Transformers for long-range spatial data and Residual LSTMs for temporal patterns, integrated with XAI (SHAP/LIME). | |
| Muhammad Iqrar Amin et al. [42] | NFv2 (UNSW-NB15, BoT-IoT, ToN-IoT, CIC-2018) | 2026 | Heterogeneous Deep Stacked Ensemble (GRU + LSTM + DNN + MLP) | 99.83% | 88.77% | Uses “frozen” heterogeneous base learners to preserve domain-specific knowledge and a meta-learner to bridge the gap between different network traffic distributions. | |
| Min Li et al. [43] | TON_IoT, UNSW-NB15 | 2025 | Multi-View KG-Enhanced DL (Model 6: KG + Multi-CNN + LSTM) | 97.3% | 91.1% | Proposed a two-level fusion (secondary fusion) strategy integrating knowledge graphs for relational features with DL for spatial/temporal data. | |
| Hesham Kamal and Maggie Mashaly [44] | CICIDS2017 and CSE-CIC-IDS2018 | 2025 | PCA–Transformer | 99.80% | 99.28% | An IDS design is presented that uses an aggregated dataset and a PCA–Transformer technique to recognize twenty-one types of traffic (1 benign and 20 malicious) drawn from multiple data sources. | |
| Rubayyi Alghamdi and Martine Bellaiche [45] | UNSW-NB15, TON_IoT, and IoT-23 | 2022 | RF | 99.45% | 97.81% | An ensemble IoT IDS was created by researchers, combining ML and DL techniques to boost its ability to detect attacks. | |
| Saleh Alabdulwahab et al. [46] | TON_IoT, BoT-IoT, and MQTT-IoT-IDS2020 | 2023 | CTGAN | - | 99% | This technique uses CTGAN to generate synthetic IoT intrusion data, which improves detection performance and balances the data. | |

2.5. Challenges

- Combination of Datasets for Detecting a Broader Range of Attack Types: A significant challenge is that individual datasets often contain only a limited variety of attack types, which can hinder the effectiveness of intrusion detection models. The root cause of this issue is the heterogeneity of networks, where devices generate diverse traffic and face varied attack scenarios.

- Complexity and Difficulty of Combined Architectures: A major challenge lies in designing methods that can effectively integrate multiple heterogeneous datasets while preserving their unique characteristics. The root cause is that traditional approaches require numerous preprocessing and integration steps, as well as careful design of model architectures.

- High Performance: A key challenge in intrusion detection is achieving consistently high performance across different environments and attack types. The root cause of this challenge is the complexity and diversity of network traffic, where devices generate large volumes of heterogeneous data with varying patterns, making it difficult for models to maintain accuracy, precision, and reliability under all conditions.

- Class Imbalance: A major challenge in intrusion detection is that datasets often contain a disproportionate number of samples for different attack types, with some attacks being significantly underrepresented. The root cause of this issue is the infrequent occurrence of certain attack types in real-world networks, which leads to skewed data distributions and can reduce model performance for minority classes.

- Generalization: A key problem in intrusion detection is that models trained on specific datasets often perform poorly when faced with new or different environments. The root cause of this issue is the heterogeneity of networks and traffic patterns, where devices, protocols, and attack behaviors vary widely, making it difficult for models to generalize beyond the data they were trained on.

- Scalability: A main problem in intrusion detection is remaining effective as network size and traffic volume grow. The root cause of this issue is the rapid expansion of network ecosystems, where a large number of heterogeneous devices generate massive amounts of data, making it difficult for models to process and analyze all traffic efficiently.

- Combination of Datasets for Detecting a Broader Range of Attack Types: This work introduces a novel IDS framework that integrates the heterogeneous CSE-CIC-IDS2018, NF-BoT-IoT-v2, and IoT-23 datasets into a unified, feature-enriched representation using a shared autoencoder. This integration enables a single model to detect a broad spectrum of threats across twenty-five traffic classes, comprising one benign class and twenty-four distinct attack types.

- Complexity and Difficulty of Combined Architectures: The proposed approach employs a shared encoder with dataset-specific decoders, enabling the model to learn generalizable features across diverse traffic patterns while preserving all information and maintaining reconstruction fidelity and detection performance. This shared autoencoder framework is flexible, can accommodate any number of heterogeneous datasets, and follows the same integration steps.

- High Performance: High detection performance is achieved using the proposed hybrid CNN–DNN model with enhanced preprocessing, leading to superior results in both binary and multi-class classification tasks.

- Class Imbalance: Addressed through resampling strategies, namely ADASYN and ENN, which balance the training data and improve detection performance for minority classes.

- Generalization: Ensured through training on both the unified multi-dataset representation (CSE-CIC-IDS2018, NF-BoT-IoT-v2, and IoT-23) and the individual datasets, resulting in improved robustness and adaptability to varied attack environments.

- Scalability: Achieved through an optimized hybrid CNN–DNN architecture designed to manage large-scale traffic derived from both the unified representation of CSE-CIC-IDS2018, NF-BoT-IoT-v2, and IoT-23 and from each dataset independently, while preserving strong detection performance.
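The shared-encoder arrangement described in this list (a projection per dataset, one encoder shared by all datasets, and a decoder per dataset) can be sketched as a shape-level forward pass. This is a minimal illustration with random, untrained weights: the per-dataset input widths and the intermediate width `SHARED_DIM` are hypothetical values, and only the 15-dimensional latent size is taken from the text.

```python
import random

LATENT_DIM = 15   # latent size stated in the text
SHARED_DIM = 32   # hypothetical common width after projection

# Hypothetical per-dataset input widths; the real feature counts are not given here.
INPUT_DIMS = {"CSE-CIC-IDS2018": 70, "NF-BoT-IoT-v2": 40, "IoT-23": 20}


def linear(n_in, n_out, rng):
    """Return (weights, biases) for a dense layer with small random weights."""
    w = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return w, [0.0] * n_out


def apply(layer, x, relu=True):
    """Apply a dense layer, optionally followed by ReLU."""
    w, b = layer
    out = [sum(wi * xi for wi, xi in zip(row, x)) + bi for row, bi in zip(w, b)]
    return [max(0.0, v) for v in out] if relu else out


rng = random.Random(42)
# One projection and one decoder per dataset, but a single shared encoder.
projections = {name: linear(d, SHARED_DIM, rng) for name, d in INPUT_DIMS.items()}
shared_encoder = linear(SHARED_DIM, LATENT_DIM, rng)
decoders = {name: linear(LATENT_DIM, d, rng) for name, d in INPUT_DIMS.items()}


def encode(name, x):
    """Dataset-specific projection followed by the shared encoder."""
    return apply(shared_encoder, apply(projections[name], x))


def reconstruct(name, x):
    """Encode through the shared path, then decode with the dataset's own decoder."""
    return apply(decoders[name], encode(name, x), relu=False)


for name, d in INPUT_DIMS.items():
    x = [rng.random() for _ in range(d)]
    z, x_hat = encode(name, x), reconstruct(name, x)
    print(name, len(x), "->", len(z), "->", len(x_hat))
```

Because every dataset passes through the same encoder, the latent vectors all have the same width and can be stacked into one table, while each decoder only ever has to reproduce its own dataset's feature layout. Adding a further dataset in this sketch amounts to adding one more entry to `INPUT_DIMS`.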

3. Methodology

3.1. Description of Dataset

3.2. Shared Autoencoder Framework

1. Dataset Preparation and Feature–Label Separation

2. Feature Encoding and Missing Value Handling

3. Dataset-Specific Feature Normalization

4. Definition of Model Hyperparameters

5. Input Layer Construction

6. Dataset-Specific Projection Layers

7. Shared Encoder Architecture

8. Dataset-Specific Decoder Design

9. Model Compilation and Optimization Strategy

10. Interleaved and Masked Multi-Dataset Training Strategy

| Algorithm 1: Interleaved and Masked Multi-Dataset Training |
|---|
| Input: Multi-dataset feature matrices and training hyperparameters. |
| Output: Trained shared autoencoder and unified latent space representations. |
| 1: Normalize each dataset independently. |
| 2: Split each dataset into training and validation sets. |
| 3: Initialize model parameters (projection, encoder, decoders). |
| 4: for epoch = 1 to E do |
| 5: Generate interleaved mini-batches from all datasets. |
| 6: for each interleaved batch do |
| 7: Project inputs using dataset-specific projection layers. |
| 8: Encode projected features using the shared encoder. |
| 9: Reconstruct inputs using the corresponding dataset-specific decoders. |
| 10: Compute masked reconstruction loss for each dataset. |
| 11: Aggregate total loss across datasets. |
| 12: Update the projection, encoder, and decoder parameters using backpropagation. |
| 13: end for |
| 14: Evaluate reconstruction loss on validation sets. |
| 15: end for |
| 16: Extract unified latent representations using the shared encoder. |
| 17: Concatenate latent features to form the unified latent dataset. |
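The batching and loss bookkeeping in steps 5, 10, and 11 of Algorithm 1 can be sketched in plain Python. The sketch below is illustrative only: it assumes round-robin interleaving and a 0/1 mask that lets only the source dataset's decoder contribute to the loss, and it replaces the real autoencoders with stand-in functions. The gradient update of step 12 is not implemented, and the names `interleaved_batches` and `masked_total_loss` are hypothetical.

```python
import random


def interleaved_batches(datasets, batch_size, rng):
    """Yield (dataset_name, batch) pairs in round-robin order until all rows are used."""
    order = {}
    for name, rows in datasets.items():
        idx = list(range(len(rows)))
        rng.shuffle(idx)
        order[name] = idx
    cursors = {name: 0 for name in datasets}
    active = list(datasets)
    while active:
        for name in list(active):
            start = cursors[name]
            chunk = order[name][start:start + batch_size]
            if not chunk:           # this dataset is exhausted for the epoch
                active.remove(name)
                continue
            cursors[name] = start + len(chunk)
            yield name, [datasets[name][i] for i in chunk]


def mse(batch, recon):
    """Mean squared error over every element of the batch."""
    n = sum(len(row) for row in batch)
    return sum((a - b) ** 2 for r, q in zip(batch, recon) for a, b in zip(r, q)) / n


def masked_total_loss(source, batch, reconstruct_fns):
    """Aggregate decoder losses; the mask zeroes every decoder except the source's."""
    total = 0.0
    for name, fn in reconstruct_fns.items():
        if name != source:
            continue  # mask = 0: this decoder contributes nothing for this batch
        total += mse(batch, [fn(row) for row in batch])
    return total


rng = random.Random(0)
toy = {
    "A": [[float(i), float(i) + 1.0, float(i) + 2.0] for i in range(5)],  # 5 rows, 3 features
    "B": [[float(i), float(i) * 2.0] for i in range(3)],                  # 3 rows, 2 features
}
# Stand-in "autoencoders": each returns a slightly shrunken copy of its input.
reconstruct_fns = {name: (lambda row: [0.9 * v for v in row]) for name in toy}

schedule = []
for source, batch in interleaved_batches(toy, batch_size=2, rng=rng):
    loss = masked_total_loss(source, batch, reconstruct_fns)
    schedule.append(source)
    # A real training step would backpropagate `loss` here.
print(schedule)  # ['A', 'B', 'A', 'B', 'A']
```

Interleaving in this way means the shared encoder sees every dataset within each epoch rather than one dataset after another, which is one plausible reading of the alternating multi-domain training described earlier.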

11. Latent Feature Extraction

12. Unified Latent Space Construction

13. Latent Feature Persistence

14. Outlier Detection and Elimination Using LOF and Z-Score

15. Normalization of the Combined Dataset Latent Spaces

16. Splitting into Train and Test Files

17. Class Imbalance Mitigation

- (i) ADASYN

- (ii) ENN
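Two of the post-processing steps listed above, Z-score outlier elimination (step 14) and ENN cleaning (step 17), are simple enough to sketch in plain Python. The following is a minimal illustration under stated assumptions: the Z-score threshold of 3, the neighbourhood size `k = 3`, the majority-vote rule applied to all classes, and the toy data are all choices made here for illustration rather than settings taken from this work. LOF and ADASYN are omitted for brevity.

```python
import math
from collections import Counter


def zscore_filter(X, y, threshold=3.0):
    """Drop rows in which any feature lies more than `threshold` std devs from its mean."""
    n, d = len(X), len(X[0])
    means = [sum(r[j] for r in X) / n for j in range(d)]
    # Fall back to 1.0 for constant features to avoid division by zero.
    stds = [math.sqrt(sum((r[j] - means[j]) ** 2 for r in X) / n) or 1.0 for j in range(d)]
    keep = [i for i, r in enumerate(X)
            if all(abs(r[j] - means[j]) / stds[j] <= threshold for j in range(d))]
    return [X[i] for i in keep], [y[i] for i in keep]


def edited_nearest_neighbours(X, y, k=3):
    """Remove samples whose label differs from the majority label of their k nearest neighbours."""
    keep = []
    for i, xi in enumerate(X):
        dists = sorted((math.dist(xi, xj), j) for j, xj in enumerate(X) if j != i)
        votes = Counter(y[j] for _, j in dists[:k])
        if votes.most_common(1)[0][0] == y[i]:
            keep.append(i)
    return [X[i] for i in keep], [y[i] for i in keep]


# Toy 2-D "latent" data: two clean clusters, one mislabeled point, one extreme outlier.
cluster0 = [[0.0, 0.0], [0.2, 0.0], [0.0, 0.2], [0.2, 0.2], [0.1, 0.3], [0.3, 0.1]]
cluster1 = [[5.0, 5.0], [5.2, 5.0], [5.0, 5.2], [5.2, 5.2], [5.1, 5.3], [5.3, 5.1]]
X = cluster0 + cluster1 + [[0.1, 0.1], [100.0, 100.0]]
y = [0] * 6 + [1] * 6 + [1, 0]  # last two: a class-1 point inside cluster 0, and the outlier

X_f, y_f = zscore_filter(X, y)                      # removes the extreme outlier
X_clean, y_clean = edited_nearest_neighbours(X_f, y_f)  # removes the mislabeled point
print(len(X), len(X_f), len(X_clean))
```

ENN is an undersampling-style cleaner, so it is typically applied after oversampling (here, ADASYN) to remove synthetic or noisy samples that fall inside another class's region.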

3.3. Proposed CNN–DNN Model

3.3.1. Convolutional Neural Networks–Deep Neural Network (CNN–DNN)

3.3.2. Architectural Overview

- (i) Binary Classification

- (ii) Multi-Class Classification

- (iii) Setting Up the CNN–DNN Model’s Hyperparameters

4. Results and Experiments

4.1. Overview of Dataset Properties and Preprocessing

4.2. Configuration and Hyperparameter Summary of the Comparable Models

- Convolutional Neural Networks (CNN)

- Autoencoder

- Deep Neural Network (DNN)

Setting up Hyperparameters for Models

4.3. Establishment of the Experiment

4.4. Evaluation Metrics
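The specific metric definitions for this section are not reproduced here. Assuming the standard classification metrics (accuracy, per-class precision, recall, and F1, plus macro-averaged F1), a minimal sketch of how they could be computed from true and predicted labels is given below. The function name `evaluate` and the example labels are hypothetical.

```python
def evaluate(y_true, y_pred):
    """Return (accuracy, per-class {label: (precision, recall, f1)}, macro F1)."""
    labels = sorted(set(y_true) | set(y_pred))
    per_class = {}
    for c in labels:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        per_class[c] = (precision, recall, f1)
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    macro_f1 = sum(f1 for _, _, f1 in per_class.values()) / len(labels)
    return accuracy, per_class, macro_f1


# Hypothetical labels for illustration only.
y_true = ["benign", "benign", "dos", "dos", "scan", "scan"]
y_pred = ["benign", "dos", "dos", "dos", "scan", "benign"]

accuracy, per_class, macro_f1 = evaluate(y_true, y_pred)
print(round(accuracy, 4), round(macro_f1, 4))
for label, (p, r, f1) in per_class.items():
    print(label, round(p, 4), round(r, 4), round(f1, 4))
```

Macro averaging weights every class equally, which makes it more informative than plain accuracy when some attack classes are rare.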

4.5. Results

- (i) Binary Classification

- (ii) Multi-Class Classification

4.6. Comprehensive Ablation and Sensitivity Analysis of the Proposed Framework

1. Proposed CNN–DNN Ablation Study

2. Impact of Resampling Strategies

3. Latent Space Dimensionality Analysis

4. Analysis Under Different Random Seed Splits

4.7. Comparison with Recent Models

4.8. Time, Memory, and Model Size

1. Inference time

2. Training time

3. Memory consumption

4. Model size

5. Discussion

5.1. Binary Classification

5.2. Multi-Class Classification

6. Limitations

- Combination of Multiple Datasets: While the current framework effectively integrates three heterogeneous datasets, extending the approach to include more datasets could introduce extra challenges in preprocessing, integration, and training, necessitating careful design to preserve information and maintain reconstruction fidelity.

- Data Preprocessing: The quality of the preprocessed data has a direct impact on the model’s performance. Handling missing values accurately, encoding categorical data properly, and normalizing numerical data correctly are all essential.

- Model Adaptation: Hyperparameter tuning requires a thorough, iterative process to reach strong performance across a variety of datasets. This process is needed to match the model to the characteristics of each new dataset.

7. Conclusions

8. Future Work

- Combination of Multiple Datasets: Future work will explore the extension of the framework to integrate more than three heterogeneous datasets, investigating scalable methods for data integration, harmonization, and model training. This includes developing strategies to maintain information integrity, reconstruction fidelity, and high detection performance when combining a larger and more diverse set of network traffic sources.

- Data Preprocessing: Customizing data preparation techniques for every dataset is essential to achieving optimal model performance. Refer to Section 3.2 in this article for a more thorough explanation of these sophisticated methods.

- Hyperparameter Optimization and Model Adaptation: More advanced hyperparameter optimization techniques are essential for deployment success in a variety of data environments. Section 3.3 goes into depth about these tuning techniques.

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Sun, P.; Liu, P.; Li, Q.; Liu, C.; Lu, X.; Hao, R.; Chen, J. DL-IDS: Extracting Features Using CNN-LSTM Hybrid Network for Intrusion Detection System. Secur. Commun. Netw. 2020, 2020, 8890306. [Google Scholar] [CrossRef]

- Almansor, M.; Gan, K. Intrusion detection systems: Principles and perspectives. J. Multidiscip. Eng. Sci. Stud. 2018, 4, 2458–2925. [Google Scholar]

- Alkahtani, H.; Aldhyani, T.H. Intrusion Detection System to Advance Internet of Things Infrastructure-Based Deep Learning Algorithms. Complexity 2021, 2021, 5579851. [Google Scholar] [CrossRef]

- Edeh, D.I. Network Intrusion Detection System Using Deep Learning Technique. Master’s Thesis, Department of Computing, University of Turku, Turku, Finland, 2021. [Google Scholar]

- Wu, P. Deep Learning for Network Intrusion Detection: Attack Recognition with Computational Intelligence. Master’s Thesis, School of Computer Science and Engineering, University of New South Wales, Sydney, Australia, 2020. [Google Scholar]

- Altulaihan, E.; Almaiah, M.A.; Aljughaiman, A. Anomaly detection IDS for detecting DoS attacks in IoT networks based on machine learning algorithms. Sensors 2024, 24, 713. [Google Scholar] [CrossRef] [PubMed]

- Zhang, D.; Huang, D.; Chen, Y.; Lin, S.; Li, C. A lightweight IoT intrusion detection method based on two-stage feature selection and Bayesian optimization. AIMS Electron. Electr. Eng. 2025, 9, 359–389. [Google Scholar] [CrossRef]

- Gad, A.R.; Haggag, M.; Nashat, A.A.; Barakat, T.M. A distributed intrusion detection system using machine learning for IoT based on ToN-IoT dataset. Int. J. Adv. Comput. Sci. Appl. 2022, 13, 548–563. [Google Scholar] [CrossRef]

- Alsharif, N.A.; Mishra, S.; Alshehri, M. IDS in IoT using Machine Learning and Blockchain. Eng. Technol. Appl. Sci. Res. 2023, 13, 11197–11203. [Google Scholar] [CrossRef]

- Hakami, H.; Faheem, M.; Ahmad, M.B. Machine Learning Techniques for Enhanced Intrusion Detection in IoT Security. IEEE Access 2025, 13, 31140–31158. [Google Scholar] [CrossRef]

- ElKashlan, M.; Elsayed, M.S.; Jurcut, A.D.; Azer, M. A machine learning-based intrusion detection system for iot electric vehicle charging stations (evcss). Electronics 2023, 12, 1044. [Google Scholar] [CrossRef]

- Abdelkhalek, A.; Mashaly, M. Addressing the class imbalance problem in network intrusion detection systems using data resampling and deep learning. J. Supercomput. 2023, 79, 10611–10644. [Google Scholar] [CrossRef]

- Ajdani, M. Deep Learning-Based Intrusion Detection Systems: A Novel Approach Using Generative Adversarial Networks (GANs). Int. J. Inf. Secur. Priv. 2025, 19, 1–5. [Google Scholar] [CrossRef]

- Saba, T.; Rehman, A.; Sadad, T.; Kolivand, H.; Bahaj, S.A. Anomaly-based intrusion detection system for IoT networks through deep learning model. Comput. Electr. Eng. 2022, 99, 107810. [Google Scholar] [CrossRef]

- Alghamdi, R.; Bellaiche, M. An ensemble deep learning based IDS for IoT using Lambda architecture. Cybersecurity 2023, 6, 5. [Google Scholar] [CrossRef]

- Aljuaid, W.H.; Alshamrani, S.S. A deep learning approach for intrusion detection systems in cloud computing environments. Appl. Sci. 2024, 14, 5381. [Google Scholar] [CrossRef]

- Nawaz, M.W.; Munawar, R.; Mehmood, A.; Rahman, M.M.U.; Qammer; Abbasi, H. Multi-class Network Intrusion Detection with Class Imbalance via LSTM & SMOTE. arXiv 2023, arXiv:2310.01850. [Google Scholar] [CrossRef]

- Xi, C.; Wang, H.; Wang, X. A novel multi-scale network intrusion detection model with transformer. Sci. Rep. 2024, 14, 23239. [Google Scholar] [CrossRef] [PubMed]

- Shu, L.; Dong, S.; Su, H.; Huang, J. Android malware detection methods based on convolutional neural network: A survey. IEEE Trans. Emerg. Top. Comput. Intell. 2023, 7, 1330–1350. [Google Scholar] [CrossRef]

- Li, J.; Zhou, H.; Wu, S.; Luo, X.; Wang, T.; Zhan, X.; Ma, X. FOAP: Fine-Grained Open-World Android App Fingerprinting. In Proceedings of the 31st USENIX Security Symposium (USENIX Security 2022), Boston, MA, USA, 10–12 August 2022; USENIX Association: Berkeley, CA, USA, 2022; pp. 1579–1596. [Google Scholar]

- Ni, T.; Lan, G.; Wang, J.; Zhao, Q.; Xu, W. Eavesdropping Mobile App Activity via Radio-Frequency Energy Harvesting. In Proceedings of the 32nd USENIX Security Symposium (USENIX Security 2023), Santa Clara, CA, USA, 9–11 August 2023; USENIX Association: Berkeley, CA, USA, 2023; pp. 3511–3528. [Google Scholar]

- Kamal, H.; Mashaly, M. AE-DTNN: Autoencoder–Dense–Transformer Neural Network Model for Efficient Anomaly-Based Intrusion Detection Systems. Mach. Learn. Knowl. Extr. 2025, 7, 78. [Google Scholar] [CrossRef]

- Fu, Y.; Du, Y.; Cao, Z.; Li, Q.; Xiang, W. A deep learning model for network intrusion detection with imbalanced data. Electronics 2022, 11, 898. [Google Scholar] [CrossRef]

- Qazi, E.U.; Faheem, M.H.; Zia, T. HDLNIDS: Hybrid deep-learning-based network intrusion detection system. Appl. Sci. 2023, 13, 4921. [Google Scholar] [CrossRef]

- Sajid, M.; Malik, K.R.; Almogren, A.; Malik, T.S.; Khan, A.H.; Tanveer, J.; Rehman, A.U. Enhancing intrusion detection: A hybrid machine and deep learning approach. J. Cloud Comput. 2024, 13, 123. [Google Scholar] [CrossRef]

- Kamal, H.; Mashaly, M. Robust Intrusion Detection System Using an Improved Hybrid Deep Learning Model for Binary and Multi-Class Classification in IoT Networks. Technologies 2025, 13, 102. [Google Scholar] [CrossRef]

- Umair, M.B.; Iqbal, Z.; Faraz, M.A.; Khan, M.A.; Zhang, Y.-D.; Razmjooy, N.; Kadry, S. A network intrusion detection system using hybrid multilayer deep learning model. Big Data 2024, 12, 367–376. [Google Scholar] [CrossRef]

- Kamal, H.; Mashaly, M. Enhanced Hybrid Deep Learning Models-Based Anomaly Detection Method for Two-Stage Binary and Multi-Class Classification of Attacks in Intrusion Detection Systems. Algorithms 2025, 18, 69. [Google Scholar] [CrossRef]

- Yaras, S.; Dener, M. IoT-based intrusion detection system using new hybrid deep learning algorithm. Electronics 2024, 13, 1053. [Google Scholar] [CrossRef]

- Kamal, H.; Mashaly, M. Advanced hybrid transformer-CNN deep learning model for effective intrusion detection systems with class imbalance mitigation using resampling techniques. Future Internet 2024, 16, 481. [Google Scholar] [CrossRef]

- Zheng, Z.X.; Chen, F. An IoT Intrusion Detection Method Combining GAN and Transformer Neural Networks. J. Netw. Intell. 2025, 10, 1011–1026. [Google Scholar]

- Balaji, S.; Dhanabalan, G.; Umarani, C.; Naskath, J. A GAN-based Hybrid Deep Learning Approach for Enhancing Intrusion Detection in IoT Networks. Int. J. Adv. Comput. Sci. Appl. 2024, 15, 1104–1112. [Google Scholar] [CrossRef]

- Afraji, D.M.; Lloret, J.; Peñalver, L. An integrated hybrid deep learning framework for intrusion detection in IoT and IIoT networks using CNN-LSTM-GRU architecture. Computation 2025, 13, 222. [Google Scholar] [CrossRef]

- Adefemi, K.O.; Mutanga, M.B.; Alimi, O.A. A Hybrid CNN–GRU Deep Learning Model for IoT Network Intrusion Detection. J. Sens. Actuator Netw. 2025, 14, 96. [Google Scholar] [CrossRef]

- Hasan, M.A.; Sharma, D. CNN-LSTM Powered Network IDS for Adaptive Cyber Defence. Revolut. Adv. Comput. Electron. Int. J. 2025, 1, 1–16. [Google Scholar] [CrossRef]

- Sun, Z.; Ni, T.; Yang, H.; Liu, K.; Zhang, Y.; Gu, T.; Xu, W. FLoRa: FLoRa: Energy-efficient, reliable, and beamforming-assisted over-the-air firmware update in lora networks. In Proceedings of the 22nd International Conference on Information Processing in Sensor Networks (IPSN ’23), New York, NY, USA, 9–12 May 2023; Association for Computing Machinery: New York, NY, USA, 2023; pp. 14–26. [Google Scholar]

- Wang, J.; Ni, T.; Lee, W.B.; Zhao, Q. A Contemporary Survey of Large Language Model Assisted Program Analysis. Trans. Artif. Intell. 2025, 1, 105–129. [Google Scholar] [CrossRef]

- Wang, M.; Yang, N.; Guo, Y.; Weng, N. Learn-ids: Bridging gaps between datasets and learning-based network intrusion detection. Electronics 2024, 13, 1072. [Google Scholar] [CrossRef]

- Al-Riyami, S.; Lisitsa, A.; Coenen, F. Cross-datasets evaluation of machine learning models for intrusion detection systems. In Proceedings of the Sixth International Congress on Information and Communication Technology: ICICT 2021, London, UK, 27 October 2021; Springer Singapore: Singapore, 2021; Volume 4, pp. 815–828. [Google Scholar]

- Yu, J.; Wang, G.; Shi, N.; Saxena, R.; Lee, B. A Multi-View-Based Federated Learning Approach for Intrusion Detection. Electronics 2025, 14, 4166. [Google Scholar] [CrossRef]

- Alabbadi, A.; Bajaber, F. X-FuseRLSTM: A Cross-Domain Explainable Intrusion Detection Framework in IoT Using the Attention-Guided Dual-Path Feature Fusion and Residual LSTM. Sensors 2025, 25, 3693. [Google Scholar] [CrossRef] [PubMed]

- Amin, M.I.; Shen, M.; Ishak, M.K.; Manickam, S.; Karuppayah, S. Enhancing generalization of cross-domain intrusion detection: A heterogeneous deep stacked ensemble approach. Connect. Sci. 2026, 38, 2599708. [Google Scholar] [CrossRef]

- Li, M.; Qiao, Y.; Lee, B. Multi-View Intrusion Detection Framework Using Deep Learning and Knowledge Graphs. Information 2025, 16, 377. [Google Scholar] [CrossRef]

- Kamal, H.; Mashaly, M. Combined Dataset System Based on a Hybrid PCA–Transformer Model for Effective Intrusion De-tec-tion Systems. AI 2025, 6, 168. [Google Scholar] [CrossRef]

- Alghamdi, R.; Bellaiche, M. Evaluation and selection models for ensemble intrusion detection systems in IoT. IoT 2022, 3, 285–314. [Google Scholar] [CrossRef]

- Alabdulwahab, S.; Kim, Y.T.; Seo, A.; Son, Y. Generating synthetic dataset for ML-based IDS using CTGAN and feature selection to protect smart IoT environments. Appl. Sci. 2023, 13, 10951.

- Elouardi, S.; Motii, A.; Jouhari, M.; Amadou, A.N.; Hedabou, M. A survey on Hybrid-CNN and LLMs for intrusion detection systems: Recent IoT datasets. IEEE Access 2024, 12, 180009–180033.

- Sharafaldin, I.; Lashkari, A.H.; Ghorbani, A.A. CSE-CIC-IDS2018 Dataset. Canadian Institute for Cybersecurity, University of New Brunswick, 2018. Available online: https://www.unb.ca/cic/datasets/ids-2018.html (accessed on 9 February 2026).

- Songma, S.; Sathuphan, T.; Pamutha, T. Optimizing intrusion detection systems in three phases on the CSE-CIC-IDS-2018 dataset. Computers 2023, 12, 245.

- Sarhan, M.; Layeghy, S.; Portmann, M. Towards a standard feature set for network intrusion detection system datasets. Mob. Netw. Appl. 2022, 27, 357–370.

- Moustafa, N. Network Intrusion Detection System (NIDS) Datasets. University of Queensland: Brisbane, Australia. Available online: https://staff.itee.uq.edu.au/marius/NIDS_datasets (accessed on 21 June 2025).

- Balaji, R.; Deepajothi, S.; Prabaharan, G.; Daniya, T.; Karthikeyan, P.; Velliangiri, S. Survey on intrusions detection system using deep learning in IoT environment. In Proceedings of the 2022 International Conference on Sustainable Computing and Data Communication Systems (ICSCDS), Erode, India, 7–9 April 2022; pp. 195–199.

- Garcia, S.; Parmisano, A.; Erquiaga, M.J. IoT-23: A Labeled Dataset with Malicious and Benign IoT Network Traffic. Zenodo. 2021. Available online: https://zenodo.org/records/4743746 (accessed on 20 February 2024).

- Abdalgawad, N.; Sajun, A.; Kaddoura, Y.; Zualkernan, I.A.; Aloul, F. Generative deep learning to detect cyberattacks for the IoT-23 dataset. IEEE Access 2021, 10, 6430–6441.

- Kim, Y.G.; Ahmed, K.J.; Lee, M.J.; Tsukamoto, K. A Comprehensive Analysis of Machine Learning-Based Intrusion Detection System for IoT-23 Dataset. In International Conference on Intelligent Networking and Collaborative Systems; Springer International Publishing: Cham, Switzerland, 2022; pp. 475–486.

- Kotsiantis, S.B.; Kanellopoulos, D.; Pintelas, P.E. Data preprocessing for supervised leaning. Int. J. Comput. Sci. 2006, 1, 111–117.

- Han, J.; Pei, J.; Tong, H. Data Mining: Concepts and Techniques; Morgan Kaufmann: Burlington, MA, USA, 2022.

- Little, R.J.; Rubin, D.B. Statistical Analysis with Missing Data; John Wiley & Sons: Hoboken, NJ, USA, 2019.

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-learn: Machine learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830.

- Singh, D.; Singh, B. Investigating the impact of data normalization on classification performance. Appl. Soft Comput. 2020, 97, 105524.

- Sola, J.; Sevilla, J. Importance of input data normalization for the application of neural networks to complex industrial problems. IEEE Trans. Nucl. Sci. 1997, 44, 1464–1468.

- Pan, S.J.; Yang, Q. A survey on transfer learning. IEEE Trans. Knowl. Data Eng. 2009, 22, 1345–1359.

- Glorot, X.; Bordes, A.; Bengio, Y. Deep sparse rectifier neural networks. In Proceedings of the Fourteenth International Conference on Artificial Intelligence and Statistics (AISTATS 2011), Fort Lauderdale, FL, USA, 11–13 April 2011.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

- Hinton, G.E.; Salakhutdinov, R.R. Reducing the dimensionality of data with neural networks. Science 2006, 313, 504–507.

- Bengio, Y.; Courville, A.; Vincent, P. Representation learning: A review and new perspectives. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 1798–1828.

- Tishby, N.; Zaslavsky, N. Deep learning and the information bottleneck principle. In Proceedings of the 2015 IEEE Information Theory Workshop (ITW 2015), Jerusalem, Israel, 26 April–1 May 2015.

- Meidan, Y.; Bohadana, M.; Mathov, Y.; Mirsky, Y.; Shabtai, A.; Breitenbacher, D.; Elovici, Y. N-BaIoT: Network-based detection of IoT botnet attacks using deep autoencoders. IEEE Pervasive Comput. 2018, 17, 12–22.

- Bousmalis, K.; Trigeorgis, G.; Silberman, N.; Krishnan, D.; Erhan, D. Domain separation networks. In Proceedings of the Advances in Neural Information Processing Systems, Barcelona, Spain, 5–10 December 2016; Volume 29.

- Caruana, R. Multitask learning. Mach. Learn. 1997, 28, 41–75.

- Kendall, A.; Gal, Y.; Cipolla, R. Multi-task learning using uncertainty to weigh losses for scene geometry and semantics. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018; pp. 7482–7491.

- Ruder, S. An overview of multi-task learning in deep neural networks. arXiv 2017, arXiv:1706.05098.

- Ganin, Y.; Lempitsky, V. Unsupervised domain adaptation by backpropagation. In Proceedings of the 32nd International Conference on Machine Learning (ICML 2015), Lille, France, 6–11 July 2015; pp. 1180–1189.

- Rizzardi, A.; Sicari, S.; Coen-Porisini, A. HERO: From High-dimensional network traffic to zERO-Day attack detection. Comput. Netw. 2025, 265, 111264.

- Rizzardi, A.; Sicari, S.; Coen-Porisini, A. NERO: NEural algorithmic reasoning for zeRO-day attack detection in the IoT: A hybrid approach. Comput. Secur. 2024, 142, 103898.

- Sharafaldin, I.; Lashkari, A.H.; Ghorbani, A.A. Toward generating a new intrusion detection dataset and intrusion traffic characterization. In Proceedings of the International Conference on Information Systems Security and Privacy, Funchal, Portugal, 22–24 January 2018; pp. 108–116.

- Nair, V.; Hinton, G.E. Rectified linear units improve restricted Boltzmann machines. In Proceedings of the 27th International Conference on Machine Learning (ICML 2010), Haifa, Israel, 21–24 June 2010; pp. 807–814.

- Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: A simple way to prevent neural networks from overfitting. J. Mach. Learn. Res. 2014, 15, 1929–1958.

- Nielsen, M.A. Neural Networks and Deep Learning; Determination Press: San Francisco, CA, USA, 2015.

- Bishop, C.M. Pattern Recognition and Machine Learning; Springer: New York, NY, USA, 2006; Volume 4.

- El-Habil, B.Y.; Abu-Naser, S.S. Global climate prediction using deep learning. J. Theor. Appl. Inf. Technol. 2022, 100, 4824–4838.

- Song, Z.; Ma, J. Deep learning-driven MIMO: Data encoding and processing mechanism. Phys. Commun. 2022, 57, 101976.

- Zhou, X.; Zhao, C.; Sun, J.; Yao, K.; Xu, M. Detection of lead content in oilseed rape leaves and roots based on deep transfer learning and hyperspectral imaging technology. Spectrochim. Acta Part A Mol. Biomol. Spectrosc. 2022, 290, 122288.

- LeCun, Y.; Bottou, L.; Bengio, Y.; Haffner, P. Gradient-based learning applied to document recognition. Proc. IEEE 1998, 86, 2278–2324.

- Kunang, Y.N.; Nurmaini, S.; Stiawan, D.; Zarkasi, A. Automatic features extraction using autoencoder in intrusion detection system. In Proceedings of the 2018 International Conference on Electrical Engineering and Computer Science (ICECOS), Pangkal Pinang, Indonesia, 2–4 October 2018; pp. 219–224.

- Gogna, A.; Majumdar, A. Discriminative autoencoder for feature extraction: Application to character recognition. Neural Process. Lett. 2019, 49, 1723–1735.

- Chen, X.; Ma, L.; Yang, X. Stacked denoise autoencoder based feature extraction and classification for hyperspectral images. J. Sens. 2016, 2016, 3632943.

- Michelucci, U. An introduction to autoencoders. arXiv 2022, arXiv:2201.03898.

- Veeramreddy, J.; Prasad, K. Anomaly-Based Intrusion Detection System. In Anomaly Detection and Complex Network Systems; Alexandrov, A.A., Ed.; IntechOpen: London, UK, 2019.

- Chen, C.; Song, Y.; Yue, S.; Xu, X.; Zhou, L.; Lv, Q.; Yang, L. FCNN-SE: An Intrusion Detection Model Based on a Fusion CNN and Stacked Ensemble. Appl. Sci. 2022, 12, 8601.

- Powers, D.M.W. Evaluation: From Precision, Recall, and F-Measure to ROC, Informedness, Markedness & Correlation. J. Mach. Learn. Technol. 2011, 2, 37–63.

- Assy, A.T.; Mostafa, Y.; El-Khaleq, A.A.; Mashaly, M. Anomaly-based intrusion detection system using one-dimensional convolutional neural network. Procedia Comput. Sci. 2023, 220, 78–85.

- Kamal, H.; Mashaly, M. Hybrid Deep Learning-Based Autoencoder-DNN Model for Intelligent Intrusion Detection System in IoT Networks. In Proceedings of the 2025 15th International Conference on Electrical Engineering (ICEENG), Cairo, Egypt, 12–15 May 2025; pp. 1–6.

- Kamal, H.; Mashaly, M. Improving Anomaly Detection in IDS with Hybrid Auto Encoder-SVM and Auto Encoder-LSTM Models Using Resampling Methods. In Proceedings of the 2024 6th Novel Intelligent and Leading Emerging Sciences Conference (NILES), Giza, Egypt, 19–21 October 2024; pp. 34–39.

- Kamal, H.; Mashaly, M. Securing IIoT Networks Using a Hybrid PCA-CNN-Based Intrusion Detection System. In Proceedings of the 2025 7th Novel Intelligent and Leading Emerging Sciences Conference (NILES), Giza, Egypt, 25–27 October 2025; IEEE: New York, NY, USA, 2025; pp. 19–24.

| Feature/Property | Standard AE | Multi-Task AE | Domain Adaptation AE | Proposed Shared Projection AE |

|---|---|---|---|---|

| Input compatibility | Homogeneous | Homogeneous | Homogeneous | Heterogeneous (variable dimensions) |

| Architectural focus | Reconstruction | Parallel tasking | Domain invariance | Cross-domain feature alignment |

| Feature mapping | Direct | Direct | Statistical/Rule-based | Learnable projection (non-linear) |

| Latent space | Isolated | Task-specific | Aligned (source-target) | Universal unified manifold |

| Data integration | Single source | Multi-source (fixed) | Transfer-based | Interleaved multi-domain jointly |

| Attack generalization | Narrow | Task-specific | Source-to-target | Universal (wide-spectrum) |

| Handling mismatch | Not possible | Manual padding | Requires shared features | Automatic via projection layers |

| Author | High Performance | Classification Type | Class Imbalance Mitigation | Generalization Threshold > 2 | Scalability | Learning Type | Model Type | Datasets Combination | ||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| B | M | B | M | DL | ML | H | S | |||||

| Esra Altulaihan et al. [6] | ✓ | ✓ | ✓ | ✓ | ||||||||

| Saleh Alabdulwahab et al. [7] | ✓ | ✓ | ✓ | ✓ | ✓ | |||||||

| Abdallah R. Gad et al. [8] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Nada Abdu Al-sharif et al. [9] | ✓ | ✓ | ✓ | ✓ | ||||||||

| Hanadi Hakami et al. [10] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||||

| Mohamed ElKashlan et al. [11] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||||

| Ahmed Abdelkhalek and Maggie Mashaly [12] | ✓ | ✓ | ✓ | ✓ | ✓ | |||||||

| Ajdani, M. et al. [13] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Tanzila Saba et al. [14] | ✓ | ✓ | ✓ | |||||||||

| Rubayyi Alghamdi and Martine Bellaiche [15] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||||

| Wa’ad H. Aljuaid and Sultan S. Alshamrani [16] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Muhammad Wasim Nawaz et al. [17] | ✓ | ✓ | ✓ | ✓ | ✓ | |||||||

| Chiming Xi [18] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||

| Hesham Kamal and Maggie Mashaly [22] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||

| Yanfang Fu et al. [23] | ✓ | ✓ | ✓ | ✓ | ||||||||

| Emad Ul Haq Qazi et al. [24] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||||

| Muhammad Sajid et al. [25] | ✓ | ✓ | ✓ | ✓ | ✓ | |||||||

| Hesham Kamal and Maggie Mashaly [26] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||

| Muhammad Basit Umair et al. [27] | ✓ | ✓ | ✓ | ✓ | ||||||||

| Hesham Kamal and Maggie Mashaly [28] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Sami Yaras and Murat Dener [29] | ✓ | ✓ | ✓ | ✓ | ||||||||

| Hesham Kamal and Maggie Mashaly [30] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Zhi-Xian Zheng [31] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Mr. S. Balaji et al. [32] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||

| Doaa Mohsin Abd Ali Afraji [33] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Kuburat Oyeranti Adefemi [34] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Md Aadil Hasan and Dev Sharma [35] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Minxiao Wang et al. [38] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Said Al-Riyami et al. [39] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||||

| Jia Yu et al. [40] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Adel Alabbadi and Fuad Bajaber [41] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||

| Muhammad Iqrar Amin [42] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||||

| Min Li et al. [43] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Hesham Kamal and Maggie Mashaly [44] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||

| Rubayyi Alghamdi and Martine Bellaiche [45] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||

| Saleh Alabdulwahab et al. [46] | ✓ | ✓ | ✓ | ✓ | ✓ | |||||||

| Our Work | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ||

| Architecture | Mechanism | Generalization | Information Retention |

|---|---|---|---|

| Independent encoders | Separate AE per dataset | Low | High locally |

| Shared encoder w/o vertical combination | Single AE, shared latent | High | Low; unique attack patterns lost |

| Proposed method | Shared AE + dataset-specific latent & decoders | Maximum | Max, keeps universal & unique patterns |

| Term | Symbol | Description |
|---|---|---|
| Original features | | Raw input matrix after label separation. |
| Projected features | | Aligned features via projection layers. |
| Latent features | | Embeddings extracted by shared encoder. |
| Unified features | | Vertically concatenated latent matrices. |
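
The four stages in the notation table (original, projected, latent, unified features) can be traced with a small shape-only sketch. It uses untrained random weights and plain Python lists; the per-dataset row and feature counts and the projection width `PROJ_DIM` are hypothetical values chosen for illustration, and only the 15-dimensional latent size comes from the paper.

```python
import random

LATENT_DIM = 15   # latent size reported in the paper
PROJ_DIM = 32     # hypothetical common projection width (assumption)

def random_matrix(n_in, n_out, rng):
    """Untrained weight matrix of shape (n_in, n_out)."""
    return [[rng.uniform(-1, 1) for _ in range(n_out)] for _ in range(n_in)]

def dense(rows, weights):
    """Apply a dense layer with ReLU to a list of row vectors."""
    return [
        [max(0.0, sum(x * w for x, w in zip(row, col))) for col in zip(*weights)]
        for row in rows
    ]

rng = random.Random(0)

# Hypothetical (rows, features) per dataset; the real counts are not given here.
datasets = {
    "CSE-CIC-IDS2018": (4, 70),
    "NF-BoT-IoT-v2": (3, 40),
    "IoT-23": (5, 20),
}

shared_encoder = random_matrix(PROJ_DIM, LATENT_DIM, rng)  # one encoder for all

unified = []
for name, (n_rows, n_features) in datasets.items():
    original = [[rng.random() for _ in range(n_features)] for _ in range(n_rows)]
    projection = random_matrix(n_features, PROJ_DIM, rng)  # dataset-specific
    projected = dense(original, projection)                # aligned width
    latent = dense(projected, shared_encoder)              # shared embedding
    unified.extend(latent)                                 # vertical concatenation

print(len(unified), len(unified[0]))  # 12 15
```

Each dataset keeps its own projection layer, while the encoder weights are reused, so inputs of different widths end up as rows of one matrix with 15 columns.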

| Latent | Top 3 Associated Attacks | Representative Features (CSE-CIC, NF, IoT-23) | Semantic Interpretation |

|---|---|---|---|

| LD 1 | DDoS-UDP, Theft, Recon | Fwd IAT Std, RETRAN_IN_PKTS, orig_bytes | Connection Initiation Dynamics |

| LD 2 | DDoS-HTTP, Slowloris, Slow-GoldenEye | Flow IAT Min, SRC_TO_DST_AVG_THR, conn_state | Temporal Flow Persistence |

| LD 3 | Bot, DDoS-HOIC, Infiltration | Subflow Bwd Packets, CLIENT_FLAGS, orig_bytes | Traffic Burstiness & Variance |

| LD 4 | DDoS-HOIC, Bot, SSH-Bruteforce | Bwd IAT Total, CLIENT_TCP_FLAGS, id_orig_h | Protocol Signaling Patterns |

| LD 5 | DoS-Hulk, Theft, SQL Injection | Fwd Pkt Len Min, PKTS_128_TO_256, orig_bytes | Payload Sizing & Volume |

| LD 6 | DDoS-UDP, Slowloris, Attack | Bwd Packets/s, NUM_PKTS_256_TO_512, history | Asymmetric Timing Fluctuations |

| LD 7 | DoS, DDoS-UDP, Recon | Bwd IAT Mean, TCP_WIN_MAX_OUT, duration | Bidirectional Load Symmetry |

| LD 8 | SSH-Bruteforce, DDoS-HOIC, Bot | Flow IAT Std, IN_PKTS, conn_state | Temporal Jitter & Flow State |

| LD 9 | DDoS-UDP, DoS, Recon | SYN Flag Count, OUT_PKTS, id_resp_p | Volumetric Saturation & Stress |

| LD 10 | Slowloris, Attack, SSH-Bruteforce | Flow IAT Min, L4_DST_PORT, id_resp_p | Temporal & Target Dynamics |

| LD 11 | DoS-Hulk, Infiltration, SQL Injection | Bwd IAT Min, SERVER_TCP_FLAGS, history | Inter-Arrival Timing Consistency |

| LD 12 | DDoS-UDP, Theft, Recon | Bwd IAT Mean, L4_DST_PORT, id_resp_p | Transmission Window Dynamics |

| LD 13 | Brute Force-Web, SSH, Attack | Bwd IAT Std, L7_PROTO, orig_bytes | Request-Response Cycle Dynamics |

| LD 14 | Slowloris, Slow-GoldenEye, Okiru | Fwd Pkts Len Tot, ICMP_IPV4_TYPE, resp_ip_bytes | Response Latency Profiling |

| LD 15 | C&C, Brute Force-Web, C&C-PartOfA-HPS | Fwd Act Data Pkts, MIN_TTL, orig_bytes | Periodic Signaling (Beaconing) |

| Dataset | Initial Loss (Val) | Final Loss (Val) |

|---|---|---|

| CSE-CIC-IDS2018 | 0.007737 | 0.000444 |

| NF-BoT-IoT-v2 | 0.000956 | 0.000056 |

| IoT-23 | 0.004334 | 0.000127 |

| Class | Before Z-Score & LOF | After Z-Score & LOF |

|---|---|---|

| Benign | 108,863 | 5732 |

| DDoS attacks-LOIC-HTTP | 36,309 | 32,945 |

| DDOS attack-HOIC | 32,378 | 26,952 |

| DoS attacks-Hulk | 30,570 | 27,905 |

| Bot | 28,380 | 26,145 |

| Infiltration | 6600 | 4557 |

| SSH-Bruteforce | 20,755 | 16,059 |

| DoS attacks-GoldenEye | 16,918 | 13,774 |

| DoS attacks-Slowloris | 7623 | 5910 |

| DDOS attack-LOIC-UDP | 1680 | 1567 |

| Brute Force-Web | 471 | 305 |

| Brute Force-XSS | 202 | 139 |

| SQL Injection | 38 | 6 |

| DoS attacks-SlowHTTPTest | 47 | 32 |

| FTP-BruteForce | 45 | 32 |

| Reconnaissance | 61,420 | 32,806 |

| DDoS | 143,002 | 134,946 |

| DoS | 76,485 | 73,042 |

| Theft | 1739 | 1557 |

| PartOfAHorizontalPortScan | 69,104 | 2343 |

| Okiru | 14,930 | 14,117 |

| C&C-HeartBeat | 8132 | 7742 |

| C&C | 6711 | 6096 |

| Attack | 2122 | 1825 |

| C&C-PartOfAHorizontalPortScan | 236 | 103 |
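
The Z-score half of the outlier-removal step behind the table above can be sketched as follows. This is a minimal illustration, assuming the common rule of dropping any row with a feature more than three standard deviations from its column mean; the threshold, the toy data, and the function name are assumptions, and the LOF stage is not covered.

```python
from statistics import mean, pstdev

def zscore_filter(rows, threshold=3.0):
    """Keep rows whose features all lie within `threshold` standard deviations."""
    columns = list(zip(*rows))
    mus = [mean(col) for col in columns]
    sigmas = [pstdev(col) for col in columns]
    kept = []
    for row in rows:
        # A constant column (sigma == 0) cannot flag anything as an outlier.
        z = [abs(v - mu) / sd if sd > 0 else 0.0
             for v, mu, sd in zip(row, mus, sigmas)]
        if max(z) <= threshold:
            kept.append(row)
    return kept

# Toy flows as (duration, bytes); the last row is an obvious outlier in bytes.
byte_counts = (10, 11, 9, 10, 12, 10, 9, 11, 10, 10, 10, 11, 9, 10)
flows = [(1.0, b) for b in byte_counts] + [(1.0, 100)]

clean = zscore_filter(flows)
print(len(flows), "->", len(clean))  # 15 -> 14
```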

| Class | Train | Test |

|---|---|---|

| Benign | 4888 | 844 |

| DDoS attacks-LOIC-HTTP | 27,916 | 5029 |

| DDOS attack-HOIC | 22,902 | 4050 |

| DoS attacks-Hulk | 23,698 | 4207 |

| Bot | 22,201 | 3944 |

| Infiltration | 3831 | 726 |

| SSH-Bruteforce | 13,671 | 2388 |

| DoS attacks-GoldenEye | 11,729 | 2045 |

| DoS attacks-Slowloris | 5003 | 907 |

| DDOS attack-LOIC-UDP | 1350 | 217 |

| Brute Force-Web | 251 | 54 |

| Brute Force-XSS | 116 | 23 |

| SQL Injection | 5 | 1 |

| DoS attacks-SlowHTTPTest | 28 | 4 |

| FTP-BruteForce | 28 | 4 |

| Reconnaissance | 27,959 | 4847 |

| DDoS | 114,662 | 20,284 |

| DoS | 62,182 | 10,860 |

| Theft | 1329 | 228 |

| PartOfAHorizontalPortScan | 1968 | 375 |

| Okiru | 11,985 | 2132 |

| C&C-HeartBeat | 6605 | 1137 |

| C&C | 5192 | 904 |

| Attack | 1558 | 267 |

| C&C-PartOfAHorizontalPortScan | 86 | 17 |

| Class | Before ADASYN | After ADASYN |

|---|---|---|

| Normal | 4888 | 366,213 |

| Attack | 366,255 | 366,255 |

| Class | Before ADASYN | After ADASYN |

|---|---|---|

| Benign | 4888 | 4888 |

| DDoS attacks-LOIC-HTTP | 27,916 | 27,916 |

| DDOS attack-HOIC | 22,902 | 22,902 |

| DoS attacks-Hulk | 23,698 | 23,698 |

| Bot | 22,201 | 22,201 |

| Infiltration | 3831 | 3831 |

| SSH-Bruteforce | 13,671 | 13,671 |

| DoS attacks-GoldenEye | 11,729 | 11,729 |

| DoS attacks-Slowloris | 5003 | 5003 |

| DDOS attack-LOIC-UDP | 1350 | 1350 |

| Brute Force-Web | 251 | 251 |

| Brute Force-XSS | 116 | 116 |

| SQL Injection | 5 | 114,661 |

| DoS attacks-SlowHTTPTest | 28 | 114,661 |

| FTP-BruteForce | 28 | 114,664 |

| Reconnaissance | 27,959 | 27,959 |

| DDoS | 114,662 | 114,662 |

| DoS | 62,182 | 62,182 |

| Theft | 1329 | 1329 |

| PartOfAHorizontalPortScan | 1968 | 1968 |

| Okiru | 11,985 | 11,985 |

| C&C-HeartBeat | 6605 | 6605 |

| C&C | 5192 | 5192 |

| Attack | 1558 | 1558 |

| C&C-PartOfAHorizontalPortScan | 86 | 86 |
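
In the two ADASYN tables, the oversampled classes land near the majority count rather than exactly on it (366,213 against 366,255 in the binary case; 114,661 and 114,664 against 114,662 in the multi-class case). One plausible contributor is that ADASYN allocates synthetic samples per minority point in proportion to its share of majority-class neighbours and rounds each allocation. The sketch below shows only that allocation arithmetic; the ratio values and the function name are hypothetical, and it is not a full ADASYN implementation.

```python
def adasyn_allocation(majority_ratios, n_to_generate):
    """Split `n_to_generate` synthetic samples across minority points.

    majority_ratios[i] is the fraction of majority-class points among the
    k nearest neighbours of minority sample i (its local difficulty).
    """
    total = sum(majority_ratios)
    if total == 0:
        raise ValueError("no minority sample has majority-class neighbours")
    weights = [r / total for r in majority_ratios]
    return [round(w * n_to_generate) for w in weights]

# Binary task: gap between "Attack" (366,255) and "Normal" (4888) before ADASYN.
target_gap = 366_255 - 4_888
print(target_gap)  # 361367

# Hypothetical difficulty ratios for three minority samples.
alloc = adasyn_allocation([0.2, 0.2, 0.2], n_to_generate=10)
print(alloc, sum(alloc))  # [3, 3, 3] 9
```

With equal ratios and ten requested samples, rounding yields nine, which is the same kind of small shortfall seen in the tables.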

| Class | Before ENN | After ENN |

|---|---|---|

| Normal | 366,213 | 366,213 |

| Attack | 366,255 | 365,706 |

| Class | Before ENN | After ENN |

|---|---|---|

| Benign | 4888 | 4503 |

| DDoS attacks-LOIC-HTTP | 27,916 | 27,882 |

| DDOS attack-HOIC | 22,902 | 22,902 |

| DoS attacks-Hulk | 23,698 | 23,698 |

| Bot | 22,201 | 22,188 |

| Infiltration | 3831 | 3831 |

| SSH-Bruteforce | 13,671 | 13,669 |

| DoS attacks-GoldenEye | 11,729 | 11,729 |

| DoS attacks-Slowloris | 5003 | 5003 |

| DDOS attack-LOIC-UDP | 1350 | 1267 |

| Brute Force-Web | 251 | 227 |

| Brute Force-XSS | 116 | 66 |

| SQL Injection | 114,661 | 114,659 |

| DoS attacks-SlowHTTPTest | 114,661 | 15,755 |

| FTP-BruteForce | 114,664 | 15,461 |

| Reconnaissance | 27,959 | 27,832 |

| DDoS | 114,662 | 113,703 |

| DoS | 62,182 | 60,718 |

| Theft | 1329 | 1329 |

| PartOfAHorizontalPortScan | 1968 | 1477 |

| Okiru | 11,985 | 11,985 |

| C&C-HeartBeat | 6605 | 6605 |

| C&C | 5192 | 5186 |

| Attack | 1558 | 1553 |

| C&C-PartOfAHorizontalPortScan | 86 | 86 |
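
The ENN cleaning reflected in the tables above removes samples whose label disagrees with most of their nearest neighbours. The following is a minimal pure-Python sketch of that rule on toy 2-D points; `k=3`, the data, and the choice to clean every class are assumptions (library implementations often leave the smallest class untouched by default, which would match the unchanged "Normal" count in the binary table).

```python
from collections import Counter
from math import dist

def edited_nearest_neighbours(X, y, k=3):
    """Drop samples whose label differs from the majority of their k neighbours."""
    keep = []
    for i, (xi, yi) in enumerate(zip(X, y)):
        # Rank all other samples by Euclidean distance to sample i.
        neighbours = sorted(
            (j for j in range(len(X)) if j != i),
            key=lambda j: dist(xi, X[j]),
        )[:k]
        majority_label, _ = Counter(y[j] for j in neighbours).most_common(1)[0]
        if majority_label == yi:
            keep.append(i)
    return [X[i] for i in keep], [y[i] for i in keep]

# Two clean clusters plus one "normal" point sitting inside the attack cluster.
X = [(0, 0), (0, 1), (1, 0), (1, 1),
     (5, 5), (5, 6), (6, 5), (6, 6),
     (0.5, 0.5)]
y = ["attack"] * 4 + ["normal"] * 4 + ["normal"]

X_clean, y_clean = edited_nearest_neighbours(X, y)
print(len(X), "->", len(X_clean))  # 9 -> 8
```

All decisions are made against the original set, so removing one sample does not change the neighbourhood used for the next.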

| Model Stage | Block | Layers | Layer Size | Activation |
|---|---|---|---|---|
| CNN | Input block | Input layer | Number of features | - |
| CNN | Hidden block 1 | 1D CNN layer | 256 | - |
| CNN | Hidden block 1 | Batch normalization | - | ReLU |
| CNN | Hidden block 1 | 1D Max pooling layer | 2 | - |
| CNN | Hidden block 1 | Dropout layer | 0.0000001 | - |
| CNN | Hidden block 2 | 1D CNN layer | 256 | - |
| CNN | Hidden block 2 | Batch normalization | - | ReLU |
| CNN | Hidden block 2 | 1D Max pooling layer | 4 | - |
| CNN | Hidden block 2 | Dropout layer | 0.0000001 | - |
| DNN | Hidden block 3 | Dense layer | 1024 | - |
| DNN | Hidden block 3 | Batch normalization | - | ReLU |
| DNN | Hidden block 3 | Dropout layer | 0.0000001 | - |
| DNN | Hidden block 4 | Dense layer | 768 | - |
| DNN | Hidden block 4 | Batch normalization | - | ReLU |
| DNN | Hidden block 4 | Dropout layer | 0.0000001 | - |
| DNN | Output block | Output layer | 1 (Binary) | Sigmoid |

| Model Stage | Block | Layers | Layer Size | Activation |
|---|---|---|---|---|
| CNN | Input block | Input layer | Number of features | - |
| CNN | Hidden block 1 | 1D CNN layer | 256 | - |
| CNN | Hidden block 1 | Batch normalization | - | ReLU |
| CNN | Hidden block 1 | 1D Max pooling layer | 2 | - |
| CNN | Hidden block 1 | Dropout layer | 0.0000001 | - |
| CNN | Hidden block 2 | 1D CNN layer | 256 | - |
| CNN | Hidden block 2 | Batch normalization | - | ReLU |
| CNN | Hidden block 2 | 1D Max pooling layer | 4 | - |
| CNN | Hidden block 2 | Dropout layer | 0.0000001 | - |
| DNN | Hidden block 3 | Dense layer | 1024 | - |
| DNN | Hidden block 3 | Batch normalization | - | ReLU |
| DNN | Hidden block 3 | Dropout layer | 0.0000001 | - |
| DNN | Hidden block 4 | Dense layer | 768 | - |
| DNN | Hidden block 4 | Batch normalization | - | ReLU |
| DNN | Hidden block 4 | Dropout layer | 0.0000001 | - |
| DNN | Output block | Output layer | Number of classes (Multi-class) | Softmax |
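
To see how the layer stack in the two architecture tables reshapes a 15-dimensional latent vector, the sketch below traces output shapes only. It assumes `same` padding and stride 1 for the convolutions (so only pooling shortens the sequence) and a pooling stride equal to the pool size; kernel sizes and padding are not stated in the tables, so these are assumptions rather than the authors' configuration.

```python
def trace_shapes(n_features=15, filters=256, pool_sizes=(2, 4),
                 dense_units=(1024, 768), n_outputs=1):
    """Return (layer name, output shape) pairs for the tabulated CNN-DNN stack."""
    shapes = [("input", (n_features, 1))]
    length = n_features
    for i, pool in enumerate(pool_sizes, start=1):
        # Assumption: 'same' padding, stride 1, so Conv1D keeps the length.
        shapes.append((f"conv1d_{i}", (length, filters)))
        # Max pooling with stride == pool size floors the length.
        length = length // pool
        shapes.append((f"maxpool_{i}", (length, filters)))
    shapes.append(("flatten", (length * filters,)))
    for i, units in enumerate(dense_units, start=1):
        shapes.append((f"dense_{i}", (units,)))
    shapes.append(("output", (n_outputs,)))
    return shapes

for name, shape in trace_shapes():
    print(f"{name:10s} {shape}")
```

Under these assumptions the sequence length goes 15, 7, 1, so the flattened vector entering the first dense layer has 256 entries. Calling `trace_shapes(n_outputs=25)` gives the multi-class variant with one output per traffic class.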

| Parameter | Binary Classifier | Multi-Class Classifier |

|---|---|---|

| Batch size | 128 | 128 |

| Learning rate | Scheduled: Initial = 0.001, Factor = 0.5, Min = 1 × 10⁻⁵ (ReduceLROnPlateau) | Scheduled: Initial = 0.001, Factor = 0.5, Min = 1 × 10⁻⁵ (ReduceLROnPlateau) |

| Optimizer | Adam | Adam |

| Loss function | Binary_crossentropy | Categorical_crossentropy |

| Metric | Accuracy | Accuracy |
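
The learning-rate schedule in the table (initial 0.001, factor 0.5, floor 1 × 10⁻⁵) can be illustrated with a simplified plateau rule in plain Python. The `patience` value and the validation-loss sequence are assumptions, and the logic omits details of the real callback such as cooldown and minimum improvement thresholds.

```python
def reduce_on_plateau(val_losses, initial_lr=1e-3, factor=0.5,
                      min_lr=1e-5, patience=3):
    """Return the learning rate used at each epoch.

    `patience` is an assumption; the hyperparameter table does not report it.
    """
    lr, best, wait, history = initial_lr, float("inf"), 0, []
    for loss in val_losses:
        history.append(lr)
        if loss < best:
            best, wait = loss, 0
        else:
            wait += 1
            if wait >= patience:
                # Halve the rate, but never go below the floor.
                lr = max(lr * factor, min_lr)
                wait = 0
    return history

# Hypothetical run: three improving epochs, then a long plateau.
history = reduce_on_plateau([0.5, 0.4, 0.3] + [0.3] * 30)
print(history[0], history[6], history[-1])  # 0.001 0.0005 1e-05
```

Starting from 0.001, the seventh halving would fall below the floor (0.001 × 0.5⁷ ≈ 7.8 × 10⁻⁶), so the rate is clipped to 1 × 10⁻⁵ from that point on.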

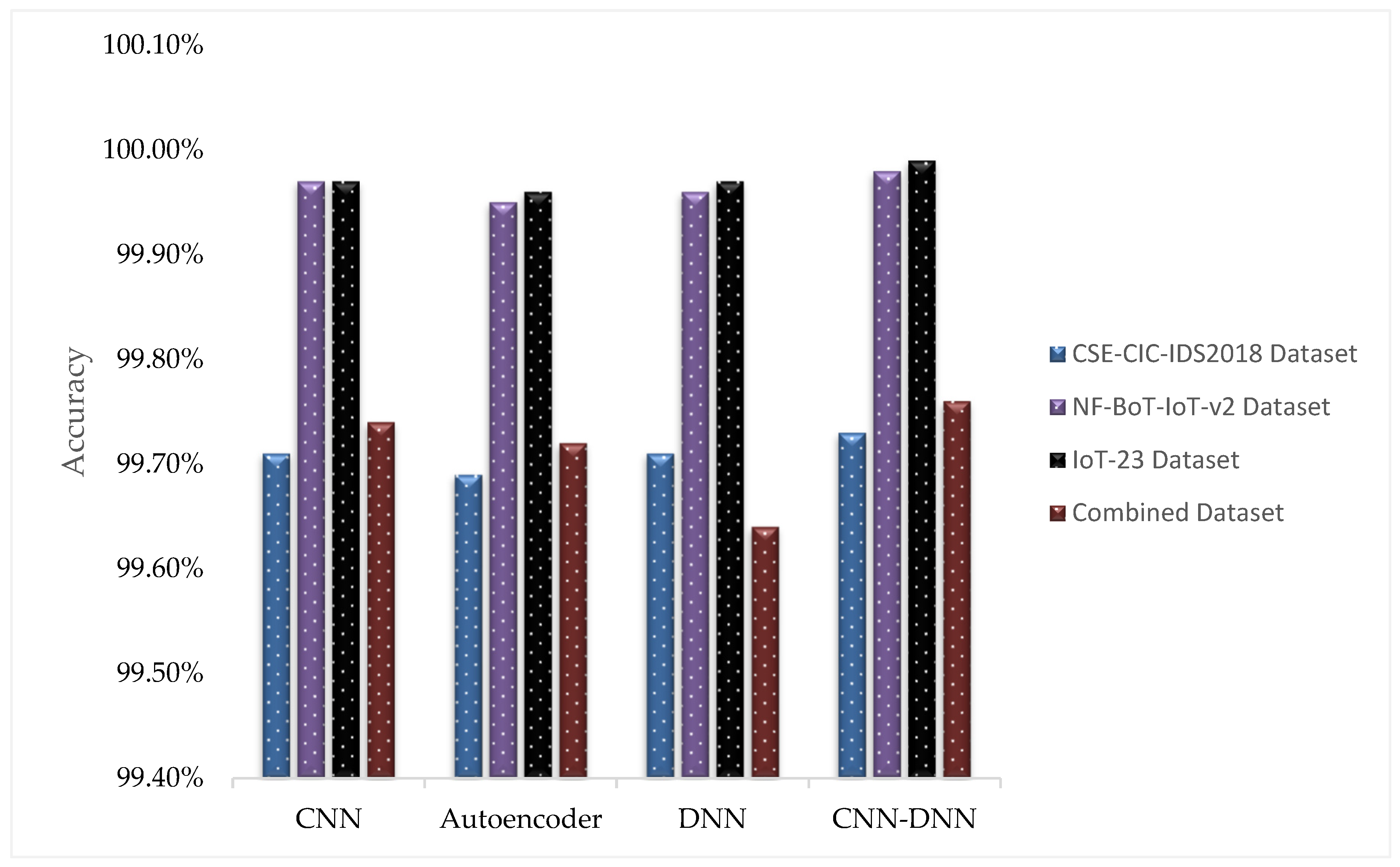

| Dataset | Model | Accuracy | Precision | Recall | F-Score |
|---|---|---|---|---|---|
| CSE-CIC-IDS2018 | CNN | 99.71% | 99.72% | 99.71% | 99.71% |
| CSE-CIC-IDS2018 | Autoencoder | 99.69% | 99.70% | 99.69% | 99.69% |
| CSE-CIC-IDS2018 | DNN | 99.71% | 99.72% | 99.71% | 99.71% |
| CSE-CIC-IDS2018 | CNN–DNN (Proposed) | 99.73% | 99.74% | 99.73% | 99.73% |
| NF-BoT-IoT-v2 | CNN | 99.97% | 99.97% | 99.97% | 99.97% |
| NF-BoT-IoT-v2 | Autoencoder | 99.95% | 99.95% | 99.95% | 99.95% |
| NF-BoT-IoT-v2 | DNN | 99.96% | 99.96% | 99.96% | 99.96% |
| NF-BoT-IoT-v2 | CNN–DNN (Proposed) | 99.98% | 99.98% | 99.98% | 99.98% |
| IoT-23 | CNN | 99.97% | 99.97% | 99.97% | 99.97% |
| IoT-23 | Autoencoder | 99.96% | 99.96% | 99.96% | 99.96% |
| IoT-23 | DNN | 99.97% | 99.97% | 99.97% | 99.97% |
| IoT-23 | CNN–DNN (Proposed) | 99.99% | 99.99% | 99.99% | 99.99% |
| Combined Dataset (Using Shared Autoencoder) | CNN | 99.74% | 99.78% | 99.74% | 99.75% |
| Combined Dataset (Using Shared Autoencoder) | Autoencoder | 99.72% | 99.77% | 99.72% | 99.73% |
| Combined Dataset (Using Shared Autoencoder) | DNN | 99.64% | 99.71% | 99.64% | 99.66% |
| Combined Dataset (Using Shared Autoencoder) | CNN–DNN (Proposed) | 99.76% | 99.80% | 99.76% | 99.77% |

| Dataset | Model | Accuracy | Precision | Recall | F-Score |
|---|---|---|---|---|---|
| CSE-CIC-IDS2018 | CNN | 99.58% | 99.59% | 99.58% | 99.57% |
| CSE-CIC-IDS2018 | Autoencoder | 99.54% | 99.54% | 99.54% | 99.52% |
| CSE-CIC-IDS2018 | DNN | 99.57% | 99.56% | 99.57% | 99.55% |
| CSE-CIC-IDS2018 | CNN–DNN (Proposed) | 99.61% | 99.63% | 99.61% | 99.60% |
| NF-BoT-IoT-v2 | CNN | 98.23% | 98.26% | 98.23% | 98.23% |
| NF-BoT-IoT-v2 | Autoencoder | 98.21% | 98.25% | 98.21% | 98.21% |
| NF-BoT-IoT-v2 | DNN | 98.19% | 98.24% | 98.19% | 98.19% |
| NF-BoT-IoT-v2 | CNN–DNN (Proposed) | 98.28% | 98.29% | 98.28% | 98.28% |
| IoT-23 | CNN | 99.91% | 99.94% | 99.91% | 99.92% |
| IoT-23 | Autoencoder | 99.54% | 99.58% | 99.54% | 99.55% |
| IoT-23 | DNN | 99.75% | 99.80% | 99.75% | 99.76% |
| IoT-23 | CNN–DNN (Proposed) | 99.94% | 99.96% | 99.94% | 99.95% |
| Combined Dataset (Using Shared Autoencoder) | CNN | 99.48% | 99.50% | 99.48% | 99.47% |
| Combined Dataset (Using Shared Autoencoder) | Autoencoder | 99.46% | 99.55% | 99.46% | 99.50% |
| Combined Dataset (Using Shared Autoencoder) | DNN | 99.49% | 99.49% | 99.49% | 99.49% |
| Combined Dataset (Using Shared Autoencoder) | CNN–DNN (Proposed) | 99.54% | 99.55% | 99.54% | 99.54% |

| Classification Type | Model | CSE-CIC-IDS2018 | NF-BoT-IoT-v2 | IoT-23 | Combined |
|---|---|---|---|---|---|
| Binary | CNN | 99.52% | 99.76% | 99.62% | 99.54% |
| Binary | DNN | 99.41% | 99.74% | 94.85% | 99.43% |
| Binary | CNN–DNN (Proposed) | 99.73% | 99.98% | 99.99% | 99.76% |
| Multi-Class | CNN | 99.41% | 97.87% | 93.61% | 99.35% |
| Multi-Class | DNN | 99.44% | 97.91% | 89.38% | 99.29% |
| Multi-Class | CNN–DNN (Proposed) | 99.61% | 98.28% | 99.91% | 99.54% |

| Classification Type | Resampling Strategy | CSE-CIC-IDS2018 | NF-BoT-IoT-v2 | IoT-23 | Combined |
|---|---|---|---|---|---|
| Binary | No Resampling | 99.73% | 99.94% | 99.91% | 99.71% |
| Binary | ADASYN | 99.48% | 99.97% | 99.94% | 99.73% |
| Binary | ENN | 99.66% | 99.96% | 99.95% | 99.72% |
| Binary | ADASYN + ENN | 99.54% | 99.98% | 99.99% | 99.76% |
| Multi-Class | No Resampling | 99.61% | 98.18% | 99.89% | 99.45% |
| Multi-Class | ADASYN | 99.57% | 98.20% | 99.90% | 99.49% |
| Multi-Class | ENN | 99.56% | 98.21% | 99.86% | 99.47% |
| Multi-Class | ADASYN + ENN | 99.55% | 98.28% | 99.94% | 99.54% |

| Classification Type | Latent Space | Accuracy |
|---|---|---|
| Binary | 10 | 99.58% |
| Binary | 15 | 99.76% |
| Binary | 20 | 99.68% |
| Multi-Class | 10 | 99.43% |
| Multi-Class | 15 | 99.54% |
| Multi-Class | 20 | 99.48% |

| Classification Type | Random Seed (Splitting) | Accuracy |
|---|---|---|
| Binary | 7 | 99.75% |
| Binary | 21 | 99.76% |
| Binary | 42 | 99.76% |
| Binary | 99 | 99.76% |
| Multi-Class | 7 | 99.54% |
| Multi-Class | 21 | 99.53% |
| Multi-Class | 42 | 99.54% |
| Multi-Class | 99 | 99.54% |

| Classification Type | Model | CSE-CIC-IDS2018 | NF-BoT-IoT-v2 | IoT-23 | Combined |
|---|---|---|---|---|---|
| Binary | Transformer-CNN [30] | 99.68% | 99.83% | 99.78% | 99.66% |
| Binary | CNN-MLP [26] | 99.64% | 99.79% | 99.83% | 99.68% |
| Binary | Transformer-DNN [28] | 99.66% | 99.86% | 99.50% | 99.65% |
| Binary | CNN–DNN (Proposed) | 99.73% | 99.98% | 99.99% | 99.76% |
| Multi-Class | Transformer-CNN [30] | 99.43% | 98.06% | 99.80% | 99.40% |
| Multi-Class | CNN-MLP [26] | 99.52% | 98.04% | 99.79% | 99.42% |
| Multi-Class | Transformer-DNN [28] | 99.49% | 98.02% | 95.17% | 99.39% |
| Multi-Class | CNN–DNN (Proposed) | 99.61% | 98.28% | 99.94% | 99.54% |

| Classification Type | Model | Inference Time (Seconds) |
|---|---|---|
| Binary | CNN | 7.87902 × 10⁻⁶ |
| Binary | Autoencoder | 6.03750 × 10⁻⁶ |
| Binary | DNN | 6.29230 × 10⁻⁶ |
| Binary | CNN–DNN (Proposed) | 7.94129 × 10⁻⁶ |
| Multi-Class | CNN | 7.99174 × 10⁻⁶ |
| Multi-Class | Autoencoder | 7.54983 × 10⁻⁶ |
| Multi-Class | DNN | 7.84450 × 10⁻⁶ |
| Multi-Class | CNN–DNN (Proposed) | 7.96421 × 10⁻⁶ |

| Classification Type | Model | Training Time (Seconds) |
|---|---|---|
| Binary | CNN | 9.152675 × 10⁻⁶ |
| Binary | Autoencoder | 3.364086 × 10⁻⁷ |
| Binary | DNN | 1.139832 × 10⁻⁶ |
| Binary | CNN–DNN (Proposed) | 9.329939 × 10⁻⁶ |
| Multi-Class | CNN | 9.407687 × 10⁻⁶ |
| Multi-Class | Autoencoder | 4.069567 × 10⁻⁷ |
| Multi-Class | DNN | 1.233268 × 10⁻⁶ |
| Multi-Class | CNN–DNN (Proposed) | 9.453416 × 10⁻⁶ |

| Classification Type | Model | Memory Consumption (Inference) (MB) | Memory Consumption (Training) (MB) |
|---|---|---|---|
| Binary | CNN | 0.0376 | 0.0750 |
| Binary | Autoencoder | 0.0009 | 0.0017 |
| Binary | DNN | 0.0074 | 0.0144 |
| Binary | CNN–DNN (Proposed) | 0.1342 | 0.2670 |
| Multi-Class | CNN | 0.0390 | 0.0777 |
| Multi-Class | Autoencoder | 0.0009 | 0.0017 |
| Multi-Class | DNN | 0.0075 | 0.0146 |
| Multi-Class | CNN–DNN (Proposed) | 0.1356 | 0.2697 |

| Classification Type | Model | Size (MB) |
|---|---|---|
| Binary | CNN | 6.11 |
| Binary | Autoencoder | 0.17 |
| Binary | DNN | 9.30 |
| Binary | CNN–DNN (Proposed) | 45.20 |
| Multi-Class | CNN | 6.25 |
| Multi-Class | Autoencoder | 0.18 |
| Multi-Class | DNN | 9.51 |
| Multi-Class | CNN–DNN (Proposed) | 45.41 |

| Dataset | Class | Accuracy | Precision | Recall | F-Score |
|---|---|---|---|---|---|
| CSE-CIC-IDS2018 | Normal | 96.62% | 92.59% | 96.62% | 94.56% |
| CSE-CIC-IDS2018 | Attack | 99.81% | 99.91% | 99.81% | 99.86% |
| NF-BoT-IoT-v2 | Normal | 99.94% | 99.89% | 99.94% | 99.91% |
| NF-BoT-IoT-v2 | Attack | 99.98% | 99.99% | 99.98% | 99.99% |
| IoT-23 | Normal | 100% | 99.98% | 100% | 99.99% |
| IoT-23 | Attack | 99.99% | 100% | 99.99% | 100% |
| Combined Dataset (Using Shared Autoencoder) | Normal | 100% | 84.15% | 100% | 91.39% |
| Combined Dataset (Using Shared Autoencoder) | Attack | 99.75% | 100% | 99.75% | 99.88% |
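
The F-Score column in the per-class table can be checked against its Precision and Recall columns, since the F-score is their harmonic mean. The short sketch below recomputes two of the tabulated rows; the function name is illustrative.

```python
def f_score(precision, recall):
    """Harmonic mean of precision and recall (both given as fractions)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# "Normal" on the combined dataset: precision 84.15%, recall 100%.
normal_combined = f_score(0.8415, 1.0)
print(f"{normal_combined:.2%}")  # 91.39%

# "Normal" on CSE-CIC-IDS2018: precision 92.59%, recall 96.62%.
normal_cse = f_score(0.9259, 0.9662)
print(f"{normal_cse:.2%}")  # 94.56%
```

Both values agree with the table. The first row also shows why a class with perfect recall can still have a modest F-score: the lower precision pulls the harmonic mean down.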

| Class | Accuracy | Precision | Recall | F-Score |

|---|---|---|---|---|

| Benign | 97.17% | 91.39% | 97.17% | 94.19% |

| DDoS attacks-LOIC-HTTP | 99.92% | 100% | 99.92% | 99.96% |

| DDOS attack-HOIC | 100% | 100% | 100% | 100% |

| DoS attacks-Hulk | 100% | 100% | 100% | 100% |

| Bot | 100% | 99.89% | 100% | 99.95% |

| Infiltration | 93.57% | 97.94% | 93.57% | 95.70% |

| SSH-Bruteforce | 100% | 100% | 100% | 100% |

| DoS attacks-GoldenEye | 99.97% | 100% | 99.97% | 99.99% |

| DoS attacks-Slowloris | 100% | 99.94% | 100% | 99.97% |

| DDOS attack-LOIC-UDP | 100% | 98.04% | 100% | 99.01% |

| Brute Force-Web | 96.08% | 84.48% | 96.08% | 89.91% |

| Brute Force-XSS | 40.74% | 100% | 40.74% | 57.89% |

| SQL Injection | 33.33% | 100% | 33.33% | 50% |

| DoS attacks-SlowHTTPTest | 33.33% | 50% | 33.33% | 40% |

| FTP-BruteForce | 66.67% | 50% | 66.67% | 57.14% |

| Class | Accuracy | Precision | Recall | F-Score |

|---|---|---|---|---|

| Benign | 99.94% | 99.94% | 99.94% | 99.94% |

| Reconnaissance | 95.85% | 98.40% | 95.85% | 97.11% |

| DDoS | 99.37% | 99.41% | 99.37% | 99.39% |

| DoS | 98.12% | 96.19% | 98.12% | 97.15% |

| Theft | 100% | 97.98% | 100% | 98.98% |

| Class | Accuracy | Precision | Recall | F-Score |

|---|---|---|---|---|

| Benign | 100% | 99.99% | 100% | 100% |

| PartOfAHorizontalPortScan | 99.84% | 99.99% | 99.84% | 99.92% |

| DDoS | 100% | 100% | 100% | 100% |

| Okiru | 100% | 100% | 100% | 100% |

| C&C-HeartBeat | 100% | 100% | 100% | 100% |

| C&C | 99.81% | 99.90% | 99.81% | 99.85% |

| Attack | 99.69% | 100% | 99.69% | 99.85% |

| C&C-PartOfAHorizontalPortScan | 100% | 62.79% | 100% | 77.14% |

| Class | Accuracy | Precision | Recall | F-Score |

|---|---|---|---|---|

| Benign | 92.18% | 93.17% | 92.18% | 92.67% |

| DDoS attacks-LOIC-HTTP | 99.90% | 100% | 99.90% | 99.95% |

| DDOS attack-HOIC | 100% | 100% | 100% | 100% |

| DoS attacks-Hulk | 100% | 100% | 100% | 100% |

| Bot | 100% | 99.92% | 100% | 99.96% |

| Infiltration | 100% | 99.73% | 100% | 99.86% |

| SSH-Bruteforce | 100% | 100% | 100% | 100% |

| DoS attacks-GoldenEye | 100% | 100% | 100% | 100% |

| DoS attacks-Slowloris | 100% | 100% | 100% | 100% |

| DDOS attack-LOIC-UDP | 99.08% | 97.73% | 99.08% | 98.40% |

| Brute Force-Web | 88.89% | 85.71% | 88.89% | 87.27% |

| Brute Force-XSS | 39.13% | 100% | 39.13% | 56.25% |

| SQL Injection | 100% | 10% | 100% | 18.18% |

| DoS attacks-SlowHTTPTest | 25% | 50% | 25% | 33.33% |

| FTP-BruteForce | 75% | 50% | 75% | 60% |

| Reconnaissance | 99.98% | 99.88% | 99.98% | 99.93% |

| DDoS | 99.58% | 99.72% | 99.58% | 99.65% |

| DoS | 99.42% | 99.21% | 99.42% | 99.31% |

| Theft | 100% | 100% | 100% | 100% |

| PartOfAHorizontalPortScan | 84.80% | 82.81% | 84.80% | 83.79% |

| Okiru | 100% | 100% | 100% | 100% |

| C&C-HeartBeat | 100% | 100% | 100% | 100% |

| C&C | 99.89% | 99.89% | 99.89% | 99.89% |

| Attack | 100% | 100% | 100% | 100% |

| C&C-PartOfAHorizontalPortScan | 100% | 100% | 100% | 100% |
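
In the per-class tables the Accuracy column equals the Recall column, so the overall accuracy should be recoverable as the support-weighted mean of the per-class recalls. The sketch below does this for the 25-class table above, assuming the Test column of the train/test split table gives the per-class support used in that evaluation; the recalls are rounded to two decimals, so the result is approximate.

```python
# (class, test samples, recall in %) taken from the split and per-class tables.
per_class = [
    ("Benign", 844, 92.18),
    ("DDoS attacks-LOIC-HTTP", 5029, 99.90),
    ("DDOS attack-HOIC", 4050, 100.0),
    ("DoS attacks-Hulk", 4207, 100.0),
    ("Bot", 3944, 100.0),
    ("Infiltration", 726, 100.0),
    ("SSH-Bruteforce", 2388, 100.0),
    ("DoS attacks-GoldenEye", 2045, 100.0),
    ("DoS attacks-Slowloris", 907, 100.0),
    ("DDOS attack-LOIC-UDP", 217, 99.08),
    ("Brute Force-Web", 54, 88.89),
    ("Brute Force-XSS", 23, 39.13),
    ("SQL Injection", 1, 100.0),
    ("DoS attacks-SlowHTTPTest", 4, 25.0),
    ("FTP-BruteForce", 4, 75.0),
    ("Reconnaissance", 4847, 99.98),
    ("DDoS", 20284, 99.58),
    ("DoS", 10860, 99.42),
    ("Theft", 228, 100.0),
    ("PartOfAHorizontalPortScan", 375, 84.80),
    ("Okiru", 2132, 100.0),
    ("C&C-HeartBeat", 1137, 100.0),
    ("C&C", 904, 99.89),
    ("Attack", 267, 100.0),
    ("C&C-PartOfAHorizontalPortScan", 17, 100.0),
]

total = sum(support for _, support, _ in per_class)
# Support-weighted mean recall, which equals overall accuracy.
overall = sum(support * recall for _, support, recall in per_class) / total

print(total)              # 65494
print(f"{overall:.2f}%")  # close to the 99.54% reported for the combined dataset
```

The weighting also makes clear why classes with only a handful of test samples (SQL Injection, SlowHTTPTest, FTP-BruteForce) barely move the headline accuracy despite their low recall or precision.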

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Kamal, H.; Mashaly, M. Shared Autoencoder-Based Unified Intrusion Detection Across Heterogeneous Datasets for Binary and Multi-Class Classification Using a Hybrid CNN–DNN Model. Mach. Learn. Knowl. Extr. 2026, 8, 53. https://doi.org/10.3390/make8020053