Perceiving AI as an Epistemic Authority or Algority: A User Study on the Human Attribution of Authority to AI

Abstract

1. Introduction

- RQ1 (predispositions and algority). How are literature-derived predispositions, specifically trust in automation and beliefs about automation performance, associated with respondents’ algority-related responses across scenarios (e.g., expectation that AI makes fewer errors; acceptance of replacement; preference for AI judgment; deference to AI recommendations)?

- RQ2 (moral–attitudinal correlates in sensitive contexts). How are authority-relevant moral attitudes associated with algority-related responses in ethically sensitive scenarios (e.g., criminal judgment), and does this association differ from low-stakes or everyday contexts?

- RQ3 (scenario dependence). To what extent do the observed associations between predispositions (trust in automation; beliefs about automation performance; authority-relevant moral attitudes) and algority-related responses differ between low-stakes scenarios (e.g., route planning) and high-stakes scenarios (e.g., criminal justice, job interviewing, creditworthiness)?

2. Related Work: Definitions, Boundaries, and Positions

2.1. From Algorithmic Authority to Algority

2.2. Distinguishing Human and Algorithmic Authority

2.3. Agency, Mediation, and Scope: The Non-Agentiality Stance

2.4. Macro-Level Accounts and Micro-Level Mechanisms

3. Methods

3.1. User Study

3.2. Measures

3.2.1. Literature-Derived Constructs

3.2.2. Ad Hoc Constructs

4. Results

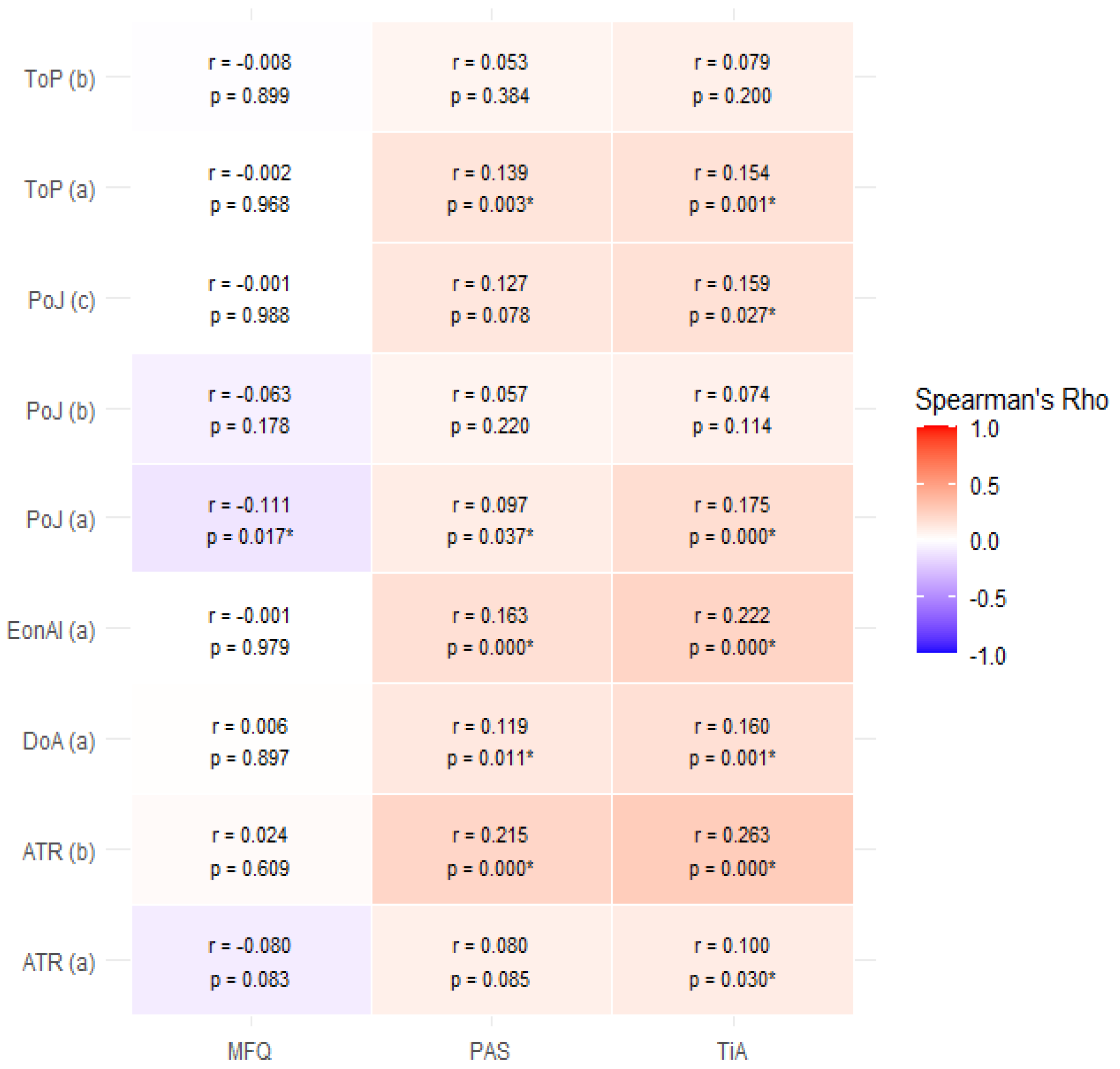

4.1. Correlations

4.2. Non-Parametric Tests

4.2.1. Trust in Automation Scale (TiA)

4.2.2. Perfect Automation Schema (PAS)

4.2.3. Moral Foundations Questionnaire (MFQ) and Gender

4.2.4. Decision-Making Approaches

5. Discussion

5.1. Is Expert Judgment Being Replaced by AI as Epistemic Authority?

5.2. The Importance of Operational Domain and Task Nature in Granting Epistemic Authority to AI

5.3. The Role of Individuals’ Moral Foundations in Acknowledging Authority in AI

5.4. Gender and Epistemic Authority of AI

6. Limitations and Further Research

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Grimmelikhuijsen, S. Explaining why the computer says no: Algorithmic transparency affects the perceived trustworthiness of automated decision-making. Public Adm. Rev. 2023, 83, 241–262.

- Rhoen, M.; Feng, Q.Y. Why the ‘Computer says no’: Illustrating big data’s discrimination risk through complex systems science. Int. Data Priv. Law 2018, 8, 140–159.

- Tingle, J. The computer says no: AI, health law, ethics and patient safety. Br. J. Nurs. 2021, 30, 870–871.

- Vik, P. ‘The computer says no’: The demise of the traditional bank manager and the depersonalisation of British banking, 1960–2010. Bus. Hist. 2017, 59, 231–249.

- Wihlborg, E.; Larsson, H.; Hedström, K. “The Computer Says No!”–A Case Study on Automated Decision-Making in Public Authorities. In Proceedings of the 2016 49th Hawaii International Conference on System Sciences (HICSS), Koloa, HI, USA, 5–8 January 2016; pp. 2903–2912.

- Sundin, O.; Haider, J.; Andersson, C.; Carlsson, H.; Kjellberg, S. The search-ification of everyday life and the mundane-ification of search. J. Doc. 2017, 73, 224–243.

- Shirky, C. A Speculative Post on the Idea of Algorithmic Authority. 2017. Available online: https://www.bibsonomy.org/url/a4f71c8404afbb43b64a2c03196fe5e5 (accessed on 24 January 2026).

- Forte, A. The new information literate: Open collaboration and information production in schools. Int. J. Comput.-Support. Collab. Learn. 2015, 10, 35–51.

- Lustig, C.; Nardi, B. Algorithmic authority: The case of Bitcoin. In Proceedings of the 2015 48th Hawaii International Conference on System Sciences, Kauai, HI, USA, 5–8 January 2015; pp. 743–752.

- Lustig, C.; Pine, K.; Nardi, B.; Irani, L.; Lee, M.K.; Nafus, D.; Sandvig, C. Algorithmic authority: The ethics, politics, and economics of algorithms that interpret, decide, and manage. In Proceedings of the 2016 CHI Conference Extended Abstracts on Human Factors in Computing Systems, San Jose, CA, USA, 7–12 May 2016; pp. 1057–1062.

- Danaher, J. The threat of algocracy: Reality, resistance and accommodation. Philos. Technol. 2016, 29, 245–268.

- Ståhl, T.; Sormunen, E.; Mäkinen, M. Epistemic beliefs and internet reliance—Is algorithmic authority part of the picture? Inf. Learn. Sci. 2021, 122, 726–748.

- Cabitza, F.; Campagner, A.; Simone, C. The need to move away from agential-AI: Empirical investigations, useful concepts and open issues. Int. J. Hum.-Comput. Stud. 2021, 155, 102696.

- Schwarz, O. Sociological Theory for Digital Society: The Codes That Bind Us Together; John Wiley & Sons: Hoboken, NJ, USA, 2021.

- Burrell, J.; Fourcade, M. The Society of Algorithms. Annu. Rev. Sociol. 2021, 47, 213–237.

- Beer, D. Power Through the Algorithm? Participatory Web Cultures and the Technological Unconscious. New Media Soc. 2009, 11, 985–1002.

- Noble, S.U. Algorithms of Oppression; New York University Press: New York, NY, USA, 2018.

- Cheney-Lippold, J. We Are Data; New York University Press: New York, NY, USA, 2017.

- Araujo, T.; Helberger, N.; Kruikemeier, S.; De Vreese, C.H. In AI we trust? Perceptions about automated decision-making by artificial intelligence. AI Soc. 2020, 35, 611–623.

- Glikson, E.; Woolley, A.W. Human trust in artificial intelligence: Review of empirical research. Acad. Manag. Ann. 2020, 14, 627–660.

- Kizilcec, R.F. How much information? Effects of transparency on trust in an algorithmic interface. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems, San Jose, CA, USA, 7–12 May 2016; pp. 2390–2395.

- Lee, M.K. Understanding perception of algorithmic decisions: Fairness, trust, and emotion in response to algorithmic management. Big Data Soc. 2018, 5, 2053951718756684.

- Wiegmann, D.A.; Rich, A.; Zhang, H. Automated diagnostic aids: The effects of aid reliability on users’ trust and reliance. Theor. Issues Ergon. Sci. 2001, 2, 352–367.

- Zhang, H.; Hook, J.N.; Johnson, K.A. Moral Foundations Questionnaire. In Encyclopedia of Personality and Individual Differences; Zeigler-Hill, V., Shackelford, T.K., Eds.; Springer International Publishing: Cham, Switzerland, 2016; pp. 1–3.

- Kohn, S.C.; De Visser, E.J.; Wiese, E.; Lee, Y.C.; Shaw, T.H. Measurement of trust in automation: A narrative review and reference guide. Front. Psychol. 2021, 12, 604977.

- Merritt, S.M.; Unnerstall, J.L.; Lee, D.; Huber, K. Measuring individual differences in the perfect automation schema. Hum. Factors 2015, 57, 740–753.

- Bokros, S.E. A deference model of epistemic authority. Synthese 2021, 198, 12041–12069.

- Hauswald, R. Artificial epistemic authorities. Soc. Epistemol. 2025, 39, 716–725.

- Lange, B. Epistemic Deference to AI. In Proceedings of the International Conference on Bridging the Gap Between AI and Reality; Springer Nature: Cham, Switzerland, 2024; pp. 174–186.

- Yang, S.; Ma, R. Classifying Epistemic Relationships in Human-AI Interaction: An Exploratory Approach. arXiv 2025, arXiv:2508.03673.

- Renier, L.A.; Mast, M.S.; Bekbergenova, A. To err is human, not algorithmic—Robust reactions to erring algorithms. Comput. Hum. Behav. 2021, 124, 106879.

- Rubbi, I.; Lupo, R.; Lezzi, A.; Cremonini, V.; Carvello, M.; Caricato, M.; Conte, L.; Antonazzo, M.; Caldararo, C.; Botti, S.; et al. The social and professional image of the nurse: Results of an online snowball sampling survey among the general population in the post-pandemic period. Nurs. Rep. 2023, 13, 1291–1303.

- Kennedy-Shaffer, L.; Qiu, X.; Hanage, W. Snowball Sampling Study Design for Serosurveys Early in Disease Outbreaks. Am. J. Epidemiol. 2021, 190, 1918–1927.

- Zickar, M.; Keith, M. Innovations in Sampling: Improving the Appropriateness and Quality of Samples in Organizational Research. Annu. Rev. Organ. Psychol. Organ. Behav. 2022, 10, 315–337.

- Graham, J.; Haidt, J.; Nosek, B.A. Liberals and conservatives rely on different sets of moral foundations. J. Personal. Soc. Psychol. 2009, 96, 1029.

- Harper, C.A.; Rhodes, D. Reanalysing the factor structure of the moral foundations questionnaire. Br. J. Soc. Psychol. 2021, 60, 1303–1329.

- Körber, M. Theoretical considerations and development of a questionnaire to measure trust in automation. In Proceedings of the 20th Congress of the International Ergonomics Association (IEA 2018) Volume VI: Transport Ergonomics and Human Factors (TEHF); Aerospace Human Factors and Ergonomics 20; Springer: Cham, Switzerland, 2019; pp. 13–30.

- Taherdoost, H. Validity and reliability of the research instrument; how to test the validation of a questionnaire/survey in a research. Int. J. Acad. Res. Manag. (IJARM) 2016, 5, 28–36.

- Ursachi, G.; Horodnic, I.A.; Zait, A. How reliable are measurement scales? External factors with indirect influence on reliability estimators. Procedia Econ. Financ. 2015, 20, 679–686.

- Hair, J.F.; Ringle, C.M.; Gudergan, S.P.; Fischer, A.; Nitzl, C.; Menictas, C. Partial least squares structural equation modeling-based discrete choice modeling: An illustration in modeling retailer choice. Bus. Res. 2019, 12, 115–142.

- Okoye, K.; Hosseini, S. Correlation tests in R: Pearson cor, kendall’s tau, and spearman’s rho. In R Programming: Statistical Data Analysis in Research; Springer: Berlin/Heidelberg, Germany, 2024; pp. 247–277.

- McKight, P.E.; Najab, J. Kruskal-wallis test. In The Corsini Encyclopedia of Psychology; John Wiley & Sons, Inc.: Hoboken, NJ, USA, 2010; Volume 1.

- Fang, Z.; Du, R.; Cui, X. Uniform approximation is more appropriate for wilcoxon rank-sum test in gene set analysis. PLoS ONE 2012, 7, e31505.

- Tomczak, M.; Tomczak, E. The need to report effect size estimates revisited. An overview of some recommended measures of effect size. Trends Sport Sci. 2014, 1, 19–25.

- Keren, A. Expert Authority and Its Assessment. Soc. Epistemol. 2025, 39, 612–625.

- Croce, M.; Baghramian, M. Experts—Part II: The Sources of Epistemic Authority. Philos. Compass 2024, 19, e70005.

- Anderson, A.A.; Scheufele, D.A.; Brossard, D.; Corley, E.A. The role of media and deference to scientific authority in cultivating trust in sources of information about emerging technologies. Int. J. Public Opin. Res. 2012, 24, 225–237.

- Schroder-Pfeifer, P.; Talia, A.; Volkert, J.; Taubner, S. Developing an assessment of epistemic trust: A research protocol. Res. Psychother. Psychopathol. Process Outcome 2018, 21, 330.

- Howell, E.L.; Wirz, C.D.; Scheufele, D.A.; Brossard, D.; Xenos, M.A. Deference and decision-making in science and society: How deference to scientific authority goes beyond confidence in science and scientists to become authoritarianism. Public Underst. Sci. 2020, 29, 800–818.

- Kapania, S.; Siy, O.; Clapper, G.; Sp, A.M.; Sambasivan, N. “Because AI is 100% right and safe”: User attitudes and sources of AI authority in India. In Proceedings of the 2022 CHI Conference on Human Factors in Computing Systems, New Orleans, LA, USA, 29 April–5 May 2022; pp. 1–18.

- Ferrario, A.; Facchini, A.; Termine, A. Experts or Authorities? The Strange Case of the Presumed Epistemic Superiority of Artificial Intelligence Systems. Minds Mach. 2024, 34, 30.

- Grote, T.; Berens, P. On the ethics of algorithmic decision-making in healthcare. J. Med. Ethics 2020, 46, 205–211.

- Parasuraman, R.; Riley, V. Humans and automation: Use, misuse, disuse, abuse. Hum. Factors 1997, 39, 230–253.

- Moes, M.; Knox, K.; Pierce, L.; Beck, H. Should I decide or let the machine decide for me. In Proceedings of the Poster Presented at the Meeting of the Southeastern Psychological Association, Savannah, GA, USA, 18–21 March 1999.

- Schoenherr, J.R.; Thomson, R. When AI fails, who do we blame? Attributing responsibility in human–AI interactions. IEEE Trans. Technol. Soc. 2024, 5, 61–70.

- Wagner, A.R.; Borenstein, J.; Howard, A. Overtrust in the robotic age. Commun. ACM 2018, 61, 22–24.

- Siau, K.; Wang, W. Building trust in artificial intelligence, machine learning, and robotics. Cut. Bus. Technol. J. 2018, 31, 47.

- Yeomans, M.; Shah, A.; Mullainathan, S.; Kleinberg, J. Making sense of recommendations. J. Behav. Decis. Mak. 2019, 32, 403–414.

- Logg, J.M.; Minson, J.A.; Moore, D.A. Algorithm appreciation: People prefer algorithmic to human judgment. Organ. Behav. Hum. Decis. Processes 2019, 151, 90–103.

- Castelo, N.; Bos, M.W.; Lehmann, D.R. Task-dependent algorithm aversion. J. Mark. Res. 2019, 56, 809–825.

- Brailsford, J.; Vetere, F.; Velloso, E. Exploring the Association between Moral Foundations and Judgements of AI Behaviour. In Proceedings of the CHI Conference on Human Factors in Computing Systems, Honolulu, HI, USA, 11–16 May 2024; pp. 1–15.

- Maninger, T.; Shank, D.B. Perceptions of violations by artificial and human actors across moral foundations. Comput. Hum. Behav. Rep. 2022, 5, 100154.

- Hoff, K.A.; Bashir, M. Trust in Automation: Integrating Empirical Evidence on Factors That Influence Trust. Hum. Factors J. Hum. Factors Ergon. Soc. 2014, 57, 407–434.

- Kaufmann, E.; Chacon, A.; Kausel, E.E.; Herrera, N.; Reyes, T. Task-specific algorithm advice acceptance: A review and directions for future research. Data Inf. Manag. 2023, 7, 100040.

- Milella, F.; Natali, C.; Scantamburlo, T.; Campagner, A.; Cabitza, F. The impact of gender and personality in human-AI teaming: The case of collaborative question answering. In Proceedings of the IFIP Conference on Human-Computer Interaction, York, UK, 28 August–1 September 2023; Springer: Berlin/Heidelberg, Germany, 2023; pp. 329–349.

- Pasquale, F. The Black Box Society: The Secret Algorithms That Control Money and Information; Harvard University Press: Cambridge, MA, USA, 2015.

- Shin, D.; Park, Y.J. Role of fairness, accountability, and transparency in algorithmic affordance. Comput. Hum. Behav. 2019, 98, 277–284.

- Kim, T.; Molina, M.D.; Rheu, M.; Zhan, E.S.; Peng, W. One AI does not fit all: A cluster analysis of the laypeople’s perception of AI roles. In Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems, Hamburg, Germany, 23–28 April 2023; pp. 1–20.

- Ding, X.; Xing, C. Trust in AI vs. human doctors: The roles of subjective understanding, perceived epistemic authority and social proof. Acta Psychol. 2025, 261, 105945.

- Alvarado, R. AI as an epistemic technology. Sci. Eng. Ethics 2023, 29, 32.

- Facchini, A.; Fregosi, C.; Natali, C.; Termine, A.; Wilson, B. Algorithmic Authority & AI Influence in Decision Settings: Theories and Implications for Design. In Proceedings of the 12th International Conference on Human-Agent Interaction, Swansea, UK, 24–27 November 2024; pp. 472–474.

| Concept | Definition |
|---|---|
| Algorithmic authority | The power of algorithms to manage human action and influence what information is accessible to users, stressing that it does not reside solely in code but emerges from a diversity of sociotechnical actors [10]. |
| Epistemic authority | The attribution of epistemic legitimacy to an AI system’s outputs as convincing assertions about what is true and, consequently, what it is better to do. |
| Algority | The propensity to confer such authority on algorithms in contexts where one might otherwise defer to human experts. We treat algority as a relational phenomenon grounded in human conferral of legitimacy rather than as an instance of algorithmic agency, a boundary we have previously discussed as a non-agentiality stance [13]. |
| Epistemic reliance | Epistemic reliance is conferred not on an identifiable individual but on a process: an unmanaged computational procedure that derives value from heterogeneous sources [6,7,8]. |

| Construct | Item or response option |
|---|---|
| Moral Foundations Questionnaire (MFQ) | (1) Whether or not someone showed a lack of respect for authority |
| | (2) Whether or not someone conformed to the traditions of society |
| | (3) Whether or not an action caused chaos or disorder |
| | (4) Respect for authority is something all children need to learn |
| | (5) Men and women each have different roles to play in society |
| | (6) If I were a soldier and disagreed with my commanding officer’s orders, I would obey anyway because that is my duty |
| Trust in Automation (TiA) | (1) One should be careful with unfamiliar automated systems * |
| | (2) I rather trust a system than I mistrust it |
| | (3) Automated systems generally work well |
| | (4) I trust the system |
| | (5) I can rely on the system |
| Perfect Automation Schema (PAS) | (1) Automated systems have 100% perfect performance |
| | (2) Automated systems rarely make mistakes |
| | (3) Automated systems can always be counted on to make accurate decisions |
| | (4) Automated systems make more mistakes than people realize * |
| | (5) People generally believe automated systems work better than they do * |
| | (6) People have no reason to question the decisions automated systems make |
| | (7) If an automated system makes an error, then it is broken |
| | (8) If an automated system makes a mistake, then it is completely useless |
| | (9) Correctly functioning automated systems are perfectly reliable |
| Expectation on AI (EonAI) | (a) In these areas, compared with renowned experts, an AI software or machine usually makes: |
| | (1) Far fewer errors |
| | (2) Slightly fewer errors |
| | (3) About the same number of errors |
| | (4) Slightly more errors |
| | (5) Many more errors |
| | (6) Can’t make these decisions yet |
| Attitude towards replacement (ATR) | (a) If, in a given high-risk task, an AI system consistently shows higher accuracy than an average human expert: |
| | (1) It should support humans, but they must always have the last word and can disagree |
| | (2) It should support humans, who always have the last word and can disagree only if they bring solid evidence that they are right and the machine is wrong |
| | (3) It should replace humans in these tasks |
| | (4) This can never happen |
| | (b) Nobel laureate Kahneman once said that: “Whenever we can replace human judgment with an algorithm, we should at least consider it. [In fact] we should replace humans with algorithms whenever it is possible to”: |
| | (1) Strongly disagree |
| | (2) Moderately disagree |
| | (3) Slightly disagree |
| | (4) Slightly agree |
| | (5) Moderately agree |
| | (6) Strongly agree |
| Trust on Prediction (ToP) | (a) If a dating app calculated a 98.5 percent affinity between you and a potential partner, how likely do you think you would be to have a long and rewarding love affair with that person? |
| | (1) Almost certainly |
| | (2) 50% |
| | (3) About 1 in 20 (5%) |
| | (4) Hardly possible |
| | (b) If a computer program considered your exam grades and your answers to a long psychometric questionnaire, and offered you a job position with a 97.8 percent probability that it would be the perfect job for you, what is the likelihood that you might actually feel fulfilled in doing that job? |
| | (1) Almost certainly |
| | (2) 50% |
| | (3) About 1 in 20 (5%) |
| | (4) Hardly possible |
| Preference on Judgment (PoJ) | (a) You are falsely accused of a crime you did not commit, and you are assisted by a very good lawyer. You would rather be tried by: |
| | (1) A judge |
| | (2) An AI that calculates the probability that you are actually innocent or guilty |
| | (3) A popular jury |
| | (4) A popular jury including an AI that calculates the probability that you are actually innocent or guilty |
| | (5) A jury of judges |
| | (6) A jury of judges using an AI that calculates the probability that you are actually innocent or guilty |
| | (b) For a very important job interview for a position you believe you deserve and that would change your life for the better, you would rather be judged by: |
| | (1) A human being |
| | (2) An artificial intelligence |
| | (3) A human supported by an artificial intelligence |
| | (4) A human committee |
| | (5) A human committee supported by an AI |
| | (c) If you were to apply for a mortgage, and for that purpose you still had to fill out a lengthy questionnaire, knowing that the decision is final even though it is your right to know the reasons: |
| | (1) A human expert |
| | (2) An AI |
| | (3) A committee |
| | (4) A committee of which an AI is also a member |
| Deference for Action (DoA) | (a) Imagine the following situation: you have to drive to a friend who lives in an area you do not know. Getting into the car, you turn on the navigation system and enter your friend’s correct address: |
| | (1) You turn right and follow your friend’s directions because… she will know exactly how to get to her house! |
| | (2) You turn left and follow the navigator’s directions because it has just been updated and may want you to avoid a busy area or a temporarily closed road. |
| | (3) You are confused, you go around the traffic circle a couple of times trying to figure out what to do, but eventually you listen to the navigator, which will still take you to your destination |
| | (4) You are confused, you go around the traffic circle a couple of times trying to figure out what to do, but in the end you do what the friend told you, trusting that the navigator will recalculate the route in a few seconds… |

| Item | W | r | Z-Score | p-Value (1) | p-Value (2) |
|---|---|---|---|---|---|
| EonAI | 33,622.50 | 0.19 | 4.39 | <0.001 * | <0.001 * |
| ATR (a) | 29,522.5 | 0.07 | 1.70 | 0.090 | 0.045 * |
| ATR (b) | 32,402 | 0.19 | 4.43 | <0.001 * | <0.001 * |
| ToP (b) | 9618 | 0.06 | 1.39 | 0.164 | 0.082 |
| PoJ (c) | 5160 | 0.07 | 1.60 | 0.109 | 0.055 |
| ToP (a) | 30,410 | 0.13 | 3.07 | 0.002 * | 0.001 * |
| PoJ (a) | 30,300.50 | 0.13 | 3.13 | 0.002 * | <0.001 * |
| PoJ (b) | 29,169.50 | 0.09 | 2.12 | 0.034 * | 0.017 * |
| DoA | 29,135.5 | 0.11 | 2.62 | 0.009 * | 0.004 * |

| Item | W | r | Z-Score | p-Value (1) | p-Value (2) |
|---|---|---|---|---|---|
| EonAI | 30,439.5 | 0.09 | 2.00 | 0.046 * | 0.023 * |
| ATR (a) | 27,625.50 | 0.00 | 0.08 | 0.939 | 0.470 |
| ATR (b) | 31,282 | 0.15 | 3.46 | <0.001 * | <0.001 * |
| ToP (b) | 9360 | 0.04 | 0.85 | 0.396 | 0.198 |
| PoJ (c) | 5255 | 0.07 | 1.60 | 0.109 | 0.055 |
| ToP (a) | 30,350.50 | 0.12 | 2.89 | 0.004 * | 0.002 * |
| PoJ (a) | 29,065 | 0.08 | 1.96 | 0.050 * | 0.025 * |
| PoJ (b) | 27,904 | 0.04 | 0.97 | 0.334 | 0.167 |
| DoA | 27,786.50 | 0.06 | 1.47 | 0.141 | 0.070 |

| Item | W | r | Z-Score | p-Value (1) | p-Value (2) |
|---|---|---|---|---|---|
| EonAI | 27,799 | 0.00 | 0.05 | 0.960 | 0.480 |
| ATR (a) | 25,457.50 | 0.07 | −1.69 | 0.090 | 0.955 |
| ATR (b) | 27,668 | 0.03 | 0.66 | 0.511 | 0.255 |
| ToP (b) | 8585.50 | 0.03 | −0.71 | 0.478 | 0.762 |
| PoJ (c) | 4541.50 | 0.01 | −0.33 | 0.746 | 0.628 |
| ToP (a) | 26,570.5 | 0.01 | −0.18 | 0.859 | 0.571 |
| PoJ (a) | 24,224.50 | 0.09 | −2.14 | 0.033 * | 0.984 |
| PoJ (b) | 25,961 | 0.03 | −0.67 | 0.502 | 0.749 |
| DoA | 27,064 | 0.04 | 0.83 | 0.408 | 0.204 |

| Item | W | r | Z-Score | p-Value (1) | p-Value (2) |
|---|---|---|---|---|---|
| EonAI | 29,220 | 0.04 | 0.98 | 0.330 | 0.165 |
| ATR (a) | 28,889.5 | 0.04 | 0.91 | 0.364 | 0.184 |
| ATR (b) | 23,385.5 | 0.11 | −2.65 | 0.008 * | 0.996 |
| ToP (b) | 9890 | 0.08 | 1.92 | 0.055 | 0.027 * |
| PoJ (c) | 4677.50 | 0.01 | 0.33 | 0.741 | 0.371 |
| ToP (a) | 27,295 | 0.01 | 0.30 | 0.763 | 0.381 |
| PoJ (a) | 27,111.50 | 0.01 | 0.17 | 0.869 | 0.434 |
| PoJ (b) | 26,604 | 0.01 | −0.25 | 0.806 | 0.598 |
| DoA | 26,604 | 0.02 | 0.48 | 0.635 | 0.317 |
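As a reading aid for the rank-test tables above, the following Python sketch shows how the Z-Score, r, and the two p-value columns appear to relate under a normal approximation. It is a minimal sketch under stated assumptions, not the authors' analysis code: the sample size of 540 is not given in this excerpt and is assumed only because it reproduces the reported r values from the reported Z-scores, and reading p-Value (1) as two-sided and p-Value (2) as one-sided (upper tail) is likewise inferred from the numbers. All function names are illustrative.

```python
import math

# Assumption: N is not stated in this excerpt; 540 is chosen because it
# reproduces the reported r values from the reported Z-scores.
N_ASSUMED = 540


def two_sided_p(z: float) -> float:
    """Two-sided p-value for a standard normal Z-score."""
    return math.erfc(abs(z) / math.sqrt(2.0))


def upper_tail_p(z: float) -> float:
    """One-sided p-value P(Z' >= z) for a standard normal Z-score."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))


def effect_size_r(z: float, n: int) -> float:
    """Effect size r = |Z| / sqrt(N)."""
    return abs(z) / math.sqrt(n)


# Z-scores copied from the ATR (a) rows of the first and third table.
for z in (1.70, -1.69):
    print(
        f"Z={z:+.2f}  p_two_sided={two_sided_p(z):.3f}  "
        f"p_upper={upper_tail_p(z):.3f}  r={effect_size_r(z, N_ASSUMED):.2f}"
    )
```

For Z = 1.70 this yields roughly 0.089 (two-sided) and 0.045 (upper tail), close to the reported 0.090 and 0.045; small differences are expected because the tabulated Z-scores are rounded.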

| Comparison | Test statistic | p-Value | Effect size | PMW Test | p-adj 1 |
|---|---|---|---|---|---|
| TiA-PoJ (a) | 14.142 | 0.0008492 * | 0.0226 | H-H | 0.0016 * |
| PAS-PoJ (a) | 4.4416 | 0.1085 | 0.00455 | - | - |
| MFQ-PoJ (a) | 7.9952 | 0.01836 * | 0.0112 | - | - |
| TiA-PoJ (b) | 2.5591 | 0.2782 | 0.00104 | - | - |
| PAS-PoJ (b) | 6.5179 | 0.03843 * | 0.00841 | - | - |
| MFQ-PoJ (b) | 2.9134 | 0.233 | 0.00170 | - | - |
| TiA-PoJ (c) | 5.6236 | 0.0601 | 0.00675 | - | - |
| PAS-PoJ (c) | 3.3988 | 0.1828 | 0.00260 | - | - |
| MFQ-PoJ (c) | 0.15968 | 0.9233 | 0.00 | - | - |
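The numeric columns of the table above can be cross-checked with a short Python sketch. It assumes, without confirmation from this excerpt, that the first numeric column is a Kruskal-Wallis H statistic compared against a chi-squared distribution with 2 degrees of freedom (three groups), and that the small fourth-column values are eta-squared effect sizes computed as (H − k + 1) / (n − k) with n = 540. Both assumptions are inferred from the numbers alone; the function names are illustrative.

```python
import math


def chi2_sf_two_df(h: float) -> float:
    """Upper-tail probability of a chi-squared variable with 2 degrees of
    freedom, which has the closed form exp(-h / 2)."""
    return math.exp(-h / 2.0)


def eta_squared_h(h: float, k: int, n: int) -> float:
    """Eta-squared effect size based on the Kruskal-Wallis H statistic:
    (H - k + 1) / (n - k), with k groups and n observations."""
    return (h - k + 1) / (n - k)


# Assumptions (not stated in this excerpt): three groups, 540 respondents.
K_ASSUMED, N_ASSUMED = 3, 540

# H values copied from the PoJ (a) rows of the table above.
for label, h in [("TiA-PoJ (a)", 14.142), ("PAS-PoJ (a)", 4.4416), ("MFQ-PoJ (a)", 7.9952)]:
    p = chi2_sf_two_df(h)
    eta2 = eta_squared_h(h, K_ASSUMED, N_ASSUMED)
    print(f"{label}: p={p:.4g}  eta_squared={eta2:.4f}")
```

Under these assumptions the sketch reproduces the tabulated p-values and effect sizes for the rows shown to within rounding, which is the only basis for the interpretation offered here.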


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Milella, F.; Cabitza, F. Perceiving AI as an Epistemic Authority or Algority: A User Study on the Human Attribution of Authority to AI. Mach. Learn. Knowl. Extr. 2026, 8, 36. https://doi.org/10.3390/make8020036
