MBS: A Modality-Balanced Strategy for Multimodal Sample Selection

Abstract

1. Introduction

- (1) We introduce the concept and computation of the Modality Balance Score (MBS), which quantifies the contribution of multimodal samples to model convergence during training;

- (2) Based on MBS, we propose a sample selection strategy and demonstrate that it can identify effective samples at earlier stages of training while remaining robust under larger selection ratios;

- (3) We provide extensive experimental validation, comparing our approach with two other representative sample selection strategies (EL2N and GraNd) on two multimodal datasets (CREMA-D and AVE). These results offer useful references for researchers with different preferences in choosing sample selection strategies.

2. Related Work

2.1. Incremental Learning in Resource-Constrained Environments

2.2. Data Selection Based on Quantified Sample Attributes

- (1) GraNd score:

- (2) EL2N score:
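As a point of reference, the two scores are commonly defined in the data-pruning literature as follows: EL2N is the L2 norm of the error vector (softmax output minus one-hot label), and GraNd is the L2 norm of the per-sample loss gradient. The sketch below illustrates both under simplifying assumptions that are not taken from this paper: a single checkpoint (no averaging over runs), and, for GraNd, a single linear softmax layer without bias, for which the gradient norm factorizes into the error norm times the feature norm. The function names are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def el2n_score(logits, label):
    """L2 norm of (softmax(logits) - one_hot(label)) for one sample."""
    probs = softmax(logits)
    squared = 0.0
    for k, p in enumerate(probs):
        target = 1.0 if k == label else 0.0
        squared += (p - target) ** 2
    return math.sqrt(squared)


def grand_score_linear(features, logits, label):
    """Gradient norm for a bias-free linear softmax layer (assumption).

    With logits = W @ features and cross-entropy loss, dL/dW is the outer
    product (p - y) features^T, whose Frobenius norm equals
    ||p - y||_2 * ||features||_2.
    """
    feature_norm = math.sqrt(sum(x * x for x in features))
    return el2n_score(logits, label) * feature_norm


# A confident correct prediction scores low; a confident wrong one scores high.
easy = el2n_score([4.0, 0.0, 0.0], label=0)
unsure = el2n_score([0.0, 0.0, 0.0], label=0)
hard = el2n_score([0.0, 4.0, 0.0], label=0)
print(easy < unsure < hard)
```

In practice both scores are usually averaged over several early-training checkpoints or random initializations; that step is omitted here for brevity.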

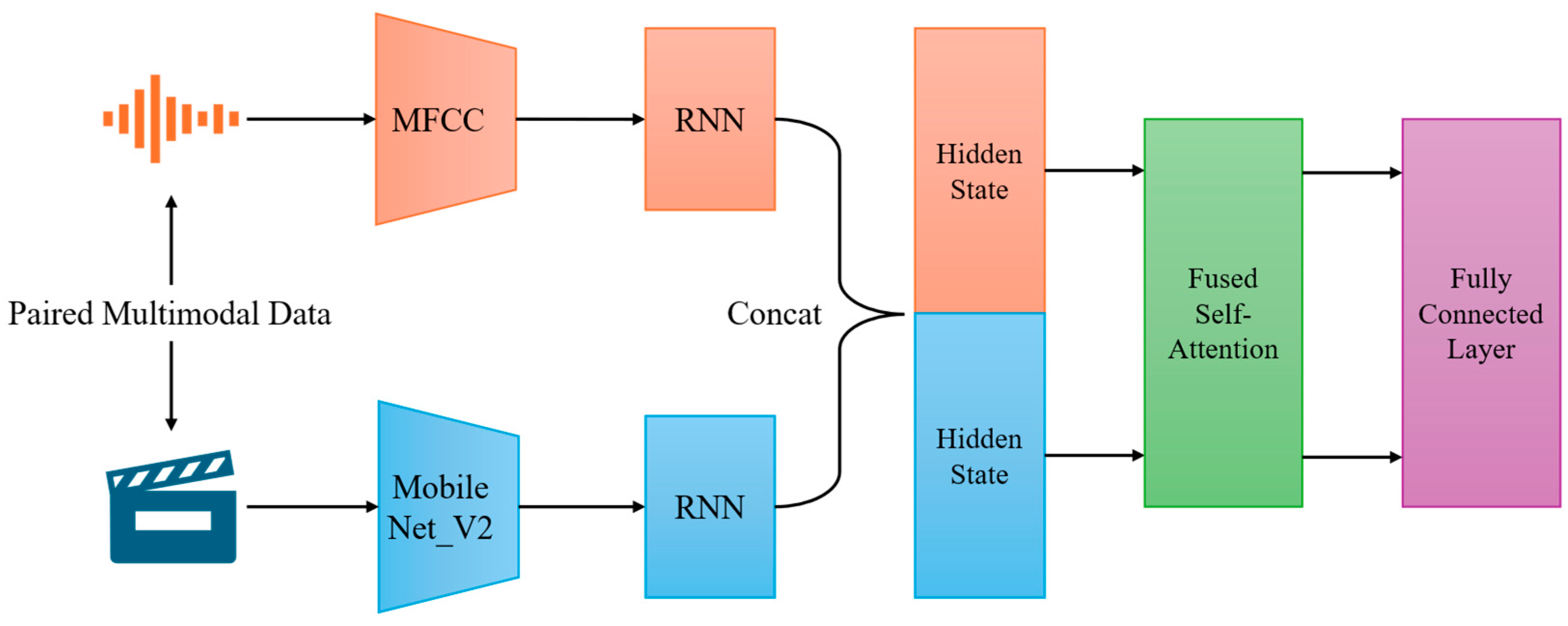

3. Basic Model

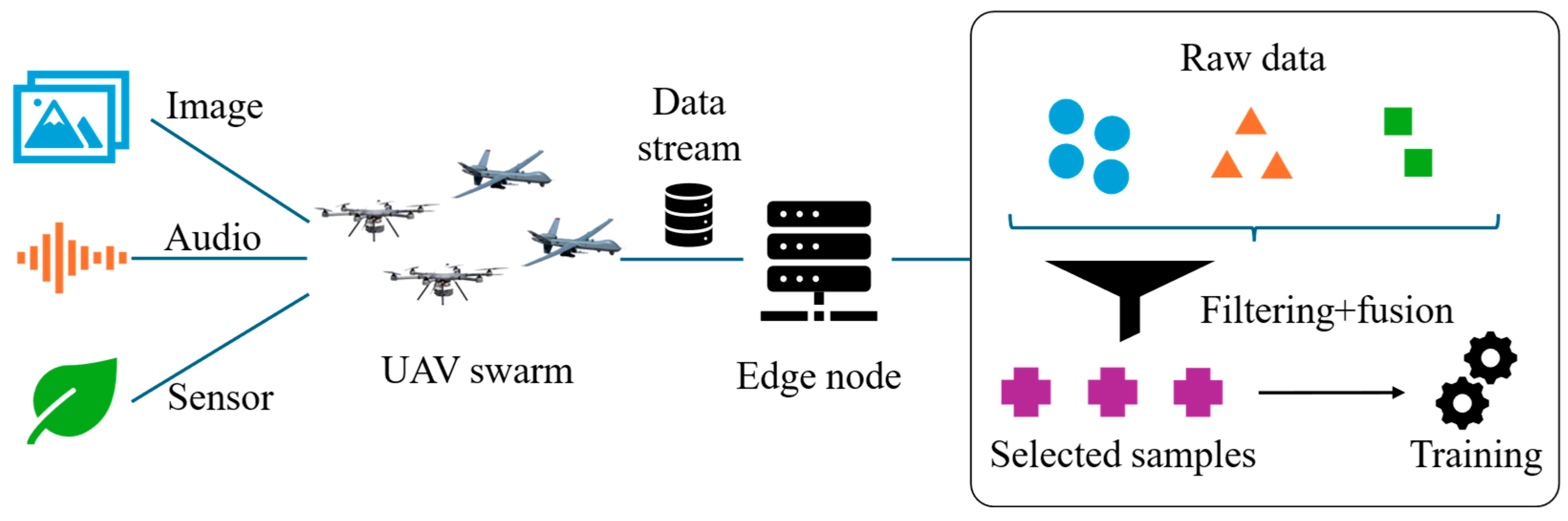

3.1. Dual-Modality Incremental Learning Paradigm

3.2. Optimization Objective

4. Main Method

4.1. Modality Balance Quantification: MBS

4.2. Multimodal Sample Selection Strategy: Based on MBS

| Algorithm 1: Multimodal Sample Selection based on MBS |
|---|
| Input: Batch of samples; Pretrained encoders; Strategy parameters; Selection ratio |
| Output: Filtered sample set |
| Steps: |
| 1: Initialize |
| 2: For each sample do |
| 3: |
| 4: |
| 5: = |
| 6: = |
| 7: = |
| 8: Append (, ) to |
| 9: End For |
| 10: Compute |
| 11: Initialize |
| 12: For each do |
| 13: If then |
| 14: Append to |
| 15: Else if then |
| 16: Append to |
| 17: End If |
| 18: End For |
| 19: Sort and by deviation in ASC |
| 20: |
| 21: Initialize = { } |
| 22: Add top K samples from to |
| 23: Add top K samples from to |
| 24: Return |
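The control flow of Algorithm 1 (score each sample, split the scored samples into two groups, sort each group by deviation in ascending order, then take the top K from each) can be sketched as follows. This is only an illustration under several assumptions that the listing above does not confirm: scores are taken as precomputed inputs rather than derived from the pretrained encoders, the reference value defaults to the batch mean, the two branch conditions are strict "above" and "below" comparisons, and K is half of the selection budget. All names are hypothetical.

```python
from statistics import mean


def select_balanced(scored_samples, selection_ratio, reference=None):
    """Pick the samples closest to a reference score from each side.

    scored_samples: list of (sample_id, score) pairs (scores precomputed;
    how MBS itself is computed is NOT reproduced here).
    selection_ratio: fraction of the batch to keep, in (0, 1].
    reference: split point; defaults to the batch mean (assumption).
    """
    if not 0.0 < selection_ratio <= 1.0:
        raise ValueError("selection_ratio must be in (0, 1]")
    if reference is None:
        reference = mean(score for _, score in scored_samples)

    # Split into two groups; samples exactly at the reference match
    # neither branch and are skipped (assumed strict conditions).
    above, below = [], []
    for sample_id, score in scored_samples:
        if score > reference:
            above.append((sample_id, score))
        elif score < reference:
            below.append((sample_id, score))

    # Sort each group by absolute deviation from the reference, ascending.
    def deviation(item):
        return abs(item[1] - reference)

    above.sort(key=deviation)
    below.sort(key=deviation)

    # Assumed budget split: half of the kept samples from each group.
    k = int(len(scored_samples) * selection_ratio / 2)
    return [sid for sid, _ in above[:k]] + [sid for sid, _ in below[:k]]


batch = [("a", 0.95), ("b", 0.70), ("c", 0.55), ("d", 0.50),
         ("e", 0.45), ("f", 0.30), ("g", 0.05), ("h", 0.60)]
print(select_balanced(batch, selection_ratio=0.5, reference=0.5))
# -> ['c', 'h', 'e', 'f']
```

If one group holds fewer than K samples, this sketch simply returns fewer samples overall; whether the original method rebalances in that case is not stated in the listing.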

4.3. Effectiveness

5. Evaluation

5.1. Experimental Setup

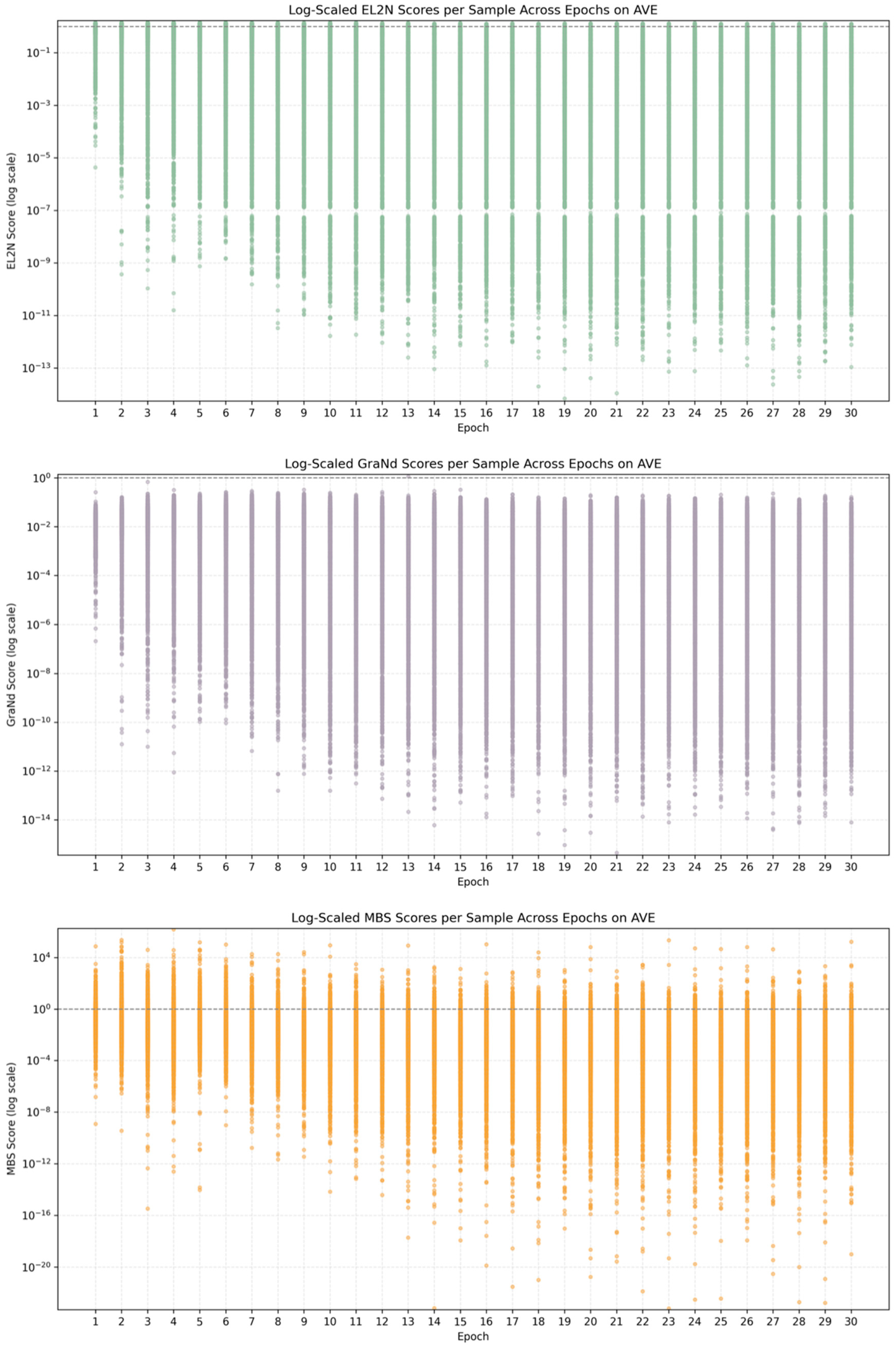

5.2. Experiment A: Multi-Attribute Observation of the Sample Set

5.3. Experiment B: Model Performance Under Different Selection Strategies

5.3.1. Model Performance on the CREMA-D Dataset

5.3.2. Model Performance on the AVE Dataset

5.4. Comprehensive Analysis

5.5. Stress Testing and Ablation Analysis

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A

Appendix A.1

| Algorithm A1: Sample Selection Strategy based on GraNd |
|---|
| Input: |
| grand_scores: List[float], GraNd score for each sample |
| keep_ratio: float, proportion of samples to keep (between 0 and 1) |
| Output: |
| selected_indices: List[int], indices of retained samples |
| Steps: |
| 1: Get total number of samples |
| 2: num_samples = len(grand_scores) |
| 3: Compute number of samples to keep |
| 4: num_keep = int(num_samples × keep_ratio) |
| 5: Sort scores in descending order |
| 6: sorted_indices = argsort(grand_scores, descending = True) |
| 7: Select top num_keep samples |
| 8: selected_indices = sorted_indices[:num_keep] |
| 9: Return selected indices |
| 10: return selected_indices |
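A minimal runnable rendering of Algorithm A1 in plain Python is given below. The function name `select_by_grand` and the range check on `keep_ratio` are additions for illustration; the remaining steps mirror the listing, with `sorted(..., reverse=True)` standing in for the pseudocode's `argsort(..., descending = True)`.

```python
from typing import List


def select_by_grand(grand_scores: List[float], keep_ratio: float) -> List[int]:
    """Return indices of the samples with the highest GraNd scores."""
    if not 0.0 <= keep_ratio <= 1.0:
        raise ValueError("keep_ratio must be between 0 and 1")

    # Steps 1-4: number of samples and number to keep (truncated).
    num_samples = len(grand_scores)
    num_keep = int(num_samples * keep_ratio)

    # Steps 5-6: indices ordered by score, highest first.
    sorted_indices = sorted(
        range(num_samples), key=lambda i: grand_scores[i], reverse=True
    )

    # Steps 7-10: keep the leading num_keep indices.
    return sorted_indices[:num_keep]


print(select_by_grand([0.2, 0.9, 0.5, 0.7, 0.1], keep_ratio=0.4))
# -> [1, 3]
```

Note that `int(...)` truncates, so a ratio whose product with the sample count falls just below an integer keeps one sample fewer than expected; rounding could be substituted if that matters.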

Appendix A.2

| Algorithm A2: Sample Selection Strategy based on EL2N |
|---|
| Input: |
| el2n_scores: List[float], EL2N score for each sample |
| keep_ratio: float, proportion of samples to keep (between 0 and 1) |
| Output: |
| selected_indices: List[int], indices of retained samples |
| Steps: |
| 1: Get total number of samples |
| 2: num_samples = len(el2n_scores) |
| 3: Compute number of samples to keep |
| 4: num_keep = int(num_samples × keep_ratio) |
| 5: Sort scores in ascending order |
| 6: sorted_indices = argsort(el2n_scores, descending = False) |
| 7: Select the first num_keep samples |
| 8: selected_indices = sorted_indices[:num_keep] |
| 9: Return selected indices |
| 10: return selected_indices |
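Algorithm A2 differs from Algorithm A1 only in the sort direction. The sketch below follows the `descending = False` argument in step 6, so it retains the samples with the lowest EL2N scores; the function name `select_by_el2n` and the input check are hypothetical additions.

```python
from typing import List


def select_by_el2n(el2n_scores: List[float], keep_ratio: float) -> List[int]:
    """Return indices of the samples with the lowest EL2N scores."""
    if not 0.0 <= keep_ratio <= 1.0:
        raise ValueError("keep_ratio must be between 0 and 1")

    num_samples = len(el2n_scores)
    num_keep = int(num_samples * keep_ratio)

    # Ascending order, matching `descending = False` in step 6.
    sorted_indices = sorted(range(num_samples), key=lambda i: el2n_scores[i])
    return sorted_indices[:num_keep]


scores = [0.2, 0.9, 0.5, 0.7, 0.1]
print(select_by_el2n(scores, keep_ratio=0.4))
# -> [4, 0]
```

On the same five scores, a descending sort would instead keep indices 1 and 3, so the two directions select disjoint subsets here; the direction is therefore a substantive design choice rather than a cosmetic one.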


| Strategy / Ratio | 0.1 | 0.2 | 0.3 |
|---|---|---|---|
| EL2N | 0.8000 | 0.8097 | 0.7870 |
| GraNd | 0.8065 | 0.8076 | 0.8011 |
| MBS | 0.8259 | 0.8141 | 0.8065 |

| Metric | Focus | Sample Attribute Described |
|---|---|---|
| EL2N | Learning Difficulty | Indicates whether a sample is easy for the model to learn, and whether it contains noise or lies near the decision boundary |
| GraNd | Contribution to Parameter Updates | Reflects the extent to which a sample drives the training direction of the model |
| MBS | Modality Balance | Characterizes whether the contributions of different modalities within a multimodal sample are balanced |

| Strategy / Epoch | 5th | 10th | 15th | 20th | 25th | 30th |
|---|---|---|---|---|---|---|
| RANDOM | 1.70 | 0.95 | 0.20 | 0.85 | 0.70 | 0 |
| EL2N | 0.55 | 0.625 | 0 | 0.15 | 0.175 | 0.70 |
| GraNd | 0.45 | 0.10 | 0 | 0 | 0 | 1.35 |
| MBS | 0 | 1.025 | 2.50 | 1.70 | 1.825 | 0.65 |

| Strategy / Epoch | 5th | 10th | 15th | 20th | 25th | 30th |
|---|---|---|---|---|---|---|
| RANDOM | 0.8 | 0.40 | 0.2 | 0.25 | 0.25 | 0.25 |
| EL2N | 0.20 | 0.45 | 0.65 | 0.80 | 0.60 | 0.25 |
| GraNd | 0.45 | 0.70 | 0 | 0.375 | 0.20 | 1.55 |
| MBS | 1.25 | 1.15 | 1.85 | 1.275 | 1.65 | 0.65 |

| Strategy / Ratio | 0.1 | 0.15 | 0.2 | 0.25 | 0.3 | 0.35 | 0.4 | 0.45 | 0.5 |
|---|---|---|---|---|---|---|---|---|---|
| RANDOM | 0 | 0 | 3.75 | 0 | 0 | 6.0 | 4.5 | 0 | 1.0 |
| EL2N | 4.0 | 5.5 | 2.5 | 1.75 | 6.0 | 0 | 0 | 0 | 0 |
| GraNd | 2.5 | 0 | 3.0 | 3.0 | 0 | 0 | 0.5 | 0.5 | 0.5 |
| MBS | 4.0 | 5.0 | 1.25 | 5.75 | 4.5 | 4.5 | 5.5 | 10 | 9.0 |

| Strategy / Ratio | 0.1 | 0.15 | 0.2 | 0.25 | 0.3 | 0.35 | 0.4 | 0.45 | 0.5 |
|---|---|---|---|---|---|---|---|---|---|
| RANDOM | 2.5 | 0 | 2.0 | 6.0 | 0 | 0 | 4.0 | 0 | 0 |
| EL2N | 0 | 2.0 | 4.0 | 2.5 | 0.33 | 1.5 | 1.5 | 0 | 5.0 |
| GraNd | 4.5 | 7.5 | 3.5 | 0 | 0.33 | 0 | 0.5 | 0.5 | 2.5 |
| MBS | 3.5 | 1.0 | 1.0 | 2.0 | 9.83 | 9.0 | 4.5 | 10.0 | 3.0 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Xu, Y.; Chen, B.; Hu, F.; Liu, J.; Zhao, C.; Wu, H. MBS: A Modality-Balanced Strategy for Multimodal Sample Selection. Mach. Learn. Knowl. Extr. 2026, 8, 17. https://doi.org/10.3390/make8010017
