Recent Advances in Supervised Dimension Reduction: A Survey

Abstract

1. Introduction

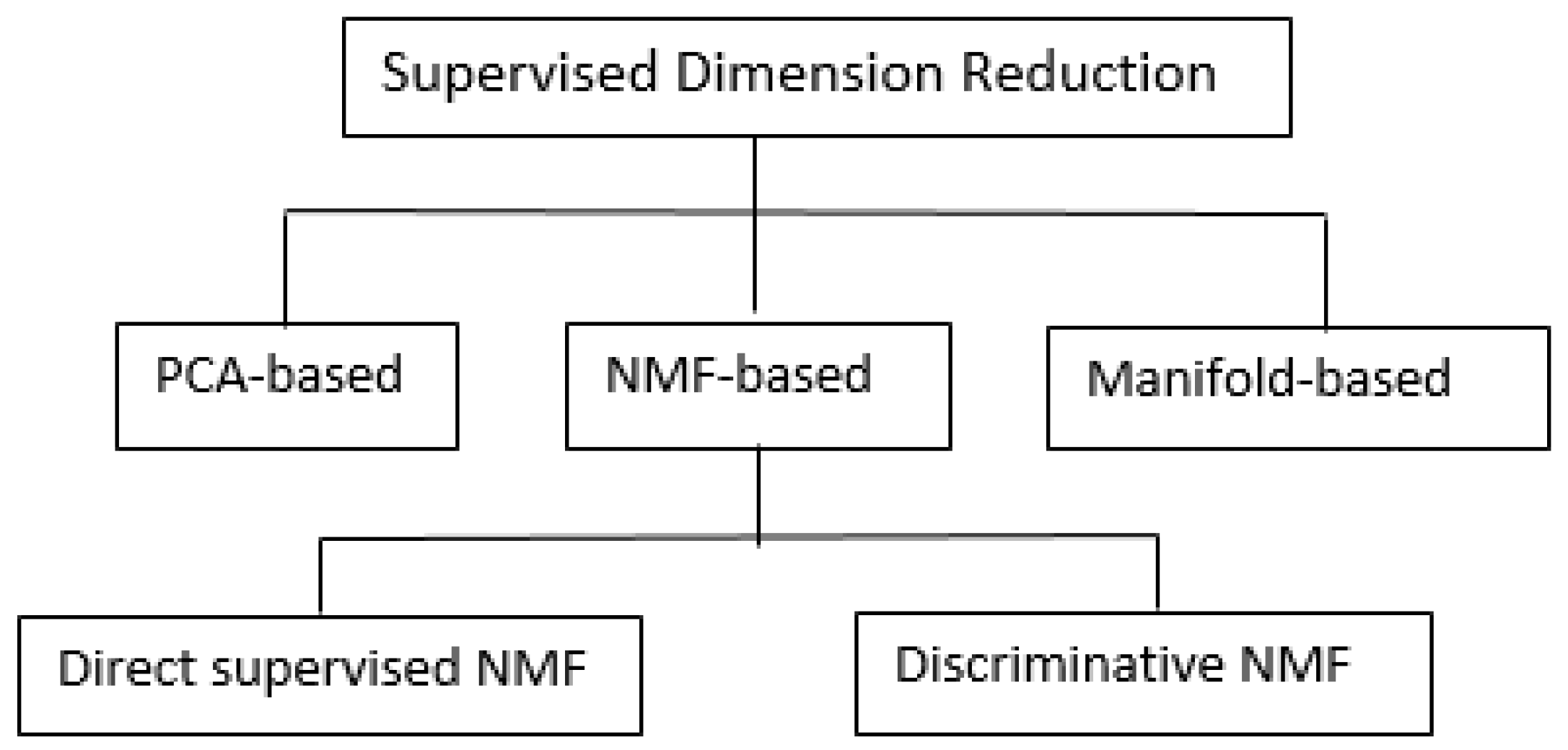

2. Definition and Taxonomy

3. Supervised Dimension Reduction

3.1. PCA-Based Supervised Dimension Reduction

3.2. NMF-Based Supervised Dimension Reduction

3.2.1. Direct Supervised NMF

3.2.2. Discriminative NMF

3.3. Manifold-Based Supervised Dimension Reduction

3.3.1. Isomap-Based Supervised Dimension Reduction

Algorithm 1. MDS algorithm.
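The body of Algorithm 1 did not survive extraction. For orientation only, here is a minimal NumPy sketch of classical (Torgerson) MDS. It assumes the input is a symmetric matrix of pairwise Euclidean distances. The sketch double-centres the squared distances into a Gram matrix, then returns the top eigenvectors scaled by the square roots of their eigenvalues. The function name `classical_mds` is hypothetical, and this is not necessarily the exact variant listed in Algorithm 1.

```python
import numpy as np


def classical_mds(dist: np.ndarray, n_components: int = 2) -> np.ndarray:
    """Embed n points from an n x n matrix of pairwise distances (classical MDS)."""
    n = dist.shape[0]
    # Centering matrix J = I - (1/n) 1 1^T
    centering = np.eye(n) - np.ones((n, n)) / n
    # Double centering turns squared distances into a Gram matrix B = -1/2 J D^2 J
    gram = -0.5 * centering @ (dist ** 2) @ centering
    # eigh handles symmetric matrices and returns eigenvalues in ascending order
    eigvals, eigvecs = np.linalg.eigh(gram)
    top = np.argsort(eigvals)[::-1][:n_components]
    # Clip small negative eigenvalues (round-off or non-Euclidean input) to zero
    scale = np.sqrt(np.clip(eigvals[top], 0.0, None))
    # Coordinates Y = V_k * Lambda_k^(1/2)
    return eigvecs[:, top] * scale


# Usage: recover a 2-D configuration from its own distance matrix.
rng = np.random.default_rng(0)
points = rng.normal(size=(10, 2))
dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
embedding = classical_mds(dist, n_components=2)
```

For exactly Euclidean input, the embedding reproduces the original distances up to rotation and reflection. Clipping negative eigenvalues to zero is one common way to handle non-Euclidean input, not the only one.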

Algorithm 2. Isomap algorithm.
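The steps of Algorithm 2 are also missing. The sketch below assumes the standard three-stage Isomap of Tenenbaum et al.:

- build a k-nearest-neighbour graph;
- take graph shortest-path distances as estimates of geodesic distance;
- run classical MDS on those distances.

The helper names (`pairwise_distances`, `geodesic_distances`, `isomap`) are hypothetical. Floyd-Warshall is used for brevity. It costs O(n^3), so the sketch is only meant for small illustrative inputs.

```python
import numpy as np


def pairwise_distances(x: np.ndarray) -> np.ndarray:
    """Euclidean distance matrix for the rows of x."""
    diff = x[:, None, :] - x[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))


def geodesic_distances(dist: np.ndarray, n_neighbors: int) -> np.ndarray:
    """Shortest-path distances on a symmetrised k-nearest-neighbour graph."""
    n = dist.shape[0]
    graph = np.full((n, n), np.inf)
    np.fill_diagonal(graph, 0.0)
    for i in range(n):
        # Skip position 0 of the sort order, which is the point itself
        nbrs = np.argsort(dist[i])[1:n_neighbors + 1]
        graph[i, nbrs] = dist[i, nbrs]
        graph[nbrs, i] = dist[i, nbrs]
    # Floyd-Warshall: relax every pair through each intermediate vertex k
    for k in range(n):
        graph = np.minimum(graph, graph[:, k:k + 1] + graph[k:k + 1, :])
    if np.isinf(graph).any():
        raise ValueError("neighbourhood graph is disconnected; increase n_neighbors")
    return graph


def classical_mds(dist: np.ndarray, n_components: int) -> np.ndarray:
    """Classical MDS: double-centre squared distances, keep the top eigenpairs."""
    n = dist.shape[0]
    centering = np.eye(n) - np.ones((n, n)) / n
    gram = -0.5 * centering @ (dist ** 2) @ centering
    eigvals, eigvecs = np.linalg.eigh(gram)
    top = np.argsort(eigvals)[::-1][:n_components]
    return eigvecs[:, top] * np.sqrt(np.clip(eigvals[top], 0.0, None))


def isomap(x: np.ndarray, n_neighbors: int = 5, n_components: int = 2) -> np.ndarray:
    geo = geodesic_distances(pairwise_distances(x), n_neighbors)
    return classical_mds(geo, n_components)


# Usage: unroll points sampled along a semicircle into one dimension.
t = np.linspace(0.0, np.pi, 30)
arc = np.column_stack([np.cos(t), np.sin(t)])
coords = isomap(arc, n_neighbors=4, n_components=1)
```

Along the semicircle, graph distances approximate arc length, so the 1-D coordinate should be ordered by the angle `t`. Straight-line (Euclidean) MDS would instead fold the two ends of the arc towards each other.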

Algorithm 3. Enhanced supervised Isomap.

3.3.2. LLE-Based Supervised Dimension Reduction

3.3.3. LE-Based Supervised Dimension Reduction

3.4. Discussion

4. Application

4.1. Computer Vision

4.2. Biomedical Informatics

4.3. Speech Recognition

4.4. Visualization

5. Potential Future Research Issues

5.1. Scalability

5.2. Missing Values

5.3. Heterogeneous Types

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Chao, G.; Luo, Y.; Ding, W. Recent Advances in Supervised Dimension Reduction: A Survey. Mach. Learn. Knowl. Extr. 2019, 1, 341-358. https://doi.org/10.3390/make1010020