The HDIN Dataset: A Real-World Indoor UAV Dataset with Multi-Task Labels for Visual-Based Navigation

Abstract

1. Introduction

- Incompatible vehicle platforms challenge: The GS4 dataset [21] was collected on a Turtlebot 2 platform, so its visual samples are captured from a low-altitude viewpoint and its labels are Turtlebot control commands (i.e., the angular velocity of the wheels). These samples and labels are not suitable for a UAV platform.

- Specific sensor challenge: The visual samples of the GS4 dataset [21] were collected with a 360° fisheye camera and therefore differ from images captured by a common UAV monocular camera. The labels of the ICL dataset [20] are distance-to-collision values, which require installing three additional pairs of infrared and ultrasonic sensors, together with sensor fusion and time synchronization.

- Generalization challenge raised by label regression: Continuous regression labels contain data jitter, that is, large gaps between consecutive values, so the CNN model cannot regress the inputs to the expected values. In addition, label units and ranges are inconsistent across datasets: the DroNet [24] and ICL [20] datasets use steering wheel angles (−1 to 1 rad) and distance-to-collision (0 to 500 cm), respectively, as training labels.

- Collecting data on a widely available UAV platform using only its original onboard sensors, which simplifies multi-sensor synchronization.

- Defining a novel scaling factor labeling method with three label types to overcome the learning challenges caused by data jitter during collection and by inconsistent label units.
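The unit mismatch and jitter problems named in the challenges above can be made concrete with a small numerical illustration. This is a minimal sketch and not the authors' labeling method: the helper names `scale_to_unit_range` and `max_consecutive_gap` and all sample values are hypothetical, and only the two ranges (−1 to 1 rad and 0 to 500 cm) come from the text.

```python
import numpy as np

def scale_to_unit_range(labels, low, high):
    """Linearly map labels from [low, high] onto the common range [-1, 1]."""
    labels = np.asarray(labels, dtype=float)
    return 2.0 * (labels - low) / (high - low) - 1.0

def max_consecutive_gap(labels):
    """Largest absolute jump between consecutive labels (a simple jitter indicator)."""
    return float(np.max(np.abs(np.diff(np.asarray(labels, dtype=float)))))

# Hypothetical samples: steering angles in radians and distances in centimetres.
steer_rad = np.array([-1.0, 0.0, 0.5, 1.0])
dist_cm = np.array([0.0, 250.0, 375.0, 500.0])

steer_scaled = scale_to_unit_range(steer_rad, -1.0, 1.0)
dist_scaled = scale_to_unit_range(dist_cm, 0.0, 500.0)
gap_cm = max_consecutive_gap(dist_cm)
```

In raw units the two label sets differ by more than two orders of magnitude, while after scaling they occupy the same range, which is the kind of mismatch a unit-free label is meant to remove.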

2. Related Works

3. Data Collection Setup

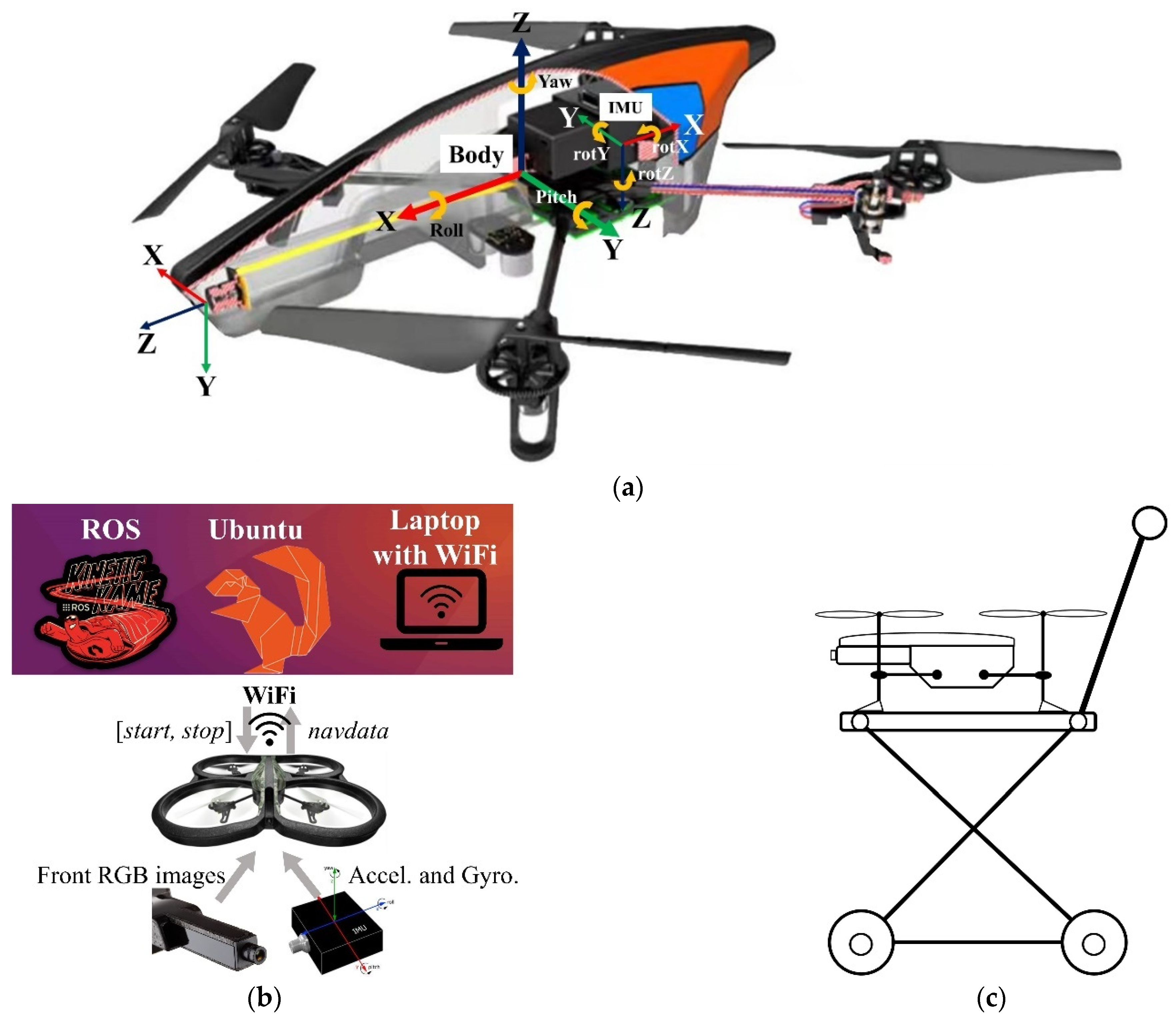

3.1. UAV Platform

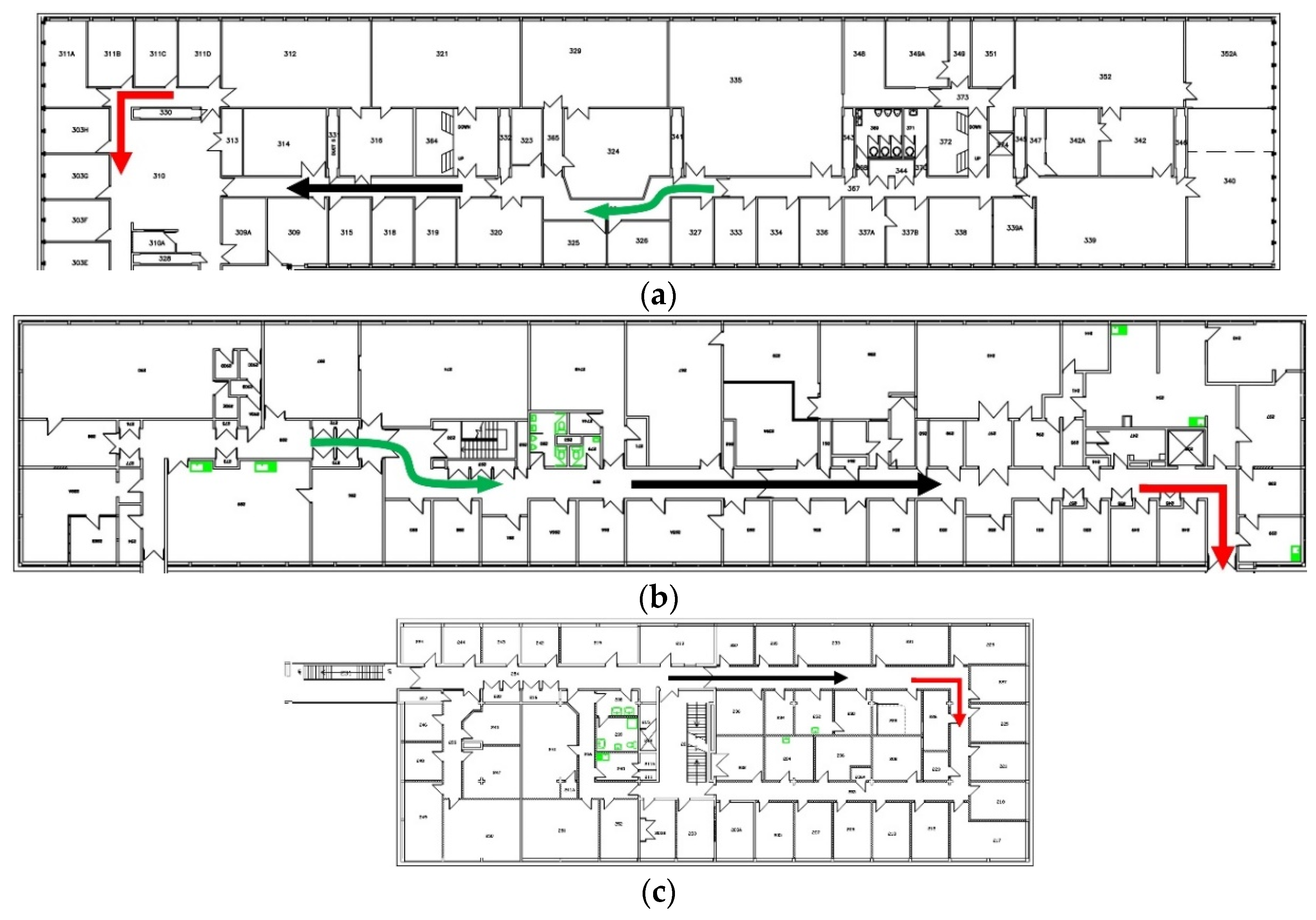

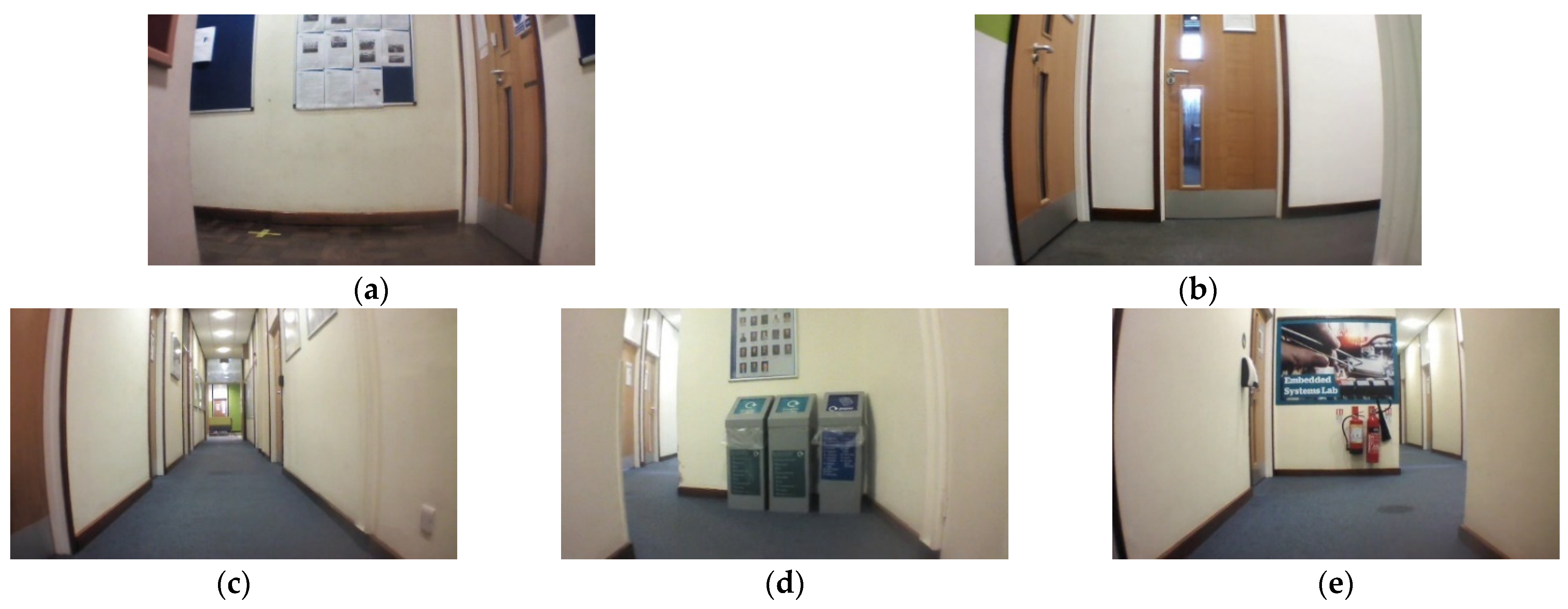

3.2. Experimental Environment

4. Collection Methodology

4.1. Steering Subset

Algorithm 1: Scaling Factor Labeling Method.

Label type 1: Expected Steering

Input: Steering label text, Image path

Output: Synchronize steering text

1. Load Steering label text;
2. Load images from Image path;
3. for each of the m entries do
4. Matching (labels against images);
5. Transformation;
6. for each of the m entries do
7. [expression not recoverable from the source];
8. Low-pass filter;
9. Output to Synchronize steering text;

Label type 2: Fitting Angular Velocity

Input: Steering label text, Image path

Output: Synchronize steering text

1. Load Steering label text;
2. Load images from Image path;
3. Transformation;
4. Fitting;
5. Derivative;
6. Matching (labels against images);
7. deg2rad;
8. Output to Synchronize steering text;

Label type 3: Scalable Angular Velocity

Input: Steering label text, Image path

Output: Synchronize steering text

1. Load Steering label text;
2. Load images from Image path;
3. Transformation;
4. Fitting;
5. Derivative;
6. Matching (labels against images);
7. Divide by max angular velocity;
8. Output to Synchronize steering text;
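The steps of label types 2 and 3 can be sketched in Python as follows. This is an illustration under stated assumptions rather than the authors' implementation: the function name `angular_velocity_labels` is hypothetical, "Transformation" is assumed to mean unwrapping the yaw angle, "Fitting" is assumed to be a least-squares polynomial fit, "Matching" is assumed to mean evaluating the result at the image timestamps, and `max_rate_deg_s` is a hypothetical stand-in for the max angular velocity.

```python
import numpy as np

def angular_velocity_labels(label_t, yaw_deg, image_t, max_rate_deg_s, degree=3):
    """Return (fitting, scalable) angular-velocity labels at the image timestamps."""
    label_t = np.asarray(label_t, dtype=float)
    image_t = np.asarray(image_t, dtype=float)
    # Transformation (assumed): remove 360-degree wrap-around from the yaw angle.
    yaw_cont = np.rad2deg(np.unwrap(np.deg2rad(yaw_deg)))
    # Fitting (assumed): least-squares polynomial over time to smooth jitter.
    t0 = label_t[0]
    coeffs = np.polyfit(label_t - t0, yaw_cont, degree)
    # Derivative: angular velocity in deg/s taken from the fitted curve.
    rate_poly = np.polyder(coeffs)
    # Matching (assumed): evaluate the derivative at each image timestamp.
    rate_deg_s = np.polyval(rate_poly, image_t - t0)
    fitting = np.deg2rad(rate_deg_s)          # label type 2, in rad/s
    scalable = rate_deg_s / max_rate_deg_s    # label type 3, unitless
    return fitting, scalable

# Hypothetical recording: a constant 10 deg/s turn sampled for two seconds.
demo_t = np.linspace(0.0, 2.0, 21)
demo_yaw = 10.0 * demo_t
fitting, scalable = angular_velocity_labels(
    demo_t, demo_yaw, [0.5, 1.0, 1.5], max_rate_deg_s=100.0
)
```

For the constant turn in the demo, the type 2 label is the turn rate converted to radians per second and the type 3 label is the same rate expressed as a fraction of the assumed maximum.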

4.2. Collision Subset

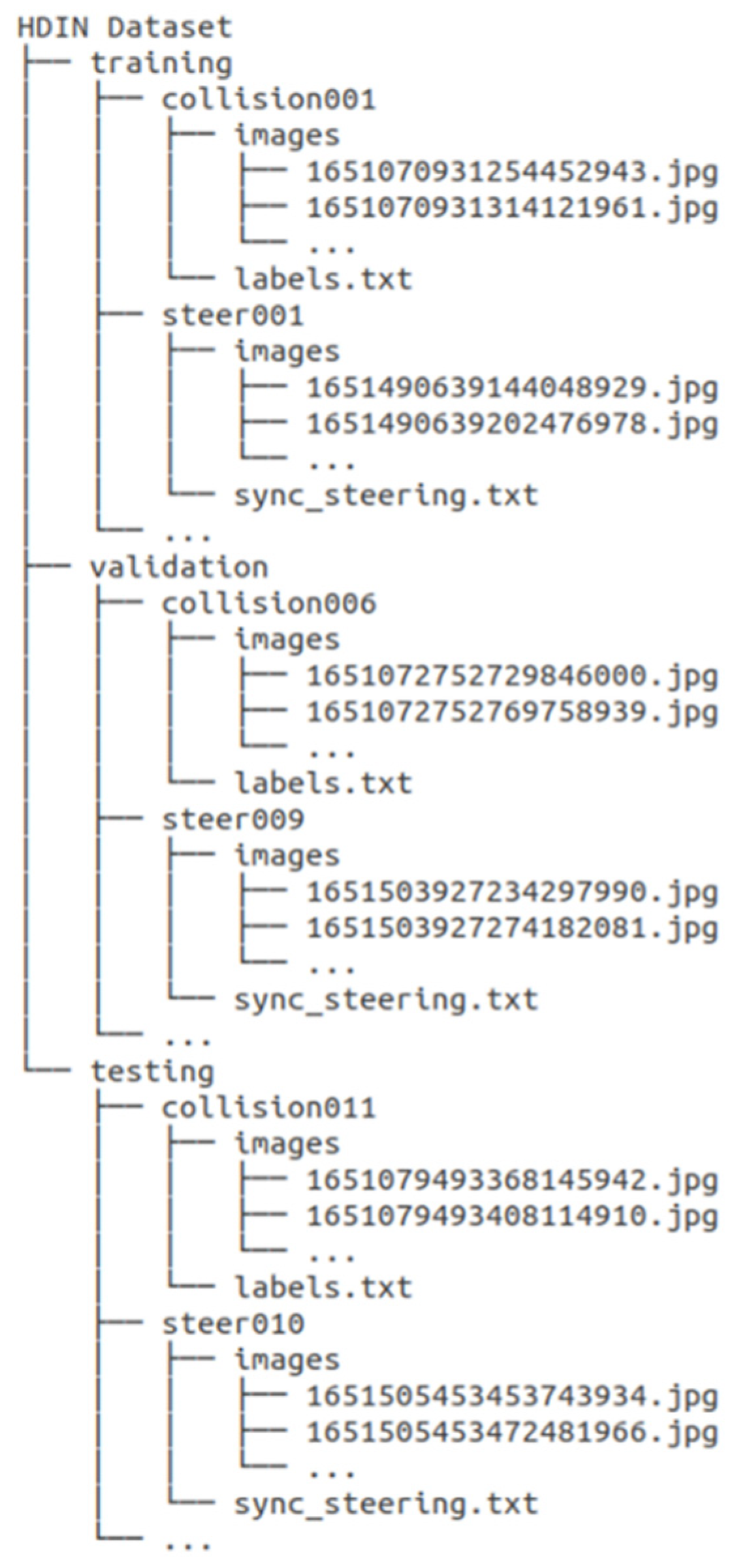

4.3. Dataset Structure

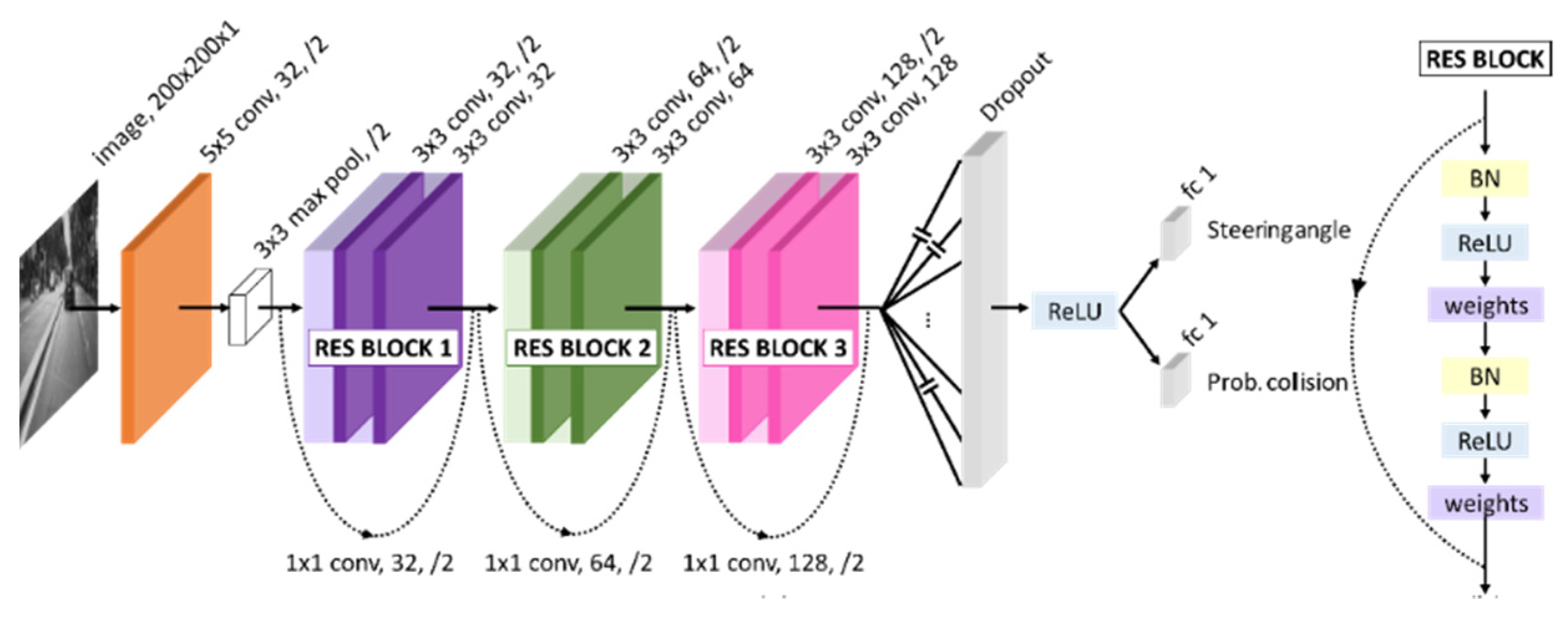

5. Dataset Evaluation

5.1. Quantitative Comparison

5.2. Data Distribution Visualization

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Acknowledgments

Conflicts of Interest

References

- Schroth, L. The Drone Market 2019–2024: 5 Things You Need to Know. 2019. Available online: https://www.droneii.com/the-drone-market-2019-2024-5-things-you-need-to-know (accessed on 21 July 2022).

- Daponte, P.; De Vito, L.; Glielmo, L.; Iannelli, L.; Liuzza, D.; Picariello, F.; Silano, G. A review on the use of drones for precision agriculture. IOP Conference Series: Earth and Environmental Science. In Proceedings of the 1st Workshop on Metrology for Agriculture and Forestry (METROAGRIFOR), Ancona, Italy, 1–2 October 2018; Volume 275.

- Khan, N.A.; Jhanjhi, N.; Brohi, S.N.; Usmani, R.S.A.; Nayyar, A. Smart traffic monitoring system using Unmanned Aerial Vehicles (UAVs). Comput. Commun. 2020, 157, 434–443.

- Kwon, W.; Park, J.H.; Lee, M.; Her, J.; Kim, S.-H.; Seo, J.-W. Robust Autonomous Navigation of Unmanned Aerial Vehicles (UAVs) for Warehouses’ Inventory Application. IEEE Robot. Autom. Lett. 2020, 5, 243–249.

- Lu, Y.; Xue, Z.; Xia, G.-S.; Zhang, L. A survey on vision-based UAV navigation. Geo-Spat. Inf. Sci. 2018, 21, 21–32.

- Carrio, A.; Sampedro, C.; Rodriguez-Ramos, A.; Campoy, P. A Review of Deep Learning Methods and Applications for Unmanned Aerial Vehicles. J. Sens. 2017, 2017, 3296874.

- Krajewski, R.; Bock, J.; Kloeker, L.; Eckstein, L. The highD Dataset: A Drone Dataset of Naturalistic Vehicle Trajectories on German Highways for Validation of Highly Automated Driving Systems. In Proceedings of the 2018 21st International Conference on Intelligent Transportation Systems (ITSC), Maui, HI, USA, 4–7 November 2018; pp. 2118–2125.

- Bozcan, I.; Kayacan, E. AU-AIR: A Multi-modal Unmanned Aerial Vehicle Dataset for Low Altitude Traffic Surveillance. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), Paris, France, 31 May–31 August 2020; pp. 8504–8510.

- Shah, A.P.; Lamare, J.; Nguyen-Anh, T.; Hauptmann, A. CADP: A Novel Dataset for CCTV Traffic Camera based Accident Analysis. In Proceedings of the 2018 15th IEEE International Conference on Advanced Video and Signal Based Surveillance (AVSS), Auckland, New Zealand, 27–30 November 2018; pp. 1–9.

- Mou, L.; Hua, Y.; Jin, P.; Zhu, X.X. ERA: A Data Set and Deep Learning Benchmark for Event Recognition in Aerial Videos [Software and Data Sets]. IEEE Geosci. Remote Sens. Mag. 2020, 8, 125–133.

- Barekatain, M.; Marti, M.; Shih, H.-F.; Murray, S.; Nakayama, K.; Matsuo, Y.; Prendinger, H. Okutama-Action: An Aerial View Video Dataset for Concurrent Human Action Detection. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Honolulu, HI, USA, 21–26 July 2017; pp. 2153–2160.

- Robicquet, A.; Sadeghian, A.; Alahi, A.; Savarese, S. Learning Social Etiquette: Human Trajectory Understanding in Crowded Scenes; Springer International Publishing: Berlin/Heidelberg, Germany, 2016; pp. 549–565.

- Perera, A.G.; Law, Y.W.; Chahl, J. UAV-GESTURE: A Dataset for UAV Control and Gesture Recognition. In Proceedings of the European Conference on Computer Vision (ECCV) Workshops 2018, Munich, Germany, 8–14 September 2018; Volume 11130, pp. 117–128.

- Hui, B.; Song, Z.; Fan, H.; Zhong, P.; Hu, W.; Zhang, X.; Lin, J.; Su, H.; Jin, W.; Zhang, Y.; et al. Dataset for Infrared Image Dim-Small Aircraft Target Detection and Tracking under Ground/Air Background(V1). Science Data Bank. 2019. Available online: https://www.scidb.cn/en/detail?dataSetId=720626420933459968&dataSetType=journal (accessed on 21 July 2022).

- Mueller, M.; Smith, N.; Ghanem, B. A Benchmark and Simulator for UAV Tracking. In Proceedings of the Computer Vision-ECCV 2016 Pt I, Amsterdam, The Netherlands, 11–14 October 2016; Volume 9905, pp. 445–461.

- Zheng, Z.; Wei, Y.; Yang, Y. University-1652: A Multi-view Multi-source Benchmark for Drone-based Geo-localization. In Proceedings of the 28th ACM International Conference on Multimedia, Seattle, WA, USA, 12–16 October 2020; pp. 1395–1403.

- Hirose, N.; Xia, F.; Martin-Martin, R.; Sadeghian, A.; Savarese, S. Deep Visual MPC-Policy Learning for Navigation. IEEE Robot. Autom. Lett. 2019, 4, 3184–3191.

- Kouris, A.; Bouganis, C.S. Learning to Fly by MySelf: A Self-Supervised CNN-based Approach for Autonomous Navigation. In Proceedings of the 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Madrid, Spain, 1–5 October 2018; pp. 5216–5223.

- Udacity. An Open Source Self-Driving Car. 2016. Available online: https://www.udacity.com/self-driving-car (accessed on 21 July 2022).

- Padhy, R.P.; Verma, S.; Ahmad, S.; Choudhury, S.K.; Sa, P.K. Deep neural network for autonomous UAV navigation in indoor corridor environments. Procedia Comput. Sci. 2018, 133, 643–650.

- Huang, G.; Liu, Z.; van der Maaten, L.; Weinberger, K.Q. Densely connected convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 4700–4708.

- Chhikara, P.; Tekchandani, R.; Kumar, N.; Chamola, V.; Guizani, M. DCNN-GA: A deep neural net architecture for navigation of UAV in indoor environment. IEEE Internet Things J. 2020, 8, 4448–4460.

- Loquercio, A.; Maqueda, A.I.; del-Blanco, C.R.; Scaramuzza, D. DroNet: Learning to Fly by Driving. IEEE Robot. Autom. Lett. 2018, 3, 1088–1095.

- Palossi, D.; Conti, F.; Benini, L. An Open Source and Open Hardware Deep Learning-powered Visual Navigation Engine for Autonomous Nano-UAVs. In Proceedings of the 2019 15th International Conference on Distributed Computing in Sensor Systems (DCOSS), Santorini, Greece, 29–31 May 2019; pp. 604–611.

- Antonini, A.; Guerra, W.; Murali, V.; Sayre-McCord, T. The Blackbird UAV dataset. Int. J. Robot. Res. 2020, 39, 1346–1364.

- Antonini, A.; Guerra, W.; Murali, V.; Sayre-McCord, T.; Karaman, S. The Blackbird Dataset: A Large-Scale Dataset for UAV Perception in Aggressive Flight. In Proceedings of the 2018 International Symposium on Experimental Robotics, Buenos Aires, Argentina, 5–8 November 2018; Volume 11, pp. 130–139.

- Fonder, M.; Van Droogenbroeck, M. Mid-Air: A multi-modal dataset for extremely low altitude drone flights. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW 2019), Long Beach, CA, USA, 16–17 June 2019; pp. 553–562.

| Datasets | | ICL [20] | DroNet [22,23] | GS4 [21] | Our HDIN |
|---|---|---|---|---|---|
| Vehicles | Collected | UAV | Car [24] and Bicycle | UGV a | UAV |
| | Applied | UAV | UAV | UGV a | UAV |
| Environments | | Real indoor | Real outdoor | Sim b and Real indoor | Real indoor |
| Samples | | Front RGB | Front Gray and RGB | 360° fisheye | Front RGB |
| Labels | | [−30°, 0°, 30°] distances | Steer wheel angle; Collision label c | UGV a control | UAV’s orientation; Collision label c |
| Characteristics | | Sensor customized installation, fusion and time-sync d. | “Line-like” pattern dependence. | Incompatible vehicle platforms and sensors. | Common UAV platform and onboard sensors. |

| | MAE a | UE b | UA c |
|---|---|---|---|
| Positive rotation | 11.352° | 0.0105° | 98.95% |
| Negative rotation | 4.154° | 0.0038° | 99.62% |

| Dataset | Label Type | Img Type | EVA a | RMSE | Ave. Accuracy | F-1 Score b |
|---|---|---|---|---|---|---|
| DroNet [22,23] | Steer wheel angle | Gray | 0.737 | 0.110 | 95.3% | 0.895 |
| HDIN (Ours) | Expected steering | RGB | 0.778 | 0.193 | 84.9% | 0.784 |
| | | Gray | 0.798 | 0.184 | 88.2% | 0.822 |
| | Fitting angular velocity | RGB | 0.808 | 0.090 | 86.7% | 0.804 |
| | | Gray | 0.810 | 0.089 | 86.2% | 0.799 |
| | Scalable angular velocity | RGB | 0.853 | 0.113 | 85.3% | 0.789 |
| | | Gray | 0.827 | 0.123 | 85.8% | 0.794 |
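The metrics reported in the table above can be computed along the following lines. This is a minimal sketch, assuming that EVA denotes explained variance, that RMSE is the root-mean-square error of the steering regression, and that the F-1 score is taken over binary collision labels; the function names and sample values are hypothetical and do not reproduce the paper's results.

```python
import numpy as np

def explained_variance(y_true, y_pred):
    """EVA = 1 - Var(y_true - y_pred) / Var(y_true); 1.0 is a perfect fit."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(1.0 - np.var(y_true - y_pred) / np.var(y_true))

def rmse(y_true, y_pred):
    """Root-mean-square error between true and predicted steering labels."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def f1_score(labels, preds):
    """F-1 score for binary labels, where 1 marks the positive class."""
    labels = np.asarray(labels)
    preds = np.asarray(preds)
    tp = int(np.sum((preds == 1) & (labels == 1)))
    fp = int(np.sum((preds == 1) & (labels == 0)))
    fn = int(np.sum((preds == 0) & (labels == 1)))
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2.0 * precision * recall / (precision + recall)

# Hypothetical predictions, for illustration only.
eva = explained_variance([0.0, 0.5, -0.5, 1.0], [0.1, 0.4, -0.4, 0.9])
err = rmse([0.0, 0.5, -0.5, 1.0], [0.1, 0.4, -0.4, 0.9])
f1 = f1_score([1, 0, 1, 1, 0], [1, 0, 0, 1, 1])
```

EVA is scale-free while RMSE carries the unit of the label, so RMSE values are only directly comparable between rows whose labels share a unit and range.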

| Dataset with Label Types | Steering Subset | | | Collision Subset | |
|---|---|---|---|---|---|
| | Mean | Var | Range | Collision | Non-Collision |
| DroNet with Steer wheel angle | 0.0067 | 0.045 | (−0.87, 0.94) | 77.6% | 22.4% |
| HDIN with Expected steering | −0.0027 | 0.167 | (−1.07, 1.24) | 72.6% | 27.4% |
| HDIN with Fitting angular velocity | −0.0017 | 0.042 | (−0.52, 0.60) | 72.6% | 27.4% |
| HDIN with Scalable angular velocity | −0.0024 | 0.087 | (−0.75, 0.85) | 72.6% | 27.4% |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Chang, Y.; Cheng, Y.; Murray, J.; Huang, S.; Shi, G. The HDIN Dataset: A Real-World Indoor UAV Dataset with Multi-Task Labels for Visual-Based Navigation. Drones 2022, 6, 202. https://doi.org/10.3390/drones6080202
