1. Introduction

In order to increase the yields from the existing farmland to feed a growing population without a great increase in the use of resources (water, fertilizer and manpower), new precision farming methods are emerging. These methods rely heavily on the detailed monitoring of assets (crops, livestock, etc.), which is made possible with unmanned aerial systems (UAS), also referred to as drones (both terms will be used interchangeably in this document). Up until now, regular use of farming UAS has been limited due to the requirements for the human operator(s) of the drone. Depending on the particular use case, one or two operators are required for any given mission. To make this technology accessible to the average farmer, it is critical that in the near future, agricultural UAS are developed with increased autonomy, robustness and flexibility in mind. The farmer should be able to collect the desired data with minimal human effort and technical insight, expecting systems with high availability that can be easily adapted to perform different types of tasks. A solution where the UAS is integrated with other sensors and vehicles into a cloud-based architecture allows the use of advanced data analysis techniques that can provide the farmer with decision support directly through a user-friendly farm management system (FMS), rather than having to interpret the results from each data source to arrive at a recommended course of action. The decision making can address everything from the management of hydration, fertilization, plant population density and pesticides, to planning the seeding/planting and harvesting times. Data collected in past growing seasons can help one to make smarter decisions regarding soil management, what to plant where, and the optimal spacing of plants.
The AFarCloud project defines a cloud-based distributed platform that allows integration and cooperation of cyber-physical systems in real-time, and early versions of the system have been implemented at several European demonstration farms. This paper discusses the drone-relevant implications and lessons learned.

About 1 year into the AFarCloud project, new European UAS regulations were introduced in a first effort to streamline drone operations across Europe, and also to bring the safety standards achieved in manned aviation to drone operations. The regulations took effect in most European countries at the beginning of 2021, and the most recent revisions are available online [1]. Understanding the implications that the new regulations have for agricultural drone operations presently, and in the near future, is essential for developing useful UAS-based farming solutions and applications. Thus, this work includes a high-level analysis of the technological and operational effects of the new drone regulations.

Most machinery purchased for a farm will have multiple uses. The functionalities of the agricultural UAS should also be explored to extract the most benefits possible, even if the main application will be remote sensing and associated data collection. The UAS can be very useful as a highly mobile assistant to other machines and electronics. In the AFarCloud project, UAS were used to collect data from remote ground sensors. Additionally, the project explored the use of the UAS for in situ data collection, both through a payload developed to perform low altitude grass analysis and through a simple UAS manipulator for in situ measurements. A brief overview of the novel applications that were investigated is provided.

Several recent surveys cover the state of the art in the use of UAS in agriculture. The review in [2] covers the history and trends in agricultural aviation. Generally, due to the large areas that a farmer must tend, attempts at employing assistance from aerial technology follow quickly after its invention. In [3], the authors describe popular agricultural UAS applications, the status of the physical components of the UAS and recent developments in data handling. Similar analyses are presented in [4,5]. The above-mentioned surveys agree that the capabilities of an agricultural UAS rely on the capabilities of the onboard sensors, computing unit and other equipment. Due to the size and weight constraints associated with the UAS’s payload, and the limited air time imposed by battery capacity, this equipment must be chosen carefully to suit the intended mission. The key agricultural payload technologies that are available for UAS are summarized in Table 1.

The referenced review articles cover a wide range of different agricultural UAS applications, as summarized in Table 2.

It is important to note that satellites provide another relevant source of remote sensing data. While satellite data are significantly more accessible, they have low spatial resolution and cannot be collected at all during times of significant cloud coverage. An analysis of the usefulness of UAS-collected data compared to satellite data is beyond the scope of this work, but this topic is discussed in some detail in [6].

The challenges associated with heterogeneous systems producing large amounts of “unconnected” data that the farmer must analyze and draw conclusions from have been known for some time. In a 2010 study [7], Sørensen et al. identified that farmers face problems related to “data/information overload” and “lack of user-friendly software tools”. Specifically, the farmers would like “information in an automated and summarized fashion” and “cross-linking of information”. The introduction of farmer know-how into a decision support system framework is considered in [8]. The 2012 paper by Kaloxylos et al. [9] proposes a functional architecture of an FMS utilizing future Internet capabilities. The AFarCloud architecture is designed to meet needs that are not covered by current FMS solutions, such as real-time management of agricultural vehicles capable of cooperating. The AFarCloud architecture was described in [10]. This paper explores the feasibility of autonomous UAS operations in the agricultural sector from regulatory and practical points of view. It provides lessons learned from the AFarCloud EU project, where drones from different manufacturers were successfully integrated with a cloud-based middleware, and describes the development of a subset of relevant UAS-centered applications that were tested as part of the AFarCloud project.

2. Materials and Methods

Achieving an increased level of autonomy (LOA) for agricultural UAS operations has been the main focus throughout the UAS work in AFarCloud. The increased autonomy was achieved both through the integration with the FMS/middleware systems and through the addition of functionality onboard the UAS.

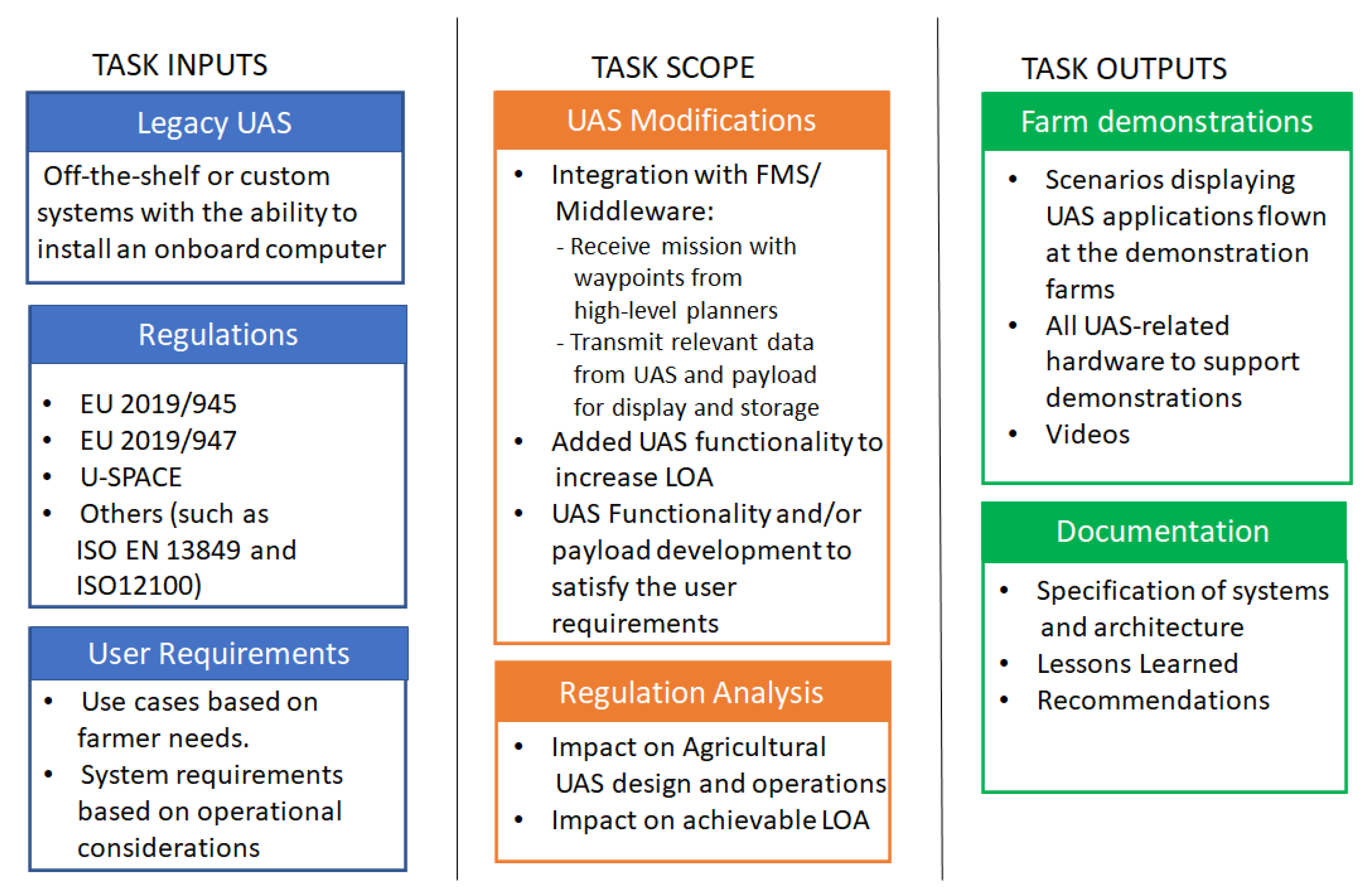

Figure 1 summarizes the inputs, scope and outputs for the drone task in the project.

The integration of the UAS with the FMS through the AFarCloud middleware has been one of the key objectives of the AFarCloud project. The communication between the UAS and the AFarCloud middleware makes use of the Data Distribution Service (DDS) for Real-Time Systems protocol. The DDS interface of the middleware sends and receives data, events and commands between nodes, i.e., software processes either in the cloud or on devices such as UAS, using a publish–subscribe model. A benefit of the DDS is that it guarantees data persistency (no data are lost) and real-time (depending on the context, near real-time) delivery of the data. The information is shared in a virtual global data space by writing (publishing) and reading (subscribing to) data objects under a named topic. The structure of these data objects is defined using IDL (Interface Definition Language), and contains a consecutively increasing sequence number for linking requests and responses. The integration of each drone was achieved by installing a DDS proxy in the onboard computer. This proxy allows integration with the Robot Operating System (ROS) or with MAVLink, creating a communication bridge between the UAS and the AFarCloud middleware.
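The publish–subscribe exchange and the role of the sequence number can be illustrated with a minimal in-memory sketch. It does not use a real DDS library, and all names (`GlobalDataSpace`, `Message`, the topic strings and the `command` payload field) are hypothetical stand-ins chosen for illustration rather than the AFarCloud IDL definitions.

```python
from collections import defaultdict
from dataclasses import dataclass
from itertools import count
from typing import Callable, Dict, List


@dataclass
class Message:
    """Stand-in for an IDL-defined data object (field names are assumptions)."""
    sequence_number: int
    sender: str
    payload: dict


class GlobalDataSpace:
    """In-memory stand-in for the virtual global data space (not real DDS)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[Message], None]]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[Message], None]) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, message: Message) -> None:
        # Deliver the message to every subscriber of the topic.
        for callback in list(self._subscribers[topic]):
            callback(message)


space = GlobalDataSpace()
sequence = count(1)                 # consecutively increasing sequence numbers
responses: Dict[int, dict] = {}     # responses indexed by the request's number


def drone_proxy(request: Message) -> None:
    # The onboard proxy answers on a response topic, echoing the sequence
    # number so that the requester can link the response to its request.
    reply = Message(request.sequence_number, "uas-1",
                    {"ack": request.payload["command"]})
    space.publish("uas/response", reply)


def on_response(message: Message) -> None:
    responses[message.sequence_number] = message.payload


space.subscribe("uas/command", drone_proxy)
space.subscribe("uas/response", on_response)


def send_command(command: str) -> int:
    seq = next(sequence)
    space.publish("uas/command", Message(seq, "mmt", {"command": command}))
    return seq


first = send_command("takeoff")
second = send_command("land")
```

After the two calls, `responses` holds one entry per request, keyed by the sequence number returned from `send_command`. A real deployment would additionally rely on the middleware's quality-of-service settings for persistency and delivery, which this sketch does not model.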

Successful integration with the middleware allows UAS mission data to be sent to the AFarCloud data repositories and opens up for fusing the information with data from other AFarCloud sensors (stationary or onboard other vehicles). It also enables the application of big data and artificial intelligence (AI) methods to support the farmer through decision support algorithms. Furthermore, the solution allows the farmer to plan missions involving the UAS with the Mission Management Tool (MMT), a software module within the FMS.

As a holistic end-user interface, the MMT provides different sets of functionalities to the end-user. These functionalities can be grouped into (1) mission command and control, (2) data visualization and analysis, and (3) decision support. As a command and control center for UAS missions, the MMT allows the operator to define, plan, execute and supervise missions involving heterogeneous UAS. During an ongoing mission, it also allows the operator to re-plan the mission if the mission parameters change and a new plan is required. As a data visualization interface, the MMT can visualize the information gathered by different sensors in the form of markers on the map (when static sensors are involved) and/or map overlays (where imagery data is involved). Historical data can also be analyzed and visualized on the MMT with the help of another component in the middleware referred to as the High Level Awareness Framework (HLAF). Finally, the MMT’s communication with decision support components allows it to provide relevant information to the operator, which can be used in the pre-mission planning stage. The MMT also supports the user in decision making by providing an interface for receiving alarms when a sensor or UAS system requires special attention.

For the UAS itself, an analysis was performed to identify requirements on an agricultural UAS considering the potential of integrating the system with an overarching FMS/middleware solution, the specific challenges involved with agricultural use cases and the new regulatory framework. This work was performed in an iterative fashion considering the operational viewpoint, the system viewpoint and the test/verification viewpoint. Three full iterations were performed, one per year over the 3-year project time frame, each comprising a released specification and an executed test campaign.

Once fully autonomous UAS operation is allowed, it is important to have solutions ready that can free up the farmer for longer time intervals. Despite advances in battery technology, the air time of the UAS is still limited, and removing the need for having to frequently replace/recharge the battery could definitely increase the end-user acceptance of UAS-based solutions. As a consequence, a concept for an autonomous charging station was developed and a working prototype was built. It was recognized that even fairly basic versions would end up being relatively heavy and bulky. Hence, concepts for a mobile charging station were also explored, since an agricultural UAS may have to cover large areas.

3. Results

This section presents the main results from the UAS perspective of the AFarCloud project.

3.1. Overview of UAS Related Standards, Regulations and Directives

The widespread adoption of UAS technologies has led regulatory agencies worldwide to scramble to develop robust frameworks to regulate their operation. Joint Authorities for Rulemaking on Unmanned Systems (JARUS) is a group of experts from the National Aviation Authorities (NAAs) and regional aviation safety organizations. Its purpose is to recommend a single set of technical, safety and operational requirements for the certification and safe integration of UAS into airspace and at aerodromes. The objective of JARUS is to provide guidance materials that help each authority write its own requirements and avoid duplicate efforts. At present, 61 countries, as well as the European Union Aviation Safety Agency (EASA) and EUROCONTROL, are contributing to the development of JARUS. Specifically, JARUS has developed a multi-stage method of risk assessment for a UAS operation that is referred to as SORA (Specific Operations Risk Assessment). In June 2019, and based on the work within JARUS, the European Union (EU) became the first authority in the world to publish comprehensive rules applying to the safe, secure and sustainable use of both commercial and leisure drones.

On 5 December 2019, the International Organization for Standardization (ISO) released ISO 21384-3, Unmanned aircraft systems—Part 3: Operational procedures [11]. This is the first of several ISO standards, others of which are in development, aimed at providing minimum safety and quality requirements for UAS, and criteria relating to coordination and organization in airspace.

3.1.1. EU 2019/945 and EU 2019/947

The EU has published Commission Delegated Regulation (EU) 2019/945 of 12 March 2019 on the requirements of unmanned aircraft systems and the requirements to be met by designers, manufacturers, importers and distributors. Commission Implementing Regulation (EU) 2019/947 of 24 May 2019 defines the rules and procedures for the use of unmanned aircraft by pilots and operators, defining categories of operation and their associated regulatory regimes. It establishes the operation categories OPEN (low risk), SPECIFIC (medium risk) and CERTIFIED (high risk), as further summarized in Table 3.

Drones with class identification labels (C0 through C6) are expected to become commercially available some time in 2022. Note that use of a drone with a class identification label in most cases will lead to slightly relaxed requirements, e.g., a C4 class drone under 25 kg may fly over uninvolved people, even though it should be avoided whenever possible.

The new risk-based approach simplifies the process for flying low-risk UAS operations in the OPEN category. Furthermore, it provides detailed requirements for both the UAS itself and the organization of the UAS operations within the SPECIFIC category, such as flying beyond visual line of sight (BVLOS). The EU regulations directly replace national drone regulations. Note that drones that are developed for the European market will have to comply with a number of other Directives (e.g., EMC directive 2014/30/EU and Radio Equipment Directive 2014/53/EU), as specified in more detail in the new EU regulations and related acceptable means of compliance and guidance material.

3.1.2. U-Space Regulation Package

With the new risk-based regulations that apply to drone operators, it is clear that a detailed risk analysis will be required in order to get approval by the authorities to operate BVLOS and, subsequently, to fly autonomously. A key obstacle is that fully autonomous drone operations require a way to guarantee safe integration of the drones with regular air traffic. A framework for this, which is referred to as U-Space (unmanned aircraft traffic management), was adopted by the European Commission in April 2021 [12] and is expected to become applicable in January 2023.

U-Space plans a phased introduction of procedures and services designed to support the European drone community with safe, efficient and secure access to all classes of airspace and all types of environments. The plan is to add services in four phases, U1 to U4, mainly as a function of the available levels of autonomy of the drones, and the available solutions for data exchange and communication. It is of interest to note that farmland is designated as Type X airspace, meaning “low risk”.

U-Space’s full services will not be fully implemented until at least 2030, but by then the framework will offer very high levels of automation, connectivity and digitalization for both the drones and the U-Space system.

3.1.3. Potential for Autonomous UAS Operation within the New EU Regulatory Framework

The International Civil Aviation Organization (ICAO) defines an autonomous UAS as: “An unmanned aircraft that does not allow pilot intervention in the management of the flight”. The UAS completes the mission from start to finish under the exclusive command of the onboard computers. With the current UAS regulations, autonomous flight without human monitoring is not allowed. However, this is expected to change with the full implementation of U-Space (refer to Section 3.1.2), and it is likely that a low-risk operation over a field on a rural farm will be one of the first applications to get approval. Once a UAS platform operating semi-autonomously has achieved sufficiently robust operation, the step toward fully autonomous operation will simply consist of removing the human monitoring.

In terms of drone operational categories, the removal of both human monitoring and the ability to take over control of the UAS will most likely mean that the operations will fall into the SPECIFIC category (this applies to BVLOS operations today). To guarantee adequate levels of safety and robustness for a fully autonomous UAS, the platform likely has to be certified to meet a set of minimum standards. Note that the new drone regulations define different classes of drones (currently C0 through C6 are defined); however, to our knowledge at the time of writing, no drones were yet certified in accordance with the standards. For now, higher risk operations (e.g., in the SPECIFIC category) such as BVLOS require a risk assessment based on the SORA methodology. Once a C5 or C6 certified drone is made available (most likely during 2022 or 2023), BVLOS flights could be performed by declaring a standard scenario. In summary, fully autonomous agricultural UAS operations will most likely be possible in the near future, assuming the use of a sufficiently capable UAS platform with an appropriate class identification. The exact requirements for such a UAS and the class identification approval process are still not fully known.

3.2. System Requirements for Agricultural UAS with a High Level of Autonomy

Even though electrically powered drones can contribute towards sustainable agricultural production, their adoption by European farmers is still slow [13,14]. This is because challenges remain that make drones difficult to use, and these create barriers to their wide acceptance among producers. In order to improve the design of farming drones, a systematic analysis of the user needs has been carried out and a set of system requirements for a drone targeting agricultural applications has been defined. A UAS is a complex system with many relevant technical requirements, but here we summarize the most critical high-level requirements for a robust autonomous UAS for agricultural applications based on the experiences gathered in the AFarCloud project.

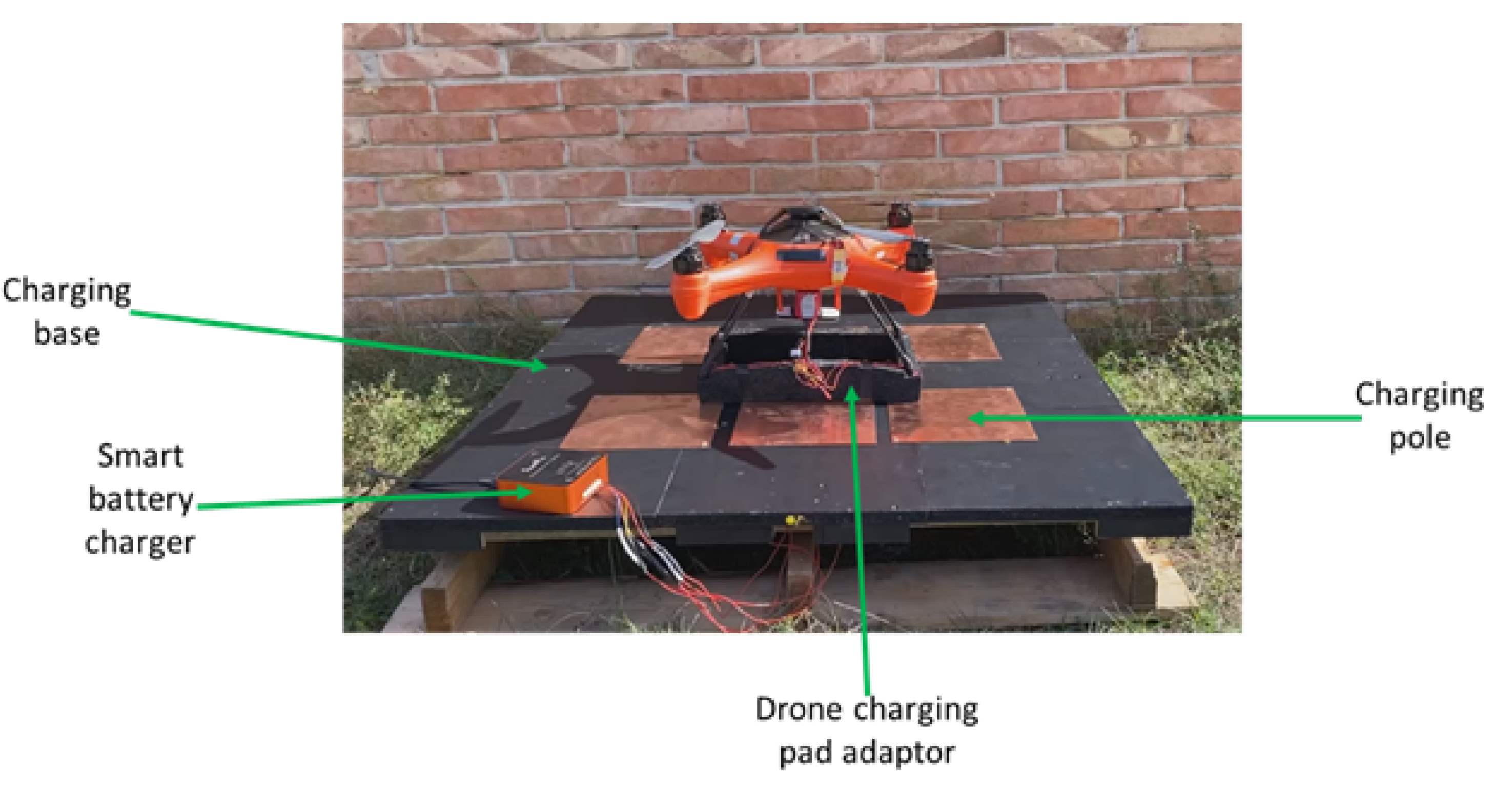

3.2.1. Autonomous Base Station

To enable continuous autonomous drone operations regardless of the size of the area of interest, a base station shall support the UAS with charging and with downloading of large data files that cannot be transmitted via the middleware/cloud solution. With current battery technology, state-of-the-art multirotor drones are limited to around 25–30 min of flight time when carrying lightweight payloads. Continued drone operation for cases where the desired mission time is longer than what is possible with a fully charged battery relies on a human operator to replace the battery part-way through the mission. Fully autonomous charging/docking stations provide key functionalities, such as charging and data download, that will allow the UAS to complete longer duration missions without supervision (once regulations permit this). Note that the charging pauses can also be used for transferring the collected payload data. This could be relevant if a large amount of data is collected, or if seamless data transfer from the UAS to a base station is not possible due to communication limitations.
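As a rough illustration of why a charging station matters, the number of charging stops for a given mission can be estimated from the flight time per charge. The sketch below is a back-of-the-envelope calculation under stated assumptions: the `reserve_fraction` parameter (battery margin kept for returning to the station) is hypothetical and not a value from the project, and transit time to and from the station is ignored.

```python
import math


def charging_stops(mission_minutes: float,
                   flight_minutes_per_charge: float,
                   reserve_fraction: float = 0.0) -> int:
    """Estimate how many times the UAS must return to charge during a mission.

    reserve_fraction is an assumed share of each charge that is not used for
    the mission itself (for example, to fly back to the station).
    """
    if not 0.0 <= reserve_fraction < 1.0:
        raise ValueError("reserve_fraction must be in [0, 1)")
    usable_minutes = flight_minutes_per_charge * (1.0 - reserve_fraction)
    if usable_minutes <= 0.0:
        raise ValueError("flight time per charge must be positive")
    flight_legs = math.ceil(mission_minutes / usable_minutes)
    # The first leg starts on a full battery, so stops = legs - 1.
    return max(flight_legs - 1, 0)


# A 90 min mission with 30 min of flight time per charge needs three legs,
# i.e., two charging stops when no reserve is kept.
stops_without_reserve = charging_stops(90, 30)
```

With the 25–30 min flight times quoted above, any mission longer than roughly half an hour therefore implies at least one battery swap or charging stop, which is the step the autonomous base station is meant to take over from the operator.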

3.2.2. System and Payload Modularity

The UAS shall be designed in a modular fashion to allow easy replacement of parts and payloads. Many existing hardware and software solutions are challenging to integrate due to a lack of standardized interfaces and the use of proprietary closed-source solutions. Agricultural applications involve flying in harsh environments, which will lead to wear and tear on system components. For both economic and environmental reasons, it should be possible to easily replace a failed component. Furthermore, an aerial platform is highly weight sensitive, and unnecessary weight means reduced range/endurance. Hence, a desirable characteristic of an agricultural UAS is the option of carrying only the instrumentation/payload needed for a particular mission while retaining the ability to quickly replace the payload to perform other types of missions. At a particular farm, a UAS will be expected to perform different types of missions, since maintaining and operating several different UAS platforms would be both costly and labor intensive. Additionally, from the perspective of a service provider, it is plausible to assume that modular drone solutions will be required to address the needs of different farms.

3.2.3. High Positioning/Navigation Accuracy

The UAS positioning solution shall provide the accuracy required to support the intended precision farming applications. It is critical to consider the required accuracy of the mission. While regular global navigation satellite systems (GNSS) with accuracy of about 2 m may be sufficient for some applications, others will need centimeter-level precision, as is made possible by real-time kinematic (RTK) GNSS and post-processed kinematic (PPK) GNSS. Such applications can include sensor data collection to generate variable rate prescription maps for the application of seeds or fertilizer. For longer-duration autonomous missions, high positioning accuracy will likely be required in order to allow the UAS to land autonomously onto the charging station.

3.2.4. Compatibility with Communication Solutions

The UAS shall incorporate highly adaptable and reliable communications systems with some level of redundancy in case of signal loss. Farms tend to be in rural locations that can present communication challenges. It is important that the UAS can support several communication technologies to allow a suitable communication strategy for each farm and use case. In AFarCloud, UAS tests were conducted with WiFi, Bluetooth, active radio frequency identification (RFID), LoRa, ZigBee, and 4G communication, targeting different types of applications [15]. Factors that must be considered in this selection procedure include local coverage, required range, required data rate, energy consumption and cost. For new agricultural UAS platforms, 5G compatibility is strongly recommended.
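The selection procedure and the redundancy requirement can be sketched as a simple priority-ordered fallback. This is an illustrative sketch only: the `Link` type and `select_link` function are hypothetical, and the range and data-rate figures in the example are placeholders that a system integrator would replace with estimates for the specific farm, not measured values from AFarCloud.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Link:
    name: str
    max_range_m: float       # farm-specific estimate (placeholder in the example)
    data_rate_kbps: float    # farm-specific estimate (placeholder in the example)
    available: bool          # current coverage/signal status


def select_link(links: Iterable[Link],
                required_range_m: float,
                required_rate_kbps: float) -> Optional[Link]:
    """Return the first link, in priority order, that is up and sufficient."""
    for link in links:
        if (link.available
                and link.max_range_m >= required_range_m
                and link.data_rate_kbps >= required_rate_kbps):
            return link
    return None  # no usable link: the mission logic must handle signal loss


# Placeholder figures for illustration only; the list order encodes priority
# (for example, by energy consumption or cost).
links = [
    Link("WiFi", max_range_m=100.0, data_rate_kbps=20000.0, available=True),
    Link("4G", max_range_m=5000.0, data_rate_kbps=5000.0, available=True),
    Link("LoRa", max_range_m=5000.0, data_rate_kbps=5.0, available=True),
]

chosen = select_link(links, required_range_m=800.0, required_rate_kbps=1000.0)
```

In this example the first link is skipped because its assumed range is too short, and the second one is chosen. Marking that link as unavailable would make the function return `None` for the same requirements, which is the case in which redundancy or a reduced data rate has to be planned for.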

3.2.5. Onboard Computer for Autonomous Transmission of Data

The UAS should include a programmable onboard computer to allow autonomous data transfer with an overarching farming management system and to perform other specialized tasks. While such a solution involves a more complex system, the extra flexibility it provides proved essential to the drone partners participating in AFarCloud. When fully autonomous operation is allowed, it is likely that a system integrator, a role dedicated to a specialized company with the expert knowledge on the technologies and the end-user needs, will have to perform the initial setup of the required hardware and software.

The HEIFU UAS, which is a custom drone developed during the AFarCloud project [16], features a system-on-module (SoM) which comprises a 128-core Nvidia Maxwell architecture-based graphics processing unit (GPU), together with a quad-core ARM Cortex-A57 central processing unit (CPU) and 4 GB of 64-bit LPDDR4 memory. This enables use of AI algorithms within the core of the HEIFU applications portfolio. Furthermore, its native 4G modem is capable of handling up to 50 Mbps (uplink) and 150 Mbps (downlink) when communicating with the AFarCloud platform via DDS. With this setup, the HEIFU drones were demonstrated to perform the required autonomous AFarCloud missions flawlessly (VLOS or BVLOS).

3.3. UAS Applications

This section provides a brief description of the payloads and applications for agricultural UAS that have been developed and tested during the AFarCloud project. It should be noted that the project included only a subset of the applications listed in Table 2, and also that the applications were not developed to the same level of maturity.

3.3.1. Fixed-Wing UAS Large-Area Multi-Farm Monitoring Service

Even for small farms, data collected by agricultural drones would be necessary to implement precision farming methods. However, most such farmers are neither capable of nor interested in operating a drone themselves, let alone maintaining and upgrading such a system. Due to advances in digitization, it is possible to translate the needs of a farm with respect to data that are used in decision making into a service provided by another company. As smaller farms are typically clustered together with other small farms, fixed-wing drones (capable of much longer range than multirotor drones) could be a good option to collect data for multiple farms in one flight, helping to reduce the cost of the service. The service provider would post-process the collected data and provide the subscribing farms with output in an agreed-to, and potentially easy to understand, format. This concept is illustrated in Figure 2.

Fixed-wing UAS are suited to monitoring larger areas efficiently, as they can inspect and map the status of fields or animals in an entire valley one day, and then move on to the next valley the following day. During harvest time, a drone flight every morning can help farmers to select which areas to harvest each day, to ensure optimal quality and efficiency. At this time, it is particularly relevant to scan the fields for foreign objects that may compromise the harvest. For example, deer fawns may be hiding in the fields, as they are frightened by the noise made by the agricultural vehicles. Since they are not easily visible to the personnel that operate the vehicles, they are often run over. This results in unnecessary suffering for the animals, tragic events on their own, and contamination of the harvest. Furthermore, metal objects (such as soda cans) can lead to injuries or a painful death for livestock if mixed into the hay bales that they consume.

To demonstrate the foreign object detection application, the fixed-wing UAS Falk developed by Maritime Robotics (shown in Figure 3) was successfully integrated with the AFarCloud middleware.

A mission was planned in the MMT and flown by the UAS; the progress was available in the form of the drone position and status. The main onboard sensor was a stabilized gimbal (DST Otus U-135) with RGB and IR cameras, which had the primary aim of capturing images to feed into AI-based object detection algorithms designed to detect harmful objects prior to harvesting the crops. While further development and training of the AI algorithm is still required to reliably detect all objects of interest, the concept was demonstrated by reporting the detection of a bottle at a known location. The testing was performed at a flight altitude of 120 m, while the UAS flew at speeds around 25 m/s. For market-ready systems, the gimbal payload should be adapted to provide the most efficient detections at this flight altitude and speed in order to optimize coverage while staying within the airspace that professional operators can utilize in most of Europe (and in most other parts of the world). When an item was detected, it was sent as an object of interest to the MMT, as shown in Figure 4, so that the farmer could decide if further actions should be taken.
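The trade-off between altitude, speed and coverage mentioned above can be made concrete with a small calculation. For a nadir-pointing camera over flat terrain, the across-track ground swath is approximately w = 2 h tan(θ/2), where h is the flight altitude and θ the across-track field of view, and the area covered per unit time is w multiplied by the ground speed. The sketch below uses the altitude and speed from the test flights; the field-of-view value is an assumption for illustration and is not a specification of the gimbal used in the project.

```python
import math


def swath_width_m(altitude_m: float, fov_deg: float) -> float:
    """Across-track ground swath of a nadir camera over flat terrain."""
    return 2.0 * altitude_m * math.tan(math.radians(fov_deg) / 2.0)


def coverage_rate_ha_per_min(altitude_m: float,
                             speed_m_s: float,
                             fov_deg: float) -> float:
    """Area swept per minute in hectares (1 ha = 10,000 m^2), ignoring
    turns and image overlap between adjacent flight lines."""
    return swath_width_m(altitude_m, fov_deg) * speed_m_s * 60.0 / 10_000.0


ALTITUDE_M = 120.0      # from the test flights described above
SPEED_M_S = 25.0        # from the test flights described above
ASSUMED_FOV_DEG = 30.0  # hypothetical camera field of view

swath = swath_width_m(ALTITUDE_M, ASSUMED_FOV_DEG)
rate = coverage_rate_ha_per_min(ALTITUDE_M, SPEED_M_S, ASSUMED_FOV_DEG)
```

A narrower field of view improves the ground resolution available to the detection algorithm but reduces the swath, so more flight lines are needed to cover the same field. This is the kind of adaptation of the gimbal payload that a market-ready system would have to balance.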

3.3.2. UAS-Based Data Collection from (Remote) Sensors

The sensor’s battery life is a critical limitation for wireless sensor applications. There are multiple use-cases where Zigbee and Bluetooth communication is used to reduce power consumption [17]. However, continuous data transmission is the primary source of power consumption. In the AFarCloud project, a solution for data gathering with drones was developed to mitigate this issue. The sensors internally log periodic readings, and a UAS can retrieve the readings on an autonomous mission. The associated flight plan for the UAS includes flight to the sensor location and execution of the sensor reading script (which varies with the sensor type), and can be defined, planned and issued from the MMT.
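The log-then-collect pattern described above can be sketched as follows. The code is a simplified model with hypothetical names (`BufferedSensor`, `run_collection_mission`): it represents neither the flight itself nor the radio link, and only shows that readings accumulate locally and are handed over once when the UAS visits the sensor.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# (timestamp_s, temperature_c, humidity_pct); the layout is an assumption.
Reading = Tuple[float, float, float]


@dataclass
class BufferedSensor:
    """Sensor that logs readings locally instead of transmitting continuously."""
    sensor_id: str
    buffer: List[Reading] = field(default_factory=list)

    def log(self, reading: Reading) -> None:
        self.buffer.append(reading)

    def download(self) -> List[Reading]:
        # Hand over everything logged so far and start with an empty buffer.
        readings, self.buffer = self.buffer, []
        return readings


def run_collection_mission(sensors: List[BufferedSensor]) -> Dict[str, List[Reading]]:
    """Visit each sensor in flight-plan order and retrieve its buffered data."""
    collected: Dict[str, List[Reading]] = {}
    for sensor in sensors:
        # In a real mission: fly to the sensor location, connect, run the
        # sensor-specific reading script. Only the data hand-over is modeled.
        collected[sensor.sensor_id] = sensor.download()
    return collected


# Synthetic readings for illustration only.
north = BufferedSensor("field-north")
south = BufferedSensor("field-south")
north.log((0.0, 18.5, 61.0))
north.log((600.0, 18.9, 60.2))
south.log((0.0, 19.1, 58.4))

mission_data = run_collection_mission([north, south])
```

Because each sensor only needs its radio during the short visit of the UAS, the energy cost of continuous transmission is avoided, at the price of data being available only after a collection flight.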

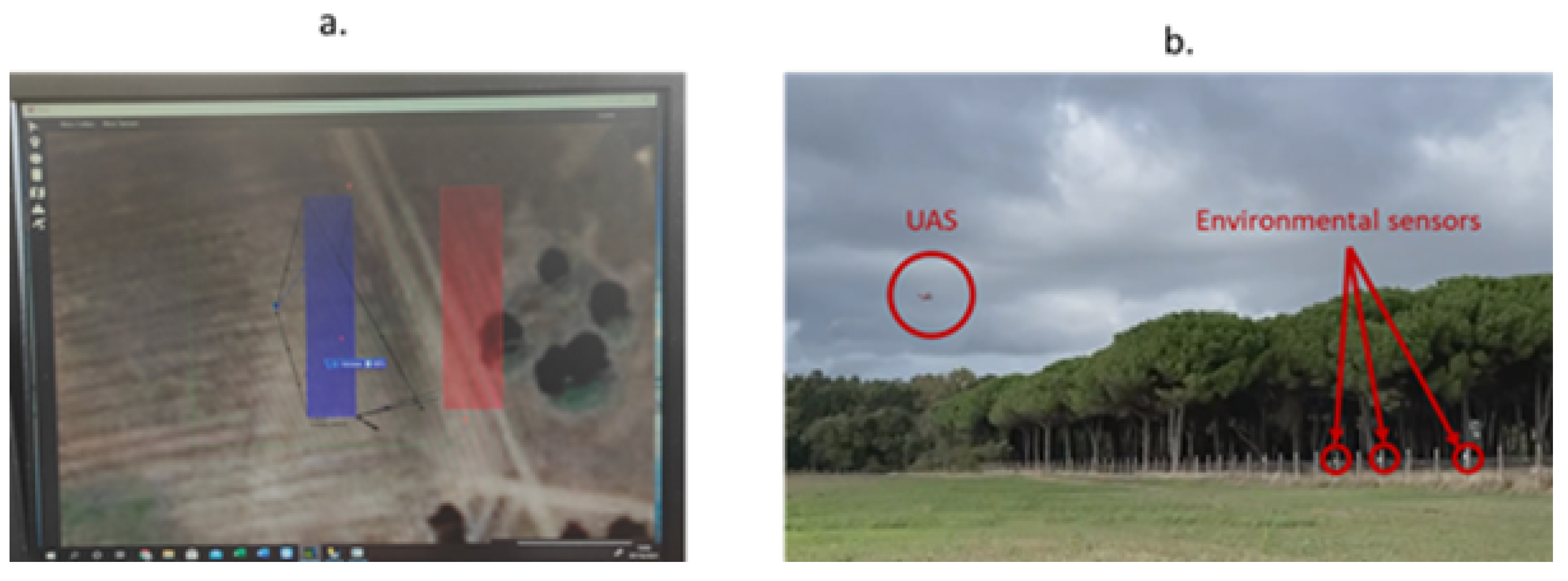

In one specific project demonstration, a custom-made hexacopter developed by Hellenic Drones was used to collect data from environmental sensors (monitoring a field’s temperature and humidity). The sensors, provided by TU Wien, communicate via Bluetooth and buffer data until an external system connects to the sensor and downloads the data. The UAS was integrated with the AFarCloud Middleware, and the mission was planned in the MMT. The communication between the MMT, the Middleware and the drone was achieved through Internet connectivity, while the sensor data were transmitted to the onboard computer (Raspberry Pi 4) via Bluetooth. Finally, the collected data were transmitted to the AFarCloud Middleware (again via the Internet).

Figure 5 presents the mission plan, the positions of the environmental sensors and the UAS flying above the field.

3.3.3. Simple UAS Manipulator for In Situ Measurements

For now, multirotor UAS are mostly used for different types of data collection and monitoring tasks. However, there is a potential to also use UAS to perform simple in situ measurements or manipulation missions, for instance, to collect data/samples in hard to access locations and/or to avoid trampling the crops. This concept was explored by developing a basic prototype of a UAS manipulator that was installed on an AFarCloud-integrated UAS, as shown in

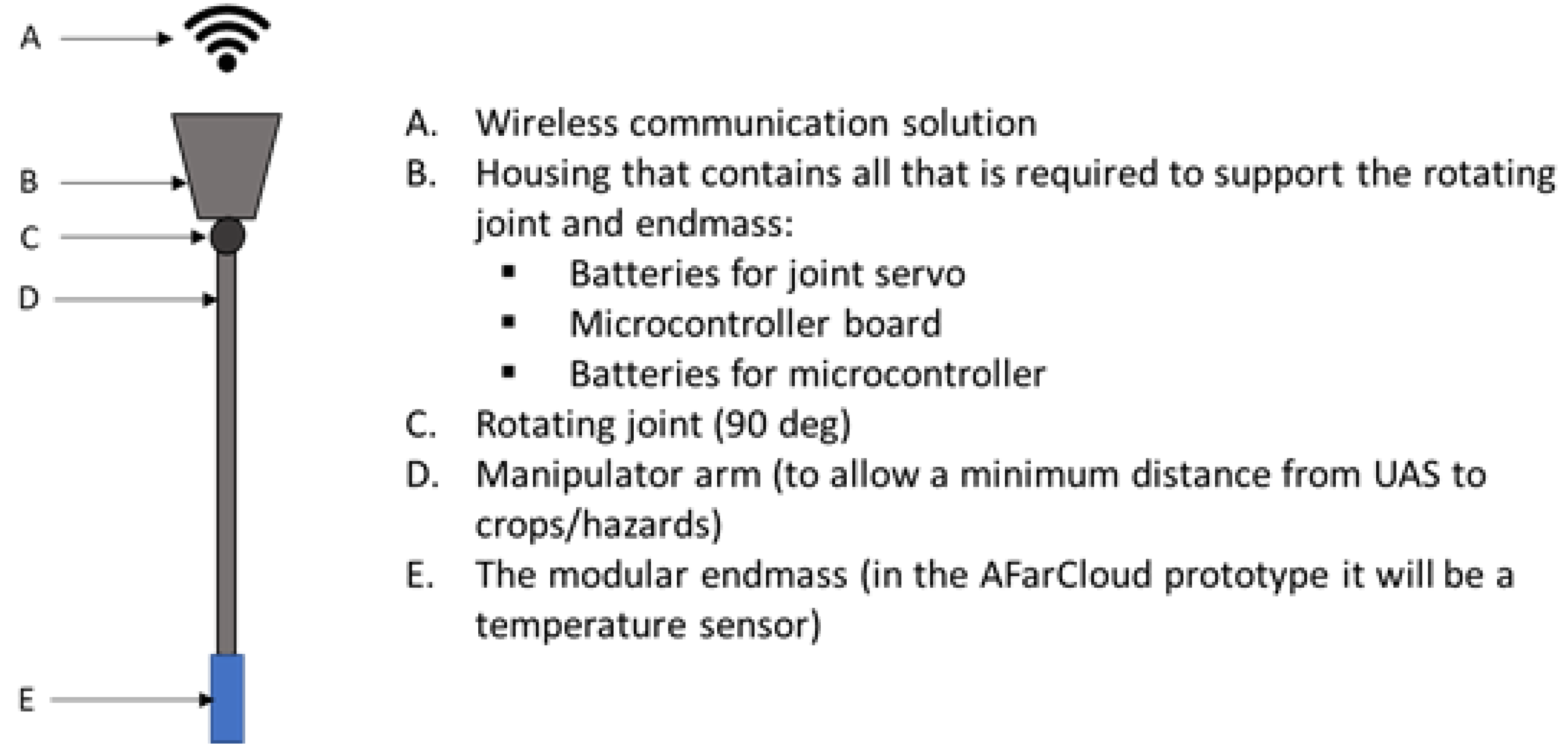

Figure 6.

The prototype manipulator is self-contained, having its own battery and BLE communication with the UAS’s onboard computer (in this case a Jetson Nano onboard the HEIFU UAS). Its key functionalities are illustrated in Figure 7, and the specific components installed in the prototype are summarized in Table 4.

The UAS manipulator consists of a long pole that can be rotated down (using a digital servo) to extend the sensor/manipulator, e.g., into foliage or crops of interest below. The wireless design allows the manipulator to be an optional payload that is easy to attach/remove so that the UAS can be used for a wide range of missions without carrying unnecessary hardware. Additionally, the approach reduces the risk of compromising the safety and integrity of the UAS. This is expected to be important, as the new CE and class markings for drones will make it difficult to make modifications that directly involve the UAS software and hardware. This setup allowed a mission to be defined in the MMT that specified the locations for the desired in situ measurements. The UAS would fly the path and deploy the drone manipulator to collect temperature measurements at the desired locations. The recorded temperatures would then be made available to the MMT through the middleware.

3.3.4. UAS-Based Low Altitude Grass Analysis

Yet another type of in situ measurement was explored as part of the project. In dairy farming, it is essential to harvest grass for silage in the maturity phase where its nutrients are in optimal balance. To eliminate the current, slow laboratory-based approach, a near-infrared (NIR) sensor-based versatile analyzer carried by a UAS can determine the digestibility value (D-value) directly in the field, based on statistical interpretation of the captured frequency response. In AFarCloud, a prototype device was built and tested as a UAS payload, as shown in Figure 8.

The last version of the analyzer is based on a Raspberry Pi 4 SBC and Spectral Engines NIR sensors 1.4 and 1.7. The analyzer uses its own special halogen light source to provide unfiltered NIR radiation to the analysis area to achieve the required measurement accuracy. The UAS must therefore land on the ground for the 30 s measurement period so that interference from ambient light is prevented. For the same reason, there is also a disc-shaped shade that covers the analyzed area from ambient sunlight. Inside the disc, there is a covering flap, which is moved by an electric motor. This aluminum flap also serves as a calibration target when closed, and the calibration can be performed while flying to the next measurement position. The onboard D-value solver software component was developed using a collection of 62 grass samples that were analyzed both with the NIR sensor and by an accredited laboratory. Utilizing these pairs, the algorithm was designed using NumPy, SciPy and Scikit-learn, in order to calculate errors, statistics, normalizations and correlations.
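As a rough illustration of how sensor readings can be paired with laboratory references to calibrate a solver and quantify its error, the sketch below fits a straight line by ordinary least squares and reports the RMSE and Pearson correlation. It is not the AFarCloud D-value algorithm, which is not detailed here: the single spectral feature, the plain-Python implementation (instead of NumPy, SciPy and Scikit-learn) and all numeric values are assumptions made up for the example.

```python
import math


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def rmse(predicted, reference):
    """Root-mean-square error between predictions and reference values."""
    n = len(reference)
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(predicted, reference)) / n)


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


# Made-up (sensor feature, laboratory D-value) pairs, for illustration only.
nir_feature = [0.10, 0.20, 0.30, 0.40, 0.50]
lab_d_value = [731.0, 709.0, 692.0, 668.0, 650.0]

slope, intercept = fit_line(nir_feature, lab_d_value)
predicted = [slope * x + intercept for x in nir_feature]
calibration_rmse = rmse(predicted, lab_d_value)
correlation = pearson_r(nir_feature, lab_d_value)
```

A real calibration would use many spectral channels and hold out part of the samples for validation; the single-feature fit above only shows the shape of the computation.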

The prototype weighs roughly 2 kg, which is an acceptable payload for most (semi-) professional UAS platforms on the market. The analyzer can be commanded by the UAS operating system and therefore, autonomous missions can be easily planned and executed. This way, the farmer gets the results immediately and can plan their harvesting schedule accordingly. Today, a complete chain of analysis may take 2–3 working days, and the associated costs do not allow daily tests, let alone several tests per day.

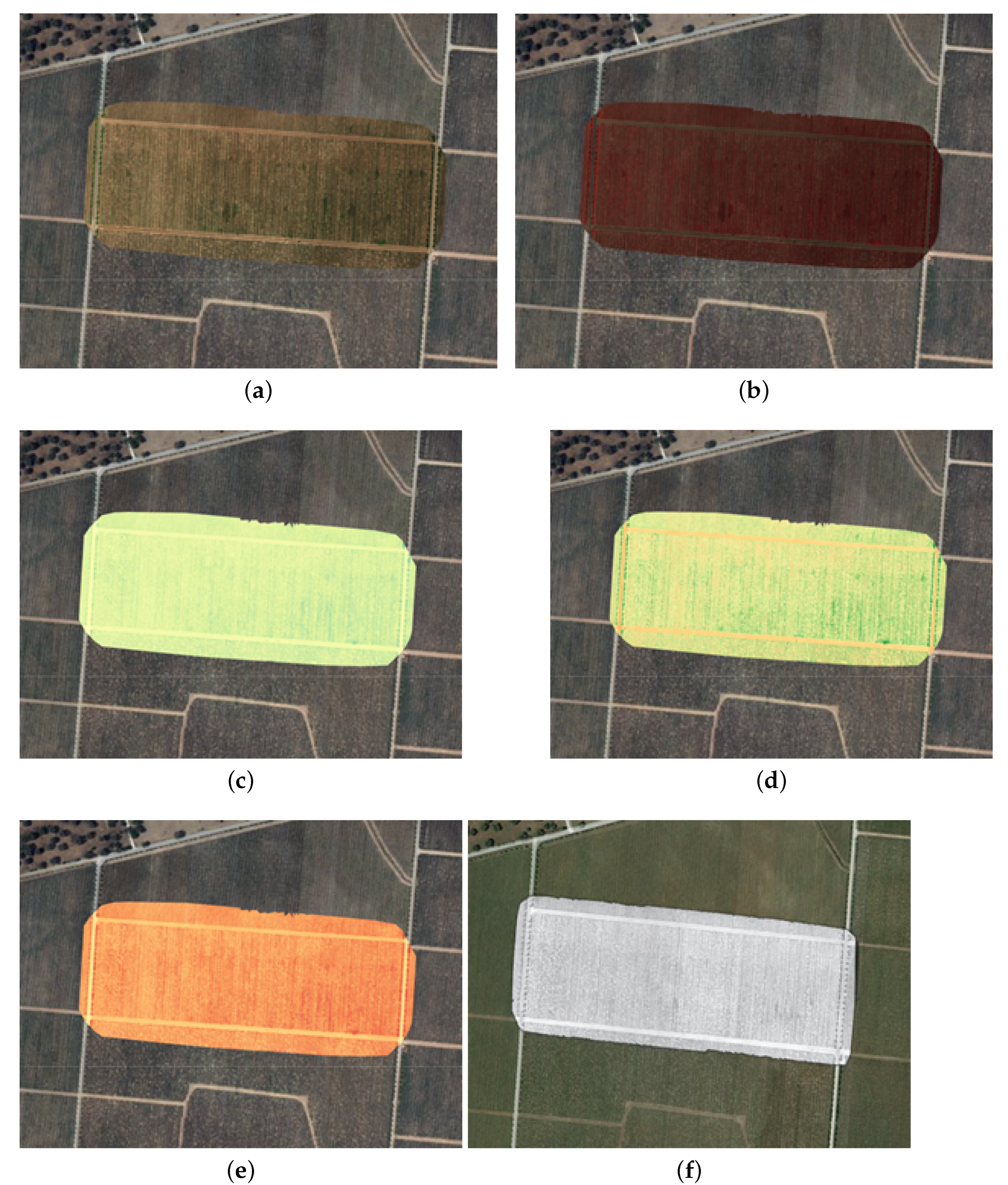

3.3.5. Generation of 2D/3D Maps from Multispectral Sensor Data

As exemplified in the previous section, drones can be useful for collecting essential information from different parts of the electromagnetic spectrum. With multi-spectral cameras, large amounts of data can be collected efficiently. The collected information can then be stored in a map for convenient usage for different farming purposes, for example, to detect the status of different areas with respect to pests, nutrient deficiencies, water content, other soil characteristics or parameters that are relevant for field operations. Additionally, basic analysis, such as detection of rows of crops and boundaries of the fields, can be performed with maps generated from camera images.

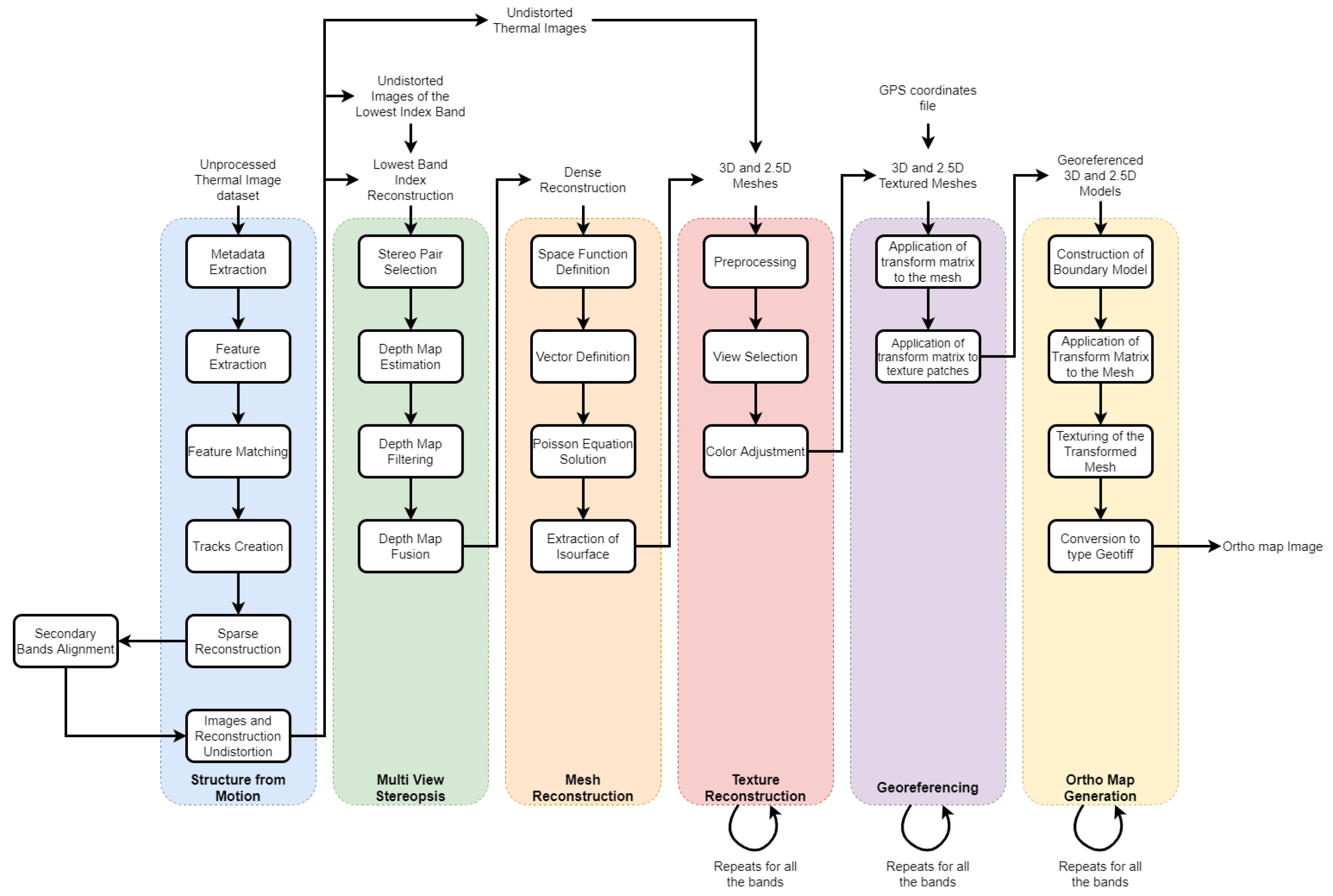

To reconstruct a surveyed site from camera images, the images must go through a set of processes illustrated in the workflow of Figure 9. The images are first processed by the structure from motion block, which outputs a sparse reconstruction. The sparse point cloud is then passed on to the multi-view stereopsis block where a densification process occurs. In the third step, the dense point cloud is used to generate 2D and 3D mesh models. The next step is to incorporate the textures into the meshes, which is repeated for each spectral band from the camera’s dataset. The textured model is then georeferenced using GPS information extracted from the images. Finally, the last step of the workflow is aimed at creating an orthomap of the surveyed site using the 3D model, which is repeated for each band in the dataset. This procedure is described in more detail in [18].

At the end of this procedure, the farmer will get a 3D map with each of the bands of the multi-spectral camera included.

Figure 10 shows combinations of spectral bands that were created based on flight testing at a vineyard field in Portugal. The testing was completed using the Beyond Vision-made drone HEIFU [16] carrying a MicaSense RedEdge MX Multispectral sensor to capture the blue, green, red, NIR and red edge bands and a FLIR camera to capture thermal images.
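The normalized difference indices shown in such maps are simple per-pixel band ratios. The sketch below computes NDVI, NDRE and NDWI for a single pixel from reflectance values. The function names are illustrative, and the NDWI variant shown is the green/NIR form, which is an assumption since the exact definition used for the maps is not stated here.

```python
def normalized_difference(a: float, b: float) -> float:
    """Return (a - b) / (a + b), or 0.0 when both inputs are zero."""
    total = a + b
    return (a - b) / total if total != 0 else 0.0


def ndvi(nir: float, red: float) -> float:
    """Normalized difference vegetation index."""
    return normalized_difference(nir, red)


def ndre(nir: float, red_edge: float) -> float:
    """Normalized difference red edge index."""
    return normalized_difference(nir, red_edge)


def ndwi(green: float, nir: float) -> float:
    """Normalized difference water index (green/NIR variant, assumed)."""
    return normalized_difference(green, nir)


# Example reflectance values for one pixel (made up for illustration).
pixel = {"green": 0.08, "red": 0.05, "red_edge": 0.30, "nir": 0.45}
indices = {
    "NDVI": ndvi(pixel["nir"], pixel["red"]),
    "NDRE": ndre(pixel["nir"], pixel["red_edge"]),
    "NDWI": ndwi(pixel["green"], pixel["nir"]),
}
```

Applied to every pixel of the co-registered band orthomaps, the same arithmetic yields index maps of the kind described above.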

The data can be used to optimize the operation of the farm in multiple ways, for example, to generate prescription maps for precision spraying of nutrients and pesticides using artificial intelligence. One example in the AFarCloud Project was the use of the maps in Figure 10 as input for a fault detection algorithm [19,20] that detects vineyard canopy gaps, as shown in Figure 11.

Detecting those gaps is of extreme importance, especially in large farms. Specifically, the AI-based analysis eliminates the time-consuming manual inspection of all the corridors, and instead identifies key points of interest for closer inspection. Gaps in the canopy of a vineyard might indicate the presence of a pest and/or disease, which is critical to detect and contain early to prevent great losses to production. If the potential gaps are confirmed as threats to the vineyard, corrective action may be immediately triggered, saving time and potentially the crop.
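To make the idea of flagging points of interest concrete, the sketch below finds runs of low NDVI along a single vine row. This is a deliberately simple thresholding stand-in and not the AI-based detector of [19,20]; the function name, the threshold of 0.4 and the minimum run length are all assumptions chosen for the example.

```python
def find_gaps(ndvi_row, threshold=0.4, min_length=3):
    """Return (start, end) index pairs (end exclusive) of low-NDVI runs.

    A run is reported only if it spans at least `min_length` samples,
    so isolated noisy pixels are ignored.
    """
    gaps = []
    start = None
    for i, value in enumerate(ndvi_row):
        if value < threshold:
            if start is None:
                start = i  # a candidate gap begins here
        elif start is not None:
            if i - start >= min_length:
                gaps.append((start, i))
            start = None
    # Close a run that extends to the end of the row.
    if start is not None and len(ndvi_row) - start >= min_length:
        gaps.append((start, len(ndvi_row)))
    return gaps


# Made-up NDVI samples along one row of vines.
row = [0.8, 0.8, 0.2, 0.1, 0.2, 0.8, 0.3, 0.8, 0.1, 0.1, 0.1]
detected = find_gaps(row)
```

Each reported index range could then be mapped back to georeferenced coordinates so that only those locations need to be inspected on foot.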

3.3.6. Drone Charging Stations and Associated Applications

The key to success for most of the applications presented in Table 2 is a high degree of autonomy. The UAS will have to be able to work autonomously for hours without human interaction in order for, e.g., a fruit-picking application to be viable. Thus, the UAS depends on support from an autonomous charging station. The charging station prototype for multirotor UAS that is shown in Figure 12 was developed to extend the autonomy of drone missions.

The prototype was designed to be independent of the size and make of the UAS. This was achieved by the installation of multiple charging plates that can be used depending on the UAS size and battery type. Moreover, a key element of autonomous drone charging is the landing pad adaptor that is specially designed to fit the landing gear of each UAS. The landing pad adaptor is safely secured to the landing gear and enables contact between the battery poles and the charging station poles. Finally, power is transmitted to the charging base by the battery’s charger in order to ensure balanced charging of the battery cells.

As even a “bare bones” outdoor charging station is quite heavy, and farms tend to be stretched out over large areas, it may be advantageous to attach the charging station to a mobile platform. A charging station onboard an autonomous ground vehicle (AGV) would add autonomy to the solution by allowing the center of the operation, i.e., the charging station, to move autonomously to another part of the field. With the AFarCloud infrastructure, the MMT can be used to plan missions for the drone and an AGV that is hosting a UAS charging station. Furthermore, the AGV provides an opportunity to incorporate sensors, analysis equipment or collection bins that may increase the capabilities of the autonomous solution. A challenge would be that the AGV would need to carry a large battery bank and/or plan stops to recharge the batteries during lengthy missions. In AFarCloud, a landing platform pulled by a Summit XL AGV has been developed and integrated with the AFarCloud middleware as a step to validate the concept.

Figure 13 shows a HEIFU UAS that performed autonomous landing on a landing platform at the end of an MMT-planned mission where the final AGV position was different from the starting position.

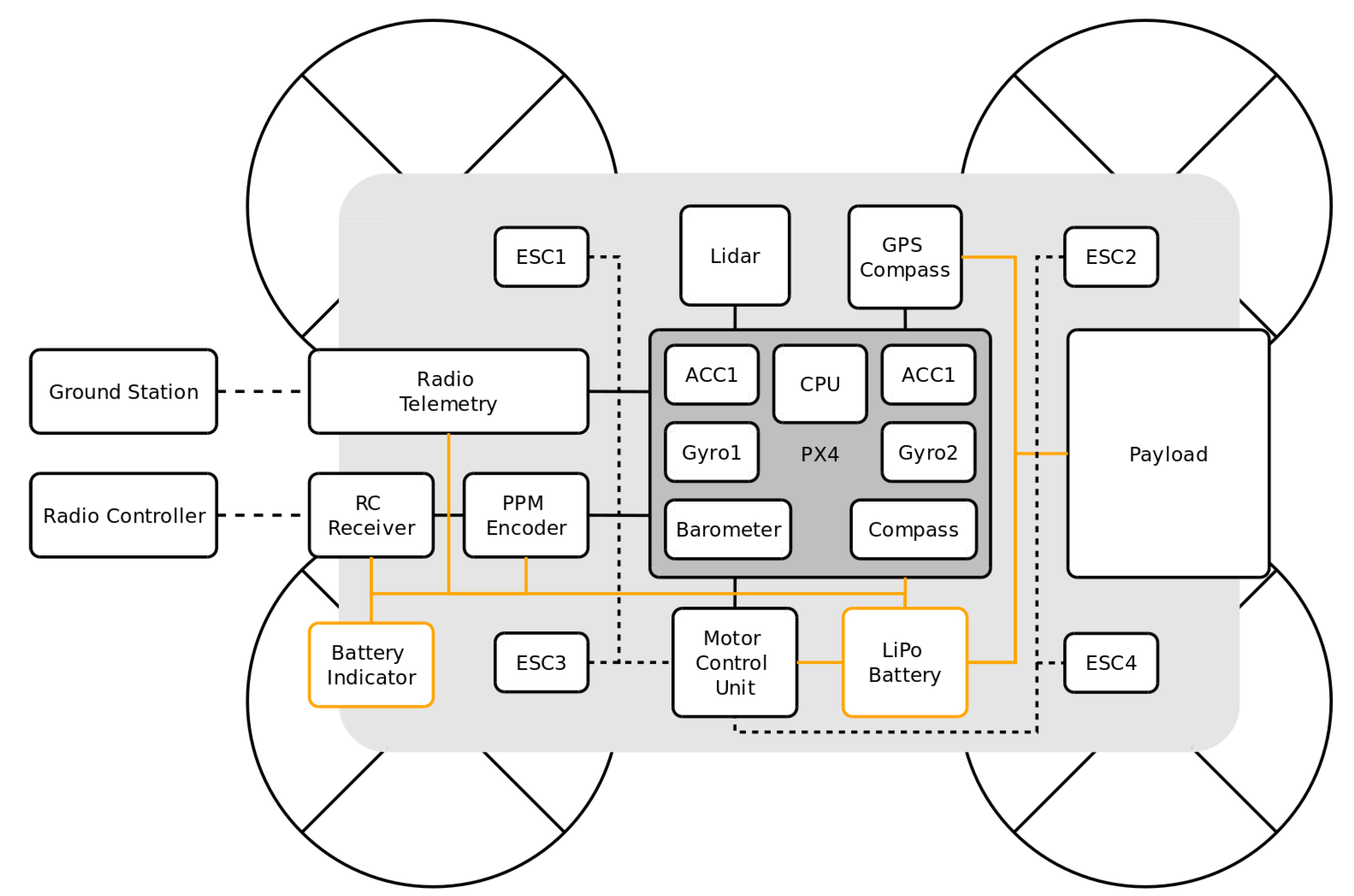

3.3.7. Low-Cost Open Drone Concept

The primary focus of the UAS platform referred to as the Open Drone [21] is to provide a low-cost, open and modular alternative to commercial drones. Flexibility is achieved by allowing different drone configurations, i.e., number of motors, motor configuration, payload type, etc. On a farm, there are many UAS-relevant monitoring and data collection activities. Each configuration would aim at a specific problem, where low cost and low perceived complexity are the main targets. Using open-source software, communication and design, adaptability to different scenarios is ensured. Replacement parts could be readily available, either directly through 3D printing from the open designs or through standard components available from multiple suppliers. Such an affordable and modular system can mean both that more farms may decide to invest in their own drones and that a service provider can experiment to define various data collection services.

The Open Drone, as illustrated in Figure 14 and shown in Figure 15, was designed in SolidWorks and then 3D-printed from polylactic acid (PLA) with different Tri-Hexagon infills (around 15–30% on the arms and around 8–15% on other parts of the body, as they did not require the extra strength). It is based on the Pixhawk 4 flight controller (using PX4 autopilot) and can hence be used in concert with tools available within the Dronecode ecosystem; for example, QGroundControl can be used for setup, calibration and stand-alone mission control, and software-in-the-loop (SITL) simulations can be run through jMAVSim or Gazebo.

The Open Drone is controlled from a ground station over the MAVLink protocol and, if manual control is required, via a radio controller. The Pixhawk 4 uses an external u-blox NEO-M8N GPS/GLONASS receiver as input for the GPS locations. It controls four T-Motor MS2820 KV830 motors through EMAX BLHeli 20 A ESCs. The data-link radio antenna is a V3 3DR Radio Telemetry 433 MHz unit, and the RC antenna is an i8A operating at 2.408–2.475 GHz. The Benewake TFmini Light Detection and Ranging (LiDAR) sensor was used instead of the barometer at low altitudes for smoother landings. A ZIPPY Compact 8000 mAh 11.1 V Li-Po battery provides a flight time of up to 17 min. The Open Drone measures 60 × 60 cm and can carry a payload of up to 500 g, configured according to the application. The maximum takeoff weight is 2900 g. The overall cost of the Open Drone is below €1000.
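The figures quoted above allow a quick back-of-the-envelope energy budget. The sketch below derives the nominal battery energy, the implied average power and current over a 17 min flight, and the payload share of the takeoff weight. It assumes that the full rated capacity is consumed at the nominal voltage during the maximum flight time, which is optimistic, so the power and current values should be read as upper bounds on the average draw rather than measured data.

```python
# Values quoted in the text.
capacity_mah = 8000.0
nominal_voltage_v = 11.1
flight_time_min = 17.0
payload_g = 500.0
max_takeoff_weight_g = 2900.0

# Derived quantities (assumption: full capacity used at nominal voltage).
capacity_ah = capacity_mah / 1000.0
energy_wh = capacity_ah * nominal_voltage_v        # nominal stored energy
flight_time_h = flight_time_min / 60.0
avg_power_w = energy_wh / flight_time_h            # average electrical power
avg_current_a = capacity_ah / flight_time_h        # average battery current
payload_fraction = payload_g / max_takeoff_weight_g

summary = (
    f"energy: {energy_wh:.1f} Wh, "
    f"avg power: {avg_power_w:.0f} W, "
    f"avg current: {avg_current_a:.1f} A, "
    f"payload share of MTOW: {payload_fraction:.1%}"
)
```

Under these assumptions the average current is roughly 28 A in total across the four motors, which is one way to sanity-check the choice of 20 A ESCs per motor.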

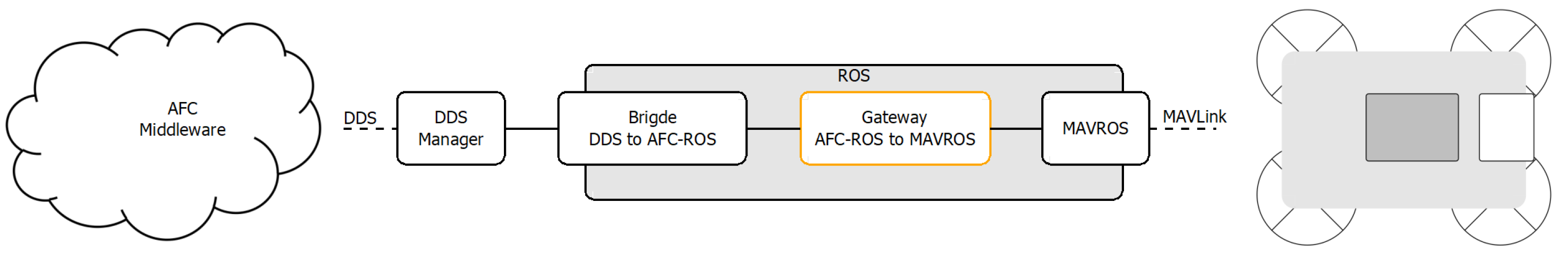

For integration with AFarCloud, the ground station relays communication between the AFC middleware, DDS and MAVLink, as shown in Figure 16.

MAVROS provides a communication node between ROS and MAVLink, and hence the Open Drone. The two ROS message systems are connected by a gateway, which constitutes the core of the integration.
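One step such a gateway has to perform is translating a mission waypoint into the form MAVLink expects. The sketch below converts a waypoint into the fields of a MAVLink `MISSION_ITEM_INT` message, where latitude and longitude are integers in units of 1e-7 degrees. The input dictionary layout and the function name are hypothetical, since the AFarCloud middleware message format is not described here, and the result is a plain dictionary rather than a message sent through MAVROS or any MAVLink library.

```python
# Enum values from the MAVLink common message set.
MAV_CMD_NAV_WAYPOINT = 16
MAV_FRAME_GLOBAL_RELATIVE_ALT_INT = 6


def to_mission_item_int(seq: int, waypoint: dict) -> dict:
    """Map a hypothetical middleware waypoint to MISSION_ITEM_INT fields."""
    return {
        "seq": seq,
        "frame": MAV_FRAME_GLOBAL_RELATIVE_ALT_INT,
        "command": MAV_CMD_NAV_WAYPOINT,
        "autocontinue": 1,
        # MISSION_ITEM_INT carries latitude/longitude as degrees * 1e7.
        "x": int(round(waypoint["latitude_deg"] * 1e7)),
        "y": int(round(waypoint["longitude_deg"] * 1e7)),
        # Altitude in metres, relative to home for this frame.
        "z": float(waypoint["altitude_m"]),
    }


# Made-up mission with two waypoints (hypothetical field names).
mission = [
    {"latitude_deg": 59.6, "longitude_deg": 16.5, "altitude_m": 20},
    {"latitude_deg": 59.6005, "longitude_deg": 16.5005, "altitude_m": 20},
]
items = [to_mission_item_int(i, wp) for i, wp in enumerate(mission)]
```

Using the integer-scaled coordinate form avoids the precision loss of single-precision floats, which is why it is the usual choice for mission uploads.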

4. Discussion

The new EU UAS regulatory framework will pave the way for fully autonomous UAS operations that can contribute to more widespread use of UAS technologies to help realize the benefits of precision farming methods. The risk-based approach leads to a need to employ certified UAS with demonstrated performance and known responses to various failures. This means that some of the flexibility we have come to expect from the onboard hardware and software will be lost. However, by carefully designing a flexible framework around the certified UAS and also by designing non-intrusive modular payloads, some of the hurdles can be overcome, particularly if the regulations take into account the opportunities tied to a modular design.

An important aspect to consider for the development of new UAS aimed at the agricultural sector is functionality that enables a farmer to benefit from UAS data collection while minimizing the need for the farmer to pilot, charge, troubleshoot or maintain the drone himself/herself. Onboard diagnostics, system redundancy, modularity and compatibility with farm management systems so the data can be transformed into actionable information for the farmer are key elements. The UAS should be developed with different types of drone-as-a-service concepts in mind, including a complete service where the farmer has no interaction with the drone system at all and a service where a drone-in-a-box solution is permanently placed at the farm but with remote operation and support provided by a drone service provider.

The AFarCloud project has demonstrated how a number of different components and applications for drones in agriculture can be integrated into a common platform. By providing a platform that is not tied to a single supplier, it would be easier for the farmers to integrate the modules they need on their specific farms. For the economic viability of drones in agriculture, it is important to either amortize the cost over multiple uses on a farm, or over multiple farms in cases of more specialized equipment, such as fixed-wing drones. With an open platform, data collected by an individual farmer may be combined with data from third party service providers, hence supporting integration of multiple business models.

To take the concept of an open platform for farming drones towards commercial use, further work is needed to make deployment simple, user friendly and safe. As the new regulations are crystallized, the technical designs can adapt and make the integration smooth with open interfaces and standardized approaches for verification and testing. Collaboration among the many actors active in technology development and attention to end-user needs are essential, especially for creating a sustainable business ecosystem. There is a need for research both into the methodology and into the refined precision farming applications.

Author Contributions

Conceptualization, M.M. and H.L.; methodology, M.M. and G.J.; software, A.E.A., D.P. and J.P.M.-C.; validation, D.P. and B.C.; formal analysis, M.M.; writing—original draft preparation, M.M., D.P., V.S., C.B., M.H., T.H., B.C., R.H., C.A., J.P.M.-C., H.L. and A.E.A.; writing—review and editing, M.M., H.L. and G.J.; visualization, M.M., V.S., M.H., T.H., D.P., R.H. and C.A.; supervision, G.J. and B.C.; project administration, G.J. All authors have read and agreed to the published version of the manuscript.

Funding

This project has received funding from the ECSEL Joint Undertaking (JU) under grant agreement number 783221. The JU receives support from the European Union’s Horizon 2020 research and innovation program; and Austria, Belgium, Czech Republic, Finland, Germany, Greece, Italy, Latvia, Norway, Poland, Portugal, Spain and Sweden.

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Meaning |
|---|---|
| AGV | Autonomous Ground Vehicle |
| AI | Artificial Intelligence |
| BVLOS | Beyond Visual Line Of Sight |
| DDS | Data Distribution Service |
| FMS | Farm Management System |
| HLAF | High Level Awareness Framework |
| IDL | Interface Description Language |
| LOA | Level Of Autonomy |
| MMT | Mission Management Tool |
| MTOW | Maximum TakeOff Weight |
| NDVI | Normalized Difference Vegetation Index |
| NIR | Near InfraRed |
| NPK | Nitrogen, Phosphorus, and Potassium |
| PDRA | Predefined Risk Assessment |
| RGB | Red, Green, Blue |
| STS | STandard Scenario |
| SORA | Specific Operations Risk Assessment |
| RFID | Radio Frequency IDentification |
| ROS | Robot Operating System |
| UAS | Unmanned Aerial System |
| VLOS | Visual Line Of Sight |

References

1. Commission Regulation (EU) 2019/945 and 2019/947 Easy Access Rules Revision from 13 January 2021 Concerning the Rules and Procedures for the Operation of Unmanned Aircraft. Available online: https://www.easa.europa.eu/document-library/easy-access-rules/easy-access-rules-unmanned-aircraft-systems-regulation-eu (accessed on 3 August 2021).
2. del Cerro, J.; Cruz Ulloa, C.; Barrientos, A.; de León Rivas, J. Unmanned Aerial Vehicles in Agriculture: A Survey. Agronomy 2021, 11, 203.
3. Hassler, S.C.; Baysal-Gurel, F. Unmanned Aircraft System (UAS) Technology and Applications in Agriculture. Agronomy 2019, 9, 618.
4. Tsouros, D.C.; Bibi, S.; Sarigiannidis, P.G. A Review on UAV-Based Applications for Precision Agriculture. Information 2019, 10, 349.
5. Kim, J.; Kim, S.; Ju, C.; Son, H.I. Unmanned Aerial Vehicles in Agriculture: A Review of Perspective of Platform, Control, and Applications. IEEE Access 2019, 7, 105100–105115.
6. Bollas, N.; Kokinou, E.; Polychronos, V. Comparison of Sentinel-2 and UAV Multispectral Data for Use in Precision Agriculture: An Application from Northern Greece. Drones 2021, 5, 35.
7. Sørensen, C.G.; Fountas, S.; Nash, E.; Pesonen, L.; Bochtis, D.; Pedersen, S.M.; Basso, B.; Blackmore, S.B. Conceptual model of a future farm management information system. Comput. Electron. Agric. 2010, 72, 37–47.
8. McCown, R.L. A cognitive systems framework to inform delivery of analytic support for farmers’ intuitive management under seasonal climatic variability. Agric. Syst. 2012, 105, 7–20.
9. Kaloxylos, A.; Eigenmann, R.; Teye, F.; Politopoulou, F.; Wolfert, S.; Shrank, C.; Dillinger, M.; Lampropoulou, I.; Antoniou, E.; Pesonen, L.; et al. Farm management systems and the Future Internet era. Comput. Electron. Agric. 2012, 89, 130–144.
10. Castillejo, P.; Johansen, G.; Cürüklü, B.; Bilbao-Arechabala, S.; Fresco, R.; Martínez-Rodríguez, B.; Pomante, L.; Rusu, C.; Martínez-Ortega, J.F.; Centofanti, C.; et al. Aggregate Farming in the Cloud: The AFarCloud ECSEL project. Microprocess. Microsyst. 2020, 78, 103218.
11. International Organization for Standardization. Unmanned Aircraft Systems—Part 3: Operational Procedures (ISO 21384-3:2019). 2019. Available online: https://www.iso.org/obp/ui/#iso:std:70853:en (accessed on 15 October 2021).
12. Commission Implementing Regulation (EU) 2021/664 of 22 April 2021 on a Regulatory Framework for the U-Space. Available online: https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A32021R0664 (accessed on 15 October 2021).
13. Lee, C.L.; Strong, R.; Dooley, K.E. Analyzing Precision Agriculture Adoption across the Globe: A Systematic Review of Scholarship from 1999 to 2020. Sustainability 2021, 13, 10295.
14. Delgado, J.A.; Short, N.M.; Roberts, D.P.; Vandenberg, B. Big Data Analysis for Sustainable Agriculture on a Geospatial Cloud Framework. Front. Sustain. Food Syst. 2019, 3, 54.
15. Pedro, D.; Matos-Carvalho, J.P.; Azevedo, F.; Sacoto-Martins, R.; Bernardo, L.; Campos, L.; Fonseca, J.M.; Mora, A. FFAU–Framework for Fully Autonomous UAVs. Remote Sens. 2020, 12, 3533.
16. Pedro, D.; Lousã, P.; Ramos, Á.; Matos-Carvalho, J.P.; Azevedo, F.; Campos, L. HEIFU–Hexa Exterior Intelligent Flying Unit. In Computer Safety, Reliability, and Security. SAFECOMP 2021 Workshops: Lecture Notes in Computer Science; Habli, I., Sujan, M., Gerasimou, S., Schoitsch, E., Bitsch, F., Eds.; Springer: Cham, Switzerland, 2021; Volume 12853.
17. Kalaivani, T.; Allirani, A.; Priya, P. A survey on Zigbee based wireless sensor networks in agriculture. In Proceedings of the 3rd International Conference on Trendz in Information Sciences and Computing (TISC2011), Chennai, India, 8–9 December 2011; pp. 85–89.
18. Vong, A.; Matos-Carvalho, J.P.; Toffanin, P.; Pedro, D.; Azevedo, F.; Moutinho, F.; Garcia, N.C.; Mora, A. How to Build a 2D and 3D Aerial Multispectral Map?—All Steps Deeply Explained. Remote Sens. 2021, 13, 3227.
19. Pino, M.; Matos-Carvalho, J.P.; Pedro, D.; Campos, L.M.; Costa Seco, J. UAV Cloud Platform for Precision Farming. In Proceedings of the 2020 12th International Symposium on Communication Systems, Networks and Digital Signal Processing (CSNDSP), Porto, Portugal, 20–22 July 2020; pp. 1–6.
20. Matos-Carvalho, J.P.; Fonseca, J.M.; Mora, A. UAV Downwash Dynamic Texture Features for Terrain Classification on Autonomous Navigation. In Proceedings of the 2018 Federated Conference on Computer Science and Information Systems (FedCSIS), Sofia, Bulgaria, 6–9 September 2018; pp. 1079–1083.
21. Beckman, E.; Hamrén, R.; Harborn, J.; Harenius, L.; Nordvall, D.; Rad, A.; Ramic, D. Open Drone–FLA400–Project in Dependable System. 2020. Available online: http://www.es.mdh.se/publications/6182- (accessed on 15 October 2021).

Figure 1. An overview of inputs, scope and outputs of the AFarCloud drone Task.

Figure 2. Fixed-wing UAS collecting data for subscribers.

Figure 3. Airborne view of the Maritime Robotics Falk fixed-wing UAS.

Figure 4. Example of an item detected by a drone, displayed in the MMT during the flight (left); closeup view of the item (right).

Figure 5. (a) The sensor data retrieval mission planned on the MMT. (b) Image captured during mission execution.

Figure 6. UAS manipulator in stowed and deployed positions.

Figure 7. Illustration of UAS manipulator’s key functions.

Figure 8. NIR grass analyzer as a UAS payload.

Figure 9. Workflow to reconstruct a surveyed site from camera images. Adapted from [18].

Figure 10. Mappings of popular (combinations of) spectral bands collected with a drone-carried multispectral sensor, including red green blue (RGB), color infrared (CIR), normalized difference red edge (NDRE), normalized difference vegetation index (NDVI), normalized difference water index (NDWI) and thermal. (a) RGB. (b) CIR. (c) NDRE. (d) NDVI. (e) NDWI. (f) Thermal.

Figure 11. Multispectral index maps with AI applied to detect vineyard canopy gaps. The purple boxes in the images indicate the AI-detected canopy gaps. (a) CIR map. (b) NDVI map.

Figure 12. UAS-independent charging station prototype.

Figure 13. HEIFU UAS autolanding on an AGV-pulled charging station.

Figure 14. Open Drone overview.

Figure 16. Open Drone AFarCloud ground station.

Table 1. Summary of UAS payloads relevant for agricultural applications.

| UAS Payload | Brief Description of Application Areas |
|---|---|
| RGB camera | Useful for 3D modelling of crops, to locate/size individual specimens, biomass estimation and help to identify diseases and weeds. |
| Multispectral camera | Captures several wavelength bands from the electromagnetic spectrum. Different sensors offer different combinations of bands. |
| | RGB: One or several bands of visual light (Red/Green/Blue). |
| | Near Infra-Red (NIR): To determine physical and chemical properties of organic substances. Allows capture of NDVI. |
| | Red-edge: Region of NIR with rapid change in reflectance of vegetation. Suited to detect chlorophyll content. |
| Hyperspectral camera | Can collect hundreds of electromagnetic bands. Compared to multispectral cameras, the bands are narrower, and the sensor is more expensive and produces a lot more data. However, the higher spectral resolution may allow, e.g., plant phenotyping. |
| Thermal camera | Captures radiation from most of the infrared region to provide thermal image of objects for, e.g., seeding viability and disease detection. Can be used to detect water stress and plan irrigation. |
| Depth sensor | Technologies include RGB-D and LiDAR and can be useful to perform 3D modelling and altitude monitoring. LiDAR gives best accuracy, but the sensor is heavier and more expensive. |
| Sprayers | Can support spraying of small hard-to-access areas. Higher precision and less drift of chemical compared to conventional methods. Multi-purpose sprayers/spreaders exist that can handle both fluid and granular matter (fertilizer, seeds etc.) |
| Grippers and manipulators | Both applications and technology still in early development. Relevant applications include fruit picking, pruning and sample collection. |

Table 2. Summary of agriculture-relevant UAS applications.

| Specific Agricultural UAS Applications | Refs. |
|---|---|
| Field mapping to generate, e.g., NDVI maps to assess vegetation health. | [3,5] |
| Detect water stress with additional thermal imaging | [2,3,5] |
| Detect nutrient stress with additional NPK relevant data | [2,3,5] |
| Detect plant diseases and pests (RGB camera is also useful) | [2,3,5] |
| Environmental monitoring (mobile sensing of humidity, temp. etc.) | [2] |
| Assess plant/tree size, spacing and field layout. | [2,3,5] |
| Estimate yield, biomass and nutrient levels for soil and plants | [2,3,5] |
| Weed management; identify weeds. | [2,3,5] |
| Locate and count assets such as animals, trees, vehicles etc. | [3] |
| Spraying of chemicals, seeds or fertilizer | [2,3,5] |
| Harvesting of fruit and vegetables using UAS gripping/cutting tools | [3,5] |
| Soil monitoring | [2] |
| Identify locations for soil sampling | [2] |
| Provide artificial pollination | [5] |

Table 3. Summary of categories for UAS operations defined in EU 2019/947.

| Category | Operational Details (Highlights) |
|---|---|
| OPEN | Low risk, no pre-approval. Visual Line Of Sight (VLOS) only. |
| | Max height < 120 m. Three sub-categories depending on Max TakeOff Weight (MTOW), drone class and presence of people: |
| | (A1) MTOW < 0.5 kg. Minimize overflight over uninvolved people. |
| | (A2) MTOW < 2 kg. 50 m distance to uninvolved people. |
| | (A3) MTOW < 25 kg. 150 m distance to populated areas. |
| SPECIFIC | Medium risk. Three possible routes for authorization by NAA: |
| | (1) Apply for operational authorization based on SORA or Pre-Defined Risk Assessment (PDRA). No UAS class marking required. |
| | (2) Declare a standard scenario (STS) with C5 or C6 classified UAS. |
| | (3) Apply for a Light UAS operator Certificate (LUC) to self-approve SORA, PDRA and STS. |
| CERTIFIED | High risk. Regulatory regime similar to manned aviation. |

Table 4. Key components used in the UAS manipulator prototype.

| Hardware | Detailed Description |
|---|---|
| Servomotor for rotating joint | DS3218 20KG 270 Degree U Mount RC Digital Servo |
| Microcontroller | Arduino Nano 33 BLE |
| Temperature probe | Waterproof DS18B20 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).