1. Introduction

Sociotechnical systems [1] are an important class of applications of artificial intelligence (AI) tools, since many deployments of technology built on their foundations are at the core of decision processes at the individual and the organizational levels. An inherent problem in this area is that of explainability and interpretability, topics that were not central in earlier “AI booms” characterized by expert systems and rule-based models. The issues underlying this problem are within the domain of explainable AI (XAI) [2], which is now widely recognized as a crucial feature for the practical deployment of AI models [3]. The importance of this aspect can be appreciated by pointing to the Explainable Artificial Intelligence (XAI) program launched by the Defense Advanced Research Projects Agency (DARPA) [4], which aims to create a set of new artificial intelligence techniques that allow end users to understand, properly trust, and effectively manage the emerging generation of artificial intelligence systems [5]. The danger is that complex black-box models (some of which can comprise hundreds of layers and millions of parameters) [6] are increasingly used for important predictions in critical contexts, and these models generate outputs that may not be justified or simply do not allow for detailed explanations of their behavior [4]. In this direction, recent work has focused on addressing these problems from different points of view [7,8,9]. In this paper, we focus on cybersecurity as a salient example of a sociotechnical domain [10] in which the availability of explanations that support the output of a model is crucial. Transparency, together with a human-in-the-loop (HITL) scheme, leads to more robust decision-making processes whose results can be trusted by users [8]. Achieving this is challenging, since many domains involve information arriving from multiple heterogeneous sources with different levels of uncertainty due to gaps in knowledge (incompleteness), overspecification (inconsistency), or inherent uncertainty.

In cybersecurity domains, a clear example is the task of real-time security analysis, a complex process in which many uncertain factors are involved, given that analysts must deal with the behavior of different actors and entities, the dynamic nature of exploits, and the fact that the observations of potentially malicious activities are limited. Cyberthreat analysis (CTA) [11] is a highly technical intelligence problem in which (human) analysts take into consideration multiple sources of information, with possibly varying degrees of confidence or uncertainty, with the goal of gaining insight into events of interest that may represent a threat to a system. When building AI tools to assist such a process, knowledge engineers face the challenge of leveraging uncertain knowledge in the best possible way [12]. Due to the nature of these analytical processes, an automated reasoning system with human-in-the-loop capabilities would be best suited for the task. Such a system must be able to accomplish several goals, among which we distinguish the following main capabilities [13]: (i) reason about evidence in a formal, principled manner; (ii) consider evidence associated with probabilistic uncertainty; (iii) consider logical rules that allow the system to draw conclusions on the basis of certain pieces of evidence and iteratively apply such rules; (iv) consider pieces of information that may not be compatible with each other, deciding which are the most relevant; and (v) show the actual status of the system on the basis of the above-described features, and provide the analyst with the ability to understand why an answer is correct, and how the system arrives at that conclusion (i.e., explainability and interpretability). In this context, there is a body of literature devoted to the study of techniques and methodologies for providing explanations in cybersecurity domains [14,15,16,17]. The model that we develop in this work is based on argumentation-based reasoning, an approach that is designed to mimic the way in which humans rationally arrive at conclusions by analyzing arguments for and against them, and is especially well-suited for accommodating desirable features, such as reasoning about possibly uncertain evidence in a principled manner, handling pieces of information that may not be compatible with each other, and showing the actual status of the system to analysts along with the ability to understand why an output is produced.

Contributions. We contribute to the area of intelligent systems applied to cybersecurity in the following ways:

- A use case for the application of a structured probabilistic argumentation model (DeLP3E) [18] based on publicly available cybersecurity datasets.

- Design of the P-DAQAP framework, an extension of DAQAP [19], to work with DeLP3E, and the proposal of different classes of queries in the context of applications related to CTA.

- A preliminary empirical evaluation of an approximation algorithm for probabilistic query answering in P-DAQAP, showing the potential for the system to scale to nontrivial problem sizes, arriving at solutions efficiently and effectively.

To the best of our knowledge, this is the first system of its kind. In particular, being able to consider the internal structure of arguments allows for the platform to be extended to work with other defeasible argumentation formalisms, and offers greater transparency to adapt classical approaches that do not consider probabilistic information.

2. Preliminaries

Tools developed in the area of argumentation-based reasoning offer the possibility of analyzing complex and dynamic domains by studying the arguments for and against a conclusion. Specifically, defeasible argumentation leverages models that contain inconsistency, evaluating arguments that support contradictory conclusions and deciding which ones to keep [20]. An argument supports a conclusion from a set of premises [20]; a conclusion constitutes a piece of tentative information that an agent is willing to accept. If the agent then acquires new information, the conclusion, along with the arguments that support it, could be invalidated. The validity of a conclusion is guaranteed when there is an undefeated argument that provides justification for it. This process involves the construction of an argument for the conclusion, and the analysis of counterarguments that are possible defeaters of that argument; as these defeaters are arguments, it must be verified that they are not themselves defeated. There are several formalisms that are based on this idea, such as ABA [21], ASPIC+ [22], defeasible logic programming (DeLP) [23], and deductive argumentation [24], which consider the structure of the arguments that model a discussion. The DAQAP platform [19] on which the presented system is based uses DeLP as its central formalism. We now briefly present the necessary background, starting with DeLP and its probabilistic extension.

2.1. Defeasible Logic Programming (DeLP)

DeLP combines logic programming and defeasible argumentation. A DeLP program $\mathcal{P}$, also denoted as $(\Pi, \Delta)$, consists of a set $\Pi$ of facts and strict rules, and a set $\Delta$ of defeasible rules. Facts are ground literals representing atomic information (or its negation using strong negation “∼”), strict rules represent nondefeasible information, and defeasible rules represent tentative information. Here, we consider the extension that incorporates presumptions into set $\Delta$, which can be thought of as a kind of defeasible fact [25].

The dialectical process used in deciding which information prevails as warranted involves the construction and evaluation of arguments that either support or interfere with the query under analysis. An argument $\mathcal{A}$ is a minimal set of defeasible rules that, along with the set of strict rules and facts, is not contradictory and derives a certain conclusion $L$, denoted as $\langle \mathcal{A}, L \rangle$. Arguments supporting the answer for a query can be organized using dialectical trees. A query is issued to a program in the form of a ground literal $L$.

A literal $L$ is warranted if there exists a nondefeated argument $\mathcal{A}$ supporting $L$. To establish whether $\langle \mathcal{A}, L \rangle$ is a nondefeated argument, defeaters for $\langle \mathcal{A}, L \rangle$ are considered, i.e., counterarguments that by some criterion are preferred to $\langle \mathcal{A}, L \rangle$. An argument $\langle \mathcal{A}_1, L_1 \rangle$ is a counterargument for $\langle \mathcal{A}_2, L_2 \rangle$ iff $\mathcal{A}_1 \cup \mathcal{A}_2$, together with the facts and strict rules of the program, is contradictory. Given a preference criterion, and an argument $\langle \mathcal{A}_1, L_1 \rangle$ that is a defeater for $\langle \mathcal{A}_2, L_2 \rangle$, $\langle \mathcal{A}_1, L_1 \rangle$ is called a proper defeater if it is preferred to $\langle \mathcal{A}_2, L_2 \rangle$, or a blocking defeater if it is equally preferred or is incomparable with $\langle \mathcal{A}_2, L_2 \rangle$. Since there may be more than one defeater for a particular argument, many acceptable argumentation lines could arise from one argument, leading to a tree structure. This is called a dialectical tree because it represents an exhaustive dialectical analysis for the argument in its root; every node (except the root) represents a defeater of its parent, and leaves correspond to nondefeated arguments. Each path from the root to a leaf corresponds to a different acceptable argumentation line. A dialectical tree provides a structure for considering all possible acceptable argumentation lines that can be generated for deciding whether an argument is defeated.

Given a literal $L$ and an argument $\langle \mathcal{A}, L \rangle$ from a program $\mathcal{P}$, to decide whether $L$ is warranted, every node in the tree is recursively marked as D (defeated) or U (undefeated), obtaining a marked dialectical tree $\mathcal{T}^*(\mathcal{A})$: (1) all leaves in $\mathcal{T}^*(\mathcal{A})$ are marked as “U”s; and (2) let $\langle \mathcal{B}, q \rangle$ be an inner node of $\mathcal{T}^*(\mathcal{A})$; then, $\langle \mathcal{B}, q \rangle$ is marked as U iff every child of $\langle \mathcal{B}, q \rangle$ is marked as D. Thus, node $\langle \mathcal{B}, q \rangle$ is marked as D iff it has at least one child marked as U. Given an argument $\langle \mathcal{A}, L \rangle$ obtained from $\mathcal{P}$, if the root of $\mathcal{T}^*(\mathcal{A})$ is marked as U, then $\mathcal{T}^*(\mathcal{A})$ warrants $L$, and $L$ is warranted from $\mathcal{P}$. The DeLP interpreter takes a program $\mathcal{P}$ and a DeLP query $L$, and returns one of the following four possible answers: YES if $L$ is warranted from $\mathcal{P}$, NO if the complement of $L$ regarding strong negation is warranted from $\mathcal{P}$, UNDECIDED if neither $L$ nor its complement is warranted from $\mathcal{P}$, or UNKNOWN if $L$ is not in the language of the program $\mathcal{P}$.
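The recursive U/D marking of a dialectical tree can be illustrated with a minimal Python sketch. The `Node` class, the `mark` function, and the tree used here are hypothetical names and data introduced only for illustration; they are not part of DeLP or DAQAP, and the sketch assumes that the tree of defeaters has already been built.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    """A node of a dialectical tree: an argument label plus its defeaters."""
    label: str
    defeaters: List["Node"] = field(default_factory=list)


def mark(node: Node) -> str:
    """Return 'U' (undefeated) or 'D' (defeated) for `node`.

    Leaves are marked 'U'; an inner node is 'U' iff every child is 'D'.
    """
    # all() over an empty list is True, so leaves are marked 'U'.
    return "U" if all(mark(child) == "D" for child in node.defeaters) else "D"


# Hypothetical tree: root A is attacked by B1 and B2; B1 is attacked by C.
tree = Node("A", [Node("B1", [Node("C")]), Node("B2")])
root_mark = mark(tree)  # B2 is an undefeated leaf, so the root is defeated
```

In this toy tree, the root is marked D because one of its defeaters (B2) has no defeaters of its own; removing B2 would leave the root marked U, since its only remaining defeater B1 is in turn defeated by C.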

2.2. Probabilistic DeLP: DeLP3E Framework

We now provide a brief introduction to DeLP3E; for full details, we refer the reader to [

18]. A DeLP3E KB

consists of three parts that correspond to

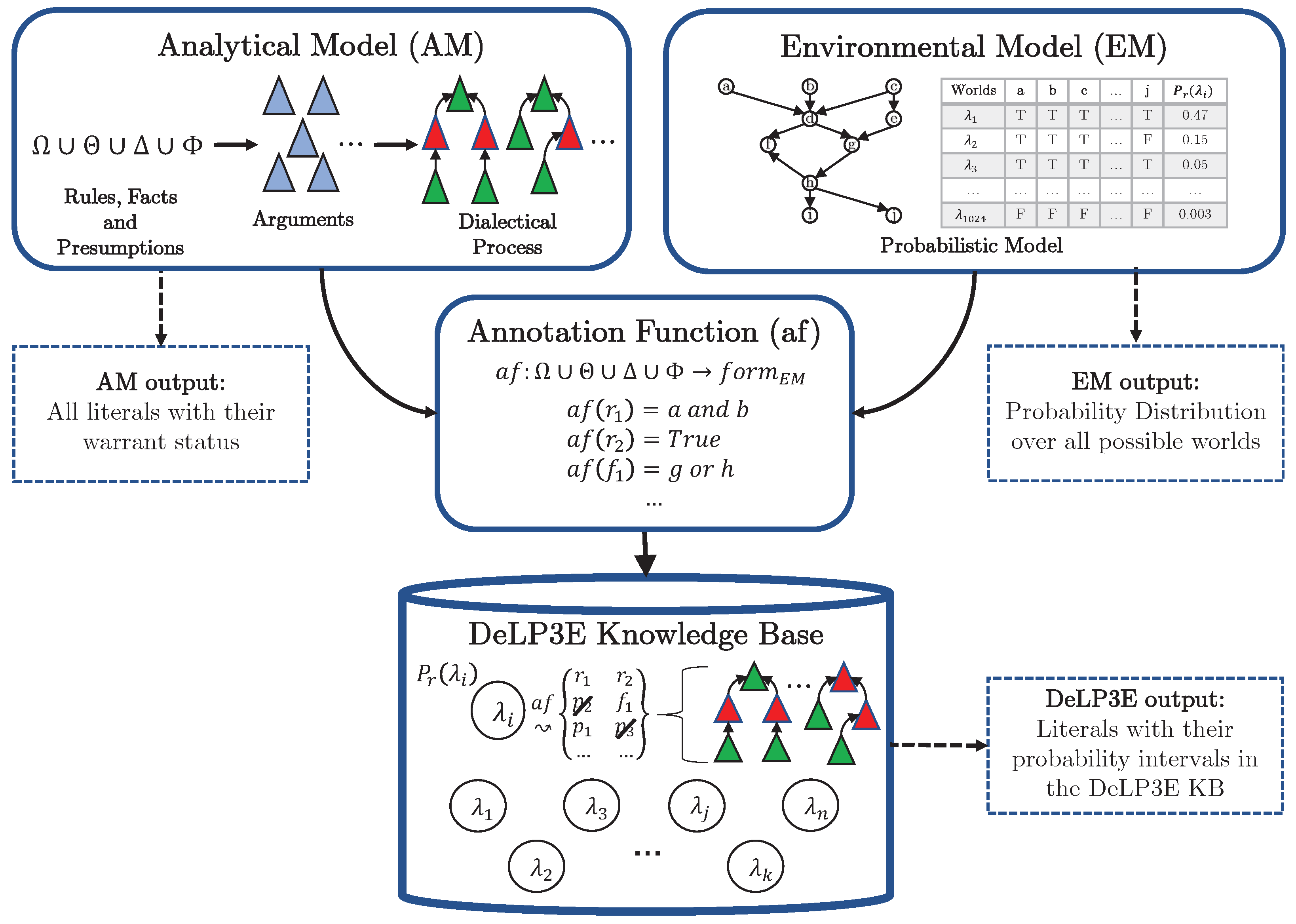

two separate models of the world, and a function linking the two; these components are illustrated in

Figure 1.

The environmental model (EM) is used to describe background knowledge that is probabilistic in nature, while the analytical model (AM) is used to analyze competing hypotheses that can account for a given phenomenon. The EM must be consistent, while the AM allows for contradictory information as the system must have the capability to reason about competing explanations for a given event. In general, the EM contains knowledge such as evidence, intelligence reporting, or uncertain knowledge about actors, software, and systems, while the AM contains elements that the analyst can leverage on the basis of information in the EM. AMs correspond to DeLP programs, while EMs in this paper are abstracted away, assuming that the well-known Bayesian network model is used.

Finally, the third component is the

annotation function, which links components in the AM with conditions over the EM (the conditions under which statements in the AM can potentially be true). We use

to denote the sets of all ground atoms for the EM; here, we concentrate on subsets of ground atoms from

, called

worlds. Atoms that belong to the set are

true in the world, while those that do not are

false (Therefore, there are

possible worlds in the EM). This set is denoted with

. Logical formulas arise from the combination of atoms using the traditional connectives (∧, ∨, and ¬); we use

to denote the set of all possible (ground) formulas in the EM. Annotation functions then assign formulas in

to components in the AM to indicate the conditions (probabilistic events) under which they hold. In this way, each world

induces a subset of the AM, comprised of all elements whose annotations are satisfied by

; for DeLP3E program

P, we denote the subset of the AM induced by

with

(cf.

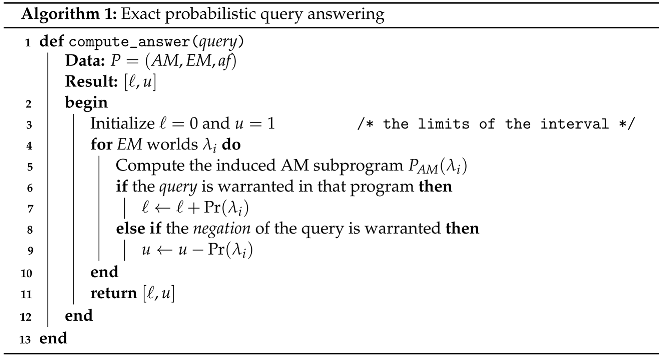

Figure 1). Exact probabilistic query answering is carried out via Algorithm 1.

[Algorithm 1: exact probabilistic query answering]
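As a rough illustration of exact query answering by world enumeration, the following Python sketch induces a subprogram for each world and accumulates the probability interval: worlds where the query is warranted add to the lower bound, and worlds where its complement is warranted are subtracted from the upper bound. This is a sketch under stated assumptions, not a transcription of Algorithm 1: the names `induced_subprogram`, `exact_interval`, and `toy_answer`, the two-variable distribution, and the annotations are all hypothetical, and `toy_answer` merely stands in for a call to a DeLP interpreter.

```python
from itertools import product
from typing import Callable, Dict, FrozenSet, Tuple

World = Dict[str, bool]
Annotation = Callable[[World], bool]


def induced_subprogram(af: Dict[str, Annotation], world: World) -> FrozenSet[str]:
    """Return the AM elements whose annotation is satisfied by `world`."""
    return frozenset(elem for elem, holds in af.items() if holds(world))


def exact_interval(
    variables: Tuple[str, ...],
    prob: Callable[[World], float],
    af: Dict[str, Annotation],
    answer: Callable[[FrozenSet[str]], str],
) -> Tuple[float, float]:
    """Enumerate all 2^n worlds and accumulate the probability interval."""
    lower, excluded = 0.0, 0.0
    for values in product([True, False], repeat=len(variables)):
        world = dict(zip(variables, values))
        result = answer(induced_subprogram(af, world))
        if result == "YES":    # query warranted: counts towards the lower bound
            lower += prob(world)
        elif result == "NO":   # complement warranted: removed from the upper bound
            excluded += prob(world)
    return lower, 1.0 - excluded


# Hypothetical toy instance (illustrative values only).
TABLE = {(True, True): 0.4, (True, False): 0.3, (False, True): 0.2, (False, False): 0.1}
af = {"r1": lambda w: w["a"], "r2": lambda w: w["b"]}


def prob(world: World) -> float:
    """Look up the probability of a world in the toy distribution."""
    return TABLE[(world["a"], world["b"])]


def toy_answer(subprogram: FrozenSet[str]) -> str:
    """Placeholder for a DeLP interpreter call on the induced subprogram."""
    if subprogram == {"r1"}:
        return "YES"
    if subprogram == {"r2"}:
        return "NO"
    return "UNDECIDED"


lower, upper = exact_interval(("a", "b"), prob, af, toy_answer)
```

With these placeholder values, the query is warranted in one world (probability 0.3) and its complement in another (probability 0.2), so the interval is approximately [0.3, 0.8]; the remaining mass belongs to worlds in which neither is warranted.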

Since the number of worlds in the EM is exponential in the number of EM random variables, this procedure quickly becomes intractable. However, a sound approximation of the exact interval can be obtained by simply selecting a subset of the set of possible worlds and executing the same procedure. We refer to this algorithm as approximate query answering via world sampling. It is easy to see that this approximation scheme is sound, since it always yields intervals $[\ell', u']$ such that $[\ell, u] \subseteq [\ell', u']$, where $[\ell, u]$ is the exact interval.

Section 5 is dedicated to studying the effectiveness and efficiency of this approach.
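The world sampling scheme can be sketched in Python as follows. The sketch assumes an EM with independent variables (the `MARGINALS` dictionary) purely to keep the example short, whereas the evaluation in this paper samples from Bayesian networks; `status` is a placeholder for running a DeLP interpreter on the subprogram induced by a world, and all names and numbers are hypothetical. Repeated samples are skipped so that no world's probability is counted twice.

```python
import random
from itertools import product
from typing import Dict, Iterable, Tuple

World = Dict[str, bool]

# Hypothetical EM: independent variables with these marginal probabilities.
MARGINALS = {"a": 0.7, "b": 0.4, "c": 0.9}


def prob(world: World) -> float:
    """Probability of a world under the independence assumption."""
    p = 1.0
    for var, value in world.items():
        p *= MARGINALS[var] if value else 1.0 - MARGINALS[var]
    return p


def status(world: World) -> str:
    """Placeholder for the DeLP answer on the subprogram induced by `world`."""
    if world["a"] and world["b"]:
        return "YES"
    if not world["a"] and not world["c"]:
        return "NO"
    return "UNDECIDED"


def interval(worlds: Iterable[World]) -> Tuple[float, float]:
    """Probability interval computed from the given worlds only."""
    lower, excluded, seen = 0.0, 0.0, set()
    for world in worlds:
        key = tuple(sorted(world.items()))
        if key in seen:  # a repeated sample adds no information
            continue
        seen.add(key)
        answer = status(world)
        if answer == "YES":
            lower += prob(world)
        elif answer == "NO":
            excluded += prob(world)
    return lower, 1.0 - excluded


def sample_world(rng: random.Random) -> World:
    """Draw one world directly from the EM distribution."""
    return {var: rng.random() < p for var, p in MARGINALS.items()}


rng = random.Random(0)
sampled = [sample_world(rng) for _ in range(5)]
all_worlds = [dict(zip(MARGINALS, vals))
              for vals in product([True, False], repeat=len(MARGINALS))]

approx = interval(sampled)    # sound approximation from a subset of worlds
exact = interval(all_worlds)  # exact interval from all 2^3 worlds
```

Because the approximation only ever omits probability mass from both accumulators, its lower bound cannot exceed the exact one and its upper bound cannot fall below it, which is the soundness property stated above.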

A Simple Illustrative Example

In order to clearly illustrate the model and query-answering procedure in DeLP3E, we present the following simple example of a knowledge base:

Analytical Model

Annotation Function

Environmental Model (one row per world)

| a | b | Probability |
|---|---|---|
| T | T | 0.25 |
| T | F | 0.20 |
| F | T | 0.05 |
| F | F | 0.50 |

We have an AM consisting of three literals, an EM consisting of two variables, and an annotation function that relates these two models; suppose we query for the literal . To compute the exact probability interval, we go world by world as described above, generating the corresponding subprogram and querying each one of them for the status of the query. Lastly, in order to arrive at the probability interval with which is warranted in P, we keep track of the probability of the worlds where the query is warranted (for the lower limit of the interval) and the probability of the worlds where the complement of the query is warranted (for the upper limit). In our example, the result for query is ; the details of this calculation are as follows:

Subprograms induced in each possible world:

- –

- –

- –

- –

Query is, thus, clearly warranted only in world , while its complement () is warranted in and

Probability interval calculation: Result:

The resulting probability interval represents two kinds of uncertainty: the first, called probabilistic uncertainty, arises from the environmental model since we have a probability distribution over possible worlds; the second, epistemic uncertainty, arises from the fact that we generally have a probability interval instead of a point probability, which happens when there are worlds in which neither the query nor its complement is warranted (as is the case for one of the worlds above).

Having presented the preliminary concepts, in the next section, we illustrate the application of DeLP3E in a cybersecurity domain.

3. Cyberthreat Analysis with DeLP3E

We now present a use case leveraging several datasets developed and maintained by the MITRE Corporation (a not-for-profit organization that works with governments, industry, and academia) and the National Institute of Standards and Technology (NIST). The MITRE datasets are ATT&CK (https://attack.mitre.org, accessed on 21 August 2022), CAPEC (https://capec.mitre.org, accessed on 21 August 2022), and CWE (https://cwe.mitre.org, accessed on 21 August 2022). NIST manages the National Vulnerability Database (NVD) (https://nvd.nist.gov, accessed on 21 August 2022), which includes CVE and CPE.

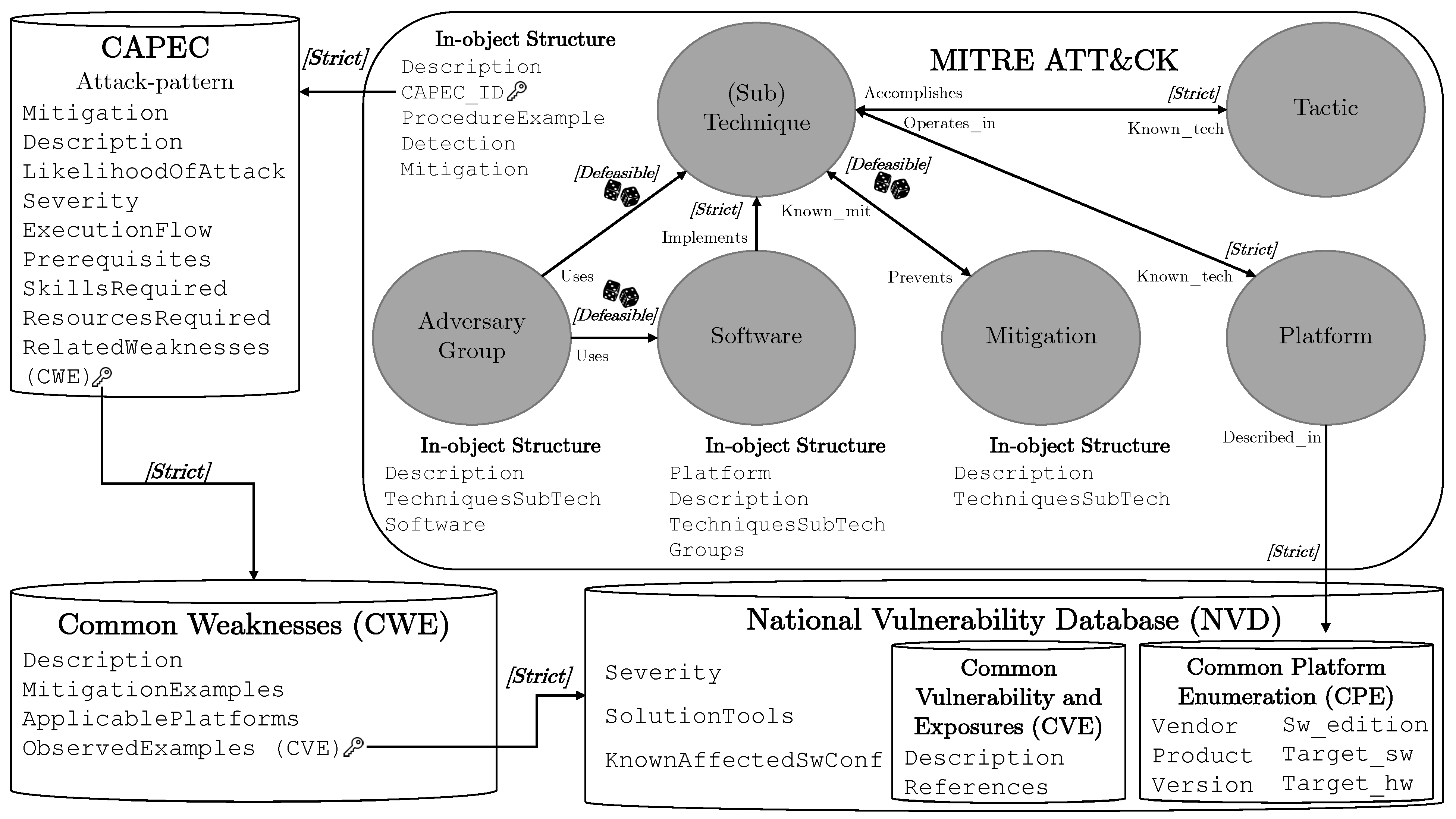

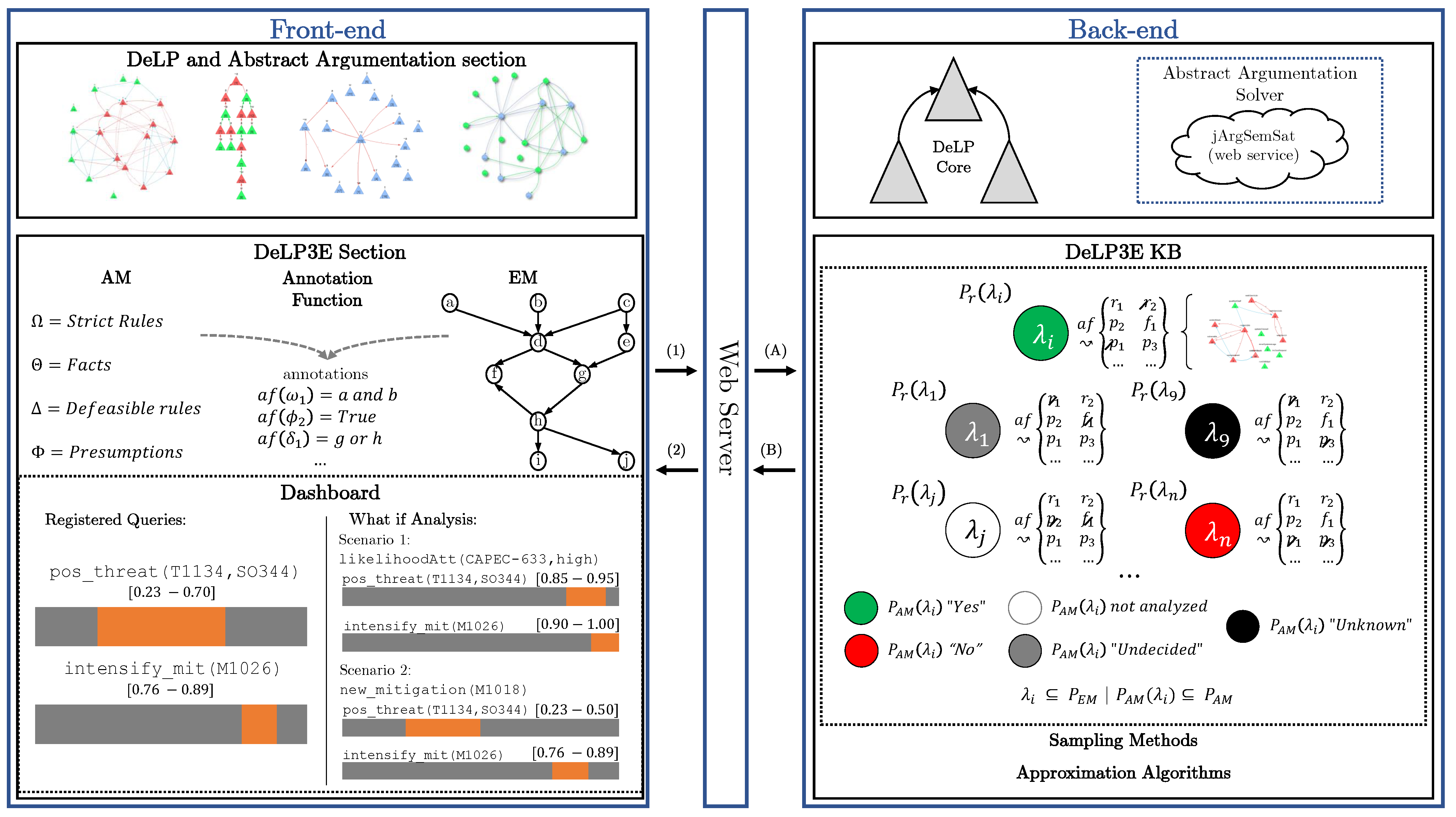

Figure 2 shows an overview of our approach. We first describe the basic components and then show how the DeLP3E components are specified, along with two queries for addressing specific problems in the CTA domain.

The ATT&CK model is a curated knowledge base and model geared towards adversarial behavior in cybersecurity settings; it contains information on the various phases of an attack and the platforms that are most commonly targeted. The behavioral model consists of several core components:

- (i) Tactics, denoting short-term tactical adversary goals during an attack.

- (ii) Techniques, describing the means by which adversaries achieve tactical goals.

- (iii) Subtechniques, describing more specific means at a lower level than that of techniques by which adversaries achieve tactical goals.

- (iv) Documented adversary usage of techniques, their procedures, and other metadata.

The supporting datasets provide information on attack patterns (Common Attack Pattern Enumeration and Classification, CAPEC) and software and hardware weakness types (Common Weakness Enumeration, CWE), and also include the National Vulnerability Database (NVD). The latter is a rich repository of data; here, we distinguish two subsets including data about vulnerabilities (Common Vulnerabilities and Exposures, CVE) and platforms (Common Platform Enumeration, CPE).

Figure 2 shows the information provided by each dataset, and how they are related to each other via foreign keys. For instance, attack techniques included in ATT&CK link to entries in CAPEC, which in turn link to CWE and NVD. We augmented this structure with two features towards deriving a DeLP3E KB. First, we labeled connections between datasets (and components within ATT&CK) with either “[strict]” or “[defeasible]”, indicating the type of knowledge being encoded. For instance, observed examples of a weakness included in CWE are linked to CVEs included in the NVD as strict, since this is well-established knowledge. On the other hand, mitigation strategies are linked to techniques as defeasible knowledge, since the relationship between the two is tentative in nature. The second feature, which appears in the figure as a small icon depicting a pair of dice, indicates relationships that are subject to probabilistic events. For the purposes of this use case, we label all defeasible relations in this way.

We used all this information to create the AM, EM, and annotation function, and create a DeLP3E KB; an introductory example is shown in

Listing 1. On the left-hand side, we have the elements of the AM that can be used to create arguments for and against conclusions; for instance:

, with

, with

,

Listing 1. Left: DeLP program that comprises the AM. Right: Annotation function.

- Θ

- Ω

- Φ

- Δ

mitigation(M)

tech_subtech(T_ST)

adv_group(G)

adv_group(G)

tech_in_use(T_ST), soft_in_use(S)

prev_techsub(T_ST)

known_mit(M), tech_in_use(T_ST),

likelihoodAttack(T_ST, high)

The former indicates that account discovery is used as an attack technique, since the advanced persistent threat group 29 (APT29, also known as Cozy Bear) is active and uses it. The latter refers to the use of credential access protection as a mitigation technique to prevent the use of OS credential dumping. This is a clear example of an argument that involves uncertainty, since credential access protection is not a foolproof endeavor. An example of this is the well-known Heartbleed vulnerability (CVE-2014-0160) that affected OpenSSL implementations, leaving them open to credential dumping. For reasons of space, in this simple example, we only label AM components with probabilistic events (

–

; elements with no annotation are simply labeled with true) and do not describe how they are related in the EM. One option is to simply assume pairwise independence (as in many probabilistic database models [26]); another is to use a Bayesian network [27], as described in Section 5.

Queries. We lastly present two queries that we revisit in the next section:

First query: What is the probability that access token manipulation (technique T1134) is used, leveraging the Azorult malware (software ID S0344), to attack our systems?

Second query: What is the probability that privileged account management (mitigation strategy M1026), which mitigates T1134, should be deployed?

In the next two sections, we discuss the design of a software system for implementing this kind of functionality based on DeLP3E, and a preliminary evaluation of query answering in DeLP3E via sampling techniques.

5. Empirical Evaluation

We now report on the results of a preliminary empirical evaluation designed to test the effectiveness and efficiency of a world sampling-based approximation to query answering in DeLP3E. We used Bayesian networks for the EM and sampled directly from the distributions they encode. The experiments focus on varying three key dimensions: number of random variables (which determines the number of possible worlds), number of sampled worlds, and the entropy of the probability distribution associated with the EM. Intuitively, entropy is a measure of disorder. For probability distributions, it measures how “spread out” the probability mass is over the space of possible worlds, so a low value indicates a highly concentrated mass. Extreme cases thus range from a single world having probability one, to all worlds having the same probability.
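To make the two extreme cases concrete, the following Python sketch computes the Shannon entropy (in bits) of a distribution over worlds. The function name `entropy` and the choice of four EM variables (16 worlds) are assumptions made for illustration only.

```python
import math
from typing import Sequence


def entropy(dist: Sequence[float]) -> float:
    """Shannon entropy in bits; zero-probability worlds contribute nothing."""
    return -sum(p * math.log2(p) for p in dist if p > 0.0)


n_worlds = 2 ** 4  # hypothetical EM with 4 binary variables

# Extreme case 1: a single world has probability one (fully concentrated mass).
point_mass = [1.0] + [0.0] * (n_worlds - 1)

# Extreme case 2: all worlds are equally likely (maximally spread out mass).
uniform = [1.0 / n_worlds] * n_worlds

h_min = entropy(point_mass)
h_max = entropy(uniform)
```

The point-mass distribution has entropy 0, while the uniform distribution reaches the maximum, which equals the number of binary EM variables (4 bits here).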

All runs were performed on a computer with an Intel Core i5-5200U CPU at 2.20 GHz and 8 GB of RAM under the 64-bit Debian GNU/Linux 10 OS. Probability computations were carried out using the pyAgrum (https://agrum.gitlab.io, accessed on 21 August 2022) Python library.

5.1. Experimental Setup

All problem instances (DeLP3E knowledge bases and queries) were synthetically generated to be able to adequately control the independent variables in our analysis. To obtain an instance, we first randomly generate the AM as a classical DeLP program with a balanced set of facts and rules; rule bodies and heads are generated in such a way as to ensure overlap, in order to yield nontrivial arguments (see [31] for details on such a procedure). The general design of the program generator consists of the following steps:

- The basic components on which the more complex structures are built (facts and assumptions) are generated first.

- Arguments are organized in levels, where each level indicates the maximal number of rules used in its derivation chain until a basic element is reached.

- Dialectical trees are generated only for top-level arguments because they have a greater number of possible points of attack, given that they have more elements in their body.

For the Bayesian networks in the EM, we randomly generated a graph on the basis of the desired number of EM variables (with a random number of edges, bounded above by the number of nodes) using the networkx library (https://networkx.github.io, accessed on 21 August 2022). To control the entropy of the encoded distribution, we took each node probability table entry and randomly chose between true and false; then, we randomly assigned a probability to that outcome in the interval

, where

is a parameter varied in

.

Lastly, annotation functions are randomly generated by assigning to each element in the AM an element randomly chosen from the set of (possibly negated) EM variables plus “true” (AM elements annotated with true hold in all worlds).
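The random generation of annotation functions can be sketched as follows. This is a minimal illustration that assumes a uniform choice among the candidate annotations, which the text does not specify; the function name `random_annotation_function`, the string encoding of negation (`"not x"`), and the element names are hypothetical.

```python
import random
from typing import Dict, List


def random_annotation_function(
    am_elements: List[str], em_variables: List[str], rng: random.Random
) -> Dict[str, str]:
    """Annotate each AM element with 'true', an EM variable, or its negation."""
    candidates = ["true"]
    for var in em_variables:
        candidates.extend([var, f"not {var}"])
    # Assumption: each candidate annotation is equally likely.
    return {elem: rng.choice(candidates) for elem in am_elements}


# Hypothetical AM element names and EM variables.
af = random_annotation_function(
    ["fact_1", "rule_1", "rule_2"], ["x", "y"], random.Random(42)
)
```

Elements that end up annotated with `"true"` belong to the subprogram induced by every world, while the others appear only in worlds that satisfy the chosen (possibly negated) variable.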

Quality Metric. Given a probability interval

, we used the following metric to gauge the quality of a sound approximation

(that is

always holds):

Intuitively, this metric calculates the probability mass that is discarded by one interval in relation to another. The resulting value is always a real number in $[0, 1]$, where a value of zero indicates the poorest possible approximation ($[0, 1]$, which is always a sound approximation for any problem instance), and a value of 1 indicates the best possible approximation, which corresponds precisely with the exact interval. Thus, we generally apply this metric by using the result of the exact algorithm in the numerator and an approximation in the denominator.

5.2. Results

Figure 4a shows the average running time taken per sample over all configurations based on a set of 100 runs. We calculated the running time in this manner to adequately compare the times for the different EM sizes. Even though the impact of this dimension on individual running time is not significant, it may become so when sampling hundreds of thousands of worlds. For example, consider the difference between running time per sample for 1 billion worlds vs. 1 million worlds: approximately $2.55 \times 10^{-5}$ s; for a sample size of 100,000 worlds, this difference amounts to 2.55 s. In the third column, we include an estimation of running times of the brute-force algorithm based on these values. Both running times are worst-case since optimization is possible (for instance, in our system we avoid recomputing warrant statuses of induced subprograms for which these values have already been computed).

Figure 4b shows results concerning approximation quality; the metric was calculated with respect to the exact result for up to 20 EM variables (≈1M worlds). For the case of 30 EM variables (≈1B worlds), we approximated the metric using 250,000 worlds (which amounts to approximately 0.023% of the set of possible worlds), since the exact algorithm becomes intractable for instances of this size.
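The sizes involved can be checked with a few lines of Python, assuming binary EM variables so that n variables yield 2^n worlds; the variable names are illustrative.

```python
# Number of possible worlds for n binary EM variables is 2**n.
worlds_20 = 2 ** 20  # 1,048,576 (about 1M worlds)
worlds_30 = 2 ** 30  # 1,073,741,824 (about 1B worlds)

# Fraction of the 30-variable world space covered by 250,000 sampled worlds.
fraction = 250_000 / worlds_30
print(f"{fraction:.4%}")  # prints 0.0233%
```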

The following general observations arise from these results:

First, sampling larger sets of worlds leads to higher quality approximations. Though this is expected, there are two interesting details:

- For the 20 EM variable case, the difference in quality obtained with 5000 vs. 10,000 samples was not statistically significant (two-tailed two-sample unequal variance Student’s t-tests yielded p-values greater than 0.08 for one entropy setting and greater than 0.16 for the other), which means that 5000 samples sufficed to obtain a good approximation.

- The proportion of repeated samples (i.e., wasted effort) was quite high for both entropy levels; for the higher-entropy setting, on average 52% of samples were repeated, while for the lower-entropy setting, an average of 87% were not unique. For the 20 EM variable case, the quality levels were achieved with only 2293 and 469 unique samples, respectively. Larger sample sizes also lead to lower variation in quality (shorter error bars).

Next, entropy noticeably impacted solution quality (except for 10 EM variables, the smallest setting). Since our approximation algorithm samples worlds directly from the BN’s distribution, it is natural to observe better effectiveness with lower-entropy (less spread out) distributions, in which a smaller number of worlds represents a larger portion of the probability mass.

Lastly, even for higher values of entropy, we observed adequate quality levels for modest numbers of samples compared to the size of the full sample space.

These results shed light on the applicability of P-DAQAP to real-world problems such as the CTA use case, given that relatively low numbers of effective (i.e., nonrepeated) samples yield good approximations of the exact values.

5.3. Results in the Context of Practical Applications

We now analyze the results we obtained in these experiments in the context of the MITRE ATT&CK data that we focused on for our use case in Section 3. For the purposes of this brief analysis, let us consider the Enterprise segment of the dataset, which contains 191 techniques and 385 subtechniques. This translates into a large number of constants that would certainly lead to an intractable probabilistic model if tackled directly. Fortunately, there is a well-understood independence relation among such techniques, and they can thus be effectively pruned depending on the tactics with which they are associated. For instance, the Privilege Escalation tactic (TA0004) that we refer to in the use case has 13 associated techniques, while the remaining tactics in the dataset have at most 30 (with the exception of Defense Evasion (TA0005), which has 42, though additional filtering according to the specific operating system in question allows this number to be brought down significantly). Our preliminary results therefore show that the capacity to scale to 30 EM variables is in line with the needs of this kind of application, though further efforts are required to effectively arrive at submodels derived from the general one that can be used to solve specific query answering tasks. In this same vein, there are multiple research and development efforts to manipulate, adapt, and export data and knowledge from the ATT&CK dataset [32,33,34,35].

6. Conclusions and Future Work

We presented an extension of the DAQAP platform to incorporate probabilistic knowledge bases, giving rise to the P-DAQAP system, which is, to the best of our knowledge, the first system of its kind for probabilistic defeasible reasoning. After discussing the details of its design and describing applications to cybersecurity, we performed an empirical evaluation whose goal was to explore the effectiveness and efficiency of world sampling-based approximate query answering. Our study showed that the entropy associated with the probability distribution over worlds has a large impact on expected solution quality, but even a modest number of samples suffices to reach good-quality approximations. Compared to classical (nonprobabilistic) approaches, the results of our experiments show that P-DAQAP allows for representing and reasoning effectively and efficiently with different types of uncertainty, modeling complex domains in more detail, and providing more informed answers that can be accompanied by explanations. In critical environments, having outputs of this kind increases users’ trust in the system and its credibility.

Future work involves carrying out a broader evaluation investigating other sampling methods, avoiding repeated samples, and testing other probabilistic models. One of the goals of this research line is to develop a method to guide knowledge engineering efforts on the basis of domain features, requirements in terms of expressive power, approximation quality, and query response time.