1. Introduction

Today’s world is increasingly characterized by technological advancements. Perhaps one of the most striking components of digitalization is the ever more popular field of artificial intelligence, which often appears in embodied form, that is, as robots.

In order to prepare coming generations for a future in which robots are an integral part of everyday life, we designed, implemented, and conducted a teaching project that gives children of kindergarten age an early introduction to robotics. We have a substantial background in teaching robotics at the university level (in both Bachelor and Master courses), and we have previously given summer courses and held one-day events for school students. We then considered extending these excursions into pre-university education to children in pre-school and kindergarten. While we do have experience with teaching robotics, this experience is focused on higher education. We are not pre-school or kindergarten educators, and this report is not a study. Hence, the contribution of this work lies mainly in the technical implementation and the lessons learnt.

To this end, we took our basic robotics introduction course and tried to boil it down to its core principles. We made an effort to illustrate these principles so that young children can relate to them as much as possible. Then, we tried to make the way we convey the content more lively. More precisely, we enrich the ‘lecture’ part of the event with videos and animations. Furthermore, we let a real robot, namely Softbank’s Pepper, present some essential parts of the lecture. Finally, the event is concluded by a quiz. Again, to make things more interesting and entertaining, we tried to come up with something engaging and fancy: the quiz is implemented as a robot race between two teams. Each team has a robot that moves forward when the team gives the right answer and does not move closer to the goal when the answer is wrong. The robots used for this quiz were LEGO Mindstorm EV3 robots, built to resemble the robot WALL-E known from the movie.

The rest of the paper is organized as follows. We first give a brief review of related work and set our effort apart from existing work in pre-school robotics education. Then, we describe and explain our conceptual design before we discuss important issues of the implementation. Finally, we analyse and discuss lessons learnt from a pilot instance conducted in a local kindergarten.

2. Related Work

Before going into detail about our approach and pilot run, we review related work in different dimensions. First, we look at the connections between robotics and education. Then, we take a particular look at robots used in pre-school activities. Finally, we review existing applications of the robot platform Pepper that we use.

2.1. Robotics and Education

Robotics is often used as a tool to foster interest and conduct early education in technology and engineering (e.g., [1,2]). The main focus lies on K–12 education, with the aim of engaging learners with STEM (Science, Technology, Engineering, Mathematics) subjects, which is what the field of educational robotics sets out to do. Several initiatives exist to spark interest in young learners, for instance, the Roberta Initiative [3], the FIRST Lego League [4], or RoboCupJunior [5]. Besides these initiatives, the potential of using robots in school education (for different purposes) is widely acknowledged; see, e.g., [6]. While the initiatives mentioned mainly use a project-based approach to support their teaching message, a number of approaches follow constructivism or constructionism instead. For instance, Cejka et al. [7] describe a constructivist learning approach for K–12 learners following Papert’s approach [8]. A review of research trends in robotics education for young children has recently been published in [9]; it gives a thorough overview of the different approaches taken in educational robotics. In our own previous research, we were also engaged in robotics education for K–12 learners or university students from a disadvantaged background [10,11]. In the work reported in the present article, we now turn to pre-school education.

Other research related to our approach is concerned with the use of telepresence robots in education. Ref. [12] explores how telepresence robots can allow elderly people to teach students from home. Robots may be operated by children [13] or may help to overcome a language barrier [14]. In [15], a telepresence robot is used for English tutoring. The tele-teaching robot Engkey, presented in [16], is tele-operated by a human English teacher from a remote site. An overview of telerobot-assisted language learning is given in [17]. In contrast to these works, we do not intend a human to act through the body of a robot; instead, we want the robot to act on its own.

2.2. Robots in Pre-School

In this paper, we report on an initiative to spark first interest in the field of robotics at pre-school level. Rather than setting up a whole curriculum or using robots as a vehicle for teaching STEM subjects, we aim to give pre-school children a positive experience with the topic “robotics”. They should experience that robotics is an interesting field and that controlling a robot is not some kind of magic, but computer science, engineering, and math. We think it is important to provide this experience in a positive, playful way. The potential of educational robotics has been acknowledged before, in particular its potential to foster curiosity and creativity [18].

Our approach contrasts with the curriculum described in [19], where the focus is on actually having the children build and program the robot in a seven-week course. Our time frame is much shorter: a single event of about two hours. In addition, we concentrate on giving an introduction to robotics only. Our aim is to explain what is behind the robots that children already see in their daily lives and that they will see much more of in the future. This is meant to spark interest in (the computer science parts of) robotics and to make the children aware of the complex inner workings of the fancy machines around them. Two novel concepts for educational robotics in kindergartens are presented in [20]. Both concepts are oriented towards hands-on experiences with robots: one looks at the effect of robotics training on cognitive processes, while the other focuses on cross-generational aspects. Ref. [21] reports that robots can be a captivating tool and that they increase children’s desire to explore. Preschoolers have previously been found to be very open towards robots and even to integrate (very simple) robots in their social play [22]. The effectiveness of robot-assisted language learning for pre-schoolers is studied in [23].

A different line of research uses robots as a tool for storytelling. For example, storytelling with a socially assistive robot in kindergarten is presented in [24]. The underlying idea is to engage young children in educational games with a robot. The results suggest that this activity improves the children’s performance and that children enjoy the interaction very much. Another interesting work in the field of kindergarten education with robots is [25]. The authors show that better learning results can be achieved when robots support the learning activity by motivating pre-school children to solve algebra exercises. Ref. [26] reports on a number of interaction applications for pre-school children with the robot NAO. In their conclusion, the authors mention that their NAOqi apps could also be used on the robot Pepper, which we used in our work. Ref. [27] suggests that using a socially assistive robot in pre-schooler learning activities increases engagement as well. While it is interesting to analyse the behaviour in robot–child interactions, as is done in [28], this is neither the aim nor a contribution of the work presented here. Nor do we conduct a larger study of any kind. Instead, we focus on the technical implementation of using robots to convey basic robotics concepts to pre-school children and evaluate it in a pilot run.

2.3. Socially Interactive Robot Platforms

As motivated above, we want to use a robot to conduct an early introduction to robotics for children in kindergarten. There is a large choice of robot kits that are commonly used to let young learners build and program robots. Our focus, however, is to offer an interesting yet informative experience of basic robotics principles, which is why we chose to let a robot itself conduct large parts of the presentation. Softbank Robotics’ Pepper robot (Figure 1) is an ideal platform for this endeavour. While the robot hardware is not very sophisticated, humans find it very appealing to interact with.

Pepper was designed as a human–robot interaction (HRI) platform with a number of applications. For instance, the H2020 MuMMER project (http://mummer-project.eu) uses Pepper for its HRI activities [29]; some of its main applications are information kiosks in large shopping malls. Results on how humans react to Pepper have been published, for example, in [30]. The work most closely related to ours is [31], where Pepper is used for remote teaching activities. Its results suggest that Pepper is a good platform for HRI, particularly when dealing with children. Apart from that, Pepper has been used in a number of different applications, such as museum tour guides or information kiosks at airports or hospitals (e.g., [32,33,34,35,36,37]). Following an analysis in [38], Pepper is a good choice as an affective anthropomorphic platform. In fact, Pepper has already been used (successfully) as a teaching tool for young children [39].

3. Conceptual Design

3.1. Course Preparation

As a first step in our endeavour, we posed ourselves a set of questions in order to design an event that would meet the vision sketched above. First, we thought about what is important in robotics, and which parts of it we want to, and are able to, convey to children of kindergarten age. Secondly, we looked at which sub-audience(s) of kindergarten age we can address with these topics and how heterogeneous this audience may really be. Then, we examined how we can make the children relate to what they should learn and understand. Finally, we considered how the event can be entertaining and informative at the same time and how we can assess what the children actually memorized and learned.

We coordinated and discussed important points about the lecture with the educators of the childcare facility. They pointed out that a lecture in the form of frontal teaching was very new to the children and were curious whether the children would be able to follow it. As a supporting measure, they proposed that all the children should bring their chairs into the gym where the lecture took place, which was intended to help the children stay disciplined. The educators also pointed out that, given the children’s shorter attention span, the lecture should include sections that require less attention and that actively involve the children. For instance, we included video sequences of robot failures, e.g., robots tipping over while trying to open a door, and incorporated a quiz section at the end of the lecture. Another remark from the educators, which was also on our checklist, was that all examples should be chosen from the children’s own experience. While our initial intention was to present the lecture only to final-year pre-school children, the educators asked us to open the lecture to the younger children of the facility as well. The educators were aware that, with respect to attention span and discipline, this could bear certain risks for conducting the lecture, but they also wanted to expose the younger children to the social robots.

In the remainder of this section, we will present and discuss further details in three blocks, namely in subsections on content, form, and quiz.

3.2. Content

As a first step, we had to select the most important elements of robotics that we wanted to convey to young children. Drawing on previous experience in teaching robotics in higher education, we concluded that any presentation on mobile autonomous robots needs to include information on the robot hardware itself, on how the system knows where it is, and on how it gets from A to B to solve its task. In more detail, the following agenda forms the basis for our education event:

- Introduction
- Sensors
  - different sensors available in general
  - particular sensors of the robot Pepper
  - at least one sensor (concept) in more detail
- Perception/Mapping
  - different kinds of maps
  - building a map
- Localization
- Navigation

While many of the topics could be detailed in arbitrary depth, we want to get across the main idea of the basic concepts. Given our target audience, this requires a specific form of presentation.

3.3. Form

To be able to convey the content above to children at kindergarten age, we needed to find examples and explanations that the children can relate to. Furthermore, almost none of the children in our target audience is able to read yet. Hence, using text is not an option in most cases, and even images such as pictograms have to be chosen carefully.

One of the main decisions we made here was to let a robot present the content for large parts of the event. This appeared useful for several reasons. For one, the fascination of a machine talking to the children should raise their attention. For another, the information appears even more credible if it is presented by the very machine that the information is about. Finally, having the robot read out textual information partly solves the reading issue mentioned above.

Even the most sophisticated robots cannot keep up with the variability and fallibility of humans, let alone young children, and a robot would not be flexible enough to handle every situation that might arise. Therefore, the parts presented by the robot are interleaved with parts given by human moderators, who answer questions and handle unexpected events.

Even though robots present an exciting element to (very) young children, the total time of the event must be chosen carefully so as not to overload the audience. In addition, the attention span of children of kindergarten age is rather limited. One rule of thumb estimates the attention span for learning as

$$\text{attention span for learning (min)} = \text{chronological age (years)} + 1.$$

Others estimate the ability to concentrate on learning at about 15 min for children aged 5–7 years [40]. Earlier studies found that children of kindergarten age can concentrate on playing with a toy they like for not much more than half an hour [41]. Admittedly, these figures refer to focusing on homework exercises and playing with a toy; following a presentation is a different thing. An additional factor affecting the children’s attention span was the form of presentation. Children in this age group do not have much experience with frontal teaching and first need to get used to the new situation. This is why we chose to brighten up the content with some rather shallow elements, such as fun videos of robots falling over, during which concentration levels could drop and the children could relax.

For the content itself, we tried to come up with examples and illustrations that as many of the children as possible can relate to. We give a set of examples of these choices in the following. To introduce the perception hardware of the robot, we first ask the children about their own senses. This should make it easier for them to realize that robots need such senses too, just in the form of hardware. As an example sensor, we discuss sonar because it has two useful properties. First, bats use sonar to orient themselves, and many children might have heard of this already. Second, many cars use sonar sensors in their parking assistance systems, so many of the children might know the beeping noises that their family car or some other vehicle makes. We use exactly this latter example to explain how time of flight works, together with the echo metaphor. Some pictures from the illustration of the sonar sensor are given in Figure 2.
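The echo principle behind the parking-sensor example can be written down in a few lines. The sketch below assumes a speed of sound of 343 m/s (dry air at about 20 °C); because the pulse travels to the obstacle and back, the round-trip time is halved. The function name and the example timings are illustrative.

```javascript
// Assumed speed of sound in dry air at about 20 °C, in metres per second.
const SPEED_OF_SOUND_M_PER_S = 343;

// Time of flight: the sound travels to the obstacle and back, so the
// distance to the obstacle is half of speed × round-trip time.
function echoDistanceMetres(roundTripSeconds, speed = SPEED_OF_SOUND_M_PER_S) {
  if (roundTripSeconds < 0) {
    throw new RangeError("round-trip time must not be negative");
  }
  return (speed * roundTripSeconds) / 2;
}

// A parking sensor that hears its echo after 10 ms: obstacle about 1.7 m away.
console.log(echoDistanceMetres(0.01).toFixed(3)); // 1.715
// A clap echoing back after 0.2 s in a large hallway: wall about 34 m away.
console.log(echoDistanceMetres(0.2).toFixed(1)); // 34.3
```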

To explain the localization (and later the navigation) method, we used a toy horse barn that can be taken apart. By overlaying a top-view picture of the stable with a picture of it partly disassembled, with only the walls remaining, we could illustrate the laser-based localization of a robot whose job, in our story, was to clean the barn. Images from the horse barn illustration are given in Figure 3.
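The barn illustration can also be expressed as a tiny program. The sketch below is a deliberate simplification and not the localization method running on Pepper: it uses a hypothetical grid map of the barn, replaces the laser scanner with four distance beams, and keeps every free position whose expected readings match the measured ones. In a symmetric barn, two positions can match the same scan, which shows why real robots combine several measurements while moving.

```javascript
// Hypothetical top-view map of the barn: '#' marks walls, '.' marks free floor.
const BARN = [
  "#########",
  "#...#...#",
  "#.......#",
  "#...#...#",
  "#########",
];

// Four beams (north, east, south, west) as a crude stand-in for a laser scan.
const DIRECTIONS = [[-1, 0], [0, 1], [1, 0], [0, -1]];

// Count the free cells between (row, col) and the next wall in one direction.
// The map is enclosed by walls, so every ray terminates.
function castRay(map, row, col, [dr, dc]) {
  let steps = 0;
  let r = row + dr;
  let c = col + dc;
  while (map[r][c] !== "#") {
    steps += 1;
    r += dr;
    c += dc;
  }
  return steps;
}

// Localization by scan matching: keep every free cell whose expected
// readings equal the measured ones.
function localize(map, measured) {
  const candidates = [];
  for (let row = 0; row < map.length; row++) {
    for (let col = 0; col < map[row].length; col++) {
      if (map[row][col] !== ".") continue;
      const expected = DIRECTIONS.map((d) => castRay(map, row, col, d));
      if (expected.every((value, i) => value === measured[i])) {
        candidates.push([row, col]);
      }
    }
  }
  return candidates;
}

// Two corners of the barn look identical to the robot.
console.log(localize(BARN, [0, 2, 2, 0])); // [ [ 1, 1 ], [ 1, 5 ] ]
```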

3.4. Quiz

As with any teaching activity, even at this very young age, we wanted to include some form of test to check whether the children had memorized the most important elements of the lecture. To make the quiz more entertaining and exciting, and to invite the children to participate actively, we opted for a team-style approach that includes robots in a game. The participants are divided into two teams, red and blue. Each team is assigned one of two identical robots, LEGO Mindstorm EV3 in our case. The two robots are placed on a playing field that resembles a drag-strip-style race track, with one lane marked red and the other blue. A picture of the race track and one of the robots is given in Figure 4.

The quiz works as follows. The teams are asked questions referring to content presented earlier in the lecture. Much like in the “Runaround” quiz show (https://en.wikipedia.org/wiki/Runaround_(game_show)), the children can select one of three answers. For every correct answer, the team’s robot moves forward a couple of blocks; for a wrong answer, it either lifts its forklift, as if shrugging its shoulders, or turns in a circle instead, thus not getting any closer to the finish line.
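The quiz rules can be summarized as a small decision function. In this sketch, the number of blocks per correct answer, the length of the track, the alternation between the two "shrug" behaviours, and all names are assumptions for illustration; the text only states that a robot moves forward a couple of blocks for a correct answer and either lifts its forklift or turns in a circle otherwise.

```javascript
// Hypothetical names for the two behaviours shown after a wrong answer.
const WRONG_ANSWER_ACTIONS = ["liftForklift", "turnInCircle"];

// Decide what a team's robot does after one question and where it ends up.
// blocksPerCorrectAnswer and finishLine are assumed values, not taken from the event.
function quizStep(position, chosenAnswer, correctAnswer, options = {}) {
  const { blocksPerCorrectAnswer = 2, finishLine = 10, questionIndex = 0 } = options;
  if (chosenAnswer === correctAnswer) {
    // Move forward, but never past the finish line.
    const newPosition = Math.min(position + blocksPerCorrectAnswer, finishLine);
    return { action: "moveForward", blocks: newPosition - position, position: newPosition };
  }
  // Wrong answer: alternate between the two behaviours; the robot stays where it is.
  const action = WRONG_ANSWER_ACTIONS[questionIndex % WRONG_ANSWER_ACTIONS.length];
  return { action, blocks: 0, position };
}

// Example: a team answers "B" to a question whose correct answer is "B".
console.log(quizStep(0, "B", "B")); // { action: 'moveForward', blocks: 2, position: 2 }
```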

Apart from offering a very engaging and entertaining element, the quiz offers another opportunity to explain several robotics concepts in practice. After the quiz, the children can tele-operate the Mindstorm robots with a joystick. In addition, the moderators explain what the robots actually need to do to perform their task: the robots need to read their color sensor’s value to orient themselves on the race track, decide how long to let the motors turn, etc.
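Deciding how long to let the motors turn boils down to converting a distance on the track into a wheel rotation. The sketch below assumes wheels with a diameter of 5.6 cm, track blocks of 20 cm, straight driving, and no wheel slip; none of these values are given in the text.

```javascript
// Assumed geometry, not taken from the event: wheel diameter and block length.
const WHEEL_DIAMETER_CM = 5.6;
const BLOCK_LENGTH_CM = 20;

// Motor rotation (degrees) needed to drive straight over a number of blocks,
// assuming both wheels turn by the same amount and do not slip.
function motorDegreesForBlocks(blocks, wheelDiameterCm = WHEEL_DIAMETER_CM, blockLengthCm = BLOCK_LENGTH_CM) {
  const distanceCm = blocks * blockLengthCm;
  const wheelCircumferenceCm = Math.PI * wheelDiameterCm;
  return Math.round((distanceCm / wheelCircumferenceCm) * 360);
}

// How long the motors must run at a constant speed given in degrees per second.
function runTimeSeconds(degrees, speedDegreesPerSecond) {
  return degrees / speedDegreesPerSecond;
}

const degrees = motorDegreesForBlocks(2);
console.log(degrees); // 819
console.log(runTimeSeconds(degrees, 360)); // 2.275
```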

4. Implementation

We were aware that pre-school children would probably not be very enthusiastic about listening to university lecturers teaching them the basics of mobile robotics. To engage them with this exciting topic, which also requires a lot of abstract thinking, we decided to have the mobile service robot Pepper present the lecture contents. We further integrated LEGO Mindstorm EV3 robots in our Runaround-style quiz: for each correct answer, the robot of the team that gave it moved some squares towards the finish line.

4.1. Technical Realization

As a humanoid service robot, Pepper has rather limited capabilities compared to, for instance, TIAGo [42], but it is very appealing to the humans who interact with it. Pepper comes with a number of basic capabilities, such as speech synthesis, speech recognition, or face detection, which come pre-installed or can be downloaded from the Softbank Robotics App Store. Moreover, the robot’s appeal immediately encourages passers-by to interact with it. We learnt this on numerous occasions when we displayed the robot to the broader public.

In particular, it comes with a mode called autonomous life, in which Pepper reacts to a set of sentences, answering questions and making human-like gestures while speaking. While speech detection is quite limited in crowded environments, Pepper can nonetheless be used quite well as an information kiosk.

Figure 1 shows that it is equipped with a touch panel that can be used to display information and request user inputs. Information can be exchanged via an HTTP webserver.

In our setup, we use the robot middleware ROS [43] to interact with the sensors and actuators of the robot. We bridge the NAOqi API (http://doc.aldebaran.com/2-5/index_dev_guide.html) with ROS using the rosbridge (http://wiki.ros.org/rosbridge_suite) package. This allows us to access Pepper’s motors and further built-in capabilities from within the ROS ecosystem. In the ERiKA lecture, we particularly made use of Pepper’s built-in gestures and its speech synthesis module. The whole content of the lecture was prepared as an HTML5 slide show using the reveal.js (https://revealjs.com/) slide presentation system, which is implemented in Javascript. In addition to a number of nice presentation features, such as slide transitions and overview slides, reveal.js comes with a multiplex feature, which allows the presentation to be controlled from different hosts. This way, we could advance the lecture and select the next slide either from Pepper’s touch display or from an external presentation laptop.
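Under the hood, rosbridge exchanges JSON messages with the browser over a WebSocket, and roslibjs generates these messages for the application. To show what such messages look like, the sketch below builds an advertise and a publish message of the rosbridge v2 protocol by hand. The topic name /speech and the std_msgs/String message type are hypothetical choices for illustration; the setup described here used ROS actions rather than a plain topic.

```javascript
// Build rosbridge v2 protocol messages by hand (roslibjs normally does this).
// "advertise" announces that this client will publish on a topic of a given type.
function rosbridgeAdvertise(topic, type) {
  return JSON.stringify({ op: "advertise", topic, type });
}

// "publish" sends one message on the topic; roslibjs advertises a topic
// before it publishes on it.
function rosbridgePublish(topic, msg) {
  return JSON.stringify({ op: "publish", topic, msg });
}

// Hypothetical topic and message type, for illustration only.
console.log(rosbridgeAdvertise("/speech", "std_msgs/String"));
// {"op":"advertise","topic":"/speech","type":"std_msgs/String"}
console.log(rosbridgePublish("/speech", { data: "Hello, children!" }));
// {"op":"publish","topic":"/speech","msg":{"data":"Hello, children!"}}
```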

ROS was connected with some additional Javascript code via the

ROS Javascript library (

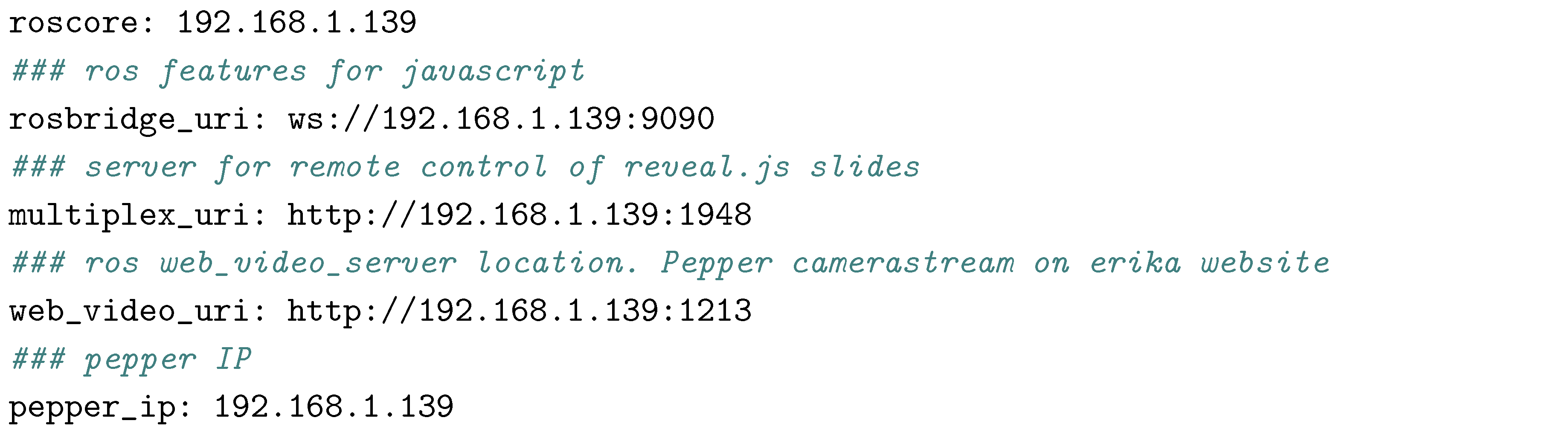

http://wiki.ros.org/roslibjs). With this library, we were able to communicate user inputs from the tablet to the ROS high-level application and vice versa to display live camera images from the robot in the ERiKA presentation. To connect the browser with ROS, the rosbridge needs to be connected on the system. In our configuration, the following hosts and ports were used:

![Mti 02 00064 i001]()

At the startup of the presentation, one needs to connect to the rosbridge by calling the ROSLIB initialisation function:

![Mti 02 00064 i002]()
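A minimal sketch of such an initialisation, based on the public roslibjs API (ROSLIB.Ros with its connection, error, and close events), could look as follows. The host name in the usage comment is a placeholder, and 9090 is the default port of the rosbridge WebSocket server; the hosts and ports of our configuration are those listed above. The ROSLIB object is passed in as a parameter so that the sketch does not depend on a browser global.

```javascript
// Minimal sketch: open a connection from the presentation front-end to rosbridge.
// ROSLIB is the object exported by roslib.js (a browser global when loaded via
// a script tag); it is passed in so the function does not rely on that global.
function connectToRosbridge(ROSLIB, host, port = 9090, log = console.log) {
  // 9090 is the default port of the rosbridge WebSocket server.
  const ros = new ROSLIB.Ros({ url: `ws://${host}:${port}` });
  ros.on("connection", () => log("Connected to rosbridge at " + host));
  ros.on("error", (error) => log("Error connecting to rosbridge: " + error));
  ros.on("close", () => log("Connection to rosbridge closed"));
  return ros;
}

// Usage in the browser (placeholder host name):
// const ros = connectToRosbridge(window.ROSLIB, "pepper.local");
```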

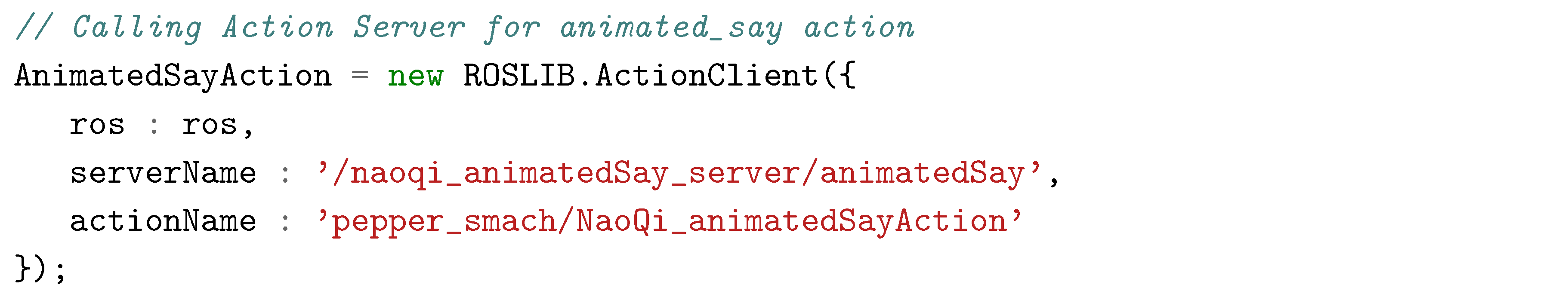

As mentioned before, we mainly used the animatedSay action from NAOqi for the lecture. This was encapsulated as a ROS action. The corresponding Javascript code for starting the ROS action is:

![Mti 02 00064 i003]()
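A comparable call can be sketched with the roslibjs ActionClient and Goal classes. The server name /animated_say, the action type erika_msgs/AnimatedSayAction, and the goal field text are hypothetical placeholders, since the actual action definition is not given in the text.

```javascript
// Minimal sketch: ask a ROS action server wrapping NAOqi's animatedSay to speak a text.
// The server name, action type, and goal field are hypothetical placeholders.
function sayAnimated(ROSLIB, ros, text, onDone = () => {}) {
  const client = new ROSLIB.ActionClient({
    ros,
    serverName: "/animated_say",
    actionName: "erika_msgs/AnimatedSayAction",
  });
  const goal = new ROSLIB.Goal({
    actionClient: client,
    goalMessage: { text },
  });
  // Called when the action server reports a result, e.g., after the robot has spoken.
  goal.on("result", (result) => onDone(result));
  goal.send();
  return goal;
}

// Usage in the browser, reading out the current reveal.js slide:
// sayAnimated(ROSLIB, ros, Reveal.getCurrentSlide().textContent);
```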

For the children, it was very exciting that Pepper (with some extra Javascript and ROSLIB code) was able to read out all the content presented on the slides. This is a particularly important feature, as pre-school children are, in general, not able to read.

For the quiz, two teams of pre-school children competed against each other: the blue team against the red team. As described in Section 3.4, three different answers could be selected for each question. The quiz was organized as a race between two LEGO Mindstorm robots (see Figure 4). For each correct answer, the robot moved one or more squares forward; for an incorrect answer, it simply lifted its forklift, as if shrugging its shoulders, or turned in a circle. A team answering all questions correctly would reach the finish line.

Technically, this was realised as follows. The EV3 can be controlled under the Robot Operating System (see, for instance, http://www.ros.org/news/2016/03/ros-on-lego-mindstorms-ev3.html). Using this feature, we were easily able to encapsulate control commands for the LEGO EV3 as ROS actions and make them available via ROSLIB to our HTML front-end application:

![Mti 02 00064 i004]()

The above code shows the Javascript definition for moving the first of the two EV3 robots (called boobe3e_1) forward in case one of the teams selected a correct answer.

In summary, our system uses rosbridge to access NAOqi functionality within ROS. All functions were encapsulated as ROS actions. Furthermore, the ROS Javascript library roslibjs allowed us to call NAOqi functions from the HTML front-end that displayed the lecture and the quiz on Pepper’s tablet. Using ROS also allowed us to access and operate the LEGO Mindstorm EV3 robots used in the quiz from within the HTML presentation. We had good experiences with the reveal.js slide presentation system, which also allowed us to run the slide show in multiplex mode, i.e., to control the presentation from an external laptop as well as from Pepper’s tablet. This came in very handy, particularly for the Runaround quiz, where the two teams of children had to select the correct answer on the tablet. We give some more information about the quiz in the next sections.

4.2. Pilot Run

As a pilot run, we conducted a first instance of the above concept in a regional kindergarten.

4.2.1. Setup

After arriving at the kindergarten, we set up the robot Pepper and the Mindstorms for the quiz in the gym. The robots were all connected to a local access point. Laptops were used to run the presentation on a projector and to start up the robots’ software. A picture of the room is given in Figure 5.

While we were setting up, the pre-school children were given badges indicating their participation in the quiz scheduled for later. They spent the time colouring these badges, which divided them into two equally sized teams: a red team and a blue team. The team badges are depicted in Figure 6.

4.2.2. Lecture

The children entered the gym bringing their own chairs with them. It took some time for everybody to find a suitable position and for the children to calm down a little. Once everything had settled, Pepper began with an introduction. Throughout the lecture, the authors of this paper and Pepper presented the slides in an interleaved fashion. While Pepper was ‘reading’ the slides’ content, the human teachers reacted to questions and remarks from the audience and tried to keep the young audience continuously engaged.

4.2.3. Quiz

To give an impression of the quiz, we show two questions and different states of the graphical user interface (GUI) in Figure 7. The upper row shows two questions in their initial state: on the left, a question about how many senses humans have; on the right, a question about what localization is about. The children have three answers to choose from. While we tried to illustrate the possible answers with pictures as much as possible, we still read the three options out loud to allow for a well-informed decision. After both teams have chosen an answer, a ten-second countdown is started (Figure 7, lower row, left image). While there is time left, the teams can still change their answer. When the time is up, the quiz system automatically highlights the right answer (Figure 7, lower row, right image) and triggers the appropriate actions of the teams’ robots on the race track. That is to say, if a team gives the right answer, its robot moves forward; if the team’s answer is wrong, its robot does not move forward but instead turns in a circle or only lifts and lowers its forklift, not getting any closer to the goal. Luckily, both groups reached the goal line at the same (final) step. This, however, might have depended on moderator intervention when asking the questions. As a prize for the contestants in the quiz, we prepared certificates and gave out little papercraft sheets (taken from https://www.softbankrobotics.com/emea/en/paper-toys) for building a Pepper figure at home.
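The countdown rule can be expressed as a small function that determines which answers count. In this sketch, answers are given as timestamped events; the countdown starts once both teams have answered, later changes are accepted until it expires, and anything after that is ignored. The event format and the function name are assumptions, not the actual quiz implementation.

```javascript
// Determine the answers that count for one question.
// events: [{ team: "red" | "blue", answer: "A" | "B" | "C", timeMs: number }], sorted by time.
// The countdown starts as soon as both teams have chosen an answer; until it
// expires, a team may still change its answer. Later events are ignored.
function finalAnswers(events, countdownMs = 10000) {
  const current = {};
  let deadline = Infinity;
  for (const { team, answer, timeMs } of events) {
    if (timeMs > deadline) break; // countdown expired: answers are locked
    current[team] = answer;
    if (deadline === Infinity && "red" in current && "blue" in current) {
      deadline = timeMs + countdownMs;
    }
  }
  return current;
}

const example = [
  { team: "red", answer: "A", timeMs: 1000 },
  { team: "blue", answer: "B", timeMs: 3000 }, // both answered: countdown ends at 13000
  { team: "red", answer: "C", timeMs: 9000 }, // change accepted
  { team: "blue", answer: "A", timeMs: 14000 }, // too late, ignored
];
console.log(finalAnswers(example)); // { red: 'C', blue: 'B' }
```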

4.2.4. Free Play

After the quiz, we gave the pre-schoolers the opportunity to teleoperate the Mindstorm robots with a joystick. Unfortunately, one of the two robots had slight network issues, which is why its controller had to be restarted every couple of minutes. Nevertheless, the children were enthusiastic about steering the robot around the gym. In addition, we let the children and the educators freely interact with Pepper running the manufacturer-supplied autonomous life mode, and we had Pepper say some utterances on request. Finally, the children (and educators) were also given the chance to take pictures with Pepper and the LEGO robots.

5. Pilot Evaluation

To improve the initial design and its implementation, we rely on the responses and feedback from different stakeholders. We give our own observations as well as children’s and educators’ feedback in the following.

5.1. Observations

During the pilot event and in later reflections, the authors made a number of observations, which we briefly report in the following.

The overall reception of the event by children and educators was very good. While the organizational part needed improvising at times, this can be assumed to be normal for a pilot. In retrospect, some of the video sequences in the presentation appeared to be too long. This is most likely due to the younger children having a shorter attention span than expected. Some video clips should therefore rather be split into image sequences, which would allow for a finer-grained reaction to the audience. For example, stopping a video to react to a remark from one of the children is rather complicated. In explaining the time-of-flight concept, we showed a formula for calculating the distance to an obstacle from the time the sound reflection needs to come back to the sensor. This formula appeared too abstract for children of kindergarten age. We will probably keep it, but we will add that waiting for the echo of a clap in a large, reflective hallway is essentially the same thing.

A general improvement with respect to the quiz could be to introduce intermediate summary slides after every section of the presentation. This allows for a quick repetition shortly after new content has been presented. We hope that this increases the memorization of facts and hence also the children’s performance in the quiz later on.

5.2. Children’s Feedback

After the event, the kindergarten collected feedback from the children. We give the translated statements, grouped into criticism and positive feedback:

Criticism

I didn’t like that one of the smaller robots did not work properly.

It wasn’t so nice that the robots in the video fell over.

It was a pity that Pepper did not drive around.

It was a pity that Pepper did not recognize all commands/questions.

The smaller children should have stayed for the quiz.

Positive feedback

Pepper looked really nice.

It was cool to be able to drive around with the smaller robots and that Pepper talked.

The films were funny.

The quiz was nice.

It was nice that Pepper could talk and that we could see her.

I like that we were allowed to drive around with the small robots.

The quiz was great.

There was a prize.

That we could talk with Pepper.

I can now build a paper-Pepper with my dad.

We could tele-operate the small robots with a joystick.

5.3. Educators’ Feedback

Some time after the pilot run, we also received feedback from the educators of the kindergarten, which we summarize in the following. Overall, the event was perceived as very fascinating for the children, especially meeting the robot Pepper in person. Assuming an average attention span of 20–30 min for pre-school children (age 5–6), the attention and concentration observed at the event, in particular for the younger children (age 3–4), were extremely high. For the kids, it was an exciting experience with lots of new information to process.

In the setting of the event, it was very important to connect the new content to the children’s reality. Building on the children’s prior experiences and using practical examples allows for a better understanding. At kindergarten age, generic terms and concepts are present only in a very limited form. Every activity and video clip was fascinating for the children. Concentration could have been increased even further if the event had been given to a smaller group; the younger children posed a certain distraction. The event was ambitious for all children and an amazing challenge for the pre-schoolers. The children are not used to frontal forms of teaching with such large audiences. They rather know such settings from concerts or cinema events, where they take on a passive role and expect to be entertained. The quiz and the free play with the robots served as a perfect conclusion of the event.

6. Conclusions

In this paper, we reported on our efforts to design, implement, and conduct an early introduction to robotics for children at kindergarten age. We laid out our conceptual design and methodological considerations, drawing on our experience in teaching robotics at higher education institutions. Particularly challenging was dealing with the children’s shorter attention span, finding examples from the everyday experience of pre-school children, and boiling down complex content so that children of that age would follow a frontal lecture for about an hour. Note that this was the first time the children had been exposed to frontal teaching in their kindergarten. They usually know learning only in the form of circle time (sitting together in a circle), so the format was an additional challenge for them. Furthermore, to let more children experience the excitement of having a humanoid robot on the premises, children below the age of 5 were also allowed to attend the lecture. This caused additional distractions during the lecture.

In summary, Pepper was the right choice for presenting the content. Having the robot read the content aloud let us overcome the hurdle that pre-schoolers, in general, cannot read, and it also raised the children’s attention. To cater to the children’s more limited attention span, we also integrated lighter content elements such as video clips of robots tipping over. For the quiz, we used LEGO Mindstorm robots to activate the children for the second hour of the event. After the quiz, the children could also tele-operate and play with the Mindstorm robots, which they greatly enjoyed. On top of that, each child participating in the quiz received a participation certificate, and all the children got a cut-out sheet to build their own cardboard Pepper robot.

We received very positive feedback from the children as well as from the educators. We also learnt some lessons. Trying to teach the formula for calculating the time of flight of an ultrasound signal was clearly not appropriate for this age group. However, when we repeated the lecture with updated material a few weeks later for 4th-grade primary school pupils, they easily understood this formula. Overall, this new learning concept was well received in the local communities, and we received a number of requests to repeat the event in other kindergartens and pre-schools.
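
For reference, the relation behind such a lesson is presumably the standard echo-ranging formula (an assumption, since the lecture material is not reproduced here): an ultrasound pulse travels to an obstacle at distance d and back at the speed of sound v, so its time of flight t satisfies

$$ t = \frac{2d}{v} \qquad\Longleftrightarrow\qquad d = \frac{v\,t}{2} $$

As a worked example, with v ≈ 343 m/s (speed of sound in air at about 20 °C) and an obstacle 1.715 m away:

$$ t = \frac{2 \cdot 1.715\,\mathrm{m}}{343\,\mathrm{m/s}} = 0.01\,\mathrm{s} = 10\,\mathrm{ms} $$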

At the start of the presentation, one needs to connect to the rosbridge server by calling the ROSLIB initialisation function.
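
A minimal sketch of this connection step with roslibjs follows. It assumes the rosbridge WebSocket server runs on the robot at rosbridge's default port 9090; the IP address in the usage comment is a placeholder. The ROSLIB namespace is passed in as a parameter (in the browser, the global `ROSLIB` object provided by `roslib.min.js`) so the function can also be exercised with a stub; this is an illustrative sketch, not the original code.

```javascript
// Minimal sketch (assumption): open a rosbridge connection with roslibjs.
// `roslib` is the ROSLIB namespace; in the browser, pass the global ROSLIB
// provided by roslib.min.js. The URL is a placeholder, not the address
// used in the actual setup.
function connectToRosbridge(roslib, url) {
  const ros = new roslib.Ros({ url: url });

  // roslibjs emits these events on the Ros object.
  ros.on('connection', () => console.log('Connected to rosbridge at ' + url));
  ros.on('error', (error) => console.error('rosbridge error:', error));
  ros.on('close', () => console.log('Connection to rosbridge closed'));

  return ros;
}

// Usage in the presentation page (hypothetical robot IP):
// const ros = connectToRosbridge(ROSLIB, 'ws://192.168.0.10:9090');
```

The returned `Ros` object is then shared by all later calls, so the connection is opened once when the slides load rather than per utterance.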

As mentioned before, we mainly used the animatedSay action from NAOqi for the lectures. We encapsulated it as a ROS action, which the presentation starts from JavaScript code via ROSLIB.
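
The following minimal sketch shows how such a goal can be sent with the `ActionClient` and `Goal` classes of roslibjs. The action server name `/animated_say`, the action type `my_pepper_msgs/AnimatedSayAction`, and the goal field `text` are hypothetical placeholders for the custom action wrapping animatedSay, not the names of the original implementation. As above, the ROSLIB namespace is passed in explicitly.

```javascript
// Minimal sketch (assumption): start an animated-speech ROS action from
// JavaScript via roslibjs. Server name, action type, and goal field are
// hypothetical placeholders for the custom action wrapping animatedSay.
function startAnimatedSay(roslib, ros, text, onDone) {
  const client = new roslib.ActionClient({
    ros: ros,
    serverName: '/animated_say',                    // hypothetical action server
    actionName: 'my_pepper_msgs/AnimatedSayAction'  // hypothetical action type
  });

  const goal = new roslib.Goal({
    actionClient: client,
    goalMessage: { text: text }                     // hypothetical goal field
  });

  // The 'result' event fires when the action server reports completion.
  goal.on('result', (result) => {
    if (onDone) onDone(result);
  });

  goal.send();
  return goal;
}

// Usage (hypothetical): startAnimatedSay(ROSLIB, ros, 'Hello children!', () => console.log('done'));
```

Whether a newly sent goal preempts one that is still running depends on how the action server is implemented; waiting for the `result` event before sending the next utterance avoids relying on that behaviour.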

For the children, it was very exciting that Pepper (with some extra JavaScript and ROSLIB implementation) was able to read out all the content presented on the slides. This is a particularly important feature, as pre-school children are, in general, not able to read.
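
Reading out a whole slide can be sketched as splitting its text into sentences and sending them one at a time, waiting for each utterance to finish. This is an illustrative sketch, not the original implementation: `speak` is a hypothetical Promise-returning wrapper around the speech action, and the slide text is assumed to be available as plain text (in a browser, e.g., via the slide element’s `textContent`).

```javascript
// Minimal sketch (assumption): read out a slide's text one sentence at a time.
// `speak` is a hypothetical callback that sends a single utterance to the
// robot (e.g. by wrapping the ROS action goal) and returns a Promise that
// resolves once the robot has finished speaking.

// Split plain slide text into sentences, keeping the end punctuation.
// This simple punctuation-based split also breaks at decimal points.
function splitIntoSentences(text) {
  const normalized = text.replace(/\s+/g, ' '); // collapse line breaks and runs of spaces
  const parts = normalized.match(/[^.!?]+[.!?]*/g) || [];
  return parts.map((s) => s.trim()).filter((s) => s.length > 0);
}

// Speak the sentences strictly one after another so that utterances never
// overlap; resolves to the number of sentences spoken.
async function readOutSlide(slideText, speak) {
  const sentences = splitIntoSentences(slideText);
  for (const sentence of sentences) {
    await speak(sentence);
  }
  return sentences.length;
}

// Usage in the browser (hypothetical): readOutSlide(slideElement.textContent, speakViaRos);
```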
