Intelligent Human–Computer Interaction for Building Information Models Using Gesture Recognition

Abstract

1. Introduction

2. Related Work

2.1. HCI Methods in BIM

2.2. Sensory Interaction Technology

2.3. Gesture Interaction

3. Gesture Design for HCI in 3D Model Manipulation

3.1. Gesture Recognition

3.2. Gesture Design

4. Gesture Interaction Algorithm for 3D Models

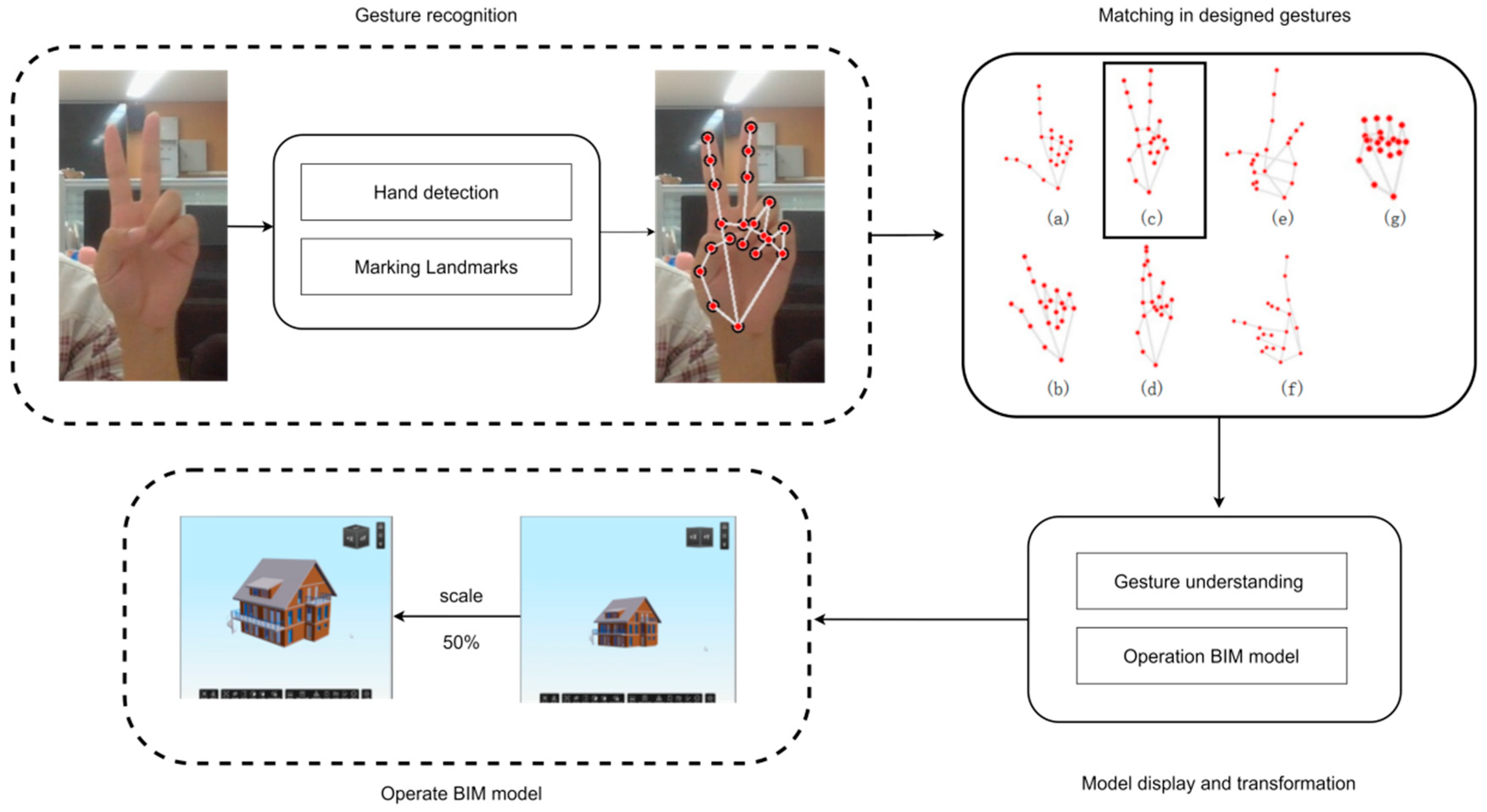

4.1. Overall Framework

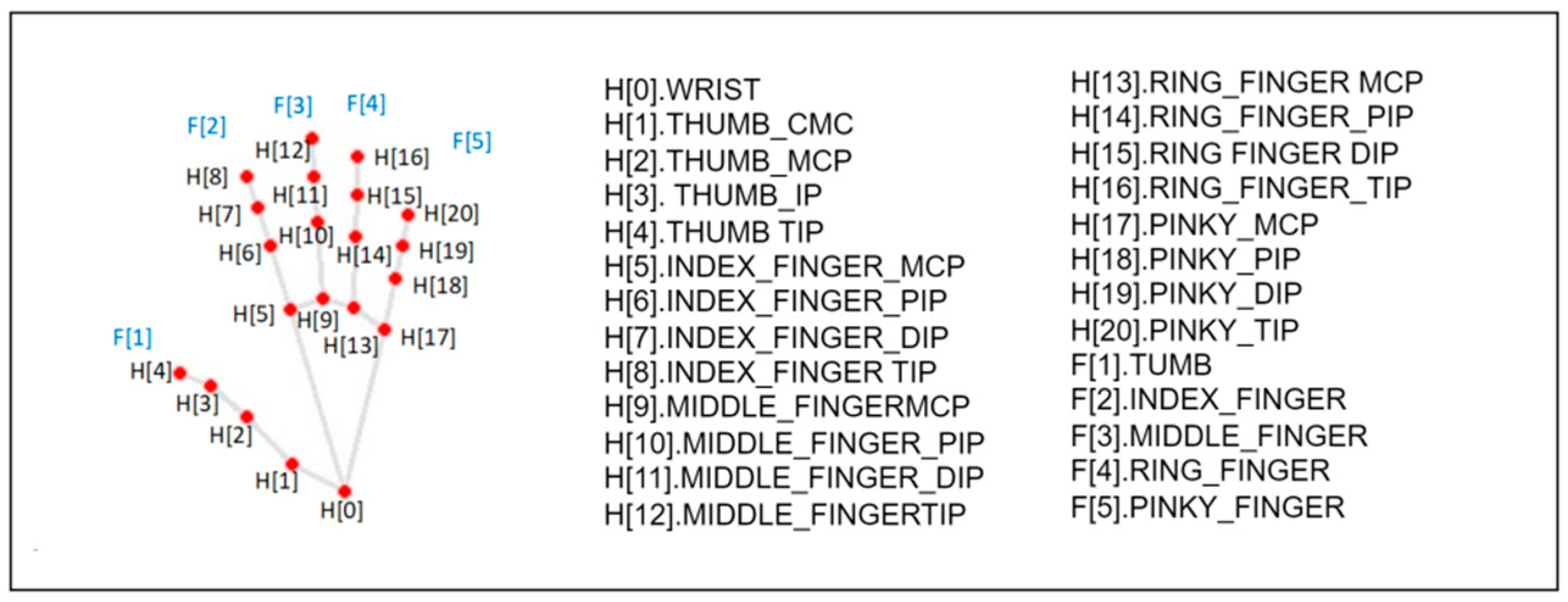

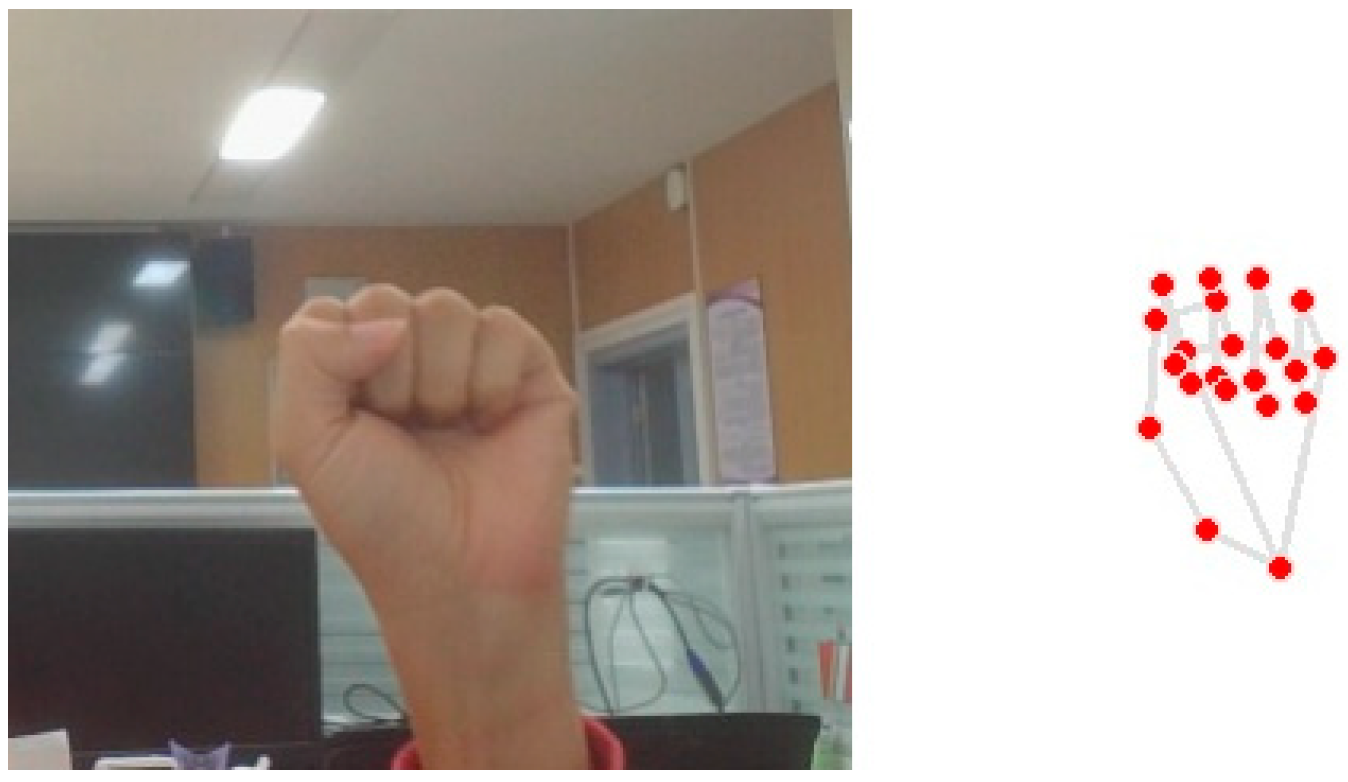

4.2. Hand Landmarks Configuration

1. Finger angle computation

2. Fingertip-to-palm distance calculation method
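The two cues listed above can be sketched as follows. The sketch assumes the 21-landmark hand layout used by MediaPipe Hands (0 = wrist, 4 = thumb tip, 8 = index fingertip, and so on), which matches this paper's use of H[8] for the index fingertip. The remaining details are illustrative assumptions, not the authors' method:

- the joint at which the finger angle is measured;
- the palm center, taken as the mean of the wrist and the four finger bases;
- normalization by the wrist-to-middle-finger-base length;
- the 150° and 0.9 thresholds in the hypothetical `is_extended` helper.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]

# Assumed landmark layout (MediaPipe Hands order): 0 = wrist; thumb 1-4, index 5-8,
# middle 9-12, ring 13-16, pinky 17-20. Per finger F[k]: (base, first joint, tip).
FINGERS = {0: (1, 2, 4), 1: (5, 6, 8), 2: (9, 10, 12), 3: (13, 14, 16), 4: (17, 18, 20)}
PALM_POINTS = (0, 5, 9, 13, 17)  # wrist plus the four finger bases


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle ABC at vertex b in degrees; 180 means a, b and c are collinear."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1, n2 = math.hypot(*v1), math.hypot(*v2)
    if n1 == 0.0 or n2 == 0.0:
        return 0.0
    cos = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))  # clamp float noise


def finger_angle(h: Sequence[Point], finger: int) -> float:
    """Bend angle of finger F[finger], measured at its first joint."""
    base, joint, tip = FINGERS[finger]
    return joint_angle(h[base], h[joint], h[tip])


def tip_to_palm_ratio(h: Sequence[Point], finger: int) -> float:
    """Fingertip-to-palm-center distance divided by the wrist-to-middle-base length."""
    cx = sum(h[i][0] for i in PALM_POINTS) / len(PALM_POINTS)
    cy = sum(h[i][1] for i in PALM_POINTS) / len(PALM_POINTS)
    palm_size = math.dist(h[0], h[9])
    if palm_size == 0.0:
        return 0.0
    return math.dist(h[FINGERS[finger][2]], (cx, cy)) / palm_size


def is_extended(h: Sequence[Point], finger: int,
                min_angle: float = 150.0, min_ratio: float = 0.9) -> bool:
    """Hypothetical rule: a finger counts as extended only if both cues agree."""
    return finger_angle(h, finger) >= min_angle and tip_to_palm_ratio(h, finger) >= min_ratio
```

Requiring both cues to agree is one simple way to reject borderline frames. In practice, the thresholds would have to be tuned on recorded hand data.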

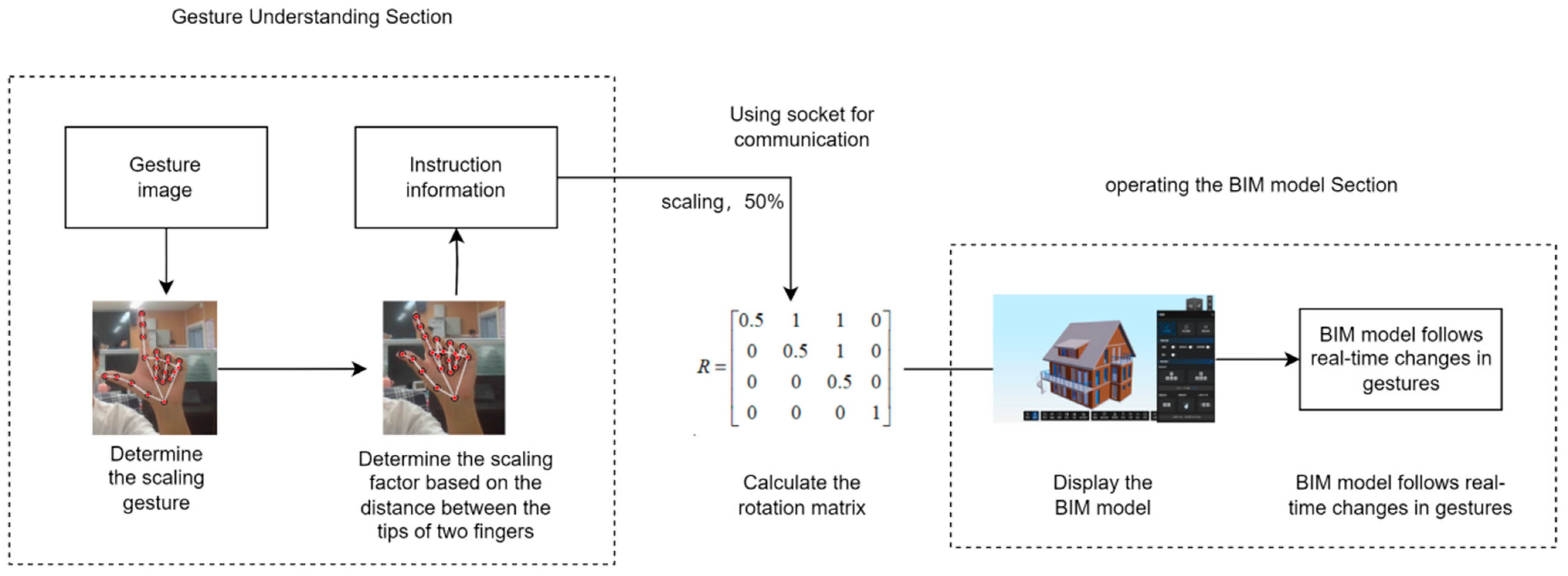

4.3. Gesture Understanding and Model Transformation

4.3.1. Click Gesture

4.3.2. Click Method
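The gesture table later in this document describes pre-click as a form of rate control. When the gesture is recognized, the position of H[8] is frozen as a fixed point, and a greater distance moves the cursor faster. The table's wording about direction (it refers to H[0]) is ambiguous, so this sketch takes the currently tracked point as a parameter rather than committing to a specific landmark. `RateCursor`, the linear gain, and the dead zone are all hypothetical choices, not the authors' implementation.

```python
import math
from typing import Optional, Tuple

Point = Tuple[float, float]


class RateCursor:
    """Joystick-style cursor: an anchor is frozen when pre-click is recognized and the
    cursor velocity grows with the tracked point's displacement from that anchor."""

    def __init__(self, gain: float = 800.0, dead_zone: float = 0.02):
        self.gain = gain            # hypothetical: px/s per unit of normalized displacement
        self.dead_zone = dead_zone  # hypothetical: ignore small hand jitter near the anchor
        self.anchor: Optional[Point] = None

    def start(self, index_tip: Point) -> None:
        """Freeze H[8] (index fingertip) as the fixed reference point."""
        self.anchor = index_tip

    def stop(self) -> None:
        """Leave pre-click: no anchor, so the cursor stops."""
        self.anchor = None

    def velocity(self, tracked: Point) -> Tuple[float, float]:
        """Cursor velocity (vx, vy); its magnitude is proportional to the displacement."""
        if self.anchor is None:
            return (0.0, 0.0)
        dx, dy = tracked[0] - self.anchor[0], tracked[1] - self.anchor[1]
        if math.hypot(dx, dy) <= self.dead_zone:
            return (0.0, 0.0)
        return (self.gain * dx, self.gain * dy)
```

A linear gain keeps the mapping predictable. The dead zone around the fixed point keeps the cursor still while the hand hovers.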

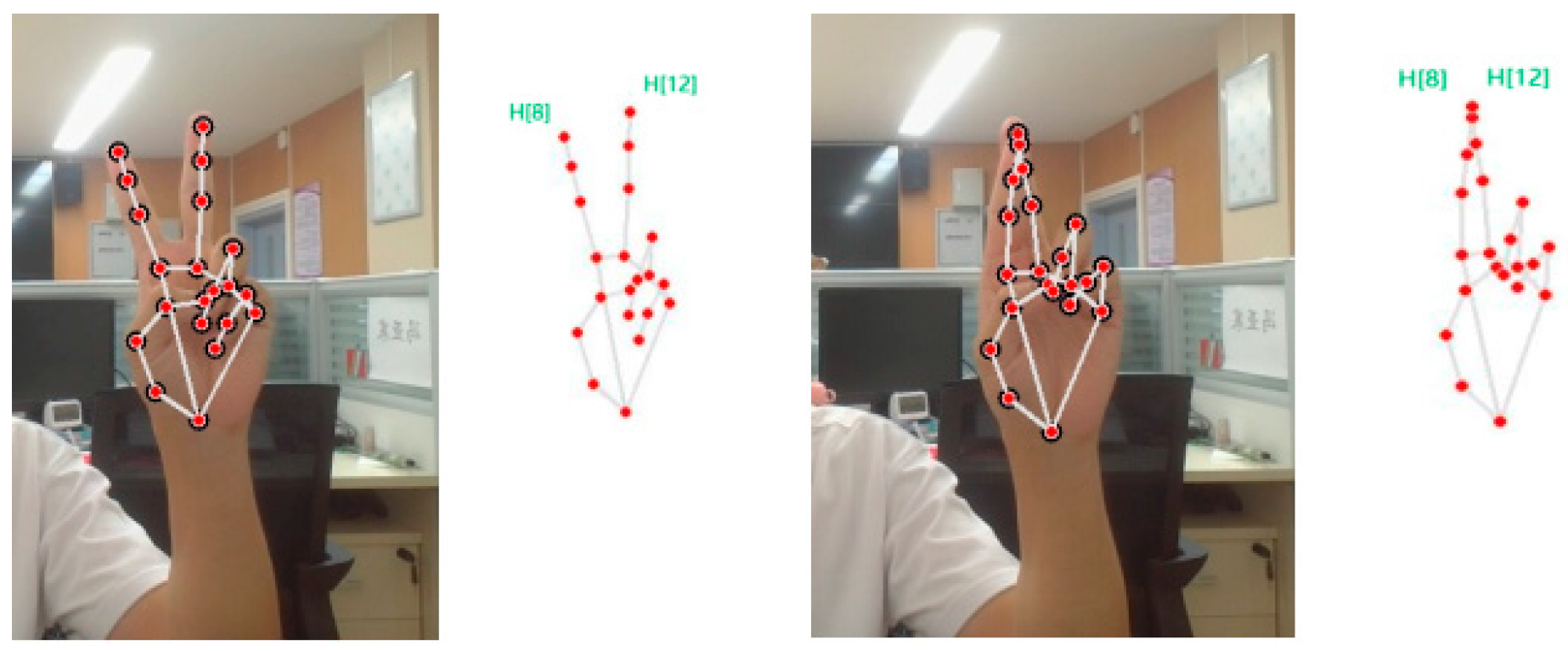

4.3.3. Scale Gesture

4.3.4. Scaling Method
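According to the gesture table, entering scale mode fixes the distance between H[4] and H[8] as the reference length. The scale factor then follows the multiple by which that distance changes. Under the MediaPipe Hands layout assumed here, these landmarks are the thumb tip and the index fingertip. The sketch below implements that reading as a ratio. The hypothetical `ScaleMode` class applies the factor to the model scale captured at mode entry, which is also an assumption.

```python
import math
from typing import Optional, Tuple

Point = Tuple[float, float]


class ScaleMode:
    """Scale factor = current thumb-index distance / distance at scale-mode entry."""

    def __init__(self) -> None:
        self.ref_len: Optional[float] = None
        self.base_scale = 1.0

    def enter(self, thumb_tip: Point, index_tip: Point, model_scale: float) -> None:
        # |H[4] - H[8]| when scale mode starts becomes the reference length.
        self.ref_len = math.dist(thumb_tip, index_tip)
        self.base_scale = model_scale

    def factor(self, thumb_tip: Point, index_tip: Point) -> float:
        """> 1 enlarges, < 1 narrows; 1.0 if the mode was not entered or the reference is zero."""
        if not self.ref_len:
            return 1.0
        return math.dist(thumb_tip, index_tip) / self.ref_len

    def model_scale(self, thumb_tip: Point, index_tip: Point) -> float:
        """Model scale relative to the scale captured at mode entry."""
        return self.base_scale * self.factor(thumb_tip, index_tip)
```

Because the factor is a ratio rather than an absolute distance, it does not depend on how far the hand was from the camera when scale mode started.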

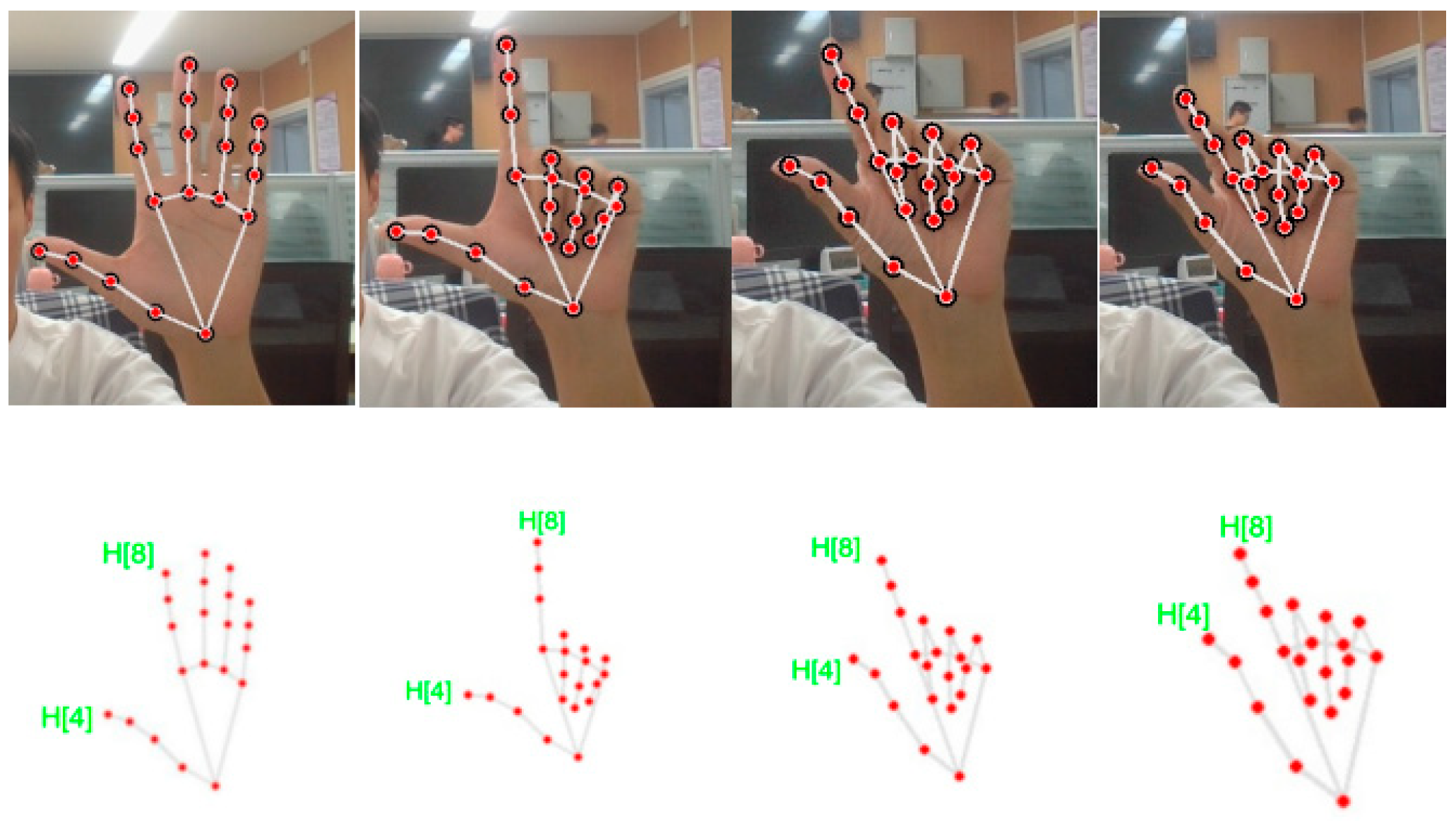

4.3.5. Rotating Gesture

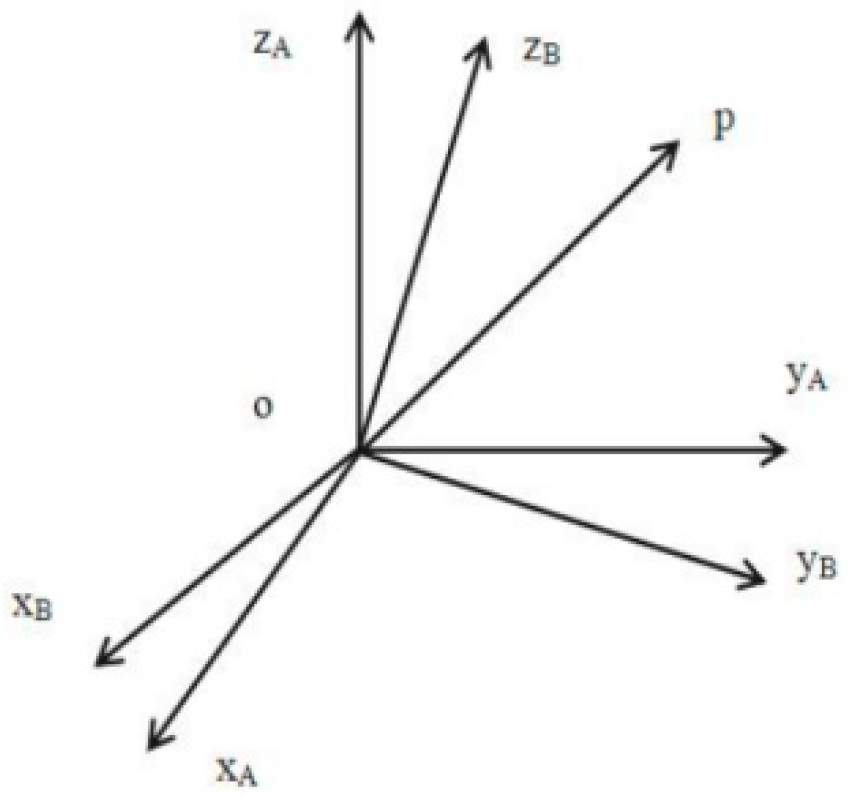

4.3.6. Rotation Method
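For rotation, the gesture table states that the orientation of F[1] in the image sets the rotation direction and that the rotation speed is constant. The sketch below makes two assumptions:

- the finger's orientation is the vector from H[5] (index base) to H[8] (index tip);
- the horizontal component of that vector drives yaw and the vertical component drives pitch.

The function name and the 2° per-frame step are hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def rotation_step(index_base: Point, index_tip: Point,
                  deg_per_frame: float = 2.0) -> Tuple[float, float]:
    """Per-frame (yaw, pitch) increments in degrees.

    The direction follows the image-plane orientation of F[1] (H[5] -> H[8]);
    the magnitude is always deg_per_frame, so the rotation speed is constant.
    """
    dx = index_tip[0] - index_base[0]
    dy = index_tip[1] - index_base[1]
    length = math.hypot(dx, dy)
    if length == 0.0:
        return (0.0, 0.0)
    # Hypothetical mapping: horizontal component -> yaw, vertical component -> pitch.
    return (deg_per_frame * dx / length, deg_per_frame * dy / length)
```

Normalizing the direction vector is what keeps the speed constant. Only the pointing direction affects the output, not the finger's apparent length in the image.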

4.3.7. Panning Gesture

4.3.8. Panning Method

4.3.9. Recovery Gesture

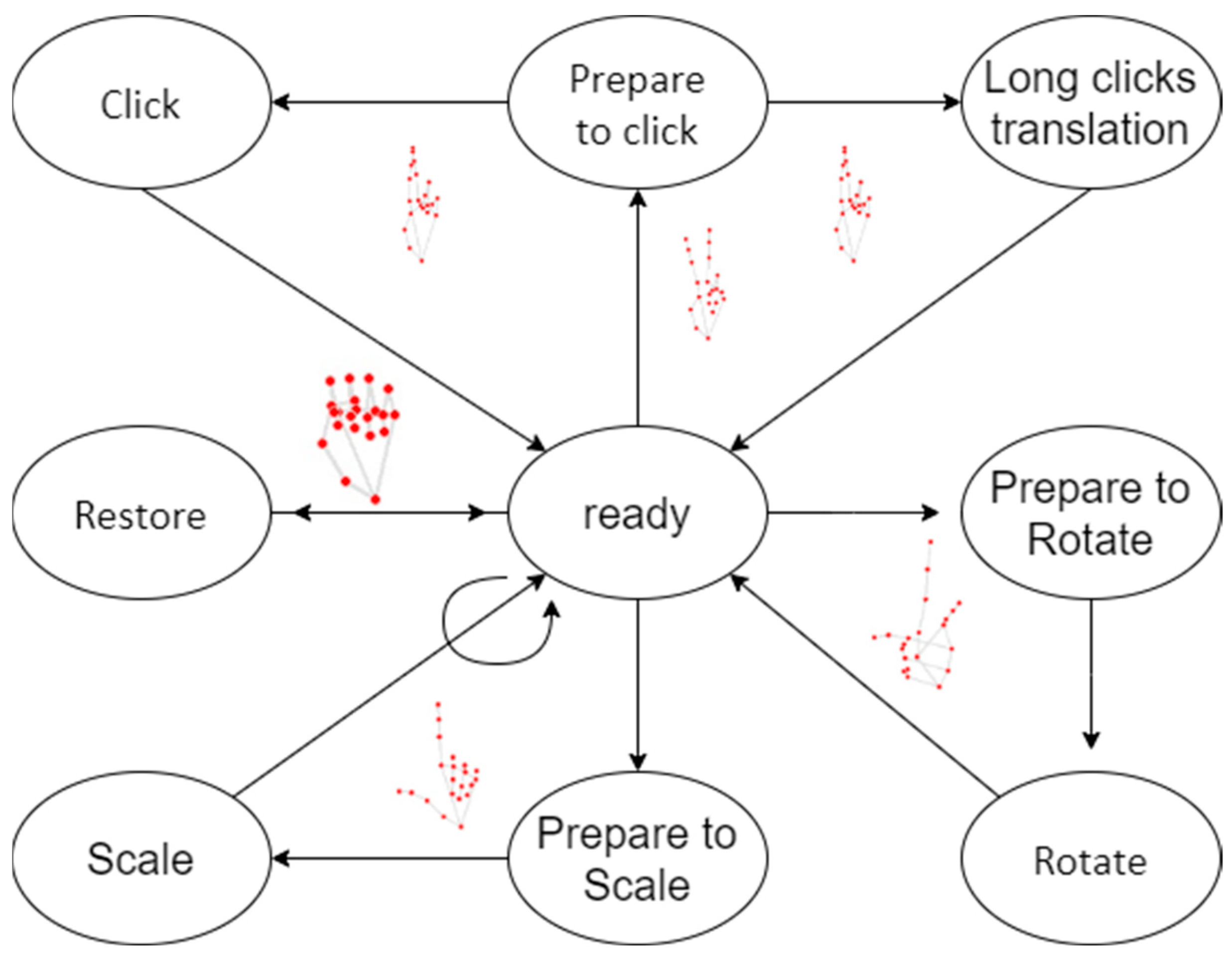

5. System Implementation Architecture and State Transition

5.1. System Architecture

5.2. System State Transition
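The gesture table implies a simple transition rule: the scale and rotation modes are entered only after the posture has been held for one second. The table gives no hold time for pre-click, click, and restore, so this sketch applies them immediately, which is an assumption. It takes one gesture label per frame as input; the label strings and `ModeTracker` are hypothetical.

```python
from typing import Optional

HOLD_S = 1.0                                  # hold time stated for scale and rotation
HOLD_MODES = frozenset({"scale", "rotate"})


class ModeTracker:
    """Turns per-frame gesture labels into an active interaction mode."""

    def __init__(self, hold_s: float = HOLD_S) -> None:
        self.hold_s = hold_s
        self.candidate: Optional[str] = None  # label seen on the latest frame
        self.since = 0.0                      # time the candidate label first appeared
        self.mode: Optional[str] = None       # None means idle

    def update(self, label: Optional[str], t: float) -> Optional[str]:
        if label != self.candidate:           # posture changed: restart the hold timer
            self.candidate, self.since = label, t
        if label in HOLD_MODES:
            # Enter scale/rotation only once the posture has been held long enough.
            self.mode = label if t - self.since >= self.hold_s else None
        else:
            # Pre-click, click, restore (or no gesture) take effect immediately.
            self.mode = label
        return self.mode
```

Restarting the timer on every label change means a single misclassified frame postpones mode entry rather than triggering it.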

5.3. Inter-System Communication

5.4. Scalability and Flexibility of the System Architecture

6. Experimental Section

6.1. Experimental Settings

6.1.1. Computer Environment

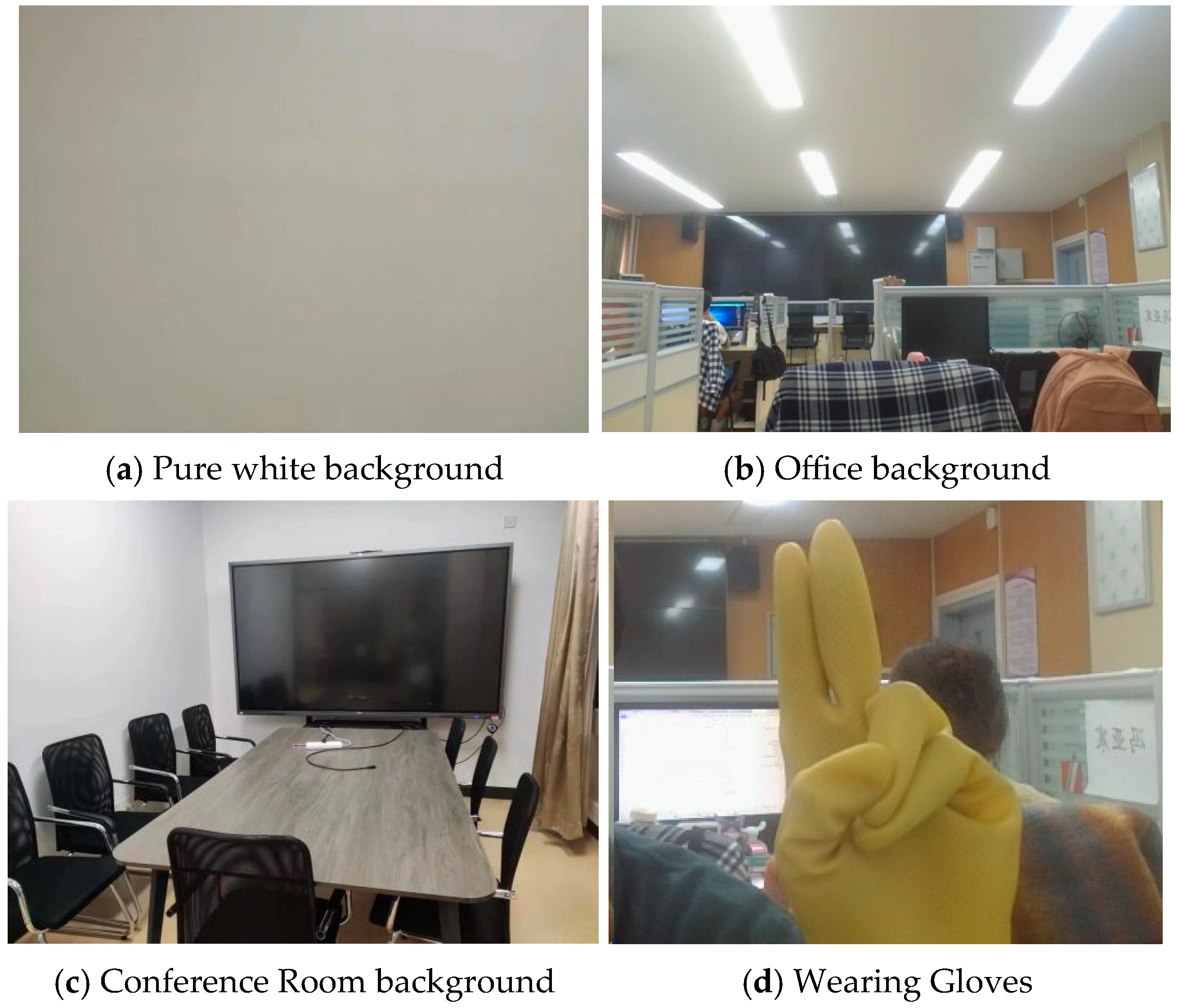

6.1.2. Experimental Scenario

6.1.3. Data Set

6.1.4. Evaluation Metrics

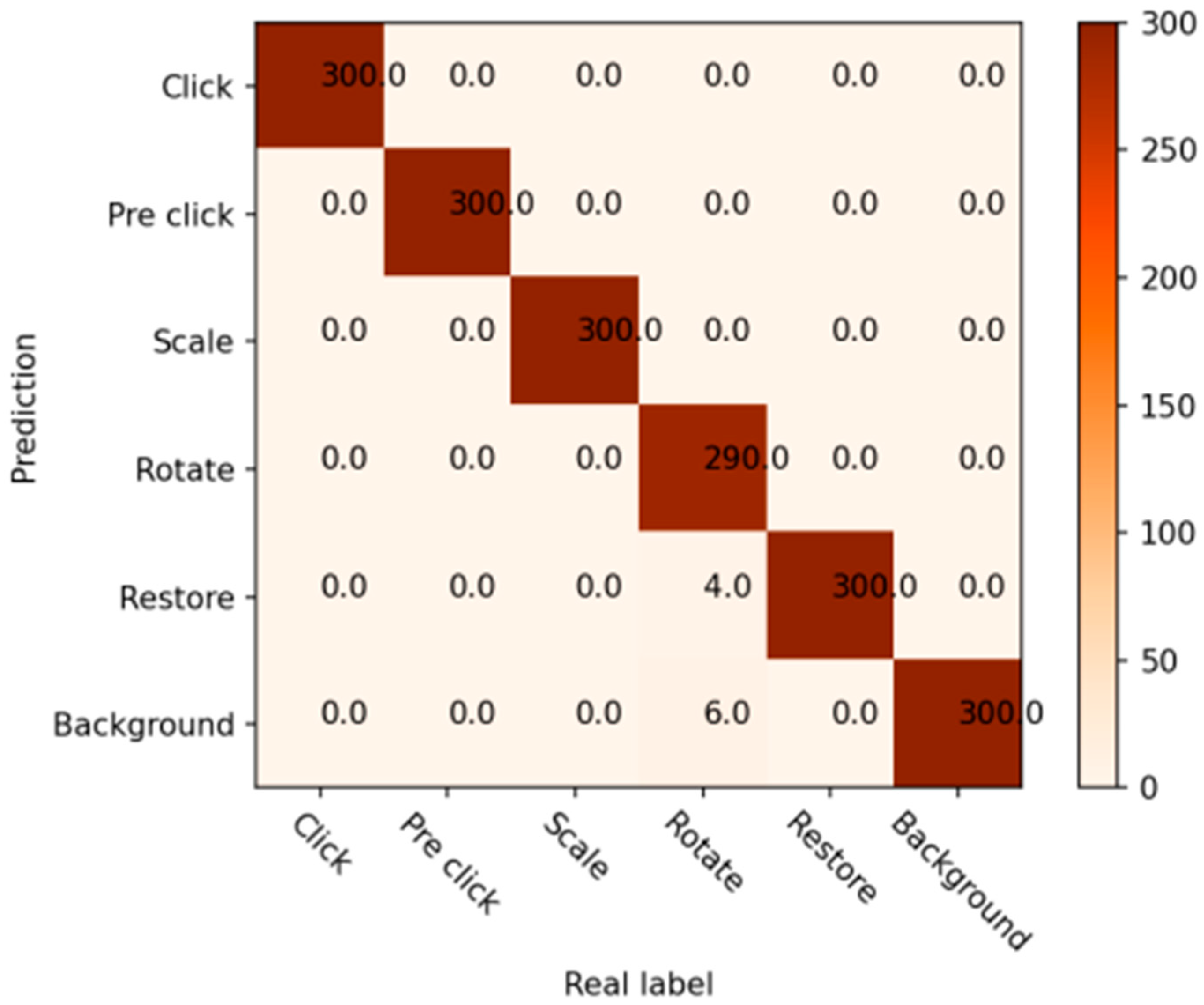

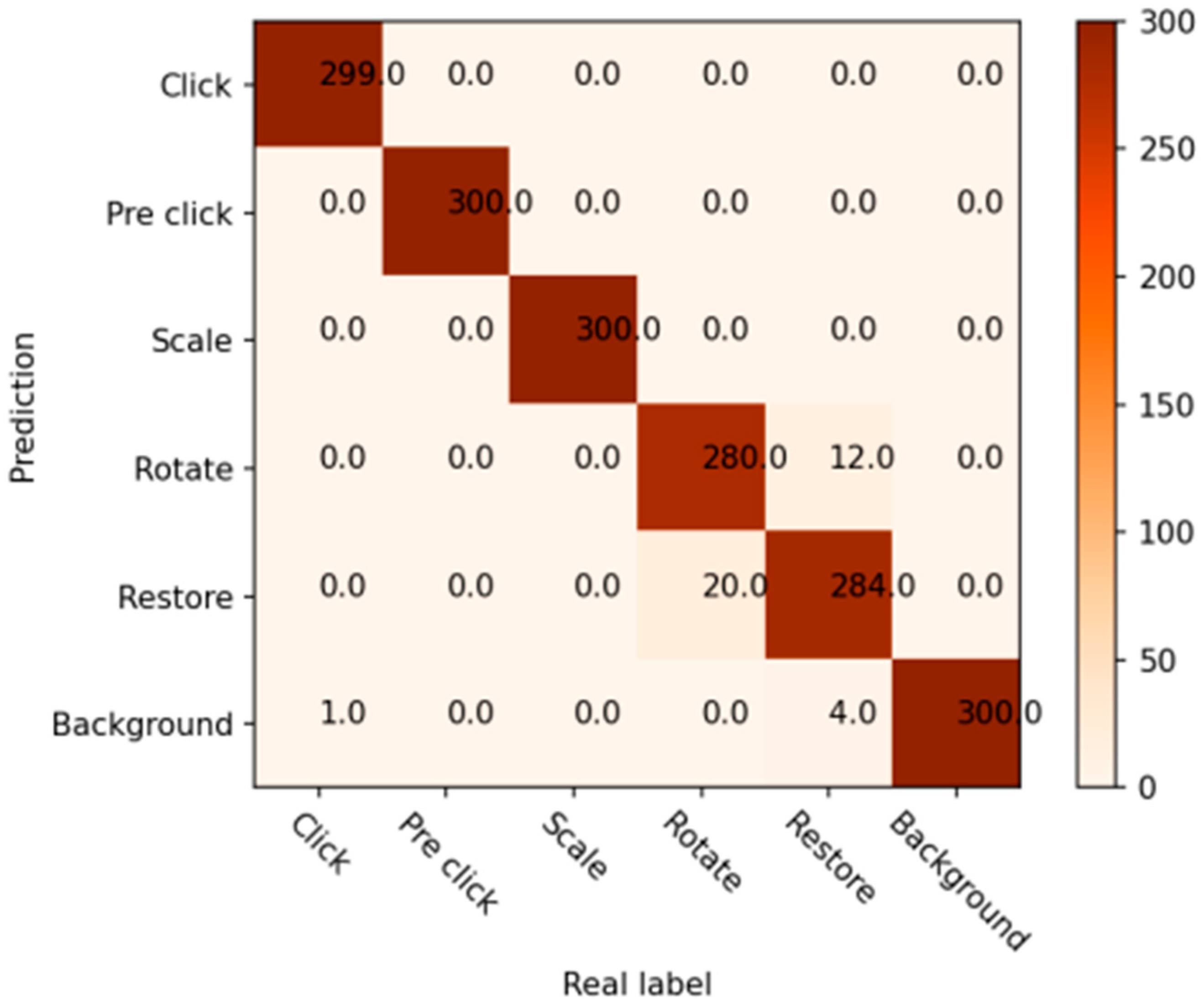

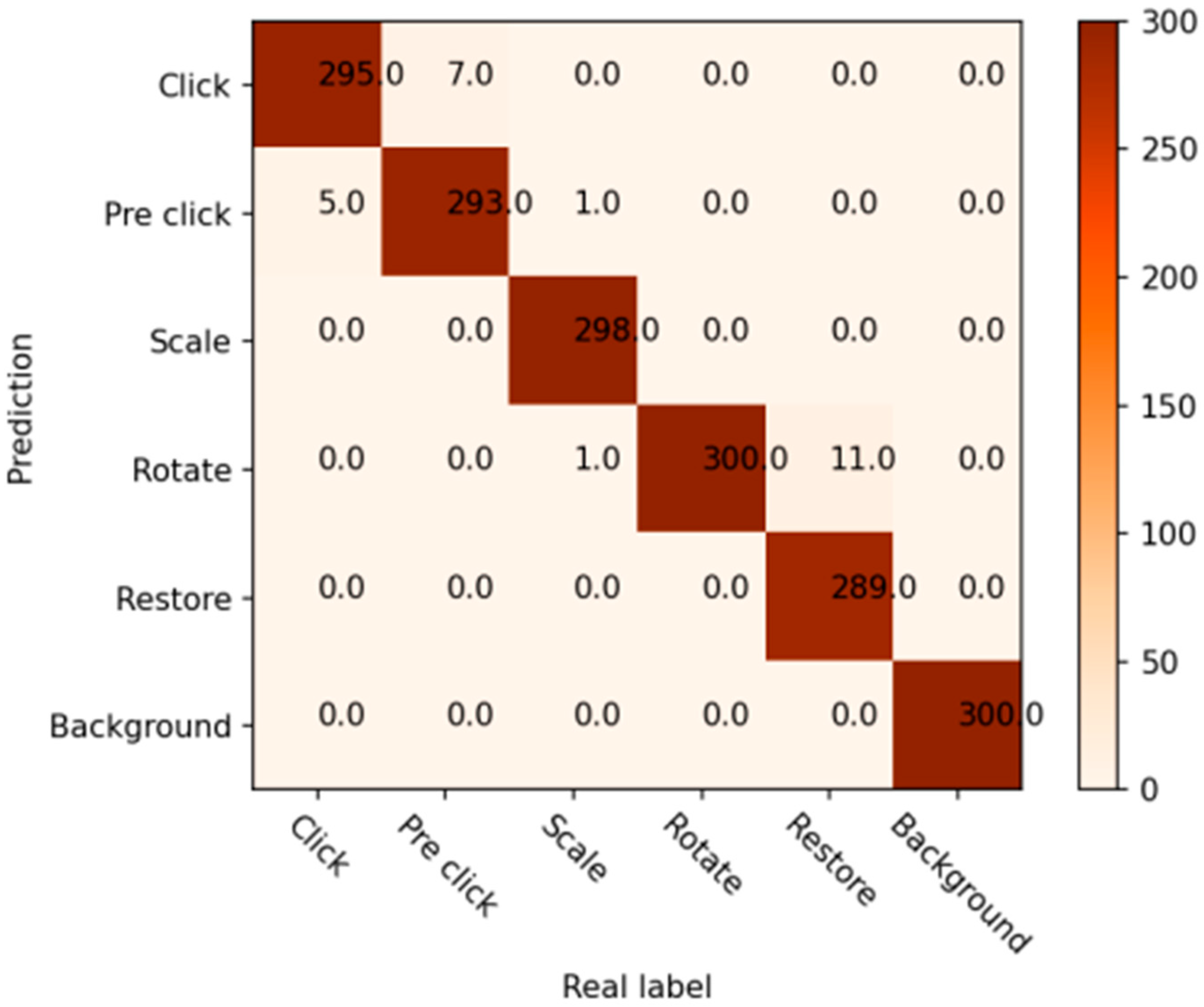

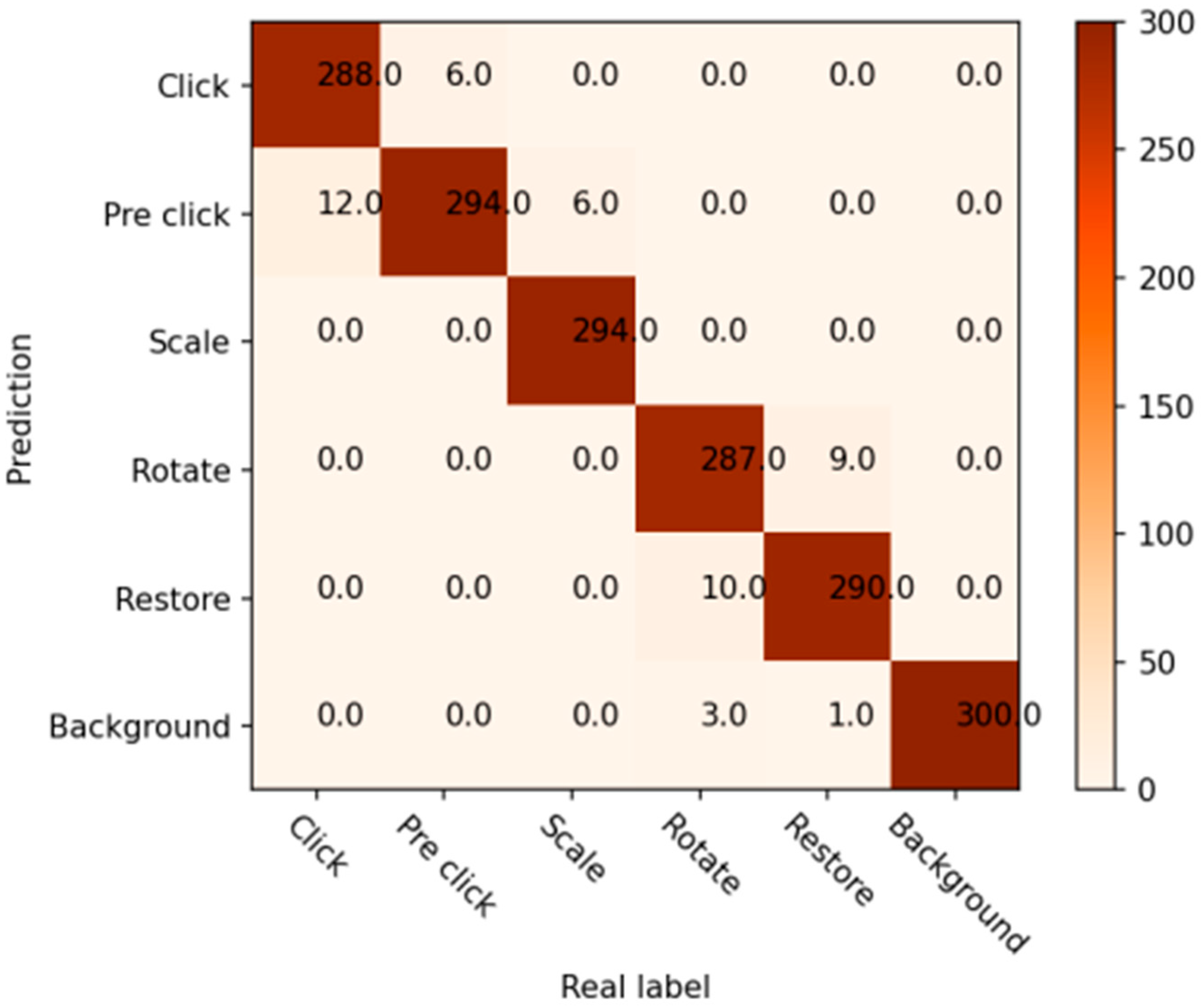

6.2. Experimental Results

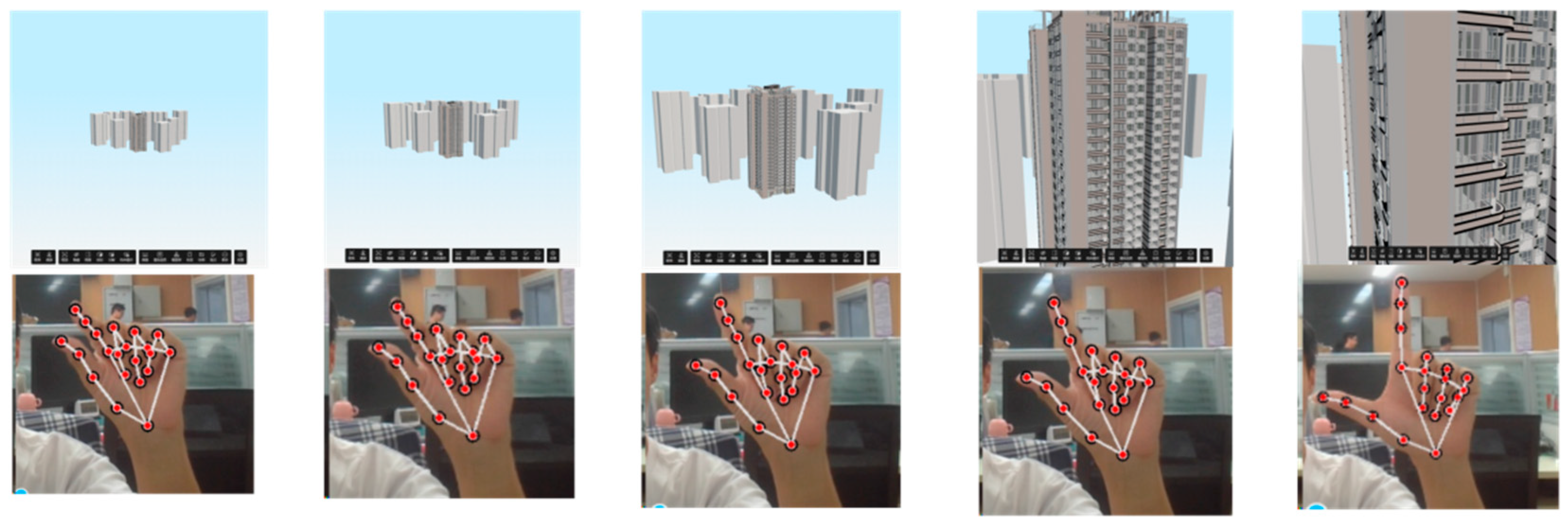

6.2.1. Qualitative Analysis

6.2.2. Quantitative Experiments

6.3. Comparative Analysis with Existing BIM Interaction Methods

6.3.1. Naturalness and Intuitiveness

6.3.2. Spatial Freedom and Flexibility

6.3.3. Multitasking and Efficiency

6.3.4. Health and Comfort

6.3.5. Adaptability and Application Scenarios

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Gesture | Gesture Recognition Method | Function Realization |
|---|---|---|
| Pre-click | The first two fingers (F[1] and F[2]) are extended and the remaining fingers are flexed. This enters the pre-click state, in which the cursor follows the hand movement. | When the gesture is recognized, the position of H[8] (the index fingertip) in the image is fixed as a reference point, and the cursor moves in the direction currently indicated by H[0]. The greater the distance from the reference point, the faster the cursor moves. |
| Click | The first two fingers (F[1] and F[2]) are extended, the remaining fingers are flexed, and the separation between the two extended fingers is smaller than the distance between H[5] and H[9]. This executes a click command. | |
| Narrow | F[0] and F[1] are extended and the remaining fingers are flexed. Holding this posture for one second enters scale mode. | On entering scale mode, the distance between H[4] and H[8] is taken as the reference length. The scale factor equals the multiple by which this distance subsequently changes. |
| Enlarge | F[0] and F[1] are extended and the remaining fingers are flexed. Holding this posture for one second enters scale (zoom) mode. | |
| Rotate | F[1] is extended and the remaining fingers are flexed. Holding this posture for one second enters rotation mode. | The orientation of F[1] in the image sets the rotation direction of the model. The model rotates at a constant speed. |
| Restore | Clench the hand into a fist. | A reset function returns the model to its initial state. |
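The recognition rules in the table above reduce to a lookup on which fingers are extended, plus one distance test that separates click from pre-click. The sketch below makes these assumptions:

- the finger states F[0] to F[4] are already available, for example from a per-finger extension test;
- the landmarks follow MediaPipe Hands indexing;
- the click "separation" is read as the gap between the tips of F[1] and F[2] (H[8] and H[12]).

The function name and labels are hypothetical, and the one-second hold requirement is left out.

```python
import math
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float]


def classify_posture(extended: Sequence[bool], h: Sequence[Point]) -> Optional[str]:
    """Map finger states F[0]..F[4] (True = extended) and landmarks H to a gesture label."""
    pattern = tuple(bool(e) for e in extended)
    if pattern == (False, False, False, False, False):
        return "restore"                              # clenched fist
    if pattern == (False, True, True, False, False):
        # Assumption: the click separation is the gap between the tips of F[1] and F[2].
        tip_gap = math.dist(h[8], h[12])
        knuckle_gap = math.dist(h[5], h[9])           # reference distance H[5]-H[9]
        return "click" if tip_gap < knuckle_gap else "pre_click"
    if pattern == (True, True, False, False, False):
        return "scale"                                # Narrow and Enlarge share this posture
    if pattern == (False, True, False, False, False):
        return "rotate"
    return None                                       # not a gesture from the table
```

Narrow and Enlarge use the same posture, so the classifier returns a single `scale` label. Whether the model narrows or enlarges is decided by the distance change after scale mode starts.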

| Scenario | Click | Prepare to Click | Scale | Rotate | Restore | Average |
|---|---|---|---|---|---|---|
| Pure White | 100% | 100% | 100% | 96.6% | 100% | 99% |
| Office | 99.66% | 100% | 100% | 94% | 94.66% | 98% |
| Conference Room | 98.33% | 97.66% | 99.33% | 100% | 96.33% | 98% |
| Wearing Gloves | 96% | 98% | 98% | 95.66% | 96.66% | 97% |
| Average | 98.5% | 98.9% | 99.3% | 96.4% | 96.9% | 98% |
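The Average row and column can be recomputed from the per-scenario values in the table above. The helper names in this short Python sketch are hypothetical.

```python
from statistics import mean

GESTURES = ["Click", "Prepare to Click", "Scale", "Rotate", "Restore"]
ACCURACY = {  # per-scenario recognition accuracy (%), copied from the table
    "Pure White":      [100.0, 100.0, 100.0, 96.6, 100.0],
    "Office":          [99.66, 100.0, 100.0, 94.0, 94.66],
    "Conference Room": [98.33, 97.66, 99.33, 100.0, 96.33],
    "Wearing Gloves":  [96.0, 98.0, 98.0, 95.66, 96.66],
}


def scenario_means(table):
    """Row averages: mean accuracy over the five gestures in each scenario."""
    return {scenario: mean(values) for scenario, values in table.items()}


def gesture_means(table):
    """Column averages: mean accuracy of each gesture over all scenarios."""
    rows = list(table.values())
    return {g: mean(row[i] for row in rows) for i, g in enumerate(GESTURES)}


def overall_mean(table):
    """Mean over all scenario/gesture cells."""
    return mean(v for values in table.values() for v in values)


if __name__ == "__main__":
    for name, value in gesture_means(ACCURACY).items():
        print(f"{name}: {value:.1f}%")
```

Recomputing gives column means of about 98.5%, 98.9%, 99.3%, 96.6%, and 96.9%, and row means that round to 99%, 98%, 98%, and 97%. These match the table's averages except for Rotate, whose recomputed mean is about 96.6% rather than the listed 96.4%.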

| Gesture | TP | FP | FN | TN | Accuracy | Precision | Recall |
|---|---|---|---|---|---|---|---|
| Click | 1183 | 13 | 17 | 4778 | 0.99 | 0.98 | 0.98 |
| Pre-click | 1187 | 24 | 13 | 4763 | 0.99 | 0.98 | 0.98 |
| Scale | 1185 | 0 | 19 | 4789 | 0.99 | 1.00 | 0.98 |
| Rotate | 1157 | 29 | 33 | 4788 | 0.98 | 0.97 | 0.97 |
| Restore | 1163 | 33 | 27 | 4778 | 0.99 | 0.97 | 0.97 |
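The accuracy, precision, and recall columns can be recomputed from the TP/FP/FN/TN counts using the standard one-vs-rest definitions. The helper names in this minimal Python sketch are hypothetical. Recomputing shows that the listed values agree with these definitions when each ratio is truncated to two decimals rather than rounded. For example, Click precision is 1183/1196 ≈ 0.989, which is listed as 0.98.

```python
import math

# Per-gesture counts from the table: (TP, FP, FN, TN)
COUNTS = {
    "Click":     (1183, 13, 17, 4778),
    "Pre-click": (1187, 24, 13, 4763),
    "Scale":     (1185, 0, 19, 4789),
    "Rotate":    (1157, 29, 33, 4788),
    "Restore":   (1163, 33, 27, 4778),
}


def metrics(tp: int, fp: int, fn: int, tn: int):
    """Standard one-vs-rest accuracy, precision and recall."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return accuracy, precision, recall


def truncate2(x: float) -> float:
    """Cut (do not round) a value to two decimal places."""
    return math.floor(x * 100) / 100


if __name__ == "__main__":
    for gesture, counts in COUNTS.items():
        acc, prec, rec = metrics(*counts)
        print(f"{gesture}: accuracy={acc:.4f} precision={prec:.4f} recall={rec:.4f}")
```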


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhang, T.; Wang, Y.; Zhou, X.; Liu, D.; Ji, J.; Feng, J. Intelligent Human–Computer Interaction for Building Information Models Using Gesture Recognition. Inventions 2025, 10, 5. https://doi.org/10.3390/inventions10010005
