1. Introduction

A discussion of technologically enhanced humans in the 21st century can usefully begin with the emergence of Homo sapiens several hundred thousand years ago. Through evolution, humans first adapted to live as hunter-gatherers on the savannah plains of Africa [1]. The forces of evolution operating over millions of years provided our early human ancestors with the skeletal and muscular structure for bipedal locomotion, sensors to detect visual, auditory, haptic, olfactory, and gustatory stimuli, and information-processing abilities to survive in the face of numerous challenges. One of the main evolutionary adaptations of humans compared to other species is the capability of our brain, particularly the cerebral cortex. For example, the average human brain has an estimated 85–100 billion neurons and contains many more glial cells, which serve to support and protect the neurons [2]. Each neuron may be connected to as many as 10,000–12,500 other neurons, passing signals via as many as 100 trillion synaptic connections, equivalent by some estimates to a computer with a 1 trillion bit per second processor [3].

Comparing the brain (electro-chemical) to computers (digital), synapses are roughly similar to transistors in that they are binary: open or closed, letting a signal pass or blocking it. So, given a median estimate of 12,500 synapses per neuron and an estimate of 22 billion cortical neurons, our brain, at least at the level of the cerebral cortex, has something on the order of 275 trillion transistor-like elements that may be used for cognition, information processing, and memory storage and retrieval [2]. Additionally, recent evidence points to the idea that subcellular computing takes place within neurons, moving our brains from the paradigm of a single computer to something more like an Internet of the brain, with billions of simpler nodes all working together in a massively parallel network [4].

Interestingly, while we have rough estimates of the brain’s computing capacity, we do not have an accurate measure of the brain’s overall ability to compute; but the brain does seem to operate at least at the level of petaflop computing (and likely more). For example, as a back-of-the-napkin calculation, with 100 billion neurons each connected to, say, 12,500 other neurons, and postulating that the strength of a synapse can be described using one byte (8 bits), multiplying this out gives 1.25 petabytes of synaptic state. Strictly speaking, this figure measures storage rather than operations per second, and it is offered only to illustrate that the brain has tremendous complexity and ability to compute. Other factors, such as the behavior of support cells, cell shapes, protein synthesis, ion channeling, and the biochemistry of the brain itself, will surely factor in when calculating a more accurate measure of the computational capacity of the brain. That a brain can compute at the petaflop level or beyond is, of course, the result of the process of evolution operating over a period of millions of years.
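The back-of-the-napkin figures quoted above can be reproduced in a few lines. The following is only a sketch of the arithmetic: the constant and function names are illustrative, and the one-byte-per-synapse encoding is the same postulate made in the text, not a measured quantity.

```python
# Rough figures taken from the text; names are illustrative only.
NEURONS_WHOLE_BRAIN = 100e9    # ~100 billion neurons
CORTICAL_NEURONS = 22e9        # ~22 billion cortical neurons
SYNAPSES_PER_NEURON = 12_500   # median estimate used in the text
BYTES_PER_SYNAPSE = 1          # assumption: one byte encodes synaptic strength


def synapse_count(neurons: float, per_neuron: float = SYNAPSES_PER_NEURON) -> float:
    """Total synapses if every neuron has `per_neuron` connections."""
    return neurons * per_neuron


cortical_synapses = synapse_count(CORTICAL_NEURONS)
whole_brain_bytes = synapse_count(NEURONS_WHOLE_BRAIN) * BYTES_PER_SYNAPSE

print(f"cortical synapses: {cortical_synapses / 1e12:.0f} trillion")
print(f"synaptic state:    {whole_brain_bytes / 1e15:.2f} petabytes")
```

Both results scale linearly with each input, so halving the assumed synapses per neuron halves both estimates.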

No matter what the ultimate computing power of the brain is, given the magnitude of 85–100 trillion synapses to describe the complexity of the brain, it is relevant to ask—will our technology ever exceed our innate capabilities derived from the process of evolution? If so, then it may be desirable to enhance the body with technology in order to keep pace with the rate at which technology is advancing and becoming smarter (“smartness” in the sense of “human smartness”) [

5]. This is basically a “cyborg oriented” approach to thinking about human enhancement and evolution. Under this approach, even though much of the current technology integrated into the body is for medical purposes, this century, able-bodied people will become increasingly enhanced with technology, which, among others, will include artificial intelligence embedded in the technology implanted within their body [

5,

6].

An interesting question is why would “able-bodied” people agree to technological enhancements, especially those implanted under the skin? One reason is derived from the “grinder movement” which represents people who embrace a hacker ethic to improve their own body by self-implanting “cyborg devices” under their skin. Implanting a magnet under the fingertip in order to directly experience a magnetic field is one example, and arguably creates a new sense [

5]. Another reason to enhance the body with technology is expressed by transhumanists, who argue that if a technology such as artificial intelligence reaches and then surpasses human levels of general intelligence, humans will no longer be the most intelligent being on the planet; thus, the argument goes, we need to merge with technology to remain relevant and to move beyond the capabilities provided by our biological evolution [

5]. Commenting on this possibility, inventor and futurist Ray Kurzweil has predicted that there will be low-cost computers with the same computational capabilities as the brain by around 2023 [

6]. Given continuing advances in computing power and in artificial intelligence, the timescale for humans to consider the possibility of a superior intelligence is quickly approaching and a prevailing idea among some scientists, inventors, and futurists is that we need to merge with the intelligent technology that we are creating as the next step of evolution [

5,

6,

7]. Essentially, to merge with technology means to have so much technology integrated into the body through closed-loop feedback systems that the human is considered more of a technological being than a biological being [

5]. Such a person could, as is possible now, be equipped with artificial arms and legs (prosthetic devices) or with technology performing the functions of internal organs (e.g., a cardiac pacemaker) and, most importantly for a future merger with technology, with technology implanted within the brain that performs functions of the brain itself (e.g., a neuroprosthesis; see Table 1 and Table 2).

Of course, the software to create artificial general intelligence currently lags behind hardware developments (note that some supercomputers now operate at the exaflop level, i.e., $10^{18}$ floating-point operations per second), but still, the rate of improvement in a machine’s ability to learn indicates that computers with human-like intelligence could emerge this century and be embedded within a neuroprosthesis implanted within the brain [6]. If that happens, commentators predict that humans may then connect their neocortex to the cloud (e.g., using a neuroprosthesis), thus accessing the trillions of bits of information available through the cloud and benefiting from an algorithm’s ability to learn and to solve problems currently beyond a human’s understanding [8]. This paper reviews some of the technologies which could lead to that outcome.

Evolution, Technology, and Human Enhancement

While the above capabilities of the brain are remarkable, we should consider that for millennia the innate computational capabilities of the human brain have remained relatively fixed; and even though evolution affecting the human body is still occurring, in many ways we are today very similar anatomically, physiologically, and as information processors to our early ancestors of a few hundred thousand years ago. That is, the process of evolution created a sentient being with the ability to survive in the environment in which Homo sapiens evolved to compete successfully. In the 21st century, we are not much different from that being even though the technology we use today is vastly superior. In contrast, emerging technologies in the form of exoskeletons, prosthetic devices for limbs controlled by the brain, and neuroprosthetic devices implanted within the brain are beginning to create technologically enhanced people with abilities beyond those provided to humans through the forces of evolution [

1,

5,

7]. The advent of technological enhancements to humans combined with the capabilities of the human body provided through the process of evolution brings up the interesting point that our biology and the technology integrated into the body are evolving under vastly different time scales. This has implications for the future direction of our species and raises moral and ethical issues associated with altering the speed of the evolutionary processes which ultimately created

Homo sapiens [

9].

Comparing the rate of biological evolution to the speed at which technology evolves, consider the sense of vision. The light-sensitive protein opsin is critical for the visual sense; from an evolutionary timescale, the opsin lineage arose over 700 million years ago [

10]. Fast forward over a hundred million years later, the first fossils of eyes were recorded from the lower Cambrian period (about 540 million years ago). It is thought that before the Cambrian explosion, animals may have sensed light, but did not use it for fast locomotion or navigation by vision. Compare these timeframes for developing the human visual system, to the development of “human-made” technology to aid vision [

11]. Once technology created by humans produced the first “vision aid”, technological development proceeded on a timescale orders of magnitude faster than biological evolution. For example, around 1284, Salvino D’Armate is said to have invented the first wearable eyeglasses, and just 500 years later (“just” in the sense that the planet is about 4.5 billion years old and anatomically modern humans evolved a few hundred thousand years ago), in the mid-1780s, bifocal eyeglasses were invented by Benjamin Franklin. Fast forward a few more centuries, and within the last ten to fifteen years progress in creating technology to enhance, or even replace, the human visual system has vastly accelerated. For example, eye surgeons at the Massachusetts Eye and Ear Infirmary are working on a miniature telescope to be implanted into an eye that is designed to help people with vision loss from end-stage macular degeneration [

12]. And what is designed based on medical necessity today may someday take on a completely different application, that of enhancing the visual system of normal-sighted people to allow them to detect electromagnetic energy outside the range of our evolutionarily adapted eyes, to zoom in or out of a scene with a telephoto lens, to augment the world with information downloaded from the cloud, and even to wirelessly connect the visual sense of one person to that of another [

5].

From an engineering perspective, and particularly as described by control theory, one can conclude that evolution applies positive feedback in adapting the human to the ambient environment, in that the more capable methods resulting from one stage of evolutionary progress are the impetus used to create the next stage [

6]. That is, the process of evolution operates through modification by descent, which allows nature to introduce small variations in an existing form over a long period of time; from this general process, the evolution into humankind with a prefrontal cortex took millions of years [

1]. For both biological and technological evolution, an important issue is the magnitude of the exponent describing growth (or the improvement in the organism or technology). Consider a standard equation expressing exponential growth, $f(x) = 2^{x}$. The steepness of the exponential function, which in this discussion refers to the rate at which biological or technological evolution occurs, is determined by the magnitude of the exponent. For biological versus technological evolution, the value for the exponent is different, meaning that biological and technological evolution proceed on far different timescales. This difference has relevance for our technological future and our continuing integration of technology with the body to the extent that we may eventually merge with and become the technology. Some commentators argue that we have always been human–technology combinations, and to some extent I agree with this observation. For example, the development of the first tools allowed human cognition to be extended beyond the body to the tool in order to manipulate it for some task. However, in this paper, the discussion is more on the migration of “smart technology” from the external world to either the surface of the body or implanted within the body; the result being that humans will be viewed more as technological beings than as biological ones.
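The role of the exponent can be made explicit by writing the growth equation in doubling-time form. The symbols $t$ (elapsed time), $T$ (doubling time), $T_{\text{bio}}$ and $T_{\text{tech}}$ are notation assumed here for illustration and do not appear in the original argument.

```latex
f(t) = 2^{t/T}, \qquad \frac{f(t + T)}{f(t)} = 2, \qquad T_{\text{bio}} \gg T_{\text{tech}}
```

In this form the exponent $t/T$ grows faster when the doubling time $T$ is shorter, which is one way to read the claim that biological and technological evolution have different exponents.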

Additionally, given the rate at which technology and particularly artificial intelligence is improving, once a technological Singularity is reached in which artificial intelligence is smarter than humans, humans may be “left behind” as the most intelligent beings on the planet [

5,

7]. In response, some argue that the solution to exponential growth in technology, and particularly computing technology, is to become enhanced with technology ourselves, and deeper into the future, to ultimately become the technology [

5,

7]. In that context, this article reviews some of the emerging enhancement technologies directed primarily at the functions performed by the brain and discusses a timeframe in which humans will continue to use technology as a tool, which I term the “standard model” of technology use, then become so enhanced with technology (including technology implanted within the body) that we may view humans as an example of technology, which I term the “cyborg-machine” model of technology [

5].

The rate at which technological evolution occurs, as modeled by the law of accelerating returns and specifically by Moore’s law which states that the number of transistors on an integrated circuit doubles about every two years—both of which in the last few decades have accurately predicted rapid advancements in the technologies which are being integrated into the body—make a strong argument for accelerating evolution through the use of technology [

6]. To accelerate evolution using technology is to enhance the body beyond normal levels of performance, or even to create new senses; but basically, through technology implanted within the body, it is to provide humans with vastly more computational resources than are provided by a 100-trillion-synapse brain (many of whose synapses are not directly involved in cognition). More fundamentally, the importance of the law of accelerating returns for human enhancement is that it describes, among other things, how technological change is exponential, meaning that advances in one stage of technology development help spur an even faster and more profound technological capability in the next stage of technology development [

6]. This law, describing the rate at which technology advances, has been an accurate predictor of the technological enhancement of humans over the last few decades and provides the motivation to argue for a future merger of humans with technology [

5].
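Moore’s law as stated above (a doubling about every two years) implies a simple growth factor over any time span. The sketch below only illustrates that arithmetic; the function name and the chosen time spans are hypothetical, and real transistor counts have not followed the idealized curve exactly.

```python
def moores_law_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Multiplicative growth after `years` if a quantity doubles every period."""
    return 2.0 ** (years / doubling_period_years)


# Idealized growth over one, two, and four decades.
for span in (10, 20, 40):
    print(f"{span} years -> x{moores_law_factor(span):,.0f}")
```

Changing `doubling_period_years` shows how sensitive the long-run factor is to the assumed doubling time.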

2. Two Categories of Technology for Enhancing the Body

Humans are users and builders of technology, and historically, technology created by humans has been designed primarily as external devices used as tools allowing humans to explore and manipulate the environment [

1]. By definition, technology is the branch of knowledge that deals with the creation and use of “technical means” and their interrelation with life, society, and the environment. Considering the long history of humans as designers and users of technology, it is only recently that technology has become implanted within the body in order to repair, replace, or enhance the functions of the body including those provided by the brain [

5]. This development, of course, is the outcome of millions of years of biological evolution which created a species which was capable of building such complex technologies. Considering tools as an early example of human-made technology, the ability to make and use tools dates back millions of years in the sapiens family tree [

13]. For example, the creation of stone tools occurred some 2.6 million years ago by our early hominid ancestors in Africa which represented the first known manufacture of stone tools—sharp flakes created by knapping, or striking a hard stone against quartz, flint, or obsidian [

1]. However, this advancement in tools represented the major extent of technology developments over a period of eons.

Regarding the role of technology in creating and then enhancing the human body, I would like to emphasize two types of technology which have emerged from my thinking on this topic over the last few decades: “biological” and “cultural” technology (Table 1). While I discuss each in turn, I do not mean to suggest that they evolved independently from each other; human intelligence, for example, has to some extent been “artificially enhanced” since humans developed the first tools; this is due in part to the interaction between the cerebral cortex and technology. This view is consistent with that expressed by Andy Clark in his books, “Natural-Born Cyborgs: Minds, Technologies, and the Future of Human Intelligence,” and “Mindware: An Introduction to the Philosophy of Cognitive Science.” Basically, his view on the “extended mind” is that human intelligence has always been artificial, made possible by the technologies that humans designed, which he proposes extended the reach of the mind into the environment external to the body. For example, he argues that a notebook and pencil play the role of a biological memory, one which physically exists and operates external to the body, thus becoming part of an extended mind which cognitively interacts with the notebook. While I agree with Andy Clark’s observations, it is worth noting that this paper does not focus on whether the use of technology extends the mind beyond the body. Instead, the focus here, when describing biological and cultural technology, is simply to emphasize that the processes of biological evolution created modern humans with a given anatomy and physiology, and a prefrontal cortex for cognition, and that human ingenuity has led to the development of the types of non-biological technology that we may eventually merge with. Additionally, the focus here is on technology that will be integrated into the body, including the brain, and thus the focus is not on the extension of cognition into the external world, which of course is an end-product of evolution made possible by the Homo sapiens prefrontal cortex.

With that caveat in mind, biological technology is that technology resulting from the processes of evolution which created a bipedal, dexterous, and sentient human being. As an illustration of biology as technology, consider the example of the musculoskeletal system. From an engineering perspective, the human body may be viewed as a machine formed of many different parts that allow motion to occur at the joints formed by the parts of the human body [

14]. The process of evolution, which created a bipedal human through many iterations of design, took into account, among other things, the forces which act on the musculoskeletal system and their various effects on the body; such forces can be modeled using principles of biomechanics, an engineering discipline which concerns the interrelations of the skeletal system, muscles, and joints.

In addition, the forces of evolution acting over extreme time periods ultimately created a human musculoskeletal system that uses levers and ligaments which surround the joints to form hinges, and muscles which provide the forces for moving the levers about the joints [

14]. Additionally, the geometric description of the musculoskeletal system can be described by kinematics, which considers the geometry of the motion of objects, including displacement, velocity, and acceleration (without taking into account the forces that produce the motion). Considering joint mechanics and structure, as well as the effects that forces produce on the body, indicates that evolution led to a complex engineered body suitable for surviving successfully as a hunter-gatherer. From this example, we can see that basic engineering principles on levers, hinges, and so on were used by nature to engineer

Homo sapiens. For this reason, “biology” (e.g., cellular computing, human anatomy) may be thought of as a type of technology which developed over geologic time periods which ultimately led to the hominid family tree that itself eventually led a few hundred thousand years ago to the emergence of

Homo sapiens; the “tools” of biological evolution are those of nature primarily operating at the molecular level and based on a DNA blueprint. So, one can conclude that historically, human technology has built upon the achievements of nature [

13]. Millions of years after the process of biological evolution started with the first single cell organisms, humans discovered the principles which now guide technology design and use, in part, by observing and then reverse engineering nature.

Referring to “human-made” technology, I use the term “cultural technology” to refer to the technology which results from human creativity and ingenuity, that is, the intellectual output of humans. This is the way of thinking about technology that most people are familiar with; technology is what humans invent and then build. Examples include hand-held tools developed by our early ancestors to more recent neuroprosthetic devices implanted within the brain. Both biological and in many cases cultural technology can be thought of as directed towards the human body; biological technology operating under the slow process of evolution, cultural technology operating at a vastly faster pace. For example, over a period of only a few decades computing technology has dramatically improved in terms of speed and processing power as described by Moore’s law for transistors; and more generally, technology has continuously improved as predicted by the law of accelerating returns as explained in some detail by Ray Kurzweil [

6]. However, it should be noted that biological and cultural technologies are not independent. What evolution created through millions of years of trial-and-error is now being improved upon in a dramatically faster time period by tool-building

Homo sapiens. In summary,

Homo sapiens are an end-product of biological evolution (though still evolving), and as I have described to this point, a form of biological technology supplemented by cultural technology. Further, I have postulated that biological humans may merge with what I described as cultural technology, thus becoming less biological and more “digital-technological”.

3. On Being Biological and on Technologically Enhancing the Brain

As a product of biological evolution, the cerebral cortex is especially important as it shapes our interactions and interpretations of the world we live in [

1]. Its circuits serve to shape our perception of the world, store our memories and plan our behavior. A cerebral cortex, with its typical layered organization, is found only among mammals, including humans, and non-avian reptiles such as lizards and turtles [

15]. Mammals, reptiles and birds originate from a common ancestor that lived some 320 million years ago. For

Homo sapiens, comparative anatomic studies among living primates and the primate fossil record show that brain size, normalized to bodyweight, rapidly increased during primate evolution [

16]. The increase was accompanied by expansion of the neocortex, particularly its “association” regions [

17]. Additionally, our cerebral cortex, a sheet of neurons, connections and circuits, comprises “ancient” regions such as the hippocampus and “new” areas such as the six-layered “neocortex”, found only in mammals and most prominently in humans [

15]. Rapid expansion of the cortex, especially the frontal cortex, occurred during the last half-million years. However, the development of the cortex was built on approximately five million years of hominid evolution; 100 million years of mammalian evolution; and about four billion years of molecular and cellular evolution. Compare the above timeframes with the timeframe for major developments in computing technology discussed next. Keep in mind that, among others, recent computing technologies are aimed at enhancing the functions of the cerebral cortex itself, and in some cases, this is done by implanting technology directly in the brain [

5,

18].

The recent history of computing technology spans only a few centuries and is extremely short when considered against the backdrop of the evolutionary time scales that eventually led to Homo sapiens. But before the more recent history of computing is discussed, it should be noted that an important invention for counting, the abacus, was created by Chinese mathematicians approximately 5000 years ago. Some (such as philosopher and cognitive scientist Andy Clark) would consider this invention (and more recently digital calculators and computers) to be an extension of the neocortex. While interacting with technology external to the body extends cognition to that device, the neurocircuits controlling the device still remain within the brain. More recently, in 1801, Joseph Marie Jacquard invented a loom that used punched wooden cards to automatically weave fabric designs, and roughly a century and a half later early computers used a similar technology with punch cards. In 1822, the English mathematician Charles Babbage conceived of a steam-driven calculating machine that would be able to compute tables of numbers, and a few decades later Herman Hollerith designed a punch card system which, among other uses, was used to tabulate the 1890 U.S. census. A half-century later, Alan Turing presented the notion of a universal machine, later called the Turing machine, theoretically capable of computing anything that is computable. The central concept of the modern computer is based on his ideas. In 1943–1946 the Electronic Numerical Integrator and Computer (ENIAC) was built; it is considered the precursor of digital computers (it filled a 20-foot by 40-foot room and contained 18,000 vacuum tubes) and was capable of performing 5000 additions a second. In 1958, Jack Kilby and Robert Noyce unveiled the integrated circuit, or computer chip. And recently, based on efforts by the U.S. Department of Energy’s Oak Ridge National Laboratory, the supercomputer Summit operates at a peak performance of 200 petaflops, which corresponds to 200 million billion calculations a second (roughly a 40-trillion-fold increase in calculations per second compared to ENIAC some 75 years earlier). Of course, computing machines and technologies integrated into the body will not remain static. Instead, they will continue to improve, allowing more technology to be used to repair, replace, or enhance the functions of the body with computational resources.
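The ratio between the two machine speeds quoted above can be checked directly. This is a sketch under a simplifying assumption: an ENIAC addition and a modern floating-point operation are treated as comparable units of work, which they are not in any strict sense. The constant names are illustrative.

```python
# Figures as quoted in the text; treating "additions" and "flops" as
# comparable operations is a simplifying assumption.
ENIAC_OPS_PER_SEC = 5_000       # additions per second
SUMMIT_PEAK_FLOPS = 200e15      # 200 petaflops peak

speedup = SUMMIT_PEAK_FLOPS / ENIAC_OPS_PER_SEC
print(f"Summit / ENIAC: {speedup:.1e}x ({speedup / 1e12:.0f} trillion-fold)")
```

With the quoted figures the ratio comes out on the order of tens of trillions.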

In contrast to the timeframe for advances in computing and the integration of technology in the body, the structure and functionality of the

Homo sapiens brain has remained relatively the same for hundreds of thousands of years. With that timeframe in mind, within the last decade, several types of technology have been either developed, or are close to human trials, to enhance the capabilities of the brain. In the U.S., one of the major sources of funding for technology to enhance the brain is through the Defense Advanced Research Projects Agency (DARPA); some of the projects funded by DARPA are shown in

Table 2. Additionally, in the European Union the Human Brain Project (HBP) is another major effort to learn how the brain operates and to build brain interface technology to enhance the brain’s capabilities. One example of research funded by the HBP is Heidelberg University’s program to develop neuromorphic computing [19]. The goal of this approach is to understand the dynamic processes of learning and development in the brain and to apply knowledge of brain neurocircuitry to generic cognitive computing. Based on neuromorphic computing models, the Heidelberg team has built a computer which is able to simulate four million neurons and one billion synapses on 20 silicon wafers. In contrast, simulations on conventional supercomputers typically run about 1000 times slower than biology and cannot access the vastly different timescales involved in learning and development, which range from milliseconds to years; neuromorphic chips are designed to address this by operating more like the human brain. In the long term, there is the prospect of using neuromorphic technology to integrate intelligent cognitive functions into the brain itself.

As mentioned earlier, medical necessity is a factor motivating the need to develop technology for the body. For example, to restore a damaged brain to its normal state of functioning, DARPA’s Restoring Active Memory (RAM) program funds research to construct implants for veterans with traumatic brain injuries that lead to impaired memories. Under the program, researchers at the Computational Memory Lab, University of Pennsylvania, are searching for biological markers of memory formation and retrieval [

20,

21]. Test subjects consist of hospitalized epilepsy patients who have electrodes implanted deeply in their brain to allow doctors to study their seizures. The interest is to record the electrical activity in these patients’ brains while they take memory tests in order to uncover the electric signals associated with memory operations. Once they have found the signals, researchers will amplify them using sophisticated neural stimulation devices; this approach, among others, could lead to technology implanted within the brain which could eventually increase the memory capacity of humans.

Other research which is part of the RAM program is through the Cognitive Neurophysiology Laboratory at the University of California, Los Angeles. The focus of this research is on the entorhinal cortex, which is the gateway to the hippocampus, the primary brain region associated with memory formation and storage [

22]. Working with the Lawrence Livermore National Laboratory, in California, closed-loop hardware in the form of tiny implantable systems is being jointly developed. Additionally, Theodore Berger at the University of Southern California has been a pioneer in the development of a neuroprosthetic device to aid memory [

23]. His artificial hippocampus is a type of cognitive prosthesis that is implanted into the brain in order to improve or replace the function(s) of damaged brain tissue. A cognitive prosthesis allows the native signals used normally by the area of the brain to be replaced (or supported). Thus, such a device must be able to fully replace the function of a small section of the nervous system—using that section’s normal mode of operation. The prosthesis has to be able to receive information directly from the brain, analyze the information and give an appropriate output to the cerebral cortex. As these and the examples in

Table 2 show, remarkable progress is being made in designing technology to be directly implanted in the brain. These developments, occurring over a period of just a decade, designed to enhance or repair the brain, represent a major departure from the timeframe associated with the forces of evolution which produced the current 100-trillion-synapse brain.

4. Tool Use and Timeframe to Become Technology

As more technology is integrated into our bodies, and as we move away from the process of biological evolution as the primary force operating on the body, we need to consider the exponential advances in computing technology that have occurred over the last few decades. One of the main predictors of the future direction of technology, and particularly artificial intelligence, is Ray Kurzweil, Google’s Director of Engineering. Kurzweil has predicted that the technological Singularity, the time at which human general intelligence will be matched by artificial intelligence, will occur around 2045 [6]. Further, Kurzweil claims that we will multiply our effective intelligence a billion-fold by merging with the artificial intelligence we have created [6]. Kurzweil’s timetable for the Singularity is consistent with other predictions, notably those of futurist Masayoshi Son, who argues that the age of super-intelligent machines will arrive by 2047 [24]. In addition, in a survey of AI experts (n = 352) attending two prominent AI conferences in 2015, respondents estimated a 50% chance that artificial intelligence would exceed human abilities by around 2060 [25]. In my view, these predictions for artificial intelligence (if they materialize), combined with advances in technology implanted in the body, are leading to a synergy between biological and cultural technology in which humans will be equipped with devices that allow the brain to directly access the trillions of bits of information in the cloud and to control technology using thought through a positive feedback loop; these are major steps towards humans merging with technology.

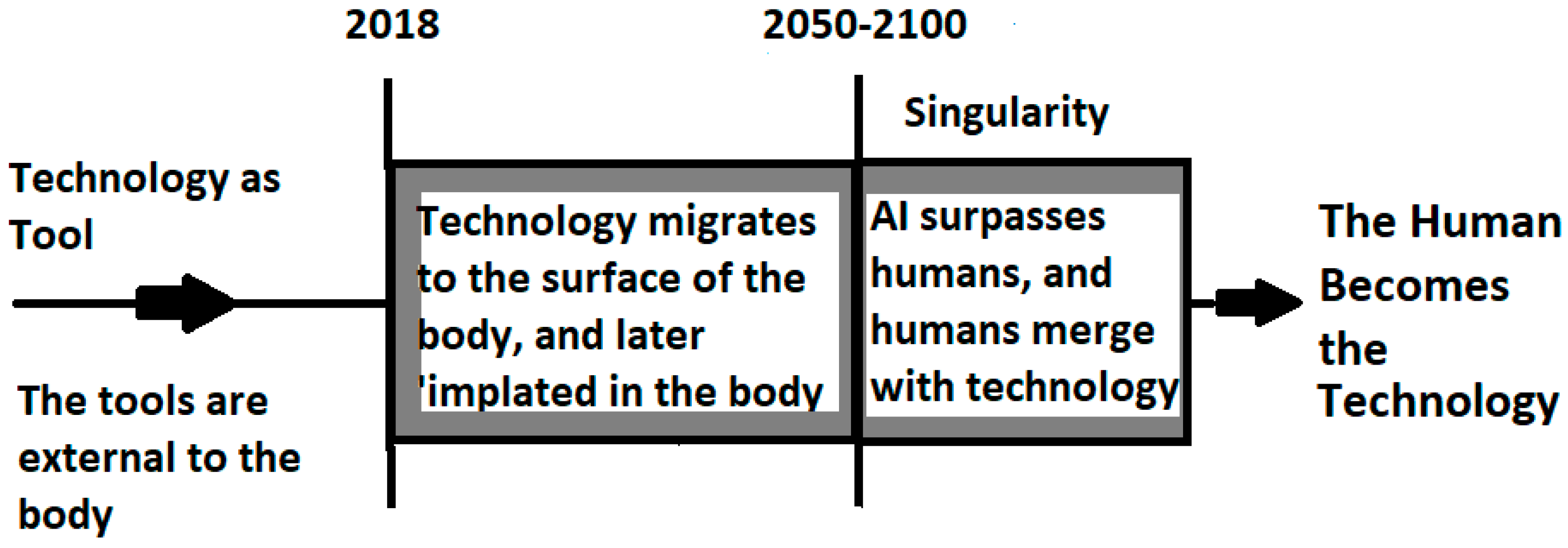

In Figure 1, I am less specific than Kurzweil and Son about the date when the Singularity will occur, providing a range from 2050 to 2100 as a possibility. Further, in the figure, which also displays a timeframe for humans to merge with technology, I emphasize three time periods of importance for the evolution of human enhancement technology and the eventual merger between humans and technology. These time periods suggest that the merging of humans with technology can be described as occurring in major stages (with numerous substages); for example, for most of the history of human technology, technology was external to the body, but as we move towards a being that is more technology than biology, we are entering a period of intermediate beings that are clearly biotechnical. The first time period represents the period that, up to now, has been associated with human biological evolution and marked predominantly by humans using primitive (i.e., non-computing) tools. Note that the tools designed by early humans were always external to the body and most frequently held in the hands. Such tools allowed humans to manipulate the environment, but the ability to implant technology within the body in order to enhance it with capabilities beyond what evolution provided is a very recent advancement, one that could lead to a future merger between humans and technology.

The second stage of human enhancement runs from now until the Singularity occurs [5]. In this short time period, technology will still be used primarily as a tool that is not considered part of the human; thus, humans will continue to be more biological than technological (but increasingly biotechnological beings will emerge). However, between now and the end of the century, we will see the development of more technology to replace biological parts, and possibly to create new features of human anatomy and brain functionality. In addition, during this time period people will increasingly access artificial intelligence as a tool to aid in problem solving, and artificial intelligence will more frequently perform tasks independent of human supervision. But in this second stage of human enhancement, artificial intelligence will exist primarily on devices external to the human body. Later, artificial intelligence will be implanted in the body itself. Additionally, in the future, technology that is directly implanted in the brain will increase the computing and storage capacity of the brain, opening up new ways of viewing the world and moving past the capabilities of the brain provided by biological evolution, essentially extending our neocortex into the cloud [18].

The third stage of technology development impacting human enhancement and our future merger with technology is the time period after the technological Singularity is reached. At this inflection point, artificial intelligence will have surpassed humans in general intelligence, and technology will have advanced to the point where the human is becoming equipped with technology that is superior to our biological parts produced by the forces of evolution; thus, the human will essentially become a form of nonbiological technology. Of the various implants that will be possible within the human body, I believe that neuroprosthetic devices will be determinative for allowing humans to merge with and control technology, and ultimately to become technology. Given that this paper compares the timescale of the evolutionary forces which created humans with the dramatically accelerated timescale under which technology is improving the functionality of humans in the 21st century, the figure covers no more than a century, which is a fraction of the timeframe of human evolution since the first Homo sapiens evolved a few hundred thousand years ago.

5. Moral Issues to Consider

As we think about a future in which human cognitive functions and human bodies may be significantly enhanced with technology, it is important to mention the moral and ethical issues that may result when humans equipped with enhancement technologies surpass others in abilities and when different classes of humans exist by nature of the technology they embrace [26]. Bob Yirka, discussing the ethical impact of rehabilitative technology on society, comments that one area already being discussed is disabled athletes with high-tech prosthetics who seek to compete with able-bodied athletes [26]. Another setting in which a technologically enhanced human may raise ethical and legal issues is the workplace [5]. In an age of technologically enhanced people, should those with enhancements be given preferences in employment, or be held to different work standards than those who are not enhanced? Further, in terms of other legal and human rights, should those who are disabled but receive technological enhancements that make them “more abled” than those without enhancements be considered a special class needing additional legal protections, or should able-bodied people receive such protections in comparison to those enhanced? Additionally, Gillet poses the interesting question of how society should treat a “partially artificial being” [27]. The use of technological enhancements could create different classes of people by nature of their abilities, and whether a “partial human” would still be considered a natural human and receive all protections offered under laws, statutes, and constitutions remains to be seen. Finally, would only some people be allowed to merge with technology, creating a class of humans with superior abilities, or would enhancement technology be available to all people, and even mandated by governments, raising the possibility of a dystopian future?

Clearly, as technology improves, and becomes implanted within human bodies, and repairs or enhances the body, or creates new human abilities, moral and ethical issues will arise, and will need significant discussion and resolution that are beyond the scope of this paper.

6. Concluding Thoughts

To summarize, this paper proposes that the next step in human evolution is for humans to merge with the increasingly smart technology that tool-building Homo sapiens are in the process of creating. Such technology will enhance our visual and auditory systems, replace biological parts that may fail or become diseased, and dramatically increase our information-processing abilities. A major point is that the merger of humans with technology will allow the process of evolution to proceed on a dramatically faster timescale than biological evolution. However, as a consequence of exponentially improving technology that may eventually direct its own evolution, if we do not merge with technology, that is, become the technology, we will be surpassed by a superior intelligence with an almost unlimited capacity to expand its intelligence [5, 6, 7]. This prediction is a continuation of thinking on the topic by robotics and artificial intelligence pioneer Hans Moravec who, almost 30 years ago, argued that we are approaching a significant event in the history of life in which the boundaries between biological and post-biological intelligence will begin to dissolve [7]. However, rather than warning humanity of dire consequences which could accompany the evolution of entities more intelligent than humans, Moravec postulated that it is relevant to speculate about a plausible post-biological future and the ways in which our minds might participate in its unfolding. Thus, the emergence of the first technology-creating species a few hundred thousand years ago is creating a new evolutionary process leading to our eventual merger with technology; this process is a natural outgrowth of, and a continuation of, biological evolution.

As noted by Kurzweil [6] and Moravec [7], the emergence of a technology-creating species has led to technology advancing at an exponential pace, on a timescale orders of magnitude faster than the process of evolution through DNA-guided protein synthesis. The accelerating development of technology is a process of creating ever more powerful technology using the tools from the previous round of innovation. While the first technological steps by our early ancestors a few hundred thousand years ago produced tools with sharp edges, later steps such as the taming of fire and the creation of the wheel occurred much faster, taking only tens of thousands of years [6]. However, for people living in this era, there was little noticeable technological change over a period of centuries. Such is the experience of exponential growth, where noticeable change does not occur until the curve describing growth begins its rapid rise [6]; this is what we are experiencing now with computing technology and, to a lesser extent, with enhancement technologies. As Kurzweil noted, more technological change occurred in the nineteenth century than in the nine centuries preceding it, and there was more technological advancement in the first twenty years of the twentieth century than in all of the nineteenth century [6]. In the 21st century, paradigm shifts in technology occur in only a few years, and such paradigm shifts directed towards enhancing the body could lead to a future merger between humans and technology.
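The qualitative point about exponential growth made above can be illustrated with a small numerical sketch. It assumes the simplest possible model, a quantity that doubles at a fixed interval; the doubling time and time horizon are hypothetical values chosen only for illustration and are not taken from Kurzweil [6], whose law of accelerating returns further argues that the rate of growth itself increases. Under this assumption, the change during the most recent doubling period exceeds all of the change accumulated before it, which is why growth can appear negligible for a long time and then suddenly rapid.

```python
def level(t: float, doubling_time: float) -> float:
    """Level of a quantity at time t, starting from 1.0 at t = 0 and
    doubling once every `doubling_time` units of time."""
    return 2.0 ** (t / doubling_time)


def change_between(t0: float, t1: float, doubling_time: float) -> float:
    """Absolute change in the level between times t0 and t1."""
    return level(t1, doubling_time) - level(t0, doubling_time)


# Hypothetical values for illustration only (not from the paper).
DOUBLING_TIME = 10.0  # assumed doubling interval, in years
HORIZON = 100.0       # assumed total time span, in years

# Change during the final doubling period versus all earlier change.
last_period = change_between(HORIZON - DOUBLING_TIME, HORIZON, DOUBLING_TIME)
earlier = change_between(0.0, HORIZON - DOUBLING_TIME, DOUBLING_TIME)

print(last_period, earlier)  # prints: 512.0 511.0
```

With these assumed values, the final ten years contribute slightly more change than the preceding ninety years combined, and the same relationship holds for any fixed doubling time.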

According to Ray Kurzweil [6], if we apply the concept of exponential growth, which is predicted by the law of accelerating returns, to the highest level of evolution, the first step, the creation of cells, introduced the paradigm of biology. The subsequent emergence of DNA provided a digital method to record the results of evolutionary experiments and to store them within our cells. Then a species evolved that combined rational thought with an opposable appendage, allowing a fundamental paradigm shift from biology to technology [6]. Consistent with the arguments presented in this paper, Kurzweil concludes that the primary paradigm shift of this century will be from biological thinking to a hybrid combining biological and nonbiological thinking [6, 28]. This hybrid will include “biologically inspired” processes resulting from the reverse engineering of biological brains; one current example is the use of neural nets built by mimicking the brain’s neural circuitry. If we examine the timing and sequence of these steps, we observe that the process has continuously accelerated. The evolution of life forms required billions of years for the first steps, the development of primitive cells; later on, progress accelerated, and during the Cambrian explosion major paradigm shifts took only tens of millions of years. Humanoids then developed over a period of millions of years, and Homo sapiens over a period of only hundreds of thousands of years. We are now a tool-making species that may merge with and become the technology we are creating. This may happen by the end of this century or the next; such is the power of the law of accelerating returns for technology.