State of the Art and Challenges for Occupational Health and Safety Performance Evaluation Tools

Abstract

1. Introduction

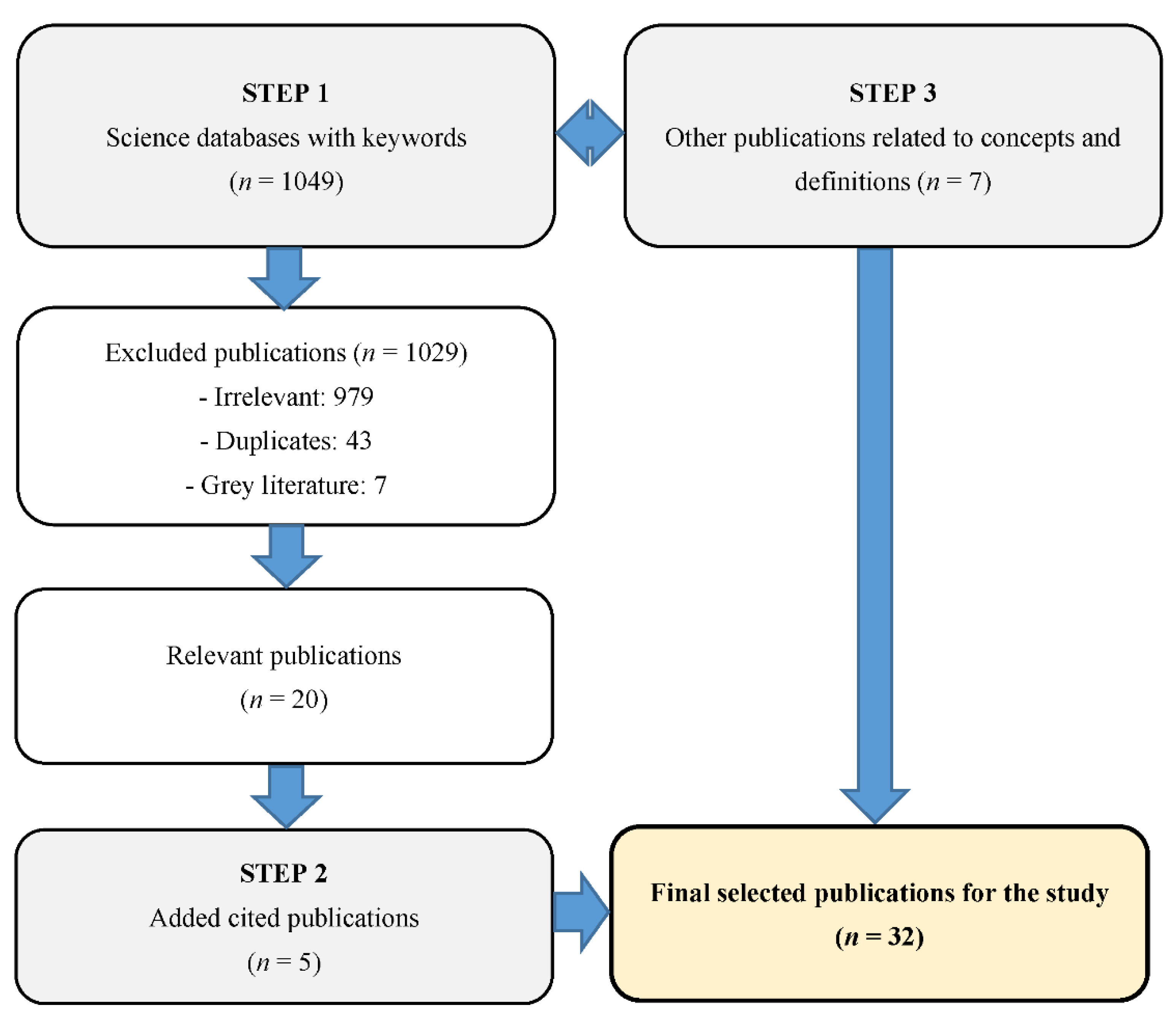

2. Research Methodology

3. Results

- Performance is adequate if the organization practices effective management of OHS;

- OHS management is effective if it reduces or eliminates work-related injuries or illnesses in the organization.

- The design method, which differs from one tool to the next according to its creators' vision. Most of the tools identified are based on published studies; others are based on specific data or expert opinion [7,15,16,17,20,21,22,23]. This criterion gives an overall idea of a tool's capability, effectiveness, and generalizability.

- Sector of application, which indicates how flexible the tool might be. An evaluation tool is potentially generalizable if it appears applicable to all sectors or, better yet, in any country. Most of the tools found in the literature were designed for specific sectors [1,7,15,16,17,20,21,22,23,26,27,28,29,30].

- Reliability, meaning that the tool tends to give similar results from one evaluator to the next [5]. This essential criterion is not always met [5,31]. We seek a tool that is reliable because it is built on indicators relevant to OHS performance evaluation in a company of any size in any sector. This criterion is met only if the other three are.
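The reliability criterion asks whether two evaluators applying the same tool reach similar results. The reviewed sources do not prescribe a statistic for this. The sketch below therefore assumes one common choice, unweighted Cohen's kappa, computed on the scores two evaluators give to the same indicators. The function name `cohens_kappa` and the sample scores are illustrative only.

```python
from collections import Counter


def cohens_kappa(rater_a: list[int], rater_b: list[int]) -> float:
    """Chance-corrected agreement between two evaluators scoring the same indicators."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both evaluators must score the same, non-empty set of indicators")
    n = len(rater_a)
    # Observed agreement: share of indicators given the same score by both evaluators
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each evaluator's own score distribution
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / n**2
    if p_expected == 1.0:
        return 1.0  # both evaluators used one identical score everywhere
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical 1-5 scores given by two evaluators to the same six indicators
evaluator_1 = [3, 4, 4, 5, 2, 3]
evaluator_2 = [3, 4, 5, 5, 2, 3]
print(round(cohens_kappa(evaluator_1, evaluator_2), 3))  # 0.778
```

For ordinal 1 to 5 scales, a weighted kappa, which gives partial credit for near-misses, may fit better. The unweighted form is shown here for brevity.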

3.1. Tool 1—OHS Self-Diagnostic Tool (2008)

3.2. Tool 2—Safety and Health Assessment System Standard in Construction (SHASSIC, 2010)

3.3. Tool 3—Organizational Performance Metric (OPM, 2011)

3.4. Tool 4—Project Safety Index (PSI, 2011)

3.5. Tool 5—System of Performance Indicators in Ergonomics for Building Construction (SIDECE, 2012)

3.6. Tool 6—Measures Suitable for Proactive Indicators of OHS Status (2012)

3.7. Tool 7—Total Safety Performance (TSP, 2014)

3.8. Tool 8—Fuzzy Comprehensive Performance Evaluation (HSE, 2015)

3.9. Tool 9—Monash University Organizational Performance Metric (OPM-MU, 2016)

- Replacing the percentage scale with a 5-point Likert scale;

- Including perception questions to evaluate how the OPM was associated with various elements of OHS;

- Inviting survey participants to report the number of incidents in which they were personally involved;

- Collecting the measurements used in the workplace in each organization;

- Including reactive indicators.

3.10. Tool 10—CORESafety Health and Safety Management System (2016)

3.11. Tool 11—Diagnosis of the Commitment to OHS (2016)

- Commitment and support by upper management;

- Employee (labour) participation;

- Responsibilities of managers and labourers;

- Organization of prevention;

- Evaluation of overall OHS performance.

3.12. Tool 12—OHS Profile (2018)

3.13. Tool 13—Risk Management Maturity Measurement: A Preliminary Model (2018)

3.14. Tool 14—Measurable Proactive Indicators of Risk Management Maturity (2019)

3.15. Tool 15—Evaluation of OHS Management (2019)

3.16. Characteristics, Strengths, and Weaknesses of All Identified Tools

4. Discussion and Limitation of This Study

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Liu, Y.-J.; Chen, J.-L.; Cheng, S.-Y.; Hsu, M.-T.; Wang, C.-H. Evaluation of safety performance in process industries. Process. Saf. Prog. 2014, 33, 166–171.

2. Arezes, P.M.; Miguel, A.S. The role of safety culture in safety performance measurement. Meas. Bus. Excel. 2003, 7, 20–28.

3. Wu, T.-C.; Chen, C.-H.; Li, C.-C. A correlation among safety leadership, safety climate and safety performance. J. Loss Prev. Process. Ind. 2008, 21, 307–318.

4. Sgourou, E.; Katsakiori, P.; Goutsos, S.; Manatakis, E. Assessment of selected safety performance evaluation methods in regards to their conceptual, methodological and practical characteristics. Saf. Sci. 2010, 48, 1019–1025.

5. Tremblay, A.; Badri, A. Assessment of occupational health and safety performance evaluation tools: State of the art and challenges for small and medium-sized enterprises. Saf. Sci. 2018, 101, 260–267.

6. OIT. Système de Gestion de la SST: Un Outil Pour une Amélioration Continue; Organisation Internationale du Travail: Geneva, Switzerland, 2011.

7. Tremblay, A.; Badri, A. A novel tool for evaluating occupational health and safety performance in small and medium-sized enterprises: The case of the Quebec forestry/pulp and paper industry. Saf. Sci. 2018, 101, 282–294.

8. Roy, M.; Bergeron, S.; Fortier, L. Développement D’instruments de Mesure de Performance en Santé et Sécurité du Travail à L’intention des Entreprises Manufacturières Organisées en Equipes Semi-Autonomes de Travail; Robert-Sauvé Research Institute in Occupational Health and Safety of Quebec: Montreal, QC, Canada, 2004.

9. Bédard, S.; Bélanger, L.; Cormier, Y.; LeQuoc, S. Guide de Prévention: Indicateurs en Prévention SST; Association Paritaire Pour la Santé et la Sécurité du Travail du Secteur Affaires Sociales (ASSTSAS): Montréal, QC, Canada, 2018.

10. INRS. Employeur. Institut National de Recherche et de Sécurité (INRS). 2020. Available online: http://www.inrs.fr/demarche/employeur (accessed on 16 April 2020).

11. Juglaret, F. Indicateurs et Tableaux de Bord Pour la Prévention des Risques en Santé-Sécurité au Travail. Ph.D. Thesis, École nationale supérieure des mines de Paris, Paris, France, 2012.

12. Sinelnikov, S.; Inouye, J.; Kerper, S. Using leading indicators to measure occupational health and safety performance. Saf. Sci. 2015, 72, 240–248.

13. Ruiz, A. La Mesure de la Performance “Un Outil D’amélioration des Services en Acquisition”. In Journée des Acquisitions et Des TIC; Université Laval: Quebec City, QC, Canada, 2014.

14. CNESST. Honorons la Mémoire des Personnes Décédées ou Blessées au Travail. 2019. Available online: https://www.cnesst.gouv.qc.ca/salle-de-presse/communiques/Pages/26-avril-2019-quebec.aspx (accessed on 15 February 2020).

15. Agumba, J.N.; Haupt, T.C. Identification of health and safety performance improvement indicators for small and medium construction enterprises: A Delphi consensus study. Mediter. J. Soc. Sci. 2012, 3, 545.

16. Weijun, L.; Liang, W.; Zhang, L.; Tang, Q. Performance assessment system of health, safety and environment based on experts’ weights and fuzzy comprehensive evaluation. J. Loss Prev. Process. Ind. 2015, 35, 95–103.

17. Lingard, H.; Wakefield, R.; Cashin, P. The development and testing of a hierarchical measure of project OHS performance. Eng. Constr. Arch. Manag. 2011, 18, 30–49.

18. Duguay, P.; Busque, M.-A.; Boucher, A. Indicateurs Annuels de Santé et de Sécurité du Travail Pour le Québec: Étude de Faisabilité (Version Révisée); Robert-Sauvé Research Institute in Occupational Health and Safety of Quebec: Montreal, QC, Canada, 2012.

19. Reiman, T.; Pietikäinen, E. Leading indicators of system safety–monitoring and driving the organizational safety potential. Saf. Sci. 2012, 50, 1993–2000.

20. Misnan, M.S.; Mohamad, S.F.; Yusof, Z.M.; Bakri, A. Improving Construction Industry Safety Standard through Audit: SHASSIC Assessment Tools for Safety. In Proceedings of the CRIOCM 2010 15th International Symposium, Johor Bahru, Malaysia, 6–7 August 2010.

21. Bezerra, I.X.B.; de Carvalho, R.J.M. Construction and application of an indicator system to assess the ergonomic performance of large and medium-sized construction companies. Work 2012, 41 (Suppl. 1), 3798–3805.

22. Bourque, G.; Chabot, L.-F. Outil Diagnostic: Prise en Charge de la Santé et de la Sécurité du Travail. In Commission des Normes, de L’équité, de la Santé et de la Sécurité du Travail (CNESST); Commission des Normes, de L’équité, de la Santé et de la Sécurité du travail: Québec, QC, Canada, 2016.

23. Cheng, S.-Y.; Lin, K.-P.; Liou, Y.-W.; Hsiao, C.-H.; Liu, Y.-J. Constructing an active health and safety performance questionnaire in the food manufacturing industry. Int. J. Occup. Saf. Ergon. 2019, 27, 1–7.

24. Cadieux, J.; Roy, M.; Desmarais, L. A preliminary validation of a new measure of occupational health and safety. J. Saf. Res. 2006, 37, 413–419.

25. Hinze, J.; Thurman, S.; Wehle, A. Leading indicators of construction safety performance. Saf. Sci. 2013, 51, 23–28.

26. Roy, M.; Cadieux, J.; Fortier, L.; Leclerc, L. Validation D’un Outil D’autodiagnostic et D’un Modèle de Progression de la Mesure en Santé et Sécurité du Travail; Robert-Sauvé Research Institute in Occupational Health and Safety of Quebec: Montréal, QC, Canada, 2008; p. 36.

27. Haas, E.J.; Yorio, P. Exploring the state of health and safety management system performance measurement in mining organizations. Saf. Sci. 2016, 83, 48–58.

28. Kaassis, B.; Badri, A. Development of a preliminary model for evaluating occupational health and safety risk management maturity in small and medium-sized enterprises. Safety 2018, 4, 5.

29. Shea, T.; De Cieri, H.; Donohue, R.; Cooper, B.; Sheehan, C. Leading indicators of occupational health and safety: An employee and workplace level validation study. Saf. Sci. 2016, 85, 293–304.

30. Sun, J.; Liu, C.; Yuan, H. Evaluation of Risk Management Maturity: Measurable Proactive Indicators Suitable for Chinese Small and Medium-Sized Chemical Enterprises. In IOP Conference Series: Earth and Environmental Science; IOP Publishing: Xi’an, China, 2019.

31. Robson, L.S.; Bigelow, P.L. Measurement properties of occupational health and safety management audits: A systematic literature search and traditional literature synthesis. Can. J. Public Health 2010, 101, S34–S40.

32. Amick, B.; Farquhar, A.; Grant, K.; Hunt, S.; Kapoor, K.; Keown, K.; Lawrie, C.; McKean, C.; Miller, S.; Murphy, C.; et al. Benchmarking Organisational Leading Indicators for the Prevention and Management of Injuries and Illnesses: Final Report; Institute for Work & Health: Toronto, ON, Canada, 2011; p. 14.

| Authors | Category | Formula |
|---|---|---|
| IRSST report R-357 [8] | Rate (R) | |
| | Frequency (F) | |
| | Severity (S) | |
| | Severity index (I) | |
| IRSST report R-725 [18] | Frequency (F) | |
| | Rate (R) | |
| | Severity (S) | |
| | Prevalence | |
| | CSST (Commission de la santé et de la sécurité du travail) burden (B) | |
| | Payout per lesion (avg.) | |
| Juglaret [11] | Severity index (S) | |
| | Severity (S) | |
| | Rate (R) | |
| | Frequency (F) | |
| ASSTSAS GP75 guide to prevention [9] | Frequency (F) of absence per 100 FTE (full-time equivalent) workers | |
| | Rate (R) of lesions causing absence per 200,000 h worked | |
| | Severity per 100 FTE workers | |
| | Severity (SS) scaled to 200,000 h worked | |
| | Severity index (SI) | |
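The table above lists the reactive indicator categories, but the formula column did not survive extraction. As a hedged illustration only, the sketch below assumes the conventional normalizations suggested by the category names. Counts are scaled to 200,000 hours worked or to 100 full-time equivalent (FTE) workers, and the severity index is taken as average workdays lost per lesion. The exact definitions in [8], [9], [11], and [18] may differ. `InjuryRecord` and all field and function names are hypothetical.

```python
from dataclasses import dataclass

# 200,000 h corresponds to roughly 100 full-time workers x 40 h/week x 50 weeks
HOURS_BASE = 200_000


@dataclass
class InjuryRecord:
    lost_time_injuries: int  # lesions causing absence during the period
    days_lost: float         # total workdays lost to those lesions
    hours_worked: float      # total hours worked by all employees in the period
    fte_workers: float       # average number of full-time equivalent workers


def per_200k_hours(count: float, hours_worked: float) -> float:
    if hours_worked <= 0:
        raise ValueError("hours_worked must be positive")
    return count * HOURS_BASE / hours_worked


def per_100_fte(count: float, fte_workers: float) -> float:
    if fte_workers <= 0:
        raise ValueError("fte_workers must be positive")
    return count * 100 / fte_workers


def reactive_indicators(r: InjuryRecord) -> dict[str, float]:
    return {
        "frequency_per_100_fte": per_100_fte(r.lost_time_injuries, r.fte_workers),
        "rate_per_200k_h": per_200k_hours(r.lost_time_injuries, r.hours_worked),
        "severity_per_100_fte": per_100_fte(r.days_lost, r.fte_workers),
        "severity_per_200k_h": per_200k_hours(r.days_lost, r.hours_worked),
        # Assumed definition: average workdays lost per lost-time lesion
        "severity_index": r.days_lost / r.lost_time_injuries if r.lost_time_injuries else 0.0,
    }


# Hypothetical year: 6 lost-time lesions, 180 days lost, 500,000 h worked, 250 FTE
print(reactive_indicators(InjuryRecord(6, 180, 500_000, 250)))
```

In this example, the per-100-FTE and per-200,000-h figures coincide (2.4 lesions, 72 days) because each FTE works exactly 2,000 h. They diverge when overtime or part-time work changes the hours per FTE. This is one reason the two normalizations are listed separately.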

| N° | Indicator |
|---|---|
| 1 | The required means of protection are installed on the equipment and machinery. |
| 2 | Preventative maintenance of the equipment is carried out. |
| 3 | The employer provides the personal protective devices required for the work. |
| 4 | The employer respects regulations regarding noise, air quality, and so on. |
| 5 | The workstations are adjustable to the characteristics of the employees. |
| 6 | The employer enforces safe work practices (e.g., lockout–tagout, enclosures). |

| N° | Indicator |
|---|---|
| 1 | Personal protective devices |
| 2 | Evaluation of risks and identification of hazards |
| 3 | Safety policy |
| 4 | Training and promotion (e.g., initial training, training prior to job performance review, handling of dangerous materials) |
| 5 | Management of machinery and equipment |
| 6 | Emergency safety procedures (e.g., evacuation route, location of first-aid kits, important phone numbers, persons in charge to contact) |
| 7 | System of accident reporting and inquiry |

| N° | Indicator |
|---|---|
| 1 | Formal safety audits at regular intervals are an integral part of our activities. |
| 2 | All personnel promote continuous improvement of OHS performance. |
| 3 | The company considers OHS to be as important as production and quality. |
| 4 | Laborers and supervisors all have the information they need in order to work safely. |
| 5 | Laborers always participate in decisions involving health and safety. |
| 6 | Staff in charge of OHS have the power to bring about changes deemed necessary. |
| 7 | Employees who practice safe methods of working are recognized and encouraged. |
| 8 | All personnel are provided with the protective devices necessary for working safely. |
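The eight statements above are rated on a 5-point scale. One reported weakness of this approach is that corrective measures are difficult to identify. The sketch below assumes that each respondent's item scores are summed (giving 8 to 40) and averaged across respondents. It also assumes that per-item means are used to point at the weakest statement. This scoring scheme, the helper names, and the sample answers are hypothetical and are not the published OPM procedure.

```python
from statistics import mean

N_ITEMS = 8  # the eight OPM statements in the table above
SCALE_MIN, SCALE_MAX = 1, 5


def respondent_total(answers: list[int]) -> int:
    """Sum of one respondent's item scores (8 to 40 under the assumed scoring)."""
    if len(answers) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} answers, got {len(answers)}")
    if any(not SCALE_MIN <= a <= SCALE_MAX for a in answers):
        raise ValueError("answers must lie on the 1-5 scale")
    return sum(answers)


def organization_profile(responses: list[list[int]]) -> dict:
    totals = [respondent_total(r) for r in responses]
    # Per-item means point to the statements that most need corrective action
    item_means = [mean(r[i] for r in responses) for i in range(N_ITEMS)]
    weakest = min(range(N_ITEMS), key=lambda i: item_means[i]) + 1  # 1-based item number
    return {"mean_total": mean(totals), "item_means": item_means, "weakest_item": weakest}


# Hypothetical answers from three employees of one organization
answers = [
    [4, 4, 5, 3, 2, 4, 3, 5],
    [5, 4, 4, 3, 1, 4, 4, 5],
    [4, 3, 4, 4, 2, 3, 3, 4],
]
profile = organization_profile(answers)
print(profile["mean_total"], profile["weakest_item"])
```

Here the weakest item is statement 5 (worker participation in OHS decisions). A single total alone would hide this.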

| Type | Indicator |
|---|---|
| Reactive | Number of employees injured |
| | Number of injuries requiring medical treatment |
| | Number of injuries not requiring first aid |
| | Number of injuries causing lost hours of work |
| Proactive | Number of close-call mishaps declared |
| | Number of informal inspections |
| | Number of problems noted during informal inspections |
| | Number of official inspections |
| | Number of problems noted during official inspections |
| | Number of analyses of risk |
| | Number of problems noted during risk analysis |
| Complementary elements of inquiry | I have received proper training in OHS. |
| | OHS concerns can be discussed openly. |
| | My supervisor recognizes and supports safe behavior. |
| | My supervisor is open to ideas for improving OHS. |
| | My coworkers participate in OHS activities. |
| | My coworkers are mindful of my health and safety. |
| | Management promotes OHS in a true sense. |

| Type | Indicator |
|---|---|
| Reactive | Production error rate |
| | Lost labor attributed to ergonomically inappropriate furniture |
| | Company net income |
| | Medical care provided to workers (cost, number of interventions) |
| | Seriousness of accidents |
| | Absenteeism |
| | Cost of repairing and replacing equipment and materials |
| Proactive | External pressure on company |
| | Good logistical and construction site set-up practices |
| | NR-17 compliance with construction site environmental conditions |
| | NR-17 compliance with site machinery, equipment, and tools |
| | NR-17 compliance with task organization |
| | Improvement of work processes and technologies |
| | Employee trust of employer index |
| | PCMAT compliance with worker health and safety |
| | OHSAS 18001 compliance with workplace satisfaction |
| | NR-18 compliance with material loading, transport, and unloading |

| N° | Indicator |
|---|---|
| 1 | For each project, employ at least one worker with OHS training. |
| 2 | For each project, employ at least one OHS representative. |
| 3 | Provide written information on OHS procedures. |
| 4 | Inform the workers about preventive and risk-reducing measures with pamphlets. |
| 5 | Provide verbal instructions on OHS that are understandable by all employees. |
| 6 | Organize regular meetings to inform workers verbally about OHS measures. |
| 7 | Provide personal protective devices. |
| 8 | Provide the right tools, equipment, and installations for the job. |
| 9 | Set up the project site with OHS in mind. |
| 10 | Use proper procedures for risk evaluation. |
| 11 | Have hazards identified by at least one employee trained in OHS. |
| 12 | Carry out OHS inspections at least daily. |
| 13 | Give employees OHS training regularly. |
| 14 | Encourage and support OHS training of employees. |
| 15 | Communicate regularly with employees on OHS matters. |
| 16 | Implement the OHS management system properly. |
| 17 | Have an OHS policy. |
| 18 | Participate in OHS inspections. |
| 19 | Participate in the production of the OHS policy. |
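Project-level indicators such as those above are often screened by an expert panel. The summary comparison table lists the Delphi method for Tool 6. The source does not state the consensus rule used. The sketch below assumes a common rule of thumb: retain an indicator once the panel's median importance is at least 4 on a 5-point scale and the interquartile range (IQR) is at most 1. Both thresholds, the function name, and the ratings are assumptions.

```python
from statistics import median, quantiles


def delphi_consensus(ratings: list[int], min_median: float = 4.0,
                     max_iqr: float = 1.0) -> tuple[bool, float, float]:
    """Return (consensus reached, median, interquartile range) for one indicator."""
    if len(ratings) < 2:
        raise ValueError("need importance ratings from at least two experts")
    q1, _, q3 = quantiles(ratings, n=4)  # quartile cut points
    iqr = q3 - q1
    med = median(ratings)
    return med >= min_median and iqr <= max_iqr, med, iqr


# Hypothetical round-one ratings (1 = not important, 5 = essential) from eight experts
panel = {
    "Provide personal protective devices": [5, 4, 5, 4, 5, 5, 4, 3],
    "Daily OHS inspections": [2, 5, 3, 5, 1, 4, 2, 5],
}
for indicator, ratings in panel.items():
    agreed, med, iqr = delphi_consensus(ratings)
    print(f"{indicator}: median={med}, IQR={iqr} -> {'retain' if agreed else 'next round'}")
```

Indicators without consensus would be fed back to the panel, with the group statistics, for another rating round.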

| Dimension | Indicator |
|---|---|
| Technical | Self-inspection |
| | Emergency plan |
| | Personal protective devices |
| | Handling of dangerous materials |
| | Safety protection (including risk control) |
| | Risk analysis |
| Organizational | Legislation and regulation |
| | Accident statistics and inquiry |
| | Commitment of management |
| | Organization and responsibility |
| | Education and training |
| | Management of subcontractors |
| | Management of purchases |
| | Management of change |
| | Licenses, work permits |
| | Communication |
| | Monitoring the work environment |
| | Health examinations |
| | Safety audit |
| | Planning review |
| | Progress review |
| | Follow-up review |
| Human | Employee participation |
| | Safe behavior |
| | Safety-oriented attitude |
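The table above groups 25 indicators, each scored 1 to 5, into technical, organizational, and human dimensions. One simple way to roll such a hierarchy up into a single figure is a two-level weighted average. Indicator scores are first averaged within each dimension, then the dimension means are combined with weights summing to 1. This is offered as a sketch only. The weights and scores are hypothetical, and this section does not describe TSP's actual weighting scheme.

```python
from statistics import mean


def tsp_score(scores: dict[str, list[int]], weights: dict[str, float]) -> float:
    """Weighted mean of per-dimension averages of 1-5 indicator scores."""
    if set(scores) != set(weights):
        raise ValueError("each dimension needs exactly one weight")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("dimension weights must sum to 1")
    for dimension, values in scores.items():
        if not values or any(not 1 <= v <= 5 for v in values):
            raise ValueError(f"{dimension}: scores must be on the 1-5 scale")
    return sum(weights[d] * mean(values) for d, values in scores.items())


# Hypothetical scores: 6 technical, 16 organizational, and 3 human indicators
scores = {
    "technical": [4, 3, 5, 4, 4, 4],
    "organizational": [3] * 16,
    "human": [5, 4, 3],
}
weights = {"technical": 0.3, "organizational": 0.5, "human": 0.2}  # illustrative only
print(round(tsp_score(scores, weights), 2))  # 3.5
```

Averaging within a dimension first keeps the 16 organizational indicators from outweighing the 3 human ones purely by count.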

| N° | Indicator | N° | Indicator |
|---|---|---|---|
| 1 | Leadership and commitment | 16 | Community and public relations |
| 2 | Health, safety, and environmental mission | 17 | Licenses, work permits |
| 3 | Hazard identification, risk evaluation, and critical control point determination | 18 | Health in the workplace |
| 4 | Legal and other obligations | 19 | Production per se |
| 5 | Objectives and goals | 20 | Operational control |
| 6 | Programs | 21 | Management of change |
| 7 | Organizational approach, obligations, resources, and documents | 22 | Emergency preparations and intervention |
| 8 | Resources | 23 | Output measurement and monitoring |
| 9 | Skills, training, and sensitization | 24 | Evaluation of compliance |
| 10 | Communication, participation, and consultation | 25 | Nonconformities, corrective and preventive actions |
| 11 | Documentation | 26 | Incident/accident management |
| 12 | Monitoring of documents | 27 | Monitoring of records |
| 13 | Installation structural integrity | 28 | Internal OHS audit |
| 14 | HSE management of subcontractors and suppliers | 29 | Managerial review |
| 15 | Clients and products | | |
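Tool 8 combines experts' weights with fuzzy comprehensive evaluation over indicators like those above. The general technique builds a membership matrix R, where each row gives the share of experts placing one indicator in each grade. It then composes R with a weight vector W as B = W · R and reads off a grade. The sketch below assumes several things: a five-grade scale, vote-share memberships, the weighted-average operator, the maximum-membership principle for the verdict, and grade values 1 to 5 for a crisp score. These choices, the weights, and the votes are all assumptions. The operator and grade set in [16] may differ.

```python
GRADES = ["very poor", "poor", "fair", "good", "excellent"]
GRADE_VALUES = [1, 2, 3, 4, 5]


def membership_row(votes: list[int]) -> list[float]:
    """Share of experts placing one indicator in each grade (votes are 1-5)."""
    if not votes or any(v not in GRADE_VALUES for v in votes):
        raise ValueError("votes must be non-empty and on the 1-5 scale")
    return [votes.count(g) / len(votes) for g in GRADE_VALUES]


def fuzzy_evaluate(weights: list[float],
                   matrix: list[list[float]]) -> tuple[list[float], str, float]:
    """Composite membership B = W . R, its dominant grade, and a crisp score."""
    if len(weights) != len(matrix) or abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("need one weight per indicator, summing to 1")
    # Weighted-average operator: b_j = sum_i w_i * r_ij
    b = [sum(w * row[j] for w, row in zip(weights, matrix)) for j in range(len(GRADES))]
    dominant = GRADES[max(range(len(b)), key=lambda j: b[j])]  # maximum-membership principle
    score = sum(bj * v for bj, v in zip(b, GRADE_VALUES))
    return b, dominant, score


# Hypothetical votes from ten experts on three of the indicators listed above
R = [
    membership_row([4, 4, 5, 4, 3, 4, 5, 4, 4, 3]),  # Leadership and commitment
    membership_row([3, 3, 4, 2, 3, 4, 3, 3, 4, 3]),  # Management of change
    membership_row([5, 5, 4, 5, 4, 5, 5, 4, 5, 5]),  # Emergency preparations and intervention
]
W = [0.5, 0.3, 0.2]  # hypothetical expert weights
b, grade, score = fuzzy_evaluate(W, R)
print(grade, round(score, 2))  # good 3.9
```

The weighted-average operator keeps every indicator's contribution. The classic max-min operator would let the single most extreme membership dominate B.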

| N° | Indicator | N° | Indicator |
|---|---|---|---|
| 1 | Responsibility for OHS | 6 | OHS hierarchical structure |
| 2 | Consultation and communication about OHS | 7 | Risk management |
| 3 | Empowerment and involvement of employees in OHS decisions | 8 | OHS systems (policies, procedures, practice) |
| 4 | Commitment and leadership of management | 9 | Training, interventions, information, OHS tools and resources |
| 5 | Recognition of and positive feedback for OHS efforts | 10 | OHS inspections and audits in the workplace |

| Indicator Category | Performance Indicator |
|---|---|
| Interventions | Number of communications and meetings |
| | Number of inquiries and examinations |
| | Number of corrective actions carried out |
| | Number of hazard alerts or suggestions |
| | Number of behavioral observations |
| | Information on employee participation (percent, number) |
| | Number of inquiries focused on OHS |
| Organizational performance | Number and type of citations; percent compliance |
| | Number and type of near misses (close calls) |
| | Behavioral observation results |
| | Performance evaluation results |
| | Results of risk management studies (hazard inspections and audits) |
| | Inquiries into performance |
| Employee performance | Number and type of injuries and illnesses |
| | Results of analyses of principal causes of injuries and illnesses |
| | Medical monitoring or drug testing results |
| | Results of evaluations of employee knowledge of OHS |
| | Job performance reviews and interviews |

| Dimension | Organizational | Technical | Behavioral | Continuous Improvement |
|---|---|---|---|---|
| Themes | | | | Continuous improvement |

| Theme | Indicator |
|---|---|
| Commitment of company directors | Managers have defined and written OHS roles and responsibilities (e.g., description of tasks, mandates). |
| | Directors follow up to ensure that managers are fulfilling their OHS duties. |
| | Mechanisms of employee participation in OHS are in place (e.g., health and safety committee, designated representatives, OHS meetings). |
| | OHS is promoted by means other than posters. |
| Identification and control of risks | Rescue and first-aid registries are in place. |
| | The registry is used for preventive purposes (e.g., identifying recurrences, training employees). |
| | Inquiries and analyses are conducted after accidents and documented. |
| | OHS inspections are conducted periodically. |
| Prevention program | The prevention program is up to date. |
| | Written proof that every employee understands the program is on file. |
| | Employees who have no emergency duties know the evacuation plan. |
| | Employees who have emergency duties know the procedures. |
| Training | New employees are trained for their tasks at their station (e.g., paired with an experienced partner). |
| | A formal written training plan is in place. |
| | The employer systematically files written proof of the training provided. |
| Oversight of subcontractors | Subcontractors are apprised of the prevention program, the risks inherent in the company’s operations, and so on. |
| | The subcontractor’s prevention program is requested and archived. |
| | All subcontractors sign when they have been apprised. |
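Checklist statements like those above lend themselves to yes/no answers, and the summary comparison table reports 0-or-1 scoring for Tool 12. The sketch below assumes unweighted yes/no answers and computes the share of "yes" per theme. It then lists the themes that fall below a threshold, weakest first, as candidates for action. The 75% threshold, the function names, and the answers are hypothetical. The scoring rules of the actual diagnostic tools are not described in this section.

```python
def theme_compliance(answers: dict[str, list[bool]]) -> dict[str, float]:
    """Percentage of statements answered 'yes' (True) within each theme."""
    result = {}
    for theme, items in answers.items():
        if not items:
            raise ValueError(f"theme {theme!r} has no statements")
        result[theme] = 100 * sum(items) / len(items)
    return result


def themes_to_prioritize(compliance: dict[str, float], threshold: float = 75.0) -> list[str]:
    """Themes below the (hypothetical) threshold, weakest first."""
    below = [theme for theme, pct in compliance.items() if pct < threshold]
    return sorted(below, key=lambda theme: compliance[theme])


# Hypothetical yes/no answers, one per statement in the table above
answers = {
    "Commitment of company directors": [True, False, True, True],
    "Identification and control of risks": [True, False, False, True],
    "Prevention program": [True, True, True, True],
    "Training": [True, False, False],
    "Oversight of subcontractors": [True, True, False],
}
compliance = theme_compliance(answers)
print(themes_to_prioritize(compliance))
# ['Training', 'Identification and control of risks', 'Oversight of subcontractors']
```

Per-theme percentages keep themes with three statements comparable to themes with four, at the cost of treating every statement within a theme as equally important.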

| Indicator Category | Measurement |
|---|---|
| Identification of OHS risks | Number of hazards identified |
| | Number of incident reports filed |
| | Number of inspections conducted |
| | Number of persons trained to identify hazards |
| OHS risk estimation and evaluation | Number of estimations and evaluations conducted and validated |
| | Number of risks identified per risk level |
| Preventive and corrective actions | Number of preventive and corrective actions recommended |
| | Number of effective preventive and corrective actions (verified and validated) |
| | Number of preventive actions per type of hazard (e.g., confined spaces, heights) |
| | Number of corrective actions prioritized per hazard severity (e.g., high or low) |
| | New number of hazards reported after implementation of preventive and corrective measures |
| Characterization of risks | Correlation between proactive and reactive indicators |
| | Number of potential hazards (of low or high severity) |
| | Number of hazards per specific category (e.g., confined spaces, heights) |
| Monitoring and review | Number of new evaluations of OHS risks |
| | Effectiveness of corrective actions implemented |
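One of the characterization measures above is the correlation between proactive and reactive indicators. A minimal sketch, assuming Pearson's coefficient is computed on paired monthly series from one workplace, is shown below. The choice of Pearson, the pairing of inspections with lost-time injuries, and the data are hypothetical.

```python
from math import sqrt


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient between two equally long series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two series of equal length (at least 2 points)")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (spread_x * spread_y)


# Hypothetical six months of data for one workplace
inspections = [2, 4, 6, 8, 10, 12]        # proactive: OHS inspections conducted
lost_time_injuries = [9, 8, 6, 5, 3, 2]   # reactive: lesions causing absence
print(round(pearson(inspections, lost_time_injuries), 3))  # -0.995
```

A strong negative coefficient is consistent with prevention paying off but does not establish it. With only a few data points, seasonal workload or reporting changes can produce the same pattern.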

| Code | Indicator Category | Examples of Measurements |
|---|---|---|
| O1 | Hazard identification | Number of hazards identified |
| | | Number of inspections focused on the safety of chemicals |
| | | Number of inspections focused on work-related risks |
| | | Number of persons trained to identify hazards |
| O2 | Risk estimation and evaluation | Number of estimations and re-evaluations performed |
| | | Risks identified per level or category |
| O3 | Preventive and corrective actions | Number of preventive and corrective actions recommended |
| | | Number of preventive/corrective actions judged effective |
| | | Number of preventive measures per type of hazard (e.g., confined spaces, sparks) |
| | | New number of hazards reported after implementing preventive and corrective measures |
| O4 | Characterization of risks | Correlation between proactive and reactive indicators |
| | | Number of potential hazards ranked by severity |
| | | Number of hazards by specific category (e.g., confined spaces, heights) |
| O5 | Follow-up and examination | Number of new evaluations of risk |
| | | Effectiveness and efficiency of corrective actions implemented |

| OHS Factor | Key Indicator of Performance |
|---|---|
| Organizational | Preventive management practices |
| | Protective measures for employees |
| Human | Safety improvement program |

| N° | Tool | Cited in [5] | Design Method | Content | Sector and Country | Intended User | Strengths | Weaknesses |
|---|---|---|---|---|---|---|---|---|
| 1 | OHS self-diagnostic tool [26] | Yes | Indicators seen in the literature; reviewed by OHS experts; iterative process | Proactive indicators; 10-point Likert scale | Printing (Canada) | Managers | Simple and user-friendly | Vagueness and ambiguity; needs to be adapted to each new milieu |
| 2 | Safety and health assessment system standard in construction [20] | No | Indicators taken from building construction master plan | 14 proactive indicators, scored with 1 to 5 stars | Construction (Malaysia) | Unspecified construction site personnel | Measures OHS performance; guides improvement | Not generalizable; elements vary from one study to the next |
| 3 | Organizational performance metric [32] | Yes | Indicators seen in the literature; reviewed by OHS experts | 8 proactive indicators; 5-point Likert scale | All (Canada) | Entire staff | Simple and general | Limited reliability; corrective measures difficult to identify |
| 4 | Project safety index [17] | Yes | Indicators chosen by managers and clients of the company | 11 indicators: 7 proactive, 4 reactive; 14 questions intended for employees | Construction (Australia) | Managers | Ease of application; combination of reactive and proactive indicators | Needs to be adapted for use in other economic sectors |
| 5 | SIDECE ergonomics in building construction [21] | No | Indicators seen in the literature or based on ergonomic standards | 62 indicators: 33 proactive, 29 reactive, scored 1 to 5 | Building construction (Brazil) | Managers | Combination of indicators; integration of ergonomic indicators | Vagueness and ambiguity; focus on ergonomics |
| 6 | Proactive OHS indicator measures [15] | No | Indicators seen in the literature; reviewed by OHS experts | 62 proactive indicators chosen using Delphi | Construction (South Africa) | Entire staff | Precise use of proactive indicators | Needs to be adapted to each economic sector |
| 7 | Total safety performance [1] | Yes | Indicators seen in the literature; reviewed by OHS experts | 25 proactive indicators scored 1 to 5 | Electronics (Taiwan) | Not specified | Variety of indicators; broad vision of OHS performance | Choice of indicators affects recommendations |
| 8 | HSE fuzzy comprehensive performance evaluation [16] | Yes | Indicators chosen according to company in-house procedures | 29 proactive indicators scored 1 to 5; data processed by software | Petrochemical (China) | Managers; OHS practitioners | Effective and practical for comparative studies | Difficult to generalize; not all indicators express clearly what is being evaluated |
| 9 | Organizational performance metric [29] | No | Indicators seen in the literature; adaptation of the OPM model (tool 3) | 10 proactive indicators scored 1 to 5 | All (Australia) | Entire staff | Simplified measure used as an initial inquiry into OHS status | Not generalizable without adaptation |
| 10 | CORESafety HSMS [27] | No | Indicator selection aided by inquiry and review by OHS experts | 22 indicators; encoding; qualitative content analysis | Mining (USA) | Managers; OHS professionals | Broad vision of OHS performance | Designed for a single sector; too few indicators to evaluate OHS management |
| 11 | OHS commitment diagnostic tool [22] | No | Created by OHS experts at the CNESST | Questionnaire | All but construction (Québec, Canada) | OHS professionals | Simple; no specific OHS training required | Effectiveness depends on answers; no software support |
| 12 | “Profil SST” [7] | No | Indicators seen in the literature; reviewed by OHS experts | 94 proactive indicators scored 0 or 1 | Forestry, pulp, and paper (Québec, Canada) | Prevention practitioners | Simple and user-friendly | Not applicable outside the sector |
| 13 | Preliminary model of risk management maturity evaluation [28] | No | Indicators seen in the literature | 23 proactive indicators scored 1 to 5 | All (Canada) | Managers | Not limited to any specific sector of activity | No mode of indicator weighting or quantifying is provided |
| 14 | Measurable proactive indicators of OHS risk [30] | No | Indicators seen in the literature | 23 indicators (50 examples); case study | Chemical (China) | Managers | Proactive focus | For small and medium-sized chemical companies only |
| 15 | OHS management performance evaluation [23] | No | Indicators seen in the literature; reviewed by OHS experts | 28 proactive indicators scored 1 to 5 | Food industry (Taiwan) | Not specified | Complete model | Effectiveness unclear; some poor choices of indicator |

| Type | Advantages | Drawbacks |
|---|---|---|
| Reactive | | |
| Proactive | | |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Jemai, H.; Badri, A.; Ben Fredj, N. State of the Art and Challenges for Occupational Health and Safety Performance Evaluation Tools. Safety 2021, 7, 64. https://doi.org/10.3390/safety7030064


Chicago/Turabian style: Jemai, Hajer, Adel Badri, and Nabil Ben Fredj. 2021. "State of the Art and Challenges for Occupational Health and Safety Performance Evaluation Tools" Safety 7, no. 3: 64. https://doi.org/10.3390/safety7030064
