Revolutionizing Cow Welfare Monitoring: A Novel Top-View Perspective with Depth Camera-Based Lameness Classification

Abstract

1. Introduction

2. Research Background and Related Works

3. Materials and Methods

3.1. Data Collection and Preprocessing

3.2. Automatic Cow Detection

3.2.1. Noise Removal

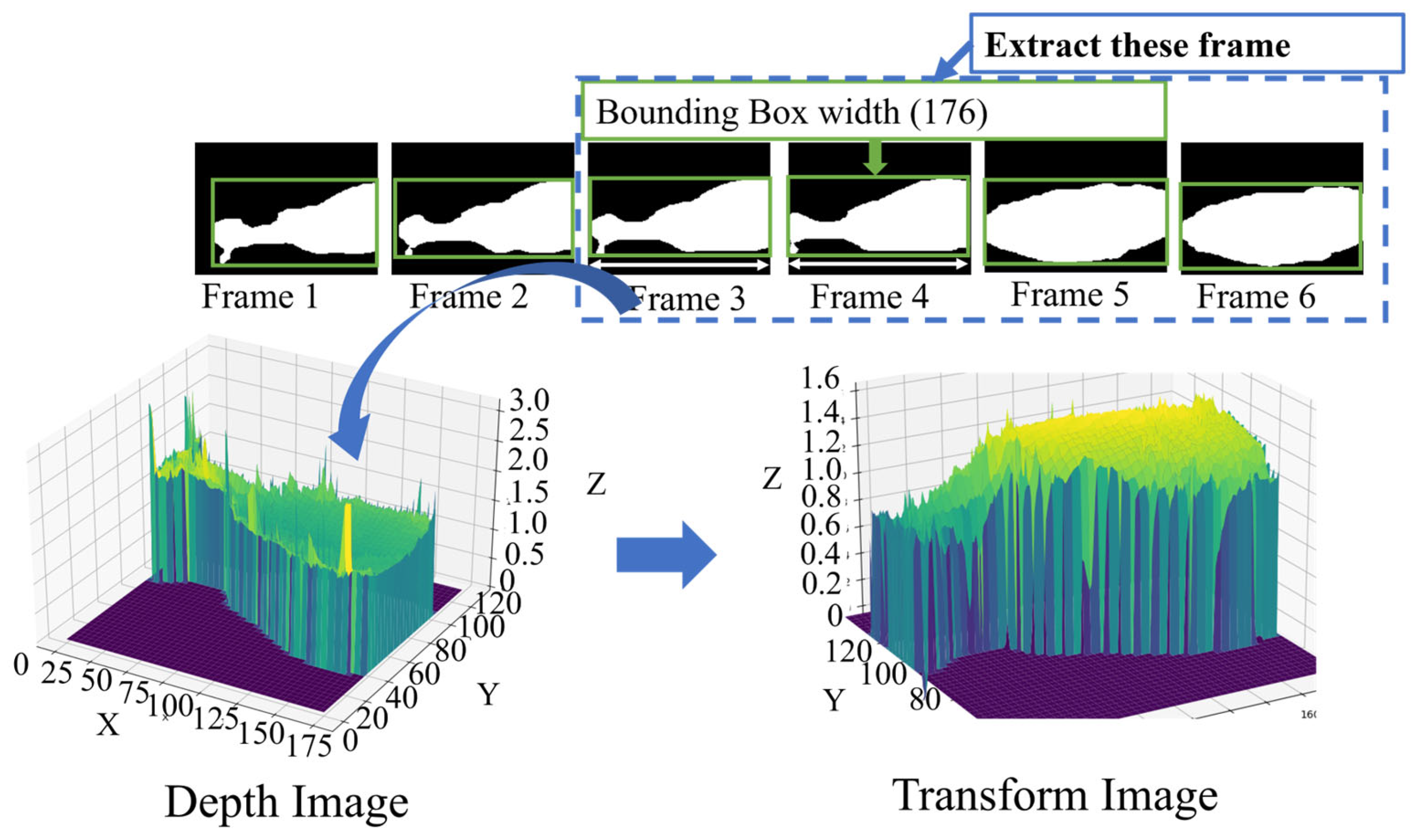

3.2.2. Cow Depth Region Extraction

3.3. Automatic Cow Tracking

3.4. Cow Lameness Classification

3.4.1. Cow Lameness Classification

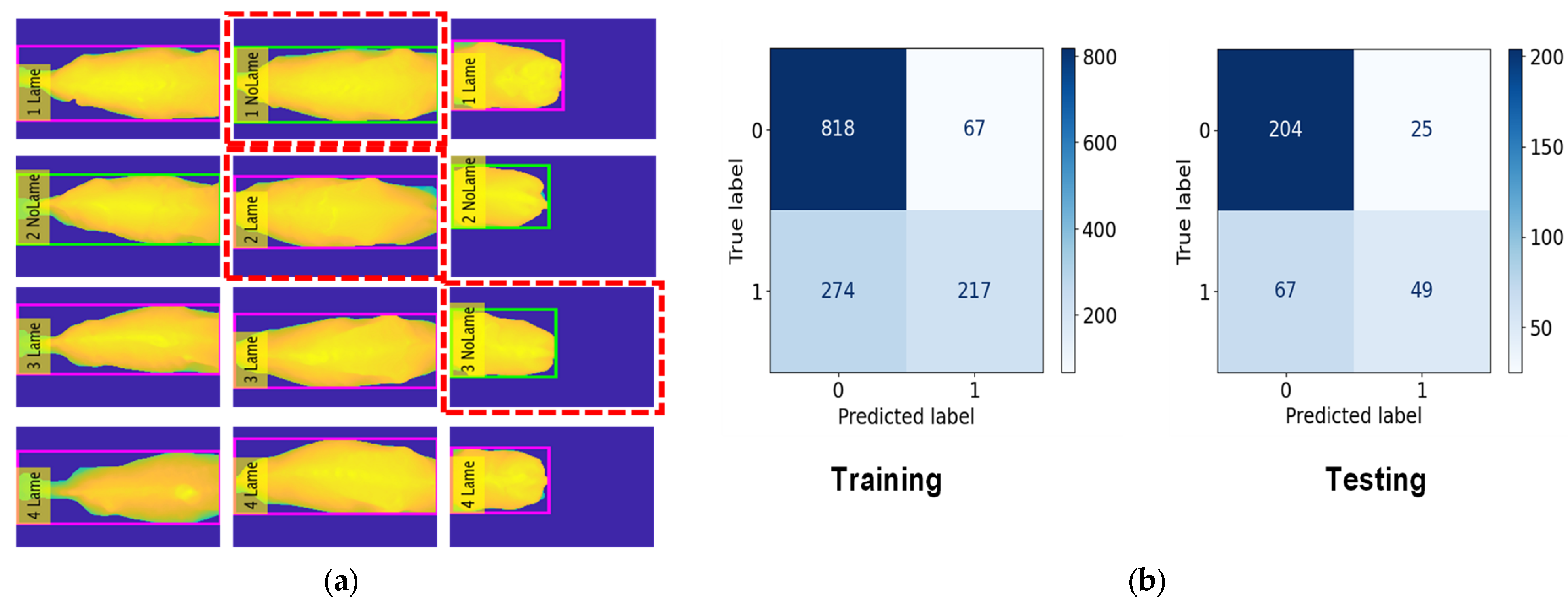

Lameness and No-Lameness Cows

4. Performance Evaluation

4.1. Automatic Cow Detection Accuracy

4.2. Automatic Cow Tracking Accuracy

4.3. Cow Lameness Classification Accuracy

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Dataset | Date | Time | #Frames | #Instances |
|---|---|---|---|---|
| Training | 22 January 2023 (Morning) | 05:00–08:00 | 4120 | 4302 |
| Validation | 22 January 2023 (Morning) | 05:00–08:00 | 824 | 915 |

| Date | Time | #Cows | TP | TN | FP | FN | Accuracy (%) |
|---|---|---|---|---|---|---|---|
| 3 September 2022 | AM | 56 | 1217 | 0 | 0 | 0 | 100 |
| 3 September 2022 | PM | 56 | 1273 | 0 | 4 | 0 | 99.69 |
| 4 September 2022 | AM | 56 | 1240 | 0 | 0 | 0 | 100 |
| 4 September 2022 | PM | 64 | 1836 | 1 | 4 | 0 | 99.95 |
| 5 September 2022 | AM | 64 | 1736 | 0 | 0 | 0 | 100 |
| 5 September 2022 | PM | 64 | 1477 | 0 | 0 | 0 | 100 |
| Average Accuracy | | | | | | | 99.94 |

| Date | Time | #Cows | GT | FP | FN | IDS | MOTA (%) |
|---|---|---|---|---|---|---|---|
| 3 September 2022 | AM | 56 | 1247 | 0 | 0 | 0 | 100 |
| 3 September 2022 | PM | 56 | 1297 | 0 | 2 | 3 | 99.61 |
| 4 September 2022 | AM | 56 | 1257 | 0 | 0 | 0 | 100 |
| 4 September 2022 | PM | 64 | 1843 | 1 | 0 | 1 | 99.89 |
| 5 September 2022 | AM | 64 | 1778 | 0 | 0 | 0 | 100 |
| 5 September 2022 | PM | 64 | 1498 | 0 | 0 | 0 | 100 |
| Average Accuracy | | | | | | | 99.92 |
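The MOTA column in the tracking table can be checked with a short calculation. The sketch below assumes the standard CLEAR MOT definition, MOTA = 1 − (FN + FP + IDS) / GT, expressed as a percentage and rounded to two decimals; the `Session` class, the `mota` function, and the session labels are hypothetical names introduced only for this illustration, and the counts are copied from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    """One recording session from the tracking table (hypothetical container)."""
    label: str
    gt: int   # number of ground-truth objects
    fp: int   # false positives
    fn: int   # false negatives (misses)
    ids: int  # identity switches


def mota(s: Session) -> float:
    """Return MOTA in percent, assuming MOTA = 1 - (FN + FP + IDS) / GT."""
    return 100.0 * (1.0 - (s.fn + s.fp + s.ids) / s.gt)


# Counts copied from the tracking table.
sessions = [
    Session("3 Sep 2022 AM", 1247, 0, 0, 0),
    Session("3 Sep 2022 PM", 1297, 0, 2, 3),
    Session("4 Sep 2022 AM", 1257, 0, 0, 0),
    Session("4 Sep 2022 PM", 1843, 1, 0, 1),
    Session("5 Sep 2022 AM", 1778, 0, 0, 0),
    Session("5 Sep 2022 PM", 1498, 0, 0, 0),
]

# Per-session MOTA rounded to two decimals, then the unweighted mean
# of those rounded values (how the table's average appears to be formed).
scores = [round(mota(s), 2) for s in sessions]
average = round(sum(scores) / len(scores), 2)

for s, score in zip(sessions, scores):
    print(f"{s.label}: {score:.2f}")
print(f"Average: {average:.2f}")
```

Under these assumptions, the per-session values and the unweighted mean agree with the figures reported in the table.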

| Dataset | Date | Period | RF accuracy (%) | KNN accuracy (%) | DT accuracy (%) |
|---|---|---|---|---|---|
| Training | 3 September 2022; 4 September 2022; 5 September 2022 | a.m., p.m.; a.m., p.m.; a.m. | 82.3 | 81.2 | 70.4 |
| Testing | 5 September 2022 | p.m. | 81.1 | 78.2 | 69.2 |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Tun, S.C.; Onizuka, T.; Tin, P.; Aikawa, M.; Kobayashi, I.; Zin, T.T. Revolutionizing Cow Welfare Monitoring: A Novel Top-View Perspective with Depth Camera-Based Lameness Classification. J. Imaging 2024, 10, 67. https://doi.org/10.3390/jimaging10030067