Dataset on Force Myography for Human–Robot Interactions

Abstract

1. Summary

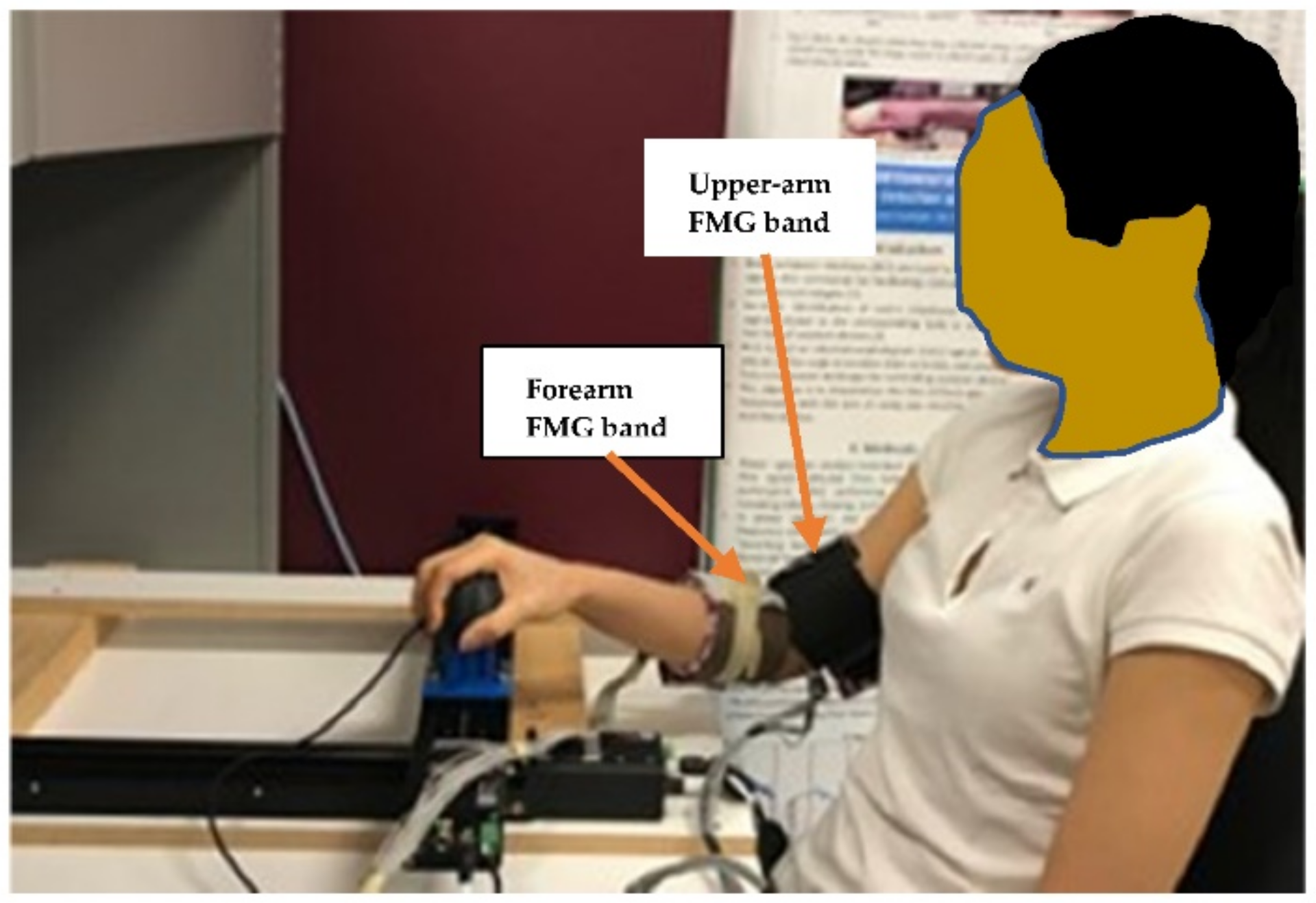

2. Dataset Collection Instrumentations

- FMG Bands

- The Biaxial Stage (2-DoF Linear Robot)

- The Serial Manipulator (7-DoF Kuka Robot)

3. Dataset Association

3.1. Dataset 1: pHRI between Human Participants and the Biaxial Stage

3.2. Dataset 2: pHRI between a Human Participant and a Manipulator

4. Dataset Description

4.1. Dataset 1: pHRI_Biaxial Stage

4.2. Dataset 2: pHRI_Manipulator

5. Discussion

6. Conclusions

7. User Notes

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


[Figure panels: X, Y, DG, SQ, SQ-diffSize, DM]

| Dataset 1: pHRI_BiaxialStage | File names | Dataset 2: pHRI_Manipulator | File names |
|---|---|---|---|
| 1D-X | pHRI_BiaxialStage_SubID_1D_X_Rep0:Rep4.csv; pHRI_BiaxialStage_SubID_1D_X_Session2_Rep0:Rep1.csv | 1D-X | pHRI_Manipulator_1D_SubID_X_Rep0:Rep4.csv |
| 1D-Y | pHRI_BiaxialStage_SubID_1D_Y_Rep0:Rep4.csv | 1D-Y | pHRI_Manipulator_SubID_1D_Y_Rep0:Rep4.csv |
| 2D-DG | pHRI_BiaxialStage_SubID_2D_DG_Rep0:Rep4.csv; pHRI_BiaxialStage_SubID_2D_DG_Session2_Rep0:Rep1.csv | 2D-XY | pHRI_Manipulator_SubID_2D_XY_Rep0:Rep4.csv |
| 2D-SQ | pHRI_BiaxialStage_SubID_2D_SQ_Rep0:Rep4.csv | 2D-YZ | pHRI_Manipulator_SubID_2D_YZ_Rep0:Rep4.csv |
| 2D-DM | pHRI_BiaxialStage_2D_SubID_DM_Rep0:Rep4.csv | 2D-XZ | pHRI_Manipulator_SubID_2D_XZ_Rep0:Rep4.csv |
| 2D-SQ-Diff-Size | pHRI_BiaxialStage_2D_SubID_SQ_diffSize_Rep0:Rep15.csv | 3D-XYZ | pHRI_Manipulator_SubID_3D_XYZ_Rep0:Rep4.csv |
|  |  | HRC in 3D-XYZ | pHRI_Manipulator_SubID_3D_XYZ_HRC_Rep0:Rep4.csv |
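The file-name table above writes each set of repetitions as a range (for example, Rep0 to Rep4) and uses `SubID` as a placeholder for the participant. The sketch below shows one way to expand such an entry into individual file names. It assumes that the range is inclusive and that the participant label is substituted literally for `SubID`; the label format `S1` and the helper name `expand_rep_range` are hypothetical and should be checked against the files in the repository.

```python
import re


def expand_rep_range(entry, sub_id):
    """Expand a table entry such as '..._Rep0:Rep4.csv' into one name per repetition.

    Assumptions (not confirmed by the source): the range is inclusive, and the
    participant label replaces the literal text 'SubID' in the file name.
    """
    match = re.fullmatch(
        r"(?P<stem>.*_)Rep(?P<first>\d+):\s*Rep(?P<last>\d+)(?P<ext>\.csv)",
        entry.strip(),
    )
    if match is None:
        raise ValueError(f"not a repetition-range entry: {entry!r}")
    stem = match["stem"].replace("SubID", sub_id)
    first, last = int(match["first"]), int(match["last"])
    return [f"{stem}Rep{i}{match['ext']}" for i in range(first, last + 1)]


# Hypothetical participant label "S1"; the real labels may differ.
files = expand_rep_range("pHRI_BiaxialStage_SubID_1D_X_Rep0:Rep4.csv", "S1")
for name in files:
    print(name)
```

The pattern tolerates optional whitespace after the colon, so entries copied from the table in either spelling are accepted.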

| pHRI | 1D | 2D | 3D |
|---|---|---|---|
| Biaxial Stage | Total files: 120 | Total files: 186 | NA |
| Participants: S1–S17; upper arm and forearm FMG data | Col 1: Fx/Fy data; Cols 2–33: FMG data | Cols 1–2: Fx, Fy data; Cols 3–34: FMG data | |
| Manipulator | Total files: 15 | Total files: 15 | Total files: 10 |
| Participant: S18; forearm FMG data | Cols 1–3: Fx, Fy, Fz data; Cols 4–19: FMG data | Cols 1–3: Fx, Fy, Fz data; Cols 4–19: FMG data | Cols 1–3: Fx, Fy, Fz data; Cols 4–19: FMG data |
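The column layout in the table above can be turned into a small loader that separates the force labels from the FMG channels. The following is a minimal sketch that uses only the Python standard library. It assumes each file is comma-separated, has no header row, and stores one sample per row; none of this is stated in the table, so it should be verified against the actual files. The names `LAYOUT` and `split_force_fmg` are illustrative only.

```python
import csv
import io

# (number of force columns, number of FMG channels), read off the table:
# the force columns come first and the FMG channels follow.
LAYOUT = {
    ("BiaxialStage", "1D"): (1, 32),  # column 1 force, columns 2-33 FMG
    ("BiaxialStage", "2D"): (2, 32),  # columns 1-2 force, columns 3-34 FMG
    ("Manipulator", "1D"): (3, 16),   # columns 1-3 force, columns 4-19 FMG
    ("Manipulator", "2D"): (3, 16),
    ("Manipulator", "3D"): (3, 16),
}


def split_force_fmg(handle, robot, dims):
    """Read numeric CSV rows and split each into (force, FMG) parts.

    Assumes comma-separated values with no header row (an assumption,
    not something the dataset description confirms).
    """
    n_force, n_fmg = LAYOUT[(robot, dims)]
    force, fmg = [], []
    for row in csv.reader(handle):
        if not row:
            continue  # skip blank lines
        values = [float(v) for v in row]
        if len(values) != n_force + n_fmg:
            raise ValueError(
                f"expected {n_force + n_fmg} columns, got {len(values)}"
            )
        force.append(values[:n_force])
        fmg.append(values[n_force:])
    return force, fmg


# Synthetic one-row example standing in for a manipulator file (19 columns).
sample = io.StringIO(",".join(str(i) for i in range(19)) + "\n")
force, fmg = split_force_fmg(sample, "Manipulator", "3D")
print(len(force[0]), len(fmg[0]))
```

For a real file, the `io.StringIO` object would be replaced by an open file handle, for example `open(path, newline="")`.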

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zakia, U.; Menon, C. Dataset on Force Myography for Human–Robot Interactions. Data 2022, 7, 154. https://doi.org/10.3390/data7110154
