SIMADL: Simulated Activities of Daily Living Dataset

Abstract

1. Introduction

2. Related Work

2.1. Real Datasets

2.2. Simulation Tool

3. OpenSHS

3.1. OpenSHS Advantages

4. Methodology

4.1. Smart Home Design

4.2. The Participants

- The researcher guides the participant and shows them the virtual smart home.

- The participant is asked to play with the virtual smart home to get familiar with it.

- The participant’s familiarity with the virtual smart home is tested by asking them to perform specific tasks.

- The actual simulation takes place, and the participant is asked to provide their real starting time for each context.

- The participant is asked to complete the usability questionnaire.

4.3. The Anomalies

4.4. Dataset Aggregation

- Days: We chose 30 and 60.

- Start-date: We chose 1 February 2016.

- Time-margin: We chose the values 0, 5, and 10.
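
As a rough illustration of the parameter grid above, the following sketch enumerates every combination of days and time-margin for the fixed start date. The class name `AggregationConfig` and the exclusive `end` date are assumptions made for illustration; this is not the OpenSHS aggregation code, and the unit of the time-margin is not stated in this section.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from itertools import product

# Values taken from the list above.
DAYS = (30, 60)
START_DATE = date(2016, 2, 1)
TIME_MARGINS = (0, 5, 10)


@dataclass(frozen=True)
class AggregationConfig:
    """One hypothetical aggregation run: a period length and a time-margin."""
    days: int
    start: date
    time_margin: int

    @property
    def end(self) -> date:
        # First date after the generated period (exclusive end).
        return self.start + timedelta(days=self.days)


def all_configs():
    """Return every (days, time-margin) combination for the fixed start date."""
    return [
        AggregationConfig(days, START_DATE, margin)
        for days, margin in product(DAYS, TIME_MARGINS)
    ]


configs = all_configs()
for cfg in configs:
    print(cfg.days, cfg.time_margin, cfg.start.isoformat(), cfg.end.isoformat())
```

Two period lengths and three time-margins give six combinations per participant.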

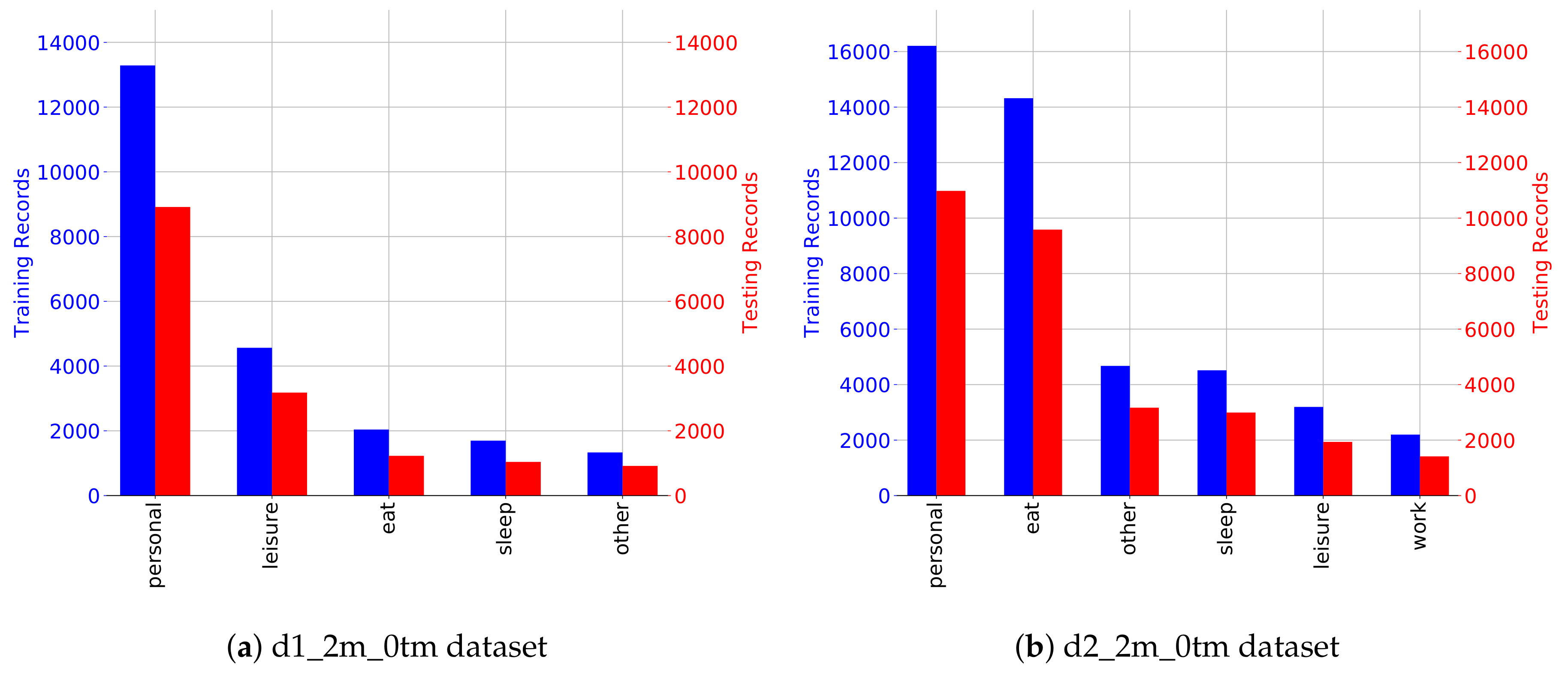

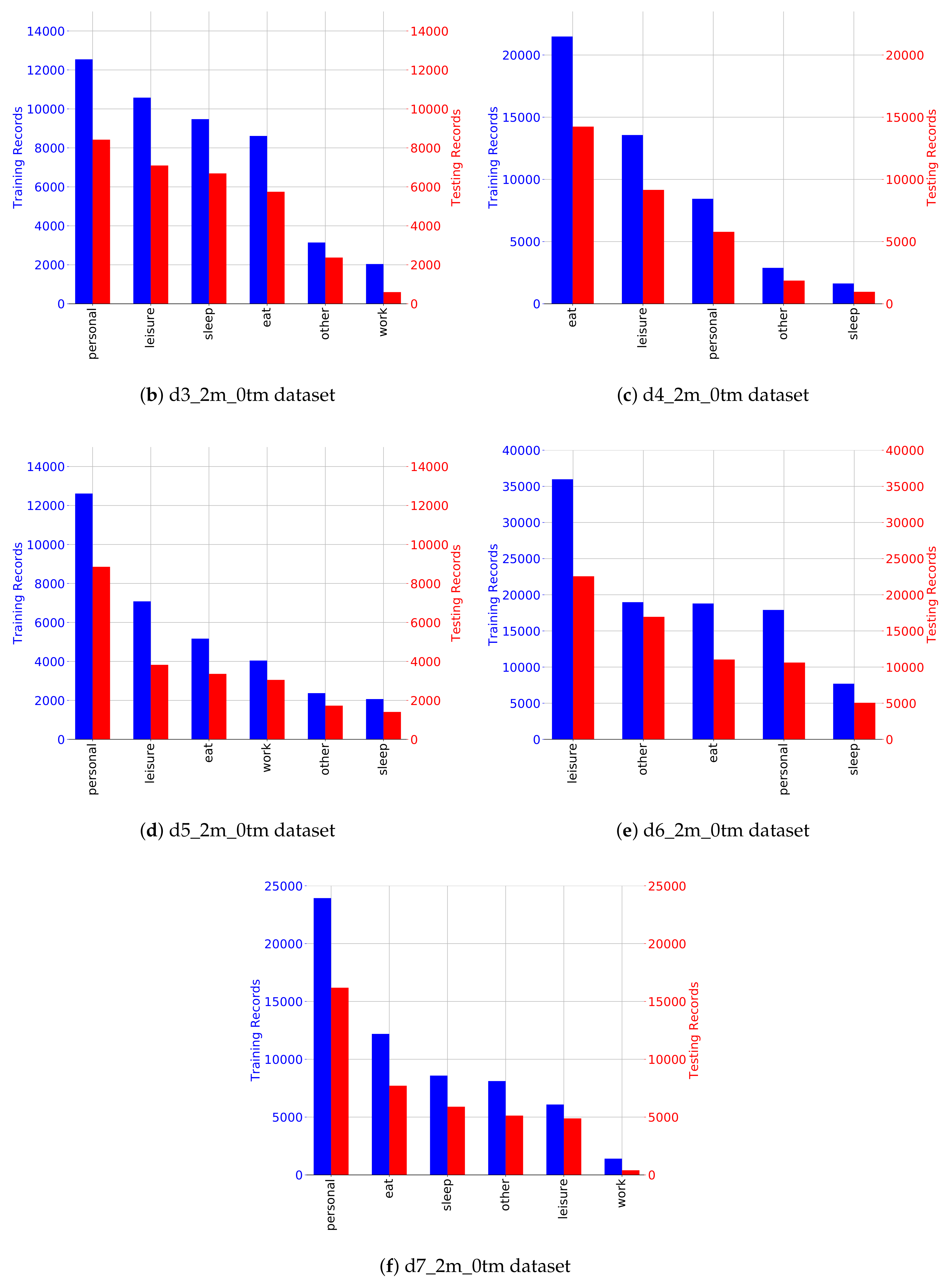

5. Dataset Description

- x is an index number to uniquely identify a dataset;

- y is the number of months generated; and

- z is the time-margin value.
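
The naming scheme can be decoded mechanically. The sketch below assumes that names follow the pattern `d<x>-<y>m-<z>tm`, as in `d1-1m-0tm`; the function `parse_dataset_name` and the `DatasetName` type are hypothetical helpers, not part of the dataset's tooling.

```python
import re
from typing import NamedTuple

# Assumed pattern: d<index>-<months>m-<time-margin>tm, e.g. "d1-1m-0tm".
_NAME_RE = re.compile(r"^d(\d+)-(\d+)m-(\d+)tm$")


class DatasetName(NamedTuple):
    index: int        # x: index number identifying the dataset
    months: int       # y: number of months generated
    time_margin: int  # z: time-margin value


def parse_dataset_name(name: str) -> DatasetName:
    """Split a dataset name into its x, y, and z components."""
    match = _NAME_RE.match(name)
    if match is None:
        raise ValueError(f"not a recognised dataset name: {name!r}")
    x, y, z = (int(part) for part in match.groups())
    return DatasetName(x, y, z)


print(parse_dataset_name("d3-2m-10tm"))
```

For `d3-2m-10tm` this yields index 3, two months, and a time-margin of 10.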

6. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ADL | Activities of Daily Living |
| IoT | Internet of Things |
| OpenSHS | Open Smart Home Simulator |


| # | Name | Type | Description | Active/Passive |
|---|---|---|---|---|
| 1 | bathroomCarp | binary | Bathroom carpet sensor | Passive |
| 2 | bathroomDoor | binary | Bathroom door sensor | Active |
| 3 | bathroomDoorLock | binary | Bathroom door lock sensor | Active |
| 4 | bathroomLight | binary | Bathroom ceiling light | Active |
| 5 | bed | binary | Bed contact sensor | Passive |
| 6 | bedTableLamp | binary | Bedroom table lamp | Active |
| 7 | bedroomCarp | binary | Bedroom carpet sensor | Passive |
| 8 | bedroomDoor | binary | Bedroom door sensor | Active |
| 9 | bedroomDoorLock | binary | Bedroom door lock sensor | Active |
| 10 | bedroomLight | binary | Bedroom ceiling light | Active |
| 11 | couch | binary | Living room couch | Passive |
| 12 | fridge | binary | Kitchen fridge | Active |
| 13 | hallwayLight | binary | Hallway ceiling light | Active |
| 14 | kitchenCarp | binary | Kitchen carpet sensor | Passive |
| 15 | kitchenDoor | binary | Kitchen door sensor | Active |
| 16 | kitchenDoorLock | binary | Kitchen door lock sensor | Active |
| 17 | kitchenLight | binary | Kitchen ceiling light | Active |
| 18 | livingCarp | binary | Living room carpet sensor | Passive |
| 19 | livingLight | binary | Living room ceiling light | Active |
| 20 | mainDoor | binary | Main door sensor | Active |
| 21 | mainDoorLock | binary | Main door lock sensor | Active |
| 22 | office | binary | Office room desk sensor | Passive |
| 23 | officeCarp | binary | Office room carpet sensor | Passive |
| 24 | officeDoor | binary | Office door sensor | Active |
| 25 | officeDoorLock | binary | Office door lock sensor | Active |
| 26 | officeLight | binary | Office ceiling light | Active |
| 27 | oven | binary | Kitchen oven sensor | Active |
| 28 | tv | binary | Living room TV sensor | Active |
| 29 | wardrobe | binary | Bedroom wardrobe sensor | Active |
| 30 | Activity | String | The participant's current activity | |
| 31 | timestamp | String | The timestamp, recorded every second | |

| Participant | Anomaly Definition |
|---|---|
| Participant 1 | Leaving the fridge door open. |
| Participant 2 | Leaving the oven on for a long time. |
| Participant 3 | Leaving the main door open. |
| Participant 4 | Leaving the fridge door open. |
| Participant 5 | Leaving the bathroom light on. |
| Participant 6 | Leaving the TV on. |
| Participant 7 | Leaving the bedroom light on and the wardrobe open. |

| Timestamp | Bed Table Lamp | Bed | Bathroom Light | Bathroom Door | … | Activity |
|---|---|---|---|---|---|---|
| 2016-04-01 08:00:00 | 0 | 1 | 0 | 0 | … | sleep |
| 2016-04-01 08:00:01 | 0 | 1 | 0 | 0 | … | sleep |
| 2016-04-01 08:00:02 | 0 | 1 | 0 | 0 | … | sleep |
| 2016-04-01 08:00:03 | 0 | 1 | 0 | 0 | … | sleep |
| 2016-04-01 08:00:04 | 1 | 1 | 0 | 0 | … | sleep |
| 2016-04-01 08:00:05 | 1 | 0 | 0 | 0 | … | sleep |
| 2016-04-01 08:00:06 | 1 | 0 | 0 | 1 | … | personal |
| 2016-04-01 08:00:07 | 1 | 0 | 0 | 1 | … | personal |
| 2016-04-01 08:00:08 | 1 | 0 | 1 | 1 | … | personal |
| 2016-04-01 08:00:09 | 1 | 0 | 1 | 1 | … | personal |
| 2016-04-01 08:00:10 | 1 | 0 | 1 | 1 | … | personal |
| ⋮ | ⋮ | ⋮ | ⋮ | ⋮ | ⋮ | ⋮ |
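
Because every row holds one second of sensor state, consecutive rows with the same label can be collapsed into activity segments. The sketch below does this for the sample rows shown above, keeping only the timestamp and activity columns. `activity_segments` is a hypothetical helper, and it assumes the rows are already sorted by time.

```python
from itertools import groupby

# (timestamp, activity) pairs copied from the sample rows above.
ROWS = [
    ("2016-04-01 08:00:00", "sleep"),
    ("2016-04-01 08:00:01", "sleep"),
    ("2016-04-01 08:00:02", "sleep"),
    ("2016-04-01 08:00:03", "sleep"),
    ("2016-04-01 08:00:04", "sleep"),
    ("2016-04-01 08:00:05", "sleep"),
    ("2016-04-01 08:00:06", "personal"),
    ("2016-04-01 08:00:07", "personal"),
    ("2016-04-01 08:00:08", "personal"),
    ("2016-04-01 08:00:09", "personal"),
    ("2016-04-01 08:00:10", "personal"),
]


def activity_segments(rows):
    """Collapse runs of identical activity labels into (activity, first, last)."""
    segments = []
    for activity, run in groupby(rows, key=lambda row: row[1]):
        run = list(run)
        segments.append((activity, run[0][0], run[-1][0]))
    return segments


for segment in activity_segments(ROWS):
    print(segment)
```

On the sample rows this produces two segments: `sleep` from 08:00:00 to 08:00:05 and `personal` from 08:00:06 to 08:00:10.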

| Name | Classification Dataset | Anomaly Dataset |
|---|---|---|
| d1-1m-0tm | 18,800 | 18,120 |
| d1-1m-5tm | 18,966 | 18,096 |
| d1-1m-10tm | 18,828 | 18,044 |
| d1-2m-0tm | 38,204 | 35,033 |
| d1-2m-5tm | 37,532 | 34,967 |
| d1-2m-10tm | 38,012 | 35,065 |
| d2-1m-0tm | 37,332 | 35,358 |
| d2-1m-5tm | 36,261 | 35,679 |
| d2-1m-10tm | 35,687 | 35,541 |
| d2-2m-0tm | 75,183 | 74,171 |
| d2-2m-5tm | 72,302 | 72,163 |
| d2-2m-10tm | 73,526 | 72,751 |
| d3-1m-0tm | 39,832 | 40,603 |
| d3-1m-5tm | 42,526 | 40,064 |
| d3-1m-10tm | 40,730 | 41,681 |
| d3-2m-0tm | 77,328 | 88,091 |
| d3-2m-5tm | 83,346 | 88,091 |
| d3-2m-10tm | 79,933 | 87,552 |
| d4-1m-0tm | 40,232 | 30,031 |
| d4-1m-5tm | 40,015 | 30,923 |
| d4-1m-10tm | 38,629 | 29,645 |
| d4-2m-0tm | 80,033 | 61,114 |
| d4-2m-5tm | 79,171 | 59,444 |
| d4-2m-10tm | 79,176 | 56,829 |
| d5-1m-0tm | 27,762 | 41,343 |
| d5-1m-5tm | 28,008 | 39,724 |
| d5-1m-10tm | 28,450 | 40,817 |
| d5-2m-0tm | 55,577 | 78,267 |
| d5-2m-5tm | 56,200 | 79,048 |
| d5-2m-10tm | 56,919 | 78,627 |
| d6-1m-0tm | 81,859 | 88,883 |
| d6-1m-5tm | 85,763 | 90,434 |
| d6-1m-10tm | 84,672 | 88,942 |
| d6-2m-0tm | 165,596 | 174,809 |
| d6-2m-5tm | 165,038 | 174,189 |
| d6-2m-10tm | 167,282 | 169,654 |
| d7-1m-0tm | 49,282 | 53,321 |
| d7-1m-5tm | 49,605 | 51,972 |
| d7-1m-10tm | 49,769 | 52,262 |
| d7-2m-0tm | 100,544 | 99,193 |
| d7-2m-5tm | 100,498 | 102,340 |
| d7-2m-10tm | 100,502 | 100,974 |
| Total | 2,674,910 | 2,743,855 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Alshammari, T.; Alshammari, N.; Sedky, M.; Howard, C. SIMADL: Simulated Activities of Daily Living Dataset. Data 2018, 3, 11. https://doi.org/10.3390/data3020011