3.1. Overview of the Proposed Framework

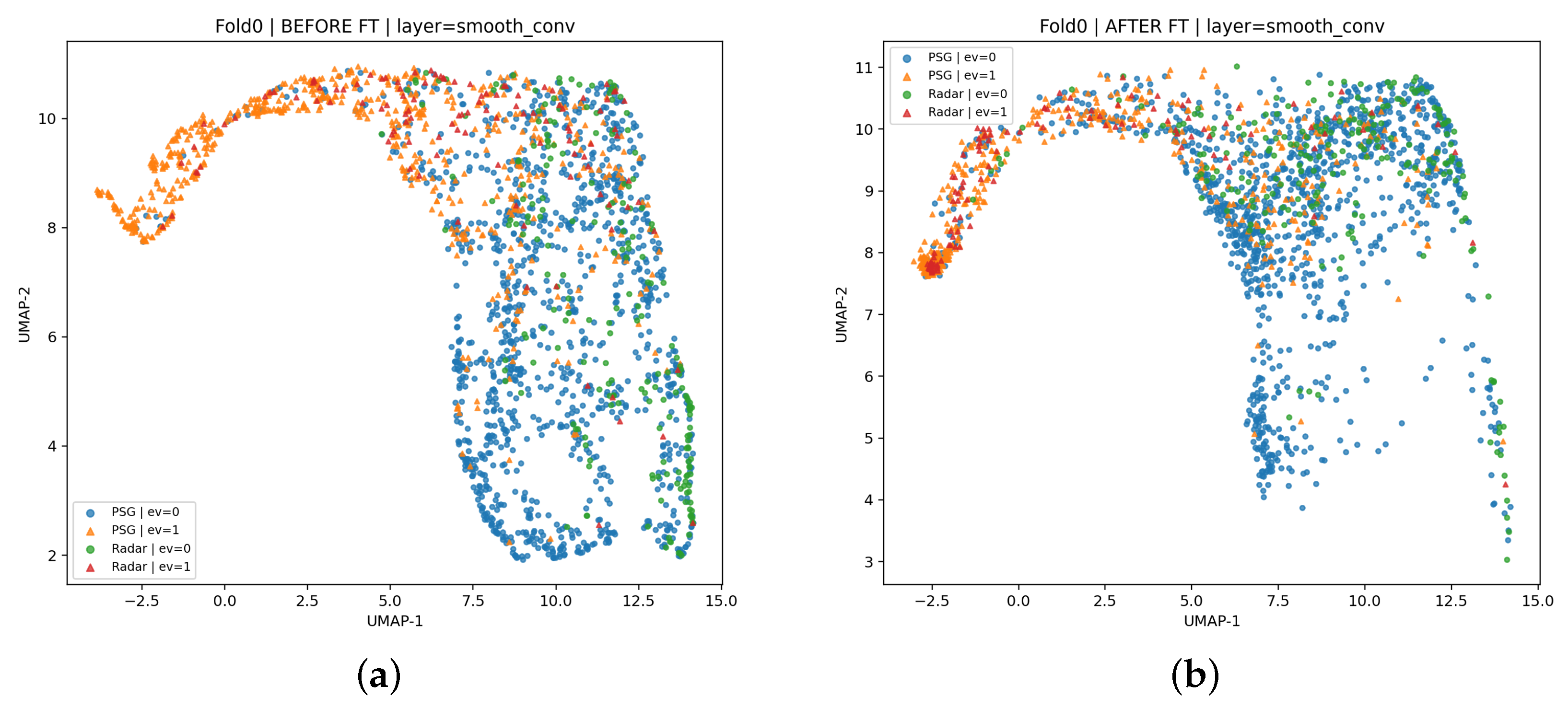

To enable accurate and scalable sleep apnea–hypopnea detection in home-like settings, we propose a two-stage cross-modality learning framework that transfers event-related knowledge from clinically established PSG respiratory signals to non-contact radar-derived respiration measurements. The pipeline is designed to address two practical constraints: PSG provides high-quality, clinically annotated events but is unsuitable for widespread longitudinal deployment, whereas radar supports unobtrusive monitoring but typically suffers from limited labeled data and substantial domain shift. An overview of the proposed two-stage cross-modality learning pipeline is illustrated in Figure 1. Accordingly, we leveraged large-scale PSG supervision for representation learning and then adapted the model to clinically synchronized radar data via fine-tuning. Specifically, the framework consists of the following:

1. PSG pre-training (source domain). We trained a sequence-to-sequence segmentation model on a large PSG cohort curated from the HSP, selecting 1526 overnight recordings with reliable respiratory annotations. The model mapped PSG respiratory effort signals (e.g., thoracic and abdominal channels) to dense per-sample probabilities of apnea/hypopnea-related events, learning general event morphology, temporal context, and intra-event dynamics expected to transfer across modalities.

2. Radar fine-tuning (target domain). We initialized the radar model with the PSG-pretrained weights and fine-tuned it on 35 overnight recordings collected at Beijing Tiantan Hospital with synchronized PSG-based annotations. Radar inputs were respiration motion waveforms extracted from mmWave measurements; fine-tuning adapted the representation to radar-specific variability while preserving event-relevant features learned from PSG.

Across both stages, the model output per-sample event probabilities rather than epoch-level labels, enabling event-centric inference and clinically meaningful endpoints. At test time, probabilities were thresholded and temporally post-processed (e.g., merging fragmented detections and filtering short segments) to produce event-level predictions. We report (i) event-level precision, recall, and F1-score to assess detection and localization accuracy and (ii) recording-level AHI estimation and severity classification to quantify screening utility. The subsequent Method Section details PSG curation/preprocessing and windowing, as well as radar waveform extraction, label alignment, and dataset splitting, mirroring the proposed two-stage pipeline.
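The test-time post-processing described above (thresholding, merging fragmented detections, and filtering short segments) can be illustrated with a minimal sketch. The function name `probs_to_events` and the default values for the threshold, merge gap, and minimum duration are assumptions chosen for illustration; the text does not state the values actually used.

```python
import numpy as np

def probs_to_events(probs, fs=10.0, threshold=0.5, max_gap_s=3.0, min_dur_s=10.0):
    """Convert per-sample probabilities into (start_s, end_s) event intervals.

    All default parameter values are illustrative assumptions.
    """
    mask = np.asarray(probs) >= threshold
    # Pad with False so that every run of True has a rising and a falling edge.
    padded = np.concatenate(([False], mask, [False]))
    edges = np.flatnonzero(padded[1:] != padded[:-1])
    # Even entries are run starts, odd entries are (exclusive) run ends, in samples.
    segments = [(int(s), int(e)) for s, e in zip(edges[0::2], edges[1::2])]

    # Merge detections separated by a gap of at most max_gap_s.
    merged = []
    for start, end in segments:
        if merged and (start - merged[-1][1]) <= max_gap_s * fs:
            merged[-1] = (merged[-1][0], end)
        else:
            merged.append((start, end))

    # Discard segments shorter than min_dur_s and convert samples to seconds.
    return [(s / fs, e / fs) for s, e in merged if (e - s) >= min_dur_s * fs]
```

The returned intervals can then be matched against annotated events for event-level scoring or counted per hour of sleep for AHI estimation.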

3.2. PSG Data Preparation and Feature Extraction

PSG data were obtained from the HSP v2.0 hosted on the Brain Data Science Platform (BDSP). The HSP dataset contains large-scale, clinically acquired PSG studies (26,200 PSG studies from 19,492 patients) and provides standardized signal recordings and clinical annotations following AASM conventions. In HSP v2.0, PSG recordings include thoracic and abdominal respiratory effort channels, and signals are provided at (or resampled to) 200 Hz to enable synchronized multi-channel analyses [13].

Given the objective of learning apnea/hypopnea morphology for subsequent cross-modality transfer to radar, we curated an apnea/hypopnea-enriched subset from the full HSP repository. Specifically, we first selected nights with StudyType = “PSG Diagnostic” (diagnostic studies rather than titration or follow-up sessions) and then retained those with pre-sleep questionnaire information indicating evalForSleepApnea = 1, consistent with suspected SAHS evaluation. The HSP metadata provides these fields, enabling scalable cohort filtering.
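The two-step metadata filter can be sketched as follows. The field names `StudyType` and `evalForSleepApnea` and the value "PSG Diagnostic" come from the text; the record layout (one dictionary per night) and the function name `select_cohort` are assumptions for illustration.

```python
def _is_one(value):
    """Return True if a metadata value encodes 1 (handles 1, 1.0, "1")."""
    try:
        return float(value) == 1.0
    except (TypeError, ValueError):
        return False

def select_cohort(rows):
    """Keep diagnostic PSG nights flagged as evaluated for sleep apnea.

    rows: iterable of dict-like metadata records (hypothetical layout).
    """
    return [
        r for r in rows
        if r.get("StudyType") == "PSG Diagnostic" and _is_one(r.get("evalForSleepApnea"))
    ]
```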

Next, to ensure that the AHI calculation corresponded to a physiologically meaningful sleep interval (and to reduce long wake segments that dilute event prevalence), we extracted the sleep segment from the first sleep onset to the final awakening. We then computed the AHI within the extracted interval and retained nights whose AHI met the inclusion threshold, which yielded 1526 nights for PSG pre-training [5]. The resulting AHI-based severity distribution of the curated HSP cohort (together with the target radar cohort) is summarized in Table 1, highlighting the underlying class composition and potential source–target imbalance.
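As a minimal sketch of the interval-restricted AHI computation described above, the index can be taken as the number of apnea/hypopnea events per hour between first sleep onset and final awakening. Counting an event by its start time and the function name `compute_ahi` are assumptions; the text does not specify how boundary events were handled.

```python
def compute_ahi(events, sleep_onset_s, final_wake_s):
    """Events per hour within [sleep_onset_s, final_wake_s).

    events: iterable of (start_s, duration_s) for apnea/hypopnea events.
    An event is counted if it starts inside the interval (assumption).
    """
    hours = (final_wake_s - sleep_onset_s) / 3600.0
    if hours <= 0:
        raise ValueError("sleep interval must have positive length")
    n_events = sum(1 for start, _ in events if sleep_onset_s <= start < final_wake_s)
    return n_events / hours
```

For example, three events starting within a 2 h interval give an AHI of 1.5 events/h.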

These 1526 nights corresponded to 1252 unique subjects, with only a small fraction of subjects contributing multiple nights (typically 2–3 nights). This targeted curation improved the positive-sample density for supervised segmentation, thereby increasing the probability that each training window contained informative event morphology. In practice, this helped the model learn apnea/hypopnea-related temporal patterns more efficiently than training on a heavily imbalanced random sample of the full cohort.

We used the thoracic (chest) and abdominal respiratory effort belt channels as two-channel 1D inputs. HSP provides synchronized PSG signals with respiratory effort channels resampled to 200 Hz; in our pipeline, the channels were read from EDF and treated as time-aligned sequences (shared recording clock), allowing consistent label mapping to both inputs.

HSP annotations are provided in tabular form with entries defined by (epoch, time, duration, event), where time and duration are in seconds. We loaded the CSV, coerced numeric fields, removed missing/invalid rows (e.g., undefined time or non-positive duration), and retained respiratory-event descriptors. Because respiratory events may appear with two related prefixes (e.g., “Respiratory Event” and “RespEvent”) and include multiple subtypes, we implemented a robust parser that mapped event strings to integer codes via an explicit dictionary, as summarized in Table 2.

In this study, we formulated a binary segmentation task in which codes {1, 2, 3, 4} (obstructive apnea, central apnea, mixed apnea, and hypopnea) were treated as the positive class, whereas Normal (0) and OtherRespEvent (5) were treated as negative. OtherRespEvent captured respiratory-related annotations that were not scored as apnea/hypopnea under AASM rules and terminology [5], including Respiratory Effort-Related Arousal (RERA) and Partial Obstructive events, and was retained only for bookkeeping and optional secondary analyses.
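A dictionary-based parser of the kind described above might look like the sketch below. The integer codes follow the text; the exact annotation strings, the separator characters after the prefix, and the function name `parse_event` are hypothetical, since Table 2 defines the real mapping.

```python
import re

# Codes follow the text; the subtype strings used as keys are assumptions.
EVENT_CODES = {
    "obstructive apnea": 1,
    "central apnea": 2,
    "mixed apnea": 3,
    "hypopnea": 4,
}
OTHER_RESP_EVENT = 5

# Accept both prefixes mentioned in the text, followed by an optional separator.
_PREFIX = re.compile(r"^\s*(respiratory event|respevent)\s*[:\-]?\s*", re.IGNORECASE)

def parse_event(label):
    """Map an annotation string to an integer code, or None if non-respiratory."""
    if not isinstance(label, str):
        return None
    match = _PREFIX.match(label)
    if match is None:
        return None
    subtype = label[match.end():].strip().lower()
    for key, code in EVENT_CODES.items():
        if key in subtype:
            return code
    # Respiratory annotations that are not apnea/hypopnea (e.g., RERA).
    return OTHER_RESP_EVENT

def is_positive(code):
    """Binary target: apnea/hypopnea codes are positive, everything else negative."""
    return code in (1, 2, 3, 4)
```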

To obtain per-sample supervision, each annotated interval was rasterized into a label sequence aligned to the resampled respiratory signals. Several edge cases were handled to reduce label noise: (i) events that wrapped across midnight (end time < start time) were corrected by adding 24 h to the end time; (ii) overlapping events were resolved by a priority rule (apnea subtypes dominated hypopnea; otherwise, the longer event was retained); and (iii) adjacent events separated by ≤3 s were merged to avoid artificial fragmentation. These steps improved temporal continuity for sequence segmentation.
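The rasterization and its edge-case handling can be sketched as follows, assuming event times are given in seconds relative to the start of the label sequence. The painting order used to realize the priority rule, the choice to fill a short gap with the preceding event's code, and the function names are assumptions; the text describes the rules but not their implementation.

```python
import numpy as np

DAY_S = 24 * 3600
POSITIVE = (1, 2, 3, 4)

def fix_midnight_wrap(start_s, end_s):
    """Add 24 h to the end time when an event wraps across midnight."""
    if end_s < start_s:
        end_s += DAY_S
    return start_s, end_s

def rasterize_events(events, n_samples, fs=10.0, merge_gap_s=3.0):
    """Paint (start_s, end_s, code) events into a per-sample integer label array."""
    def priority(ev):
        start, end, code = ev
        # Apnea subtypes outrank hypopnea; other codes rank lowest.
        rank = 2 if code in (1, 2, 3) else (1 if code == 4 else 0)
        return (rank, end - start)

    labels = np.zeros(n_samples, dtype=np.int64)
    # Low-priority and shorter events are painted first, so later ones overwrite them.
    for start, end, code in sorted(events, key=priority):
        i0 = max(0, int(round(start * fs)))
        i1 = min(n_samples, int(round(end * fs)))
        labels[i0:i1] = code

    # Fill gaps of at most merge_gap_s between consecutive positive samples.
    pos = np.flatnonzero(np.isin(labels, POSITIVE))
    max_gap = int(round(merge_gap_s * fs))
    for a, b in zip(pos[:-1], pos[1:]):
        if 1 < b - a <= max_gap + 1:
            labels[a + 1:b] = labels[a]
    return labels
```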

Although HSP respiratory effort belts are available at 200 Hz, apnea/hypopnea morphology is dominated by low-frequency respiration dynamics. We therefore downsampled both thoracic and abdominal belts to 10 Hz via integer-factor decimation (factor 20, 200 Hz→10 Hz), which also provided anti-aliasing filtering and reduced computational cost, while matching the radar respiration sampling rate used in the target domain. To mitigate inter-night variability from sensor placement and baseline drift, we applied night-wise robust normalization independently per channel: NaN/Inf values were replaced with zeros, and signals were centered by the median, clipped to a fixed range, and scaled by the IQR (with a standard deviation fallback when the IQR was too small). The resulting integer label sequence was then binarized by marking codes in {1, 2, 3, 4} as positive and all others as negative.
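A minimal sketch of the night-wise robust normalization for one channel is given below. The clipping bound `clip`, the tolerance `eps`, and applying the clip after scaling are assumptions made for illustration, since the text does not give the bound or its units.

```python
import numpy as np

def robust_normalize(x, clip=10.0, eps=1e-6):
    """Median/IQR normalization of one channel of one night.

    clip and eps are illustrative values; clipping is applied after scaling here.
    """
    # Replace NaN and +/-Inf with zeros.
    x = np.nan_to_num(np.asarray(x, dtype=np.float64), nan=0.0, posinf=0.0, neginf=0.0)
    x = x - np.median(x)
    q25, q75 = np.percentile(x, [25, 75])
    scale = q75 - q25
    if scale < eps:
        scale = x.std()   # standard deviation fallback when the IQR is too small
    if scale < eps:
        scale = 1.0       # constant signal: leave it at zero
    return np.clip(x / scale, -clip, clip)
```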

Finally, each night was segmented into overlapping windows of 2048 samples with a stride of 300 samples (204.8 s windows with 30 s steps at 10 Hz), yielding two-channel input windows and the corresponding per-sample binary label windows. This windowing provided a long temporal context for pre-event baseline and post-event recovery while maintaining a practical stride consistent with conventional PSG epoching. The radar dataset and its preprocessing, including waveform extraction and PSG synchronization, are described next.
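The sliding-window protocol for the 10 Hz sequences can be sketched as follows. The window length and stride are those stated in the text (2048/10 = 204.8 s and 300/10 = 30 s); the (windows, channels, samples) tensor layout and the function name `sliding_windows` are assumptions.

```python
import numpy as np

def sliding_windows(x, y, win=2048, stride=300):
    """Cut a night into overlapping windows.

    x: (channels, T) signals, y: (T,) per-sample labels.
    Returns X with shape (N, channels, win) and Y with shape (N, win).
    """
    x = np.asarray(x)
    y = np.asarray(y)
    n = x.shape[-1]
    # Window start indices; empty when the night is shorter than one window.
    starts = np.arange(0, max(n - win, -1) + 1, stride)
    idx = starts[:, None] + np.arange(win)[None, :]   # (N, win) sample indices
    X = np.transpose(x[:, idx], (1, 0, 2))            # (C, N, win) -> (N, C, win)
    Y = y[idx]
    return X, Y
```

Any trailing samples that do not fill a complete window are dropped in this sketch.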

3.3. Radar Data Preparation and Feature Extraction

Radar respiration data were collected at Beijing Tiantan Hospital using a 60 GHz FMCW radar (Texas Instruments, Dallas, TX, USA), yielding 35 overnight recordings acquired from 35 independent subjects (one night per subject). Synchronized PSG was acquired in parallel for each subject-night. Specifically, the radar front-end was implemented with the Texas Instruments IWR6843ISK evaluation board together with a DCA1000 EVM data acquisition card; raw data were streamed in real time to a bedside computer and synchronized with PSG via timestamps. PSG technicians/clinicians provided time-aligned respiratory event annotations, which were then used as the ground truth for radar learning. This synchronized acquisition enabled training and evaluation under clinically consistent labeling, reducing ambiguities that often arise in radar-only studies. Key radar acquisition parameters are summarized in Table 3.

The real-world ward environment at Beijing Tiantan Hospital and the radar installation position during data acquisition are shown in Figure 2.

In the following, we describe the radar signal processing pipeline that transformed raw radar returns into two respiration-related waveforms (radar-chest and radar-abd) and then produced temporally aligned binary event labels for model fine-tuning. The overall pipeline is consistent with common FMCW-based respiration extraction practice and follows the processing logic implemented in our scripts.

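For an FMCW radar transmitting a linear chirp, a standard complex-baseband formulation can be written as:

$$s_{\mathrm{tx}}(t) = \exp\!\left(j 2\pi \left(f_c t + \tfrac{1}{2} S t^2\right)\right), \qquad 0 \le t \le T_c,$$

where $f_c$ is the carrier frequency and $S = B/T_c$ denotes the chirp slope (bandwidth $B$, chirp duration $T_c$). The received signal from a dominant range bin experiences a round-trip delay $\tau = 2R/c$, where $R$ is the target range and $c$ is the speed of light, and, after mixing/dechirping with the transmitted signal, the intermediate-frequency (IF) signal can be expressed (up to constants) as:

$$s_{\mathrm{IF}}(t) \approx \exp\!\left(j \left(2\pi S \tau t + \phi\right)\right),$$

where the residual phase term $\phi \approx 2\pi f_c \tau = 4\pi R / \lambda$ contains fine motion information. When chest wall motion induces a small displacement $\Delta d(t)$ around a nominal range $R_0$, the phase modulation approximately satisfies:

$$\Delta\phi(t) \approx \frac{4\pi}{\lambda}\, \Delta d(t), \qquad \phi(t) = \operatorname{unwrap}\big(\angle x(t)\big),$$

with wavelength $\lambda = c / f_c$ and $x(t)$, the complex signal of the selected range bin (or a linear combination of bins). This phase-to-displacement conversion and phase unwrapping operation is explicitly used in our implementation.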

After standard radar front-end processing (e.g., range FFT on I/Q samples), we obtained a range–time representation (or an equivalent “FFT cube”) in which each range bin provided a complex-valued slow-time sequence. Because respiration-induced micro-motion can be distributed across neighboring bins (due to multipath, posture changes, and torso extent), using multiple bins can improve robustness; prior FMCW apnea studies similarly emphasize that different range bins may contain complementary respiration information.

In our dataset generation, we retained 40 torso-related range bins for subsequent motion extraction. Concretely, each recording stores an array fft-cube whose second dimension equals 40 (bins), serving as the multi-bin input for thoracoabdominal waveform reconstruction. Given the complex slow-time signal for each bin, we first removed per-bin DC components and then computed the unwrapped phase to recover continuous displacement trajectories. In code, this corresponded to: (i) subtracting the mean complex value per bin, (ii) computing the phase angle of each complex sample, (iii) applying np.unwrap along time, and (iv) converting phase to displacement by scaling with $\lambda/(4\pi)$, where $\lambda$ is the radar wavelength.
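The four steps above can be sketched in a few lines of NumPy. The array layout (time along the first axis, 40 bins along the second) follows the text; the function name `phase_to_displacement`, the use of `np.angle` for step (ii), and the nominal 60 GHz carrier used to derive the wavelength are assumptions.

```python
import numpy as np

C_LIGHT = 299_792_458.0  # speed of light in m/s

def phase_to_displacement(fft_cube, fc_hz=60e9):
    """Recover per-bin displacement (metres) from complex slow-time signals.

    fft_cube: complex array of shape (T, bins), e.g. (T, 40).
    fc_hz: carrier frequency; 60 GHz is a nominal value (assumption).
    """
    # (i) Remove the per-bin DC (static clutter) component.
    z = fft_cube - fft_cube.mean(axis=0, keepdims=True)
    # (ii) Phase angle, (iii) unwrapped along the time axis.
    phase = np.unwrap(np.angle(z), axis=0)
    # (iv) Phase to displacement: d = lambda * phi / (4 * pi).
    wavelength = C_LIGHT / fc_hz
    return wavelength * phase / (4.0 * np.pi)
```

At 60 GHz the wavelength is about 5 mm, so one radian of phase corresponds to roughly 0.4 mm of displacement.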

To isolate respiration dynamics, we applied a band-pass Butterworth filter targeting typical breathing frequencies. The implementation used a 4th-order Butterworth design and filtfilt to avoid phase distortion.
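A zero-phase respiration band-pass filter can be sketched with SciPy as below. The 0.1–0.5 Hz pass band is an assumed value, since the text does not state the cutoffs. This sketch also swaps in second-order sections with `sosfiltfilt`, the SOS counterpart of `filtfilt`, because transfer-function coefficients can be numerically fragile for low cutoffs relative to the sampling rate; it is not claimed to match the original script.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def respiration_bandpass(x, fs=50.0, low_hz=0.1, high_hz=0.5, order=4):
    """Zero-phase Butterworth band-pass along the time axis (axis 0).

    low_hz and high_hz are illustrative cutoffs, not values from the text.
    """
    sos = butter(order, [low_hz, high_hz], btype="bandpass", fs=fs, output="sos")
    # Forward-backward filtering cancels the phase delay of the filter.
    return sosfiltfilt(sos, x, axis=0)
```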

A key step was transforming the 40-bin displacement matrix into two respiration-related signals intended to approximate thoracic and abdominal components. Let $D \in \mathbb{R}^{N \times K}$ be the matrix of filtered displacement signals, with $N$ time samples and $K$ range bins (here, $K = 40$).

We estimated two weight vectors $w_c$ and $w_a$, such that:

$$\hat{y}_c = D w_c, \qquad \hat{y}_a = D w_a,$$

where $\hat{y}_c$ and $\hat{y}_a$ correspond to radar-derived chest and abdomen motion signals.

We adopted a ridge regression solution with an additional orthogonality-promoting constraint between the two projections to encourage disentanglement of chest/abd motion contributions. Specifically, ridge regression provides a stable estimator under multicollinearity:

$$w = \left(D^{\top} D + \alpha I\right)^{-1} D^{\top} r,$$

where $r$ denotes the regression target and $\alpha > 0$ the regularization strength, and we further refined $w_c$ and $w_a$ to reduce correlation (approximate orthogonality) between the two weight vectors. This “orthogonal ridge” procedure was implemented in our script by iteratively solving ridge regression and projecting one weight vector onto the orthogonal complement of the other.
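A simplified version of this procedure is sketched below: one closed-form ridge solve per channel, followed by a single projection of the abdominal weights onto the orthogonal complement of the chest weights. The regression targets `r_chest` and `r_abd`, the regularization strength `alpha`, and the function names are hypothetical, because the text does not specify them, and the iterative refinement is not reproduced.

```python
import numpy as np

def ridge(D, r, alpha):
    """Closed-form ridge solution (D^T D + alpha I)^{-1} D^T r."""
    k = D.shape[1]
    return np.linalg.solve(D.T @ D + alpha * np.eye(k), D.T @ r)

def orthogonal_ridge(D, r_chest, r_abd, alpha=1.0):
    """Estimate chest/abdomen weights with mutually orthogonal weight vectors.

    D: (N, K) filtered displacement matrix; r_chest, r_abd: (N,) reference
    targets (hypothetical inputs, not named in the text).
    """
    w_c = ridge(D, r_chest, alpha)
    w_a = ridge(D, r_abd, alpha)
    # Remove from w_a its component along w_c (single projection step).
    w_a = w_a - (w_a @ w_c) / (w_c @ w_c + 1e-12) * w_c
    return w_c, w_a
```

The two waveforms are then obtained as `D @ w_c` and `D @ w_a`.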

To improve temporal stability, weights were estimated within overlapping windows and then merged by overlap-add averaging (rather than fitting a single global mapping). The script used 120 s windows with a 30 s stride during waveform construction and aggregated overlapping predictions to form full-length radar-chest and radar-abd sequences.
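The overlap-add averaging step can be sketched as follows: each window contributes its local prediction, and every sample is divided by the number of windows covering it. The function name `overlap_add_average` and the zero fill for samples not covered by any window are assumptions.

```python
import numpy as np

def overlap_add_average(segments, starts, total_len):
    """Merge overlapping window outputs into one full-length sequence.

    segments: list of 1D arrays (per-window predictions).
    starts: start index of each window in samples.
    """
    acc = np.zeros(total_len)
    cnt = np.zeros(total_len)
    for seg, s in zip(segments, starts):
        e = min(s + len(seg), total_len)
        acc[s:e] += seg[:e - s]
        cnt[s:e] += 1
    out = np.zeros(total_len)
    # Average where at least one window contributed; leave the rest at zero.
    np.divide(acc, cnt, out=out, where=cnt > 0)
    return out
```

At the 50 Hz slow-time rate stated in the text, 120 s windows with a 30 s stride correspond to 6000-sample windows starting every 1500 samples, so interior samples are averaged over four windows.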

After reconstruction, we applied an additional band-pass filter to suppress residual drift and high-frequency noise. The radar slow-time sampling rate in preprocessing was 50 Hz, and the respiration waveforms were downsampled to 10 Hz to match the PSG training interface and reduce computation; the 50 Hz→10 Hz conversion was implemented by decimation. To handle minor length mismatches across channels and labels (e.g., trimming or missing frames), we enforced per-night alignment by trimming radar-chest, radar-abd, and label sequences to the same minimum length.

We then applied the same robust per-night normalization used for PSG to radar-chest and radar-abd, including NaN/Inf handling, median–IQR scaling, and outlier clipping, which reduced inter-night amplitude variability and improved fine-tuning stability. Radar annotations followed the same categorical definition and codebook as PSG (Table 2); for the binary task, labels were binarized with {1, 2, 3, 4} as positive (apnea/hypopnea) and {0, 5} as negative.

Finally, radar sequences were segmented using the same sliding-window protocol as PSG—overlapping windows of 2048 samples with a stride of 300 samples (204.8 s windows with 30 s steps at 10 Hz)—yielding input tensors corresponding to the radar-chest and radar-abd channels and label tensors derived from synchronized PSG annotations. Overall, the preprocessing pipeline converted multi-bin FMCW slow-time complex signals into two normalized respiration-motion waveforms via phase-based displacement recovery, respiration-band filtering, and orthogonal ridge projection with overlap–add fusion, while standardizing sampling rate, channel count, labeling, and windowing to enable principled PSG-to-radar transfer learning.