Privacy-Aware Continual Self-Supervised Learning on Multi-Window Chest Computed Tomography for Domain-Shift Robustness

Abstract

1. Introduction

- We propose a novel latent replay (LR)-based continual self-supervised learning (CSSL) framework that preserves data privacy and mitigates catastrophic forgetting during pretraining on chest CT images from two domains.

- We introduce a novel feature distillation method that integrates Wasserstein distance-based knowledge distillation (WKD) and batch-knowledge ensemble (BKE), enabling robust feature-representation learning while mitigating data interference.

- Extensive experiments on two public chest CT image datasets show that our method outperforms state-of-the-art approaches.

2. Related Studies

2.1. Self-Supervised Learning for Addressing Domain Shifts

2.2. Continual Self-Supervised Learning for Addressing Domain Shifts

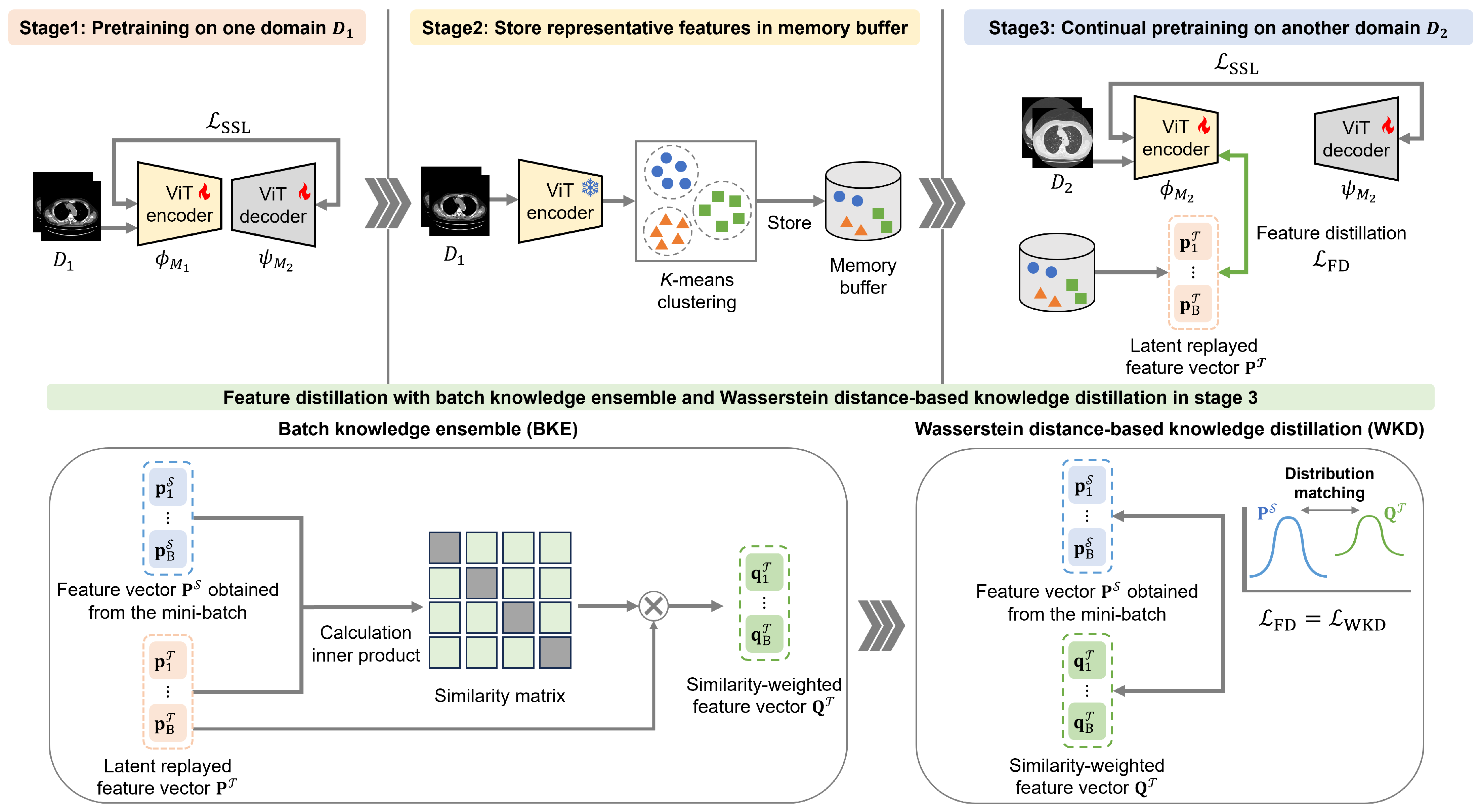

3. Privacy-Aware Continual Self-Supervised Learning Integrating Latent Replay and Feature Distillation

3.1. Stage 1: Self-Supervised Learning on the First-Domain Dataset

3.2. Stage 2: Sampling Features in the Memory Buffer

3.3. Stage 3: Continual Self-Supervised Learning with Feature Distillation Using the Second-Domain Dataset

3.3.1. Wasserstein Distance-Based Knowledge Distillation
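
For orientation, the distance that WKD builds on can be illustrated with a small, self-contained example. This is a minimal sketch and not the paper's Equation (4), which is not reproduced here. It assumes (as a simplification chosen for illustration) that each set of feature vectors is summarized by a Gaussian with diagonal covariance, in which case the squared 2-Wasserstein distance has the closed form $\lVert \mu_a - \mu_b \rVert^2 + \lVert \sigma_a - \sigma_b \rVert^2$. The function name `gaussian_w2_squared_diag` and the `eps` parameter are hypothetical.

```python
import numpy as np


def gaussian_w2_squared_diag(feats_a, feats_b, eps=1e-8):
    """Squared 2-Wasserstein distance between two diagonal Gaussians.

    Each input is an (n_samples, dim) array of feature vectors. A Gaussian
    with diagonal covariance is fitted to each set (an assumption made for
    this sketch), and the closed-form distance between the two is returned.
    """
    feats_a = np.asarray(feats_a, dtype=float)
    feats_b = np.asarray(feats_b, dtype=float)
    mu_a, mu_b = feats_a.mean(axis=0), feats_b.mean(axis=0)
    # Per-dimension standard deviations; eps keeps the square root stable.
    std_a = np.sqrt(feats_a.var(axis=0) + eps)
    std_b = np.sqrt(feats_b.var(axis=0) + eps)
    mean_term = ((mu_a - mu_b) ** 2).sum()
    std_term = ((std_a - std_b) ** 2).sum()
    return float(mean_term + std_term)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    old_feats = rng.normal(size=(64, 8))   # stand-in for old-model features
    new_feats = old_feats + 0.5            # stand-in for drifted features
    print(gaussian_w2_squared_diag(old_feats, new_feats))
```

Unlike the Kullback-Leibler divergence, this quantity stays finite and informative when the two distributions barely overlap, which is one reason a Wasserstein-type objective can be attractive for distillation between features of different domains.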

3.3.2. Batch Knowledge Ensemble

Algorithm 1. Algorithm of the proposed CSSL framework.

Input: two subsets from different domains; B: memory buffer; tokenizers; encoders; model-specific decoders; k-means clustering operation; operation for sampling cluster centers; LR operation

Output: ,

Stage 1: SSL on the first-domain dataset

1: Set the training dataset:

2: Update , , and by minimizing , following Equation (1)

Stage 2: Sampling Features into the Memory Buffer

3: Obtain clusters:

4: Populate the memory buffer:

Stage 3: CSSL with Feature Distillation on the second-domain dataset

5: Set the training dataset:

6: Extract the mini-batch feature representations:

7: Retrieve replayed feature representations from B:

8: Obtain by calculating the similarity between and , following Equations (5)–(9)

9: Update , , and by minimizing and with and , following Equations (1) and (4), respectively.
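
Stage 2 of Algorithm 1 (clustering first-domain features and populating the memory buffer) and the retrieval in step 7 can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the helper names (`kmeans`, `populate_buffer`, `replay_batch`), the choice to keep the features nearest to each cluster center, and the `per_cluster` parameter are all hypothetical. The point it shows is that the buffer holds feature vectors only, so no first-domain image needs to be retained for Stage 3.

```python
import numpy as np


def _assign(features, centers):
    """Return the index of the nearest center for every feature vector."""
    d2 = ((features[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
    return d2.argmin(axis=1)


def kmeans(features, k, n_iter=50, seed=0):
    """Plain Lloyd's k-means on an (n, dim) array; returns (centers, labels)."""
    features = np.asarray(features, dtype=float)
    rng = np.random.default_rng(seed)
    centers = features[rng.choice(len(features), size=k, replace=False)].copy()
    for _ in range(n_iter):
        labels = _assign(features, centers)
        for c in range(k):
            members = features[labels == c]
            if len(members) > 0:  # leave an empty cluster's center unchanged
                centers[c] = members.mean(axis=0)
    return centers, _assign(features, centers)


def populate_buffer(features, k, per_cluster=1, seed=0):
    """Keep the `per_cluster` features closest to each cluster center.

    Assumption for this sketch: representatives are chosen by distance to
    the center; the selection rule used in the paper may differ.
    """
    features = np.asarray(features, dtype=float)
    centers, labels = kmeans(features, k, seed=seed)
    kept = []
    for c in range(k):
        idx = np.flatnonzero(labels == c)
        if idx.size == 0:
            continue
        dist = np.linalg.norm(features[idx] - centers[c], axis=1)
        kept.extend(idx[np.argsort(dist)[:per_cluster]].tolist())
    return features[np.array(kept, dtype=int)]


def replay_batch(buffer, batch_size, rng):
    """Draw stored features to mix with a second-domain mini-batch."""
    replace = len(buffer) < batch_size  # sample with replacement if needed
    idx = rng.choice(len(buffer), size=batch_size, replace=replace)
    return buffer[idx]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    first_domain_feats = rng.normal(size=(200, 16))  # stand-in encoder outputs
    buffer = populate_buffer(first_domain_feats, k=10, per_cluster=2)
    print(buffer.shape, replay_batch(buffer, 8, rng).shape)
```

Selecting representatives per cluster, rather than sampling uniformly, is one way to keep a small buffer diverse; the buffer size is then bounded by `k * per_cluster`.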

4. Experiments

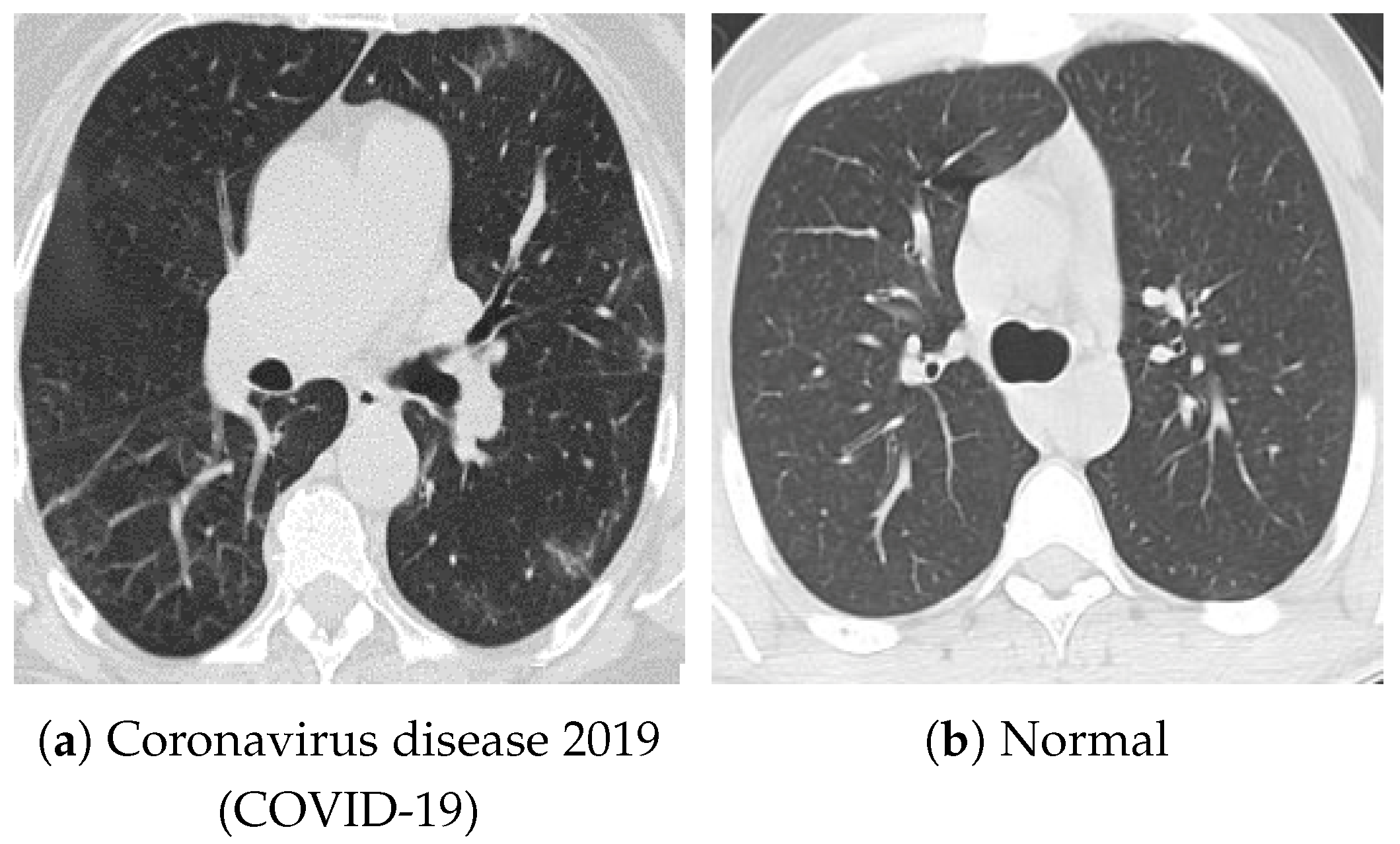

4.1. Datasets and Settings

4.2. Classification-Task Performance with Different Pretraining Datasets

4.3. Impact of Hyperparameters on the Experimental Results

4.4. Ablation Studies

4.5. Impact of Stage Extension on Continual Pretraining

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| SL | Supervised Learning |
| SSL | Self-Supervised Learning |
| CT | Computed Tomography |
| CSSL | Continual Self-Supervised Learning |
| SCL | Supervised Continual Learning |
| LR | Latent Replay |
| NNs | Neural Networks |
| WD | Wasserstein Distance |
| WKD | Wasserstein distance-based Knowledge Distillation |
| BKE | Batch-Knowledge Ensemble |
| MRI | Magnetic Resonance Imaging |
| ULM | Ultrasound Localization Microscopy |
| MAE | Masked AutoEncoder |
| COVID-19 | Coronavirus Disease 2019 |
| ACC | Accuracy |
| AUC | Area Under the receiver operating characteristic Curve |
| F1 | F1-score |
| FL | Federated Learning |

References

1. Pinto-Coelho, L. How artificial intelligence is shaping medical imaging technology: A survey of innovations and applications. Bioengineering 2023, 10, 1435.

2. Maleki Varnosfaderani, S.; Forouzanfar, M. The role of AI in hospitals and clinics: Transforming healthcare in the 21st century. Bioengineering 2024, 11, 337.

3. Zhao, Y.; Wang, X.; Che, T.; Bao, G.; Li, S. Multi-task deep learning for medical image computing and analysis: A review. Comput. Biol. Med. 2023, 153, 106496.

4. Rayed, M.E.; Islam, S.S.; Niha, S.I.; Jim, J.R.; Kabir, M.M.; Mridha, M. Deep learning for medical image segmentation: State-of-the-art advancements and challenges. Inform. Med. Unlocked 2024, 47, 101504.

5. Shobayo, O.; Saatchi, R. Developments in deep learning artificial neural network techniques for medical image analysis and interpretation. Diagnostics 2025, 15, 1072.

6. Garcea, F.; Serra, A.; Lamberti, F.; Morra, L. Data augmentation for medical imaging: A systematic literature review. Comput. Biol. Med. 2023, 152, 106391.

7. Li, Y.; Wynne, J.F.; Wu, Y.; Qiu, R.L.; Tian, S.; Wang, T.; Patel, P.R.; Yu, D.S.; Yang, X. Automatic medical imaging segmentation via self-supervising large-scale convolutional neural networks. Radiother. Oncol. 2025, 204, 110711.

8. Singh, P.; Chukkapalli, R.; Chaudhari, S.; Chen, L.; Chen, M.; Pan, J.; Smuda, C.; Cirrone, J. Shifting to machine supervision: Annotation-efficient semi and self-supervised learning for automatic medical image segmentation and classification. Sci. Rep. 2024, 14, 10820.

9. VanBerlo, B.; Hoey, J.; Wong, A. A survey of the impact of self-supervised pretraining for diagnostic tasks in medical X-ray, CT, MRI, and ultrasound. BMC Med. Imaging 2024, 24, 79.

10. Zeng, X.; Abdullah, N.; Sumari, P. Self-supervised learning framework application for medical image analysis: A review and summary. BioMedical Eng. OnLine 2024, 23, 107.

11. Li, G.; Togo, R.; Ogawa, T.; Haseyama, M. COVID-19 detection based on self-supervised transfer learning using chest X-ray images. Int. J. Comput. Assist. Radiol. Surg. 2022, 18, 715–722.

12. Li, G.; Togo, R.; Ogawa, T.; Haseyama, M. TriBYOL: Triplet BYOL for Self-supervised Representation Learning. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Virtual, 23–27 May 2022; pp. 3458–3462.

13. Zhao, Z.; Alzubaidi, L.; Zhang, J.; Duan, Y.; Naseem, U.; Gu, Y. Robust and explainable framework to address data scarcity in diagnostic imaging. Comput. Biol. Med. 2025, 197, 111052.

14. Li, G.; Togo, R.; Ogawa, T.; Haseyama, M. Self-supervised learning for gastritis detection with gastric X-ray images. Int. J. Comput. Assist. Radiol. Surg. 2023, 18, 1841–1848.

15. Li, G.; Togo, R.; Ogawa, T.; Haseyama, M. RGMIM: Region-Guided Masked Image Modeling for Learning Meaningful Representations from X-Ray Images. In Proceedings of the European Conference on Computer Vision (ECCV) Workshops, Milan, Italy, 29 September–4 October 2024; pp. 148–157.

16. Ceccon, M.; Pezze, D.D.; Fabris, A.; Susto, G.A. Multi-label continual learning for the medical domain: A novel benchmark. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Tucson, AZ, USA, 28 February–4 March 2025; pp. 7163–7172.

17. Li, W.; Zhang, Y.; Zhou, H.; Yang, W.; Xie, Z.; He, Y. CLMS: Bridging domain gaps in medical imaging segmentation with source-free continual learning for robust knowledge transfer and adaptation. Med. Image Anal. 2025, 100, 103404.

18. Liu, X.; Shih, H.A.; Xing, F.; Santarnecchi, E.; El Fakhri, G.; Woo, J. Incremental learning for heterogeneous structure segmentation in brain tumor MRI. In Proceedings of the International Conference on Medical Image Computing and Computer Assisted Intervention (MICCAI), Vancouver, BC, Canada, 8–12 October 2023; pp. 46–56.

19. Wang, Q.; Tan, X.; Ma, L.; Liu, C. Dual windows are significant: Learning from mediastinal window and focusing on lung window. In Proceedings of the CAAI International Conference on Artificial Intelligence, Beijing, China, 27–28 August 2022; pp. 191–203.

20. Lu, H.; Kim, J.; Qi, J.; Li, Q.; Liu, Y.; Schabath, M.B.; Ye, Z.; Gillies, R.J.; Balagurunathan, Y. Multi-window CT based radiological traits for improving early detection in lung cancer screening. Cancer Manag. Res. 2020, 12, 12225–12238.

21. Mushtaq, E.; Yaldiz, D.N.; Bakman, Y.F.; Ding, J.; Tao, C.; Dimitriadis, D.; Avestimehr, S. CroMo-Mixup: Augmenting Cross-Model Representations for Continual Self-Supervised Learning. In Proceedings of the European Conference on Computer Vision (ECCV), Milan, Italy, 29 September–4 October 2024; pp. 311–328.

22. Purushwalkam, S.; Morgado, P.; Gupta, A. The challenges of continuous self-supervised learning. In Proceedings of the European Conference on Computer Vision (ECCV), Tel Aviv, Israel, 23–27 October 2022; pp. 702–721.

23. Fini, E.; da Costa, V.G.T.; Alameda-Pineda, X.; Ricci, E.; Alahari, K.; Mairal, J. Self-supervised models are continual learners. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 9621–9630.

24. Cheng, H.; Wen, H.; Zhang, X.; Qiu, H.; Wang, L.; Li, H. Contrastive continuity on augmentation stability rehearsal for continual self-supervised learning. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2–6 October 2023; pp. 5684–5694.

25. Wu, X.; Xu, Z.; Tong, R.K.-Y. Continual learning in medical image analysis: A survey. Comput. Biol. Med. 2024, 182, 109206.

26. Ye, Y.; Xie, Y.; Zhang, J.; Chen, Z.; Wu, Q.; Xia, Y. Continual self-supervised Learning: Towards universal multi-modal medical data representation learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 17–21 June 2024; pp. 11114–11124.

27. Tasai, R.; Li, G.; Togo, R.; Tang, M.; Yoshimura, T.; Sugimori, H.; Hirata, K.; Ogawa, T.; Kudo, K.; Haseyama, M. Continual self-supervised learning considering medical domain knowledge in chest CT images. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Hyderabad, India, 6–11 April 2025; pp. 1–5.

28. Madaan, D.; Yoon, J.; Li, Y.; Liu, Y.; Hwang, S.J. Representational continuity for unsupervised continual learning. In Proceedings of the International Conference on Learning Representations (ICLR), Virtual, 25–29 April 2022; pp. 1–18.

29. Hu, D.; Yan, S.; Lu, Q.; Hong, L.; Hu, H.; Zhang, Y.; Li, Z.; Wang, X.; Feng, J. How well does self-supervised pre-training perform with streaming data? In Proceedings of the International Conference on Learning Representations (ICLR), Virtual, 25–29 April 2022; pp. 1–23.

30. French, R.M. Catastrophic interference in connectionist networks: Can it be predicted, can it be prevented? In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Denver, CO, USA, 29 November–2 December 1993; Volume 6, pp. 1176–1177.

31. Kirkpatrick, J.; Pascanu, R.; Rabinowitz, N.; Veness, J.; Desjardins, G.; Rusu, A.A.; Milan, K.; Quan, J.; Ramalho, T.; Grabska-Barwinska, A.; et al. Overcoming catastrophic forgetting in neural networks. Proc. Natl. Acad. Sci. USA 2017, 114, 3521–3526.

32. Rolnick, D.; Ahuja, A.; Schwarz, J.; Lillicrap, T.; Wayne, G. Experience replay for continual learning. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Vancouver, BC, USA, 8–14 December 2019; Volume 32, pp. 350–360.

33. Buzzega, P.; Boschini, M.; Porrello, A.; Calderara, S. Rethinking experience replay: A bag of tricks for continual learning. In Proceedings of the International Conference on Pattern Recognition (ICPR), Milan, Italy, 10–15 January 2021; pp. 2180–2187.

34. Ziller, A.; Usynin, D.; Braren, R.; Makowski, M.; Rueckert, D.; Kaissis, G. Medical imaging deep learning with differential privacy. Sci. Rep. 2021, 11, 13524.

35. Sahiner, B.; Chen, W.; Samala, R.K.; Petrick, N. Data drift in medical machine learning: Implications and potential remedies. Br. J. Radiol. 2023, 96, 20220878.

36. Hayes, T.L.; Kafle, K.; Shrestha, R.; Acharya, M.; Kanan, C. Remind your neural network to prevent catastrophic forgetting. In Proceedings of the European Conference on Computer Vision (ECCV), Glasgow, UK, 23–28 August 2020; pp. 466–483.

37. Srivastava, S.; Yaqub, M.; Nandakumar, K.; Ge, Z.; Mahapatra, D. Continual domain incremental learning for chest X-ray classification in low-resource clinical settings. In Proceedings of the International Conference on Medical Image Computing and Computer Assisted Intervention (MICCAI), Workshop, Virtual, 27 September–1 October 2021; pp. 226–238.

38. Wolf, D.; Payer, T.; Lisson, C.S.; Lisson, C.G.; Beer, M.; Götz, M.; Ropinski, T. Self-supervised pre-training with contrastive and masked autoencoder methods for dealing with small datasets in deep learning for medical imaging. Sci. Rep. 2023, 13, 20260.

39. Jiang, J.; Rangnekar, A.; Veeraraghavan, H. Self-supervised learning improves robustness of deep learning lung tumor segmentation models to CT imaging differences. Med. Phys. 2025, 52, 1573–1588.

40. Tasai, R.; Li, G.; Togo, R.; Tang, M.; Yoshimura, T.; Sugimori, H.; Hirata, K.; Ogawa, T.; Kudo, K.; Haseyama, M. Lung cancer classification using masked autoencoder pretrained on J-MID database. In Proceedings of the IEEE Global Conference on Consumer Electronics (GCCE), Kitakyushu, Japan, 29 October–1 November 2024; pp. 456–457.

41. Chang, X.; Cai, X.; Dan, Y.; Song, Y.; Lu, Q.; Yang, G.; Nie, S. Self-supervised learning for multi-center magnetic resonance imaging harmonization without traveling phantoms. Phys. Med. Biol. 2022, 67, 145004.

42. Fiorentino, M.C.; Villani, F.P.; Benito Herce, R.; González Ballester, M.A.; Mancini, A.; López-Linares Román, K. An intensity-based self-supervised domain adaptation method for intervertebral disc segmentation in magnetic resonance imaging. Int. J. Comput. Assist. Radiol. Surg. 2024, 19, 1753–1761.

43. Gu, R.; Wang, G.; Lu, J.; Zhang, J.; Lei, W.; Chen, Y.; Liao, W.; Zhang, S.; Li, K.; Metaxas, D.N.; et al. CDDSA: Contrastive domain disentanglement and style augmentation for generalizable medical image segmentation. Med. Image Anal. 2023, 89, 102904.

44. Mojab, N.; Noroozi, V.; Yi, D.; Nallabothula, M.P.; Aleem, A.; Yu, P.S.; Hallak, J.A. Real-world multi-domain data applications for generalizations to clinical settings. In Proceedings of the IEEE International Conference on Machine Learning and Applications (ICMLA), Virtual, 14–17 December 2020; pp. 677–684.

45. Yu, X.; Luan, S.; Lei, S.; Huang, J.; Liu, Z.; Xue, X.; Ma, T.; Ding, Y.; Zhu, B. Deep learning for fast denoising filtering in ultrasound localization microscopy. Phys. Med. Biol. 2023, 68, 205002.

46. Luan, S.; Yu, X.; Lei, S.; Ma, C.; Wang, X.; Xue, X.; Ding, Y.; Ma, T.; Zhu, B. Deep learning for fast super-resolution ultrasound microvessel imaging. Phys. Med. Biol. 2023, 68, 245023.

47. Peng, X.; Bai, Q.; Xia, X.; Huang, Z.; Saenko, K.; Wang, B. Moment matching for multi-source domain adaptation. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 1406–1415.

48. Aljundi, R.; Babiloni, F.; Elhoseiny, M.; Rohrbach, M.; Tuytelaars, T. Memory Aware Synapses: Learning What (not) to Forget. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 144–161.

49. Yao, Y.; Wu, R.; Zhou, Y.; Zhou, T. Continual Retinal Vision-Language Pre-training upon Incremental Imaging Modalities. In Proceedings of the International Conference on Medical Image Computing and Computer Assisted Intervention (MICCAI), Daejeon, Republic of Korea, 23–27 September 2025; pp. 111–121.

50. Mansourian, A.M.; Ahmadi, R.; Ghafouri, M.; Babaei, A.M.; Golezani, E.B.; Ghamchi, Z.Y.; Ramezanian, V.; Taherian, A.; Dinashi, K.; Miri, A.; et al. A Comprehensive Survey on Knowledge Distillation. arXiv 2025, arXiv:2503.12067.

51. Gou, J.; Yu, B.; Maybank, S.J.; Tao, D. Knowledge distillation: A survey. Int. J. Comput. Vis. 2021, 129, 1789–1819.

52. Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16x16 words: Transformers for image recognition at scale. In Proceedings of the International Conference on Learning Representations (ICLR), Virtual, 3–7 May 2021; pp. 1–21.

53. He, K.; Chen, X.; Xie, S.; Li, Y.; Dollár, P.; Girshick, R. Masked Autoencoders are scalable vision learners. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 16000–16009.

54. Raghu, M.; Unterthiner, T.; Kornblith, S.; Zhang, C.; Dosovitskiy, A. Do Vision Transformers See Like Convolutional Neural Networks? In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Online, 6–14 December 2021; pp. 12116–12128.

55. Lv, J.; Yang, H.; Li, P. Wasserstein distance rivals Kullback-Leibler divergence for knowledge distillation. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Vancouver, BC, Canada, 10–15 December 2024; Volume 37, pp. 65445–65475.

56. Peyré, G.; Cuturi, M. Computational optimal transport. Found. Trends Mach. Learn. 2019, 11, 355–607.

57. Yang, E.; Lozano, A.C.; Ravikumar, P. Elementary estimators for sparse covariance matrices and other structured moments. In Proceedings of the International Conference on Machine Learning (ICML), Beijing, China, 21–26 June 2014; pp. 397–405.

58. Tsai, E.B.; Simpson, S.; Lungren, M.P.; Hershman, M.; Roshkovan, L.; Colak, E.; Erickson, B.J.; Shih, G.; Stein, A.; Kalpathy-Cramer, J.; et al. The RSNA international COVID-19 open radiology database (RICORD). Radiology 2021, 299, E204–E213.

59. Soares, E.; Angelov, P.; Biaso, S.; Froes, M.H.; Abe, D.K. SARS-CoV-2 CT-scan dataset: A large dataset of real patients CT scans for SARS-CoV-2 identification. medRxiv 2020.

60. Ge, Y.; Choi, C.L.; Zhang, X.; Zhao, P.; Zhu, F.; Zhao, R.; Li, H. Self-distillation with batch knowledge ensembling improves ImageNet classification. arXiv 2021, arXiv:2104.13298.

61. Li, G.; Togo, R.; Ogawa, T.; Haseyama, M. Boosting automatic COVID-19 detection performance with self-supervised learning and batch knowledge ensembling. Comput. Biol. Med. 2023, 158, 106877.

62. Li, G.; Togo, R.; Ogawa, T.; Haseyama, M. Self-knowledge distillation based self-supervised learning for COVID-19 detection from chest X-ray images. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Virtual, 23–27 May 2022; pp. 1371–1375.

63. Loshchilov, I.; Hutter, F. Decoupled weight decay regularization. In Proceedings of the International Conference on Learning Representations (ICLR), New Orleans, LA, USA, 6–9 May 2019; pp. 1–18.

64. Caccia, L.; Aljundi, R.; Asadi, N.; Tuytelaars, T.; Pineau, J.; Belilovsky, E. New Insights on Reducing Abrupt Representation Change in Online Continual Learning. In Proceedings of the International Conference on Learning Representations (ICLR), Virtual, 25–29 April 2022; pp. 1–27.

65. Sarfraz, F.; Arani, E.; Zonooz, B. Error Sensitivity Modulation based Experience Replay: Mitigating Abrupt Representation Drift in Continual Learning. In Proceedings of the International Conference on Learning Representations (ICLR), Kigali, Rwanda, 1–5 May 2023; pp. 1–18.

66. Dosovitskiy, A.; Brox, T. Inverting visual representations with convolutional networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 4829–4837.

67. Kumari, P.; Reisenbüchler, D.; Luttner, L.; Schaadt, N.S.; Feuerhake, F.; Merhof, D. Continual domain incremental learning for privacy-aware digital pathology. In Proceedings of the International Conference on Medical Image Computing and Computer-Assisted Intervention (MICCAI), Marrakesh, Morocco, 6–10 October 2024; pp. 34–44.

68. Lemke, N.; González, C.; Mukhopadhyay, A.; Mundt, M. Distribution-Aware Replay for Continual MRI Segmentation. In Proceedings of the International Workshop on Personalized Incremental Learning in Medicine, Marrakesh, Morocco, 10 October 2024; pp. 73–85.

69. Xia, Y.; Yu, W.; Li, Q. Byzantine-Resilient Federated Learning via Distributed Optimization. arXiv 2025, arXiv:2503.10792.

70. Zhu, H.; Togo, R.; Ogawa, T.; Haseyama, M. Prompt-based personalized federated learning for medical visual question answering. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Seoul, Republic of Korea, 14–19 April 2024; pp. 1821–1825.

71. Yang, X.; Yu, H.; Gao, X.; Wang, H.; Zhang, J.; Li, T. Federated Continual Learning via Knowledge Fusion: A Survey. IEEE Trans. Knowl. Data Eng. 2024, 36, 3832–3850.

72. Zhong, Z.; Bao, W.; Wang, J.; Chen, J.; Lyu, L.; Yang Bryan Lim, W. SacFL: Self-Adaptive Federated Continual Learning for Resource-Constrained End Devices. IEEE Trans. Neural Netw. Learn. Syst. 2025, 36, 17169–17183.

| | | SARS-CoV-2 CT-Scan Dataset | | | Chest CT-Scan Images Dataset | | |

|---|---|---|---|---|---|---|---|

| Method | Domain | ACC | AUC | F1 | ACC | AUC | F1 |

| Ours | → | ||||||

| MedCoSS [26] | → | ||||||

| Ours | → | ||||||

| MedCoSS [26] | → | ||||||

| MAE [53] | + | ||||||

| MAE [53] | |||||||

| MAE [53] | |||||||

| Baseline | None | ||||||

| | | SARS-CoV-2 CT-Scan Dataset | | | Chest CT-Scan Images Dataset | | |

|---|---|---|---|---|---|---|---|

| Method | Domain | ACC | AUC | F1 | ACC | AUC | F1 |

| Ours | → | ||||||

| MedCoSS [26] | → | ||||||

| Ours | → | ||||||

| MedCoSS [26] | → | ||||||

| MAE [53] | + | ||||||

| MAE [53] | |||||||

| MAE [53] | |||||||

| Baseline | None | ||||||

| Domain | ACC | AUC | F1 | |

|---|---|---|---|---|

| → | 0.0 | |||

| 1.0 | ||||

| 2.0 | ||||

| 3.0 | ||||

| 4.0 | ||||

| → | 0.0 | |||

| 1.0 | ||||

| 2.0 | ||||

| 3.0 | ||||

| 4.0 |

| Domain | ACC | AUC | F1 | |

|---|---|---|---|---|

| → | 0.0 | |||

| 1.0 | ||||

| 2.0 | ||||

| 3.0 | ||||

| 4.0 | ||||

| → | 0.0 | |||

| 1.0 | ||||

| 2.0 | ||||

| 3.0 | ||||

| 4.0 |

| Domain | Batch Size | ACC | AUC | F1 |

|---|---|---|---|---|

| → | 16 | |||

| 32 | ||||

| 64 | ||||

| 128 | ||||

| → | 16 | |||

| 32 | ||||

| 64 | ||||

| 128 |

| Domain | Batch Size | ACC | AUC | F1 |

|---|---|---|---|---|

| → | 16 | |||

| 32 | ||||

| 64 | ||||

| 128 | ||||

| → | 16 | |||

| 32 | ||||

| 64 | ||||

| 128 |

| LR | WKD | BKE | ACC | AUC | F1 |

|---|---|---|---|---|---|

| ✔ | |||||

| ✔ | ✔ | ||||

| ✔ | ✔ | ||||

| ✔ | ✔ | ✔ |

| LR | WKD | BKE | ACC | AUC | F1 |

|---|---|---|---|---|---|

| ✔ | |||||

| ✔ | ✔ | ||||

| ✔ | ✔ | ||||

| ✔ | ✔ | ✔ |

| Stage | ACC | AUC | F1 |

|---|---|---|---|

| Stage 1 | |||

| Stage 2 | |||

| Stage 3 | |||

| Stage 4 |

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Tasai, R.; Li, G.; Togo, R.; Ogawa, T.; Hirata, K.; Tang, M.; Yoshimura, T.; Sugimori, H.; Nishioka, N.; Shimizu, Y.; et al. Privacy-Aware Continual Self-Supervised Learning on Multi-Window Chest Computed Tomography for Domain-Shift Robustness. Bioengineering 2026, 13, 32. https://doi.org/10.3390/bioengineering13010032

APA Style: Tasai, R., Li, G., Togo, R., Ogawa, T., Hirata, K., Tang, M., Yoshimura, T., Sugimori, H., Nishioka, N., Shimizu, Y., Kudo, K., & Haseyama, M. (2026). Privacy-Aware Continual Self-Supervised Learning on Multi-Window Chest Computed Tomography for Domain-Shift Robustness. Bioengineering, 13(1), 32. https://doi.org/10.3390/bioengineering13010032