4. Structural Properties and Functional Mechanisms

4.1. Physicochemical Characterization

To highlight the direct relationship between the synthesis strategies discussed above and the functional behavior of the resulting materials, a systematic analysis of the structural and chemical properties of the modified biochar is necessary. Physicochemical characterization is an essential step in validating the porous architecture, elemental composition, and nature of functional groups, parameters that control adsorption mechanisms, redox processes, and biological interactions.

Techniques such as BET analysis (determination of specific surface area and pore distribution), SEM/TEM electron microscopy (morphology and texture), FTIR and XPS spectroscopy (identification of functional groups and oxidation states), and Raman spectroscopy (degree of structural ordering and D/G ratio) provide complementary information on the material structure. Correlating these parameters allows for a mechanistic interpretation of performance in water treatment or catalysis applications.

Table 2 summarizes the main characterization methods used, the parameters determined, and their relevance to the functional mechanisms, thereby facilitating the integration of the structure–property–performance relationship.

4.2. Adsorption Mechanisms

Understanding the adsorption mechanisms of biochar-based supermaterials requires direct correlation of structural properties and surface chemistry with the type of interactions involved in contaminant retention. Adsorptive performance is not determined solely by specific surface area but by a combination of hierarchical porosity, pore-size distribution, functional group density, and the nature of heteroatoms incorporated into the carbon network.

For heavy metals, the dominant mechanisms include ion exchange, surface complexation, and pH-dependent electrostatic interactions. In contrast, for aromatic organic pollutants, π–π interactions, van der Waals forces, and hydrophobic effects are frequently at play. In doped or hybrid materials, redox processes can further facilitate the chemical transformation of contaminants (e.g., the reduction of Cr(VI) to Cr(III)). To systematize these correlations, Table 3 summarizes the main adsorption mechanisms associated with them.

For aromatic organic pollutants, adsorption cannot be interpreted only in terms of pore filling or generic hydrophobicity. The aromaticity and electron density of the adsorbate also influence uptake, especially when the biochar surface contains condensed aromatic domains capable of π–π interactions. In such cases, the adsorption tendency may increase with the pollutant’s greater aromatic character, although this relationship is modulated by substituent effects, ionization state, steric accessibility, and competition with water.

Likewise, in N-doped systems used for Pb(II) adsorption, mechanistic assignment should not rely solely on improved uptake values. A stronger interpretation is obtained when adsorption is accompanied by shifts in XPS binding energies, particularly in N 1s, O 1s, and Pb 4f regions, indicating coordination or complexation between the metal ion and electron-donating surface groups. Accordingly, improved Pb(II) uptake should be linked, where possible, to spectroscopic evidence rather than to performance data alone.

The use of the Langmuir and Freundlich models in the biochar literature should be interpreted with caution. Langmuir behavior is often reported when adsorption appears to approach a finite apparent capacity under relatively controlled single-solute conditions. In contrast, Freundlich behavior is more commonly observed for the heterogeneous surfaces and site-energy distributions expected of modified biochars. However, because many studies fit both models to limited datasets, differences in R² values are often too small to justify strong mechanistic conclusions. The same caution applies to kinetics: although the pseudo-second-order model is frequently associated with chemisorption, a good mathematical fit does not by itself prove that chemical bonding is rate-limiting; intraparticle diffusion, film diffusion, and heterogeneous site occupancy may remain important.
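As an illustration of how such model comparisons are usually produced, the following minimal sketch fits the linearized Langmuir form, Ce/qe = Ce/q_max + 1/(K_L·q_max), and the linearized Freundlich form, ln qe = ln K_F + (1/n) ln Ce, to the same dataset and compares R² values computed on the untransformed uptake. The data are hypothetical and noise-free (generated from a Langmuir curve with assumed q_max = 100 mg/g and K_L = 0.5 L/mg), and all function names are illustrative rather than taken from any cited study.

```python
import math

def linear_fit(x, y):
    """Ordinary least-squares line y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def r_squared(observed, predicted):
    """Coefficient of determination on the untransformed uptake values."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

def langmuir(c, q_max, k_l):
    return q_max * k_l * c / (1.0 + k_l * c)

def freundlich(c, k_f, inv_n):
    return k_f * c ** inv_n

def fit_langmuir(ce, qe):
    """Regress Ce/qe on Ce; slope = 1/q_max, intercept = 1/(K_L * q_max)."""
    slope, intercept = linear_fit(ce, [c / q for c, q in zip(ce, qe)])
    return 1.0 / slope, slope / intercept  # q_max, K_L

def fit_freundlich(ce, qe):
    """Regress ln qe on ln Ce; slope = 1/n, intercept = ln K_F."""
    slope, intercept = linear_fit([math.log(c) for c in ce],
                                  [math.log(q) for q in qe])
    return math.exp(intercept), slope  # K_F, 1/n

# Hypothetical equilibrium data (mg/L, mg/g); not measured values.
ce = [1.0, 2.0, 5.0, 10.0, 20.0, 50.0]
qe = [langmuir(c, 100.0, 0.5) for c in ce]

q_max, k_l = fit_langmuir(ce, qe)
k_f, inv_n = fit_freundlich(ce, qe)
r2_langmuir = r_squared(qe, [langmuir(c, q_max, k_l) for c in ce])
r2_freundlich = r_squared(qe, [freundlich(c, k_f, inv_n) for c in ce])

print(f"Langmuir:   q_max={q_max:.2f}, K_L={k_l:.3f}, R2={r2_langmuir:.4f}")
print(f"Freundlich: K_F={k_f:.2f}, 1/n={inv_n:.3f}, R2={r2_freundlich:.4f}")
```

With six noise-free points the two models separate clearly. With the noisy, narrow-range datasets common in practice, the R² gap can shrink considerably, which is why a small difference in fit quality is weak evidence for a particular mechanism.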

4.3. Photocatalytic Mechanisms and Redox Processes

Biochar typically acts as a cocatalyst, electron reservoir, or conductive support in photocatalytic and redox systems. When it is combined with semiconductors or metal nanoparticles, charge dynamics and reactive-species generation change. Agricultural biochar may improve electron transport, inhibit charge recombination, and increase the generation of reactive oxygen species that degrade pollutants [77,78].

Electron trapping and charge separation: Biochar’s conductive domains and defects capture electrons from photoexcited semiconductors such as TiO2. These defects and domains act as electron sinks, reducing e−/h+ recombination and extending charge-carrier lifetimes, thereby boosting photocatalytic degradation rates [77].

Nanoparticle support and dispersion: Biochar provides high-surface-area anchoring sites for metal/metal-oxide photocatalysts such as Ag/TiO2 and Fe3O4, thereby improving dispersion, interfacial contact, and the synergy between sorption and catalytic degradation.

Reactive oxygen species generation: Enhanced charge separation enables the formation of reactive oxygen species (ROS) (•OH, O2•−) at the semiconductor–biochar interface. ROS oxidize dyes and other organic substances and, when metals such as silver are present, kill bacteria [77,79,80].

Direct electron transfer and redox cycling: Biochar may participate in Fenton-like or catalytic redox cycles owing to redox-active heteroatoms or transition-metal sites. These cycles shuttle electrons to oxidants or contaminants, enabling degradation beyond adsorption [78,81].

Reported practical outcomes are consistent with these mechanisms. Increasing the Ag content of Ag/TiO2–biochar composites accelerated dye degradation and reduced recombination, and activity retained over multiple reuse cycles indicates stable electron-transfer-driven photocatalysis on agricultural biochar supports.

The available datasets lack precise band-alignment or charge-transfer-rate measurements for agricultural biochars. Molecular-level energetics therefore require electrochemical or spectroscopic studies beyond what is currently reported [77,80].

In TiO2–biochar hybrid systems, the frequently proposed enhancement mechanism is based on improved light utilization, higher pollutant preconcentration near the catalyst surface, and more efficient separation of photogenerated charge carriers at the semiconductor–carbon interface. In the literature, reduced electron–hole recombination is typically supported by photoluminescence quenching, electrochemical impedance spectroscopy, transient photocurrent measurements, and, occasionally, Mott–Schottky analysis. Similarly, ROS pathways involving hydroxyl radicals or superoxide are often inferred from scavenger assays, but stronger evidence is available when spin-trapping or EPR measurements are reported.

4.4. Antimicrobial Activity and Biological Interactions

Biochar made from agricultural waste has antibacterial properties, particularly when combined with biocidal metals or photogenerated reactive oxygen species (ROS). Current research suggests ROS generation, metal-mediated toxicity, and sorption-mediated effects are key antimicrobial pathways.

Reactive oxygen species (ROS) stress: Composites with photocatalysts and silver–biochar-supported materials promote ROS generation, which damages microbial components and produces bactericidal action [77,82,83].

Metal-mediated toxicity: Silver incorporated into or doped onto biochar surfaces releases metal ions that interact with biological components to induce antibacterial effects. Ag/TiO2–biochar composites exhibit enhanced antibacterial activity through combined sorption, ROS, and metal-mediated effects.

Nutrient sorption and sequestration: The sources show no indication that pure agricultural biochar breaks cell walls or ruptures membranes. Instead, biochar sorption may reduce nutrient availability or sequester microbial signaling molecules, thereby inhibiting growth [77,84,85].

Certain functionalized or magnetic biochar composites have been tested for biocompatibility and antioxidant activity alongside antibacterial tests. The proposed molecular mechanisms emphasize ROS and metal effects over mechanical cell-wall disruption [75,77].

The antimicrobial behavior of modified biochars should not be reduced solely to the release of ROS or metal ions. Surface charge, hydrophobicity, roughness, defect density, and the material’s zeta potential may also influence cell adhesion, membrane disruption, and local electrostatic interactions. In silver-doped systems in particular, mechanistic interpretation should ideally include not only total antimicrobial efficiency but also Ag release kinetics and comparisons with relevant toxicity thresholds for the target organism and the surrounding medium.
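To make the comparison between Ag release kinetics and a toxicity threshold concrete, the sketch below assumes a simple first-order release model, C(t) = C_max·(1 − exp(−k·t)). This model is an assumption chosen for illustration; real release profiles may follow other kinetics and should be fitted to measured data. The parameter values and the threshold are hypothetical placeholders, not values from the reviewed studies.

```python
import math

def released_ag(t_h, c_max, k):
    """Cumulative dissolved Ag (mg/L) at time t_h (h) under first-order release."""
    return c_max * (1.0 - math.exp(-k * t_h))

def time_to_threshold(threshold, c_max, k):
    """Time (h) at which released Ag reaches `threshold`.

    Returns None when the plateau concentration c_max never reaches the threshold.
    """
    if threshold >= c_max:
        return None
    return -math.log(1.0 - threshold / c_max) / k

# Hypothetical parameters for illustration only.
C_MAX = 1.0        # plateau Ag concentration, mg/L (assumed)
K = 0.1            # first-order release constant, 1/h (assumed)
THRESHOLD = 0.5    # toxicity threshold for a target organism, mg/L (assumed)

t_cross = time_to_threshold(THRESHOLD, C_MAX, K)
if t_cross is None:
    print("Released Ag stays below the threshold at all times.")
else:
    print(f"Threshold reached after about {t_cross:.1f} h")
```

Reporting the crossing time alongside total antimicrobial efficiency helps distinguish rapid ion-driven effects from slower surface- or ROS-mediated ones.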

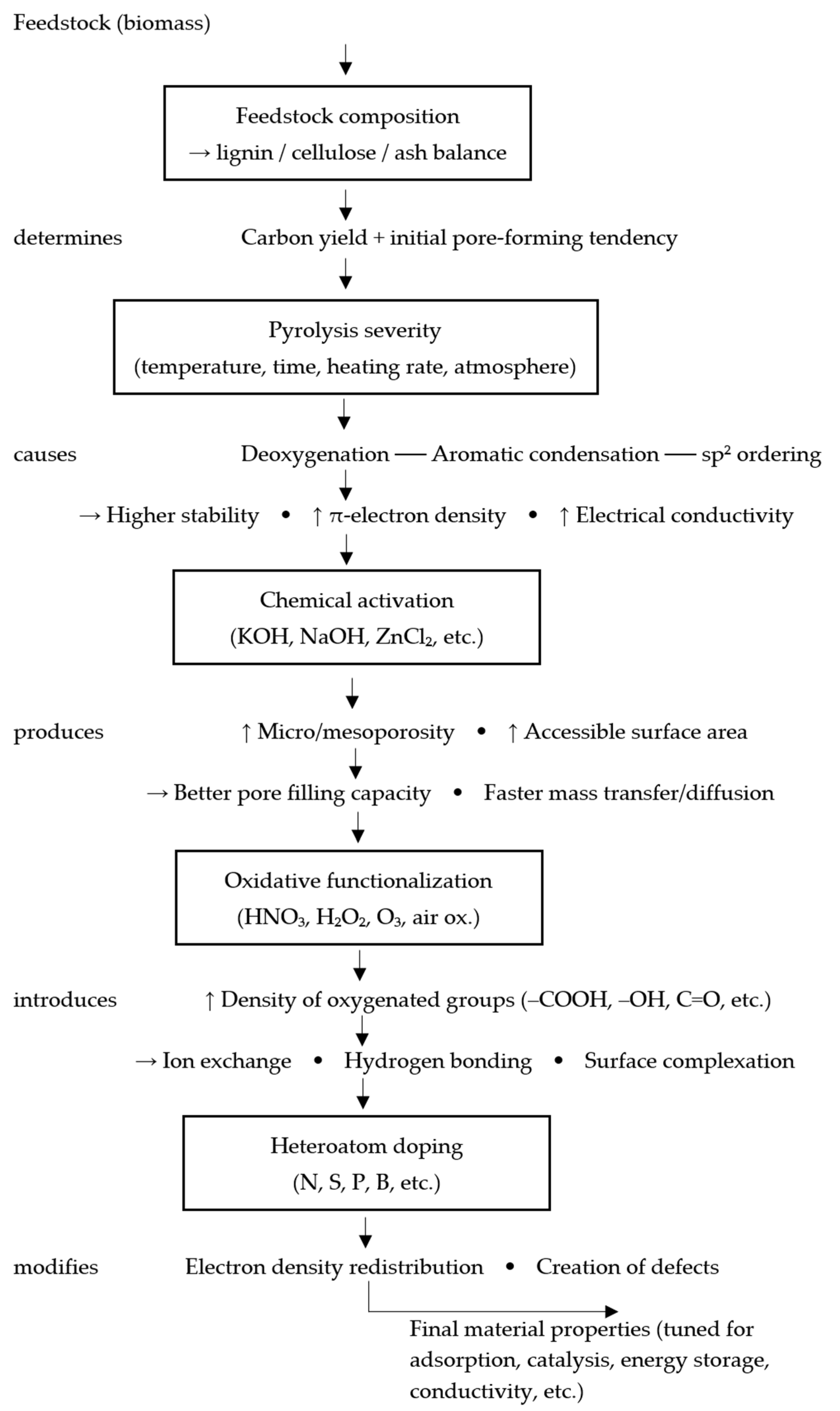

4.5. Structure–Property–Performance Relationship

Physicochemical characterization has identified measurable structural features that consistently translate into adsorption, catalytic, and antibacterial performance. Agricultural biochar research has thereby linked structure, property, and performance.

In this review, the structure–property–performance relationship is interpreted as a directional sequence in which feedstock composition and process conditions determine structural descriptors, structural descriptors determine dominant interaction modes, and these interaction modes determine application performance (Figure 3).

Pore architecture, specific surface area, and hierarchical micro- and mesoporosity increase the number of adsorption sites and the accessible pore volume for organic molecules. The enhanced surface area of KHCO3/KOH-activated biochars improved the adsorption of tetracycline and other antibiotics [73,74].

Pore-size distribution also matters: pores of roughly 1–10 nm capture small organic contaminants through confinement and van der Waals forces. Trace organic contaminants are removed to different extents by coir, rice husk, and other biochars, depending on their pore structures [86].

Abundant oxygen- and nitrogen-containing groups (e.g., carboxyl, hydroxyl, and carbonyl) increase polarity, hydrogen-bonding capacity, ion-exchange potential, and surface complexation of ionic or polar pollutants. Chemisorption and ion exchange enabled carboxylate-modified biochars to adsorb methylene blue readily [73,77].

In photocatalytic and redox systems, high aromatic content and enhanced graphitic ordering strengthen π–π interactions, electrical conductivity, and adsorption of aromatic contaminants, thereby supporting electron transfer [87].

Ash and mineral content, surface charge, and zeta potential affect electrostatic attraction and may provide surface complexation sites for oxyanions such as those of arsenic. Mineral-rich colloids showed higher uptake, attributed to their oxygen-containing functional groups and mineral content [76,77].

Adding metals, metal oxides, or metal salts, such as Ag, TiO2, Fe3O4, and ZnCl2, alters both chemical affinity (surface complexation and catalytic sites) and physical trapping (increased porosity). The result is combined adsorption, photocatalytic, and antibacterial action.

Following KHCO3 activation, increases in surface area and micropore volume led to a 15-fold increase in tetracycline adsorption. KOH-activated bagasse biochar with hierarchical porosity removed norfloxacin through pore filling and electrostatic attraction [73,74]. These examples show that quantitative structural properties can help predict practical removal efficiency and catalytic activity.

6. Post-Use Valorization and Dual Functionality

Post-use management of biochar should be addressed as an integral part of process design instead of a terminal disposal step. After adsorption, saturated biochar often retains a porous carbon matrix, mineral phases, and reactive surface functionalities that can support secondary applications in soils or construction materials. The recent review literature has increasingly framed spent biochar as a multifunctional material with potential for agronomic reuse, contaminant stabilization, thermochemical valorization, or incorporation into engineered products, which supports the concept of dual functionality in biochar-based treatment systems.

However, secondary valorization cannot be justified solely based on residual porosity or retained sorption capacity. The feasibility of reuse depends on the identity, concentration, mobility, and regulatory status of the adsorbed contaminants, as well as on the intrinsic quality of the biochar itself. For this reason, post-use routing should be assessed through a risk-based framework that distinguishes between nutrient-loaded or relatively benign spent materials, which may be suitable for controlled agronomic or material reuse, and contaminant-laden biochars, which may require stabilization, thermal reprocessing, or restricted use.

Spent biochar is typically reused as a nutrient-loaded soil amendment, managed as contaminant-bearing waste requiring stabilization or disposal, or diverted to non-agronomic products such as construction composites and energy/catalytic materials. Each route carries distinct performance needs, release risks, and policy gaps.
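The three routes and the risk-based distinction between benign and contaminant-laden materials can be expressed as a simple decision sketch. The code below is a hypothetical illustration, not a regulatory procedure: the class and field names are invented for this example, and each boolean stands in for a full laboratory assessment (contaminant screening, release testing, containment verification) that would be defined case by case.

```python
from dataclasses import dataclass
from enum import Enum

class Route(Enum):
    SOIL_AMENDMENT = "nutrient-loaded soil amendment"
    NON_AGRONOMIC_PRODUCT = "non-agronomic product (e.g., construction composite)"
    STABILIZE_OR_DISPOSE = "stabilization or controlled disposal"

@dataclass
class SpentBiochar:
    nutrient_loaded: bool               # sorbed phase is mainly recoverable nutrients
    hazardous_load: bool                # regulated or mobile contaminants are present
    release_test_passed: bool           # end-use-specific leaching/release test outcome
    containment_verified: bool = False  # long-term immobilization demonstrated

def select_route(sample: SpentBiochar) -> Route:
    """Pick a post-use route, defaulting to the most conservative option."""
    if sample.hazardous_load:
        # Contaminant-laden material leaves the disposal route only if
        # containment inside an engineered matrix has been demonstrated.
        if sample.containment_verified:
            return Route.NON_AGRONOMIC_PRODUCT
        return Route.STABILIZE_OR_DISPOSE
    if sample.nutrient_loaded and sample.release_test_passed:
        return Route.SOIL_AMENDMENT
    # Benign material without demonstrated agronomic value.
    return Route.NON_AGRONOMIC_PRODUCT

example = SpentBiochar(nutrient_loaded=True, hazardous_load=False,
                       release_test_passed=True)
print(select_route(example).value)
```

The ordering of the checks encodes the design choice that contaminant status overrides nutrient value: a nutrient-rich but hazardous material is never routed to soil.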

Spent biochar that carries recovered nutrients is increasingly discussed as a post-use product with agronomic value rather than as a residue requiring disposal. In this context, saturated biochar can be used directly as a slow-release fertilizer or incorporated into blended soil amendments, provided that its nutrient loading, post-processing, and field application strategy are compatible with crop requirements. Reviews and life cycle-oriented studies report encouraging agronomic outcomes and provide explicit examples, such as phosphate-saturated and Mg-modified biochars used as fertilizer carriers, supporting the relevance of this pathway for circular nutrient management [94,98,99].

The agronomic function of nutrient-loaded spent biochar is inherently dual: it serves as both a nutrient carrier and a soil conditioner. Beyond nutrient supply, the biochar matrix can improve soil structure, moisture retention, and related physical properties, thereby enhancing nutrient-use efficiency and crop response over time [94,98]. However, these benefits are not automatic. Evidence indicates that agronomic performance is sensitive to post-processing, particularly particle-size distribution. Plant responses appear to improve when particle size is controlled, with favorable ranges reported around 0.5–1.0 mm, and additional treatments such as heating or aeration may further influence performance [100]. Likewise, nutrient form and loading chemistry are critical. The nutrient must remain sufficiently plant-available while avoiding rapid leaching losses, and studies on Mg-modified phosphate-loaded biochars illustrate how material-specific loading strategies can be designed to balance retention and release [98].

For this route to be credible in a process-oriented framework, agronomic reuse must be supported by consistent material characterization and release testing. At minimum, nutrient-loaded spent biochar should be evaluated for nutrient content, pH, electrical conductivity, and soluble fractions, along with its release behavior under conditions relevant to crop management [94]. Without this level of characterization, field performance is difficult to predict and integration into fertilization programs remains uncertain.

At the same time, the risks associated with direct land application must be stated clearly. When biochar is sourced from wastewater or polluted streams, contaminant carryover becomes a major concern. Heavy metals, organic contaminants, pathogens, and antibiotic resistance genes (ARGs) may persist in spent material and pose risks to soil health and food safety if applied without adequate screening [100]. In addition, leachate chemistry from post-processed biochars has been reported to be heterogeneous across studies, suggesting that short-term release behavior can vary substantially depending on feedstock, treatment history, and post-processing conditions [101]. This variability underscores the need for end-use-specific leaching and release assessments rather than assuming uniform behavior across materials.

Regulatory and governance aspects remain significant limitations. Although the direct application of nutrient-loaded spent biochar is recognized in the policy and sustainability literature as a promising circular pathway, harmonized standards and application thresholds remain limited, and practical implementation generally depends on local regulations and case-specific testing [99]. Based on the reviewed literature, there is not yet sufficient evidence to define universally applicable regulatory limits or a standardized certification framework for nutrient-loaded spent biochars; accordingly, regulatory compliance must currently be addressed on a jurisdiction-by-jurisdiction basis.

In contrast to nutrient-loaded materials, biochar that has sorbed contaminants from wastewater treatment, remediation systems, or industrial process streams often cannot be safely returned to soil without further treatment. In these cases, the post-use challenge is no longer nutrient recovery but contaminant containment, and the spent biochar should be managed through stabilization, encapsulation, controlled disposal, or transfer to engineered sinks. Reviews on biochar in wastewater treatment and remediation consistently emphasize that end-of-life handling of contaminant-laden biochar remains a major operational and environmental constraint [100,102].

This management challenge is directly linked to the mechanisms by which biochar captures pollutants. Adsorption, ion exchange, pore entrapment, filtration, and biofilm-mediated biodegradation can all contribute to contaminant accumulation on or within the biochar particles [100]. As a result, the spent material becomes a concentrated solid matrix in which contaminant speciation, binding strength, and mobility determine downstream risk. From a process perspective, this means that the success of the primary treatment stage cannot be evaluated independently of the fate of the loaded sorbent.

Several management routes are discussed in the literature. Stabilization and immobilization approaches aim to reduce contaminant leachability through chemical or physical treatment, including further modification of the biochar surface or incorporation into binding matrices [103]. These strategies build on the same materials science principles used to enhance adsorption (namely, changing surface chemistry and strengthening sorption interactions) but apply them to containment rather than uptake. Encapsulation in construction or composite materials is another option, particularly where the goal is to isolate contaminants while generating a secondary-use product; however, such approaches require clear evidence of long-term containment and acceptable material performance [94,103]. When safe reuse cannot be demonstrated, controlled disposal in engineered landfills or secure recovery pathways remains necessary. Reviews repeatedly identify disposal and containment as unresolved issues for contaminant-bearing biochars, particularly at scale [94,102].

Regardless of the route chosen, safe management requires performance criteria that go beyond adsorption capacity. Reduced leachability is essential: contaminant release rates must remain low under the environmental conditions relevant to the intended end use or disposal setting [98,100]. Physical integrity is also important, especially for materials that are handled, transported, or weathered. Abrasion, fragmentation, and disintegration can increase the risk of particle mobilization and contaminant exposure [4]. In addition, life cycle thinking is needed when comparing reuse and disposal options, since environmental burdens can shift downstream depending on how the spent biochar is treated within the broader nutrient recovery or remediation chain [98].

The decision criteria are therefore risk-centered. Improper reuse may result in the release of metals, organic contaminants, or biological hazards into soil and water, undermining the original environmental benefits of the treatment process [100,102]. Although the literature clearly identifies disposal and containment as policy-relevant challenges, it does not yet provide standardized regulatory thresholds that apply across jurisdictions. As with agronomic reuse, specific regulatory requirements must therefore be determined locally, and the current evidence base is insufficient to support a single universal compliance framework.

When agronomic reuse is unsuitable or risky, non-agronomic valorization offers an alternative route to recover value from spent biochar. The current literature describes several pathways, including incorporation into building materials, polymer or cement composites, and conversion into catalytic or energy-related materials, such as electrodes. These routes are attractive because they may either immobilize contaminants within engineered matrices or extend the functional life of biochar through higher-value applications. Reviews covering construction additives, biocomposites, and energy and catalytic applications consistently show that the feasibility of these routes depends on targeted functionalization and end-use-specific material design [94,103,104].

Construction-related uses are among the most developed non-agronomic options. Biochar has been incorporated into cementitious systems, asphalt, and insulation-type materials, where it may contribute to moisture regulation, thermal behavior, and electromagnetic properties, in addition to its role as a filler [98]. From the perspective of spent biochar management, these applications are particularly relevant because they may combine valorization with containment, especially when contaminant-bearing biochar is embedded in a stable matrix. Related composite strategies use polymeric or mineral binders to encapsulate the particles while also contributing to the mechanical or functional performance of the final product [103].

Catalytic and energy-related applications represent a more specialized yet technologically significant class of end uses. Functionalized biochars, including nanoscale or chemically activated variants, are being developed for catalytic systems and electrochemical energy storage, where conductivity, surface chemistry, and stable active sites are essential [103,104]. These applications usually require additional modifications, such as activation or the incorporation of metal oxides, to achieve the required performance, which means they are more processing-intensive than direct reuse in soil or in basic construction matrices.

Because these pathways move spent biochar into engineered products, their performance requirements are correspondingly strict. For construction and composite uses, the material must satisfy mechanical and durability criteria (e.g., strength, stiffness, fire resistance, and long-term stability) appropriate to the target application and relevant building standards [94]. For catalytic and energy applications, the requirements shift toward conductivity, accessible surface area, and stable reactive sites, which are generally achieved through tailored functionalization [103,104]. When the application’s purpose is partly to sequester previously captured contaminants, containment verification becomes a central requirement. In such cases, leaching tests under accelerated weathering and service-life simulation conditions are needed to demonstrate long-term immobilization [94,103].

The literature supports the promise of these non-agronomic routes but also highlights important evidence gaps. Although benefits have been reported, comprehensive long-term datasets on leaching behavior and structural safety of contaminant-bearing biochar in engineered products remain limited. As a result, long-term risk and standardized regulatory acceptance cannot yet be considered fully established [94,103]. In addition, policy and market barriers continue to affect scale-up, especially for more engineered materials such as magnetic or highly functionalized biochars, indicating that nontechnical factors may be as important as material performance in determining industrial adoption [99].

The climate relevance of sequential or post-use application depends on whether the secondary route merely delays disposal or actually displaces a more emission-intensive product or process. Recent case studies show that the net GHG effect can shift substantially depending on the accounting scenario, functional unit, and substitution credit used. The key implication is that sequential use should be presented as a potentially advantageous strategy, rather than an automatically carbon-negative outcome; illustrative case studies and scenario-based quantification should be incorporated into future revisions, where possible.
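The sensitivity of the net GHG result to the substitution credit can be shown with a minimal accounting sketch. The balance below (process and transport emissions minus a substitution credit and a carbon storage credit) is a simplified assumption rather than a complete LCA model, and every number is a hypothetical placeholder expressed per functional unit.

```python
def net_ghg(processing, transport, substitution_credit, carbon_storage_credit):
    """Net GHG balance in kg CO2e per functional unit.

    Positive values are net emissions; negative values are net avoidance or removal.
    """
    return processing + transport - substitution_credit - carbon_storage_credit

# Two hypothetical accounting scenarios for the same secondary-use route.
scenarios = {
    "no substitution credit": dict(
        processing=40.0, transport=10.0,
        substitution_credit=0.0, carbon_storage_credit=30.0),
    "displaces mineral fertilizer": dict(
        processing=40.0, transport=10.0,
        substitution_credit=35.0, carbon_storage_credit=30.0),
}

results = {name: net_ghg(**params) for name, params in scenarios.items()}
for name, value in results.items():
    label = "net emitter" if value > 0 else "net sink"
    print(f"{name}: {value:+.1f} kg CO2e ({label})")
```

In this toy case the same material flow changes sign depending only on whether a displaced product is credited, which is the reason the scenario and functional unit should always be reported with the result.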

Conclusions regarding the reuse or environmental performance of manure-derived, sludge-derived, or otherwise contaminant-bearing biochars should be considered substantially more uncertain than those for relatively clean lignocellulosic residues. These materials may contain higher ash fractions, concentrated trace metals, salts, phosphorus-rich mineral phases, residual organic contaminants, or mobile toxic species whose behavior depends strongly on pyrolysis temperature, feedstock heterogeneity, and post-treatment history. Accordingly, the present review does not generalize conclusions from crop-residue-derived biochars to manure- or sludge-based systems without qualification; future LCAs should explicitly address this uncertainty through scenario analysis, sensitivity analysis, and separate inventory modeling for contaminant control steps.

6.1. Use of Saturated Biochar as a Soil Amendment

The use of saturated biochar as a soil amendment is most appropriate when the sorbed phase is beneficial (e.g., nutrient-enriched biochar) or when contaminant release is demonstrably controlled. Even after adsorption, biochar typically preserves key structural characteristics (porosity, surface area, aromatic carbon backbone) that can improve soil water retention, aggregation, cation exchange behavior, and nutrient retention.

From a process perspective, this route can be described as a two-stage valorization strategy: adsorption serves as the primary treatment function, whereas soil application provides a secondary agronomic and carbon-management function. This framing is particularly relevant for systems designed to recover nutrients or condition effluents before land-based reuse. The sustainability value of this pathway increases when it reduces mineral fertilizer demand or improves nutrient-use efficiency in degraded soils.

Saturated biochar can be safe and beneficial if sorbed contaminants are immobilized and nonextractable, but risks exist from intrinsic contaminants (e.g., PAHs) and from fractions mobilized under environmental conditions. Eligibility requires targeted desorption testing under field pH and rhizosphere conditions, and limits on total and extractable contaminants before soil reuse.

The use of saturated biochar in soil systems requires a balanced interpretation of both its immobilization potential and its residual risk. In many cases, saturated biochar can reduce contaminant bioavailability and alleviate pollutant-induced phytotoxicity, particularly when sorption is strong and the bound fraction remains stable under soil conditions. At the same time, safety assessment must distinguish between two fundamentally different issues: (i) beneficial immobilization of contaminants captured during treatment, and (ii) harmful intrinsic contaminants already present in the biochar due to feedstock type or production method. This distinction is essential for any review of post-use biochar management.

Several studies support the immobilization benefit of saturated biochar under appropriate conditions. In sludge-amended soils, biochar addition has been shown to increase adsorption capacity while reducing desorption and bioavailability of metals such as Cd, Cu, Ni, and Zn relative to non-amended systems, indicating a clear stabilization effect for multiple potentially toxic elements [105]. Similarly, experiments using metal-saturated softwood biochar reported improved plant growth and reduced phytotoxicity compared with controls, suggesting that sorbed metals can, under some conditions, be retained in forms that are less bioavailable and less harmful to plants [106]. Comparable trends have also been reported for organic contaminants: biochar can strongly adsorb pesticides, thereby reducing desorption, limiting their mobility, and reducing bioavailability in soil [92]. Taken together, these findings support the view that saturated biochar can provide a genuine environmental benefit when sorption is persistent and contaminant remobilization is low.

However, the presence of intrinsic contaminants in the biochar itself may offset or even negate these benefits. Some biochars, particularly those produced from certain feedstocks or under poorly controlled thermal conditions (e.g., traditional kilns), may contain elevated concentrations of polycyclic aromatic hydrocarbons (PAHs). In such cases, soil application has been associated with marked increases in soil PAH concentrations, demonstrating that biochar may act not only as a sorbent but also as a contaminant source [107]. In addition, even when contaminants are captured during treatment, only part of the sorbed fraction may be strongly retained. A measurable proportion can remain loosely bound or become mobilizable in the presence of environmentally relevant extractants such as salts or organic acids. This necessitates distinguishing between total contaminant content and extractable or remobilizable fractions when evaluating safety [106].

For these reasons, the benefits of saturated biochar should be interpreted conditionally rather than assumed. Evidence supports reduced bioavailability and phytotoxicity in many systems, but only when contaminant retention is robust and intrinsic contamination is adequately controlled [92,105,106,107].

Desorption behavior under field-relevant conditions is central to the safe reuse of saturated biochar, as contaminant retention is not static. Sorption strength and release potential vary with soil chemistry, particularly pH, ionic strength, ligand availability, and rhizosphere activity. As a result, laboratory adsorption data alone are insufficient to predict long-term safety after land application. A more appropriate assessment requires desorption testing under conditions that simulate realistic soil environments, including pH shifts and biologically relevant extractants.

Experimental evidence shows that sorption capacity and affinity are strongly pH-dependent. Controlled studies have reported clear pH effects on retention behavior, including differences in sorption trends among elements (e.g., Pb > Cu > As > Sb under some conditions), with substantial sorption quantified at around pH 6 [106]. These results indicate that changes in soil pH can alter contaminant retention and therefore modify the risk of release over time. This is particularly relevant in agricultural soils where pH may vary due to liming, fertilizer inputs, root activity, or seasonal changes.

Organic ligands provide an additional, often underestimated, desorption pathway. Extraction tests using Ca(NO3)2 and environmentally relevant organic acids have shown that only a relatively small fraction of some potentially toxic elements (PTEs) may be released as loosely exchangeable material (approximately 6–11%), while much larger fractions (up to about 60% of total PTE mass in some cases) can be mobilized in the presence of organic acids [106]. This finding is highly important for post-use evaluation because it suggests that rhizosphere compounds can substantially increase desorption risk even when conventional salt extractions indicate relatively low mobility.

At the same time, matrix effects may partially mitigate remobilization. In sewage-sludge-amended soils, the inclusion of biochar reduced desorption of several potentially toxic elements relative to sludge-only or unamended soils, indicating that interactions between soil, sludge, and biochar phases can improve retention [105]. However, this reduction should not be interpreted as complete immobilization. Rather, it supports the need for system-specific testing that accounts for the full soil matrix rather than only biochar in isolation.

One notable gap in the available evidence concerns moisture dynamics. While the reviewed studies provide useful data on pH and ligand effects, they do not offer quantified field-scale evidence on how wetting–drying cycles influence desorption and transport of sorbed contaminants. Because soil moisture fluctuations can alter redox conditions, pore-water chemistry, and transport pathways, moisture-specific desorption behavior remains insufficiently documented in the reviewed literature.

Based on the reviewed evidence, desorption assessment for saturated biochar intended for land application should prioritize a set of field-relevant assays, including pH-dependent sorption/desorption testing, determination of ionic-exchangeable pools (e.g., Ca(NO3)2-extractable fractions), organic acid leaching to simulate rhizosphere conditions, and plant uptake or bioassays to evaluate biologically relevant remobilization [92,105,106]. These approaches provide a more realistic basis for judging whether apparent sorption stability in laboratory systems will translate into safe performance in soil.

The reviewed literature does not provide a single set of universal numeric threshold values for the agricultural reuse of saturated biochar. Instead, it consistently indicates which contaminant classes, fractions, and exposure-relevant metrics should be monitored to avoid transferring contaminants from treatment systems into soils. In this context, regulatory criteria should not rely solely on total contaminant concentrations; they should also account for environmentally mobile fractions, particularly for metals and persistent organic pollutants (POPs).

A recurring theme in comparative studies and policy-oriented discussions is the lack of harmonized limits for spent or saturated biochar. Existing national guidance examples may be informative, but they are not uniform, and some biochars fail available country-level criteria due to elevated metal contents, such as Ni [93,107]. Importantly, the cited studies do not establish internationally harmonized numeric thresholds for reuse. This limits the ability to recommend fixed, universal cutoffs based solely on the current evidence base.

The case of PAHs illustrates why contaminant-specific limits are necessary. Reported Σ16PAH concentrations in biochar vary widely, ranging from hundreds to thousands of μg kg−1, and field-relevant application rates (e.g., 10–40 t ha−1) can produce measurable and sometimes substantial increases in soil PAH concentrations. In some treatments, kiln-produced wood biochar caused up to a tenfold increase in soil PAH levels [107]. These findings demonstrate that limits for PAHs should be defined not only in terms of biochar content but also in terms of the resulting changes in soil concentration after application.
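
The link between biochar PAH content, application rate, and the resulting soil concentration can be illustrated with a simple mass-dilution estimate. The following is a minimal sketch, not a method taken from the cited studies: the function name, the incorporation depth, and the soil bulk density are illustrative assumptions, and the estimate ignores degradation, volatilization, and background PAH levels.

```python
def soil_pah_increment_ug_per_kg(
    biochar_pah_ug_per_kg: float,
    application_rate_t_per_ha: float,
    incorporation_depth_m: float = 0.20,      # assumed mixing depth
    bulk_density_kg_per_m3: float = 1300.0,   # assumed soil bulk density
) -> float:
    """Estimate the rise in soil PAH concentration (ug per kg soil).

    Assumes the applied biochar is mixed evenly into the stated soil layer
    and that the biochar mass is negligible relative to the soil mass.
    """
    if incorporation_depth_m <= 0 or bulk_density_kg_per_m3 <= 0:
        raise ValueError("depth and bulk density must be positive")
    # 1 ha = 10,000 m2, so soil mass = area x depth x bulk density
    soil_mass_kg_per_ha = 10_000.0 * incorporation_depth_m * bulk_density_kg_per_m3
    biochar_mass_kg_per_ha = application_rate_t_per_ha * 1000.0
    pah_added_ug_per_ha = biochar_pah_ug_per_kg * biochar_mass_kg_per_ha
    return pah_added_ug_per_ha / soil_mass_kg_per_ha


if __name__ == "__main__":
    # Hypothetical inputs spanning the ranges mentioned in the text
    for pah, rate in [(500.0, 10.0), (5000.0, 40.0)]:
        delta = soil_pah_increment_ug_per_kg(pah, rate)
        print(f"{pah:.0f} ug/kg biochar at {rate:.0f} t/ha -> +{delta:.2f} ug/kg soil")
```

Whether such an increment is significant depends on the background soil concentration, which is why a limit expressed only as biochar content can be misleading.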

The literature also makes clear that total concentration alone is an incomplete safety metric. A substantial portion of sorbed contaminants may remain weakly bound or become mobilizable under environmentally relevant ligands, particularly organic acids [106]. For this reason, regulatory evaluation should include multiple contaminant descriptors: total contaminant content, exchangeable or ionic-extractable fractions (e.g., Ca(NO3)2-extractable), and organic-acid-leachable fractions that simulate rhizosphere conditions. This multi-fraction approach is more consistent with actual environmental exposure pathways than a total-mass-only criterion.
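
The multi-fraction idea can be made concrete by reporting each extractable pool as a percentage of the total content. The sketch below is illustrative only: the function name, field names, and input values are hypothetical, and it assumes that all three quantities are measured on the same sample and expressed in the same units.

```python
def mobility_descriptors(
    total_mg_per_kg: float,
    salt_extractable_mg_per_kg: float,          # e.g. a Ca(NO3)2 extract
    organic_acid_leachable_mg_per_kg: float,    # e.g. a rhizosphere-type acid extract
) -> dict:
    """Return total content plus extractable pools as percentages of total."""
    if total_mg_per_kg <= 0:
        raise ValueError("total content must be positive")
    pools = (salt_extractable_mg_per_kg, organic_acid_leachable_mg_per_kg)
    if min(pools) < 0 or max(pools) > total_mg_per_kg:
        raise ValueError("extractable pools must lie between 0 and the total")
    return {
        "total_mg_per_kg": total_mg_per_kg,
        "salt_extractable_pct": 100.0 * salt_extractable_mg_per_kg / total_mg_per_kg,
        "organic_acid_leachable_pct": 100.0 * organic_acid_leachable_mg_per_kg / total_mg_per_kg,
    }


if __name__ == "__main__":
    # Hypothetical sample: low salt-extractable pool, large acid-leachable pool
    print(mobility_descriptors(200.0, 16.0, 110.0))
```

A sample like the hypothetical one above would look benign under a salt extraction alone, which is the situation the multi-fraction approach is meant to expose.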

In practical terms, the available evidence supports a precautionary pre-reuse assessment framework. Before agricultural deployment, saturated biochar should undergo (i) feedstock and production characterization to identify intrinsically contaminated materials, including problematic feedstocks or poorly controlled kiln products, (ii) analysis of total metals and POPs, and (iii) extractability testing under pH- and ligand-relevant conditions to demonstrate low mobilizable fractions [93,105,106,107]. These requirements are well supported by the reviewed studies, even though they do not converge on a single numeric threshold.

Accordingly, the current evidence base is insufficient to justify specific universal cutoffs for heavy metals or POPs in saturated biochar intended for agricultural reuse. The development of numeric thresholds will require regulatory decisions informed by local background soil conditions, human and ecological risk criteria, and field-scale evidence linking extractable contaminant fractions to plant uptake, soil health, and long-term environmental outcomes.

In the European context, agronomic valorization must also be considered in light of the contaminant thresholds applicable to fertilizing products and soil improvers. Under Regulation (EU) 2019/1009, pyrolysis and gasification materials intended for EU fertilizing products are subject to compositional restrictions, including a PAH16 limit of 6 mg kg−1 dry matter. For organic soil improvers, relevant contaminant limits include Cd 2 mg kg−1 DM, Hg 1 mg kg−1 DM, Ni 50 mg kg−1 DM, Pb 120 mg kg−1 DM, inorganic As 40 mg kg−1 DM, Cu 300 mg kg−1 DM, and Zn 800 mg kg−1 DM. These benchmarks underscore that soil reuse of spent biochar should be considered conditional rather than automatic.
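
As an illustration of how these benchmarks could be used for a first-pass screen, the sketch below compares measured total contents with the limit values quoted above. It is a hedged example rather than a compliance tool: the dictionary keys and function name are hypothetical, the values are simply those cited in this section, and the regulation itself should be consulted for scope, product categories, and additional requirements.

```python
# Limit values (mg per kg dry matter) as quoted in the text above.
# PAH16 applies to pyrolysis/gasification materials; the remaining values
# are those cited for organic soil improvers.
EU_LIMITS_MG_PER_KG_DM = {
    "PAH16": 6.0,
    "Cd": 2.0,
    "Hg": 1.0,
    "Ni": 50.0,
    "Pb": 120.0,
    "As_inorganic": 40.0,
    "Cu": 300.0,
    "Zn": 800.0,
}


def screen_against_limits(measured_mg_per_kg_dm: dict):
    """Return (exceedances, not_measured) for a set of measured contents.

    exceedances maps analyte -> (measured value, limit) where value > limit;
    not_measured lists analytes with a limit but no measurement supplied.
    """
    exceedances = {}
    for analyte, limit in EU_LIMITS_MG_PER_KG_DM.items():
        value = measured_mg_per_kg_dm.get(analyte)
        if value is not None and value > limit:
            exceedances[analyte] = (value, limit)
    not_measured = sorted(
        a for a in EU_LIMITS_MG_PER_KG_DM if a not in measured_mg_per_kg_dm
    )
    return exceedances, not_measured


if __name__ == "__main__":
    # Hypothetical spent-biochar analysis
    sample = {"Cd": 1.0, "Ni": 75.0, "PAH16": 2.5}
    print(screen_against_limits(sample))
```

Reporting unmeasured analytes separately avoids treating a missing measurement as a pass. A screen of this kind addresses total contents only and does not replace the extractability testing discussed earlier.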

6.2. Stabilization and Immobilization of Contaminants in Soil

For contaminant-loaded biochar, a major post-use pathway is controlled application for stabilization/immobilization in contaminated soils. In this case, the biochar matrix can reduce contaminant mobility and bioavailability through multiple mechanisms, including pore adsorption, ion exchange, electrostatic attraction, surface complexation, and mineral-associated precipitation. The effectiveness of these mechanisms depends on pH, ash content, cation exchange capacity, and the abundance of oxygen-containing surface functional groups.

It should be noted that immobilization performance in soil cannot be inferred directly from batch adsorption tests in water. Soil systems introduce competing ions, variable redox conditions, interactions with organic matter, and microbial effects that may alter sorbate stability over time. Therefore, claims of long-term immobilization should be supported by soil-specific evidence (e.g., fractionation, leaching tests, plant uptake data, and aging studies), rather than solely by aqueous adsorption isotherms. Composite strategies can further improve safety and performance.

Combining biochar with mineral-rich industrial residues is increasingly recognized as a practical strategy for contaminated-soil remediation because it couples metal immobilization with waste valorization. Rather than relying on a single sorbent mechanism, these co-amendments bring together complementary chemical and physical functions: biochar provides porous carbon surfaces and functional groups for adsorption and complexation, whereas mineral residues provide alkalinity and reactive mineral phases that promote precipitation and convert metals into less labile forms. In parallel, the amendment can improve soil structure, cation exchange capacity (CEC), and nutrient availability, thereby supporting vegetation recovery and reducing long-term remobilization risk. From a circular-process perspective, this approach is particularly attractive because it simultaneously reuses industrial by-products and biomass-derived carbon, thereby closing material loops in remediation systems.

A central advantage of these combined amendments is the presence of synergistic immobilization pathways. Biochar provides a condensed aromatic structure and porous matrix that adsorb dissolved metal ions and facilitate the formation of organic carbon–metal complexes, especially during co-treatment with metal-rich residues [27]. At the same time, metals may coordinate with oxygen-containing functional groups on the biochar surface while also being transformed into less bioavailable mineral forms, such as oxides, when the residue component catalyzes oxidation or provides reactive metal/oxide nuclei [27,108]. In this way, immobilization does not depend on a single interaction but on a network of parallel processes, which generally improves robustness under variable soil conditions. Another key mechanism is pH buffering. Alkaline residues such as carbide slag, apatite, and fly ash raise soil pH, increasing negative surface charge, reducing metal solubility, and promoting precipitation or adsorption onto oxide-rich surfaces [109,110]. Across the reviewed studies, this pH-driven pathway appears to be a dominant contributor to reduced metal mobility in combined amendments [109,110].

The specific role of the mineral residue varies with composition, and the literature highlights several representative functions. Fly ash contributes reactive oxides and supports precipitation and ion-exchange processes, making it a low-cost component in sorbent mixtures designed for metal immobilization [108]. Red mud plays a more chemically active role in some systems, where co-pyrolysis with biomass promotes aromatic condensation in the carbon phase and facilitates the conversion of free metals into oxide-associated forms, thereby reducing bioavailability [27]. Carbide slag and metallurgical slags are particularly effective as buffering agents: they increase soil pH and CEC, improve acid neutralization, and have been shown to reduce DTPA-extractable Cu, Pb, and Zn in mine-affected soils [110]. Apatite contributes phosphate and increases negative surface charge, enhancing adsorption and precipitation-based immobilization, particularly for Pb and Zn, while also stabilizing exchangeable metal fractions [109]. These differences are important for process design because they show that residue selection should be matched to the target contaminant and the desired soil functions.

Beyond direct immobilization, biochar-residue amendments improve soil physical and chemical properties in ways that further support remediation performance. Several studies report enhanced aggregate formation and improved aggregate stability when biochar is co-processed with red mud or applied as part of composite amendments, which contributes to both soil structural recovery and greater stability of the biochar phase itself [27]. Improved aggregation can reduce erosion and particle transport, which indirectly lowers the risk of contaminant redistribution. Mineral-enriched biochars have also been associated with increased pore volume, higher CEC, and better water-holding capacity, all of which help retain metals in less mobile forms while supporting plant establishment on degraded soils [108]. In addition, residues such as apatite or Ca/P-rich mineral sources can provide beneficial nutrients (especially Ca and P) that serve a dual role: they participate in metal immobilization through adsorption and precipitation while also improving nutrient availability for plants [108,109,110]. This dual function is particularly important in contaminated soils, where fertility constraints and toxicity often co-occur.

The agronomic and ecological benefits of these co-amendments extend to the rhizosphere. Studies indicate that mineral-enriched biochar formulations can reduce DTPA-extractable Cu, Pb, and Zn and improve plant growth in contaminated soils, suggesting that chemical immobilization translates into lower biological exposure [108,109,110]. At the same time, changes in rhizosphere microbial composition have been reported, including increases in beneficial microbial taxa and reductions in heavy-metal uptake by plants, suggesting ecological recovery alongside physicochemical remediation [108].

From a circular-process design standpoint, integrating biochar with industrial residues provides a strong justification for scale-oriented remediation systems. Co-pyrolysis or blending of residues such as red mud, fly ash, slag, or apatite with biomass can generate composite amendments that immobilize metals while also stabilizing industrial by-products that might otherwise require disposal [27,111]. This is explicitly framed in the literature as resource-efficient reuse and a promising pathway for synergistic valorization of multiple waste streams [27,111]. In situ application of these composite sorbents is also described as a relatively low-cost and low-impact remediation option, particularly suitable for large contaminated areas where conventional excavation-based treatments are economically or environmentally burdensome [108]. Field and pot-scale studies provide further support by showing measurable reductions in extractable metal fractions, stabilization of soil pH, and improvements in plant health when reclaimed residues are used as part of biochar-based soil amendments [108,109,110]. Together, these findings support the positioning of biochar–mineral residue composites as a practical circular remediation step that can restore both environmental quality and land productivity.

At the same time, the evidence base remains incomplete in one important respect: quantitative system-level comparisons are still limited. Although the literature supports the technical and agronomic promise of these co-amendments, the reviewed sources do not report detailed life cycle assessments or large-scale economic analyses directly comparing circular composite pathways with conventional remediation alternatives. As a result, claims regarding full cost–benefit performance or life cycle superiority should be made cautiously and identified as priorities for future research rather than established conclusions.

6.3. Integration into Construction Materials (Cement, Concrete, Composites)

When soil reuse is not suitable, or higher-value reuse is desired, incorporating biochar into construction materials is a promising alternative. Biochar has been used in cementitious composites, concrete, asphalt, and polymer-based materials, where it can influence mechanical performance, durability, thermal behavior, and moisture regulation. These studies also highlight the potential of biochar-containing materials to contribute to carbon-oriented construction strategies.

For saturated biochar, construction integration is particularly attractive because it can serve both as a valorization route and as a containment strategy. In properly designed matrices, the solid phase may encapsulate contaminant-loaded biochar, thereby reducing release risk; however, this must be verified through leaching and durability testing under realistic service conditions. The suitability of this route depends on biochar particle size, ash content, residual sorbate chemistry, and compatibility with the host matrix.

Coupling biochar adsorption units to downstream materials manufacturing creates sequential product streams that recover adsorbed resources, monetize biochar services, and cut disposal needs while improving circularity and supply chain resilience. Process integration and coproduct strategies increase resource efficiency and industrial symbiosis.

A process-oriented discussion of post-use biochar should not treat adsorption as an isolated unit operation. In practice, the value of biochar-based treatment systems increases substantially when adsorption is linked to downstream manufacturing or reuse pathways, creating what the literature increasingly describes as sequential biochar systems. In this framework, biochar is not viewed simply as a consumable sorbent but as a carrier of environmental services that can move across sectors while retaining functional value. Depending on how it is loaded and processed, the same material may act first as an adsorbent, then as a nutrient carrier or carbon sink, and finally as a feedstock for another industrial or agrarian use [112]. This sequential logic is central to circular-process design because it reduces reliance on single-use pathways and supports material cascading.

One of the most promising settings for this approach is the integrated biorefinery. Embedding biochar production and adsorption functions within biorefineries or related biomass-processing industries (including pulp and paper value chains) creates opportunities to co-produce energy and multiple material streams, which can then be routed into downstream manufacturing [113,114]. In such systems, biochar is no longer a side product with uncertain value but part of a coordinated process network in which by-products and intermediates are intentionally directed toward secondary uses. This integration also enables sidestream routing, in which feedstocks and process residues from bioenergy or biorefinery complexes are redirected to adsorption and subsequent material conversion steps, thereby closing loops and supplying secondary value chains [115].

To implement these links effectively, process integration cannot rely on ad hoc decisions. The literature highlights the importance of formal process design and supply chain tools for identifying optimal routing strategies, coproduct combinations, and logistics for coupling adsorption units with downstream manufacturing [116,117].

Once biochar has been used as an adsorbent or environmental service carrier, several material valorization pathways become possible, and the literature increasingly documents these as viable secondary product streams rather than residual disposal options. The suitability of each pathway depends on the chemical and physical state of the post-use biochar, including whether it is nutrient-loaded, contaminant-bearing, or structurally intact enough for further conversion.

One important route is upgrading to activated carbon or other advanced carbon materials. Spent biochar or biochar precursors can be further processed into activated carbon and engineered carbon products suitable for high-value applications, including electrochemical systems such as supercapacitors [114]. This pathway is particularly relevant when the carbon matrix remains structurally valuable and can be functionalized or activated to meet stricter performance requirements. In this case, the biochar is not only reused but transformed into a higher-value carbon material, extending its functional lifetime within the process chain.

A second major route is agronomic reuse, especially when the biochar carries recovered nutrients or immobilized elements that can be safely returned to soil. In these cases, the spent material can function as a soil amendment that supports nutrient cycling while retaining the carbon-related benefits of biochar application [113]. This route is especially attractive in circular systems because it links treatment processes back to agricultural productivity, allowing nutrients captured from one stream to be reintroduced into another.

Integrated biorefineries provide a broader platform for valorization, enabling recovered biochar streams to be used as manufacturing feedstocks rather than waste residues. Within this setting, biochar can be routed into coproduct generation systems that produce energy, chemicals, or materials, thereby embedding the spent sorbent into a wider portfolio of value-added outputs [113,117]. This aligns closely with the broader concept of resource transfer and transformation described in sequential systems: pollutants, nutrients, and carbon captured by biochar are not seen as fixed burdens but as transformed resources that can enter downstream material processes under controlled conditions [112].

Linking adsorption to downstream biochar valorization has implications that extend beyond waste reduction. At the process level, sequential reuse creates measurable gains in resource efficiency, improves economic resilience through product diversification, and can strengthen sustainability performance when compared with single-use or disposal-based systems. The core principle is straightforward: the more functional stages a material passes through before disposal, the greater the value extracted per unit of biomass input.

One immediate benefit is the reduction in disposal demand. Routing spent biochar into secondary manufacturing or reuse pathways avoids single-use endpoints and keeps the material in circulation across multiple life stages [112]. This directly reduces the burden on disposal infrastructure and lowers the risk that a potentially useful carbon-rich material is prematurely treated as waste. In systems where disposal is costly or environmentally sensitive, this alone can justify additional integration effort.

A second benefit lies in the economics of coproducts. Techno-economic and life cycle studies of integrated biorefineries indicate that profitability and environmental performance improve when value-added coproducts are produced alongside primary outputs [117]. In this context, spent biochar becomes part of a diversified product portfolio rather than a cost center. The ability to generate additional materials, energy products, or agronomic inputs from the same biomass processing chain can improve process economics and buffer volatility in individual product markets [113,117].

The literature also emphasizes the importance of monetizing multifunctionality. When biochar is treated as a provider of environmental services (such as adsorption, carbon sequestration, and nutrient retention), its value is no longer limited to its initial sale as a material. Instead, sequential applications create opportunities for new pricing structures and business models that capture value across multiple use phases [112]. This is a crucial shift in circular bioeconomy thinking because it reframes post-use management as part of value creation rather than cost minimization.

At the system level, these integration strategies support broader sustainability gains. Co-production and optimized coproduct portfolios can improve the overall performance of biomass processing systems by reducing net waste, intensifying resource use, and increasing the functional output derived from a single feedstock stream [113,117].

Sequential routing of biochar across sectors is also a practical mechanism for industrial symbiosis. By connecting wastewater treatment, agriculture, materials manufacturing, and biomass processing, biochar-based systems can enable exchange relationships in which the output of one process becomes the input of another. This cascading use of biomass-derived resources is a defining characteristic of circular bioeconomy models and is increasingly described in the literature as a realistic pathway for cross-sector integration [112,115].

In this context, biochar acts as a transfer medium between sectors. A biochar stream may capture contaminants or nutrients in one industrial setting, then be redirected into remediation, agriculture, or materials production, creating a chain of interdependent value exchanges [112]. This strengthens industrial symbiosis not only by reducing waste but also by increasing coordination between actors that would otherwise operate separately.

However, the literature also makes clear that technical feasibility alone is not sufficient to achieve implementation. Governance and institutional design play a major role. Policy incentives, institutional innovation, and coordinated value-chain governance are repeatedly identified as necessary conditions for unlocking waste valorization opportunities and scaling symbiotic exchange models in the bioeconomy [118]. Without these enabling structures, even technically sound biochar pathways may remain fragmented or economically uncompetitive.

Several practical barriers also remain. Reviews point to limited economic attractiveness relative to fossil-derived alternatives, difficulties in monetizing biochar’s combined climate and material services, and bottlenecks in downstream valorization pathways as recurring obstacles to scale-up [112,114]. These challenges are especially relevant for more complex sequential systems, where value depends on coordination across multiple stages and markets rather than a single product transaction.

For this reason, implementation of sequential biochar systems must be supported by robust design and logistics planning. The literature consistently recommends combining process integration tools, supply-chain design, and life cycle assessment to optimize routing decisions, evaluate trade-offs, and verify that secondary uses genuinely reduce net environmental burdens rather than shifting impacts downstream [116,117]. Therefore, the success of industrial symbiosis in biochar systems depends on integrating technical design with governance, logistics, and life cycle evaluation from the outset.

6.4. Potential Risks and Long-Term Stability

A balanced review must address the potential risks associated with post-use biochar deployment. The main concern is not only the biochar itself but also the long-term fate of the adsorbed species. Reported risks in recent reviews include heavy metals inherent to some biochars, dust exposure during handling, greenhouse gas emissions under certain soil conditions, and uncertainties regarding contaminant remobilization during aging.

For saturated biochar, desorption and leaching are critical risk parameters. The adsorption literature emphasizes that regeneration/desorption studies are relevant not only for process reuse but also for environmental risk screening, as they reveal the stability of sorbed contaminants and the likelihood of release under changing conditions. Repeated use or regeneration may also alter pore structure and functional groups, affecting both adsorption performance and downstream stability.

Long-term stability should be assessed using field-relevant evidence whenever possible. While many studies report positive effects of biochar on soil properties and plant performance, long-term outcomes vary with feedstock, pyrolysis conditions, soil type, and climate. Accordingly, post-use applications should be supported by site-specific risk assessment and monitoring protocols, particularly when contaminant-loaded materials are involved.

Field evidence shows that biochar stability is highly variable, ranging from substantial short-term losses to measurable persistence over a decade or more. This variability is not a minor methodological issue; it reflects real differences in feedstock properties, pyrolysis conditions, soil texture, climate, and landscape processes. As a result, field-scale persistence cannot be reliably inferred from laboratory incubation studies alone, and any assessment of long-term biochar behavior—particularly for post-use or contaminant-bearing biochars—must be site-specific and supported by monitoring.

The field literature clearly illustrates this divergence. In a subtropical field study in Florida over 15 months, biochar-C losses ranged from 17.5% to 93.3% yr−1, depending on feedstock and production temperature, with lower losses generally observed for chars produced at 650 °C (14.0% to 51.5% yr−1) [119]. By contrast, multi-year temperate field trials have shown both dissipation and persistence depending on soil type. In one German study, a sandy site lost most of the initial soil organic carbon (SOC) gain over nine years, whereas a loamy site retained elevated SOC after eleven years [120]. A 13-year UK field experiment likewise found that biochar-amended plots maintained higher organic C density than controls and exhibited only limited loss of chemical stability, supporting medium- to long-term persistence under those conditions [121]. Shorter-duration studies also show mixed outcomes: a four-year corn trial reported greater SOC decline with pine-chip biochar than with poultry-litter biochar, whereas a two-year vineyard study found no significant degradation but high variability among plots [120,122]. Taken together, these results indicate that persistence is strongly conditional and should not be generalized across sites or biochar types.
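
To give a sense of what annual loss rates of this magnitude would imply if they persisted, the sketch below converts a fractional annual loss into a first-order rate constant, a remaining fraction, and a half-life. This is purely illustrative: single-pool first-order kinetics is an assumption made here, not a model reported by the cited field studies, and the multi-year results summarized above suggest that short-term rates should not be extrapolated in this way without site-specific evidence. The function names are hypothetical.

```python
import math


def first_order_rate(annual_loss_fraction: float) -> float:
    """Rate constant k (per year) such that exp(-k) = 1 - annual loss."""
    if not 0.0 < annual_loss_fraction < 1.0:
        raise ValueError("annual loss must be a fraction between 0 and 1")
    return -math.log(1.0 - annual_loss_fraction)


def remaining_fraction(annual_loss_fraction: float, years: float) -> float:
    """Fraction of biochar-C remaining after `years`, assuming first-order loss."""
    return math.exp(-first_order_rate(annual_loss_fraction) * years)


def half_life_years(annual_loss_fraction: float) -> float:
    """Time for half of the biochar-C to be lost under the same assumption."""
    return math.log(2.0) / first_order_rate(annual_loss_fraction)


if __name__ == "__main__":
    # End points of the loss range quoted in the text (17.5% and 93.3% per year)
    for loss in (0.175, 0.933):
        print(
            f"loss {loss:.1%}/yr: half-life {half_life_years(loss):.2f} yr, "
            f"remaining after 5 yr {remaining_fraction(loss, 5.0):.4f}"
        )
```

The spread between the two end points shows why a single default persistence value is hard to defend, and why apparent losses also need to be checked against physical transport before being interpreted as decomposition.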

The main drivers of persistence are consistently linked to feedstock, pyrolysis temperature, soil type, and climate. Feedstock influences the elemental composition and the proportion of labile carbon, which, in turn, affects microbial accessibility and weathering behavior [120,122]. Pyrolysis temperature is also a strong determinant: higher-temperature chars (e.g., 650 °C) generally show lower short-term mineralization, which is commonly attributed to greater aromatic condensation and smaller labile carbon pools [119]. Soil texture modifies retention through physical protection, aggregation, and transport behavior, with loamy and clay-rich soils often showing better long-term SOC retention than coarse sandy soils where gains may dissipate more quickly [95,120]. Climate further shapes outcomes, as subtropical environments with higher temperatures and greater moisture variability have been associated with greater short-term losses than temperate systems, where longer persistence has been documented [119,121]. These drivers act in combination, which explains why field outcomes remain heterogeneous even when similar application rates are used.

An important implication of the field literature is that long-term biochar fate is not controlled only by mineralization. Physical migration and soil interactions can be equally important and may strongly influence apparent persistence. Studies using 13C-labeled biochar have shown that downward transport can be substantial in some soils within 12 months, with recovery of biochar-C below 30–50 cm varying by soil type and, in some cases, exceeding estimated mineralization losses [95]. This means that declines in topsoil biochar-C cannot automatically be interpreted as decomposition. Biochar also interacts with the surrounding soil environment (the “charosphere”), where it can alter microbial community composition, pH, and nutrient availability. In a 13-year field study, persistent shifts in bacterial and fungal communities were observed near biochar particles, together with elevated local pH and nitrogen availability, indicating that long-term biochar effects extend beyond carbon storage alone [121]. Conversely, some studies reporting SOC dissipation explicitly note uncertainty about whether observed losses reflected microbial breakdown or lateral/vertical movement of biochar particles, underscoring the need to separate transport from mineralization in monitoring designs [119,120].

These findings make a strong case for site-specific risk assessment and monitoring, especially where biochar is used in remediation or carries sorbed contaminants. The literature does not provide a single universal protocol, but it does identify the core elements of robust field monitoring programs. Field studies commonly include baseline soil characterization, depth-resolved measurements of pyrogenic carbon and total carbon, pH, extractable nitrogen, and indicators of soil structure such as aggregation and water-holding capacity, with repeated sampling over multi-year intervals [121,123]. Where physical migration is a concern, depth-resolved sampling to at least 30–50 cm, and in some cases deeper, is recommended, as substantial downward transport of biochar-C and associated constituents has been observed within months to 1 year [95,119]. For remediation applications or contaminant-bearing biochars, authors also recommend feedstock-specific selection and site-scale ecological risk assessment, with monitoring extended beyond soils to include contaminants in solid phases, porewater, and runoff compartments [119,124].

A further methodological point emphasized across studies is the need to distinguish true mineralization losses from physical redistribution. To achieve this, monitoring designs should combine complementary tools, including molecular markers (e.g., BPCA or SPAC/hydropyrolysis approaches), isotopic tracing, and mass-balance methods, so that fate pathways can be attributed with greater confidence [95,120,121]. Without this distinction, apparent declines in biochar-associated carbon may be misinterpreted, leading to incorrect conclusions about stability or risk.
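The mass-balance reasoning above can be illustrated with a minimal sketch. The function name, the three-pool split (topsoil, subsoil, unaccounted), and all numbers are hypothetical and chosen only for illustration; they are not taken from the cited studies.

```python
def partition_biochar_carbon(applied_c, topsoil_c, subsoil_c):
    """Split applied biochar-C into fate pools, as fractions of the applied amount.

    All inputs share one unit (e.g., g C per m^2). The 'unaccounted' pool is
    only an upper bound on mineralization, because it also contains lateral
    export and measurement error.
    """
    if applied_c <= 0:
        raise ValueError("applied_c must be positive")
    return {
        "retained_topsoil": topsoil_c / applied_c,
        "transported_down": subsoil_c / applied_c,
        "unaccounted": (applied_c - topsoil_c - subsoil_c) / applied_c,
    }


# Hypothetical inventory: 1000 g C/m^2 applied, 780 recovered in the topsoil,
# 150 recovered below the sampled topsoil layer.
pools = partition_biochar_carbon(applied_c=1000, topsoil_c=780, subsoil_c=150)

# Reading the topsoil decline alone as decomposition overstates the loss.
naive_loss = 1.0 - pools["retained_topsoil"]
bounded_loss = pools["unaccounted"]
print(f"naive 'decomposition': {naive_loss:.0%}, mass-balance upper bound: {bounded_loss:.0%}")
```

In this illustrative case most of the apparent topsoil decline is explained by downward transport, which is why depth-resolved sampling is needed before any loss is attributed to mineralization.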

Overall, the current evidence supports a precautionary, site-tailored approach rather than a standardized universal protocol. Based on the reviewed studies, the most defensible strategy is to develop monitoring programs that combine (i) baseline inventories of pyrogenic carbon and contaminants, (ii) periodic depth-resolved sampling for both carbon and contaminants, (iii) water and leachate monitoring where hydrologic connectivity is relevant, and (iv) multi-year follow-up to capture slow decomposition and transport processes [95,119,123,124].

7. Performance, Scale-Up, and Circular Sustainability

Performance evaluation in biochar-based treatment systems should extend beyond adsorption capacity and include reproducibility, environmental burdens, and deployment feasibility. This is especially important when biochar is intended for dual functionality, since the performance of the primary adsorption process and the safety/value of the post-use pathway are interdependent. A process-level framework is therefore more appropriate than a single-metric comparison.

7.1. Key Performance Indicators and Post-Use Functionality

A robust KPI framework for biochar-based systems should distinguish between intrinsic material properties, operational process performance, and post-use behavior. These tiers correspond to different decision points in process design: material KPIs support sorbent selection and modification, process KPIs support reactor and operating design, and post-use KPIs determine whether spent biochar can be safely reused or must be stabilized or disposed of. This distinction is especially important because the most commonly reported metrics are highly condition-dependent and therefore have limited value for cross-study comparison unless test conditions are standardized.

Intrinsic material properties define the mechanistic basis for contaminant uptake and therefore form the first KPI tier. These indicators do not directly describe process performance, but they determine which removal mechanisms are likely to dominate and which application niches a given biochar is best suited for.

Surface area is one of the most frequently reported material metrics. It is generally associated with improved adsorption of hydrophobic organic contaminants because it increases the number of available sorption sites. In many studies, higher specific surface area is linked to higher pyrolysis temperatures and activation treatments, although the functional consequences depend on pore accessibility and chemistry [125,126]. Pore structure is equally important, since micropores tend to favor adsorption of small molecules, while meso- and macropores influence accessibility, diffusion, and transport. Feedstock and pyrolysis conditions strongly affect pore-size distribution, which in turn shapes contaminant selectivity [125,126].

Ash content is another key material KPI, particularly for metal removal. Elevated ash fractions may introduce reactive mineral phases that promote precipitation, complexation, or ion exchange and can therefore shift the dominant sorption mechanism from purely carbon-surface adsorption to mineral-assisted immobilization [127,128]. Similarly, bulk pH and point of zero charge (PZC) are critical descriptors because they control electrostatic attraction or repulsion for ionic species. Biochar performance is often strongly pH-dependent across the pH ranges commonly tested in adsorption studies, such as 3–9, and this behavior is directly tied to production conditions and surface chemistry [125,126]. Surface functional groups, especially oxygen-containing groups such as carboxyl and hydroxyl moieties, also play a central role by mediating polar adsorption, complexation, and ion exchange. Lower-temperature biochars typically retain more oxygenated functional groups, which may improve uptake of certain polar inorganic or organic contaminants [125,126].

Taken together, these material KPIs provide the mechanistic context for performance claims. However, they should not be interpreted as substitutes for operational testing, since favorable intrinsic properties do not guarantee performance under realistic process conditions.

Process KPIs describe how biochar performs under defined operating conditions and are the metrics most often used in adsorption studies to support claims of effectiveness. These indicators quantify uptake magnitude, uptake rate, and operational resilience during use and reuse, but they are highly sensitive to test setup and solution chemistry. For this reason, they are useful for process evaluation only when reported together with full experimental conditions.

Adsorption capacity from isotherm fits (mg g⁻¹) remains the most common KPI. However, it is not an intrinsic material constant. Reported qmax values depend strongly on biochar type, pyrolysis or activation history, and batch-test conditions such as pH, contaminant concentration range, and solid-to-liquid ratio [125]. As a result, adsorption capacity is most informative when used for comparison within a standardized test framework rather than across unrelated studies.
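To make explicit that qmax is a fitted parameter rather than a directly measured quantity, the sketch below recovers it from batch equilibrium data. It assumes the Langmuir model, which the text does not name, and uses its linearized form Ce/qe = Ce/qmax + 1/(K_L·qmax). The data are synthetic, and the function names and parameter values are illustrative.

```python
import numpy as np


def langmuir(ce, qmax, k_l):
    """Langmuir isotherm: equilibrium uptake qe (mg/g) at concentration ce (mg/L)."""
    return qmax * k_l * ce / (1.0 + k_l * ce)


def fit_langmuir_linearized(ce, qe):
    """Estimate (qmax, K_L) from the linearized form Ce/qe = Ce/qmax + 1/(K_L*qmax)."""
    ce = np.asarray(ce, dtype=float)
    qe = np.asarray(qe, dtype=float)
    slope, intercept = np.polyfit(ce, ce / qe, 1)  # straight-line fit
    qmax = 1.0 / slope
    k_l = slope / intercept
    return qmax, k_l


# Synthetic, noise-free data generated from assumed parameters (not measured values).
ce_data = np.array([5.0, 10.0, 20.0, 50.0, 100.0, 200.0])  # mg/L
qe_data = langmuir(ce_data, qmax=50.0, k_l=0.05)            # mg/g

qmax_fit, k_l_fit = fit_langmuir_linearized(ce_data, qe_data)
print(f"fitted qmax = {qmax_fit:.2f} mg/g, K_L = {k_l_fit:.4f} L/mg")
```

With real, noisy data the fitted qmax shifts with the concentration range sampled and with the fitting method (the linearized form weights points differently than nonlinear regression), which is one reason the value should be reported together with the test conditions.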

Adsorption rate, often represented by fitted kinetic constants or time-to-equilibrium, is similarly condition-dependent. Uptake rate is influenced by surface area, pore accessibility, particle size, and solution chemistry, and modeling studies identify adsorption time and specific surface area as strong predictors under controlled conditions. Reported rate constants can therefore only be meaningfully compared when contact time, mixing intensity, and particle-size distribution are similar [125,129].

Breakthrough behavior is a more relevant KPI for engineered systems, particularly continuous-flow applications. Metrics such as breakthrough time and mass-transfer-zone length in fixed-bed tests provide insight into service life and hydraulic performance, which batch isotherms cannot capture [130,131]. Particle size, porosity, flow rate, and sorption heterogeneity all affect breakthrough, making column data essential for scale-up and application-readiness claims [131].
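As an illustration of how these column metrics can be extracted, the sketch below interpolates breakthrough and exhaustion times from an effluent curve and estimates the mass-transfer-zone length with the commonly used approximation MTZ = L·(t_e − t_b)/t_e. The 5% and 95% thresholds, the function name, and the data are assumptions made for this example; they are not values from the cited studies.

```python
import numpy as np


def breakthrough_metrics(times, c_ratio, bed_length, c_break=0.05, c_exhaust=0.95):
    """Return (t_b, t_e, mtz) from a fixed-bed effluent curve.

    times      : sampling times (any consistent unit)
    c_ratio    : effluent/influent concentration ratio C/C0 at each time
    bed_length : packed bed length (result 'mtz' has the same unit)
    """
    t = np.asarray(times, dtype=float)
    r = np.asarray(c_ratio, dtype=float)
    if np.any(np.diff(r) <= 0):
        raise ValueError("C/C0 must increase strictly with time for interpolation")
    if not (r[0] <= c_break and c_exhaust <= r[-1]):
        raise ValueError("thresholds fall outside the measured C/C0 range")
    t_b = float(np.interp(c_break, r, t))    # breakthrough time
    t_e = float(np.interp(c_exhaust, r, t))  # exhaustion time
    mtz = bed_length * (t_e - t_b) / t_e     # approximate mass-transfer-zone length
    return t_b, t_e, mtz


# Hypothetical column run: time in minutes, bed length 10 cm.
t_min = [0, 60, 120, 180, 240, 300]
ratio = [0.0, 0.02, 0.10, 0.50, 0.90, 1.0]
t_b, t_e, mtz = breakthrough_metrics(t_min, ratio, bed_length=10.0)
print(f"t_b = {t_b:.1f} min, t_e = {t_e:.1f} min, MTZ = {mtz:.2f} cm")
```

Measured curves often contain plateaus (for example, repeated zeros before breakthrough), so in practice the data would need trimming or smoothing before this kind of interpolation is applied.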

Regeneration stability is another critical but underreported process KPI. Capacity retention after repeated cycles can vary substantially depending on the sorbate type, regeneration method, and biochar modification approach [129,132]. Because long-term operational viability depends on this stability, regeneration performance should be treated as a formal KPI and reported using a clearly defined and reproducible cycling protocol [129,132].
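One simple way to report this KPI is capacity retention relative to the first cycle. The sketch below assumes that definition (retention in cycle n = q_n / q_1 × 100); the function name and the cycle data are hypothetical.

```python
def capacity_retention(capacities):
    """Percent of first-cycle capacity retained in each adsorption cycle.

    capacities : uptake per cycle (e.g., mg/g), ordered from cycle 1 onward,
                 measured under identical adsorption and regeneration conditions.
    """
    if not capacities or capacities[0] <= 0:
        raise ValueError("first-cycle capacity must be positive")
    first = capacities[0]
    return [100.0 * q / first for q in capacities]


# Hypothetical uptake over four adsorption-regeneration cycles (mg/g).
cycles = [40.0, 36.0, 34.0, 30.0]
retention = capacity_retention(cycles)
for n, pct in enumerate(retention, start=1):
    print(f"cycle {n}: {pct:.0f}% of initial capacity")
```

The percentages are only comparable across studies if the regenerant, contact time, and number of cycles are reported alongside them.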

Overall, process KPIs are indispensable for evaluating application performance, but they must be interpreted as operational metrics rather than universal properties of a biochar material.

Post-use KPIs are essential for determining whether spent biochar represents a risk or a resource. These indicators address environmental fate, secondary-use potential, and durability after service, and they are especially important in sequential biochar systems where the adsorbent is intended for reuse in soil, composites, or other downstream applications.

Leaching resistance is one of the most important post-use KPIs because both sorbed contaminants and native biochar constituents may be released under environmental conditions. Leaching behavior depends on sorbate binding strength, matrix stability, and pH, and should therefore be assessed using standardized leaching protocols under conditions relevant to the intended end use [129,133]. For spent biochar intended for land application, leaching resistance directly determines environmental safety; for engineered materials, it informs containment performance.

Soil response is another key post-use KPI when land reuse is considered. Relevant indicators include effects on soil carbon stability, nutrient cycling, and priming of native organic matter. These outcomes are particularly important for evaluating spent biochar in remediation and carbon sequestration contexts, where the post-use pathway must provide agronomic or ecological benefits without causing secondary contamination [129,133].

Composite compatibility becomes important when spent biochar is redirected into construction or polymer matrices. In these cases, performance depends on mechanical stability, surface chemistry, and the potential for leachate formation. Laboratory adsorption metrics do not predict composite behavior, so application-specific compatibility testing is required before industrial use can be justified [125,132].

Secondary-use durability is the final major post-use KPI. Whether the biochar is reused as a soil amendment or as a construction filler, long-term chemical and mechanical stability must be demonstrated, along with continued retention of any sequestered contaminants. Reviews consistently highlight the need for standardized long-term durability testing and note that evidence remains mixed across applications [129,132]. At present, the available literature does not provide a sufficient basis for universal numerical pass/fail thresholds for leaching across multiple environmental scenarios or for long-term durability benchmarks, so these criteria remain context-specific.

Although adsorption capacity, percentage removal, and fitted kinetic constants are widely used because they are relatively easy to generate, they have limited comparability across studies. Experimental conditions strongly constrain their interpretation, and without standardized reporting they can be misleading in process design or meta-analysis.

The most important limitation is that adsorption capacity is conditional, not intrinsic. Isotherm-derived capacities depend on the selected concentration range, solid-to-liquid ratio, pH, and biochar preparation history. They should therefore not be treated as fixed material constants unless testing protocols are standardized [125,130]. Removal efficiency is also concentration-dependent: very high percentage removal at low contaminant concentrations does not necessarily indicate high adsorption capacity or suitability for higher-load systems [125,130].
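A short worked example shows why percentage removal and adsorption capacity can point in opposite directions. It uses the standard batch mass balance, q_e = (C0 − Ce)·V/m; the two scenarios and all numbers are hypothetical.

```python
def batch_metrics(c0, ce, volume_l, mass_g):
    """Return (removal in %, uptake q_e in mg/g) for one batch test.

    c0, ce   : initial and equilibrium concentrations (mg/L)
    volume_l : solution volume (L)
    mass_g   : sorbent mass (g)
    """
    if c0 <= 0 or mass_g <= 0:
        raise ValueError("c0 and mass_g must be positive")
    removal_pct = 100.0 * (c0 - ce) / c0
    q_e = (c0 - ce) * volume_l / mass_g
    return removal_pct, q_e


# Same sorbent dose (0.1 g in 0.1 L, i.e., 1 g/L) at two hypothetical loadings.
low_removal, low_q = batch_metrics(c0=1.0, ce=0.05, volume_l=0.1, mass_g=0.1)
high_removal, high_q = batch_metrics(c0=500.0, ce=300.0, volume_l=0.1, mass_g=0.1)

print(f"dilute test:       {low_removal:.0f}% removal, q_e = {low_q:.2f} mg/g")
print(f"concentrated test: {high_removal:.0f}% removal, q_e = {high_q:.0f} mg/g")
```

In this illustration the dilute test shows the higher percentage removal but an uptake per gram roughly two orders of magnitude lower, so neither number is meaningful without the initial concentration and solid-to-liquid ratio.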

Kinetic parameters are similarly sensitive to setup. Reported rate constants reflect not only material properties but also particle size, mixing intensity, contact time, and solution chemistry. As a result, comparisons across studies are only valid when these conditions are matched [125,129]. Among the most influential variables, pH and solid-to-liquid ratio are particularly important because they affect contaminant speciation, electrostatic interactions, and the apparent driving force for adsorption in batch systems [129,130].

One area where the current evidence remains insufficient is ionic strength. The reviewed literature does not provide enough direct and comparable evidence to quantify how ionic strength systematically alters reported KPIs across different biochar systems, so generalized claims about ionic-strength effects cannot be substantiated from these sources alone.

For practical reporting, the implication is clear: KPIs should always be presented alongside standardized test conditions, including pH, solid-to-liquid ratio, ionic composition, contact time, and particle size. In addition, claims of application readiness should include both batch and column metrics, since batch performance alone does not capture the transport and hydraulic limitations relevant to real process systems [129,130,132].
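The reporting recommendation can be expressed as a simple record structure that flags any KPI reported without its test conditions. This is a minimal sketch: the class name, field names, and units are hypothetical choices made for illustration and do not correspond to an existing reporting standard.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class KpiReport:
    """One reported KPI together with the test conditions listed in the text."""
    kpi_name: str
    value: float
    unit: str
    ph: Optional[float] = None
    solid_to_liquid_g_per_l: Optional[float] = None
    ionic_composition: Optional[str] = None
    contact_time_min: Optional[float] = None
    particle_size_um: Optional[str] = None

    def missing_conditions(self) -> List[str]:
        """Names of test conditions that were left unreported."""
        return [f.name for f in fields(self) if getattr(self, f.name) is None]


# Hypothetical entry: capacity reported with pH and contact time only.
report = KpiReport("qmax", 48.0, "mg/g", ph=6.0, contact_time_min=120.0)
missing = report.missing_conditions()
print("unreported conditions:", ", ".join(missing))
```

A check of this kind could be applied when compiling data for cross-study comparison, so that entries with incomplete conditions are flagged rather than pooled with fully documented ones.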

7.2. Experimental Limitations, Reproducibility, and Standardization

A major limitation in the literature is the lack of methodological standardization. Biochar properties vary widely with feedstock source, pretreatment, pyrolysis temperature, heating rate, and post-modification, while adsorption protocols often differ in matrix composition and operating conditions. As a result, published adsorption capacities may not be directly comparable, even when the same contaminant is studied.

Reproducibility is further weakened by the predominance of batch experiments using simplified synthetic solutions. Many studies do not include continuous-flow testing, competitive adsorption conditions, or realistic wastewater matrices, which are necessary for scale-up. In addition, uncertainty reporting (replicates, error bars, statistical tests) is often incomplete.

The reproducibility of biochar research begins with transparent reporting of feedstock and pyrolysis conditions because these factors largely determine the structural, chemical, and contaminant-related properties of the final material. For this reason, reports should provide enough detail to allow reconstruction of production conditions and meaningful grouping across studies. Without this information, differences in performance may be incorrectly attributed to “biochar effects” when they are in fact caused by unreported differences in raw materials or thermal processing.