A Multi-Timescale Cooperative Scheduling Method for Flexible Load in Power Distribution System Considering Dynamic Transformer Rating

Abstract

1. Introduction

- (1)

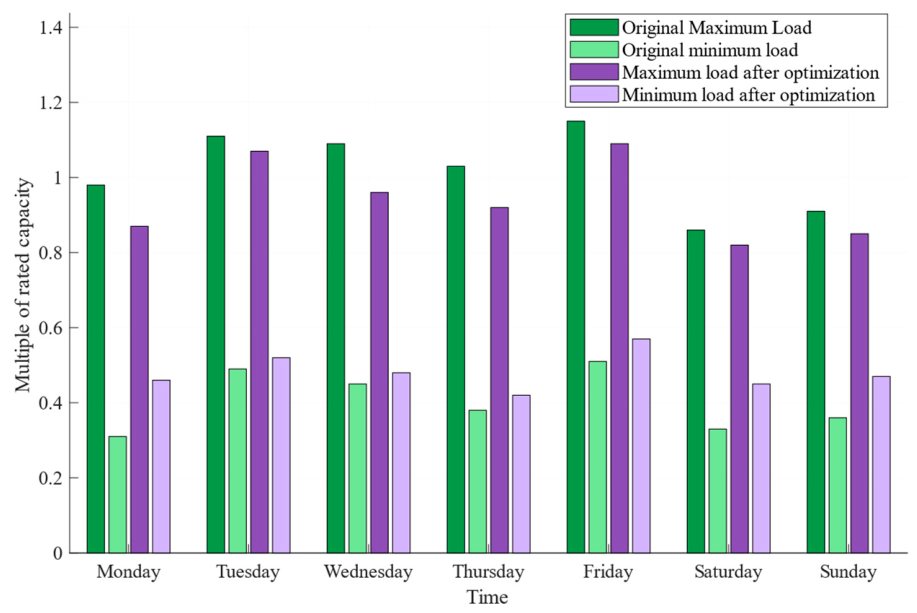

- We fully consider the characteristics of the power distribution system and electric vehicles in adjacent substations. We established physical models for EV charging status and charging station status and constructed a day-ahead scheduling layer based on multi-agent reinforcement learning to optimize power consumption plans and smooth peak–valley loads in the power distribution system.

- (2)

- We explored the dynamic load capacity of distribution transformers under varying environmental conditions and developed an evaluation model for the dynamic load capacity of distribution transformers.

- (3)

- We constructed an intraday scheduling layer for flexible loads. By dynamically optimizing electricity consumption strategies in real time to address price fluctuations and user behavior randomness, it is possible to achieve dynamic matching between distribution transformers and flexible load regulation demands.
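Contribution (1) aims to smooth the peak–valley load of the distribution system. The sketch below shows how such smoothing is commonly quantified. It computes the peak–valley difference, the valley-to-peak ratio and the load factor (mean/peak) of a load profile. The helper name and both profiles are hypothetical and are not taken from the paper. The two profiles are chosen to deliver the same daily energy, so the comparison isolates the effect of reshaping the load.

```python
from typing import Dict, Sequence


def peak_valley_metrics(load_kw: Sequence[float]) -> Dict[str, float]:
    """Peak-valley difference, valley/peak ratio, and load factor (mean/peak).

    Hypothetical helper: a flatter profile has a smaller difference and
    ratios closer to 1.
    """
    if not load_kw:
        raise ValueError("load profile must not be empty")
    peak, valley = max(load_kw), min(load_kw)
    mean = sum(load_kw) / len(load_kw)
    return {
        "peak_valley_difference": peak - valley,
        "valley_to_peak_ratio": valley / peak,
        "load_factor": mean / peak,
    }


# Hypothetical daily profiles in 4-hour blocks (kW); both deliver the same energy.
baseline = [300, 450, 600, 550, 750, 350]
scheduled = [450, 480, 550, 520, 560, 440]

for name, profile in (("baseline", baseline), ("scheduled", scheduled)):
    m = peak_valley_metrics(profile)
    print(name, m["peak_valley_difference"], round(m["load_factor"], 3))
```

Load factor is used here because it captures flatness of the whole profile, not just its two extremes.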

2. Flexible Load Dispatch Model for a Distribution System in the Day-Ahead Stage

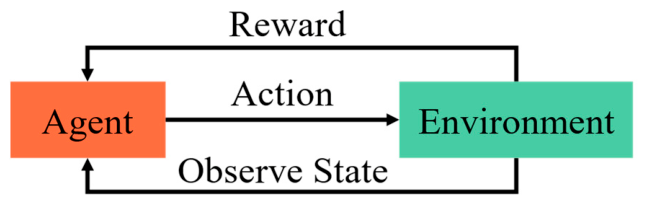

2.1. Observation State Space Model

2.2. Action Space Model

2.3. State Transition Model
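A charging agent's state transition depends on how EV batteries respond to the dispatched charging power. The sketch below assumes a common first-order model rather than one taken from the paper: SOC(t+1) = SOC(t) + η·P(t)·Δt / E. In this model, the requested power is clipped to the charger limit and to the remaining battery headroom. The class name, the efficiency η = 0.95, the 15-minute step and the battery sizes are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class EVState:
    soc: float           # state of charge as a fraction in [0, 1]
    capacity_kwh: float  # usable battery capacity E
    max_power_kw: float  # combined charger/vehicle power limit


def step_soc(ev: EVState, power_kw: float, dt_h: float = 0.25,
             efficiency: float = 0.95, soc_max: float = 1.0) -> tuple[float, float]:
    """Apply one charging step and return (new_soc, grid power actually drawn).

    Hypothetical first-order model: SOC_{t+1} = SOC_t + eta * P * dt / E.
    """
    # Clip the request to [0, charger limit].
    power = min(max(power_kw, 0.0), ev.max_power_kw)
    # Do not draw more grid energy than is needed to reach soc_max.
    headroom_kwh = max(soc_max - ev.soc, 0.0) * ev.capacity_kwh
    power = min(power, headroom_kwh / (efficiency * dt_h))
    new_soc = ev.soc + efficiency * power * dt_h / ev.capacity_kwh
    return new_soc, power


soc, drawn = step_soc(EVState(soc=0.5, capacity_kwh=60.0, max_power_kw=7.0), 10.0)
print(round(soc, 4), drawn)
```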

2.4. Flexible Load Scheduling Model Based on Multi-Agent Reinforcement Learning
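The ablation results refer to centralized training with decentralized execution (CTDE), the paradigm used by MADDPG. Under CTDE, each actor maps only its own observation to an action, while each agent's critic is trained on the joint observations and actions of all agents. The sketch below illustrates only that data flow and performs no learning. The linear policy, the two observation features per agent and the weights are hypothetical.

```python
import math
from typing import List, Sequence


def actor(obs: Sequence[float], weights: Sequence[float]) -> float:
    """Decentralized execution: an agent acts on its OWN observation only.

    Hypothetical linear policy squashed to [0, 1], read as a fraction of
    the station's maximum charging power.
    """
    z = sum(w * o for w, o in zip(weights, obs))
    return 0.5 * (math.tanh(z) + 1.0)


def critic_input(all_obs: Sequence[Sequence[float]],
                 all_actions: Sequence[float]) -> List[float]:
    """Centralized training: a critic sees every agent's observation and action."""
    joint_obs = [value for obs in all_obs for value in obs]
    return joint_obs + list(all_actions)


# Three hypothetical charging-station agents with 2 features each
# (for example a normalized price and a local occupancy rate).
observations = [[0.2, 0.7], [0.5, 0.1], [0.9, 0.4]]
policies = [[1.0, -0.5], [0.3, 0.8], [-1.2, 0.6]]
actions = [actor(o, w) for o, w in zip(observations, policies)]
x = critic_input(observations, actions)
print(len(x))  # 3 agents * 2 features + 3 actions = 9
```

Because the critic is needed only during training, execution stays local to each station. This separation is what makes the approach attractive when communication at dispatch time is limited.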

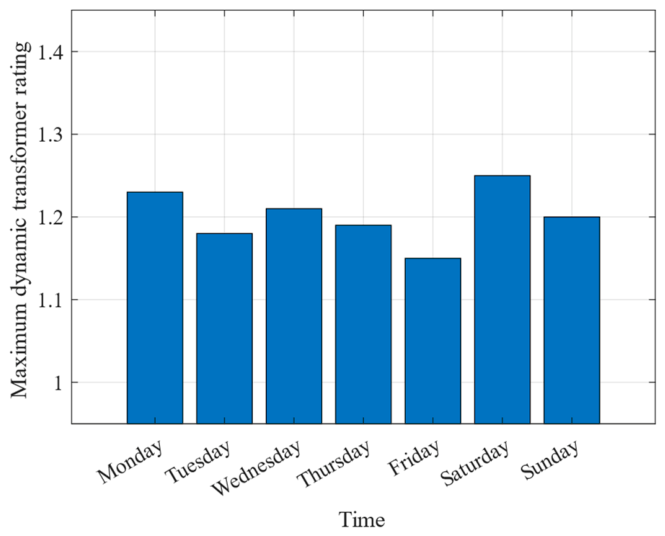

3. Flexible Load Scheduling Model for a Distribution System Considering Dynamic Transformer Rating in the Intraday Stage

3.1. Multi-Timescale Coupling and Receding Horizon Optimization
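Receding horizon optimization works in three steps at every intraday step:

- Solve a finite-horizon problem using the latest forecast.
- Commit only the first decision.
- Re-solve at the next step.

The sketch below shows that loop for one aggregated flexible load that must absorb a fixed energy within the day under a per-step power cap. Several elements are hypothetical stand-ins rather than the paper's intraday model:

- The greedy "fill the cheapest slots" planner.
- The price values.
- The constant `cap_kw`. A dynamic transformer rating estimate could supply this limit instead.

```python
from typing import Callable, List, Sequence


def greedy_plan(prices: Sequence[float], energy_kwh: float,
                cap_kw: float, dt_h: float) -> List[float]:
    """Hypothetical planner: place the remaining energy in the cheapest slots."""
    plan = [0.0] * len(prices)
    remaining = energy_kwh
    for idx in sorted(range(len(prices)), key=lambda i: prices[i]):
        if remaining <= 1e-12:
            break
        energy = min(cap_kw * dt_h, remaining)
        plan[idx] = energy / dt_h
        remaining -= energy
    return plan


def receding_horizon(forecast: Callable[[int, int], Sequence[float]],
                     n_steps: int, horizon: int, energy_kwh: float,
                     cap_kw: float, dt_h: float = 1.0) -> List[float]:
    """Re-plan over [t, t + horizon) at each step and apply only the first action."""
    applied: List[float] = []
    remaining = energy_kwh
    for t in range(n_steps):
        h = min(horizon, n_steps - t)
        window = forecast(t, h)      # refreshed price forecast at step t
        plan = greedy_plan(window, remaining, cap_kw, dt_h)
        power = plan[0]              # commit only the first decision
        applied.append(power)
        remaining -= power * dt_h
    return applied


prices = [0.30, 0.25, 0.10, 0.12, 0.40, 0.35]  # hypothetical price per kWh
schedule = receding_horizon(lambda t, h: prices[t:t + h], n_steps=6,
                            horizon=3, energy_kwh=8.0, cap_kw=5.0)
print(schedule)  # [0.0, 0.0, 5.0, 3.0, 0.0, 0.0]
```

In this sketch the forecast is perfect. Passing a `forecast` function that returns updated, imperfect prices at each step is how the loop would react to the price fluctuations mentioned in contribution (3).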

3.2. Dynamic Transformer-Rating Evaluation Model
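A dynamic transformer rating is commonly obtained by finding the largest load whose hot-spot temperature stays within a limit at the prevailing ambient temperature. The sketch below assumes the steady-state form used in transformer loading guides such as IEEE Std C57.91 and IEC 60076-7. In that form, top-oil rise = ΔθTO,R·[(K²R + 1)/(R + 1)]^n, hot-spot rise over top oil = ΔθH,R·K^(2m), and hot spot = ambient + both rises. The sketch ignores thermal time constants, so it gives a steady-state rating only. The parameter values and the 110 °C limit are illustrative assumptions, not the paper's evaluation model.

```python
from dataclasses import dataclass


@dataclass
class ThermalParams:
    """Illustrative parameters (hypothetical, not from the paper)."""
    top_oil_rise_rated: float = 55.0   # top-oil rise over ambient at rated load (K)
    hot_spot_rise_rated: float = 25.0  # hot-spot rise over top oil at rated load (K)
    loss_ratio: float = 5.0            # R: load loss / no-load loss at rated load
    n: float = 0.8                     # oil exponent
    m: float = 0.8                     # winding exponent (hot-spot rise uses K**(2m))


def hot_spot_temp(k: float, ambient_c: float, p: ThermalParams) -> float:
    """Steady-state hot-spot temperature (degC) at per-unit load k."""
    top_oil_rise = p.top_oil_rise_rated * (
        (k ** 2 * p.loss_ratio + 1.0) / (p.loss_ratio + 1.0)) ** p.n
    hot_spot_rise = p.hot_spot_rise_rated * k ** (2.0 * p.m)
    return ambient_c + top_oil_rise + hot_spot_rise


def dynamic_rating(ambient_c: float, limit_c: float, p: ThermalParams,
                   k_hi: float = 3.0, tol: float = 1e-6) -> float:
    """Largest per-unit load whose steady-state hot spot stays <= limit_c."""
    if hot_spot_temp(0.0, ambient_c, p) > limit_c:
        return 0.0
    if hot_spot_temp(k_hi, ambient_c, p) <= limit_c:
        return k_hi
    lo, hi = 0.0, k_hi
    while hi - lo > tol:  # bisection: the hot spot rises monotonically with k
        mid = 0.5 * (lo + hi)
        if hot_spot_temp(mid, ambient_c, p) <= limit_c:
            lo = mid
        else:
            hi = mid
    return lo


params = ThermalParams()
for ambient in (0.0, 20.0, 30.0, 40.0):
    print(ambient, round(dynamic_rating(ambient, limit_c=110.0, p=params), 3))
```

With these illustrative values the transformer sits exactly at its limit at rated load and 30 °C ambient. Cooler conditions therefore yield a rating above 1.0 per unit, and hotter conditions a rating below it.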

4. Case Study

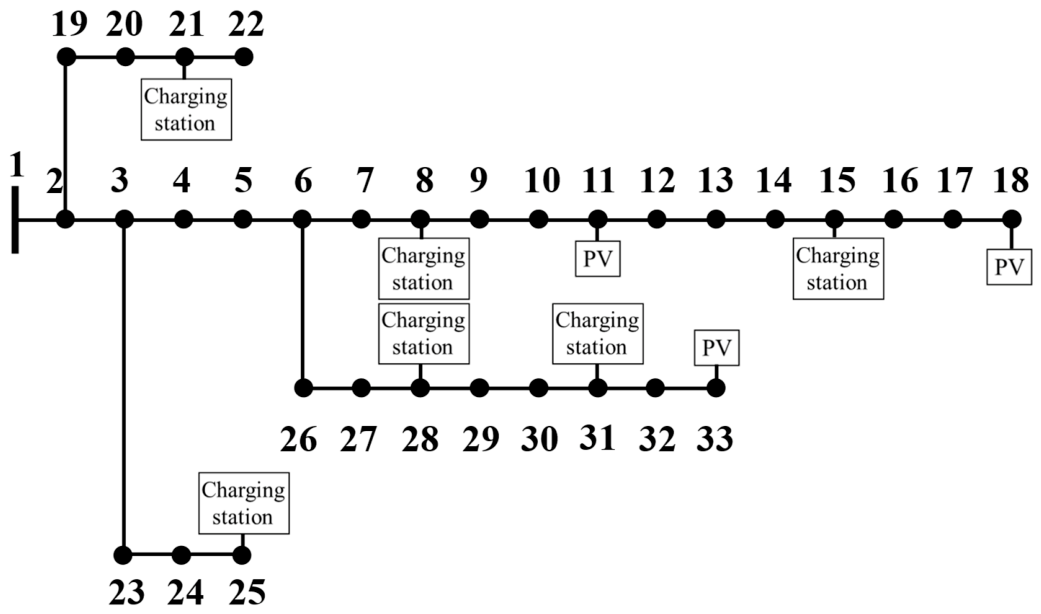

4.1. Test System and Simulation Environment

4.2. Baseline Algorithm Comparison

4.3. Sensitivity Analysis of Reward Coefficients

4.4. Scalability Validation

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Symbol | Description |
|---|---|
| N | Total number of accessible charging stations within the local distribution zone. |
| i, j | Indices of nodes in the distribution network. |
| t | Time step index. |
| r, x | Branch resistance and reactance, respectively. |
|  | Statistical mean times of the morning (08:30) and evening (18:00) charging peaks. |
| δ | Grid loss weighting coefficient. |
| V | Node voltage magnitude. |
| I | Branch current flowing between adjacent nodes. |
| γ | Discount factor of the MARL algorithm. |
|  | Active and reactive power flow from node i to node j. |
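The symbols above are consistent with the branch flow (DistFlow) model commonly used for radial distribution networks, although this section does not state the model explicitly. Under that assumption, the sending-end flows P_ij and Q_ij and voltage V_i determine three quantities:

- The squared branch current, I² = (P² + Q²)/V_i².
- The active-power loss, r·I².
- The downstream squared voltage, V_j² = V_i² − 2(rP + xQ) + (r² + x²)I².

The sketch below evaluates these relations per branch. The branch data and the use of δ as a simple multiplier on total loss are hypothetical.

```python
import math
from typing import Tuple


def branch_flow(p_ij: float, q_ij: float, v_i: float,
                r: float, x: float) -> Tuple[float, float, float]:
    """DistFlow relations for one radial branch i -> j, all in per unit.

    p_ij, q_ij: active/reactive power sent from node i toward node j
    v_i:        voltage magnitude at sending node i
    r, x:       branch resistance and reactance
    Returns (branch current I_ij, active power loss r * I_ij**2, voltage V_j).
    """
    i_sq = (p_ij ** 2 + q_ij ** 2) / v_i ** 2
    loss = r * i_sq
    v_j_sq = v_i ** 2 - 2.0 * (r * p_ij + x * q_ij) + (r ** 2 + x ** 2) * i_sq
    if v_j_sq <= 0.0:
        raise ValueError("no physical voltage solution for this loading")
    return math.sqrt(i_sq), loss, math.sqrt(v_j_sq)


# Hypothetical per-unit branches: (P_ij, Q_ij, V_i, r, x).
branches = [(0.6, 0.8, 1.0, 0.01, 0.02), (0.3, 0.1, 0.98, 0.02, 0.03)]
delta = 0.5  # hypothetical value of the grid loss weighting coefficient
total_loss = sum(branch_flow(*b)[1] for b in branches)
print(f"total loss {total_loss:.5f} pu, weighted penalty {delta * total_loss:.5f}")
```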

| Parameter | Value | Description |
|---|---|---|
| Actor Learning Rate | 1 × 10⁻⁴ | Controls the step size for updating the actor policy network |
| Critic Learning Rate | 1 × 10⁻³ | Controls the step size for updating the critic value network |
| Discount Factor (γ) | 0.95 | Determines the weight given to future rewards |
| Target Soft Update Rate | 0.01 | Smoothing coefficient for updating target networks |
| Batch Size | 1024 | Number of experiences sampled per training iteration |
| Replay Buffer Capacity | 10⁶ | Maximum number of stored transitions |
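Two entries in the table correspond to standard DDPG-family mechanics. The target soft update rate (written τ here) blends online weights into the target networks as θ' ← τθ + (1 − τ)θ'. The discount factor γ = 0.95 weights a reward k steps ahead by γ^k. The sketch below shows both, with plain Python lists standing in for network parameters. It is a generic illustration, not the authors' training code.

```python
from typing import List, Sequence


def soft_update(target: List[float], online: Sequence[float], tau: float = 0.01) -> None:
    """In-place Polyak update: target <- tau * online + (1 - tau) * target."""
    for k, w in enumerate(online):
        target[k] = tau * w + (1.0 - tau) * target[k]


def discounted_return(rewards: Sequence[float], gamma: float = 0.95) -> float:
    """G_0 = sum_k gamma**k * r_k, accumulated backward to avoid explicit powers."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g


target = [0.0, 0.0]
online = [1.0, -2.0]
for _ in range(100):
    soft_update(target, online, tau=0.01)
# Toward a fixed online network, the remaining gap shrinks by (1 - tau) per update.
print([round(w, 4) for w in target])
print(round(discounted_return([1.0, 1.0, 1.0], gamma=0.95), 4))
```

With τ = 0.01, about 100 updates close roughly 63% of the gap to a fixed online network. This slow tracking is what stabilizes the critic's bootstrapped targets.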

| Variation of Grid Loss Weighting Coefficient δ | Station Utilization Rate (%) | Relative Charging Cost Index |
|---|---|---|
| −15% | 88.5 | 105 |
| −5% | 87.2 | 102 |
| Base (0%) | 85.0 | 100 |
| +5% | 80.5 | 98 |
| +15% | 73.0 | 92 |
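The trade-off in this table can be summarized with finite-difference slopes between adjacent settings of δ. For example, moving from the base setting to +15% lowers station utilization by 12.0 percentage points and the cost index by 8 points. The short script below computes these slopes from the tabulated values only and introduces no new data.

```python
# Values transcribed from the sensitivity table above.
delta_change_pct = [-15, -5, 0, 5, 15]
utilization_pct = [88.5, 87.2, 85.0, 80.5, 73.0]
cost_index = [105, 102, 100, 98, 92]


def slopes(x, y):
    """Finite-difference slope between consecutive table rows."""
    return [(y[k + 1] - y[k]) / (x[k + 1] - x[k]) for k in range(len(x) - 1)]


util_slopes = slopes(delta_change_pct, utilization_pct)
cost_slopes = slopes(delta_change_pct, cost_index)
for k, (su, sc) in enumerate(zip(util_slopes, cost_slopes)):
    start, end = delta_change_pct[k], delta_change_pct[k + 1]
    print(f"{start:+d}% -> {end:+d}%: utilization {su:+.2f} pt per %, "
          f"cost index {sc:+.2f} per %")
```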

| Configuration | Transformer Utilization (%) | Safety Violations |
|---|---|---|
| Full Framework | 118.5 | 0 |
| w/o DTR Layer (Static Rating) | 98.2 | 7 |
| w/o CTDE (Independent Learners) | 112.4 | 11 |
| w/o Intraday Layer | 115.3 | 4 |

| EV Penetration Rate | Centralized Optimization Execution Time (s) | Proposed MADDPG Execution Time (s) |
|---|---|---|
| 20% | 12.3 | 0.5 |
| 30% | 35.8 | 0.8 |
| 40% | 128.3 | 1.2 |
| 50% | 351.0 | 1.5 |
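The scalability result can be stated as the ratio of the two execution times at each penetration level. From the tabulated values, this ratio rises from roughly 25× at 20% penetration to over 200× at 50%. The script below computes the ratios, and the growth of each method's run time, from the table only.

```python
# Execution times (s) transcribed from the scalability table above.
penetration_pct = [20, 30, 40, 50]
centralized_s = [12.3, 35.8, 128.3, 351.0]
maddpg_s = [0.5, 0.8, 1.2, 1.5]

speedups = [c / m for c, m in zip(centralized_s, maddpg_s)]
for p, s in zip(penetration_pct, speedups):
    print(f"{p}% EV penetration: MADDPG execution is {s:.1f}x faster")

# Growth in execution time from 20% to 50% penetration for each method.
print(f"centralized time grows {centralized_s[-1] / centralized_s[0]:.1f}x, "
      f"MADDPG time grows {maddpg_s[-1] / maddpg_s[0]:.1f}x")
```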

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Zhang, T.; Li, P.; Wang, J.; Zhao, Q. A Multi-Timescale Cooperative Scheduling Method for Flexible Load in Power Distribution System Considering Dynamic Transformer Rating. Processes 2026, 14, 1584. https://doi.org/10.3390/pr14101584


Chicago/Turabian Style: Zhang, Tiantian, Peng Li, Jun Wang, and Qiangsong Zhao. 2026. "A Multi-Timescale Cooperative Scheduling Method for Flexible Load in Power Distribution System Considering Dynamic Transformer Rating." Processes 14, no. 10: 1584. https://doi.org/10.3390/pr14101584
