Evaluating and Revising the Digital Citizenship Scale

Abstract

1. Introduction

1.1. Contemporary Citizenship and Engagement

1.2. Digital Citizenship and Its Measurement

1.3. The Current Study

2. Materials and Methods

2.1. Participants

2.2. Measures

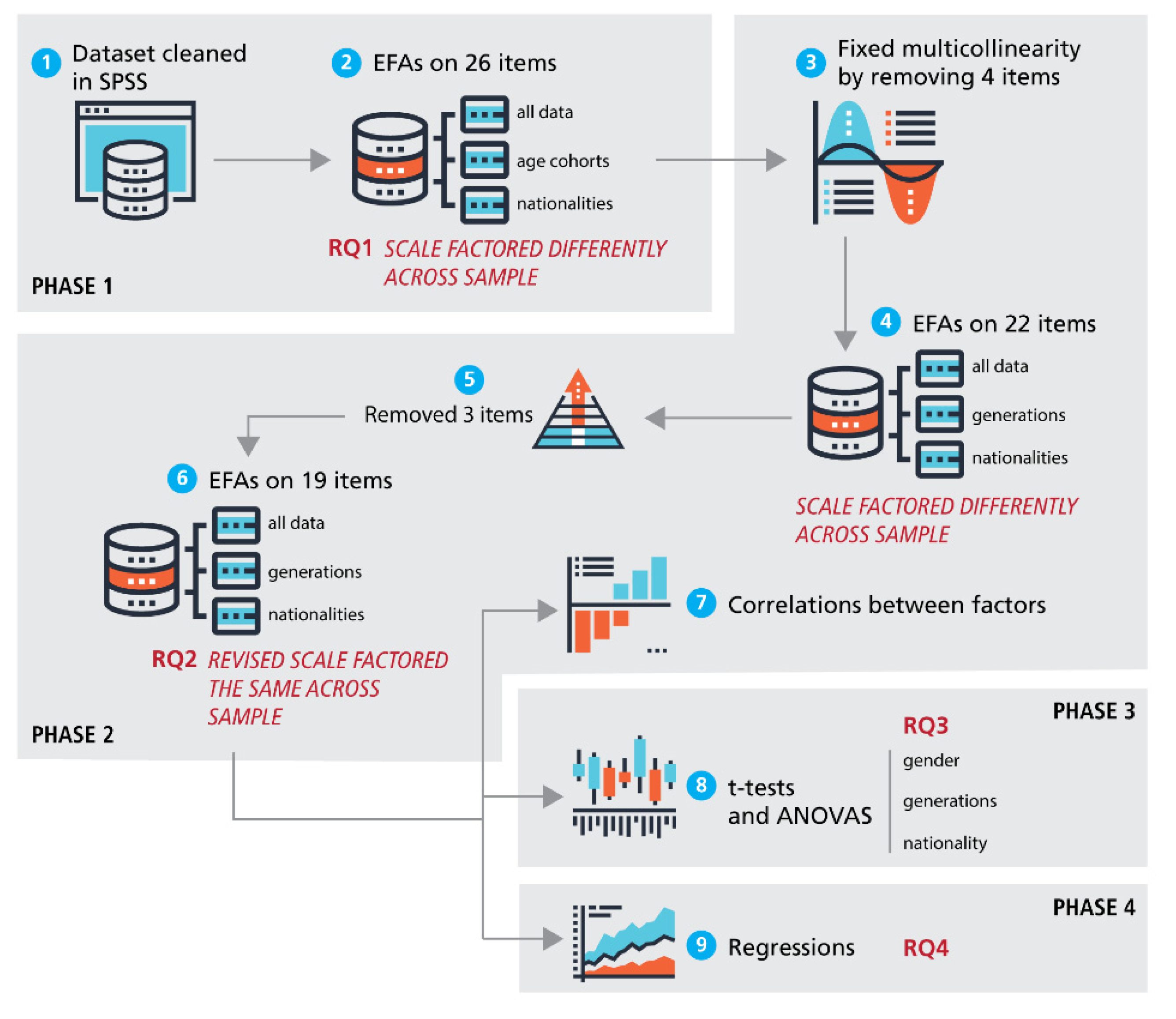

2.3. Statistical Analyses

3. Results

3.1. Phase 1: Initial Exploratory Factor Analysis

3.2. Phase 2: Revised Exploratory Factor Analysis

3.3. Phase 3: Descriptive and Comparative Statistics

3.4. Phase 4: Regressions and Modelling

4. Discussion

4.1. Revised DCS Improvements

4.2. Is IPA Equivalent to Digital Citizenship?

4.3. The Role of Critical Perspective

4.4. Limitations

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A. EFA Examination Procedure

References

| Country | Males | Females | Gen-Z | Gen-Y/Millennial | Gen-X | Boomer | Total (%) |
|---|---|---|---|---|---|---|---|
| Canada | 437 | 380 | 343 | 195 | 143 | 136 | 817 (44.9%) |
| Australia | 213 | 376 | 93 | 143 | 218 | 135 | 589 (32.4%) |
| Slovenia | 178 | 236 | 241 | 45 | 95 | 33 | 414 (22.7%) |
| Total (%) | 828 (45.5%) | 992 (54.5%) | 677 (37.2%) | 383 (21.0%) | 456 (25.1%) | 304 (16.7%) | 1820 |
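
The totals and percentages in the participant table above can be rechecked from the cell counts. The following is a minimal Python sketch. Only the counts come from the table; the dictionary layout and the function name `summarize` are illustrative assumptions. Every respondent belongs to exactly one gender and one generation, so the gender columns and the generation columns should each sum to the same country total. The sketch checks this before it computes each total as a percentage of N.

```python
# Participant counts as reported in the table; the data layout is illustrative.
COUNTS = {
    "Canada":    {"Males": 437, "Females": 380, "Gen-Z": 343, "Gen-Y": 195, "Gen-X": 143, "Boomer": 136},
    "Australia": {"Males": 213, "Females": 376, "Gen-Z": 93,  "Gen-Y": 143, "Gen-X": 218, "Boomer": 135},
    "Slovenia":  {"Males": 178, "Females": 236, "Gen-Z": 241, "Gen-Y": 45,  "Gen-X": 95,  "Boomer": 33},
}
GENDER = ("Males", "Females")
GENERATIONS = ("Gen-Z", "Gen-Y", "Gen-X", "Boomer")


def summarize(counts):
    """Return N, per-country (total, %) and per-column (total, %) summaries."""
    country_totals = {}
    for country, row in counts.items():
        by_gender = sum(row[g] for g in GENDER)
        by_generation = sum(row[g] for g in GENERATIONS)
        # Each respondent has one gender and one generation, so both sums must agree.
        if by_gender != by_generation:
            raise ValueError(f"{country}: gender total {by_gender} != generation total {by_generation}")
        country_totals[country] = by_gender

    n = sum(country_totals.values())

    def pct(x):
        # Percentage of the full sample, rounded to one decimal as in the table.
        return round(100 * x / n, 1)

    column_totals = {col: sum(row[col] for row in counts.values()) for col in GENDER + GENERATIONS}
    return (
        n,
        {c: (t, pct(t)) for c, t in country_totals.items()},
        {col: (t, pct(t)) for col, t in column_totals.items()},
    )


n, by_country, by_column = summarize(COUNTS)
print(n, by_country, by_column)
```

Run on the reported counts, the sketch gives N = 1820 and the same rounded percentages as the Total (%) row and column.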

| Items | Factor 1 (IPA) | Factor 2 (TS) | Factor 3 (CP) | Factor 4 (NA) |
|---|---|---|---|---|
| Factor 1: Internet Political Activism (IPA) | | | | |
| | 0.82 | | | |
| | 0.79 | | | |
| | 0.78 | | | |
| | 0.75 | | | |
| | 0.61 | | | |
| | 0.47 | | | |
| Factor 2: Technical Skills (TS) | | | | |
| | | 0.84 | | |
| | | 0.79 | | |
| | | 0.77 | | |
| Factor 3: Critical Perspectives (CP) | | | | |
| | | | −0.83 | |
| | | | −0.74 | |
| | | | −0.66 | |
| | | | −0.56 | |
| | | | −0.51 | |
| | | | −0.51 | |
| | | | −0.49 | |
| Factor 4: Networking Agency (NA) | | | | |
| | | | | −0.88 |
| | | | | −0.70 |
| | | | | −0.51 |
| % Variance | 38.2% | 14.0% | 7.9% | 5.3% |
| Eigenvalue | 7.26 | 2.67 | 1.49 | 1.00 |
| Cronbach’s alpha (all) | 0.88 | 0.84 | 0.85 | 0.82 |
| Cronbach’s alpha (Canada) | 0.90 | 0.82 | 0.85 | 0.82 |
| Cronbach’s alpha (Slovenia) | 0.88 | 0.88 | 0.85 | 0.84 |
| Cronbach’s alpha (Australia) | 0.85 | 0.82 | 0.85 | 0.84 |
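
Two summary rows of the factor table follow from standard formulas. When factors are extracted from a correlation matrix, the total variance equals the number of items. A factor's eigenvalue divided by 19 (the 6 + 3 + 7 + 3 items with listed loadings) therefore gives its share of variance. This matches the % Variance row to within rounding: for example, 7.26/19 ≈ 38.2%. Cronbach's alpha for a k-item scale is α = k/(k − 1) · (1 − Σσᵢ²/σ²ₜₒₜₐₗ).

The sketch below is a minimal Python illustration, not the authors' code. The function names are hypothetical. The alpha function assumes raw item responses arranged as a list of respondent rows, and those responses are not reproduced here.

```python
from statistics import variance

# Eigenvalues from the factor table; 19 is the number of items with listed
# loadings (6 IPA + 3 TS + 7 CP + 3 NA).
EIGENVALUES = {"IPA": 7.26, "TS": 2.67, "CP": 1.49, "NA": 1.00}
N_ITEMS = 19


def percent_variance(eigenvalue, n_items):
    """Percentage of total variance for one factor of a correlation-matrix EFA."""
    return 100 * eigenvalue / n_items


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondent rows, one score per item.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of the summed score)
    """
    k = len(rows[0])
    # Sample (n - 1) variance of each item across respondents.
    item_variances = [variance([row[j] for row in rows]) for j in range(k)]
    # Sample variance of each respondent's total scale score.
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


for name, ev in EIGENVALUES.items():
    print(name, round(percent_variance(ev, N_ITEMS), 1))
```

Dividing by the number of items is what makes the eigenvalues comparable to the % Variance row. Sample (n − 1) variances are used for alpha, as in most statistics packages.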

| | IPA | TS | CP | NA |
|---|---|---|---|---|
| Internet Political Activism (IPA) | - | | | |
| Technical Skills (TS) | −0.073 ** | - | | |
| Critical Perspectives (CP) | 0.611 ** | 0.154 ** | - | |
| Networking Agency (NA) | 0.631 ** | 0.091 ** | 0.537 ** | - |

| Group | IPA, M (SD) | TS, M (SD) | CP, M (SD) | NA, M (SD) |
|---|---|---|---|---|
| All | 2.76 (1.41) | 6.38 (0.80) | 4.15 (1.18) | 3.57 (1.58) |
| Gender | | | | |
| Male | 2.83 (1.51) | 6.37 (0.82) | 4.12 (1.19) | 3.56 (1.64) |
| Female | 2.69 (1.31) | 6.39 (0.78) | 4.17 (1.17) | 3.57 (1.53) |
| Nationality | | | | |
| Canada | 2.60 (1.39) | 6.42 (0.72) | 4.18 (1.14) | 3.58 (1.56) |
| Australia | 3.19 (1.41) | 6.41 (0.75) | 4.41 (1.17) | 3.84 (1.62) |
| Slovenia | 2.43 (1.30) | 6.28 (0.99) | 3.73 (1.17) | 3.14 (1.47) |
| Generation | | | | |
| Gen-Z (18–20) | 2.34 (1.36) | 6.48 (0.68) | 4.08 (1.19) | 3.27 (1.50) |
| Gen-Y/Millennial (21–30) | 2.85 (1.30) | 6.39 (0.83) | 4.26 (1.09) | 3.61 (1.49) |
| Gen-X (31–50) | 3.16 (1.43) | 6.37 (0.86) | 4.25 (1.18) | 3.92 (1.60) |
| Boomer (51+) | 2.98 (1.40) | 6.19 (0.80) | 4.04 (1.18) | 3.57 (1.71) |

| Scale | t | df | p | 95% CI | Cohen’s d | Effect Size |
|---|---|---|---|---|---|---|
| IPA | 2.07 | 1777 | 0.039 * | 0.007, 0.270 | 0.098 | Very Small |
| TS | −0.72 | 1805 | 0.470 | −0.102, 0.047 | −0.034 | Tiny |
| CP | −0.99 | 1784 | 0.321 | −0.166, 0.054 | −0.048 | Tiny |
| NA | −0.22 | 1795 | 0.822 | −0.164, 0.130 | −0.010 | Tiny |
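
The Cohen's d column is consistent with a common conversion for two independent groups, d ≈ 2t/√df, which assumes roughly equal group sizes. For IPA, 2 × 2.07/√1777 ≈ 0.098. The source does not say how d was computed, so this is a plausible reconstruction rather than the authors' procedure. When both group sizes are known, the pooled-variance identity d = t·√(1/n₁ + 1/n₂) is exact. A minimal Python sketch follows; the function names are illustrative.

```python
import math

# t and df as reported in the t-test table above.
REPORTED = {"IPA": (2.07, 1777), "TS": (-0.72, 1805), "CP": (-0.99, 1784), "NA": (-0.22, 1795)}


def d_from_t_approx(t, df):
    """Approximate Cohen's d from an independent-samples t: d ~= 2t / sqrt(df).

    Assumes the two groups are of similar size.
    """
    return 2 * t / math.sqrt(df)


def d_from_t_exact(t, n1, n2):
    """Cohen's d from a pooled-variance independent-samples t and both group sizes."""
    return t * math.sqrt(1 / n1 + 1 / n2)


for scale, (t, df) in REPORTED.items():
    print(scale, round(d_from_t_approx(t, df), 3))
```

Applied to the reported t and df values, the approximation matches the Cohen's d column to within the rounding of t.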

| Scale | Source | df | F | p | ηp² |
|---|---|---|---|---|---|
| IPA (Internet Political Activism) | Nationality | (2, 1790) | 45.07 | <0.001 * | 0.048 ~ |
| IPA (Internet Political Activism) | Generation | (3, 1790) | 69.81 | <0.001 * | 0.059 ~ |
| TS (Technical Skills) | Nationality | (2, 1817) | 4.66 | 0.010 * | 0.005 |
| TS (Technical Skills) | Generation | (3, 1817) | 9.15 | <0.001 * | 0.015 |
| CP (Critical Perspectives) | Nationality | (2, 1797) | 42.39 | <0.001 * | 0.045 ~ |
| CP (Critical Perspectives) | Generation | (3, 1797) | 3.87 | 0.009 * | 0.006 |
| NA (Networking Agency) | Nationality | (2, 1808) | 24.23 | <0.001 * | 0.026 |
| NA (Networking Agency) | Generation | (3, 1808) | 15.95 | <0.001 * | 0.026 |
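
Partial eta squared can be recovered from an F statistic and its degrees of freedom as ηp² = F·df_effect / (F·df_effect + df_error). This assumes the second df in each cell is the error df. For Nationality on IPA, the formula gives 45.07 × 2 / (45.07 × 2 + 1790) ≈ 0.048, which matches the table. A minimal Python sketch follows; the function name is hypothetical.

```python
# (F, df_effect, df_error) for a few cells of the ANOVA table above.
CELLS = {
    ("IPA", "Nationality"): (45.07, 2, 1790),
    ("TS", "Generation"): (9.15, 3, 1817),
    ("CP", "Nationality"): (42.39, 2, 1797),
}


def partial_eta_squared(f, df_effect, df_error):
    """Partial eta squared from an F ratio: F*df_effect / (F*df_effect + df_error).

    Equivalent to SS_effect / (SS_effect + SS_error), because F = (SS_effect/df_effect) / (SS_error/df_error).
    """
    return (f * df_effect) / (f * df_effect + df_error)


for (scale, source), (f, df1, df2) in CELLS.items():
    print(scale, source, round(partial_eta_squared(f, df1, df2), 3))
```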

| Predictor (outcome: IPA) | Model 1 B | Model 1 β | Model 2 B | Model 2 β | Model 3 B | Model 3 β | Model 4 B | Model 4 β |
|---|---|---|---|---|---|---|---|---|
| Constant | 21.488 | | 16.653 | | 10.566 | | 4.556 | |
| Gender | −0.834 | −0.050 | −0.805 | −0.048 * | −0.919 | −0.055 ** | −1.058 | −0.063 ** |
| Nationality | 0.305 | 0.046 | 0.306 | 0.046 | 0.498 | 0.074 ** | 0.657 | 0.098 ** |
| TS | −0.72 | −0.068 | −0.488 | −0.046 | −1.204 | −0.113 ** | −1.63 | −0.153 ** |
| Generation | | | 1.547 | 0.205 ** | 0.879 | 0.116 ** | 0.998 | 0.132 ** |
| NA | | | | | 3.335 | 0.627 ** | 2.143 | 0.403 ** |
| CP | | | | | | | 3.019 | 0.426 ** |
| R² | 0.009 | | 0.051 | | 0.432 | | 0.560 | |
| F | 5.256 ** | | 22.908 ** | | 261.404 ** | | 363.744 | |
| ΔR² | 0.009 | | 0.042 | | 0.381 | | 0.128 | |
| ΔF | 5.256 ** | | 75.183 ** | | 1153.963 ** | | 497.748 ** | |
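
In this hierarchical regression, the ΔR² row is the difference between successive R² values. For Model 3, 0.432 − 0.051 = 0.381. ΔF is the usual F-change test of that increment:

ΔF = (ΔR²/k_added) / ((1 − R²_full)/(n − k_full − 1))

The table does not report the number of cases entering each model, so the sketch below checks only the ΔR² row against the reported values. The `f_change` function is a generic, hypothetical helper that takes n as an input.

```python
# Reported R^2 for Models 1-4. The models contain 3, 4, 5 and 6 predictors
# (Gender, Nationality, TS; then + Generation; + NA; + CP).
R2 = [0.009, 0.051, 0.432, 0.560]


def r2_changes(r2_values):
    """Delta R^2 for each step (the first step is compared with an empty model)."""
    previous = [0.0] + list(r2_values[:-1])
    return [round(cur - prev, 3) for prev, cur in zip(previous, r2_values)]


def f_change(r2_reduced, r2_full, n, k_full, k_added):
    """F statistic for the R^2 increment when k_added predictors are added.

    n is the number of cases and k_full the number of predictors in the larger
    model; the statistic has (k_added, n - k_full - 1) degrees of freedom.
    """
    df_error = n - k_full - 1
    return ((r2_full - r2_reduced) / k_added) / ((1 - r2_full) / df_error)


print(r2_changes(R2))
```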

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Connolly, R.; Miller, J. Evaluating and Revising the Digital Citizenship Scale. Informatics 2022, 9, 61. https://doi.org/10.3390/informatics9030061
